Signal Processing and Representation Theory Lecture 2 Outline

- Slides: 56

Signal Processing and Representation Theory Lecture 2

Outline: • • Review Invariance Schur’s Lemma Fourier Decomposition

Representation Theory Review An orthogonal / unitary representation of a group G onto an inner product space V is a map that sends every element of G to an orthogonal / unitary transformation, subject to the conditions: 1. (0)v=v, for all v V, where 0 is the identity element. 2. (gh)v= (g) (h)v

Representation Theory Review If we are given a representation of a group G onto a vector space V, then W V is a sub-representation if: (g)w W for every g G and every w W. A representation of a group G onto V is irreducible if the only sub-representations are W V are W=V or W=.

Representation Theory Review Example: – If G is the group of 2 x 2 rotation matrices, and V is the vector space of 4 -dimensional real / complex arrays, then: is not an irreducible representation since it maps the space W=(x 1, x 2, 0, 0) back into itself.

Representation Theory Review Given a representation of a group G onto a vector space V, for any two elements v, w V, we can define the correlation function: Corr (g, v, w)= v, (g)w Giving the dot-product of v with the transformations of w.

Representation Theory Review (Why We Care) Given a representation of a group G onto a vector space V, if we can express V as the direct sum of irreducible representations: V=V 1 … Vn then: 1. Alignment can be solved more efficiently by reducing the number of multiplications in the computation of the correlation. 2. We can obtain (robust) transformation-invariant representations.

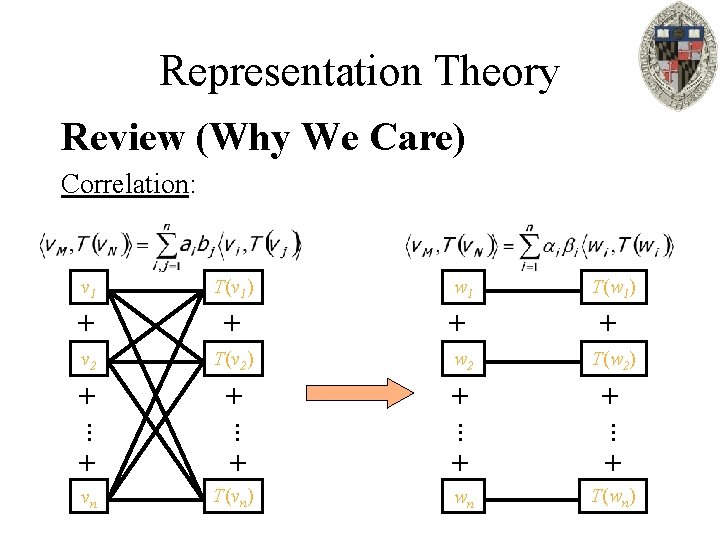

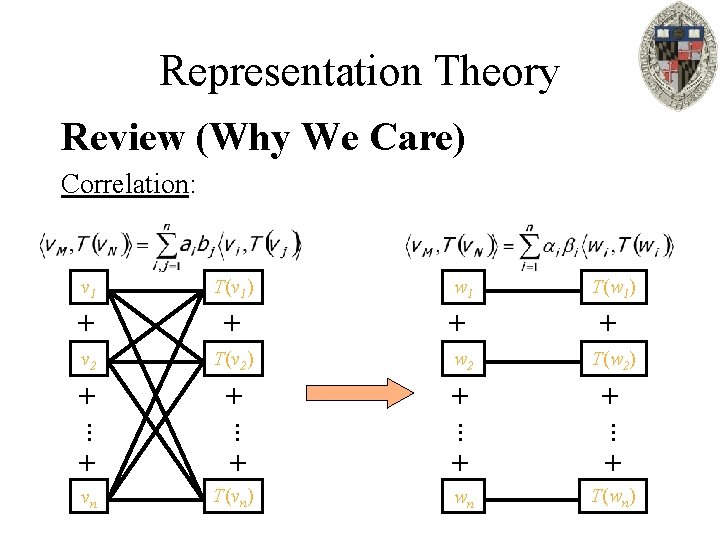

Representation Theory Review (Why We Care) Correlation: v 1 T(v 1) w 1 T(w 1) + + v 2 T(v 2) w 2 T(w 2) + + … … + + vn T(vn) wn T(wn)

Outline: • • Review Invariance Schur’s Lemma Fourier Decomposition

Representation Theory Motivation If v. M is a spherical function representing model M and vn is a spherical function representing model N, we want to define a map Ψ that takes a spherical function and return a rotation invariant array of values: – Ψ(v. M)=Ψ(T(v. M)) for all rotations T and all shape descriptors v. M. – ||Ψ(v. M)-Ψ(v. N)|| ||v. M-v. N|| for all shape descriptors v. M and v. N.

Representation Theory More Generally Given a representation of a group G onto a vector space V, we want to define a map Ψ that takes a vector v V and returns a G-invariant array of values: – Ψ(v)=Ψ( (g)v) for all v V and all g G. – ||Ψ(v)-Ψ(w)|| ||v-w|| for all v, w V.

Representation Theory Invariance Approach: Given a representation of a group G onto a vector space V, map each vector v V to its norm: Ψ(v)=||v|| 1. Since the representation is unitary, || (g)v||=||v|| for all v V and all g G. Thus, Ψ(v)=Ψ( (g)v) and the map Ψ is invariant to the action of G. 2. Since the difference between the size of two vectors is never bigger than the distance between the vectors, we have ||Ψ(v)-Ψ(w)|| ||v-w|| for all v, w V.

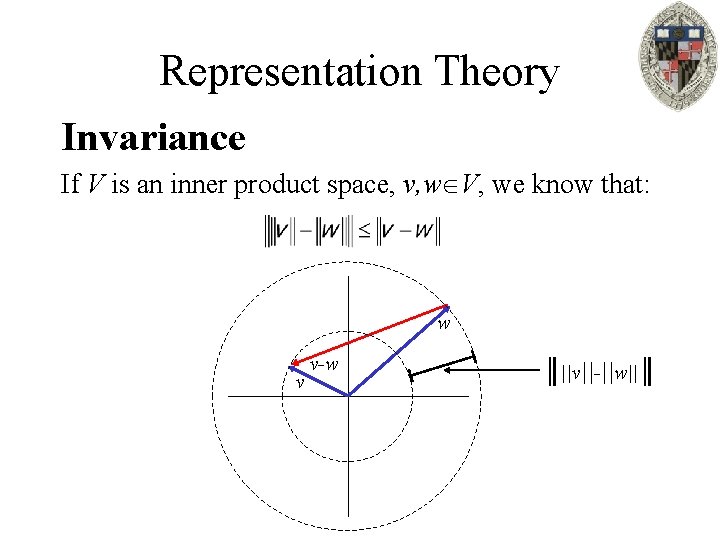

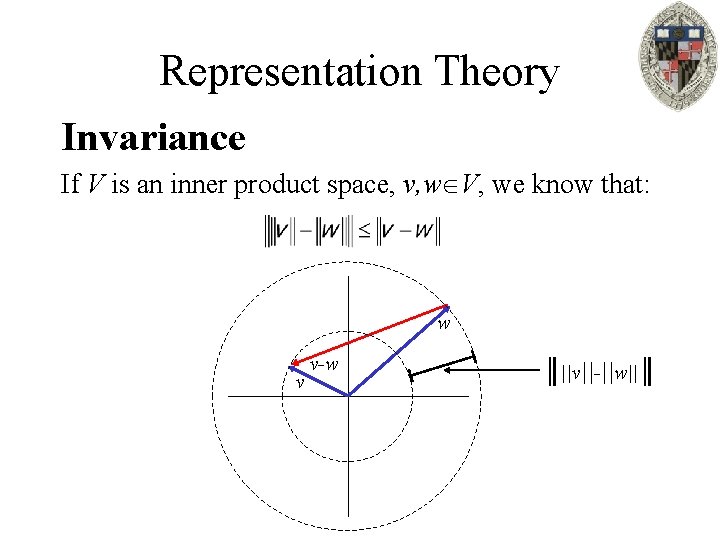

Representation Theory Invariance If V is an inner product space, v, w V, we know that: w v v-w ║||v||-||w||║

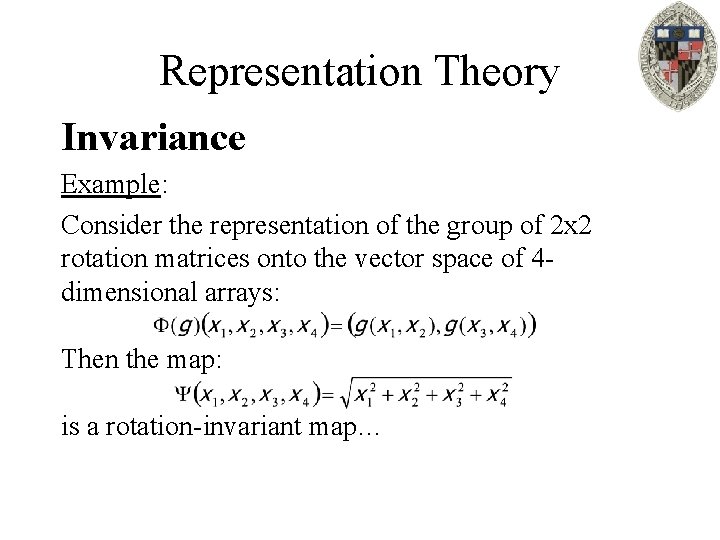

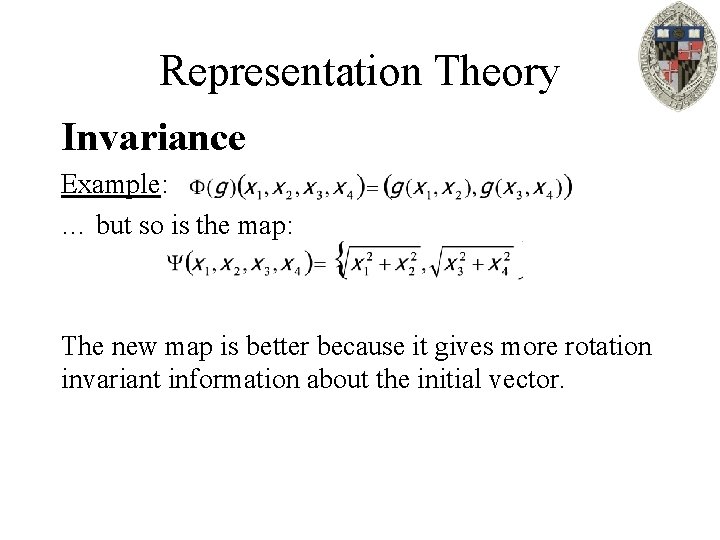

Representation Theory Invariance Example: Consider the representation of the group of 2 x 2 rotation matrices onto the vector space of 4 dimensional arrays: Then the map: is a rotation-invariant map…

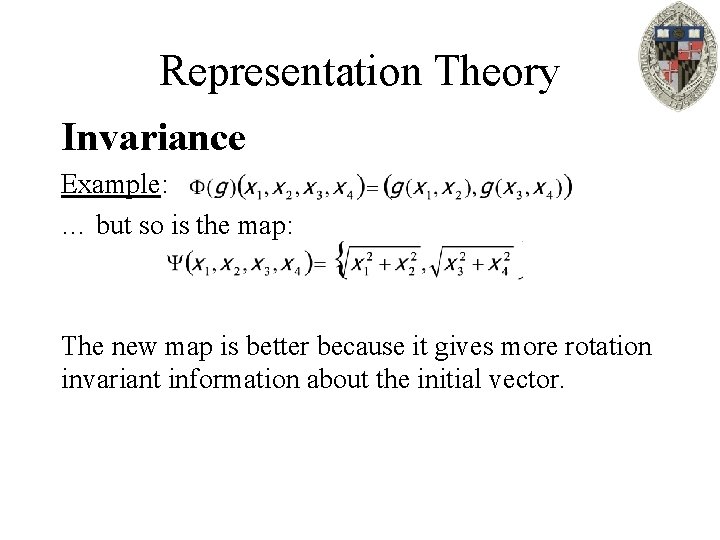

Representation Theory Invariance Example: … but so is the map: The new map is better because it gives more rotation invariant information about the initial vector.

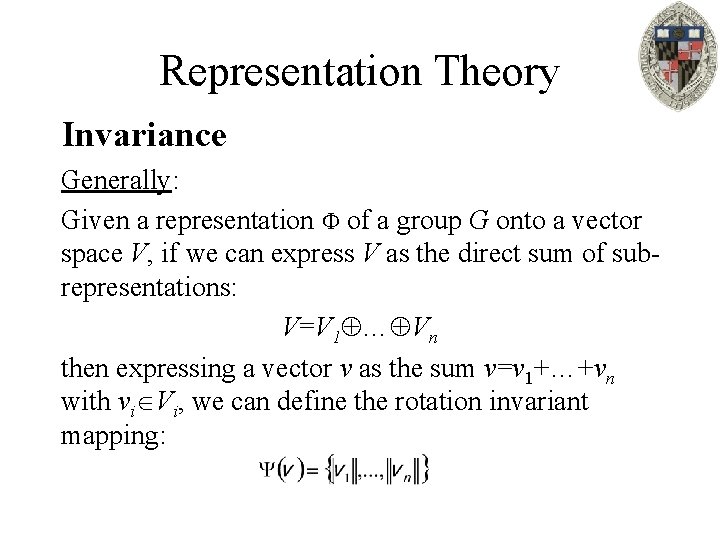

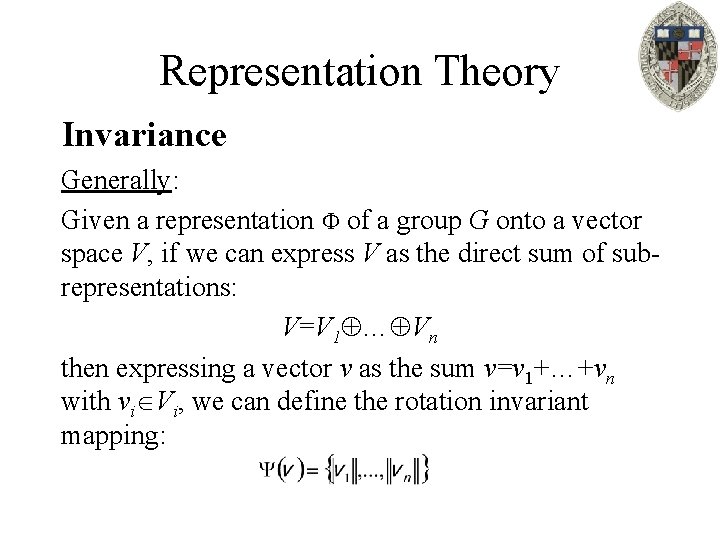

Representation Theory Invariance Generally: Given a representation of a group G onto a vector space V, if we can express V as the direct sum of subrepresentations: V=V 1 … Vn then expressing a vector v as the sum v=v 1+…+vn with vi Vi, we can define the rotation invariant mapping:

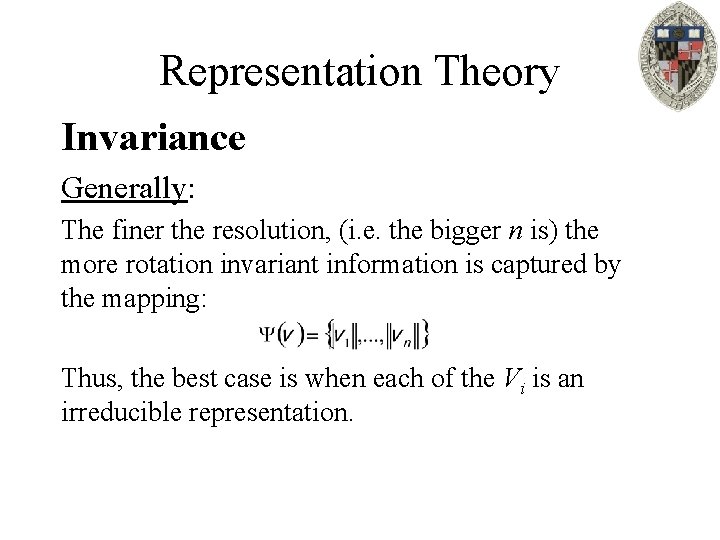

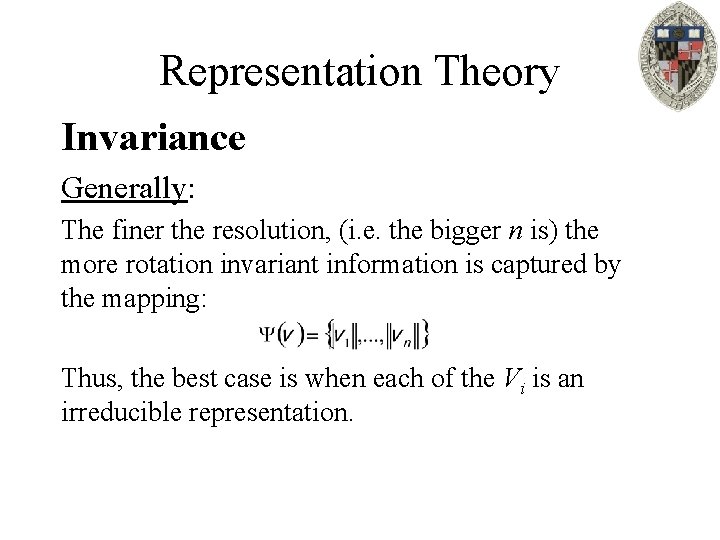

Representation Theory Invariance Generally: The finer the resolution, (i. e. the bigger n is) the more rotation invariant information is captured by the mapping: Thus, the best case is when each of the Vi is an irreducible representation.

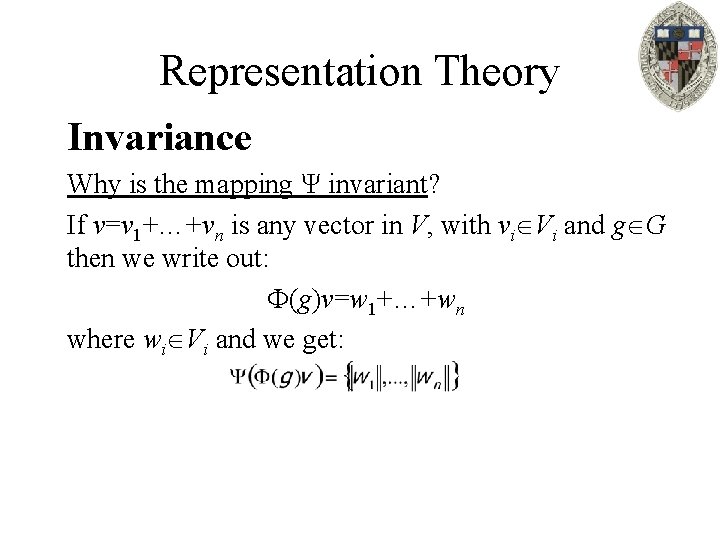

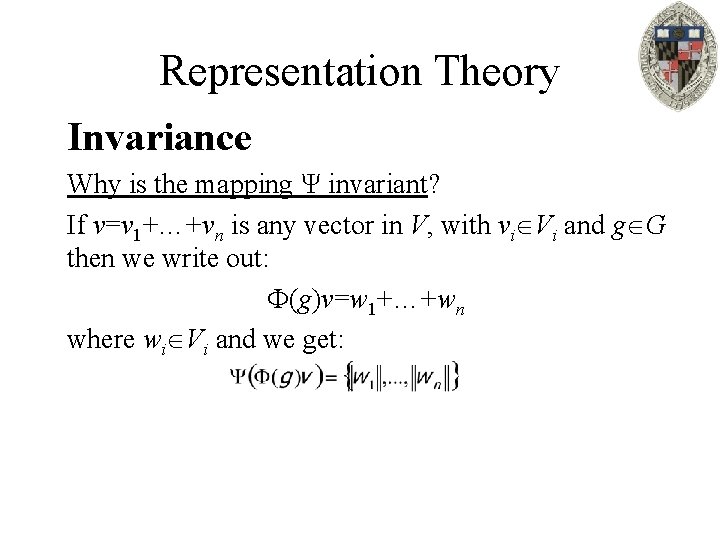

Representation Theory Invariance Why is the mapping Ψ invariant? If v=v 1+…+vn is any vector in V, with vi Vi and g G then we write out: (g)v=w 1+…+wn where wi Vi and we get:

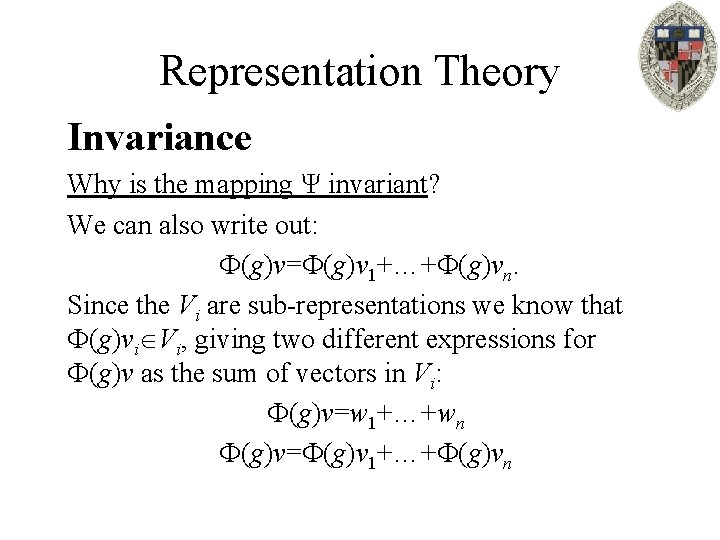

Representation Theory Invariance Why is the mapping Ψ invariant? We can also write out: (g)v= (g)v 1+…+ (g)vn. Since the Vi are sub-representations we know that (g)vi Vi, giving two different expressions for (g)v as the sum of vectors in Vi: (g)v=w 1+…+wn (g)v= (g)v 1+…+ (g)vn

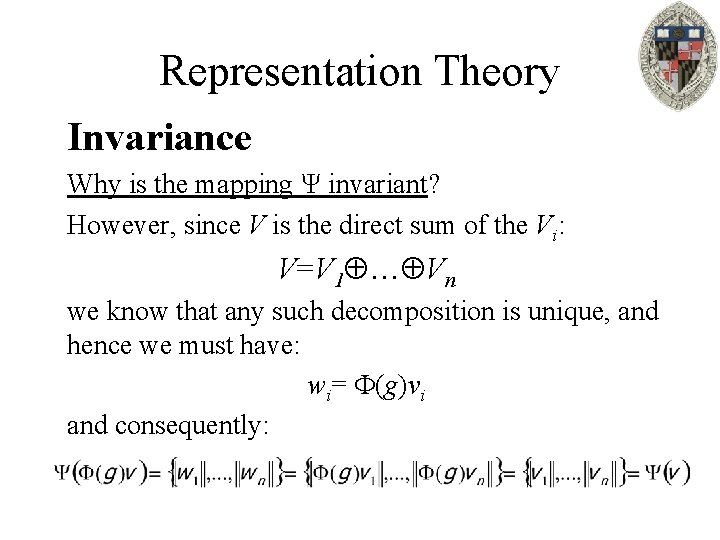

Representation Theory Invariance Why is the mapping Ψ invariant? However, since V is the direct sum of the Vi: V=V 1 … Vn we know that any such decomposition is unique, and hence we must have: wi= (g)vi and consequently:

Outline: • • Review Invariance Schur’s Lemma Fourier Decomposition

Representation Theory Schur’s Lemma Preliminaries: – If A is a linear map A: V→V, then the kernel of A is the subspace W V such that A(w)=0 for all w W.

Representation Theory Schur’s Lemma Preliminaries: – If A is a linear map, the characteristic polynomial of A is the polynomial: – The roots of the characteristic polynomial, the values of λ for which PA(λ)=0, are the eigen-values of A. – If V is a complex vector space and A: V→V is a linear transformation, then A always has at least one eigenvalue. (Because of the algebraic closure of ℂ. )

Representation Theory Schur’s Lemma: If G is a commutative group, and is a representation of G onto a complex inner product space V, then if V is more than one complex dimensional, it is not irreducible. So we can break up V into a direct sum of smaller, one-dimensional representations.

Representation Theory Schur’s Lemma Proof: Suppose that V is an irreducible representation and larger than one complex-dimensional… Let h G be any element of the group. Then for every h G and every v V, we know that: (g) (h)(v)= (h) (g)(v).

Representation Theory Schur’s Lemma Proof: Since (h) is a linear operator we know that it has a complex eigen-value λ. Set A: V→V to be the linear operator: A= (h)- λI. Note that because G is commutative and diagonal matrices commute with any matrix, we have: (g)A=A (g) for all g G.

Representation Theory Schur’s Lemma Proof: A= (h)- λI Set W V to be the kernel of A. Since λ is an eigenvalue of A, we know that W≠.

Representation Theory Schur’s Lemma Proof: Then since we know that: (g)A=A (g), for any w W=Kernel(A), we have: (g)(Aw)=0 A( (g)w)=0. Thus, (g)w W for all g G and therefore we get a sub-representation of G on W.

Representation Theory Schur’s Lemma Proof: Two cases: 1. Either W≠V, in which case we did not start with an irreducible representation. 2. Or, W=V, in which case the kernel of A is all of V, which implies that A=0 and hence (h)=λI. Since this must be true for all h G, this must mean that every h G, acts on V by multiplication by a complex scalar. Then any one-dimensional subspace of V is an irreducible representation.

Outline: • • Review Invariance Schur’s Lemma Fourier Decomposition

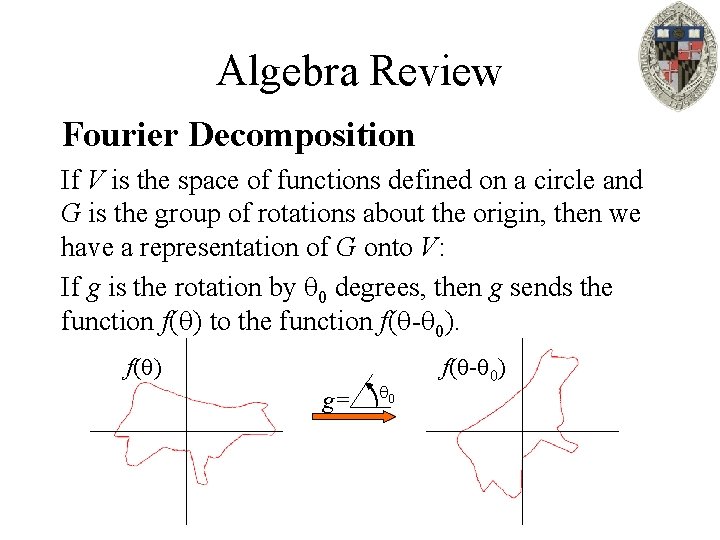

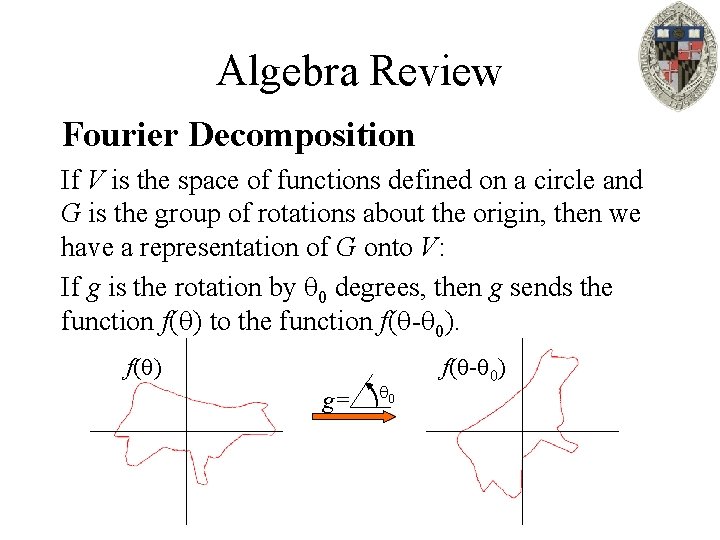

Algebra Review Fourier Decomposition If V is the space of functions defined on a circle and G is the group of rotations about the origin, then we have a representation of G onto V: If g is the rotation by 0 degrees, then g sends the function f( ) to the function f( - 0). f( ) g= 0 f( - 0)

Algebra Review Fourier Decomposition Since the group of 2 D rotations is commutative, by Schur’s lemma we know that there exists onedimensional sub-representations Vi V such that V=V 1 … Vn …

Algebra Review Fourier Decomposition Or in other words, there exist orthogonal, complexvalued, functions {w 1( ), …, wn( ), …} such that for any rotation g G, we have: (g)wi( ) =λi(g)wi( ) with λi(g) ℂ.

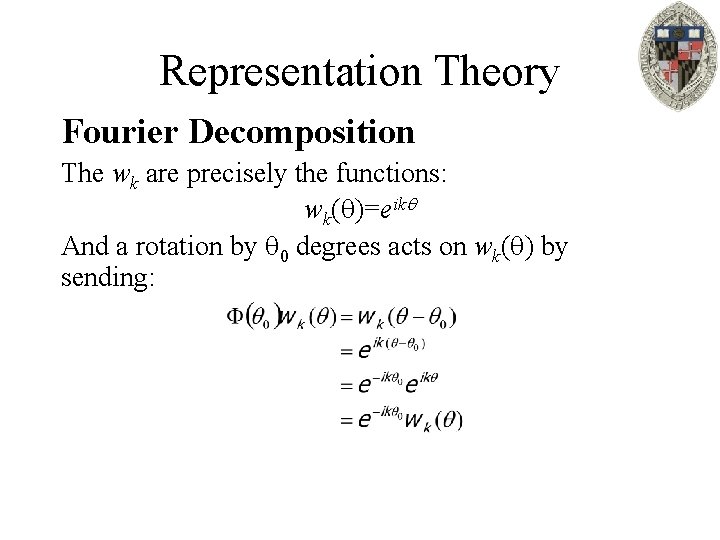

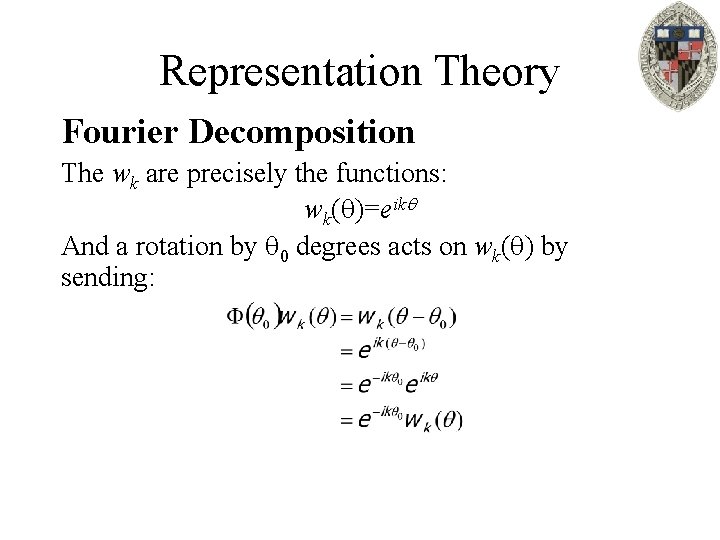

Representation Theory Fourier Decomposition The wk are precisely the functions: wk( )=eik And a rotation by 0 degrees acts on wk( ) by sending:

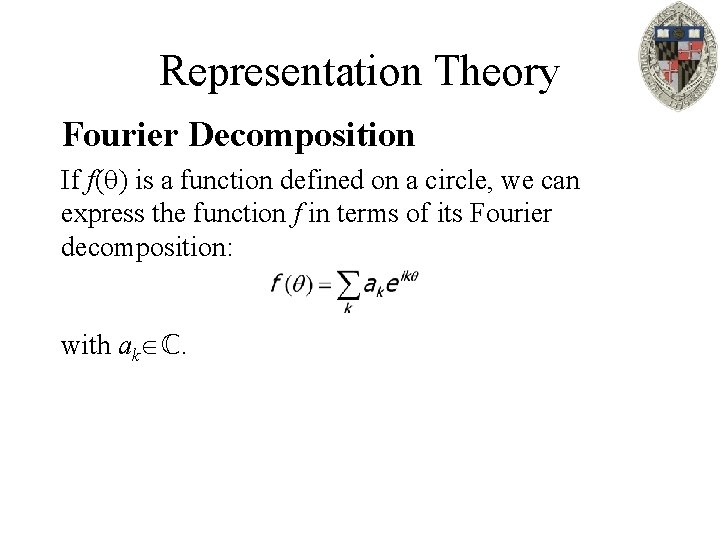

Representation Theory Fourier Decomposition If f( ) is a function defined on a circle, we can express the function f in terms of its Fourier decomposition: with ak ℂ.

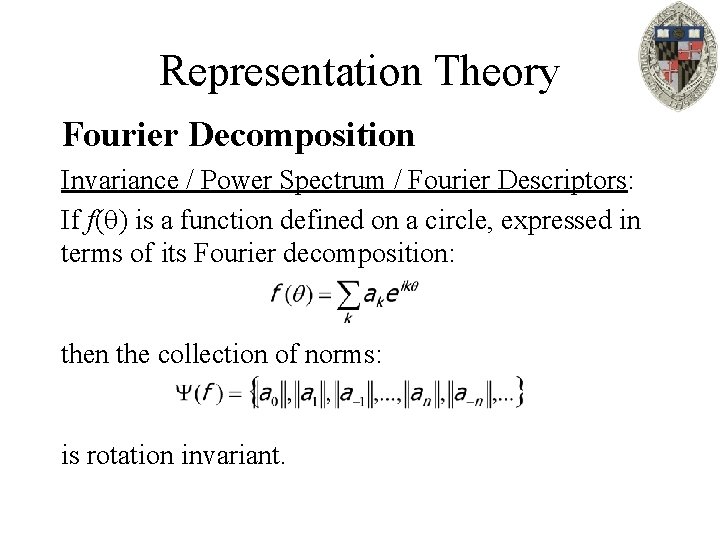

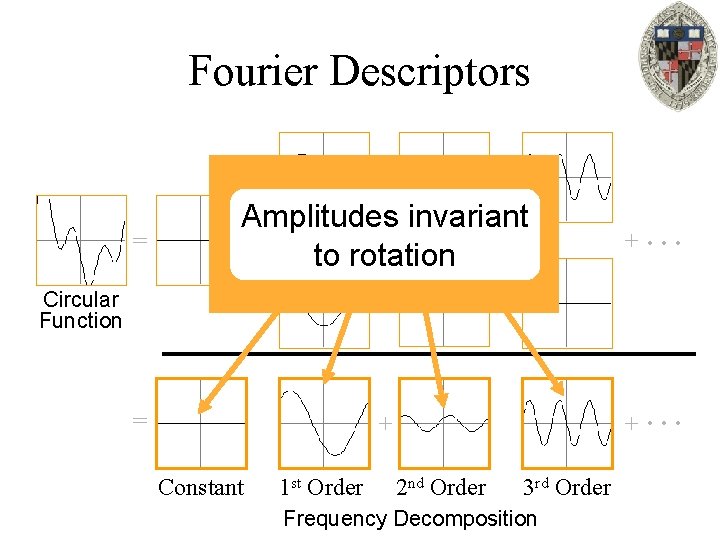

Representation Theory Fourier Decomposition Invariance / Power Spectrum / Fourier Descriptors: If f( ) is a function defined on a circle, expressed in terms of its Fourier decomposition: then the collection of norms: is rotation invariant.

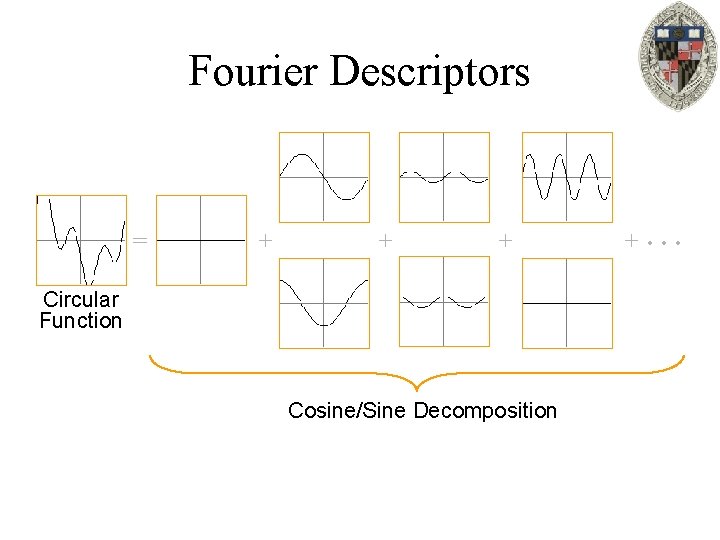

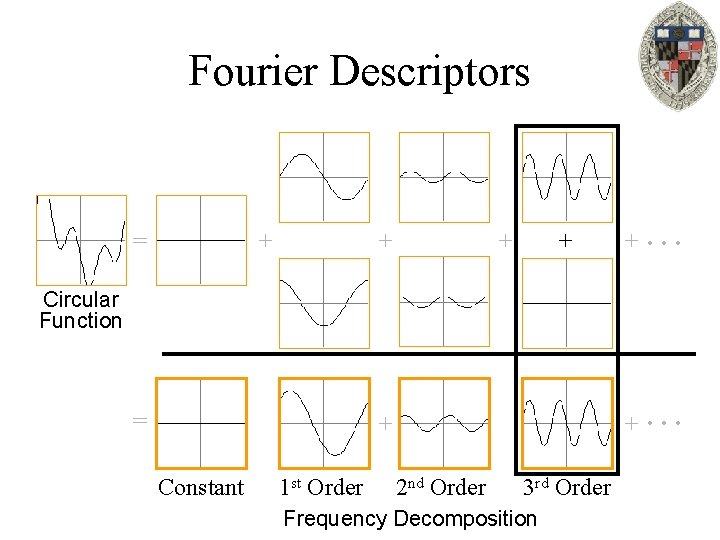

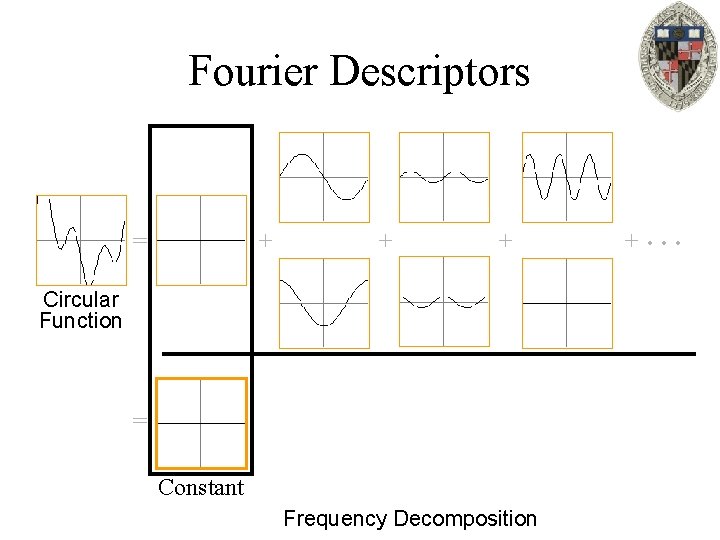

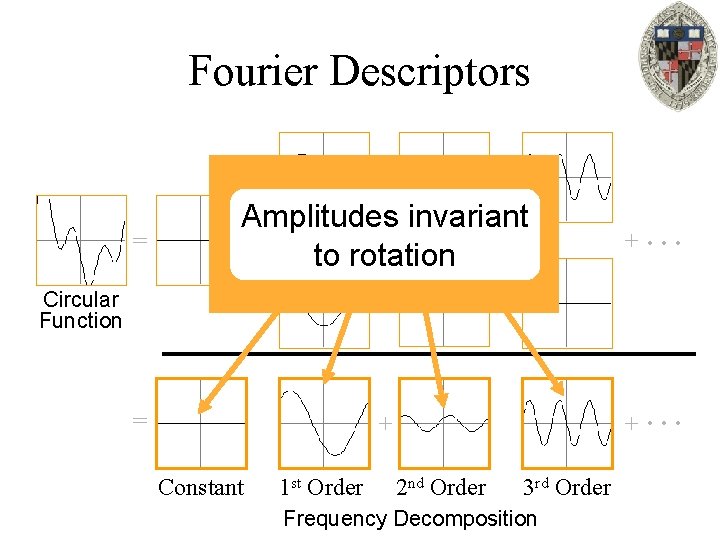

Fourier Descriptors Circular Function

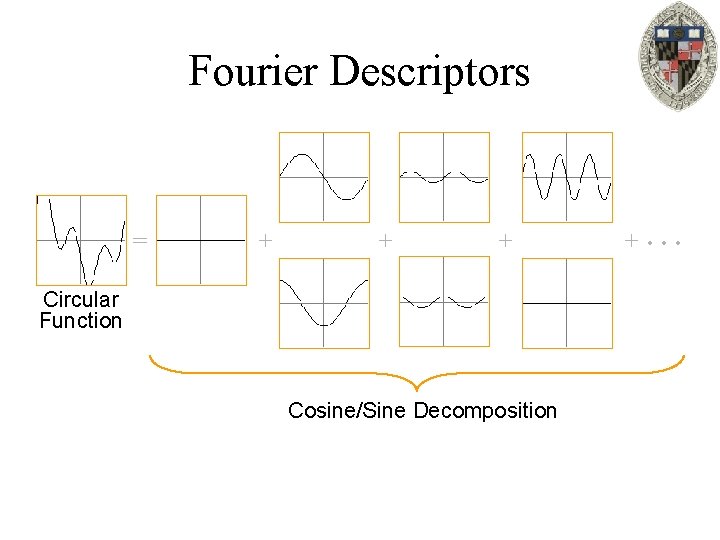

Fourier Descriptors = + + + Circular Function Cosine/Sine Decomposition + …

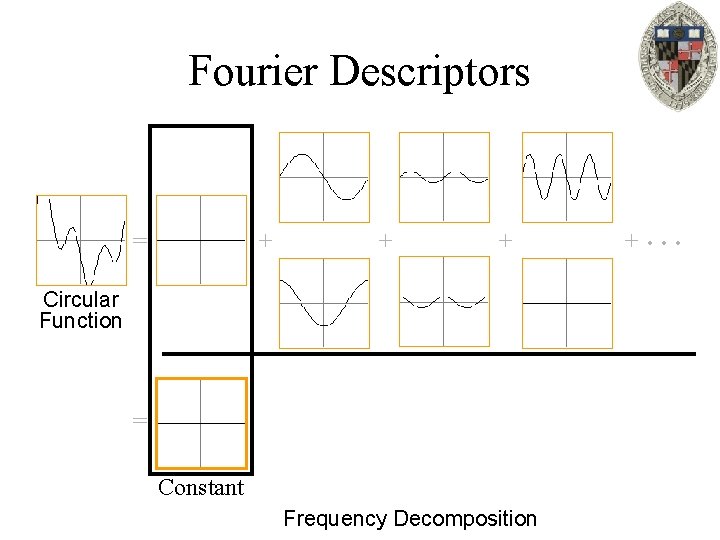

Fourier Descriptors = + + + Circular Function = Constant Frequency Decomposition + …

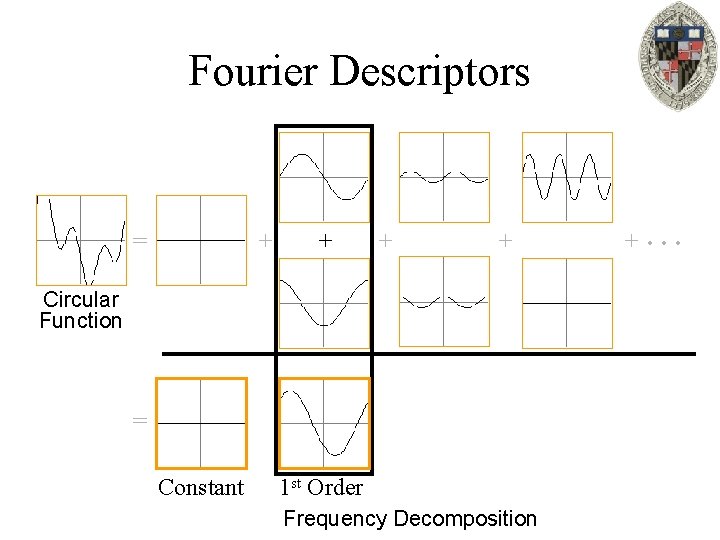

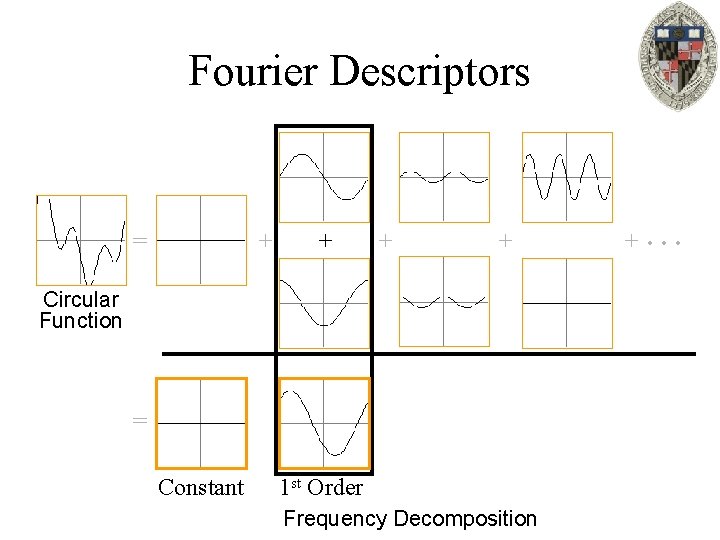

Fourier Descriptors = + + Circular Function = Constant 1 st Order Frequency Decomposition + …

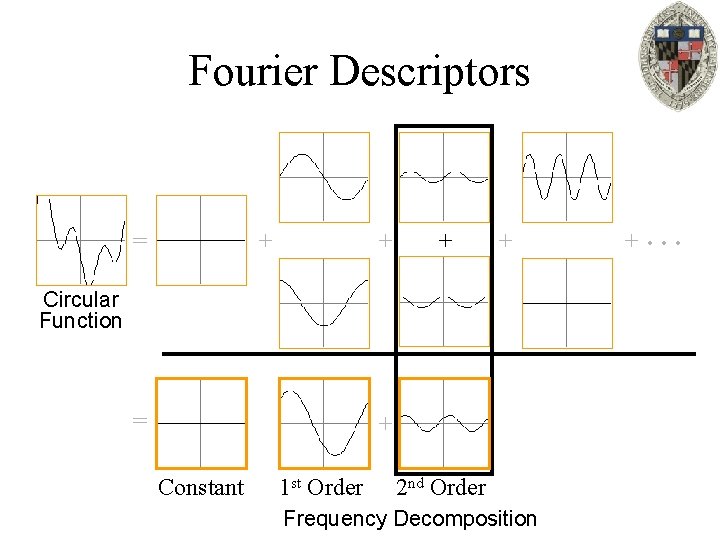

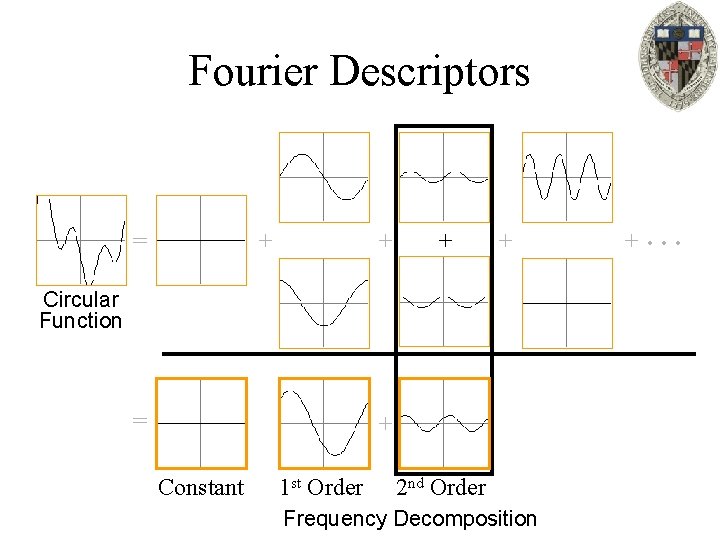

Fourier Descriptors = + + Circular Function = + Constant 1 st Order 2 nd Order Frequency Decomposition + …

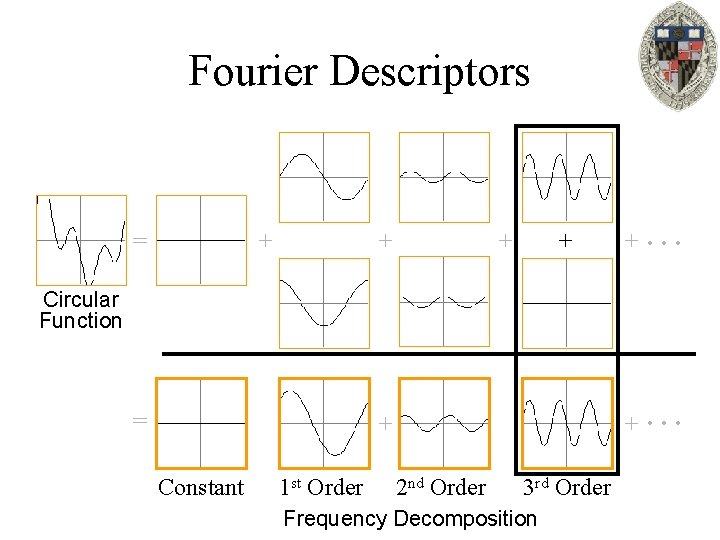

Fourier Descriptors = + + + … Circular Function = + Constant 1 st Order 2 nd Order 3 rd Order Frequency Decomposition

Fourier Descriptors = Amplitudes invariant + + + to rotation + … = + + … Circular Function Constant 1 st Order 2 nd Order 3 rd Order Frequency Decomposition

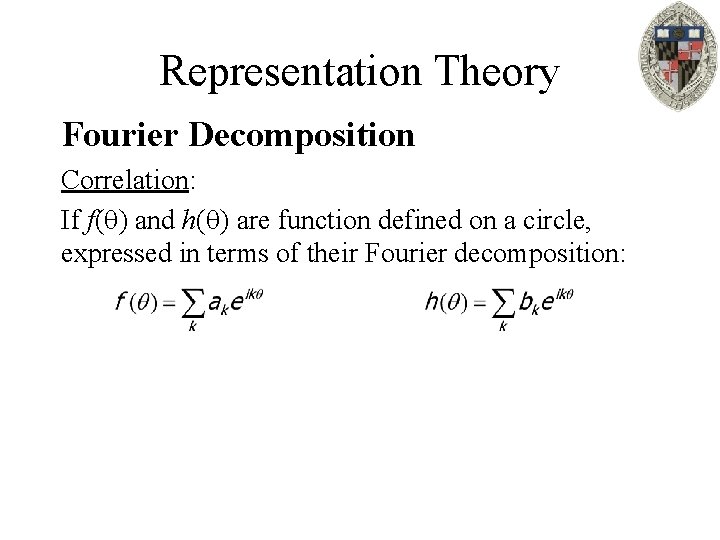

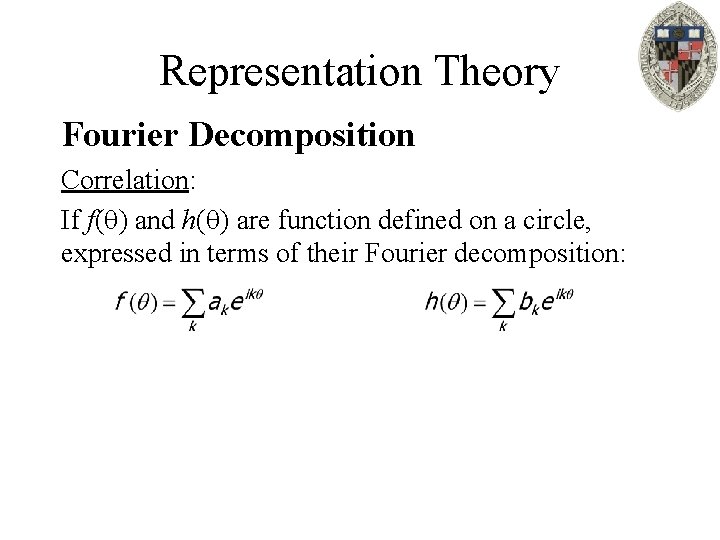

Representation Theory Fourier Decomposition Correlation: If f( ) and h( ) are function defined on a circle, expressed in terms of their Fourier decomposition:

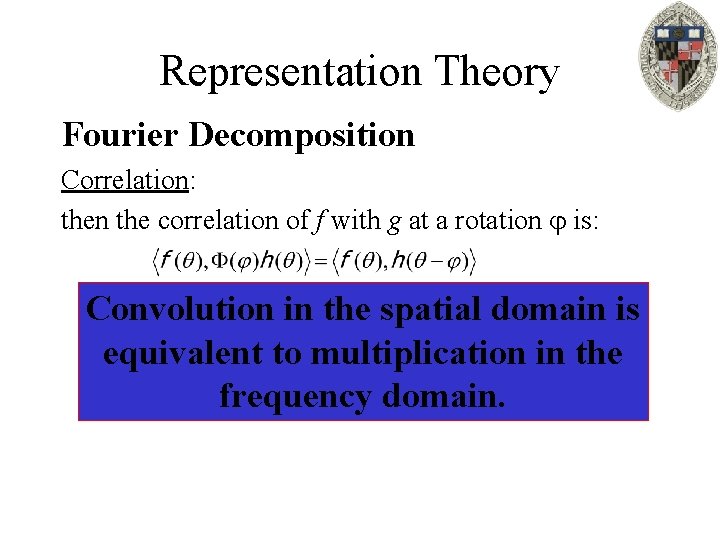

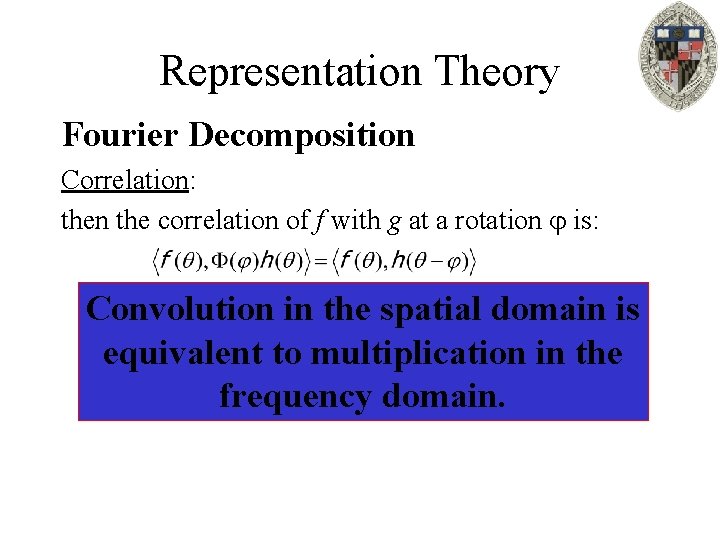

Representation Theory Fourier Decomposition Correlation: then the correlation of f with g at a rotation is: Convolution in the spatial domain is equivalent to multiplication in the frequency domain.

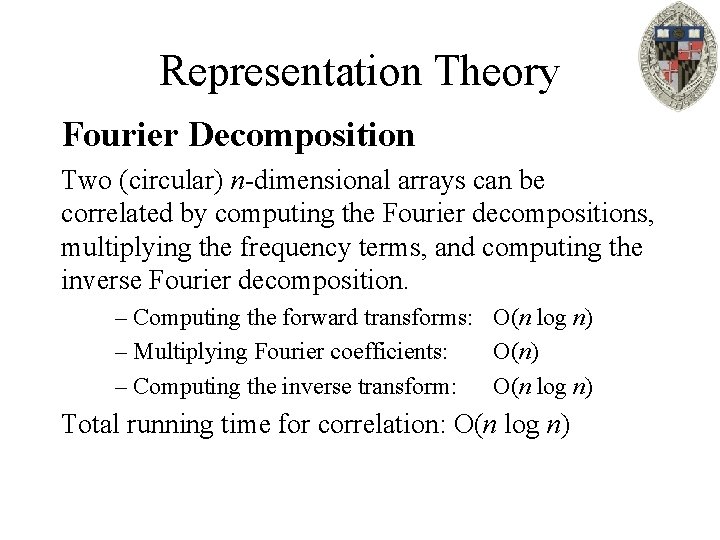

Representation Theory Fourier Decomposition Two (circular) n-dimensional arrays can be correlated by computing the Fourier decompositions, multiplying the frequency terms, and computing the inverse Fourier decomposition. – Computing the forward transforms: O(n log n) – Multiplying Fourier coefficients: O(n) – Computing the inverse transform: O(n log n) Total running time for correlation: O(n log n)

Representation Theory How do we get the Fourier decomposition?

Representation Theory Fourier Decomposition Preliminaries: If f is a function defined in 2 D, we can get a function on the unit circle by looking at the restriction of f to points with norm 1.

Representation Theory Fourier Decomposition Preliminaries: A polynomial p(x, y) is homogenous of degree d if it is the sum of monomials of degree d: p(x, y)=ad xd+ad-1 xd-1 y+…+a 1 xyd-1+a 0 yd monomials of degree d

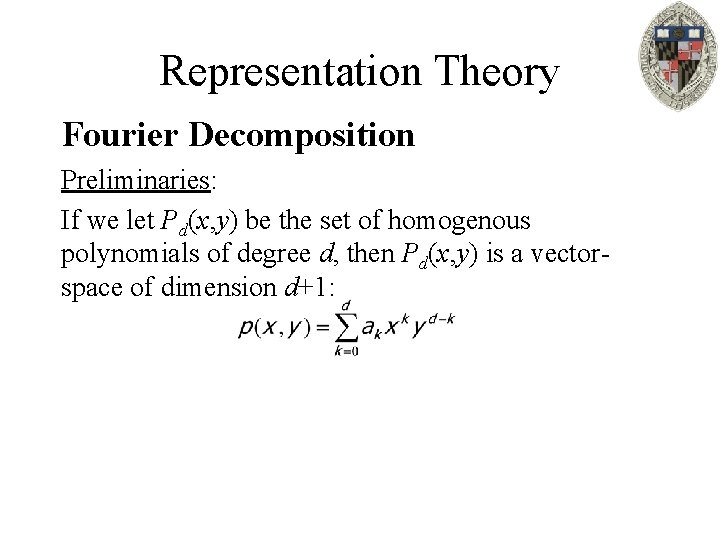

Representation Theory Fourier Decomposition Preliminaries: If we let Pd(x, y) be the set of homogenous polynomials of degree d, then Pd(x, y) is a vectorspace of dimension d+1:

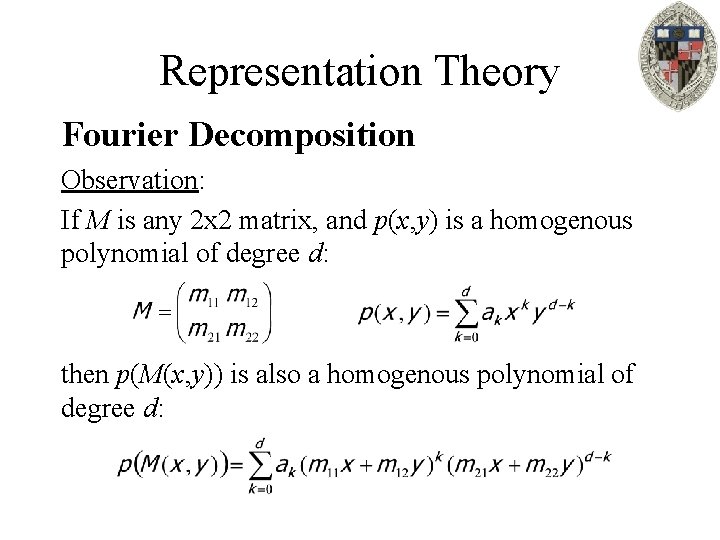

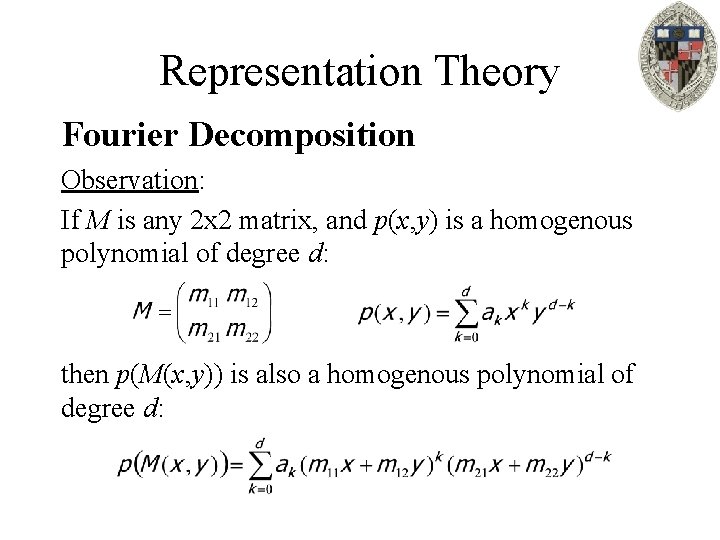

Representation Theory Fourier Decomposition Observation: If M is any 2 x 2 matrix, and p(x, y) is a homogenous polynomial of degree d: then p(M(x, y)) is also a homogenous polynomial of degree d:

Representation Theory Fourier Decomposition If V is the space of functions on a circle, we can set Vd V to be the space of functions on the circle that are restrictions of homogenous polynomials of degree d. Since a rotation will map a homogenous polynomial of degree d back to a homogenous polynomial of degree d, the spaces Vd are sub-representations.

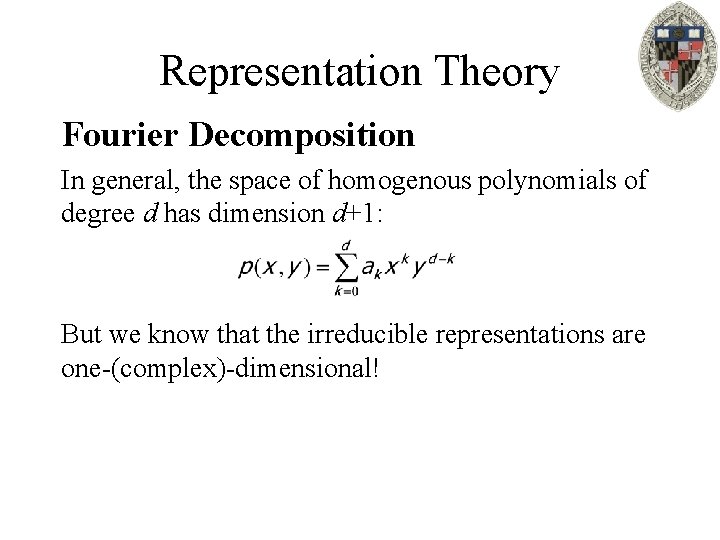

Representation Theory Fourier Decomposition In general, the space of homogenous polynomials of degree d has dimension d+1: But we know that the irreducible representations are one-(complex)-dimensional!

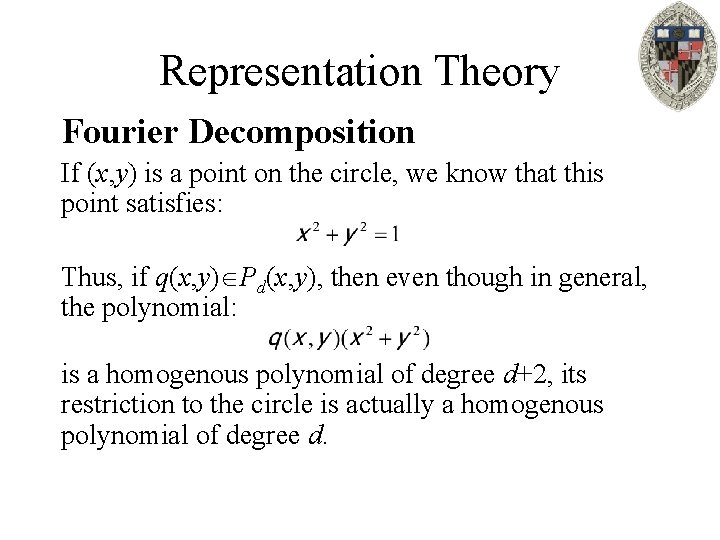

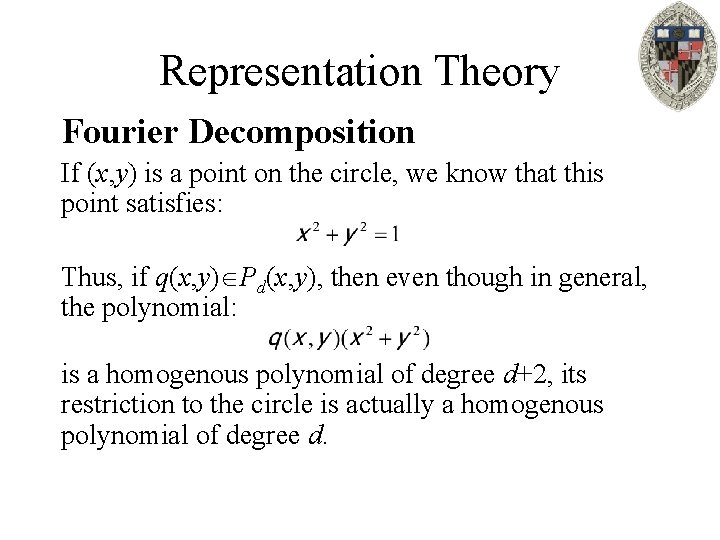

Representation Theory Fourier Decomposition If (x, y) is a point on the circle, we know that this point satisfies: Thus, if q(x, y) Pd(x, y), then even though in general, the polynomial: is a homogenous polynomial of degree d+2, its restriction to the circle is actually a homogenous polynomial of degree d.

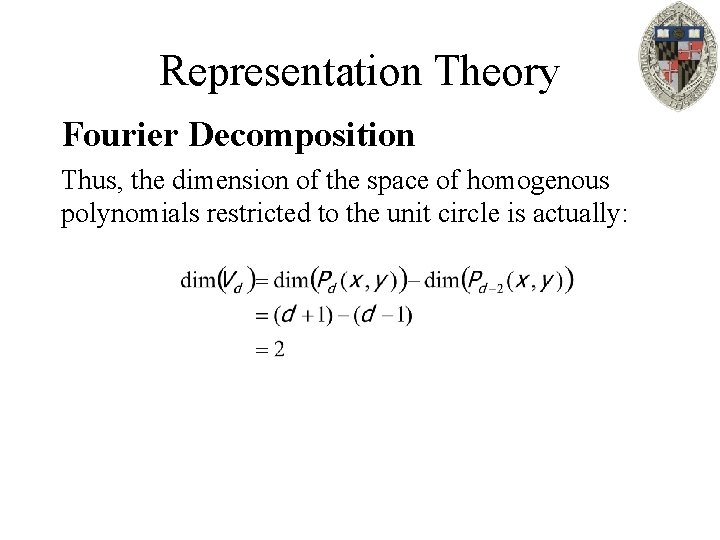

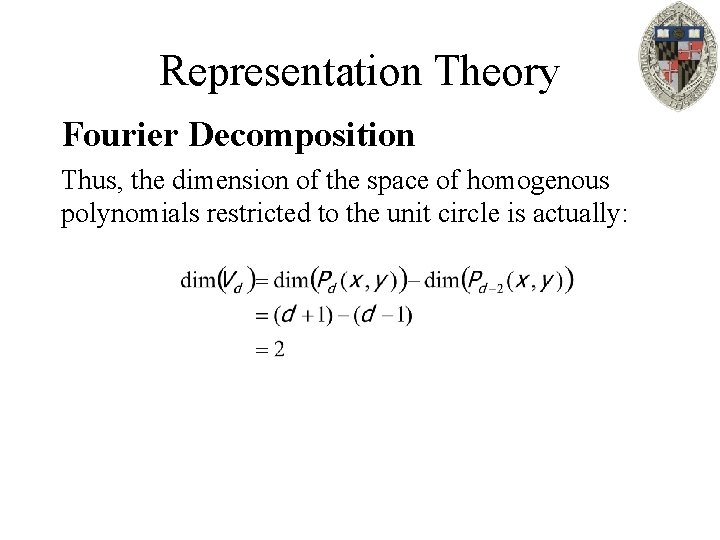

Representation Theory Fourier Decomposition Thus, the dimension of the space of homogenous polynomials restricted to the unit circle is actually:

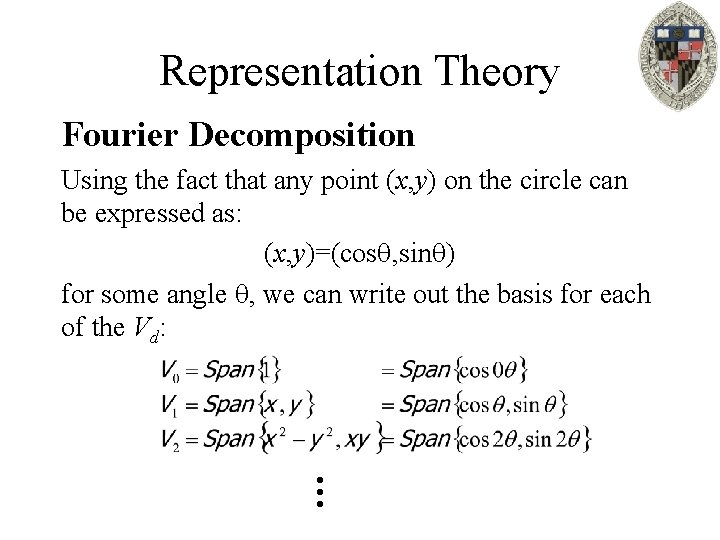

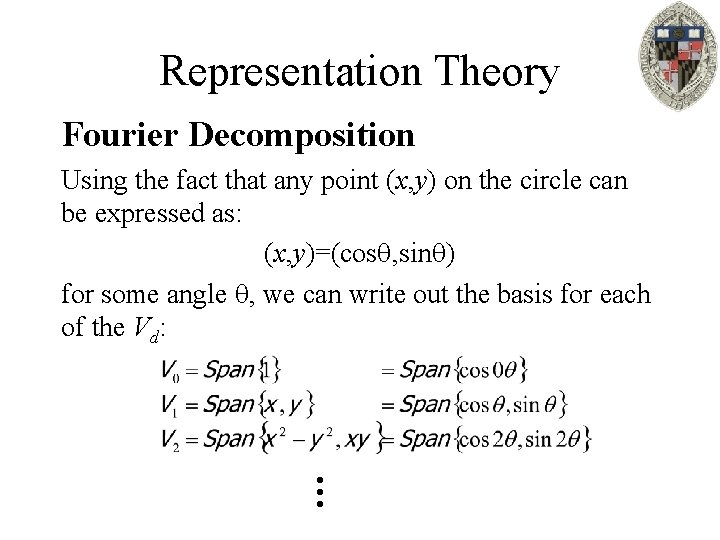

Representation Theory Fourier Decomposition Using the fact that any point (x, y) on the circle can be expressed as: (x, y)=(cos , sin ) for some angle , we can write out the basis for each of the Vd: