Pervasive Massively Multithreaded GPU Processors

Michael C. Shebanow, Sr. Arch Mgr, GPUs

The “Real” Title

This talk is about SIMT processors: the past, the present, and a glimpse of the future. Talk given at the ACM International Conference on Computing Frontiers 2009.

The Past

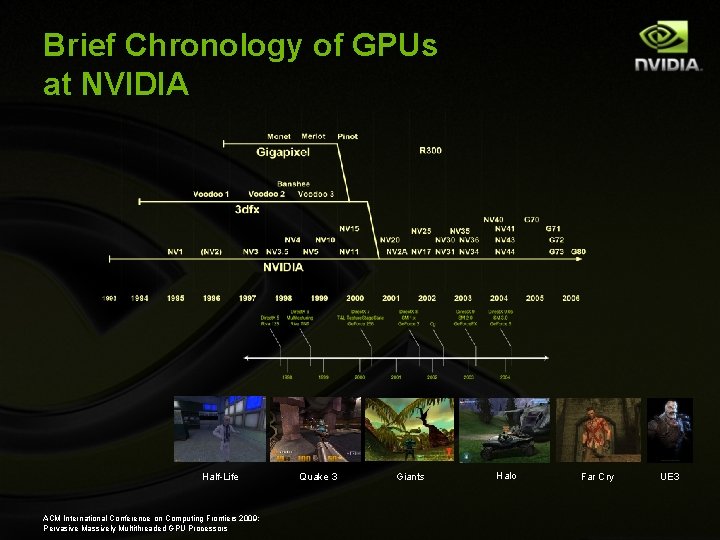

Brief Chronology of GPUs at NVIDIA

(Figure: timeline annotated with Half-Life, Quake 3, Giants, Halo, Far Cry, and UE3.)

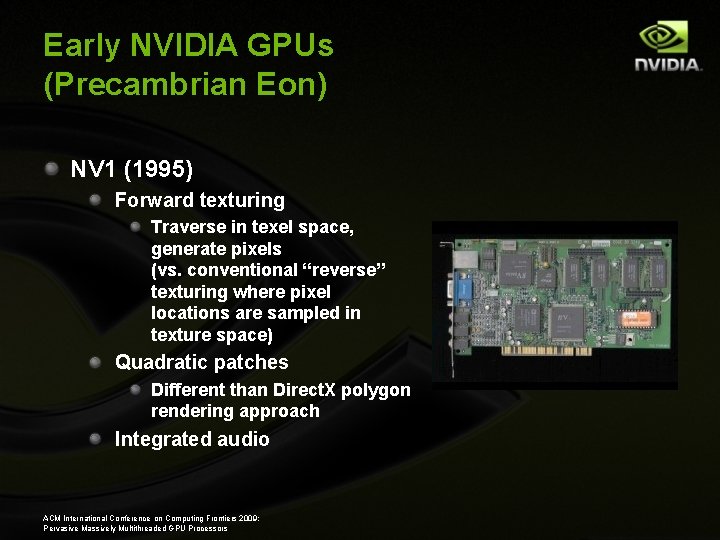

Early NVIDIA GPUs (Precambrian Eon)

NV1 (1995). Forward texturing: traverse in texel space and generate pixels (vs. conventional “reverse” texturing, where pixel locations are sampled in texture space). Quadratic patches, a different approach from DirectX polygon rendering. Integrated audio.

Precambrian (cont’d)

NV3, Riva 128 (Aug 1997). First 128-bit memory bus (“wider is better”). DirectX 3 support. 1 pix/clk @ 100 MHz. Unified memory for frame buffer and texture. 16-bit Z / 16-bit color. Integrated VGA from Weitek.

Shades of Programmability (Phanerozoic Eon)

NV4, Riva TNT (Summer 1998). 2 pix/clk @ 90 MHz. DirectX 5. Dual texturing @ 1 pix/clk. Register combiners.

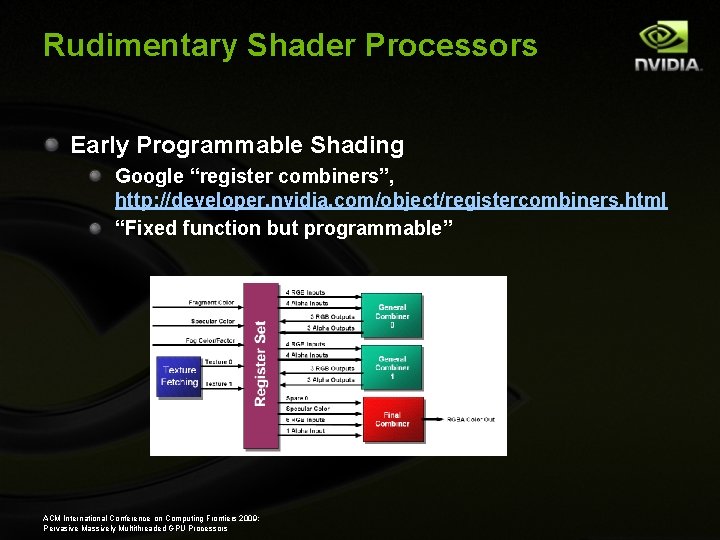

Rudimentary Shader Processors

Early programmable shading. Google “register combiners” (http://developer.nvidia.com/object/registercombiners.html). “Fixed function but programmable.”

General Combiner Flow

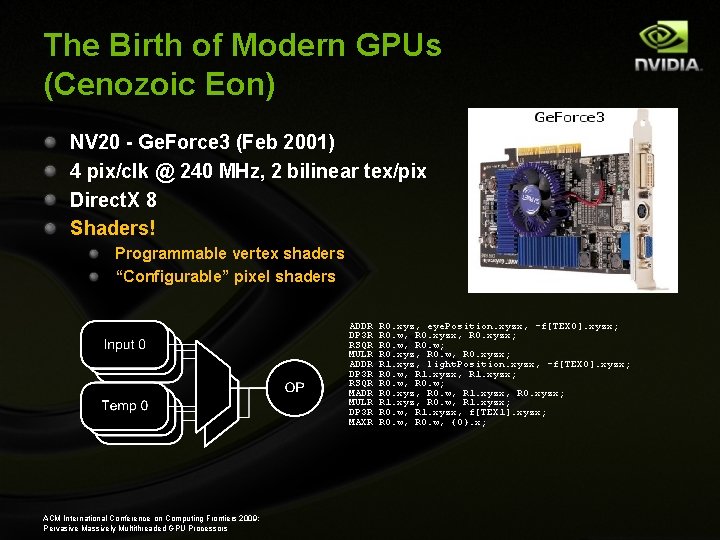

The Birth of Modern GPUs (Cenozoic Eon)

NV20, GeForce 3 (Feb 2001). 4 pix/clk @ 240 MHz, 2 bilinear tex/pix. DirectX 8. Shaders! Programmable vertex shaders; “configurable” pixel shaders.

    ADDR R0.xyz, eyePosition.xyzx, -f[TEX0].xyzx;
    DP3R R0.w, R0.xyzx, R0.xyzx;
    RSQR R0.w, R0.w;
    MULR R0.xyz, R0.w, R0.xyzx;
    ADDR R1.xyz, lightPosition.xyzx, -f[TEX0].xyzx;
    DP3R R0.w, R1.xyzx, R1.xyzx;
    RSQR R0.w, R0.w;
    MADR R0.xyz, R0.w, R1.xyzx, R0.xyzx;
    MULR R1.xyz, R0.w, R1.xyzx;
    DP3R R0.w, R1.xyzx, f[TEX1].xyzx;
    MAXR R0.w, R0.w, {0}.x;

Shaders: Before and After

(Figure: screenshots from Halo, © Bungie, and Elder Scrolls 3: Morrowind, © Bethesda.)

Fully Programmable Shader Engines (Cretaceous Period)

NV30, NV31, GeForce FX (Jan 2003). 4 pix/clk @ 500 MHz (Ultra); 8 pix/clk for Z-only. 128-pin DDR DRAM interface. Superset of DirectX 9. FP32 programmable pixel shader. Mainstream derivative: NV31. Not a stellar market success.

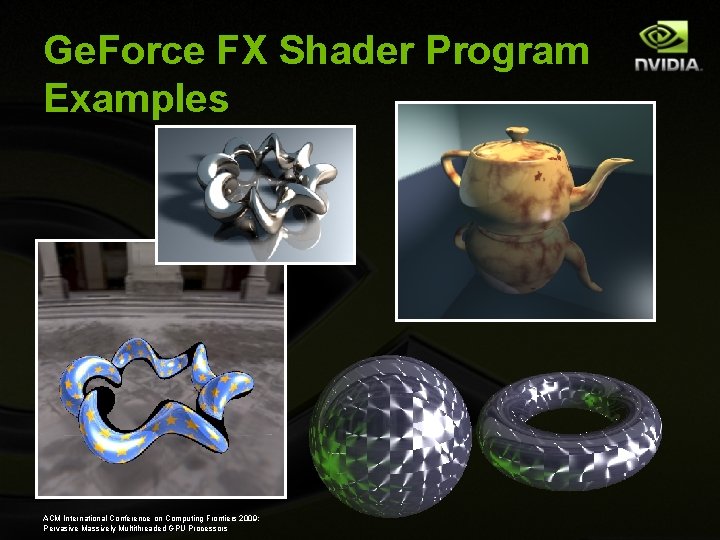

GeForce FX Shader Program Examples

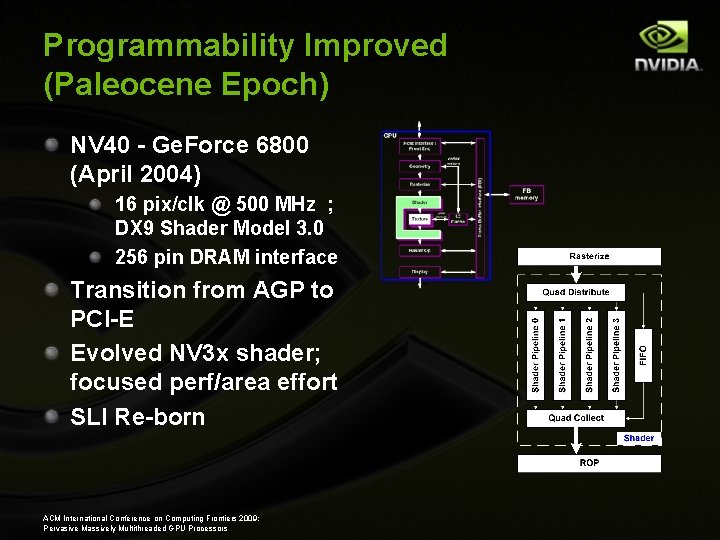

Programmability Improved (Paleocene Epoch)

NV40, GeForce 6800 (April 2004). 16 pix/clk @ 500 MHz; DX9 Shader Model 3.0. 256-pin DRAM interface. Transition from AGP to PCI-E. Evolved NV3x shader, with a focused perf/area effort. SLI reborn.

The Present

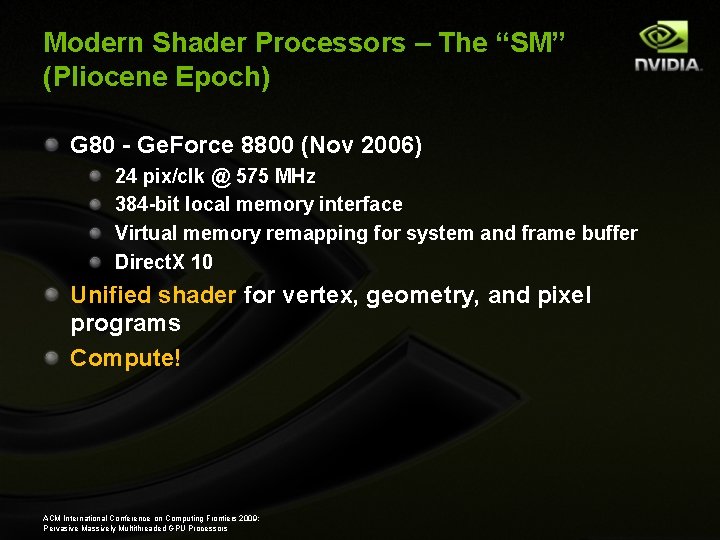

Modern Shader Processors – The “SM” (Pliocene Epoch)

G80, GeForce 8800 (Nov 2006). 24 pix/clk @ 575 MHz. 384-bit local memory interface. Virtual memory remapping for system memory and frame buffer. DirectX 10. Unified shader for vertex, geometry, and pixel programs. Compute!

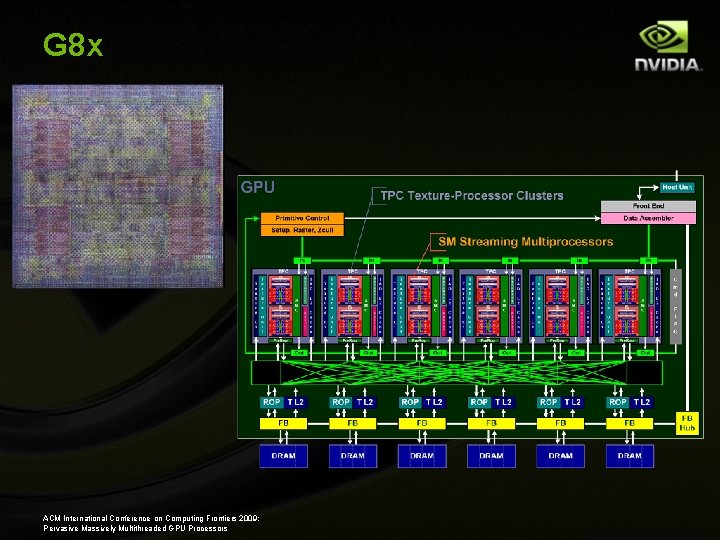

G8x

NVIDIA Tesla

Scalable high-density computing. Massively multithreaded parallel computing.

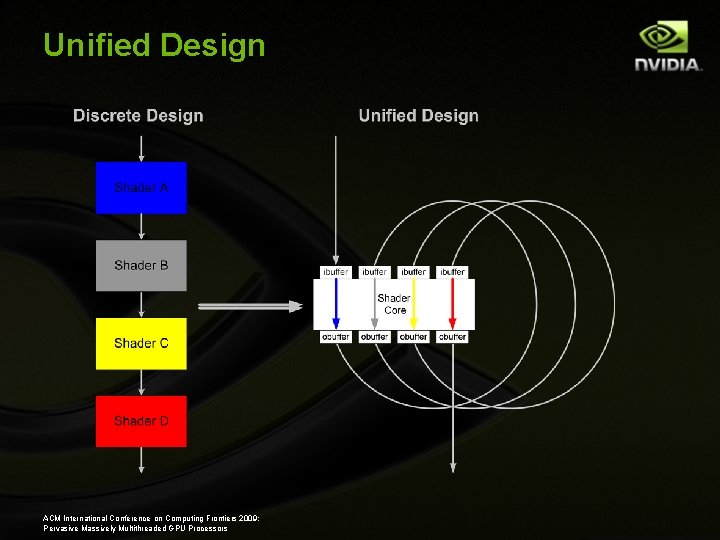

Unified Design

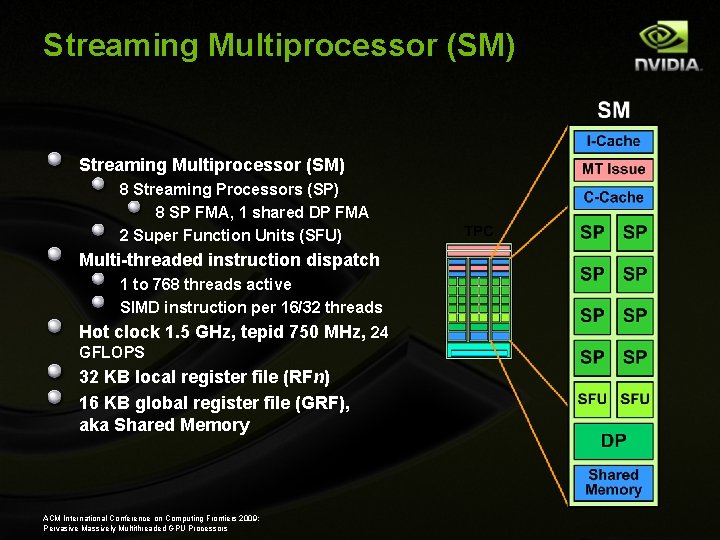

Streaming Multiprocessor (SM)

8 Streaming Processors (SP): 8 SP FMA units plus 1 shared DP FMA unit. 2 Super Function Units (SFU). Multithreaded instruction dispatch: 1 to 768 threads active, with one SIMD instruction issued per 16/32 threads. Hot clock 1.5 GHz, tepid clock 750 MHz; 24 GFLOPS. 32 KB local register file (RFn). 16 KB global register file (GRF), aka Shared Memory.

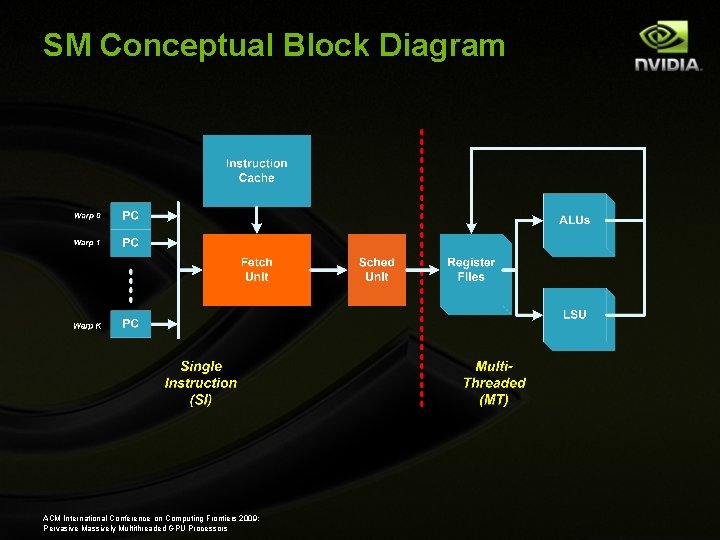

SM Conceptual Block Diagram

The Future

The CMOS “Canvas”

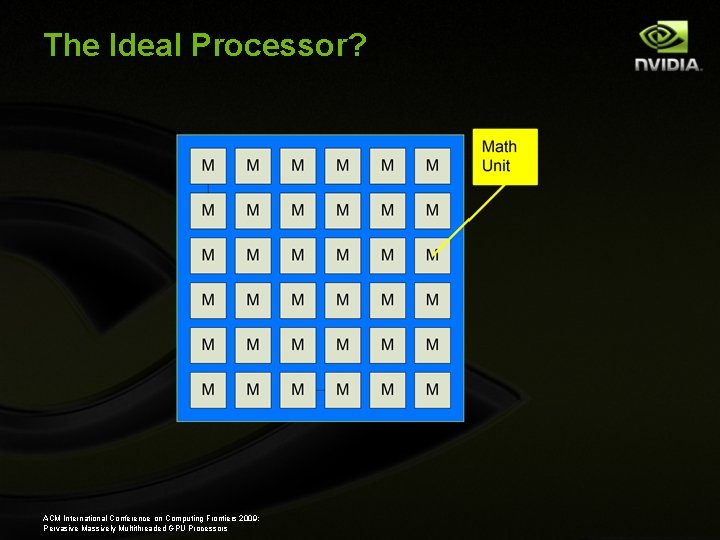

The Ideal Processor?

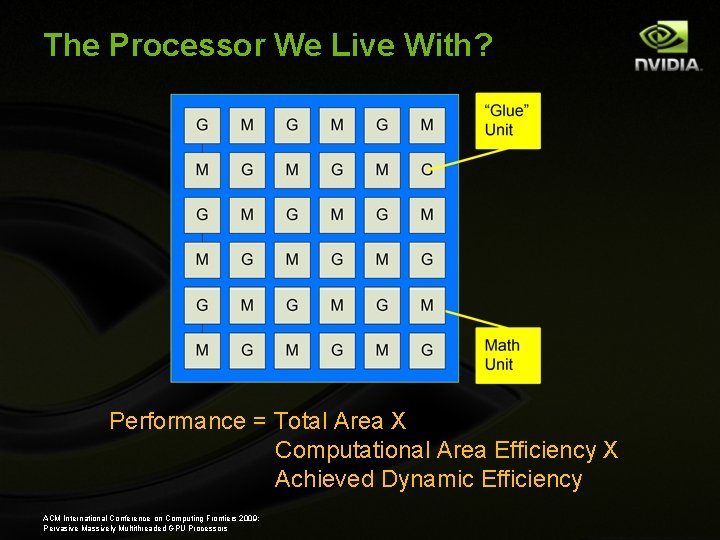

The Processor We Live With?

Performance = Total Area × Computational Area Efficiency × Achieved Dynamic Efficiency

What is SIMT?

(Figure panels: SIMD, SIMT, MIMD.)

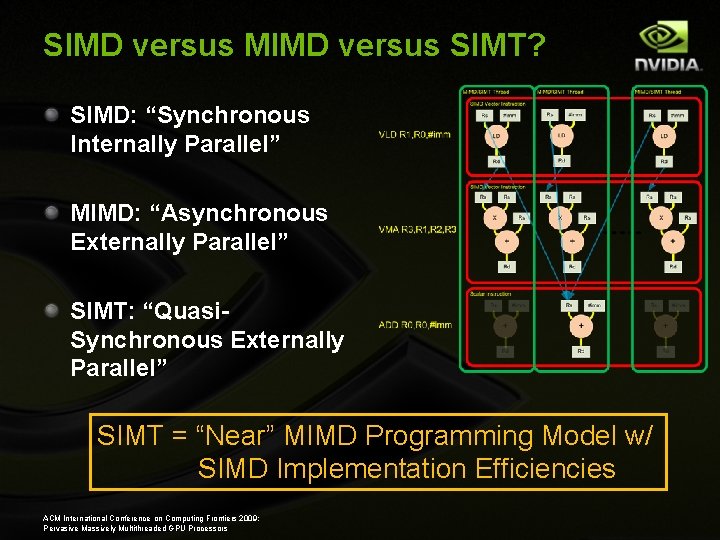

SIMD versus MIMD versus SIMT?

SIMD: “Synchronous Internally Parallel.” MIMD: “Asynchronous Externally Parallel.” SIMT: “Quasi-Synchronous Externally Parallel.” In short, SIMT = a “near” MIMD programming model with SIMD implementation efficiencies.

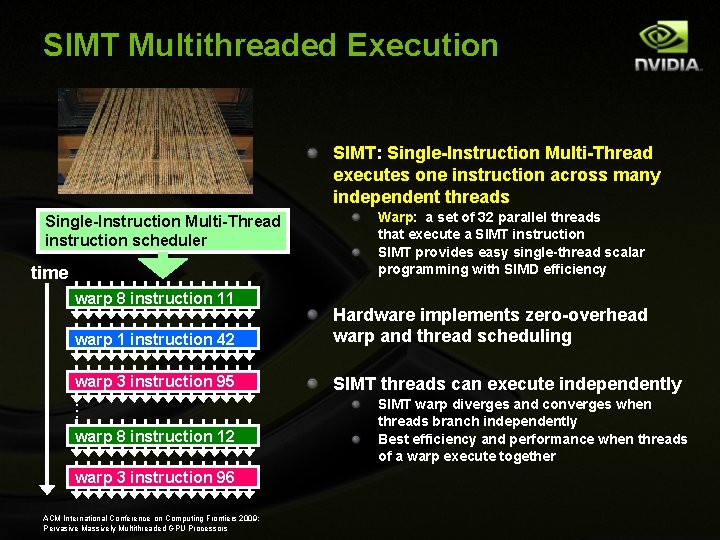

SIMT Multithreaded Execution

SIMT (Single-Instruction Multi-Thread) executes one instruction across many independent threads. (Figure: the SIMT instruction scheduler issuing over time from different warps: warp 8 instruction 11, warp 1 instruction 42, warp 3 instruction 95, ..., warp 8 instruction 12, warp 3 instruction 96.) A warp is a set of 32 parallel threads that execute a SIMT instruction. SIMT provides easy single-thread scalar programming with SIMD efficiency. The hardware implements zero-overhead warp and thread scheduling. SIMT threads can execute independently: a SIMT warp diverges and converges when its threads branch independently. Efficiency and performance are best when the threads of a warp execute together.

A Few Open SIMT Problems

Control divergence, data representation, coherence, and diversity.

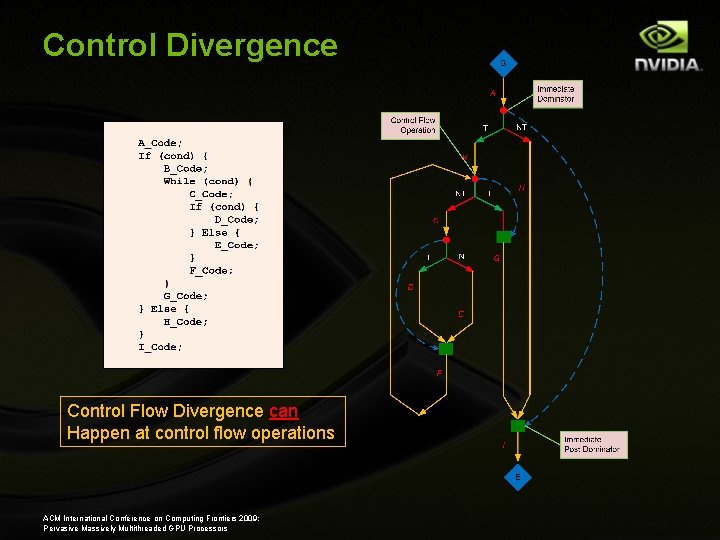

Control Divergence

Control flow divergence can happen at control flow operations, when threads of the same warp take different paths.

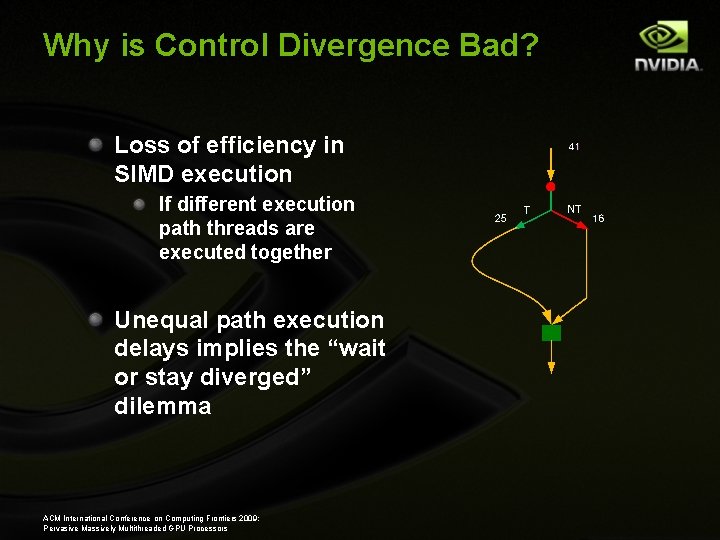

Why is Control Divergence Bad?

It causes a loss of efficiency in SIMD execution if threads on different execution paths are executed together. Unequal path execution delays imply the “wait or stay diverged” dilemma: either the threads that finish first wait so the warp can reconverge, or the warp stays diverged.

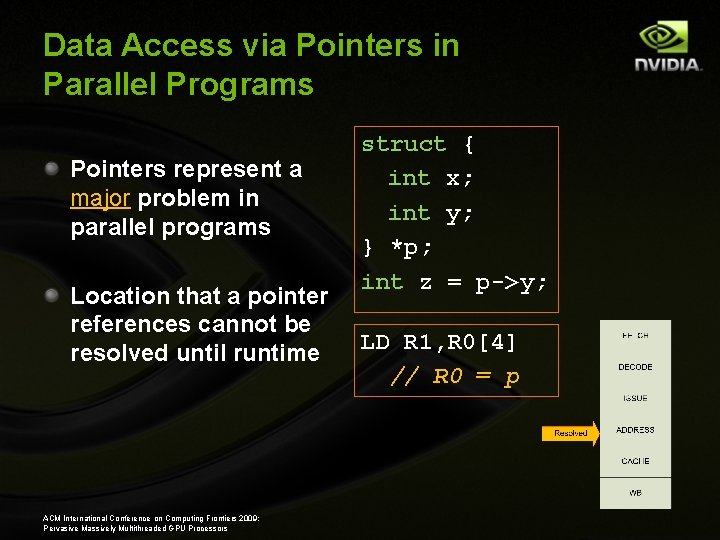

Data Access via Pointers in Parallel Programs

Pointers represent a major problem in parallel programs: the location that a pointer references cannot be resolved until runtime.

    struct { int x; int y; } *p;
    int z = p->y;

    LD R1, R0[4]    // R0 = p

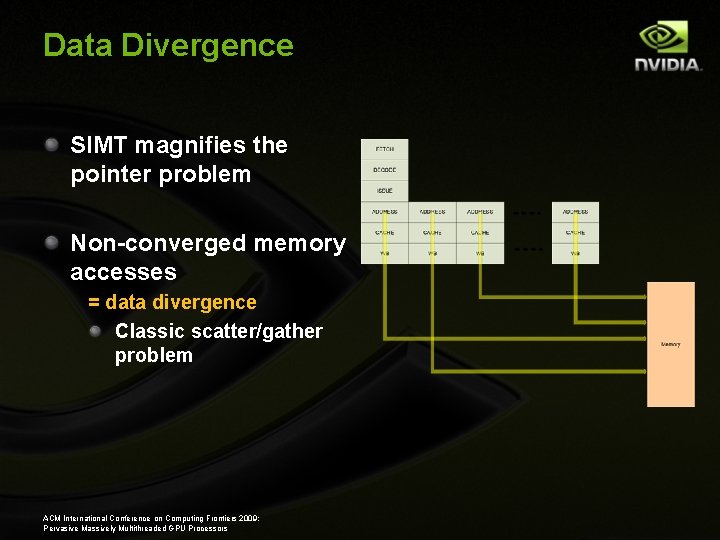

Data Divergence

SIMT magnifies the pointer problem. Non-converged memory accesses are data divergence: the classic scatter/gather problem.

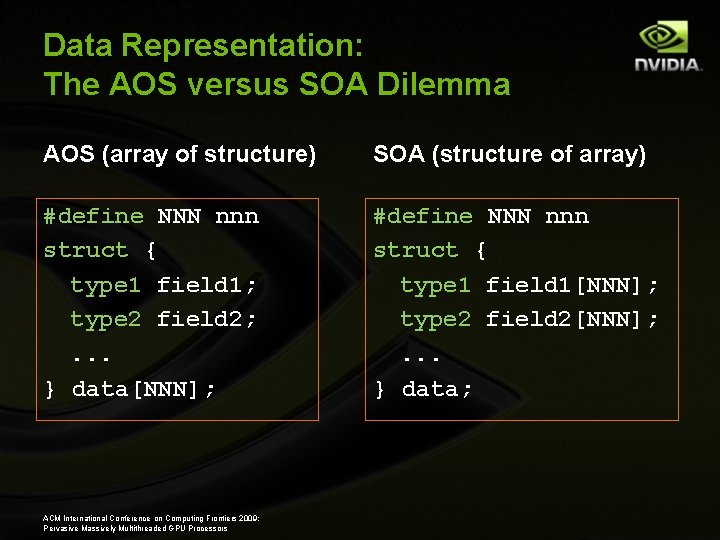

Data Representation: The AOS versus SOA Dilemma

AOS (array of structures):

    #define NNN nnn
    struct {
        type1 field1;
        type2 field2;
        ...
    } data[NNN];

SOA (structure of arrays):

    #define NNN nnn
    struct {
        type1 field1[NNN];
        type2 field2[NNN];
        ...
    } data;

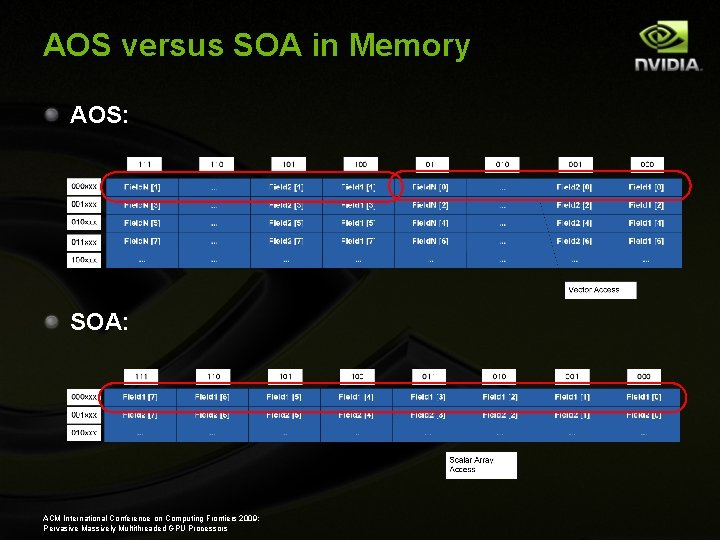

AOS versus SOA in Memory

(Figure panels: AOS, SOA.)

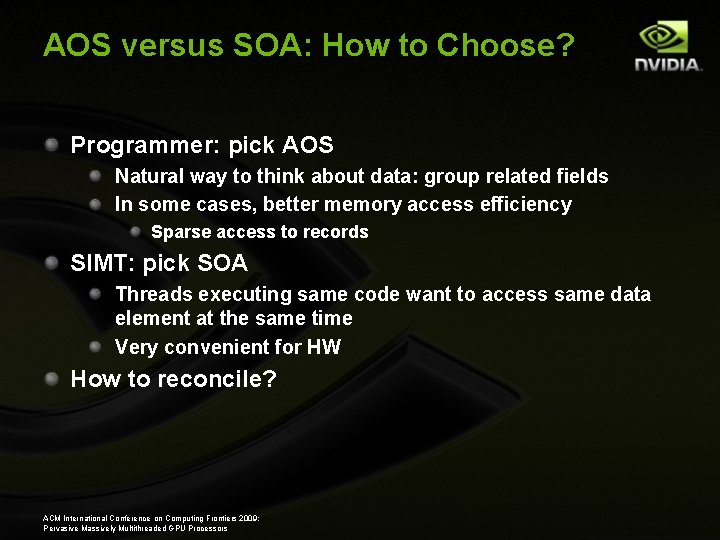

AOS versus SOA: How to Choose?

The programmer picks AOS: it is the natural way to think about data (group related fields), and in some cases it gives better memory access efficiency, e.g. for sparse access to records. SIMT picks SOA: threads executing the same code want to access the same data element at the same time, which is very convenient for the hardware. How to reconcile the two?

Descriptors

AKA “capabilities”, as in the Plessey 250, Cambridge CAP, and Intel 432. D3D employs a form of descriptor: its “resource descriptors” are capabilities. A major language issue for parallel programming?

Diversity: CPU-GPU Détente?

This is really SISD vs. SIMT. Sequential applications on SIMT hardware? Conversely, thread-parallel applications on multi-core scalar machines? Is there room for both?

Coherent Caches?

Some small planes have built-in parachutes. Really a good idea? Fact: existing GPUs don’t support cache coherency. Is that bad? Should coherent caches be added?

The Future Revisited

So what is the future in high-performance computing?

1. SIMT
2. Lots of cores
3. Clouds

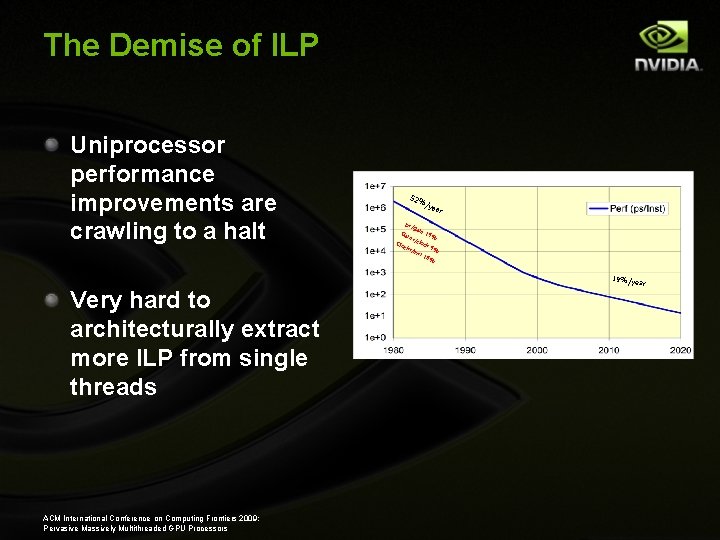

The Demise of ILP

Uniprocessor performance improvements are crawling to a halt: it is very hard to architecturally extract more ILP from single threads.

(Figure: performance trend lines labeled 52%/year and 19%/year, with ps/gate 19%, gates/clock 9%, and clocks/inst 18%.)

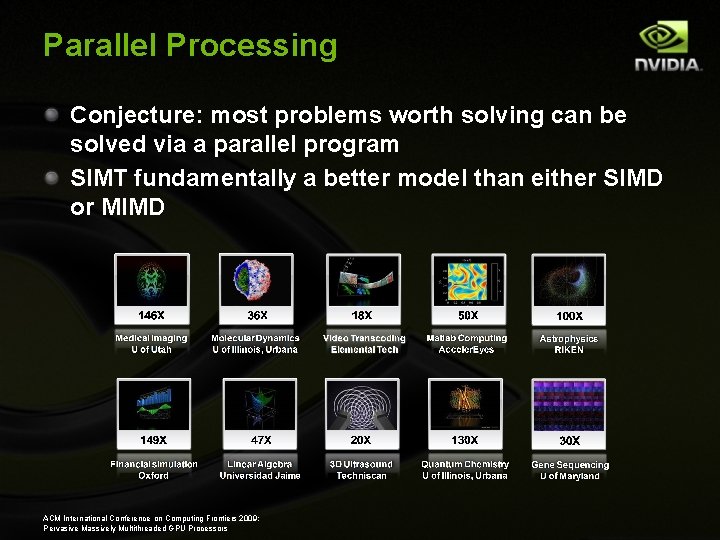

Parallel Processing

Conjecture: most problems worth solving can be solved via a parallel program. SIMT is fundamentally a better model than either SIMD or MIMD.

Scaling

Can a single GPU do it all? Systems have to scale to multiple boxes, and programming systems have to scale with them.

Final Thoughts

The future is bright for parallel programming. Future supercomputers = networked SIMT-based processing systems. Thanks! mshebanow@nvidia.com

- Slides: 44