Pattern Recognition using Support Vector Machine and Principal Component Analysis

- Slides: 53

Pattern Recognition using Support Vector Machine and Principal Component Analysis

Ahmed Abbasi, MIS 510, 3/21/2007

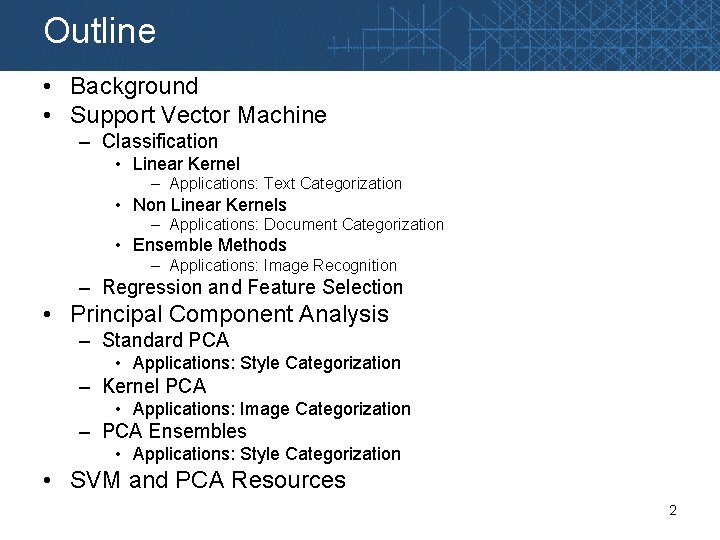

Outline

- Background
- Support Vector Machine
  - Classification
    - Linear Kernel
      - Applications: Text Categorization
    - Non Linear Kernels
      - Applications: Document Categorization
    - Ensemble Methods
      - Applications: Image Recognition
  - Regression and Feature Selection
- Principal Component Analysis
  - Standard PCA
    - Applications: Style Categorization
  - Kernel PCA
    - Applications: Image Categorization
  - PCA Ensembles
    - Applications: Style Categorization
- SVM and PCA Resources

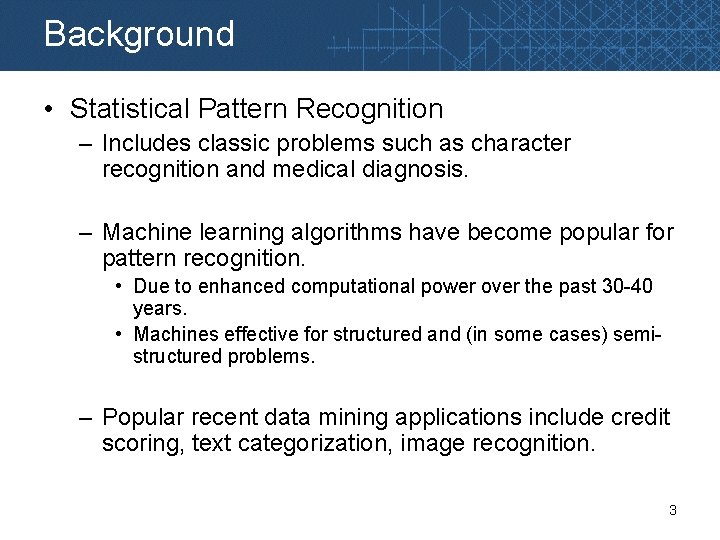

Background

- Statistical Pattern Recognition
  - Includes classic problems such as character recognition and medical diagnosis.
  - Machine learning algorithms have become popular for pattern recognition.
    - Due to enhanced computational power over the past 30-40 years.
    - Machines are effective for structured and (in some cases) semi-structured problems.
  - Popular recent data mining applications include credit scoring, text categorization, and image recognition.

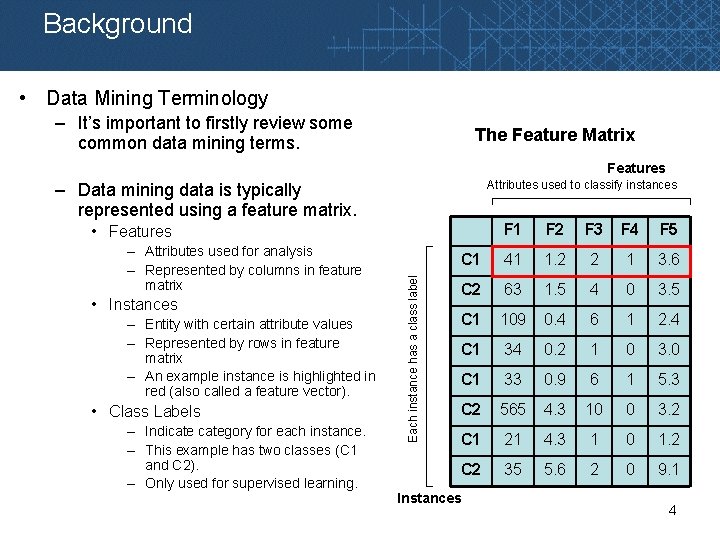

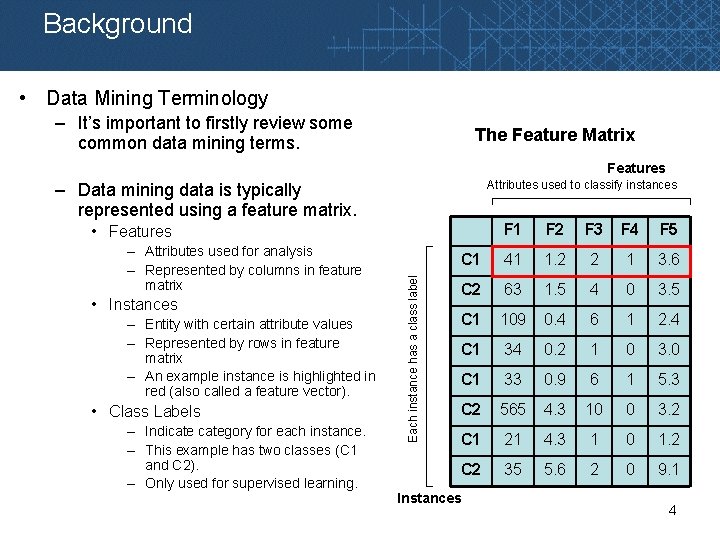

Background

- Data Mining Terminology
  - It's important to first review some common data mining terms.
  - Data mining data is typically represented using a feature matrix.
- Features
  - Attributes used for analysis (the attributes used to classify instances).
  - Represented by columns in the feature matrix.
- Instances
  - Entities with certain attribute values.
  - Represented by rows in the feature matrix.
  - An example instance is highlighted in red on the slide (an instance is also called a feature vector).
- Class Labels
  - Indicate the category of each instance; each instance has a class label.
  - This example has two classes (C1 and C2).
  - Only used for supervised learning.

The Feature Matrix

| Class | F1 | F2 | F3 | F4 | F5 |
|---|---|---|---|---|---|
| C1 | 41 | 1.2 | 2 | 1 | 3.6 |
| C2 | 63 | 1.5 | 4 | 0 | 3.5 |
| C1 | 109 | 0.4 | 6 | 1 | 2.4 |
| C1 | 34 | 0.2 | 1 | 0 | 3.0 |
| C1 | 33 | 0.9 | 6 | 1 | 5.3 |
| C2 | 565 | 4.3 | 10 | 0 | 3.2 |
| C1 | 21 | 4.3 | 1 | 0 | 1.2 |
| C2 | 35 | 5.6 | 2 | 0 | 9.1 |
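
The feature matrix above can be held in code as rows of feature values plus a parallel list of class labels. This is a minimal sketch using plain Python lists; the names `X`, `y`, `instances_of`, and `feature_column` are illustrative and do not come from the slides.

```python
# Feature matrix from the slide: one row per instance, one column per feature (F1..F5).
X = [
    [41, 1.2, 2, 1, 3.6],
    [63, 1.5, 4, 0, 3.5],
    [109, 0.4, 6, 1, 2.4],
    [34, 0.2, 1, 0, 3.0],
    [33, 0.9, 6, 1, 5.3],
    [565, 4.3, 10, 0, 3.2],
    [21, 4.3, 1, 0, 1.2],
    [35, 5.6, 2, 0, 9.1],
]

# Class labels, one per instance (only needed for supervised learning).
y = ["C1", "C2", "C1", "C1", "C1", "C2", "C1", "C2"]


def instances_of(label):
    """Return the feature vectors (rows) whose class label matches."""
    return [row for row, cls in zip(X, y) if cls == label]


def feature_column(j):
    """Return feature j (0-based) across all instances, i.e. one column."""
    return [row[j] for row in X]
```

Here `instances_of("C1")` returns the rows labelled C1, and `feature_column(0)` returns the F1 column.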

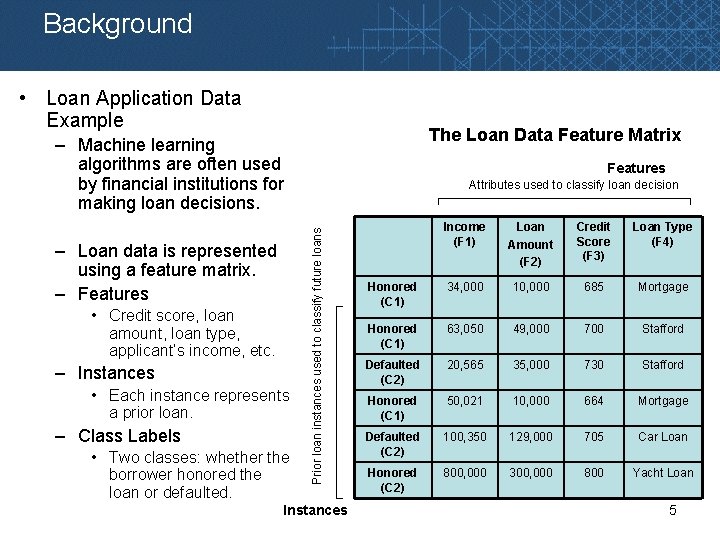

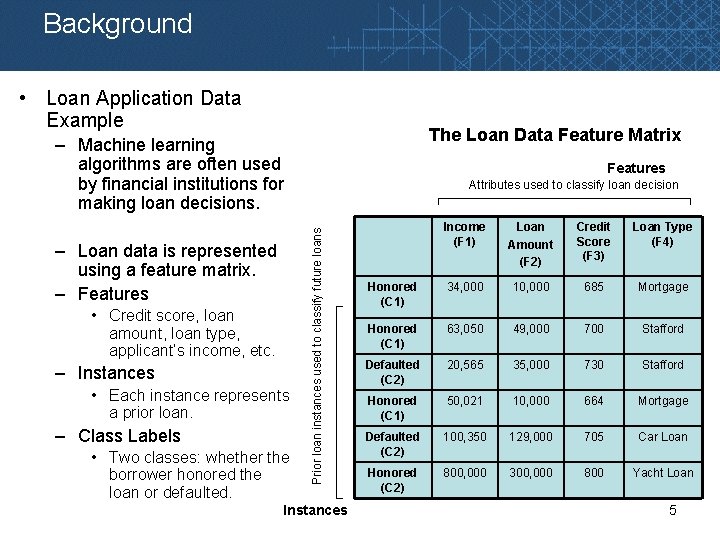

Background

- Loan Application Data Example
  - Machine learning algorithms are often used by financial institutions for making loan decisions.
  - Loan data is represented using a feature matrix.
  - Features: the attributes used to classify the loan decision.
    - Credit score, loan amount, loan type, applicant's income, etc.
  - Instances: prior loan instances are used to classify future loans.
    - Each instance represents a prior loan.
  - Class Labels
    - Two classes: whether the borrower honored the loan or defaulted.

The Loan Data Feature Matrix

| Class | Income (F1) | Loan Amount (F2) | Credit Score (F3) | Loan Type (F4) |
|---|---|---|---|---|
| Honored (C1) | 34,000 | 10,000 | 685 | Mortgage |
| Honored (C1) | 63,050 | 49,000 | 700 | Stafford |
| Defaulted (C2) | 20,565 | 35,000 | 730 | Stafford |
| Honored (C1) | 50,021 | 10,000 | 664 | Mortgage |
| Defaulted (C2) | 100,350 | 129,000 | 705 | Car Loan |
| Honored (C1) | 800,000 | 300,000 | 800 | Yacht Loan |

Background

- Two broad categories of machine learning algorithms.
- Supervised learning algorithms
  - Also called discriminant methods
  - Require training data with class labels
    - Some examples already discussed in previous lectures include Neural Networks and the ID3/C4.5 Decision Tree algorithms.
- Unsupervised learning algorithms
  - Non-discriminant methods
  - Build models based on training data, without use of class labels

Background

- In this lecture, we will discuss two popular machine learning algorithms.
- Support Vector Machine
  - Supervised learning method
- Principal Component Analysis
  - Unsupervised learning method

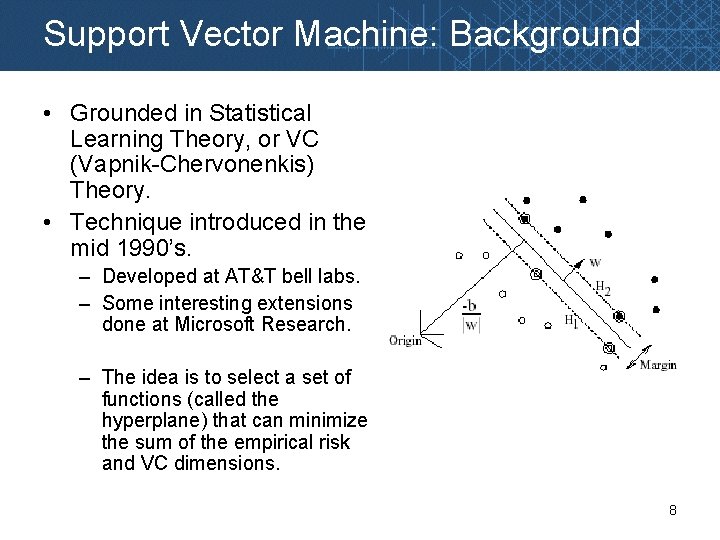

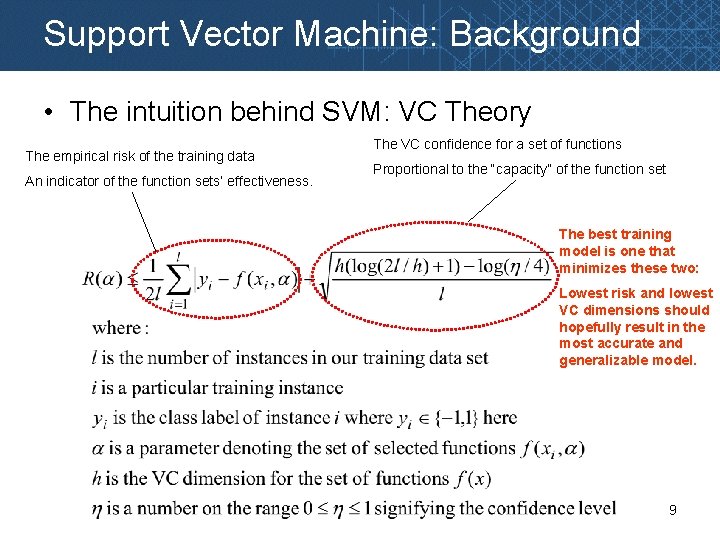

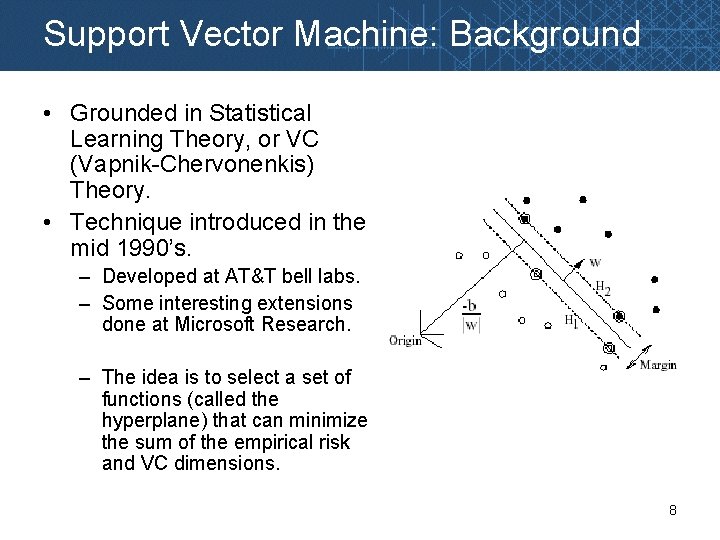

Support Vector Machine: Background

- Grounded in Statistical Learning Theory, or VC (Vapnik-Chervonenkis) Theory.
- Technique introduced in the mid 1990s.
  - Developed at AT&T Bell Labs.
  - Some interesting extensions done at Microsoft Research.
  - The idea is to select, from a set of candidate functions, the one (the hyperplane) that minimizes the sum of the empirical risk and the VC confidence.

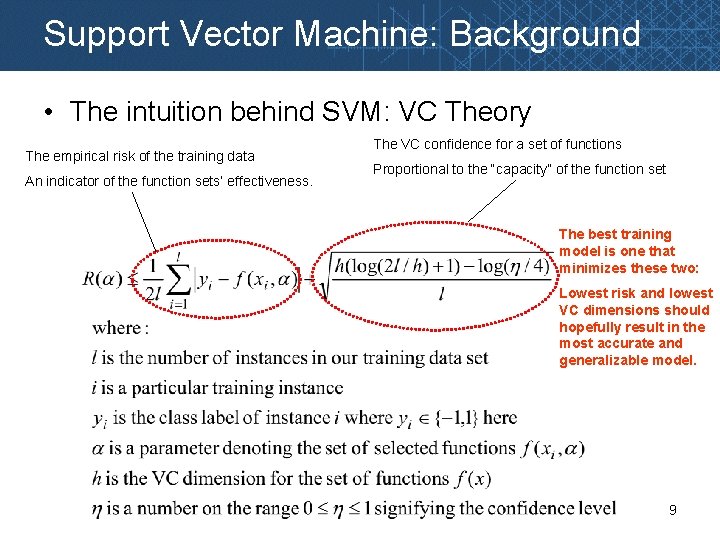

Support Vector Machine: Background

- The intuition behind SVM: VC Theory
  - The empirical risk of the training data: an indicator of the function set's effectiveness.
  - The VC confidence for a set of functions: proportional to the "capacity" of the function set.
  - The best training model is one that minimizes these two: lowest risk and lowest VC dimension should hopefully result in the most accurate and generalizable model.
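
The trade-off above is commonly written as a bound on the expected risk. This is a sketch in conventional notation, where the symbols ($R$ for expected risk, $R_{emp}$ for empirical risk, $h$ for the VC dimension, $l$ for the number of training instances, $\eta$ for the confidence parameter) are assumptions and are not taken from the slide. With probability $1 - \eta$:

```latex
R(\alpha) \le R_{emp}(\alpha) + \sqrt{\frac{h\left(\ln\frac{2l}{h} + 1\right) - \ln\frac{\eta}{4}}{l}}
```

The second term is the VC confidence. It grows with the capacity $h$ of the function set and shrinks as the number of training instances $l$ grows.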

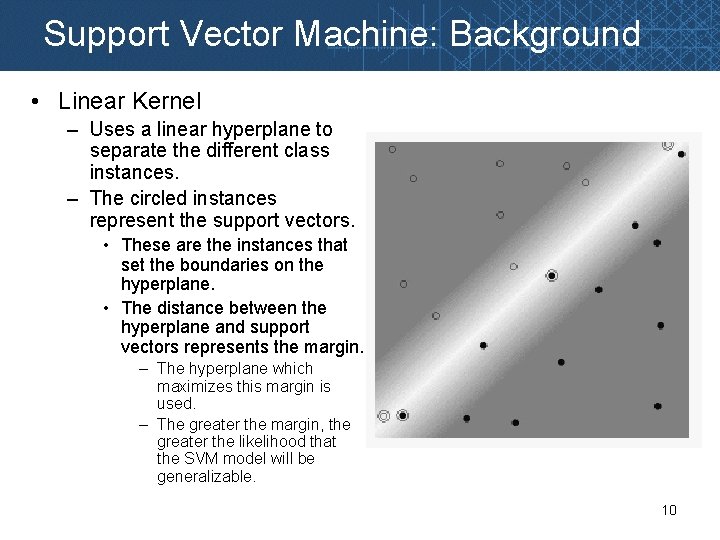

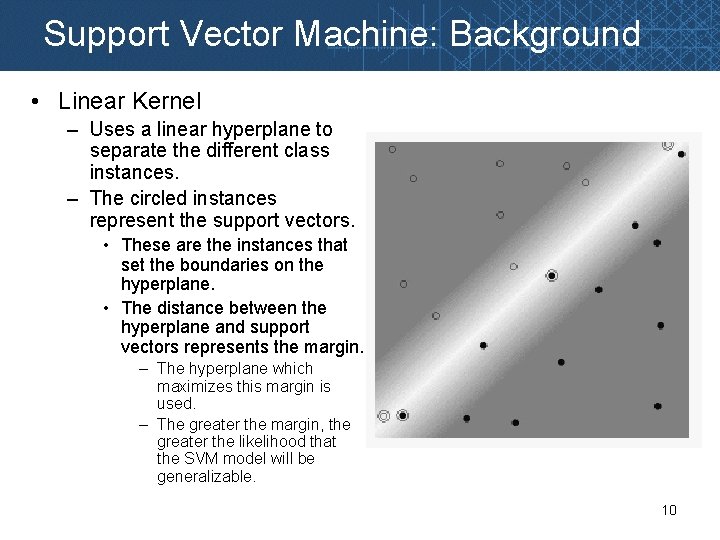

Support Vector Machine: Background

- Linear Kernel
  - Uses a linear hyperplane to separate the different class instances.
  - The circled instances represent the support vectors.
    - These are the instances that set the boundaries on the hyperplane.
    - The distance between the hyperplane and the support vectors represents the margin.
  - The hyperplane which maximizes this margin is used.
  - The greater the margin, the greater the likelihood that the SVM model will be generalizable.
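
To make the margin idea concrete, the sketch below measures the margin of a given separating hyperplane and reports which instances sit at that distance. It does not train an SVM; it only compares two hand-picked candidate hyperplanes on toy data. The data, the function names, and the tolerance `tol` are all hypothetical.

```python
import math


def signed_distance(w, b, x):
    """Signed distance from point x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm


def margin_and_support_vectors(w, b, points, labels, tol=1e-9):
    """Return (margin, support_vectors) for a hyperplane that separates the data.

    labels are +1 / -1. The margin is the smallest distance from any instance
    to the hyperplane; the instances at that distance are the support vectors.
    """
    dists = [lab * signed_distance(w, b, p) for p, lab in zip(points, labels)]
    if min(dists) <= 0:
        raise ValueError("hyperplane does not separate the two classes")
    margin = min(dists)
    support = [p for p, d in zip(points, dists) if abs(d - margin) <= tol]
    return margin, support


# Toy, hand-made 2-D data (hypothetical, not from the slides).
points = [(1, 1), (0, 0), (3, 3), (4, 4)]
labels = [-1, -1, +1, +1]

# Two candidate separating lines: x1 + x2 = 4 and x1 + x2 = 3.
m_mid, sv_mid = margin_and_support_vectors((1, 1), -4, points, labels)
m_off, sv_off = margin_and_support_vectors((1, 1), -3, points, labels)
```

Both lines separate the classes, but the first leaves a larger margin, so it is the one a maximum-margin method would prefer.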

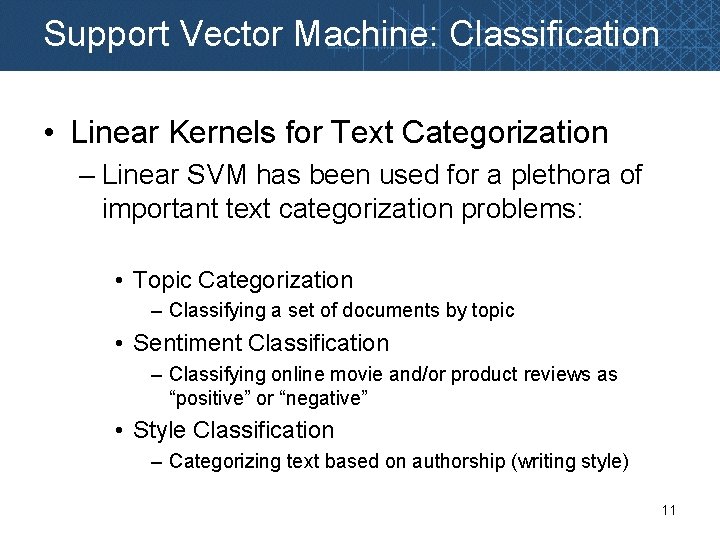

Support Vector Machine: Classification

- Linear Kernels for Text Categorization
  - Linear SVM has been used for a plethora of important text categorization problems:
    - Topic Categorization
      - Classifying a set of documents by topic
    - Sentiment Classification
      - Classifying online movie and/or product reviews as "positive" or "negative"
    - Style Classification
      - Categorizing text based on authorship (writing style)

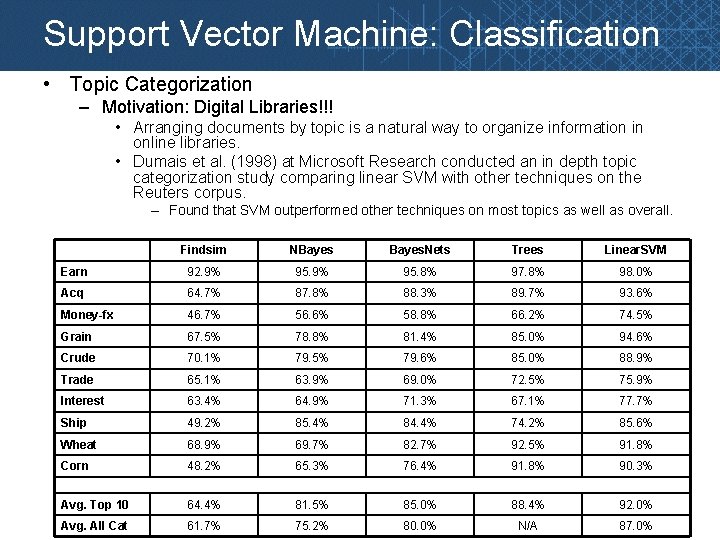

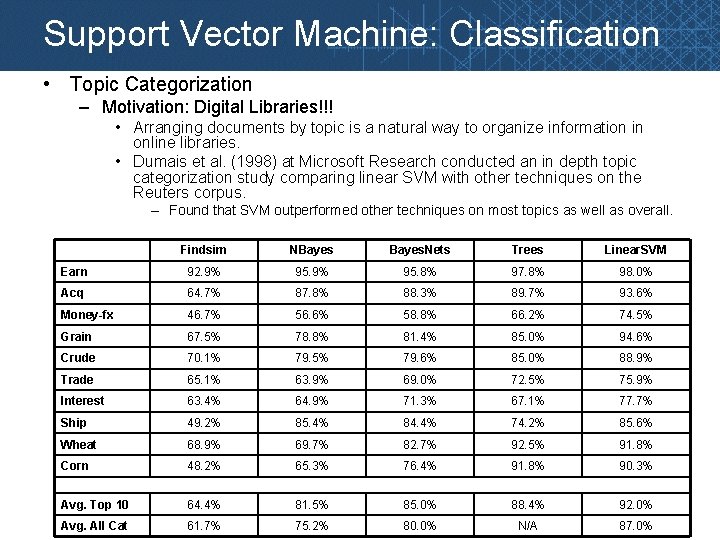

Support Vector Machine: Classification

- Topic Categorization
  - Motivation: Digital Libraries!!!
    - Arranging documents by topic is a natural way to organize information in online libraries.
    - Dumais et al. (1998) at Microsoft Research conducted an in-depth topic categorization study comparing linear SVM with other techniques on the Reuters corpus.
      - Found that SVM outperformed other techniques on most topics as well as overall.

| Category | Findsim | NBayes | BayesNets | Trees | LinearSVM |
|---|---|---|---|---|---|
| Earn | 92.9% | | 95.8% | 97.8% | 98.0% |
| Acq | 64.7% | 87.8% | 88.3% | 89.7% | 93.6% |
| Money-fx | 46.7% | 56.6% | 58.8% | 66.2% | 74.5% |
| Grain | 67.5% | 78.8% | 81.4% | 85.0% | 94.6% |
| Crude | 70.1% | 79.5% | 79.6% | 85.0% | 88.9% |
| Trade | 65.1% | 63.9% | 69.0% | 72.5% | 75.9% |
| Interest | 63.4% | 64.9% | 71.3% | 67.1% | 77.7% |
| Ship | 49.2% | 85.4% | 84.4% | 74.2% | 85.6% |
| Wheat | 68.9% | 69.7% | 82.7% | 92.5% | 91.8% |
| Corn | 48.2% | 65.3% | 76.4% | 91.8% | 90.3% |
| Avg. Top 10 | 64.4% | 81.5% | 85.0% | 88.4% | 92.0% |
| Avg. All Cat | 61.7% | 75.2% | 80.0% | N/A | 87.0% |

Support Vector Machine: Classification

- Sentiment Categorization
  - Motivation: Market Research!!!
    - Gathering consumer preference data is expensive.
    - Yet it's also essential when introducing new products or improving existing ones.
  - Software for mining online review forums: $10,000
  - Information gathered: priceless.

(www.epinions.com)

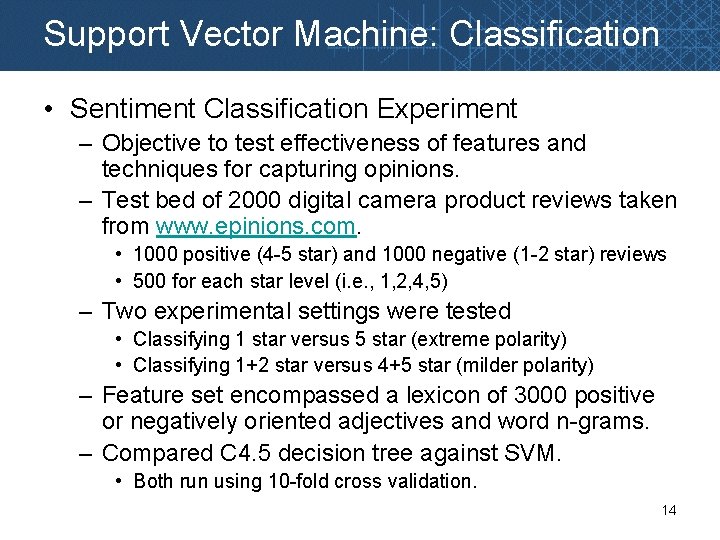

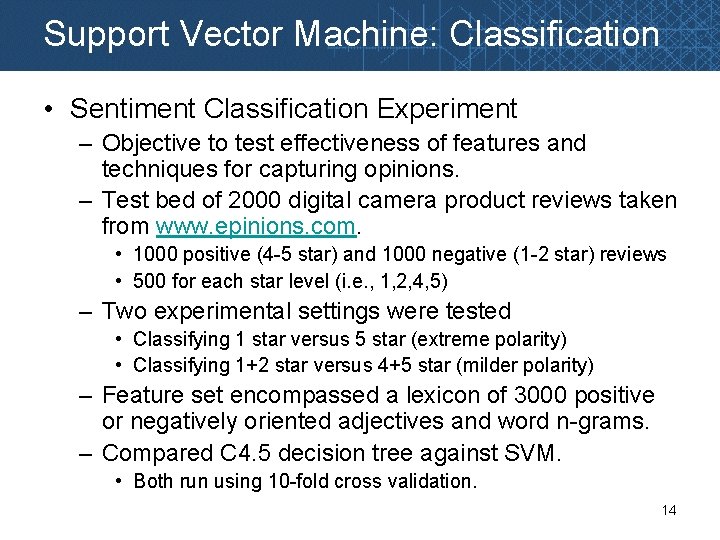

Support Vector Machine: Classification

- Sentiment Classification Experiment
  - Objective: test the effectiveness of features and techniques for capturing opinions.
  - Test bed of 2000 digital camera product reviews taken from www.epinions.com.
    - 1000 positive (4-5 star) and 1000 negative (1-2 star) reviews
    - 500 for each star level (i.e., 1, 2, 4, 5)
  - Two experimental settings were tested
    - Classifying 1 star versus 5 star (extreme polarity)
    - Classifying 1+2 star versus 4+5 star (milder polarity)
  - Feature set encompassed a lexicon of 3000 positively or negatively oriented adjectives and word n-grams.
  - Compared C4.5 decision tree against SVM.
    - Both run using 10-fold cross validation.
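
The 10-fold cross validation used above can be sketched as follows. This is a minimal, assumption-laden version: it shuffles instance indices with a fixed seed and does not stratify by class, and the name `k_fold_indices` and its parameters are illustrative and do not come from the study.

```python
import random


def k_fold_indices(n, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs for k-fold cross validation."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k nearly equal-sized folds
    for i in range(k):
        test = folds[i]
        # Training set is every fold except the held-out one.
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


# 2000 reviews, as in the test bed above: each fold holds out 200 for testing.
splits = list(k_fold_indices(2000, k=10))
```

Every review is used for testing exactly once, and reported accuracy is typically the average over the ten folds.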

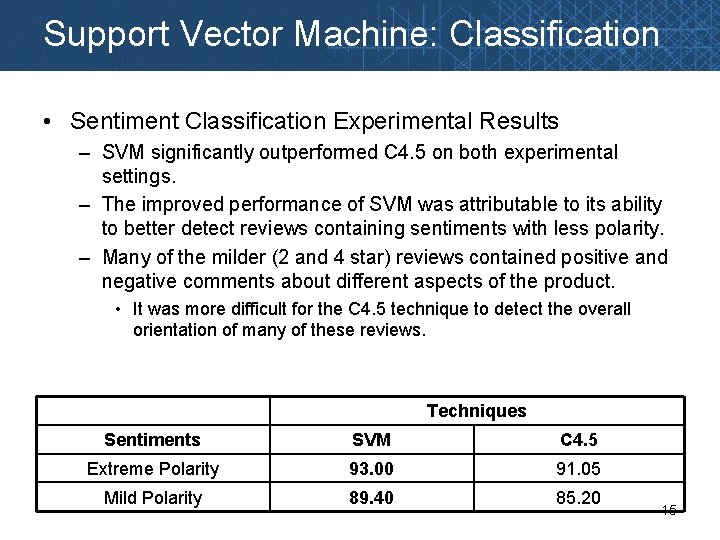

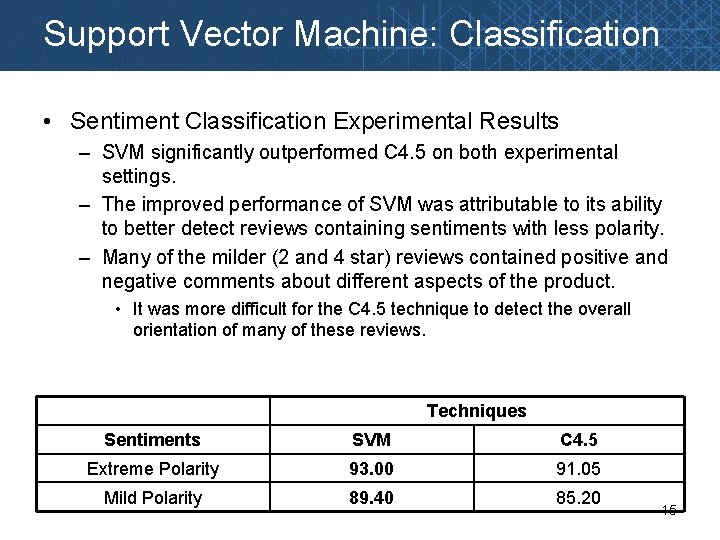

Support Vector Machine: Classification

- Sentiment Classification Experimental Results
  - SVM significantly outperformed C4.5 on both experimental settings.
  - The improved performance of SVM was attributable to its ability to better detect reviews containing sentiments with less polarity.
  - Many of the milder (2 and 4 star) reviews contained positive and negative comments about different aspects of the product.
    - It was more difficult for the C4.5 technique to detect the overall orientation of many of these reviews.

| Sentiments | SVM | C4.5 |
|---|---|---|
| Extreme Polarity | 93.00 | 91.05 |
| Mild Polarity | 89.40 | 85.20 |

Support Vector Machine: Classification

- Style Categorization
  - Motivation: Online Anonymity Abuse!!!
    - The ability to identify people based on writing style can allow the use of stylometric authentication.
    - Important for many online text-based applications:
      - Email scams (email body text)
      - Online auction fraud (feedback comments)
      - Cybercrime (forum, instant messaging logs)
      - Computer hacking (program code)

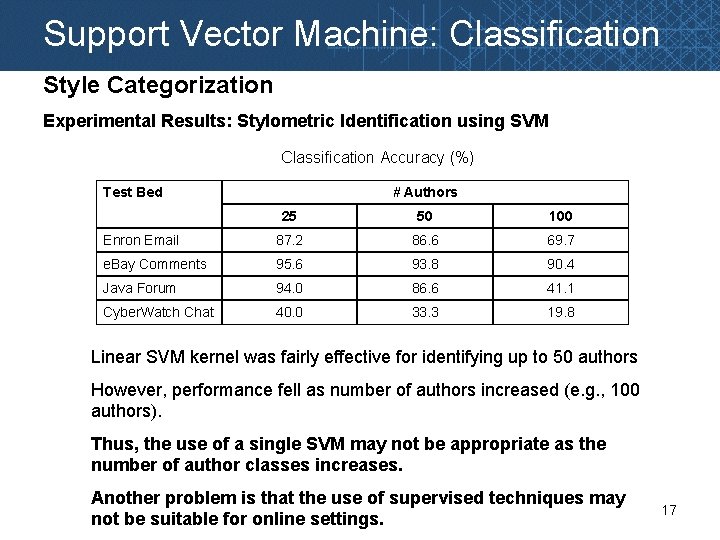

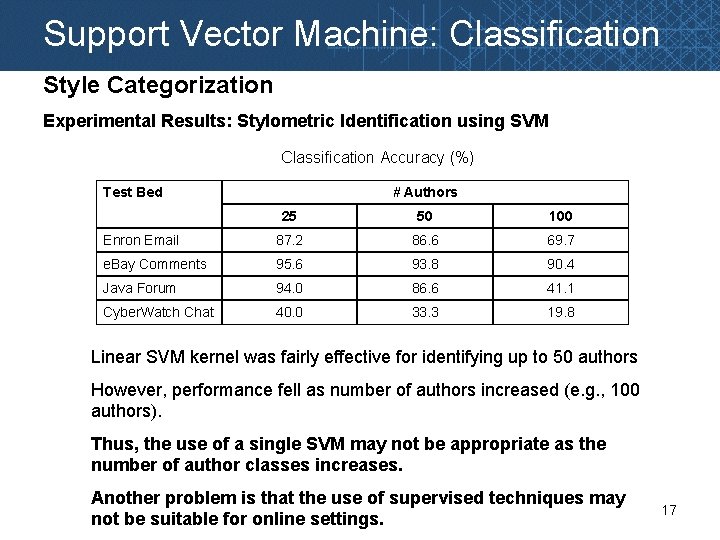

Support Vector Machine: Classification

Style Categorization Experimental Results: Stylometric Identification using SVM

Classification Accuracy (%) by number of authors

| Test Bed | 25 | 50 | 100 |
|---|---|---|---|
| Enron Email | 87.2 | 86.6 | 69.7 |
| eBay Comments | 95.6 | 93.8 | 90.4 |
| Java Forum | 94.0 | 86.6 | 41.1 |
| CyberWatch Chat | 40.0 | 33.3 | 19.8 |

- The linear SVM kernel was fairly effective for identifying up to 50 authors.
- However, performance fell as the number of authors increased (e.g., 100 authors).
- Thus, the use of a single SVM may not be appropriate as the number of author classes increases.
- Another problem is that the use of supervised techniques may not be suitable for online settings.

Support Vector Machine: Classification

- More Complex Problems: Fraudulent Escrow Website Categorization
  - Motivation: Online escrow fraud nets billions of dollars in revenue annually!!!
  - Given the growing number of fraudulent sellers/traders online, people are told to use escrow services for security.
  - So naturally, fake escrow websites have started to pop up.
    - Online fraud databases such as Artists-Against-419 document an average of 30-40 new sites every day!!!
    - Especially prevalent for online sales of larger goods, such as vehicles.

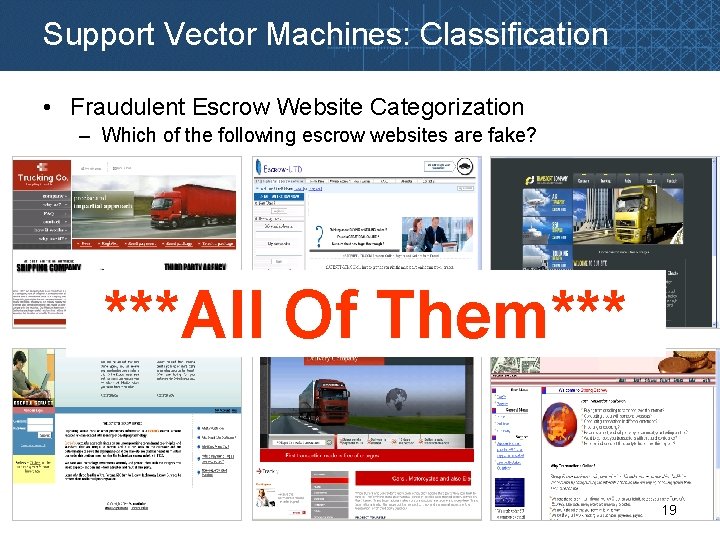

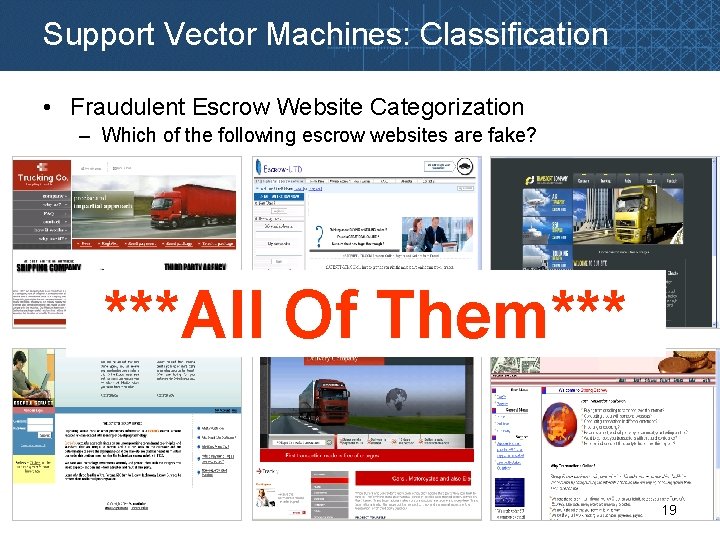

Support Vector Machines: Classification • Fraudulent Escrow Website Categorization – Which of the following escrow websites are fake? ***All Of Them*** 19

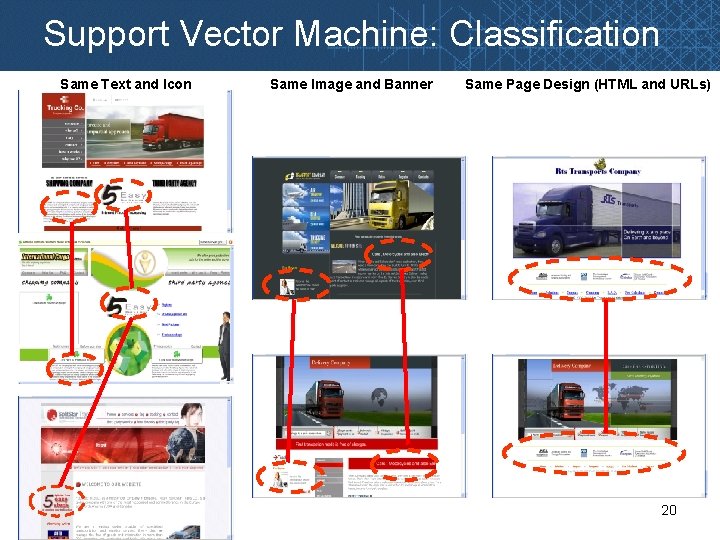

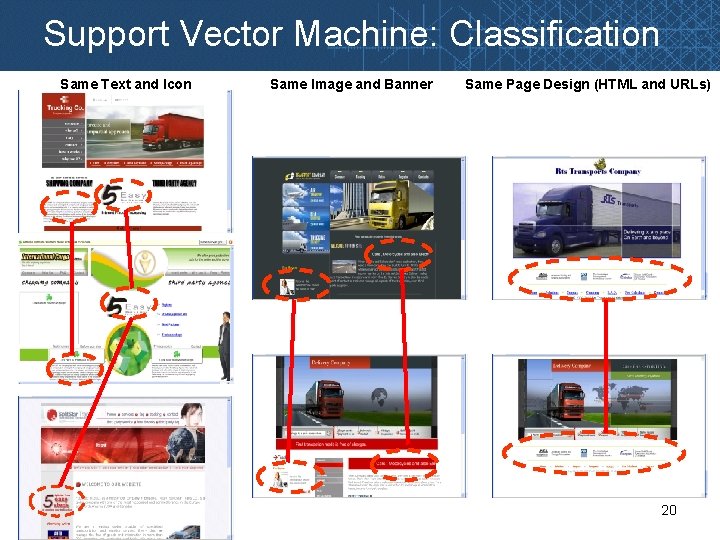

Support Vector Machine: Classification

Figure labels: Same Text and Icon; Same Image and Banner; Same Page Design (HTML and URLs).

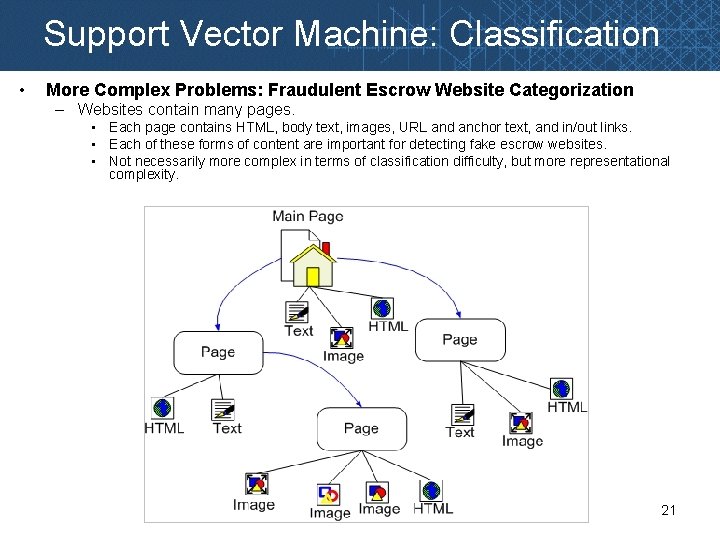

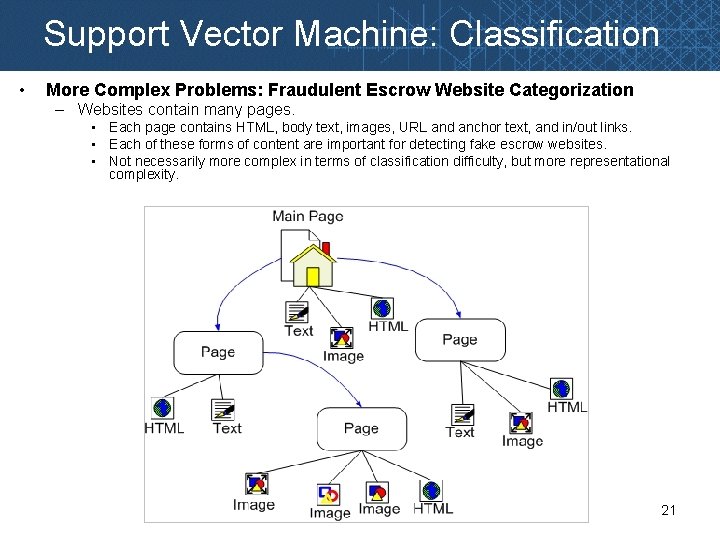

Support Vector Machine: Classification

- More Complex Problems: Fraudulent Escrow Website Categorization
  - Websites contain many pages.
    - Each page contains HTML, body text, images, URL and anchor text, and in/out links.
    - Each of these forms of content is important for detecting fake escrow websites.
    - Not necessarily more complex in terms of classification difficulty, but more complex in terms of representation.

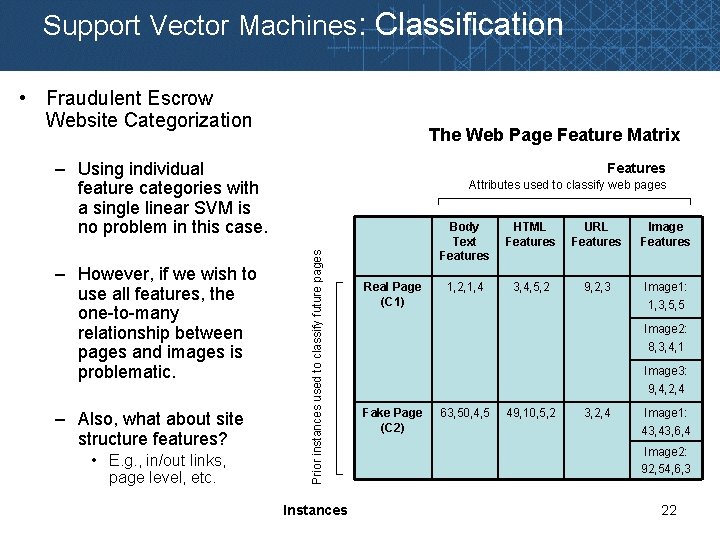

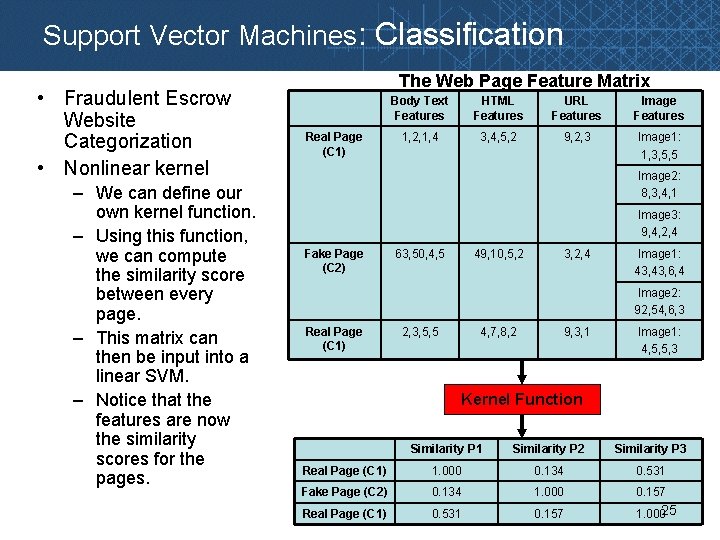

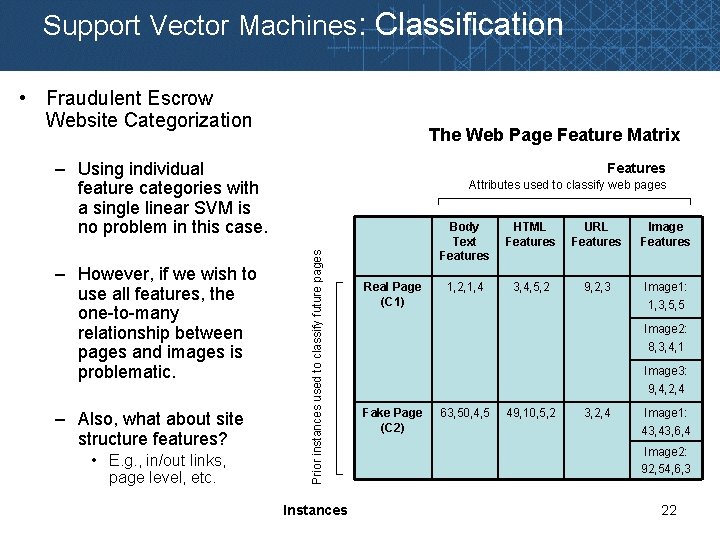

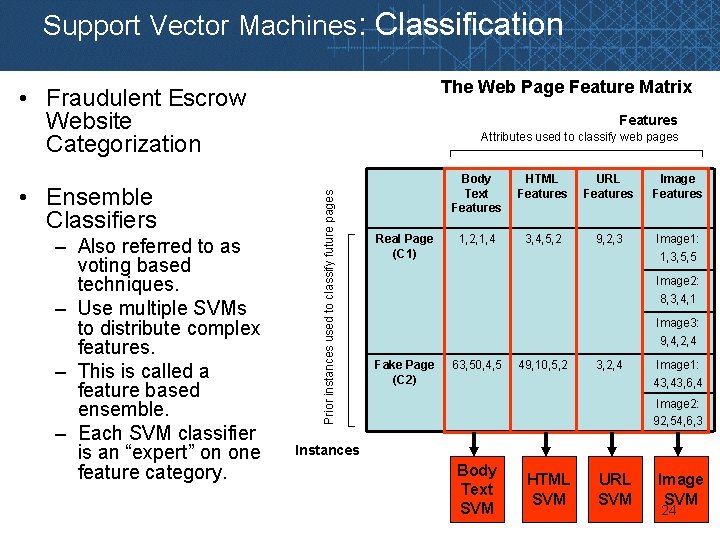

Support Vector Machines: Classification

- Fraudulent Escrow Website Categorization
  - Using individual feature categories with a single linear SVM is no problem in this case.
  - However, if we wish to use all features, the one-to-many relationship between pages and images is problematic.
  - Also, what about site structure features?
    - E.g., in/out links, page level, etc.

The Web Page Feature Matrix (features are the attributes used to classify web pages; prior instances are used to classify future pages)

| Instance | Body Text Features | HTML Features | URL Features | Image Features |
|---|---|---|---|---|
| Real Page (C1) | 1, 2, 1, 4 | 3, 4, 5, 2 | 9, 2, 3 | Image 1: 1, 3, 5, 5; Image 2: 8, 3, 4, 1; Image 3: 9, 4, 2, 4 |
| Fake Page (C2) | 63, 50, 4, 5 | 49, 10, 5, 2 | 3, 2, 4 | Image 1: 43, 6, 4; Image 2: 92, 54, 6, 3 |

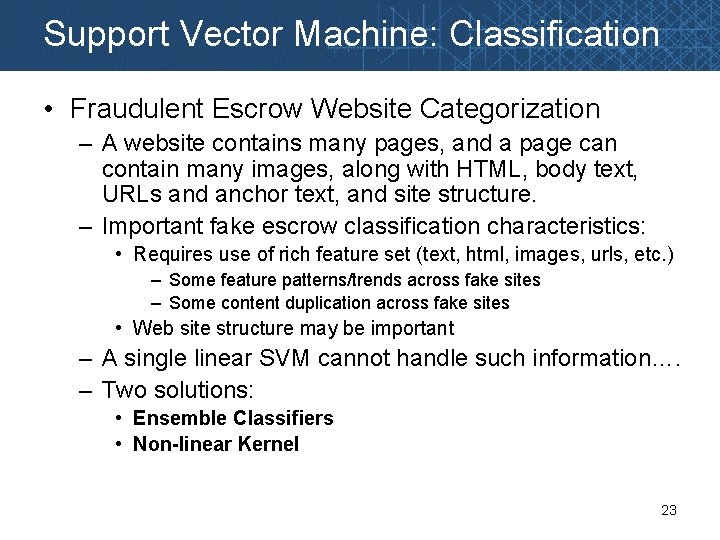

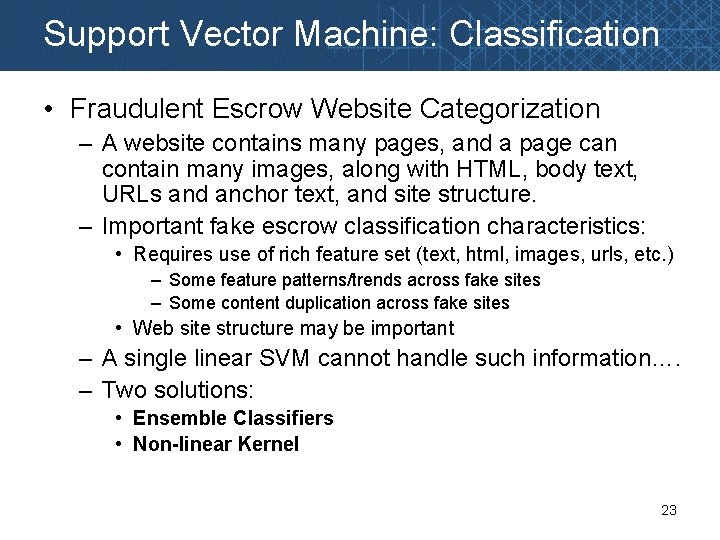

Support Vector Machine: Classification

- Fraudulent Escrow Website Categorization
  - A website contains many pages, and a page can contain many images, along with HTML, body text, URLs and anchor text, and site structure.
  - Important fake escrow classification characteristics:
    - Requires use of a rich feature set (text, HTML, images, URLs, etc.)
      - Some feature patterns/trends across fake sites
      - Some content duplication across fake sites
    - Web site structure may be important
  - A single linear SVM cannot handle such information.
  - Two solutions:
    - Ensemble Classifiers
    - Non-linear Kernel

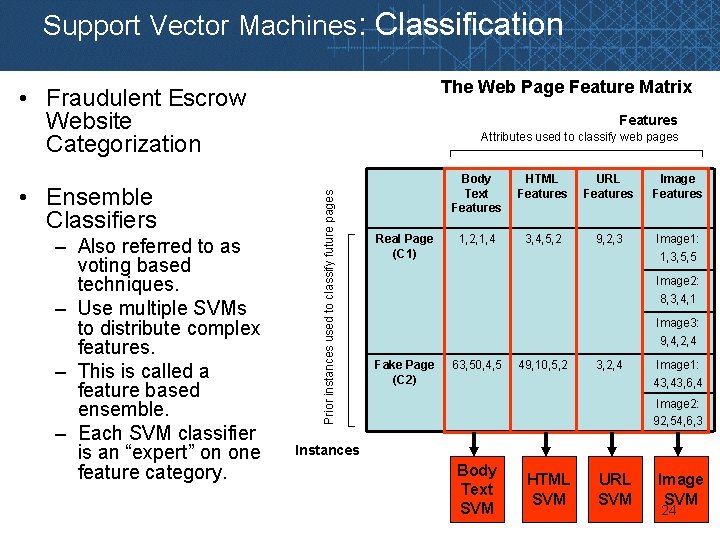

Support Vector Machines: Classification

- Fraudulent Escrow Website Categorization
- Ensemble Classifiers
  - Also referred to as voting based techniques.
  - Use multiple SVMs to distribute complex features.
  - This is called a feature based ensemble.
  - Each SVM classifier is an "expert" on one feature category.

The Web Page Feature Matrix (features are the attributes used to classify web pages; prior instances are used to classify future pages)

| Instance | Body Text Features | HTML Features | URL Features | Image Features |
|---|---|---|---|---|
| Real Page (C1) | 1, 2, 1, 4 | 3, 4, 5, 2 | 9, 2, 3 | Image 1: 1, 3, 5, 5; Image 2: 8, 3, 4, 1; Image 3: 9, 4, 2, 4 |
| Fake Page (C2) | 63, 50, 4, 5 | 49, 10, 5, 2 | 3, 2, 4 | Image 1: 43, 6, 4; Image 2: 92, 54, 6, 3 |

Each feature category feeds its own classifier: Body Text SVM, HTML SVM, URL SVM, Image SVM.
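
The voting step of a feature based ensemble can be sketched as below. The "experts" here are hypothetical threshold rules with arbitrary cut-offs, included only so the example runs; in the lecture each expert is a trained SVM. The names `ensemble_predict` and `experts` are illustrative.

```python
from collections import Counter


def ensemble_predict(experts, page):
    """Majority vote over per-category experts.

    experts maps a feature-category name to a classifier function that takes
    that category's features and returns a class label. On a tie, the label
    that was voted first wins.
    """
    votes = [clf(page[category]) for category, clf in experts.items()]
    return Counter(votes).most_common(1)[0][0], votes


# Stand-in experts (hypothetical rules, NOT trained SVMs).
experts = {
    "body_text": lambda f: "fake" if sum(f) > 20 else "real",
    "html": lambda f: "fake" if sum(f) > 20 else "real",
    "url": lambda f: "fake" if sum(f) > 20 else "real",
    # The image expert sees a list of images, so the one-to-many
    # page-to-image relationship stays inside this one expert.
    "image": lambda imgs: "fake" if any(sum(i) > 20 for i in imgs) else "real",
}

# The two example pages from the web page feature matrix.
real_page = {
    "body_text": [1, 2, 1, 4], "html": [3, 4, 5, 2], "url": [9, 2, 3],
    "image": [[1, 3, 5, 5], [8, 3, 4, 1], [9, 4, 2, 4]],
}
fake_page = {
    "body_text": [63, 50, 4, 5], "html": [49, 10, 5, 2], "url": [3, 2, 4],
    "image": [[43, 6, 4], [92, 54, 6, 3]],
}

real_label, real_votes = ensemble_predict(experts, real_page)
fake_label, fake_votes = ensemble_predict(experts, fake_page)
```

Because each expert only ever sees its own feature category, the categories can have different lengths and shapes without being forced into one flat feature vector.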

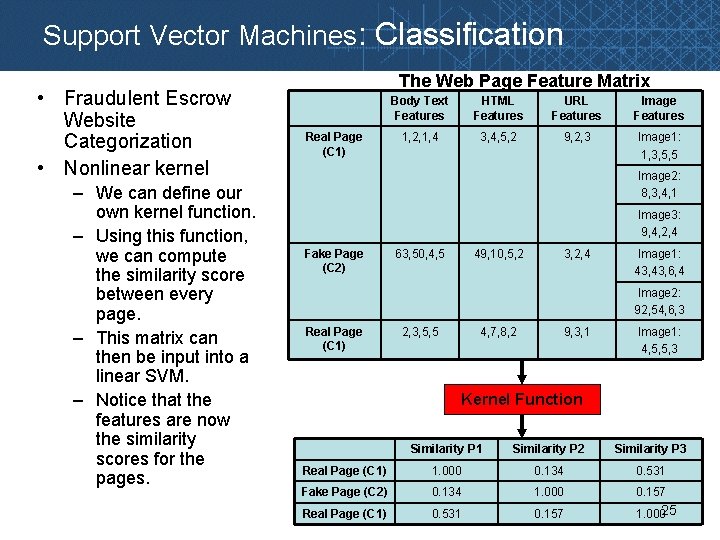

Support Vector Machines: Classification

- Fraudulent Escrow Website Categorization
- Nonlinear kernel
  - We can define our own kernel function.
  - Using this function, we can compute the similarity score between every pair of pages.
  - This matrix can then be input into a linear SVM.
  - Notice that the features are now the similarity scores for the pages.

The Web Page Feature Matrix

| Instance | Body Text Features | HTML Features | URL Features | Image Features |
|---|---|---|---|---|
| Real Page (C1) | 1, 2, 1, 4 | 3, 4, 5, 2 | 9, 2, 3 | Image 1: 1, 3, 5, 5; Image 2: 8, 3, 4, 1; Image 3: 9, 4, 2, 4 |
| Fake Page (C2) | 63, 50, 4, 5 | 49, 10, 5, 2 | 3, 2, 4 | Image 1: 43, 6, 4; Image 2: 92, 54, 6, 3 |
| Real Page (C1) | 2, 3, 5, 5 | 4, 7, 8, 2 | 9, 3, 1 | Image 1: 4, 5, 5, 3 |

After applying the kernel function:

| Instance | Similarity P1 | Similarity P2 | Similarity P3 |
|---|---|---|---|
| Real Page (C1) | 1.000 | 0.134 | 0.531 |
| Fake Page (C2) | 0.134 | 1.000 | 0.157 |
| Real Page (C1) | 0.531 | 0.157 | 1.000 |
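
The step from a feature matrix to a page-by-page similarity matrix can be sketched as follows. The kernel used here is a stand-in (mean cosine similarity over the three fixed-length feature categories); it is an assumption for illustration, not the kernel used in the lecture, so its scores will not match the similarity table above. The names `cosine`, `page_kernel`, and `kernel_matrix` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def page_kernel(p, q):
    """Stand-in similarity: mean cosine similarity over feature categories."""
    cats = ("body_text", "html", "url")
    return sum(cosine(p[c], q[c]) for c in cats) / len(cats)


def kernel_matrix(pages, kernel):
    """Pairwise similarity matrix; row i holds page i's similarity to every page."""
    return [[kernel(p, q) for q in pages] for p in pages]


# Body text, HTML and URL features of the three example pages (real, fake, real).
pages = [
    {"body_text": [1, 2, 1, 4], "html": [3, 4, 5, 2], "url": [9, 2, 3]},
    {"body_text": [63, 50, 4, 5], "html": [49, 10, 5, 2], "url": [3, 2, 4]},
    {"body_text": [2, 3, 5, 5], "html": [4, 7, 8, 2], "url": [9, 3, 1]},
]

K = kernel_matrix(pages, page_kernel)
```

Each row of `K` is the new feature vector for one page. With this stand-in kernel the two real pages score as more similar to each other than either does to the fake page, which is the kind of structure a well-designed kernel is meant to expose.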

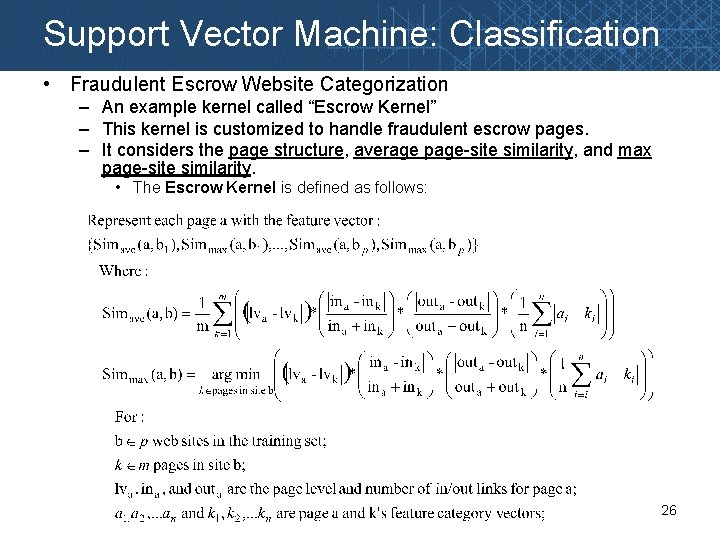

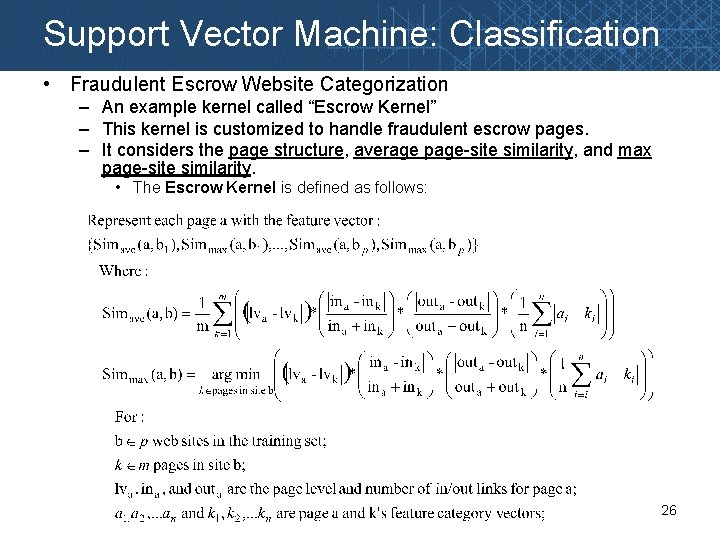

Support Vector Machine: Classification

- Fraudulent Escrow Website Categorization
  - An example kernel called "Escrow Kernel"
  - This kernel is customized to handle fraudulent escrow pages.
  - It considers the page structure, average page-site similarity, and max page-site similarity.
- The Escrow Kernel is defined as follows:

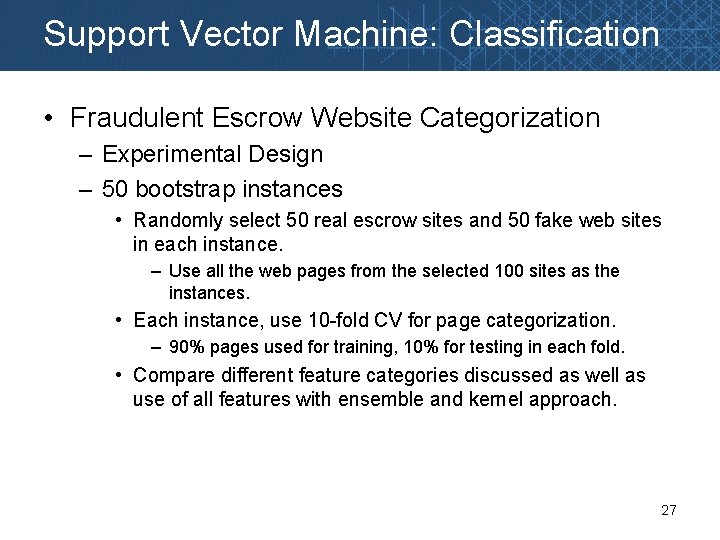

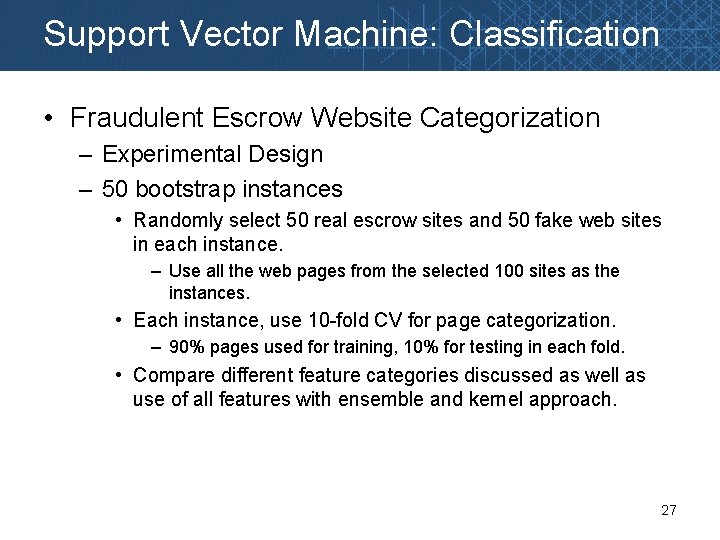

Support Vector Machine: Classification

- Fraudulent Escrow Website Categorization
  - Experimental Design
  - 50 bootstrap instances (runs)
    - Randomly select 50 real escrow sites and 50 fake web sites in each bootstrap instance.
      - Use all the web pages from the selected 100 sites as the instances.
    - In each bootstrap instance, use 10-fold CV for page categorization.
      - 90% of pages used for training, 10% for testing in each fold.
    - Compare the different feature categories discussed, as well as the use of all features with the ensemble and kernel approaches.

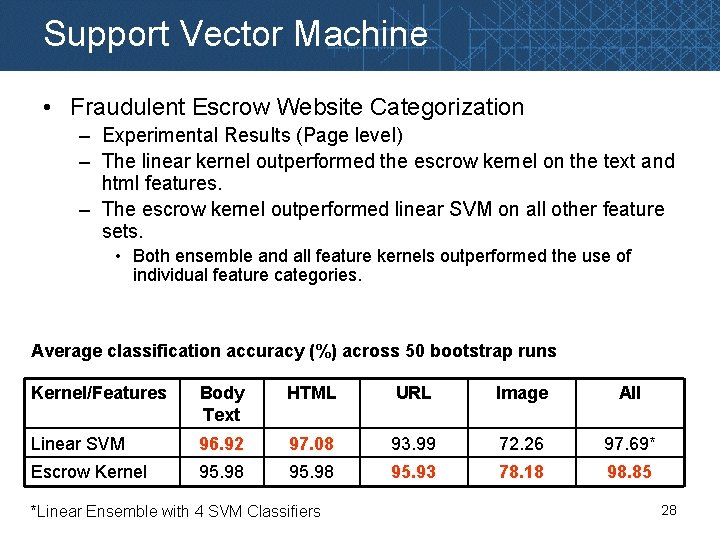

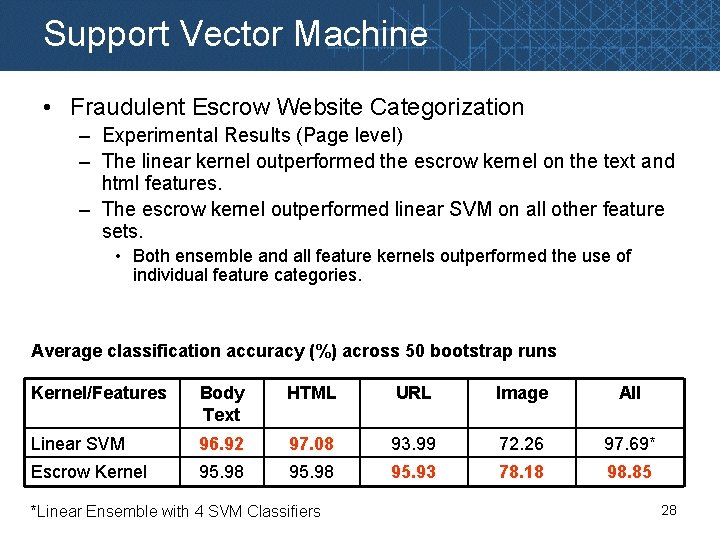

Support Vector Machine

- Fraudulent Escrow Website Categorization
  - Experimental Results (Page level)
  - The linear kernel outperformed the escrow kernel on the text and HTML features.
  - The escrow kernel outperformed linear SVM on all other feature sets.
    - Both the ensemble and the all-feature kernel outperformed the use of individual feature categories.

Average classification accuracy (%) across 50 bootstrap runs

| Kernel/Features | Body Text | HTML | URL | Image | All |
|---|---|---|---|---|---|
| Linear SVM | 96.92 | 97.08 | 93.99 | 72.26 | 97.69* |
| Escrow Kernel | 95.98 | 95.93 | | 78.18 | 98.85 |

*Linear Ensemble with 4 SVM Classifiers

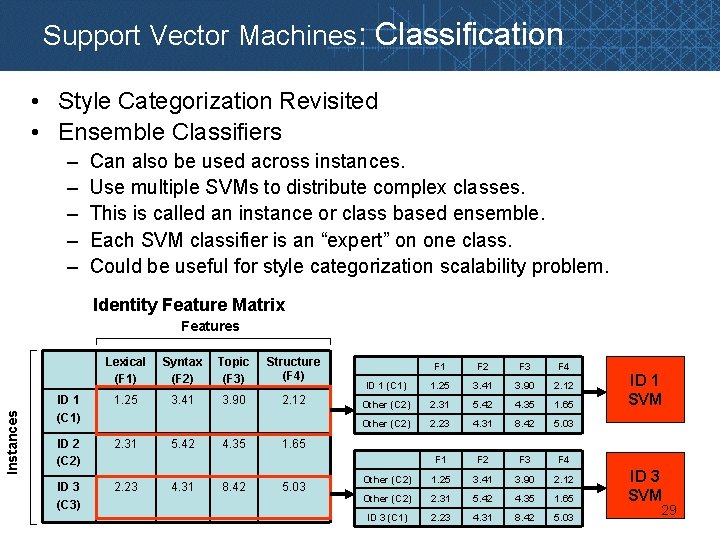

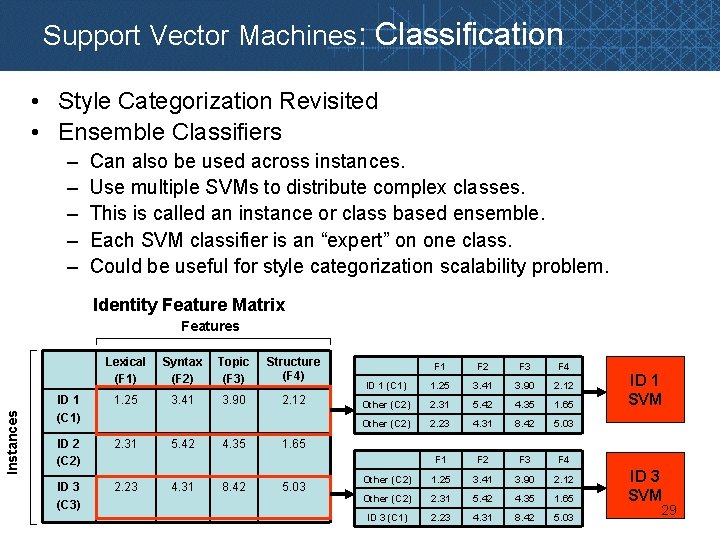

Support Vector Machines: Classification

- Style Categorization Revisited
- Ensemble Classifiers
  - Can also be used across instances.
  - Use multiple SVMs to distribute complex classes.
  - This is called an instance or class based ensemble.
  - Each SVM classifier is an "expert" on one class.
  - Could be useful for the style categorization scalability problem.

Identity Feature Matrix

| Instance | Lexical (F1) | Syntax (F2) | Topic (F3) | Structure (F4) |
|---|---|---|---|---|
| ID 1 (C1) | 1.25 | 3.41 | 3.90 | 2.12 |
| ID 2 (C2) | 2.31 | 5.42 | 4.35 | 1.65 |
| ID 3 (C3) | 2.23 | 4.31 | 8.42 | 5.03 |

ID 1 SVM

| Instance | F1 | F2 | F3 | F4 |
|---|---|---|---|---|
| ID 1 (C1) | 1.25 | 3.41 | 3.90 | 2.12 |
| Other (C2) | 2.31 | 5.42 | 4.35 | 1.65 |
| Other (C2) | 2.23 | 4.31 | 8.42 | 5.03 |

ID 3 SVM

| Instance | F1 | F2 | F3 | F4 |
|---|---|---|---|---|
| Other (C2) | 1.25 | 3.41 | 3.90 | 2.12 |
| Other (C2) | 2.31 | 5.42 | 4.35 | 1.65 |
| ID 3 (C1) | 2.23 | 4.31 | 8.42 | 5.03 |
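
The relabelling behind a class based ensemble can be sketched as follows: for each identity, the same instances are relabelled so that identity is C1 and everyone else is "Other" (C2). How the experts' outputs are combined is not stated on the slide; picking the identity whose expert gives the highest score is a common choice and is an assumption here. All function names and the example scores are hypothetical.

```python
def one_vs_rest_labels(labels, target):
    """Relabel so `target` is the positive class (C1) and all others are C2."""
    return ["C1" if lab == target else "C2" for lab in labels]


def ensemble_identify(scores):
    """Pick the identity whose expert gives the highest decision score."""
    return max(scores, key=scores.get)


# One instance per identity, as in the identity feature matrix.
identities = ["ID1", "ID2", "ID3"]

# Training labels for each expert: same rows, different labelling.
training_labels = {
    ident: one_vs_rest_labels(identities, ident) for ident in identities
}

# Hypothetical decision scores from the three experts for one anonymous message.
winner = ensemble_identify({"ID1": -0.4, "ID2": 0.1, "ID3": 0.9})
```

Each expert solves a two-class problem regardless of how many authors there are, which is why this arrangement may help with the scalability problem noted earlier.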

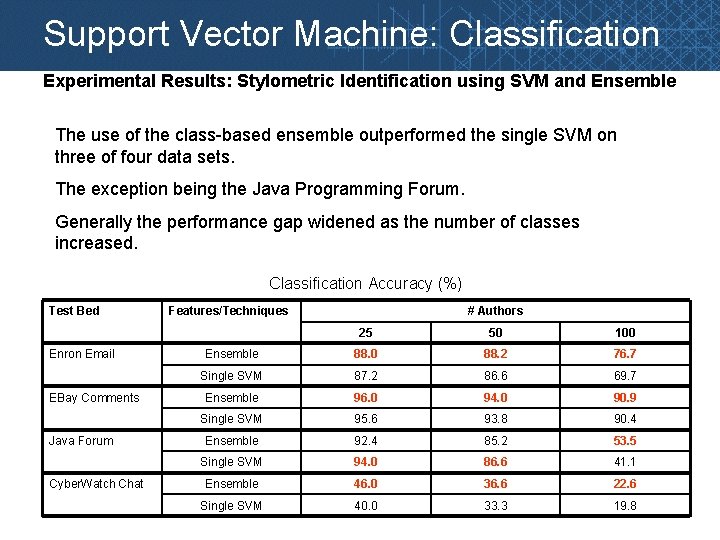

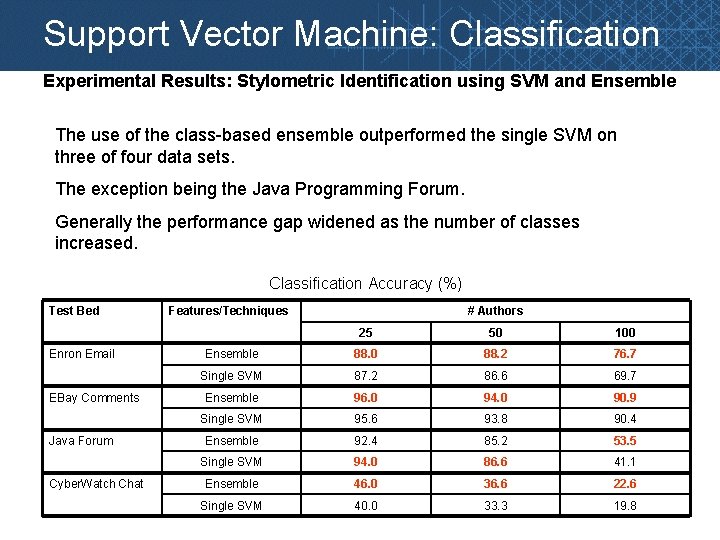

Support Vector Machine: Classification

Experimental Results: Stylometric Identification using SVM and Ensemble

- The use of the class-based ensemble outperformed the single SVM on three of four data sets, the exception being the Java Programming Forum.
- Generally the performance gap widened as the number of classes increased.

Classification Accuracy (%) by number of authors

| Test Bed | Technique | 25 | 50 | 100 |
|---|---|---|---|---|
| Enron Email | Ensemble | 88.0 | 88.2 | 76.7 |
| Enron Email | Single SVM | 87.2 | 86.6 | 69.7 |
| eBay Comments | Ensemble | 96.0 | 94.0 | 90.9 |
| eBay Comments | Single SVM | 95.6 | 93.8 | 90.4 |
| Java Forum | Ensemble | 92.4 | 85.2 | 53.5 |
| Java Forum | Single SVM | 94.0 | 86.6 | 41.1 |
| CyberWatch Chat | Ensemble | 46.0 | 36.6 | 22.6 |
| CyberWatch Chat | Single SVM | 40.0 | 33.3 | 19.8 |

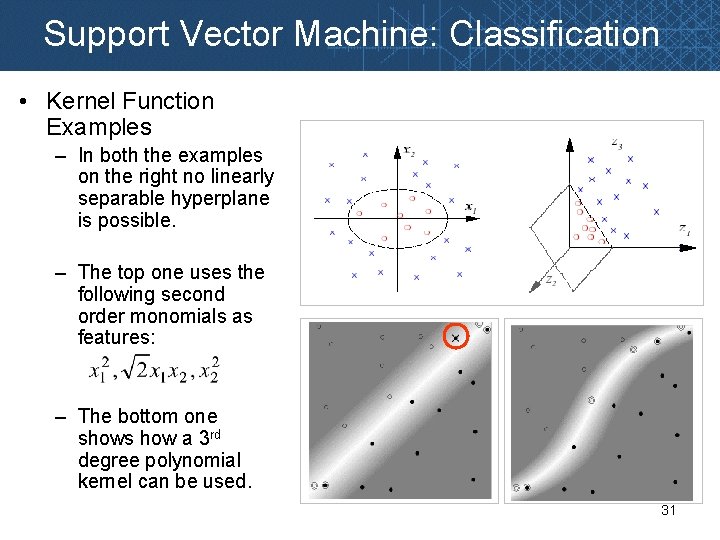

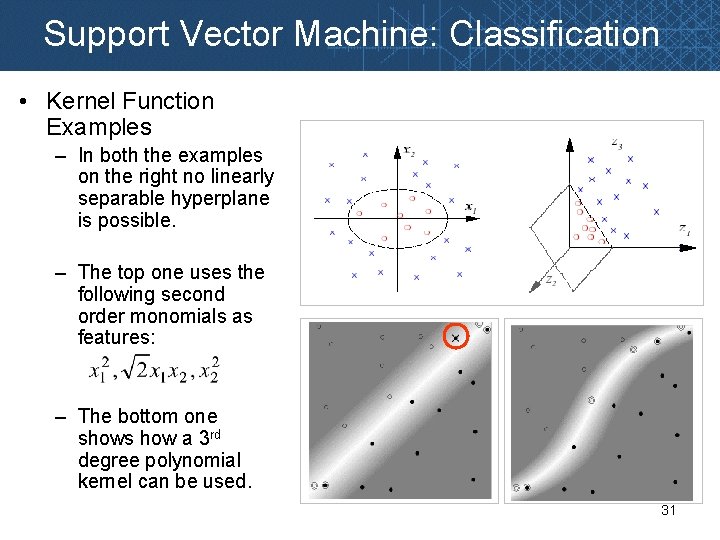

Support Vector Machine: Classification

- Kernel Function Examples
  - In both the examples on the right, no linear separating hyperplane is possible in the original feature space.
  - The top one uses the following second order monomials as features:
  - The bottom one shows how a 3rd degree polynomial kernel can be used.
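
A standard choice of second order monomials for a 2-D point (x1, x2) is (x1², √2·x1·x2, x2²); whether the slide used exactly this scaling is an assumption. The sketch below checks the key property that makes kernels useful: the dot product in the mapped space equals the degree-2 polynomial kernel computed directly in the original space. The names `phi` and `poly2_kernel` are illustrative.

```python
import math


def phi(x):
    """Map a 2-D point to its second order monomials (a common choice)."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def poly2_kernel(x, y):
    """Homogeneous degree-2 polynomial kernel: (x . y)^2."""
    return (x[0] * y[0] + x[1] * y[1]) ** 2


x, y = (1.0, 2.0), (3.0, -1.0)

# Explicit route: map both points, then take the dot product in 3-D.
explicit = sum(a * b for a, b in zip(phi(x), phi(y)))

# Implicit route: evaluate the kernel in the original 2-D space.
implicit = poly2_kernel(x, y)
```

Both routes give the same value (up to floating-point rounding), so a linear separator in the mapped space can be found without ever computing the mapping explicitly.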

Support Vector Machine: Classification

- Popular Non-linear Kernel Functions
  - Polynomial Kernels
  - Gaussian Radial Basis Function (RBF) Kernels
  - Sigmoidal Kernels
  - Tree Kernels
  - Graph Kernels
- Always be careful when designing a kernel
  - A poorly designed kernel can often reduce performance.
  - The kernel should be designed such that the similarity scores or structure created by the transformation place related instances in a manner separable from unrelated instances.
  - Garbage in, garbage out
  - Live by the kernel, die by the kernel
  - ***Insert preferred idiom here***
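
The first three kernels in the list have short closed forms, sketched below in their usual textbook versions. The parameter names and default values (`degree`, `c`, `gamma`, `kappa`) are assumptions chosen for illustration; tree and graph kernels are structure-specific and are not shown.

```python
import math


def dot(x, y):
    """Plain dot product, which is also the linear kernel."""
    return sum(a * b for a, b in zip(x, y))


def polynomial_kernel(x, y, degree=3, c=1.0):
    """Polynomial kernel: (x . y + c)^degree."""
    return (dot(x, y) + c) ** degree


def rbf_kernel(x, y, gamma=0.5):
    """Gaussian RBF kernel: exp(-gamma * ||x - y||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def sigmoid_kernel(x, y, kappa=0.1, c=0.0):
    """Sigmoidal kernel: tanh(kappa * (x . y) + c)."""
    return math.tanh(kappa * dot(x, y) + c)


a, b = (1.0, 2.0), (3.0, 4.0)
k_poly = polynomial_kernel(a, b, degree=2, c=1.0)
k_rbf = rbf_kernel(a, b)
k_sig = sigmoid_kernel(a, b)
```

The RBF kernel scores identical points as 1 and decays towards 0 with distance, so `gamma` controls how local the notion of "related instances" is.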

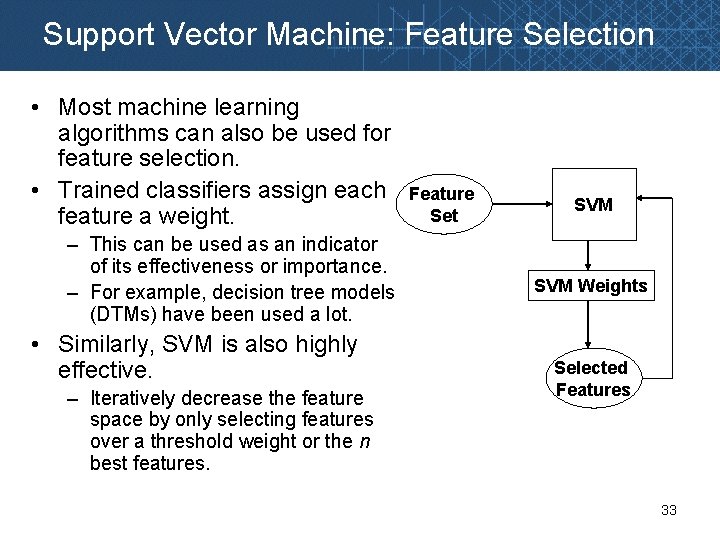

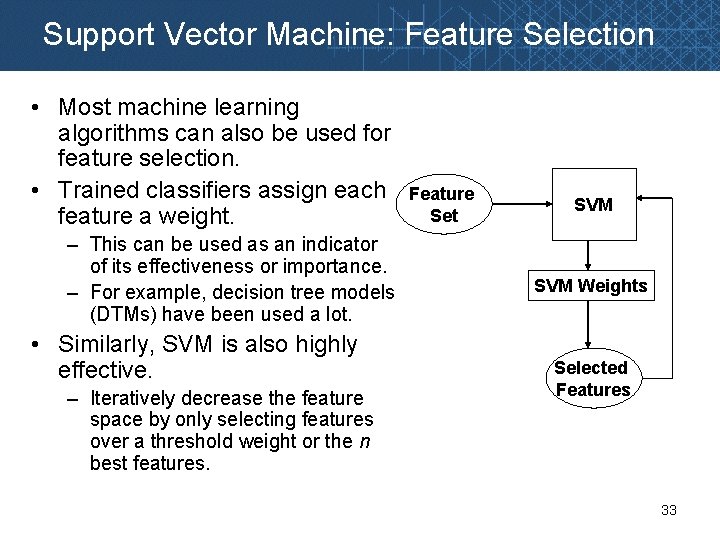

Support Vector Machine: Feature Selection

- Most machine learning algorithms can also be used for feature selection.
- Trained classifiers assign each feature a weight.
  - This can be used as an indicator of its effectiveness or importance.
  - For example, decision tree models (DTMs) have been used a lot.
- Similarly, SVM is also highly effective.
  - Iteratively decrease the feature space by only selecting features over a threshold weight or the n best features.

Feature Set → SVM Weights → Selected Features
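
One round of weight-based selection can be sketched as below. Ranking by absolute weight is an assumption (the sign of a linear SVM weight indicates which class a feature favours, while the magnitude indicates how strongly). The weights are made-up illustrative values, and `select_by_weight` is a hypothetical helper. In the iterative version described above, the classifier would be retrained on the surviving features before the next round.

```python
def select_by_weight(weights, n_best=None, threshold=None):
    """Rank features by |weight|, keeping those over `threshold` and/or the n best.

    weights maps feature name -> weight from a trained linear classifier.
    """
    ranked = sorted(weights, key=lambda f: abs(weights[f]), reverse=True)
    if threshold is not None:
        ranked = [f for f in ranked if abs(weights[f]) > threshold]
    if n_best is not None:
        ranked = ranked[:n_best]
    return ranked


# Hypothetical weights from a trained linear SVM (illustrative values only).
weights = {"great": 1.8, "terrible": -2.1, "the": 0.02, "camera": 0.1, "refund": -0.9}

top_two = select_by_weight(weights, n_best=2)
over_half = select_by_weight(weights, threshold=0.5)
```

Low-weight features such as "the" and "camera" drop out in either mode, which is how the feature space shrinks from one iteration to the next.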

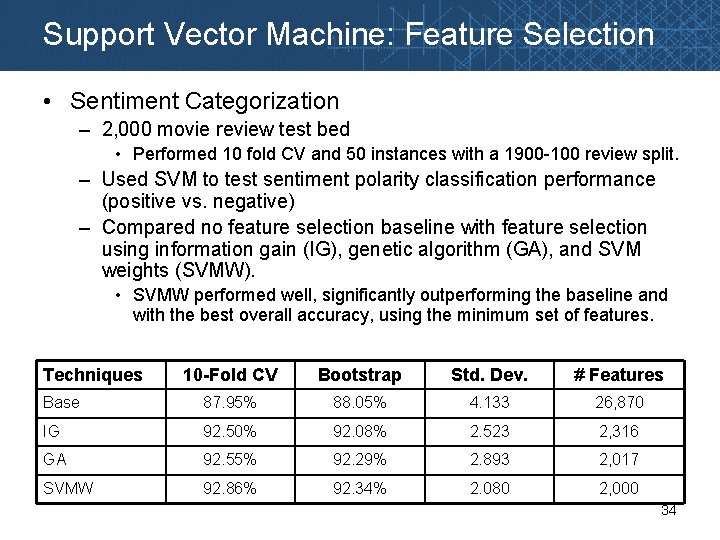

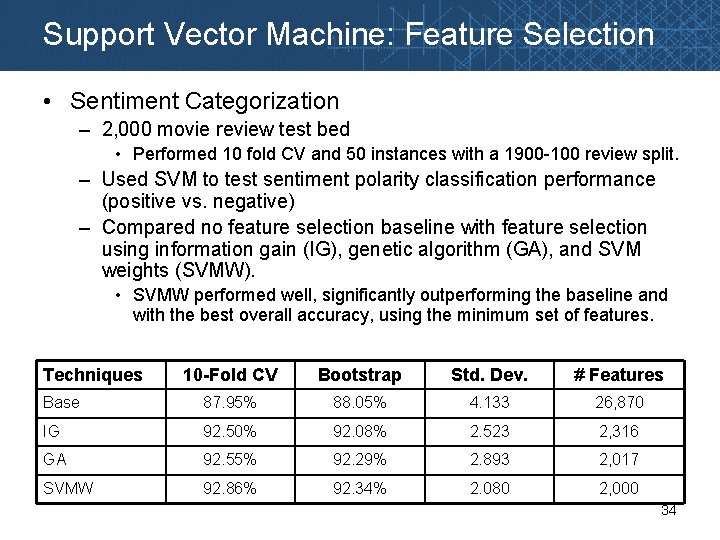

Support Vector Machine: Feature Selection

- Sentiment Categorization
  - 2,000 movie review test bed
    - Performed 10-fold CV and 50 bootstrap instances with a 1900-100 review split.
  - Used SVM to test sentiment polarity classification performance (positive vs. negative).
  - Compared a no-feature-selection baseline with feature selection using information gain (IG), genetic algorithm (GA), and SVM weights (SVMW).
    - SVMW performed well, significantly outperforming the baseline and achieving the best overall accuracy with the smallest set of features.

| Technique | 10-Fold CV | Bootstrap | Std. Dev. | # Features |
|---|---|---|---|---|
| Base | 87.95% | 88.05% | 4.133 | 26,870 |
| IG | 92.50% | 92.08% | 2.523 | 2,316 |
| GA | 92.55% | 92.29% | 2.893 | 2,017 |
| SVMW | 92.86% | 92.34% | 2.080 | 2,000 |

Support Vector Machine: Regression

- SVM regression is designed to handle continuous data predictions.
- Useful for problems where the classes lie along a continuum instead of being discrete.
  - Stock Prediction
    - Predicting the impact a news story will have on a company's stock price.
  - Sentiment Categorization
    - Differentiating 1, 2, 3, 4, and 5 star movie and product reviews.
    - Often the difference between a 1 and 2 star review is very subtle.
    - Being able to make more precise predictions can be useful here.

Principal Component Analysis: Background

- PCA is a popular dimensionality reduction technique
  - Been around since the early 1900s
  - Still used a lot for text and image processing
  - Idea is to project data into a lower dimension feature space.
    - Variables are transformed into a smaller set of principal components that account for the important variance in the feature matrix.
  - Used a lot for:
    - Data preprocessing/filtering
    - Feature selection/reduction
    - Classification and clustering
    - Visualization

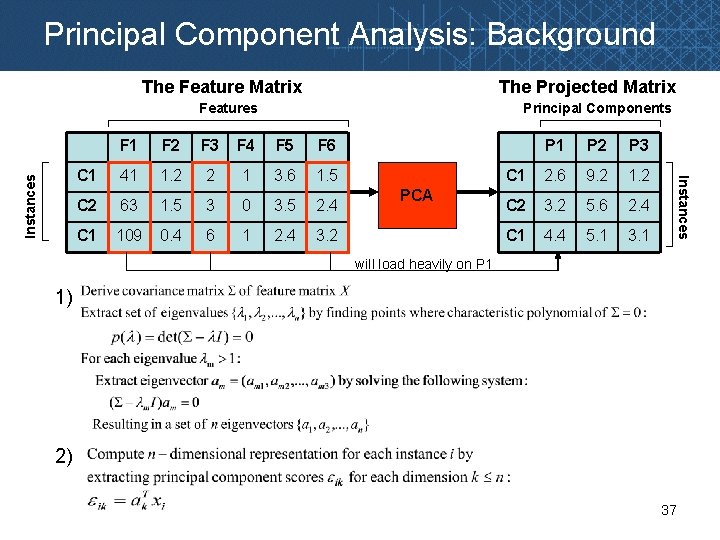

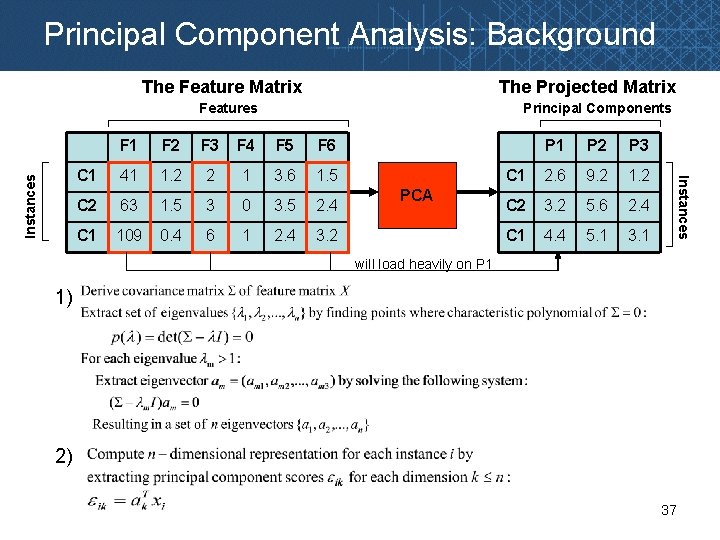

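Principal Component Analysis: Background

The Feature Matrix (rows are instances, columns are features)

| Class | F1 | F2 | F3 | F4 | F5 | F6 |
|---|---|---|---|---|---|---|
| C1 | 41 | 1.2 | 2 | 1 | 3.6 | 1.5 |
| C2 | 63 | 1.5 | 3 | 0 | 3.5 | 2.4 |
| C1 | 109 | 0.4 | 6 | 1 | 2.4 | 3.2 |

The Projected Matrix after PCA (columns are principal components)

| Class | P1 | P2 | P3 |
|---|---|---|---|
| C1 | 2.6 | 9.2 | 1.2 |
| C2 | 3.2 | 5.6 | 2.4 |
| C1 | 4.4 | 5.1 | 3.1 |

Features will load heavily on P1.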
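
The projection step can be sketched in plain Python: center the data, build the covariance matrix, find its dominant eigenvector, and project each instance onto it. Power iteration is used here only to keep the example dependency-free (a library eigen-solver would normally be used), and it recovers just the first component. The toy data and all function names are hypothetical, and the numbers are unrelated to the matrices above.

```python
import math


def center(X):
    """Subtract each column's mean so every feature has mean zero."""
    n, d = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in X]


def covariance(Xc):
    """Sample covariance matrix of already-centered data."""
    n, d = len(Xc), len(Xc[0])
    return [[sum(r[i] * r[j] for r in Xc) / (n - 1) for j in range(d)] for i in range(d)]


def first_component(C, iters=500):
    """Dominant eigenvector of C by power iteration (unit length)."""
    d = len(C)
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):
        w = [sum(C[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


def project(Xc, v):
    """Score of each centered instance on component v."""
    return [sum(a * b for a, b in zip(row, v)) for row in Xc]


# Toy data lying exactly along the direction (1, 2).
X = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]]
Xc = center(X)
p1 = first_component(covariance(Xc))
scores = project(Xc, p1)
```

The entries of `p1` are the loadings of each feature on the first principal component, and `scores` is the first column of the projected matrix for this toy data.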

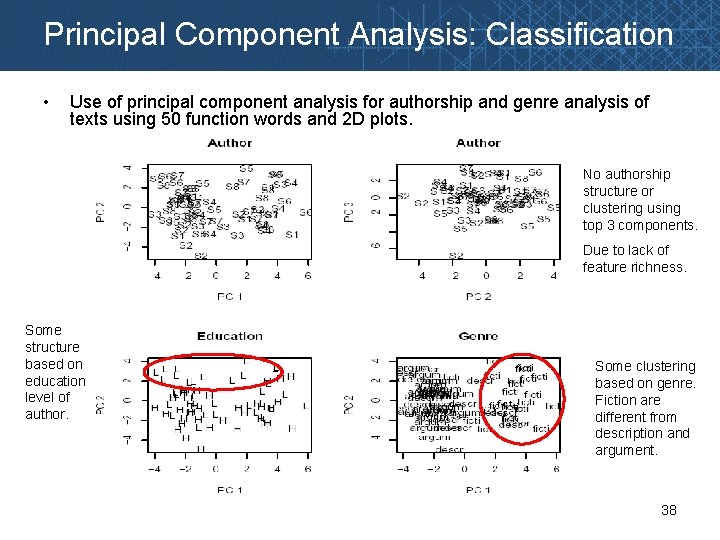

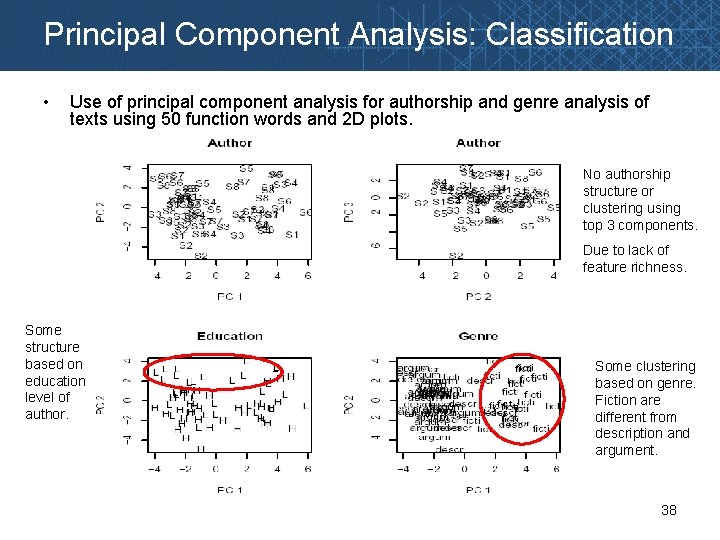

Principal Component Analysis: Classification

- Use of principal component analysis for authorship and genre analysis of texts using 50 function words and 2D plots.
  - No authorship structure or clustering using the top 3 components, due to lack of feature richness.
  - Some structure based on the education level of the author.
  - Some clustering based on genre: fiction differs from description and argument.

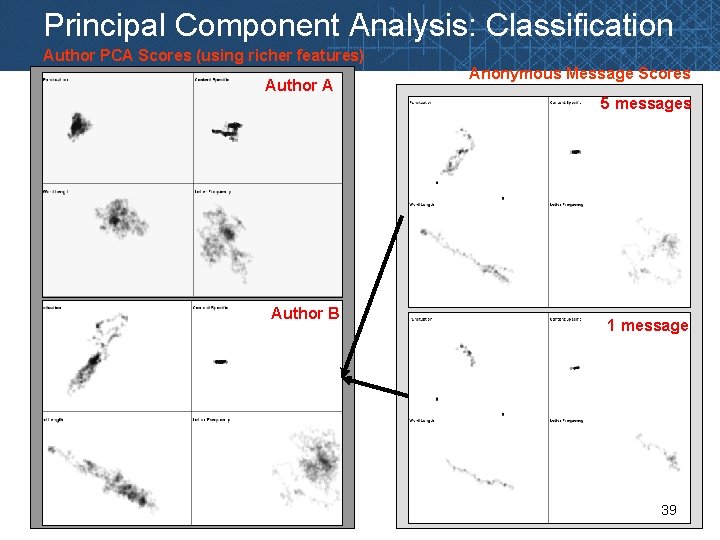

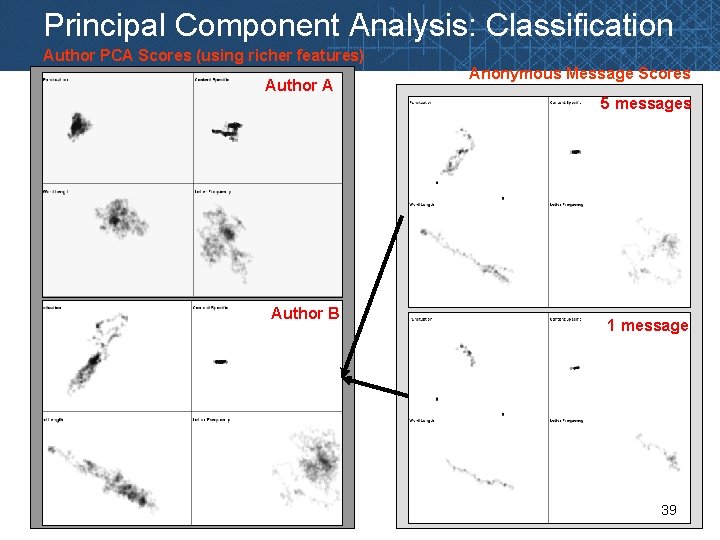

Principal Component Analysis: Classification

Figure: Author PCA Scores (using richer features) for Author A and Author B, with Anonymous Message Scores (5 messages; 1 message).

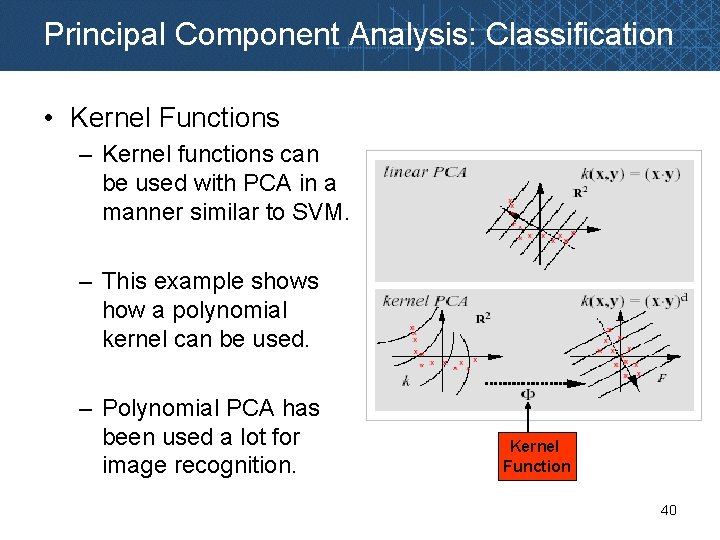

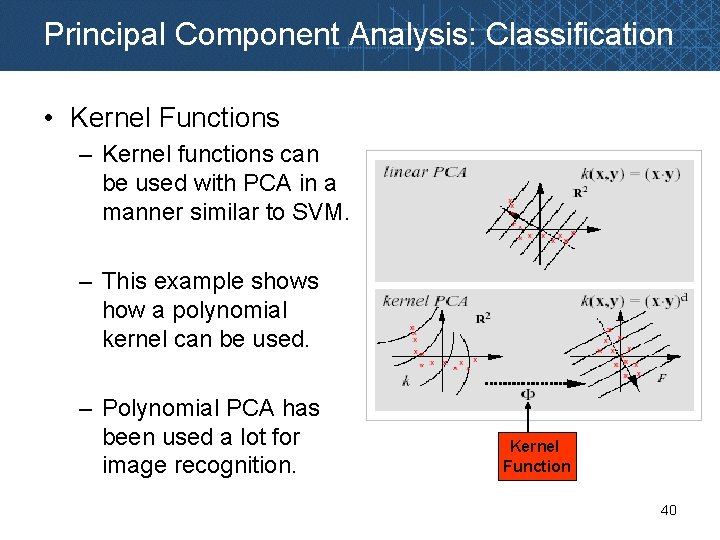

Principal Component Analysis: Classification

- Kernel Functions
  - Kernel functions can be used with PCA in a manner similar to SVM.
  - This example shows how a polynomial kernel can be used.
  - Polynomial PCA has been used a lot for image recognition.

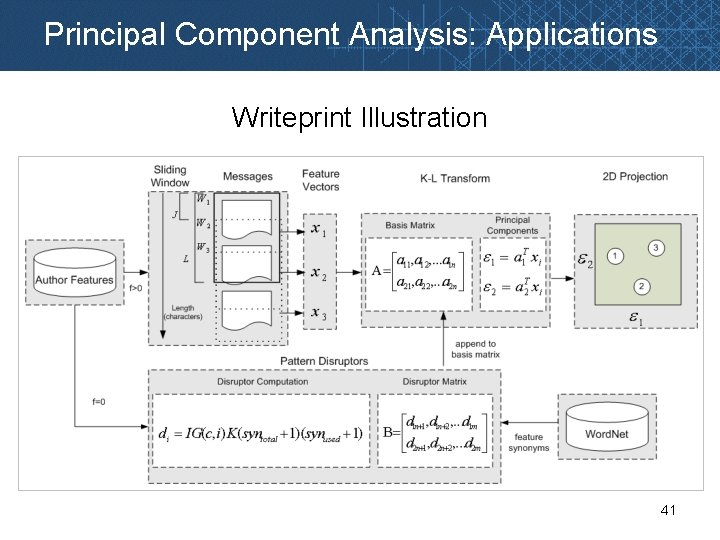

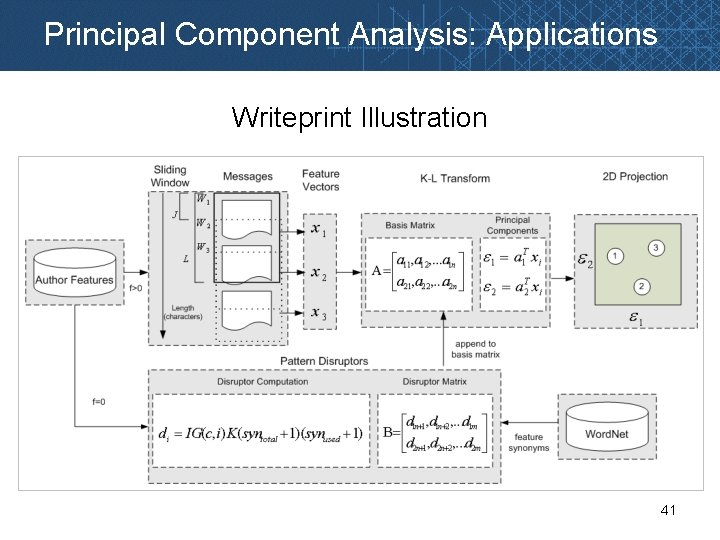

Principal Component Analysis: Applications Writeprint Illustration 41

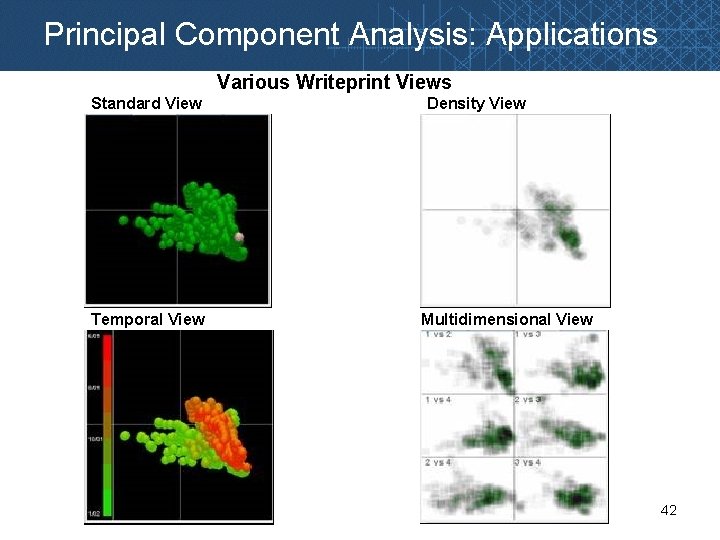

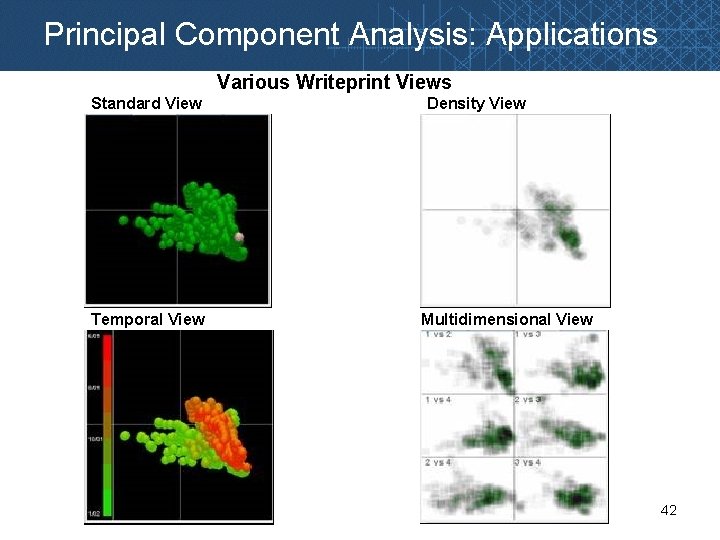

Principal Component Analysis: Applications

Various Writeprint Views

- Standard View
- Temporal View
- Density View
- Multidimensional View

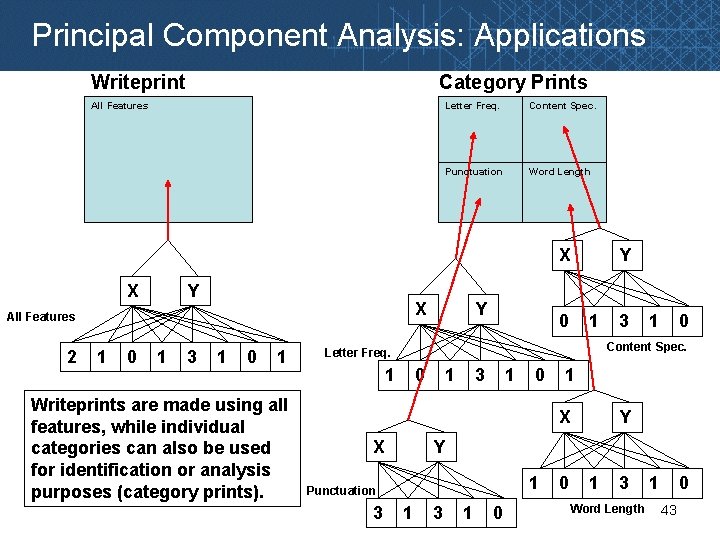

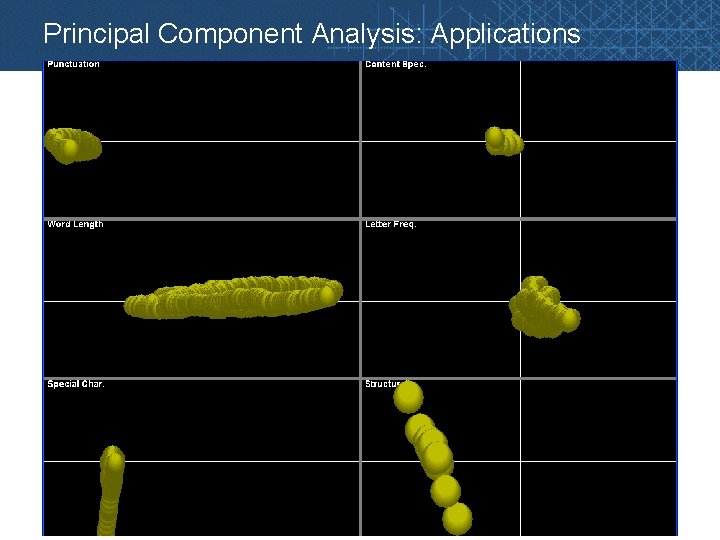

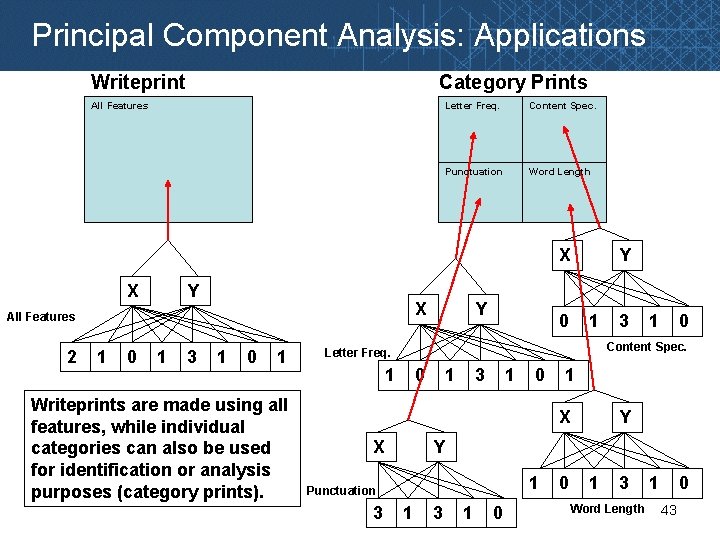

Principal Component Analysis: Applications

Writeprint Category Prints

Writeprints are made using all features, while individual categories can also be used for identification or analysis purposes (category prints).

Figure panels: All Features, Letter Freq., Content Spec., Punctuation, Word Length.

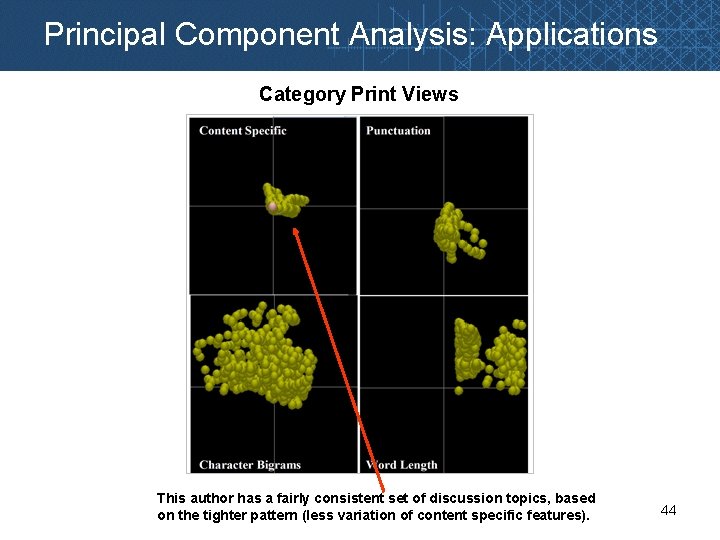

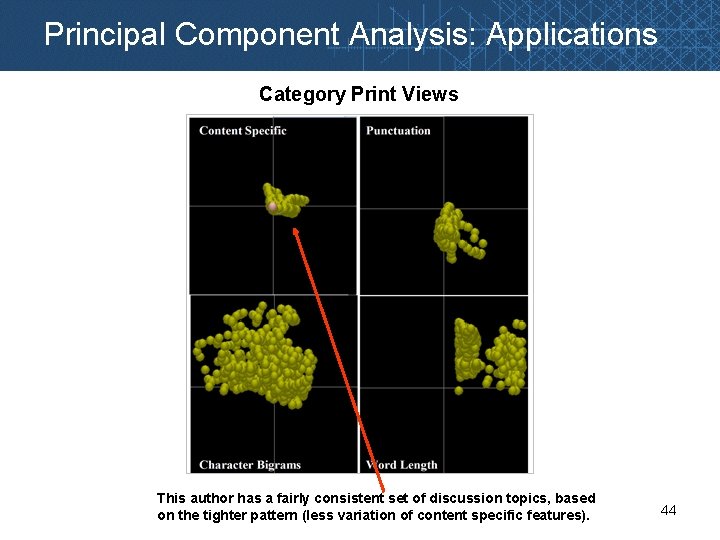

Principal Component Analysis: Applications Category Print Views This author has a fairly consistent set of discussion topics, based on the tighter pattern (less variation of content specific features). 44

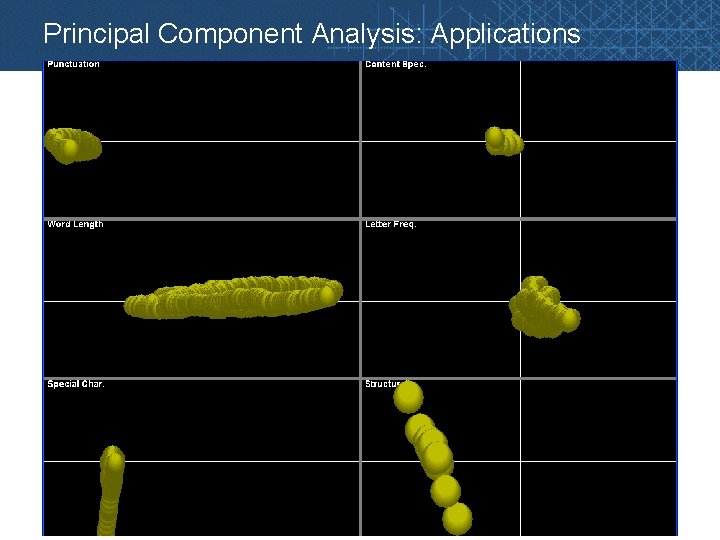

Principal Component Analysis: Applications 45

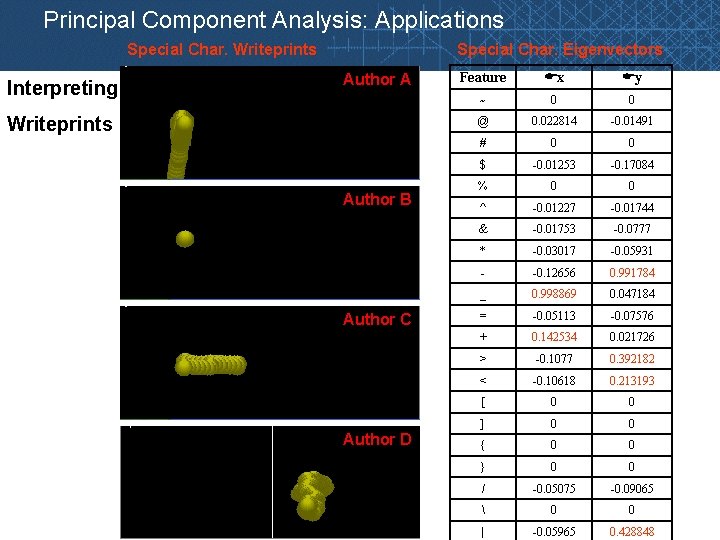

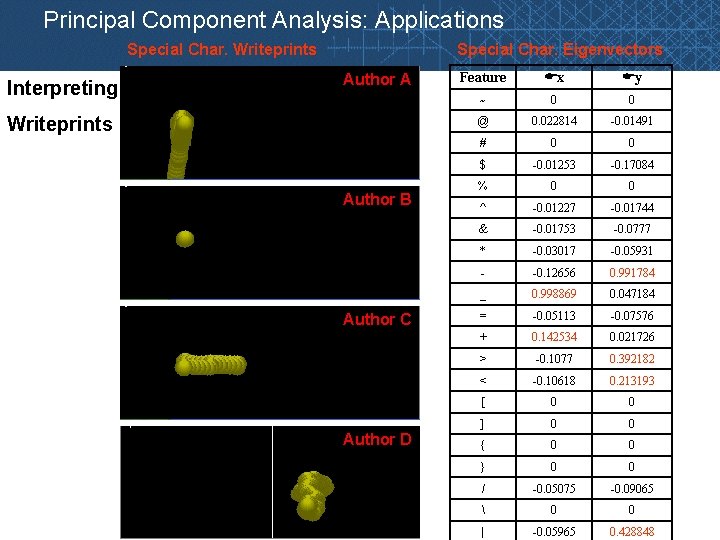

Principal Component Analysis: Applications

Special Char. Writeprints

Figure: writeprints for Author A, Author B, Author C, and Author D.

Interpreting Special Char. Eigenvectors

| Feature | x | y |
|---|---|---|
| `~` | 0 | 0 |
| `@` | 0.022814 | -0.01491 |
| `#` | 0 | 0 |
| `$` | -0.01253 | -0.17084 |
| `%` | 0 | 0 |
| `^` | -0.01227 | -0.01744 |
| `&` | -0.01753 | -0.0777 |
| `*` | -0.03017 | -0.05931 |
| `-` | -0.12656 | 0.991784 |
| `_` | 0.998869 | 0.047184 |
| `=` | -0.05113 | -0.07576 |
| `+` | 0.142534 | 0.021726 |
| `>` | -0.1077 | 0.392182 |
| `<` | -0.10618 | 0.213193 |
| `[` | 0 | 0 |
| `]` | 0 | 0 |
| `{` | 0 | 0 |
| `}` | 0 | 0 |
| `/` | -0.05075 | -0.09065 |
| | 0 | 0 |
| `\|` | -0.05965 | 0.428848 |
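
One way to read an eigenvector table like the one above is to ask which feature has the largest absolute loading on each axis. The sketch below does that for the non-zero rows of the table; the helper name `dominant_feature` is illustrative.

```python
# (x, y) loadings for the special characters with non-zero entries in the table.
loadings = {
    "@": (0.022814, -0.01491),
    "$": (-0.01253, -0.17084),
    "^": (-0.01227, -0.01744),
    "&": (-0.01753, -0.0777),
    "*": (-0.03017, -0.05931),
    "-": (-0.12656, 0.991784),
    "_": (0.998869, 0.047184),
    "=": (-0.05113, -0.07576),
    "+": (0.142534, 0.021726),
    ">": (-0.1077, 0.392182),
    "<": (-0.10618, 0.213193),
    "/": (-0.05075, -0.09065),
    "|": (-0.05965, 0.428848),
}


def dominant_feature(loadings, axis):
    """Feature with the largest absolute loading on the given axis (0 = x, 1 = y)."""
    return max(loadings, key=lambda f: abs(loadings[f][axis]))


x_driver = dominant_feature(loadings, 0)
y_driver = dominant_feature(loadings, 1)
```

For this table the x axis is driven almost entirely by the underscore and the y axis by the hyphen, so an author's position in the special character writeprint mostly reflects how often those two characters are used.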

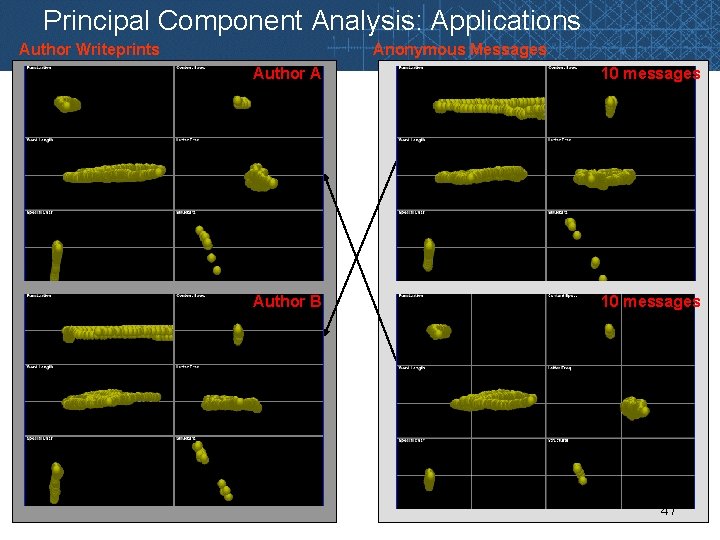

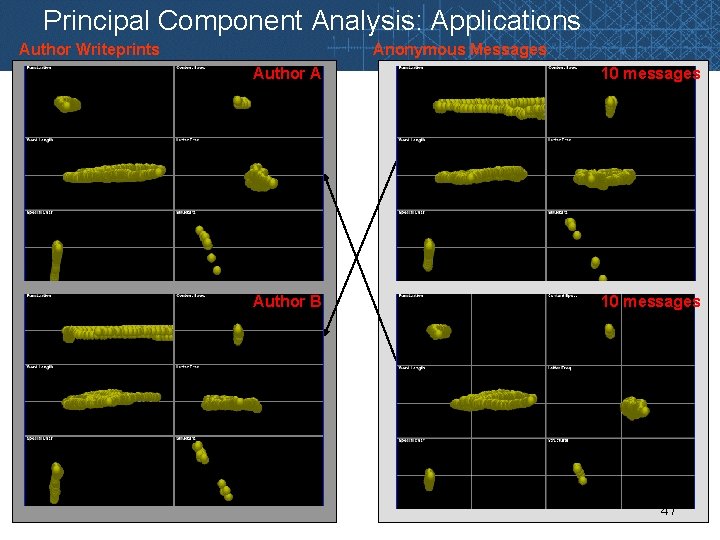

Principal Component Analysis: Applications

Figure: Author Writeprints for Author A and Author B, with Anonymous Messages (10 messages each).

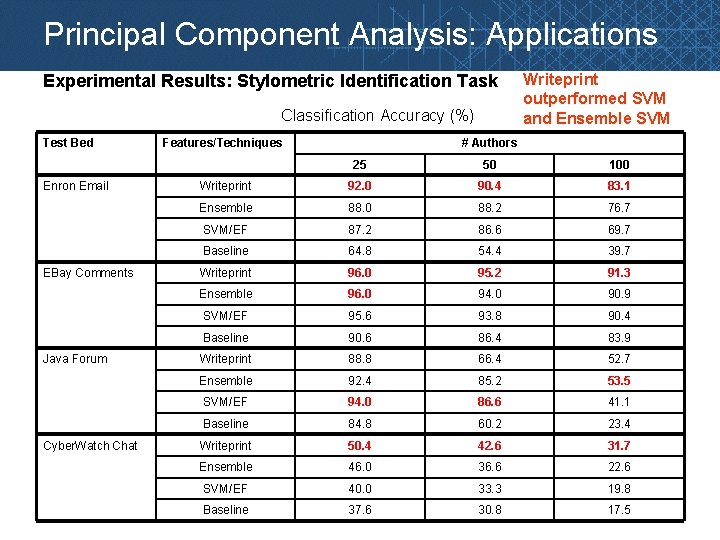

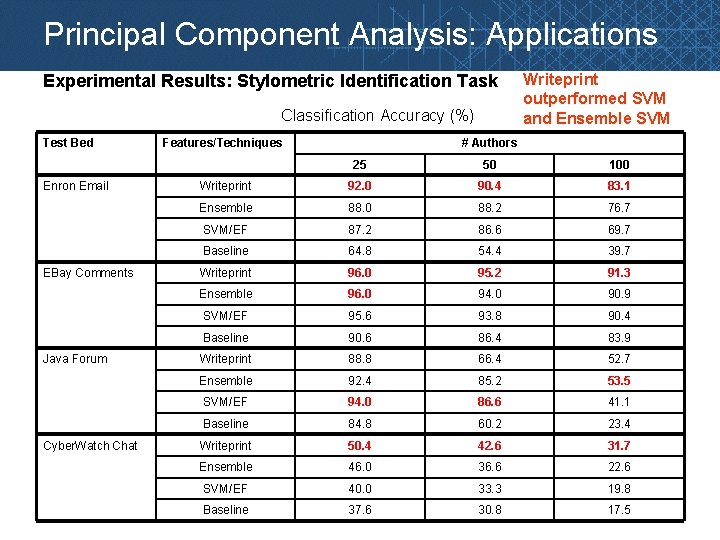

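Principal Component Analysis: Applications

Experimental Results: Stylometric Identification Task

Writeprint outperformed SVM and Ensemble SVM on all test beds except the Java Forum.

Classification Accuracy (%) by number of authors

| Test Bed | Technique | 25 | 50 | 100 |
|---|---|---|---|---|
| Enron Email | Writeprint | 92.0 | 90.4 | 83.1 |
| Enron Email | Ensemble | 88.0 | 88.2 | 76.7 |
| Enron Email | SVM/EF | 87.2 | 86.6 | 69.7 |
| Enron Email | Baseline | 64.8 | 54.4 | 39.7 |
| eBay Comments | Writeprint | 96.0 | 95.2 | 91.3 |
| eBay Comments | Ensemble | 96.0 | 94.0 | 90.9 |
| eBay Comments | SVM/EF | 95.6 | 93.8 | 90.4 |
| eBay Comments | Baseline | 90.6 | 86.4 | 83.9 |
| Java Forum | Writeprint | 88.8 | 66.4 | 52.7 |
| Java Forum | Ensemble | 92.4 | 85.2 | 53.5 |
| Java Forum | SVM/EF | 94.0 | 86.6 | 41.1 |
| Java Forum | Baseline | 84.8 | 60.2 | 23.4 |
| CyberWatch Chat | Writeprint | 50.4 | 42.6 | 31.7 |
| CyberWatch Chat | Ensemble | 46.0 | 36.6 | 22.6 |
| CyberWatch Chat | SVM/EF | 40.0 | 33.3 | 19.8 |
| CyberWatch Chat | Baseline | 37.6 | 30.8 | 17.5 |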

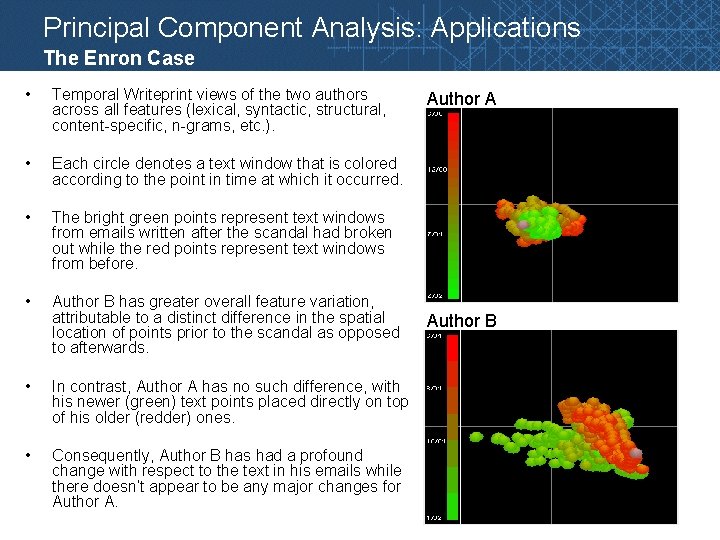

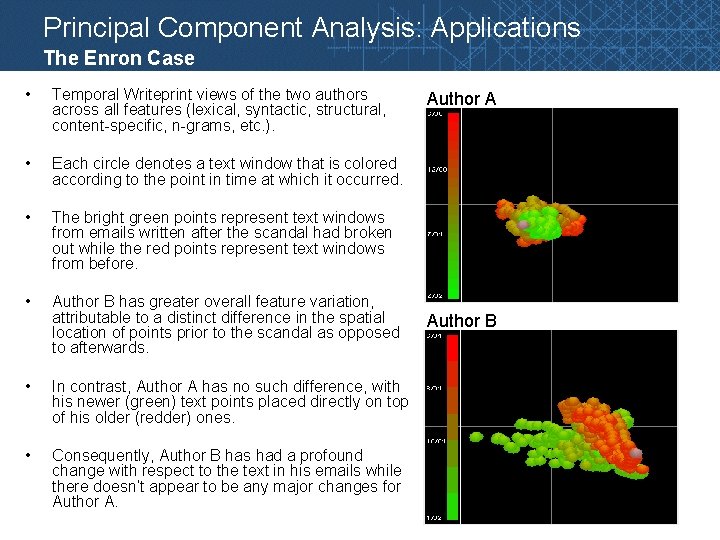

Principal Component Analysis: Applications

The Enron Case

- Temporal Writeprint views of the two authors (Author A and Author B) across all features (lexical, syntactic, structural, content-specific, n-grams, etc.).
- Each circle denotes a text window that is colored according to the point in time at which it occurred.
- The bright green points represent text windows from emails written after the scandal had broken out, while the red points represent text windows from before.
- Author B has greater overall feature variation, attributable to a distinct difference in the spatial location of points prior to the scandal as opposed to afterwards.
- In contrast, Author A has no such difference, with his newer (green) text points placed directly on top of his older (redder) ones.
- Consequently, Author B has had a profound change with respect to the text in his emails, while there do not appear to be any major changes for Author A.

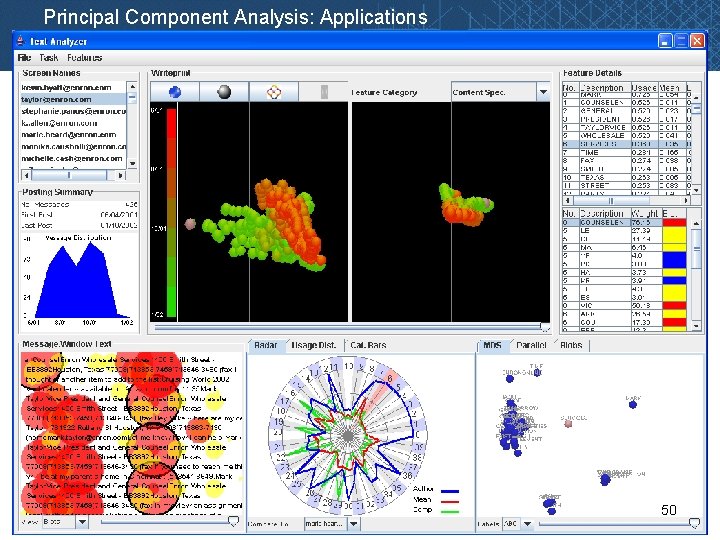

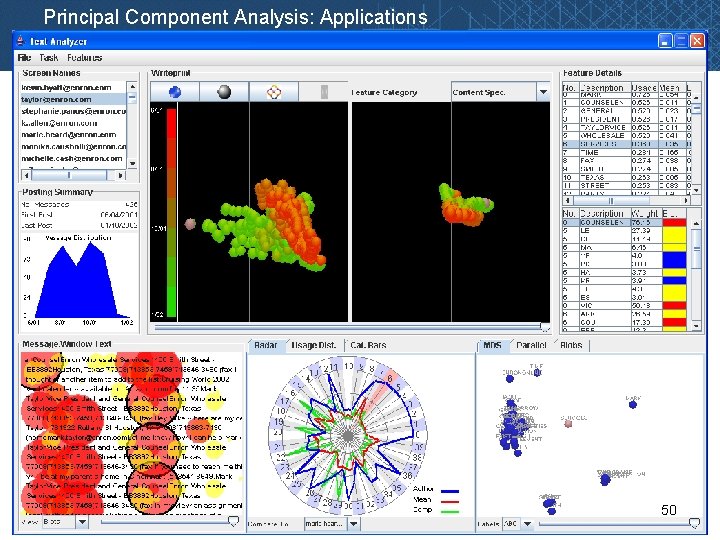

Principal Component Analysis: Applications 50

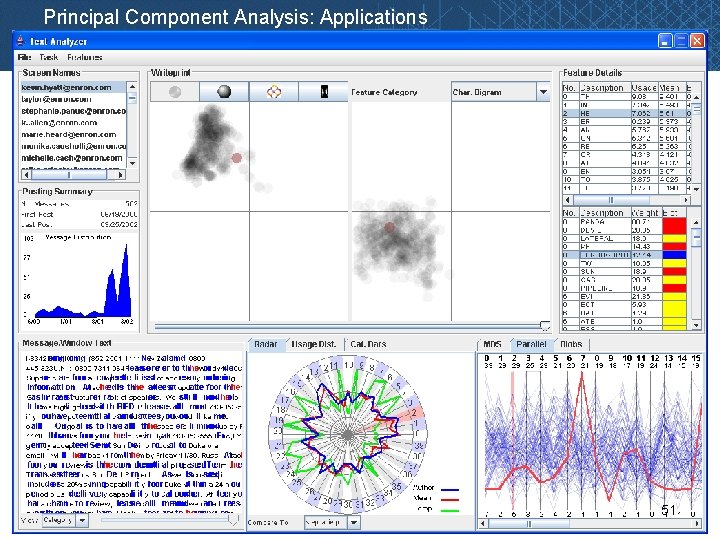

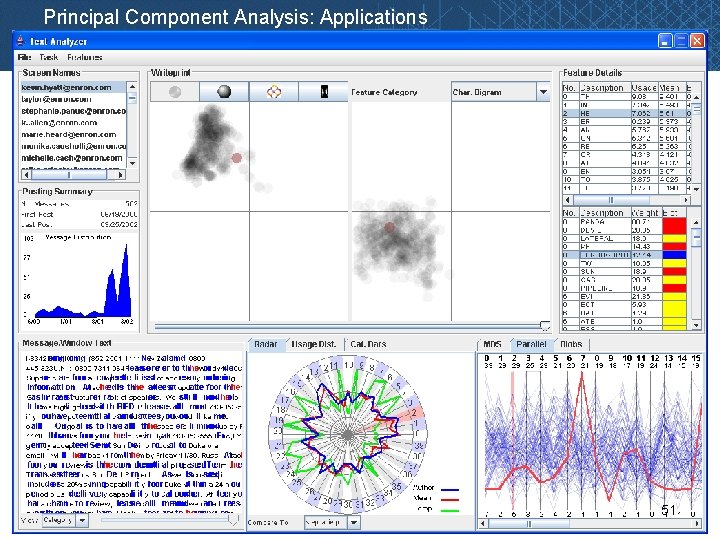

Principal Component Analysis: Applications 51

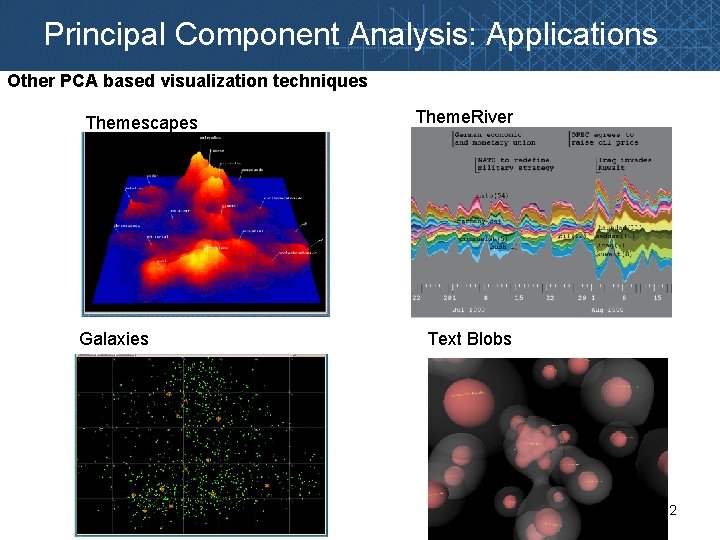

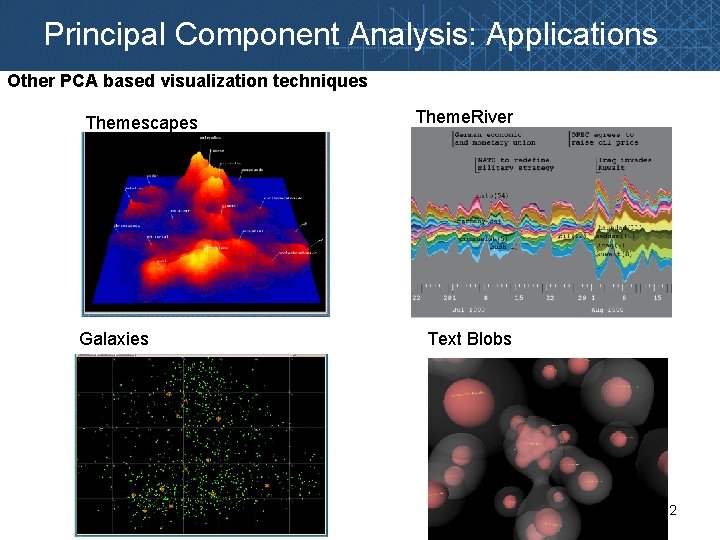

Principal Component Analysis: Applications

Other PCA based visualization techniques

- Themescapes
- Galaxies
- ThemeRiver
- Text Blobs

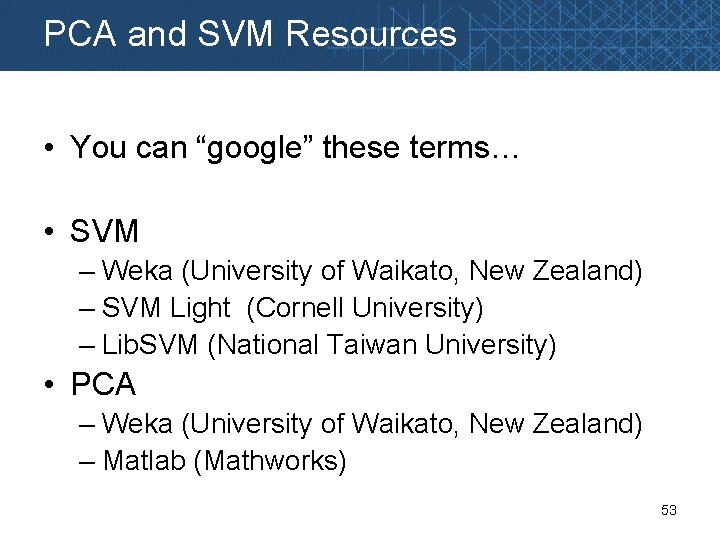

PCA and SVM Resources

- You can "google" these terms.
- SVM
  - Weka (University of Waikato, New Zealand)
  - SVM Light (Cornell University)
  - LibSVM (National Taiwan University)
- PCA
  - Weka (University of Waikato, New Zealand)
  - Matlab (Mathworks)