Using Artificial Neural Networks and Support Vector Regression

- Slides: 6

Using Artificial Neural Networks and Support Vector Regression to Model the Lyapunov Exponent

Adam Maus

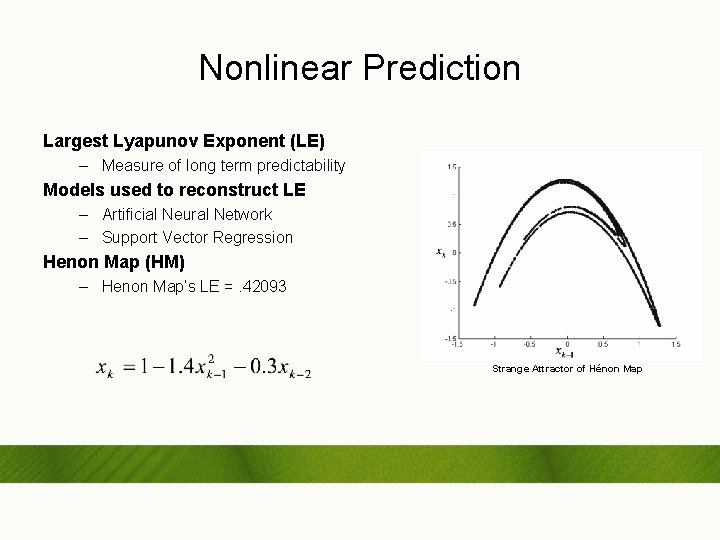

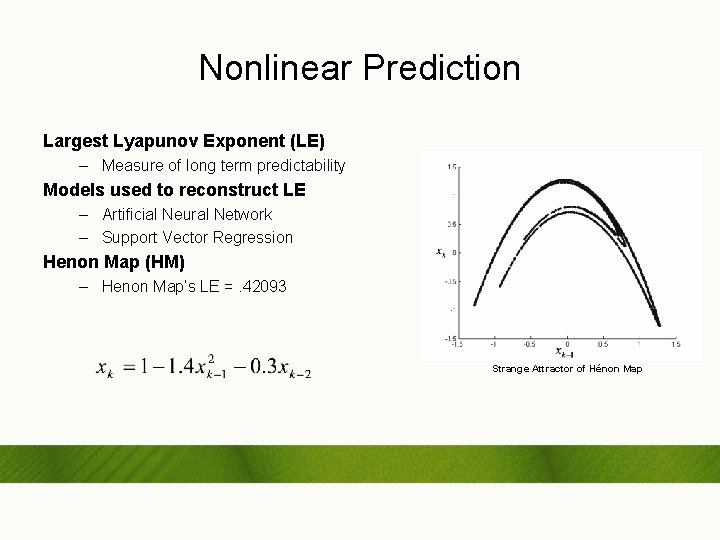

Nonlinear Prediction

- Largest Lyapunov Exponent (LE)
  - Measure of long-term predictability
- Models used to reconstruct LE
  - Artificial Neural Network
  - Support Vector Regression
- Hénon Map (HM)
  - Hénon Map's LE = 0.42093

Figure: Strange Attractor of the Hénon Map
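The largest Lyapunov exponent quoted above can be estimated numerically by following a tangent vector along an orbit and averaging its logarithmic growth rate. The sketch below is one standard way to do this, not necessarily the procedure used for the slides. It assumes the usual Hénon parameters a = 1.4 and b = 0.3 and natural logarithms; the function name, starting point, and iteration counts are illustrative choices.

```python
import math


def henon_largest_le(a=1.4, b=0.3, n_steps=100_000, n_transient=1_000):
    """Estimate the largest Lyapunov exponent of the Henon map.

    Map (assumed form): x' = 1 - a*x^2 + y,  y' = b*x.
    """
    x, y = 0.1, 0.1
    # Discard a transient so the orbit settles onto the attractor.
    for _ in range(n_transient):
        x, y = 1.0 - a * x * x + y, b * x

    # Evolve a tangent vector with the Jacobian [[-2*a*x, 1], [b, 0]].
    vx, vy = 1.0, 0.0
    log_sum = 0.0
    for _ in range(n_steps):
        vx, vy = -2.0 * a * x * vx + vy, b * vx
        x, y = 1.0 - a * x * x + y, b * x
        # Renormalise each step and accumulate the log of the stretch factor.
        norm = math.hypot(vx, vy)
        log_sum += math.log(norm)
        vx, vy = vx / norm, vy / norm
    return log_sum / n_steps


if __name__ == "__main__":
    print(f"Estimated largest LE: {henon_largest_le():.4f}")
```

The estimate depends on the orbit length and starting point, so it should land near the quoted value rather than match it digit for digit.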

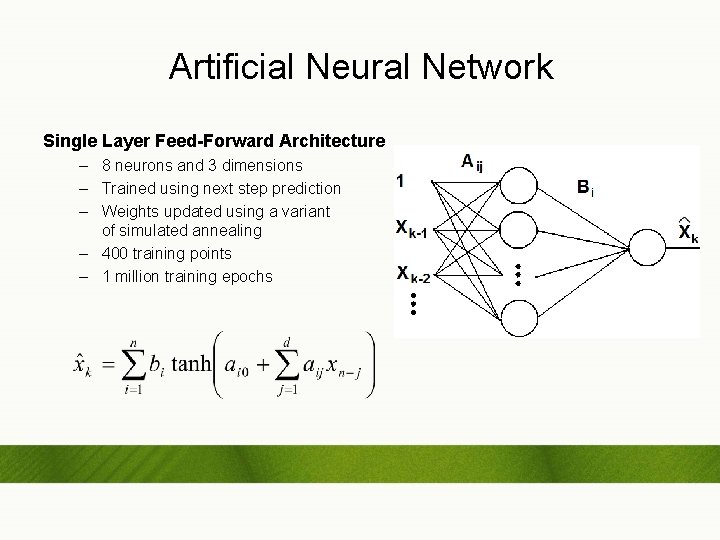

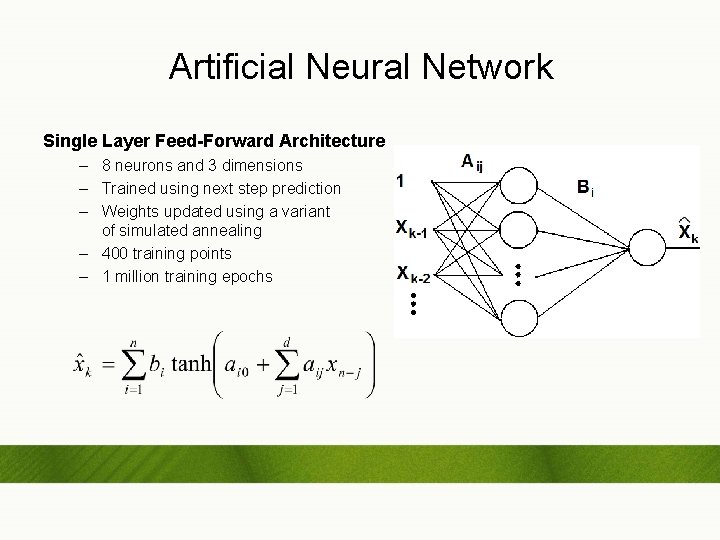

Artificial Neural Network

- Single Layer Feed-Forward Architecture
  - 8 neurons and 3 dimensions
  - Trained using next-step prediction
  - Weights updated using a variant of simulated annealing
  - 400 training points
  - 1 million training epochs
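A minimal sketch of this setup follows, under several assumptions not stated on the slide: the network has the form sum_i b_i * tanh(a_i0 + sum_j a_ij * x_{k-j}), the training data come from a scalar Hénon series with a = 1.4 and b = 0.3, and the "variant of simulated annealing" is stood in for by a simple perturb-and-keep-if-better search with a shrinking step size. All function names and the `scale` and `cooling` parameters are hypothetical.

```python
import math
import random

N_NEURONS, N_DIMS = 8, 3  # sizes taken from the slide


def henon_series(n, a=1.4, b=0.3):
    """Scalar Henon series x[k+1] = 1 - a*x[k]^2 + b*x[k-1] (assumed parameters)."""
    xs = [0.1, 0.1]
    while len(xs) < n + 100:
        xs.append(1.0 - a * xs[-1] ** 2 + b * xs[-2])
    return xs[100:]  # drop the first 100 values as a transient


def predict(weights, lags):
    """Assumed model: sum_i b[i] * tanh(a[i][0] + sum_j a[i][j+1] * lags[j])."""
    a, b = weights
    return sum(
        b[i] * math.tanh(a[i][0] + sum(a[i][j + 1] * lags[j] for j in range(N_DIMS)))
        for i in range(N_NEURONS)
    )


def mse(weights, series):
    """Mean square error of next-step prediction over the series."""
    errs = [
        # lags are ordered most recent first: x[k-1], x[k-2], x[k-3]
        (predict(weights, series[k - N_DIMS:k][::-1]) - series[k]) ** 2
        for k in range(N_DIMS, len(series))
    ]
    return sum(errs) / len(errs)


def train(series, epochs=200, scale=0.5, cooling=0.995, seed=0):
    """Perturb all weights each epoch; keep the trial only if the error drops."""
    rng = random.Random(seed)
    a = [[rng.gauss(0, 1) for _ in range(N_DIMS + 1)] for _ in range(N_NEURONS)]
    b = [rng.gauss(0, 1) for _ in range(N_NEURONS)]
    best, best_err = (a, b), mse((a, b), series)
    history = [best_err]
    for _ in range(epochs):
        trial_a = [[w + rng.gauss(0, scale) for w in row] for row in best[0]]
        trial_b = [w + rng.gauss(0, scale) for w in best[1]]
        err = mse((trial_a, trial_b), series)
        if err < best_err:
            best, best_err = (trial_a, trial_b), err
        scale *= cooling  # shrink the perturbation size over time
        history.append(best_err)
    return best, history
```

Calling `train(henon_series(400))` mirrors the 400 training points on the slide; the default of 200 epochs is far below the 1 million quoted and only keeps the example quick to run.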

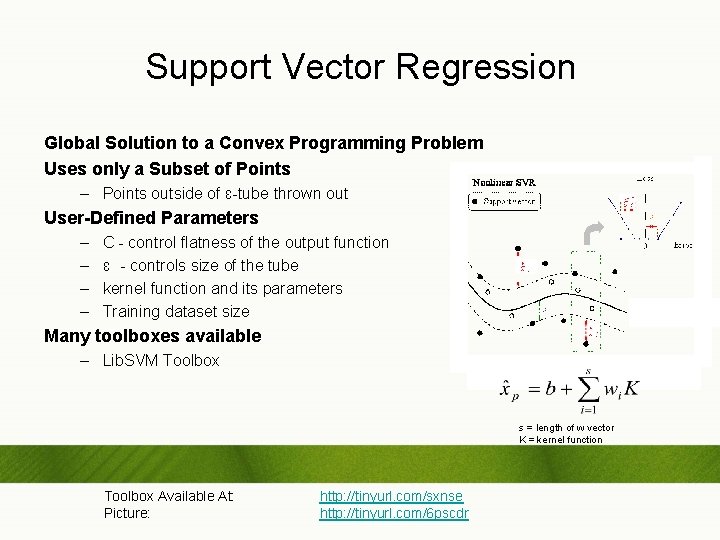

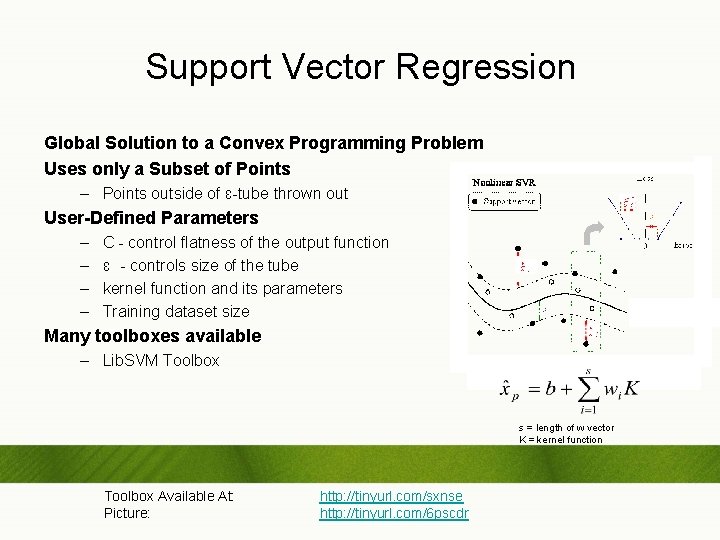

Support Vector Regression

- Global solution to a convex programming problem
- Uses only a subset of points
  - Points strictly inside the ɛ-tube do not contribute to the solution
- User-defined parameters
  - C: controls flatness of the output function
  - ɛ: controls size of the tube
  - Kernel function and its parameters
  - Training dataset size
- Many toolboxes available
  - LIBSVM toolbox

Equation legend: s = length of w vector, K = kernel function

Toolbox available at: http://tinyurl.com/sxnse

Picture: http://tinyurl.com/6pscdr
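To make the roles of ɛ, the kernel K, and the weight vector w concrete, here is a small sketch of the ɛ-insensitive loss and a kernel-expansion prediction. It assumes the standard SVR output form f(x) = sum over i = 1..s of w_i * K(x_i, x) + b and a Gaussian (RBF) kernel; the slide does not say which kernel was used, and the function names and the `gamma` parameter are illustrative. It does not solve the convex training problem, which a toolbox such as LIBSVM handles.

```python
import math


def eps_insensitive_loss(y_true, y_pred, eps):
    """Zero for errors within the eps-tube, linear in the excess outside it."""
    return max(0.0, abs(y_true - y_pred) - eps)


def rbf_kernel(u, v, gamma=1.0):
    """Gaussian (RBF) kernel; gamma is an assumed kernel parameter."""
    return math.exp(-gamma * sum((ui - vi) ** 2 for ui, vi in zip(u, v)))


def svr_predict(x, support_vectors, w, bias, kernel=rbf_kernel):
    """f(x) = sum_{i=1..s} w[i] * K(support_vectors[i], x) + bias, with s = len(w)."""
    return sum(wi * kernel(sv, x) for wi, sv in zip(w, support_vectors)) + bias
```

A training point whose loss is zero sits inside the tube and ends up with no entry in `w`, which is why the fitted model depends on only a subset of the data.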

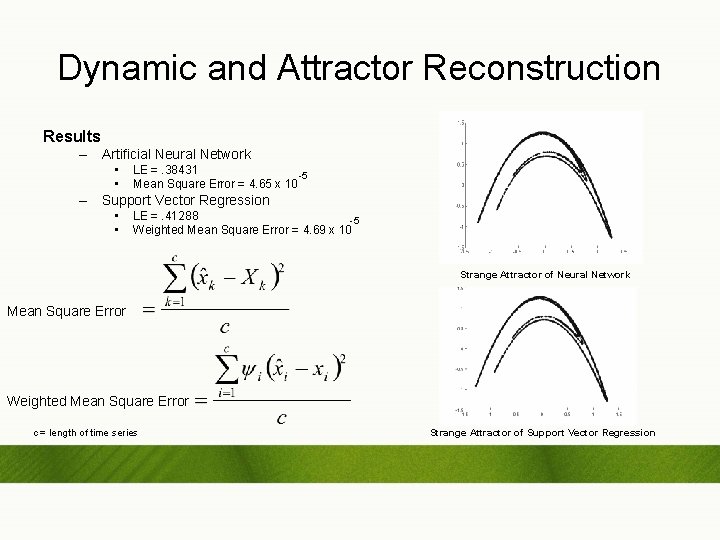

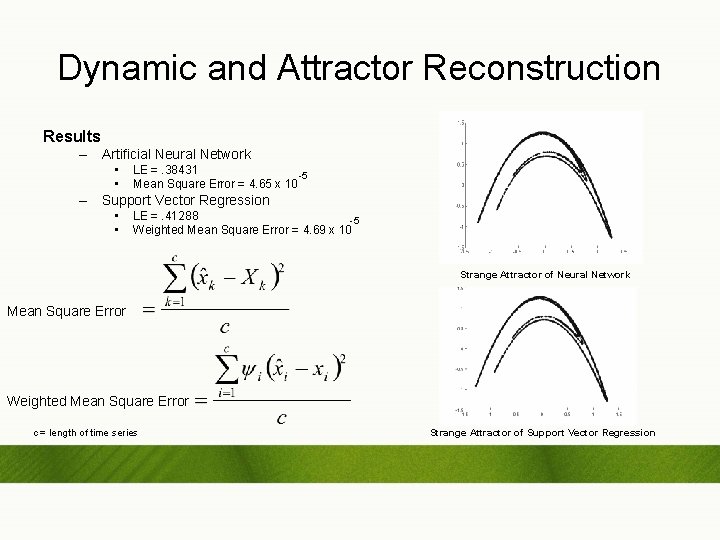

Dynamic and Attractor Reconstruction

- Results
  - Artificial Neural Network
    - LE = 0.38431
    - Mean Square Error = 4.65 × 10⁻⁵
  - Support Vector Regression
    - LE = 0.41288
    - Weighted Mean Square Error = 4.69 × 10⁻⁵

Equation legend (Mean Square Error, Weighted Mean Square Error): c = length of time series

Figures: Strange Attractor of Neural Network; Strange Attractor of Support Vector Regression
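The two error measures named above can be sketched as follows. The mean square error is the usual average of squared residuals over the c points of the series. The exact weighting used for the weighted version is not recoverable from the slide text, so `decaying_weights` below is a purely hypothetical choice that emphasises early points; treat it as a placeholder for whatever weighting was actually applied.

```python
def mean_square_error(actual, predicted):
    """Average squared residual; c is the length of the time series."""
    c = len(actual)
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / c


def weighted_mean_square_error(actual, predicted, weights):
    """Weighted average of squared residuals, normalised by the total weight."""
    total = sum(weights)
    return sum(w * (a - p) ** 2 for w, a, p in zip(weights, actual, predicted)) / total


def decaying_weights(c, rate=0.9):
    """Hypothetical weights rate**k for k = 0..c-1, largest for the first points."""
    return [rate ** k for k in range(c)]
```

With uniform weights the weighted measure reduces to the plain mean square error, so the two numbers are only directly comparable when the weighting is known.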

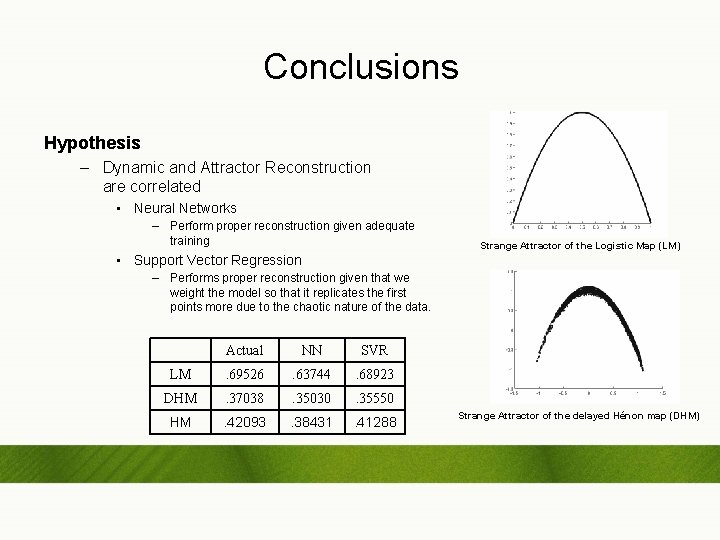

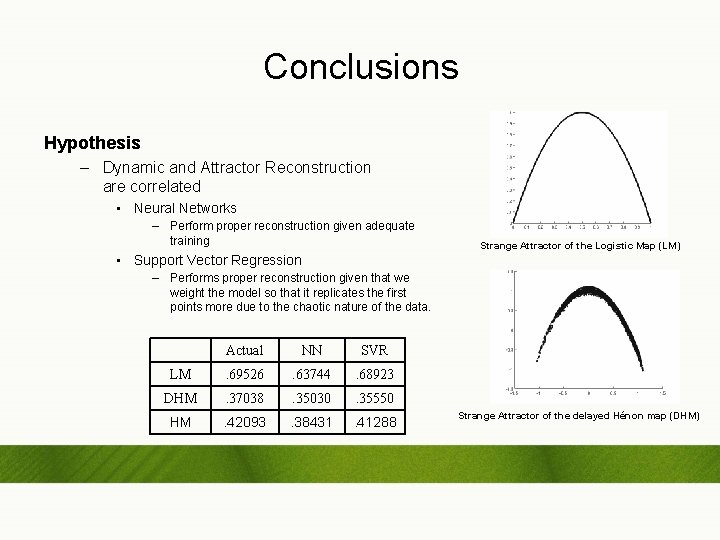

Conclusions

- Hypothesis
  - Dynamic and attractor reconstruction are correlated
    - Neural Networks: perform proper reconstruction given adequate training
    - Support Vector Regression: performs proper reconstruction given that we weight the model so that it replicates the first points more, due to the chaotic nature of the data

Largest Lyapunov exponents by system:

| System | Actual | NN | SVR |
|---|---|---|---|
| LM | 0.69526 | 0.63744 | 0.68923 |
| DHM | 0.37038 | 0.35030 | 0.35550 |
| HM | 0.42093 | 0.38431 | 0.41288 |

Figures: Strange Attractor of the Logistic Map (LM); Strange Attractor of the Delayed Hénon Map (DHM)
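One way to read the exponent values above is to express each model's estimate as a relative error against the actual value. The slides do not report this metric; the short script below only performs that arithmetic on the numbers given, with variable names chosen for illustration.

```python
# Largest Lyapunov exponents as listed on the conclusions slide.
actual = {"LM": 0.69526, "DHM": 0.37038, "HM": 0.42093}
nn = {"LM": 0.63744, "DHM": 0.35030, "HM": 0.38431}
svr = {"LM": 0.68923, "DHM": 0.35550, "HM": 0.41288}


def relative_error(estimate, reference):
    """Absolute deviation from the reference, as a fraction of the reference."""
    return abs(estimate - reference) / reference


for system in actual:
    nn_err = relative_error(nn[system], actual[system])
    svr_err = relative_error(svr[system], actual[system])
    print(f"{system}: NN {nn_err:.2%}, SVR {svr_err:.2%}")
```

On these three systems the SVR estimate is closer to the actual exponent than the neural network estimate in every case.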