Parallel & Distributed Statistical Model Checking for Parameterized Timed Automata

Kim G. Larsen, Peter Bulychev, Alexandre David, Axel Legay, Marius Mikucionis

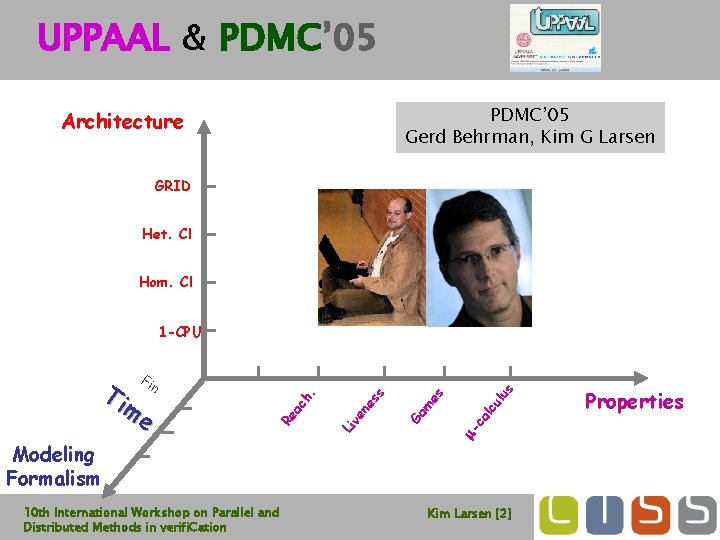

UPPAAL & PDMC'05: Gerd Behrmann, Kim G. Larsen

[Diagram: three axes labeled Architecture (1-CPU, Hom. Cl., Het. Cl., GRID), Modeling Formalism, and Properties]

10th International Workshop on Parallel and Distributed Methods in verifiCation, Kim Larsen

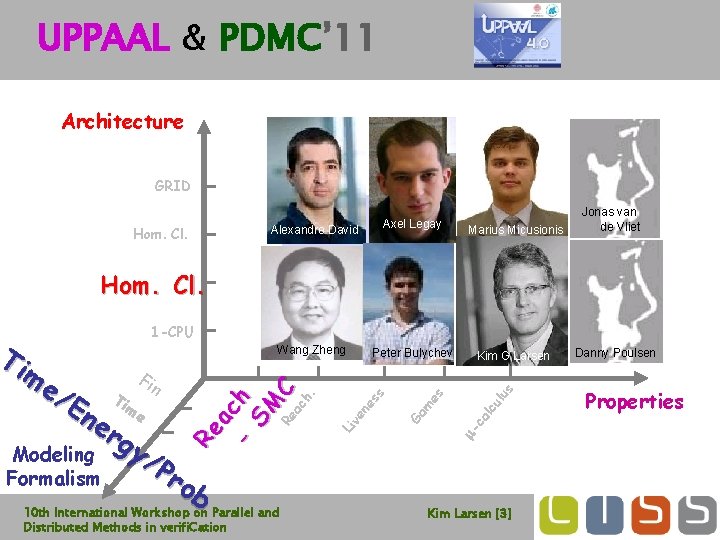

UPPAAL & PDMC'11: Alexandre David, Axel Legay, Marius Mikucionis, Wang Zheng, Peter Bulychev, Kim G. Larsen, Jonas van de Vliet, Danny Poulsen

[Diagram: three axes labeled Architecture (1-CPU, Hom. Cl., GRID), Modeling Formalism, and Properties]

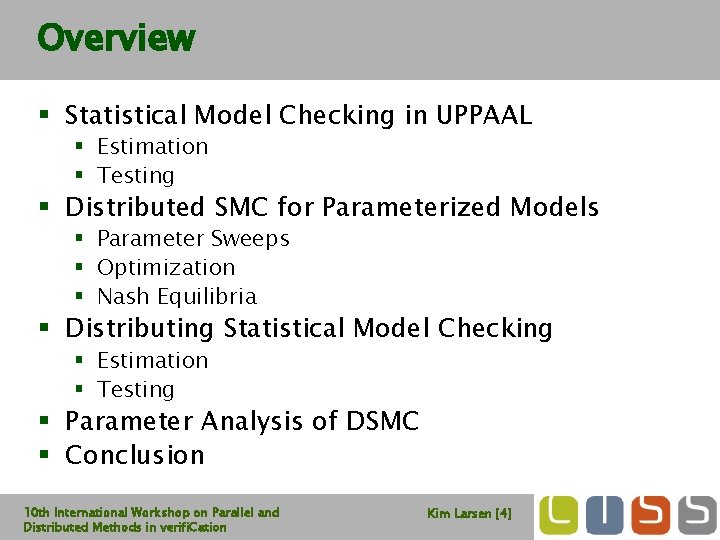

Overview

- Statistical Model Checking in UPPAAL
  - Estimation
  - Testing
- Distributed SMC for Parameterized Models
  - Parameter Sweeps
  - Optimization
  - Nash Equilibria
- Distributing Statistical Model Checking
  - Estimation
  - Testing
  - Parameter Analysis of DSMC
- Conclusion


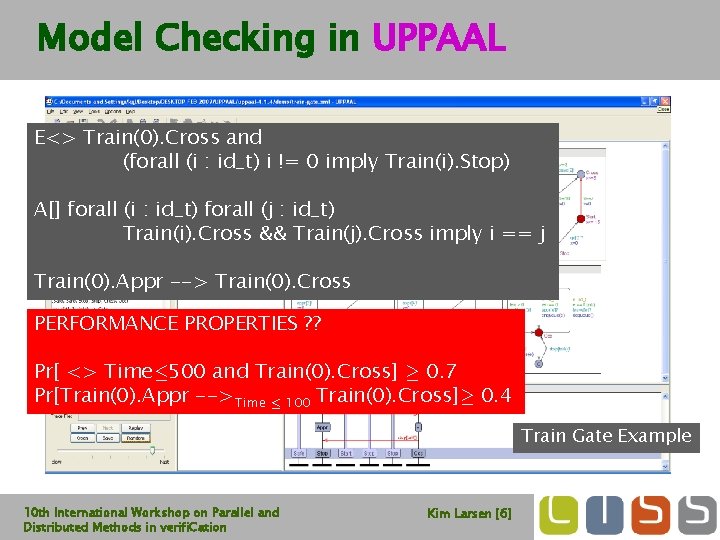

Model Checking in UPPAAL (Train Gate Example)

- `E<> Train(0).Cross and (forall (i : id_t) i != 0 imply Train(i).Stop)`
- `A[] forall (i : id_t) forall (j : id_t) Train(i).Cross && Train(j).Cross imply i == j`
- `Train(0).Appr --> Train(0).Cross`

PERFORMANCE PROPERTIES ??

- Pr[ <> Time ≤ 500 and Train(0).Cross ] ≥ 0.7
- Pr[ Train(0).Appr -->_{Time ≤ 100} Train(0).Cross ] ≥ 0.4

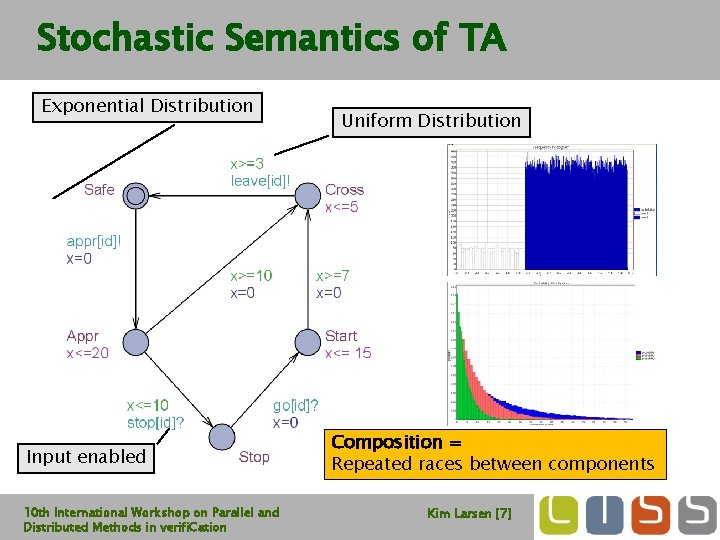

Stochastic Semantics of TA

- Uniform Distribution
- Exponential Distribution
- Input enabled
- Composition = Repeated races between components
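The "repeated races" idea can be illustrated with a small simulation. This is only a sketch under stated assumptions: the function names, the component table, and the rule that bounded delays are uniform while unbounded delays are exponential are illustrative choices, and every component simply resamples its delay after each race.

```python
import random

def sample_delay(rng, lower, upper=None, rate=1.0):
    """Pick a delay for one component.

    Assumption: a bounded delay window uses a uniform distribution and an
    unbounded one uses an exponential distribution with the given rate.
    """
    if upper is None:
        return lower + rng.expovariate(rate)
    return rng.uniform(lower, upper)

def race(rng, components):
    """One race: every component samples a delay, the smallest delay wins.

    components maps a name to (lower, upper, rate); upper=None means unbounded.
    Returns (winner_name, winning_delay).
    """
    delays = {
        name: sample_delay(rng, lower, upper, rate)
        for name, (lower, upper, rate) in components.items()
    }
    winner = min(delays, key=delays.get)
    return winner, delays[winner]

def simulate(rng, components, time_bound):
    """Repeat races until the time bound would be exceeded; return the trace."""
    now, trace = 0.0, []
    while True:
        winner, delay = race(rng, components)
        if now + delay > time_bound:
            return trace
        now += delay
        trace.append((round(now, 3), winner))

if __name__ == "__main__":
    # Hypothetical components: A delays within [0, 2], B waits at least 1.
    parts = {"A": (0.0, 2.0, 1.0), "B": (1.0, None, 0.5)}
    print(simulate(random.Random(0), parts, time_bound=10.0))
```

Each entry of the returned trace is a (time, winner) pair, so the sequence of winners is one random run of the composed system.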

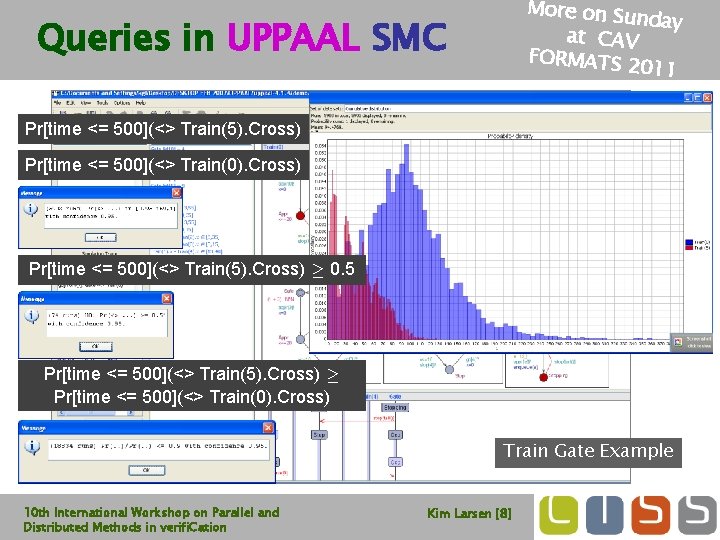

Queries in UPPAAL SMC (Train Gate Example)

- `Pr[time <= 500](<> Train(5).Cross)`
- `Pr[time <= 500](<> Train(0).Cross)`
- `Pr[time <= 500](<> Train(5).Cross) >= 0.5`
- `Pr[time <= 500](<> Train(5).Cross) >= Pr[time <= 500](<> Train(0).Cross)`

More on Sunday at CAV; FORMATS 2011.
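A probability query of the first kind can be answered by plain Monte Carlo estimation. The sketch below assumes the Chernoff-Hoeffding bound is used to fix the number of runs; `toy_run` is a hypothetical stand-in for one simulation of the model and is not part of UPPAAL.

```python
import math
import random

def runs_needed(epsilon, delta):
    """Runs required so that |estimate - p| <= epsilon holds with
    probability at least 1 - delta (Chernoff-Hoeffding bound)."""
    return math.ceil(math.log(2.0 / delta) / (2.0 * epsilon ** 2))

def estimate(run_once, epsilon=0.05, delta=0.05, seed=0):
    """Estimate the probability that run_once(rng) returns True."""
    rng = random.Random(seed)
    n = runs_needed(epsilon, delta)
    hits = sum(1 for _ in range(n) if run_once(rng))
    return hits / n, n

def toy_run(rng):
    # Hypothetical: pretend the property holds on 30% of the runs.
    return rng.random() < 0.3

if __name__ == "__main__":
    p_hat, n = estimate(toy_run)
    print(f"estimate {p_hat:.3f} from {n} runs")
```

The number of runs depends only on the requested precision and confidence, not on the model, which is what makes the runs easy to hand out to several cores.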

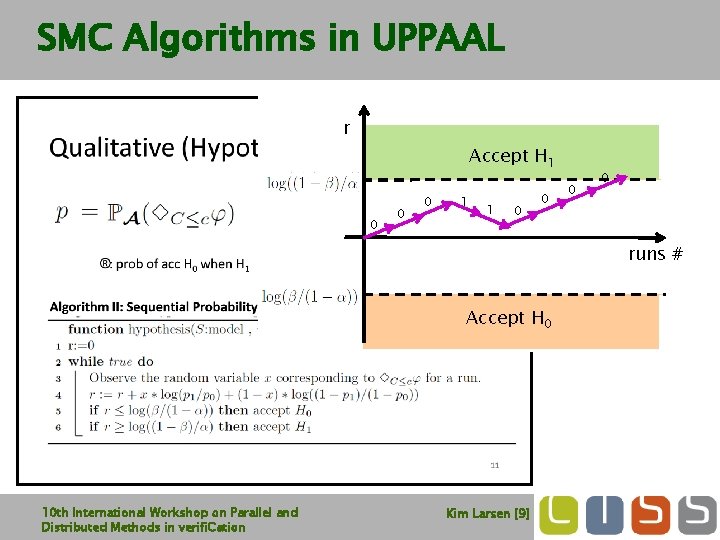

SMC Algorithms in UPPAAL

[Figure: sequential hypothesis test; the ratio r is plotted against the number of runs (outcomes 0 0 0 1 1 0 0 ...) until it crosses the "Accept H1" or the "Accept H0" boundary]
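The figure is consistent with a sequential probability ratio test. The following is a minimal sketch assuming Wald's SPRT with H0: p ≥ p0 and H1: p ≤ p1 (p1 < p0); the function name and parameters are illustrative and do not describe UPPAAL's internal code.

```python
import math
import random

def sprt(run_once, p0, p1, alpha, beta, rng, max_runs=1_000_000):
    """Wald's sequential test of H0: p >= p0 against H1: p <= p1.

    r is the log-likelihood ratio of H1 versus H0 and is updated after
    every run until it crosses one of the two decision boundaries.
    Returns (decision, number_of_runs).
    """
    accept_h1 = math.log((1 - beta) / alpha)    # upper boundary
    accept_h0 = math.log(beta / (1 - alpha))    # lower boundary
    step_hit = math.log(p1 / p0)                # run satisfied the property
    step_miss = math.log((1 - p1) / (1 - p0))   # run violated the property
    r = 0.0
    for n in range(1, max_runs + 1):
        r += step_hit if run_once(rng) else step_miss
        if r >= accept_h1:
            return "H1", n
        if r <= accept_h0:
            return "H0", n
    return "undecided", max_runs

if __name__ == "__main__":
    rng = random.Random(1)
    # Hypothetical system whose true probability is 0.9.
    print(sprt(lambda g: g.random() < 0.9, 0.6, 0.4, 0.01, 0.01, rng))
```

Unlike estimation, the number of runs is not fixed in advance: the test stops as soon as the evidence is strong enough in either direction.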

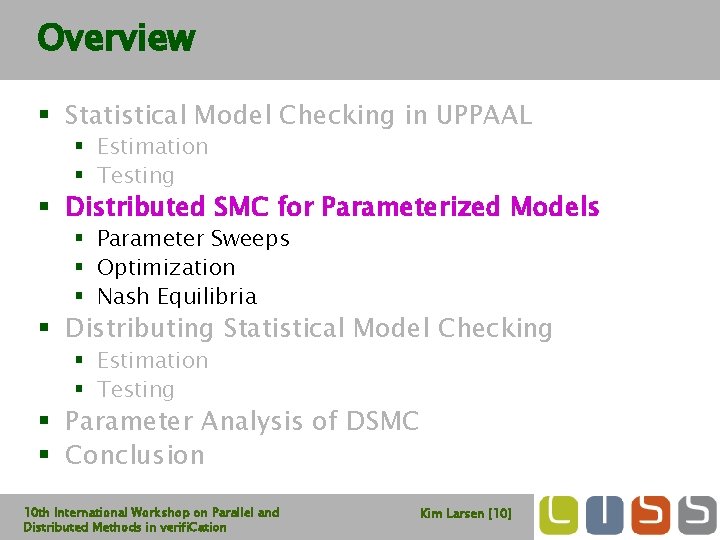


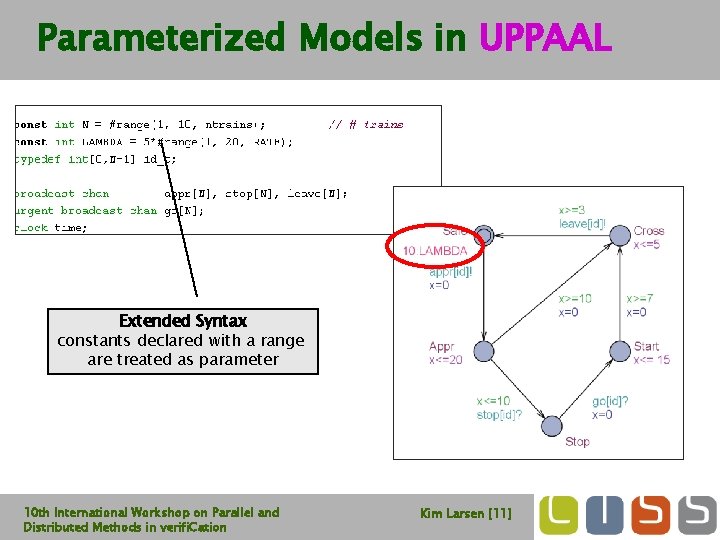

Parameterized Models in UPPAAL

Extended syntax: constants declared with a range are treated as parameters.


Parameterized Analysis of Trains

`Pr[time<=100](<> Train(0).Cross)`

“Embarrassingly Parallelizable”
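A parameter sweep is embarrassingly parallel because each parameter value is an independent estimation job. The sketch below uses a thread pool from the Python standard library purely for illustration; `estimate_for` is a hypothetical stand-in for one SMC run at a fixed parameter value, and a real setup would dispatch the jobs to processes or cluster nodes instead.

```python
import random
from concurrent.futures import ThreadPoolExecutor

def estimate_for(param, runs=2000):
    """Hypothetical stand-in for one SMC estimation at one parameter value."""
    rng = random.Random(param)           # reproducible stream per instance
    p_true = min(1.0, param / 10.0)      # toy dependency on the parameter
    hits = sum(rng.random() < p_true for _ in range(runs))
    return param, hits / runs

def sweep(params, workers=4):
    """Evaluate every parameter value independently and collect the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(estimate_for, params))

if __name__ == "__main__":
    for param, prob in sorted(sweep(range(0, 11)).items()):
        print(param, round(prob, 3))
```

No job needs a result from any other job, so the only coordination is collecting the answers at the end.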

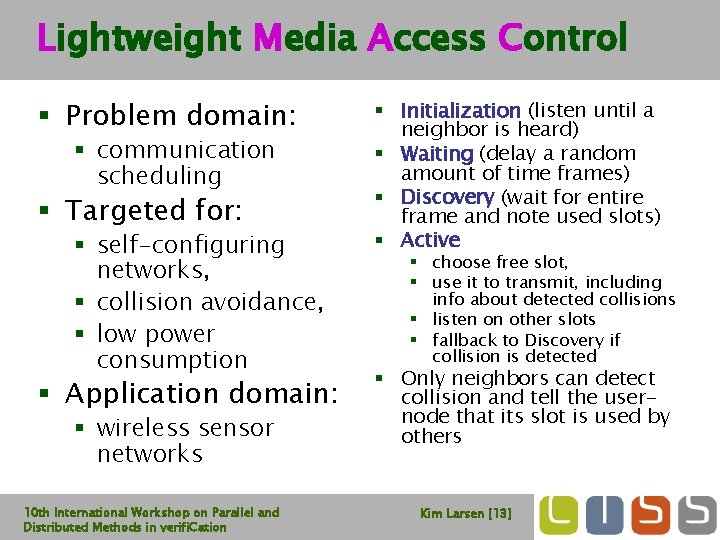

Lightweight Media Access Control

- Problem domain: communication scheduling
- Targeted for: self-configuring networks, collision avoidance, low power consumption
- Application domain: wireless sensor networks

- Initialization (listen until a neighbor is heard)
- Waiting (delay a random amount of time frames)
- Discovery (wait for entire frame and note used slots)
- Active
  - choose free slot,
  - use it to transmit, including info about detected collisions
  - listen on other slots
  - fallback to Discovery if collision is detected
- Only neighbors can detect a collision and tell the user node that its slot is used by others

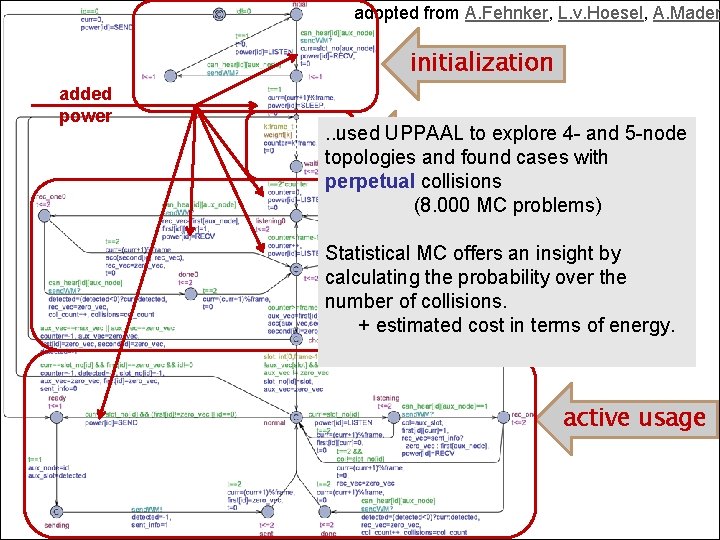

Model adopted from A. Fehnker, L. v. Hoesel, A. Mader, with added power. They used UPPAAL to explore 4- and 5-node topologies and found cases with perpetual collisions (8,000 MC problems).

Statistical MC offers an insight by calculating the probability over the number of collisions, plus the estimated cost in terms of energy.

[Figure: UPPAAL model with parts labeled initialization, random wait, discovery, active usage]

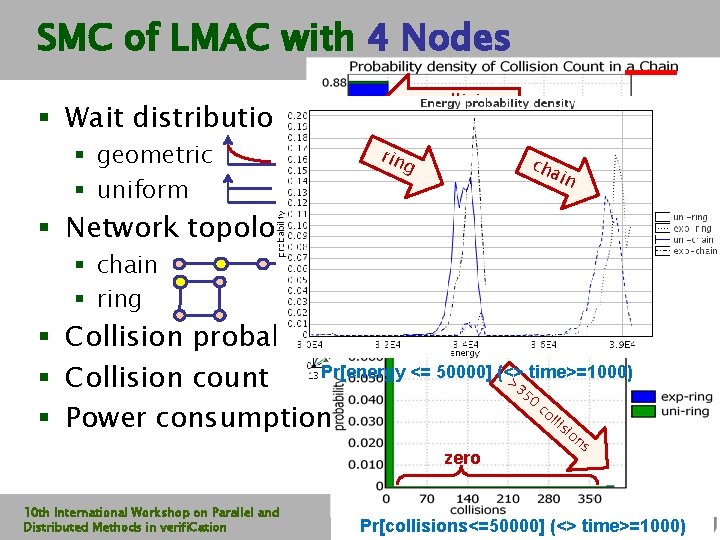

SMC of LMAC with 4 Nodes

- Wait distribution: geometric, uniform
- Network topology: chain, ring
- Collision probability: `Pr[<=160] (<> col_count>0)`
- Collision count: `Pr[collisions<=50000] (<> time>=1000)`
- Power consumption: `Pr[energy <= 50000] (<> time>=1000)`

[Plot annotations: no collisions; <12 collisions; zero; uniform seems better]

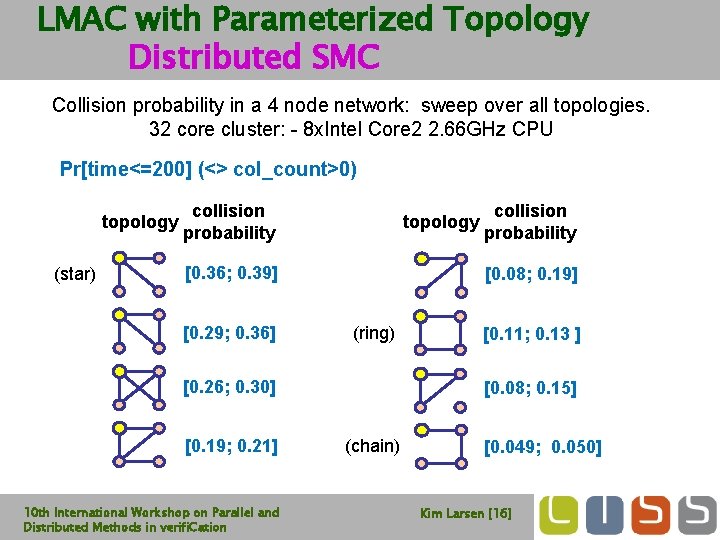

LMAC with Parameterized Topology (Distributed SMC)

Collision probability in a 4-node network: sweep over all topologies.

- 32-core cluster: 8 x Intel Core 2 2.66 GHz CPU
- Property: `Pr[time<=200] (<> col_count>0)`

Table of topology pictures (including star, ring, and chain) against collision probability; intervals in extraction order: [0.36; 0.39], [0.29; 0.36], [0.08; 0.19], [0.26; 0.30], [0.19; 0.21], [0.11; 0.13], [0.08; 0.15], [0.049; 0.050].

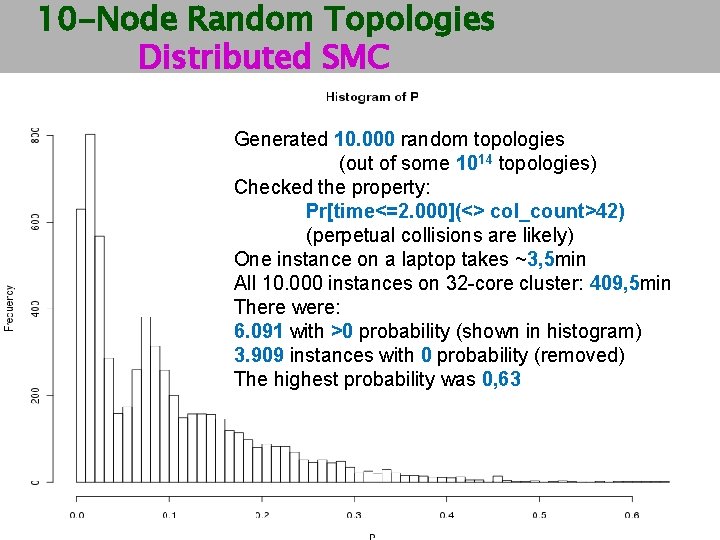

10-Node Random Topologies (Distributed SMC)

- Generated 10,000 random topologies (out of some 10^14 topologies)
- Checked the property `Pr[time<=2000](<> col_count>42)` (perpetual collisions are likely)
- One instance on a laptop takes ~3.5 min
- All 10,000 instances on the 32-core cluster: 409.5 min
- There were 6,091 instances with >0 probability (shown in histogram) and 3,909 instances with 0 probability (removed)
- The highest probability was 0.63
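The size of the topology space can be checked with a short calculation. The sketch assumes that a topology is any undirected graph on 10 labelled nodes, which gives 2^45 ≈ 3.5·10^13 candidates, roughly the order of magnitude quoted on the slide; the sampling function and its `edge_prob` parameter are hypothetical and are not the generator used in the experiment.

```python
import random
from itertools import combinations

def count_topologies(n):
    """Number of undirected graphs on n labelled nodes: 2^(n(n-1)/2)."""
    return 2 ** (n * (n - 1) // 2)

def random_topology(n, rng, edge_prob=0.5):
    """Sample a topology as a set of undirected links (i, j) with i < j."""
    return {pair for pair in combinations(range(n), 2)
            if rng.random() < edge_prob}

if __name__ == "__main__":
    print(count_topologies(10))                    # size of the search space
    print(sorted(random_topology(10, random.Random(1))))
```

With a space this large, exhaustive sweeps are out of reach, which is why the experiment samples 10,000 instances instead.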

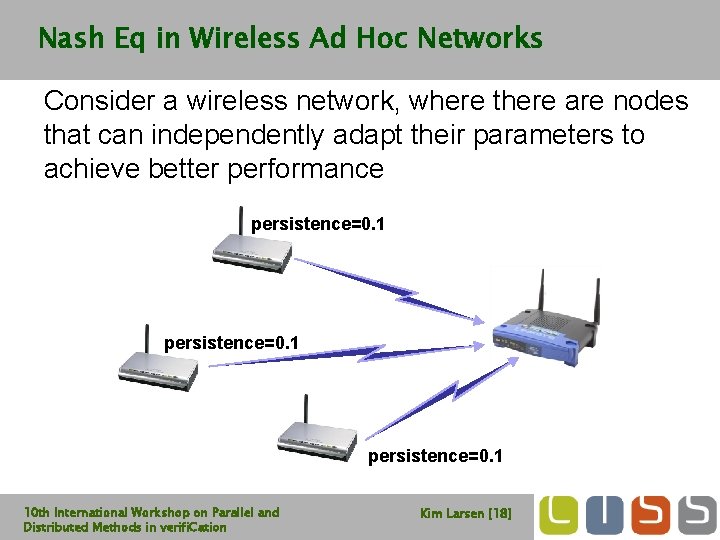

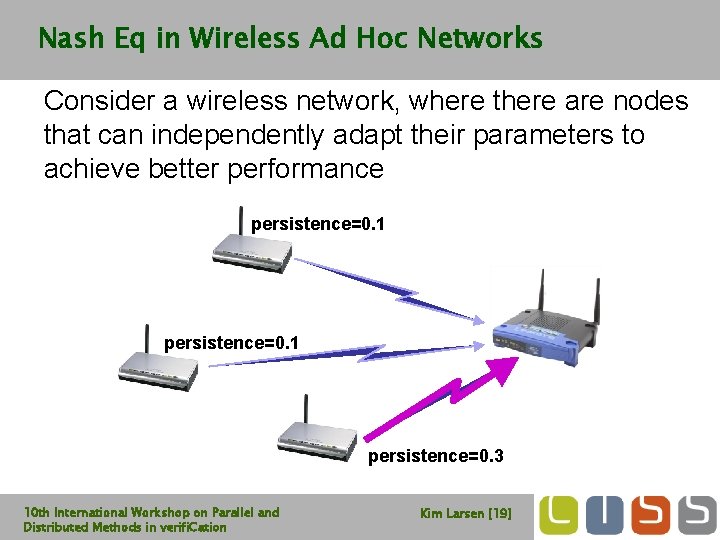

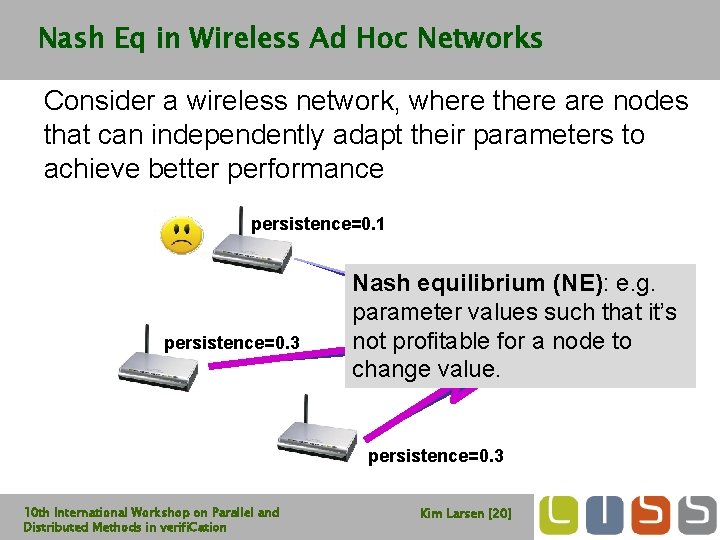


Nash Eq in Wireless Ad Hoc Networks

Consider a wireless network where nodes can independently adapt their parameters to achieve better performance.

Nash equilibrium (NE): e.g. parameter values such that it is not profitable for a node to change its value.

[Figure: network nodes labeled persistence=0.1, persistence=0.3, persistence=0.3]

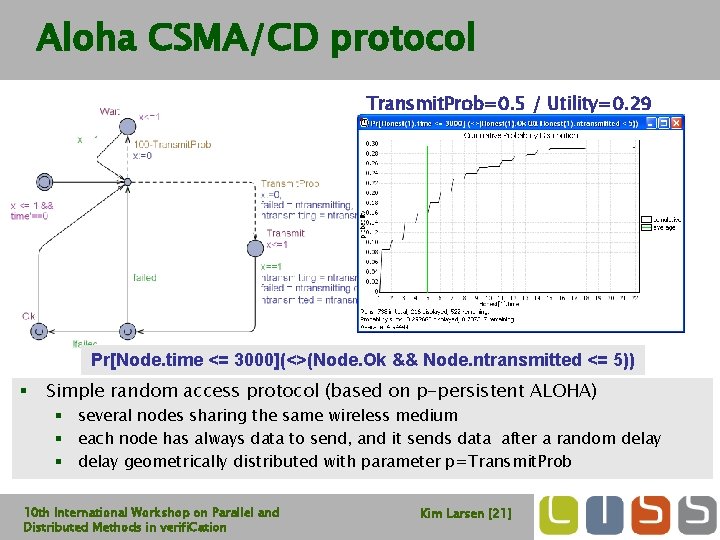

Aloha CSMA/CD protocol

- Simple random access protocol (based on p-persistent ALOHA)
- Several nodes share the same wireless medium
- Each node always has data to send, and it sends data after a random delay
- The delay is geometrically distributed with parameter p=TransmitProb

Utility: `Pr[Node.time <= 3000](<>(Node.Ok && Node.ntransmitted <= 5))`

[Figure labels, in extraction order: TransmitProb=0.2, Utility=0.29, Utility=0.91, TransmitProb=0.5]
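To make the utility concrete, here is a rough slot-based sketch. It is not the UPPAAL model from the slides: time is discretized into slots, a node transmits in each slot with its TransmitProb (so its waiting time is geometric), and the utility of node 0 is taken to be the probability that it gets one collision-free slot within a bounded number of slots using at most 5 transmissions. All names and defaults are assumptions.

```python
import random

def node_succeeds(rng, probs, slots=3000, max_tx=5):
    """One run: does node 0 get a collision-free slot within `slots` slots
    while transmitting at most `max_tx` times? probs[i] is node i's
    per-slot transmission probability."""
    sent = 0
    for _ in range(slots):
        transmitting = [rng.random() < p for p in probs]
        if transmitting[0]:
            sent += 1
            if sent > max_tx:
                return False             # transmission budget exhausted
            if sum(transmitting) == 1:
                return True              # node 0 was alone on the medium
    return False

def utility(probs, runs=2000, seed=0):
    """Monte Carlo estimate of node 0's success probability."""
    rng = random.Random(seed)
    return sum(node_succeeds(rng, probs) for _ in range(runs)) / runs

if __name__ == "__main__":
    print(utility([0.2, 0.2]), utility([0.5, 0.5]))
```

Passing a different first entry in `probs` models a node that deviates from the common setting, which is the situation a Nash equilibrium analysis has to evaluate.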

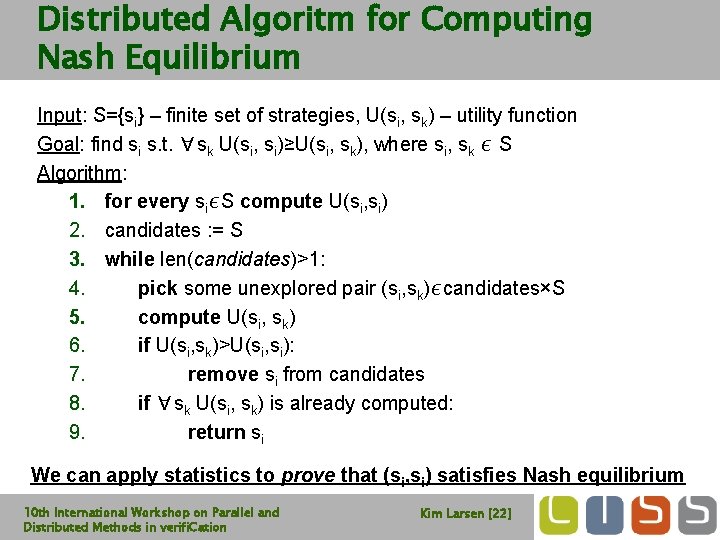

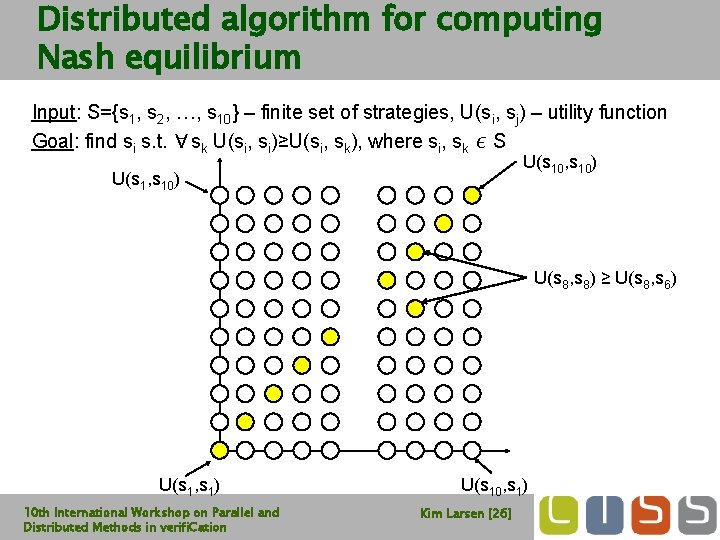

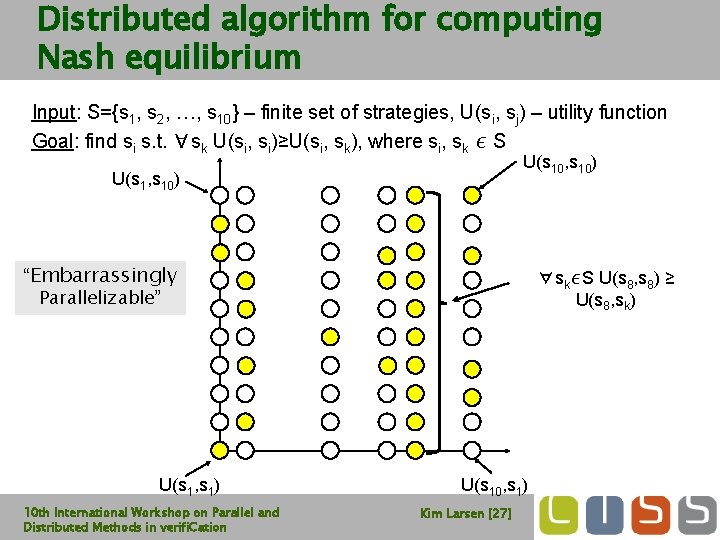

Distributed Algorithm for Computing Nash Equilibrium

- Input: S={si}: finite set of strategies; U(si, sk): utility function
- Goal: find si s.t. ∀sk U(si, si) ≥ U(si, sk), where si, sk ∊ S

Algorithm:

1. for every si ∊ S compute U(si, si)
2. candidates := S
3. while len(candidates) > 1:
   - pick some unexplored pair (si, sk) ∊ candidates × S
   - compute U(si, sk)
   - if U(si, sk) > U(si, si): remove si from candidates
   - if ∀sk U(si, sk) is already computed: return si

We can apply statistics to prove that (si, si) satisfies Nash equilibrium.
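The steps above can be sketched in Python as follows. This is a sequential illustration, not the authors' distributed implementation: each call to `utility` stands for one independent SMC job that could be dispatched to a separate core, and it is assumed that U(s, k) is the payoff of a node deviating to k while the others play s. Unlike the pseudocode, the sketch keeps checking the last remaining candidate, so it returns `None` when no strategy qualifies.

```python
from itertools import product

def find_symmetric_nash(strategies, utility):
    """Return a strategy s with utility(s, s) >= utility(s, k) for every k,
    or None if every candidate is refuted."""
    strategies = list(strategies)
    diagonal = {s: utility(s, s) for s in strategies}         # step 1
    candidates = set(strategies)                               # step 2
    unexplored = {s: set(strategies) - {s} for s in strategies}
    for s, k in product(strategies, repeat=2):                 # pick pairs
        if s == k or s not in candidates:
            continue                                           # refuted already
        if utility(s, k) > diagonal[s]:                        # deviation pays
            candidates.discard(s)
        else:
            unexplored[s].discard(k)
            if not unexplored[s]:                              # all k checked
                return s
    return None

if __name__ == "__main__":
    # Hypothetical utility: the deviator's payoff peaks at strategy 2.
    print(find_symmetric_nash(range(5), lambda s, k: -(k - 2) ** 2))
```

Refuted candidates are skipped immediately, which is where the savings over evaluating the full |S| × |S| table come from.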

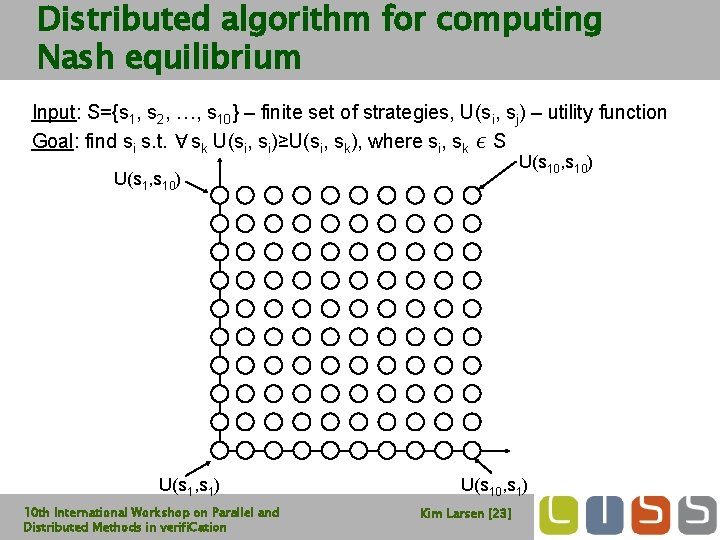

Distributed algorithm for computing Nash equilibrium

- Input: S={s1, s2, …, s10}: finite set of strategies; U(si, sj): utility function
- Goal: find si s.t. ∀sk U(si, si) ≥ U(si, sk), where si, sk ∊ S

[Figure: grid of utility values with corners labeled U(s1, s1), U(s10, s1), and U(s10, s10)]

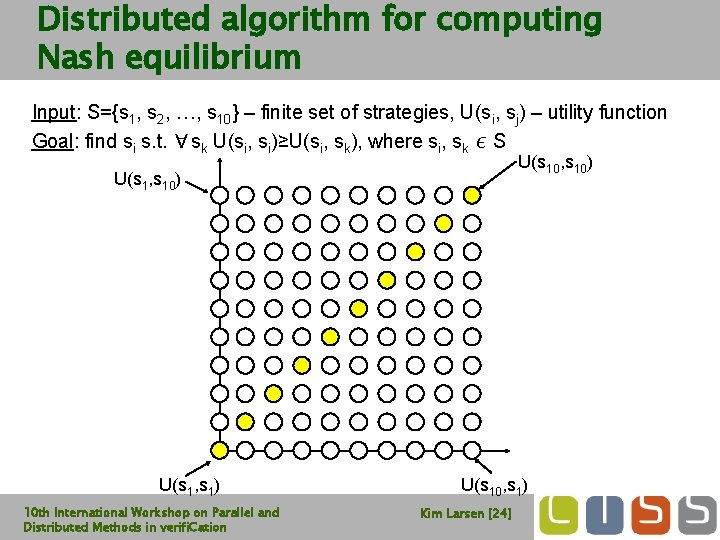


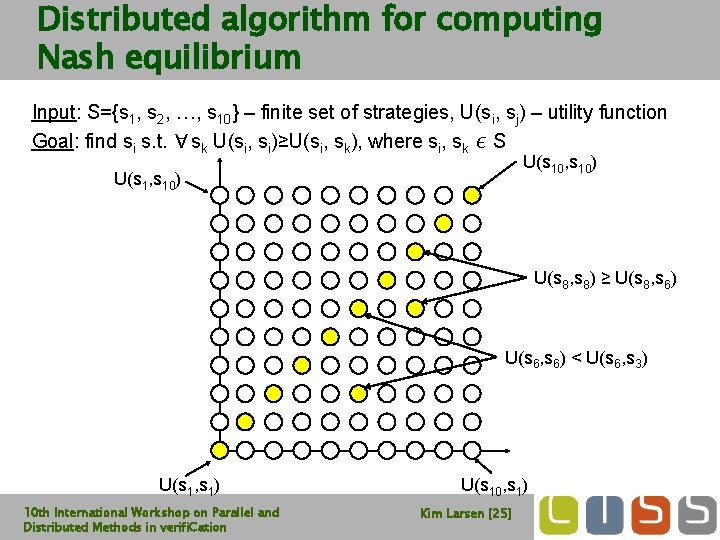

Distributed algorithm for computing Nash equilibrium (continued)

[Figure: grid of utility values with corners U(s1, s1), U(s1, s10), U(s10, s1), U(s10, s10); comparisons marked: U(s8, s8) ≥ U(s8, s6) and U(s6, s6) < U(s6, s3)]

Distributed algorithm for computing Nash equilibrium (continued)

[Figure: grid of utility values with corners U(s1, s1), U(s1, s10), U(s10, s1), U(s10, s10); comparison marked: U(s8, s8) ≥ U(s8, s6)]

Distributed algorithm for computing Nash equilibrium (continued)

“Embarrassingly Parallelizable”

[Figure: grid of utility values with corners U(s1, s1), U(s1, s10), U(s10, s1), U(s10, s10); result marked: ∀sk∊S U(s8, s8) ≥ U(s8, sk)]

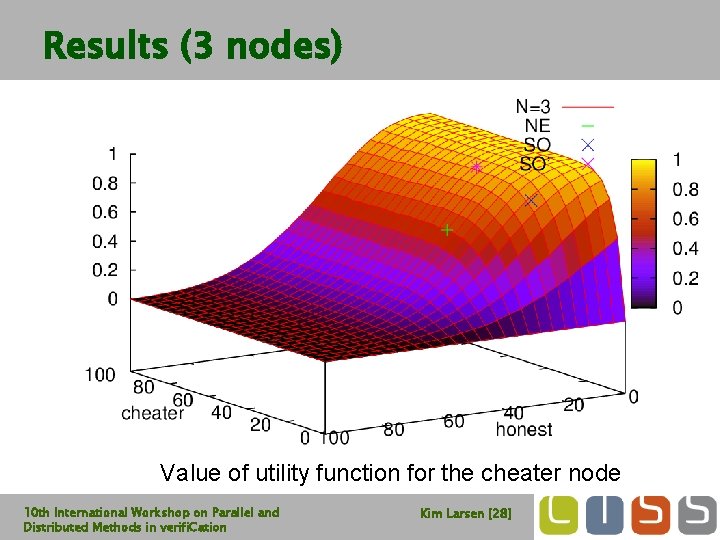

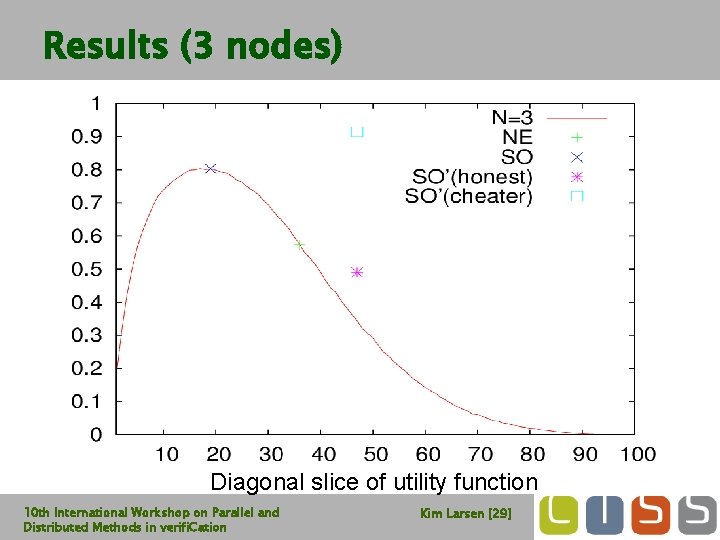

Results (3 nodes): value of utility function for the cheater node

Results (3 nodes): diagonal slice of utility function

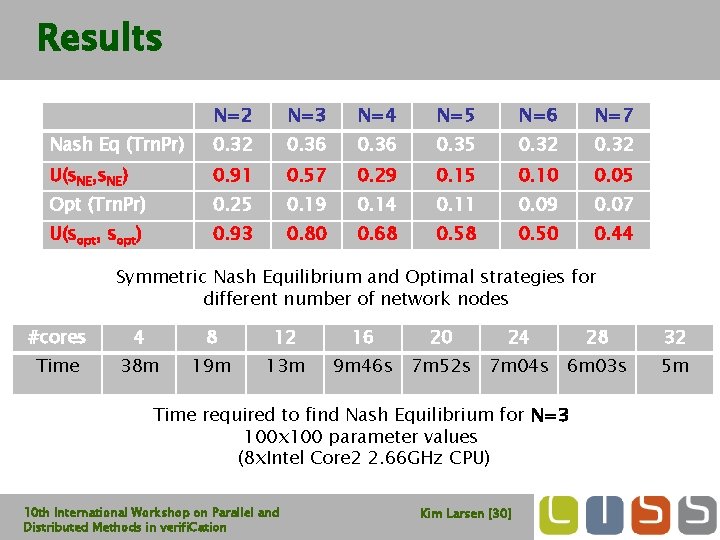

Results

Symmetric Nash Equilibrium and Optimal strategies for different number of network nodes (N=2, N=3, N=4, N=5, N=6, N=7), values in extraction order:

- Nash Eq (TrnPr): 0.32, 0.36, 0.35, 0.32
- U(sNE, sNE): 0.91, 0.57, 0.29, 0.15, 0.10, 0.05
- Opt (TrnPr): 0.25, 0.19, 0.14, 0.11, 0.09, 0.07
- U(sopt, sopt): 0.93, 0.80, 0.68, 0.50, 0.44

Time required to find Nash Equilibrium for N=3, 100 x 100 parameter values (8 x Intel Core 2 2.66 GHz CPU):

| #cores | 4 | 8 | 12 | 16 | 20 | 24 | 28 | 32 |
|---|---|---|---|---|---|---|---|---|
| Time | 38 m | 19 m | 13 m | 9 m 46 s | 7 m 52 s | 7 m 04 s | 6 m 03 s | 5 m |
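The timings for the Nash equilibrium search can be turned into relative speedups. The sketch below takes the 4-core run as the baseline, since no single-core time is reported; the helper names are illustrative.

```python
def to_seconds(minutes, seconds=0):
    return 60 * minutes + seconds

# Timings as reported on the slide (cores -> wall-clock seconds).
TIMINGS = {
    4: to_seconds(38), 8: to_seconds(19), 12: to_seconds(13),
    16: to_seconds(9, 46), 20: to_seconds(7, 52), 24: to_seconds(7, 4),
    28: to_seconds(6, 3), 32: to_seconds(5),
}

def relative_scaling(timings):
    """Speedup and efficiency relative to the smallest core count."""
    base_cores = min(timings)
    base_time = timings[base_cores]
    rows = {}
    for cores, secs in sorted(timings.items()):
        speedup = base_time / secs
        efficiency = speedup / (cores / base_cores)
        rows[cores] = (speedup, efficiency)
    return rows

if __name__ == "__main__":
    for cores, (s, e) in relative_scaling(TIMINGS).items():
        print(f"{cores:2d} cores: speedup {s:.2f}, efficiency {e:.0%}")
```

Going from 4 to 32 cores gives a relative speedup of about 7.6 against an ideal of 8.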


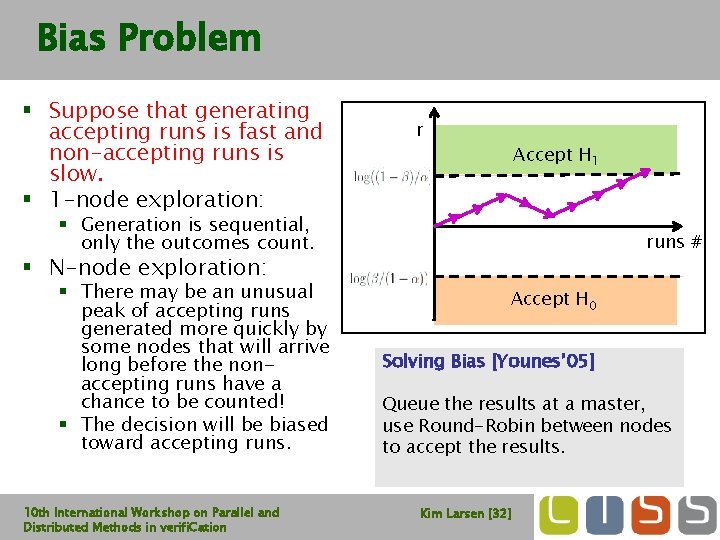

Bias Problem

- Suppose that generating accepting runs is fast and generating non-accepting runs is slow.
- 1-node exploration: generation is sequential, only the outcomes count.
- N-node exploration: there may be an unusual peak of accepting runs, generated more quickly by some nodes, that arrive long before the non-accepting runs have a chance to be counted! The decision will be biased toward accepting runs.

Solving Bias [Younes'05]: queue the results at a master and use Round-Robin between nodes to accept the results.

[Figure: ratio r against the number of runs with the "Accept H1" and "Accept H0" boundaries; accepting runs and rejecting runs marked]

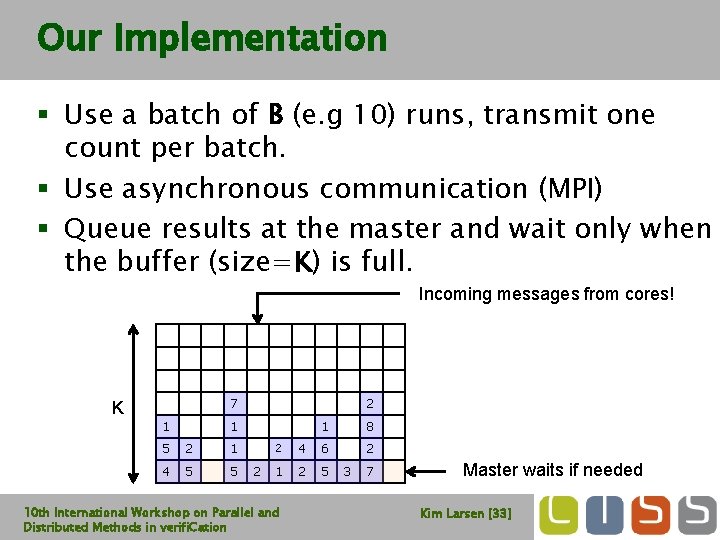

Our Implementation

- Use a batch of B (e.g. 10) runs, transmit one count per batch.
- Use asynchronous communication (MPI).
- Queue results at the master and wait only when the buffer (size=K) is full.

[Figure: incoming messages from cores are queued in per-core buffers of size K; the master waits if needed]

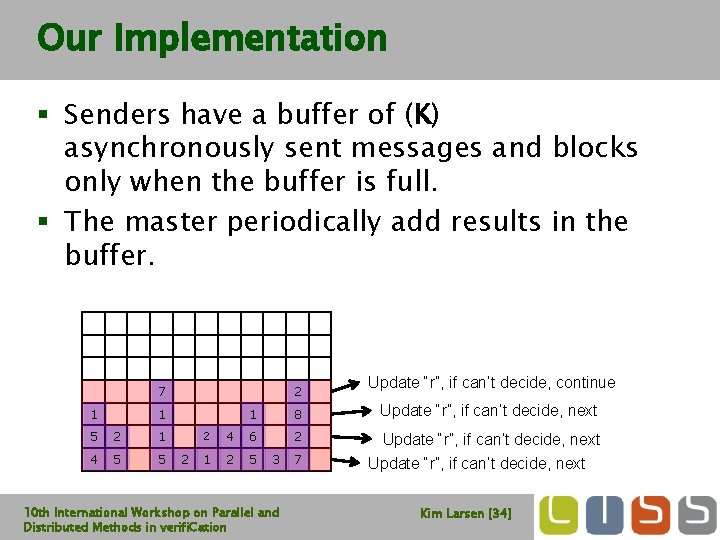

Our Implementation (continued)

- Senders have a buffer of K asynchronously sent messages and block only when the buffer is full.
- The master periodically adds the results in the buffer.

[Figure: the master processes the buffer row by row: update “r”; if it can’t decide, continue with the next row]
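The master's bookkeeping can be sketched without MPI. In this illustration each core has a queue of per-batch counts of accepting runs, and the master only consumes complete rows (one batch from every core) in a fixed round-robin order, so fast cores cannot get ahead of slow ones. The function name and data layout are assumptions.

```python
from collections import deque

def consume_complete_rows(queues, batch_size):
    """Pop one batch count from every core while all queues are non-empty.

    queues: list of deques, one per core, holding the number of accepting
    runs in each finished batch. Returns (accepted, total_runs) summed over
    the consumed rows; incomplete rows stay queued.
    """
    accepted = total = 0
    while queues and all(queues):        # an empty deque is falsy
        for q in queues:                 # fixed round-robin order over cores
            accepted += q.popleft()
            total += batch_size
    return accepted, total

if __name__ == "__main__":
    # Hypothetical buffer contents for three cores, B=10 runs per batch.
    buffers = [deque([7, 5, 2]), deque([1, 2]), deque([2, 1, 8, 4])]
    print(consume_complete_rows(buffers, batch_size=10))
    print([list(q) for q in buffers])    # leftovers wait for the slow core
```

After each consumed row the sequential test statistic would be updated, and the master would stop as soon as a decision is reached.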

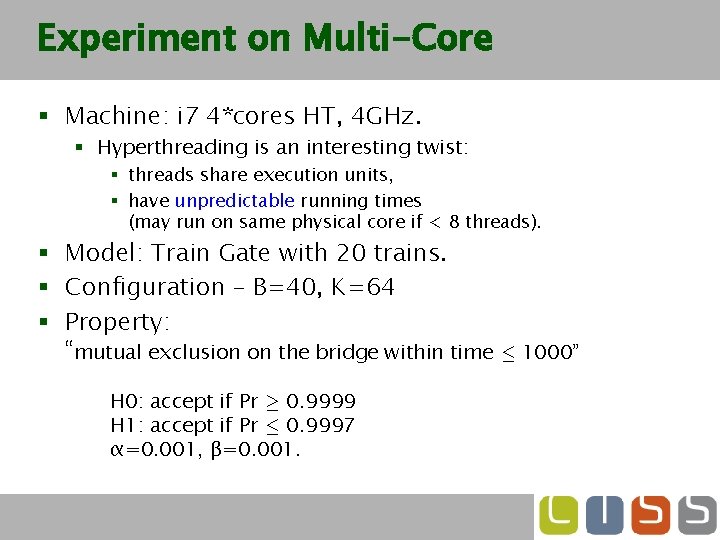

Experiment on Multi-Core

- Machine: i7, 4 cores with HT, 4 GHz.
- Hyperthreading is an interesting twist: threads share execution units and have unpredictable running times (they may run on the same physical core if < 8 threads).
- Model: Train Gate with 20 trains.
- Configuration: B=40, K=64.
- Property: “mutual exclusion on the bridge within time ≤ 1000”.
- H0: accept if Pr ≥ 0.9999; H1: accept if Pr ≤ 0.9997; α=0.001, β=0.001.

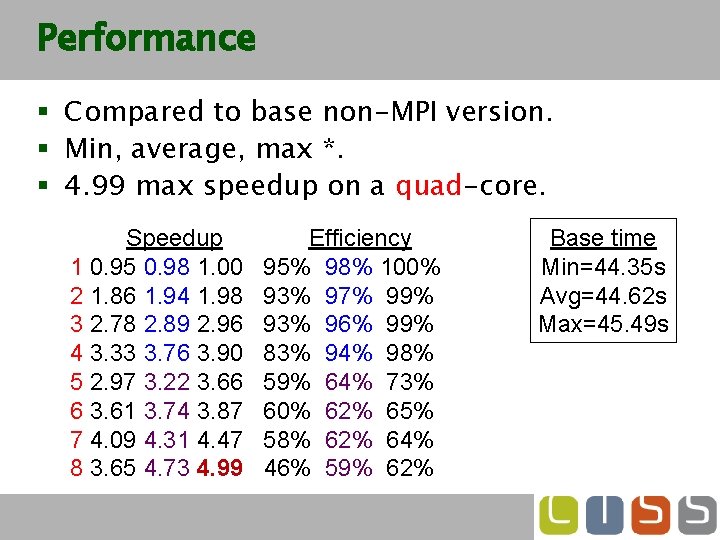

Performance

- Compared to the base non-MPI version.
- Min, average, max.
- 4.99 max speedup on a quad-core.

| N | Speedup min | Speedup avg | Speedup max | Efficiency min | Efficiency avg | Efficiency max |
|---|---|---|---|---|---|---|
| 1 | 0.95 | 0.98 | 1.00 | 95% | 98% | 100% |
| 2 | 1.86 | 1.94 | 1.98 | 93% | 97% | 99% |
| 3 | 2.78 | 2.89 | 2.96 | 93% | 96% | 99% |
| 4 | 3.33 | 3.76 | 3.90 | 83% | 94% | 98% |
| 5 | 2.97 | 3.22 | 3.66 | 59% | 64% | 73% |
| 6 | 3.61 | 3.74 | 3.87 | 60% | 62% | 65% |
| 7 | 4.09 | 4.31 | 4.47 | 58% | 62% | 64% |
| 8 | 3.65 | 4.73 | 4.99 | 46% | 59% | 62% |

Base time: Min=44.35 s, Avg=44.62 s, Max=45.49 s
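The efficiency figures are consistent with the usual definition of parallel efficiency as speedup divided by the number of workers. The relation below is stated as an assumption about how the numbers were derived, with n the number of workers, T_base the running time of the non-MPI version, and T(n) the running time on n workers.

```latex
S(n) = \frac{T_{\text{base}}}{T(n)}, \qquad E(n) = \frac{S(n)}{n}

% Worked examples using the average speedups reported above:
E(4) = \frac{3.76}{4} = 0.94, \qquad E(8) = \frac{4.73}{8} \approx 0.59
```

The drop in efficiency beyond four workers matches the remark that hyperthreads share the execution units of the four physical cores.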

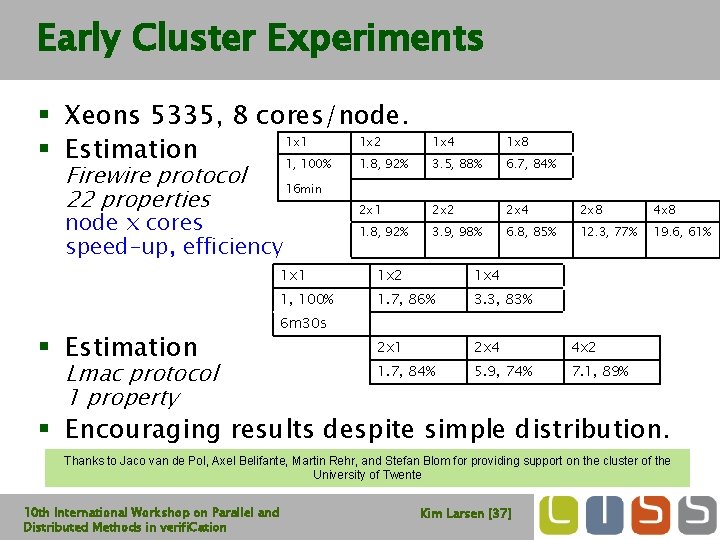

Early Cluster Experiments

- Xeons 5335, 8 cores/node.
- Models: Firewire protocol (22 properties), Lmac protocol (1 property).
- Encouraging results despite simple distribution.

Estimation (16 min):

| nodes x cores | speed-up | efficiency |
|---|---|---|
| 1 x 1 | 1 | 100% |
| 1 x 2 | 1.8 | 92% |
| 1 x 4 | 3.5 | 88% |
| 1 x 8 | 6.7 | 84% |
| 2 x 1 | 1.8 | 92% |
| 2 x 2 | 3.9 | 98% |
| 2 x 4 | 6.8 | 85% |
| 2 x 8 | 12.3 | 77% |
| 4 x 8 | 19.6 | 61% |

Estimation (6 m 30 s):

| nodes x cores | speed-up | efficiency |
|---|---|---|
| 1 x 1 | 1 | 100% |
| 1 x 2 | 1.7 | 86% |
| 1 x 4 | 3.3 | 83% |
| 2 x 1 | 1.7 | 84% |
| 2 x 4 | 5.9 | 74% |
| 4 x 2 | 7.1 | 89% |

Thanks to Jaco van de Pol, Axel Belinfante, Martin Rehr, and Stefan Blom for providing support on the cluster of the University of Twente.

Overview

- Statistical Model Checking in UPPAAL
  - Estimation
  - Testing
- Distributed SMC for Parameterized Models
  - Parameter Sweeps
  - Optimization
  - Nash Equilibria
- Distributing Statistical Model Checking
  - Estimation
  - Testing
  - DSMC of DSMC
- Conclusion

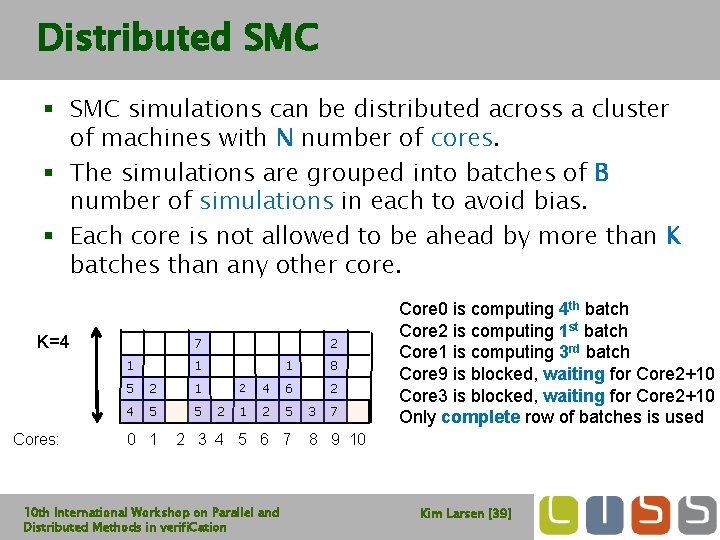

Distributed SMC

- SMC simulations can be distributed across a cluster of machines with N cores.
- The simulations are grouped into batches of B simulations each to avoid bias.
- No core is allowed to be more than K batches ahead of any other core.

Example with K=4 and cores 0 to 10:

- Core 0 is computing its 4th batch
- Core 1 is computing its 3rd batch
- Core 2 is computing its 1st batch
- Core 3 is blocked, waiting for cores 2 and 10
- Core 9 is blocked, waiting for cores 2 and 10
- Only complete rows of batches are used
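The blocking rule can be written down in a few lines. In this sketch a core is considered blocked once it has finished K batches more than the slowest core, which matches the K=4 example; the progress values for cores 4 to 8 are hypothetical, since the slide only describes cores 0, 1, 2, 3, 9, and 10.

```python
def blocked_cores(completed, k):
    """Indices of cores that must wait.

    completed[i] is the number of batches core i has finished. A core is
    blocked once it is k batches ahead of the slowest core.
    """
    slowest = min(completed)
    return [i for i, done in enumerate(completed) if done - slowest >= k]

def usable_rows(completed):
    """Only rows to which every core has contributed a batch are used."""
    return min(completed)

if __name__ == "__main__":
    # Cores 0..10; cores 2 and 10 are still computing their first batch.
    progress = [3, 2, 0, 4, 1, 2, 3, 1, 2, 4, 0]
    print(blocked_cores(progress, k=4))
    print(usable_rows(progress))
```

Larger values of K let fast cores run further ahead before they stall, at the cost of more buffered results.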

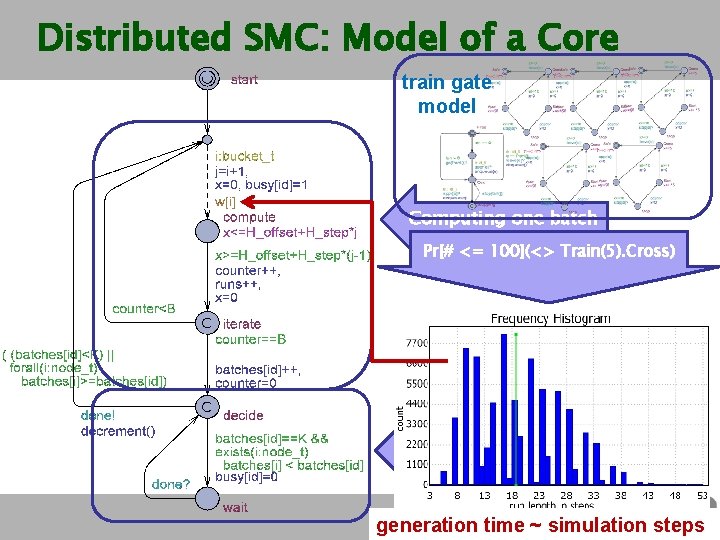

Distributed SMC: Model of a Core

[Figure: UPPAAL model of one core, with labels: train gate model; computing one batch; `Pr[# <= 100](<> Train(5).Cross)`; wait if ahead by K batches; generation time ~ simulation steps]

![DSMC: CPU Usage Time](http://slidetodoc.com/presentation_image_h/7fc497e52d5eb332634048213f9f497c/image-41.jpg)

DSMC: CPU Usage Time

- Parameter instantiation: N=8, B=100, K=2
- Property used: `E[time<=1000; 25000] (max: usage)`

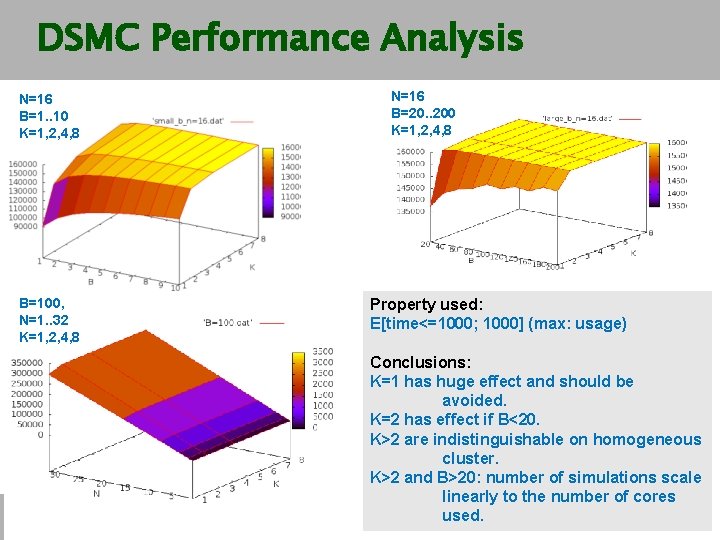

DSMC Performance Analysis

[Plots: N=16, B=1..10, K=1, 2, 4, 8; B=100, N=1..32, K=1, 2, 4, 8; N=16, B=20..200, K=1, 2, 4, 8]

Property used: `E[time<=1000; 1000] (max: usage)`

Conclusions:

- K=1 has a huge effect and should be avoided.
- K=2 has an effect if B<20.
- K>2 are indistinguishable on a homogeneous cluster.
- K>2 and B>20: the number of simulations scales linearly with the number of cores used.

Conclusion

- Preliminary experiments indicate that distributed SMC in UPPAAL scales very nicely.
- More work is needed to identify the impact of parameters for distributing individual SMC.
- How to assign statistical confidence to parametric analysis, e.g. optimum or NE?
- More about UPPAAL SMC on Sunday!
- UPPAAL 4.1.4 available (support for SMC, DSMC, 64-bit, ...)
