NLP Deep Learning Libraries for Deep Learning Matrix

NLP

Deep Learning Libraries for Deep Learning

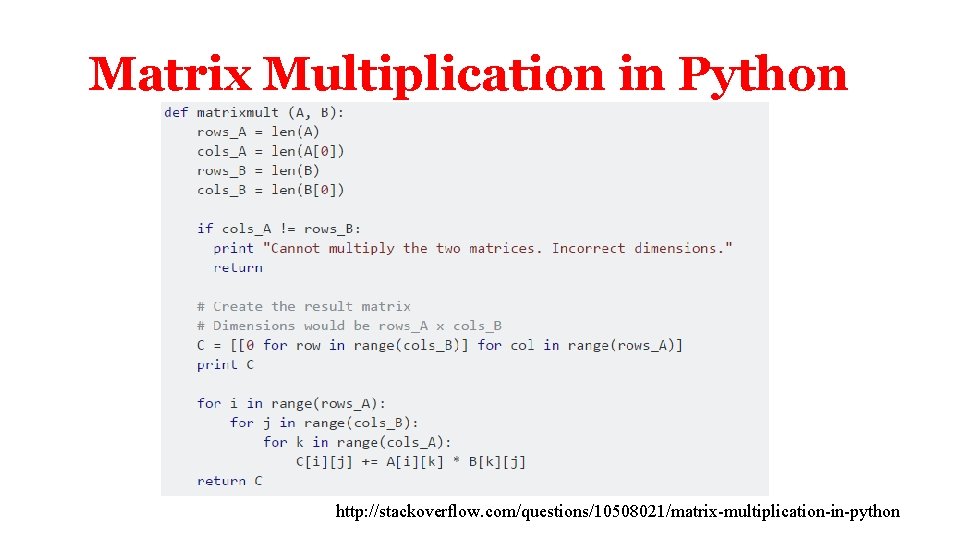

Matrix Multiplication in Python http://stackoverflow.com/questions/10508021/matrix-multiplication-in-python

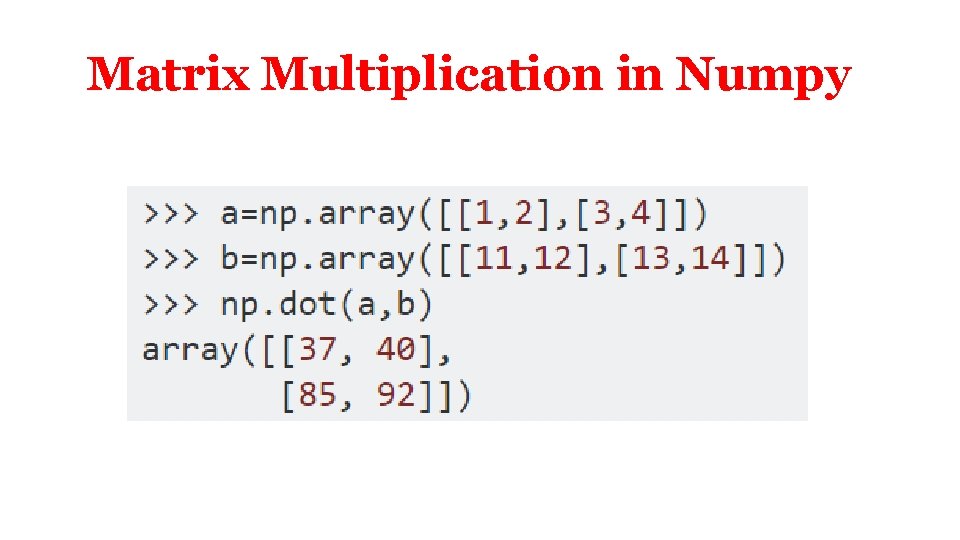
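As a point of reference, matrix multiplication in plain Python can be sketched with nested list comprehensions. This is only an illustrative sketch (the function name `matmul` is an assumption, not code from the slide or the linked question):

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    # Every row of a must have as many entries as b has rows.
    if any(len(row) != len(b) for row in a):
        raise ValueError("inner dimensions do not match")
    n_cols = len(b[0])
    return [
        [sum(a_row[k] * b[k][j] for k in range(len(b))) for j in range(n_cols)]
        for a_row in a
    ]


product = matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product)  # [[19, 22], [43, 50]]
```

The triple loop hidden in the comprehensions is what the libraries below replace with optimized routines.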

Matrix Multiplication in Numpy

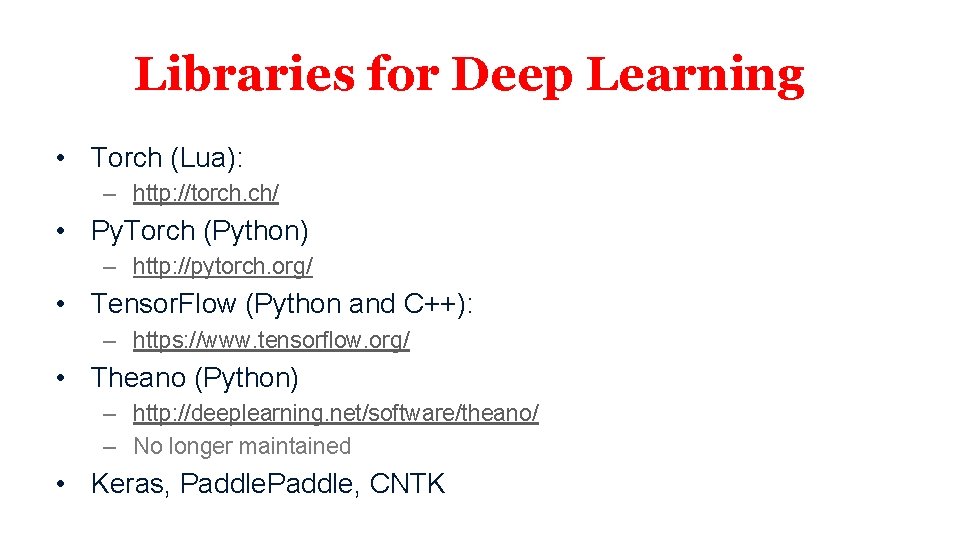
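A minimal NumPy sketch of the same operation, assuming NumPy is installed. The `@` operator (or `np.matmul`) performs matrix multiplication, while `*` is element-wise:

```python
import numpy as np

a = np.array([[1, 2], [3, 4]])
b = np.array([[5, 6], [7, 8]])

product = a @ b        # matrix product, same as np.matmul(a, b)
elementwise = a * b    # element-wise product, NOT matrix multiplication

print(product)         # [[19 22] [43 50]]
print(elementwise)     # [[ 5 12] [21 32]]
```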

Libraries for Deep Learning

• Torch (Lua)
  – http://torch.ch/
• PyTorch (Python)
  – http://pytorch.org/
• TensorFlow (Python and C++)
  – https://www.tensorflow.org/
• Theano (Python)
  – http://deeplearning.net/software/theano/
  – No longer maintained
• Keras, Paddle, CNTK

Deep Learning Libraries for Deep Learning: Tensorflow (slides by Jason Chu)

What is TensorFlow?

• Open source software library for numerical computation using data flow graphs
• Developed by the Google Brain Team for machine learning and deep learning, and made open source
• TensorFlow provides an extensive suite of functions and classes that allow users to build various models from scratch

These slides are adapted from the following Stanford lectures:
https://web.stanford.edu/class/cs20si/2017/lectures/slides_01.pdf
https://cs224d.stanford.edu/lectures/CS224d-Lecture7.pdf

What’s a tensor?

• Formally, tensors are multilinear maps from vector spaces to the real numbers
• Think of them as n-dimensional arrays, with 0-d tensors being scalars, 1-d tensors vectors, 2-d tensors matrices, etc.
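The "n-dimensional array" view can be illustrated with NumPy arrays, whose `ndim` attribute plays the role of the tensor rank. This is a sketch using NumPy rather than a deep learning library, and the variable names are illustrative:

```python
import numpy as np

scalar = np.array(3.0)                        # 0-d tensor
vector = np.array([1.0, 2.0, 3.0])            # 1-d tensor
matrix = np.array([[1.0, 2.0], [3.0, 4.0]])   # 2-d tensor
cube = np.zeros((2, 3, 4))                    # 3-d tensor

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("cube", cube)]:
    print(name, "rank:", t.ndim, "shape:", t.shape)
```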

Some Basic Terminology

● Dataflow Graphs: entire computation
● Data Nodes: individual data or operations
● Edges: implicit dependencies between nodes
● Operations: any computation
● Constants: single values (tensors)

“TensorFlow programs are usually structured into a construction phase, that assembles a graph, and an execution phase that uses a session to execute ops in the graph.” - TensorFlow docs

All nodes return tensors, or higher-dimensional matrices.

You are metaprogramming. No computation occurs yet!

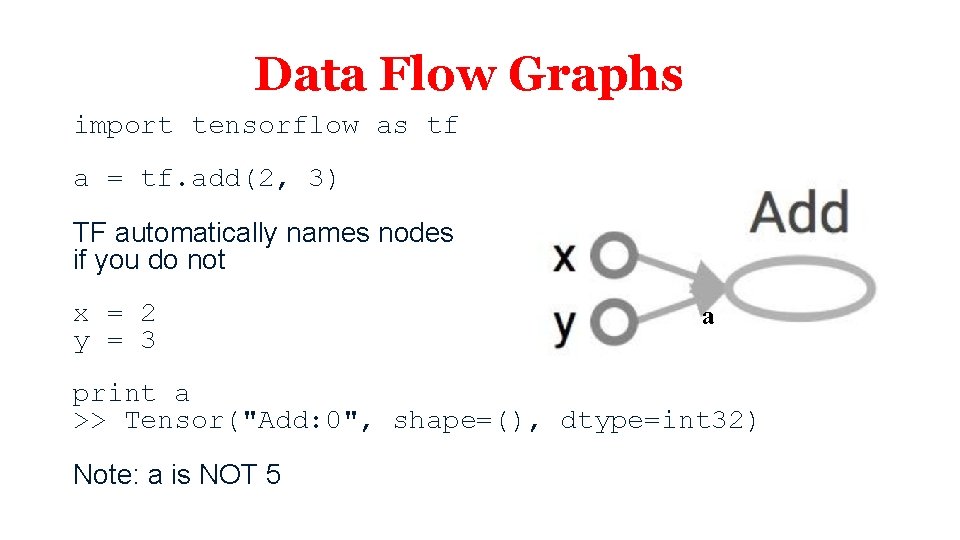

Data Flow Graphs

    import tensorflow as tf
    a = tf.add(2, 3)
    print(a)
    >> Tensor("Add:0", shape=(), dtype=int32)

TF automatically names nodes if you do not (in the graph, the inputs x = 2 and y = 3 feed the node a).

Note: a is NOT 5

TensorFlow Session

• A Session object encapsulates the environment in which Operation objects are executed and Tensor objects, like a in the previous slide, are evaluated

    import tensorflow as tf
    a = tf.add(2, 3)
    with tf.Session() as sess:
        print(sess.run(a))

TensorFlow Sessions

• There are 3 arguments for a Session, all of which are optional.
  1. target: The execution engine to connect to.
  2. graph: The Graph to be launched.
  3. config: A ConfigProto protocol buffer with configuration options for the session

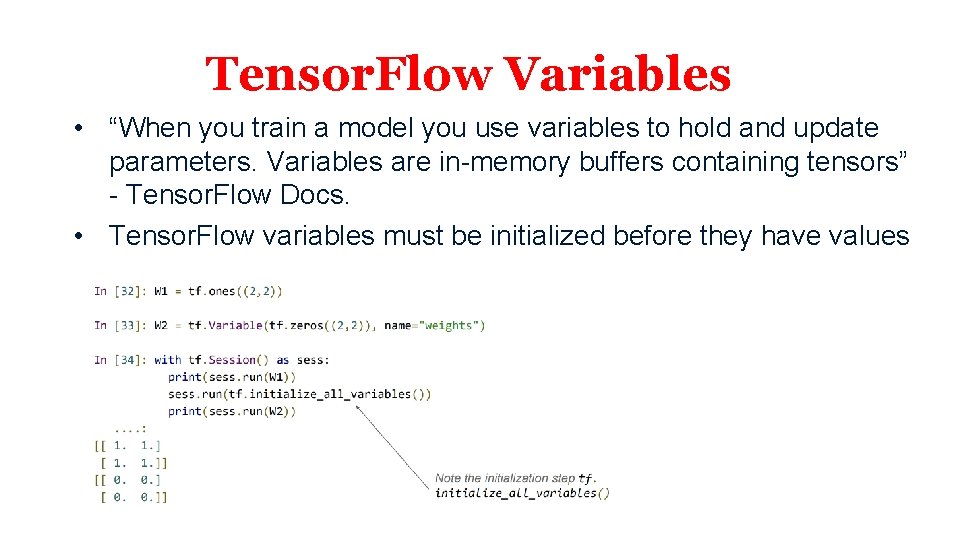

TensorFlow Variables

• “When you train a model you use variables to hold and update parameters. Variables are in-memory buffers containing tensors” - TensorFlow Docs.
• TensorFlow variables must be initialized before they have values

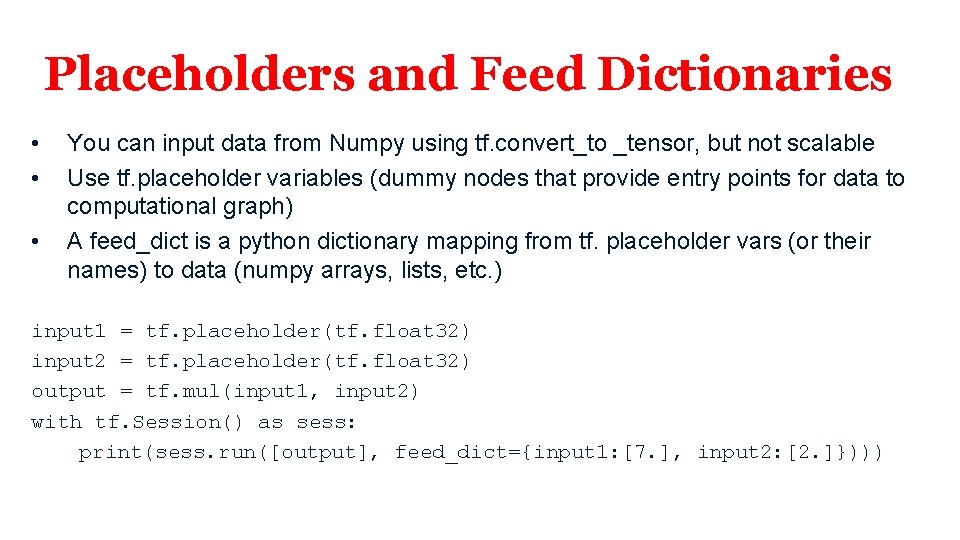

Placeholders and Feed Dictionaries

• You can input data from NumPy using tf.convert_to_tensor, but this is not scalable
• Use tf.placeholder variables (dummy nodes that provide entry points for data to the computational graph)
• A feed_dict is a Python dictionary mapping from tf.placeholder vars (or their names) to data (NumPy arrays, lists, etc.)

    input1 = tf.placeholder(tf.float32)
    input2 = tf.placeholder(tf.float32)
    output = tf.multiply(input1, input2)  # named tf.mul before TensorFlow 1.0
    with tf.Session() as sess:
        print(sess.run([output], feed_dict={input1: [7.], input2: [2.]}))
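To make the construction phase / execution phase split concrete, here is a toy deferred-evaluation graph in plain Python. All class and function names (`Node`, `Placeholder`, `Multiply`, `run`) are hypothetical and written only for illustration; this is not TensorFlow's API or its implementation:

```python
# Toy illustration only: these classes are hypothetical, not TensorFlow's API.

class Node:
    """A graph node; its value is not computed until run() is called."""
    def evaluate(self, feed_dict):
        raise NotImplementedError


class Constant(Node):
    def __init__(self, value):
        self.value = value

    def evaluate(self, feed_dict):
        return self.value


class Placeholder(Node):
    """Entry point for data; has no value until one is fed."""
    def __init__(self, name):
        self.name = name

    def evaluate(self, feed_dict):
        if self not in feed_dict:
            raise KeyError("no value fed for placeholder %r" % self.name)
        return feed_dict[self]


class Multiply(Node):
    def __init__(self, left, right):
        self.left, self.right = left, right

    def evaluate(self, feed_dict):
        return self.left.evaluate(feed_dict) * self.right.evaluate(feed_dict)


def run(node, feed_dict=None):
    """Execution phase: walk the graph and compute the node's value."""
    return node.evaluate(feed_dict or {})


# Construction phase: this only builds objects, nothing is multiplied yet.
input1 = Placeholder("input1")
input2 = Placeholder("input2")
output = Multiply(input1, Multiply(input2, Constant(1.0)))

# Execution phase: feed values for the placeholders and evaluate.
result = run(output, feed_dict={input1: 7.0, input2: 2.0})
print(result)  # 14.0
```

As in the slide's example, `output` is a node object rather than a number, and a value only appears once data is fed at run time.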

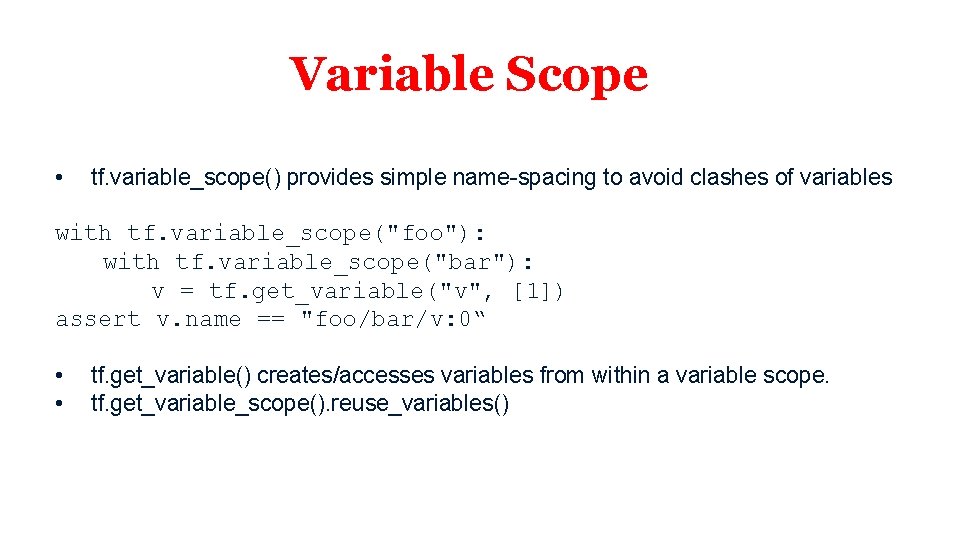

Variable Scope

• tf.variable_scope() provides simple name-spacing to avoid clashes of variables

    with tf.variable_scope("foo"):
        with tf.variable_scope("bar"):
            v = tf.get_variable("v", [1])
    assert v.name == "foo/bar/v:0"

• tf.get_variable() creates/accesses variables from within a variable scope.
• tf.get_variable_scope().reuse_variables() makes the current scope reuse existing variables instead of creating new ones

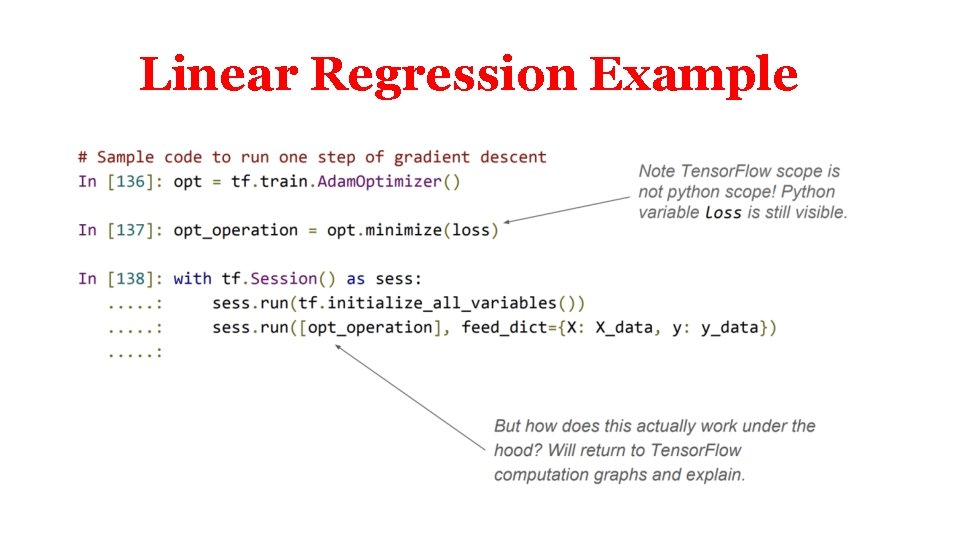

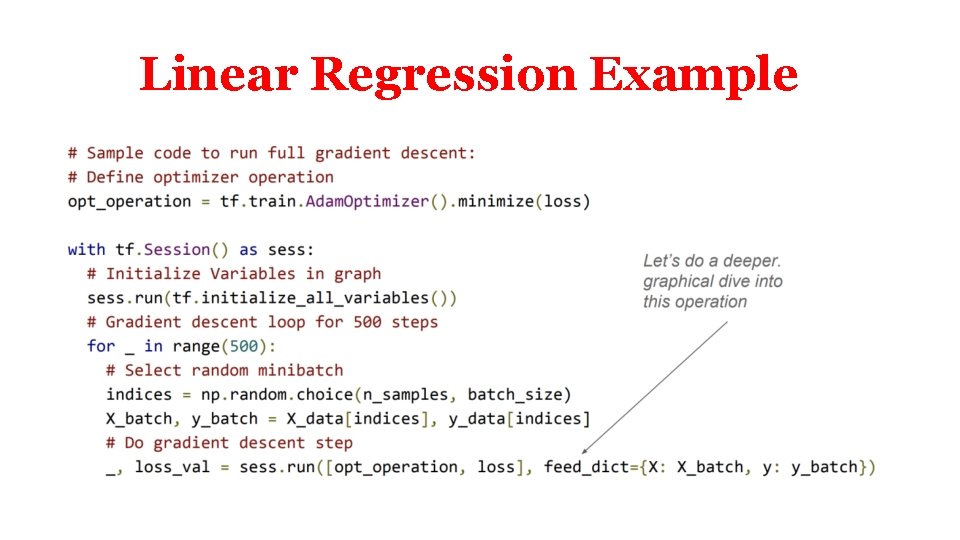

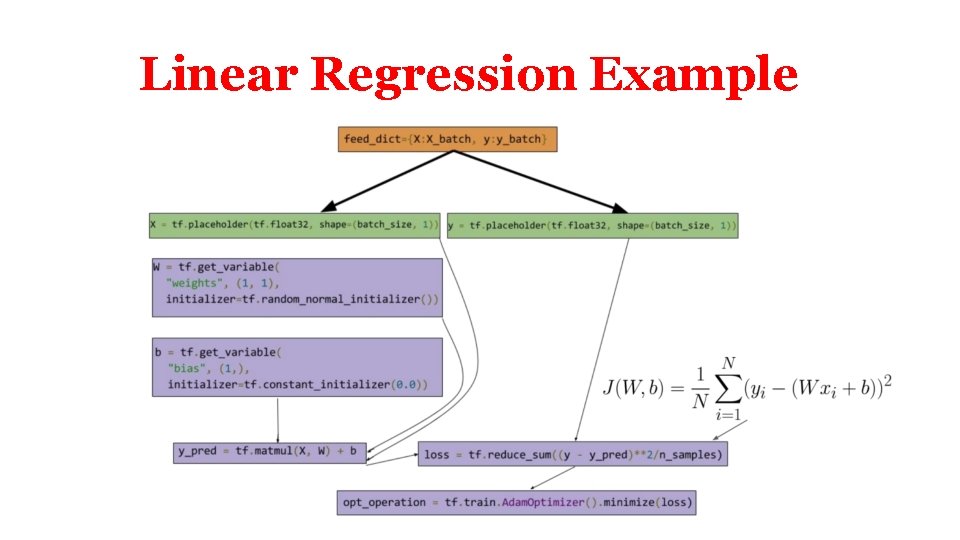

Linear Regression Example
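A minimal sketch of linear regression trained by gradient descent, written in NumPy rather than TensorFlow. The synthetic data, the learning rate, and all variable names are assumptions made for illustration and are not taken from the slides:

```python
import numpy as np

# Synthetic data (assumption): y = 2x + 1 plus a little noise.
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=100)
y = 2.0 * x + 1.0 + 0.01 * rng.normal(size=100)

w, b = 0.0, 0.0        # parameters to learn
learning_rate = 0.1    # hypothetical choice

for _ in range(1000):
    y_pred = w * x + b
    error = y_pred - y
    # Gradients of the mean squared error with respect to w and b.
    grad_w = 2.0 * np.mean(error * x)
    grad_b = 2.0 * np.mean(error)
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(w, b)  # close to the true slope 2 and intercept 1
```

In a graph-based library, `w` and `b` would be variables, `x` and `y` placeholders, and the two update lines would be replaced by an optimizer's minimize operation.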


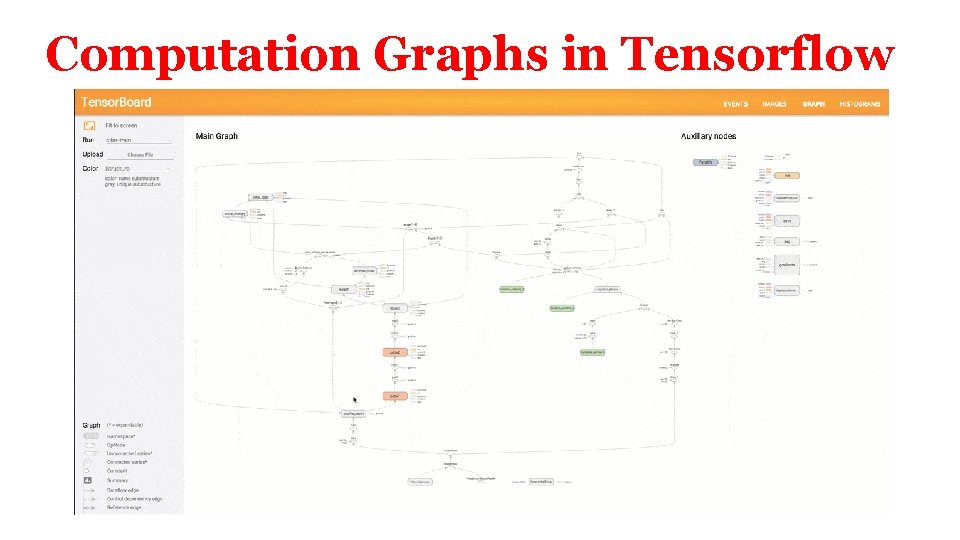

Computation Graphs in Tensorflow

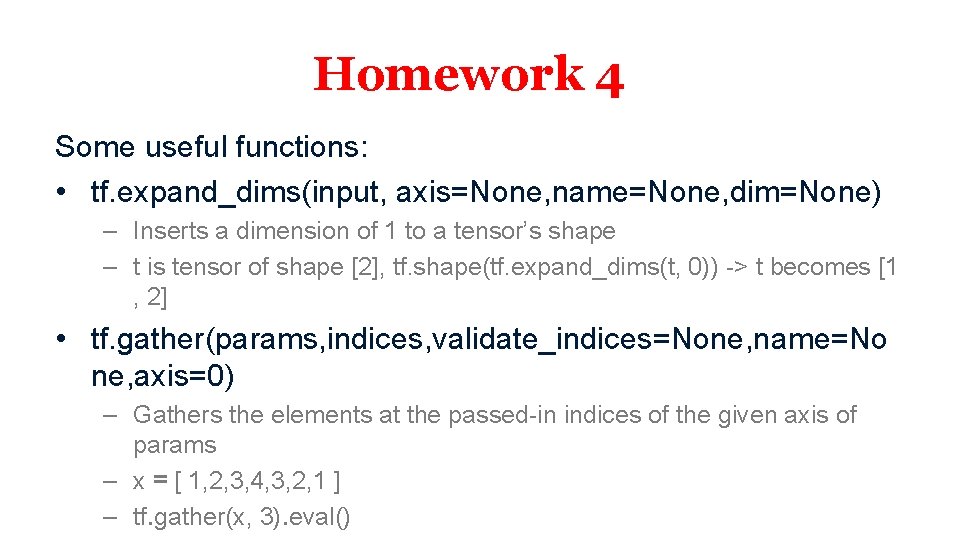

Homework 4

Some useful functions:
• tf.expand_dims(input, axis=None, name=None, dim=None)
  – Inserts a dimension of 1 into a tensor’s shape
  – If t is a tensor of shape [2], tf.shape(tf.expand_dims(t, 0)) -> [1, 2]
• tf.gather(params, indices, validate_indices=None, name=None, axis=0)
  – Gathers the elements at the passed-in indices of the given axis of params
  – x = [1, 2, 3, 4, 3, 2, 1]
  – tf.gather(x, 3).eval()
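The behavior of these two functions can be previewed with their NumPy counterparts, `np.expand_dims` and `np.take`. This is an analogy under the assumption that the NumPy semantics match for these simple cases; it is not TensorFlow code:

```python
import numpy as np

t = np.array([10, 20])               # shape (2,)
expanded = np.expand_dims(t, 0)      # shape (1, 2)
print(expanded.shape)

x = np.array([1, 2, 3, 4, 3, 2, 1])
picked = np.take(x, 3)               # element at index 3
several = np.take(x, [0, 3, 6])      # elements at several indices
print(picked, several)
```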

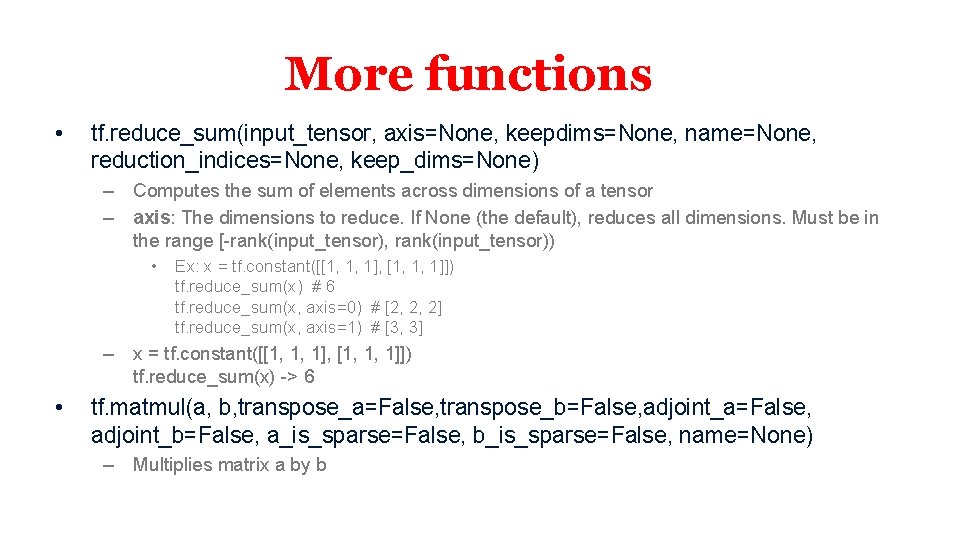

More functions

• tf.reduce_sum(input_tensor, axis=None, keepdims=None, name=None, reduction_indices=None, keep_dims=None)
  – Computes the sum of elements across dimensions of a tensor
  – axis: The dimensions to reduce. If None (the default), reduces all dimensions. Must be in the range [-rank(input_tensor), rank(input_tensor))
  – Ex:

        x = tf.constant([[1, 1, 1], [1, 1, 1]])
        tf.reduce_sum(x)          # 6
        tf.reduce_sum(x, axis=0)  # [2, 2, 2]
        tf.reduce_sum(x, axis=1)  # [3, 3]

• tf.matmul(a, b, transpose_a=False, transpose_b=False, adjoint_a=False, adjoint_b=False, a_is_sparse=False, b_is_sparse=False, name=None)
  – Multiplies matrix a by b
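The effect of the `axis` and `keepdims` arguments can be checked with NumPy's `sum`, which follows the same conventions for this example. This is a NumPy analogy, not TensorFlow code:

```python
import numpy as np

x = np.array([[1, 1, 1], [1, 1, 1]])   # shape (2, 3)

total = x.sum()                         # reduce all dimensions
per_column = x.sum(axis=0)              # collapse the rows
per_row = x.sum(axis=1)                 # collapse the columns
kept = x.sum(axis=1, keepdims=True)     # reduced axis kept with size 1
last_axis = x.sum(axis=-1)              # negative axes count from the end

print(total, per_column, per_row, kept.shape)
```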

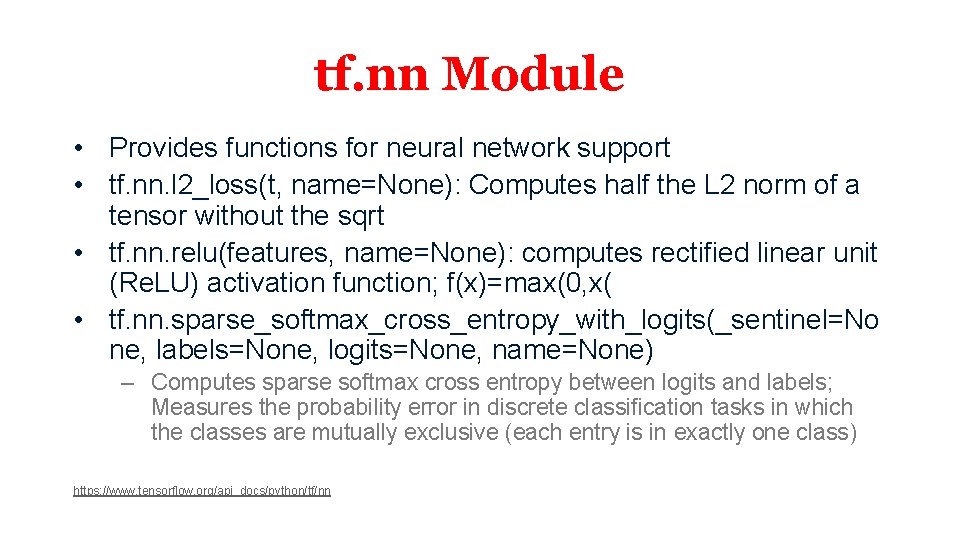

tf.nn Module

• Provides functions for neural network support
• tf.nn.l2_loss(t, name=None): computes half the squared L2 norm of a tensor, i.e. sum(t ** 2) / 2 (the L2 norm without the sqrt)
• tf.nn.relu(features, name=None): computes the rectified linear unit (ReLU) activation function, f(x) = max(0, x)
• tf.nn.sparse_softmax_cross_entropy_with_logits(_sentinel=None, labels=None, logits=None, name=None)
  – Computes sparse softmax cross entropy between logits and labels; measures the probability error in discrete classification tasks in which the classes are mutually exclusive (each entry is in exactly one class)

https://www.tensorflow.org/api_docs/python/tf/nn
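What these three functions compute can be written out in a few lines of NumPy. The sketch below follows the definitions given above; the function names mirror the TensorFlow ones for readability, but the code is an illustrative re-implementation, not the library's own:

```python
import numpy as np


def l2_loss(t):
    """Half the sum of squares: sum(t ** 2) / 2."""
    return np.sum(np.square(t)) / 2.0


def relu(x):
    """Rectified linear unit: max(0, x) applied element-wise."""
    return np.maximum(0.0, x)


def sparse_softmax_cross_entropy(labels, logits):
    """Per-example cross entropy; labels are integer class indices."""
    # Subtract the row maximum for numerical stability.
    shifted = logits - np.max(logits, axis=1, keepdims=True)
    log_probs = shifted - np.log(np.sum(np.exp(shifted), axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels]


print(l2_loss(np.array([3.0, 4.0])))            # 12.5
print(relu(np.array([-1.0, 0.0, 2.0])))         # [0. 0. 2.]
print(sparse_softmax_cross_entropy(np.array([0]), np.array([[0.0, 0.0]])))
```

With two equal logits the softmax assigns probability 0.5 to each class, so the last line prints log(2).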

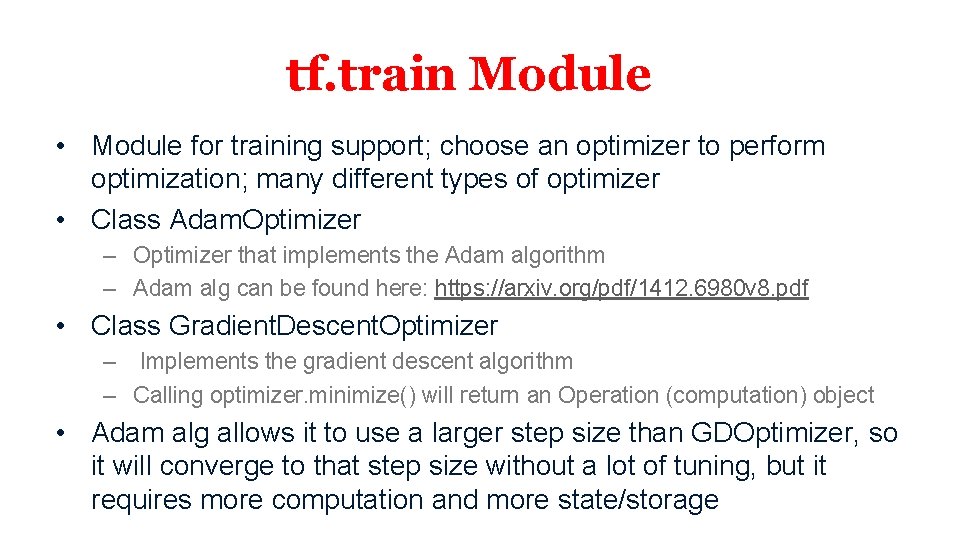

tf.train Module

• Module for training support; choose an optimizer to perform optimization; there are many different types of optimizer
• Class AdamOptimizer
  – Optimizer that implements the Adam algorithm
  – The Adam algorithm can be found here: https://arxiv.org/pdf/1412.6980v8.pdf
• Class GradientDescentOptimizer
  – Implements the gradient descent algorithm
  – Calling optimizer.minimize() will return an Operation (computation) object
• Adam keeps moving averages of the gradient and of its square, which lets it use a larger effective step size than GradientDescentOptimizer and converge without a lot of tuning, but it requires more computation and more state/storage per parameter
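The difference between the two update rules can be sketched in plain Python on a one-parameter problem. The objective f(x) = (x - 3)^2, the learning rates, and the step counts are assumptions chosen for illustration; the Adam update follows the paper linked above with its default beta1, beta2 and epsilon:

```python
import math


def grad(x):
    # Gradient of the toy objective f(x) = (x - 3)^2 (an assumption).
    return 2.0 * (x - 3.0)


def gradient_descent(x, learning_rate=0.1, steps=200):
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


def adam(x, learning_rate=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    m, v = 0.0, 0.0  # extra state: moving averages of g and g^2
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1.0 - beta1) * g
        v = beta2 * v + (1.0 - beta2) * g * g
        m_hat = m / (1.0 - beta1 ** t)   # bias correction
        v_hat = v / (1.0 - beta2 ** t)
        x -= learning_rate * m_hat / (math.sqrt(v_hat) + eps)
    return x


x_gd = gradient_descent(0.0)
x_adam = adam(0.0)
print(x_gd, x_adam)  # both approach the minimizer x = 3
```

The two extra variables `m` and `v` are the additional per-parameter state mentioned above.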

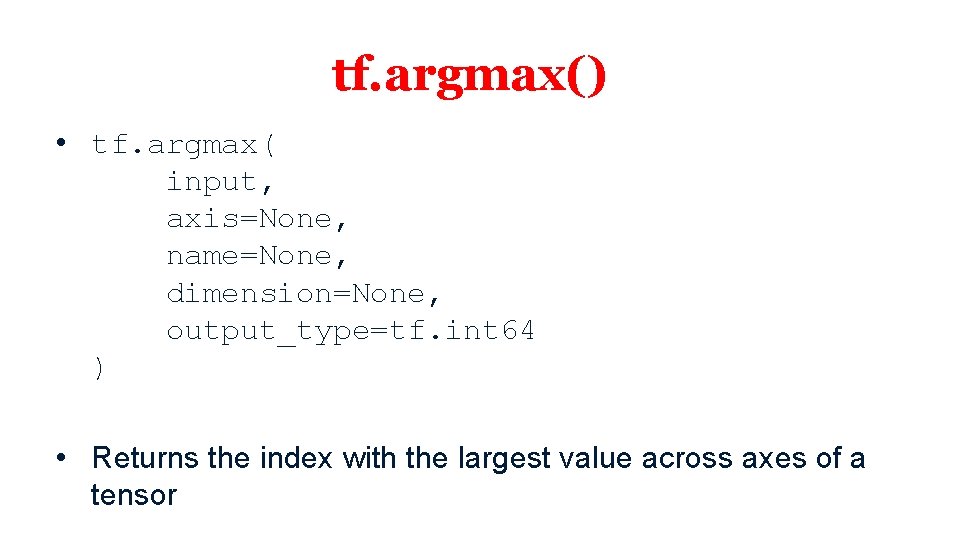

tf.argmax()

• tf.argmax(input, axis=None, name=None, dimension=None, output_type=tf.int64)
• Returns the index with the largest value across axes of a tensor
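A common use of argmax is turning per-class scores into predicted labels. The sketch below uses NumPy's `np.argmax`, which takes the same `axis` argument; the scores and labels are made-up values for illustration:

```python
import numpy as np

# Rows are examples, columns are classes (made-up scores).
logits = np.array([[0.1, 2.0, -1.0],
                   [1.5, 0.3, 0.2]])

predicted = np.argmax(logits, axis=1)       # best class per example
column_argmax = np.argmax(logits, axis=0)   # best example per class

labels = np.array([1, 2])                   # hypothetical true labels
accuracy = np.mean(predicted == labels)

print(predicted, column_argmax, accuracy)   # [1 0] [1 0 1] 0.5
```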

Deep Learning Libraries for Deep Learning: Py. Torch (slides by Rui Zhang)

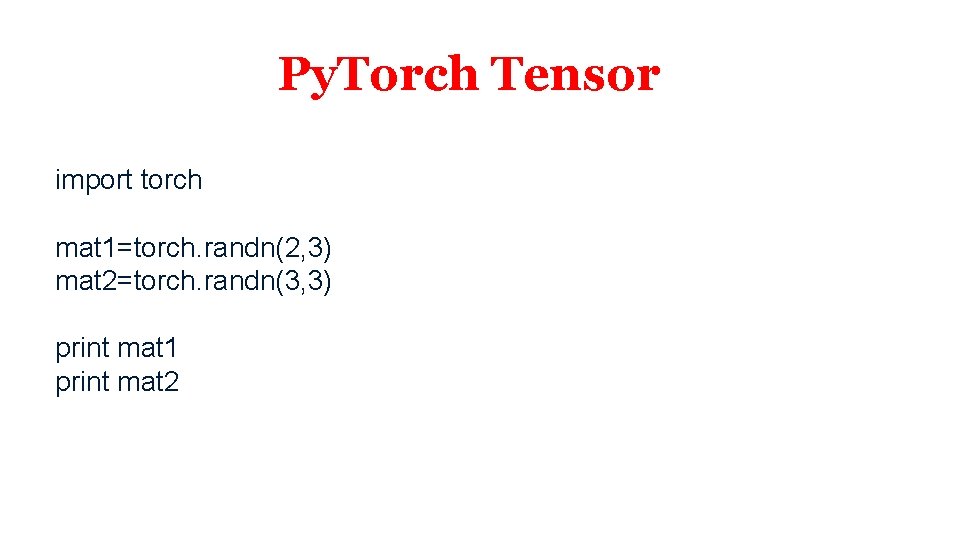

PyTorch Tensor

    import torch
    mat1 = torch.randn(2, 3)
    mat2 = torch.randn(3, 3)
    print(mat1)
    print(mat2)

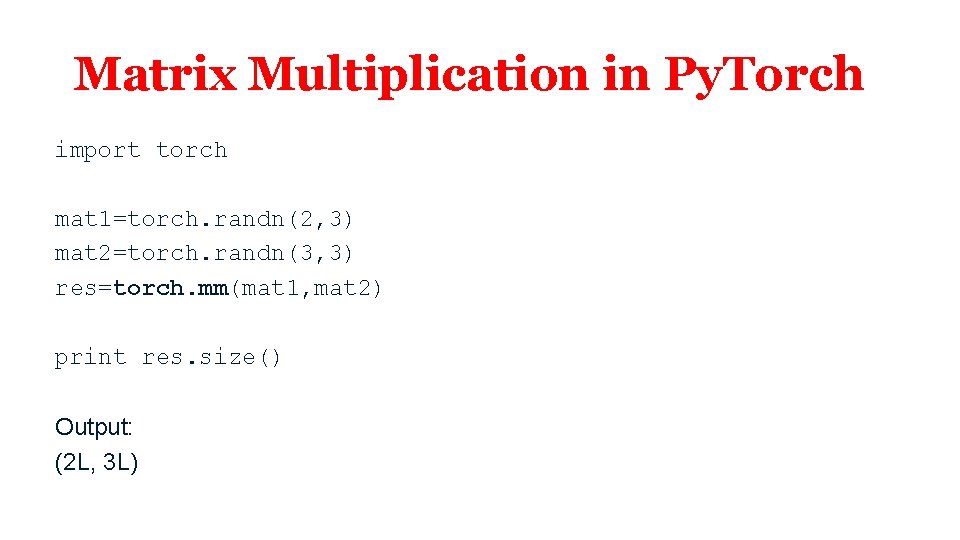

Matrix Multiplication in PyTorch

    import torch
    mat1 = torch.randn(2, 3)
    mat2 = torch.randn(3, 3)
    res = torch.mm(mat1, mat2)
    print(res.size())

Output: (2L, 3L)

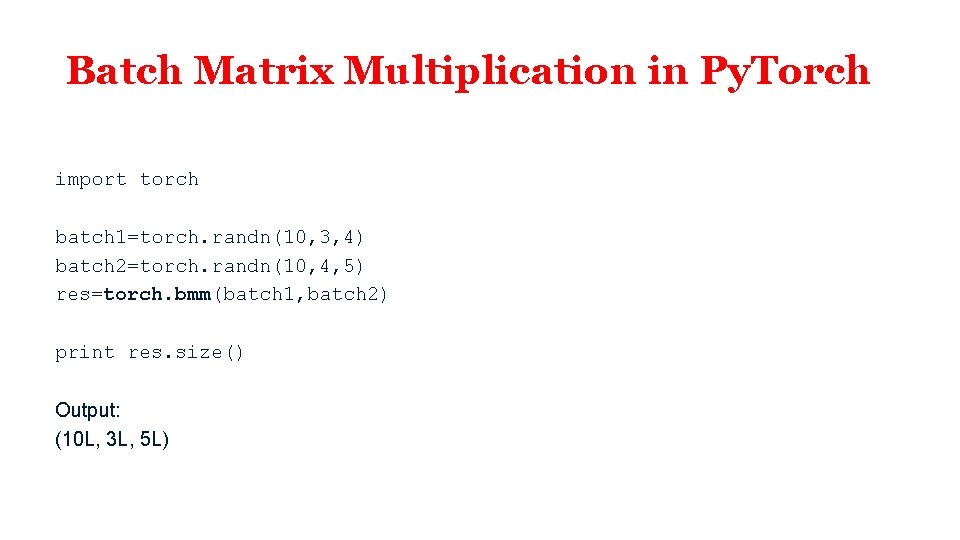

Batch Matrix Multiplication in PyTorch

    import torch
    batch1 = torch.randn(10, 3, 4)
    batch2 = torch.randn(10, 4, 5)
    res = torch.bmm(batch1, batch2)
    print(res.size())

Output: (10L, 3L, 5L)

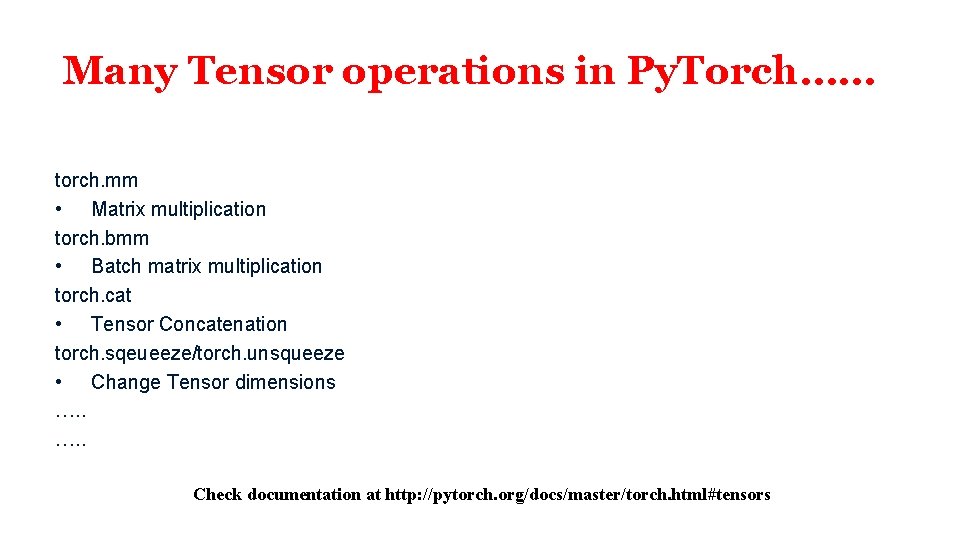

Many Tensor operations in PyTorch…

• torch.mm: Matrix multiplication
• torch.bmm: Batch matrix multiplication
• torch.cat: Tensor concatenation
• torch.squeeze/torch.unsqueeze: Change Tensor dimensions
• …

Check documentation at http://pytorch.org/docs/master/torch.html#tensors

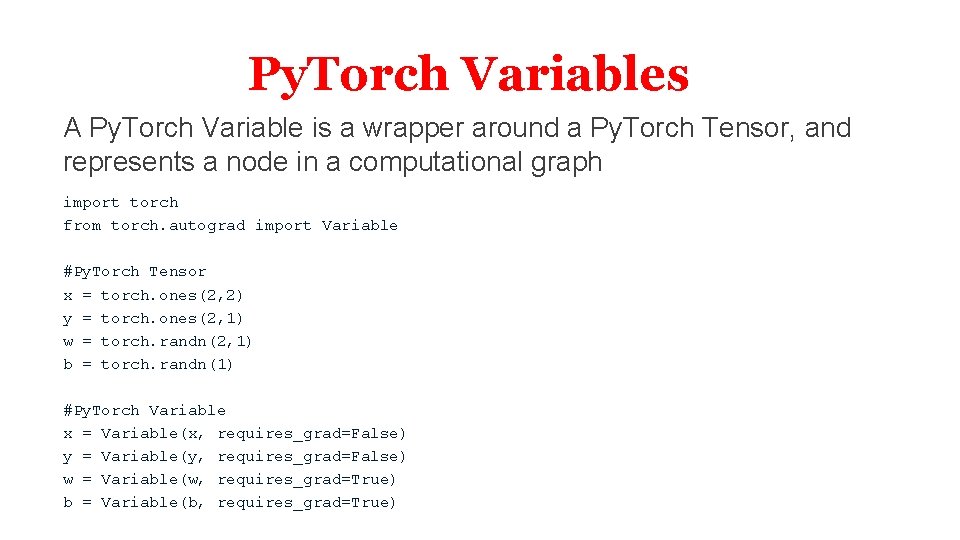

PyTorch Variables

A PyTorch Variable is a wrapper around a PyTorch Tensor, and represents a node in a computational graph

    import torch
    from torch.autograd import Variable

    # PyTorch Tensor
    x = torch.ones(2, 2)
    y = torch.ones(2, 1)
    w = torch.randn(2, 1)
    b = torch.randn(1)

    # PyTorch Variable
    x = Variable(x, requires_grad=False)
    y = Variable(y, requires_grad=False)
    w = Variable(w, requires_grad=True)
    b = Variable(b, requires_grad=True)

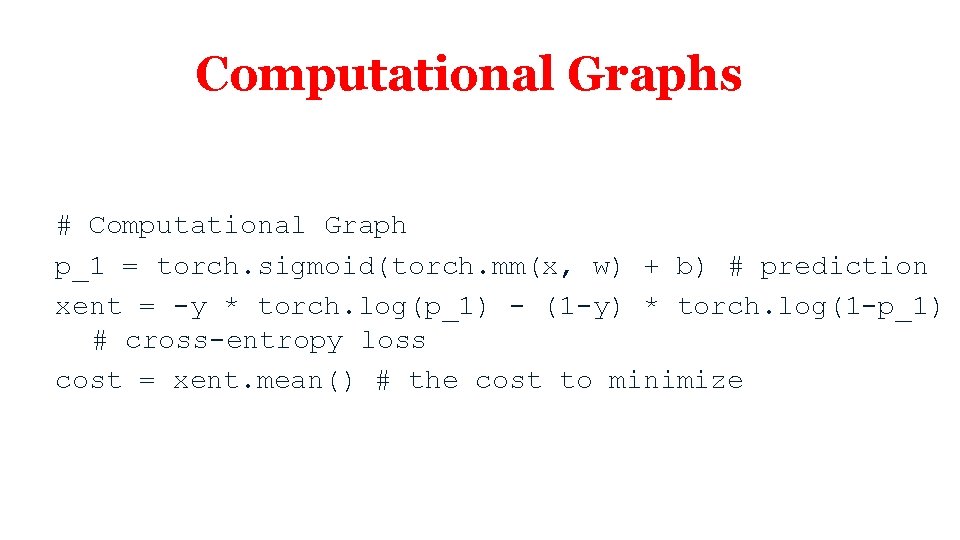

Computational Graphs

    # Computational Graph
    p_1 = torch.sigmoid(torch.mm(x, w) + b)                 # prediction
    xent = -y * torch.log(p_1) - (1 - y) * torch.log(1 - p_1)  # cross-entropy loss
    cost = xent.mean()                                      # the cost to minimize

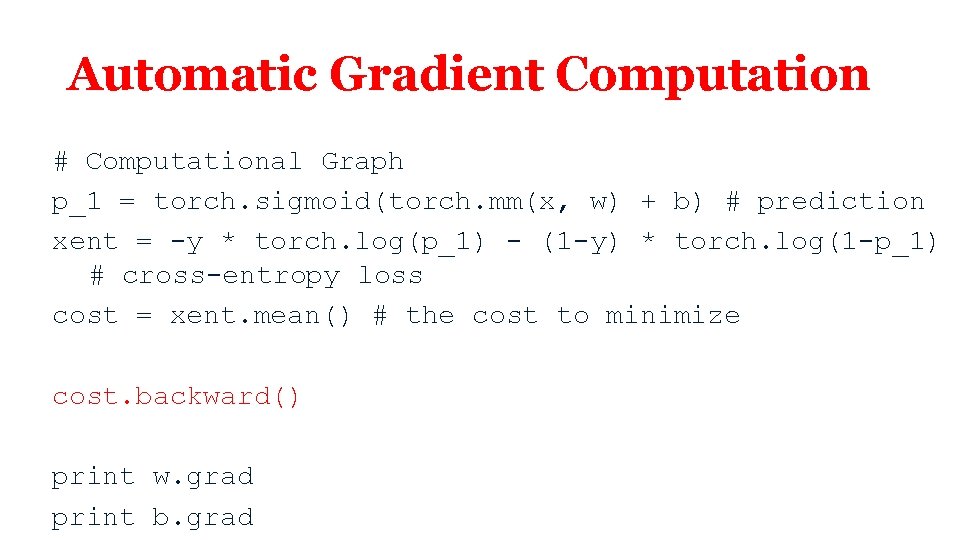

Automatic Gradient Computation

    # Computational Graph
    p_1 = torch.sigmoid(torch.mm(x, w) + b)                 # prediction
    xent = -y * torch.log(p_1) - (1 - y) * torch.log(1 - p_1)  # cross-entropy loss
    cost = xent.mean()                                      # the cost to minimize

    cost.backward()
    print(w.grad)
    print(b.grad)
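To see what `cost.backward()` is computing, the same gradients can be derived by hand and checked numerically. The sketch below uses NumPy instead of PyTorch, with the same shapes as the slide's tensors; the helper names and the finite-difference check are illustrative assumptions. For the sigmoid plus cross-entropy pair, the derivative of the cost with respect to the pre-activation is (p_1 - y) / N:

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def cost_fn(x, y, w, b):
    p_1 = sigmoid(x @ w + b)
    xent = -y * np.log(p_1) - (1 - y) * np.log(1 - p_1)
    return xent.mean()


def analytic_grads(x, y, w, b):
    p_1 = sigmoid(x @ w + b)
    diff = (p_1 - y) / y.size          # d cost / d (x @ w + b)
    return x.T @ diff, diff.sum()      # d cost / d w, d cost / d b


rng = np.random.default_rng(0)
x = np.ones((2, 2))
y = np.ones((2, 1))
w = rng.normal(size=(2, 1))
b = rng.normal()

grad_w, grad_b = analytic_grads(x, y, w, b)

# Central finite differences as an independent check.
eps = 1e-6
numeric_b = (cost_fn(x, y, w, b + eps) - cost_fn(x, y, w, b - eps)) / (2 * eps)
numeric_w = np.zeros_like(w)
for k in range(w.shape[0]):
    step = np.zeros_like(w)
    step[k, 0] = eps
    numeric_w[k, 0] = (cost_fn(x, y, w + step, b) - cost_fn(x, y, w - step, b)) / (2 * eps)

print(grad_w.ravel(), numeric_w.ravel())
print(grad_b, numeric_b)
```

Autograd arrives at the same values by applying the chain rule through the recorded graph, without the derivation being written out.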

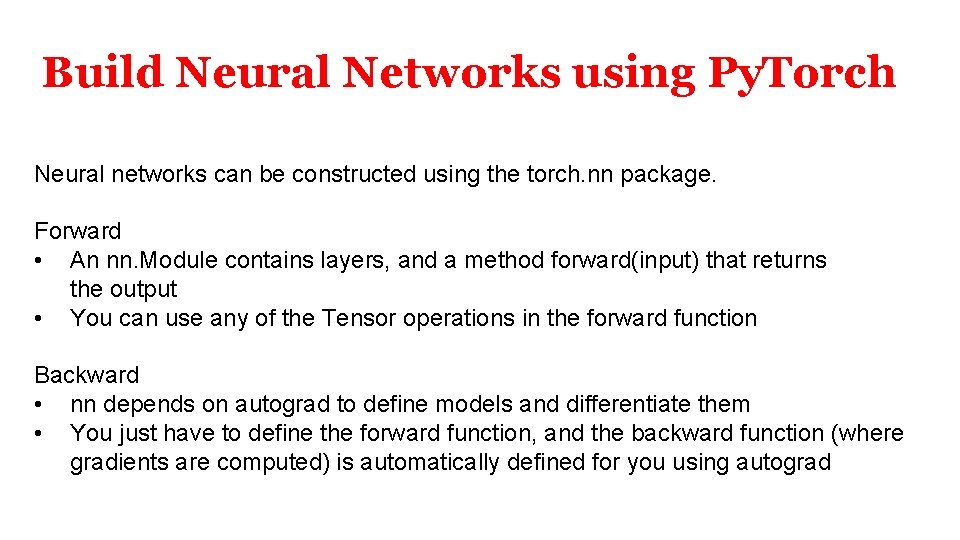

Build Neural Networks using PyTorch

Neural networks can be constructed using the torch.nn package.

Forward
• An nn.Module contains layers, and a method forward(input) that returns the output
• You can use any of the Tensor operations in the forward function

Backward
• nn depends on autograd to define models and differentiate them
• You just have to define the forward function, and the backward function (where gradients are computed) is automatically defined for you using autograd

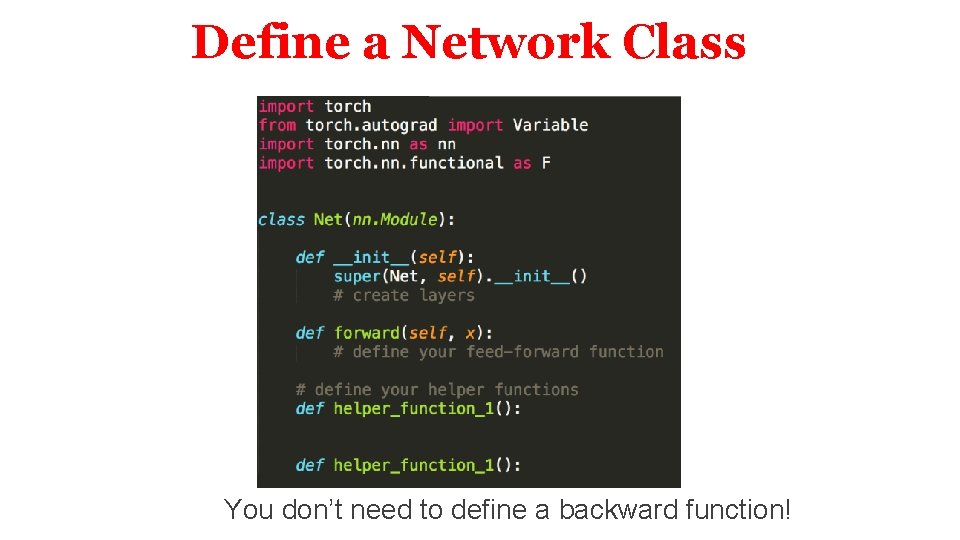

Define a Network Class You don’t need to define a backward function!

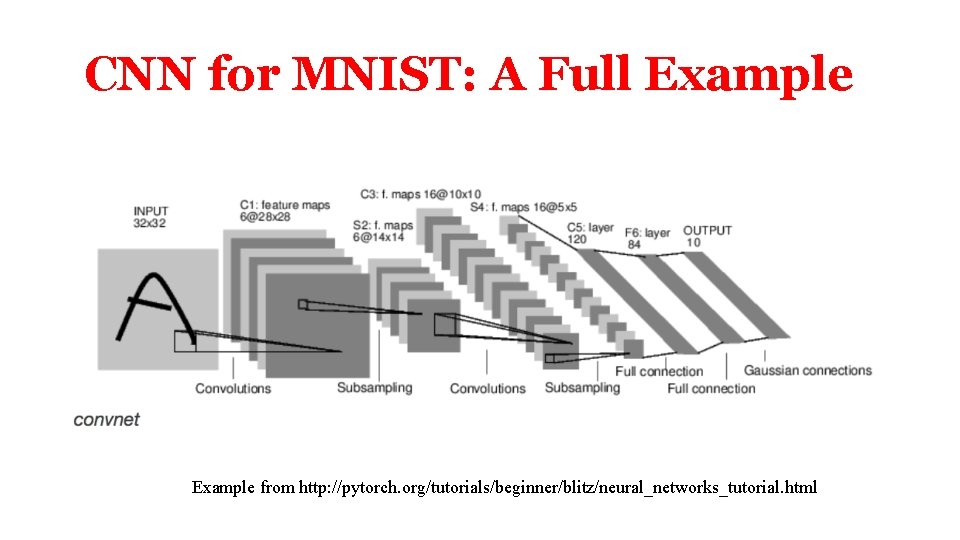

CNN for MNIST: A Full Example from http://pytorch.org/tutorials/beginner/blitz/neural_networks_tutorial.html

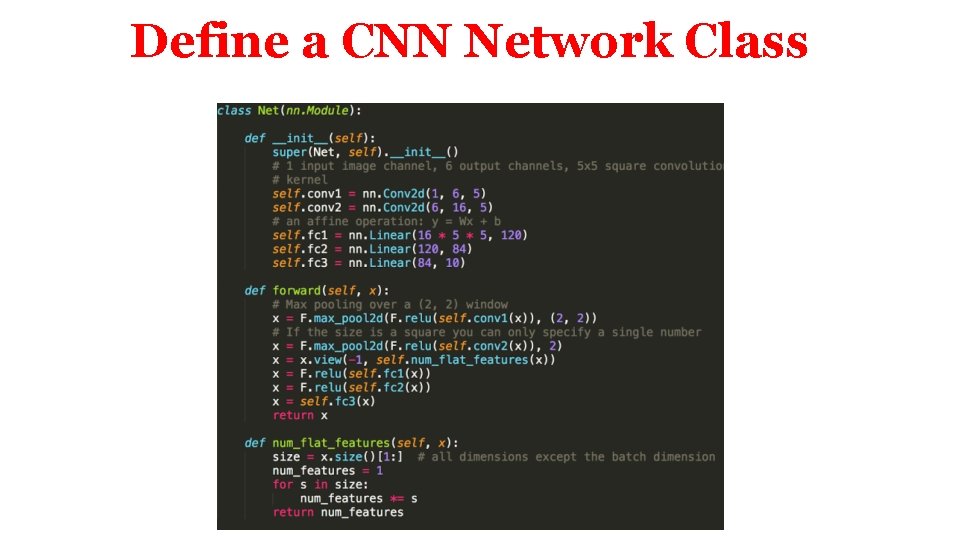

Define a CNN Network Class

Compute Loss

• input is a random image
• target is a dummy label

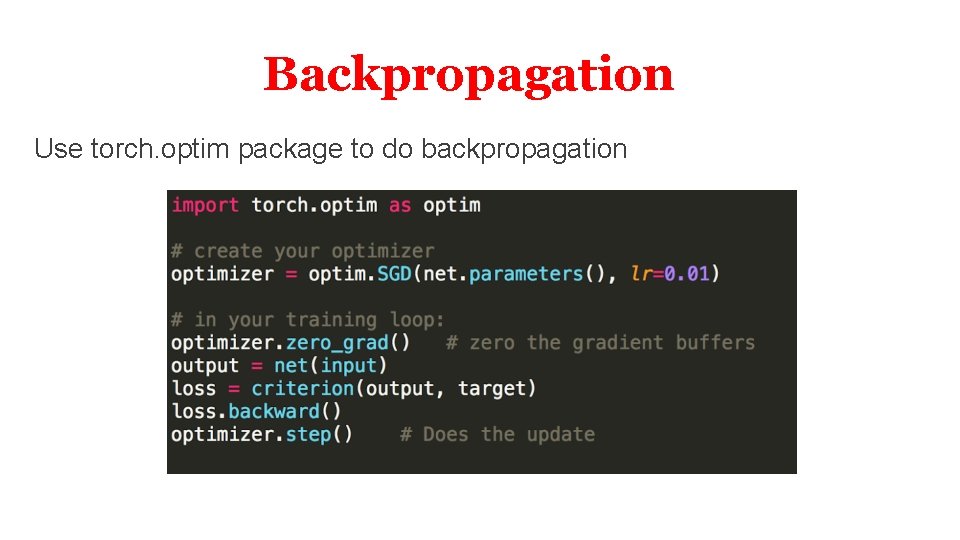

Backpropagation

Gradients are computed by backpropagation through autograd; use the torch.optim package to update the parameters from those gradients
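The steps above (define a network class, compute a loss, backpropagate, update with torch.optim) can be put together in a short sketch. It assumes a recent PyTorch where tensors no longer need the Variable wrapper; `TinyNet`, the layer sizes, the random data, and the learning rate are hypothetical and much smaller than the CNN on the slides:

```python
import torch
import torch.nn as nn
import torch.optim as optim


class TinyNet(nn.Module):  # hypothetical network, not the one on the slides
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(4, 8)
        self.fc2 = nn.Linear(8, 2)

    def forward(self, x):
        # Only forward is defined; autograd derives the backward pass.
        return self.fc2(torch.relu(self.fc1(x)))


torch.manual_seed(0)
net = TinyNet()
inputs = torch.randn(16, 4)    # random inputs standing in for real data
targets = torch.randn(16, 2)   # dummy targets

criterion = nn.MSELoss()
optimizer = optim.SGD(net.parameters(), lr=0.1)

losses = []
for _ in range(50):
    optimizer.zero_grad()                    # clear old gradients
    loss = criterion(net(inputs), targets)   # forward pass and loss
    loss.backward()                          # backpropagation via autograd
    optimizer.step()                         # parameter update
    losses.append(loss.item())

print(losses[0], losses[-1])
```

Swapping `optim.SGD` for another optimizer such as `optim.Adam` changes only the update rule; the forward and backward steps stay the same.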

Links About Deep Learning

• AAN: our search engine for resources and papers
  – http://tangra.cs.yale.edu/newaan/
• Richard Socher’s Stanford class
  – http://cs224d.stanford.edu/

Deep Learning Libraries for Deep Learning: Theano (Slides by Rui Zhang) (for reference only)

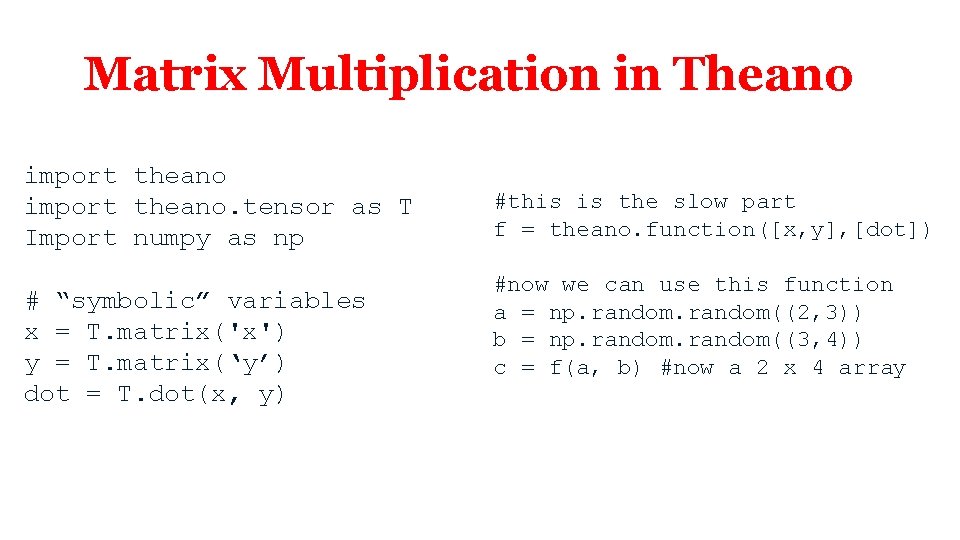

Matrix Multiplication in Theano

    import numpy as np
    import theano
    import theano.tensor as T

    # "symbolic" variables
    x = T.matrix('x')
    y = T.matrix('y')
    dot = T.dot(x, y)

    # this is the slow part: compiling the graph into a callable function
    f = theano.function([x, y], dot)

    # now we can use this function
    a = np.random.random((2, 3))
    b = np.random.random((3, 4))
    c = f(a, b)  # now a 2 x 4 array

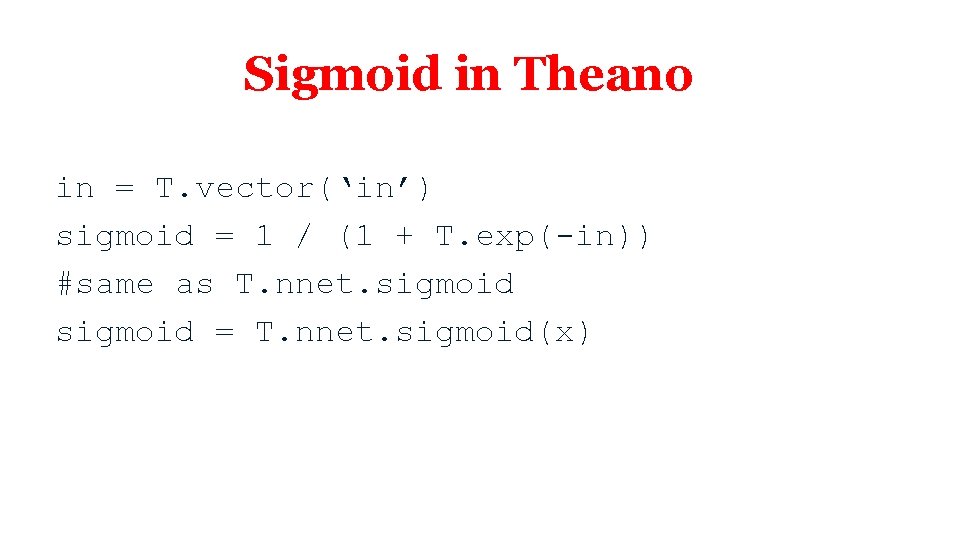

Sigmoid in Theano

    x = T.vector('x')  # "in" is a reserved word in Python, so the input is named x
    sigmoid = 1 / (1 + T.exp(-x))
    # same as T.nnet.sigmoid
    sigmoid = T.nnet.sigmoid(x)

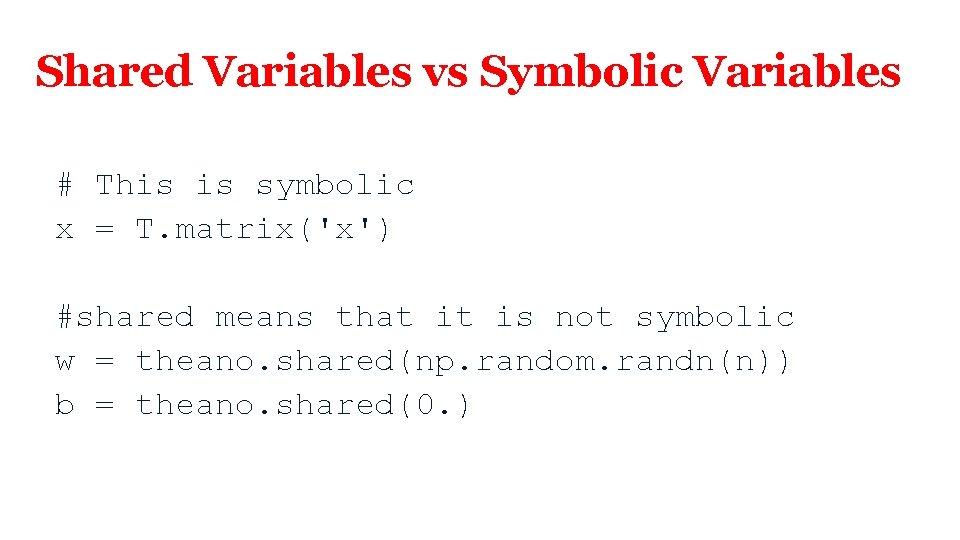

Shared Variables vs Symbolic Variables

    # This is symbolic
    x = T.matrix('x')

    # shared means that it is not symbolic
    w = theano.shared(np.random.randn(n))
    b = theano.shared(0.)

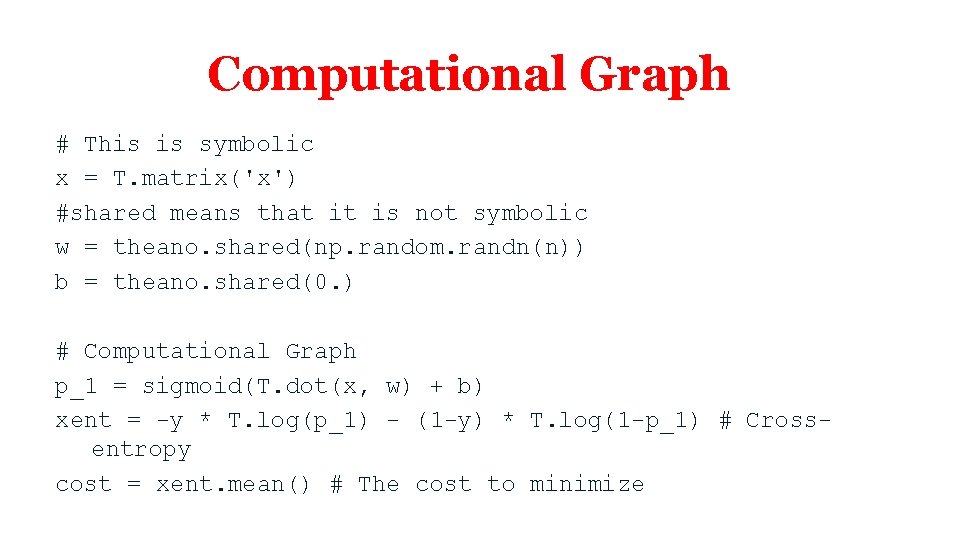

Computational Graph

    # This is symbolic
    x = T.matrix('x')
    y = T.vector('y')  # targets, also symbolic

    # shared means that it is not symbolic
    w = theano.shared(np.random.randn(n))
    b = theano.shared(0.)

    # Computational Graph
    p_1 = T.nnet.sigmoid(T.dot(x, w) + b)
    xent = -y * T.log(p_1) - (1 - y) * T.log(1 - p_1)  # Cross-entropy
    cost = xent.mean()                                 # The cost to minimize

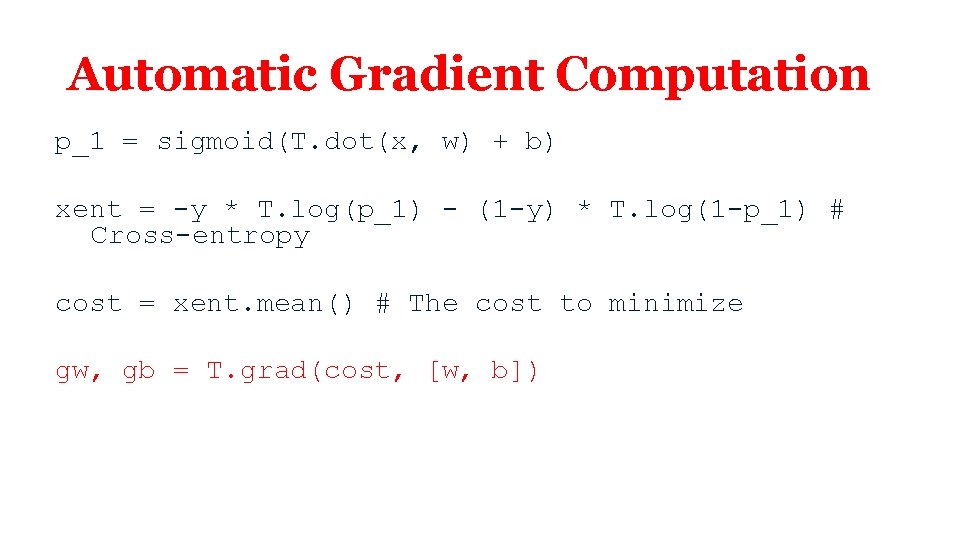

Automatic Gradient Computation

    p_1 = T.nnet.sigmoid(T.dot(x, w) + b)
    xent = -y * T.log(p_1) - (1 - y) * T.log(1 - p_1)  # Cross-entropy
    cost = xent.mean()                                 # The cost to minimize
    gw, gb = T.grad(cost, [w, b])

![Compile a Function](http://slidetodoc.com/presentation_image_h/ab72d903889f7bc0b3297826394ce16e/image-48.jpg)

Compile a Function

    train = theano.function(
        inputs=[x, y],
        outputs=[prediction, xent],
        updates=((w, w - 0.1 * gw), (b, b - 0.1 * gb)))

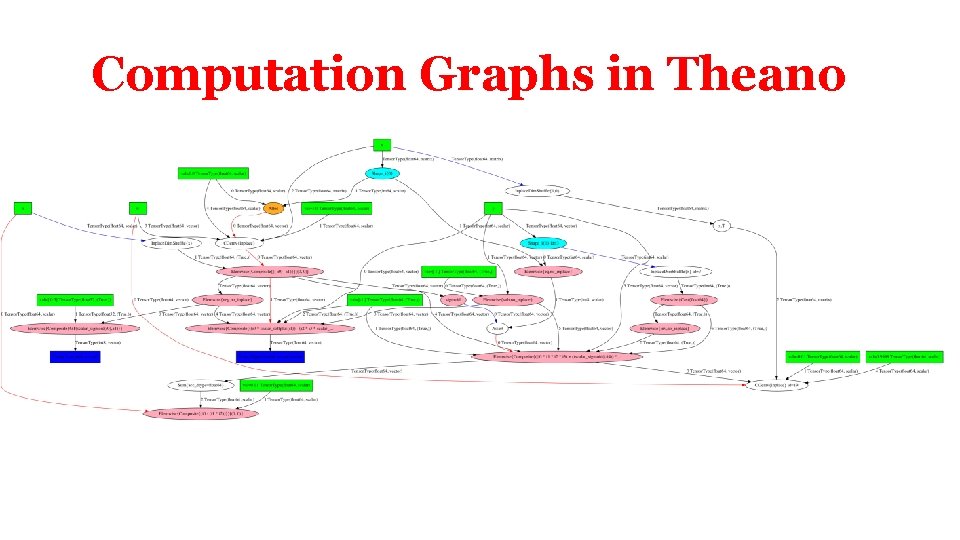

Computation Graphs in Theano

LSTM Sentiment Analysis Demo

• If you’re new to deep learning and want to work with Theano, do yourself a favor and work through http://deeplearning.net/tutorial/
• An LSTM demo is described here: http://deeplearning.net/tutorial/lstm.html
• Sentiment analysis model trained on IMDB movie reviews

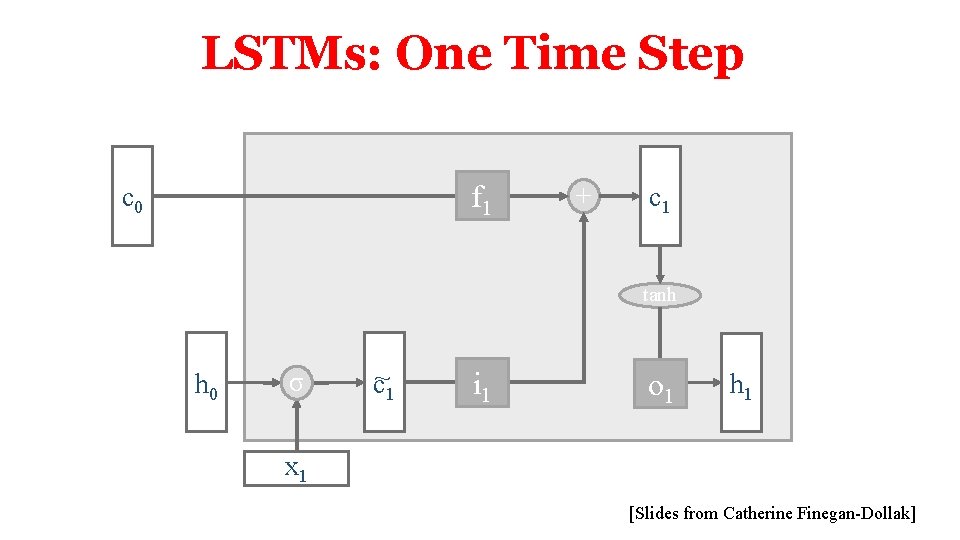

LSTMs: One Time Step

(Diagram of one LSTM time step: inputs x1, h0 and c0; gates f1, i1 and o1; candidate cell state c~1; outputs c1 and h1.)

[Slides from Catherine Finegan-Dollak]

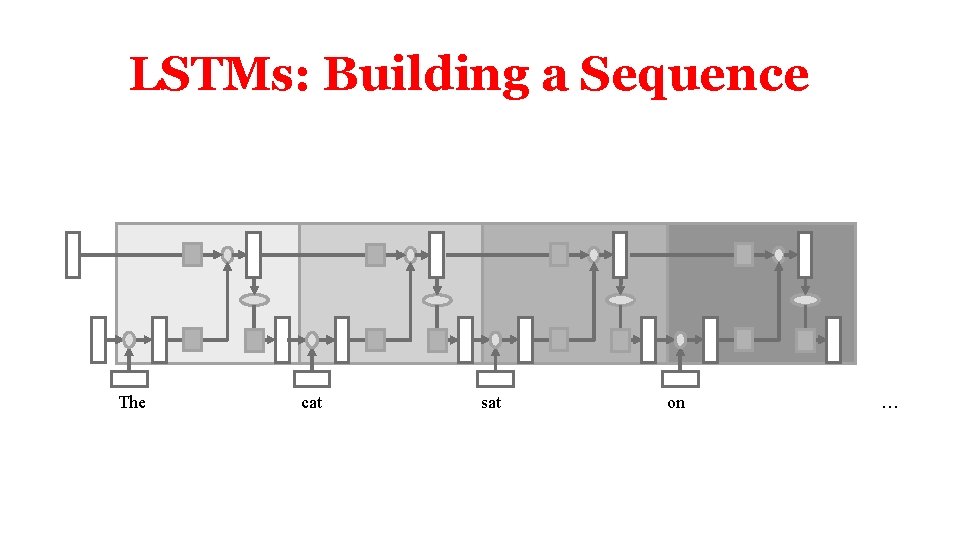

LSTMs: Building a Sequence The cat sat on …

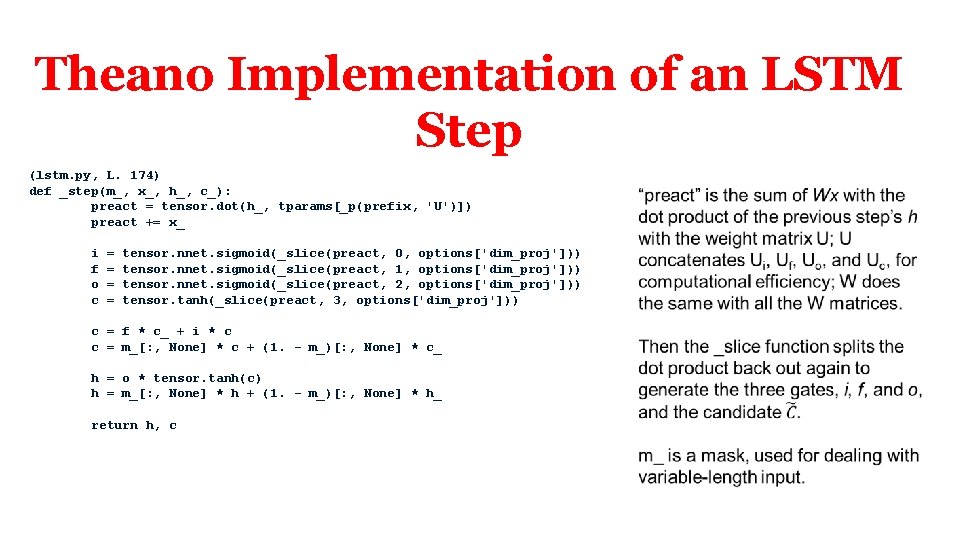

Theano Implementation of an LSTM Step (lstm.py, L. 174)

    def _step(m_, x_, h_, c_):
        preact = tensor.dot(h_, tparams[_p(prefix, 'U')])
        preact += x_

        i = tensor.nnet.sigmoid(_slice(preact, 0, options['dim_proj']))
        f = tensor.nnet.sigmoid(_slice(preact, 1, options['dim_proj']))
        o = tensor.nnet.sigmoid(_slice(preact, 2, options['dim_proj']))
        c = tensor.tanh(_slice(preact, 3, options['dim_proj']))

        c = f * c_ + i * c
        c = m_[:, None] * c + (1. - m_)[:, None] * c_

        h = o * tensor.tanh(c)
        h = m_[:, None] * h + (1. - m_)[:, None] * h_

        return h, c

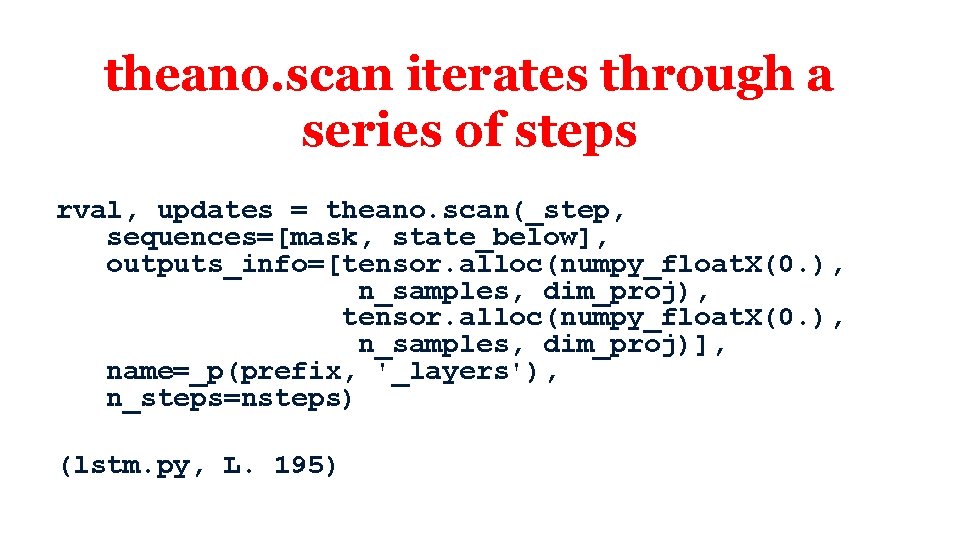

theano.scan iterates through a series of steps

    rval, updates = theano.scan(_step,
                                sequences=[mask, state_below],
                                outputs_info=[tensor.alloc(numpy_floatX(0.), n_samples, dim_proj),
                                              tensor.alloc(numpy_floatX(0.), n_samples, dim_proj)],
                                name=_p(prefix, '_layers'),
                                n_steps=nsteps)

(lstm.py, L. 195)
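The step function and the scan loop can be mirrored in NumPy to show what is being iterated. This is a sketch, not the tutorial's code: it assumes, as in lstm.py, that `state_below` already holds the input projection (W x + b) for all four gates and that `mask` has shape (n_steps, n_samples); the names `lstm_step` and `run_lstm` and the random data are hypothetical:

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(m_, x_, h_, c_, U, dim_proj):
    """One time step; mirrors _step with gates stored side by side."""
    preact = h_ @ U + x_
    i = sigmoid(preact[:, 0 * dim_proj:1 * dim_proj])         # input gate
    f = sigmoid(preact[:, 1 * dim_proj:2 * dim_proj])         # forget gate
    o = sigmoid(preact[:, 2 * dim_proj:3 * dim_proj])         # output gate
    c_tilde = np.tanh(preact[:, 3 * dim_proj:4 * dim_proj])   # candidate

    c = f * c_ + i * c_tilde
    c = m_[:, None] * c + (1.0 - m_)[:, None] * c_   # keep old state if masked
    h = o * np.tanh(c)
    h = m_[:, None] * h + (1.0 - m_)[:, None] * h_
    return h, c


def run_lstm(mask, state_below, U, dim_proj):
    """Plain Python loop playing the role of theano.scan."""
    n_steps, n_samples = mask.shape
    h = np.zeros((n_samples, dim_proj))   # initial states, as in outputs_info
    c = np.zeros((n_samples, dim_proj))
    hs = []
    for t in range(n_steps):
        h, c = lstm_step(mask[t], state_below[t], h, c, U, dim_proj)
        hs.append(h)
    return np.stack(hs)


rng = np.random.default_rng(0)
n_steps, n_samples, dim_proj = 5, 3, 4
U = rng.normal(size=(dim_proj, 4 * dim_proj))
state_below = rng.normal(size=(n_steps, n_samples, 4 * dim_proj))
mask = np.ones((n_steps, n_samples))
mask[3, 1] = 0.0   # sample 1 has no token at step 3

hs = run_lstm(mask, state_below, U, dim_proj)
print(hs.shape)  # (5, 3, 4)
```

Where the mask is zero, the hidden and cell states are carried over unchanged, which is how padded positions in shorter sequences are skipped.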
