Memory Subsystem and Cache
Adapted from the lecture notes of Dr. Patterson and Dr. Kubiatowicz of UC Berkeley

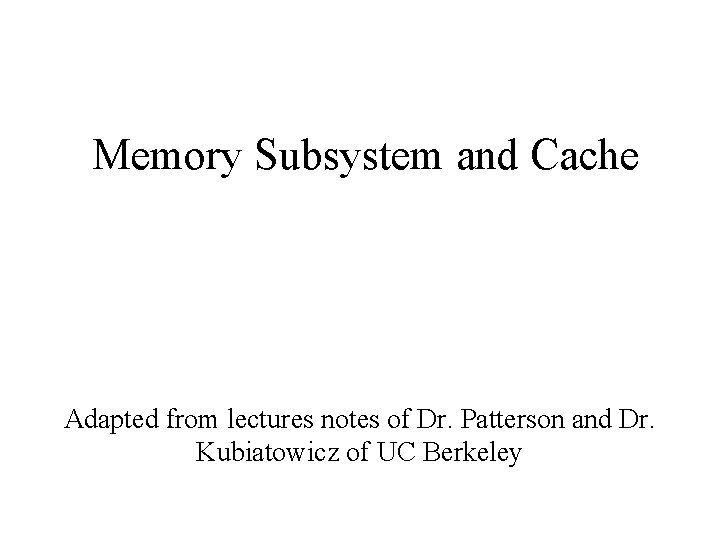

The Big Picture
• Processor: Control, Datapath
• Memory
• Input
• Output

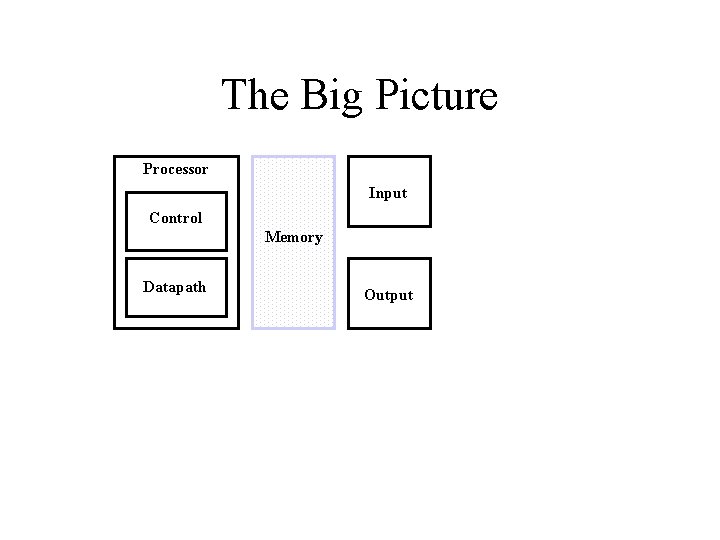

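Technology Trends

| | Capacity | Speed (latency) |
|---|---|---|
| Logic | 2x in 3 years | 2x in 3 years |
| DRAM | 4x in 3 years | 2x in 10 years |
| Disk | | |

DRAM

| Year | Size | Cycle Time |
|---|---|---|
| 1980 | 64 Kb | 250 ns |
| 1983 | 256 Kb | 220 ns |
| 1986 | 1 Mb | 190 ns |
| 1989 | 4 Mb | 165 ns |
| 1992 | 16 Mb | 145 ns |
| 1995 | 64 Mb | 120 ns |

From 1980 to 1995, size improved 1000:1 while cycle time improved only 2:1.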

![Technology Trends [contd…] Processor-DRAM Memory Gap (latency) 100 10 1 µProc 60%/yr. “Moore’s Law” Technology Trends [contd…] Processor-DRAM Memory Gap (latency) 100 10 1 µProc 60%/yr. “Moore’s Law”](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-4.jpg)

Technology Trends [contd…]
Figure: Processor-DRAM Memory Gap (latency). Performance (log scale, 1 to 1000) plotted against time (1980 to 2000).
• µProc (CPU): 60%/yr. “Moore’s Law” (2X/1.5 yr)
• DRAM: 9%/yr. “Less’ Law?” (2X/10 yrs)
• Processor-Memory Performance Gap: grows 50% / year

The Goal: Large, Fast, Cheap Memory!
• Fact
– Large memories are slow
– Fast memories are small
• How do we create a memory that is large, cheap and fast (most of the time)?
– Hierarchy
– Parallelism

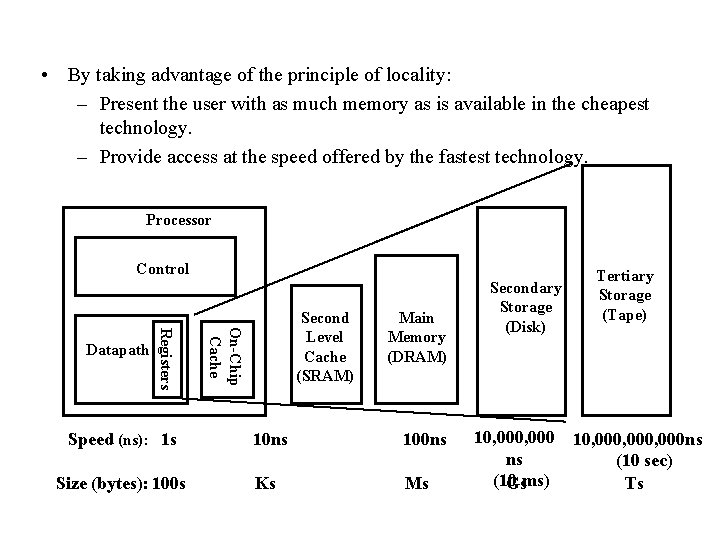

• By taking advantage of the principle of locality:
– Present the user with as much memory as is available in the cheapest technology.
– Provide access at the speed offered by the fastest technology.
• Levels, from closest to the processor to farthest: Registers, On-Chip Cache, Second Level Cache (SRAM), Main Memory (DRAM), Secondary Storage (Disk), Tertiary Storage (Tape)
• Speed (ns): 1s (registers), 10s (cache), 100s (main memory), 10,000,000s, i.e. 10s of ms (disk), 10s of sec (tape)
• Size (bytes): 100s (registers), Ks (cache), Ms (main memory), Gs (disk), Ts (tape)

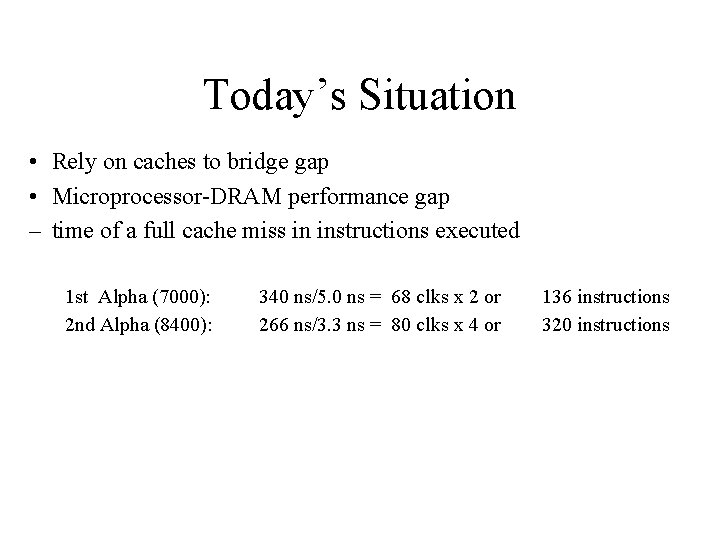

Today’s Situation
• Rely on caches to bridge the gap
• Microprocessor-DRAM performance gap: time of a full cache miss, in instructions executed
– 1st Alpha (7000): 340 ns / 5.0 ns = 68 clks x 2, or 136 instructions
– 2nd Alpha (8400): 266 ns / 3.3 ns = 80 clks x 4, or 320 instructions
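The arithmetic on this slide can be reproduced with a short sketch. It assumes that the "x 2" and "x 4" factors are the number of instructions each processor can issue per clock, and that the clock count is truncated to a whole number as the slide appears to do; the function name is illustrative only.

```python
import math

def miss_cost(miss_ns: float, cycle_ns: float, instr_per_clock: int):
    """Return (clocks, instructions) lost to one full cache miss.

    instr_per_clock is an assumed reading of the slide's "x 2" / "x 4".
    """
    clocks = math.floor(miss_ns / cycle_ns)  # whole clocks, as on the slide
    return clocks, clocks * instr_per_clock

first_alpha = miss_cost(340, 5.0, 2)    # 1st Alpha (7000)
second_alpha = miss_cost(266, 3.3, 4)   # 2nd Alpha (8400)
print(first_alpha, second_alpha)
```

Although the second machine has a shorter miss time in nanoseconds, its faster clock and wider issue make each miss cost more instructions.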

Memory Hierarchy (1/4)
• Processor
– executes programs
– runs on the order of nanoseconds to picoseconds
– needs to access code and data for programs: where are these?
• Disk
– HUGE capacity (virtually limitless)
– VERY slow: runs on the order of milliseconds
– so how do we account for this gap?

Memory Hierarchy (2/4)
• Memory (DRAM)
– smaller than disk (not limitless capacity)
– contains a subset of the data on disk: basically portions of programs that are currently being run
– much faster than disk: memory accesses don’t slow down the processor quite as much
– Problem: memory is still too slow (hundreds of nanoseconds)
– Solution: add more layers (caches)

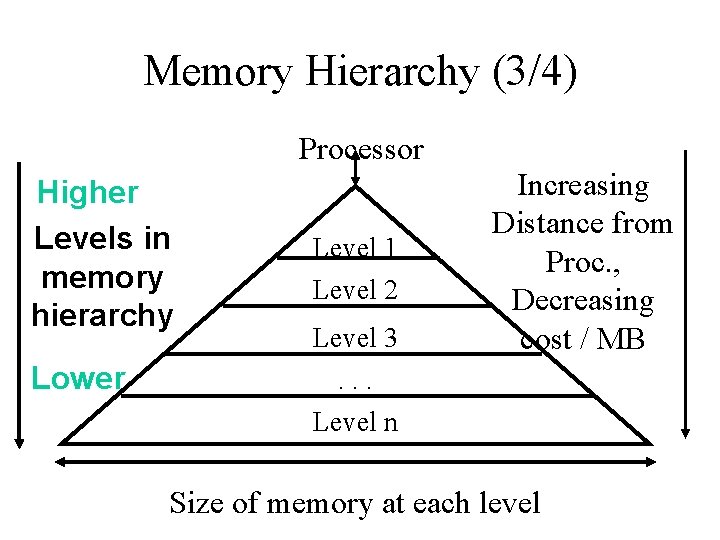

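Memory Hierarchy (3/4)
Figure: the levels of the memory hierarchy drawn below the Processor, from Level 1 (higher levels) down through Level 2, Level 3, ..., Level n (lower levels). Distance from the processor increases and cost / MB decreases toward Level n; the width of each level shows the size of memory at that level.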

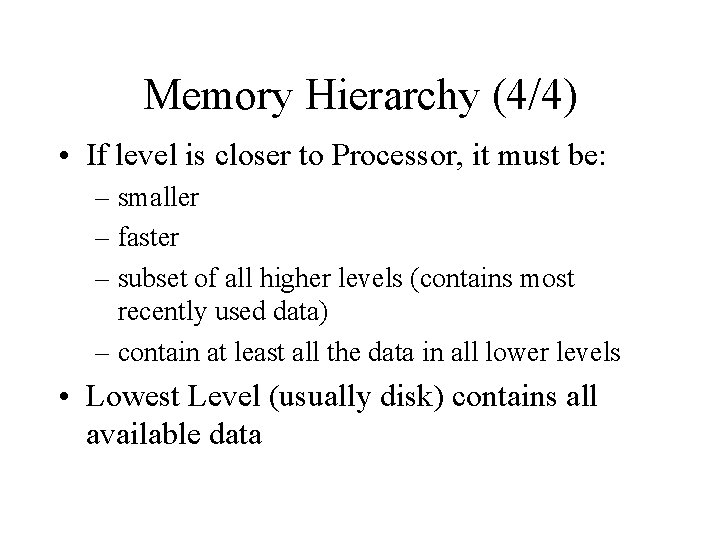

Memory Hierarchy (4/4)
• If a level is closer to the Processor, it must be:
– smaller
– faster
– a subset of every level farther from the Processor (it contains the most recently used data)
• Each level farther from the Processor contains at least all the data held in the levels closer to it
• The level farthest from the Processor (usually disk) contains all available data

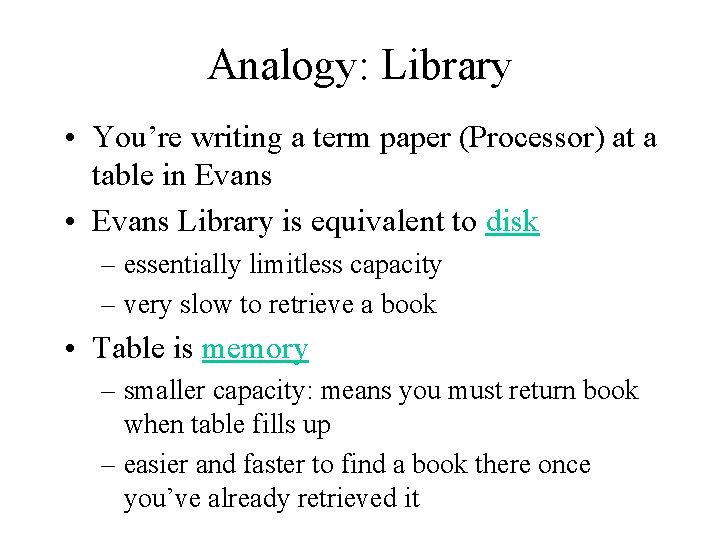

Analogy: Library
• You’re writing a term paper (Processor) at a table in Evans
• Evans Library is equivalent to disk
– essentially limitless capacity
– very slow to retrieve a book
• Table is memory
– smaller capacity: means you must return a book when the table fills up
– easier and faster to find a book there once you’ve already retrieved it

![Analogy : Library [contd…] • Open books on table are cache – smaller capacity: Analogy : Library [contd…] • Open books on table are cache – smaller capacity:](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-13.jpg)

Analogy: Library [contd…]
• Open books on table are cache
– smaller capacity: very few open books fit on the table; again, when the table fills up, you must close a book
– much, much faster to retrieve data
• Illusion created: whole library open on the tabletop
– Keep as many recently used books open on the table as possible, since you are likely to use them again
– Also keep as many books on the table as possible, since that is faster than going to the library

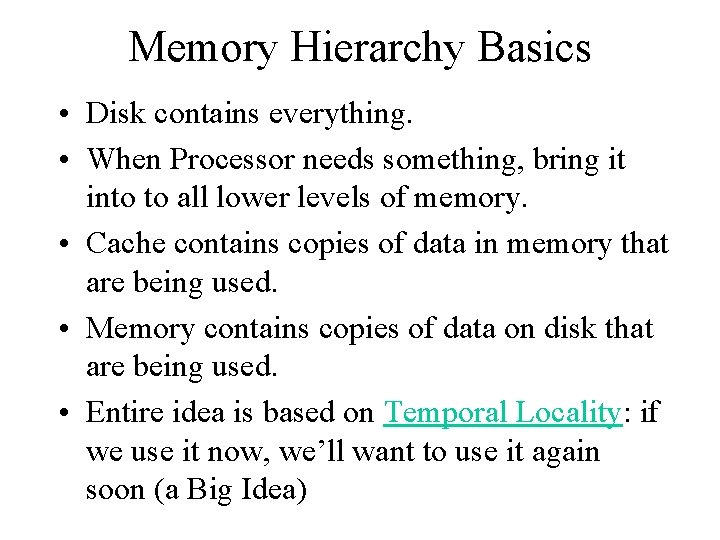

Memory Hierarchy Basics
• Disk contains everything.
• When the Processor needs something, bring it into all levels of memory closer to the Processor.
• Cache contains copies of data in memory that are being used.
• Memory contains copies of data on disk that are being used.
• The entire idea is based on Temporal Locality: if we use it now, we’ll want to use it again soon (a Big Idea)

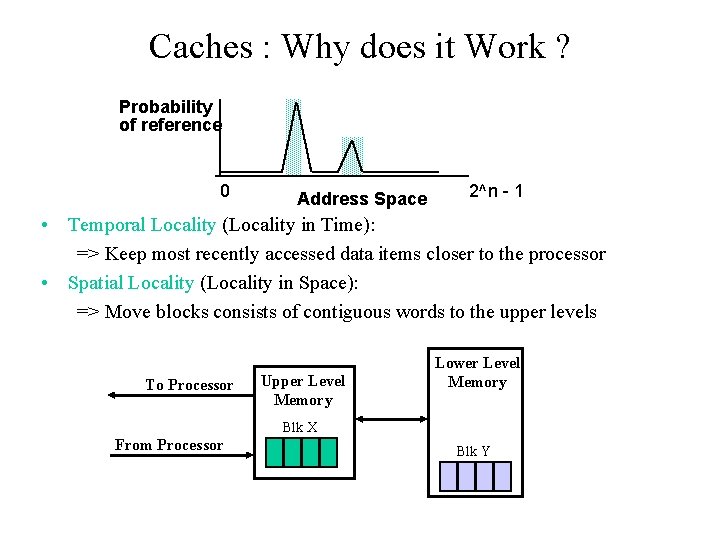

Caches: Why does it Work?
Figure: probability of reference plotted over the address space (0 to 2^n - 1).
• Temporal Locality (Locality in Time): => Keep most recently accessed data items closer to the processor
• Spatial Locality (Locality in Space): => Move blocks consisting of contiguous words to the upper levels
Figure: the Upper Level Memory holds Blk X and transfers data to and from the Processor; the Lower Level Memory holds Blk Y.

Cache Design Issues
• How do we organize the cache?
• Where does each memory address map to? (Remember that the cache is a subset of memory, so multiple memory addresses map to the same cache location.)
• How do we know which elements are in the cache?
• How do we quickly locate them?

Direct Mapped Cache
• In a direct-mapped cache, each memory address is associated with one possible block within the cache
– Therefore, we only need to look in a single location in the cache for the data, if it exists in the cache
– Block is the unit of transfer between cache and memory

![Direct Mapped Cache [contd…] Memory Address Memory 0 1 2 3 4 5 6 Direct Mapped Cache [contd…] Memory Address Memory 0 1 2 3 4 5 6](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-18.jpg)

Direct Mapped Cache [contd…]
Figure: a memory with addresses 0 to F mapped onto a 4 Byte Direct Mapped Cache with cache indexes 0 to 3.
• Cache Location 0 can be occupied by data from:
– Memory location 0, 4, 8, ...
– In general: any memory location that is a multiple of 4
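The mapping in this figure amounts to taking the memory address modulo the number of cache locations. The following is a minimal sketch of that rule for the 16-location memory and 4-byte cache described above; the names `NUM_CACHE_BLOCKS`, `cache_index` and `candidates` are illustrative and do not come from the slides.

```python
NUM_CACHE_BLOCKS = 4  # cache indexes 0..3, one byte per block as in the figure

def cache_index(address: int) -> int:
    """Direct mapping: each memory address has exactly one possible cache slot."""
    return address % NUM_CACHE_BLOCKS

# Group memory addresses 0x0..0xF by the cache index they compete for.
candidates = {
    index: [hex(a) for a in range(16) if cache_index(a) == index]
    for index in range(NUM_CACHE_BLOCKS)
}
for index, addresses in candidates.items():
    print(index, addresses)
```

Every cache index is shared by four memory addresses here, which is why the cache later needs a tag to tell them apart.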

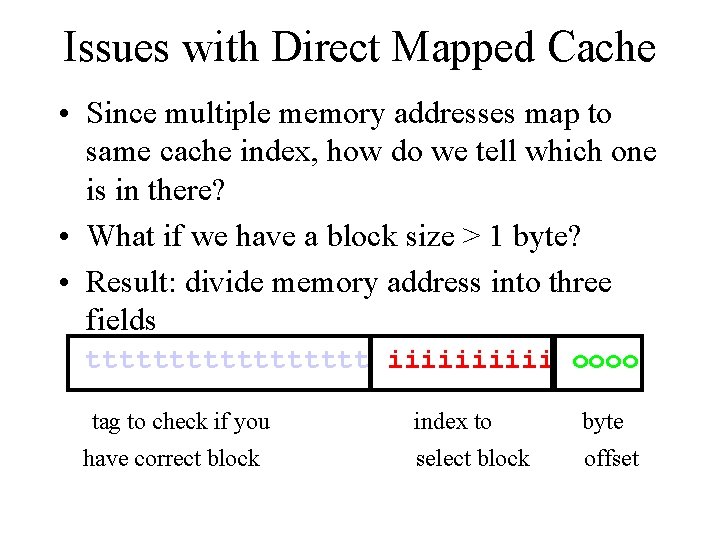

Issues with Direct Mapped Cache
• Since multiple memory addresses map to the same cache index, how do we tell which one is in there?
• What if we have a block size > 1 byte?
• Result: divide the memory address into three fields: ttttttttt iiiii oooo
– tag (t): to check if you have the correct block
– index (i): to select the block
– byte offset (o)

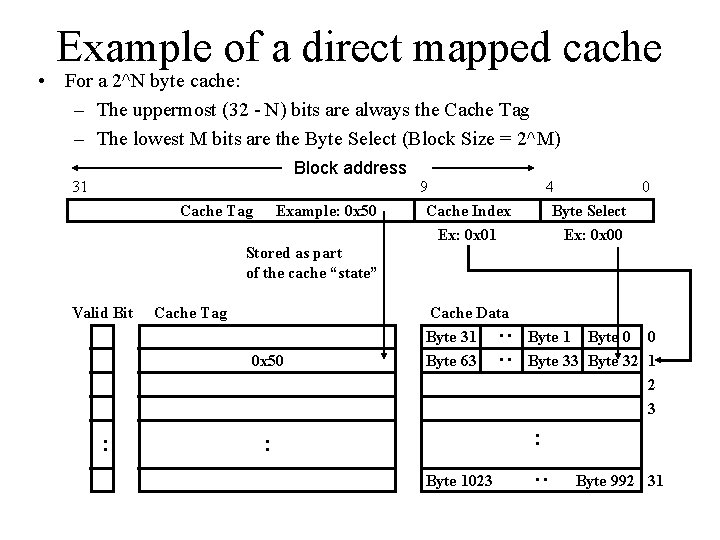

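Example of a direct mapped cache
• For a 2^N byte cache:
– The uppermost (32 - N) bits are always the Cache Tag
– The lowest M bits are the Byte Select (Block Size = 2^M)
• Address layout in the figure (32 rows of 32 bytes, so N = 10 and M = 5):
– Bits 31 to 10: Cache Tag (example: 0x50), stored as part of the cache “state”
– Bits 9 to 5: Cache Index (example: 0x01)
– Bits 4 to 0: Byte Select (example: 0x00)
– Block address: Cache Tag together with Cache Index
• Each cache row holds a Valid Bit, a Cache Tag and 32 bytes of Cache Data: row 0 holds Byte 0 to Byte 31, row 1 holds Byte 32 to Byte 63, ..., row 31 holds Byte 992 to Byte 1023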
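As a rough illustration of the field layout on this slide, the sketch below splits a 32-bit address for a 2^N byte cache with 2^M byte blocks. The function name and the sample address 0x14020 are assumptions; the address was built so that its fields equal the slide's example values (tag 0x50, index 0x01, byte select 0x00).

```python
def split_address(addr: int, n_bits: int, m_bits: int):
    """Split addr into (tag, index, byte_select).

    n_bits: log2 of the cache size in bytes (N on the slide)
    m_bits: log2 of the block size in bytes (M on the slide)
    """
    byte_select = addr & ((1 << m_bits) - 1)                # lowest M bits
    index = (addr >> m_bits) & ((1 << (n_bits - m_bits)) - 1)  # next N - M bits
    tag = addr >> n_bits                                    # uppermost 32 - N bits
    return tag, index, byte_select

# Hypothetical address assembled from the slide's example field values.
addr = (0x50 << 10) | (0x01 << 5) | 0x00
tag, index, byte_select = split_address(addr, n_bits=10, m_bits=5)
print(hex(addr), hex(tag), hex(index), hex(byte_select))
```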

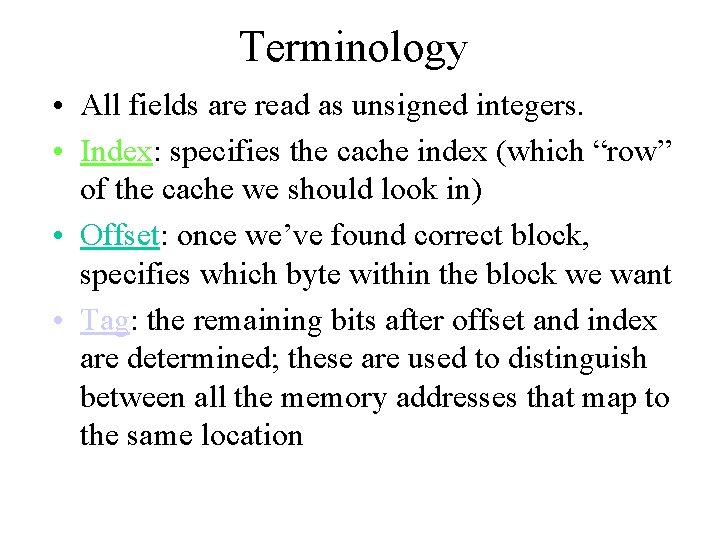

Terminology
• All fields are read as unsigned integers.
• Index: specifies the cache index (which “row” of the cache we should look in)
• Offset: once we’ve found the correct block, specifies which byte within the block we want
• Tag: the remaining bits after offset and index are determined; these are used to distinguish between all the memory addresses that map to the same location

![Terminology [contd…] • Hit: data appears in some block in the upper level (example: Terminology [contd…] • Hit: data appears in some block in the upper level (example:](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-22.jpg)

Terminology [contd…]
• Hit: data appears in some block in the upper level (example: Block X)
– Hit Rate: the fraction of memory accesses found in the upper level
– Hit Time: time to access the upper level, which consists of RAM access time + time to determine hit/miss
• Miss: data needs to be retrieved from a block in the lower level (Block Y)
– Miss Rate = 1 - (Hit Rate)
– Miss Penalty: time to replace a block in the upper level + time to deliver the block to the processor
• Hit Time << Miss Penalty
Figure: the Upper Level Memory holds Blk X and transfers data to and from the Processor; the Lower Level Memory holds Blk Y.
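These three quantities are commonly combined into an average memory access time, hit time + miss rate x miss penalty. That formula is standard but is not stated on the slides, and the numbers below are made-up inputs chosen only to show why Hit Time << Miss Penalty matters.

```python
def average_memory_access_time(hit_time: float, miss_rate: float,
                               miss_penalty: float) -> float:
    """Standard AMAT formula: every access pays the hit time,
    and the fraction that misses also pays the miss penalty."""
    return hit_time + miss_rate * miss_penalty

# Hypothetical values (in clock cycles), not taken from the slides.
amat = average_memory_access_time(hit_time=1, miss_rate=0.05, miss_penalty=100)
print(amat)
```

Even with a 95% hit rate in this made-up case, the average access costs several times the hit time, because the miss penalty dominates.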

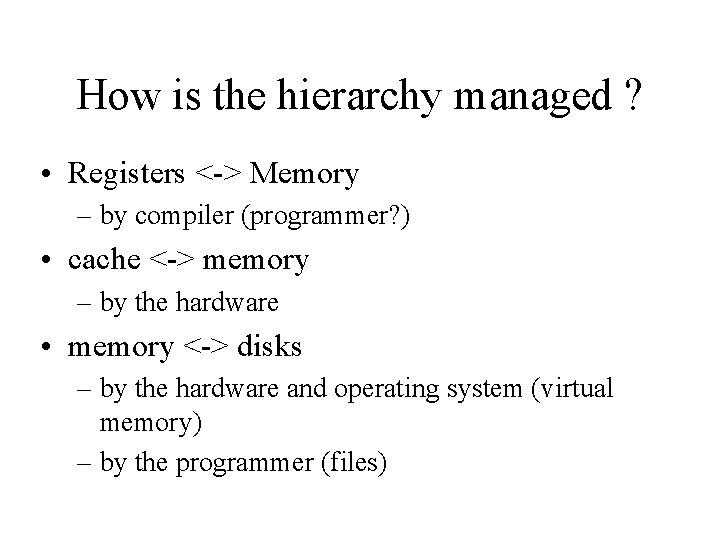

How is the hierarchy managed?
• Registers <-> Memory
– by compiler (programmer?)
• cache <-> memory
– by the hardware
• memory <-> disks
– by the hardware and operating system (virtual memory)
– by the programmer (files)

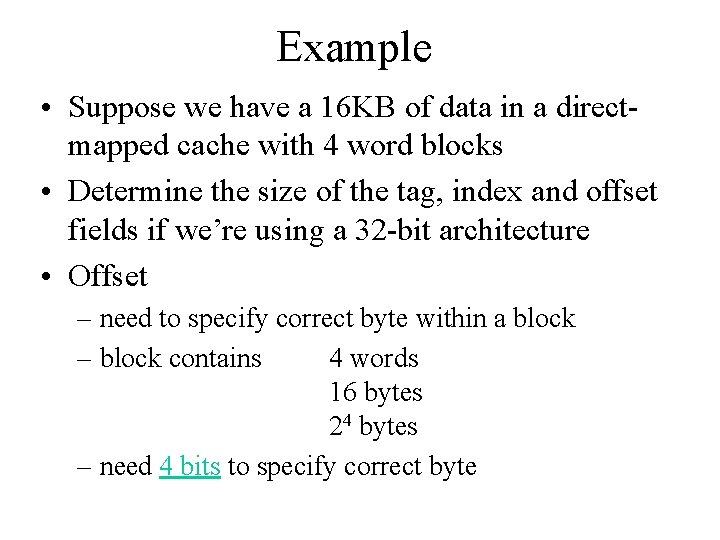

Example
• Suppose we have 16 KB of data in a direct-mapped cache with 4-word blocks
• Determine the size of the tag, index and offset fields if we’re using a 32-bit architecture
• Offset
– need to specify the correct byte within a block
– block contains 4 words = 16 bytes = 2^4 bytes
– need 4 bits to specify the correct byte

![Example [contd…] • Index: (~index into an “array of blocks”) – need to specify Example [contd…] • Index: (~index into an “array of blocks”) – need to specify](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-25.jpg)

Example [contd…]
• Index: (~index into an “array of blocks”)
– need to specify the correct row in the cache
– cache contains 16 KB = 2^14 bytes
– block contains 2^4 bytes (4 words)
– # rows/cache = # blocks/cache (since there’s one block/row) = (bytes/cache) / (bytes/row) = (2^14 bytes/cache) / (2^4 bytes/row) = 2^10 rows/cache
– need 10 bits to specify this many rows

![Example [contd…] • Tag: use remaining bits as tag – tag length = mem Example [contd…] • Tag: use remaining bits as tag – tag length = mem](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-26.jpg)

Example [contd…]
• Tag: use remaining bits as tag
– tag length = mem addr length - offset - index = 32 - 4 - 10 bits = 18 bits
– so the tag is the leftmost 18 bits of the memory address
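The field-size calculation worked through in this example can be checked with a short sketch. It assumes the cache and block sizes are powers of two and that a word is 4 bytes, as the example does; the function name is illustrative.

```python
def field_widths(cache_bytes: int, block_bytes: int, addr_bits: int = 32):
    """Return (tag_bits, index_bits, offset_bits) for a direct-mapped cache.

    Assumes cache_bytes and block_bytes are powers of two.
    """
    offset_bits = block_bytes.bit_length() - 1   # log2(bytes per block)
    rows = cache_bytes // block_bytes            # one block per row
    index_bits = rows.bit_length() - 1           # log2(rows per cache)
    tag_bits = addr_bits - index_bits - offset_bits
    return tag_bits, index_bits, offset_bits

# 16 KB of data, 4-word (16-byte) blocks, 32-bit addresses.
print(field_widths(16 * 1024, 4 * 4))
```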

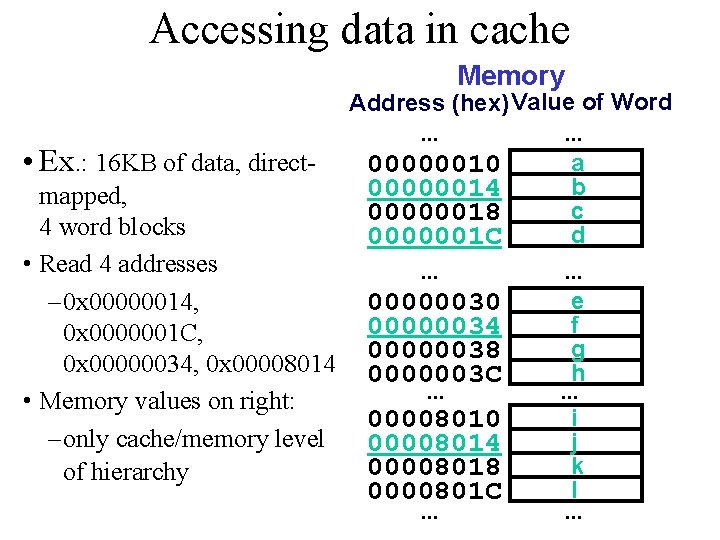

Accessing data in cache
• Ex.: 16 KB of data, direct-mapped, 4 word blocks
• Read 4 addresses: 0x00000014, 0x0000001C, 0x00000034, 0x00008014
• Memory values shown below: only the cache/memory level of the hierarchy

| Memory Address (hex) | Value of Word |
|---|---|
| ... | ... |
| 00000010 | a |
| 00000014 | b |
| 00000018 | c |
| 0000001C | d |
| ... | ... |
| 00000030 | e |
| 00000034 | f |
| 00000038 | g |
| 0000003C | h |
| ... | ... |
| 00008010 | i |
| 00008014 | j |
| 00008018 | k |
| 0000801C | l |
| ... | ... |

![Accessing data in cache [contd…] • 4 Addresses: – 0 x 00000014, 0 x Accessing data in cache [contd…] • 4 Addresses: – 0 x 00000014, 0 x](http://slidetodoc.com/presentation_image_h/25cd665b124bef85813d93d6f53b8179/image-28.jpg)

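Accessing data in cache [contd…]
• 4 Addresses: 0x00000014, 0x0000001C, 0x00000034, 0x00008014
• 4 Addresses divided (for convenience) into Tag, Index, Byte Offset fields

| Address | Tag | Index | Offset |
|---|---|---|---|
| 0x00000014 | 000000000000000000 | 0000000001 | 0100 |
| 0x0000001C | 000000000000000000 | 0000000001 | 1100 |
| 0x00000034 | 000000000000000000 | 0000000011 | 0100 |
| 0x00008014 | 000000000000000010 | 0000000001 | 0100 |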
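The division of these four addresses into fields can be reproduced by slicing the 32-bit binary string at the 18/10/4 boundaries derived in the earlier example. This is only an illustrative sketch; `fields_as_bits` is a made-up helper name.

```python
TAG_BITS, INDEX_BITS, OFFSET_BITS = 18, 10, 4  # widths from the 16 KB example

def fields_as_bits(addr: int):
    """Return the (tag, index, offset) bit strings of a 32-bit address."""
    bits = format(addr, "032b")  # zero-padded 32-character binary string
    tag = bits[:TAG_BITS]
    index = bits[TAG_BITS:TAG_BITS + INDEX_BITS]
    offset = bits[TAG_BITS + INDEX_BITS:]
    return tag, index, offset

for addr in (0x00000014, 0x0000001C, 0x00000034, 0x00008014):
    print(f"0x{addr:08X}", *fields_as_bits(addr))
```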

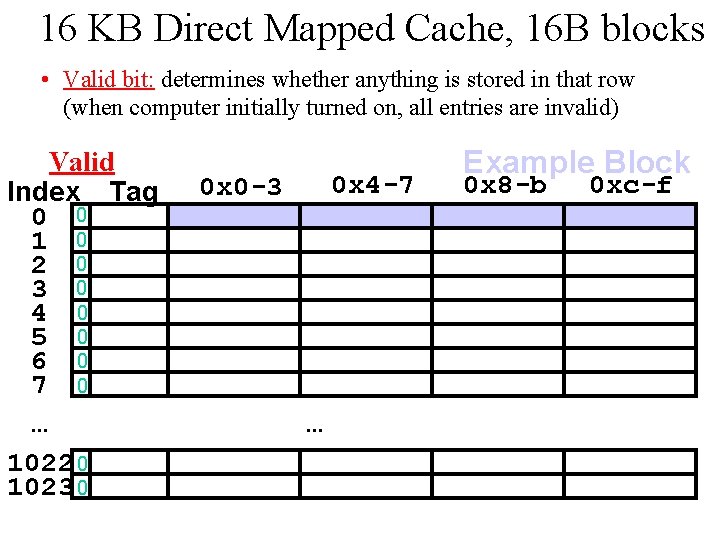

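16 KB Direct Mapped Cache, 16 B blocks
• Valid bit: determines whether anything is stored in that row (when the computer is initially turned on, all entries are invalid)
• Each row holds one block (example block: the four words at byte offsets 0x0-3, 0x4-7, 0x8-b, 0xc-f)

| Index | Valid | Tag | 0x0-3 | 0x4-7 | 0x8-b | 0xc-f |
|---|---|---|---|---|---|---|
| 0 | 0 | | | | | |
| 1 | 0 | | | | | |
| 2 | 0 | | | | | |
| 3 | 0 | | | | | |
| ... | ... | | | | | |
| 1022 | 0 | | | | | |
| 1023 | 0 | | | | | |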

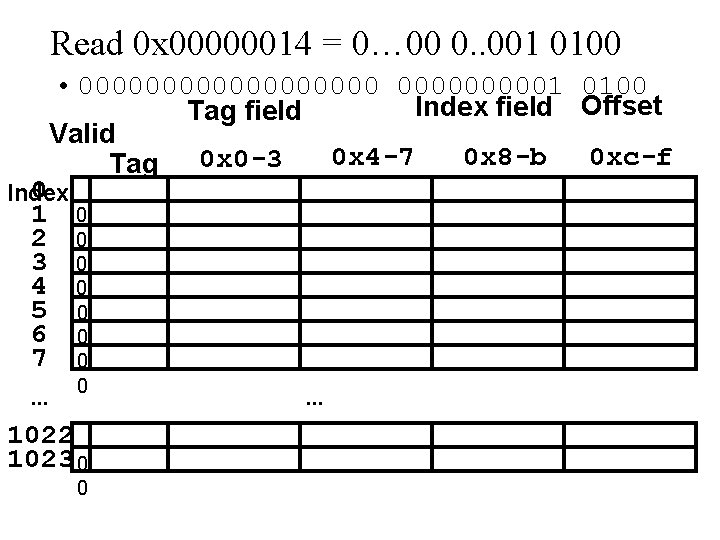

Read 0x00000014 = 0…00 0..001 0100
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 0100
• Cache state: all rows (0 to 1023) invalid

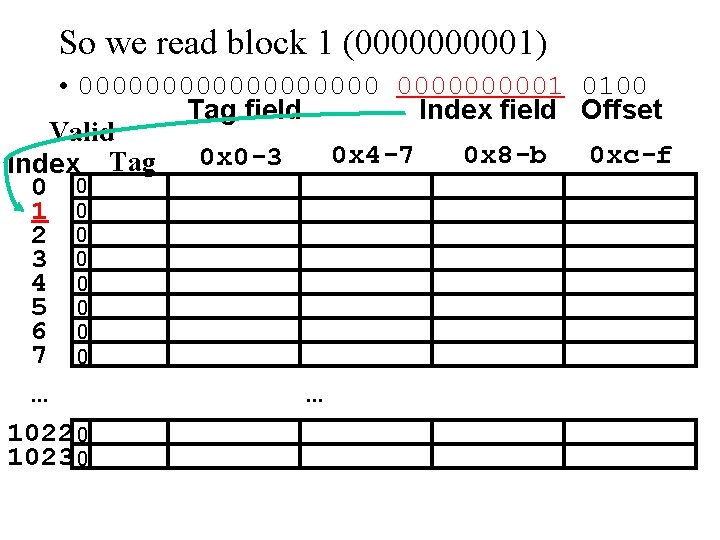

So we read block 1 (0000000001)
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 0100
• Cache state: all rows (0 to 1023) invalid

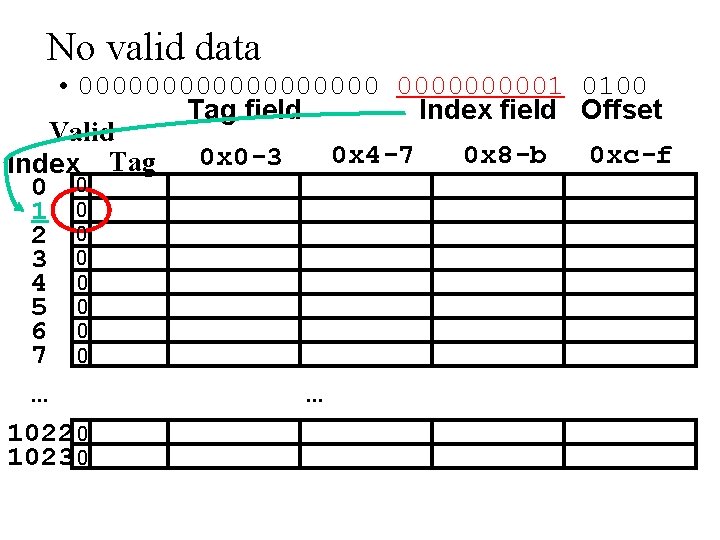

No valid data
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 0100
• Cache state: all rows (0 to 1023) invalid, including row 1

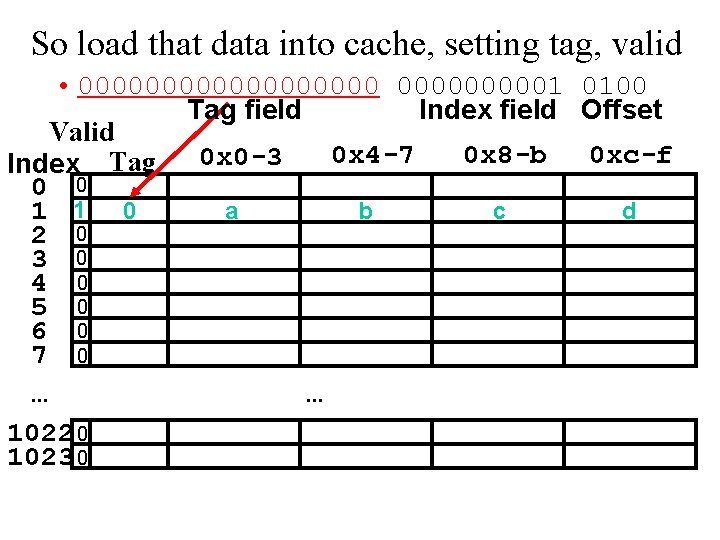

So load that data into cache, setting tag, valid
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d (at 0x0-3, 0x4-7, 0x8-b, 0xc-f); all other rows invalid

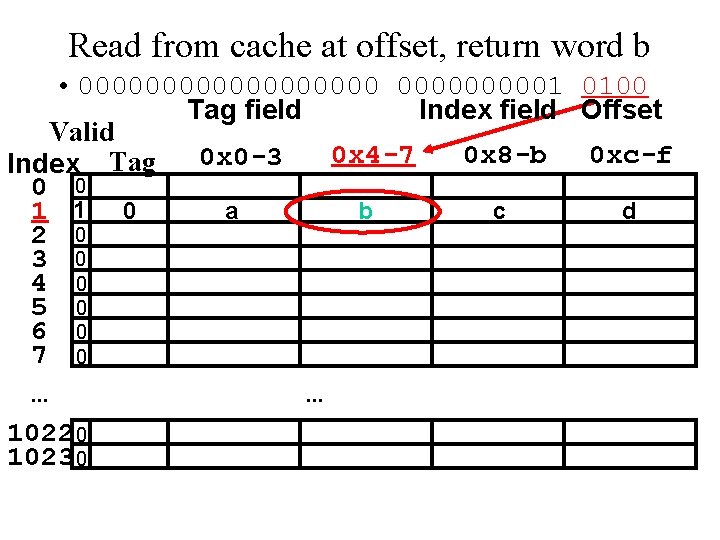

Read from cache at offset, return word b
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; offset 0100 selects bytes 0x4-7, which hold word b; all other rows invalid

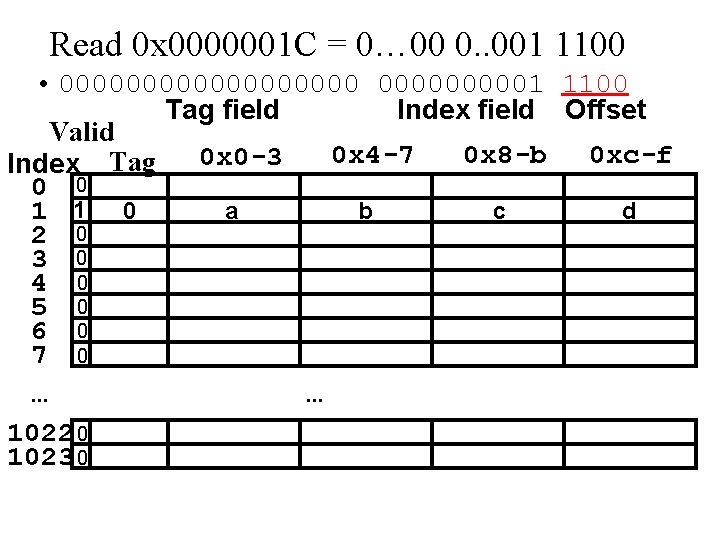

Read 0x0000001C = 0…00 0..001 1100
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 1100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; all other rows invalid

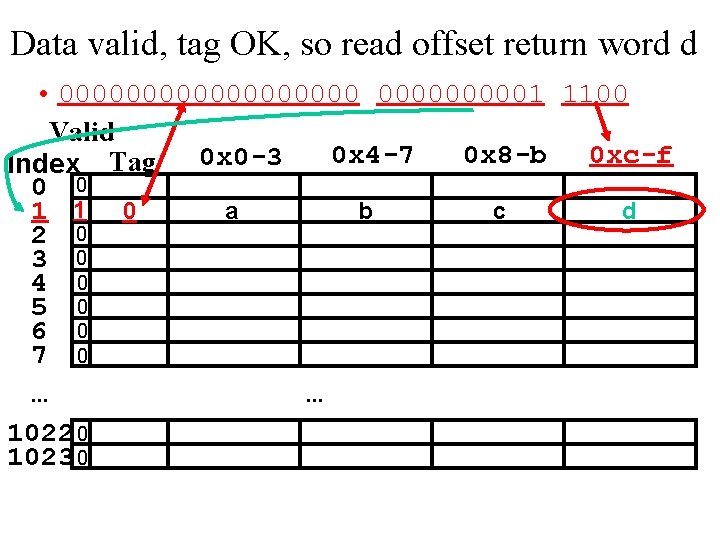

Data valid, tag OK, so read offset, return word d
• Address fields: Tag 000000000000000000, Index 0000000001, Offset 1100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; offset 1100 selects bytes 0xc-f, which hold word d; all other rows invalid

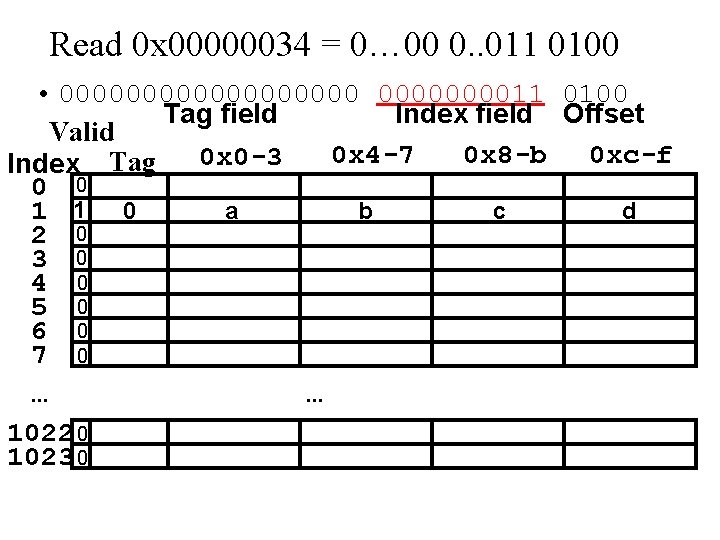

Read 0x00000034 = 0…00 0..011 0100
• Address fields: Tag 000000000000000000, Index 0000000011, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; all other rows invalid

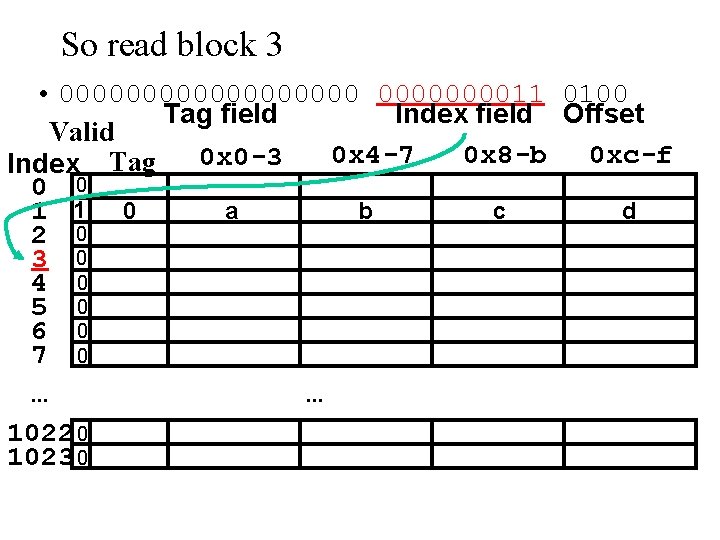

So read block 3
• Address fields: Tag 000000000000000000, Index 0000000011, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; all other rows invalid

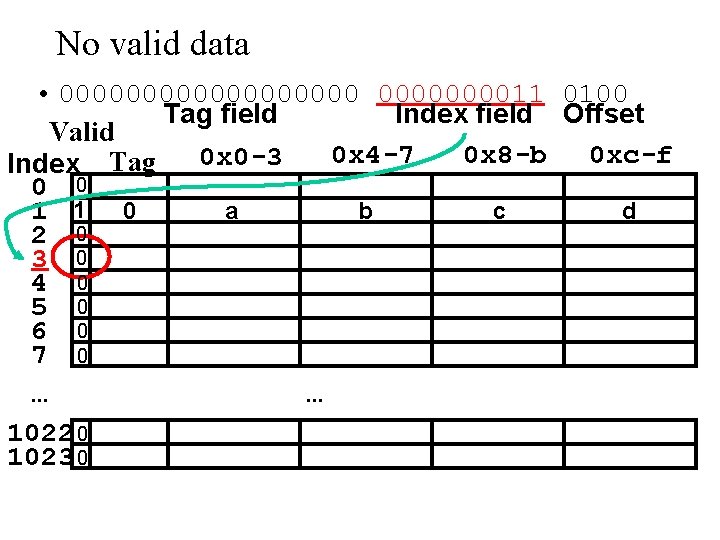

No valid data
• Address fields: Tag 000000000000000000, Index 0000000011, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; row 3 and all other rows invalid

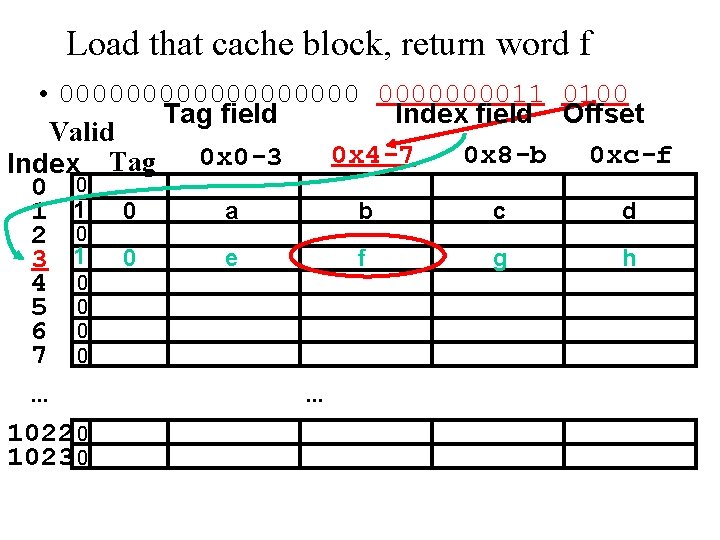

Load that cache block, return word f
• Address fields: Tag 000000000000000000, Index 0000000011, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid

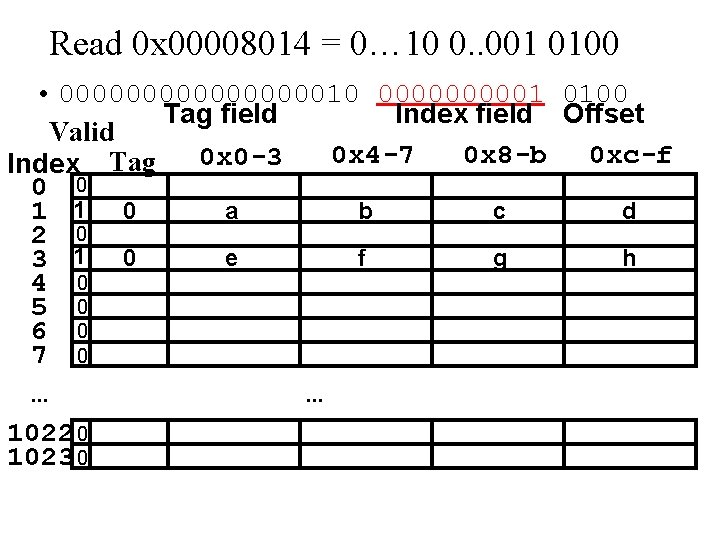

Read 0x00008014 = 0…10 0..001 0100
• Address fields: Tag 000000000000000010, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid

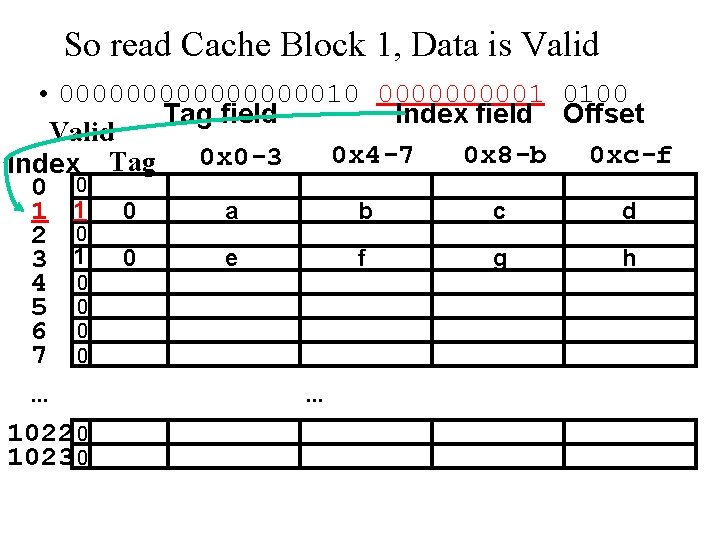

So read Cache Block 1, Data is Valid
• Address fields: Tag 000000000000000010, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid

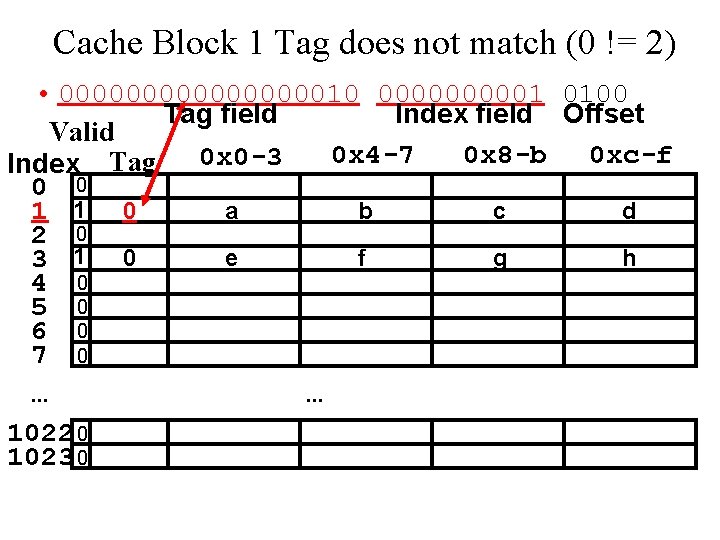

Cache Block 1 Tag does not match (0 != 2)
• Address fields: Tag 000000000000000010, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 0, data a b c d; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid

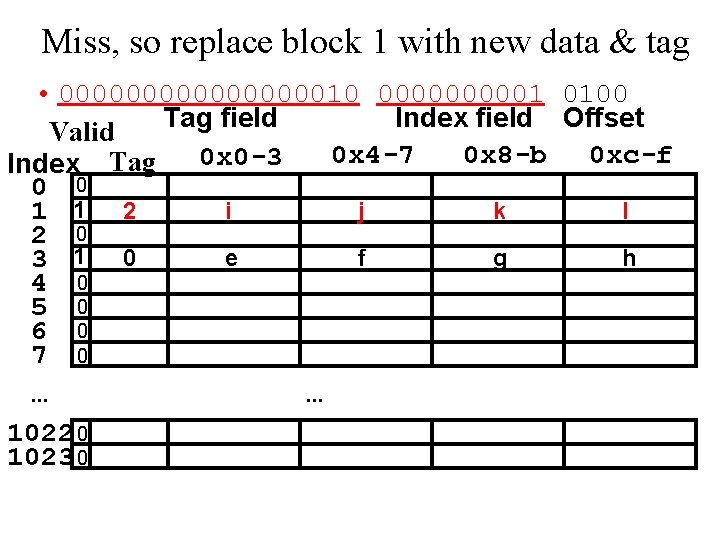

Miss, so replace block 1 with new data & tag
• Address fields: Tag 000000000000000010, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 2, data i j k l; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid

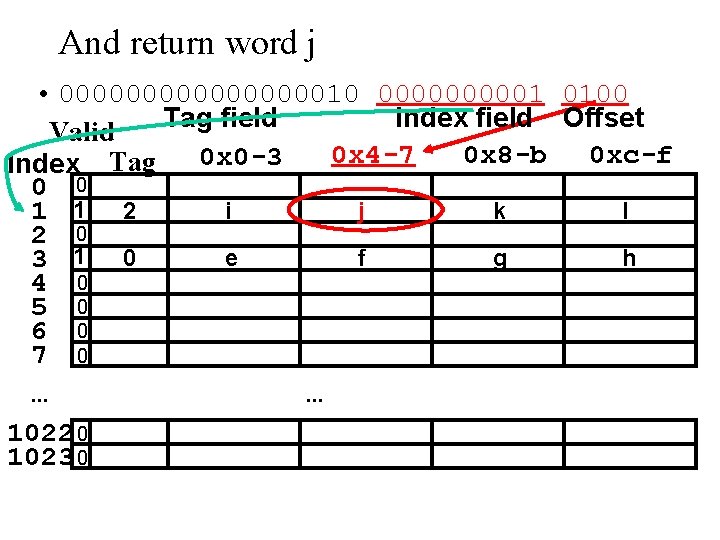

And return word j
• Address fields: Tag 000000000000000010, Index 0000000001, Offset 0100
• Cache state: row 1 has Valid 1, Tag 2, data i j k l; offset 0100 selects bytes 0x4-7, which hold word j; row 3 has Valid 1, Tag 0, data e f g h; all other rows invalid
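The whole walkthrough (four reads against an initially empty cache) can be replayed with a small simulation. This is a sketch under the example's assumptions (16 KB direct-mapped, 16-byte blocks, 4-byte words); the class name, the `MEMORY` dictionary and the dictionary-based row layout are illustrative choices, not something the slides prescribe.

```python
OFFSET_BITS, INDEX_BITS = 4, 10   # 16-byte blocks, 1024 rows
WORDS_PER_BLOCK = 4

# Word values at the memory addresses listed in the example.
MEMORY = {0x10: "a", 0x14: "b", 0x18: "c", 0x1C: "d",
          0x30: "e", 0x34: "f", 0x38: "g", 0x3C: "h",
          0x8010: "i", 0x8014: "j", 0x8018: "k", 0x801C: "l"}

class DirectMappedCache:
    def __init__(self):
        # At power-on every row is invalid.
        self.rows = [{"valid": False, "tag": None, "words": None}
                     for _ in range(1 << INDEX_BITS)]

    def read(self, addr: int):
        """Return (hit, word) for a word read at addr."""
        offset = addr & ((1 << OFFSET_BITS) - 1)
        index = (addr >> OFFSET_BITS) & ((1 << INDEX_BITS) - 1)
        tag = addr >> (OFFSET_BITS + INDEX_BITS)
        row = self.rows[index]
        hit = row["valid"] and row["tag"] == tag
        if not hit:
            # Miss: load the whole block, then set tag and valid bit.
            base = addr - offset
            row["words"] = [MEMORY.get(base + 4 * i)
                            for i in range(WORDS_PER_BLOCK)]
            row["tag"], row["valid"] = tag, True
        return hit, row["words"][offset // 4]

cache = DirectMappedCache()
results = [cache.read(a) for a in (0x14, 0x1C, 0x34, 0x8014)]
print(results)
```

The second read hits because it falls in the block loaded by the first; the fourth read maps to the same row as the first but carries a different tag, so it evicts that block.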

Things to Remember
• We would like to have the capacity of disk at the speed of the processor: unfortunately this is not feasible.
• So we create a memory hierarchy:
– each level closer to the processor contains the “most used” data from the next level farther away
• Exploit temporal and spatial locality
