Kalman/Particle Filters Tutorial

Haris Baltzakis, November 2004

Problem Statement

- Examples
  - A mobile robot moving within its environment
  - A vision-based system tracking cars on a highway
- Common characteristics
  - A state that changes dynamically
  - The state cannot be observed directly
  - Uncertainty due to noise in the state, in the way the state changes, and in the observations

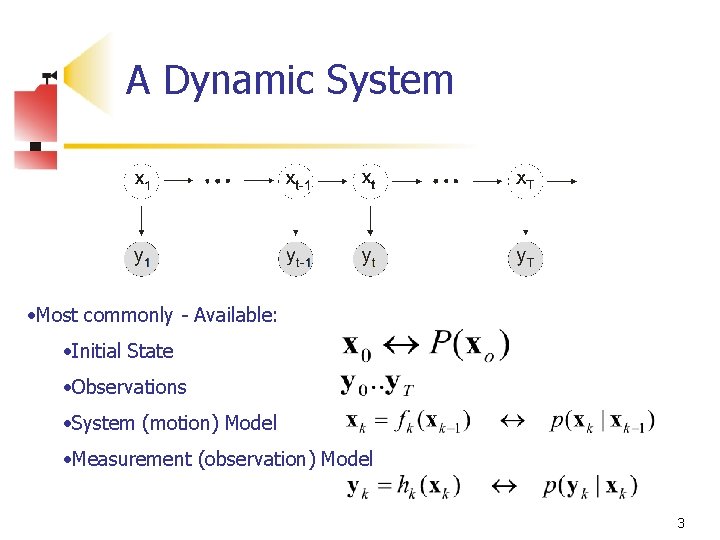

A Dynamic System

- Most commonly available:
  - Initial state
  - Observations
  - System (motion) model
  - Measurement (observation) model

Filters

- Compute the hidden state from observations
- Filters:
  - Terminology from signal processing
  - Can be considered as a data processing algorithm (computer algorithms)
  - Classification: discrete time vs. continuous time
- Sensor fusion
- Robustness to noise
- Wanted: each filter to be optimal in some sense.

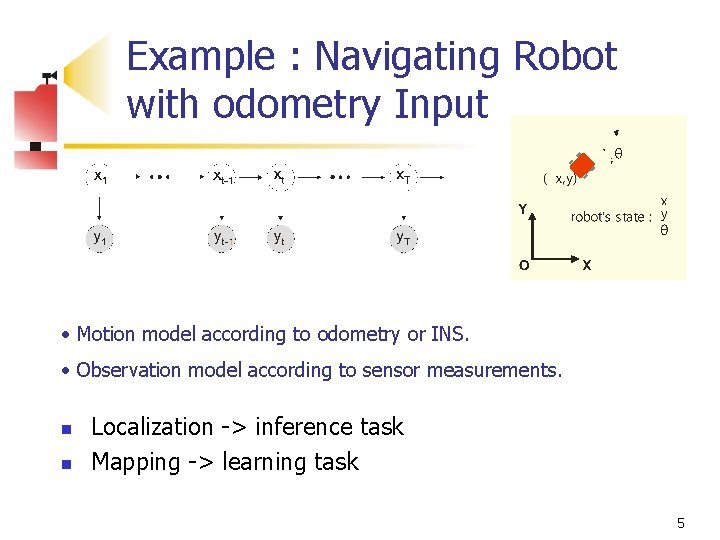

Example: Navigating Robot with Odometry Input

- Motion model according to odometry or INS.
- Observation model according to sensor measurements.
- Localization -> inference task
- Mapping -> learning task

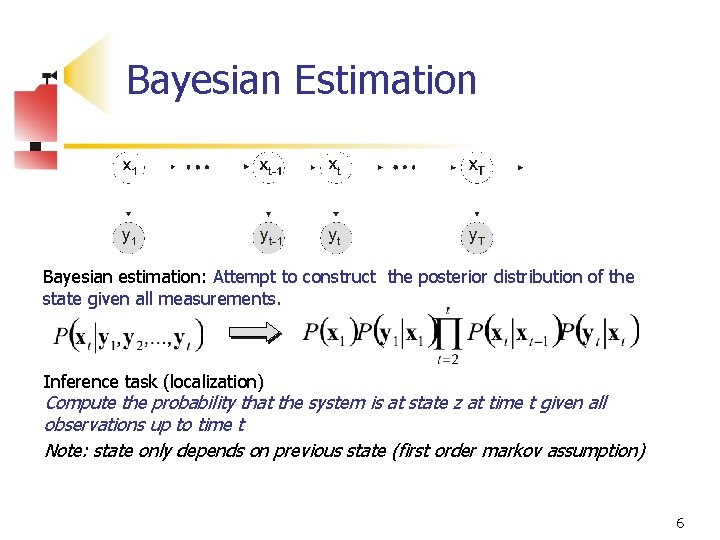

Bayesian Estimation

- Bayesian estimation: attempt to construct the posterior distribution of the state given all measurements.
- Inference task (localization): compute the probability that the system is at state z at time t, given all observations up to time t.
- Note: the state depends only on the previous state (first-order Markov assumption).
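Under the first-order Markov assumption, this posterior can be computed recursively instead of from scratch at every time step. The sketch below writes the recursion in LaTeX. The observation symbol x and the shorthand x_{1:t} for "all observations up to time t" are assumptions, since the slide text names only the state z. It also assumes that each observation depends only on the current state.

```latex
% Prediction: propagate the previous posterior through the motion model
p(z_t \mid x_{1:t-1}) = \int p(z_t \mid z_{t-1}) \, p(z_{t-1} \mid x_{1:t-1}) \, dz_{t-1}

% Update: correct the prediction with the newest observation
p(z_t \mid x_{1:t}) = \frac{p(x_t \mid z_t) \, p(z_t \mid x_{1:t-1})}{p(x_t \mid x_{1:t-1})}
```

The denominator does not depend on z_t, so in practice it is often obtained by normalizing the numerator.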

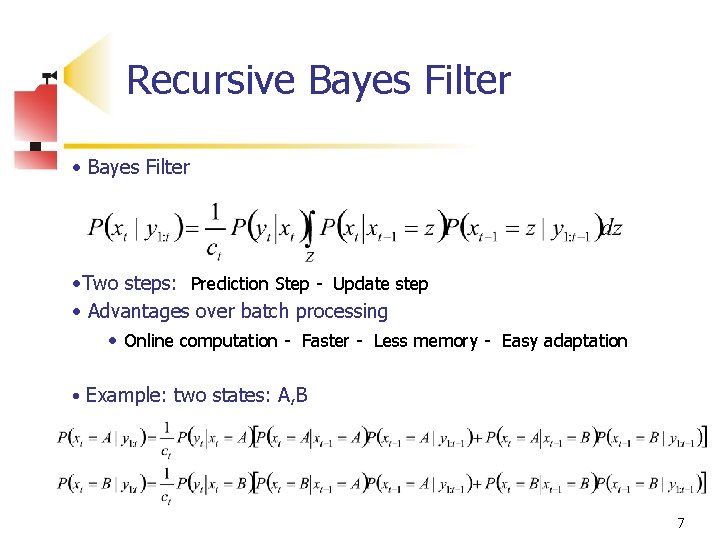

Recursive Bayes Filter

- Bayes filter
- Two steps: prediction step, update step
- Advantages over batch processing:
  - Online computation
  - Faster
  - Less memory
  - Easy adaptation
- Example: two states, A and B
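The two steps can be sketched for a system with two states, A and B. This is a minimal illustration, not the example from the slide image: the transition and observation probabilities, the observation labels "a" and "b", and all function names are made up for the sketch.

```python
# Discrete Bayes filter over two states, "A" and "B".
# All probabilities below are made-up illustrative numbers.

STATES = ("A", "B")

# Hypothetical motion model: TRANSITION[prev][new] = P(new state | previous state)
TRANSITION = {
    "A": {"A": 0.8, "B": 0.2},
    "B": {"A": 0.3, "B": 0.7},
}

# Hypothetical observation model: LIKELIHOOD[state][obs] = P(observation | state)
LIKELIHOOD = {
    "A": {"a": 0.9, "b": 0.1},
    "B": {"a": 0.2, "b": 0.8},
}


def predict(belief):
    """Prediction step: push the belief through the motion model."""
    return {
        new: sum(TRANSITION[prev][new] * belief[prev] for prev in STATES)
        for new in STATES
    }


def update(belief, observation):
    """Update step: weight by the observation likelihood and normalize."""
    unnormalized = {s: LIKELIHOOD[s][observation] * belief[s] for s in STATES}
    total = sum(unnormalized.values())
    return {s: p / total for s, p in unnormalized.items()}


def bayes_filter(belief, observations):
    """Process observations one at a time, keeping only the current belief."""
    for obs in observations:
        belief = update(predict(belief), obs)
    return belief


if __name__ == "__main__":
    prior = {"A": 0.5, "B": 0.5}
    print(bayes_filter(prior, ["a", "a", "b"]))
```

Only the current belief is stored between observations, which is where the memory and speed advantages over batch processing come from.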

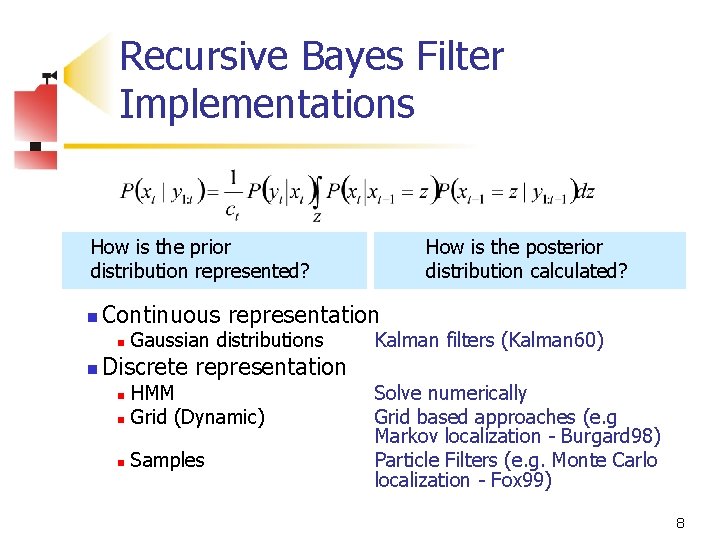

Recursive Bayes Filter Implementations

- How is the prior distribution represented?
  - Continuous representation: Gaussian distributions
  - Discrete representation: HMM, grid (dynamic), samples
- How is the posterior distribution calculated?
  - Gaussian distributions: Kalman filters (Kalman 60)
  - HMM: solve numerically
  - Grid: grid-based approaches (e.g. Markov localization, Burgard 98)
  - Samples: particle filters (e.g. Monte Carlo localization, Fox 99)

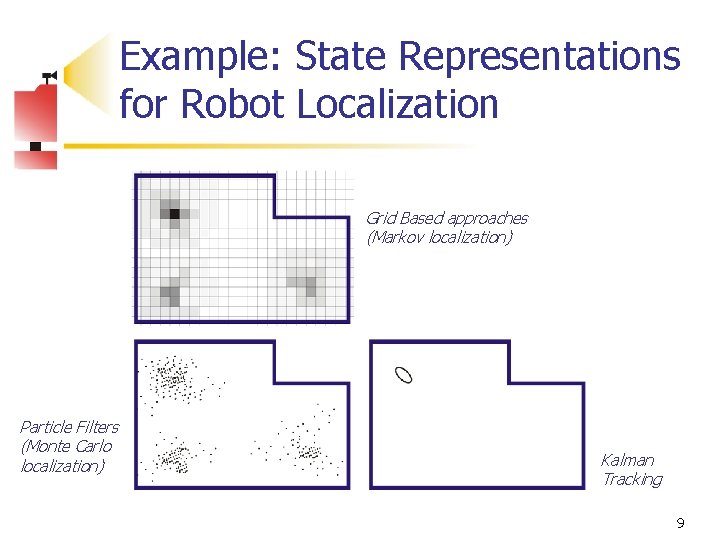

Example: State Representations for Robot Localization

- Grid-based approaches (Markov localization)
- Particle filters (Monte Carlo localization)
- Kalman tracking

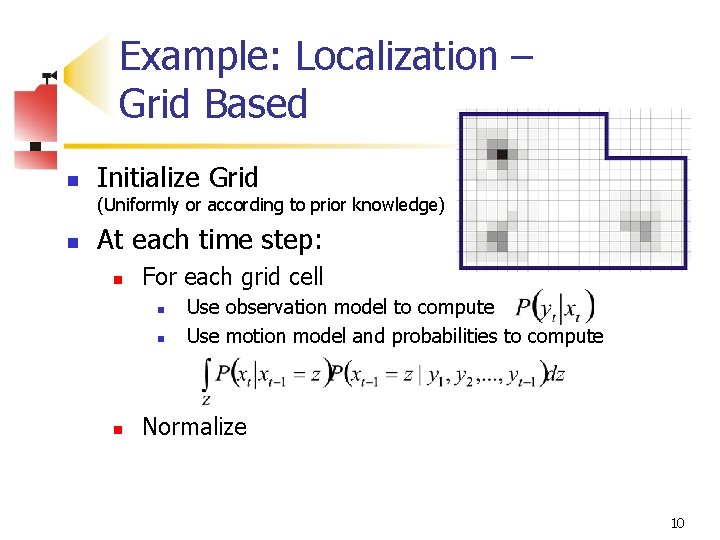

Example: Localization – Grid Based

- Initialize the grid (uniformly or according to prior knowledge)
- At each time step:
  - For each grid cell:
    - Use the observation model to compute the likelihood of the current observation
    - Use the motion model and the previous probabilities to compute the predicted probability of the cell
  - Normalize
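The grid-based procedure can be sketched on a one-dimensional cyclic corridor. Everything specific here is an assumption made for illustration: the map `WORLD`, the sensor accuracy `P_HIT`, the displacement probabilities `P_MOVE`, and the function names. The sketch applies the first observation to the uniform grid and then alternates motion and observation updates.

```python
# 1D grid (histogram) localization on a cyclic corridor.
# The map, motion noise and sensor accuracy are illustrative assumptions.

WORLD = ["door", "wall", "wall", "door", "wall"]  # hypothetical map, one entry per cell
P_HIT = 0.8  # assumed P(sensor reports the true cell type)
P_MOVE = {0: 0.1, 1: 0.8, 2: 0.1}  # assumed P(actual displacement | command "move 1 cell")


def initialize(n_cells):
    """Uniform belief: the initial pose is unknown."""
    return [1.0 / n_cells] * n_cells


def motion_update(belief):
    """For each cell, sum the probability arriving from every possible source cell."""
    n = len(belief)
    return [
        sum(p * belief[(cell - step) % n] for step, p in P_MOVE.items())
        for cell in range(n)
    ]


def observation_update(belief, reading):
    """Multiply each cell by the observation likelihood, then normalize."""
    weighted = [
        b * (P_HIT if WORLD[cell] == reading else 1.0 - P_HIT)
        for cell, b in enumerate(belief)
    ]
    total = sum(weighted)
    return [w / total for w in weighted]


def localize(readings):
    """Run the filter over a sequence of readings, one motion command between each."""
    belief = observation_update(initialize(len(WORLD)), readings[0])
    for reading in readings[1:]:
        belief = observation_update(motion_update(belief), reading)
    return belief


if __name__ == "__main__":
    belief = localize(["door", "wall", "wall"])
    print([round(b, 3) for b in belief])
```

The cost of each step grows with the number of cells (and with the number of possible displacements), which is the scaling issue that grid-based methods face on larger maps.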

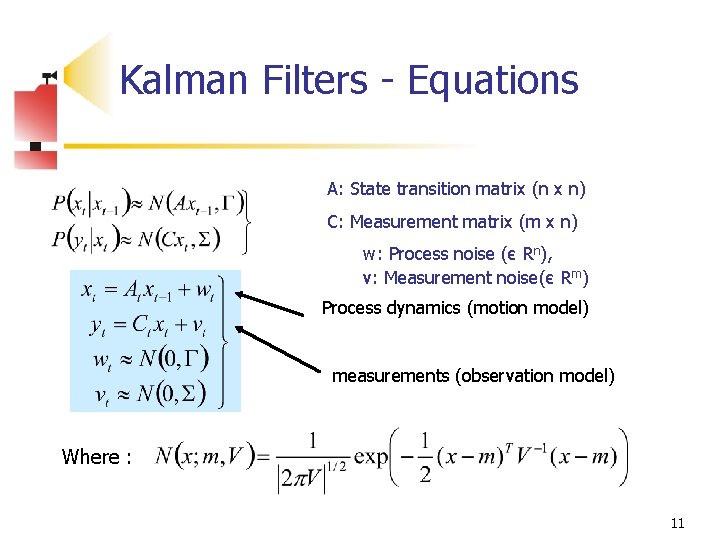

Kalman Filters - Equations

- Process dynamics (motion model)
- Measurements (observation model)
- Where:
  - A: state transition matrix (n x n)
  - C: measurement matrix (m x n)
  - w: process noise (∈ R^n)
  - v: measurement noise (∈ R^m)
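With these definitions, the standard linear state-space model takes the form sketched below. The symbols z for the state and x for the measurement, and the covariance names Q and R, are assumptions; the slide's own equations are in the image and may use different notation.

```latex
% Process dynamics (motion model)
z_{t+1} = A z_t + w_t, \qquad w_t \sim \mathcal{N}(0, Q)

% Measurements (observation model)
x_t = C z_t + v_t, \qquad v_t \sim \mathcal{N}(0, R)
```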

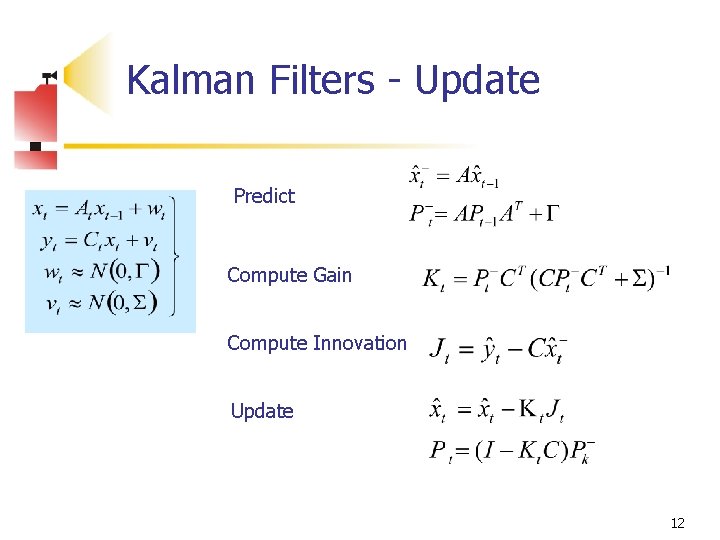

Kalman Filters - Update

- Predict
- Compute gain
- Compute innovation
- Update
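The four steps can be sketched for a scalar state, where the matrices A and C reduce to plain numbers `a` and `c`. This is a simplified illustration rather than the slide's matrix form; the noise variances `q` and `r`, their default values, and the function name are assumptions.

```python
# Scalar Kalman filter: one predict / gain / innovation / update cycle.
# In the scalar case the matrices A and C reduce to plain numbers a and c.


def kalman_step(mean, var, measurement, a=1.0, c=1.0, q=0.01, r=0.25):
    """Return the updated (mean, variance) after one measurement.

    a: state transition, c: measurement coefficient,
    q: process noise variance, r: measurement noise variance
    (q and r are assumed names and values, not taken from the slides).
    """
    # Predict: propagate the estimate through the motion model; variance grows by q.
    mean_pred = a * mean
    var_pred = a * var * a + q

    # Compute gain: how much to trust the measurement relative to the prediction.
    gain = var_pred * c / (c * var_pred * c + r)

    # Compute innovation: measured value minus the predicted measurement.
    innovation = measurement - c * mean_pred

    # Update: correct the prediction; the variance shrinks.
    mean_new = mean_pred + gain * innovation
    var_new = (1.0 - gain * c) * var_pred
    return mean_new, var_new


if __name__ == "__main__":
    mean, var = 0.0, 1.0  # vague initial guess
    for reading in [1.1, 0.9, 1.05, 0.98]:  # made-up noisy readings of a value near 1.0
        mean, var = kalman_step(mean, var, reading)
        print(round(mean, 3), round(var, 4))
```

When the predicted variance is large compared with `r`, the gain approaches `1 / c` and the estimate follows the measurement; when it is small, the gain approaches zero and the prediction dominates.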

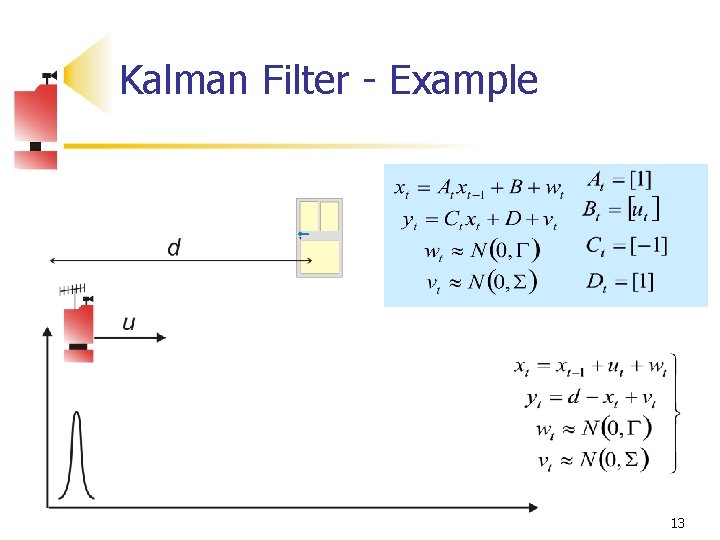

Kalman Filter - Example

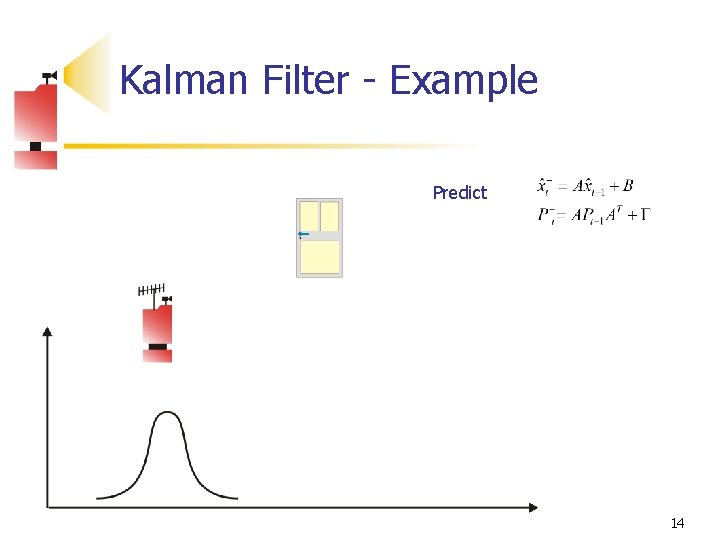

Kalman Filter - Example

- Predict

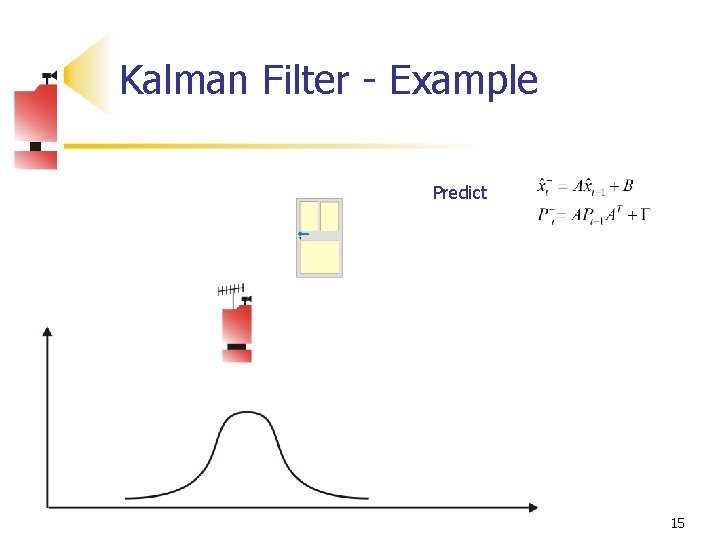

Kalman Filter - Example

- Predict

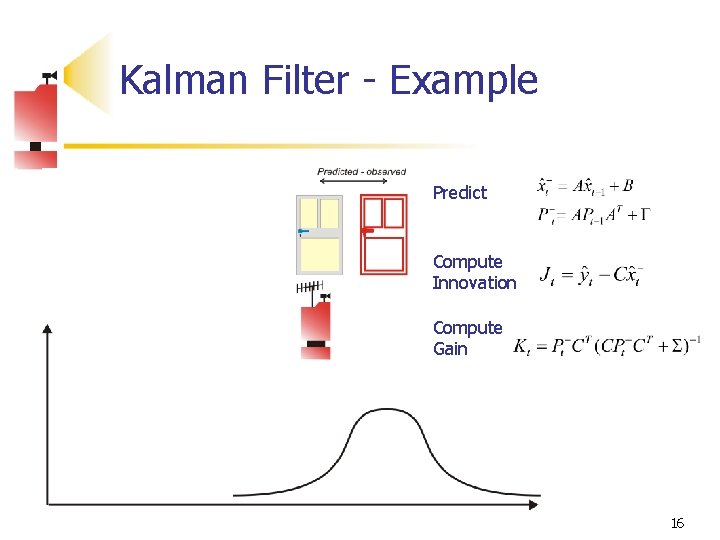

Kalman Filter - Example

- Predict
- Compute innovation
- Compute gain

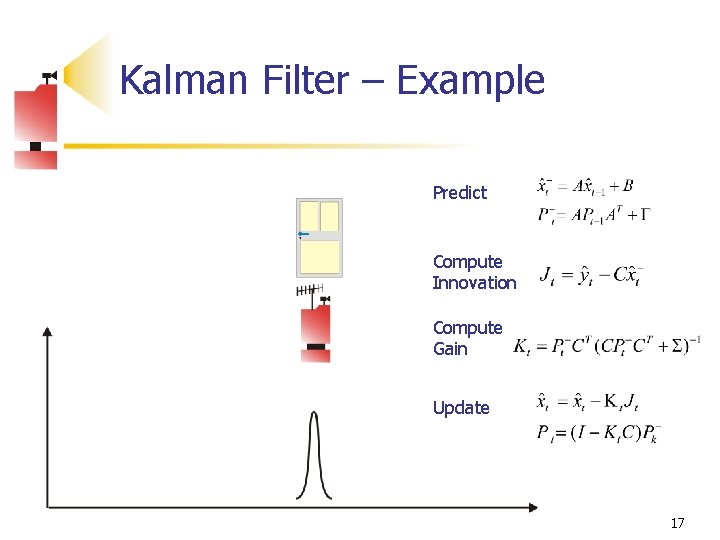

Kalman Filter – Example

- Predict
- Compute innovation
- Compute gain
- Update

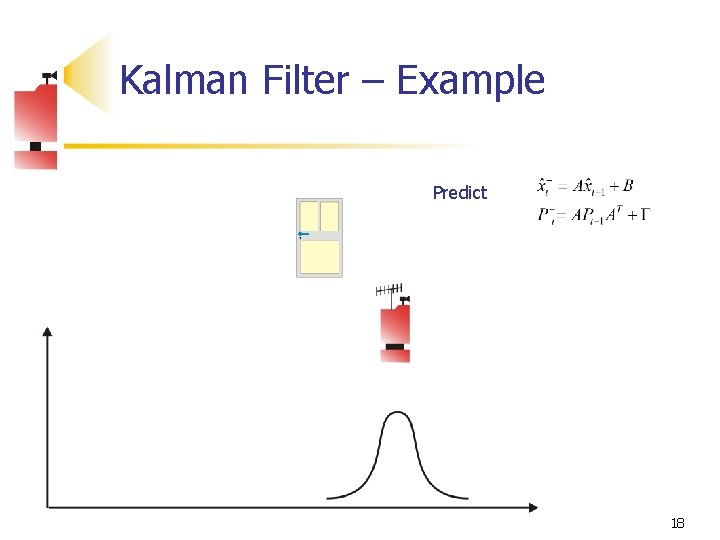

Kalman Filter – Example

- Predict

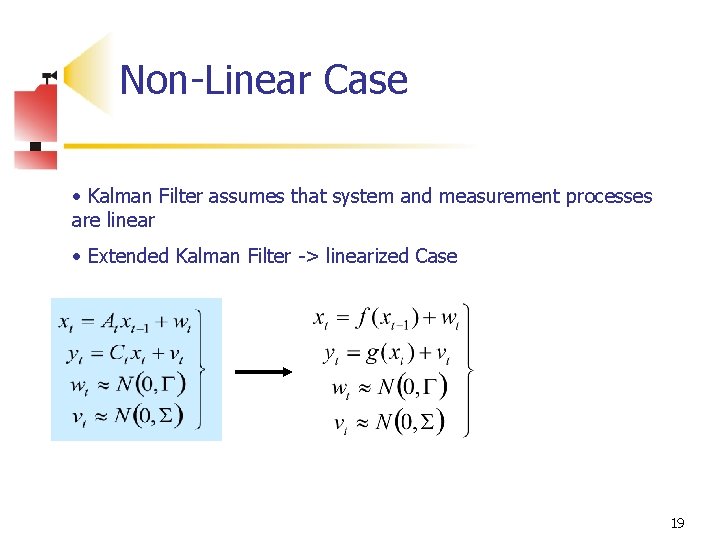

Non-Linear Case

- The Kalman filter assumes that the system and measurement processes are linear
- Extended Kalman Filter -> linearized case

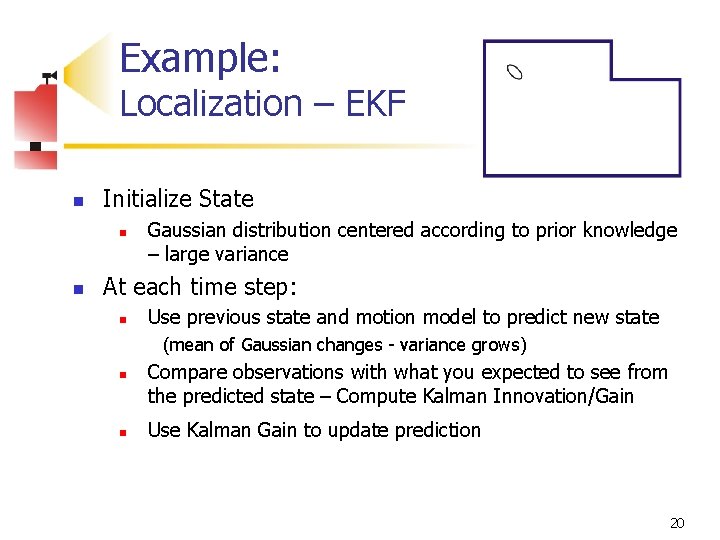

Example: Localization – EKF

- Initialize the state
  - Gaussian distribution centered according to prior knowledge, with large variance
- At each time step:
  - Use the previous state and the motion model to predict the new state (the mean of the Gaussian changes and its variance grows)
  - Compare the observations with what you expected to see from the predicted state: compute the Kalman innovation and gain
  - Use the Kalman gain to update the prediction

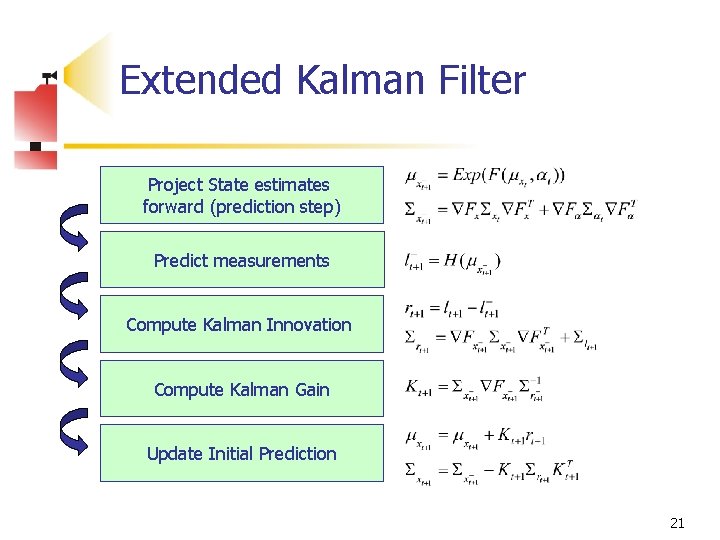

Extended Kalman Filter

- Project state estimates forward (prediction step)
- Predict measurements
- Compute Kalman innovation
- Compute Kalman gain
- Update initial prediction
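These steps can be sketched for a scalar state with a nonlinear measurement. The scenario is hypothetical: a robot moves along a straight track and measures its range to a beacon placed `BEACON_OFFSET` metres to the side. The models `f` and `h`, the noise variances `q` and `r`, and all names are assumptions chosen for illustration; here only the measurement model is nonlinear.

```python
import math

BEACON_OFFSET = 2.0  # hypothetical: the beacon sits 2 m to the side of the track


def f(z, u):
    """Assumed motion model: move u metres along the track."""
    return z + u


def h(z):
    """Assumed measurement model: range from position z to the beacon (nonlinear)."""
    return math.hypot(z, BEACON_OFFSET)


def ekf_step(mean, var, u, measurement, q=0.05, r=0.1):
    """One EKF cycle for a scalar state; returns the updated (mean, variance)."""
    # Project the state estimate forward (prediction step).
    F = 1.0  # df/dz for the motion model above
    mean_pred = f(mean, u)
    var_pred = F * var * F + q

    # Predict the measurement and linearize h around the predicted state.
    predicted_measurement = h(mean_pred)
    H = mean_pred / predicted_measurement  # dh/dz evaluated at mean_pred

    # Compute the Kalman innovation and the Kalman gain.
    innovation = measurement - predicted_measurement
    gain = var_pred * H / (H * var_pred * H + r)

    # Update the initial prediction.
    mean_new = mean_pred + gain * innovation
    var_new = (1.0 - gain * H) * var_pred
    return mean_new, var_new


if __name__ == "__main__":
    true_pos, mean, var = 1.0, 0.5, 1.0
    for _ in range(5):
        true_pos = f(true_pos, 1.0)
        mean, var = ekf_step(mean, var, 1.0, h(true_pos))  # noise-free readings for brevity
        print(round(true_pos, 2), round(mean, 3), round(var, 4))
```

The only difference from the linear filter is that `F` and `H` are derivatives evaluated at the current estimate, so the result is only as good as the linearization near that estimate.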

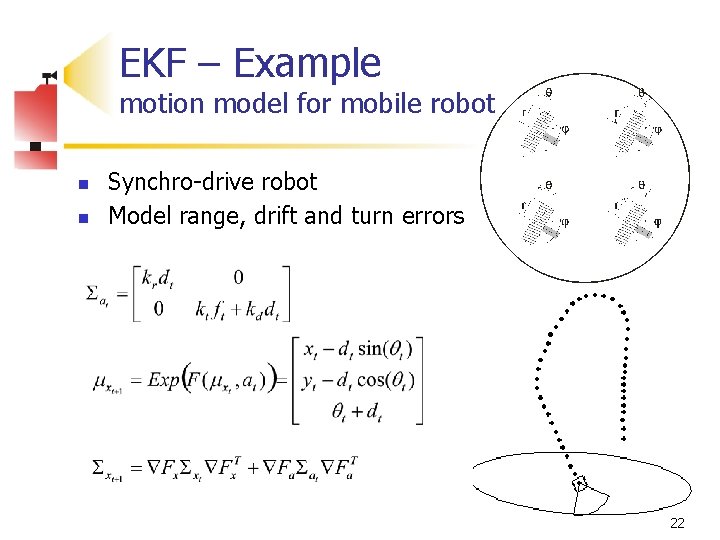

EKF – Example motion model for mobile robot

- Synchro-drive robot
- Model range, drift and turn errors

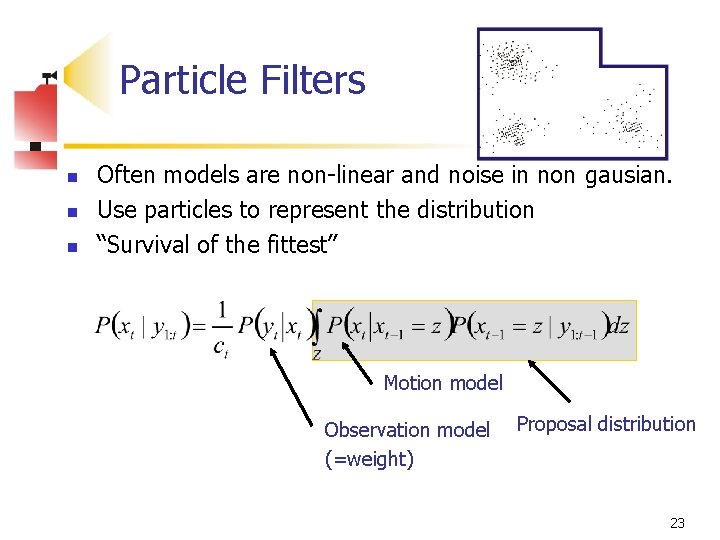

Particle Filters

- Often models are non-linear and noise is non-Gaussian.
- Use particles to represent the distribution
- "Survival of the fittest"
- Motion model
- Observation model (= weight)
- Proposal distribution

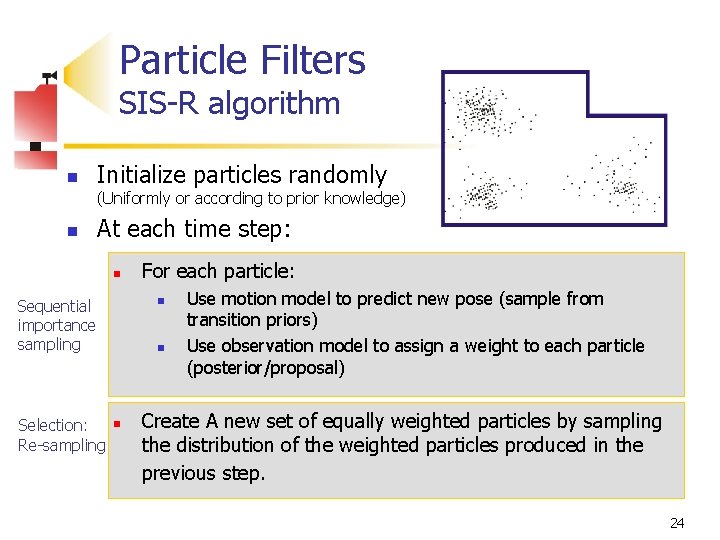

Particle Filters: SIS-R Algorithm

- Initialize particles randomly (uniformly or according to prior knowledge)
- At each time step:
  - Sequential importance sampling. For each particle:
    - Use the motion model to predict the new pose (sample from the transition prior)
    - Use the observation model to assign a weight to each particle (posterior/proposal)
  - Selection (re-sampling):
    - Create a new set of equally weighted particles by sampling the distribution of the weighted particles produced in the previous step.
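One iteration of this algorithm can be sketched for a robot on a line that measures its own position directly. The Gaussian motion and measurement noise, their standard deviations, and the function names are assumptions made for the sketch. Re-sampling here uses simple multinomial sampling via `random.choices`, which is one of several possible selection schemes.

```python
import math
import random


def gaussian_pdf(x, mean, std):
    """Density of a normal distribution, used as the assumed observation model."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))


def sir_step(particles, control, measurement, rng, motion_std=0.2, meas_std=0.5):
    """One SIS-R iteration; returns a new, equally weighted particle set."""
    # Sequential importance sampling: sample each particle from the transition prior ...
    moved = [p + control + rng.gauss(0.0, motion_std) for p in particles]
    # ... and weight it by the observation likelihood.
    weights = [gaussian_pdf(measurement, p, meas_std) for p in moved]
    total = sum(weights)
    if total == 0.0:
        # All weights underflowed to zero; fall back to uniform weights.
        weights = [1.0 / len(moved)] * len(moved)
    else:
        weights = [w / total for w in weights]
    # Selection: draw particles with probability proportional to their weights.
    return rng.choices(moved, weights=weights, k=len(moved))


if __name__ == "__main__":
    rng = random.Random(0)
    particles = [rng.uniform(0.0, 10.0) for _ in range(500)]  # unknown initial pose
    true_pos = 2.0
    for _ in range(10):
        true_pos += 0.5
        reading = true_pos + rng.gauss(0.0, 0.5)
        particles = sir_step(particles, 0.5, reading, rng)
    print(sum(particles) / len(particles), true_pos)
```

Because the particles are sampled from the transition prior, the weight reduces to the observation likelihood, which matches the "observation model = weight" view of the filter.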

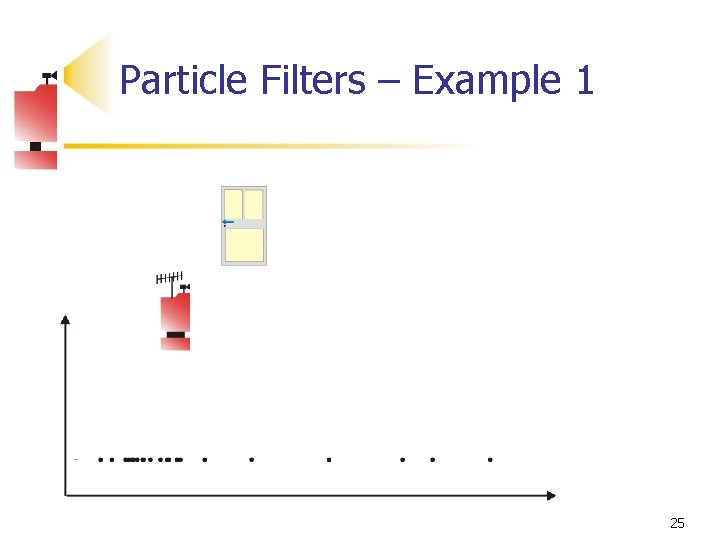

Particle Filters – Example 1

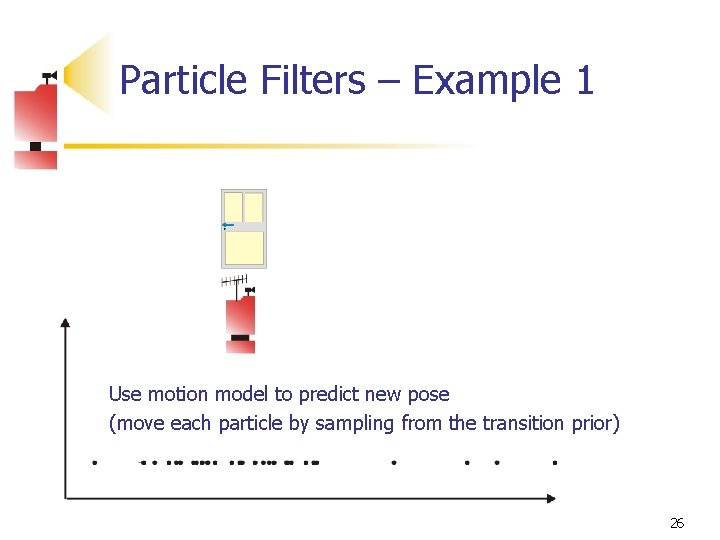

Particle Filters – Example 1

- Use the motion model to predict the new pose (move each particle by sampling from the transition prior)

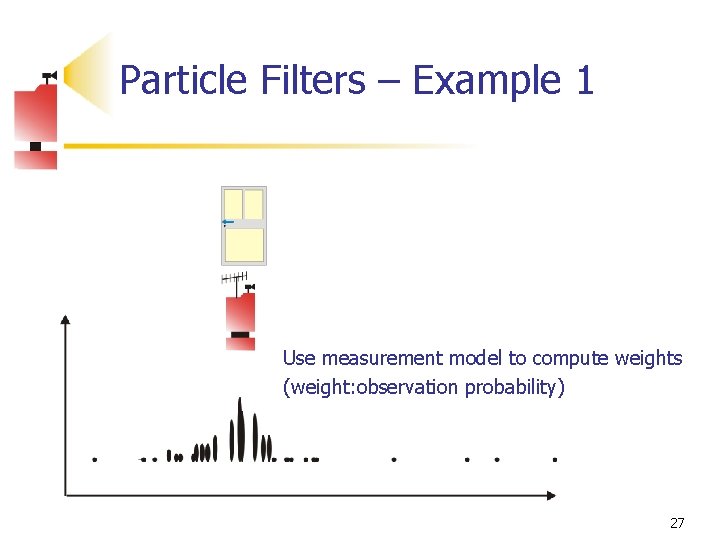

Particle Filters – Example 1

- Use the measurement model to compute weights (weight: observation probability)

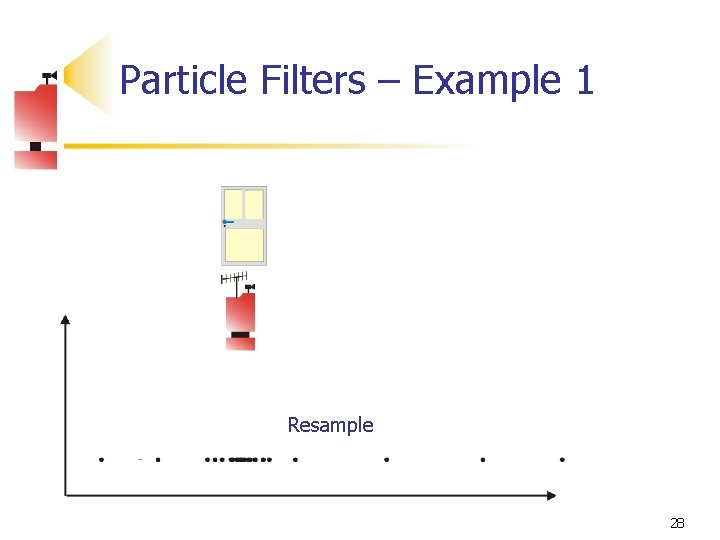

Particle Filters – Example 1

- Resample

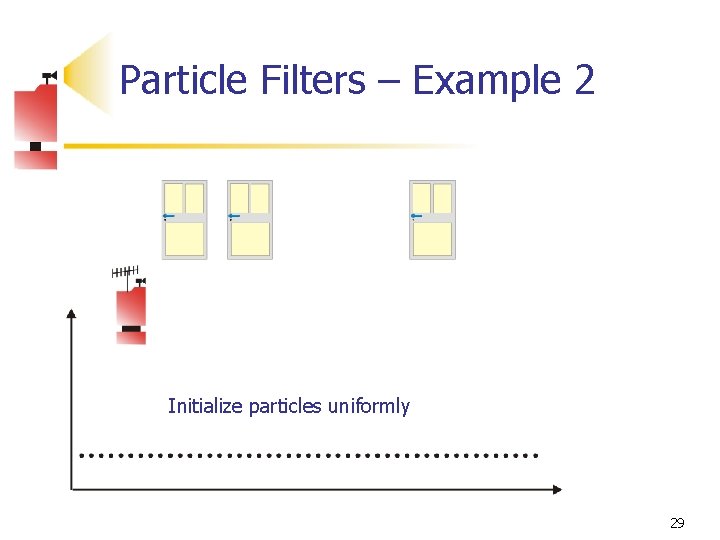

Particle Filters – Example 2

- Initialize particles uniformly

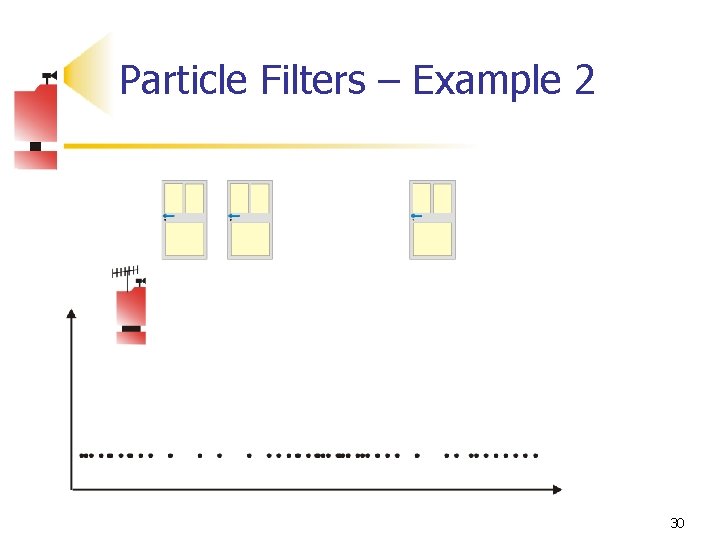

Particle Filters – Example 2

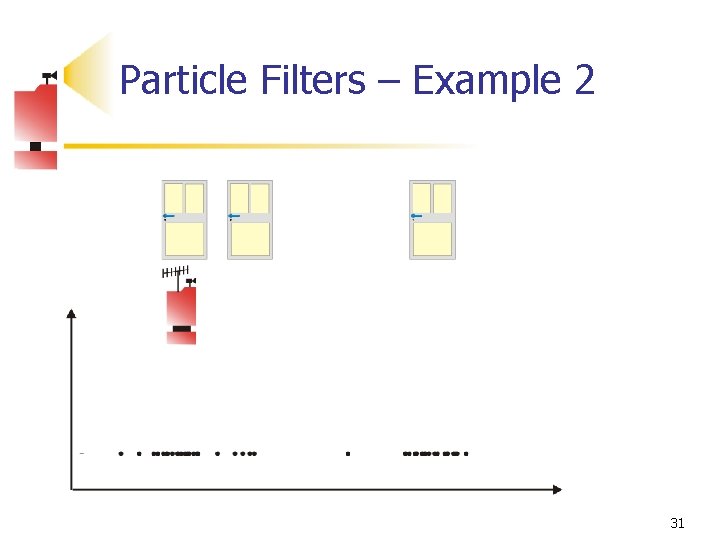

Particle Filters – Example 2

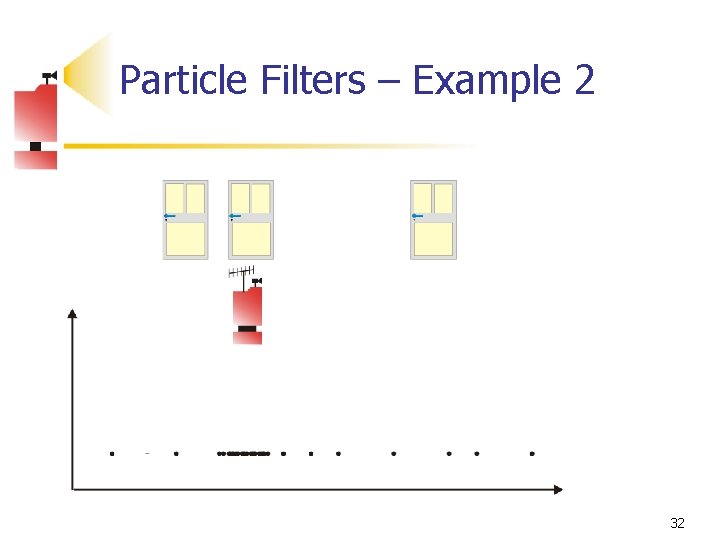

Particle Filters – Example 2

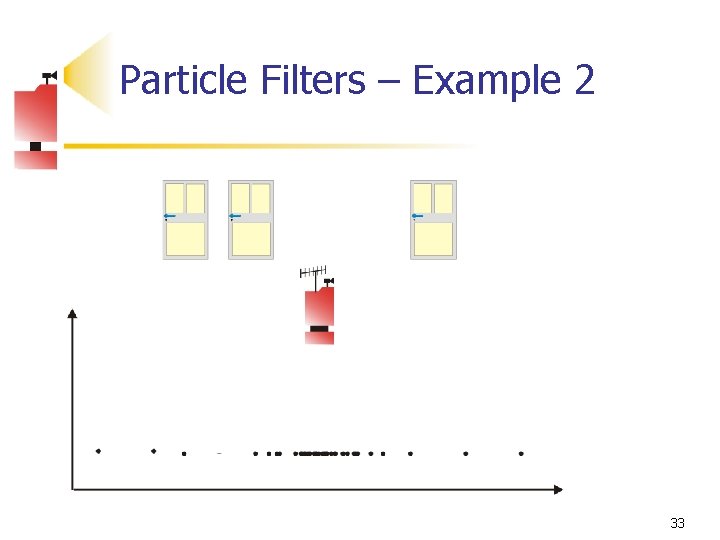

Particle Filters – Example 2

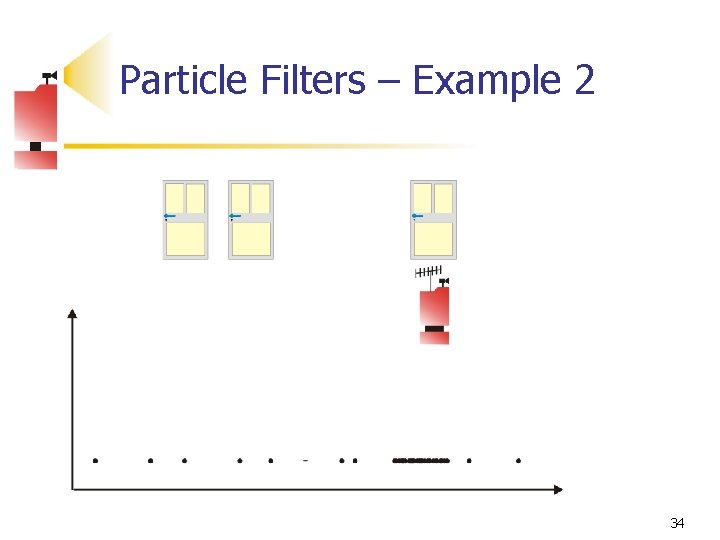

Particle Filters – Example 2

Continuous State Approaches

- Advantages
  - Perform very accurately if the inputs are precise (performance is optimal with respect to any criterion in the linear case).
  - Computational efficiency.
- Disadvantages
  - Requirement that the initial state is known.
  - Inability to recover from catastrophic failures.
  - Inability to track multiple hypotheses about the state (Gaussians have only one mode).

Discrete State Approaches

- Advantages
  - Ability (to some degree) to operate even when the initial pose is unknown (start from a uniform distribution).
  - Ability to deal with noisy measurements.
  - Ability to represent ambiguities (multi-modal distributions).
- Disadvantages
  - Computational time scales heavily with the number of possible states (dimensionality of the grid, number of samples, size of the map).
  - Accuracy is limited by the size of the grid cells, or by the number of particles and the sampling method.
  - The required number of particles is unknown.

Thanks!

Thanks for your attention!

- Slides: 37