Hadoop and DB 2 11 both sides of

Hadoop and DB 2 11 – both sides of the story Emil Kotrc, CA Technologies Session Code: E 7 14: 00 -15: 00 Tuesday, 17 th November 2015 Platform: DB 2 for z/OS

Abstract DB 2 for z/OS and Hadoop are very good friends since the version 11. DB 2 11 can easily access the data stored in the Hadoop/IBM Big. Insights cluster as well as submit a map/reduce job and read the results back via IBM supplied user defined functions. On the other hand, a typical Hadoop installation can access the DB 2 data using the standard interfaces out of the box. In this presentation we will show and discuss the possibilities of one or two ways integration of DB 2 for z/OS and Hadoop. 2

Objectives • A very brief introduction to Hadoop, IBM Big. Insights, and related technologies. • Show DB 2 can access data in Hadoop or submit jobs to Hadoop using IBM supplied UDFs. Explain the implementation steps. • Show Hadoop can access data in DB 2 using Sqoop and other tools. • Discuss possible integration of DB 2 and Hadoop and show some examples. 3

Agenda • • • Motivation Hadoop overview – HDFS, map reduce DB 2 and Hadoop and DB 2 Next Big Thing… Spark? References 4

MOTIVATION 5

Motivation • Two way integration of DB 2 for z/OS and Hadoop • Data from Hadoop to DB 2 • Data from DB 2 to Hadoop • IBM DB 2 11 Flash Books 6

HADOOP OVERVIEW 7

Hadoop Overview • Hadoop – open source framework for 1. Storage, and 2. Large scale parallel processing • Batch oriented • Designed to run on commodity hardware • All components can cope with hardware failures • Implemented in Java, Apache License, First release in 2007 • Based on two Google’s papers • The Google File System, Sanjay Ghemawat, Howard Gobioff, and Shun-Tak Leung, 2003 • Map. Reduce: Simplified Data Processing on Large Clusters, Jeffrey Dean and Sanjay Ghemawat, 2004 8

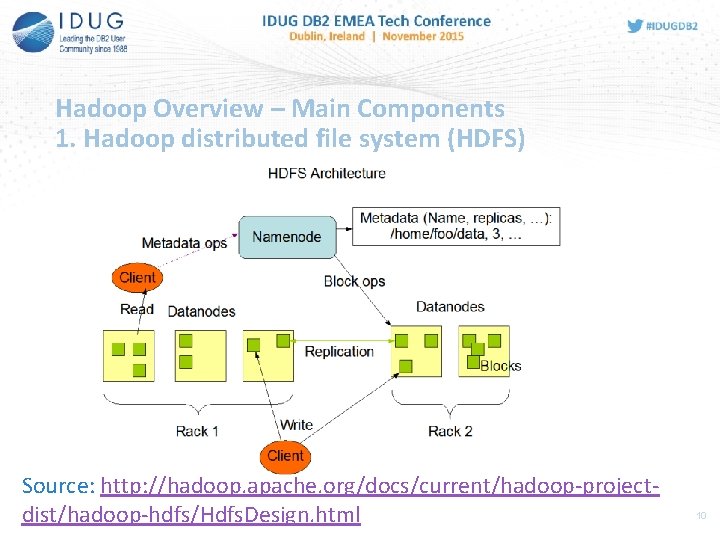

Hadoop Overview – Main Components 1. Hadoop distributed file system (HDFS) • Data stored in blocks and replicated across multiple nodes • Rack awarness • HDFS cluster consists of • Name. Node – keeping the metadata • Data. Nodes – serving block of data • Interfaces - HDFS shell, REST API • • $ $ hadoop fs –ls /tmp hadoop fs –cat file hdfs –cat file hadoop fs –copy. From. Local local. csv remote. csv • • http: //<HOST>: <HTTP_PORT>/webhdfs/v 1/<PATH>? op=<OPERATION> $ curl --user: password http: //server: 14000/webhdfs/v 1/tmp/test. csv? op=OPEN 9

Hadoop Overview – Main Components 1. Hadoop distributed file system (HDFS) Source: http: //hadoop. apache. org/docs/current/hadoop-projectdist/hadoop-hdfs/Hdfs. Design. html 10

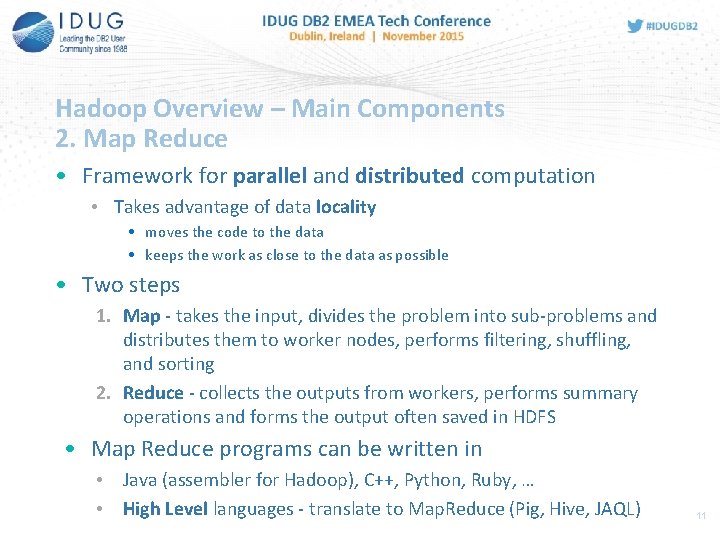

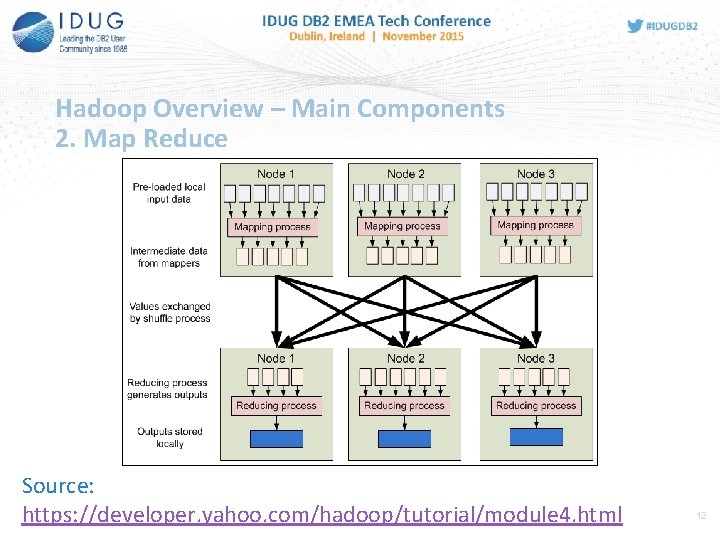

Hadoop Overview – Main Components 2. Map Reduce • Framework for parallel and distributed computation • Takes advantage of data locality • moves the code to the data • keeps the work as close to the data as possible • Two steps 1. Map - takes the input, divides the problem into sub-problems and distributes them to worker nodes, performs filtering, shuffling, and sorting 2. Reduce - collects the outputs from workers, performs summary operations and forms the output often saved in HDFS • Map Reduce programs can be written in • Java (assembler for Hadoop), C++, Python, Ruby, … • High Level languages - translate to Map. Reduce (Pig, Hive, JAQL) 11

Hadoop Overview – Main Components 2. Map Reduce Source: https: //developer. yahoo. com/hadoop/tutorial/module 4. html 12

Hadoop Overview Related Projects • Oozie – workflow scheduler • Avro – data serialization system • Hive – data warehouse for Hadoop • Provides SQL-like language (Hive. QL) • HBase – Big. Table (Google) like capabilities for Hadoop • Non-relational model • Sqoop – application for transferring data between relational databases and Hadoop • Flume – service for collecting, aggregating, and moving large amounts of data • Pig – high level platform for Map Reduce (Pig Latin) 13

DB 2 AND HADOOP 14

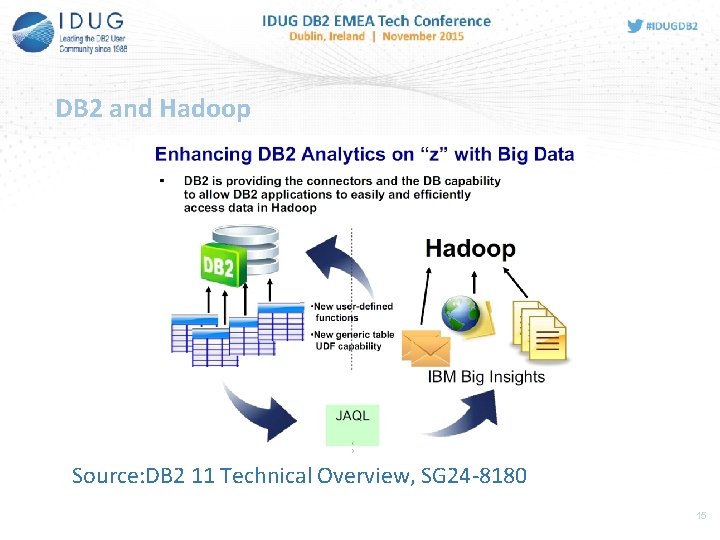

DB 2 and Hadoop Source: DB 2 11 Technical Overview, SG 24 -8180 15

DB 2 and Hadoop Overview • IBM Provides DB 2 connectors for IBM Big. Insights • Implemented as two DB 2 UDFs – part of DB 2 11 Base installation • Integration of DB 2 with Big Data analytics • Think of it as of Proof of Concept • DB 2 can submit a Map. Reduce job to Hadoop/Big. Insights • Using a JAQL query via JAQL_SUBMIT() • DB 2 can read the data from HDFS • Can be used in SQL as relational data via HDFS_READ() • Combined - DB 2 can submit a Map. Reduce job, read the outputs back, process them using SQL. 16

DB 2 and Hadoop Big. Insights • IBM Big. Data platform based on Hadoop, Spark • Runs on x 86 -64, POWER, z Systems (z. Linux) • Quick Start Edition – Free, Downloadable, Non-production • VMware image • Native Installation for Linux • Version 3. 0 vs 4. 0 • IBM Open Platform with Apache Hadoop • IBM Big. Insights for Apache Hadoop v 4 • Value added features for analytics (Data scientist, Analyst, Enterprise management) • Migration from 3. 0 to 4. 0 (? ), should come in 4. 1 (? ) 17

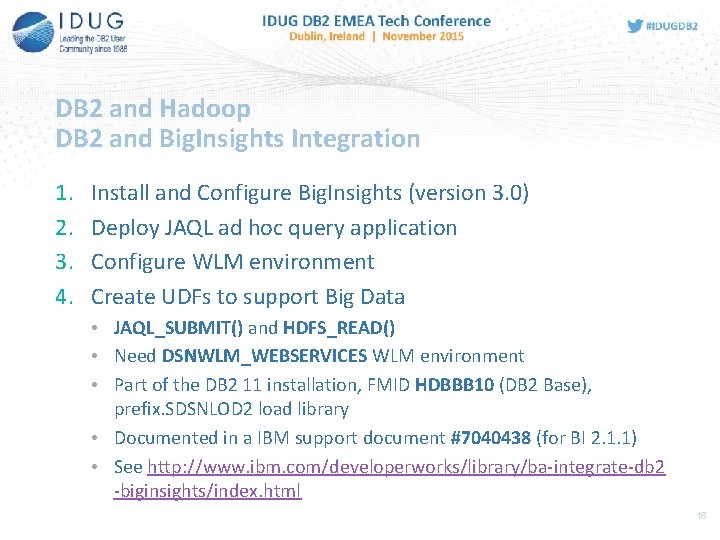

DB 2 and Hadoop DB 2 and Big. Insights Integration 1. 2. 3. 4. Install and Configure Big. Insights (version 3. 0) Deploy JAQL ad hoc query application Configure WLM environment Create UDFs to support Big Data • JAQL_SUBMIT() and HDFS_READ() • Need DSNWLM_WEBSERVICES WLM environment • Part of the DB 2 11 installation, FMID HDBBB 10 (DB 2 Base), prefix. SDSNLOD 2 load library • Documented in a IBM support document #7040438 (for BI 2. 1. 1) • See http: //www. ibm. com/developerworks/library/ba-integrate-db 2 -biginsights/index. html 18

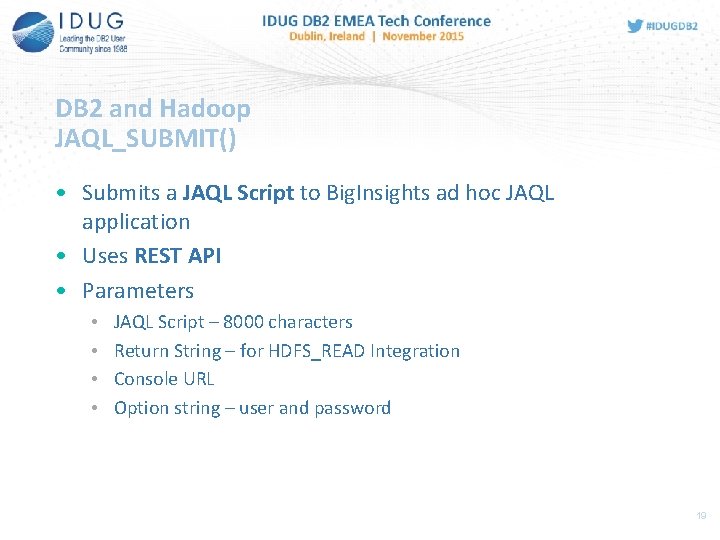

DB 2 and Hadoop JAQL_SUBMIT() • Submits a JAQL Script to Big. Insights ad hoc JAQL application • Uses REST API • Parameters • • JAQL Script – 8000 characters Return String – for HDFS_READ Integration Console URL Option string – user and password 19

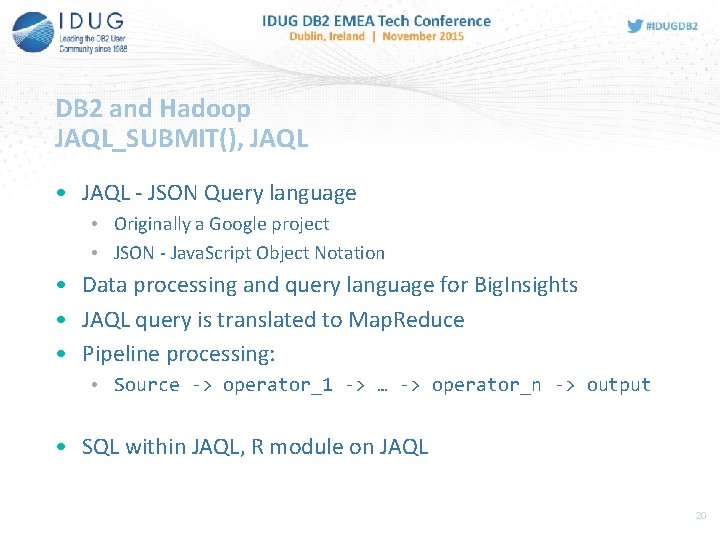

DB 2 and Hadoop JAQL_SUBMIT(), JAQL • JAQL - JSON Query language • Originally a Google project • JSON - Java. Script Object Notation • Data processing and query language for Big. Insights • JAQL query is translated to Map. Reduce • Pipeline processing: • Source -> operator_1 -> … -> operator_n -> output • SQL within JAQL, R module on JAQL 20

![DB 2 and Hadoop JAQL_SUBMIT(), JAQL example [ {name: ] "Jon Doe", income: 20000, DB 2 and Hadoop JAQL_SUBMIT(), JAQL example [ {name: ] "Jon Doe", income: 20000,](http://slidetodoc.com/presentation_image_h2/14c7bbada083db389cffe62200fbdeea/image-21.jpg)

DB 2 and Hadoop JAQL_SUBMIT(), JAQL example [ {name: ] "Jon Doe", income: 20000, mgr: false}, "Vince Wayne", income: 32500, mgr: false}, "Jane Dean", income: 72000, mgr: true}, "Alex Smith", income: 25000, mgr: false} read(json. Text("/tmp/employees. json")) -> filter not $. mgr -> sort by [$. income desc] -> write(del("/tmp/emplist. csv", { schema: schema { name, income }})); 21

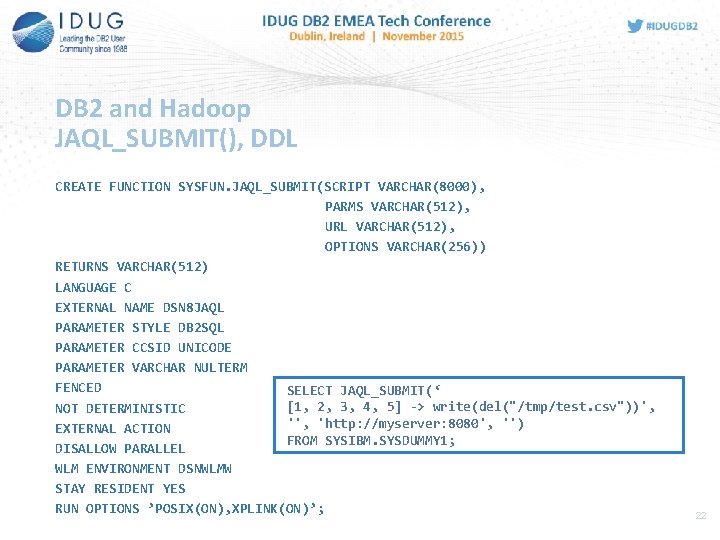

DB 2 and Hadoop JAQL_SUBMIT(), DDL CREATE FUNCTION SYSFUN. JAQL_SUBMIT(SCRIPT VARCHAR(8000), PARMS VARCHAR(512), URL VARCHAR(512), OPTIONS VARCHAR(256)) RETURNS VARCHAR(512) LANGUAGE C EXTERNAL NAME DSN 8 JAQL PARAMETER STYLE DB 2 SQL PARAMETER CCSID UNICODE PARAMETER VARCHAR NULTERM FENCED SELECT JAQL_SUBMIT(‘ [1, 2, 3, 4, 5] -> write(del("/tmp/test. csv"))', NOT DETERMINISTIC '', 'http: //myserver: 8080', '') EXTERNAL ACTION FROM SYSIBM. SYSDUMMY 1; DISALLOW PARALLEL WLM ENVIRONMENT DSNWLMW STAY RESIDENT YES RUN OPTIONS ’POSIX(ON), XPLINK(ON)’; 22

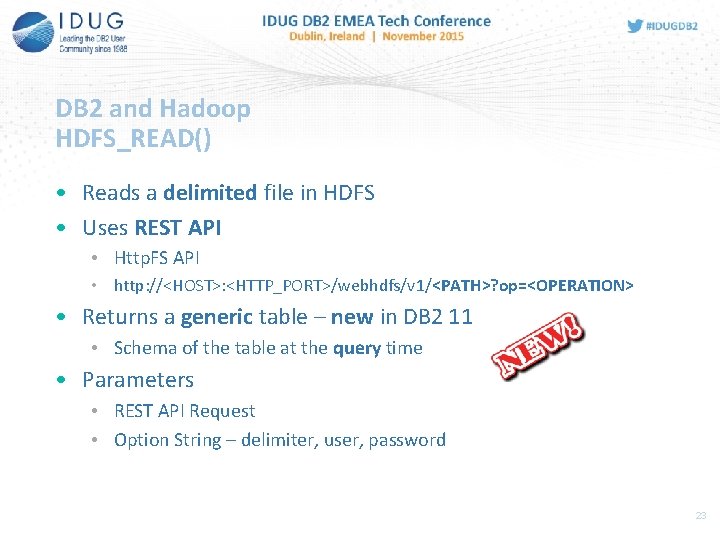

DB 2 and Hadoop HDFS_READ() • Reads a delimited file in HDFS • Uses REST API • Http. FS API • http: //<HOST>: <HTTP_PORT>/webhdfs/v 1/<PATH>? op=<OPERATION> • Returns a generic table – new in DB 2 11 • Schema of the table at the query time • Parameters • REST API Request • Option String – delimiter, user, password 23

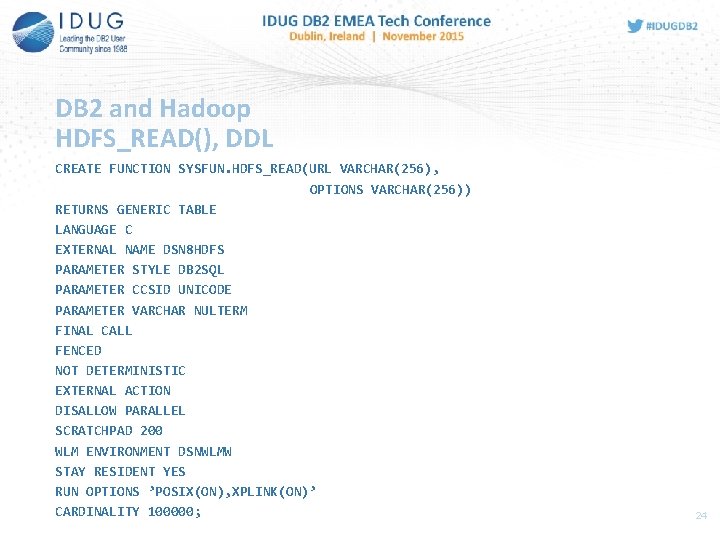

DB 2 and Hadoop HDFS_READ(), DDL CREATE FUNCTION SYSFUN. HDFS_READ(URL VARCHAR(256), OPTIONS VARCHAR(256)) RETURNS GENERIC TABLE LANGUAGE C EXTERNAL NAME DSN 8 HDFS PARAMETER STYLE DB 2 SQL PARAMETER CCSID UNICODE PARAMETER VARCHAR NULTERM FINAL CALL FENCED NOT DETERMINISTIC EXTERNAL ACTION DISALLOW PARALLEL SCRATCHPAD 200 WLM ENVIRONMENT DSNWLMW STAY RESIDENT YES RUN OPTIONS ’POSIX(ON), XPLINK(ON)’ CARDINALITY 100000; 24

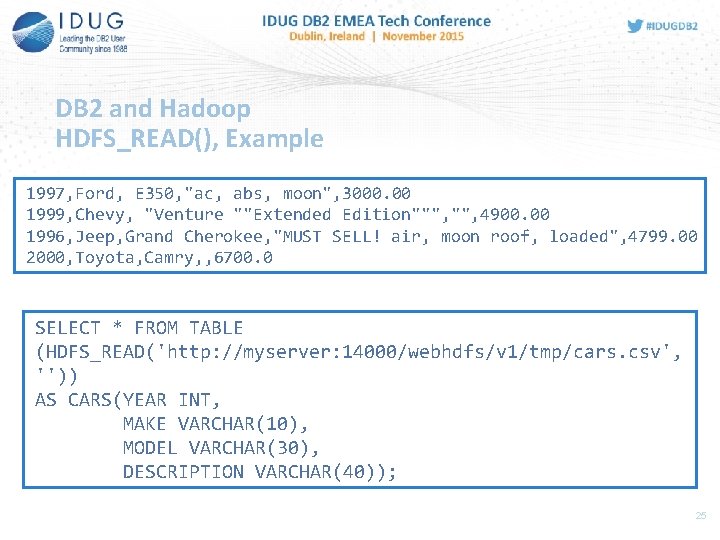

DB 2 and Hadoop HDFS_READ(), Example 1997, Ford, E 350, "ac, abs, moon", 3000. 00 1999, Chevy, "Venture ""Extended Edition""", 4900. 00 1996, Jeep, Grand Cherokee, "MUST SELL! air, moon roof, loaded", 4799. 00 2000, Toyota, Camry, , 6700. 0 SELECT * FROM TABLE (HDFS_READ('http: //myserver: 14000/webhdfs/v 1/tmp/cars. csv', '')) AS CARS(YEAR INT, MAKE VARCHAR(10), MODEL VARCHAR(30), DESCRIPTION VARCHAR(40)); 25

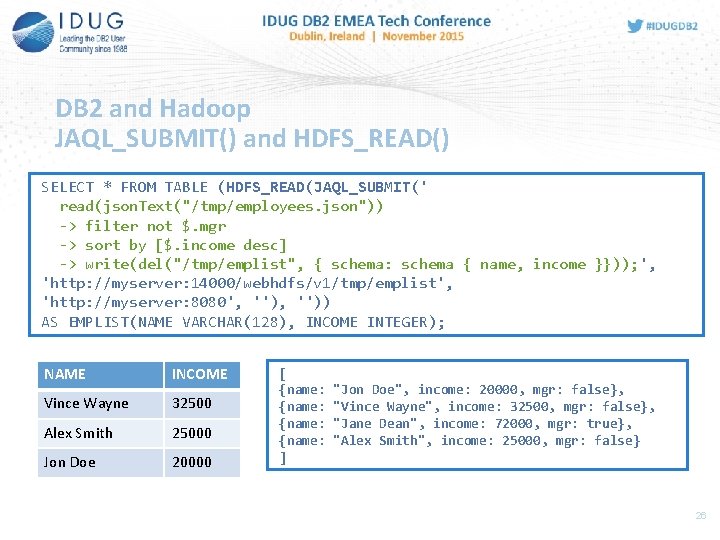

DB 2 and Hadoop JAQL_SUBMIT() and HDFS_READ() SELECT * FROM TABLE (HDFS_READ(JAQL_SUBMIT(' read(json. Text("/tmp/employees. json")) -> filter not $. mgr -> sort by [$. income desc] -> write(del("/tmp/emplist", { schema: schema { name, income }})); ', 'http: //myserver: 14000/webhdfs/v 1/tmp/emplist', 'http: //myserver: 8080', '')) AS EMPLIST(NAME VARCHAR(128), INCOME INTEGER); NAME INCOME Vince Wayne 32500 Alex Smith 25000 Jon Doe 20000 [ {name: ] "Jon Doe", income: 20000, mgr: false}, "Vince Wayne", income: 32500, mgr: false}, "Jane Dean", income: 72000, mgr: true}, "Alex Smith", income: 25000, mgr: false} 26

DB 2 and Hadoop Key points for integration • Lessons learned from JAQL_SUBMIT() and HDFS_READ() • Hadoop and related projects • REST APIs • Map Reduce high level languages • DB 2 • external UDFs • DB 2 11 generic table UDF 27

DB 2 and Hadoop Generic functions • New Feature of DB 2 11 • Generic functions return a generic table • Schema at the query time • Can be implemented as an external UDF • Can be implemented in C • See header file SDSNC. H(DSNUDF) for Structures used for Generic Table UDF • APAR PI 42256 providing samples 28

DB 2 and Hadoop Big. Insights and Big. SQL • Big. SQL – provides SQL access to data across Big. Insights • Supports JDBC and ODBC • Query engine for Hadoop data • Table is like a view to Hadoop data (files in HDFS, Hive, …) • Local query vs Map Reduce • Competition – Cloudera Impala, Hive (Stinger), Oracle Big Data SQL, Pivotal HAWQ (Greenplum), Teradata SQL-H, … 29

HADOOP AND DB 2 30

Hadoop and DB 2 Overview • Sqoop – SQL to Hadoop • Flume - collecting, aggregating and moving large amounts of data • UNLOAD, LOAD • HDFS shell, … • IBM and 3 rd party • Big. Insights – Data Import, Jaql JDBC • Veristorm v. Storm • IBM System z Connector for Hadoop 31

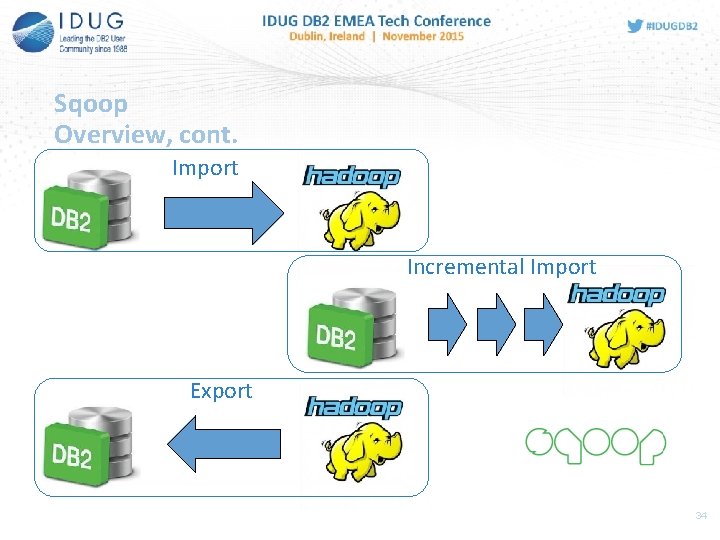

Sqoop Overview • Originally SQL To Hadoop • Transferring bulk data between Hadoop and RDBMS • Import data • incremental loads or free-form SQL query • to text/binary files or to Hive/Hbase • Import from mainframe • Export data from Hadoop to RDBMS • Uses Map Reduce framework • Parallel operation • Fault tolerance 32

![Sqoop Overview, cont. • Command line interface • sqoop COMMAND [generic and specific args] Sqoop Overview, cont. • Command line interface • sqoop COMMAND [generic and specific args]](http://slidetodoc.com/presentation_image_h2/14c7bbada083db389cffe62200fbdeea/image-33.jpg)

Sqoop Overview, cont. • Command line interface • sqoop COMMAND [generic and specific args] • • • Implemented in Java Top-level Apache project since 2012 Licensed under Apache License 2. 0 Sqoop stable version > 1. 0 Sqoop 2 – not intended for production 33

Sqoop Overview, cont. Import Incremental Import Export 34

Sqoop Configuration • Built-in support for several databases • DB 2 not included • Connectors - Specific vs Generic, 3 rd party • Direct connectors • JDBC interface • Driver vs connection manager • src/java/org/apache/sqoop/manager/Db 2 Manager. java • Copy JARs to $SQOOP_HOME/lib • DB 2 JDBC Driver • DB 2 for z/OS License JAR 35

Sqoop Import from DB 2 Import • Imports data in a table-centric fashion • Selecting data using parameters (--table, --column, --where), • Or using free form queries (--query) • Data can be imported as • Text files (default) • Null values as “null” • Binary files • Sequence file • Avro • Supports compression • A Java class generated to hold a row 36

Sqoop Import from DB 2, cont. 1. Examine the table • List of columns, types 2. Create table-specific class to hold a row • Generated code • JAR file 3. Map Reduce launched • Dividing query across multiple nodes • Splitting column • Guessing a column (primary key) or --split-by • -m 1 avoids splitting 37

Sqoop Import from DB 2, Considerations • Consistency – multiple parallel jobs • • Map tasks reading from database run in parallel as separate jobs Thus do not share a single transaction Disable updates Transaction isolation – read committed or read uncommitted (-relaxed-isolation) • Direct-mode imports – only for some systems • Must be explicitly enabled 38

Sqoop Import from DB 2, Considerations • Text based imports can be used in streaming or map reduce as Text. Input. Format • Generated class by Sqoop can be used to parsing the data • Importing to Hive – Hive schema based on source schema • Importing large objects • In separate files (lobfile), --inline-lob-limit 39

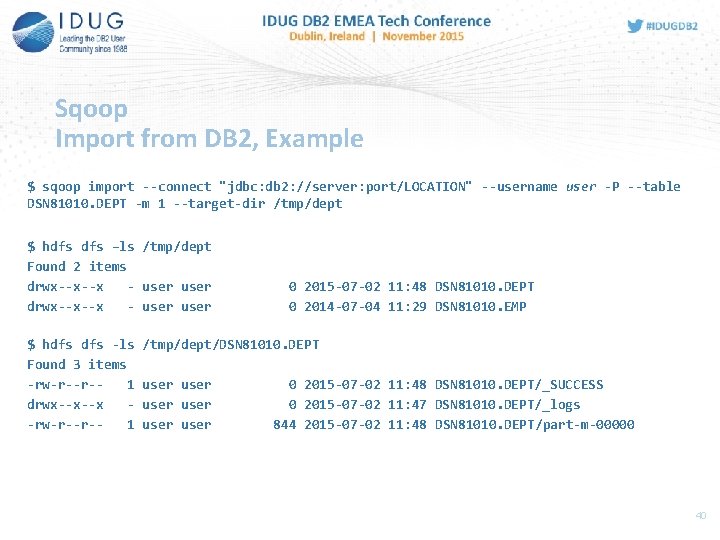

Sqoop Import from DB 2, Example $ sqoop import --connect "jdbc: db 2: //server: port/LOCATION" --username user -P --table DSN 81010. DEPT -m 1 --target-dir /tmp/dept $ hdfs –ls /tmp/dept Found 2 items drwx--x--x - user $ hdfs -ls Found 3 items -rw-r--r-1 drwx--x--x -rw-r--r-1 0 2015 -07 -02 11: 48 DSN 81010. DEPT 0 2014 -07 -04 11: 29 DSN 81010. EMP /tmp/dept/DSN 81010. DEPT user user 0 2015 -07 -02 11: 48 DSN 81010. DEPT/_SUCCESS 0 2015 -07 -02 11: 47 DSN 81010. DEPT/_logs 844 2015 -07 -02 11: 48 DSN 81010. DEPT/part-m-00000 40

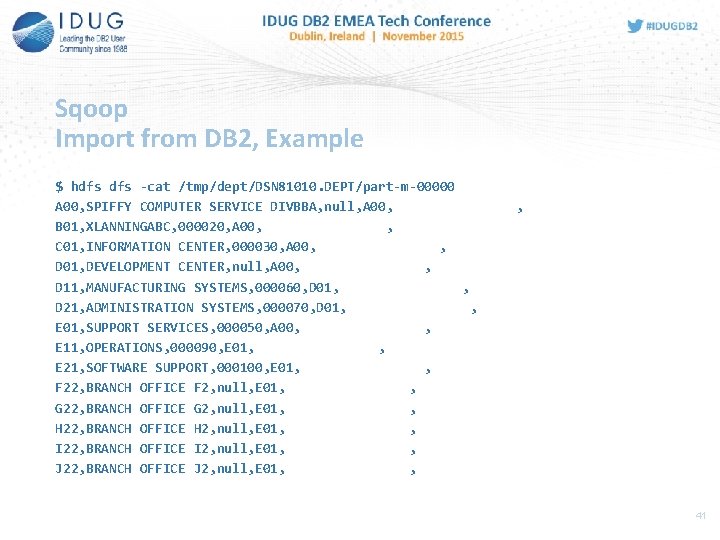

Sqoop Import from DB 2, Example $ hdfs -cat /tmp/dept/DSN 81010. DEPT/part-m-00000 A 00, SPIFFY COMPUTER SERVICE DIVBBA, null, A 00, B 01, XLANNINGABC, 000020, A 00, , C 01, INFORMATION CENTER, 000030, A 00, , D 01, DEVELOPMENT CENTER, null, A 00, , D 11, MANUFACTURING SYSTEMS, 000060, D 01, , D 21, ADMINISTRATION SYSTEMS, 000070, D 01, , E 01, SUPPORT SERVICES, 000050, A 00, , E 11, OPERATIONS, 000090, E 01, , E 21, SOFTWARE SUPPORT, 000100, E 01, , F 22, BRANCH OFFICE F 2, null, E 01, , G 22, BRANCH OFFICE G 2, null, E 01, , H 22, BRANCH OFFICE H 2, null, E 01, , I 22, BRANCH OFFICE I 2, null, E 01, , J 22, BRANCH OFFICE J 2, null, E 01, , , 41

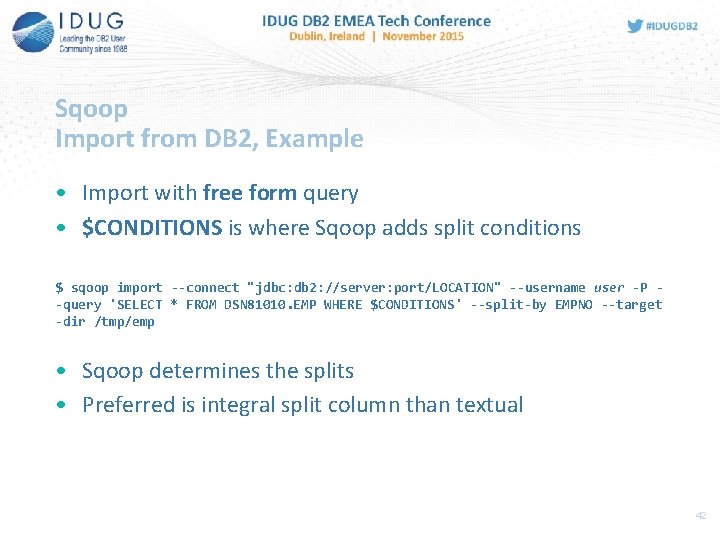

Sqoop Import from DB 2, Example • Import with free form query • $CONDITIONS is where Sqoop adds split conditions $ sqoop import --connect "jdbc: db 2: //server: port/LOCATION" --username user -P -query 'SELECT * FROM DSN 81010. EMP WHERE $CONDITIONS' --split-by EMPNO --target -dir /tmp/emp • Sqoop determines the splits • Preferred is integral split column than textual 42

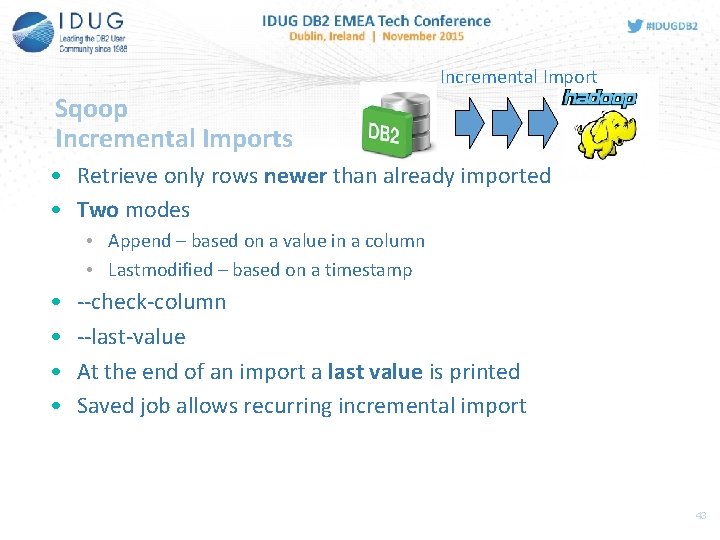

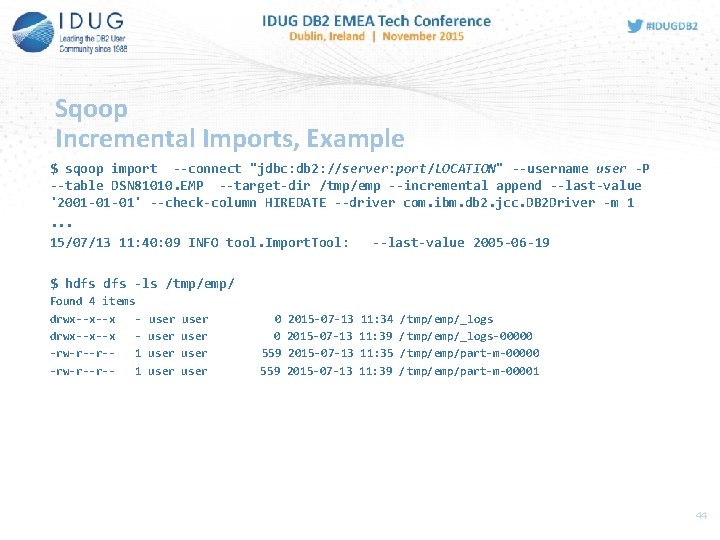

Incremental Import Sqoop Incremental Imports • Retrieve only rows newer than already imported • Two modes • Append – based on a value in a column • Lastmodified – based on a timestamp • • --check-column --last-value At the end of an import a last value is printed Saved job allows recurring incremental import 43

Sqoop Incremental Imports, Example $ sqoop import --connect "jdbc: db 2: //server: port/LOCATION" --username user -P --table DSN 81010. EMP --target-dir /tmp/emp --incremental append --last-value '2001 -01 -01' --check-column HIREDATE --driver com. ibm. db 2. jcc. DB 2 Driver -m 1. . . 15/07/13 11: 40: 09 INFO tool. Import. Tool: --last-value 2005 -06 -19 $ hdfs -ls /tmp/emp/ Found 4 items drwx--x--x -rw-r--r-1 user user 0 0 559 2015 -07 -13 11: 34 11: 39 11: 35 11: 39 /tmp/emp/_logs / tmp/emp/_logs-00000 /tmp/emp/part-m-00000 / tmp/emp/part-m-00001 44

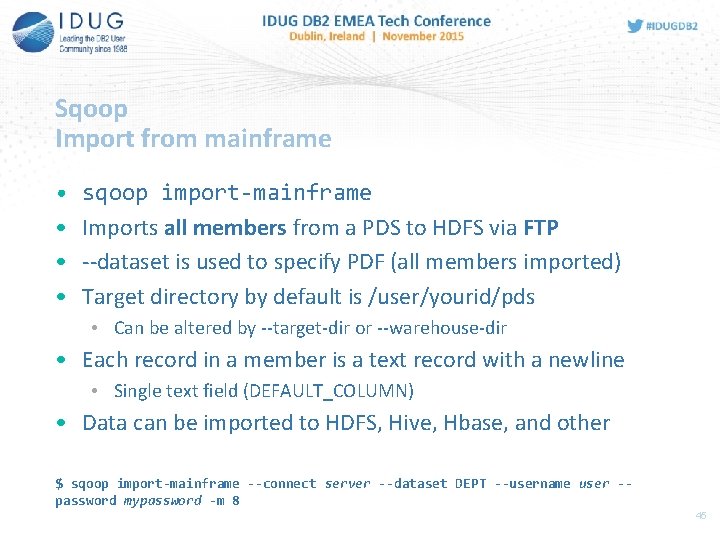

Sqoop Import from mainframe • • sqoop import-mainframe Imports all members from a PDS to HDFS via FTP --dataset is used to specify PDF (all members imported) Target directory by default is /user/yourid/pds • Can be altered by --target-dir or --warehouse-dir • Each record in a member is a text record with a newline • Single text field (DEFAULT_COLUMN) • Data can be imported to HDFS, Hive, Hbase, and other $ sqoop import-mainframe --connect server --dataset DEPT --username user -password mypassword -m 8 45

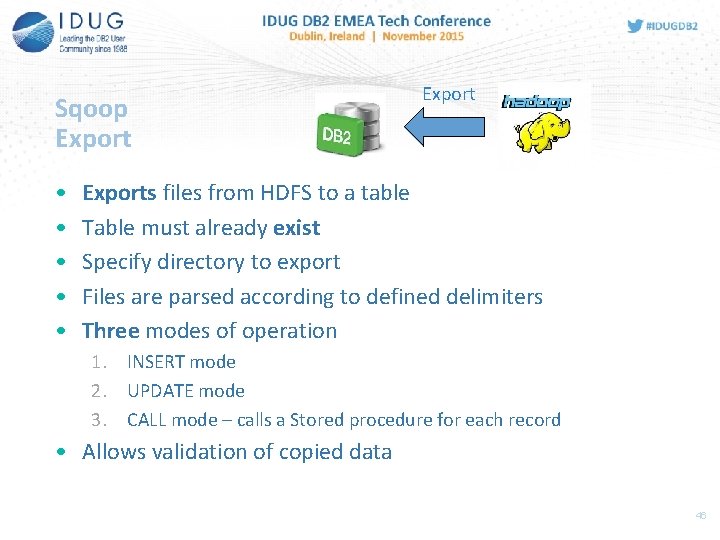

Sqoop Export • • • Exports files from HDFS to a table Table must already exist Specify directory to export Files are parsed according to defined delimiters Three modes of operation 1. INSERT mode 2. UPDATE mode 3. CALL mode – calls a Stored procedure for each record • Allows validation of copied data 46

Sqoop Export, cont. • 1. 2. 3. Works in similar steps as import: Metadata of the table Generated class to parse text files Insert into table 1. Map. Reduce job – batch insert statements 2. Degree of parallelism 47

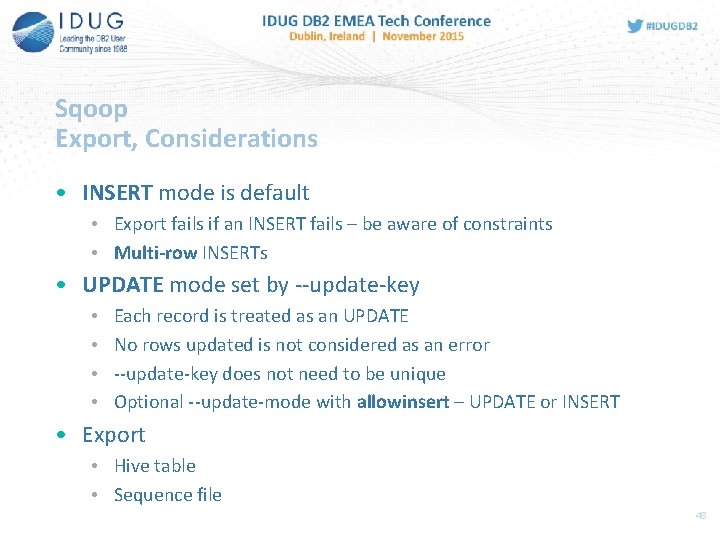

Sqoop Export, Considerations • INSERT mode is default • Export fails if an INSERT fails – be aware of constraints • Multi-row INSERTs • UPDATE mode set by --update-key • • Each record is treated as an UPDATE No rows updated is not considered as an error --update-key does not need to be unique Optional --update-mode with allowinsert – UPDATE or INSERT • Export • Hive table • Sequence file 48

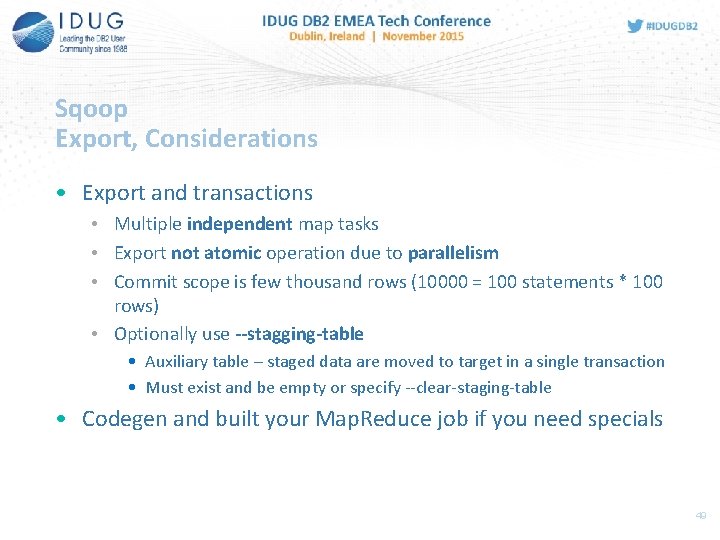

Sqoop Export, Considerations • Export and transactions • Multiple independent map tasks • Export not atomic operation due to parallelism • Commit scope is few thousand rows (10000 = 100 statements * 100 rows) • Optionally use --stagging-table • Auxiliary table – staged data are moved to target in a single transaction • Must exist and be empty or specify --clear-staging-table • Codegen and built your Map. Reduce job if you need specials 49

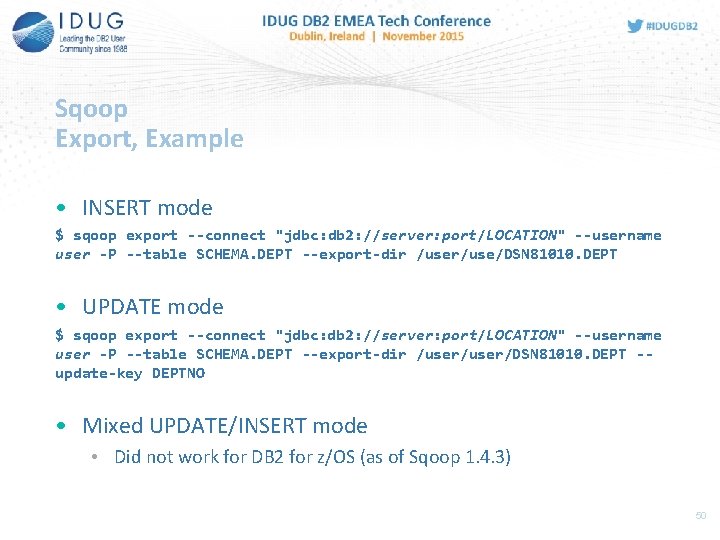

Sqoop Export, Example • INSERT mode $ sqoop export --connect "jdbc: db 2: //server: port/LOCATION" --username user -P --table SCHEMA. DEPT --export-dir /user/use/DSN 81010. DEPT • UPDATE mode $ sqoop export --connect "jdbc: db 2: //server: port/LOCATION" --username user -P --table SCHEMA. DEPT --export-dir /user/DSN 81010. DEPT -update-key DEPTNO • Mixed UPDATE/INSERT mode • Did not work for DB 2 for z/OS (as of Sqoop 1. 4. 3) 50

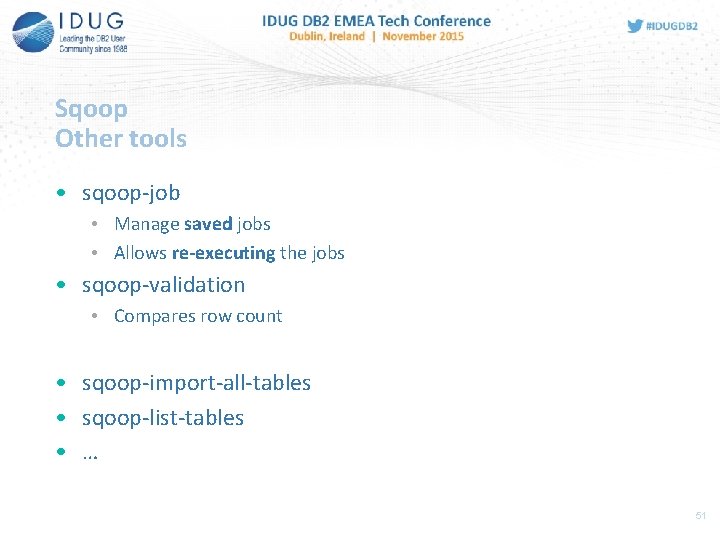

Sqoop Other tools • sqoop-job • Manage saved jobs • Allows re-executing the jobs • sqoop-validation • Compares row count • sqoop-import-all-tables • sqoop-list-tables • … 51

Flume • • • Collecting, aggregating, and moving data Designed to work with log data Based on streaming data flows Allows complex flows Uses transactional approach for reliability flume-ng-sql-source plugin allows to communicate with SQL databases • Despite Sqoop Flume streams data continues to run as new data becomes available 52

Big. Insights Imports from DB 2 • Data Import • JDBC based • Create a properties file • Copy DB 2 driver to /biginsights/oozie/shared. Libraries/db. Drivers/ HDFS directory • Jaql JDBC module • Big. SQL LOAD • Load tables or delimited files from external sources • Uses sqoop for RDBMS – LOAD HADOOP USING JDBC CONNECTION URL … WITH PARAMETERS 53

NEXT BIG THING… SPARK? 54

Spark • Open source cluster computing framework • Initial version in 2009, UC Berkeley • Implemented in Scala, Apache License 2. 0 • One of the Most active Open source project on Big Data • Requires distributed file system and cluster management • Can be configured on top of Hadoop technologies – HDFS, YARN, … • Uses multi-stage in-memory primitives instead of two-stage disk-based Map Reduce • Resilient Distributed Datasets (RDD) – logical collection of data, memory, disk, cache; Transformations and actions on RDD • Directed Acyclic Graph (DAG) – general execution graphs 55

Spark • Well suited for machine learning and streaming • MLIB – distributed machine learning framework • API for Scala, Java, python, … • Interactive shell – load, cache, transform, query • Spark. SQL – SQL on Spark (not based on Hive) • Data Frames - distributed collection of data organized into named columns • Spark. R – R Wrapper for Spark • Stay tuned… 56

REFERENCES 57

References • Integrating DB 2 for z/OS and Hadoop: http: //www. ibm. com/developerworks/library/ba-integrate -db 2 -biginsights/index. html • Description of HDFS_READ and JAQL_SUBMIT: http: //www 01. ibm. com/support/docview. wss? uid=swg 27040438&aid= 1 • https: //hadoop. apache. org/ • http: //sqoop. apache. org/ • http: //spark. apache. org/ 58

Summary • DB 2 for z/OS can exploit Hadoop via UDFs • JAQL_SUBMIT(), HDFS_READ() • Generic UDF support in DB 2 11 • Sqoop can be used to import/export DB 2 data • Import from DB 2 to Hadoop • Incremental Import from DB 2 to Hadoop • Export from Hadoop to DB 2 59

Q&A 60

Emil Kotrc CA Technologies Emil. Kotrc@ca. com Hadoop and DB 2 11 – both sides of the story Please fill out your session evaluation before leaving!

- Slides: 61