GMAC Global Memory for Accelerators Isaac Gelado PUMPS

GMAC Global Memory for Accelerators Isaac Gelado PUMPS Summer School - Barcelona

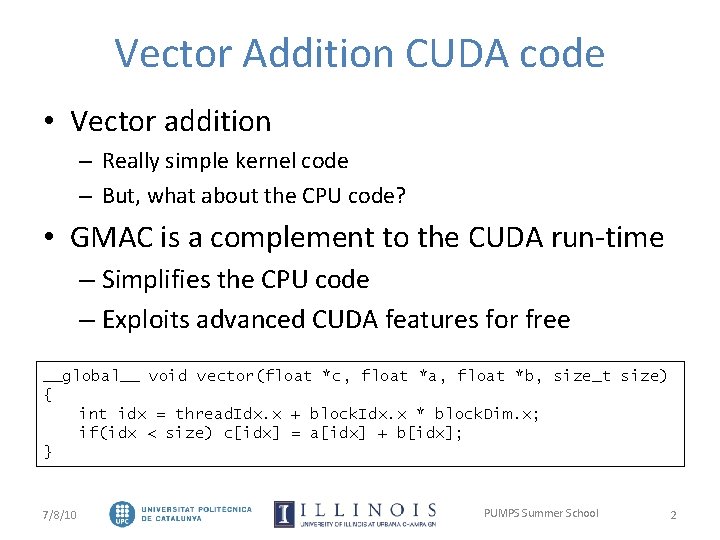

Vector Addition CUDA code • Vector addition – Really simple kernel code – But, what about the CPU code? • GMAC is a complement to the CUDA run-time – Simplifies the CPU code – Exploits advanced CUDA features for free __global__ void vector(float *c, float *a, float *b, size_t size) { int idx = thread. Idx. x + block. Idx. x * block. Dim. x; if(idx < size) c[idx] = a[idx] + b[idx]; } 7/8/10 PUMPS Summer School 2

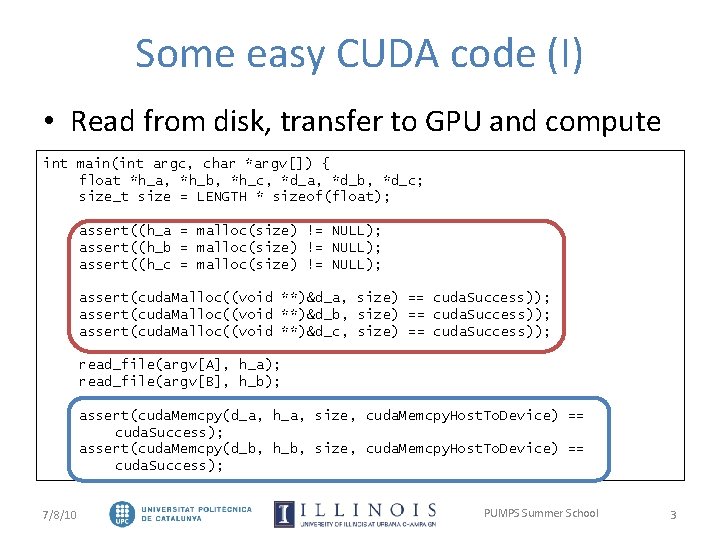

Some easy CUDA code (I) • Read from disk, transfer to GPU and compute int main(int argc, char *argv[]) { float *h_a, *h_b, *h_c, *d_a, *d_b, *d_c; size_t size = LENGTH * sizeof(float); assert((h_a = malloc(size) != NULL); assert((h_b = malloc(size) != NULL); assert((h_c = malloc(size) != NULL); assert(cuda. Malloc((void **)&d_a, size) == cuda. Success)); assert(cuda. Malloc((void **)&d_b, size) == cuda. Success)); assert(cuda. Malloc((void **)&d_c, size) == cuda. Success)); read_file(argv[A], h_a); read_file(argv[B], h_b); assert(cuda. Memcpy(d_a, h_a, size, cuda. Memcpy. Host. To. Device) == cuda. Success); assert(cuda. Memcpy(d_b, h_b, size, cuda. Memcpy. Host. To. Device) == cuda. Success); 7/8/10 PUMPS Summer School 3

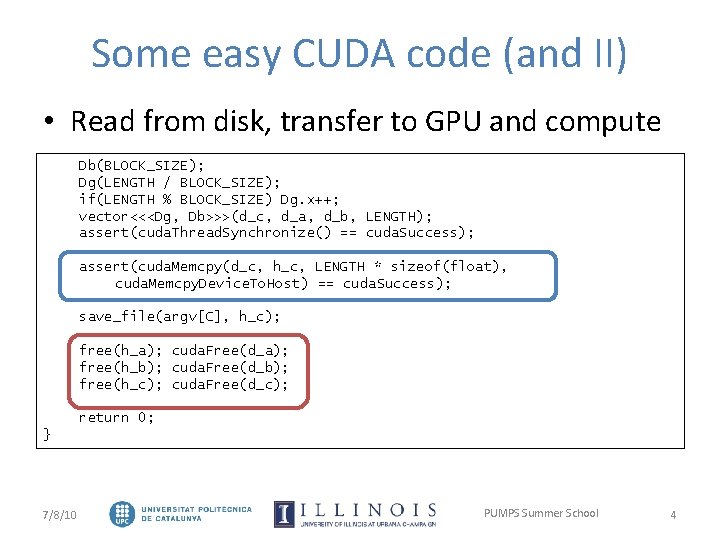

Some easy CUDA code (and II) • Read from disk, transfer to GPU and compute Db(BLOCK_SIZE); Dg(LENGTH / BLOCK_SIZE); if(LENGTH % BLOCK_SIZE) Dg. x++; vector<<<Dg, Db>>>(d_c, d_a, d_b, LENGTH); assert(cuda. Thread. Synchronize() == cuda. Success); assert(cuda. Memcpy(d_c, h_c, LENGTH * sizeof(float), cuda. Memcpy. Device. To. Host) == cuda. Success); save_file(argv[C], h_c); free(h_a); cuda. Free(d_a); free(h_b); cuda. Free(d_b); free(h_c); cuda. Free(d_c); return 0; } 7/8/10 PUMPS Summer School 4

![Some really easy GMAC code int main(int argc, char *argv[]) { float *a, *b, Some really easy GMAC code int main(int argc, char *argv[]) { float *a, *b,](http://slidetodoc.com/presentation_image_h2/789cd2b1ae7f738e104d16e80c97ace3/image-5.jpg)

Some really easy GMAC code int main(int argc, char *argv[]) { float *a, *b, *c; size_t size = LENGTH * sizeof(float); assert(gmac. Malloc((void **)&a, size) == gmac. Success)); assert(gmac. Malloc((void **)&b, size) == gmac. Success)); assert(gmac. Malloc((void **)&c, size) == gmac. Success)); read_file(argv[A], a); read_file(argv[B], b); There is no memory copy Db(BLOCK_SIZE); Dg(LENGTH / BLOCK_SIZE); if(LENGTH % BLOCK_SIZE) Dg. x++; vector<<<Dg, Db>>>(c, a, b, LENGTH); assert(gmac. Thread. Synchronize() == gmac. Success); save_file(argv[C], c); gmac. Free(a); gmac. Free(b); gmac. Free(c); There is no memory copy return 0; } 7/8/10 PUMPS Summer School 6

Getting GMAC • GMAC is at http: //adsm. googlecode. com/ • Debian / Ubuntu binary and development. deb files • UNIX (also Mac. OS X) source code package • Experimental versions from mercurial repository 7/8/10 PUMPS Summer School 7

Outline • Introduction • GMAC Memory Model – Asymmetric Memory – Global Memory • GMAC Execution Model – Multi-threading – Inter-thread communication • Conclusions 7/8/10 PUMPS Summer School 8

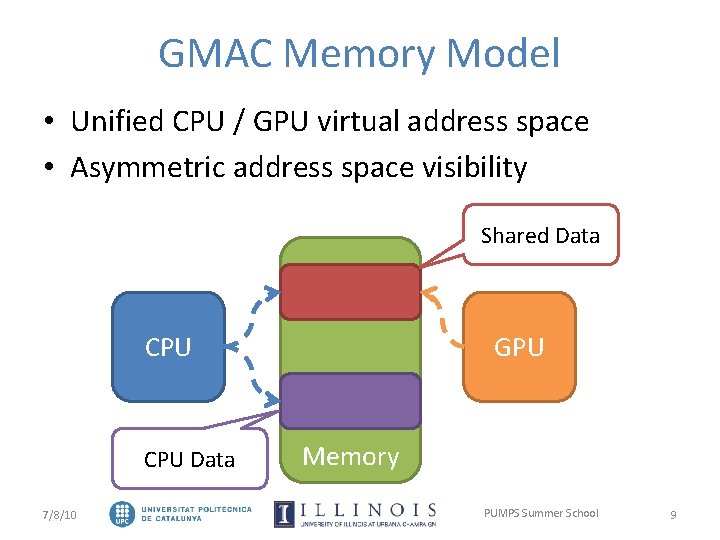

GMAC Memory Model • Unified CPU / GPU virtual address space • Asymmetric address space visibility Shared Data CPU Data 7/8/10 GPU Memory PUMPS Summer School 9

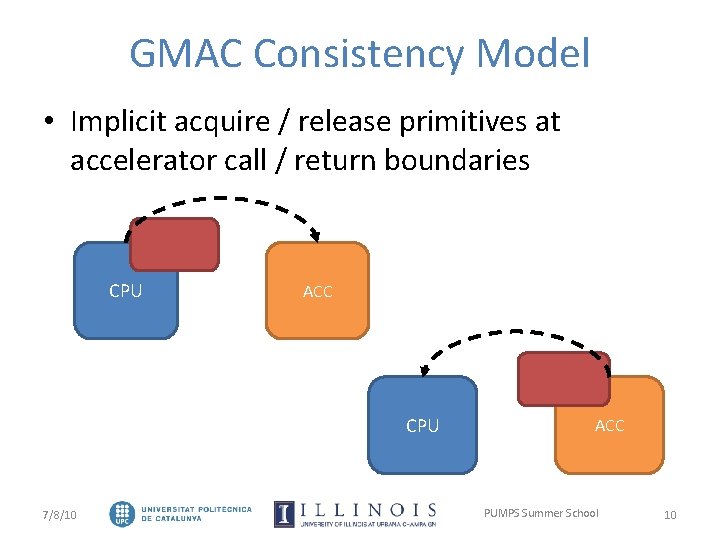

GMAC Consistency Model • Implicit acquire / release primitives at accelerator call / return boundaries CPU ACC CPU 7/8/10 ACC PUMPS Summer School 10

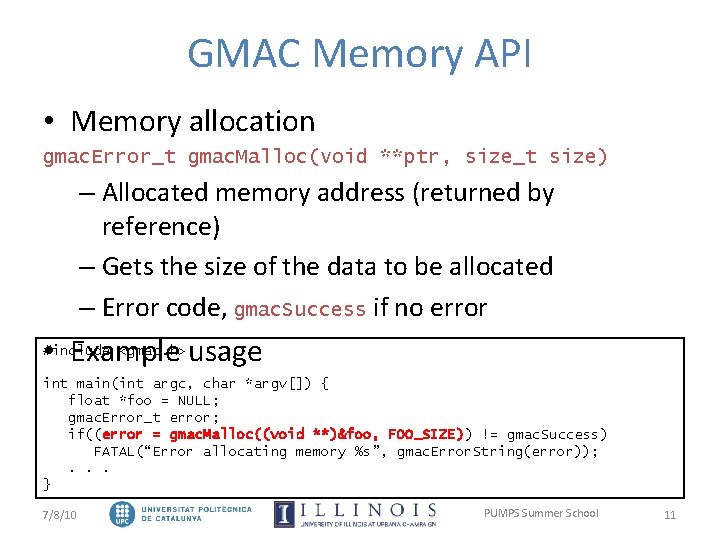

GMAC Memory API • Memory allocation gmac. Error_t gmac. Malloc(void **ptr, size_t size) – Allocated memory address (returned by reference) – Gets the size of the data to be allocated – Error code, gmac. Success if no error • Example usage #include <gmac. h> int main(int argc, char *argv[]) { float *foo = NULL; gmac. Error_t error; if((error = gmac. Malloc((void **)&foo, FOO_SIZE)) != gmac. Success) FATAL(“Error allocating memory %s”, gmac. Error. String(error)); . . . } 7/8/10 PUMPS Summer School 11

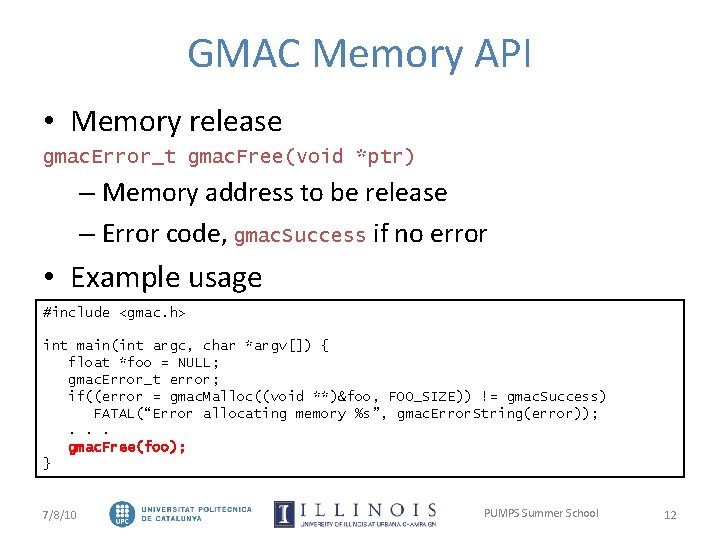

GMAC Memory API • Memory release gmac. Error_t gmac. Free(void *ptr) – Memory address to be release – Error code, gmac. Success if no error • Example usage #include <gmac. h> int main(int argc, char *argv[]) { float *foo = NULL; gmac. Error_t error; if((error = gmac. Malloc((void **)&foo, FOO_SIZE)) != gmac. Success) FATAL(“Error allocating memory %s”, gmac. Error. String(error)); . . . gmac. Free(foo); } 7/8/10 PUMPS Summer School 12

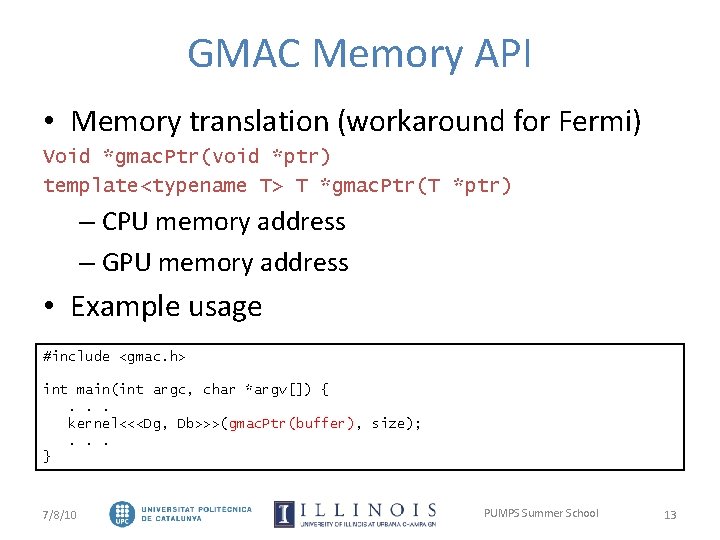

GMAC Memory API • Memory translation (workaround for Fermi) Void *gmac. Ptr(void *ptr) template<typename T> T *gmac. Ptr(T *ptr) – CPU memory address – GPU memory address • Example usage #include <gmac. h> int main(int argc, char *argv[]) {. . . kernel<<<Dg, Db>>>(gmac. Ptr(buffer), size); . . . } 7/8/10 PUMPS Summer School 13

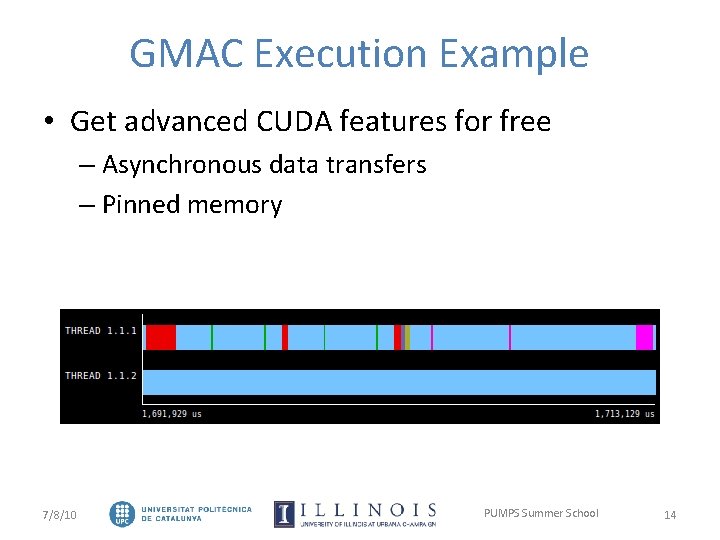

GMAC Execution Example • Get advanced CUDA features for free – Asynchronous data transfers – Pinned memory 7/8/10 PUMPS Summer School 14

Outline • Introduction • GMAC Memory Model – Asymmetric Memory – Global Memory • GMAC Execution Model – Multi-threading – Inter-thread communication • Conclusions 7/8/10 PUMPS Summer School 15

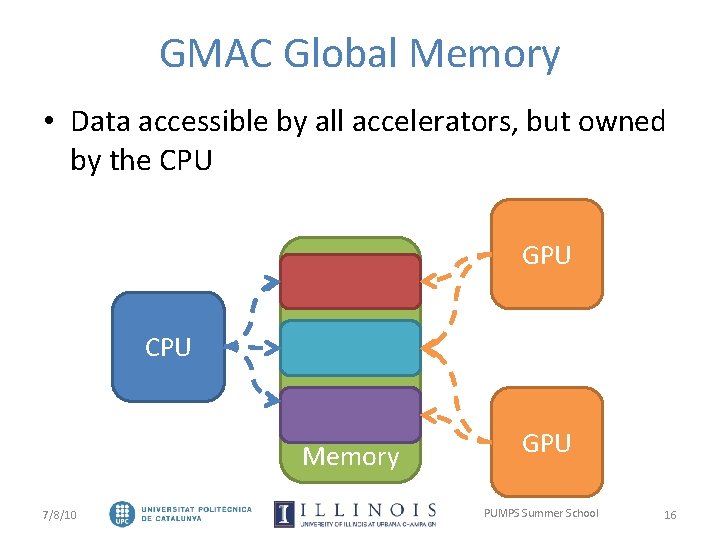

GMAC Global Memory • Data accessible by all accelerators, but owned by the CPU GPU CPU Memory 7/8/10 GPU PUMPS Summer School 16

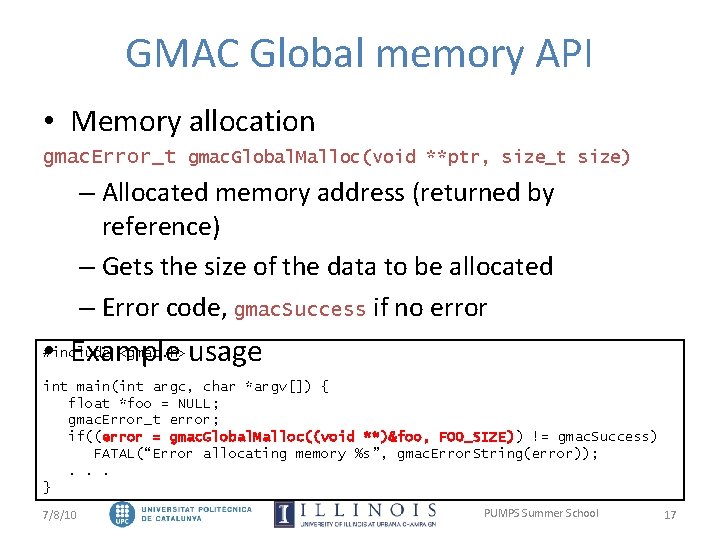

GMAC Global memory API • Memory allocation gmac. Error_t gmac. Global. Malloc(void **ptr, size_t size) – Allocated memory address (returned by reference) – Gets the size of the data to be allocated – Error code, gmac. Success if no error • Example usage #include <gmac. h> int main(int argc, char *argv[]) { float *foo = NULL; gmac. Error_t error; if((error = gmac. Global. Malloc((void **)&foo, FOO_SIZE)) != gmac. Success) FATAL(“Error allocating memory %s”, gmac. Error. String(error)); . . . } 7/8/10 PUMPS Summer School 17

Outline • Introduction • GMAC Memory Model – Asymmetric Memory – Global Memory • GMAC Execution Model – Multi-threading – Inter-thread communication • Conclusions 7/8/10 PUMPS Summer School 18

GMAC and Multi-threading • In the past, one thread one CPU • In GMAC, one thread: – One CPU – One GPU • A thread is running in the GPU or the CPU, but not in both at the same time • Create threads using what you already know – pthread_create(. . . ) 7/8/10 PUMPS Summer School 19

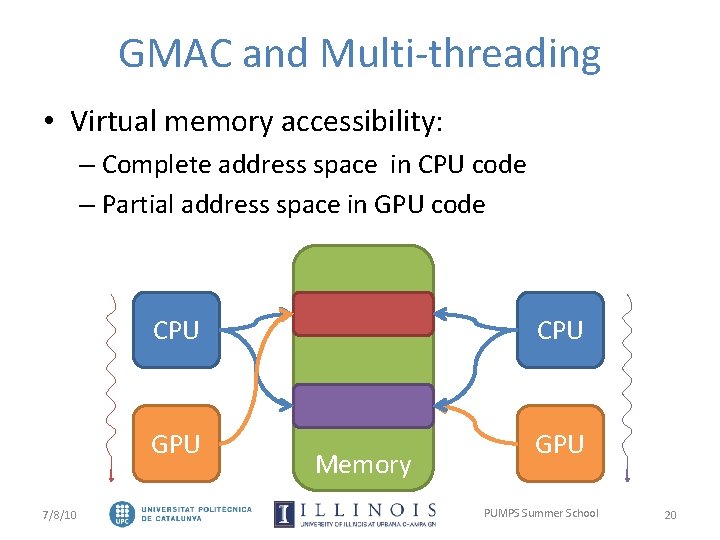

GMAC and Multi-threading • Virtual memory accessibility: – Complete address space in CPU code – Partial address space in GPU code 7/8/10 CPU GPU Memory PUMPS Summer School 20

Outline • Introduction • GMAC Memory Model – Asymmetric Memory – Global Memory • GMAC Execution Model – Multi-threading – Inter-thread communication • Conclusions 7/8/10 PUMPS Summer School 21

GPU Passing and Copying • GPU passing: – Send the thread’s virtual GPU to another thread – Do not move data, move computation • API Calls – Virtual GPU sending gmac. Error_t gmac. Send(thread_id dest) – Virtual GPU receiving gmac. Error_t gmac. Receive() – Virtual GPU copying gmac. Error_t gmac. Copy(thread_id dest) 7/8/10 PUMPS Summer School 22

Outline • Introduction • GMAC Memory Model – Asymmetric Memory – Global Memory • GMAC Execution Model – Multi-threading – Inter-thread communication • Conclusions 7/8/10 PUMPS Summer School 23

Conclusions • Single virtual address space for CPUs and GPUs • Use CUDA advanced features – Automatic overlap data communication and computation – Get access to any GPU from any CPU thread • Get more performance from your application more easily • Go: http: //adsm. googlecode. com 7/8/10 PUMPS Summer School 24

GMAC Global Memory for Accelerators Isaac Gelado PUMPS Summer School - Barcelona

Backup Slides

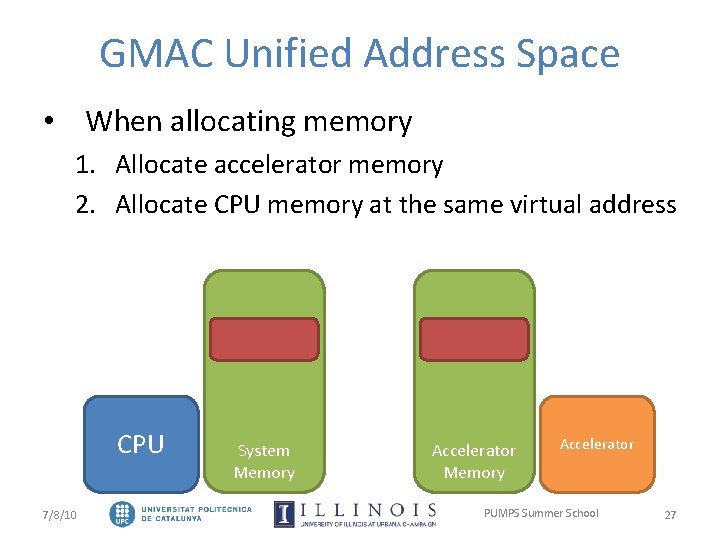

GMAC Unified Address Space • When allocating memory 1. Allocate accelerator memory 2. Allocate CPU memory at the same virtual address CPU 7/8/10 System Memory Accelerator PUMPS Summer School 27

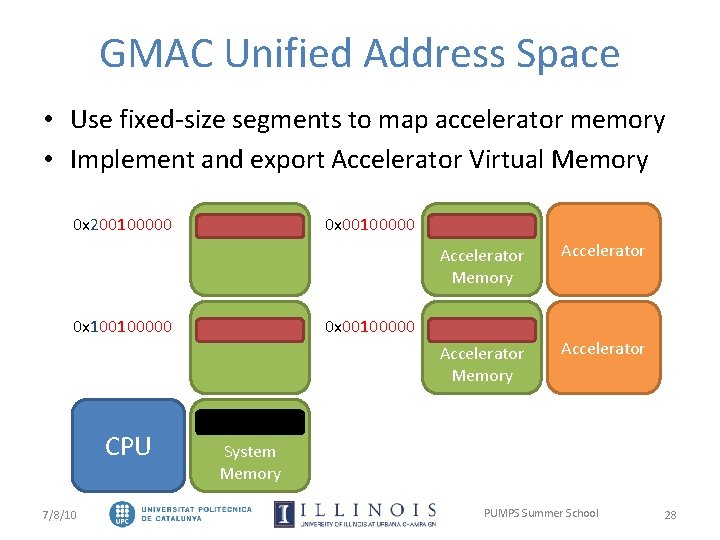

GMAC Unified Address Space • Use fixed-size segments to map accelerator memory • Implement and export Accelerator Virtual Memory 0 x 200100000 0 x 100100000 CPU 7/8/10 Accelerator Memory Accelerator 0 x 00100000 System Memory PUMPS Summer School 28

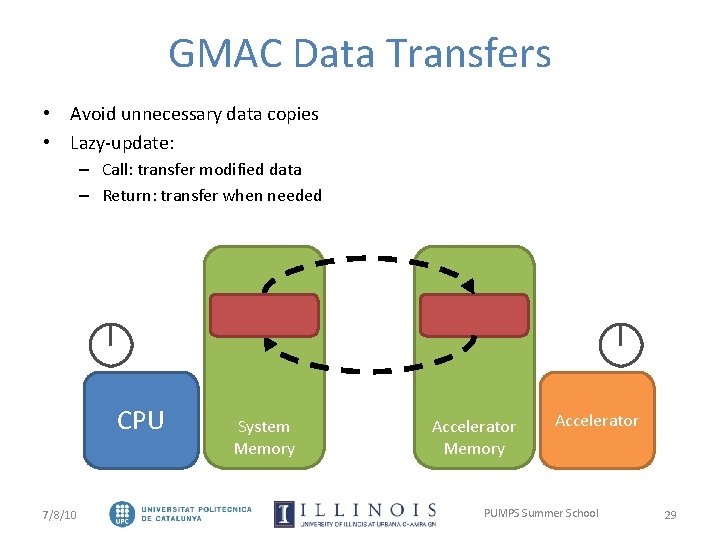

GMAC Data Transfers • Avoid unnecessary data copies • Lazy-update: – Call: transfer modified data – Return: transfer when needed CPU 7/8/10 System Memory Accelerator PUMPS Summer School 29

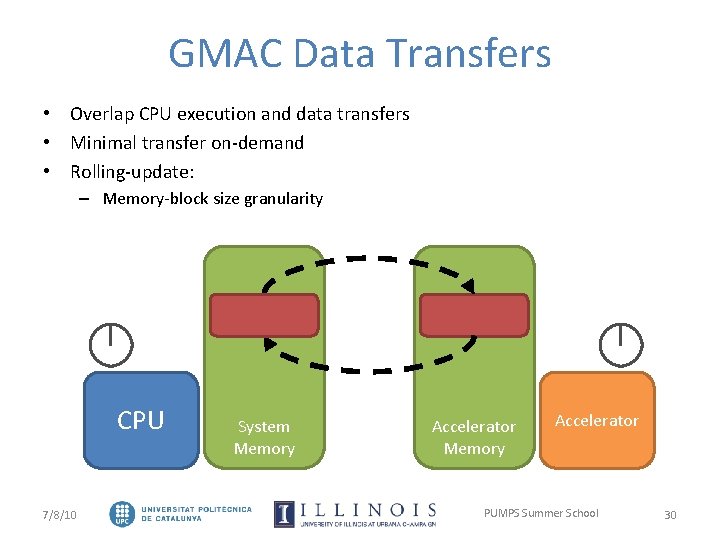

GMAC Data Transfers • Overlap CPU execution and data transfers • Minimal transfer on-demand • Rolling-update: – Memory-block size granularity CPU 7/8/10 System Memory Accelerator PUMPS Summer School 30

GMAC Global Memory for Accelerators Isaac Gelado PUMPS Summer School - Barcelona

- Slides: 30