
FFT on XMT: Case Study of a Bandwidth-Intensive Regular Algorithm on a Highly-Parallel Many Core
James Edwards, Uzi Vishkin
University of Maryland (UMD)

Current state of parallel computing
• The parallel computing community has increasingly shifted its attention to communication avoidance (CA) as a way to address the end of Dennard scaling and the attendant difficulty in scaling down power consumption.
  – The National Academies “Game Over” report (2011)
  – Recent comp. arch. books published by Morgan and Claypool (2014)
  – Focus of many meetings (e.g., session on Wednesday)
• However, there are limits to the performance improvements that can be attained by focusing on reducing data movement.
  – Strength of current parallel architectures: regular algorithms requiring only limited communication
    • Ex.: dense-matrix multiplication
  – Limited speedups for other algorithms on current platforms
• Furthermore, the challenges of communication avoidance have arguably harmed programmers’ productivity (2014 CACM article).

A “what-if” question
• Despite these difficulties, the default mode for parallel programming research is reliance on off-the-shelf hardware.
• But what if alternative machines, or hardware features, are feasible and can offer significant advantages?
• Clearly, such out-of-the-box hardware and the enabling technologies it may require are unlikely ever to be developed before their advantages are sufficiently understood.
• In contrast to work that seeks to avoid data movement, here we examine the problem from an alternate angle:
  – Assuming that it is possible to reduce the energy cost of data movement, is it possible to obtain strong speedups on problems for which such speedups have proven elusive?
• Power consumption of the XMT ICN is ≤ 18% of total for ≥ 16k TCUs, and decreasing

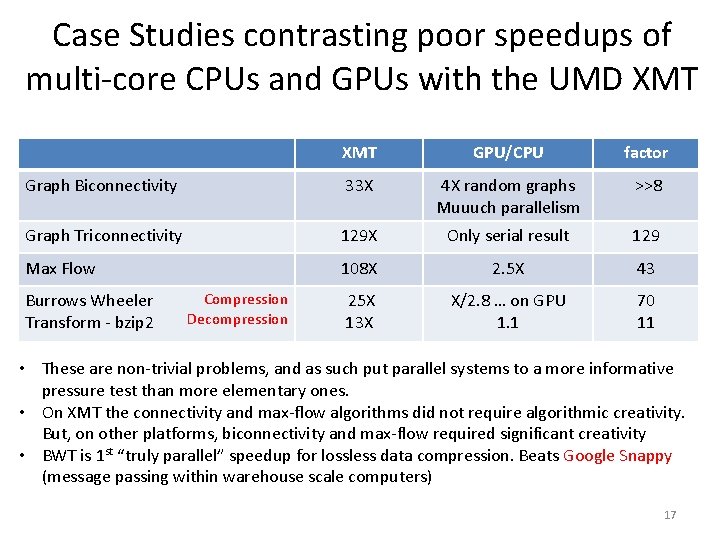

Case study in permissive communication: XMT @ UMD
• The preceding question has been partially answered in the affirmative by prior work on the Explicit Multi-Threading (XMT) general-purpose architecture.
• Goals of XMT:
  – Faster single-task completion time
  – Improved ease-of-programming
  – Efficient support for Parallel Random Access Model (PRAM) programming
• Published speedups of 8-129× vs. GPU/CPU on basic and advanced irregular problems such as graph algorithms & data compression (backup slide)
• This paper: regular but communication-intensive algorithms

Fast Fourier transform (FFT)

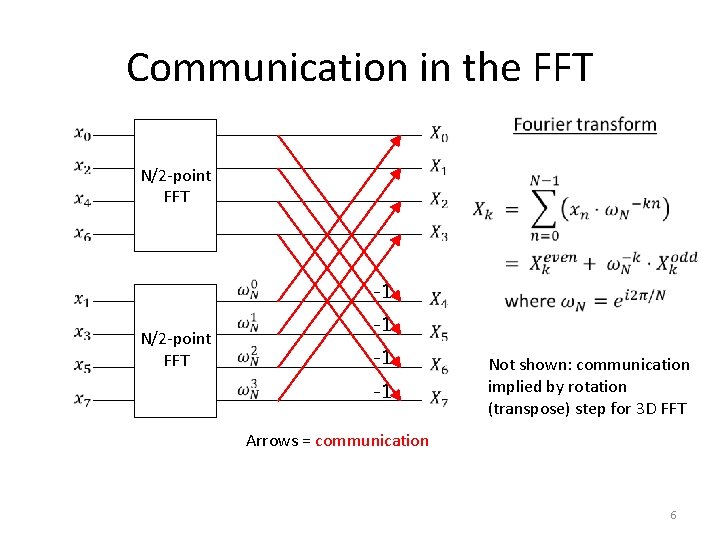

Communication in the FFT
[Figure: butterfly diagram of an FFT built from N/2-point FFTs, with −1 factors on the lower butterfly outputs. Arrows = communication.]
Not shown: communication implied by the rotation (transpose) step of the 3D FFT
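
To make the communication pattern concrete, here is a minimal sketch of an iterative radix-2 FFT in Python. It is a generic textbook formulation and is not taken from the XMT implementation; the function names and the returned list of partner distances are illustrative assumptions. At each stage every element is combined with a partner `half` positions away, which is what the arrows in a butterfly diagram depict, and the `u - t` line is where the −1 factor appears.

```python
import cmath


def bit_reverse_permute(x):
    """Return a copy of x reordered by bit-reversed index (len(x) is a power of two)."""
    n = len(x)
    bits = n.bit_length() - 1
    out = [0j] * n
    for i in range(n):
        r = int(format(i, f"0{bits}b")[::-1], 2) if bits else 0
        out[r] = complex(x[i])
    return out


def fft_radix2(x):
    """Iterative radix-2 decimation-in-time FFT.

    Returns (spectrum, partner_distances), where partner_distances[s] is the
    index distance between the two elements combined by each butterfly in stage s.
    """
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    a = bit_reverse_permute(x)
    partner_distances = []
    half = 1
    while half < n:
        partner_distances.append(half)
        w_step = cmath.exp(-2j * cmath.pi / (2 * half))  # twiddle increment
        for start in range(0, n, 2 * half):
            w = 1 + 0j
            for k in range(half):
                u = a[start + k]
                t = w * a[start + k + half]   # value fetched from the partner
                a[start + k] = u + t
                a[start + k + half] = u - t   # the "-1" edge of the butterfly
                w *= w_step
        half *= 2
    return a, partner_distances


if __name__ == "__main__":
    spectrum, distances = fft_radix2([1, 0, 0, 0, 0, 0, 0, 0])
    print(distances)  # partner distance doubles each stage
```

For an N-point transform there are log2(N) stages, and every stage touches all N elements, so the data volume moved per floating-point operation stays high regardless of how the work is partitioned.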

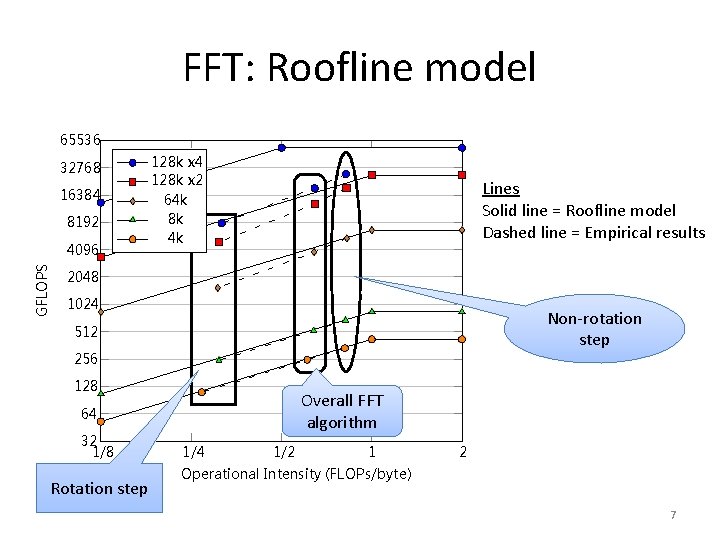

FFT: Roofline model
[Figure: roofline plot of GFLOPS (32 to 65536, log scale) against Operational Intensity in FLOPs/byte (1/8 to 2) for the 4k, 8k, 64k, 128k x 2, and 128k x 4 configurations. Solid line = Roofline model; dashed line = empirical results. Marked regions: rotation step, non-rotation step, overall FFT algorithm.]
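
For readers unfamiliar with the roofline model, the sketch below shows the standard bound it plots: attainable throughput is the smaller of the compute peak and the memory bandwidth multiplied by the operational intensity. The numeric peak and bandwidth values are hypothetical placeholders chosen for illustration; they are not the XMT parameters behind the slide.

```python
def roofline_gflops(peak_gflops, bandwidth_gb_per_s, operational_intensity):
    """Roofline bound: min(compute peak, bandwidth * FLOPs-per-byte)."""
    return min(peak_gflops, bandwidth_gb_per_s * operational_intensity)


def ridge_point(peak_gflops, bandwidth_gb_per_s):
    """Operational intensity at which a kernel stops being bandwidth-bound."""
    return peak_gflops / bandwidth_gb_per_s


if __name__ == "__main__":
    # Hypothetical machine, for illustration only.
    peak, bandwidth = 1000.0, 400.0
    for oi in (0.125, 0.25, 0.5, 1.0, 2.0, 4.0):
        print(oi, roofline_gflops(peak, bandwidth, oi))
```

Kernels whose operational intensity lies to the left of the ridge point are bandwidth-bound, which is why the low-intensity rotation step is the part of the FFT most sensitive to available bandwidth.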

![3D Fast Fourier Transform (FFT) on XMT](http://slidetodoc.com/presentation_image_h2/d2d82da5d38b69ef9f539866cc33f562/image-8.jpg)

3D Fast Fourier Transform (FFT) on XMT [Edwards, Vishkin, in press]
• With enabling technologies, XMT can outperform a much larger supercomputer (Edison) on 3D FFT
• XMT speedups vs. best serial (FFTW):
  – Without enabling technologies (8k TCUs): 31× (0.24 TFLOPS)
  – With enabling technologies (128k TCUs): 2,494× (19.0 TFLOPS)

Comparison of Edison machine (Cray XC30) to XMT

| | XMT (128k x 4) | Edison (Cray XC30) | Factor |
|---|---|---|---|
| # processing elements | 131,072 TCUs | 124,608 cores | |
| # processor groups | 4,096 clusters | 5,192 nodes | |
| Total cache memory | 128 MB | 311,520 MB | 2433 |
| # chips | 1 | 10,384 CPU + 1,298 router | 11682 |
| Total silicon area (process) | 35.4 cm² (14 nm) | 56,177 cm² (22 nm) + 4,072 cm² (40 nm) | |
| Normalized silicon area (22 nm) | 66 cm² | 57,409 cm² | 871 |
| Peak power consumption | 7.0 kW | 2,500 kW | 357 |
| Peak teraFLOPS | 54 | 2,390 | 44 |
| TeraFLOPS for FFT (size) | 19.0 (512³) | 13.6 (1024³) | /1.4 |
| % of peak FLOPS | 35% | 0.57% | /61 |

Note: TCU = Thread Control Unit (lightweight processor)
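
As a sanity check, the Factor column can be recomputed from the other two columns. The short script below does that arithmetic; the dictionary keys are illustrative names, and the inputs are the rounded values shown on the slide. The normalized-area ratio computed from these rounded entries comes out near 870, slightly below the slide's 871, presumably because the slide used unrounded inputs.

```python
xmt = {
    "cache_mb": 128, "chips": 1, "norm_area_cm2": 66,
    "peak_power_kw": 7.0, "peak_tflops": 54, "fft_tflops": 19.0,
}
edison = {
    "cache_mb": 311_520, "chips": 10_384 + 1_298, "norm_area_cm2": 57_409,
    "peak_power_kw": 2_500, "peak_tflops": 2_390, "fft_tflops": 13.6,
}

# Edison / XMT: how many times more resources Edison uses.
resource_factor = {
    key: edison[key] / xmt[key]
    for key in ("cache_mb", "chips", "norm_area_cm2", "peak_power_kw", "peak_tflops")
}

# XMT / Edison: the "/1.4" entry means Edison's FFT rate is lower by this factor.
fft_advantage = xmt["fft_tflops"] / edison["fft_tflops"]

# Fraction of peak actually achieved on the FFT, in percent.
xmt_pct_of_peak = 100 * xmt["fft_tflops"] / xmt["peak_tflops"]
edison_pct_of_peak = 100 * edison["fft_tflops"] / edison["peak_tflops"]

if __name__ == "__main__":
    for key, value in resource_factor.items():
        print(f"{key}: {value:.1f}")
    print(f"FFT advantage: {fft_advantage:.2f}")
    print(f"% of peak: XMT {xmt_pct_of_peak:.1f}, Edison {edison_pct_of_peak:.2f}")
```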

Enabling technologies
• For increased on-chip bandwidth to shared cache:
  – 3D VLSI
  – Microfluidic cooling
• The above technologies enable XMT to scale up to 8× larger than would be possible without them
  – Temperature and power results reported as part of a new software spiral proposal [Intel CATC 2015]
• Extension to off-chip bandwidth to DRAM:
  – Silicon photonics
• While it is expected that the increased bandwidth enabled by such technologies would lead to improved performance, the (high) rate of improvement shows great promise.

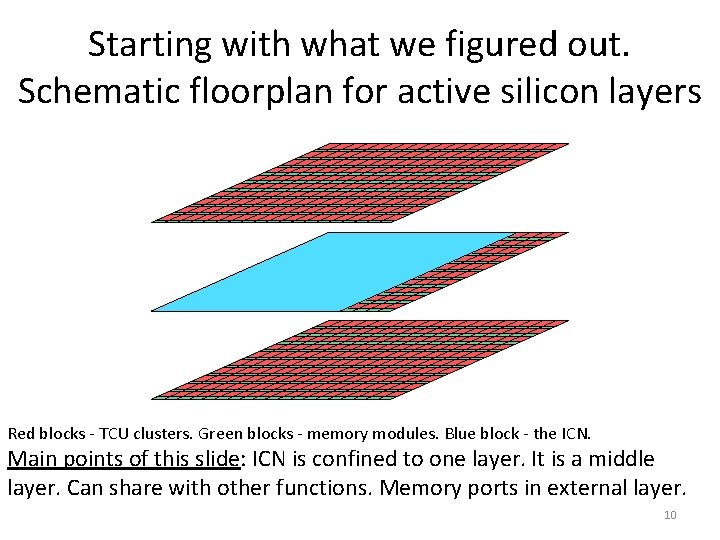

Starting with what we figured out
Schematic floorplan for active silicon layers
• Red blocks: TCU clusters
• Green blocks: memory modules
• Blue block: the ICN
Main points of this slide:
• The ICN is confined to one layer, and it is a middle layer.
• That layer can be shared with other functions.
• Memory ports are in an external layer.

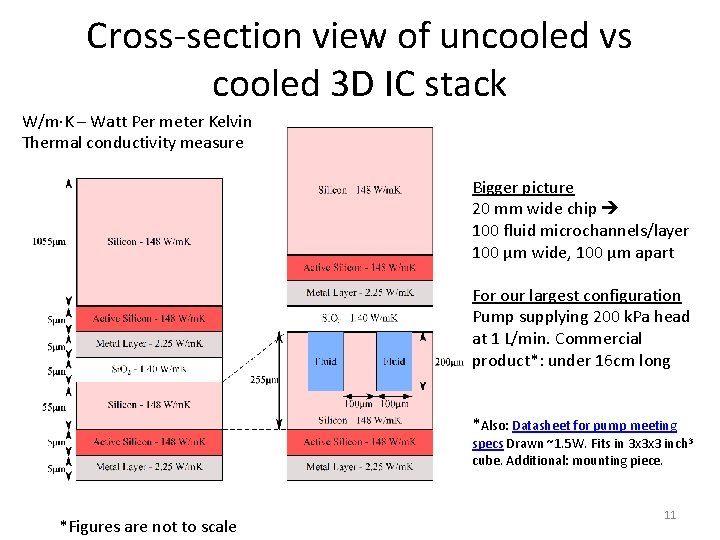

Cross-section view of uncooled vs. cooled 3D IC stack
• W/m∙K: watts per meter-kelvin, a measure of thermal conductivity
Bigger picture
• 20 mm wide chip
• 100 fluid microchannels per layer, each 100 µm wide and 100 µm apart
• For our largest configuration: pump supplying 200 kPa head at 1 L/min
• Commercial product*: under 16 cm long
  *Also: datasheet for a pump meeting the specs. Draws ~1.5 W. Fits in a 3 × 3 × 3 inch cube. Additional: mounting piece.
*Figures are not to scale

Silicon (Si) photonics
• Promise (since we do not have results): can scale XMT to 128k TCUs with high off-chip bandwidth
• Potential for even greater scaling with multi-chip design
• But, need to sort out many approaches & tradeoffs to silicon photonics
  – How “monolithic” can integration be?
  – To what extent can electronics and photonics
    1. share the same wafer, improving density and lowering parasitic effects, versus
    2. be fabricated on different platforms that favor each better, then brought together, e.g., by short wire bonds and flip chip
  – How can temperature fluctuation be managed?
  – How to scale reliably to 100K high-bandwidth devices on chip using automatic fabrication?
• Various approaches offer different tradeoffs to the above questions.
• Will microfluidic cooling (and 3D-VLSI) provide the key for the scale we seek? Not only for the above issues. Also: greater flexibility with the remaining pieces, since intermediate steps may be power inefficient

Conclusion
• XMT shows that strong speedups are possible for problems that have proven difficult for current platforms.
• Enabling technologies allow the advantage of XMT to be extended to larger scales and more types of problems.
• The results presented here help to resolve the chicken-and-egg problem posed by enabling technologies:
  – Development of enabling technologies will not advance without evidence of their benefit, while such evidence apparently cannot be obtained until these technologies have already been developed.
• Benefit is not limited to XMT
  – Main difference of XMT from other platforms is the ICN
  – Enabling technologies can improve switches used by existing platforms
• Final point for consideration: Has the (exclusive) focus on communication avoidance been a strategic mistake?
  – With the focus on CA, vendors will produce HW that requires CA
  – In the serial era, technology obstacles to the programming model were put on a roadmap (e.g., ITRS) and addressed. CA took the steam away from this successful model.

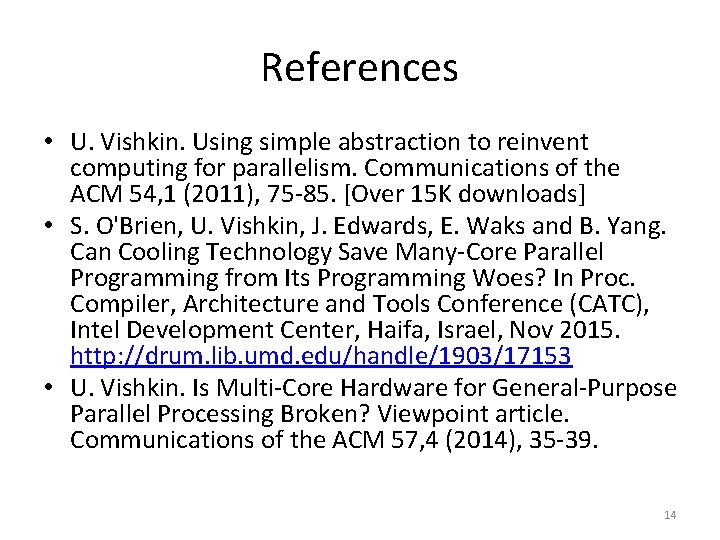

References
• U. Vishkin. Using simple abstraction to reinvent computing for parallelism. Communications of the ACM 54, 1 (2011), 75-85. [Over 15K downloads]
• S. O'Brien, U. Vishkin, J. Edwards, E. Waks and B. Yang. Can Cooling Technology Save Many-Core Parallel Programming from Its Programming Woes? In Proc. Compiler, Architecture and Tools Conference (CATC), Intel Development Center, Haifa, Israel, Nov 2015. http://drum.lib.umd.edu/handle/1903/17153
• U. Vishkin. Is Multi-Core Hardware for General-Purpose Parallel Processing Broken? Viewpoint article. Communications of the ACM 57, 4 (2014), 35-39.

BACKUP SLIDES
Source for most slides: CATC 2015 presentation (available at http://www.umiacs.umd.edu/users/vishkin/XMT/index.shtml)

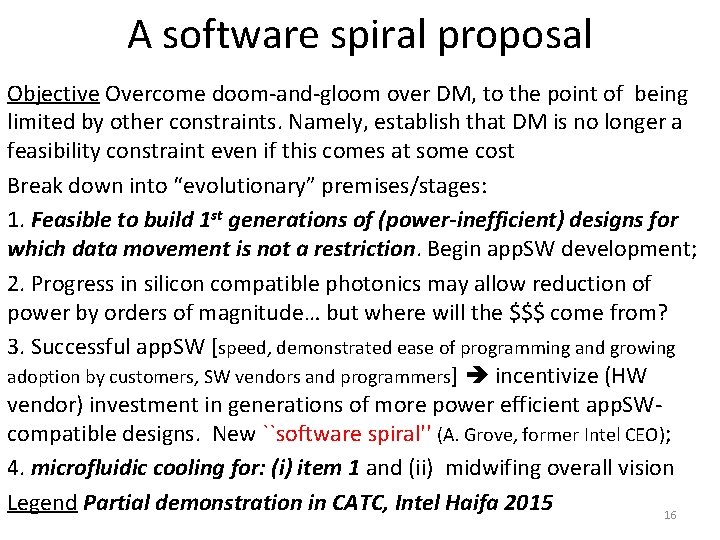

A software spiral proposal
Objective: Overcome doom-and-gloom over data movement (DM), to the point of being limited by other constraints. Namely, establish that DM is no longer a feasibility constraint, even if this comes at some cost.
Break down into “evolutionary” premises/stages:
1. It is feasible to build first generations of (power-inefficient) designs for which data movement is not a restriction. Begin app SW development;
2. Progress in silicon-compatible photonics may allow reduction of power by orders of magnitude… but where will the $$$ come from?
3. Successful app SW [speed, demonstrated ease of programming, and growing adoption by customers, SW vendors and programmers] incentivizes (HW vendor) investment in generations of more power-efficient, app-SW-compatible designs. New “software spiral” (A. Grove, former Intel CEO);
4. Microfluidic cooling for: (i) item 1 and (ii) midwifing the overall vision
Legend: Partial demonstration in CATC, Intel Haifa 2015

Case Studies contrasting poor speedups of multi-core CPUs and GPUs with the UMD XMT

| Problem | XMT | GPU/CPU | Factor |
|---|---|---|---|
| Graph Biconnectivity | 33× | 4× (random graphs; muuuch parallelism) | >>8 |
| Graph Triconnectivity | 129× | Only serial result | 129 |
| Max Flow | 108× | 2.5× | 43 |
| Burrows-Wheeler Transform (bzip2): Compression | 25× | X/2.8 … on GPU | 70 |
| Burrows-Wheeler Transform (bzip2): Decompression | 13× | 1.1 | 11 |

• These are non-trivial problems, and as such put parallel systems to a more informative pressure test than more elementary ones.
• On XMT, the connectivity and max-flow algorithms did not require algorithmic creativity. But on other platforms, biconnectivity and max-flow required significant creativity.
• BWT is the first “truly parallel” speedup for lossless data compression. Beats Google Snappy (message passing within warehouse-scale computers)

More applications
• The fact that at issue is general-purpose computing and programmer’s productivity does not mean that this is less relevant for applications than other approaches
• Applications include:
  – Bio, precision medicine
  – Machine learning, big data
  – SW&HW formal verification
  – Numerical simulations to study instabilities (in liquids, gases and plasmas). Perhaps could turn around the fortune of the LLNL ICF $5B fusion facility
  – Quantum effects defy locality
  – Scaling graph algorithms
  – Large FFT (noted before)
• DOE is on a mission to “reinvent physics” for communication avoidance. Instead, match computing to classic physics.

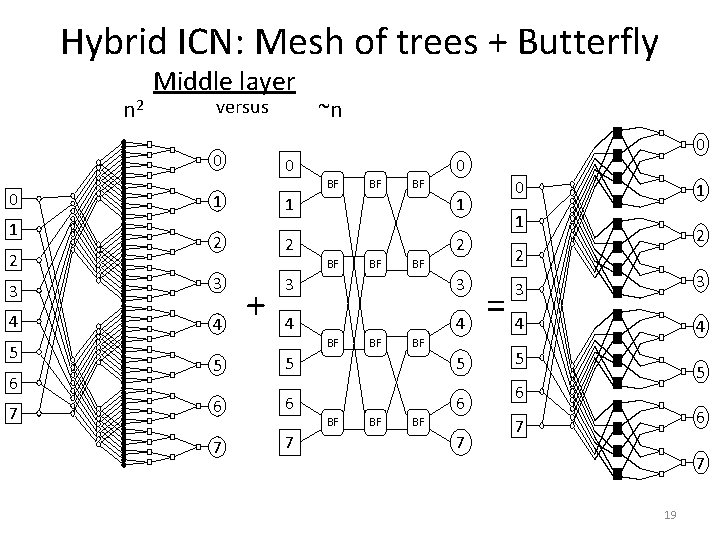

Hybrid ICN: Mesh of trees + Butterfly
[Figure: 8-port example (ports 0-7) of a mesh-of-trees network combined with butterfly (BF) blocks. The labels n² and ~n annotate the middle layer in the two designs being compared.]

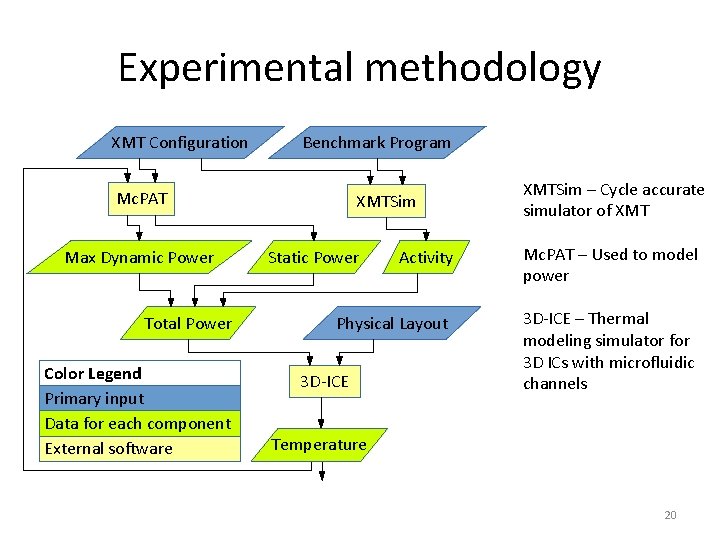

Experimental methodology
[Figure: tool-flow diagram with boxes for XMT Configuration, Benchmark Program, XMTSim, Activity, McPAT, Max Dynamic Power, Static Power, Total Power, Physical Layout, 3D-ICE, and Temperature. Color legend: primary input, data for each component, external software.]
• XMTSim: cycle-accurate simulator of XMT
• McPAT: used to model power
• 3D-ICE: thermal modeling simulator for 3D ICs with microfluidic channels

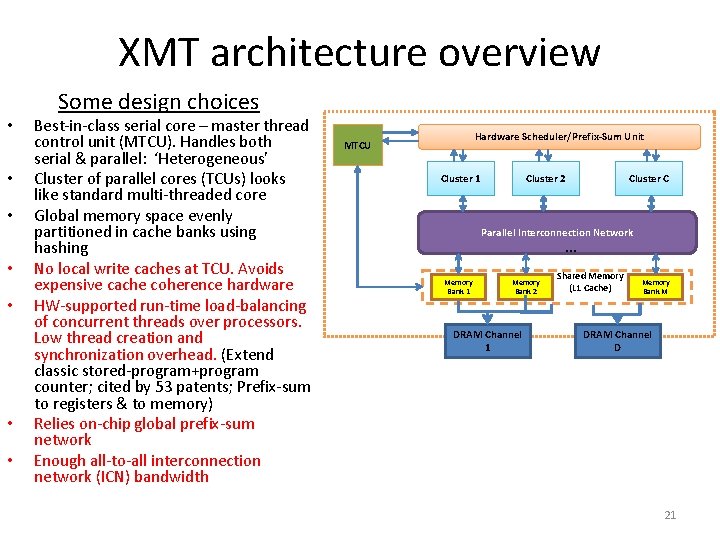

XMT architecture overview
Some design choices:
• Best-in-class serial core: master thread control unit (MTCU). Handles both serial & parallel: ‘heterogeneous’
• Cluster of parallel cores (TCUs) looks like a standard multi-threaded core
• Global memory space evenly partitioned in cache banks using hashing
• No local write caches at TCUs. Avoids expensive cache coherence hardware
• HW-supported run-time load-balancing of concurrent threads over processors. Low thread creation and synchronization overhead. (Extends classic stored-program + program counter; cited by 53 patents; prefix-sum to registers & to memory)
• Relies on an on-chip global prefix-sum network
• Enough all-to-all interconnection network (ICN) bandwidth
[Block diagram: Hardware Scheduler/Prefix-Sum Unit and MTCU; Clusters 1 … C; Parallel Interconnection Network; Shared Memory (L1 Cache) in Memory Banks 1 … M; DRAM Channels 1 … D.]
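
The role of prefix-sum in low-overhead coordination can be illustrated with array compaction. The sketch below is a software emulation only: it assumes that prefix-sum behaves like an atomic fetch-and-add on a shared base, and it stands in for the hardware with a lock. The class `PrefixSumBase` and the function `compact_nonzero` are hypothetical names introduced here, not part of any XMT toolchain.

```python
import threading


class PrefixSumBase:
    """Software stand-in for a prefix-sum base (assumed fetch-and-add semantics)."""

    def __init__(self, value=0):
        self._value = value
        self._lock = threading.Lock()

    def ps(self, increment):
        """Atomically add increment to the base and return the previous value."""
        with self._lock:
            old = self._value
            self._value += increment
            return old

    @property
    def value(self):
        return self._value


def compact_nonzero(values):
    """Copy the nonzero entries of values into a dense list, one virtual thread per entry."""
    base = PrefixSumBase(0)
    out = [None] * len(values)

    def virtual_thread(i):
        if values[i] != 0:
            slot = base.ps(1)      # each thread receives a unique output slot
            out[slot] = values[i]

    threads = [threading.Thread(target=virtual_thread, args=(i,))
               for i in range(len(values))]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return out[:base.value]


if __name__ == "__main__":
    print(sorted(compact_nonzero([0, 5, 0, 7, 9, 0])))
```

The output order depends on thread scheduling, so only the set of compacted values is deterministic. The point of the sketch is that threads need no other synchronization: a single prefix-sum call per thread is enough to assign distinct slots.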

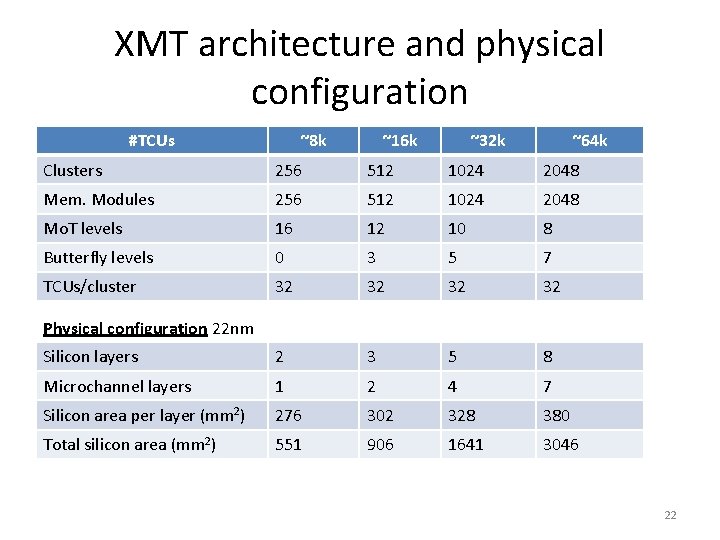

XMT architecture and physical configuration

| #TCUs | ~8k | ~16k | ~32k | ~64k |
|---|---|---|---|---|
| Clusters | 256 | 512 | 1024 | 2048 |
| Mem. modules | 256 | 512 | 1024 | 2048 |
| MoT levels | 16 | 12 | 10 | 8 |
| Butterfly levels | 0 | 3 | 5 | 7 |
| TCUs/cluster | 32 | 32 | 32 | 32 |
| Silicon layers | 2 | 3 | 5 | 8 |
| Microchannel layers | 1 | 2 | 4 | 7 |
| Silicon area per layer (mm²) | 276 | 302 | 328 | 380 |
| Total silicon area (mm²) | 551 | 906 | 1641 | 3046 |

Physical configuration: 22 nm

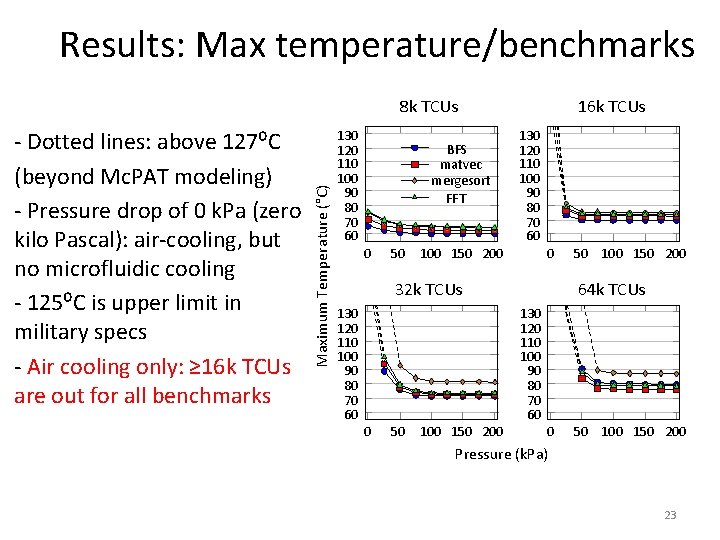

Results: Max temperature/benchmarks
- Dotted lines: above 127°C (beyond McPAT modeling)
- Pressure drop of 0 kPa (zero kilopascals): air cooling, but no microfluidic cooling
- 125°C is the upper limit in military specs
- Air cooling only: ≥ 16k TCUs are out for all benchmarks
[Figure: four panels (8k, 16k, 32k, 64k TCUs) plotting Maximum Temperature (°C), 60 to 130, against Pressure (kPa), 0 to 200, for the BFS, matvec, mergesort, and FFT benchmarks.]

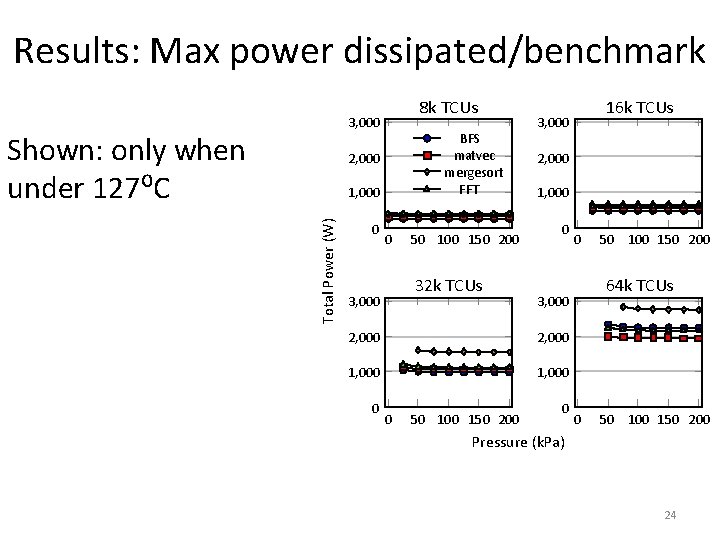

Results: Max power dissipated/benchmark
Shown: only when under 127°C
[Figure: four panels (8k, 16k, 32k, 64k TCUs) plotting Total Power (W), 0 to 3,000, against Pressure (kPa), 0 to 200, for the BFS, matvec, mergesort, and FFT benchmarks.]

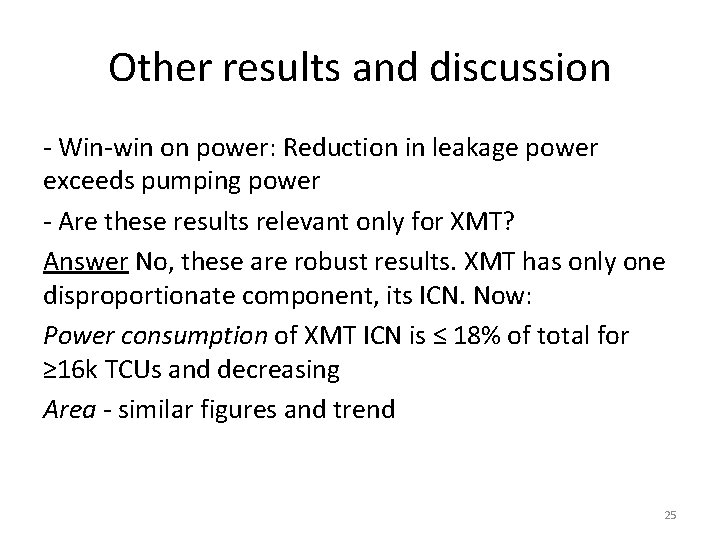

Other results and discussion
- Win-win on power: reduction in leakage power exceeds pumping power
- Are these results relevant only for XMT? Answer: No, these are robust results. XMT has only one disproportionate component, its ICN. Now: power consumption of the XMT ICN is ≤ 18% of total for ≥ 16k TCUs, and decreasing. Area: similar figures and trend

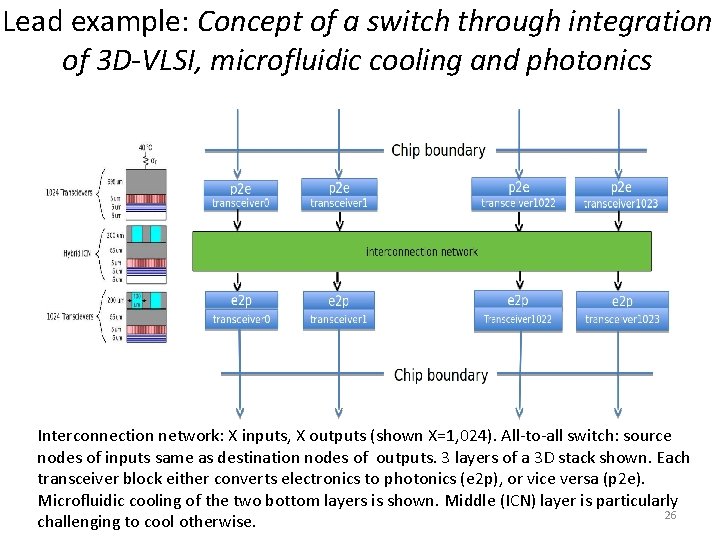

Lead example: Concept of a switch through integration of 3D-VLSI, microfluidic cooling and photonics
• Interconnection network: X inputs, X outputs (shown X = 1,024).
• All-to-all switch: source nodes of inputs are the same as destination nodes of outputs.
• 3 layers of a 3D stack shown. Each transceiver block either converts electronics to photonics (e2p), or vice versa (p2e).
• Microfluidic cooling of the two bottom layers is shown. The middle (ICN) layer is particularly challenging to cool otherwise.

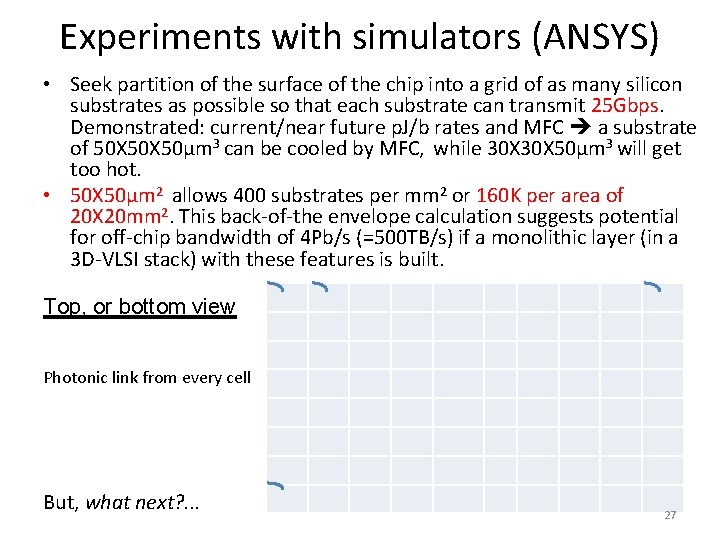

Experiments with simulators (ANSYS)
• Seek a partition of the surface of the chip into a grid of as many silicon substrates as possible, so that each substrate can transmit 25 Gbps. Demonstrated: with current/near-future pJ/b rates and microfluidic cooling (MFC), a substrate of 50 × 50 µm³ can be cooled, while 30 × 50 µm³ will get too hot.
• 50 × 50 µm² allows 400 substrates per mm², or 160K per area of 20 × 20 mm². This back-of-the-envelope calculation suggests potential for off-chip bandwidth of 4 Pb/s (= 500 TB/s) if a monolithic layer (in a 3D-VLSI stack) with these features is built.
[Figure: top (or bottom) view; photonic link from every cell.]
But, what next? ...
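
The back-of-the-envelope calculation on this slide can be written out step by step. The sketch below uses only the figures stated on the slide (50 µm substrate side, 20 mm chip side, 25 Gb/s per substrate); the function and variable names are illustrative.

```python
def substrates_per_mm2(side_um):
    """Number of square substrates of the given side (in µm) that tile 1 mm²."""
    per_side = 1000 // side_um          # 1 mm = 1000 µm
    return per_side * per_side


def chip_bandwidth_gbps(chip_side_mm, substrate_side_um, gbps_per_substrate):
    """Aggregate bandwidth if every substrate on the chip surface carries one link."""
    count = substrates_per_mm2(substrate_side_um) * chip_side_mm * chip_side_mm
    return count, count * gbps_per_substrate


if __name__ == "__main__":
    count, gbps = chip_bandwidth_gbps(chip_side_mm=20, substrate_side_um=50,
                                      gbps_per_substrate=25)
    petabits_per_s = gbps / 1_000_000       # 1 Pb/s = 10^6 Gb/s
    terabytes_per_s = gbps / 8 / 1_000      # 8 bits per byte, 1 TB/s = 10^3 GB/s
    print(count, petabits_per_s, terabytes_per_s)
```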

Some comments & comparison(?)
• For a 64b (= 8B) architecture and 3 GHz processors, around 20K words (of 8B each) can be transmitted per clock.
• Different objectives (e.g., packet sizes, longer distance), but it appears that a top-of-the-line 130 Tb/s Mellanox switch would allow transmission of around 650 words per clock. The larger substrate volume option also enables replacing a single 25 Gb/s structure by several (e.g., three 8.3 Gb/s) structures, which is likely to be cheaper, as these structures are simpler.
• Lower adoption bar for a switch: “equal exchange” of current component. However, lower scaling demands for many-cores…
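
The words-per-clock figures follow from dividing an aggregate bit rate by the clock rate and the word size. The sketch below reproduces that arithmetic for the 4 Pb/s estimate and the 130 Tb/s switch quoted on the slides; the helper name `words_per_clock` is an illustrative assumption. The results are order-of-magnitude checks, and the slide rounds them to "around 20K" and "around 650".

```python
def words_per_clock(bits_per_second, clock_hz, word_bits=64):
    """Words of word_bits each that can cross the interface in one clock cycle."""
    return bits_per_second / clock_hz / word_bits


if __name__ == "__main__":
    clock_hz = 3e9                                   # 3 GHz processors
    photonic_layer = words_per_clock(4e15, clock_hz)  # 4 Pb/s estimate
    electronic_switch = words_per_clock(130e12, clock_hz)  # 130 Tb/s switch
    print(round(photonic_layer), round(electronic_switch))
```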
