
Eye-Based Interaction in Graphical Systems: Theory & Practice Part II Eye Tracking Systems

E: The Eye Tracker • Two broad applications of eye movement monitoring techniques: • measuring position of eye relative to the head • measuring orientation of eye in space, or the “point of regard” (POR)—used to identify fixated elements in a visual scene • Arguably, the most widely used apparatus for measuring the POR is the video-based corneal reflection eye tracker

E.1: Brief Survey of Eye Tracking Techniques • Four broad categories of eye movement methodologies: • electro-oculography (EOG) • scleral contact lens/search coil • photo-oculography (POG) or video-oculography (VOG) • video-based combined pupil and corneal reflection

E.1: Brief Survey of Eye Tracking Techniques (cont’d) • First method for objective eye movement measurements using corneal reflection reported in 1901 • Techniques using contact lenses to improve accuracy developed in 1950s (invasive) • Remote (non-invasive) trackers rely on visible features of the eye (e.g., pupil) • Fast image processing techniques have facilitated real-time video-based systems

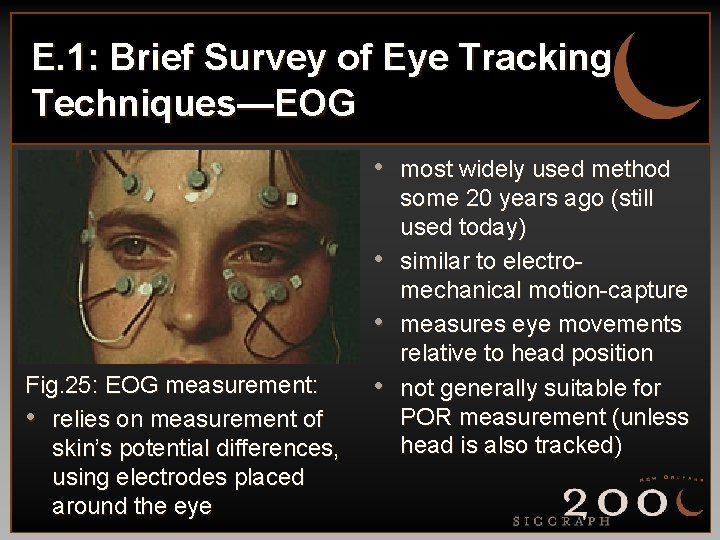

E.1: Brief Survey of Eye Tracking Techniques—EOG Fig. 25: EOG measurement • relies on measurement of skin’s potential differences, using electrodes placed around the eye • most widely used method some 20 years ago (still used today) • similar to electromechanical motion-capture • measures eye movements relative to head position • not generally suitable for POR measurement (unless head is also tracked)

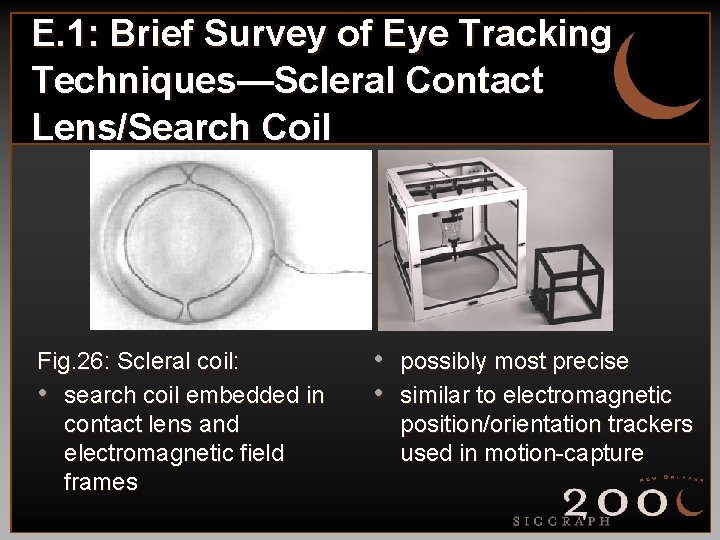

E.1: Brief Survey of Eye Tracking Techniques—Scleral Contact Lens/Search Coil Fig. 26: Scleral coil • search coil embedded in contact lens and electromagnetic field frames • possibly most precise • similar to electromagnetic position/orientation trackers used in motion-capture

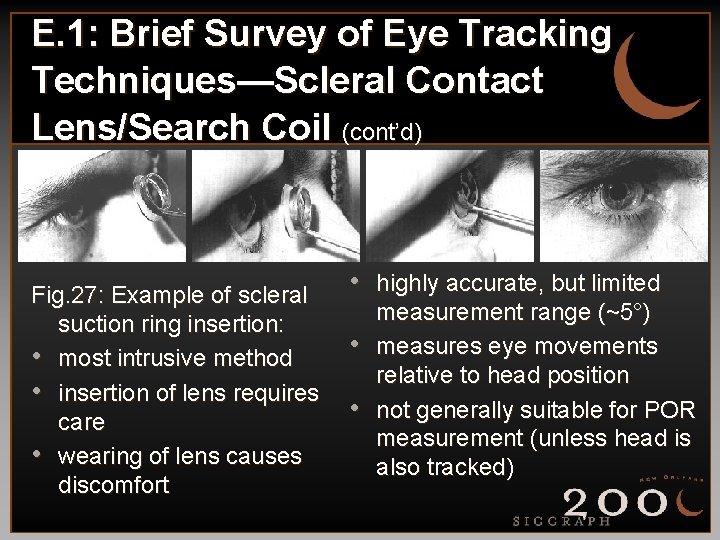

E.1: Brief Survey of Eye Tracking Techniques—Scleral Contact Lens/Search Coil (cont’d) Fig. 27: Example of scleral suction ring insertion • most intrusive method • insertion of lens requires care • wearing of lens causes discomfort • highly accurate, but limited measurement range (~5°) • measures eye movements relative to head position • not generally suitable for POR measurement (unless head is also tracked)

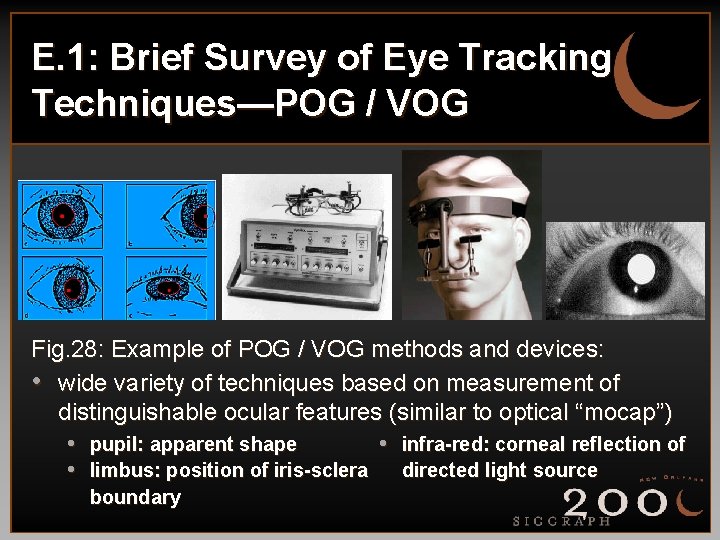

E.1: Brief Survey of Eye Tracking Techniques—POG / VOG Fig. 28: Example of POG / VOG methods and devices • wide variety of techniques based on measurement of distinguishable ocular features (similar to optical “mocap”) • pupil: apparent shape • limbus: position of iris-sclera boundary • infra-red: corneal reflection of directed light source

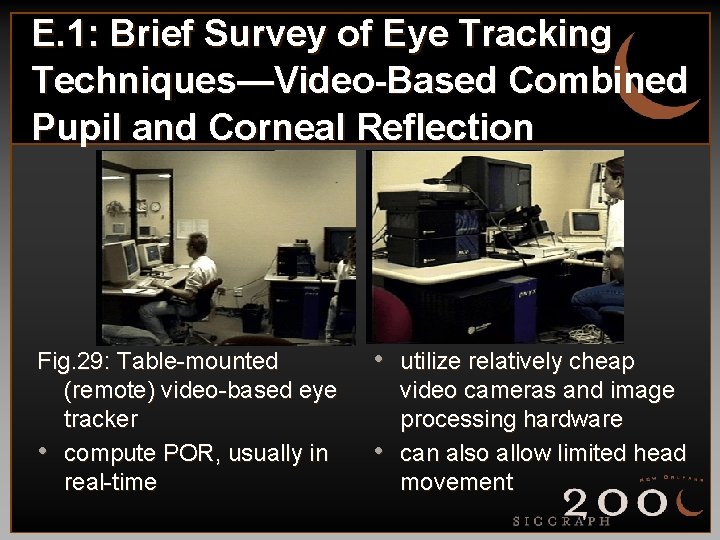

E.1: Brief Survey of Eye Tracking Techniques—Video-Based Combined Pupil and Corneal Reflection Fig. 29: Table-mounted (remote) video-based eye tracker • compute POR, usually in real-time • utilize relatively cheap video cameras and image processing hardware • can also allow limited head movement

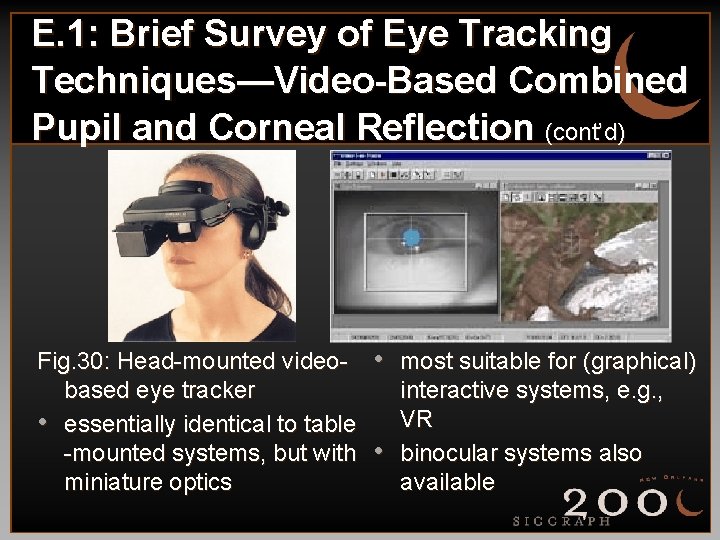

E.1: Brief Survey of Eye Tracking Techniques—Video-Based Combined Pupil and Corneal Reflection (cont’d) Fig. 30: Head-mounted video-based eye tracker • essentially identical to table-mounted systems, but with miniature optics • most suitable for (graphical) interactive systems, e.g., VR • binocular systems also available

E.1: Brief Survey of Eye Tracking Techniques—Corneal Reflection • Two points of reference on the eye are needed to separate eye movements from head movements, e.g., • pupil center • corneal reflection of nearby, directed light source (IR) • Positional difference between pupil center and corneal reflection changes with eye rotation, but remains relatively constant with minor head movements

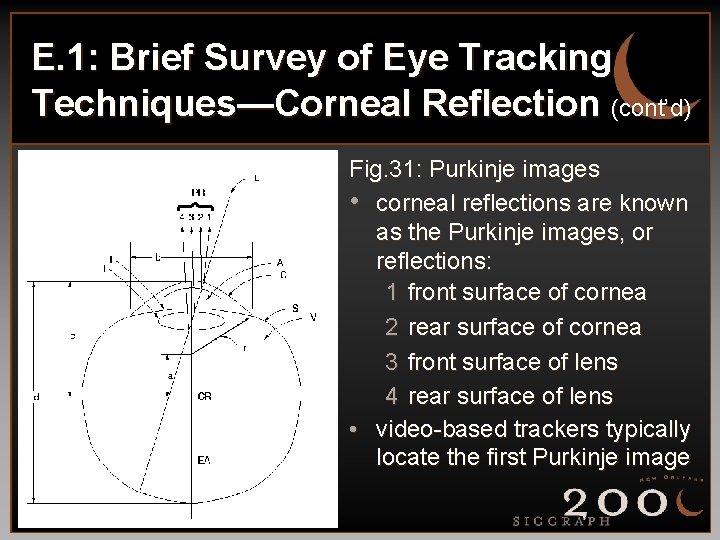

E.1: Brief Survey of Eye Tracking Techniques—Corneal Reflection (cont’d) Fig. 31: Purkinje images • corneal reflections are known as the Purkinje images, or reflections: (1) front surface of cornea; (2) rear surface of cornea; (3) front surface of lens; (4) rear surface of lens • video-based trackers typically locate the first Purkinje image

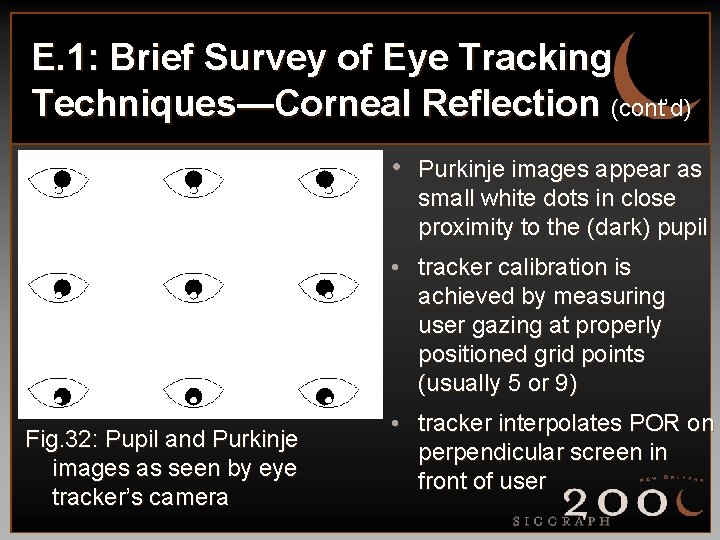

E.1: Brief Survey of Eye Tracking Techniques—Corneal Reflection (cont’d) Fig. 32: Pupil and Purkinje images as seen by eye tracker’s camera • Purkinje images appear as small white dots in close proximity to the (dark) pupil • tracker calibration is achieved by measuring user gazing at properly positioned grid points (usually 5 or 9) • tracker interpolates POR on perpendicular screen in front of user

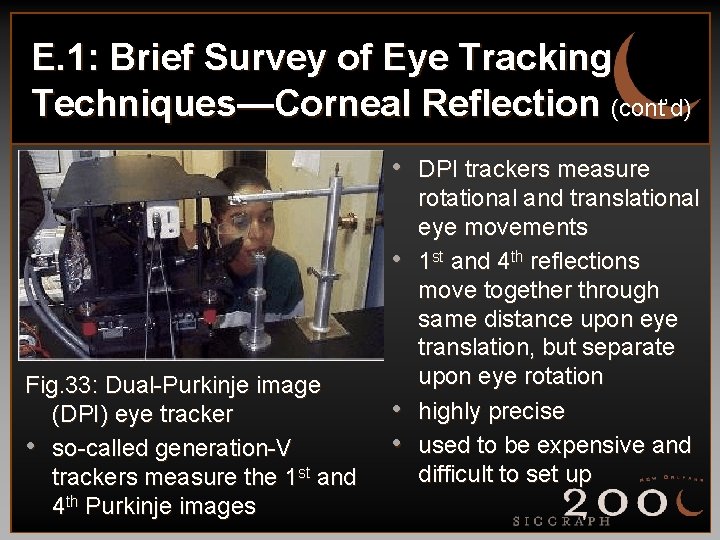

E.1: Brief Survey of Eye Tracking Techniques—Corneal Reflection (cont’d) Fig. 33: Dual-Purkinje image (DPI) eye tracker • so-called generation-V trackers measure the 1st and 4th Purkinje images • DPI trackers measure rotational and translational eye movements • 1st and 4th reflections move together through same distance upon eye translation, but separate upon eye rotation • highly precise • used to be expensive and difficult to set up

F: Integration Issues and Requirements • Integration of eye tracker into graphics system chiefly depends on: • delivery of proper graphics video stream to tracker • subsequent reception of tracker’s 2D gaze data • Gaze data (x- and y-coordinates) are typically either stored by tracker or sent to graphics host via serial cable • Discussion focuses on video-based eye tracker

F: Integration Issues and Requirements (cont’d) • Video-based tracker’s main advantages over other systems: • relatively non-invasive • fairly accurate (to about 1° over a 30° field of view) • for the most part, not difficult to integrate • Main limitation: sampling frequency, typically limited to video frame rate, 60 Hz

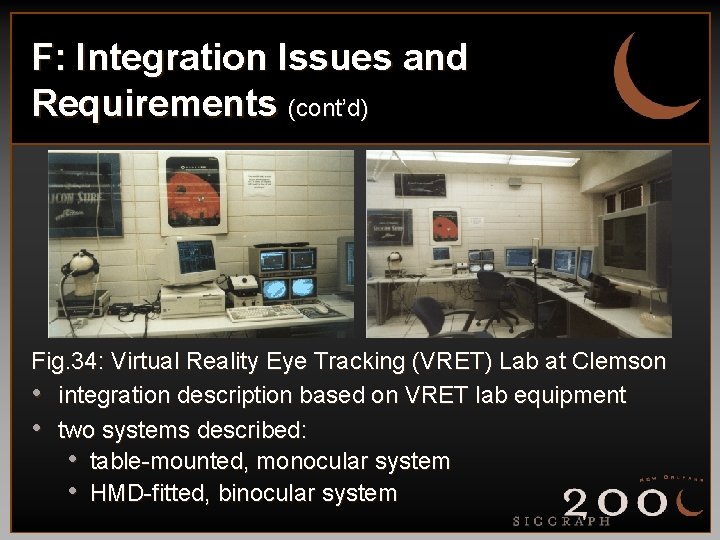

F: Integration Issues and Requirements (cont’d) Fig. 34: Virtual Reality Eye Tracking (VRET) Lab at Clemson • integration description based on VRET lab equipment • two systems described: • table-mounted, monocular system • HMD-fitted, binocular system

F: Integration Issues and Requirements—VRET lab equipment • SGI Onyx2® InfiniteReality™ graphics host • dual-rack, dual-pipe, 8 MIPS® R10000™ CPUs • 3 GB RAM, 0.5 GB texture memory • ISCAN eye tracker • table-mounted pan/tilt camera monocular unit • HMD-fitted binocular unit • Virtual Research V8 HMD • Ascension 6 Degree-Of-Freedom (DOF) Flock Of Birds (FOB) d.c. electromagnetic head tracker

F: Integration Issues and Requirements—preliminaries • Primary requirements: • knowledge of video format required by tracker (e.g., NTSC, VGA) • knowledge of data format returned by tracker (e.g., byte order, codes) • Secondary requirements (tracker capabilities): • fine-grained cursor control and readout? • transmission of tracker’s operating mode along with gaze data?

F: Integration Issues and Requirements—objectives • Scene alignment: • required for calibration, display, and data mapping • use tracker’s fine cursor to measure graphics display dimensions; it is crucial that graphically displayed calibration points are aligned with those displayed by eye tracker • Host/tracker synchronization: • required for generation of proper graphics display, i.e., calibration or stimulus • use tracker’s operating mode data

F.1: System Installation • Primary wiring considerations: • video cables: imperative that graphics host generate video signal in format expected by eye tracker • example problem: graphics host generates VGA signal (e.g., as required by HMD), eye tracker expects NTSC • serial line: comparatively simple; serial driver typically facilitated by data specifications provided by eye tracker vendor

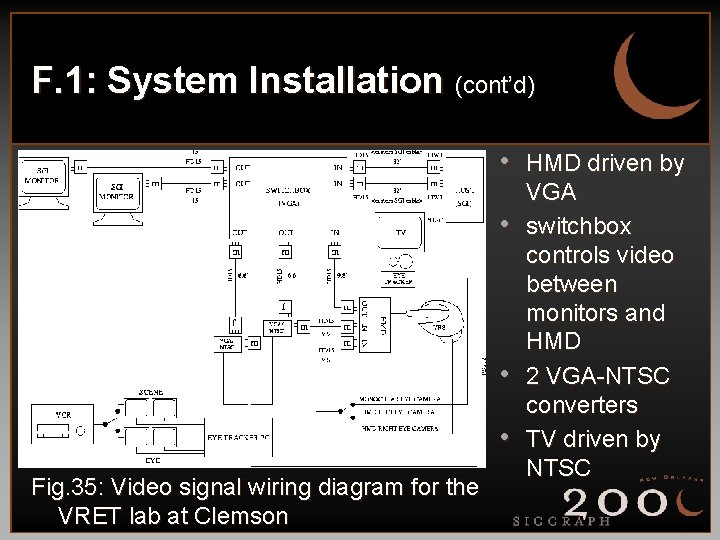

F.1: System Installation (cont’d) Fig. 35: Video signal wiring diagram for the VRET lab at Clemson • HMD driven by VGA • switchbox controls video between monitors and HMD • 2 VGA-NTSC converters • TV driven by NTSC

F.1: System Installation—lessons learned at Clemson • Various video switches were needed to control graphics video and eye camera video • Custom VGA cables (13W3-HD15) were needed to feed monitors, HMD, and tracker • Host VGA signal had to be re-configured (horizontal sync, not sync-on-green) • Switchbox had to be re-wired (missing two lines for pins 13 and 14!)

F.2: Application Program Requirements • Two example applications: • 2D image-viewing program (monocular) • VR gaze-contingent environment (binocular) • Most important common requirement: • mapping eye tracker coordinates to application program’s reference frame • Extra requirements for VR: • head tracker coordinate mapping • gaze vector calculation

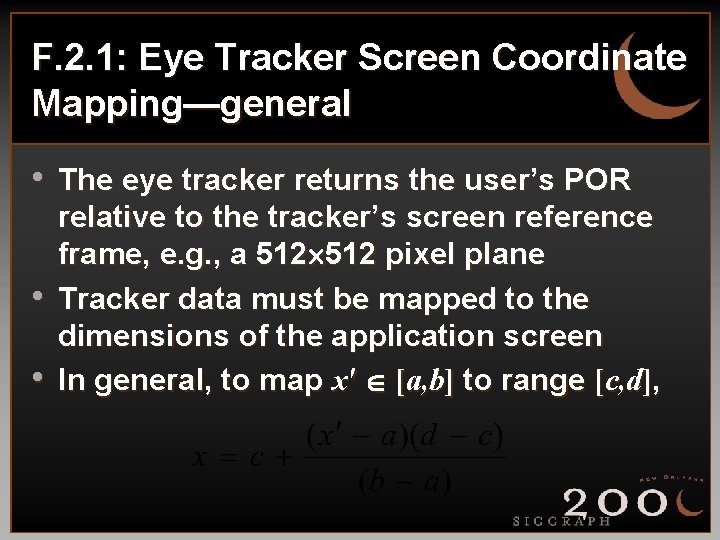

F.2.1: Eye Tracker Screen Coordinate Mapping—general • The eye tracker returns the user’s POR relative to the tracker’s screen reference frame, e.g., a 512 × 512 pixel plane • Tracker data must be mapped to the dimensions of the application screen • In general, to map x' ∈ [a, b] to range [c, d], x = (x' - a)(d - c)/(b - a) + c

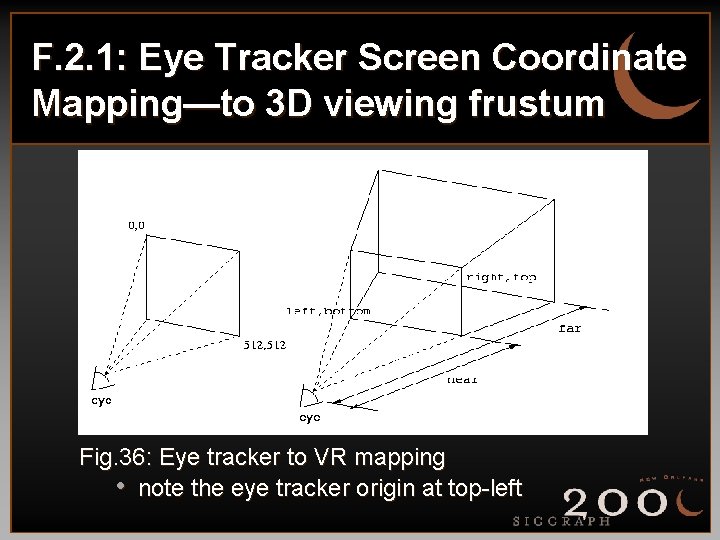
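The general range mapping can be sketched in C as follows. This is a minimal illustration, not the lab's code: the function name `map_range` is an assumption, and the caller is assumed to guarantee a != b.

```c
/* Linearly map x from the range [a, b] to the range [c, d].
   map_range is an illustrative name; the caller must ensure a != b. */
double map_range(double x, double a, double b, double c, double d)
{
    return (x - a) * (d - c) / (b - a) + c;
}
```

For instance, `map_range(256.0, 0.0, 512.0, 0.0, 640.0)` maps the center of a 512-unit tracker axis to the center of a 640-pixel-wide window.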

F.2.1: Eye Tracker Screen Coordinate Mapping—to 3D viewing frustum Fig. 36: Eye tracker to VR mapping • note the eye tracker origin at top-left

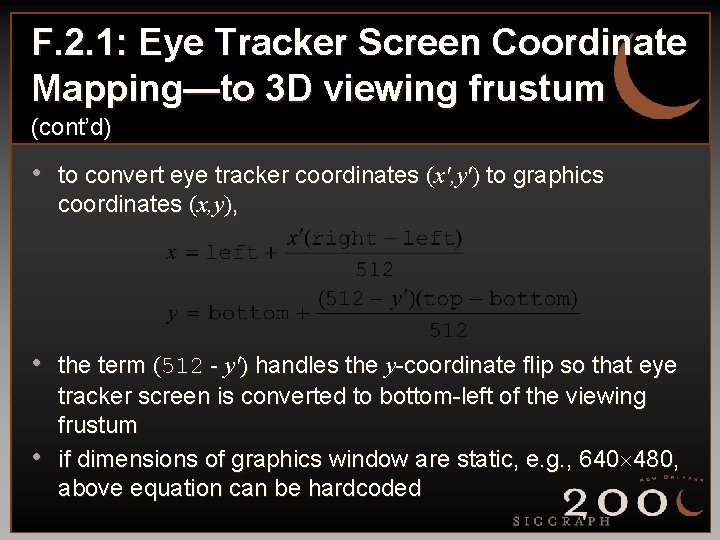

F.2.1: Eye Tracker Screen Coordinate Mapping—to 3D viewing frustum (cont’d) • to convert eye tracker coordinates (x', y') to graphics coordinates (x, y), • the term (512 - y') handles the y-coordinate flip so that the eye tracker screen’s top-left origin is converted to the bottom-left origin of the viewing frustum • if dimensions of graphics window are static, e.g., 640 × 480, above equation can be hardcoded

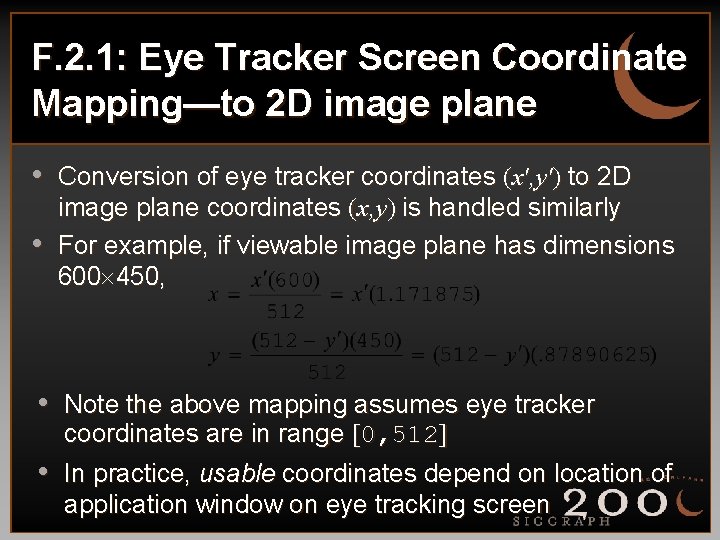

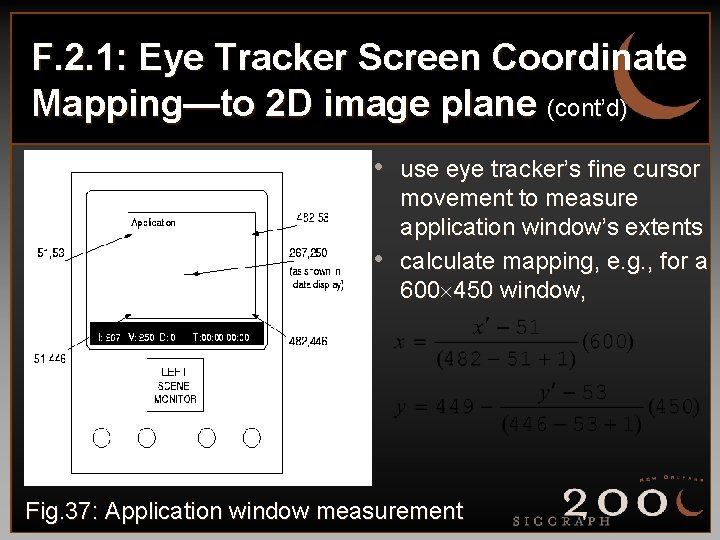
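A hedged C sketch of the tracker-to-frustum conversion follows. It assumes tracker coordinates in [0, 512] with the origin at top-left, and a near-plane rectangle described by `left`, `right`, `bottom`, `top`; the struct and function names are illustrative, not taken from the slides.

```c
/* Extents of the viewing frustum's near plane (illustrative type). */
typedef struct { double left, right, bottom, top; } Frustum;

/* Convert tracker coordinates (xt, yt), assumed to lie in [0, 512] with
   the origin at top-left, to frustum coordinates with the origin at
   bottom-left. The (512 - yt) term performs the y-coordinate flip. */
void tracker_to_frustum(double xt, double yt, const Frustum *fr,
                        double *x, double *y)
{
    const double T = 512.0;   /* tracker coordinate range */
    *x = fr->left + xt * (fr->right - fr->left) / T;
    *y = fr->bottom + (T - yt) * (fr->top - fr->bottom) / T;
}
```

With a frustum of [-1, 1] in both axes, the tracker's top-left corner (0, 0) maps to (-1, 1) and its bottom-right corner (512, 512) maps to (1, -1).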

F.2.1: Eye Tracker Screen Coordinate Mapping—to 2D image plane • Conversion of eye tracker coordinates (x', y') to 2D image plane coordinates (x, y) is handled similarly • For example, if viewable image plane has dimensions 600 × 450, x = x'(600)/512 and y = (512 - y')(450)/512 • Note the above mapping assumes eye tracker coordinates are in range [0, 512] • In practice, usable coordinates depend on location of application window on eye tracking screen

F.2.1: Eye Tracker Screen Coordinate Mapping—to 2D image plane (cont’d) Fig. 37: Application window measurement • use eye tracker’s fine cursor movement to measure application window’s extents • calculate mapping from the measured extents, e.g., for a 600 × 450 window
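The measured-extents mapping might look like the C sketch below. The extents used in the usage note (51, 53, 482, 446) are hypothetical placeholders for values read off the tracker's fine cursor, and the type and function names are assumptions for illustration.

```c
/* Application window extents as measured in tracker coordinates
   (tracker y grows downward). Illustrative type, hypothetical values. */
typedef struct { double left, top, right, bottom; } TrackerExtents;

/* Map tracker coordinates (xt, yt) inside the measured extents to window
   coordinates in [0, width] x [0, height], with y flipped so that the
   window origin is at bottom-left. */
void tracker_to_window(double xt, double yt, const TrackerExtents *e,
                       double width, double height, double *x, double *y)
{
    *x = (xt - e->left) / (e->right - e->left) * width;
    *y = (e->bottom - yt) / (e->bottom - e->top) * height;
}
```

For a 600 × 450 window measured at the hypothetical extents `{51, 53, 482, 446}`, the tracker point (51, 446) maps to window (0, 0) and (482, 53) maps to (600, 450).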

F.2.2: Mapping Flock Of Birds Tracker Coordinates • For VR applications, position and orientation of the head is required (obtained from head tracker, e.g., FOB) • The tracker reports 6 Degree-Of-Freedom (DOF) information regarding sensor position and orientation • Orientation is given in terms of Euler angles

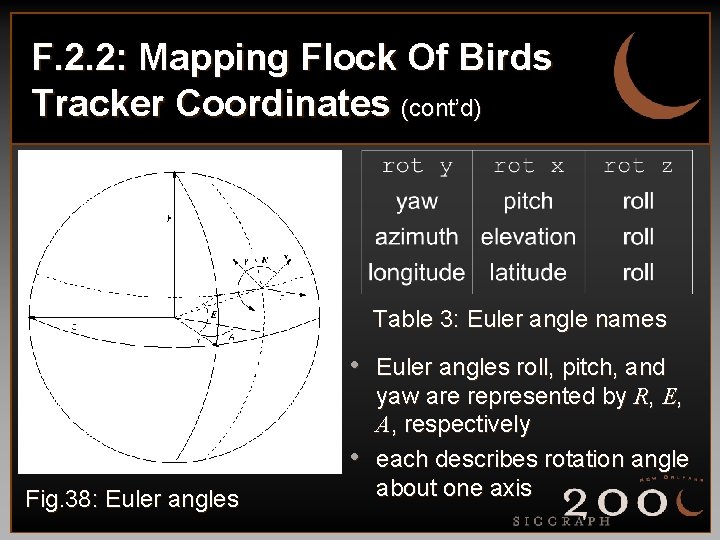

F.2.2: Mapping Flock Of Birds Tracker Coordinates (cont’d) Table 3: Euler angle names Fig. 38: Euler angles • Euler angles roll, pitch, and yaw are represented by R, E, A, respectively • each describes rotation angle about one axis

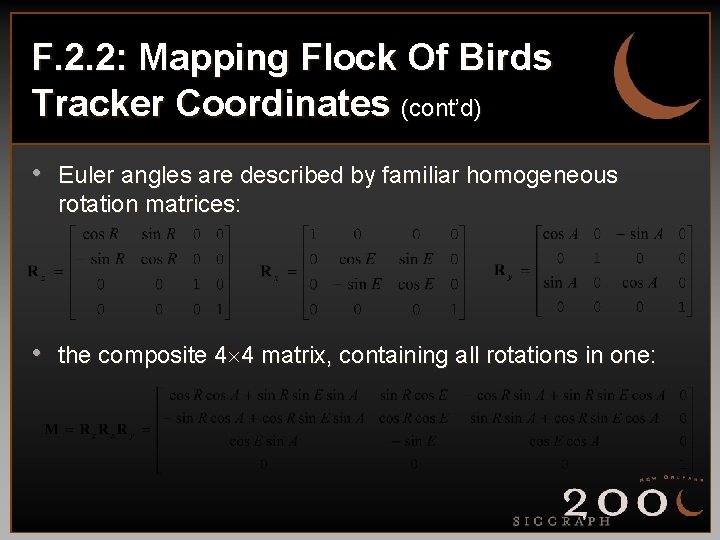

F.2.2: Mapping Flock Of Birds Tracker Coordinates (cont’d) • Euler angles are described by familiar homogeneous rotation matrices: • the composite 4 × 4 matrix, containing all rotations in one:

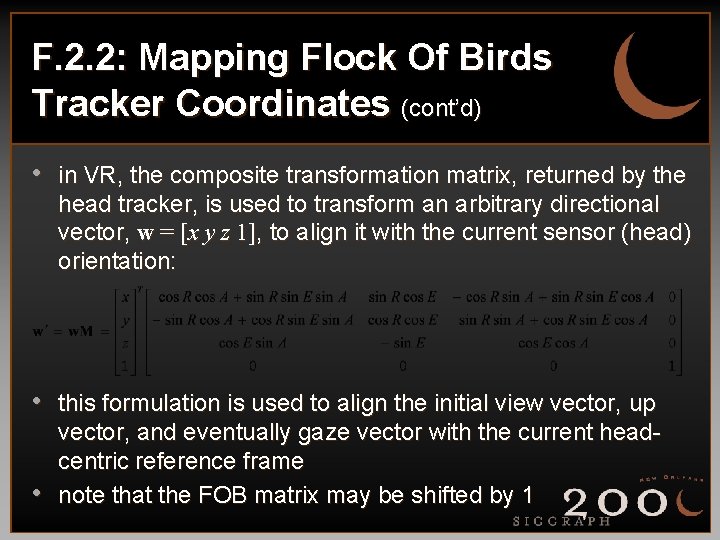

F.2.2: Mapping Flock Of Birds Tracker Coordinates (cont’d) • in VR, the composite transformation matrix, returned by the head tracker, is used to transform an arbitrary directional vector, w = [x y z 1], to align it with the current sensor (head) orientation: • this formulation is used to align the initial view vector, up vector, and eventually gaze vector with the current head-centric reference frame • note that the FOB matrix may be shifted by 1

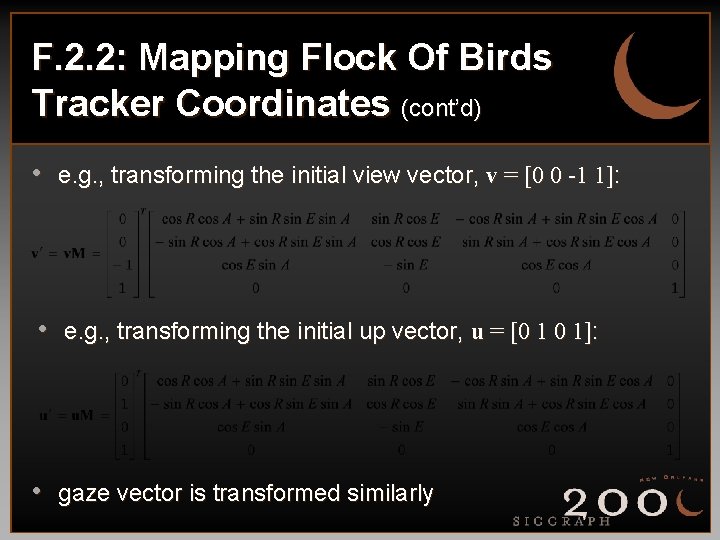

F.2.2: Mapping Flock Of Birds Tracker Coordinates (cont’d) • e.g., transforming the initial view vector, v = [0 0 -1 1]: • e.g., transforming the initial up vector, u = [0 1 0 1]: • gaze vector is transformed similarly

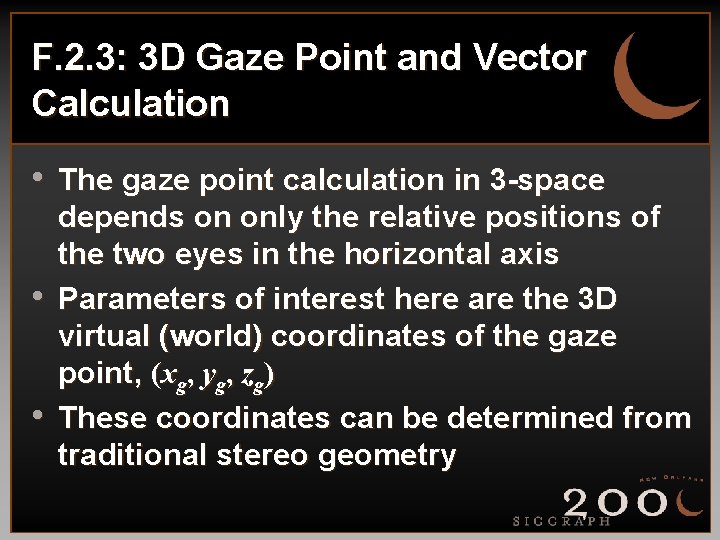
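Applying the tracker's composite matrix to a homogeneous vector can be sketched in C as below. The row-vector convention (w' = wM) is an assumption inferred from the way the slides write w = [x y z 1]; the actual element layout and indexing returned by the FOB should be checked against the vendor documentation. The function name is illustrative.

```c
/* Transform the homogeneous row vector w = [x y z 1] by a 4x4 matrix M,
   computing out = w * M (row-vector convention, assumed here). */
void transform_vector(const double w[4], double M[4][4], double out[4])
{
    for (int j = 0; j < 4; j++) {
        out[j] = 0.0;
        for (int i = 0; i < 4; i++)
            out[j] += w[i] * M[i][j];
    }
}
```

With M set to the identity, the initial view vector [0 0 -1 1] and up vector [0 1 0 1] are returned unchanged; with the head tracker's orientation matrix, the same call aligns them (and later the gaze vector) with the current head orientation.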

F.2.3: 3D Gaze Point and Vector Calculation • The gaze point calculation in 3-space depends on only the relative positions of the two eyes in the horizontal axis • Parameters of interest here are the 3D virtual (world) coordinates of the gaze point, (xg, yg, zg) • These coordinates can be determined from traditional stereo geometry

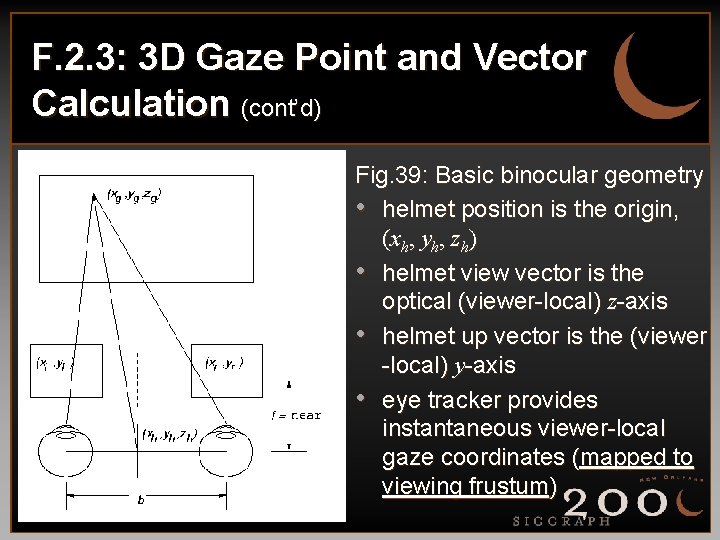

F.2.3: 3D Gaze Point and Vector Calculation (cont’d) Fig. 39: Basic binocular geometry • helmet position is the origin, (xh, yh, zh) • helmet view vector is the optical (viewer-local) z-axis • helmet up vector is the (viewer-local) y-axis • eye tracker provides instantaneous viewer-local gaze coordinates (mapped to viewing frustum)

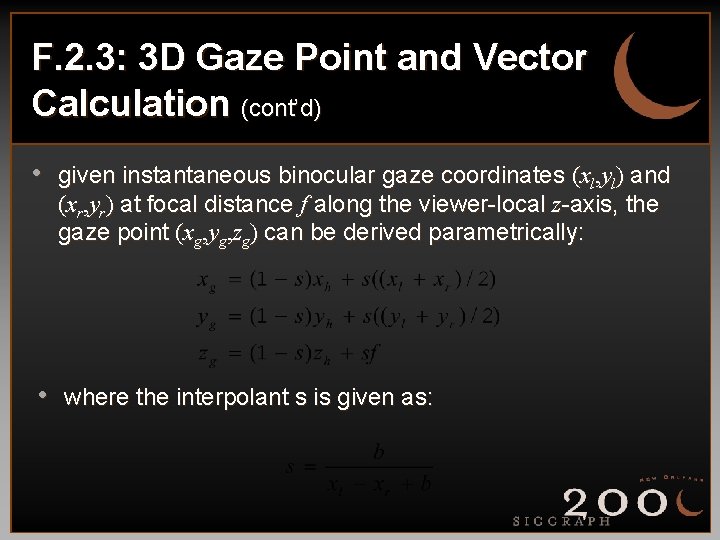

F.2.3: 3D Gaze Point and Vector Calculation (cont’d) • given instantaneous binocular gaze coordinates (xl, yl) and (xr, yr) at focal distance f along the viewer-local z-axis, the gaze point (xg, yg, zg) can be derived parametrically: • where the interpolant s is given as:

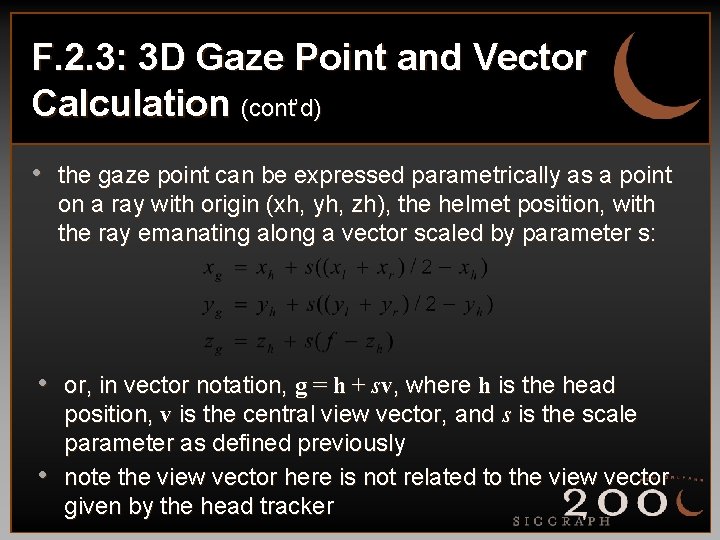

F.2.3: 3D Gaze Point and Vector Calculation (cont’d) • the gaze point can be expressed parametrically as a point on a ray with origin (xh, yh, zh), the helmet position, with the ray emanating along a vector scaled by parameter s: • or, in vector notation, g = h + sv, where h is the head position, v is the central view vector, and s is the scale parameter as defined previously • note the view vector here is not related to the view vector given by the head tracker

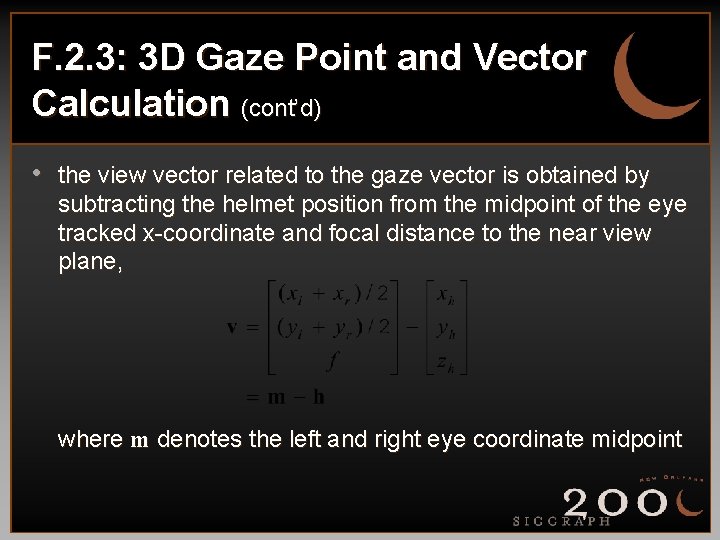

F.2.3: 3D Gaze Point and Vector Calculation (cont’d) • the view vector related to the gaze vector is obtained by subtracting the helmet position from the midpoint of the eye tracked x-coordinate and focal distance to the near view plane, where m denotes the left and right eye coordinate midpoint

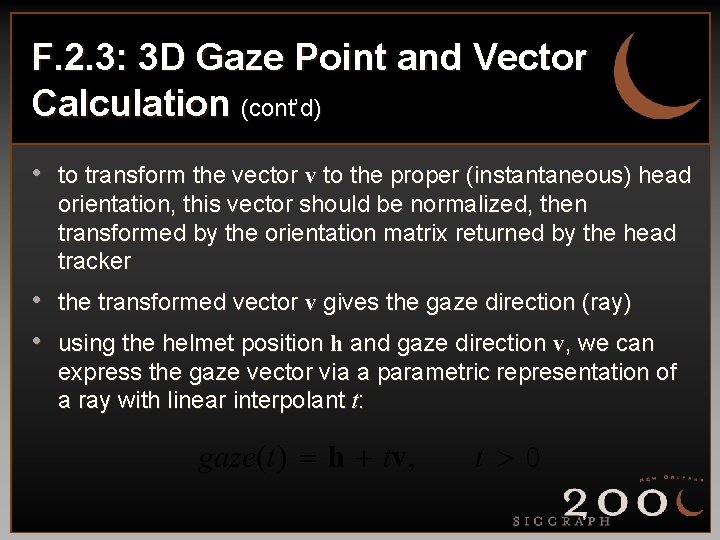
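The gaze point computation can be sketched in C using the expressions that appear in the VR main loop of these slides: s = b/(xl - xr + b), v = [(xl+xr)/2 - xh, (yl+yr)/2 - yh, f - zh], and g = h + sv. The slides do not define b; it is assumed here to be the horizontal baseline between the two eyes. The sketch works in viewer-local coordinates and omits the head-orientation transform. Type and function names are illustrative.

```c
typedef struct { double x, y, z; } Vec3;

/* Linear gaze interpolant s = b / (xl - xr + b).
   b is assumed to be the interocular (baseline) distance. */
double gaze_interpolant(double xl, double xr, double b)
{
    return b / (xl - xr + b);
}

/* Gaze point g = h + s*v, where v runs from the helmet position h to the
   midpoint of the left/right gaze coordinates at focal distance f. */
Vec3 gaze_point(Vec3 h, double xl, double yl, double xr, double yr,
                double f, double b)
{
    double s = gaze_interpolant(xl, xr, b);
    Vec3 v = { (xl + xr) / 2.0 - h.x, (yl + yr) / 2.0 - h.y, f - h.z };
    Vec3 g = { h.x + s * v.x, h.y + s * v.y, h.z + s * v.z };
    return g;
}
```

When the left and right gaze coordinates coincide (xl = xr), s = 1 and the gaze point lies on the plane at distance f; a positive disparity xl - xr gives s < 1, placing the gaze point closer to the viewer.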

F.2.3: 3D Gaze Point and Vector Calculation (cont’d) • to transform the vector v to the proper (instantaneous) head orientation, this vector should be normalized, then transformed by the orientation matrix returned by the head tracker • the transformed vector v gives the gaze direction (ray) • using the helmet position h and gaze direction v, we can express the gaze vector via a parametric representation of a ray with linear interpolant t: g(t) = h + tv

F.2.4: Virtual Fixation Coordinates • The gaze vector can be used in VR to calculate virtual fixation coordinates • Fixation coordinates are obtained via traditional ray/polygon intersection calculations, as used in ray tracing • The fixated object of interest (polygon) is the one closest to the viewer which intersects the ray

F.2.4: Virtual Fixation Coordinates—ray/plane intersection • The intersection of a ray with all polygons in the scene is calculated via a parametric representation of the ray, r(t) = ro + t rd, • where ro defines the ray’s origin (point) and rd defines the ray direction (vector) • For gaze, use ro = h, the head position, rd = v, the gaze direction vector

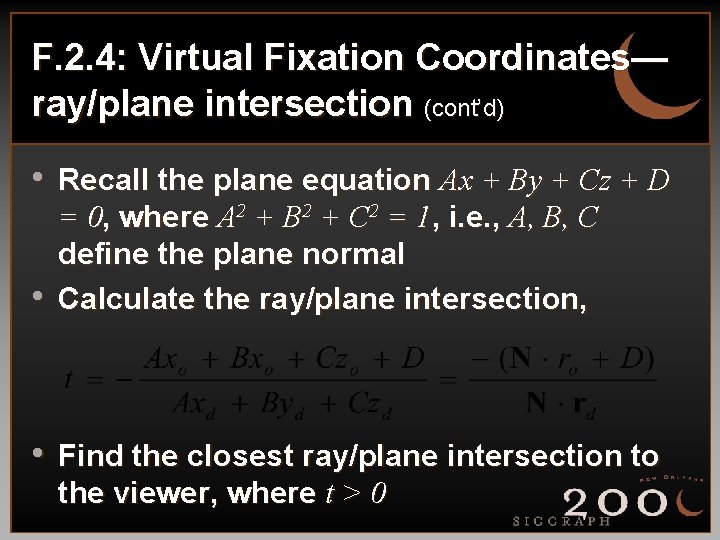

F.2.4: Virtual Fixation Coordinates—ray/plane intersection (cont’d) • Recall the plane equation Ax + By + Cz + D = 0, where A² + B² + C² = 1, i.e., A, B, C define the plane normal • Calculate the ray/plane intersection, • Find the closest ray/plane intersection to the viewer, where t > 0

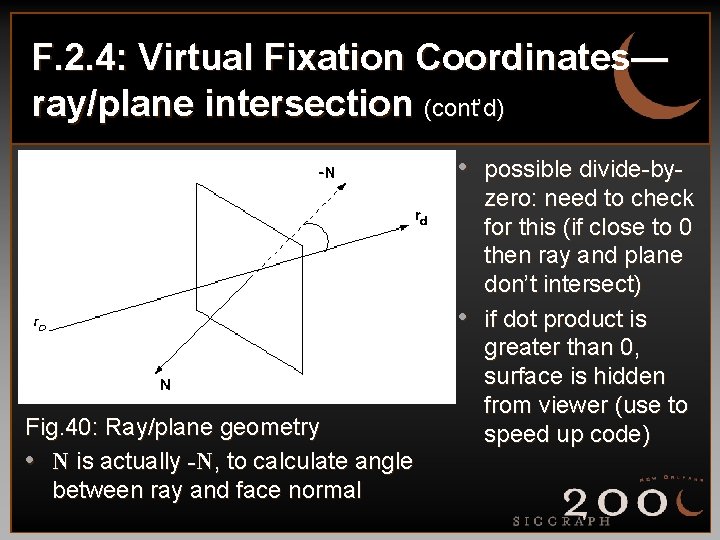

F.2.4: Virtual Fixation Coordinates—ray/plane intersection (cont’d) Fig. 40: Ray/plane geometry • N is actually -N, to calculate angle between ray and face normal • possible divide-by-zero: need to check for this (if close to 0 then ray and plane don’t intersect) • if dot product is greater than 0, surface is hidden from viewer (use to speed up code)

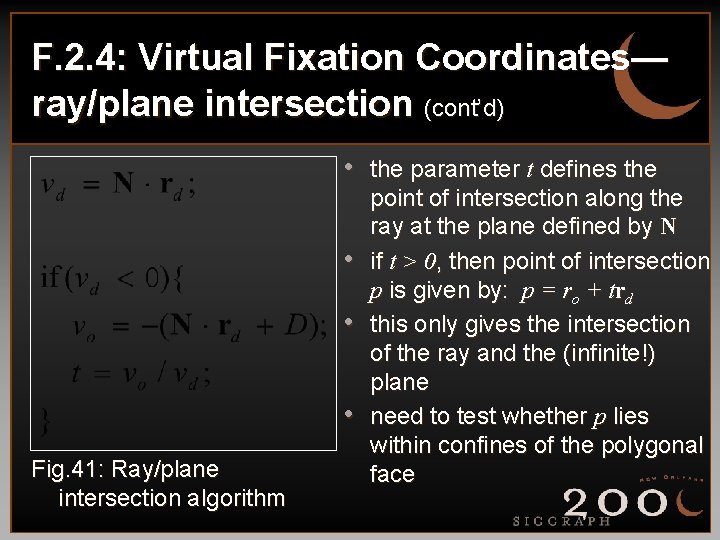

F.2.4: Virtual Fixation Coordinates—ray/plane intersection (cont’d) Fig. 41: Ray/plane intersection algorithm • the parameter t defines the point of intersection along the ray at the plane defined by N • if t > 0, then point of intersection p is given by: p = ro + t rd • this only gives the intersection of the ray and the (infinite!) plane • need to test whether p lies within confines of the polygonal face

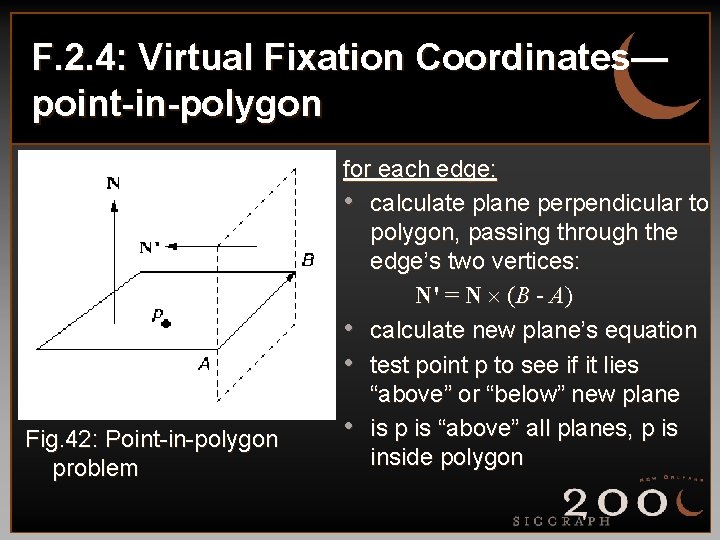
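A minimal C sketch of the ray/plane test follows, using the standard solution t = -(N·ro + D)/(N·rd) for the plane Ax + By + Cz + D = 0 with unit normal N = (A, B, C). The tolerance value and all names are illustrative assumptions; the back-face shortcut mentioned on the slides is left out for clarity.

```c
#include <stdbool.h>

typedef struct { double x, y, z; } Vec3;

static double dot3(Vec3 a, Vec3 b)
{
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

/* Intersect the ray ro + t*rd with the plane N.p + D = 0.
   Returns true and fills *t and *p only when the ray is not parallel
   to the plane and the hit lies in front of the origin (t > 0). */
bool ray_plane_intersect(Vec3 ro, Vec3 rd, Vec3 N, double D,
                         double *t, Vec3 *p)
{
    const double EPS = 1e-9;            /* illustrative tolerance */
    double denom = dot3(N, rd);
    if (denom > -EPS && denom < EPS)    /* guards the divide-by-zero */
        return false;
    double tt = -(dot3(N, ro) + D) / denom;
    if (tt <= 0.0)                      /* plane is behind the viewer */
        return false;
    *t = tt;
    p->x = ro.x + tt * rd.x;
    p->y = ro.y + tt * rd.y;
    p->z = ro.z + tt * rd.z;
    return true;
}
```

To find the fixated polygon, one would call this for each candidate plane with ro = h and rd = v, keep the smallest positive t, and then confirm that p lies inside the polygon.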

F.2.4: Virtual Fixation Coordinates—point-in-polygon Fig. 42: Point-in-polygon problem • for each edge: • calculate plane perpendicular to polygon, passing through the edge’s two vertices A and B: N' = N × (B - A) • calculate new plane’s equation • test point p to see if it lies “above” or “below” new plane • if p is “above” all planes, p is inside polygon
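The edge-plane test can be sketched in C as follows. The sketch assumes a convex polygon whose vertices are listed counter-clockwise when viewed from the side the normal N points to, so that "above" every edge plane means inside; names and conventions are illustrative.

```c
#include <stdbool.h>

typedef struct { double x, y, z; } Vec3;

static Vec3 sub3(Vec3 a, Vec3 b)
{
    Vec3 r = { a.x - b.x, a.y - b.y, a.z - b.z };
    return r;
}

static Vec3 cross3(Vec3 a, Vec3 b)
{
    Vec3 r = { a.y * b.z - a.z * b.y,
               a.z * b.x - a.x * b.z,
               a.x * b.y - a.y * b.x };
    return r;
}

static double dot3(Vec3 a, Vec3 b)
{
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

/* Returns true if p (already known to lie in the polygon's plane) is on
   the inner side of every edge plane. For edge A->B the plane normal is
   N' = N x (B - A) and the plane passes through A. */
bool point_in_polygon(Vec3 p, const Vec3 *verts, int n, Vec3 N)
{
    for (int i = 0; i < n; i++) {
        Vec3 A = verts[i];
        Vec3 B = verts[(i + 1) % n];
        Vec3 Np = cross3(N, sub3(B, A));
        if (dot3(Np, sub3(p, A)) < 0.0)   /* p is "below" this edge plane */
            return false;
    }
    return true;
}
```

Points exactly on an edge are treated as inside here; a stricter test would use `<= 0.0`.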

F.3: System Calibration and Usage • Most video-based eye trackers require calibration • Usually composed of simple stimuli (dots, crosses, etc.) displayed sequentially at far extents of viewing window • Application program displaying stimulus must be able to draw calibration stimulus at appropriate locations and at appropriate time

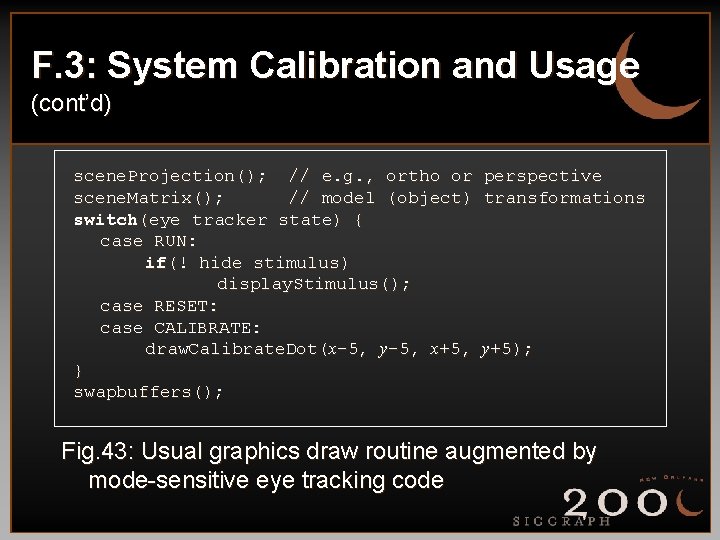

F.3: System Calibration and Usage (cont’d)

    sceneProjection();      // e.g., ortho or perspective
    sceneMatrix();          // model (object) transformations
    switch (eyeTrackerState) {
    case RUN:               // show stimulus only while it is not hidden
        if (!hideStimulus) displayStimulus();
        break;
    case RESET:
    case CALIBRATE:         // calibration dot shown in both states
        drawCalibrateDot(x-5, y-5, x+5, y+5);
        break;
    }
    swapbuffers();

Fig. 43: Usual graphics draw routine augmented by mode-sensitive eye tracking code

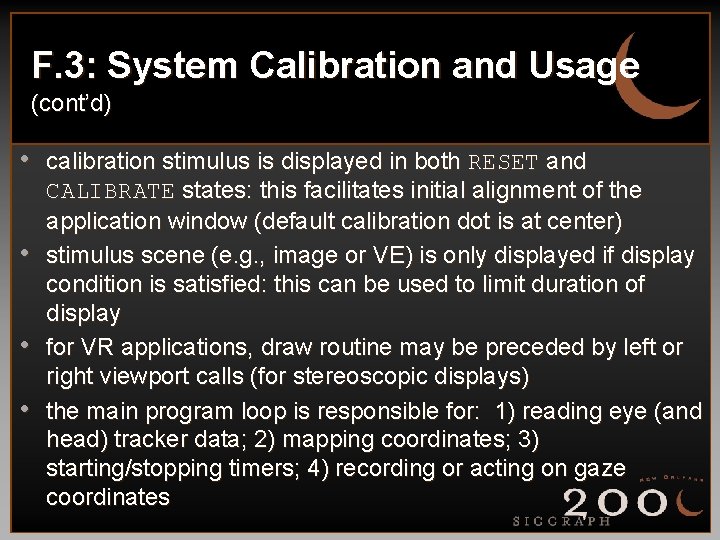

F.3: System Calibration and Usage (cont’d) • calibration stimulus is displayed in both RESET and CALIBRATE states: this facilitates initial alignment of the application window (default calibration dot is at center) • stimulus scene (e.g., image or VE) is only displayed if display condition is satisfied: this can be used to limit duration of display • for VR applications, draw routine may be preceded by left or right viewport calls (for stereoscopic displays) • the main program loop is responsible for: 1) reading eye (and head) tracker data; 2) mapping coordinates; 3) starting/stopping timers; 4) recording or acting on gaze coordinates

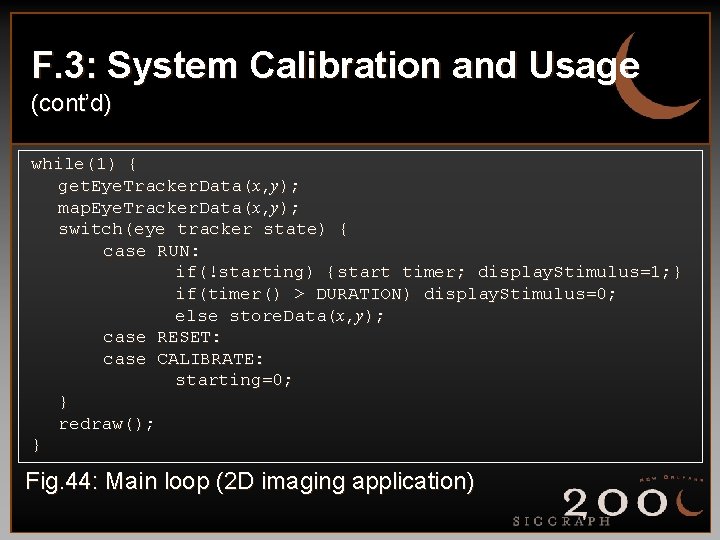

F.3: System Calibration and Usage (cont’d)

    while (1) {
        getEyeTrackerData(x, y);
        mapEyeTrackerData(x, y);
        switch (eyeTrackerState) {
        case RUN:
            // start the timer once, on the first RUN iteration
            if (!starting) { starting = 1; startTimer(); displayStimulus = 1; }
            if (timer() > DURATION) displayStimulus = 0;
            else storeData(x, y);
            break;
        case RESET:
        case CALIBRATE:
            starting = 0;   // re-arm for the next run
            break;
        }
        redraw();
    }

Fig. 44: Main loop (2D imaging application)

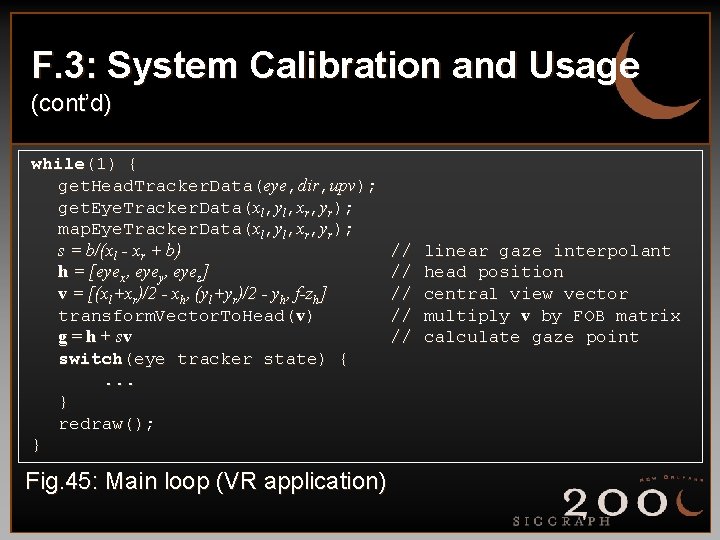

F.3: System Calibration and Usage (cont’d)

    while (1) {
        getHeadTrackerData(eye, dir, upv);
        getEyeTrackerData(xl, yl, xr, yr);
        mapEyeTrackerData(xl, yl, xr, yr);
        s = b/(xl - xr + b);                            // linear gaze interpolant
        h = [eyex, eyey, eyez];                         // head position
        v = [(xl+xr)/2 - xh, (yl+yr)/2 - yh, f - zh];   // central view vector
        transformVectorToHead(v);                       // multiply v by FOB matrix
        g = h + sv;                                     // calculate gaze point
        switch (eyeTrackerState) { ... }
        redraw();
    }

Fig. 45: Main loop (VR application)

F.3: System Calibration and Usage (cont’d) • once application program has been developed, system is ready for use; general manner of usage requires the following steps: • move application window to align it with eye tracker’s default (central) calibration dot • adjust the eye tracker’s pupil and corneal reflection thresholds • calibrate the eye tracker • reset the eye tracker and run (program displays stimulus and stores data) • save recorded data • optionally re-calibrate

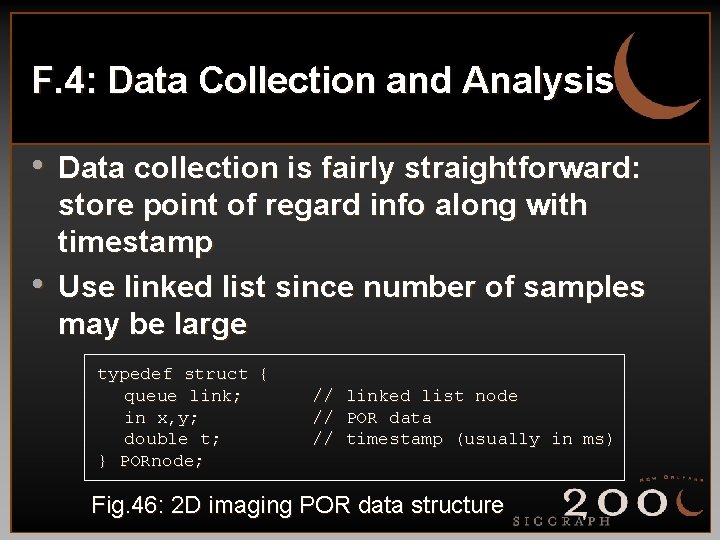

F.4: Data Collection and Analysis • Data collection is fairly straightforward: store point of regard info along with timestamp • Use linked list since number of samples may be large

    typedef struct {
        queue link;     // linked list node
        int x, y;       // POR data
        double t;       // timestamp (usually in ms)
    } PORnode;

Fig. 46: 2D imaging POR data structure
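A self-contained C sketch of POR storage follows. The slide's `queue link` member depends on a queue type not shown in the slides, so a plain `next` pointer is substituted here; the list type and function names are illustrative assumptions.

```c
#include <stdlib.h>

/* One POR sample; a plain next pointer stands in for the slide's
   `queue link` member (assumption). */
typedef struct PORnode {
    struct PORnode *next;
    int x, y;           /* POR data */
    double t;           /* timestamp (usually in ms) */
} PORnode;

typedef struct {
    PORnode *head, *tail;
    size_t count;
} PORlist;

/* Append one sample in O(1); returns 0 on success, -1 if out of memory. */
int por_append(PORlist *list, int x, int y, double t)
{
    PORnode *node = malloc(sizeof *node);
    if (node == NULL)
        return -1;
    node->next = NULL;
    node->x = x;
    node->y = y;
    node->t = t;
    if (list->tail != NULL)
        list->tail->next = node;
    else
        list->head = node;
    list->tail = node;
    list->count++;
    return 0;
}

/* Release all stored samples and reset the list to empty. */
void por_free(PORlist *list)
{
    PORnode *node = list->head;
    while (node != NULL) {
        PORnode *next = node->next;
        free(node);
        node = next;
    }
    list->head = list->tail = NULL;
    list->count = 0;
}
```

Keeping a tail pointer makes each append constant-time, which matters when a tracker delivers samples at the video frame rate over a long session.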

F.4: Data Collection and Analysis (cont’d) • For VR applications, data structure is similar, but will require z-component • May also store head position • Analysis follows eye movement analysis models presented previously • Goals: 1) eliminate noise; 2) identify fixations • Final point: label stored data appropriately; with many subjects, experiments tend to generate LOTS of data

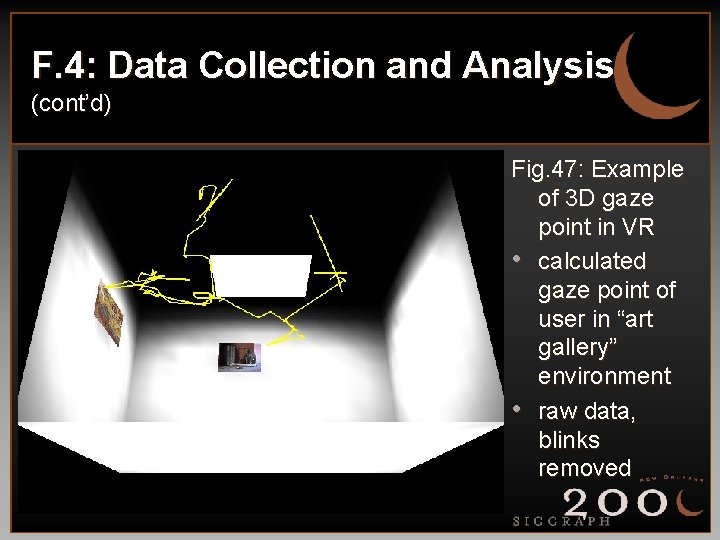

F.4: Data Collection and Analysis (cont’d) Fig. 47: Example of 3D gaze point in VR • calculated gaze point of user in “art gallery” environment • raw data, blinks removed
