Etcetera! CMSC 491 Hadoop-Based Distributed Computing Spring 2016 Adam Shook

Agenda
• Advanced HDFS Features
• Apache Cassandra
• Cluster Planning

ADVANCED HDFS FEATURES

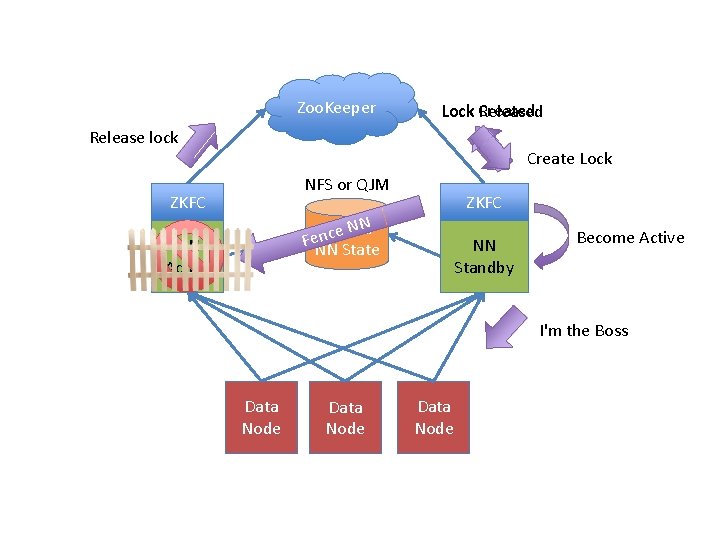

Highly Available NameNode
• Highly Available NameNode feature eliminates SPOF
• Requires two NameNodes and some extra configuration
  – Active/Passive or Active/Active
  – Clients only contact the active NameNode
  – DataNodes report in and heartbeat with both NameNodes
  – Active NameNode writes metadata to a quorum of JournalNodes
  – Standby NameNode reads the JournalNodes to stay in sync
• There is no CheckpointNode (SecondaryNameNode)
  – The passive NameNode performs checkpoint operations
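
The quorum write on this slide can be sketched in a few lines. This is a minimal illustration, assuming an edit counts as durable once a strict majority of JournalNodes acknowledge it. The function names and the list of boolean acknowledgements are hypothetical and are not Hadoop APIs.

```python
def majority(n_journal_nodes: int) -> int:
    """Smallest number of acknowledgements that forms a strict majority."""
    return n_journal_nodes // 2 + 1


def edit_is_durable(acks: list[bool]) -> bool:
    """True if enough JournalNodes acknowledged the edit (hypothetical model)."""
    return sum(acks) >= majority(len(acks))


if __name__ == "__main__":
    # With a strict-majority rule, an odd number of JournalNodes is the usual choice.
    print(majority(3), majority(5))
    print(edit_is_durable([True, True, False]))
    print(edit_is_durable([True, False, False]))
```

Under this model, adding JournalNodes raises the number of simultaneous failures the edit log can survive, at the cost of more acknowledgements per write.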

HA NameNode Failover
• There are two failover scenarios
  – Graceful: performed by an administrator for maintenance
  – Automated: the active NameNode fails
• Failed NameNode must be fenced
  – Eliminates the 'split brain syndrome'
• Two fencing methods are available
  – sshfence: kills the NameNode daemon
  – shell script: disables access to the NameNode, shuts down the network switch port, sends power off to the failed NameNode
• There is no 'default' fencing method
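
The fence-then-promote rule on this slide can be sketched as follows. This is an illustration only, assuming fencing methods are tried in the configured order until one reports success and that the standby is never promoted if every method fails. `always_fails` and `always_succeeds` are hypothetical stand-ins for real fencing actions such as an SSH kill or a power-off script.

```python
from typing import Callable, Sequence

# A fencing method takes a host name and returns True on success.
FenceMethod = Callable[[str], bool]


def fence(host: str, methods: Sequence[FenceMethod]) -> bool:
    """Try each fencing method in order; stop at the first that succeeds."""
    return any(method(host) for method in methods)


def fail_over(active: str, standby: str, methods: Sequence[FenceMethod]) -> str:
    """Return the new active NameNode, or raise if the old one cannot be fenced."""
    if not fence(active, methods):
        # Promoting without fencing risks two active NameNodes (split brain).
        raise RuntimeError(f"could not fence {active}; refusing to promote {standby}")
    return standby


def always_fails(host: str) -> bool:
    """Hypothetical stand-in for a fencing method that cannot reach the host."""
    return False


def always_succeeds(host: str) -> bool:
    """Hypothetical stand-in for a fencing method that works."""
    return True


if __name__ == "__main__":
    print(fail_over("nn1", "nn2", [always_fails, always_succeeds]))
```

Refusing to promote when fencing fails trades availability for safety, which is why the slide stresses that a fencing method must be chosen explicitly.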

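[Figure: automatic failover. Each NameNode (NN) has a ZKFC that creates or releases a lock in ZooKeeper; NN state is shared through NFS or QJM. The active NN is fenced, its lock is released, and the standby NN creates the lock and becomes active ("I'm the Boss"). DataNodes report to both.]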

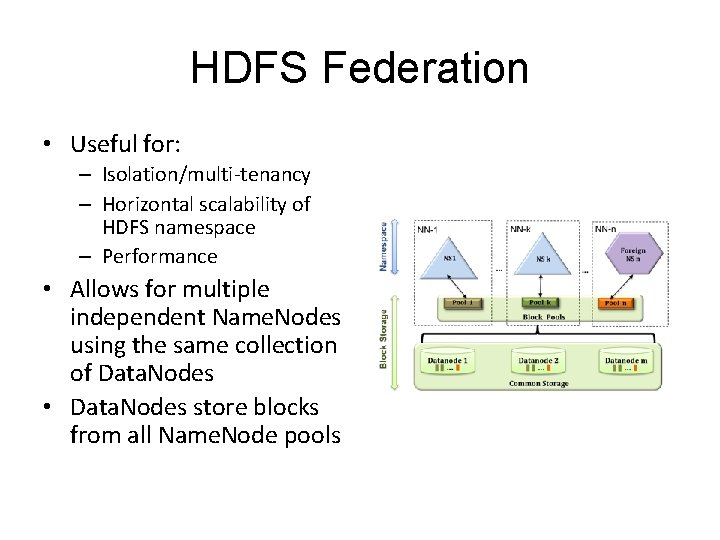

HDFS Federation
• Useful for:
  – Isolation/multi-tenancy
  – Horizontal scalability of HDFS namespace
  – Performance
• Allows for multiple independent NameNodes using the same collection of DataNodes
• DataNodes store blocks from all NameNode pools

Federated NameNodes
• File-system namespace scalable beyond heap size
• NameNode performance no longer a bottleneck
• NameNode failure/degradation is isolated
  – Only data managed by the failed NameNode is unavailable
• Each NameNode can be made Highly Available

Hadoop Security
• Hadoop's original design – web crawler and indexing
  – Not designed for processing of confidential data
  – Small number of trusted users
• Access to cluster controlled by providing user accounts
  – Little / no control on what a user could do once logged in
• HDFS permissions were added in the Hadoop 0.16 release
  – Similar to basic UNIX file permissions
  – HDFS permissions can be disabled via dfs.permissions
  – Basically for protection against user-induced accidents
  – Did not protect from attacks
• Authentication is accomplished on the client side
  – Easily subverted via a simple configuration parameter

Kerberos
• Kerberos support introduced in the Hadoop 0.22.2 release
  – Developed at MIT / freely available
  – Not a Hadoop-specific feature
  – Not included in Hadoop releases
• Works on the basis of 'tickets'
  – Allow communicating nodes to securely identify each other across insecure networks
• Primarily a client/server model implementing mutual authentication
  – The user and the server verify each other's identity

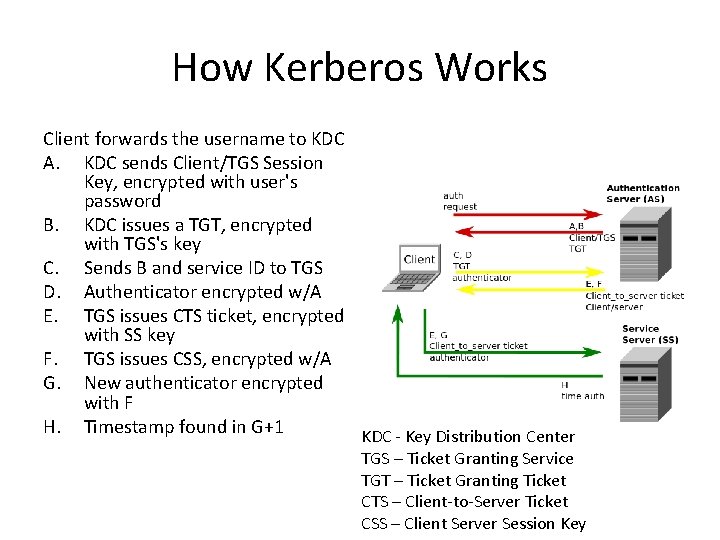

How Kerberos Works
• Client forwards the username to KDC
  A. KDC sends Client/TGS Session Key, encrypted with user's password
  B. KDC issues a TGT, encrypted with TGS's key
  C. Client sends B and service ID to TGS
  D. Authenticator encrypted with A
  E. TGS issues CTS ticket, encrypted with SS key
  F. TGS issues CSS, encrypted with A
  G. New authenticator encrypted with F
  H. Timestamp found in G, plus 1
• Abbreviations
  – KDC: Key Distribution Center
  – TGS: Ticket Granting Service
  – TGT: Ticket Granting Ticket
  – CTS: Client-to-Server Ticket
  – CSS: Client/Server Session Key
  – SS: Service Server

Kerberos Services
• Authentication Server
  – Authenticates client
  – Gives client enough information to authenticate with Service Server
• Service Server
  – Authenticates client
  – Authenticates itself to client
  – Provides services to client

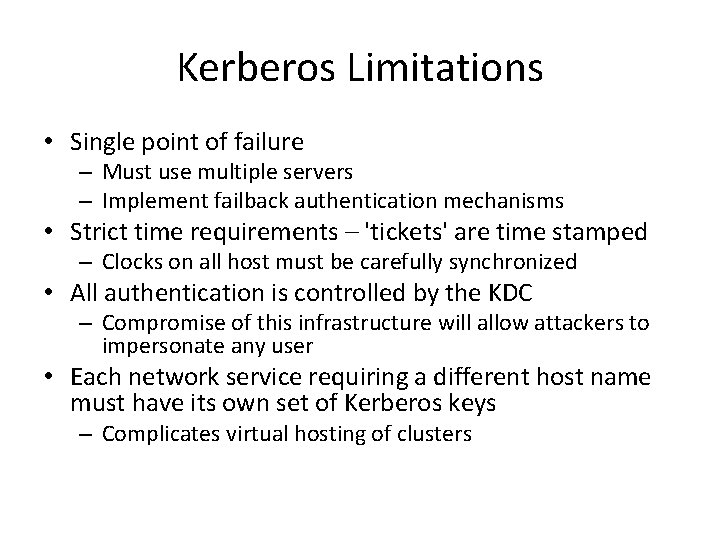

Kerberos Limitations
• Single point of failure
  – Must use multiple servers
  – Implement fallback authentication mechanisms
• Strict time requirements
  – 'Tickets' are time stamped
  – Clocks on all hosts must be carefully synchronized
• All authentication is controlled by the KDC
  – Compromise of this infrastructure will allow attackers to impersonate any user
• Each network service requiring a different host name must have its own set of Kerberos keys
  – Complicates virtual hosting of clusters
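
The strict time requirement on this slide can be illustrated with a timestamp check. This is a sketch, assuming a server rejects any timestamp that differs from its own clock by more than a fixed tolerance. The five-minute value is an assumption based on a commonly used default, and `timestamp_acceptable` is a hypothetical helper, not part of any Kerberos library.

```python
from datetime import datetime, timedelta, timezone

# Assumption: five minutes is a commonly used default tolerance.
MAX_SKEW = timedelta(minutes=5)


def timestamp_acceptable(
    ticket_time: datetime,
    server_time: datetime,
    max_skew: timedelta = MAX_SKEW,
) -> bool:
    """True if the ticket timestamp is within max_skew of the server clock."""
    return abs(server_time - ticket_time) <= max_skew


if __name__ == "__main__":
    now = datetime(2016, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(timestamp_acceptable(now - timedelta(minutes=2), now))
    print(timestamp_acceptable(now + timedelta(minutes=6), now))
```

A host whose clock drifts past the tolerance fails authentication even with valid credentials, which is why clock synchronization across all cluster hosts matters.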

APACHE CASSANDRA

In a couple dozen words...
• Apache Cassandra is an open source, distributed, decentralized, elastically scalable, highly available, fault-tolerant, tunably consistent, column-oriented database with a lot of adjectives

Overview
• Originally created by Facebook and open sourced in 2008
• Based on Google Bigtable & Amazon Dynamo
• Massively scalable
• Easy to use
• No relation to Hadoop
  – Specifically, data is not stored on HDFS

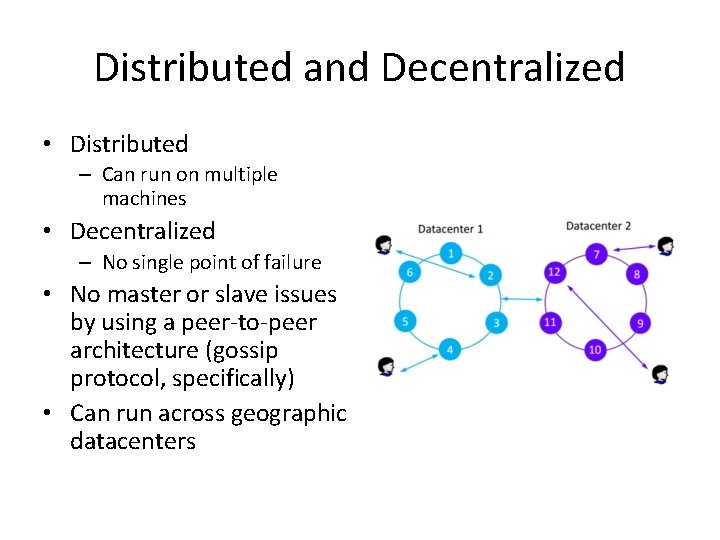

Distributed and Decentralized
• Distributed
  – Can run on multiple machines
• Decentralized
  – No single point of failure
• A peer-to-peer architecture (a gossip protocol, specifically) avoids master/slave issues
• Can run across geographically distributed datacenters
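
To make the gossip idea concrete, here is a toy push-gossip simulation. It is a sketch of the general technique, not Cassandra's implementation: it assumes every informed node tells one randomly chosen peer per round, and `gossip_rounds` and its parameters are hypothetical names.

```python
import random


def gossip_rounds(n_nodes: int, seed: int = 0, max_rounds: int = 1000) -> int:
    """Rounds of push gossip until every node has heard a rumor started by node 0.

    Returns max_rounds if the rumor has not reached everyone by then.
    """
    rng = random.Random(seed)
    informed = {0}
    rounds = 0
    while len(informed) < n_nodes and rounds < max_rounds:
        # Snapshot the set so nodes informed this round start pushing next round.
        for _ in list(informed):
            informed.add(rng.randrange(n_nodes))
        rounds += 1
    return rounds


if __name__ == "__main__":
    for size in (10, 100, 1000):
        print(size, gossip_rounds(size, seed=42))
```

Because the informed set can at most double each round, the round count grows roughly with the logarithm of the cluster size, and no node acts as a coordinator.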

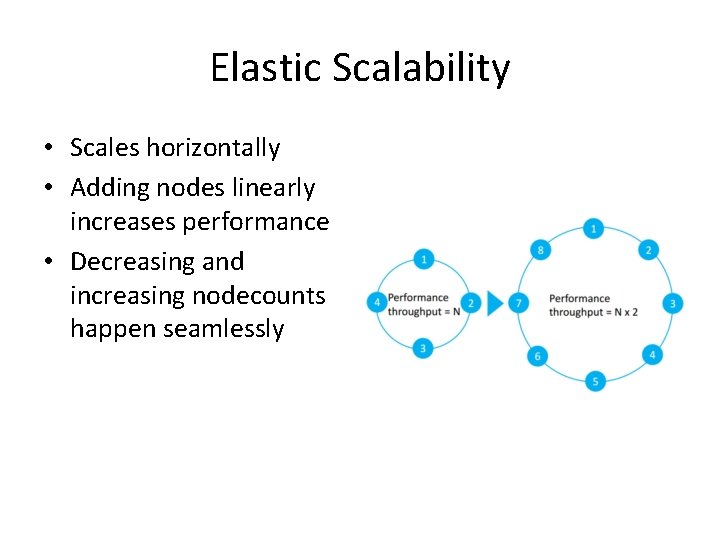

Elastic Scalability
• Scales horizontally
• Adding nodes linearly increases performance
• Decreasing and increasing node counts happens seamlessly

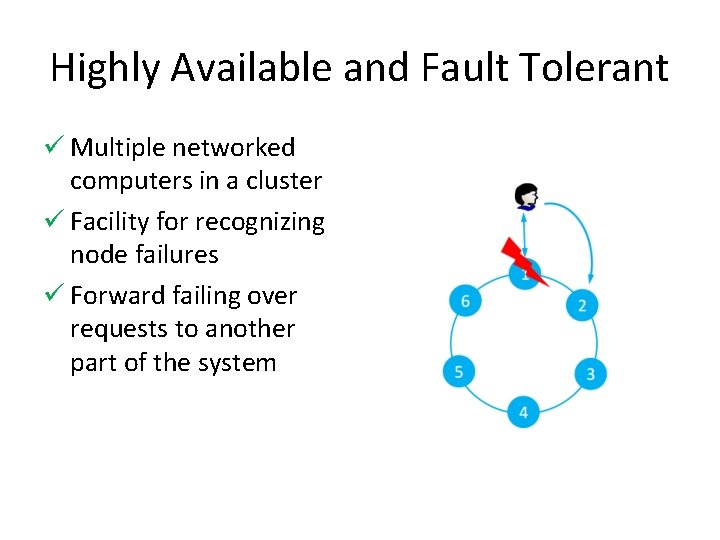

Highly Available and Fault Tolerant
• Multiple networked computers in a cluster
• Facility for recognizing node failures
• Failing over requests by forwarding them to another part of the system

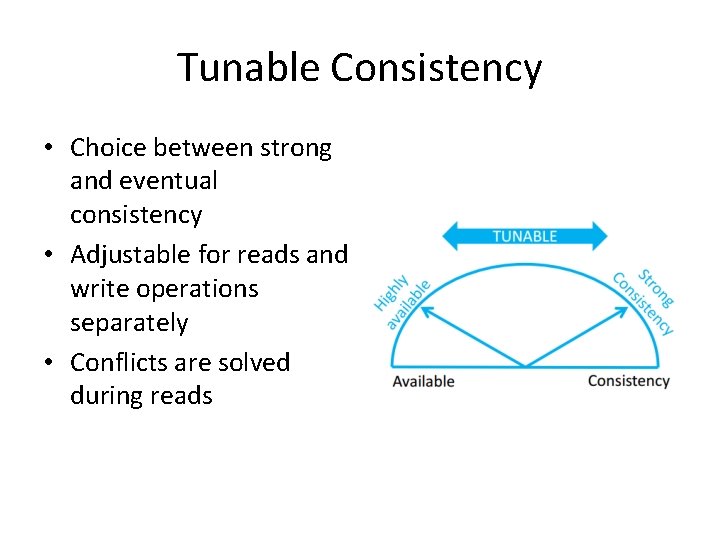

Tunable Consistency
• Choice between strong and eventual consistency
• Adjustable for read and write operations separately
• Conflicts are resolved during reads
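
The choice between strong and eventual consistency is often summarized by the overlap rule R + W > N, where N is the replication factor, W is the number of replicas that must acknowledge a write, and R is the number consulted on a read. The sketch below encodes that rule; the function names are hypothetical and this is not driver code.

```python
def quorum(replication_factor: int) -> int:
    """Number of replicas that forms a strict majority."""
    return replication_factor // 2 + 1


def is_strongly_consistent(n: int, r: int, w: int) -> bool:
    """True if every read set must overlap every write set (R + W > N)."""
    return r + w > n


if __name__ == "__main__":
    n = 3
    q = quorum(n)
    print(is_strongly_consistent(n, r=q, w=q))  # quorum reads and quorum writes
    print(is_strongly_consistent(n, r=1, w=1))  # fastest, eventually consistent
    print(is_strongly_consistent(n, r=1, w=n))  # cheap reads, expensive writes
```

Tuning reads and writes separately lets a workload pay the latency cost on whichever side it can better afford.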

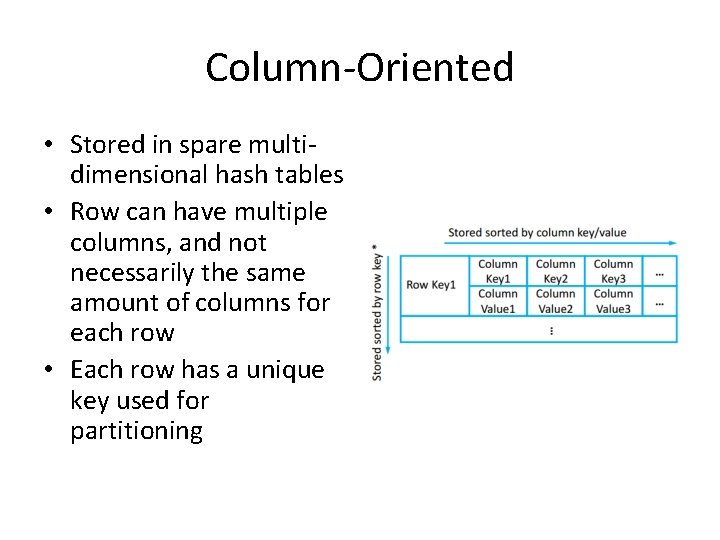

Column-Oriented
• Stored in sparse multidimensional hash tables
• A row can have multiple columns, and not necessarily the same number of columns as other rows
• Each row has a unique key used for partitioning
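
A sparse multidimensional hash table can be pictured as a map from row key to a map of column name to value. The class below is a conceptual sketch of that data model only, not of Cassandra's storage engine, and `SparseTable` is a hypothetical name.

```python
from collections import defaultdict
from typing import Any


class SparseTable:
    """Row key -> {column name -> value}; absent columns take no space."""

    def __init__(self) -> None:
        self._rows: dict[str, dict[str, Any]] = defaultdict(dict)

    def put(self, row_key: str, column: str, value: Any) -> None:
        self._rows[row_key][column] = value

    def get(self, row_key: str, column: str, default: Any = None) -> Any:
        # Use .get on the outer map so a lookup never creates an empty row.
        return self._rows.get(row_key, {}).get(column, default)

    def columns(self, row_key: str) -> list[str]:
        return sorted(self._rows.get(row_key, {}))


if __name__ == "__main__":
    table = SparseTable()
    table.put("user1", "name", "Ann")
    table.put("user1", "email", "ann@example.com")
    table.put("user2", "name", "Bob")  # no email column for this row
    print(table.columns("user1"), table.columns("user2"))
```

Each row carries only the columns it actually has, which is what lets rows in the same table differ in shape.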

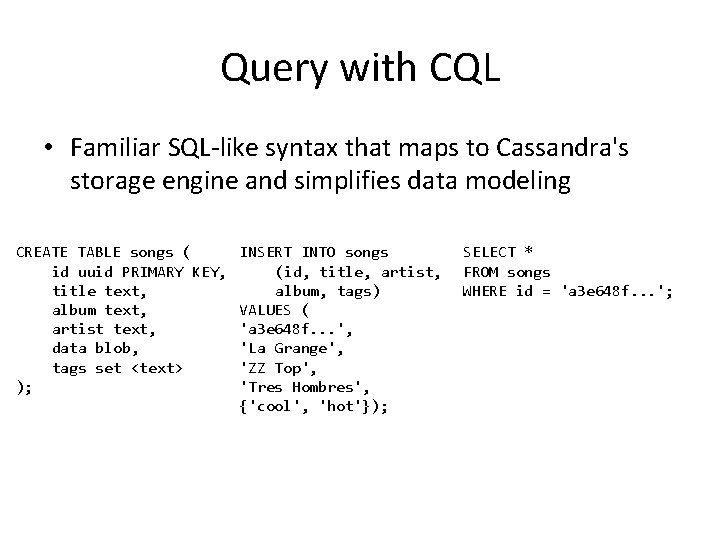

Query with CQL
• Familiar SQL-like syntax that maps to Cassandra's storage engine and simplifies data modeling

    CREATE TABLE songs (
        id uuid PRIMARY KEY,
        title text,
        album text,
        artist text,
        data blob,
        tags set<text>
    );

    INSERT INTO songs (id, title, artist, album, tags)
    VALUES ('a3e648f...', 'La Grange', 'ZZ Top', 'Tres Hombres', {'cool', 'hot'});

    SELECT * FROM songs WHERE id = 'a3e648f...';

When should I use this?
• Key features to complement a Hadoop system:
  – Geographical distribution
  – Large deployments of structured data

CLUSTER PLANNING

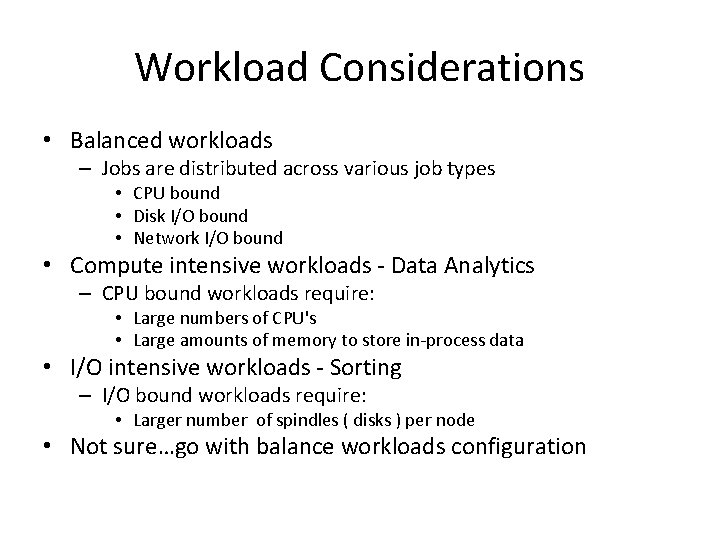

Workload Considerations
• Balanced workloads
  – Jobs are distributed across various job types
    • CPU bound
    • Disk I/O bound
    • Network I/O bound
• Compute intensive workloads - Data Analytics
  – CPU bound workloads require:
    • Large numbers of CPUs
    • Large amounts of memory to store in-process data
• I/O intensive workloads - Sorting
  – I/O bound workloads require:
    • Larger number of spindles (disks) per node
• Not sure… go with the balanced workload configuration

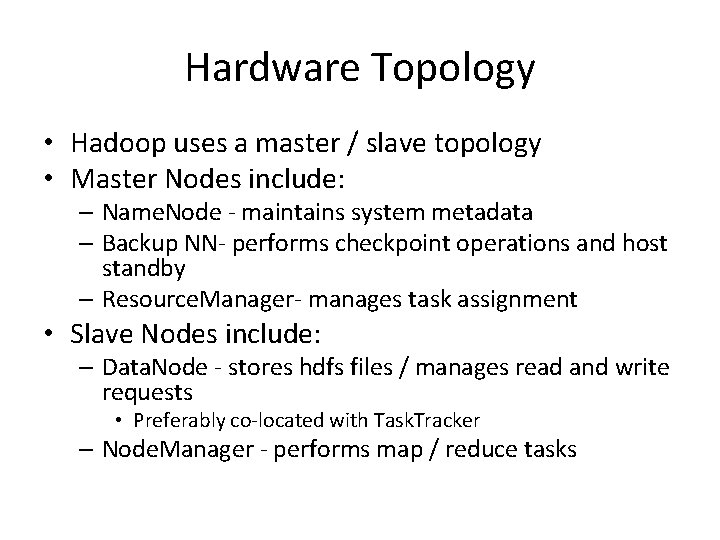

Hardware Topology
• Hadoop uses a master / slave topology
• Master Nodes include:
  – NameNode - maintains system metadata
  – Backup NN - performs checkpoint operations and hosts the standby
  – ResourceManager - manages task assignment
• Slave Nodes include:
  – DataNode - stores HDFS files / manages read and write requests
    • Preferably co-located with the NodeManager (TaskTracker in Hadoop 1)
  – NodeManager - performs map / reduce tasks

Sizing The Cluster
• Remember... Scaling is a relatively simple task
  – Start with a moderate sized cluster
  – Grow the cluster as requirements dictate
  – Develop a scaling strategy
• As simple as scaling is… adding new nodes takes time and resources
• Don't want to be adding new nodes each week
• Amount of data typically defines initial cluster size
  – Rate at which the volume of data increases
• Drivers for determining when to grow your cluster
  – Storage requirements
  – Processing requirements
  – Memory requirements

Storage Reqs Drive Cluster Growth
• Data volume increases at a rate of 1 TB / week
• 3 TB of storage are required to store the data alone
  – Remember block replication
• Consider additional overhead - typically 30%
  – Remember files that are stored on a node's local disk
• If DataNodes incorporate four 1 TB drives
  – 1 new node per week is required
  – 2 years of data - roughly 100 TB
    • Will require 100 new nodes
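
The arithmetic on this slide can be checked with a short calculation. This is a sketch under stated assumptions: the 30% overhead is applied on top of the replicated volume, and each node contributes its full 4 TB. The function and parameter names are hypothetical.

```python
import math


def raw_tb_per_week(
    ingest_tb: float, replication: int = 3, overhead: float = 0.30
) -> float:
    """Raw disk consumed per week: ingest x replication, plus overhead."""
    return ingest_tb * replication * (1 + overhead)


def nodes_needed(
    weeks: int,
    ingest_tb: float = 1.0,
    node_capacity_tb: float = 4.0,
    replication: int = 3,
    overhead: float = 0.30,
) -> int:
    """Whole nodes required to hold `weeks` of data."""
    total_tb = raw_tb_per_week(ingest_tb, replication, overhead) * weeks
    return math.ceil(total_tb / node_capacity_tb)


if __name__ == "__main__":
    print(raw_tb_per_week(1.0))   # about 3.9 TB of raw disk per week
    print(nodes_needed(1))        # one 4 TB node covers one week
    print(nodes_needed(104))      # two years, in line with "roughly 100"
```

With these inputs, 1 TB of new data consumes about 3.9 TB of raw disk, so a node with four 1 TB drives fills in roughly a week.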

Things Break
• Things are going to break
  – This assumption is a core premise of Hadoop
  – If a disk fails, the infrastructure must accommodate
  – If a DataNode fails, the NameNode must manage this
  – If a task fails, the ApplicationMaster must manage this failure
• Master nodes are typically a SPOF unless using a Highly Available configuration
  – NameNode goes down, HDFS is inaccessible
    • Use NameNode HA
  – ResourceManager goes down, can't run any jobs
    • Use RM HA (in development)

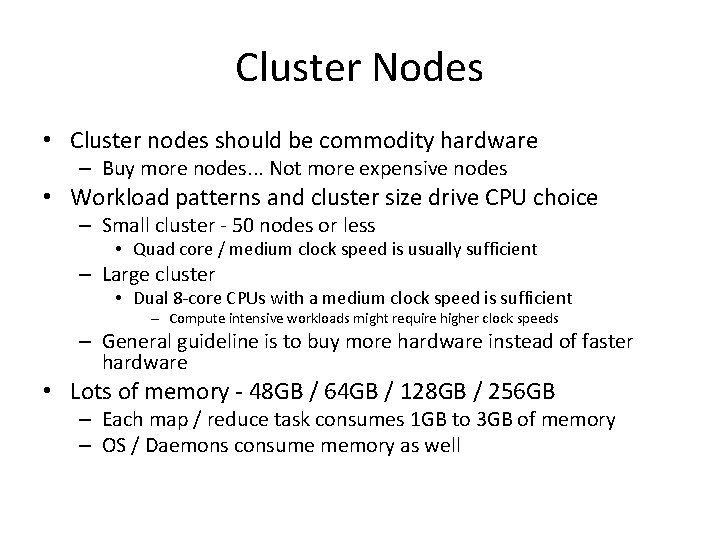

Cluster Nodes
• Cluster nodes should be commodity hardware
  – Buy more nodes... Not more expensive nodes
• Workload patterns and cluster size drive CPU choice
  – Small cluster - 50 nodes or less
    • Quad core / medium clock speed is usually sufficient
  – Large cluster
    • Dual 8-core CPUs with a medium clock speed are sufficient
  – Compute intensive workloads might require higher clock speeds
  – General guideline is to buy more hardware instead of faster hardware
• Lots of memory - 48 GB / 64 GB / 128 GB / 256 GB
  – Each map / reduce task consumes 1 GB to 3 GB of memory
  – OS / Daemons consume memory as well
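
The memory guidance on this slide translates into a rough bound on concurrent tasks per node. The sketch below assumes a fixed reservation for the OS and Hadoop daemons; the 8 GB default is a hypothetical figure chosen for illustration, not a recommendation from the slides.

```python
def max_concurrent_tasks(
    node_ram_gb: float, per_task_gb: float, reserved_gb: float = 8
) -> int:
    """Tasks that fit in RAM after reserving memory for the OS and daemons."""
    usable_gb = node_ram_gb - reserved_gb
    return max(0, int(usable_gb // per_task_gb))


if __name__ == "__main__":
    for ram in (48, 64, 128, 256):
        # Bracket the slide's 1 GB to 3 GB per-task range.
        print(ram, max_concurrent_tasks(ram, 3), max_concurrent_tasks(ram, 1))
```

Comparing this bound with the node's core count shows whether memory or CPU runs out first for a given workload.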

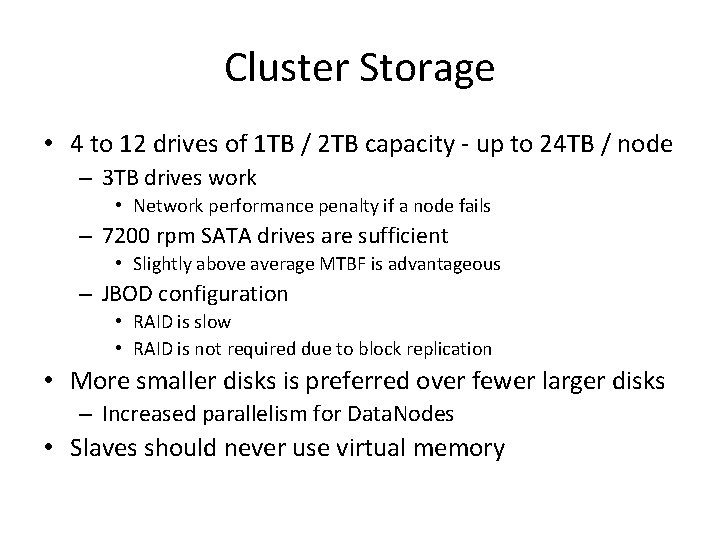

Cluster Storage
• 4 to 12 drives of 1 TB / 2 TB capacity - up to 24 TB / node
  – 3 TB drives work
    • Network performance penalty if a node fails
  – 7200 rpm SATA drives are sufficient
    • Slightly above average MTBF is advantageous
  – JBOD configuration
    • RAID is slow
    • RAID is not required due to block replication
• More, smaller disks are preferred over fewer, larger disks
  – Increased parallelism for DataNodes
• Slaves should never use virtual memory (swap)

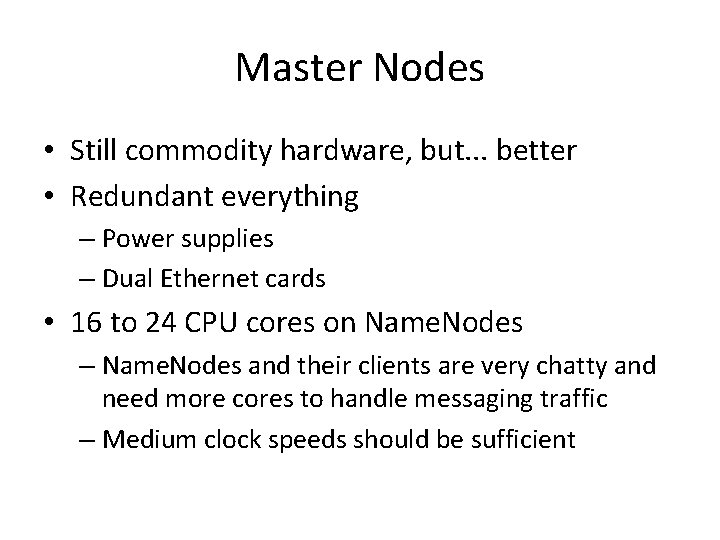

Master Nodes
• Still commodity hardware, but... better
• Redundant everything
  – Power supplies
  – Dual Ethernet cards
• 16 to 24 CPU cores on NameNodes
  – NameNodes and their clients are very chatty and need more cores to handle messaging traffic
  – Medium clock speeds should be sufficient

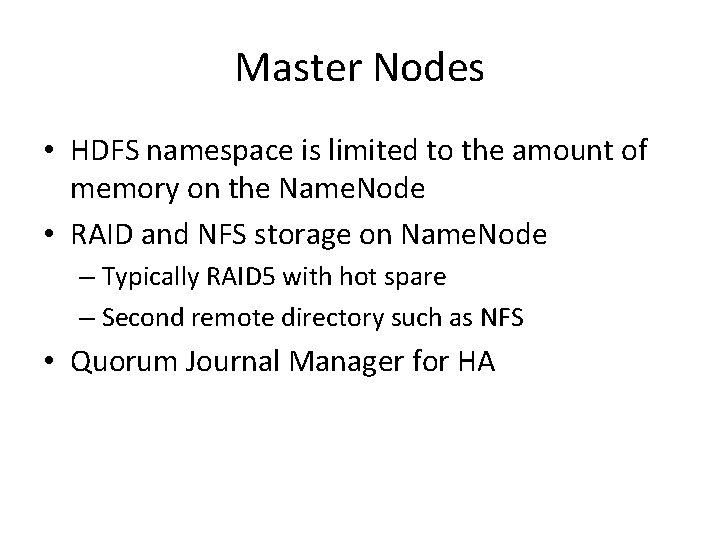

Master Nodes
• HDFS namespace is limited to the amount of memory on the NameNode
• RAID and NFS storage on NameNode
  – Typically RAID 5 with hot spare
  – Second remote directory such as NFS
• Quorum Journal Manager for HA
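
Because the namespace lives in NameNode memory, heap size can be estimated from the number of files and blocks. The sketch below assumes roughly 150 bytes of heap per namespace object (file or block), a commonly quoted rule of thumb rather than a measured value. Real usage varies with path lengths and Hadoop version, and `estimate_heap_gb` is a hypothetical helper.

```python
# Assumption: a commonly quoted rule of thumb, not a measured value.
BYTES_PER_OBJECT = 150


def estimate_heap_gb(
    n_files: int,
    blocks_per_file: float = 1.0,
    bytes_per_object: int = BYTES_PER_OBJECT,
) -> float:
    """Approximate NameNode heap (GiB) for n_files, counting files and blocks."""
    objects = n_files * (1 + blocks_per_file)
    return objects * bytes_per_object / 1024**3


if __name__ == "__main__":
    for files in (10_000_000, 100_000_000, 1_000_000_000):
        print(files, round(estimate_heap_gb(files), 1))
```

The estimate grows linearly with object count, which is why many small files are a concern and why federation splits the namespace across NameNodes.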

Network Considerations
• Hadoop is bandwidth intensive
  – This can be a significant bottleneck
  – Use dedicated switches
• 10 Gb Ethernet is pretty good for large clusters

Which Operating System?
• Choose an OS that you are comfortable and familiar with
  – Consider your admin resources / experience
• Red Hat Enterprise Linux
  – Includes support contract
• CentOS
  – No support but the price is right
• Many other possibilities
  – SUSE Enterprise Linux
  – Ubuntu
  – Fedora

Which Java Virtual Machine?
• Oracle Java is the only “supported” JVM
  – Runs on OpenJDK, but use at your own risk
• Hadoop 1.0 requires Java JDK 1.6 or higher
• Hadoop 2.x requires Java JDK 1.7

