Workflow Management
CMSC 491 Hadoop-Based Distributed Computing
Spring 2016
Adam Shook

APACHE OOZIE

Problem!
• "Okay, Hadoop is great, but how do people actually do this?" – A Real Person
  – Package jobs?
  – Chain actions together?
  – Run them on a schedule?
  – Pre- and post-processing?
  – Retry failures?

Apache Oozie: Workflow Scheduler for Hadoop
• A scalable, reliable, and extensible workflow scheduler system for managing Apache Hadoop jobs
• Workflow jobs are directed acyclic graphs (DAGs) of actions
• Coordinator jobs are recurrent Oozie workflow jobs triggered by time and data availability
• Supports several types of jobs:
  – Java MapReduce
  – Streaming MapReduce
  – Pig
  – Hive
  – Sqoop
  – DistCp
  – Java programs
  – Shell scripts

Why should I care?
• Retry jobs in the event of a failure
• Execute jobs at a specific time or when data is available
• Correctly order job execution based on dependencies
• Provide a common framework for communication
• Use the workflow to couple resources instead of some home-grown code base

Layers of Oozie
• Bundles
• Coordinators
• Workflows
• Actions

Actions
• Each action has a type, and each type has a defined set of configuration variables
• Each action must specify what to do on success and on failure
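The sketch below illustrates both points with a hypothetical `fs` action. The node names `cleanup`, `next-step`, and `kill`, the `${nameNode}` parameter, and the paths are all assumptions. The `fs` element is the action type, and its children are that type's configuration. The `ok` and `error` elements name the next node on success and on failure.

```xml
<!-- Hypothetical fs action; the element name (fs) is the action type -->
<action name="cleanup">
  <fs>
    <!-- Type-specific configuration: HDFS commands, run in the order listed -->
    <delete path="${nameNode}/user/${wf:user()}/wordcount/output"/>
    <mkdir path="${nameNode}/user/${wf:user()}/wordcount/tmp"/>
  </fs>
  <!-- Where to go if every command succeeds -->
  <ok to="next-step"/>
  <!-- Where to go if any command fails -->
  <error to="kill"/>
</action>
```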

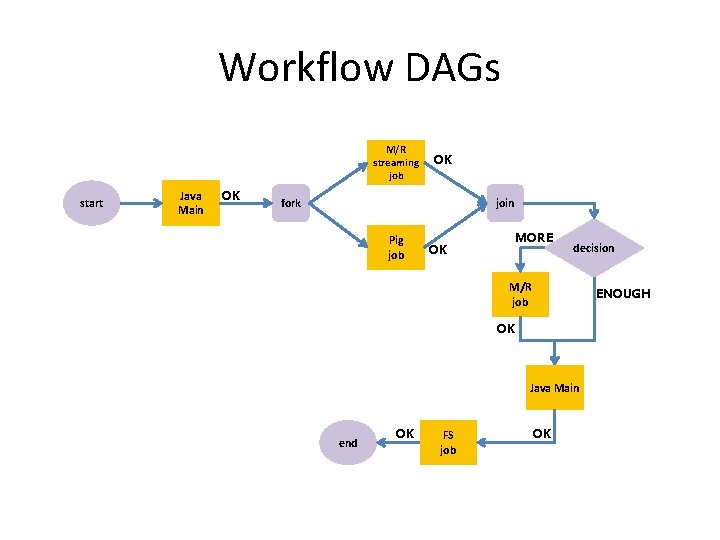

Workflow DAGs
[Figure: example workflow DAG from start to end with Java Main, M/R streaming, Pig, M/R, and FS job actions, a fork/join pair, and a decision node with MORE and ENOUGH branches; nodes are connected by OK transitions]
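The following is a minimal workflow.xml sketch, loosely modeled on the DAG above, that shows how fork, join, and decision nodes are written. The node names, the `${jobTracker}` and `${nameNode}` parameters, `script.pig`, and the `moreWork` property are all hypothetical, and the MapReduce body is trimmed. Oozie rejects workflows that contain cycles, so the decision node can only branch forward.

```xml
<workflow-app name="dag-sketch" xmlns="uri:oozie:workflow:0.4">
  <start to="fork-node"/>

  <!-- fork: start both paths concurrently -->
  <fork name="fork-node">
    <path start="pig-node"/>
    <path start="mr-node"/>
  </fork>

  <action name="pig-node">
    <pig>
      <job-tracker>${jobTracker}</job-tracker>
      <name-node>${nameNode}</name-node>
      <script>script.pig</script>
    </pig>
    <ok to="join-node"/>
    <error to="kill"/>
  </action>

  <action name="mr-node">
    <map-reduce>
      <job-tracker>${jobTracker}</job-tracker>
      <name-node>${nameNode}</name-node>
      <!-- mapper/reducer configuration omitted in this sketch -->
    </map-reduce>
    <ok to="join-node"/>
    <error to="kill"/>
  </action>

  <!-- join: continue only after both forked paths arrive -->
  <join name="join-node" to="decision-node"/>

  <!-- decision: switch-case on a (hypothetical) job property -->
  <decision name="decision-node">
    <switch>
      <case to="java-node">${wf:conf('moreWork') eq 'true'}</case>
      <default to="end"/>
    </switch>
  </decision>

  <action name="java-node">
    <java>
      <job-tracker>${jobTracker}</job-tracker>
      <name-node>${nameNode}</name-node>
      <main-class>a.b.c.MyMainClass</main-class>
    </java>
    <ok to="end"/>
    <error to="kill"/>
  </action>

  <kill name="kill">
    <message>Failed at ${wf:lastErrorNode()}: ${wf:errorMessage(wf:lastErrorNode())}</message>
  </kill>
  <end name="end"/>
</workflow-app>
```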

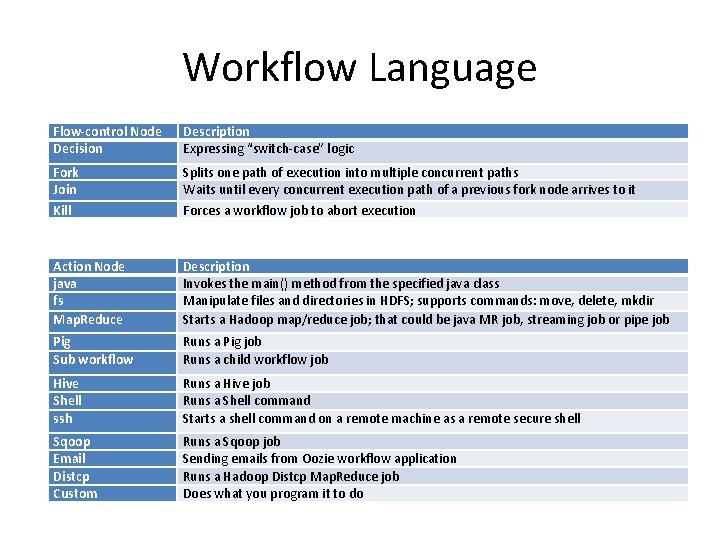

Workflow Language

| Flow-control Node | Description |
|---|---|
| Decision | Expresses "switch-case" logic |
| Fork | Splits one path of execution into multiple concurrent paths |
| Join | Waits until every concurrent execution path of a previous fork node arrives at it |
| Kill | Forces a workflow job to abort execution |

| Action Node | Description |
|---|---|
| java | Invokes the main() method of the specified Java class |
| fs | Manipulates files and directories in HDFS; supports commands such as move, delete, and mkdir |
| MapReduce | Starts a Hadoop map/reduce job; this can be a Java MR job, a streaming job, or a pipes job |
| Pig | Runs a Pig job |
| Sub-workflow | Runs a child workflow job |
| Hive | Runs a Hive job |
| Shell | Runs a shell command |
| ssh | Starts a shell command on a remote machine as a remote secure shell |
| Sqoop | Runs a Sqoop job |
| Email | Sends emails from an Oozie workflow application |
| DistCp | Runs a Hadoop DistCp MapReduce job |
| Custom | Does what you program it to do |
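As one example from the action table, a sub-workflow action might look like the sketch below. The child application path, the `inputDir` property, and the node names are hypothetical. `<propagate-configuration/>` passes the parent job's configuration down to the child workflow.

```xml
<!-- Hypothetical sub-workflow action that runs a child workflow application -->
<action name="child-wf">
  <sub-workflow>
    <!-- HDFS directory holding the child's workflow.xml -->
    <app-path>${nameNode}/user/${wf:user()}/apps/child-wf</app-path>
    <!-- Hand the parent's job configuration to the child -->
    <propagate-configuration/>
    <configuration>
      <property>
        <name>inputDir</name>
        <value>${inputDir}</value>
      </property>
    </configuration>
  </sub-workflow>
  <ok to="end"/>
  <error to="kill"/>
</action>
```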

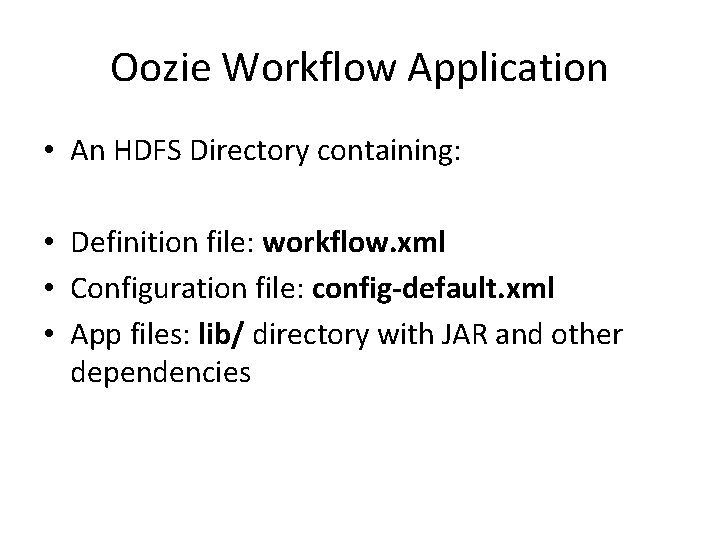

Oozie Workflow Application
• An HDFS directory containing:
  – Definition file: workflow.xml
  – Configuration file: config-default.xml
  – App files: lib/ directory with JARs and other dependencies
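The sketch below shows one such directory and its config-default.xml. It assumes a hypothetical `wordcount-wf` application, reuses the placeholder hosts from the WordCount example, and uses `inputDir` as an illustrative parameter. config-default.xml supplies default values for the `${...}` parameters in workflow.xml, and values given in job.properties at submission time take precedence.

```xml
<!--
  Hypothetical layout in HDFS:
    wordcount-wf/workflow.xml
    wordcount-wf/config-default.xml   (this file)
    wordcount-wf/lib/wordcount.jar
-->
<configuration>
  <property>
    <name>jobTracker</name>
    <value>foo.com:9001</value>
  </property>
  <property>
    <name>nameNode</name>
    <value>hdfs://bar.com:9000</value>
  </property>
  <!-- Illustrative default; override it in job.properties if needed -->
  <property>
    <name>inputDir</name>
    <value>/user/hadoop/wordcount/input</value>
  </property>
</configuration>
```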

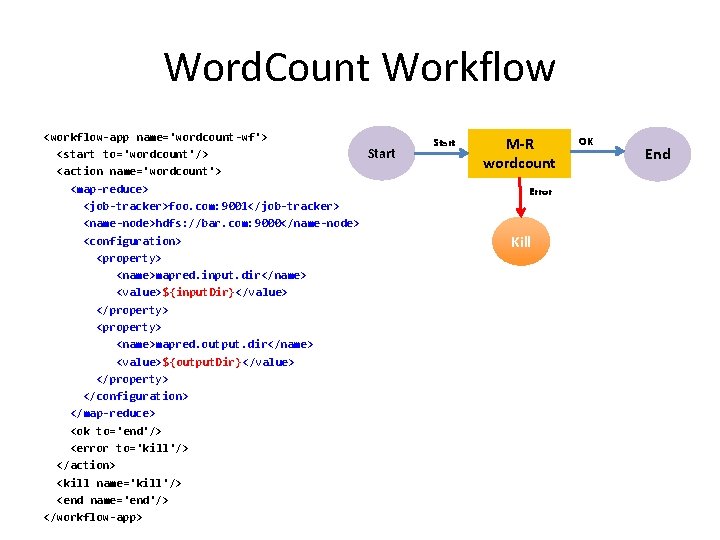

WordCount Workflow

```xml
<workflow-app name='wordcount-wf' xmlns='uri:oozie:workflow:0.1'>
  <start to='wordcount'/>
  <action name='wordcount'>
    <map-reduce>
      <job-tracker>foo.com:9001</job-tracker>
      <name-node>hdfs://bar.com:9000</name-node>
      <configuration>
        <property>
          <name>mapred.input.dir</name>
          <value>${inputDir}</value>
        </property>
        <property>
          <name>mapred.output.dir</name>
          <value>${outputDir}</value>
        </property>
      </configuration>
    </map-reduce>
    <ok to='end'/>
    <error to='kill'/>
  </action>
  <kill name='kill'>
    <message>WordCount failed: ${wf:errorMessage(wf:lastErrorNode())}</message>
  </kill>
  <end name='end'/>
</workflow-app>
```

[Figure: Start → M-R wordcount; OK → End, Error → Kill]
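The WordCount action above sets only the input and output directories. A working MapReduce action also has to name the mapper, the reducer, and the output types. The sketch below shows a fuller `<configuration>` block. It assumes hypothetical classes `org.myorg.WordCountMapper` and `org.myorg.WordCountReducer`, written against the old `org.apache.hadoop.mapred` API and packaged under lib/. Jobs written for the newer `mapreduce` API need different properties.

```xml
<configuration>
  <!-- Hypothetical old-API mapper and reducer classes shipped in lib/ -->
  <property>
    <name>mapred.mapper.class</name>
    <value>org.myorg.WordCountMapper</value>
  </property>
  <property>
    <name>mapred.reducer.class</name>
    <value>org.myorg.WordCountReducer</value>
  </property>
  <!-- Output types: word (Text) and count (IntWritable) -->
  <property>
    <name>mapred.output.key.class</name>
    <value>org.apache.hadoop.io.Text</value>
  </property>
  <property>
    <name>mapred.output.value.class</name>
    <value>org.apache.hadoop.io.IntWritable</value>
  </property>
  <property>
    <name>mapred.input.dir</name>
    <value>${inputDir}</value>
  </property>
  <property>
    <name>mapred.output.dir</name>
    <value>${outputDir}</value>
  </property>
</configuration>
```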

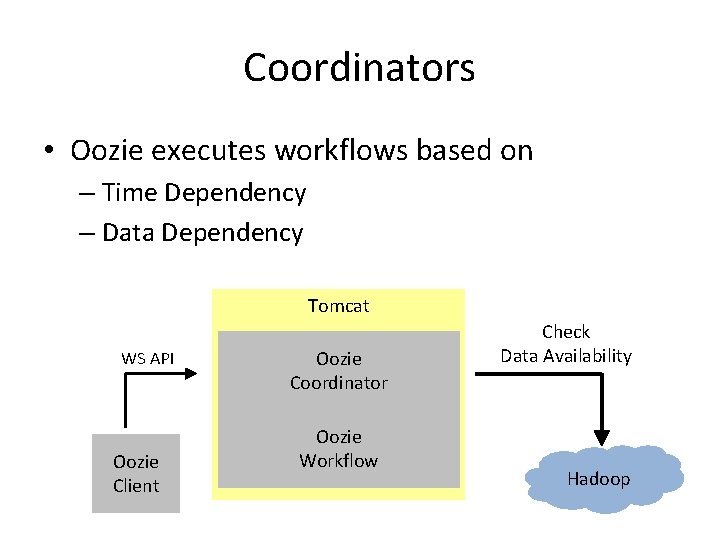

Coordinators
• Oozie executes workflows based on:
  – Time dependency
  – Data dependency

[Figure: Oozie architecture with Oozie Client, Tomcat (WS API), Oozie Coordinator, Oozie Workflow, and Hadoop; the coordinator checks data availability]
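The sketch below shows a coordinator that combines both kinds of dependency. The time dependency is a daily frequency between `start` and `end`. The data dependency is an input event on an hourly dataset. The dataset name, the HDFS paths, the dates, and the `inputDir` property are hypothetical. Oozie creates one action per day but starts its workflow only once the 24 preceding hourly directories are available, as determined by the dataset's done-flag rules.

```xml
<coordinator-app name="daily-logs-coord" frequency="${coord:days(1)}"
                 start="2016-03-01T00:00Z" end="2016-06-01T00:00Z" timezone="UTC"
                 xmlns="uri:oozie:coordinator:0.4">
  <datasets>
    <!-- Hypothetical dataset: one log directory is produced every hour -->
    <dataset name="hourly-logs" frequency="${coord:hours(1)}"
             initial-instance="2016-03-01T00:00Z" timezone="UTC">
      <uri-template>hdfs://bar.com:9000/data/logs/${YEAR}/${MONTH}/${DAY}/${HOUR}</uri-template>
    </dataset>
  </datasets>
  <input-events>
    <!-- Data dependency: the 24 hourly instances before the nominal time -->
    <data-in name="input" dataset="hourly-logs">
      <start-instance>${coord:current(-24)}</start-instance>
      <end-instance>${coord:current(-1)}</end-instance>
    </data-in>
  </input-events>
  <action>
    <workflow>
      <app-path>hdfs://bar.com:9000/user/hadoop/oozie/app/wordcount-wf</app-path>
      <configuration>
        <property>
          <name>inputDir</name>
          <!-- Comma-separated list of the resolved input directories -->
          <value>${coord:dataIn('input')}</value>
        </property>
      </configuration>
    </workflow>
  </action>
</coordinator-app>
```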

Bundle
• Bundles are higher-level abstractions that batch a set of coordinators together
• There are no explicit dependencies between the coordinators, but a bundle can be used to define a pipeline
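Here is a bundle sketch that groups two hypothetical coordinators so they can be started, suspended, or killed as one unit. The names, paths, and kick-off time are assumptions. The bundle itself declares no ordering between the two coordinators. A pipeline would come from, for example, the report coordinator's dataset consuming the ingest coordinator's output.

```xml
<bundle-app name="logs-pipeline-bundle" xmlns="uri:oozie:bundle:0.2">
  <controls>
    <!-- When the bundle submits its coordinators -->
    <kick-off-time>2016-03-01T00:00Z</kick-off-time>
  </controls>
  <!-- Hypothetical coordinator applications stored in HDFS -->
  <coordinator name="ingest-coord">
    <app-path>hdfs://bar.com:9000/user/hadoop/oozie/coord/ingest</app-path>
  </coordinator>
  <coordinator name="report-coord">
    <app-path>hdfs://bar.com:9000/user/hadoop/oozie/coord/report</app-path>
    <configuration>
      <property>
        <name>reportDir</name>
        <value>/user/hadoop/reports</value>
      </property>
    </configuration>
  </coordinator>
</bundle-app>
```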

Interacting with Oozie
• Read-only web console
• CLI
• Java client
• Web service endpoints
• Directly with the Oozie DB using SQL

What do I need to deploy a workflow?
• coordinator.xml
• workflow.xml
• Libraries
• Properties
  – Contains things like NameNode and ResourceManager addresses and other job-specific properties

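Okay, I've built those
• Now you can put it in HDFS and run it:

```shell
hdfs dfs -put my_job oozie/app
oozie job -config job.properties -run
```

• job.properties must point at the uploaded directory through oozie.wf.application.path (or oozie.coord.application.path for a coordinator)
• The CLI finds the server through the -oozie option (e.g. http://<host>:11000/oozie) or the OOZIE_URL environment variable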

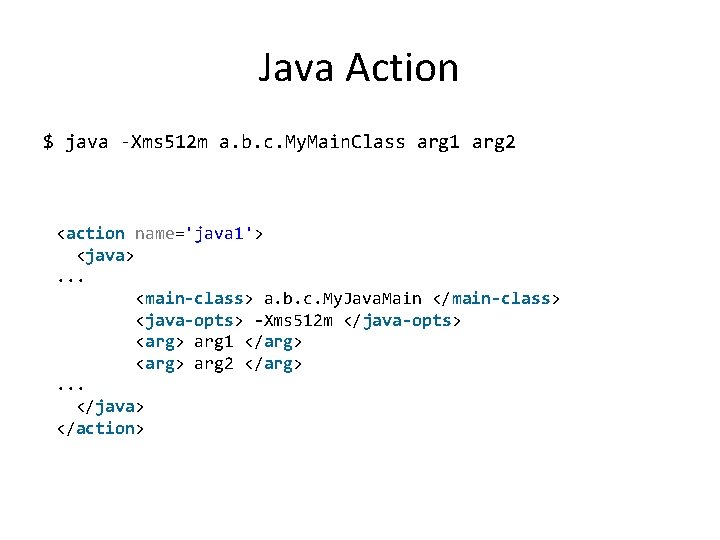

Java Action
• A Java action executes the main method of the specified Java class
• Java classes should be packaged in a JAR and placed in the workflow application's lib directory
  – wf-app-dir/workflow.xml
  – wf-app-dir/lib/myJavaClasses.jar

Java Action
• The command line

```shell
$ java -Xms512m a.b.c.MyMainClass arg1 arg2
```

• is expressed as a workflow action:

```xml
<action name='java1'>
  <java>
    ...
    <main-class>a.b.c.MyMainClass</main-class>
    <java-opts>-Xms512m</java-opts>
    <arg>arg1</arg>
    <arg>arg2</arg>
    ...
  </java>
</action>
```
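A later node may need a result from the Java program. For that case, the java action can capture output. In the sketch below, the `moreWork` key and the node names `check-output`, `mr-node`, and `kill` are hypothetical. With `<capture-output/>`, the main class writes a Java properties file to the path given in the `oozie.action.output.properties` system property, and later nodes read the values with `wf:actionData`. Oozie limits the size of captured output, so this mechanism suits small key=value results only.

```xml
<action name='java1'>
  <java>
    <job-tracker>${jobTracker}</job-tracker>
    <name-node>${nameNode}</name-node>
    <main-class>a.b.c.MyMainClass</main-class>
    <arg>arg1</arg>
    <arg>arg2</arg>
    <!-- The main class writes key=value pairs to the file named by
         the oozie.action.output.properties system property -->
    <capture-output/>
  </java>
  <ok to='check-output'/>
  <error to='kill'/>
</action>

<!-- Branch on a value captured from java1 ('moreWork' is hypothetical) -->
<decision name='check-output'>
  <switch>
    <case to='mr-node'>${wf:actionData('java1')['moreWork'] eq 'true'}</case>
    <default to='end'/>
  </switch>
</decision>
```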

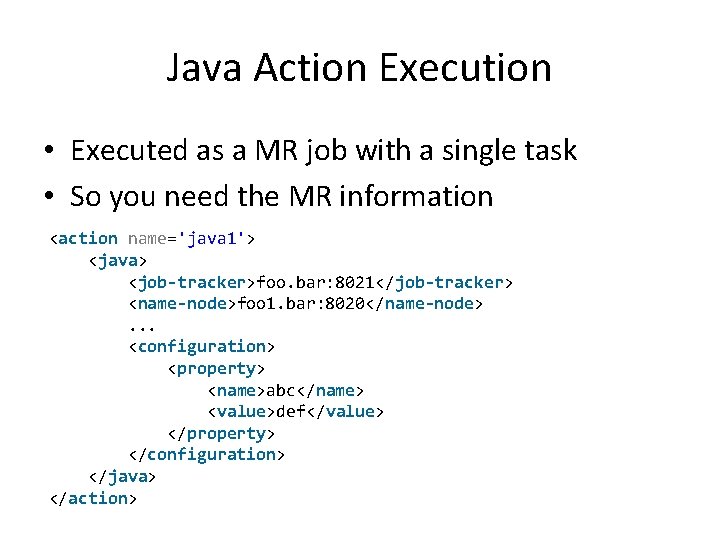

Java Action Execution
• Executed as an MR job with a single task
• So you need to supply the MR information (job tracker and name node)

```xml
<action name='java1'>
  <java>
    <job-tracker>foo.bar:8021</job-tracker>
    <name-node>hdfs://foo1.bar:8020</name-node>
    ...
    <configuration>
      <property>
        <name>abc</name>
        <value>def</value>
      </property>
    </configuration>
  </java>
</action>
```
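Repeating the MR information in every action gets tedious. Newer workflow schemas allow a `<global>` section whose values every action inherits; this sketch assumes `uri:oozie:workflow:0.4`. The workflow name and the `${jobTracker}` and `${nameNode}` parameters are hypothetical.

```xml
<workflow-app name="java-global-wf" xmlns="uri:oozie:workflow:0.4">
  <!-- Defaults inherited by every action in this workflow -->
  <global>
    <job-tracker>${jobTracker}</job-tracker>
    <name-node>${nameNode}</name-node>
  </global>
  <start to="java1"/>
  <action name="java1">
    <java>
      <!-- job-tracker and name-node are taken from <global> -->
      <main-class>a.b.c.MyMainClass</main-class>
    </java>
    <ok to="end"/>
    <error to="kill"/>
  </action>
  <kill name="kill">
    <message>Java action failed: ${wf:errorMessage(wf:lastErrorNode())}</message>
  </kill>
  <end name="end"/>
</workflow-app>
```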

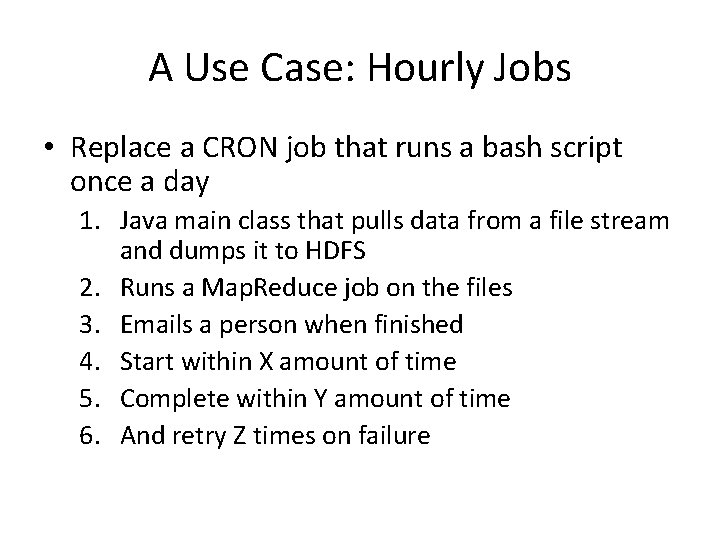

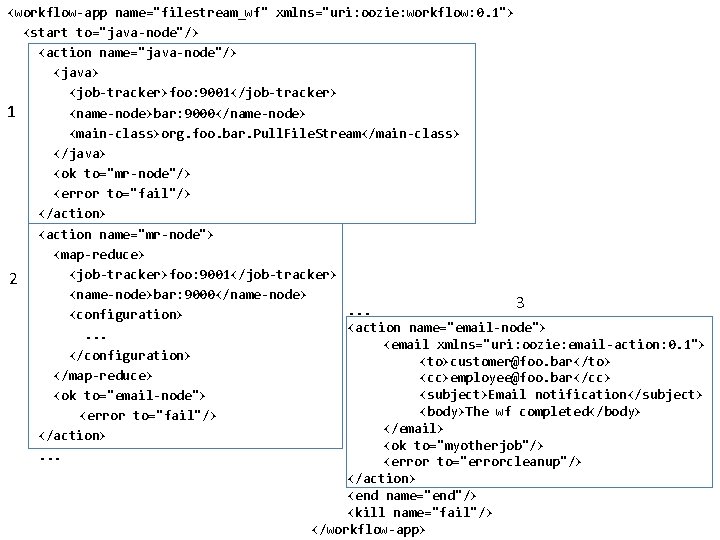

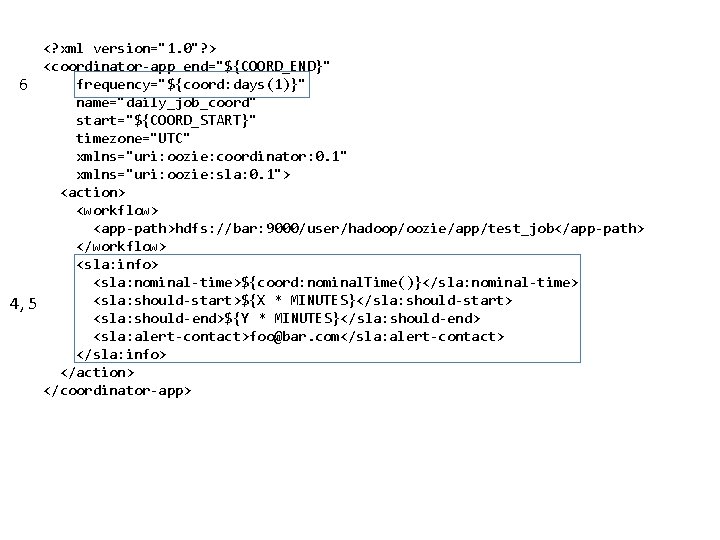

A Use Case: Daily Jobs
• Replace a CRON job that runs a bash script once a day:
  1. Pull data from a file stream into HDFS with a Java main class
  2. Run a MapReduce job on the files
  3. Email a person when finished
  4. Start within X amount of time
  5. Complete within Y amount of time
  6. Retry Z times on failure

```xml
<workflow-app name="filestream_wf" xmlns="uri:oozie:workflow:0.1">
  <start to="java-node"/>
  <!-- (1) Pull data from a file stream into HDFS -->
  <action name="java-node">
    <java>
      <job-tracker>foo:9001</job-tracker>
      <name-node>hdfs://bar:9000</name-node>
      <main-class>org.foo.bar.PullFileStream</main-class>
    </java>
    <ok to="mr-node"/>
    <error to="fail"/>
  </action>
  <!-- (2) Run a MapReduce job on the files -->
  <action name="mr-node">
    <map-reduce>
      <job-tracker>foo:9001</job-tracker>
      <name-node>hdfs://bar:9000</name-node>
      <configuration>
        ...
      </configuration>
    </map-reduce>
    <ok to="email-node"/>
    <error to="fail"/>
  </action>
  <!-- (3) Email a person when finished -->
  <action name="email-node">
    <email xmlns="uri:oozie:email-action:0.1">
      <to>customer@foo.bar</to>
      <cc>employee@foo.bar</cc>
      <subject>Email notification</subject>
      <body>The wf completed</body>
    </email>
    <ok to="myotherjob"/>
    <error to="errorcleanup"/>
  </action>
  ...
  <kill name="fail">
    <message>filestream_wf failed at ${wf:lastErrorNode()}</message>
  </kill>
  <end name="end"/>
</workflow-app>
```
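Item 6 (retry Z times) can be expressed on individual workflow actions through the user-retry attributes, which need a newer workflow schema than the `0.1` namespace used above. The sketch below uses 3 as a stand-in for Z and 10 minutes as an assumed interval. Oozie applies these retries only to error codes its server is configured to treat as retryable, so check your server's settings.

```xml
<!-- retry-max: number of retries (stand-in for Z); retry-interval: minutes between tries -->
<action name="java-node" retry-max="3" retry-interval="10">
  <java>
    <job-tracker>foo:9001</job-tracker>
    <name-node>hdfs://bar:9000</name-node>
    <main-class>org.foo.bar.PullFileStream</main-class>
  </java>
  <ok to="mr-node"/>
  <error to="fail"/>
</action>
```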

```xml
<?xml version="1.0"?>
<!-- (6) The coordinator has no retry setting; retries are set on workflow actions -->
<coordinator-app name="daily_job_coord" frequency="${coord:days(1)}"
                 start="${COORD_START}" end="${COORD_END}" timezone="UTC"
                 xmlns="uri:oozie:coordinator:0.1"
                 xmlns:sla="uri:oozie:sla:0.1">
  <action>
    <workflow>
      <app-path>hdfs://bar:9000/user/hadoop/oozie/app/test_job</app-path>
    </workflow>
    <sla:info>
      <sla:nominal-time>${coord:nominalTime()}</sla:nominal-time>
      <!-- (4) Expected to start within X minutes of the nominal time -->
      <sla:should-start>${X * MINUTES}</sla:should-start>
      <!-- (5) Expected to end within Y minutes of the nominal time -->
      <sla:should-end>${Y * MINUTES}</sla:should-end>
      <sla:alert-contact>foo@bar.com</sla:alert-contact>
    </sla:info>
  </action>
</coordinator-app>
```

Review
• Oozie ties together many Hadoop ecosystem components to "productionalize" this stuff
• Advanced control flow and action extensibility let Oozie do whatever you need at any point in the workflow
• XML is gross

