Ensemble Methods Daryl Kleist Univ of MarylandCollege Park

Ensemble Methods Daryl Kleist Univ. of Maryland-College Park, Dept. of Atmos. & Oceanic Science JCSDA Summer Colloquium on Data Assimilation Fort Collins, CO 27 July – 7 August 2015 Thanks to Kayo Ide (UMD) and Massimo Bonavita (ECMWF) for much of the inspiration and/or slides for this lecture. Acknowledgements also to Eugenia Kalnay (UMD) and Jeff Whitaker (NOAA/ESRL) 1

Outline I. Introduction: Sequential Filtering a) Kalman Filter b) Extended KF and RRKF II. The Ensemble Kalman Filter a) Stochastic Filter (Perturbed Observations) b) Serial/Square Root Filters (En. SRF, EAKF, LETKF) c) Technical Challenges i. Localization ii. Inflation d) Toy model example results III. Ensemble of Vars IV. Summary 2

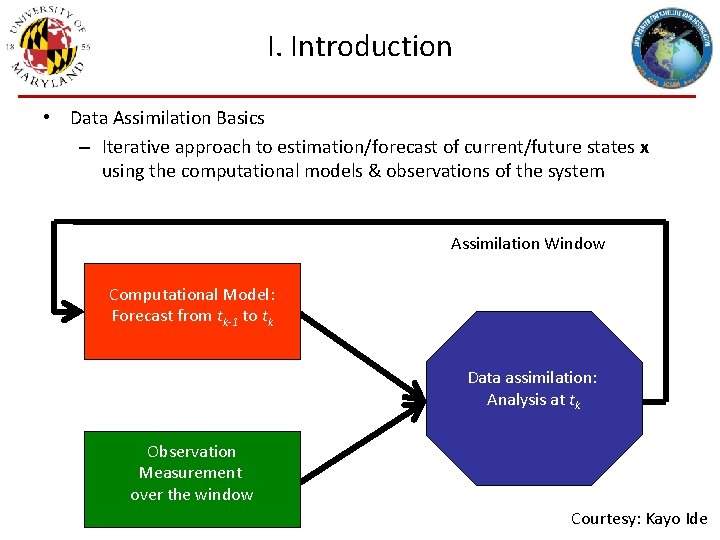

I. Introduction • Data Assimilation Basics – Iterative approach to estimation/forecast of current/future states x using the computational models & observations of the system Assimilation Window Computational Model: Forecast from tk-1 to tk Data assimilation: Analysis at tk Observation Measurement over the window Courtesy: Kayo Ide

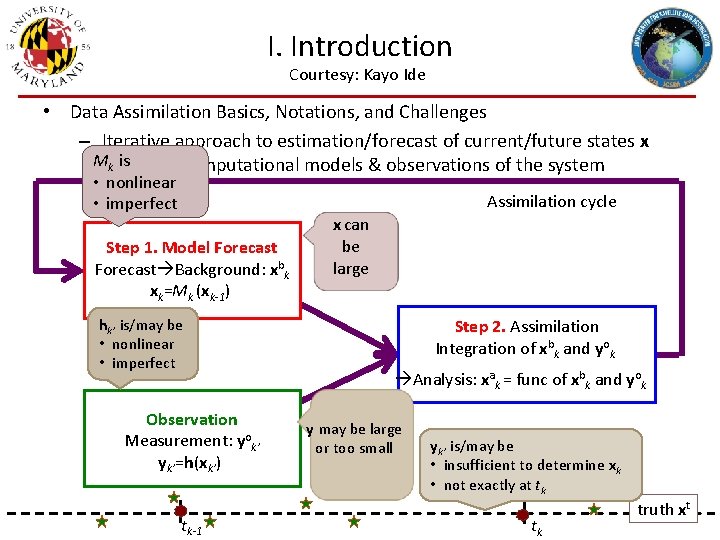

I. Introduction Courtesy: Kayo Ide • Data Assimilation Basics, Notations, and Challenges – Iterative approach to estimation/forecast of current/future states x Musing k is the computational models & observations of the system • nonlinear • imperfect Assimilation cycle Step 1. Model Forecast Background: xbk xk=Mk (xk-1) hk’ is/may be • nonlinear • imperfect Observation Measurement: yok’ yk’=h(xk’) tk-1 x can be large Step 2. Assimilation Integration of xbk and yok Analysis: xak = func of xbk and yok y may be large or too small yk’ is/may be • insufficient to determine xk • not exactly at tk tk truth xt

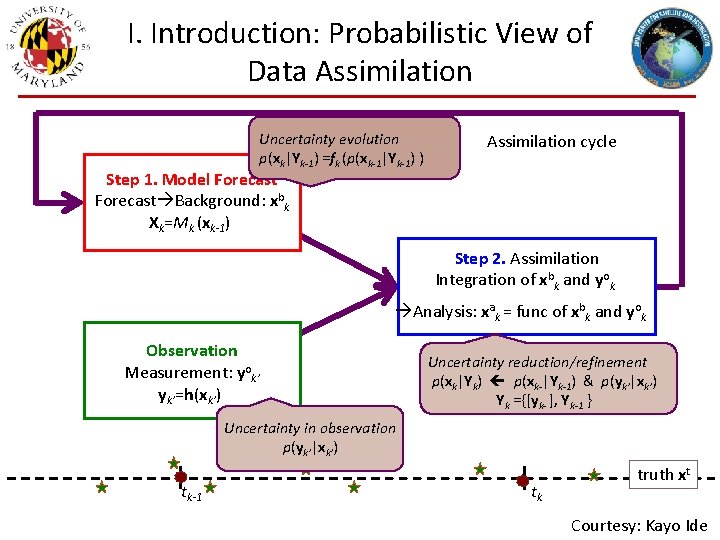

I. Introduction: Probabilistic View of Data Assimilation Uncertainty evolution p(xk|Yk-1) =fk (p(xk-1|Yk-1) ) Assimilation cycle Step 1. Model Forecast Background: xbk Xk=Mk (xk-1) Step 2. Assimilation Integration of xbk and yok Analysis: xak = func of xbk and yok Observation Measurement: yok’ yk’=h(xk’) Uncertainty reduction/refinement p(xk|Yk) p(xk-|Yk-1) & p(yk’|xk’) Yk ={[yk- ], Yk-1 } Uncertainty in observation p(yk’|xk’) tk-1 tk truth xt Courtesy: Kayo Ide

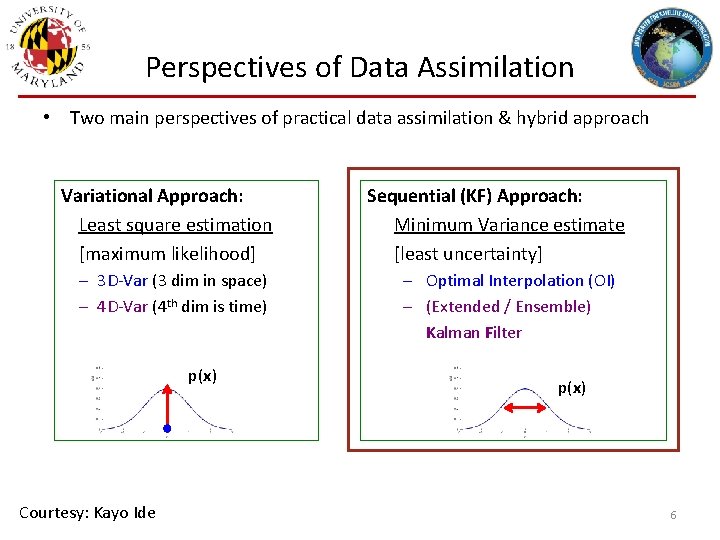

Perspectives of Data Assimilation • Two main perspectives of practical data assimilation & hybrid approach Variational Approach: Least square estimation [maximum likelihood] – 3 D-Var (3 dim in space) – 4 D-Var (4 th dim is time) p(x) Courtesy: Kayo Ide Sequential (KF) Approach: Minimum Variance estimate [least uncertainty] – Optimal Interpolation (OI) – (Extended / Ensemble) Kalman Filter p(x) 6

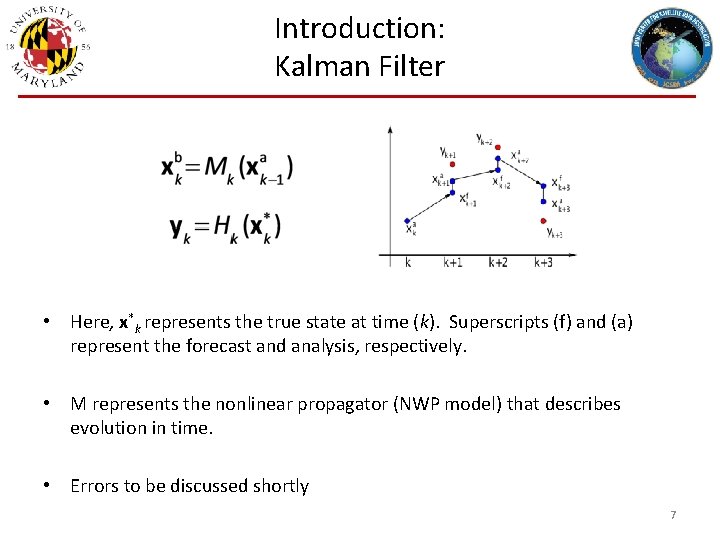

Introduction: Kalman Filter • Here, x*k represents the true state at time (k). Superscripts (f) and (a) represent the forecast and analysis, respectively. • M represents the nonlinear propagator (NWP model) that describes evolution in time. • Errors to be discussed shortly 7

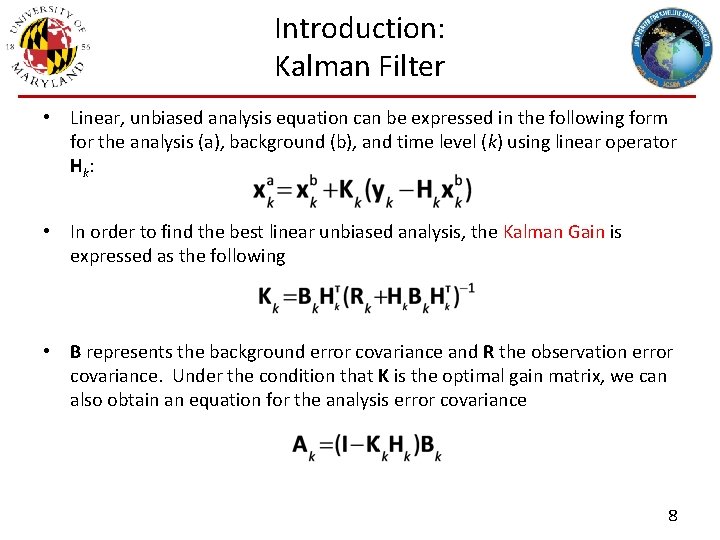

Introduction: Kalman Filter • Linear, unbiased analysis equation can be expressed in the following form for the analysis (a), background (b), and time level (k) using linear operator H k: • In order to find the best linear unbiased analysis, the Kalman Gain is expressed as the following • B represents the background error covariance and R the observation error covariance. Under the condition that K is the optimal gain matrix, we can also obtain an equation for the analysis error covariance 8

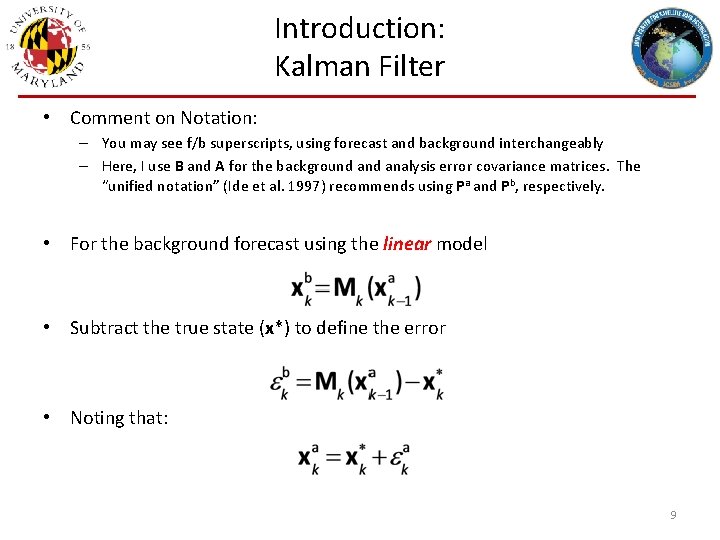

Introduction: Kalman Filter • Comment on Notation: – You may see f/b superscripts, using forecast and background interchangeably – Here, I use B and A for the background analysis error covariance matrices. The “unified notation” (Ide et al. 1997) recommends using Pa and Pb, respectively. • For the background forecast using the linear model • Subtract the true state (x*) to define the error • Noting that: 9

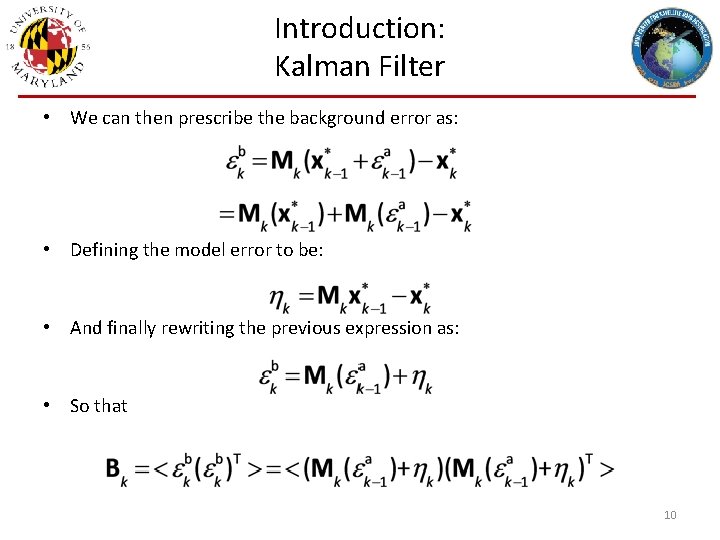

Introduction: Kalman Filter • We can then prescribe the background error as: • Defining the model error to be: • And finally rewriting the previous expression as: • So that 10

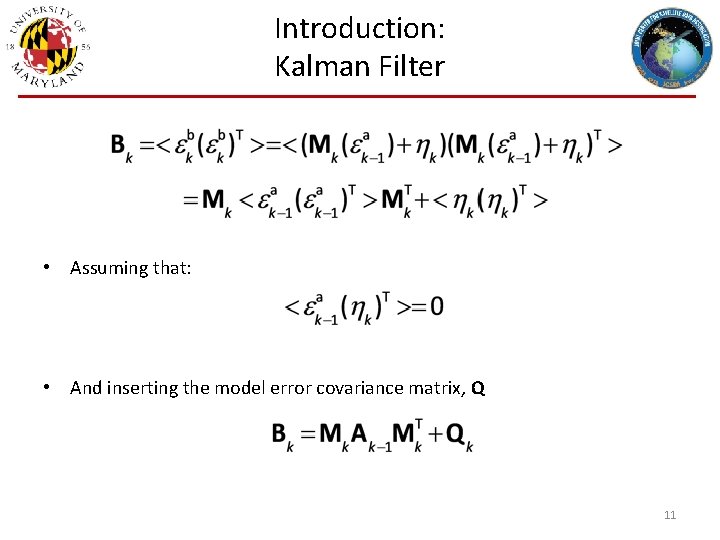

Introduction: Kalman Filter • Assuming that: • And inserting the model error covariance matrix, Q 11

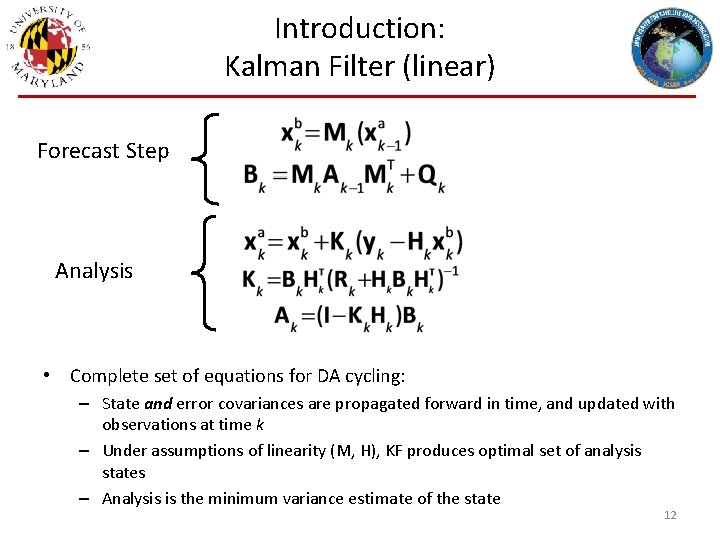

Introduction: Kalman Filter (linear) Forecast Step Analysis • Complete set of equations for DA cycling: – State and error covariances are propagated forward in time, and updated with observations at time k – Under assumptions of linearity (M, H), KF produces optimal set of analysis states – Analysis is the minimum variance estimate of the state 12

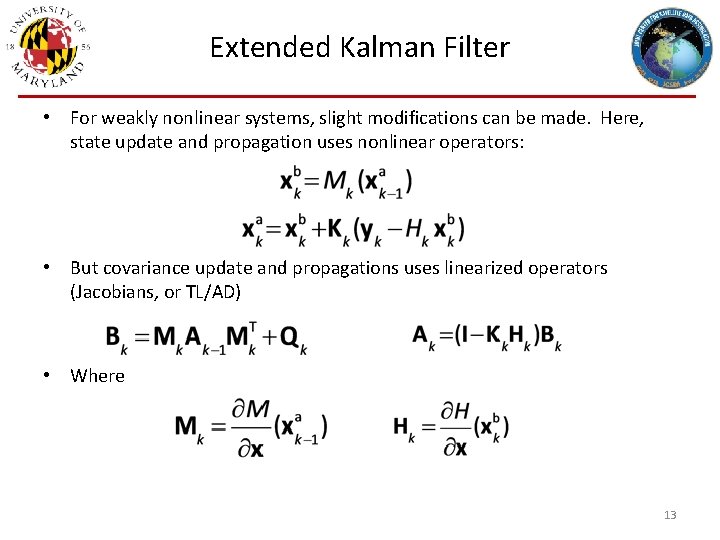

Extended Kalman Filter • For weakly nonlinear systems, slight modifications can be made. Here, state update and propagation uses nonlinear operators: • But covariance update and propagations uses linearized operators (Jacobians, or TL/AD) • Where 13

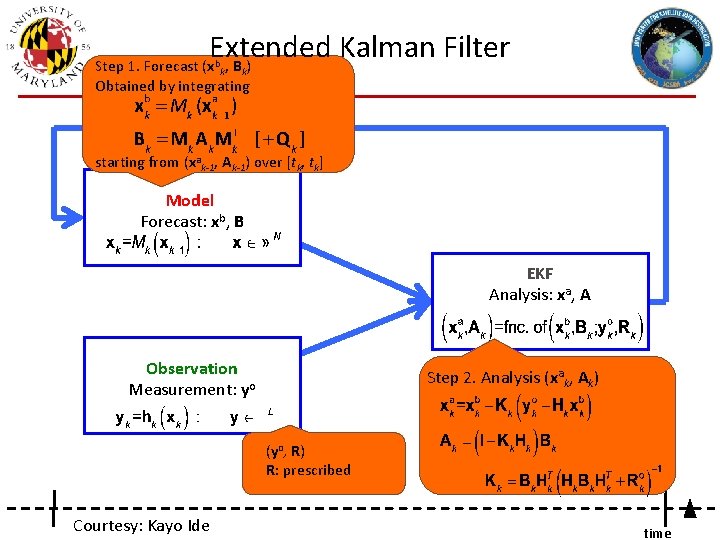

Extended Kalman Filter Step 1. Forecast (xbk, Bk) Obtained by integrating starting from (xak-1, Ak-1) over [tk, tk] Model Forecast: xb, B EKF Analysis: xa, A Observation Measurement: yo Step 2. Analysis (xak, Ak) (yo, R) R: prescribed Courtesy: Kayo Ide time

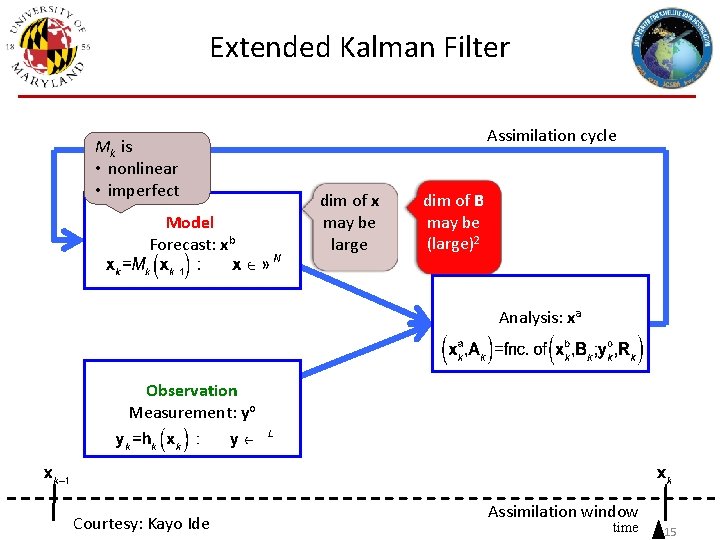

Extended Kalman Filter Mk is • nonlinear • imperfect Model Forecast: xb Assimilation cycle dim of x may be large dim of B may be (large)2 Analysis: xa Observation Measurement: yo Courtesy: Kayo Ide Assimilation window time 15

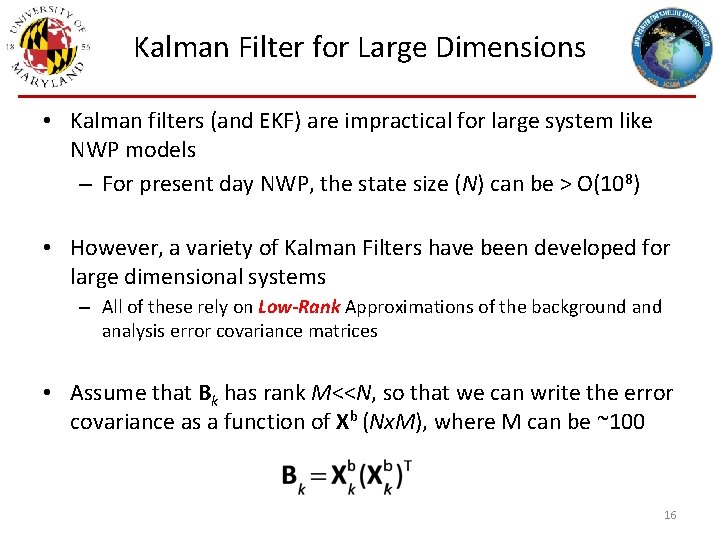

Kalman Filter for Large Dimensions • Kalman filters (and EKF) are impractical for large system like NWP models – For present day NWP, the state size (N) can be > O(108) • However, a variety of Kalman Filters have been developed for large dimensional systems – All of these rely on Low-Rank Approximations of the background analysis error covariance matrices • Assume that Bk has rank M<<N, so that we can write the error covariance as a function of Xb (Nx. M), where M can be ~100 16

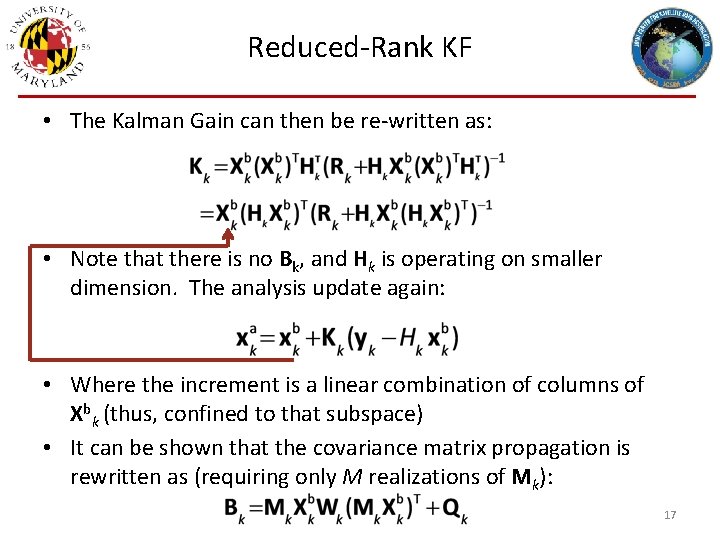

Reduced-Rank KF • The Kalman Gain can then be re-written as: • Note that there is no Bk, and Hk is operating on smaller dimension. The analysis update again: • Where the increment is a linear combination of columns of Xbk (thus, confined to that subspace) • It can be shown that the covariance matrix propagation is rewritten as (requiring only M realizations of Mk): 17

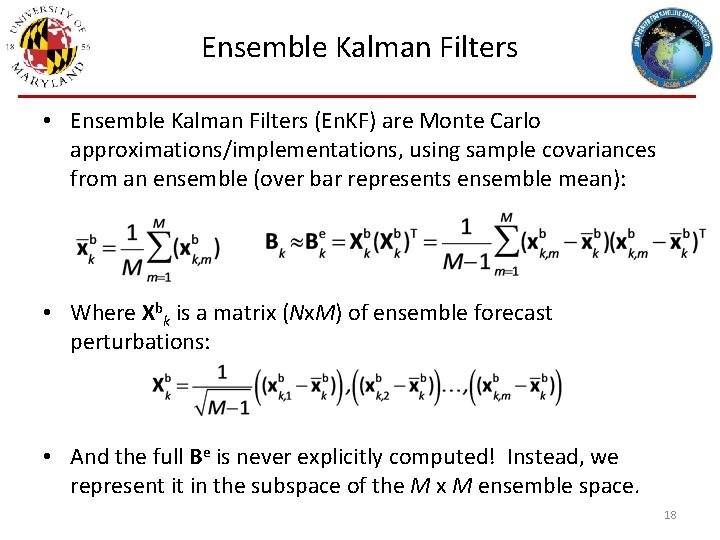

Ensemble Kalman Filters • Ensemble Kalman Filters (En. KF) are Monte Carlo approximations/implementations, using sample covariances from an ensemble (over bar represents ensemble mean): • Where Xbk is a matrix (Nx. M) of ensemble forecast perturbations: • And the full Be is never explicitly computed! Instead, we represent it in the subspace of the M x M ensemble space. 18

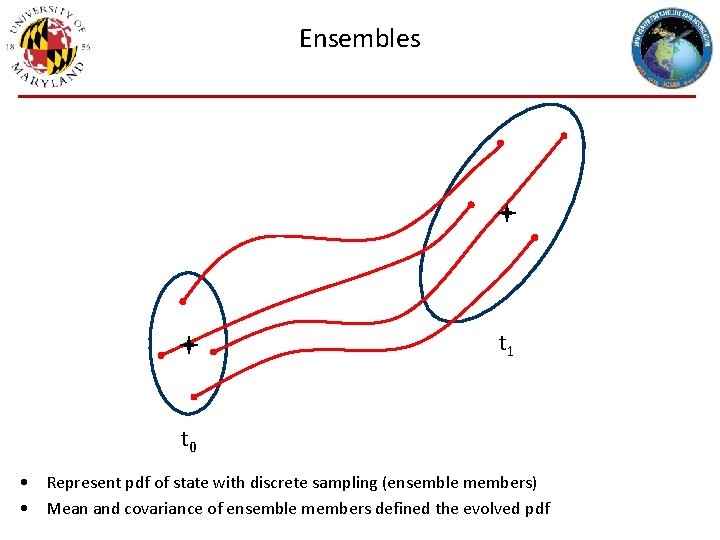

Ensembles t 1 t 0 • Represent pdf of state with discrete sampling (ensemble members) • Mean and covariance of ensemble members defined the evolved pdf

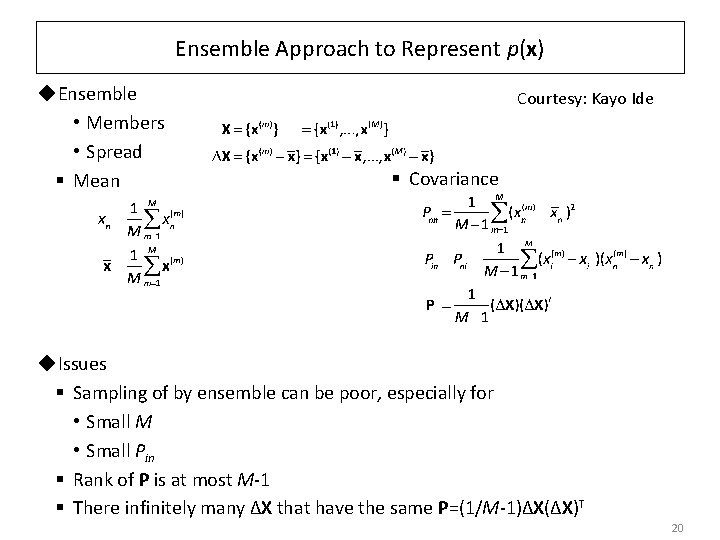

Ensemble Approach to Represent p(x) ◆Ensemble • Members • Spread § Mean Courtesy: Kayo Ide § Covariance ◆Issues § Sampling of by ensemble can be poor, especially for • Small M • Small Pin § Rank of P is at most M-1 § There infinitely many ΔX that have the same P=(1/M-1)ΔX(ΔX)T 20

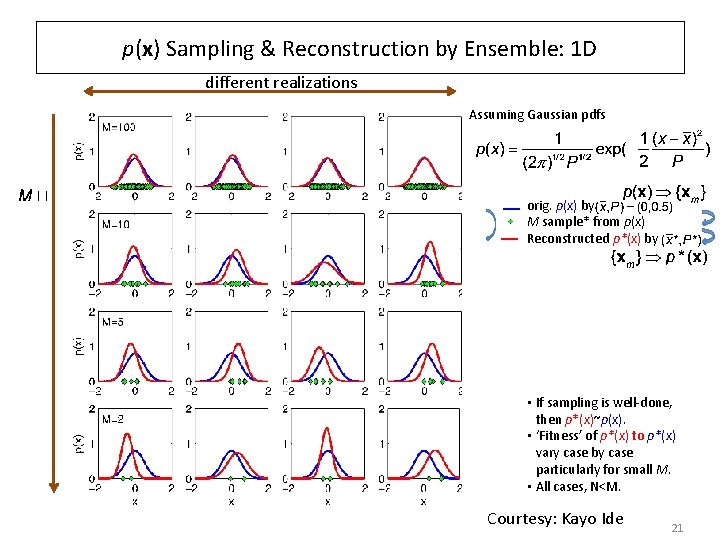

p(x) Sampling & Reconstruction by Ensemble: 1 D different realizations Assuming Gaussian pdfs orig. p(x) by M sample* from p(x) Reconstructed p*(x) by • If sampling is well-done, then p*(x)~p(x). • ‘Fitness’ of p*(x) to p*(x) vary case by case particularly for small M. • All cases, N<M. Courtesy: Kayo Ide 21

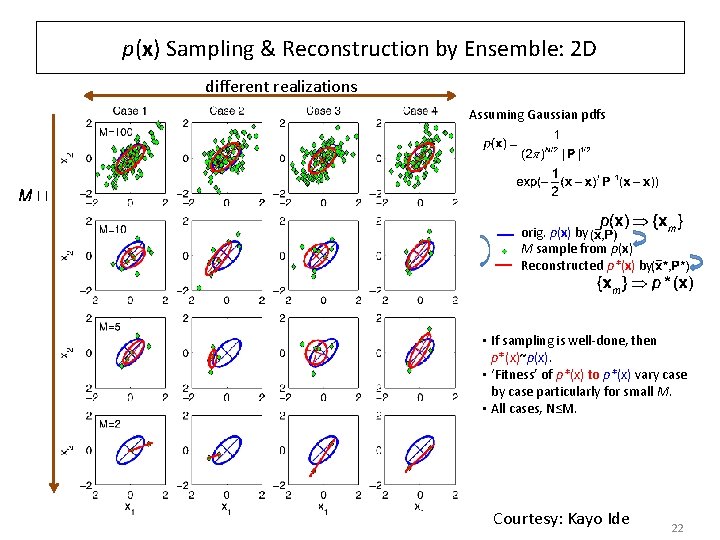

p(x) Sampling & Reconstruction by Ensemble: 2 D different realizations Assuming Gaussian pdfs orig. p(x) by M sample from p(x) Reconstructed p*(x) by • If sampling is well-done, then p*(x)~p(x). • ‘Fitness’ of p*(x) to p*(x) vary case by case particularly for small M. • All cases, N≤M. Courtesy: Kayo Ide 22

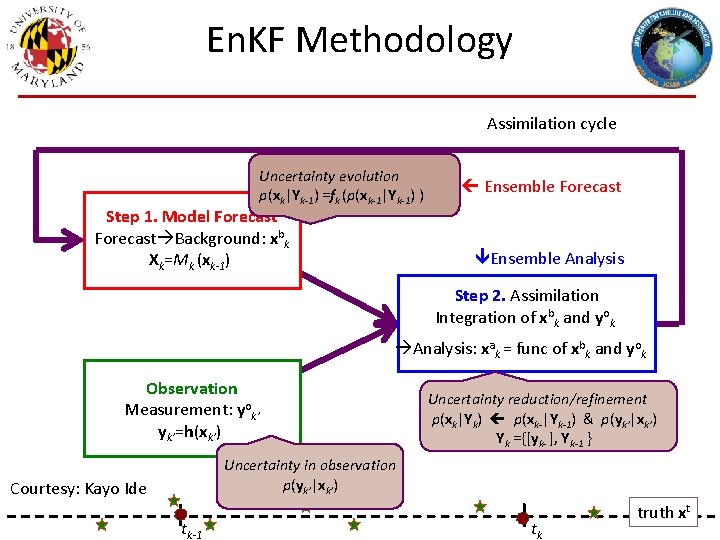

En. KF Methodology Assimilation cycle Uncertainty evolution p(xk|Yk-1) =fk (p(xk-1|Yk-1) ) Step 1. Model Forecast Background: xbk Xk=Mk (xk-1) Ensemble Forecast Ensemble Analysis Step 2. Assimilation Integration of xbk and yok Analysis: xak = func of xbk and yok Observation Measurement: yok’ yk’=h(xk’) Uncertainty reduction/refinement p(xk|Yk) p(xk-|Yk-1) & p(yk’|xk’) Yk ={[yk- ], Yk-1 } Uncertainty in observation p(yk’|xk’) Courtesy: Kayo Ide tk-1 tk truth xt

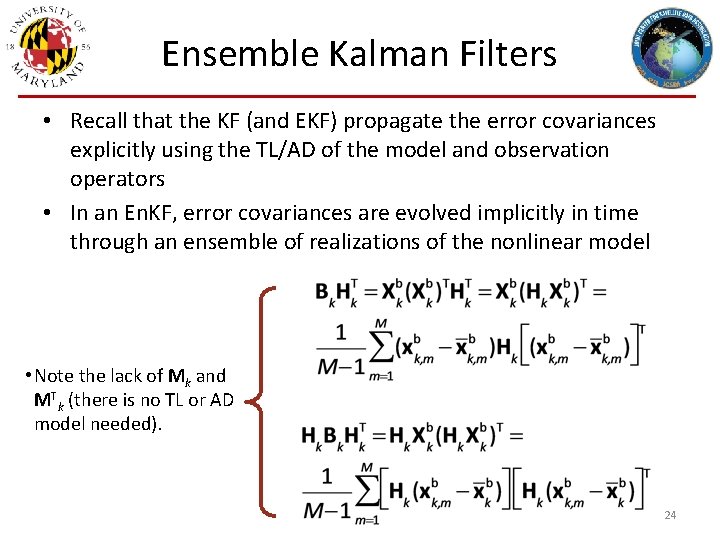

Ensemble Kalman Filters • Recall that the KF (and EKF) propagate the error covariances explicitly using the TL/AD of the model and observation operators • In an En. KF, error covariances are evolved implicitly in time through an ensemble of realizations of the nonlinear model • Note the lack of Mk and MTk (there is no TL or AD model needed). 24

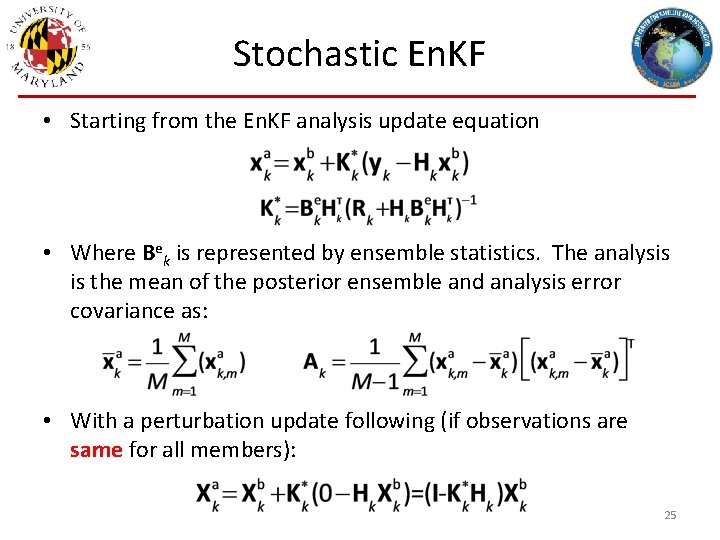

Stochastic En. KF • Starting from the En. KF analysis update equation • Where Bek is represented by ensemble statistics. The analysis is the mean of the posterior ensemble and analysis error covariance as: • With a perturbation update following (if observations are same for all members): 25

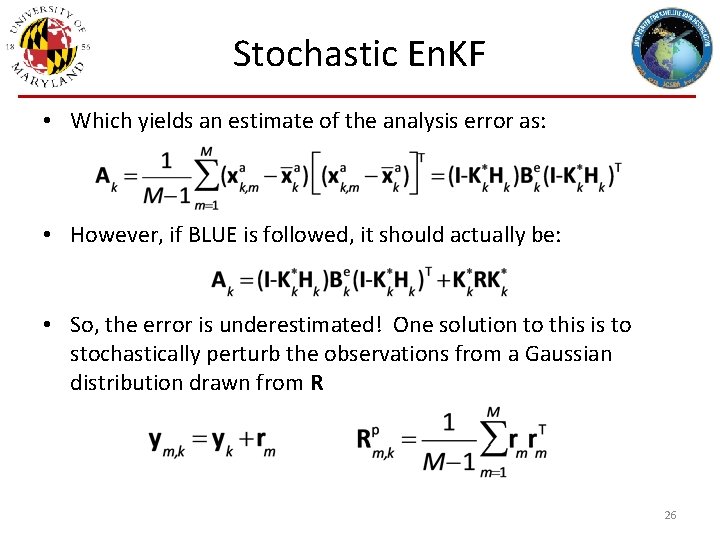

Stochastic En. KF • Which yields an estimate of the analysis error as: • However, if BLUE is followed, it should actually be: • So, the error is underestimated! One solution to this is to stochastically perturb the observations from a Gaussian distribution drawn from R 26

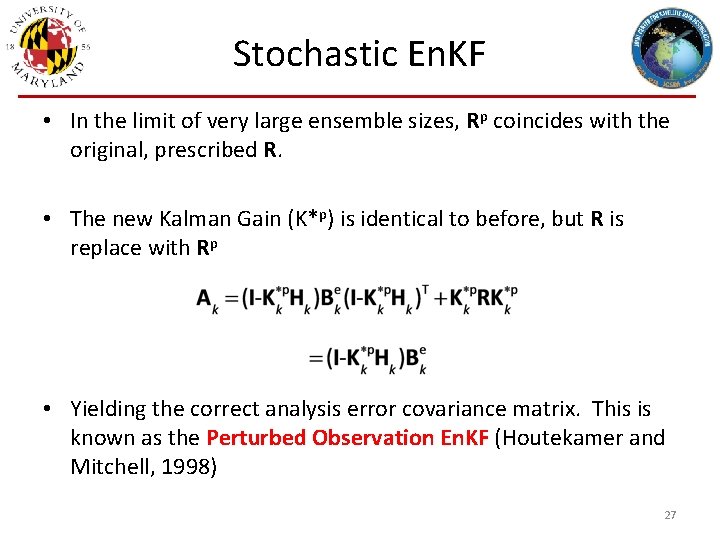

Stochastic En. KF • In the limit of very large ensemble sizes, Rp coincides with the original, prescribed R. • The new Kalman Gain (K*p) is identical to before, but R is replace with Rp • Yielding the correct analysis error covariance matrix. This is known as the Perturbed Observation En. KF (Houtekamer and Mitchell, 1998) 27

![Deterministic En. KF [Square Root Filters] • There is another class of filters that Deterministic En. KF [Square Root Filters] • There is another class of filters that](http://slidetodoc.com/presentation_image_h2/6f96da86afa1e5df7b6ad02a3d2b4520/image-28.jpg)

Deterministic En. KF [Square Root Filters] • There is another class of filters that does not require perturbing the observations. Starting with the ensemble background perturbation matrix again: • We define the analysis to be a linear combination background perturbations: • Where wk is a vector of coefficients in ensemble space. Expanding the RHS and defining the departures: 28

![Deterministic En. KF [Square Root Filters] • Which implies that: • Let’s define the Deterministic En. KF [Square Root Filters] • Which implies that: • Let’s define the](http://slidetodoc.com/presentation_image_h2/6f96da86afa1e5df7b6ad02a3d2b4520/image-29.jpg)

Deterministic En. KF [Square Root Filters] • Which implies that: • Let’s define the following matrix in observation space: • Which yields a simplified formulation for the weights: 29

![Deterministic En. KF [Square Root Filters] • In other words, the Gain is computed Deterministic En. KF [Square Root Filters] • In other words, the Gain is computed](http://slidetodoc.com/presentation_image_h2/6f96da86afa1e5df7b6ad02a3d2b4520/image-30.jpg)

Deterministic En. KF [Square Root Filters] • In other words, the Gain is computed in observation space. However, using the Sherman-Morrison-Woodbury formula, this can be rewritten to ensemble space: • The perturbations can be updated to satisfy the following transform (T) satisfying the relationships: • It can be shown that one such choice accomplishes this: 30

![Deterministic En. KF [Square Root Filters] • There are many implementations of deterministic, square Deterministic En. KF [Square Root Filters] • There are many implementations of deterministic, square](http://slidetodoc.com/presentation_image_h2/6f96da86afa1e5df7b6ad02a3d2b4520/image-31.jpg)

Deterministic En. KF [Square Root Filters] • There are many implementations of deterministic, square root filters. There are differences in the handling of observations (performing on local patches, serial assimilation of observations, etc. ) – Ensemble Transform Kalman Filter (ETKF, Bishop et al. 2001) – Local Ensemble Transform Kalman Filter (LETKF, Ott et al. 2004, Hunt et al. 2007) – Serial Ensemble Square Root Filter (En. SRF, Whitaker and Hamill 2002) – Ensemble Adjustment Kalman Filter (EAKF, Anderson 2001) • Overall, all of the above are largely similar and differ in their practical implementation. The class above is different than the stochastic filter. 31

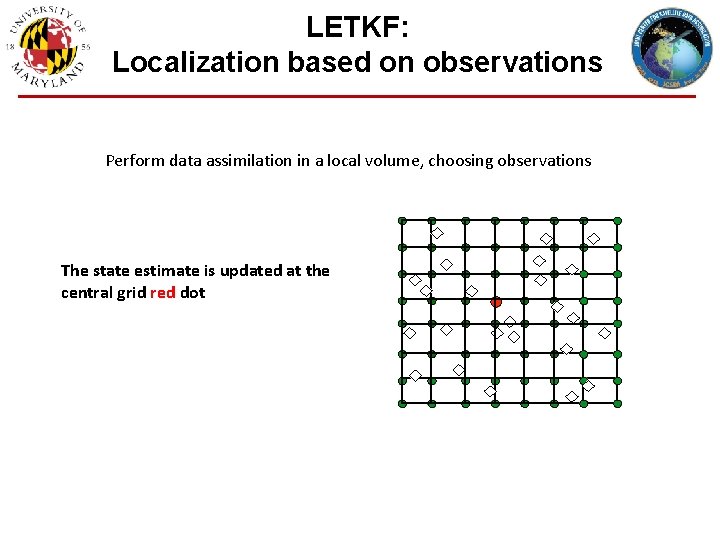

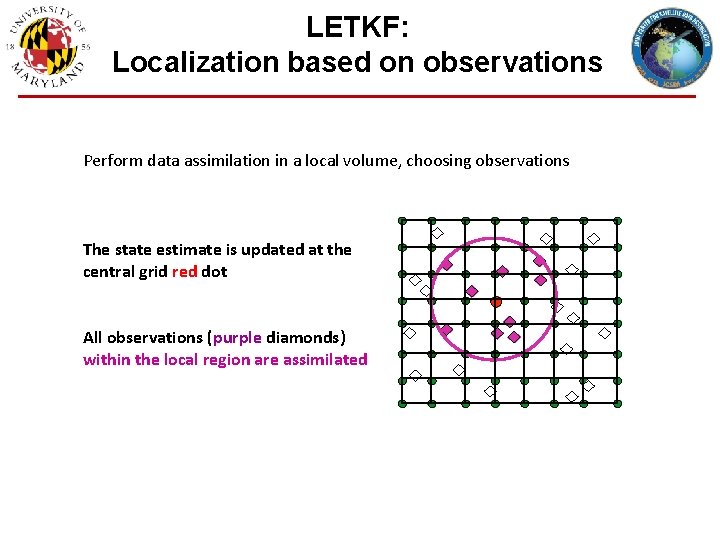

LETKF: Localization based on observations Perform data assimilation in a local volume, choosing observations The state estimate is updated at the central grid red dot

LETKF: Localization based on observations Perform data assimilation in a local volume, choosing observations The state estimate is updated at the central grid red dot All observations (purple diamonds) within the local region are assimilated

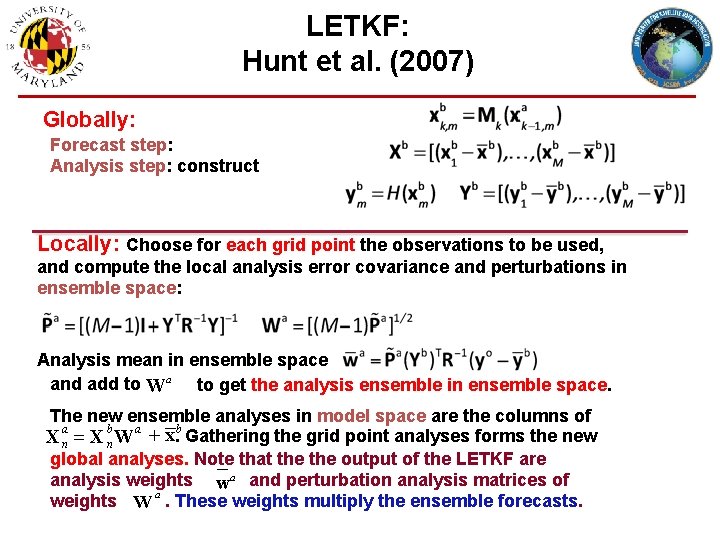

LETKF: Hunt et al. (2007) Globally: Forecast step: Analysis step: construct Locally: Choose for each grid point the observations to be used, and compute the local analysis error covariance and perturbations in ensemble space: Analysis mean in ensemble space and add to Wa to get the analysis ensemble in ensemble space. The new ensemble analyses in model space are the columns of X an = X bn W a + x. b Gathering the grid point analyses forms the new global analyses. Note that the output of the LETKF are analysis weights wa and perturbation analysis matrices of a weights W. These weights multiply the ensemble forecasts.

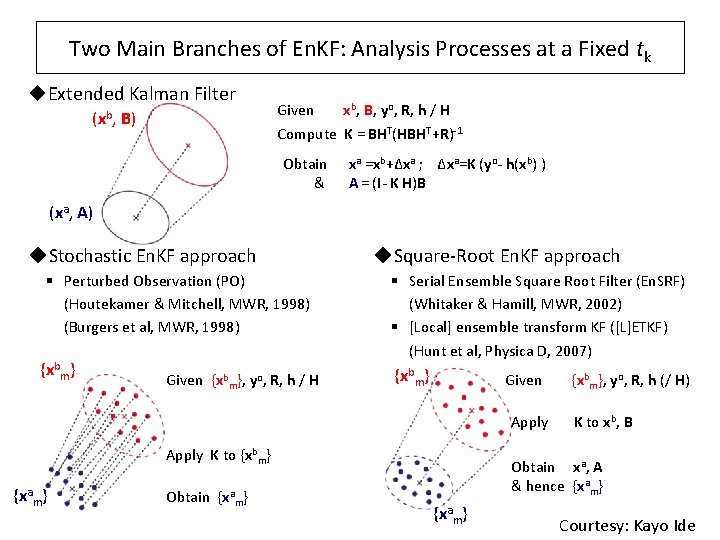

Two Main Branches of En. KF: Analysis Processes at a Fixed tk ◆Extended Kalman Filter (xb, B) Given xb, B, yo, R, h / H Compute K = BHT(HBHT+R)-1 Obtain & xa =xb+Δxa ; Δxa=K (yo- h(xb) ) A = (I- K H)B (xa, A) ◆Stochastic En. KF approach § Perturbed Observation (PO) (Houtekamer & Mitchell, MWR, 1998) (Burgers et al, MWR, 1998) {xbm} Given {xbm}, yo, R, h / H ◆Square-Root En. KF approach § Serial Ensemble Square Root Filter (En. SRF) (Whitaker & Hamill, MWR, 2002) § [Local] ensemble transform KF ([L]ETKF) (Hunt et al, Physica D, 2007) {xbm} Given Apply K to {xbm} {xam} Obtain {xam} {xbm}, yo, R, h (/ H) K to xb, B Obtain xa, A & hence {xam} Courtesy: Kayo Ide

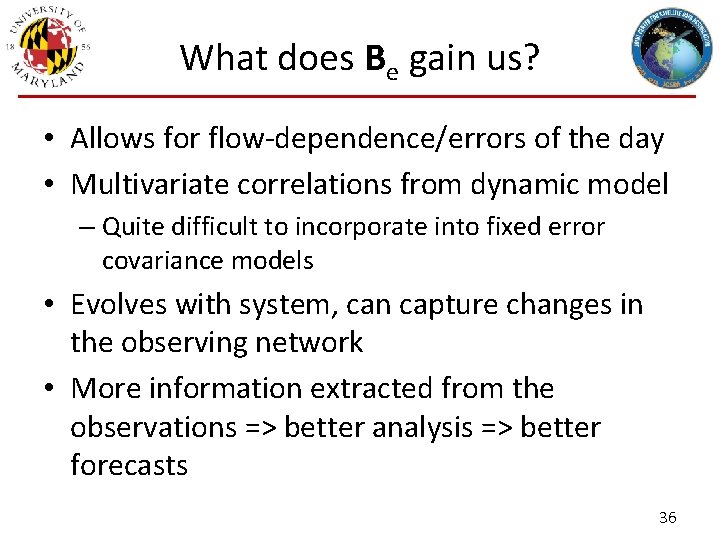

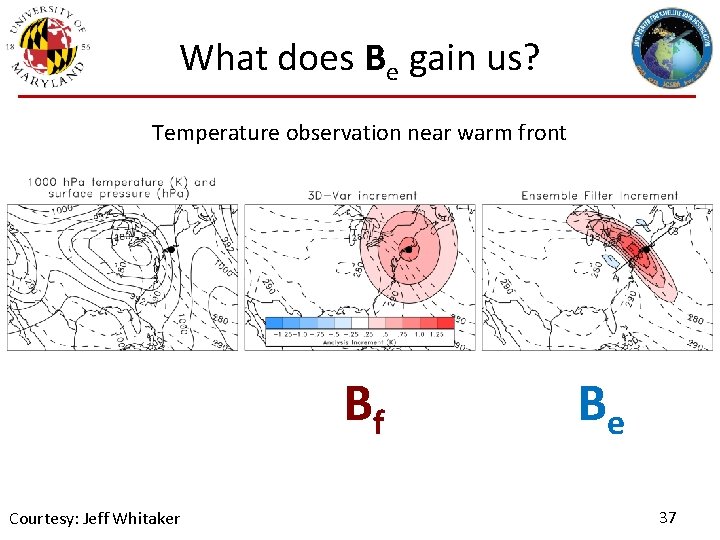

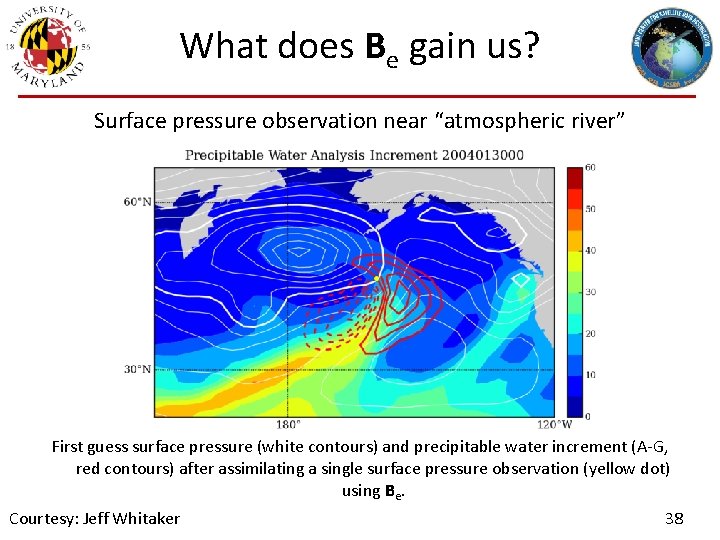

What does Be gain us? • Allows for flow-dependence/errors of the day • Multivariate correlations from dynamic model – Quite difficult to incorporate into fixed error covariance models • Evolves with system, can capture changes in the observing network • More information extracted from the observations => better analysis => better forecasts 36

What does Be gain us? Temperature observation near warm front Bf Courtesy: Jeff Whitaker Be 37

What does Be gain us? Surface pressure observation near “atmospheric river” First guess surface pressure (white contours) and precipitable water increment (A-G, red contours) after assimilating a single surface pressure observation (yellow dot) using Be. Courtesy: Jeff Whitaker 38

So what’s the catch? • Rank Deficiency – The ensemble sizes used (~40 -100+) for NWP are not nearly large enough • Too few degrees of freedom available to fit the observations • Low rank approximation yields spurious long-distance correlations • Mistreatment of “system error/uncertainty” – Sampling (as above), model error, observation operator error, representativeness, etc. • State estimate is ensemble average – This can produce unphysical estimates, smooth out high fidelity information, etc. 39

Inflation and Localization • Inflation – Used to inflate ensemble estimate of uncertainty to avoid filter divergence (additive and multiplicative) • Localization – Domain Localization • Solves equations independently for each grid point (LETKF) – Covariance Localization • Performed element wise (Schur product) on covariances themselves 40

Methods for representing model error (inflation) • Multiplicative inflation: – Relaxation-to-prior spread (RTPS) – Relaxation-to-prior perturbation (Zhang et al. 2004) – Adaptive (Anderson 2007) • Additive inflation: Add random samples from a specified distribution to each ensemble member after the analysis step. – Env. Canada uses random samples of isotropic 3 DVar covariance matrix. – NCEP uses random samples of 48 -h – 24 -h forecast error (fcsts valid at same time). 41

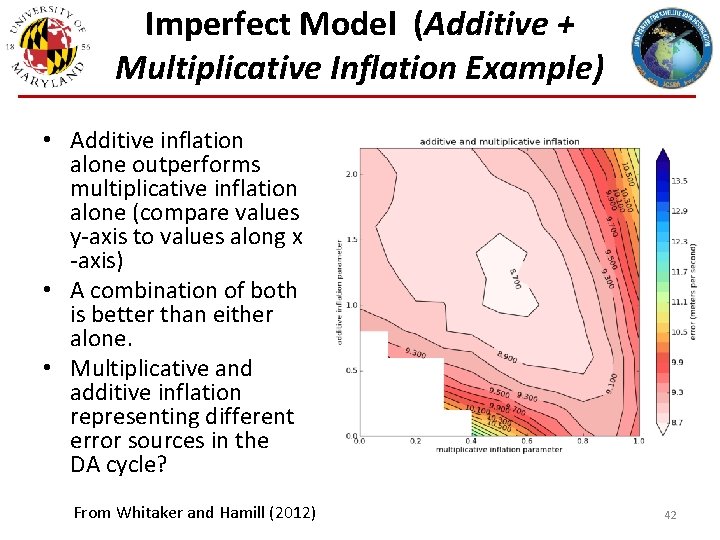

Imperfect Model (Additive + Multiplicative Inflation Example) • Additive inflation alone outperforms multiplicative inflation alone (compare values y-axis to values along x -axis) • A combination of both is better than either alone. • Multiplicative and additive inflation representing different error sources in the DA cycle? From Whitaker and Hamill (2012) 42

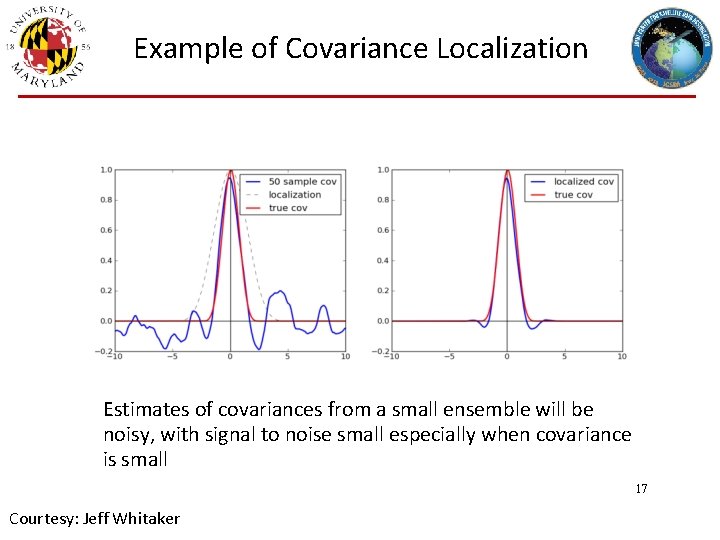

Example of Covariance Localization Estimates of covariances from a small ensemble will be noisy, with signal to noise small especially when covariance is small 17 Courtesy: Jeff Whitaker

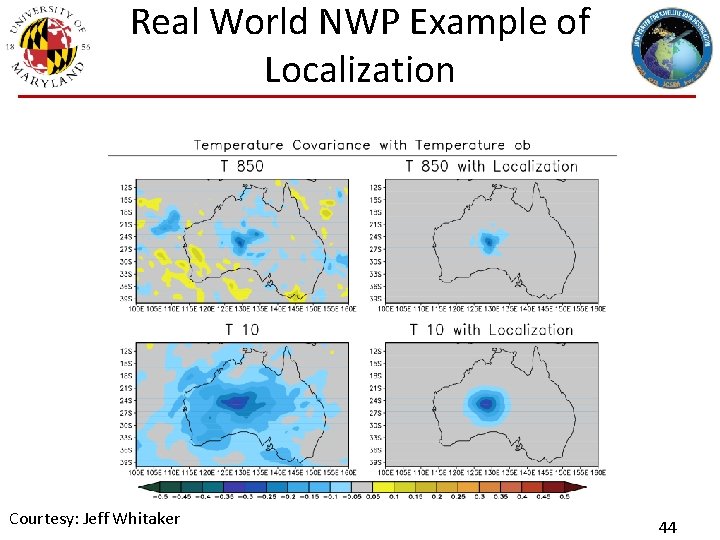

Real World NWP Example of Localization Courtesy: Jeff Whitaker 44

Toy Model Experiments • Lorenz 96 40 -variable model (F-8. 0) – 0. 05 cycling (~6 hours) • Investigate aspects of En. KF, will show various RMSE metrics Graphic courtesy Rahul Mahajan 45

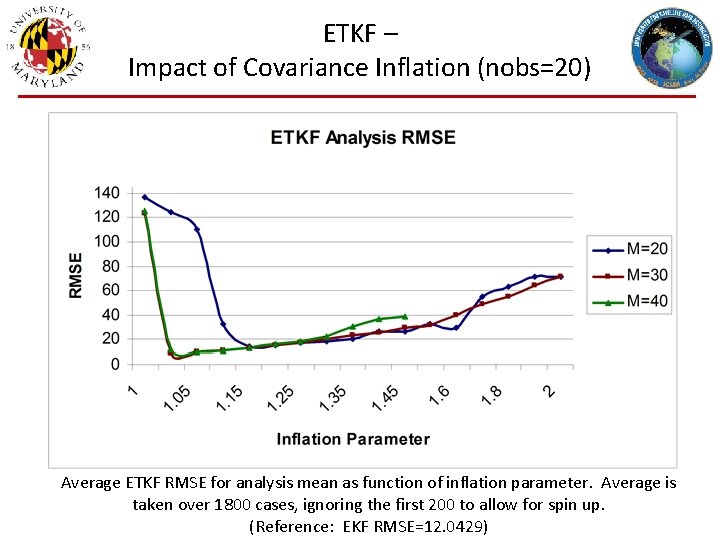

ETKF – Impact of Covariance Inflation (nobs=20) Average ETKF RMSE for analysis mean as function of inflation parameter. Average is taken over 1800 cases, ignoring the first 200 to allow for spin up. (Reference: EKF RMSE=12. 0429)

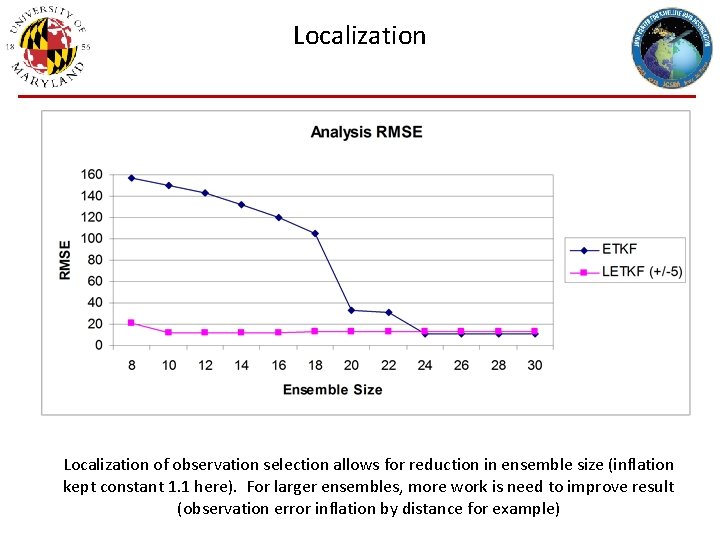

Localization of observation selection allows for reduction in ensemble size (inflation kept constant 1. 1 here). For larger ensembles, more work is need to improve result (observation error inflation by distance for example)

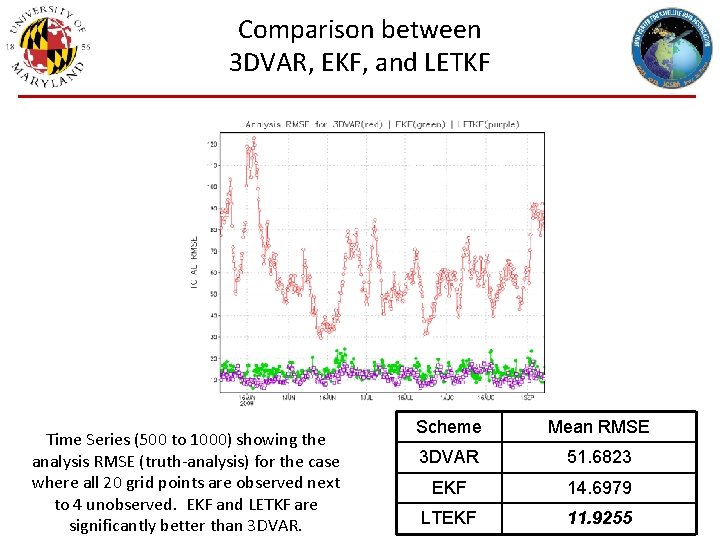

Comparison between 3 DVAR, EKF, and LETKF Time Series (500 to 1000) showing the analysis RMSE (truth-analysis) for the case where all 20 grid points are observed next to 4 unobserved. EKF and LETKF are significantly better than 3 DVAR. Scheme Mean RMSE 3 DVAR 51. 6823 EKF 14. 6979 LTEKF 11. 9255

Ensemble of Data Assimilations • Much like the stochastic En. KF, ECMWF and Meteo-France use an ensemble of data assimilations instead of an En. KF – Perturb the observations and model – Designed to represent and estimate the uncertainty in their deterministic 4 DVAR • This provides flow-dependent estimates of analysis error for their EPS • Also provides flow-dependent estimates of background error for use in DA (either as B 0 or in hybrid…. next lecture) • Can be hugely expensive, given that a variational (4 DVAR) update has to be executed for each ensemble member! 49

Summary • En. KFs are Monte-Carlo implementations of the sequential Kalman Filter (minimum variance estimate) – PROS: Easy to implement, do not need TL/AD, flow-dependent estimates for error covariances, solver can be done in ensemble space (computationally efficient) – CONS: Sample sizes are usually much too small for large dimensional systems such as NWP models, requires ad-hoc methods of inflation and localization • Many variants of En. KFs exist, but can be subset into two classes – Stochastic, Perturbed observation schemes ( – Deterministic, square root filters • While many of these exist in their details and practical information, they are all solving for essentially the same thing. • Observation versus physical space localization (when might this matter? ) • Has been successfully applied to many, high-dimensional problems 50

Summary (cont. ) • Like the variational solutions, similar assumptions need to be made to formulate the En. KF (including Gaussianity) – Although not discussed in detail, one does not necessarily need linear, differentiable observation operators as in variational schemes • There is another class of “ensemble methods” designed to sample/estimate the full PDF (not just the mean and covariance) – Particle Filters: Nonlinear, non-Gaussian DA, towards Bayesian filtering – Expensive with greater dimensionality issues than the En. KF 51

Selective References • Anderson, J. L. , 2001. An ensemble adjustment Kalman filter for data assimilation. Mon. Wea. Rev. 129, 2884– 2903. • Bishop, C. H. , Etherton, B. J. and Majumdar, S. J. , 2001. Adaptive sampling with ensemble transform Kalman filter. Part I: theoretical aspects. Mon. Wea. Rev. 129, 420– 436. • Burgers, G. , Van Leeuwen, P. J. and Evensen, G. , 1998. On the analysis scheme in the ensemble Kalman filter. Mon. Wea. Rev. 126, 1719– 1724. • Evensen, G. , 1994. Sequential data assimilation with a nonlinear quasi-geostrophic model using Monte Carlo methods to forecast error statistics. J. Geophys. Res. 99(C 5), 10 143– 10 162. • Houtekamer, P. L. and Mitchell, H. L. , 1998. Data assimilation using an ensemble Kalman filter technique. Mon. Wea. Rev. 126, 796– 811. • Houtekamer, P. L. and Mitchell, H. L. , 2001. A sequential ensemble Kalman filter for atmospheric data assimilation. Mon. Wea. Rev. 129, 123– 137. • Hunt, B. R. , Kostelich, E. J. and Szunyogh, I. , 2007. Efficient data assimilation for spatiotemporal chaos: a local ensemble transform Kalman filter. Physica D, 230, 112– 126. • Ott, E. , Hunt, B. H. , Szunyogh, I. , Zimin, A. V. , Kostelich, E. J. and co-authors. 2004. A local ensemble Kalman filter for atmospheric data assimilation. Tellus 56 A, 415– 428. • Tippett, M. K. , J. L. Anderson, C. H. Bishop, T. M. Hamill, and J. S. Whitaker, 2003: Ensemble Square Root Filters, Mon. Wea. Rev. 131, 1485– 1490. • Whitaker, J. S. and Hamill, T. M. , 2002. Ensemble data assimilation without perturbed observations. Mon. Wea. Rev. 130, 1913– 1924. • Whitaker, J. S. and Hamill, T. M. , 2012. Evaluating Methods to Account for System Errors in Ensemble Data Assimilation. Mon. Wea. Rev. 140, 3078– 3089. • Zhang, F. , C. Snyder, and J. Sun, 2004: Impact of initial estimate and observation availability on convective-scale data assimilation with an ensemble Kalman filter. Mon. Wea. Rev. 132, 1238– 1253. 52

- Slides: 52