DSpace 2.x Architecture Roadmap

Robert Tansley, DSpace Technical Lead, HP

© 2004 Hewlett-Packard Development Company, L.P. The information contained herein is subject to change without notice.

Overview • Why a DSpace 2.x? • Proposed Target Architecture • Example Deployments • Proposed Migration Path

Why a DSpace 2.x?

DSpace 1.x • ‘Breadth-first’ implementation of an institutional repository • Provides all the functionality required to start capturing digital assets • Widened awareness and understanding of the digital preservation problem

Key areas for improvement • Modularity • Digital Preservation • Scalability

Modularity • Current APIs are low-level and somewhat ad hoc − Difficult to keep stable − Difficult to implement enhanced/alternative functionality behind them • Changing a particular aspect of functionality involves changing the UI as well as the underlying business logic module − e.g. Workflow review pages are very specific to the current Workflow Manager module's functionality

Modularity • Heavy inter-dependence − e.g. modules use the same DB tables; a change in one module means you have to change the others that use those tables • No real “plug-in” mechanism − Managing a modification alongside evolving core DSpace code can be tricky

DSpace 1 series architecture

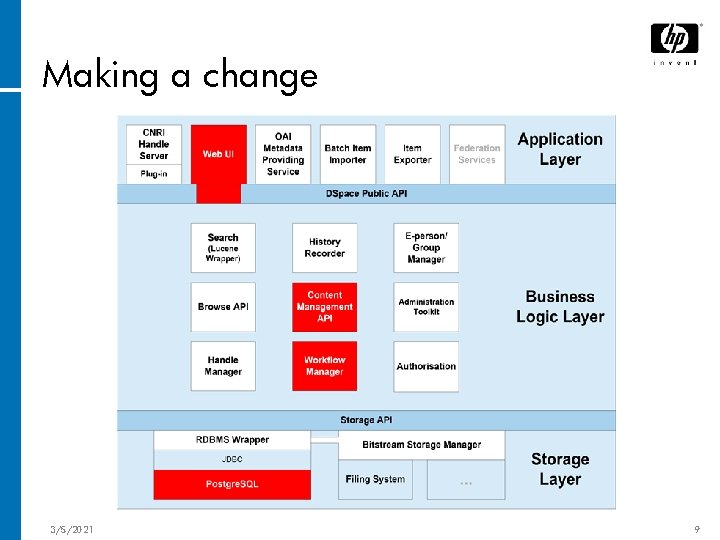

Making a change

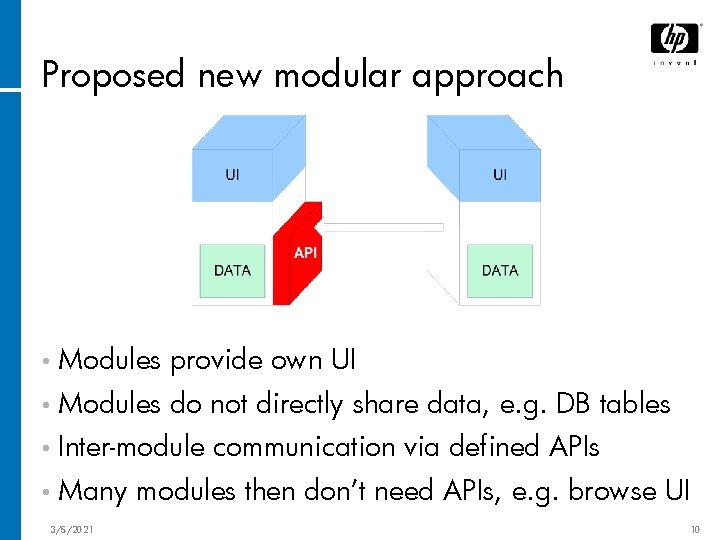

Proposed new modular approach • Modules provide own UI • Modules do not directly share data, e.g. DB tables • Inter-module communication via defined APIs • Many modules then don’t need APIs, e.g. browse UI

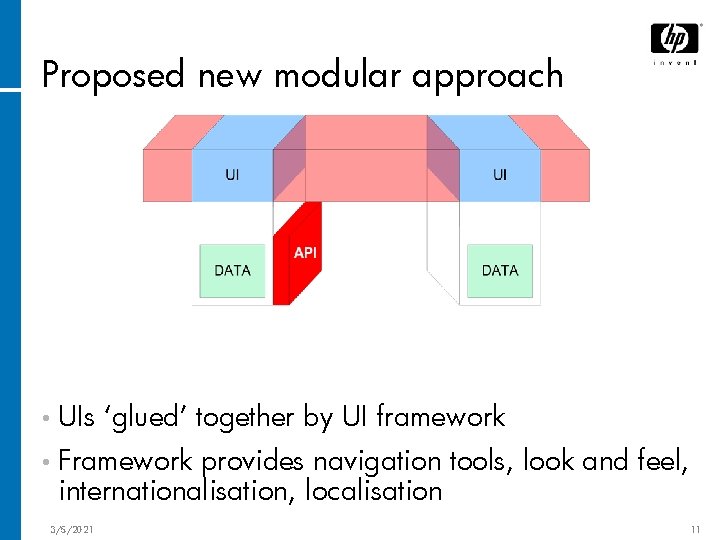

Proposed new modular approach • UIs ‘glued’ together by UI framework • Framework provides navigation tools, look and feel, internationalisation, localisation

Proposed new modular approach • Modules can depend on APIs

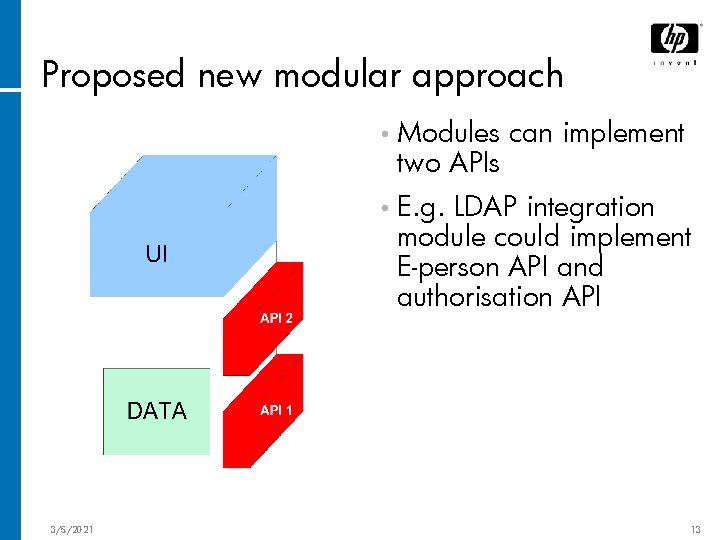

Proposed new modular approach • Modules can implement two APIs • E.g. an LDAP integration module could implement the E-person API and the authorisation API

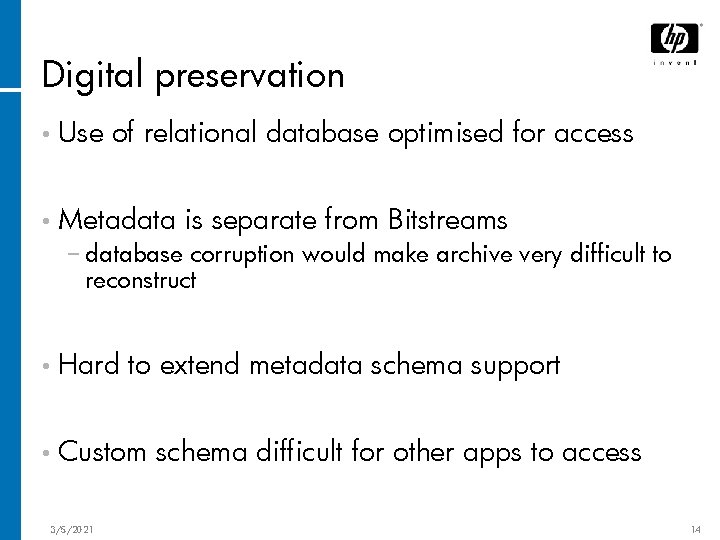
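To make the idea concrete, the Java sketch below shows a single class implementing two APIs. The interface names `EPersonAPI` and `AuthorisationAPI`, their methods, and the `LdapModule` class are hypothetical, since the slides do not define these APIs; in-memory maps stand in for an LDAP directory so the example runs on its own.

```java
import java.util.Map;
import java.util.Set;

// Hypothetical interfaces: the slides name an "E-person API" and an
// "authorisation API" but do not define their methods.
interface EPersonAPI {
    /** True if the credentials identify a known e-person. */
    boolean authenticate(String username, String password);
}

interface AuthorisationAPI {
    /** True if the e-person may perform the action on the object. */
    boolean isAllowed(String username, String action, String objectId);
}

// One module implementing both APIs, as an LDAP integration module might.
// In-memory maps stand in for the directory server so the sketch runs
// without LDAP; a real module would query the directory instead.
class LdapModule implements EPersonAPI, AuthorisationAPI {
    private final Map<String, String> passwords;      // username -> password (stand-in only)
    private final Map<String, Set<String>> groups;    // username -> directory groups
    private final Map<String, String> requiredGroup;  // "ACTION:objectId" -> group

    LdapModule(Map<String, String> passwords,
               Map<String, Set<String>> groups,
               Map<String, String> requiredGroup) {
        this.passwords = passwords;
        this.groups = groups;
        this.requiredGroup = requiredGroup;
    }

    @Override
    public boolean authenticate(String username, String password) {
        return password != null && password.equals(passwords.get(username));
    }

    @Override
    public boolean isAllowed(String username, String action, String objectId) {
        String group = requiredGroup.get(action + ":" + objectId);
        return group != null
                && groups.getOrDefault(username, Set.of()).contains(group);
    }
}

class LdapModuleDemo {
    public static void main(String[] args) {
        LdapModule ldap = new LdapModule(
                Map.of("alice", "secret"),
                Map.of("alice", Set.of("archivists")),
                Map.of("WRITE:collection-1", "archivists"));
        // The same object is handed to code that expects either API.
        EPersonAPI ePeople = ldap;
        AuthorisationAPI authz = ldap;
        System.out.println(ePeople.authenticate("alice", "secret"));          // true
        System.out.println(authz.isAllowed("alice", "WRITE", "collection-1")); // true
        System.out.println(authz.isAllowed("bob", "WRITE", "collection-1"));   // false
    }
}
```

Because the module is simply an implementation of both interfaces, the rest of DSpace can depend on the APIs without knowing that one LDAP module sits behind both.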

Digital preservation • Uses a relational database optimised for access • Metadata is separate from bitstreams − database corruption would make the archive very difficult to reconstruct • Hard to extend metadata schema support • Custom schema difficult for other apps to access

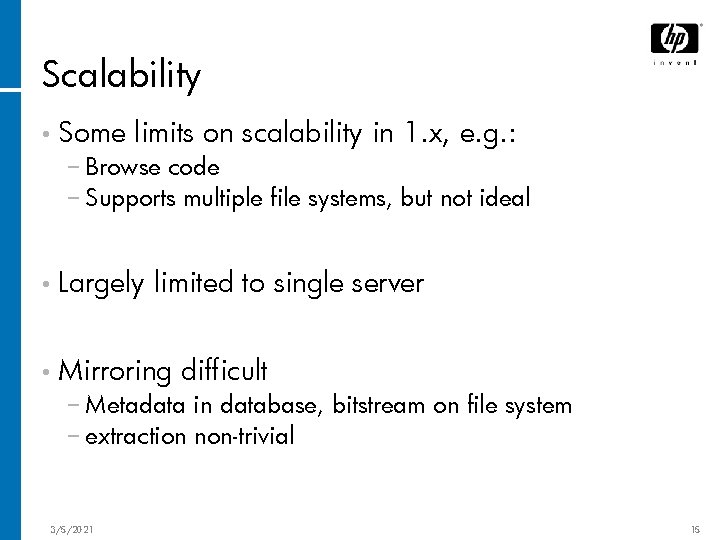

Scalability • Some limits on scalability in 1.x, e.g.: − Browse code − Supports multiple file systems, but not ideal • Largely limited to a single server • Mirroring difficult − Metadata in database, bitstreams on file system − Extraction non-trivial

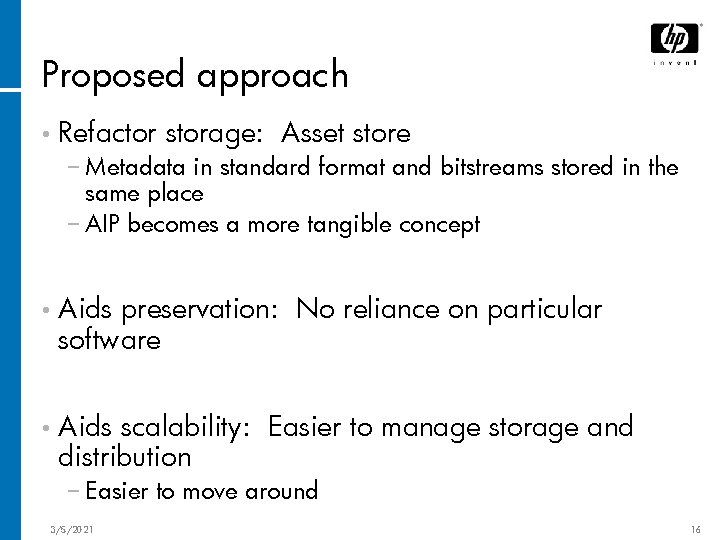

Proposed approach • Refactor storage: Asset store − Metadata in standard format and bitstreams stored in the same place − AIP becomes a more tangible concept • Aids preservation: No reliance on particular software • Aids scalability: Easier to manage storage and distribution − Easier to move around

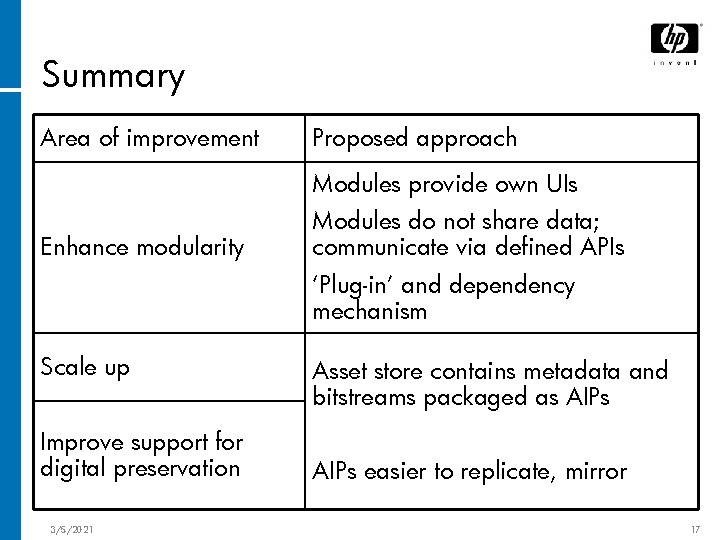

Summary (area of improvement: proposed approach) • Enhance modularity − Modules provide own UIs − Modules do not share data; communicate via defined APIs − ‘Plug-in’ and dependency mechanism • Scale up; improve support for digital preservation − Asset store contains metadata and bitstreams packaged as AIPs, which are easier to replicate and mirror

Proposed Target Architecture

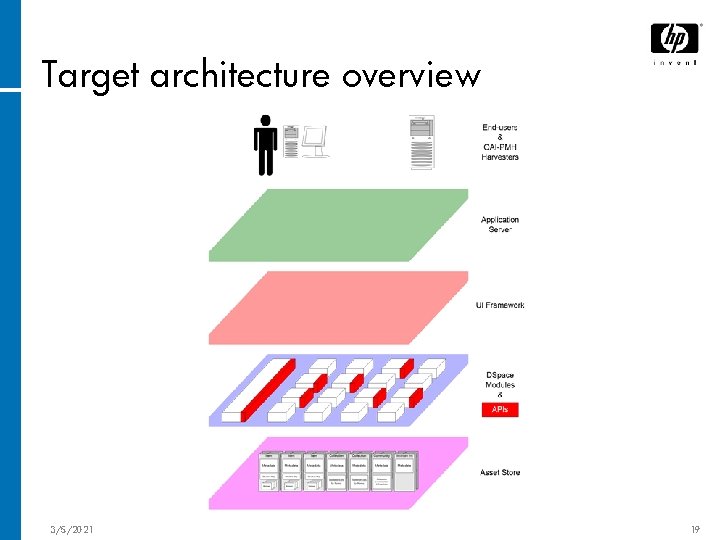

Target architecture overview

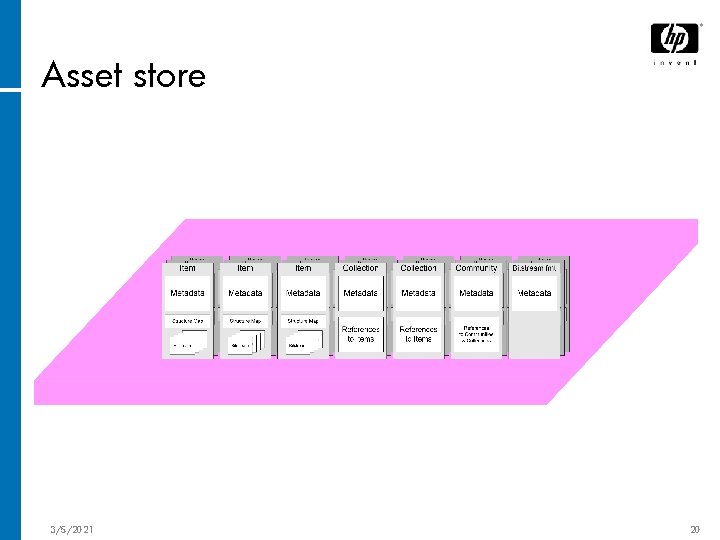

Asset store

Asset store • Corresponds to OAIS ‘Archival Storage’ • Contains only Archival Information Packages (AIPs) − Not e-people records, in-progress submissions etc. • AIPs consist of − Metadata serialisation − Bitstreams − AIP checksum

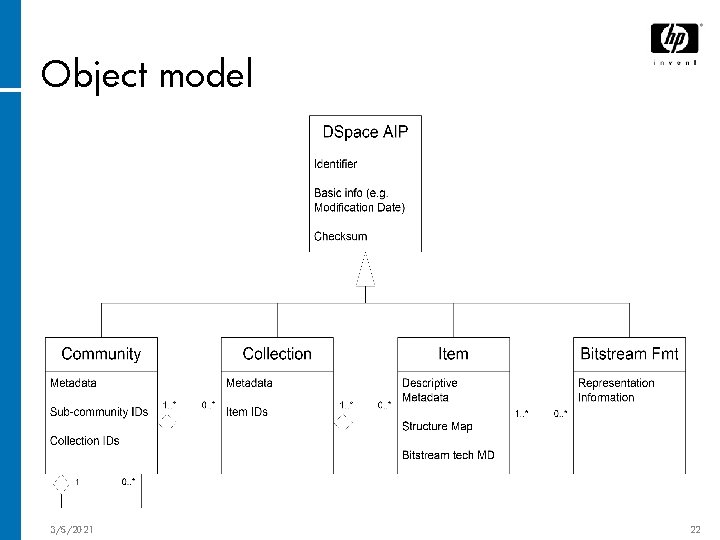

Object model

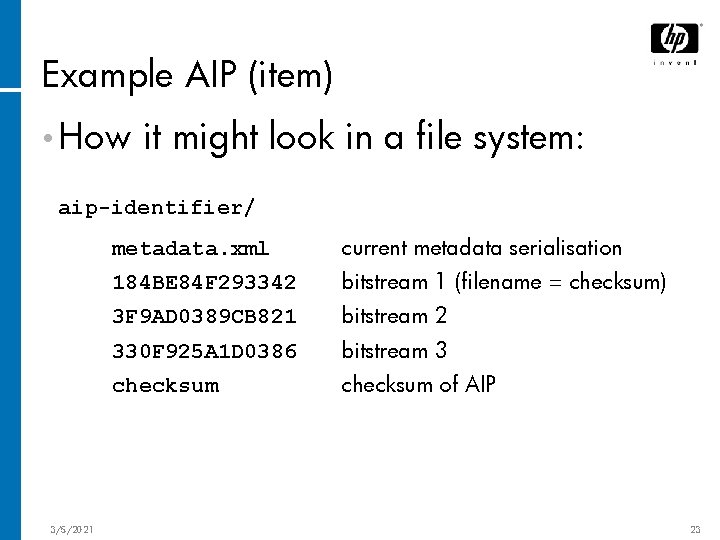

Example AIP (item) • How it might look in a file system: aip-identifier/ − metadata.xml: current metadata serialisation − 184BE84F293342: bitstream 1 (filename = checksum) − 3F9AD0389CB821: bitstream 2 − 330F925A1D0386: bitstream 3 − checksum of AIP

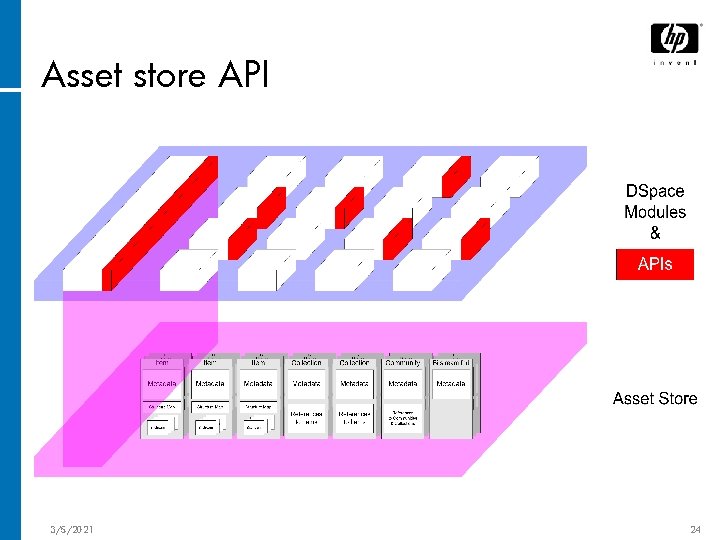
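A minimal sketch of writing an item AIP in that layout, assuming MD5 checksums rendered as upper-case hex (the slides do not name an algorithm). The way the AIP checksum is derived, the `aip-checksum` filename, and the `AipWriter` class are all hypothetical choices made for illustration.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

class AipWriter {
    // Assumption: MD5, hex-encoded in upper case to resemble the slide's filenames.
    static String md5Hex(byte[] data) {
        try {
            StringBuilder hex = new StringBuilder();
            for (byte b : MessageDigest.getInstance("MD5").digest(data)) {
                hex.append(String.format("%02X", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 not available", e);
        }
    }

    /** Writes one AIP directory under storeRoot and returns the AIP checksum. */
    static String write(Path storeRoot, String aipId, String metadataXml,
                        List<byte[]> bitstreams) throws IOException {
        Path aipDir = Files.createDirectories(storeRoot.resolve(aipId));
        byte[] metadata = metadataXml.getBytes(StandardCharsets.UTF_8);
        Files.write(aipDir.resolve("metadata.xml"), metadata);

        List<String> checksums = new ArrayList<>();
        for (byte[] bitstream : bitstreams) {
            String checksum = md5Hex(bitstream);
            Files.write(aipDir.resolve(checksum), bitstream); // filename = checksum
            checksums.add(checksum);
        }

        // Hypothetical AIP checksum: digest over the metadata checksum plus the
        // sorted bitstream checksums, so any change to the AIP changes it.
        Collections.sort(checksums);
        StringBuilder manifest = new StringBuilder(md5Hex(metadata));
        for (String c : checksums) {
            manifest.append('\n').append(c);
        }
        String aipChecksum = md5Hex(manifest.toString().getBytes(StandardCharsets.UTF_8));
        // Hypothetical filename for the AIP checksum.
        Files.write(aipDir.resolve("aip-checksum"), aipChecksum.getBytes(StandardCharsets.UTF_8));
        return aipChecksum;
    }
}

class AipDemo {
    public static void main(String[] args) throws IOException {
        Path store = Files.createTempDirectory("assetstore");
        String aipChecksum = AipWriter.write(store, "aip-identifier",
                "<metadata><title>Example</title></metadata>",
                List.of("first bitstream".getBytes(StandardCharsets.UTF_8),
                        "second bitstream".getBytes(StandardCharsets.UTF_8)));
        try (var files = Files.list(store.resolve("aip-identifier"))) {
            files.forEach(p -> System.out.println(p.getFileName()));
        }
        System.out.println("AIP checksum: " + aipChecksum);
    }
}
```

Naming bitstreams by checksum means identical content is stored once per AIP, and a copy can be verified simply by recomputing each file's digest.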

Asset store API

Asset store API • Standardised Java API for DSpace asset stores • May be different implementations − Simple file system − Enterprise reference information store − Grid-based, e.g. SRB − SAN • Allows creation, retrieval, update etc. of AIPs
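One possible shape for such an API, sketched in Java (16 or later, for records). The `AssetStore` interface, the `Aip` record and `InMemoryAssetStore` are hypothetical names, and `delete` stands in for the slide's "etc.". Callers only see the interface, so a file system, SRB or SAN implementation could be swapped in behind it.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical AIP value: identifier, metadata serialisation, and
// bitstreams keyed by their checksums.
record Aip(String id, String metadataXml, Map<String, byte[]> bitstreams) {}

// Hypothetical shape of the standardised asset store API.
interface AssetStore {
    void create(Aip aip);              // rejects an identifier that already exists
    Optional<Aip> retrieve(String id);
    void update(Aip aip);              // rejects an unknown identifier
    void delete(String id);
}

// Simplest implementation, held in memory. File system, SRB or SAN
// implementations would sit behind the same interface.
class InMemoryAssetStore implements AssetStore {
    private final Map<String, Aip> aips = new HashMap<>();

    @Override
    public void create(Aip aip) {
        if (aips.putIfAbsent(aip.id(), aip) != null) {
            throw new IllegalArgumentException("AIP already exists: " + aip.id());
        }
    }

    @Override
    public Optional<Aip> retrieve(String id) {
        return Optional.ofNullable(aips.get(id));
    }

    @Override
    public void update(Aip aip) {
        if (aips.replace(aip.id(), aip) == null) {
            throw new IllegalArgumentException("No such AIP: " + aip.id());
        }
    }

    @Override
    public void delete(String id) {
        aips.remove(id);
    }
}

class AssetStoreDemo {
    public static void main(String[] args) {
        AssetStore store = new InMemoryAssetStore();
        store.create(new Aip("aip-1", "<metadata/>", Map.of()));
        store.update(new Aip("aip-1", "<metadata><title>Revised</title></metadata>", Map.of()));
        System.out.println(store.retrieve("aip-1").map(Aip::metadataXml).orElse("missing"));
    }
}
```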

Scaling up • Easy to replicate AIPs and asset stores • Enables serving larger numbers of users • Aids preservation: Multiple copies, more robust

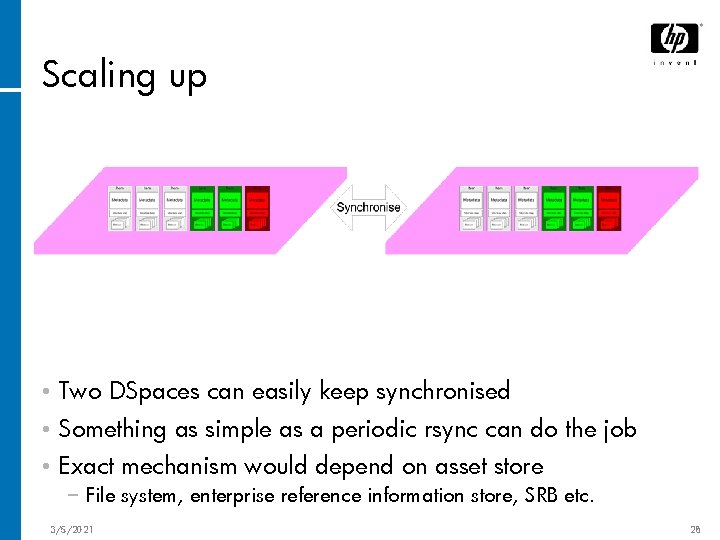

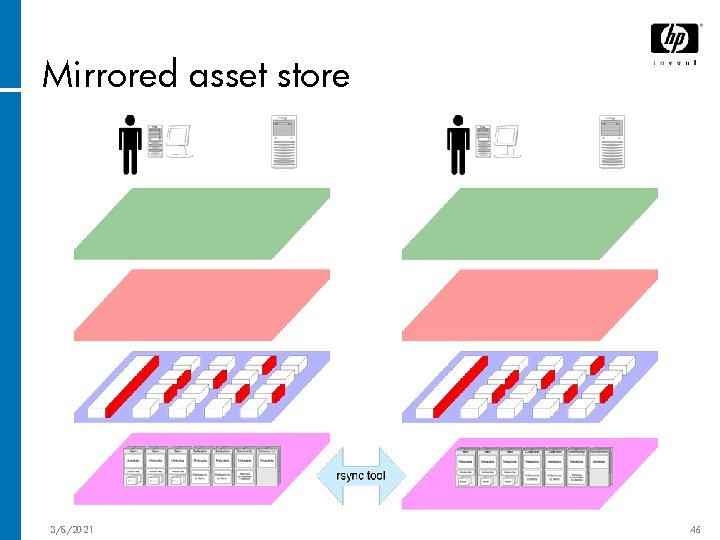


Scaling up • Two DSpace instances can easily keep synchronised • Something as simple as a periodic rsync can do the job • Exact mechanism would depend on the asset store − File system, enterprise reference information store, SRB etc.
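As an illustration of the idea (not of rsync itself), here is a one-way synchronisation pass in Java 16+ that copies AIPs which are missing from the target or whose AIP checksum differs. The `Aip` record and `AipSync.pushChanges` are hypothetical, and plain maps stand in for two asset stores.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical AIP summary: identifier, AIP checksum and (stand-in) content.
record Aip(String id, String checksum, String content) {}

class AipSync {
    /**
     * One-way pass: copies every AIP that is missing from the target or whose
     * AIP checksum differs. Returns the identifiers copied. Deletions and
     * conflicting updates are not handled here.
     */
    static List<String> pushChanges(Map<String, Aip> source, Map<String, Aip> target) {
        List<String> copied = new ArrayList<>();
        for (Aip aip : source.values()) {
            Aip existing = target.get(aip.id());
            // Comparing AIP checksums avoids transferring unchanged AIPs.
            if (existing == null || !existing.checksum().equals(aip.checksum())) {
                target.put(aip.id(), aip);
                copied.add(aip.id());
            }
        }
        return copied;
    }
}

class SyncDemo {
    public static void main(String[] args) {
        Map<String, Aip> instanceA = new TreeMap<>();
        Map<String, Aip> instanceB = new TreeMap<>();
        instanceA.put("aip-1", new Aip("aip-1", "c1", "item one"));
        instanceA.put("aip-2", new Aip("aip-2", "c2", "item two"));
        instanceB.put("aip-2", new Aip("aip-2", "c2", "item two"));
        instanceB.put("aip-3", new Aip("aip-3", "c3", "item three"));

        // Run periodically in both directions, e.g. from a scheduled job.
        System.out.println(AipSync.pushChanges(instanceA, instanceB)); // [aip-1]
        System.out.println(AipSync.pushChanges(instanceB, instanceA)); // [aip-3]
    }
}
```

Running the pass in both directions on a schedule keeps two instances in step as long as they do not both update the same AIP between runs; it does not handle deletions or clashes.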

What about clashes? • We’re dealing with reference information − DSpace is not an authoring system − Not work-in-progress, often-updated material • The same AIP being updated by two different DSpace instances on the same day is unlikely − If it happens, it can be flagged as a conflict for manual resolution • Exception: items being added to the same collection − Simple to resolve: merge the additions • Just make sure IDs are unique!
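The clash rules above can be sketched as a three-way comparison against each AIP's state at the last synchronisation. This Java 16+ example is hypothetical throughout (`Aip`, `Outcome`, `ClashResolver`): a change on only one side wins, identical changes are accepted, a collection to which both sides only added items receives the union of its members, and anything else is flagged for manual resolution.

```java
import java.util.Set;
import java.util.TreeSet;

// Hypothetical AIP view: identifier, whether it is a collection,
// its metadata, and (for collections) the identifiers of member items.
record Aip(String id, boolean isCollection, String metadata, Set<String> members) {}

// Result of reconciling one AIP: the AIP to keep, or a conflict flag.
record Outcome(Aip resolved, boolean conflict) {}

class ClashResolver {
    /** Reconciles one AIP given its state at the last sync (base) and on each instance. */
    static Outcome reconcile(Aip base, Aip local, Aip remote) {
        boolean localChanged = !local.equals(base);
        boolean remoteChanged = !remote.equals(base);
        if (!remoteChanged) return new Outcome(local, false);
        if (!localChanged) return new Outcome(remote, false);
        if (local.equals(remote)) return new Outcome(local, false); // same change on both sides

        // Both instances changed the same AIP. The one easy case: a collection
        // whose metadata is untouched and to which both sides only added items.
        boolean onlyAdditions = base.isCollection()
                && local.metadata().equals(base.metadata())
                && remote.metadata().equals(base.metadata())
                && local.members().containsAll(base.members())
                && remote.members().containsAll(base.members());
        if (onlyAdditions) {
            Set<String> merged = new TreeSet<>(local.members());
            merged.addAll(remote.members()); // merge the additions
            return new Outcome(new Aip(base.id(), true, base.metadata(), merged), false);
        }
        return new Outcome(null, true); // flag for manual resolution
    }
}

class ClashDemo {
    public static void main(String[] args) {
        Aip base = new Aip("col-1", true, "<collection/>", Set.of("item-1"));
        Aip local = new Aip("col-1", true, "<collection/>", Set.of("item-1", "item-2"));
        Aip remote = new Aip("col-1", true, "<collection/>", Set.of("item-1", "item-3"));
        System.out.println(ClashResolver.reconcile(base, local, remote).resolved().members());
        // [item-1, item-2, item-3]
    }
}
```

The merge is only safe if item identifiers are unique across instances; otherwise two different items that happen to share an ID would collapse into one.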

What about search indices? • Modules may maintain indices or ‘caches’ of information from AIPs in the asset store − E.g. the browse UI, Lucene index • Modules keep indices or caches up to date by periodically polling the asset store API − Similar to ‘incremental harvesting’ in OAI-PMH

Why the polling approach? • Polling is simpler to implement than real-time notification − Implementing a custom asset store is easier • More scalable: can control when indexing occurs − With real-time notification, a big sync might mean several indices updating at once • End-users might not see deposits appear in the search/browse indices immediately. However: − That doesn't happen anyway if any workflow review is needed − Needn't take more than overnight − Reference information, not time-critical data
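A minimal sketch of the polling approach in Java 16+, assuming a hypothetical `modifiedSince` call on the asset store that plays the role of an OAI-PMH `from` date. The `BrowseIndex` module (also hypothetical) remembers the newest modification time it has seen and asks only for later changes on its next poll.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical change record returned by the asset store.
record AipChange(String id, Instant modified, String title) {}

// Hypothetical polling call on the asset store API, analogous to an
// OAI-PMH harvest with a 'from' date.
interface ChangeFeed {
    /** AIPs created or updated strictly after 'since'. */
    List<AipChange> modifiedSince(Instant since);
}

// A browse-style module keeping its own index (cache) up to date.
class BrowseIndex {
    private final Map<String, String> titlesById = new TreeMap<>();
    private Instant lastHarvest = Instant.EPOCH;

    /** One incremental harvest; returns how many AIPs were (re)indexed. */
    int poll(ChangeFeed feed) {
        List<AipChange> changes = feed.modifiedSince(lastHarvest);
        for (AipChange change : changes) {
            titlesById.put(change.id(), change.title());
            if (change.modified().isAfter(lastHarvest)) {
                lastHarvest = change.modified(); // resume from the newest change seen
            }
        }
        return changes.size();
    }

    Map<String, String> titles() {
        return Collections.unmodifiableMap(titlesById);
    }
}

class PollingDemo {
    public static void main(String[] args) {
        List<AipChange> storeLog = new ArrayList<>();
        ChangeFeed feed = since -> storeLog.stream()
                .filter(c -> c.modified().isAfter(since))
                .toList();
        BrowseIndex index = new BrowseIndex();

        storeLog.add(new AipChange("aip-1", Instant.parse("2004-03-01T10:00:00Z"), "First item"));
        System.out.println(index.poll(feed)); // 1
        storeLog.add(new AipChange("aip-2", Instant.parse("2004-03-02T10:00:00Z"), "Second item"));
        System.out.println(index.poll(feed)); // 1 (only aip-2 is new)
        System.out.println(index.poll(feed)); // 0
        System.out.println(index.titles());   // {aip-1=First item, aip-2=Second item}
    }
}
```

Because the module decides when to call `poll`, indexing can be scheduled, for example overnight. A production version would also need to handle changes that share a timestamp with the last harvest, which this strictly-after comparison would skip.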

DSpace modular architecture • Some modules have APIs; some do not • Modules may have dependencies − e.g. module X depends on an implementation of API Y • Modules may use an RDBMS but do not share tables

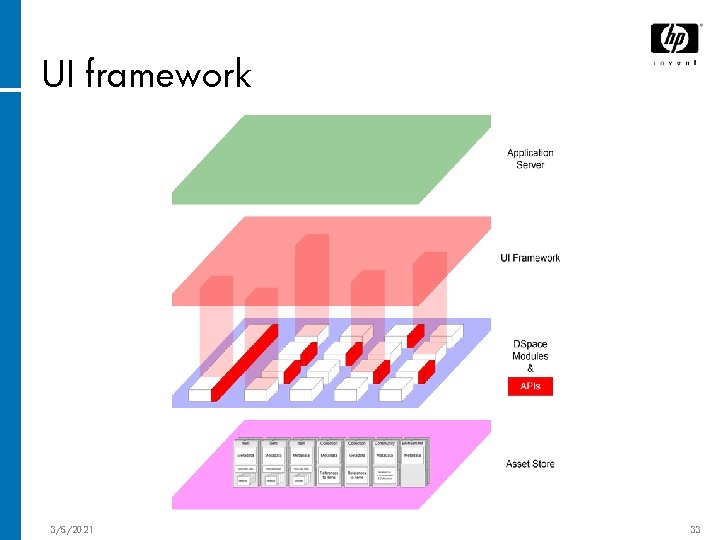
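The dependency mechanism could be as simple as each module declaring which APIs it provides and which it requires. In this hypothetical Java 16+ sketch (`ModuleDescriptor`, `DependencyResolver`), the resolver rejects missing APIs and cycles, and returns an order in which every module starts after the providers it depends on. Allowing only one implementation per API is an assumption made to keep the sketch short.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical module declaration: APIs implemented and APIs depended on.
record ModuleDescriptor(String name, Set<String> provides, Set<String> requires) {}

class DependencyResolver {
    /** Start order in which each module follows the providers of the APIs it requires. */
    static List<String> startOrder(List<ModuleDescriptor> modules) {
        Map<String, ModuleDescriptor> providerOf = new HashMap<>();
        for (ModuleDescriptor m : modules) {
            for (String api : m.provides()) {
                if (providerOf.putIfAbsent(api, m) != null) {
                    throw new IllegalStateException("Two modules implement " + api);
                }
            }
        }
        List<String> order = new ArrayList<>();
        Set<String> visiting = new HashSet<>();
        for (ModuleDescriptor m : modules) {
            visit(m, providerOf, visiting, order);
        }
        return order;
    }

    // Depth-first traversal: providers are appended before their dependants.
    private static void visit(ModuleDescriptor m, Map<String, ModuleDescriptor> providerOf,
                              Set<String> visiting, List<String> order) {
        if (order.contains(m.name())) return;
        if (!visiting.add(m.name())) {
            throw new IllegalStateException("Dependency cycle at " + m.name());
        }
        for (String api : m.requires()) {
            ModuleDescriptor provider = providerOf.get(api);
            if (provider == null) {
                throw new IllegalStateException(m.name() + " requires missing API " + api);
            }
            visit(provider, providerOf, visiting, order);
        }
        visiting.remove(m.name());
        order.add(m.name());
    }
}

class DependencyDemo {
    public static void main(String[] args) {
        List<ModuleDescriptor> modules = List.of(
                new ModuleDescriptor("browse-ui", Set.of(), Set.of("ContentManagementAPI")),
                new ModuleDescriptor("content-management",
                        Set.of("ContentManagementAPI"), Set.of("AssetStoreAPI")),
                new ModuleDescriptor("fs-asset-store", Set.of("AssetStoreAPI"), Set.of()));
        System.out.println(DependencyResolver.startOrder(modules));
        // [fs-asset-store, content-management, browse-ui]
    }
}
```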

UI framework

UI framework • Glues together UIs of different modules − Provides navigation tools, stylesheets, ‘skin’ − Internationalisation, localisation − User authentication • Cocoon provides most of the above functionality − Easy to add the rest

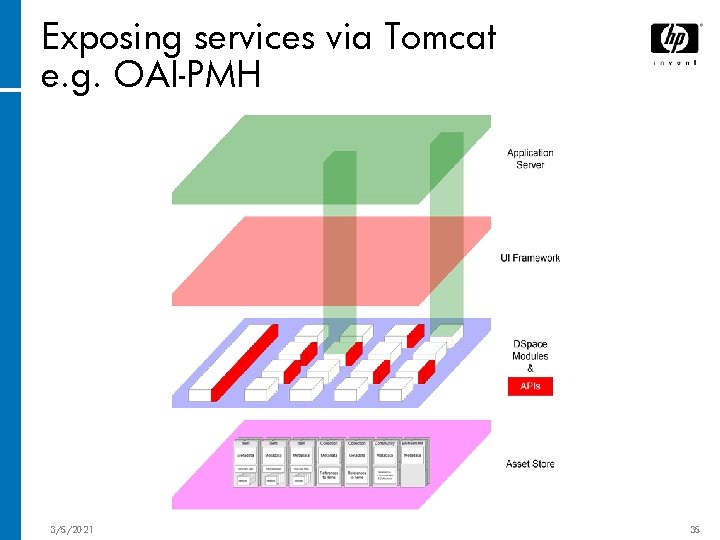

Exposing services via Tomcat, e.g. OAI-PMH

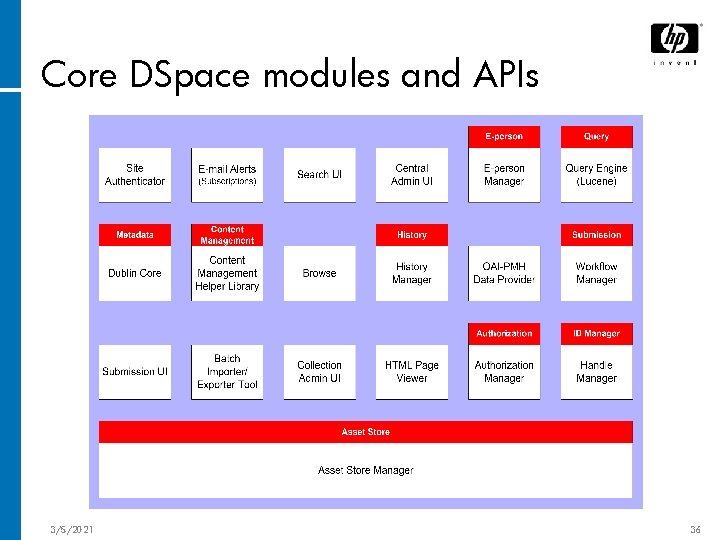

Core DSpace modules and APIs

Content management API • Similar to the existing org.dspace.content API • Provides a procedural way to manipulate AIPs • Implementation may ‘cache’ some information in an RDBMS − E.g. community/collection/item structure

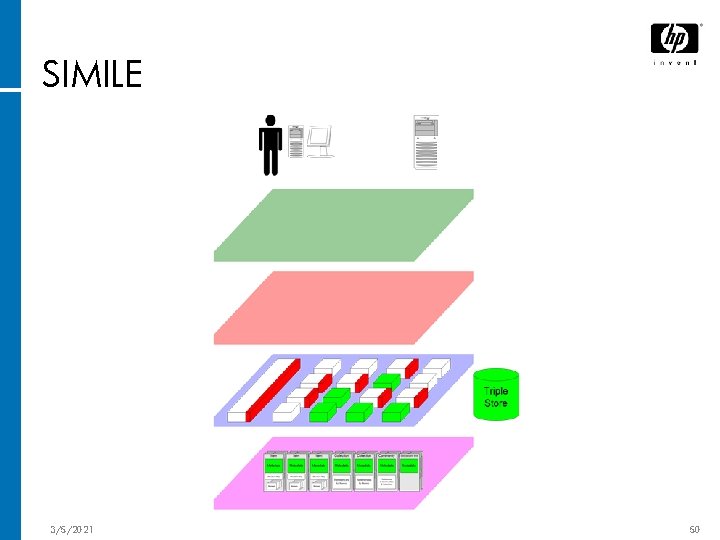

Extending metadata • Pull out the pieces of search UI, submit UI and item display related to Dublin Core into a separate module • Allow other, similar modules for other schemas and extensions • Start with simple property/value support • SIMILE will provide richer functionality
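One way such schema modules might plug in, sketched in Java 16+ with hypothetical names (`SchemaModule`, `DublinCoreModule`, `ItemDisplay`, `Property`). Metadata is held as simple property/value pairs tagged with a namespace, and the item display asks the module that owns each namespace for a label, falling back to the raw property name when no module is registered.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical metadata representation: namespace-tagged property/value pairs.
record Property(String namespace, String name, String value) {}

// Hypothetical plug-in point for a metadata schema module.
interface SchemaModule {
    String namespace();
    /** Human-readable label for a property, used e.g. by item display. */
    String label(String name);
}

// The Dublin Core pieces pulled out into their own module.
class DublinCoreModule implements SchemaModule {
    private static final Map<String, String> LABELS =
            Map.of("title", "Title", "creator", "Creator", "date", "Date");

    @Override
    public String namespace() { return "dc"; }

    @Override
    public String label(String name) { return LABELS.getOrDefault(name, name); }
}

class ItemDisplay {
    private final Map<String, SchemaModule> modules = new HashMap<>();

    void register(SchemaModule module) { modules.put(module.namespace(), module); }

    List<String> render(List<Property> metadata) {
        List<String> lines = new ArrayList<>();
        for (Property p : metadata) {
            SchemaModule module = modules.get(p.namespace());
            // Fall back to "namespace:name" when no module handles the schema.
            String label = (module != null) ? module.label(p.name())
                                            : p.namespace() + ":" + p.name();
            lines.add(label + ": " + p.value());
        }
        return lines;
    }
}

class MetadataDemo {
    public static void main(String[] args) {
        ItemDisplay display = new ItemDisplay();
        display.register(new DublinCoreModule());
        display.render(List.of(
                new Property("dc", "title", "A thesis"),
                new Property("dc", "creator", "Smith, J."),
                new Property("local", "department", "Physics")))
               .forEach(System.out::println);
        // Title: A thesis
        // Creator: Smith, J.
        // local:department: Physics
    }
}
```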

Security • Similar to DSpace 1.x • Modules running within a DSpace instance are ‘trusted’ − Not worrying about malicious code for now • Modules and the UI framework are responsible for authenticating the end-user as an e-person • Modules and the asset store implementation must invoke the authorisation API as appropriate
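A sketch of the last point: a wrapper that checks the authorisation API before delegating to any asset store implementation. The two interfaces, the `READ` action string and `AuthorisingAssetStore` are hypothetical; the e-person is assumed to have been authenticated already by the UI framework.

```java
import java.util.Map;
import java.util.Optional;

// Hypothetical stand-ins for the authorisation API and a slice of the asset store API.
interface AuthorisationAPI {
    boolean isAllowed(String ePerson, String action, String aipId);
}

interface AssetStore {
    Optional<String> retrieveMetadata(String aipId);
}

// Wraps any asset store so that every retrieval is checked against the
// authorisation API for the e-person the UI framework has authenticated.
class AuthorisingAssetStore implements AssetStore {
    private final AssetStore delegate;
    private final AuthorisationAPI authz;
    private final String ePerson;

    AuthorisingAssetStore(AssetStore delegate, AuthorisationAPI authz, String ePerson) {
        this.delegate = delegate;
        this.authz = authz;
        this.ePerson = ePerson;
    }

    @Override
    public Optional<String> retrieveMetadata(String aipId) {
        if (!authz.isAllowed(ePerson, "READ", aipId)) {
            throw new SecurityException(ePerson + " may not read " + aipId);
        }
        return delegate.retrieveMetadata(aipId);
    }
}

class SecurityDemo {
    public static void main(String[] args) {
        Map<String, String> contents = Map.of("aip-1", "<metadata/>");
        AssetStore raw = id -> Optional.ofNullable(contents.get(id));
        AuthorisationAPI authz = (who, action, id) -> who.equals("alice");

        AssetStore forAlice = new AuthorisingAssetStore(raw, authz, "alice");
        System.out.println(forAlice.retrieveMetadata("aip-1").orElse("missing")); // <metadata/>

        AssetStore forBob = new AuthorisingAssetStore(raw, authz, "bob");
        try {
            forBob.retrieveMetadata("aip-1");
        } catch (SecurityException e) {
            System.out.println("Denied: " + e.getMessage()); // Denied: bob may not read aip-1
        }
    }
}
```

Putting the check in a wrapper keeps each asset store implementation free of authorisation logic, at the cost of trusting modules not to bypass the wrapper, which matches the "trusted modules" assumption above.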

Summary • Refactor storage: Content in AIPs (metadata + bitstreams) − Easier to share/mirror AIPs with periodic synchronisation − Modules do OAI-PMH-style incremental harvests to keep indices/caches up to date • Benefit: increased scalability, preserve-ability • Cost: new/changed AIPs aren’t instantly indexed − Often not the case anyway (workflow reviews) − Reference information (not time-critical)

Summary • Modular architecture − Modules responsible for own UI and data − Modules inter-communicate via defined APIs − UI framework provides Web UI ‘glue’ (Cocoon) − Dependency mechanism to allow ‘plug-in’ functionality • Benefit: vastly improved modularity − Essential for our diverse community of users • Cost: implementing modules might take more effort − Unavoidable but manageable price of modularity • Different from current approach; migration non-trivial − Those who haven’t changed DSpace 1 much will have an easy upgrade path − Does anyone really like servlets/JSPs?

Example Deployments

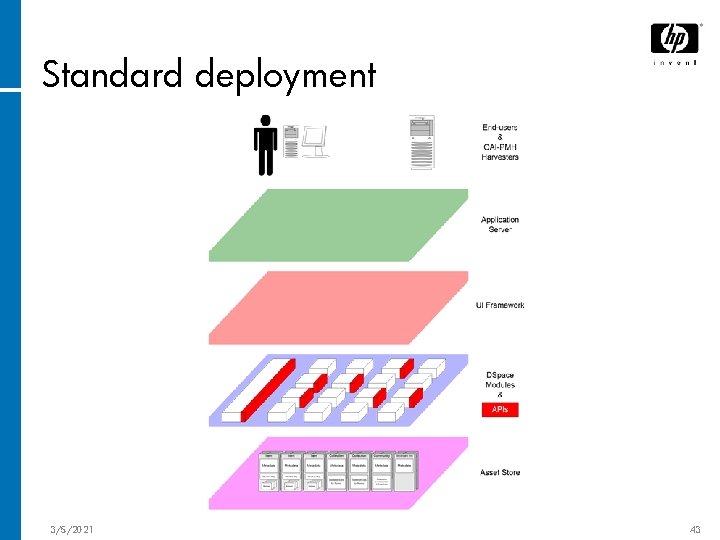

Standard deployment

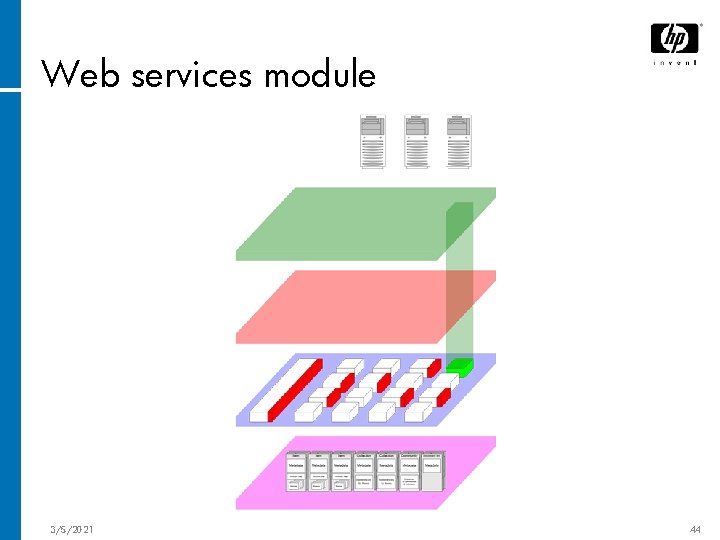

Web services module

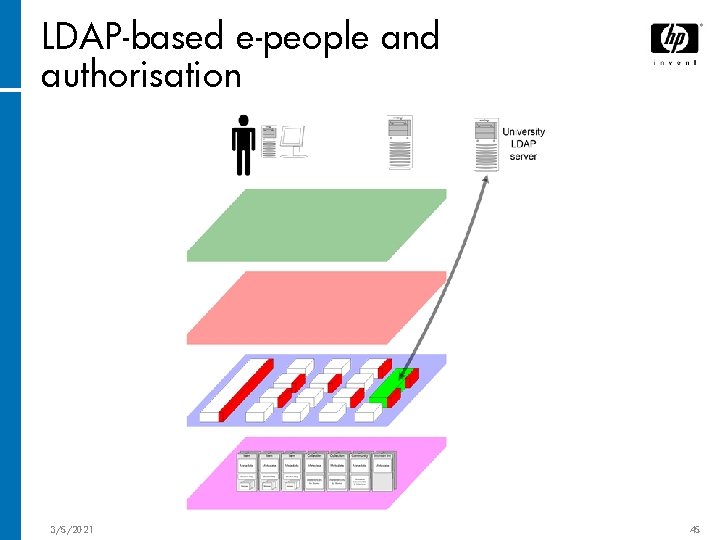

LDAP-based e-people and authorisation

Mirrored asset store

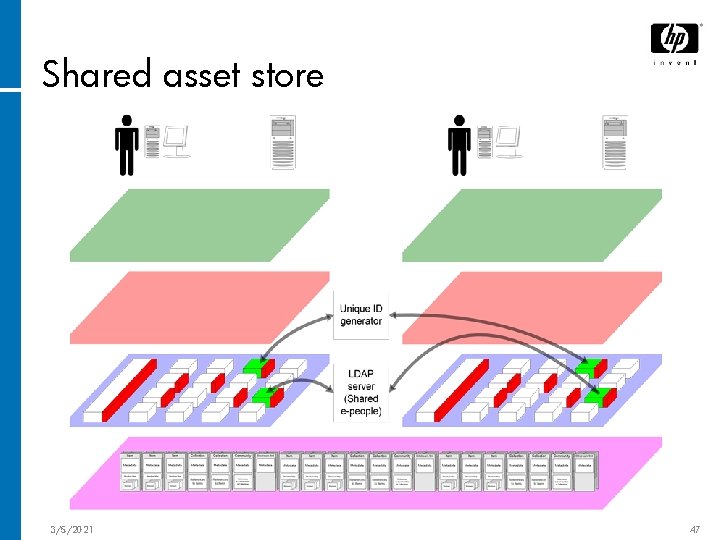

Shared asset store

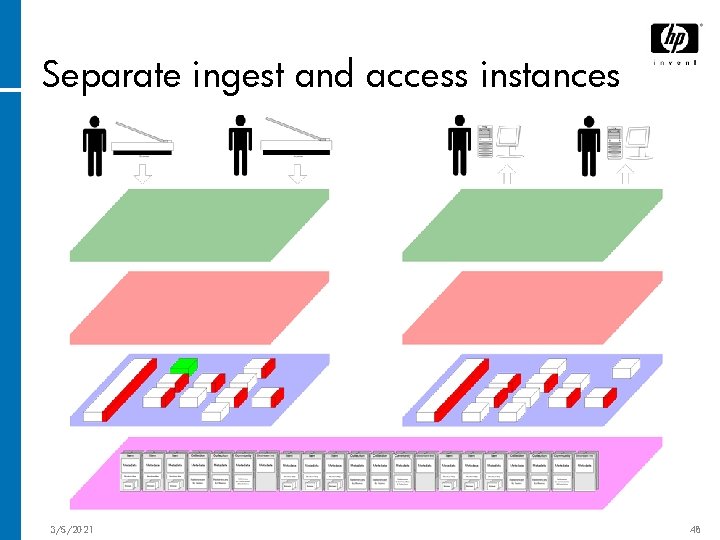

Separate ingest and access instances

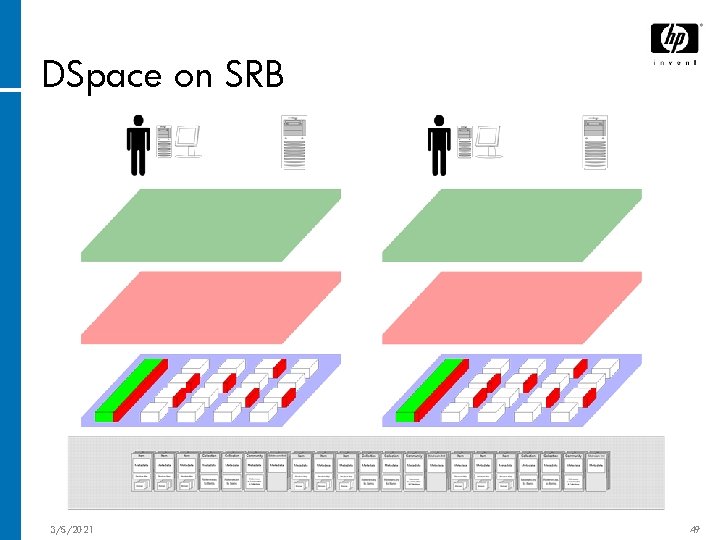

DSpace on SRB

SIMILE

Proposed Migration Path

Stage 1: Build asset store • Decide on AIP metadata serialisation • Build asset store • Integrate asset store with DSpace 1.x − Either build a synchronisation tool, or − Replace the CM API (org.dspace.content), which is trickier

Stage 2: Build 2.0 • Design & build modular infrastructure (dependencies etc.) • Define the APIs • Port/implement 1.x functionality • Release this as 2.0 − Institutions can port their code to the 2.0 architecture, and swap over

Stage 3: 2.x and beyond • DSpace 2.1 − Authorisation policy expression in AIPs − XQuery API • DSpace 2.2 − Federation • DSpace 2.3 − Integrate SIMILE components
