Data Mining Classification Alternative Techniques Lecture Notes for

- Slides: 47

Data Mining Classification: Alternative Techniques Lecture Notes for Chapter 5 Introduction to Data Mining by Tan, Steinbach, Kumar © Tan, Steinbach, Kumar Introduction to Data Mining 4/18/2004 1

Alternative Techniques
- Rule-Based Classifier: classify records by using a collection of “if…then…” rules
- Instance-Based Classifiers

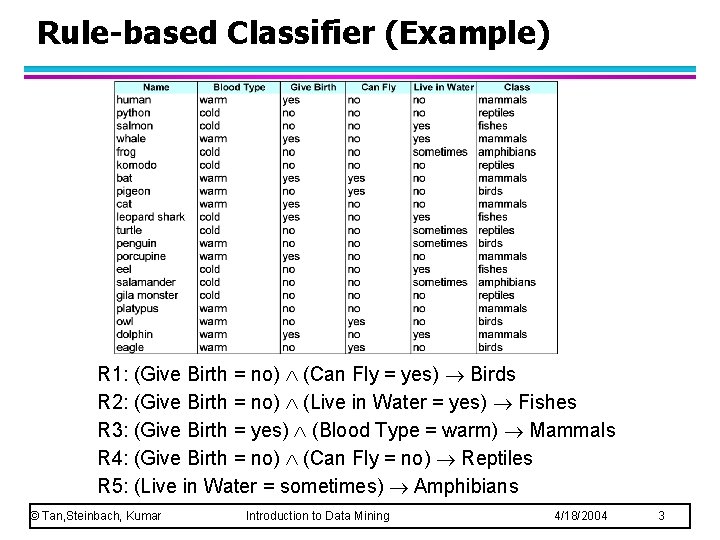

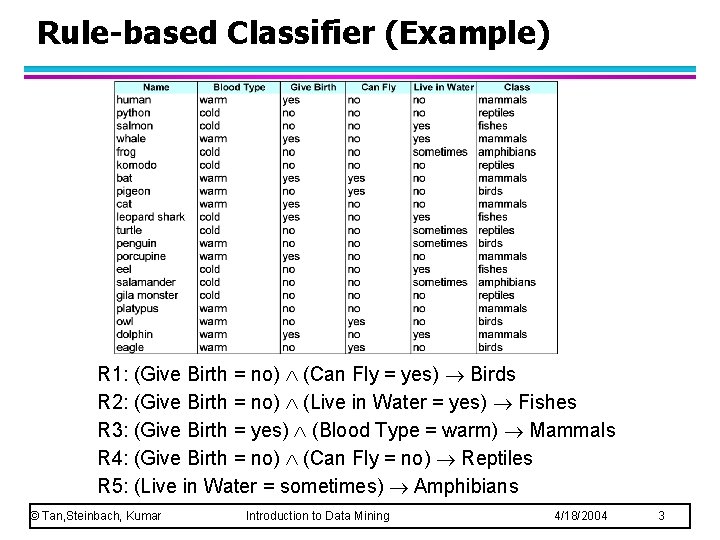

Rule-Based Classifier (Example)
- R1: (Give Birth = no) ∧ (Can Fly = yes) → Birds
- R2: (Give Birth = no) ∧ (Live in Water = yes) → Fishes
- R3: (Give Birth = yes) ∧ (Blood Type = warm) → Mammals
- R4: (Give Birth = no) ∧ (Can Fly = no) → Reptiles
- R5: (Live in Water = sometimes) → Amphibians

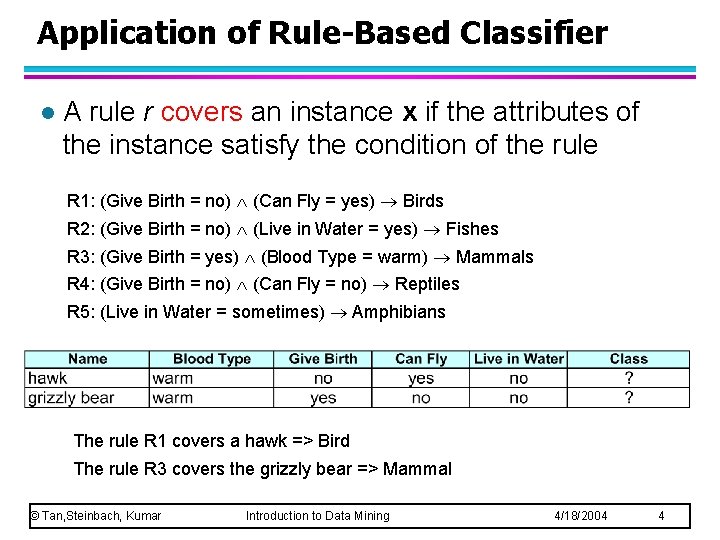

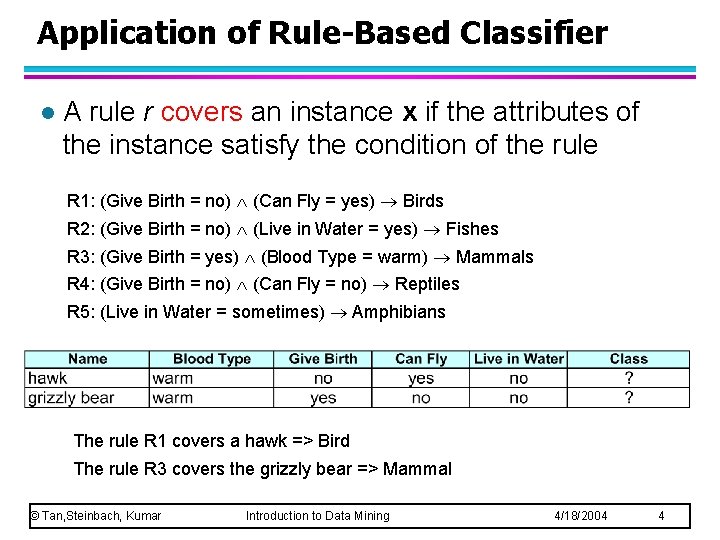

Application of Rule-Based Classifier
- A rule r covers an instance x if the attributes of the instance satisfy the condition of the rule
- Using rules R1 to R5 from the example: rule R1 covers a hawk => Bird
- Rule R3 covers the grizzly bear => Mammal

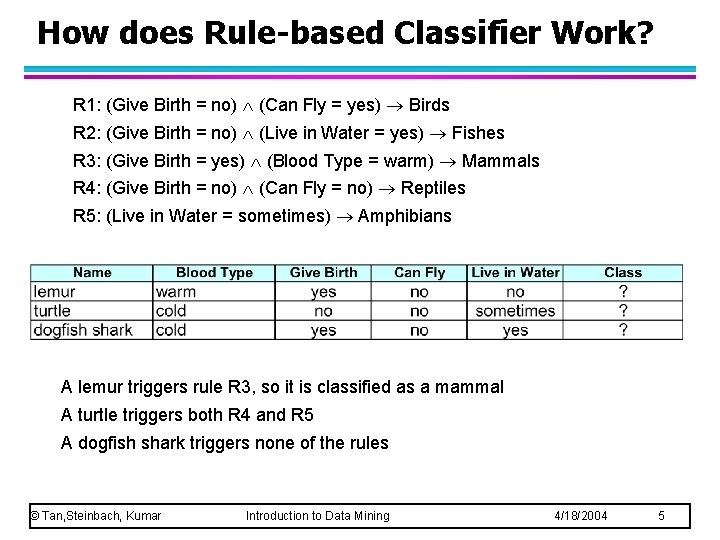

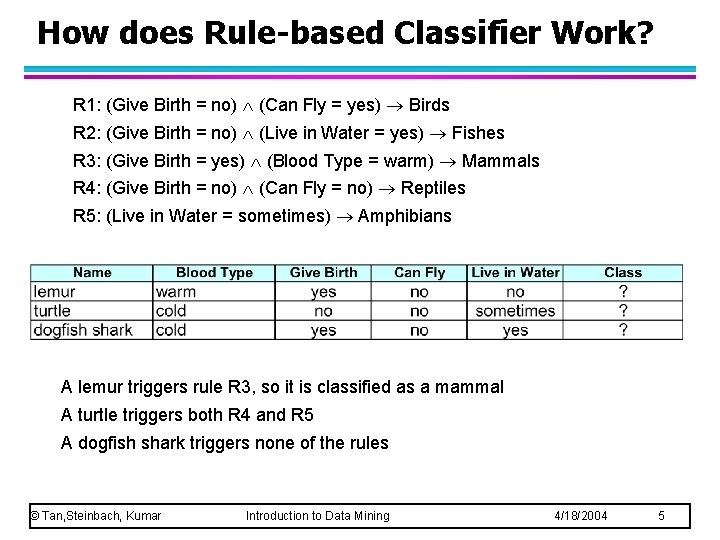

How Does a Rule-Based Classifier Work?
Using rules R1 to R5 from the example:
- A lemur triggers rule R3, so it is classified as a mammal
- A turtle triggers both R4 and R5
- A dogfish shark triggers none of the rules
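The coverage behaviour of rules R1 to R5 can be sketched in a few lines of Python. This is only an illustration: the attribute names (`give_birth`, `can_fly`, and so on) and the attribute values assigned to each animal are assumptions, since the data table appears only as a slide image.

```python
# Rules R1-R5 from the slides, written as (name, conditions, class label).
RULES = [
    ("R1", {"give_birth": "no", "can_fly": "yes"}, "Birds"),
    ("R2", {"give_birth": "no", "live_in_water": "yes"}, "Fishes"),
    ("R3", {"give_birth": "yes", "blood_type": "warm"}, "Mammals"),
    ("R4", {"give_birth": "no", "can_fly": "no"}, "Reptiles"),
    ("R5", {"live_in_water": "sometimes"}, "Amphibians"),
]

# Assumed attribute values for the animals mentioned on the slides.
ANIMALS = {
    "hawk": {"give_birth": "no", "can_fly": "yes",
             "live_in_water": "no", "blood_type": "warm"},
    "lemur": {"give_birth": "yes", "can_fly": "no",
              "live_in_water": "no", "blood_type": "warm"},
    "turtle": {"give_birth": "no", "can_fly": "no",
               "live_in_water": "sometimes", "blood_type": "cold"},
    "dogfish shark": {"give_birth": "yes", "can_fly": "no",
                      "live_in_water": "yes", "blood_type": "cold"},
}


def covers(conditions, record):
    """A rule covers a record if every condition matches the record."""
    return all(record.get(attr) == value for attr, value in conditions.items())


def triggered_rules(record):
    """Return (rule name, class label) for every rule that covers the record."""
    return [(name, label) for name, conditions, label in RULES
            if covers(conditions, record)]


for animal, record in ANIMALS.items():
    print(animal, triggered_rules(record))
```

The turtle case (two rules fire) and the dogfish shark case (no rule fires) show why a rule set that is not mutually exclusive and exhaustive needs a conflict-resolution scheme and a default class.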

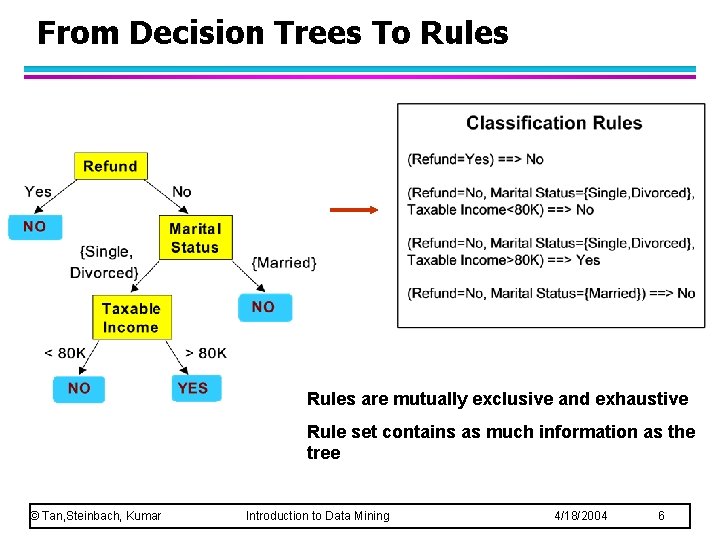

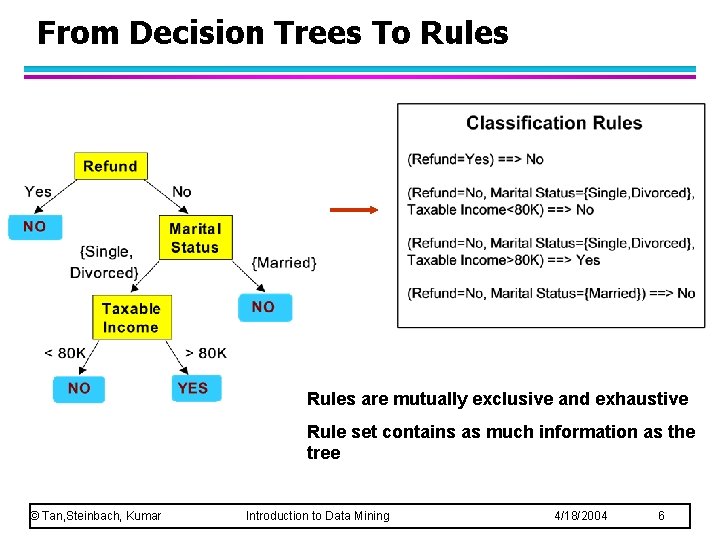

From Decision Trees to Rules
- Rules are mutually exclusive and exhaustive
- Rule set contains as much information as the tree

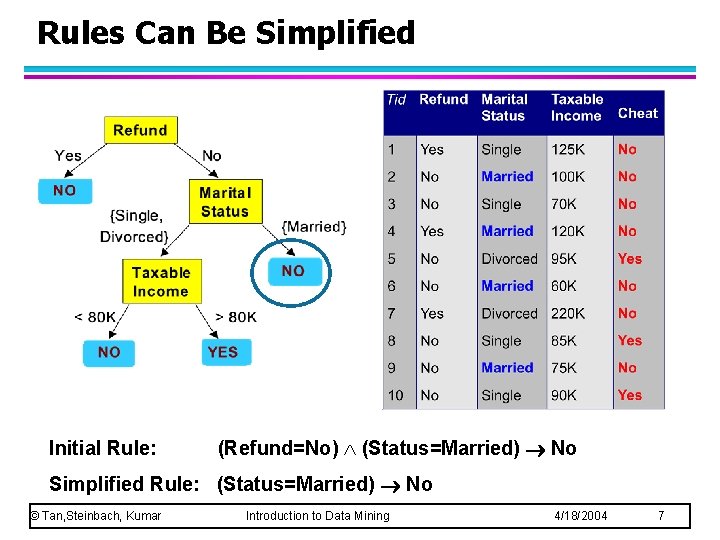

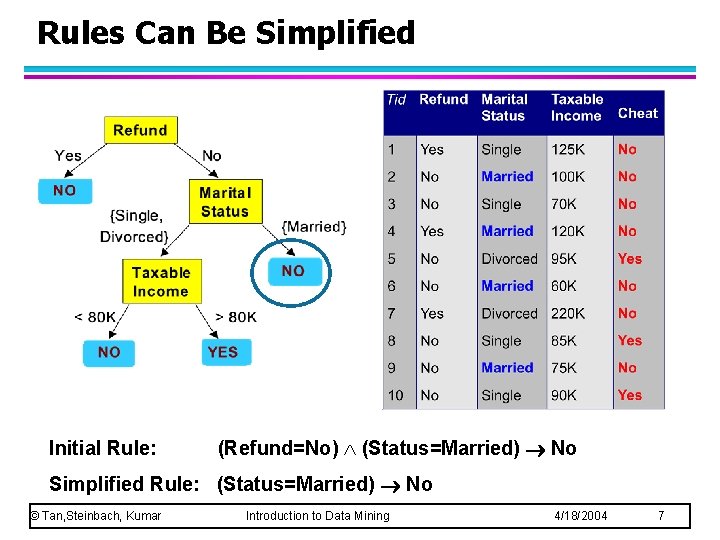

Rules Can Be Simplified
- Initial Rule: (Refund = No) ∧ (Status = Married) → No
- Simplified Rule: (Status = Married) → No

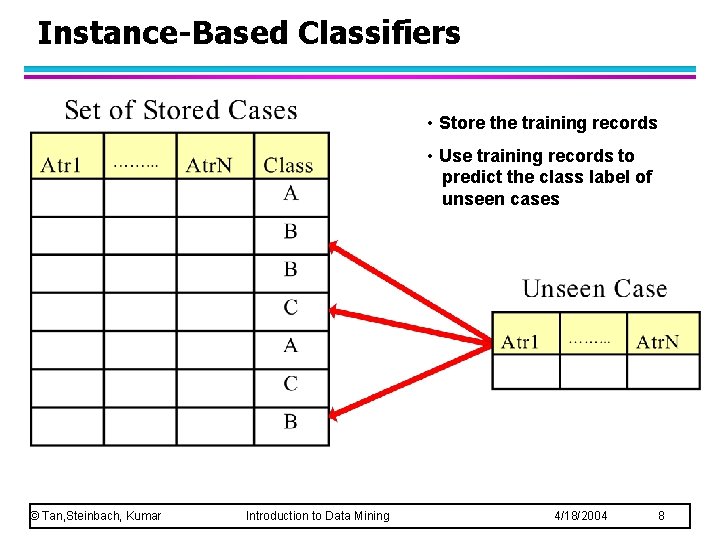

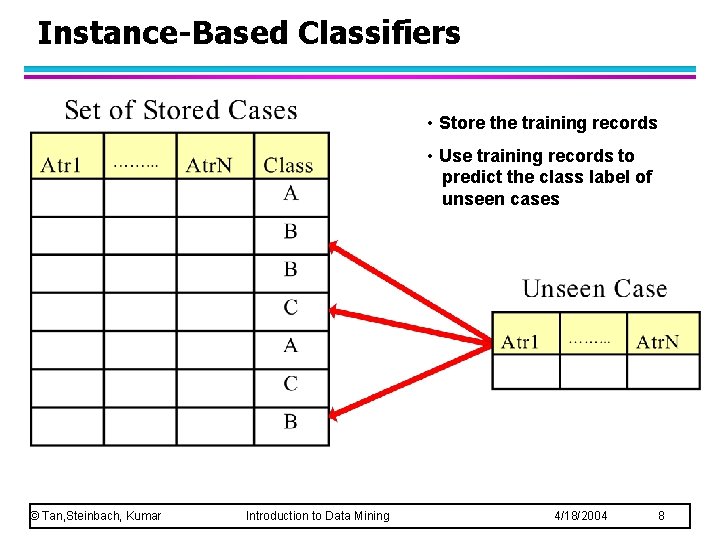

Instance-Based Classifiers
- Store the training records
- Use training records to predict the class label of unseen cases

Instance-Based Classifiers
- Examples:
  - Rote-learner: memorizes the entire training data and performs classification only if the attributes of a record match one of the training examples exactly
  - Nearest neighbor: uses the k “closest” points (nearest neighbors) for performing classification

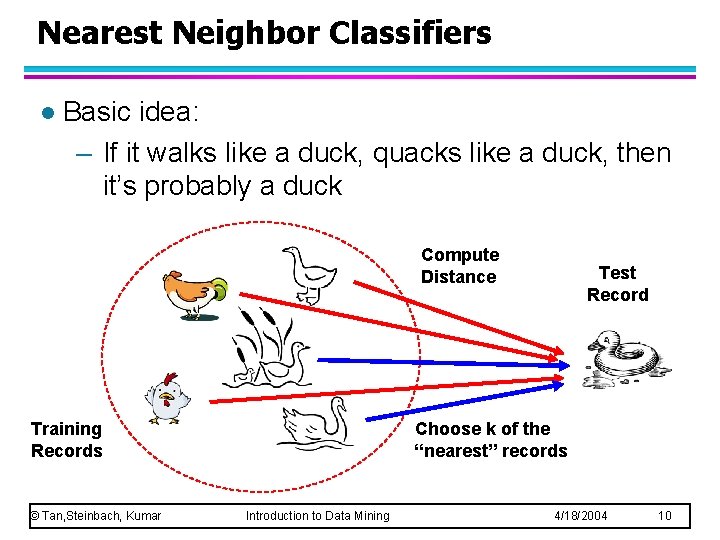

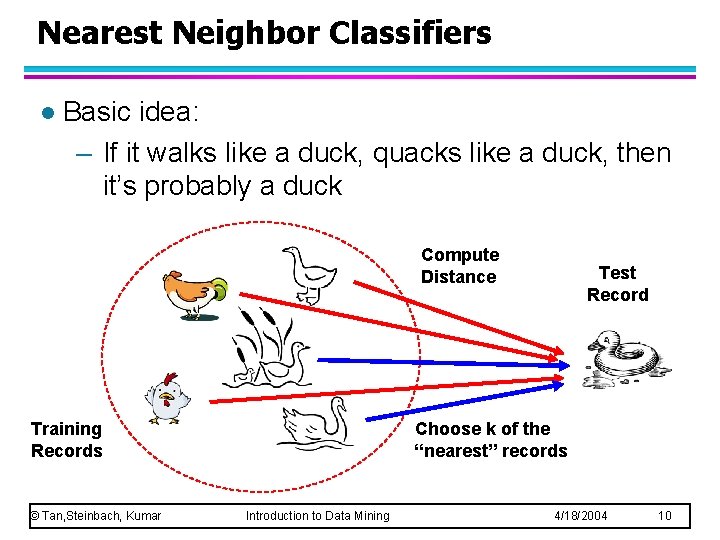

Nearest Neighbor Classifiers
- Basic idea: if it walks like a duck and quacks like a duck, then it’s probably a duck
- Figure labels: training records; test record; compute distance; choose k of the “nearest” records

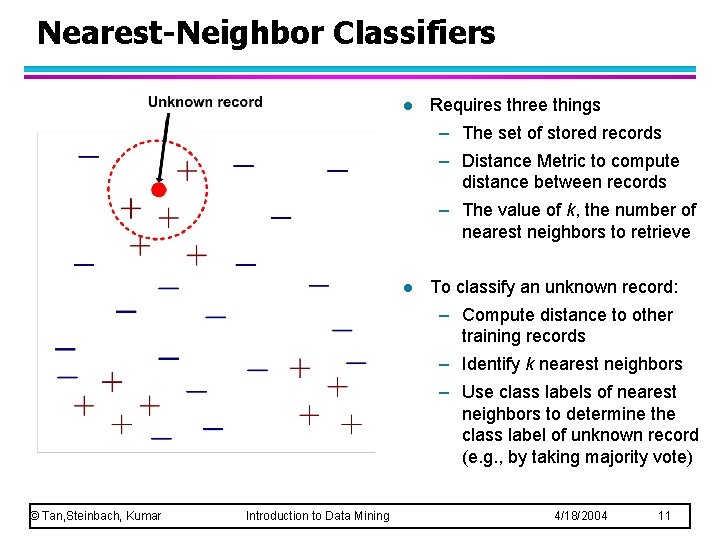

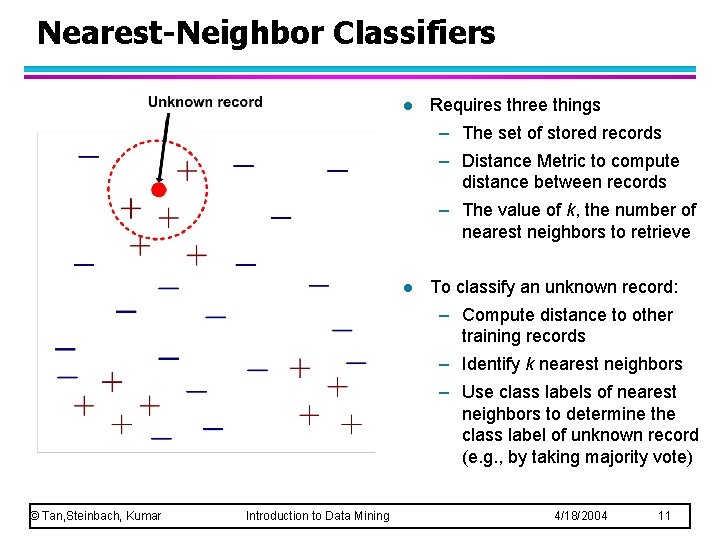

Nearest-Neighbor Classifiers
- Requires three things:
  - The set of stored records
  - A distance metric to compute the distance between records
  - The value of k, the number of nearest neighbors to retrieve
- To classify an unknown record:
  - Compute its distance to the training records
  - Identify the k nearest neighbors
  - Use the class labels of the nearest neighbors to determine the class label of the unknown record (e.g., by taking a majority vote)
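The three classification steps can be sketched as follows. This is a minimal illustration, not an optimized implementation: the toy training records and the function names `euclidean` and `knn_classify` are assumptions, and Euclidean distance is used as one possible distance metric.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Euclidean distance between two equal-length numeric tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_classify(training, query, k):
    """Classify `query` by majority vote among its k nearest training records.

    `training` is a list of (feature_tuple, class_label) pairs.
    """
    # Step 1: compute the distance to every stored record and sort by it.
    by_distance = sorted(training, key=lambda rec: euclidean(rec[0], query))
    # Step 2: keep the k nearest neighbors.
    neighbors = by_distance[:k]
    # Step 3: majority vote over the neighbors' class labels.
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two small clusters with labels "+" and "-".
training = [
    ((1.0, 1.0), "+"), ((1.2, 0.8), "+"), ((0.9, 1.1), "+"),
    ((3.0, 3.0), "-"), ((3.2, 2.9), "-"),
]
print(knn_classify(training, (1.1, 1.0), k=3))
print(knn_classify(training, (3.1, 3.0), k=3))
```

Because all the work happens at query time (sorting every stored record by distance), classification cost grows with the size of the training set.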

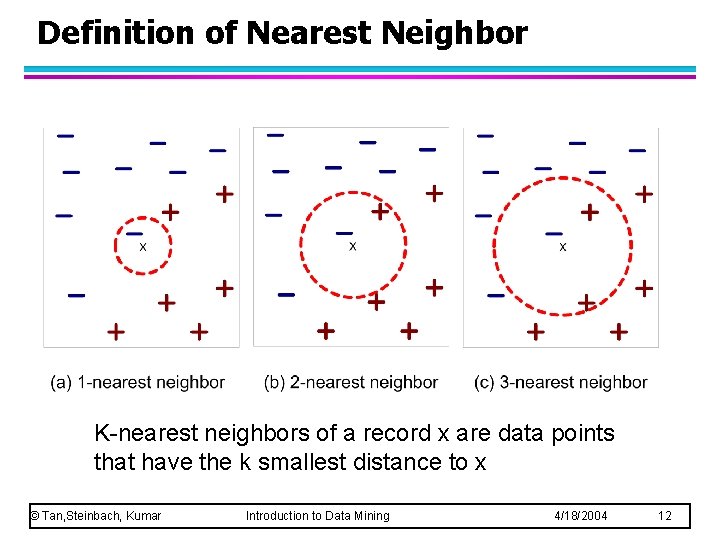

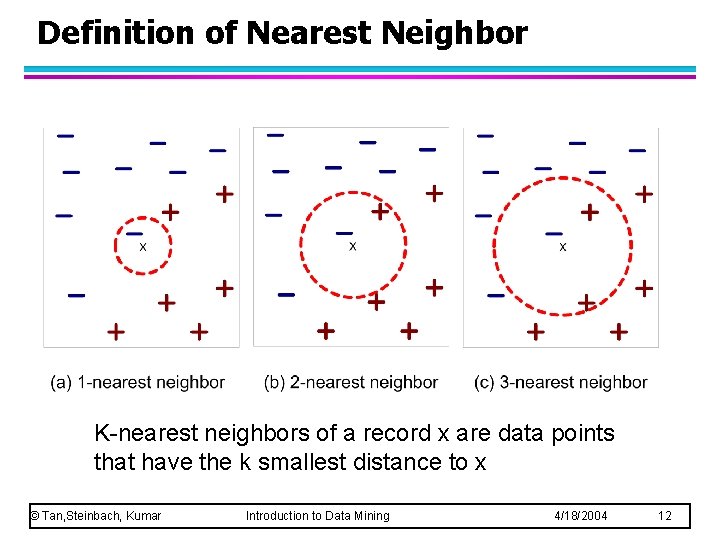

Definition of Nearest Neighbor
The k-nearest neighbors of a record x are the data points that have the k smallest distances to x

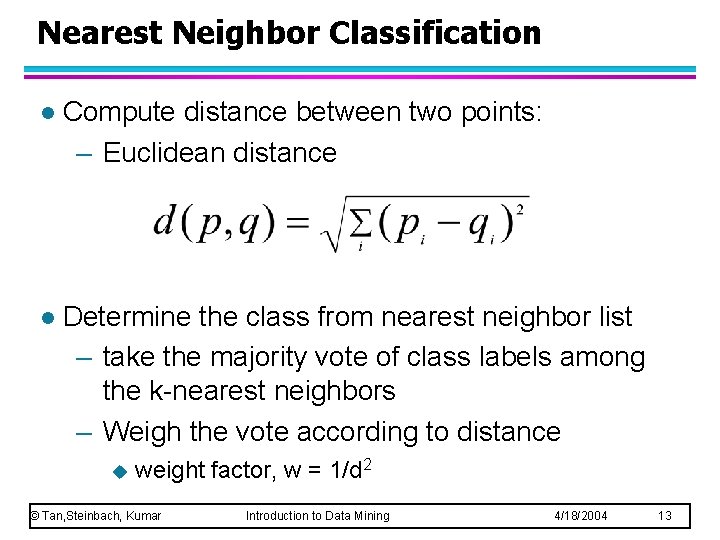

Nearest Neighbor Classification
- Compute the distance between two points:
  - Euclidean distance
- Determine the class from the nearest neighbor list:
  - Take the majority vote of class labels among the k nearest neighbors
  - Weigh the vote according to distance, with weight factor $w = 1/d^2$
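A distance-weighted vote with weight 1/d² might look like the sketch below. The function name `weighted_vote` and the small constant `eps` (added to avoid division by zero when a neighbor coincides with the query) are assumptions for illustration.

```python
from collections import defaultdict


def weighted_vote(neighbors, eps=1e-12):
    """Pick the class with the largest total weight w = 1 / d^2.

    `neighbors` is a list of (distance, class_label) pairs for the
    k nearest neighbors. `eps` is an assumed guard against d == 0.
    """
    scores = defaultdict(float)
    for distance, label in neighbors:
        scores[label] += 1.0 / (distance * distance + eps)
    return max(scores, key=scores.get)


# One close "A" neighbor versus two distant "B" neighbors.
neighbors = [(0.5, "A"), (2.0, "B"), (2.0, "B")]
print(weighted_vote(neighbors))
```

In this example a plain majority vote would choose "B" (two votes to one), while the weighted vote favors the much closer "A" neighbor.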

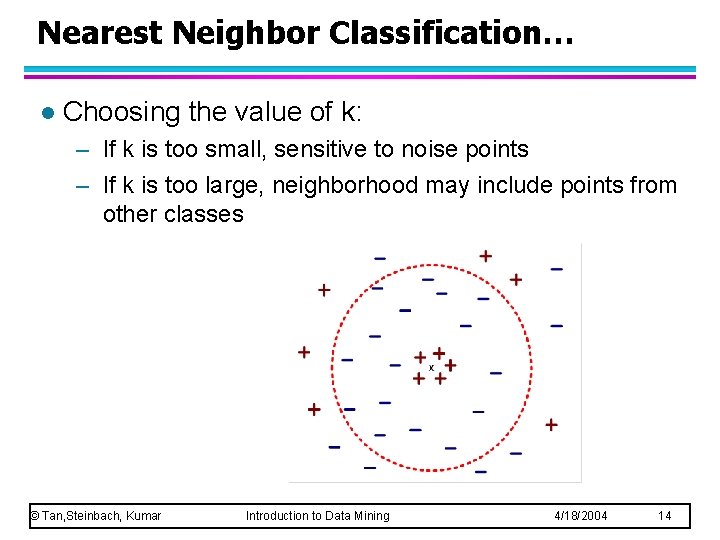

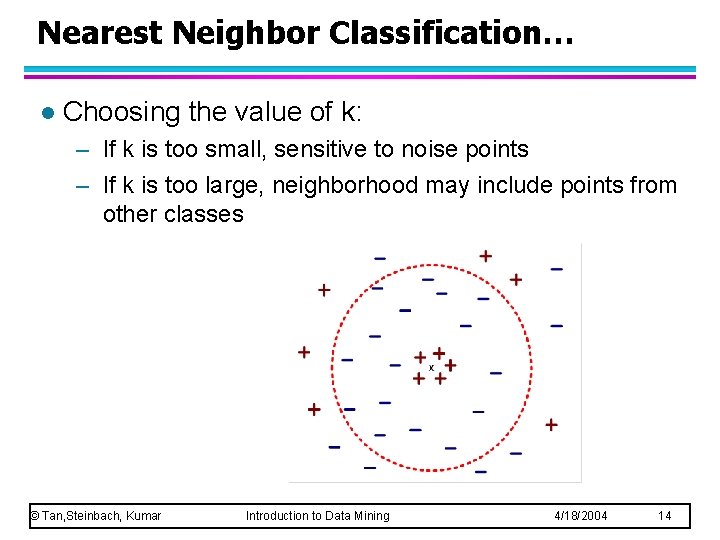

Nearest Neighbor Classification…
- Choosing the value of k:
  - If k is too small, the classifier is sensitive to noise points
  - If k is too large, the neighborhood may include points from other classes

Nearest Neighbor Classification…
- Scaling issues
  - Attributes may have to be scaled to prevent distance measures from being dominated by one of the attributes
  - Example:
    - height of a person may vary from 1.5 m to 1.8 m
    - weight of a person may vary from 90 lb to 300 lb
    - income of a person may vary from $10K to $1M
  - Solution: normalize the vectors to unit length
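To see why unscaled attributes are a problem, the sketch below rescales each attribute to the range [0, 1]. Note that this is min-max scaling, a common alternative swapped in here for illustration, not the unit-length normalization named above. The function name `min_max_scale` and the three sample records are assumptions built from the attribute ranges on the slide.

```python
def min_max_scale(rows):
    """Rescale every column of `rows` to [0, 1] (min-max scaling).

    A constant column is mapped to 0.0 to avoid division by zero.
    """
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]


# Hypothetical records: (height in m, weight in lb, income in $).
people = [
    [1.5, 90, 10_000],
    [1.65, 195, 505_000],
    [1.8, 300, 1_000_000],
]
for row in min_max_scale(people):
    print(row)
```

Before scaling, a Euclidean distance between two of these records is determined almost entirely by income; after scaling, each attribute contributes on the same [0, 1] range.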

Nearest Neighbor Classification…
- k-NN classifiers are lazy learners
  - They do not build models explicitly
  - Unlike eager learners such as decision tree induction and rule-based systems
  - Classifying unknown records is relatively expensive

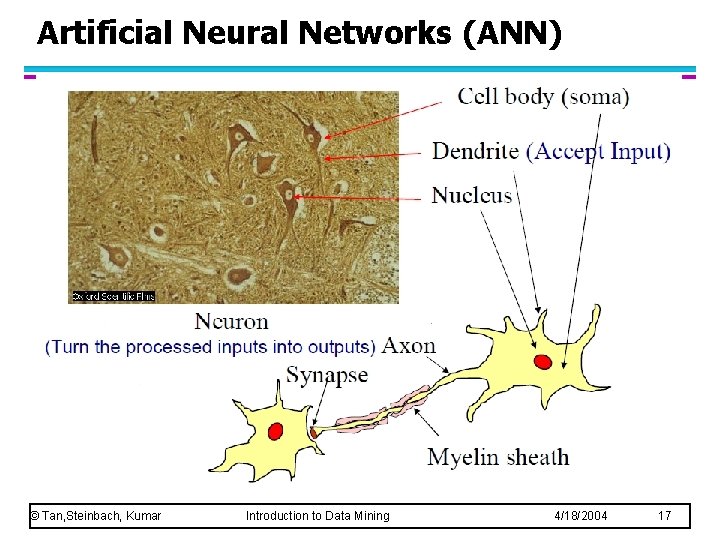

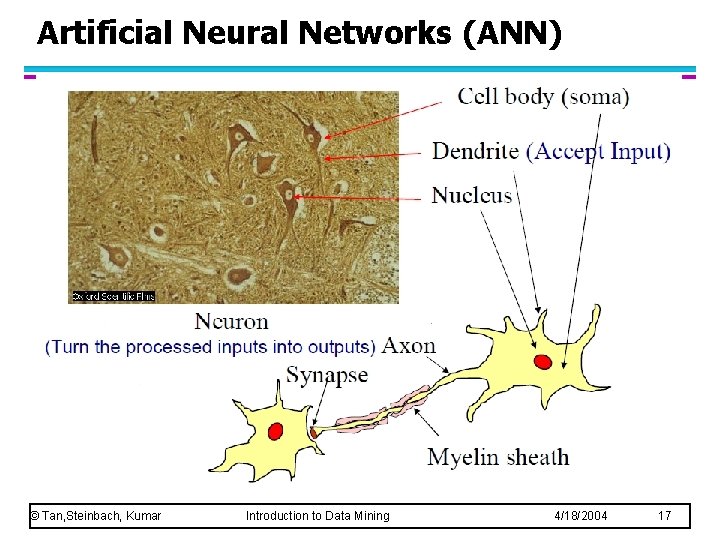

Artificial Neural Networks (ANN)

Artificial Neural Networks (ANN)
- What is an ANN?

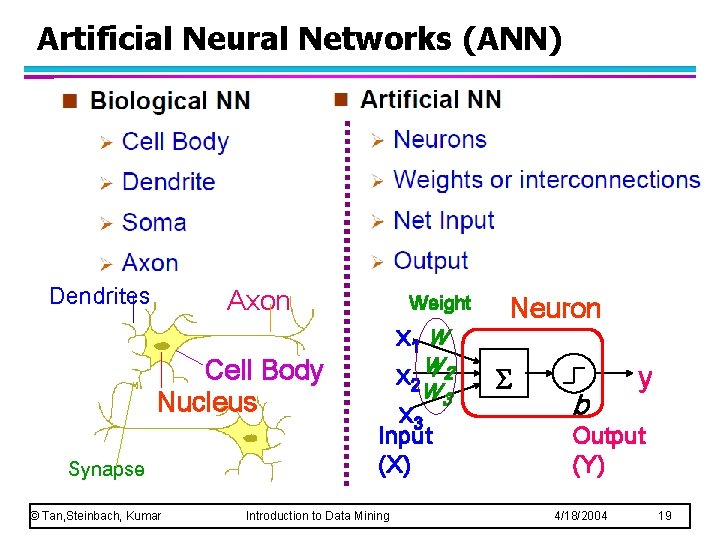

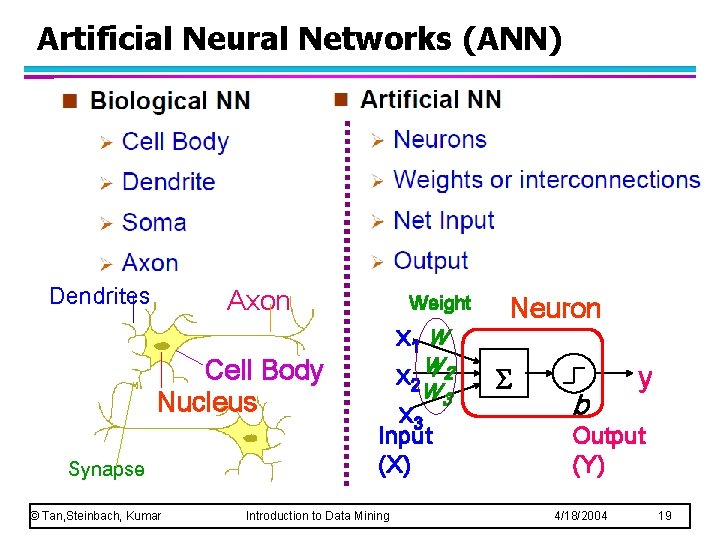

Artificial Neural Networks (ANN)
Figure labels: biological neuron (dendrites, cell body, nucleus, axon, synapse); artificial neuron with inputs x1, x2, x3 (Input X), weights w1, w2, w3, summation unit Σ with bias b, and output y (Output Y)

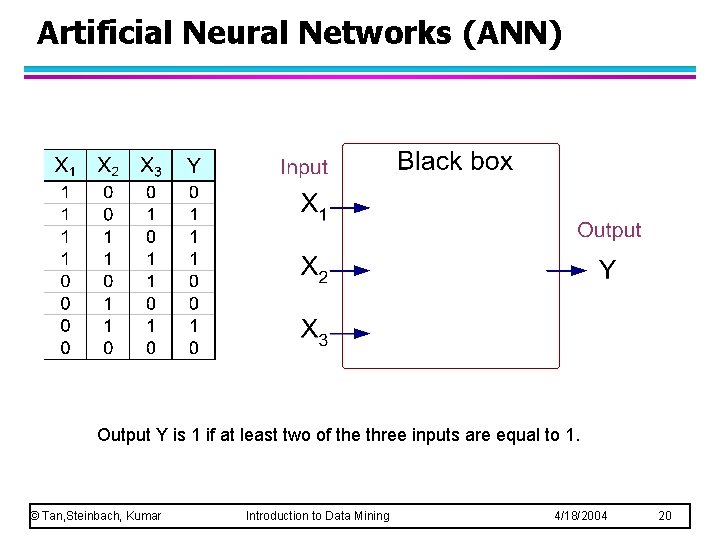

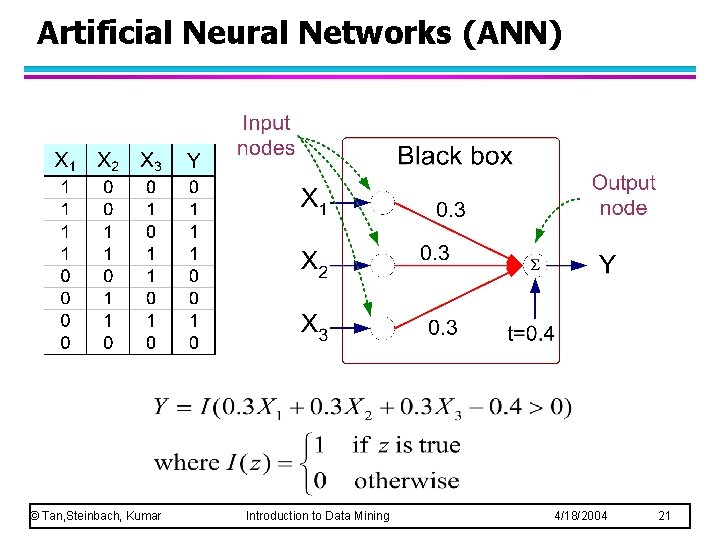

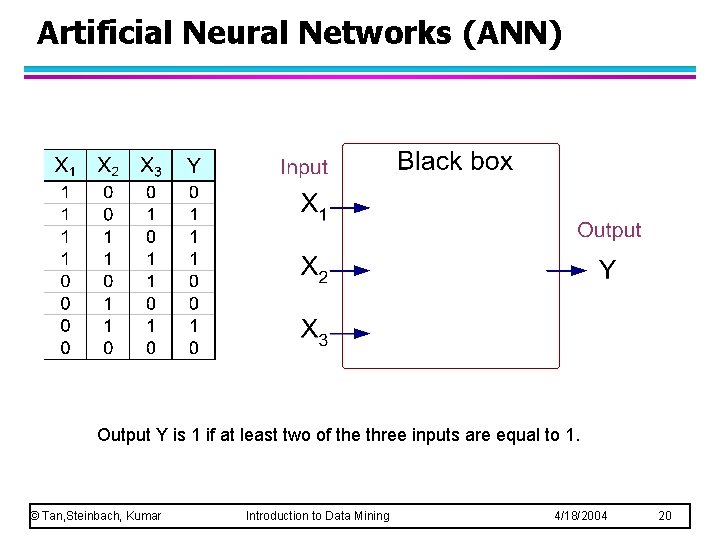

Artificial Neural Networks (ANN)
Output Y is 1 if at least two of the three inputs are equal to 1.

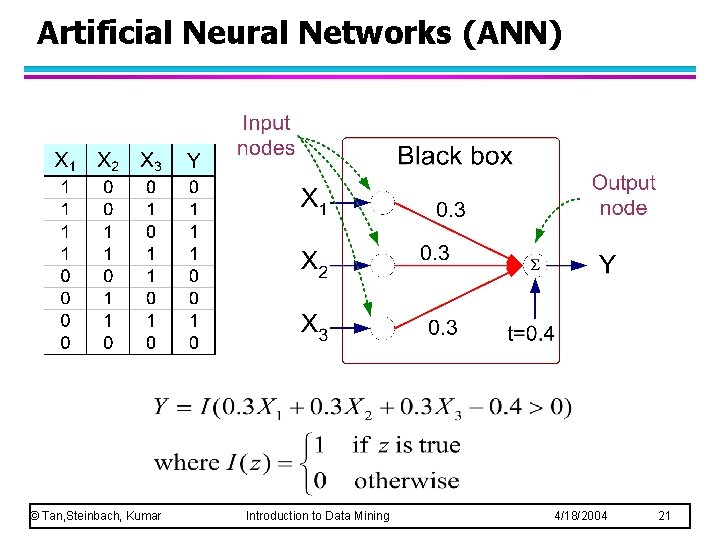

Artificial Neural Networks (ANN)

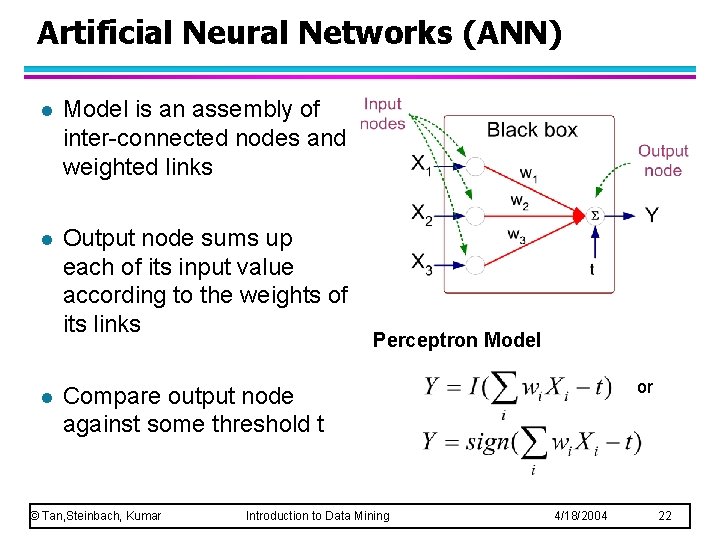

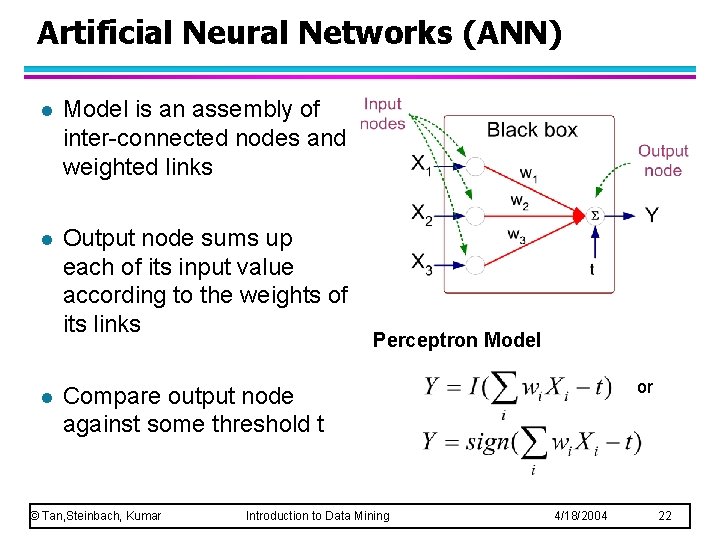

Artificial Neural Networks (ANN)
- Model is an assembly of inter-connected nodes and weighted links
- Output node sums up each of its input values according to the weights of its links
- Perceptron model: compare the output node's weighted sum against some threshold t
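A thresholded weighted sum of this kind can be sketched as below. The weights (0.3 each) and the threshold t = 0.4 are assumed values, chosen because they reproduce the earlier example in which the output is 1 when at least two of the three inputs equal 1; the function names are illustrative.

```python
from itertools import product

# Assumed parameters: equal weights and a threshold that make the unit
# fire exactly when at least two of the three binary inputs are 1.
WEIGHTS = (0.3, 0.3, 0.3)
THRESHOLD = 0.4


def perceptron_output(inputs, weights=WEIGHTS, threshold=THRESHOLD):
    """Return 1 if the weighted sum of the inputs exceeds the threshold."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum - threshold > 0 else 0


def truth_table():
    """Enumerate all binary input combinations with the unit's output."""
    return [(x, perceptron_output(x)) for x in product((0, 1), repeat=3)]


for inputs, output in truth_table():
    print(inputs, "->", output)
```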

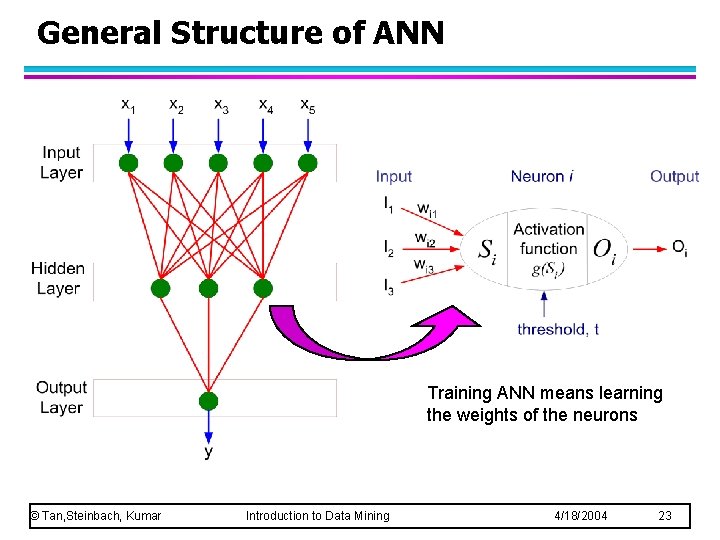

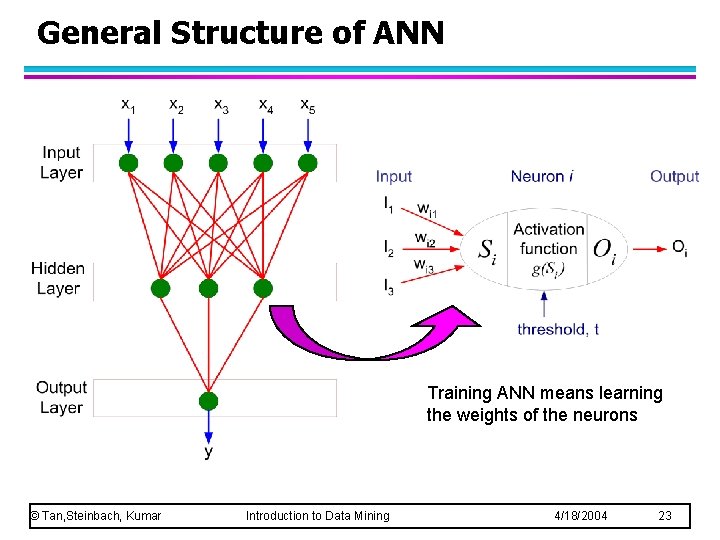

General Structure of ANN
Training an ANN means learning the weights of the neurons

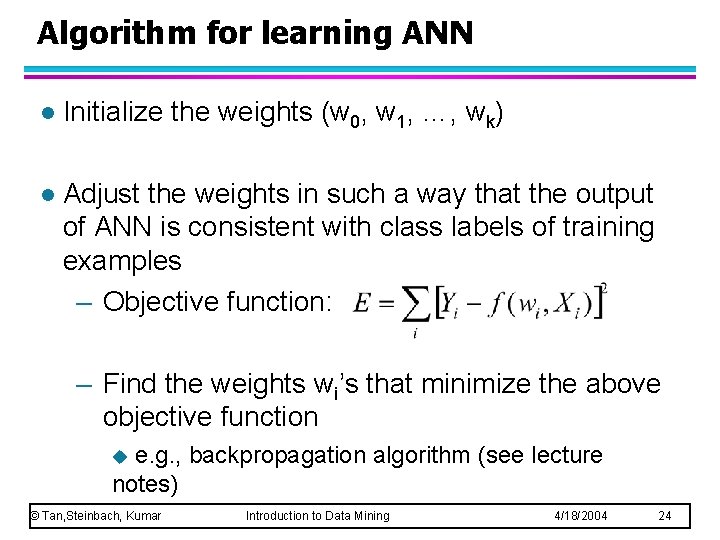

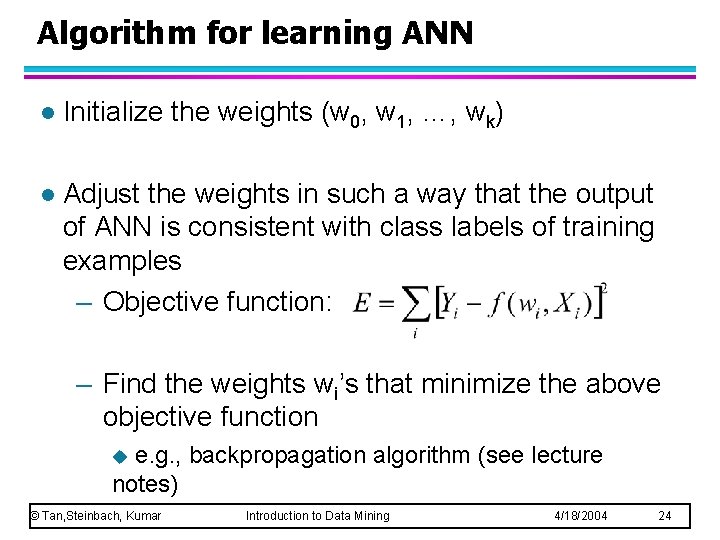

Algorithm for Learning ANN
- Initialize the weights (w0, w1, …, wk)
- Adjust the weights in such a way that the output of the ANN is consistent with the class labels of the training examples
  - Objective function:
  - Find the weights wi that minimize the above objective function, e.g., with the backpropagation algorithm (see lecture notes)
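For a single thresholded unit, the "initialize, then adjust until consistent with the labels" loop can be sketched with the classic perceptron learning rule. This is a swapped-in, simpler technique, not backpropagation, and it does not minimize the slide's objective function explicitly. The training data (the "at least two of three inputs" function), integer weights, unit learning rate, and function names are all assumptions for illustration.

```python
from itertools import product


def predict(weights, bias, x):
    """Thresholded weighted sum: 1 if w.x + bias > 0, else 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(examples, n_inputs, max_epochs=100):
    """Perceptron learning rule with learning rate 1 (an assumed choice).

    Weights start at zero and are nudged by (label - prediction) * input
    whenever a training example is misclassified.
    """
    weights = [0] * n_inputs
    bias = 0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in examples:
            error = y - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                weights = [w + error * xi for w, xi in zip(weights, x)]
                bias += error
        if mistakes == 0:  # consistent with every training label
            break
    return weights, bias


# Assumed training set: output is 1 when at least two of three inputs are 1.
EXAMPLES = [(x, 1 if sum(x) >= 2 else 0) for x in product((0, 1), repeat=3)]
weights, bias = train_perceptron(EXAMPLES, n_inputs=3)
print(weights, bias)
```

The rule converges here because the target function is linearly separable; multi-layer networks need a gradient-based method such as backpropagation instead.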

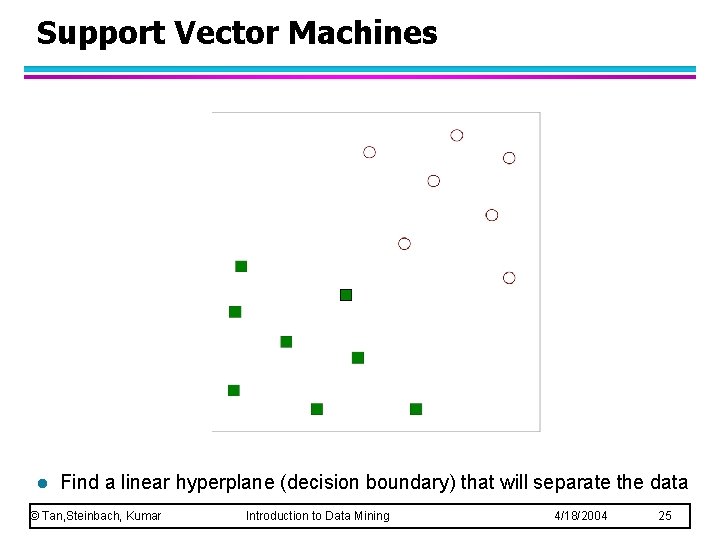

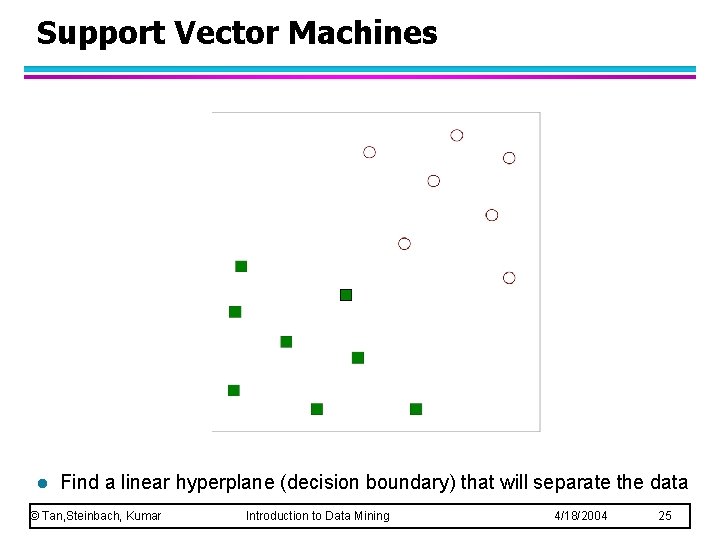

Support Vector Machines
- Find a linear hyperplane (decision boundary) that will separate the data

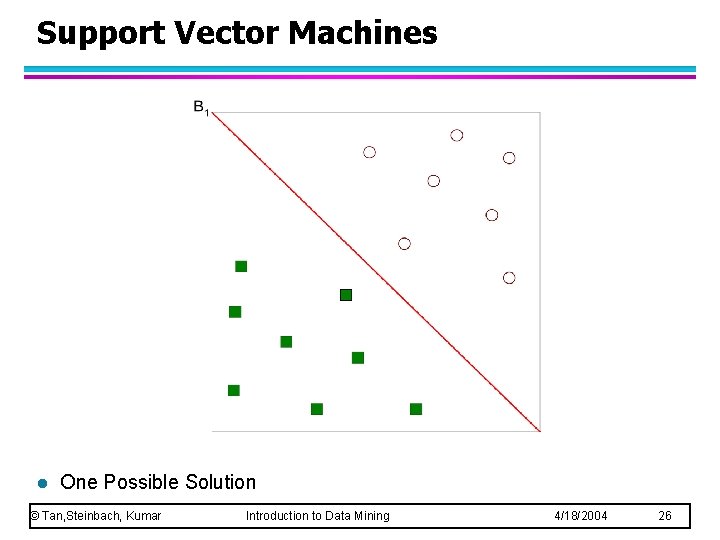

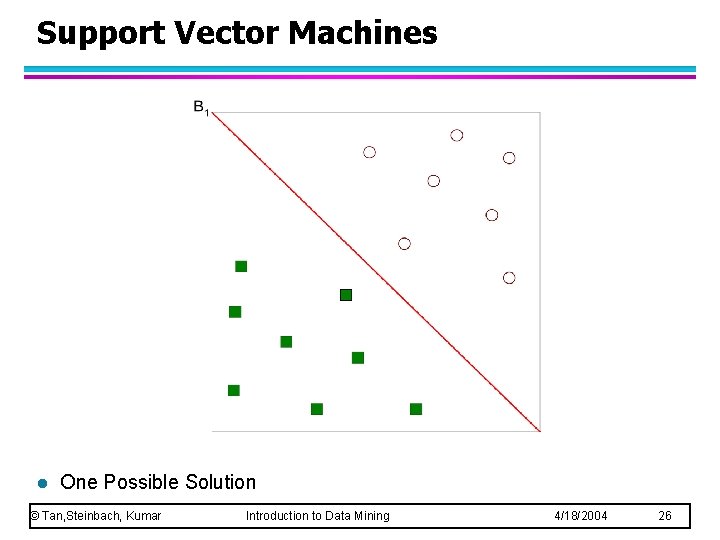

Support Vector Machines
- One possible solution

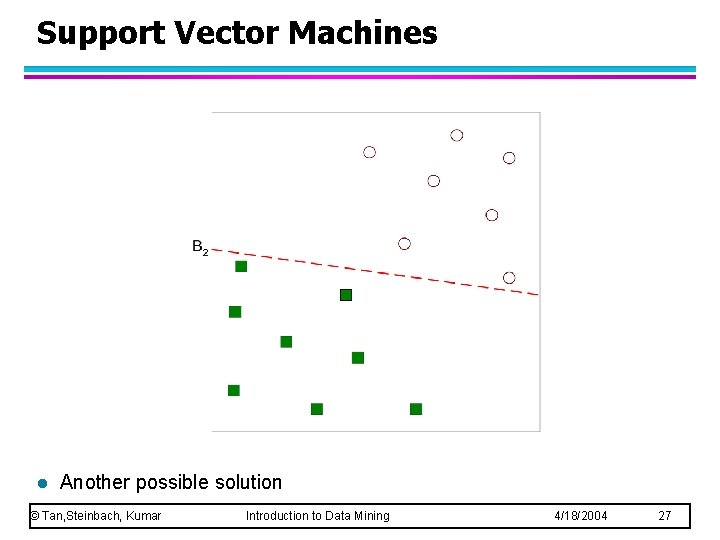

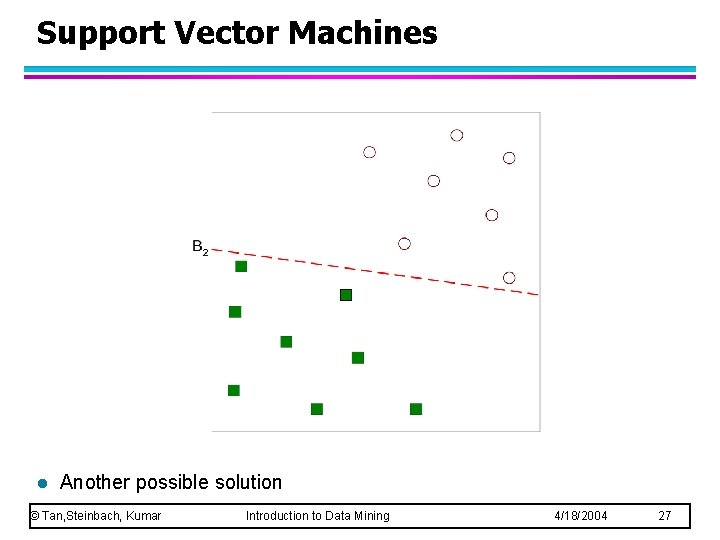

Support Vector Machines
- Another possible solution

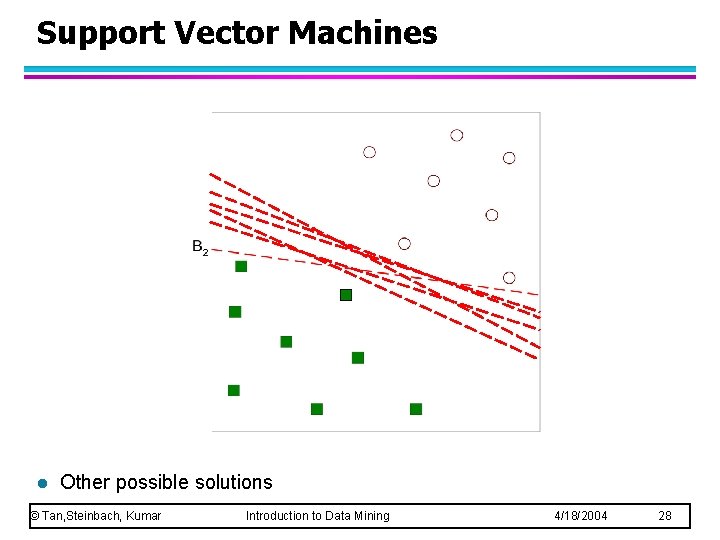

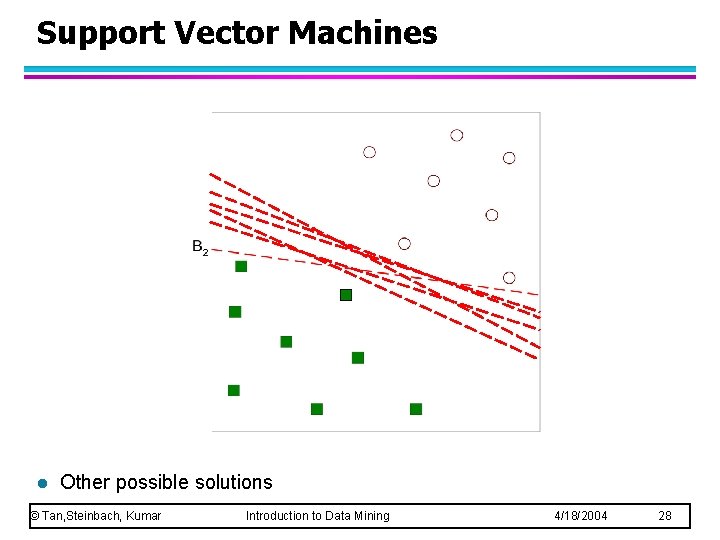

Support Vector Machines
- Other possible solutions

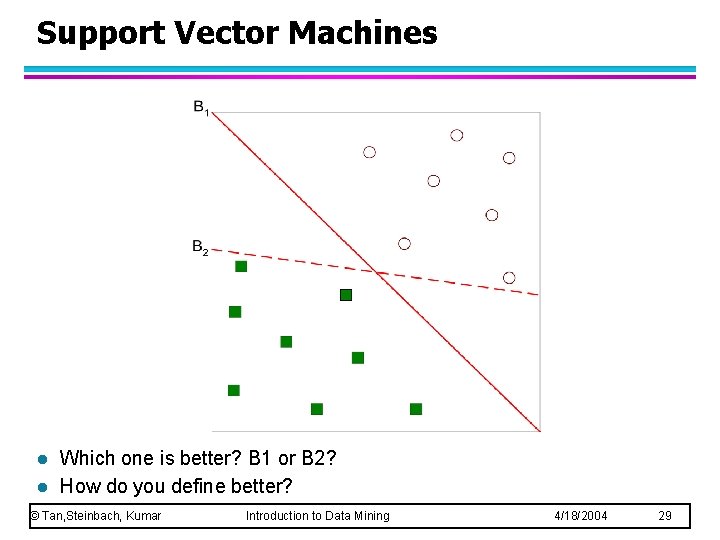

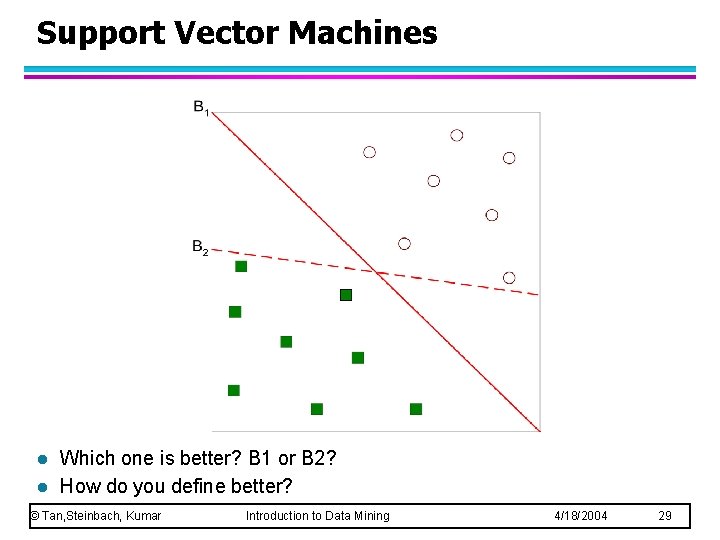

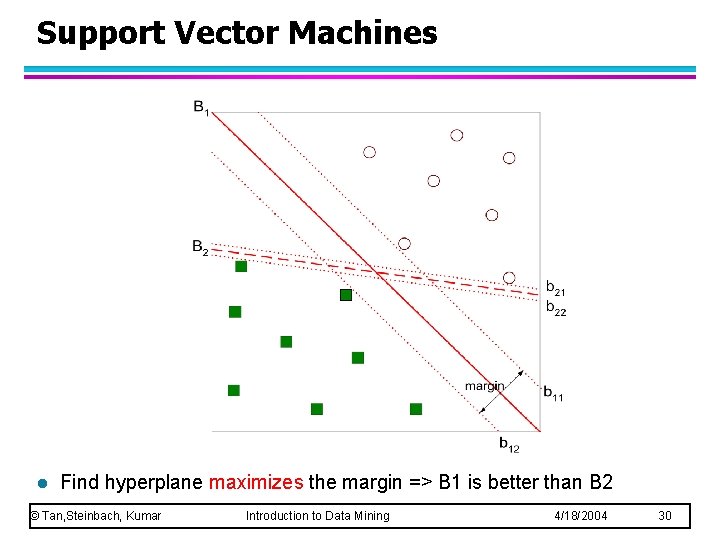

Support Vector Machines
- Which one is better, B1 or B2?
- How do you define better?

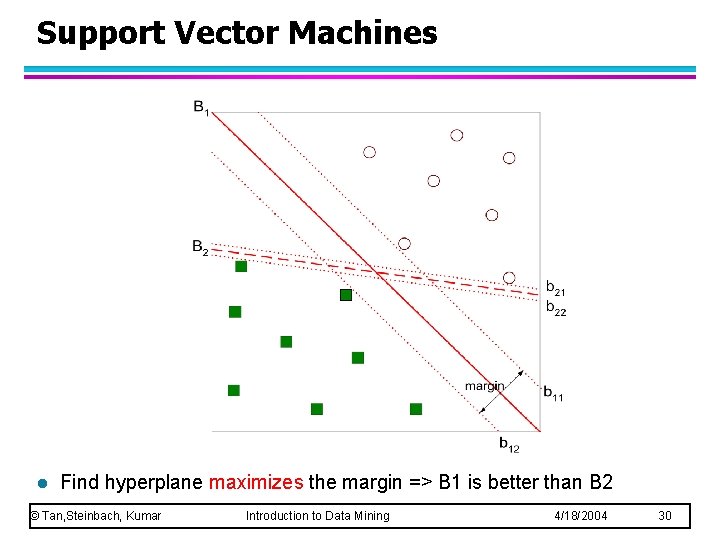

Support Vector Machines
- Find the hyperplane that maximizes the margin => B1 is better than B2

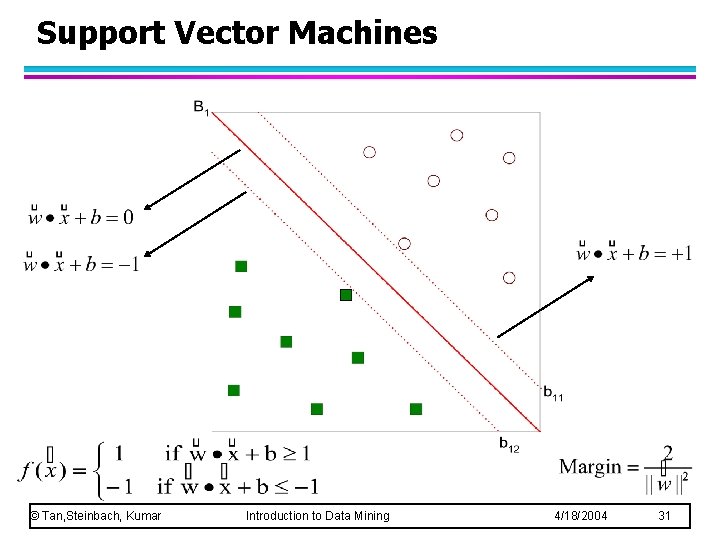

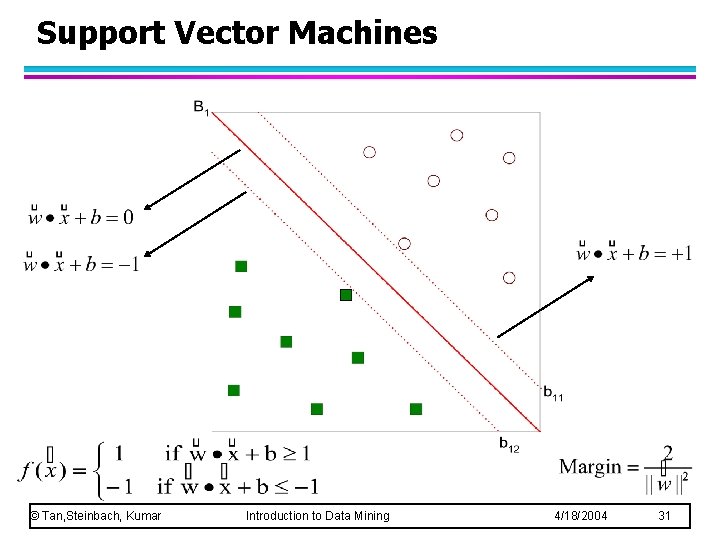

Support Vector Machines

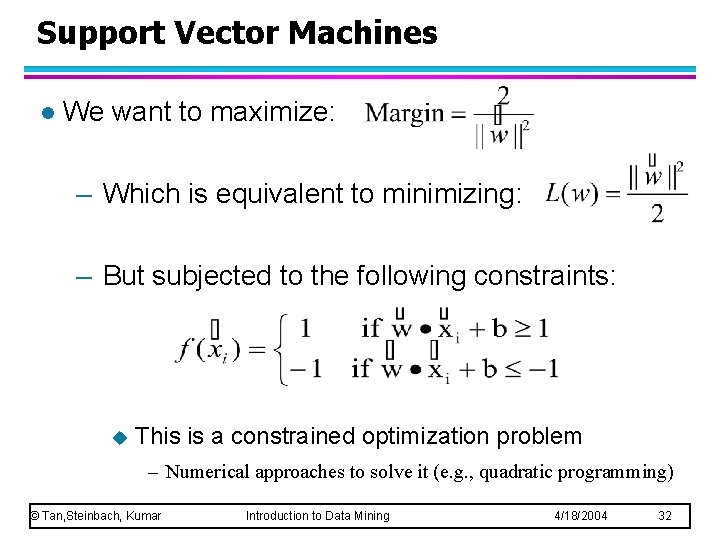

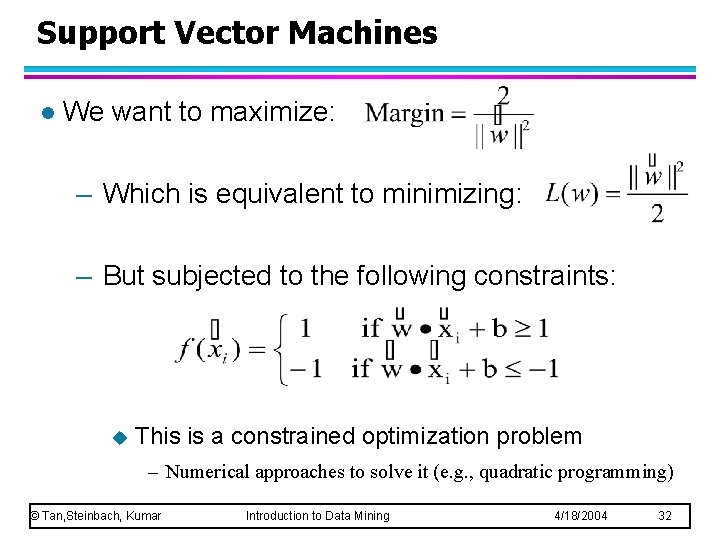

Support Vector Machines
- We want to maximize:
  - Which is equivalent to minimizing:
  - But subject to the following constraints:
- This is a constrained optimization problem
  - Numerical approaches to solve it (e.g., quadratic programming)
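The equations for this slide are not present in the extracted text. For reference, the standard hard-margin formulation is sketched below; the symbols are assumptions: $\mathbf{w}$ and $b$ define the hyperplane $\mathbf{w}\cdot\mathbf{x} + b = 0$, and $y_i \in \{-1, +1\}$ is the class label of training record $\mathbf{x}_i$. The slide's own notation may differ.

```latex
\begin{aligned}
\text{maximize:}\quad & \text{margin} = \frac{2}{\lVert \mathbf{w} \rVert} \\
\text{equivalently, minimize:}\quad & L(\mathbf{w}) = \frac{\lVert \mathbf{w} \rVert^{2}}{2} \\
\text{subject to:}\quad & y_i\,(\mathbf{w}\cdot\mathbf{x}_i + b) \ge 1, \qquad i = 1, \dots, N
\end{aligned}
```

Maximizing $2/\lVert \mathbf{w} \rVert$ and minimizing $\lVert \mathbf{w} \rVert^{2}/2$ have the same solution; the second form is preferred because it is a convex quadratic objective with linear constraints.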

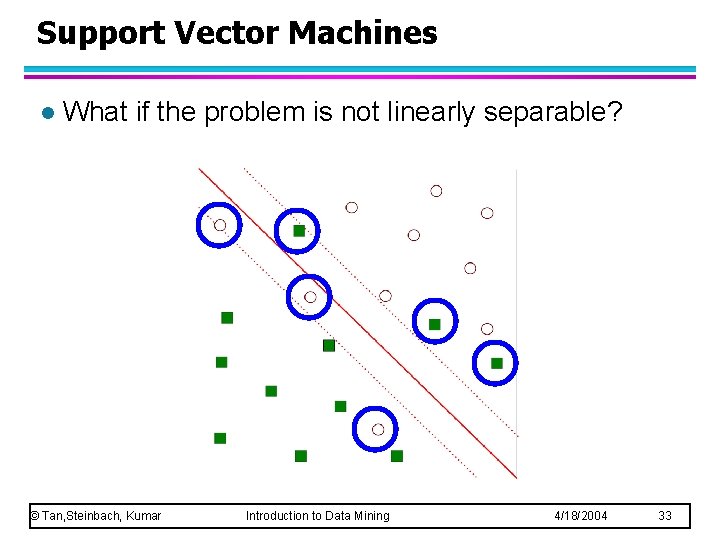

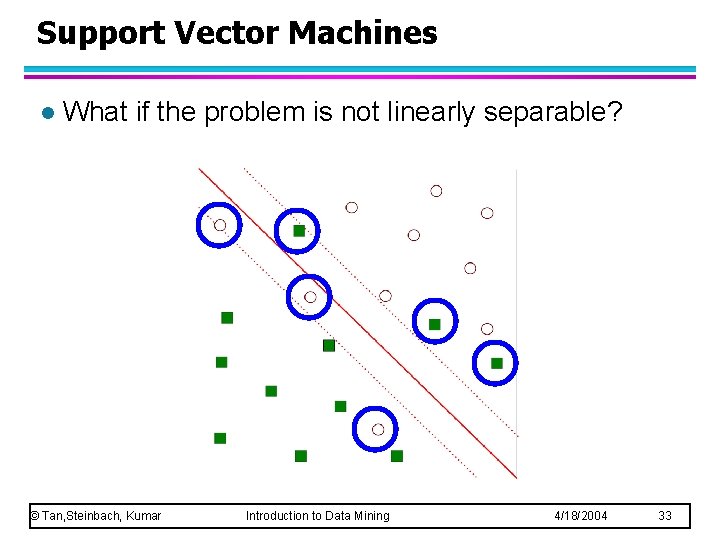

Support Vector Machines
- What if the problem is not linearly separable?

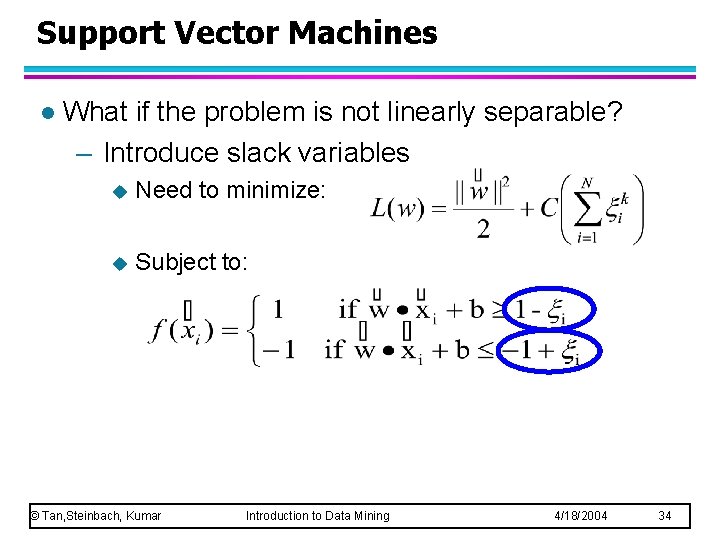

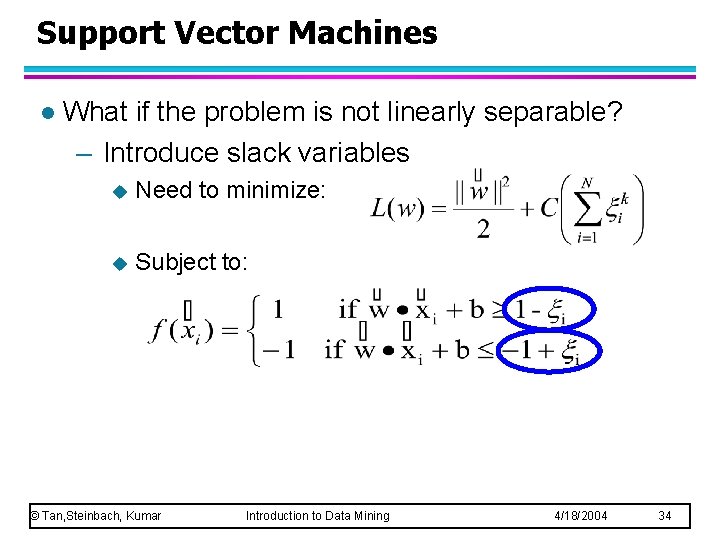

Support Vector Machines
- What if the problem is not linearly separable?
  - Introduce slack variables
    - Need to minimize:
    - Subject to:
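The slack-variable equations are likewise missing from the extracted text. A common soft-margin formulation is sketched below as an assumption, not as the slide's exact form: $\xi_i \ge 0$ is the slack for record $i$, and $C > 0$ is a user-chosen penalty on margin violations.

```latex
\begin{aligned}
\text{minimize:}\quad & L(\mathbf{w}) = \frac{\lVert \mathbf{w} \rVert^{2}}{2} + C \sum_{i=1}^{N} \xi_i \\
\text{subject to:}\quad & y_i\,(\mathbf{w}\cdot\mathbf{x}_i + b) \ge 1 - \xi_i, \qquad \xi_i \ge 0, \qquad i = 1, \dots, N
\end{aligned}
```

A record with $\xi_i = 0$ satisfies the original margin constraint, $0 < \xi_i \le 1$ means it lies inside the margin but on the correct side, and $\xi_i > 1$ means it is misclassified.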

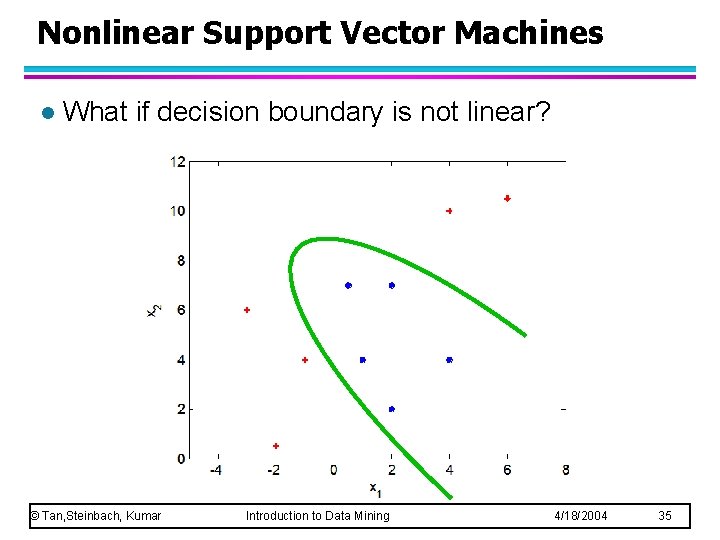

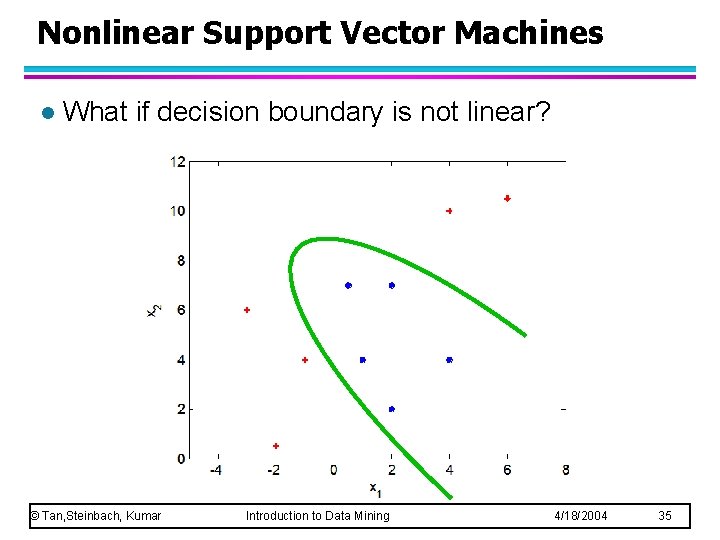

Nonlinear Support Vector Machines
- What if the decision boundary is not linear?

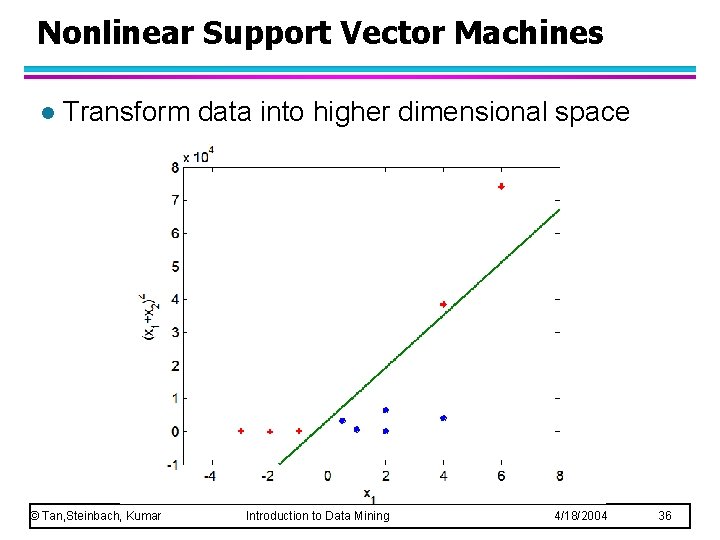

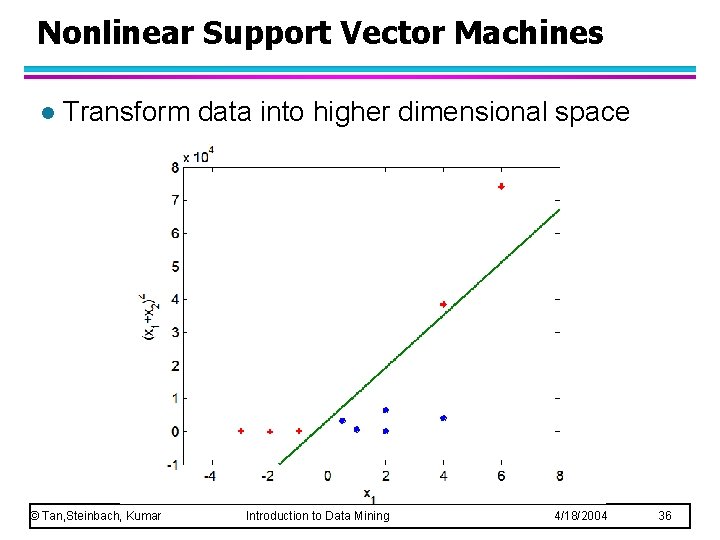

Nonlinear Support Vector Machines
- Transform the data into a higher-dimensional space
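The idea of transforming data so that a nonlinear boundary becomes linear can be illustrated with a small sketch. Everything here is an assumed toy setup: points are labeled by whether they fall outside the unit circle, and the hypothetical map `phi` squares each coordinate. For simplicity the map keeps two dimensions; real kernels typically map into a higher-dimensional space.

```python
def phi(point):
    """Assumed feature map: (x1, x2) -> (x1^2, x2^2)."""
    x1, x2 = point
    return (x1 * x1, x2 * x2)


def linear_decision(z, w=(1.0, 1.0), b=-1.0):
    """Linear classifier in the transformed space: sign of w.z + b.

    With w = (1, 1) and b = -1 this is z1 + z2 > 1, which corresponds to
    x1^2 + x2^2 > 1 (outside the unit circle) in the original space.
    """
    return 1 if w[0] * z[0] + w[1] * z[1] + b > 0 else -1


points = [(0.2, 0.3), (-0.5, 0.5), (1.5, 0.0), (-1.0, -1.0)]
for p in points:
    print(p, "->", linear_decision(phi(p)))
```

The circular boundary in the original coordinates is a straight line in the transformed coordinates, so a linear SVM trained on the transformed data can separate the classes.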

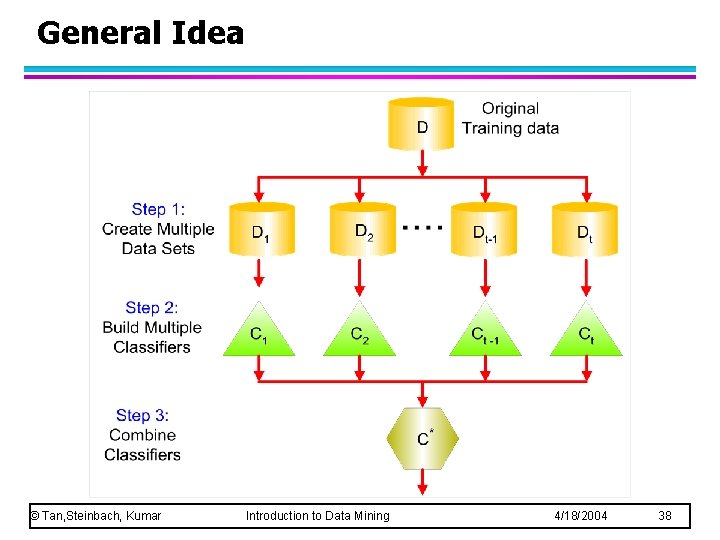

Ensemble Methods
- Construct a set of classifiers from the training data
- Predict the class label of previously unseen records by aggregating predictions made by multiple classifiers

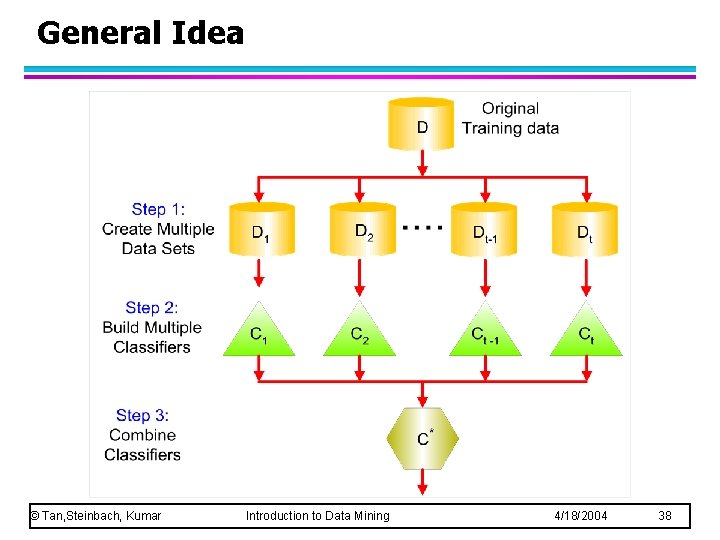

General Idea

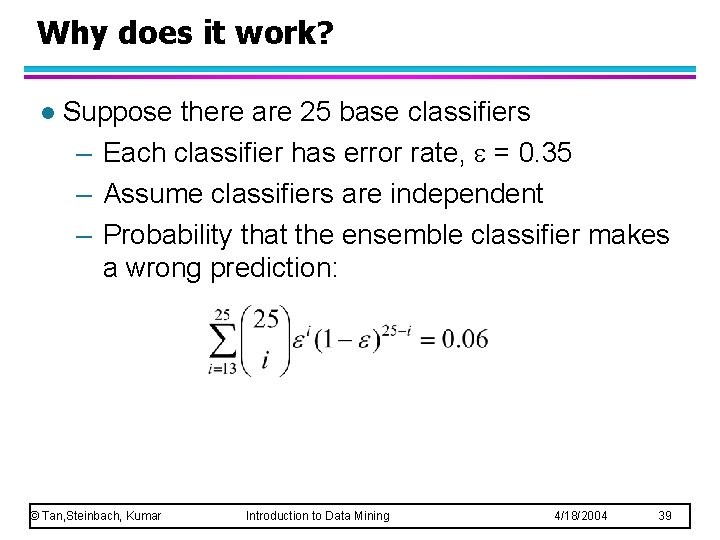

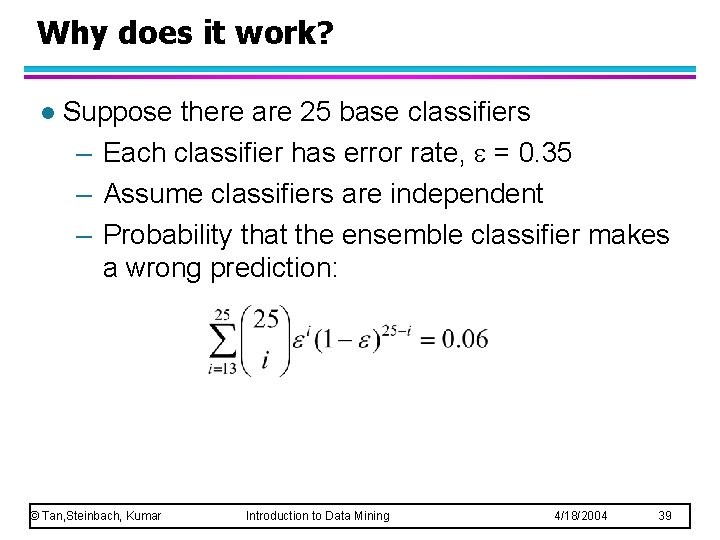

Why Does It Work?
- Suppose there are 25 base classifiers
  - Each classifier has error rate ε = 0.35
  - Assume classifiers are independent
  - Probability that the ensemble classifier makes a wrong prediction:
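Assuming the ensemble predicts by majority vote (an assumption; the formula itself is not in the extracted text), it is wrong only when at least 13 of the 25 independent base classifiers are wrong. That probability is a binomial tail sum, which the sketch below evaluates; the function name `ensemble_error` is illustrative.

```python
from math import comb


def ensemble_error(n_classifiers, base_error):
    """Probability that a majority of independent classifiers are wrong.

    Sums the binomial probabilities of i wrong classifiers for every i
    from the smallest majority up to n_classifiers.
    """
    majority = n_classifiers // 2 + 1
    return sum(
        comb(n_classifiers, i)
        * base_error ** i
        * (1 - base_error) ** (n_classifiers - i)
        for i in range(majority, n_classifiers + 1)
    )


print(ensemble_error(25, 0.35))
```

The result is roughly 0.06, far below the base error rate of 0.35. The gain depends on the independence assumption and on each base classifier being better than random guessing (error below 0.5).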

Examples of Ensemble Methods
- How to generate an ensemble of classifiers?
  - Bagging
  - Boosting

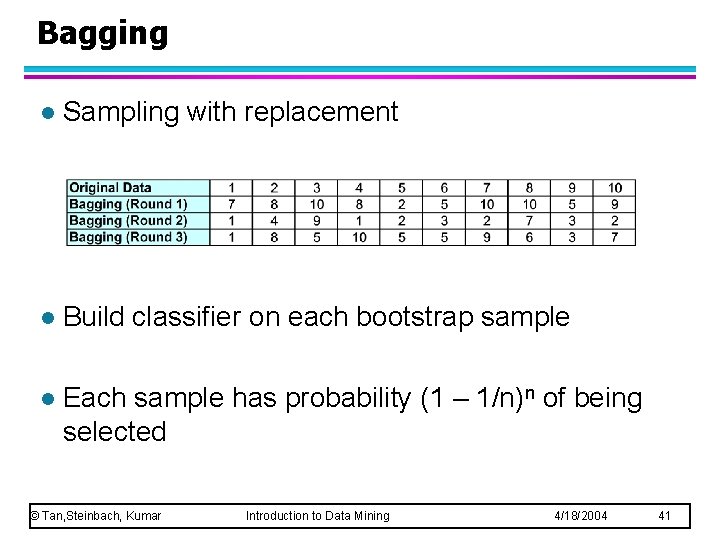

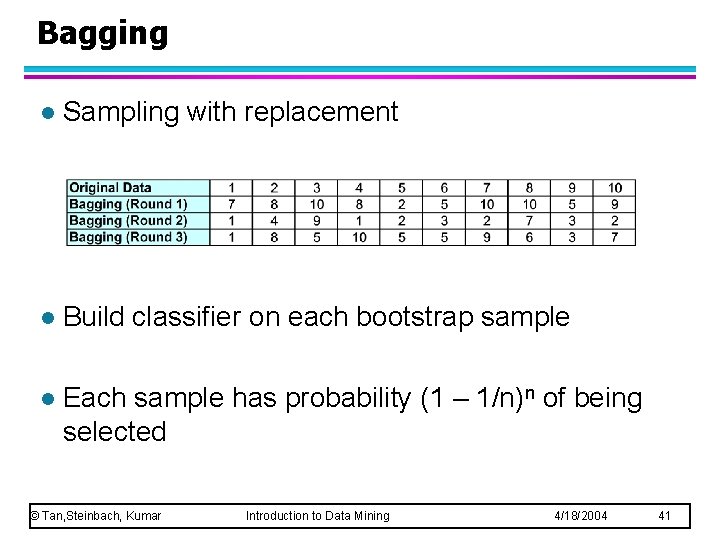

Bagging
- Sampling with replacement
- Build a classifier on each bootstrap sample
- Each record has probability $1 - (1 - 1/n)^n$ of being selected in a bootstrap sample of size n (about 0.632 for large n)
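Bootstrap sampling can be sketched as below. When n records are drawn with replacement from a set of n, a given record is missed by every draw with probability (1 − 1/n)^n, so it appears at least once with probability 1 − (1 − 1/n)^n, which approaches 1 − 1/e ≈ 0.632. The function names and the seeded generator are assumptions for illustration.

```python
import random


def bootstrap_sample(records, rng):
    """Draw len(records) items from `records` uniformly, with replacement."""
    n = len(records)
    return [records[rng.randrange(n)] for _ in range(n)]


def selection_probability(n):
    """Probability that a given record appears at least once in the sample."""
    return 1 - (1 - 1 / n) ** n


rng = random.Random(0)  # seeded only to make the sketch repeatable
records = list(range(10))
sample = bootstrap_sample(records, rng)
print(sorted(sample))
print(selection_probability(10), selection_probability(1000))
```

Each bootstrap sample therefore leaves out a little over a third of the records on average, which is what makes the base classifiers differ from one another.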

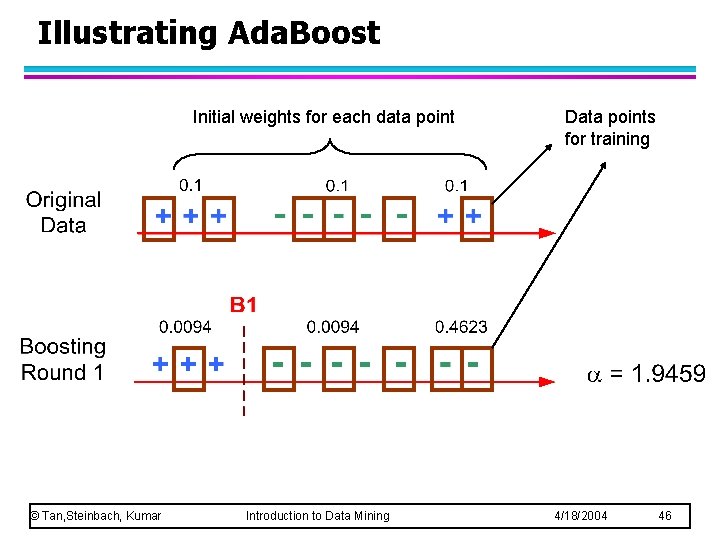

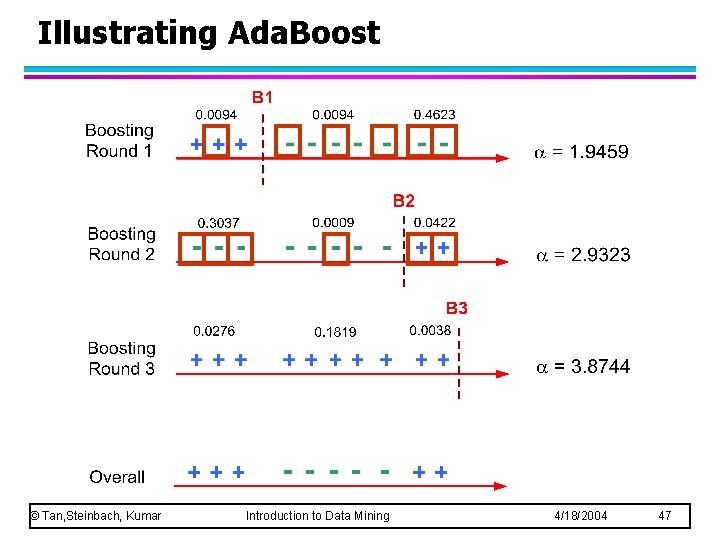

Boosting
- An iterative procedure to adaptively change the distribution of training data by focusing more on previously misclassified records
  - Initially, all N records are assigned equal weights
  - Unlike bagging, weights may change at the end of each boosting round

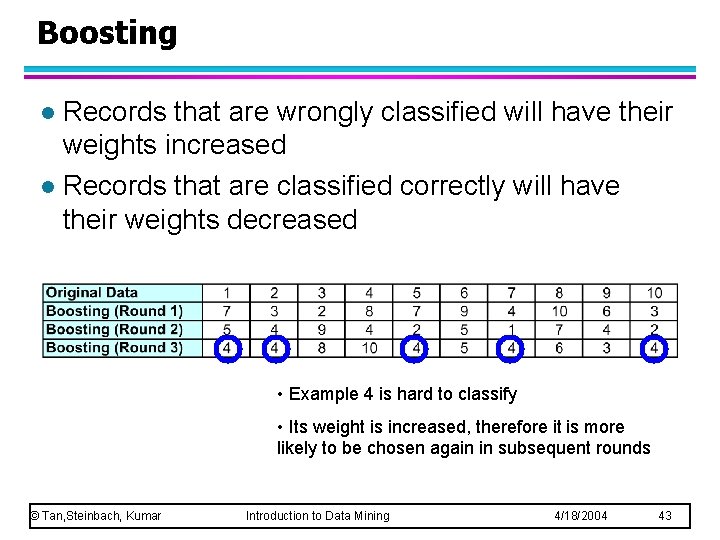

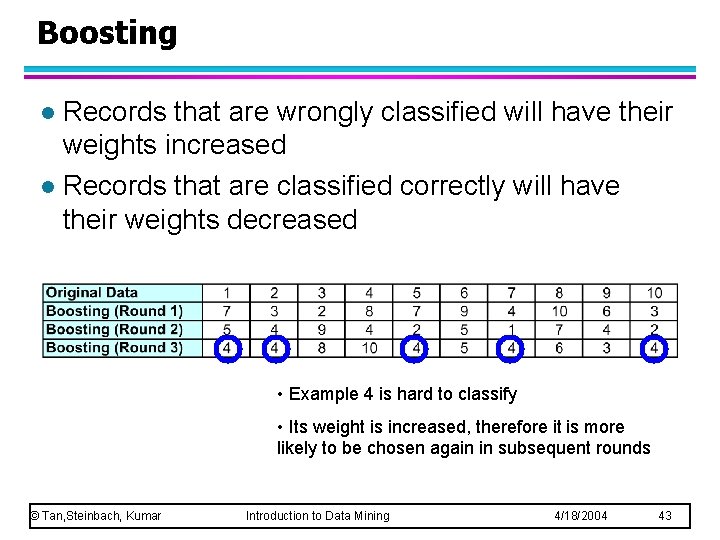

Boosting
- Records that are wrongly classified will have their weights increased
- Records that are classified correctly will have their weights decreased
- Example 4 is hard to classify
- Its weight is increased, therefore it is more likely to be chosen again in subsequent rounds

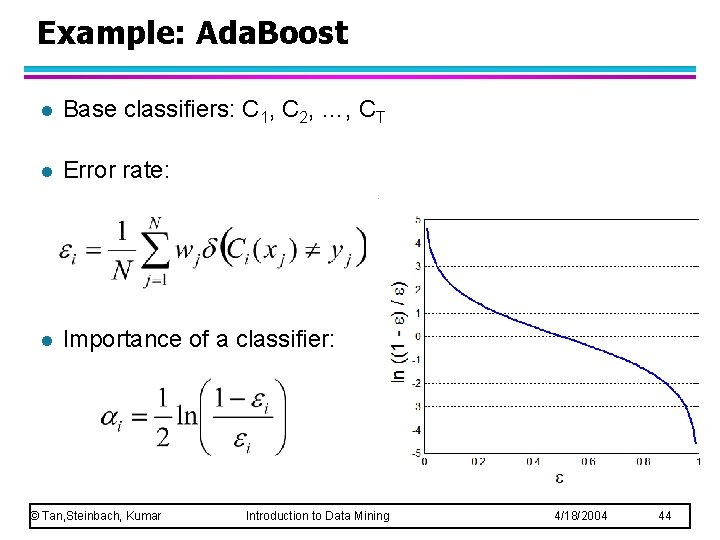

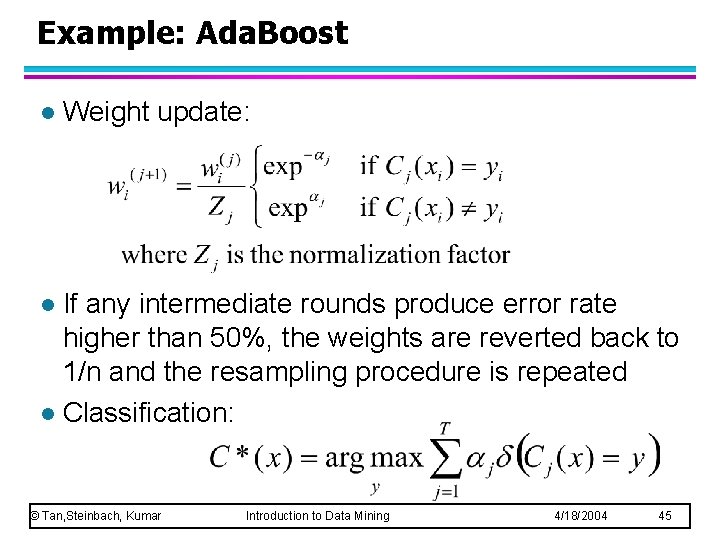

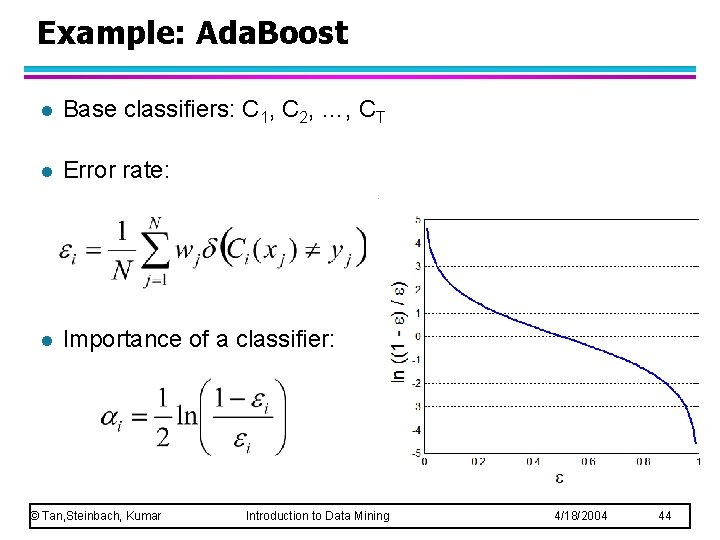

Example: AdaBoost
- Base classifiers: C1, C2, …, CT
- Error rate:
- Importance of a classifier:

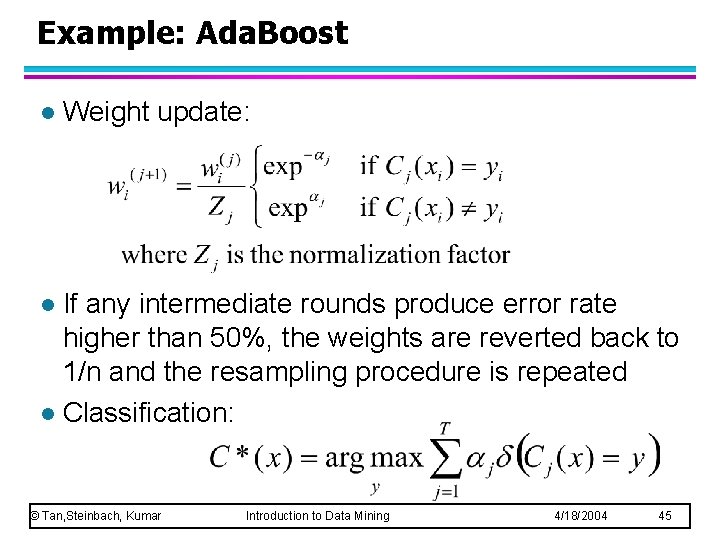

Example: AdaBoost
- Weight update:
- If any intermediate round produces an error rate higher than 50%, the weights are reverted back to 1/n and the resampling procedure is repeated
- Classification:
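The AdaBoost equations are not present in the extracted text, so the sketch below uses the commonly stated form as an assumption: weighted error ε = Σ wⱼ over misclassified records (weights summing to 1), importance α = ½ ln((1 − ε)/ε), and a weight update that multiplies by exp(α) for misclassified records and exp(−α) for correct ones, followed by normalization. The function name `adaboost_round` and the handling of ε = 0 are illustrative choices.

```python
import math


def adaboost_round(weights, misclassified):
    """One AdaBoost weight update (assumed standard form).

    `weights` must sum to 1; `misclassified[j]` is True when the current
    base classifier got record j wrong. Returns (alpha, new_weights).
    """
    n = len(weights)
    error = sum(w for w, wrong in zip(weights, misclassified) if wrong)
    if error > 0.5:
        # Worse than chance: revert to uniform weights, as the slide states.
        return None, [1.0 / n] * n
    if error == 0:
        # Assumed handling of a perfect classifier: leave weights unchanged.
        return float("inf"), list(weights)
    alpha = 0.5 * math.log((1 - error) / error)
    updated = [w * math.exp(alpha if wrong else -alpha)
               for w, wrong in zip(weights, misclassified)]
    z = sum(updated)  # normalization factor so the weights sum to 1 again
    return alpha, [w / z for w in updated]


weights = [0.1] * 10
misclassified = [True] * 3 + [False] * 7
alpha, new_weights = adaboost_round(weights, misclassified)
print(alpha, new_weights)
```

After the update, the misclassified records together carry half of the total weight, so the next base classifier is pushed to focus on them.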

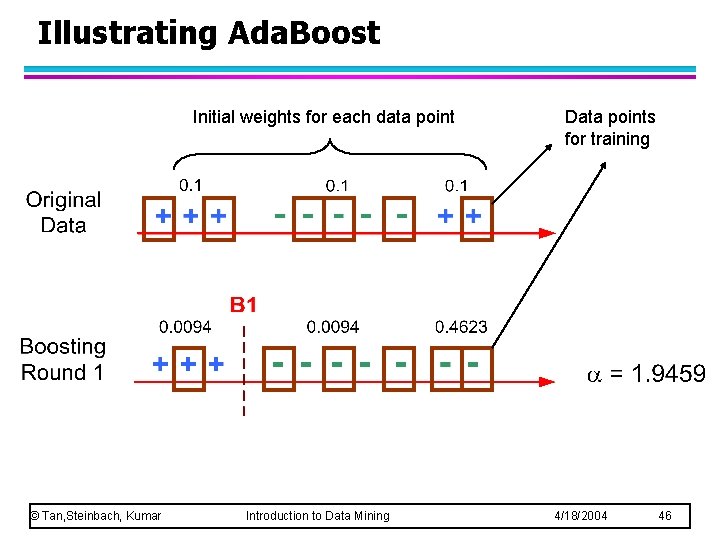

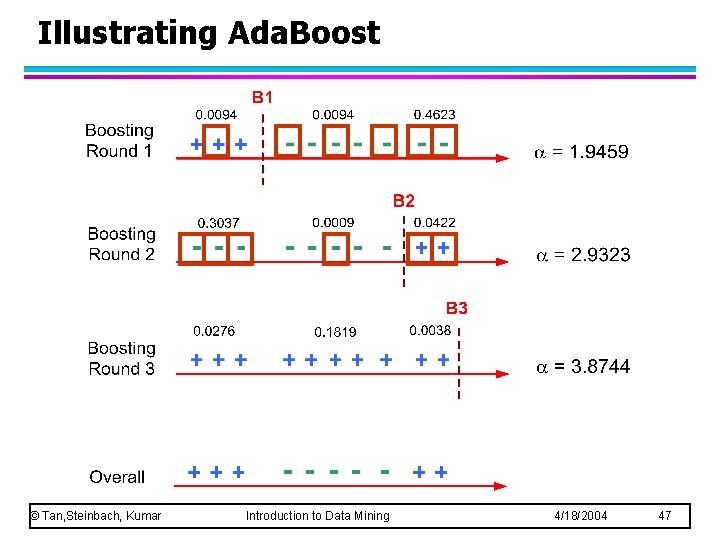

Illustrating AdaBoost
Figure labels: data points for training; initial weights for each data point

Illustrating AdaBoost