BMED 3510 Parameter Estimation Book Chapter 5 Recap


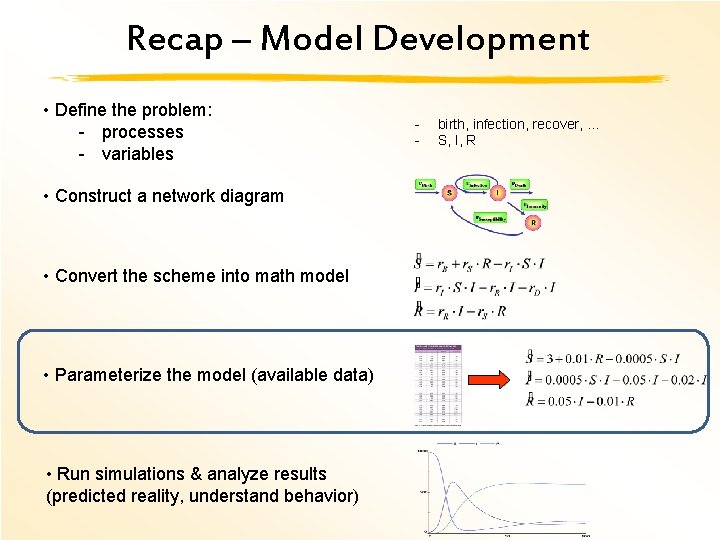

Recap – Model Development

- Define the problem:
  - processes (e.g., birth, infection, recovery, …)
  - variables (e.g., S, I, R)
- Construct a network diagram
- Convert the scheme into a mathematical model
- Parameterize the model (available data)
- Run simulations and analyze results (predict reality, understand behavior)

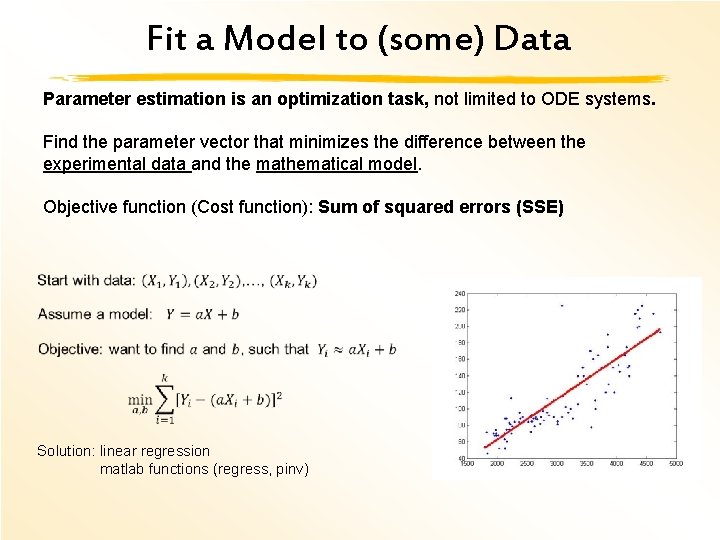

Fit a Model to (some) Data

Parameter estimation is an optimization task, and it is not limited to ODE systems: find the parameter vector that minimizes the difference between the experimental data and the mathematical model.

- Objective function (cost function): the sum of squared errors (SSE), $SSE(\mathbf{p}) = \sum_{i=1}^{n} \left(y_i - f(x_i, \mathbf{p})\right)^2$
- Solution for linear models: linear regression, e.g., with the MATLAB functions `regress` and `pinv`
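
A minimal Python sketch of the SSE objective function, assuming the model is any callable `model(x, params)` that returns a prediction. The names `sse` and `line` and the data are illustrative, not from the slides:

```python
def sse(params, model, xs, ys):
    """Sum of squared errors between observations ys and model predictions."""
    return sum((y - model(x, params)) ** 2 for x, y in zip(xs, ys))


def line(x, params):
    # Hypothetical example model: straight line y = a + b*x
    a, b = params
    return a + b * x


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 1 + 2x

print(sse((1.0, 2.0), line, xs, ys))  # 0.0 (perfect fit)
print(sse((0.0, 2.0), line, xs, ys))  # 4.0 (every point is off by 1)
```

Parameter estimation then means searching for the `params` that make this number as small as possible.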

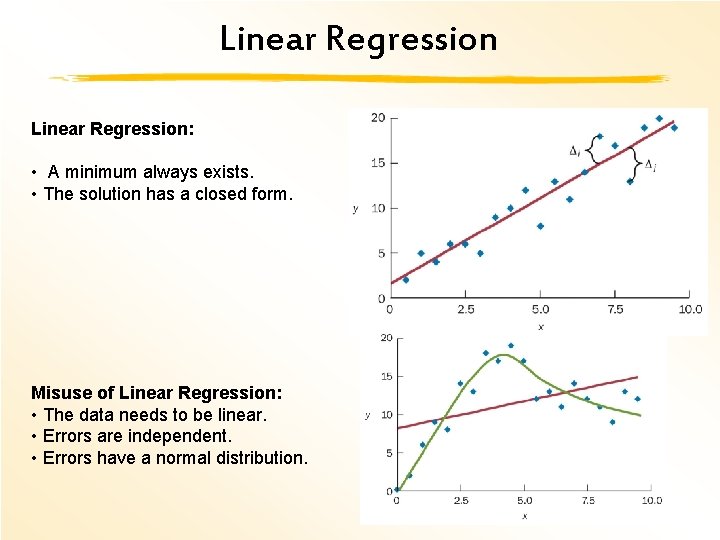

Linear Regression

- A minimum always exists.
- The solution has a closed form.

Misuse of Linear Regression (these assumptions must hold):

- The relationship in the data must be linear.
- Errors are independent.
- Errors have a normal distribution.
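
A minimal Python sketch of the closed form for the simplest case, a straight line y = a + b·x. The function name `fit_line` and the data are illustrative. The slope and intercept follow directly from sums over the data, with no iteration and no starting guess:

```python
def fit_line(xs, ys):
    """Closed-form least-squares estimates for y = a + b*x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)  # zero if all x are equal
    b = sxy / sxx            # slope
    a = mean_y - b * mean_x  # intercept
    return a, b


xs = [0, 1, 2, 3, 4]
ys = [1.1, 2.9, 5.2, 6.8, 9.0]  # hypothetical measurements
a, b = fit_line(xs, ys)
print(f"a = {a:.3f}, b = {b:.3f}")  # a = 1.060, b = 1.970
```

The minimum is unique as long as the x values are not all identical; otherwise `sxx` is zero and the slope is undefined.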

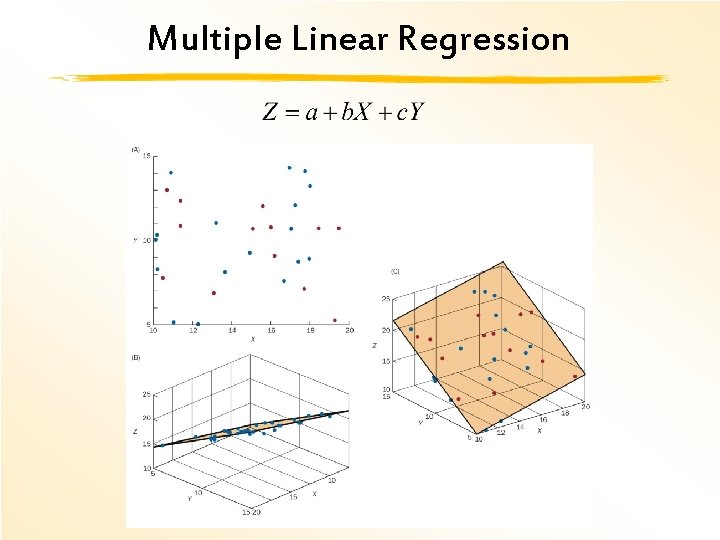

Multiple Linear Regression
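
With several predictors, the least-squares coefficients solve the normal equations $(X^\top X)\hat{\beta} = X^\top y$. The pure-Python sketch below (hypothetical names and data) builds and solves them with Gaussian elimination to show the idea; library routines such as MATLAB's `pinv`, which is based on the singular value decomposition, are numerically more robust:

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def multiple_regression(X, y):
    """Least-squares coefficients from the normal equations (X^T X) beta = X^T y."""
    cols = list(zip(*X))  # columns of the design matrix
    XtX = [[sum(u * v for u, v in zip(ci, cj)) for cj in cols] for ci in cols]
    Xty = [sum(u * v for u, v in zip(ci, y)) for ci in cols]
    return solve(XtX, Xty)


# Hypothetical data generated from y = 1 + 2*x1 - 3*x2 (first column = intercept)
X = [[1, 0, 0], [1, 1, 0], [1, 0, 1], [1, 1, 1], [1, 2, 1]]
y = [1, 3, -2, 0, 2]
beta = multiple_regression(X, y)
print(beta)  # approximately [1.0, 2.0, -3.0]
```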

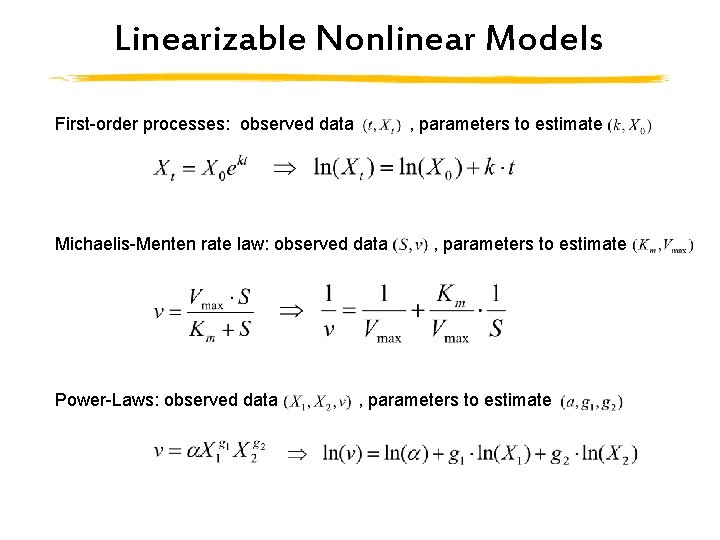

Linearizable Nonlinear Models

- First-order processes: observed data, parameters to estimate
- Michaelis-Menten rate law: observed data, parameters to estimate
- Power laws: observed data, parameters to estimate
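
Assuming the standard forms of these three models (the notation on the slides may differ, so the symbols below are illustrative), each one becomes a straight line after a change of variables, which is what allows linear regression on the transformed data:

$$
\begin{aligned}
\text{First-order process:}\quad & x(t) = x_0\,e^{k t} & &\Rightarrow\quad \ln x(t) = \ln x_0 + k\,t \\
\text{Michaelis-Menten:}\quad & v = \frac{V_{\max}\,S}{K_M + S} & &\Rightarrow\quad \frac{1}{v} = \frac{K_M}{V_{\max}}\cdot\frac{1}{S} + \frac{1}{V_{\max}} \\
\text{Power law:}\quad & v = \alpha\,X^{g} & &\Rightarrow\quad \ln v = \ln\alpha + g\,\ln X
\end{aligned}
$$

The reciprocal transformation of the Michaelis-Menten law is the Lineweaver-Burk plot; the log-log transformation linearizes power laws.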

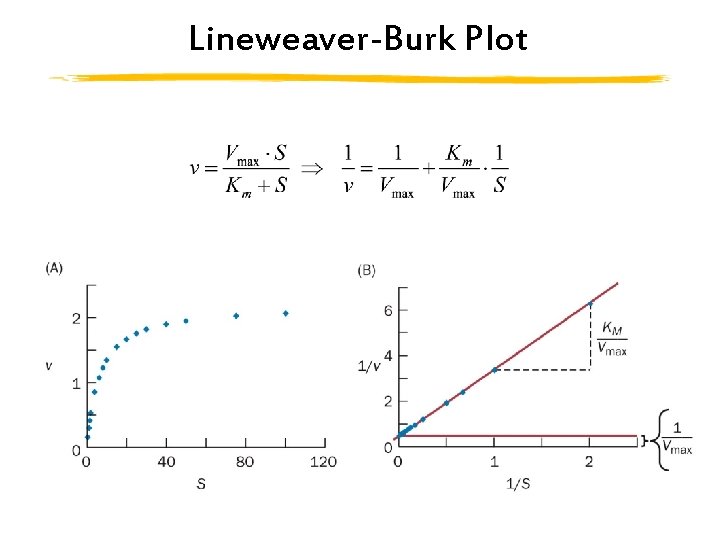

Lineweaver-Burk Plot
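
A minimal Python sketch of the Lineweaver-Burk approach, assuming the Michaelis-Menten form v = Vmax·S/(KM + S): regress 1/v on 1/S, then read Vmax from the intercept (1/Vmax) and KM from the slope (KM/Vmax). The data are hypothetical and noise-free:

```python
def fit_line(xs, ys):
    """Closed-form least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


def lineweaver_burk(S, v):
    """Estimate (Vmax, KM) from 1/v = (KM/Vmax) * (1/S) + 1/Vmax."""
    intercept, slope = fit_line([1 / s for s in S], [1 / r for r in v])
    vmax = 1 / intercept
    km = slope * vmax
    return vmax, km


# Hypothetical noise-free data generated with Vmax = 10, KM = 2
S = [0.5, 1, 2, 4, 8]
v = [10 * s / (2 + s) for s in S]
vmax, km = lineweaver_burk(S, v)
print(vmax, km)  # approximately 10.0 2.0
```

With noisy data, the reciprocal transform inflates the errors of the measurements at low S, and those points then dominate the fit; the equal-error assumption of linear regression no longer holds after the transformation.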

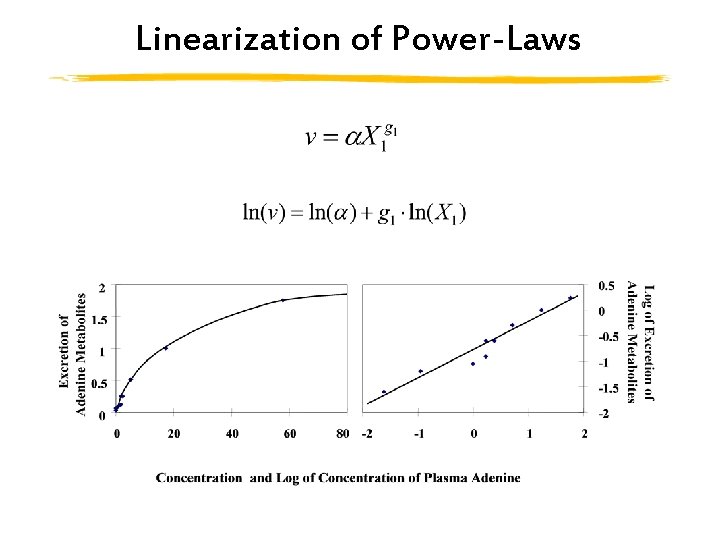

Linearization of Power Laws
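
Analogously, a power law v = α·X^g becomes a straight line in log-log coordinates, ln v = ln α + g·ln X. A minimal Python sketch with hypothetical noise-free data (the function names are illustrative):

```python
import math


def fit_line(xs, ys):
    """Closed-form least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


def fit_power_law(X, v):
    """Estimate (alpha, g) in v = alpha * X**g from ln v = ln alpha + g ln X."""
    # The log transform requires strictly positive X and v
    ln_alpha, g = fit_line([math.log(x) for x in X], [math.log(y) for y in v])
    return math.exp(ln_alpha), g


# Hypothetical noise-free data generated with alpha = 3, g = 0.7
X = [0.5, 1, 2, 4, 8]
v = [3 * x ** 0.7 for x in X]
alpha, g = fit_power_law(X, v)
print(alpha, g)  # approximately 3.0 0.7
```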

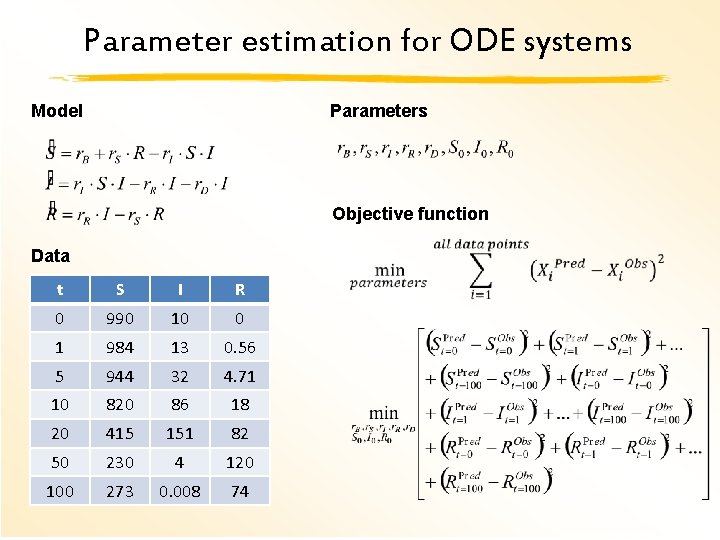

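Parameter estimation for ODE systems

Components: model, parameters, objective function, and data.

| t | S | I | R |
|---|---|---|---|
| 0 | 990 | 10 | 0 |
| 1 | 984 | 13 | 0.56 |
| 5 | 944 | 32 | 4.71 |
| 10 | 820 | 86 | 18 |
| 20 | 415 | 151 | 82 |
| 50 | 230 | 4 | 120 |
| 100 | 273 | 0.008 | 74 |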
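
A minimal Python sketch of the ODE objective function. The slide's model equations are not reproduced in this text, so the basic SIR model below (infection β·S·I/N, recovery γ·I, no births or deaths) and the trial values β = 0.5, γ = 0.1 are assumptions for illustration; integration uses a hand-written fixed-step fourth-order Runge-Kutta scheme rather than a library solver. Every SSE evaluation requires one full simulation:

```python
# Data from this slide: (t, S, I, R); the first row must be at t = 0
DATA = [
    (0, 990, 10, 0),
    (1, 984, 13, 0.56),
    (5, 944, 32, 4.71),
    (10, 820, 86, 18),
    (20, 415, 151, 82),
    (50, 230, 4, 120),
    (100, 273, 0.008, 74),
]


def sir_rhs(y, beta, gamma):
    # Hypothetical basic SIR model without births or deaths (an assumption)
    s, i, r = y
    n = s + i + r
    infection = beta * s * i / n
    recovery = gamma * i
    return (-infection, infection - recovery, recovery)


def simulate(params, y0, times, dt=0.01):
    """Fixed-step RK4 integration from t = 0; returns the state at each requested time."""
    beta, gamma = params
    t, y = 0.0, tuple(y0)
    states = []
    for t_out in times:
        while t < t_out - 1e-12:
            h = min(dt, t_out - t)
            k1 = sir_rhs(y, beta, gamma)
            k2 = sir_rhs(tuple(a + h / 2 * k for a, k in zip(y, k1)), beta, gamma)
            k3 = sir_rhs(tuple(a + h / 2 * k for a, k in zip(y, k2)), beta, gamma)
            k4 = sir_rhs(tuple(a + h * k for a, k in zip(y, k3)), beta, gamma)
            y = tuple(a + h / 6 * (b1 + 2 * b2 + 2 * b3 + b4)
                      for a, b1, b2, b3, b4 in zip(y, k1, k2, k3, k4))
            t += h
        states.append(y)
    return states


def sse(params, data=DATA):
    """Objective function: squared S, I, R errors summed over all time points."""
    times = [row[0] for row in data]
    simulated = simulate(params, data[0][1:], times)
    return sum((obs - pred) ** 2
               for row, state in zip(data, simulated)
               for obs, pred in zip(row[1:], state))


print(sse((0.5, 0.1)))  # one evaluation = one full numerical simulation
```

In practice `sse` would be handed to one of the search methods that follow. Note that the basic SIR model keeps S + I + R constant, while the total in these data shrinks from 1000 to about 347, so this simplified model cannot drive the SSE to zero.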

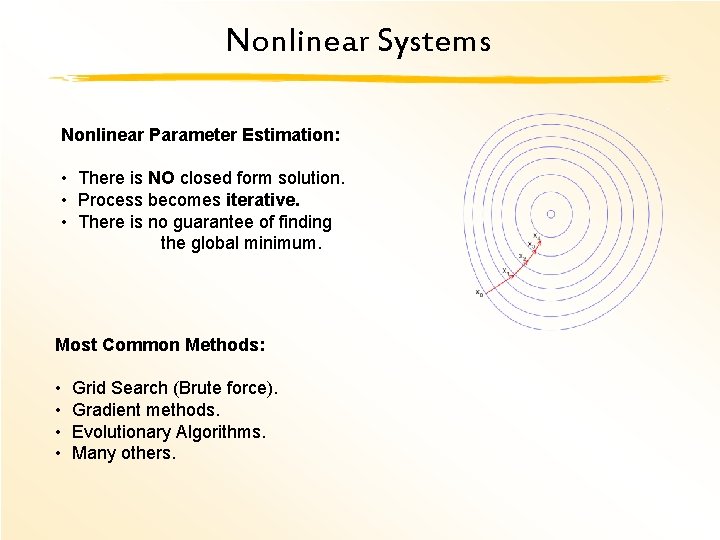

Nonlinear Systems

Nonlinear parameter estimation:

- There is NO closed-form solution.
- The process becomes iterative.
- There is no guarantee of finding the global minimum.

Most common methods:

- Grid search (brute force)
- Gradient methods
- Evolutionary algorithms
- Many others

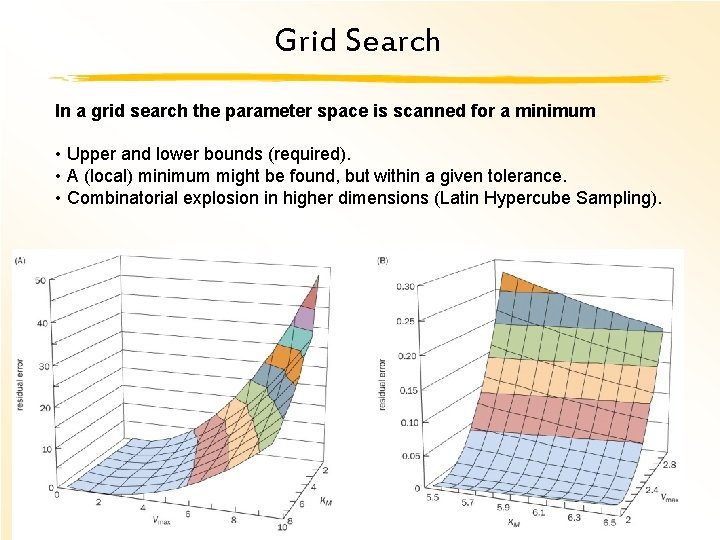

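Grid Search

In a grid search, the parameter space is scanned for a minimum.

- Upper and lower bounds are required.
- A (local) minimum may be found, but only within a given tolerance (the grid spacing).
- Combinatorial explosion in higher dimensions: the number of grid points grows exponentially with the number of parameters (Latin hypercube sampling offers a sparser alternative).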
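
A minimal grid search sketch in Python (the cost function, bounds, and grid density are hypothetical). Every grid point is evaluated, so the answer is only as precise as the grid spacing:

```python
import itertools


def grid_search(cost, bounds, points_per_dim=41):
    """Evaluate cost at every point of a regular grid inside the bounds."""
    axes = [[lo + k * (hi - lo) / (points_per_dim - 1) for k in range(points_per_dim)]
            for lo, hi in bounds]
    best_p, best_c = None, float("inf")
    # points_per_dim ** len(bounds) evaluations: the combinatorial explosion
    for p in itertools.product(*axes):
        c = cost(p)
        if c < best_c:
            best_p, best_c = p, c
    return best_p, best_c


def cost(p):
    # Hypothetical cost with its minimum at (1.33, -0.41), between grid points
    a, b = p
    return (a - 1.33) ** 2 + (b + 0.41) ** 2


best, c = grid_search(cost, bounds=[(-2, 2), (-2, 2)])
print(best, c)  # close to (1.3, -0.4): accurate only to the grid spacing of 0.1
```

With 41 points per parameter, two parameters need 1,681 evaluations, whereas ten parameters would need 41^10 (about 1.3 × 10^16).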

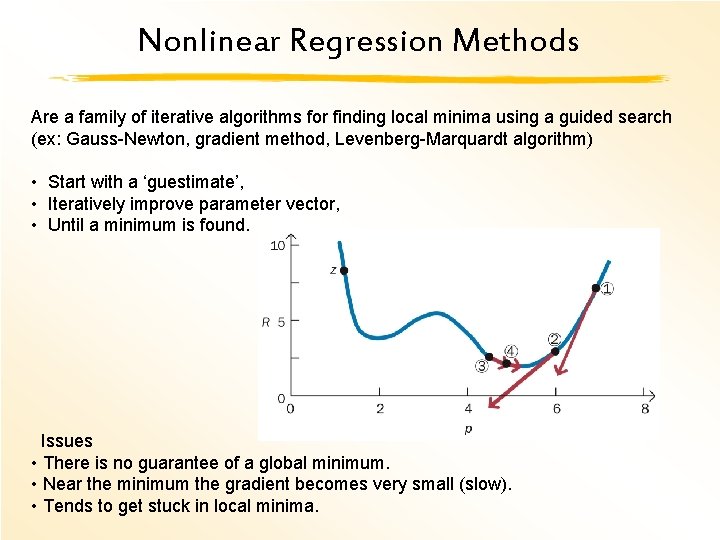

Nonlinear Regression Methods

Nonlinear regression methods are a family of iterative algorithms that find local minima through a guided search (e.g., Gauss-Newton, gradient methods, the Levenberg-Marquardt algorithm):

- Start with a 'guesstimate'.
- Iteratively improve the parameter vector.
- Stop when a minimum is found.

Issues:

- There is no guarantee of finding the global minimum.
- Near the minimum, the gradient becomes very small, so progress is slow.
- These methods tend to get stuck in local minima.
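
A minimal damped Gauss-Newton sketch in Python for a hypothetical exponential-decay model y = a·exp(−b·t); the model, data, and starting guess are illustrative. Each iteration linearizes the model around the current parameters, solves the 2×2 normal equations for a step, and halves the step until the SSE decreases:

```python
import math


def model(t, a, b):
    # Hypothetical exponential-decay model y = a * exp(-b * t)
    return a * math.exp(-b * t)


def sse(ts, ys, a, b):
    return sum((y - model(t, a, b)) ** 2 for t, y in zip(ts, ys))


def gauss_newton(ts, ys, a, b, max_iter=100, tol=1e-14):
    """Damped Gauss-Newton starting from the guesstimate (a, b)."""
    cost = sse(ts, ys, a, b)
    for _ in range(max_iter):
        # Accumulate J^T J and J^T r (J = model derivatives w.r.t. a and b)
        jaa = jab = jbb = ga = gb = 0.0
        for t, y in zip(ts, ys):
            e = math.exp(-b * t)
            da, db = e, -a * t * e  # d model/da, d model/db
            r = y - a * e           # residual
            jaa += da * da
            jab += da * db
            jbb += db * db
            ga += da * r
            gb += db * r
        det = jaa * jbb - jab * jab
        step_a = (jbb * ga - jab * gb) / det  # solve the 2x2 system (Cramer's rule)
        step_b = (jaa * gb - jab * ga) / det
        lam = 1.0
        while lam > 1e-10:  # halve the step until the SSE decreases
            a_new, b_new = a + lam * step_a, b + lam * step_b
            new_cost = sse(ts, ys, a_new, b_new)
            if new_cost < cost:
                break
            lam /= 2
        else:
            break  # no step lowers the SSE: treat (a, b) as a local minimum
        a, b = a_new, b_new
        improvement, cost = cost - new_cost, new_cost
        if improvement < tol:
            break
    return a, b, cost


# Hypothetical noise-free data generated with a = 2, b = 0.5
ts = [0.5 * k for k in range(11)]
ys = [2.0 * math.exp(-0.5 * t) for t in ts]
a_hat, b_hat, final_cost = gauss_newton(ts, ys, 1.0, 0.3)
print(a_hat, b_hat, final_cost)  # close to 2.0, 0.5, 0.0
```

Blending Gauss-Newton steps with gradient steps adaptively, instead of simply halving, is the idea behind the Levenberg-Marquardt algorithm.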

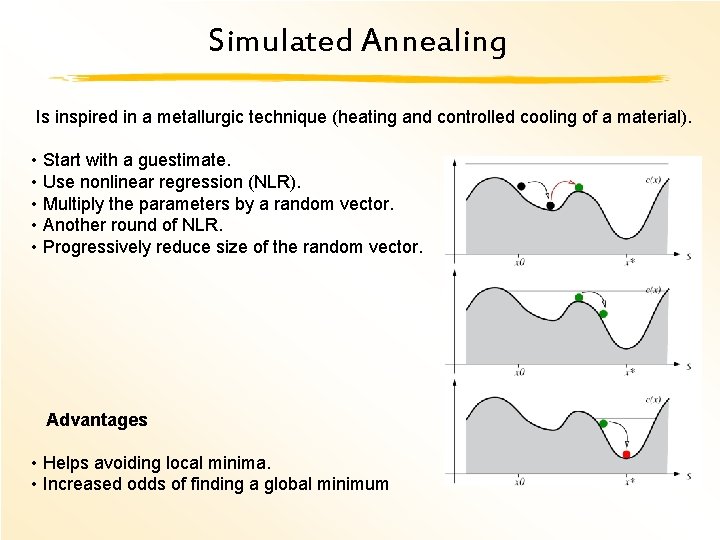

Simulated Annealing

Simulated annealing is inspired by a metallurgical technique (heating and controlled cooling of a material).

- Start with a guesstimate.
- Use nonlinear regression (NLR).
- Multiply the parameters by a random vector.
- Run another round of NLR.
- Progressively reduce the size of the random vector.

Advantages:

- Helps avoid local minima.
- Increases the odds of finding a global minimum.
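
A minimal Python sketch of the recipe on this slide: optimize locally, multiply the parameters by a random vector, optimize again, and progressively shrink the random factors while keeping the best result. This follows the slide's perturb-and-refine scheme, not the classic Metropolis-acceptance form of simulated annealing; `local_descent` is a hypothetical stand-in for nonlinear regression, and the cost function, start point, and schedule are illustrative:

```python
import math
import random


def cost(p):
    # Hypothetical 1-D cost function with several local minima
    x = p[0]
    return 0.1 * x * x + math.sin(3 * x)


def local_descent(f, p, step=0.1, h=1e-6, max_iter=1000):
    """Stand-in for NLR: gradient descent (central differences) with step halving."""
    p = list(p)
    fp = f(p)
    for _ in range(max_iter):
        grad = []
        for j in range(len(p)):
            up, down = p[:], p[:]
            up[j] += h
            down[j] -= h
            grad.append((f(up) - f(down)) / (2 * h))
        t = step
        while t > 1e-12:
            cand = [pj - t * gj for pj, gj in zip(p, grad)]
            fc = f(cand)
            if fc < fp:
                break
            t /= 2
        else:
            break  # no step lowers the cost: treat p as a local minimum
        p, fp = cand, fc
    return p, fp


def perturb_and_refine(f, p0, rounds=30, scale=1.0, shrink=0.9, seed=0):
    """Slide recipe: NLR, multiply by a random vector, NLR again, shrink the randomness."""
    rng = random.Random(seed)
    best_p, best_f = local_descent(f, p0)
    for _ in range(rounds):
        # Random factors in [1 - scale, 1 + scale]; a parameter equal to 0 never moves
        trial = [pj * (1 + scale * rng.uniform(-1, 1)) for pj in best_p]
        p, fp = local_descent(f, trial)
        if fp < best_f:  # keep the best solution found so far
            best_p, best_f = p, fp
        scale *= shrink
    return best_p, best_f


start = [4.0]
print(local_descent(cost, start))       # plain NLR from the start point
print(perturb_and_refine(cost, start))  # never worse than plain NLR
```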

Stochastic Search

Basic idea:

- Start with a population of guesstimates.
- Try to improve the population.

Algorithms:

- Genetic algorithms
- Particle swarm optimization
- Cross-entropy method

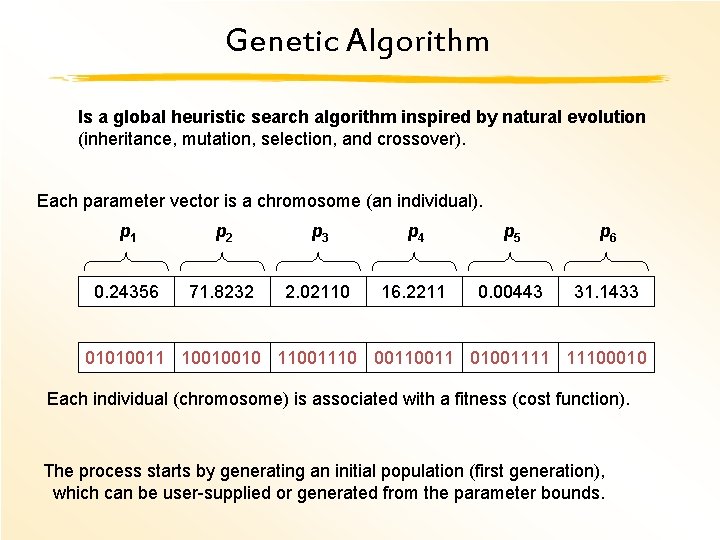

Genetic Algorithm

A genetic algorithm is a global heuristic search algorithm inspired by natural evolution (inheritance, mutation, selection, and crossover). Each parameter vector is a chromosome (an individual):

| | p1 | p2 | p3 | p4 | p5 | p6 |
|---|---|---|---|---|---|---|
| Value | 0.24356 | 71.8232 | 2.02110 | 16.2211 | 0.00443 | 31.1433 |
| Binary encoding | 01010011 | 10010010 | 11001110 | 0011 | 01001111 | 11100010 |

Each individual (chromosome) is associated with a fitness (its cost function value). The process starts by generating an initial population (the first generation), which can be user-supplied or generated from the parameter bounds.
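
A minimal Python sketch of one common way to turn a real parameter into a binary gene: quantize it to one of 2^n levels between its bounds. The bounds and bit width used on the slide are not given, so the [0, 100] range and 8 bits below are assumptions, and the resulting bits do not reproduce the slide's example:

```python
def encode(value, lo, hi, n_bits=8):
    """Quantize value in [lo, hi] to one of 2**n_bits levels; return it as a bit string."""
    levels = 2 ** n_bits - 1
    k = round((value - lo) / (hi - lo) * levels)
    return format(k, f"0{n_bits}b")


def decode(bits, lo, hi):
    """Map a bit string back to a value in [lo, hi]."""
    levels = 2 ** len(bits) - 1
    return lo + int(bits, 2) / levels * (hi - lo)


# Hypothetical bounds [0, 100] for the parameter value 71.8232
gene = encode(71.8232, 0.0, 100.0)
print(gene)                      # 10110111
print(decode(gene, 0.0, 100.0))  # about 71.76: resolution is (hi - lo) / 255
```

A chromosome is then the concatenation of the genes of all parameters; crossover and mutation act on these bit strings.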

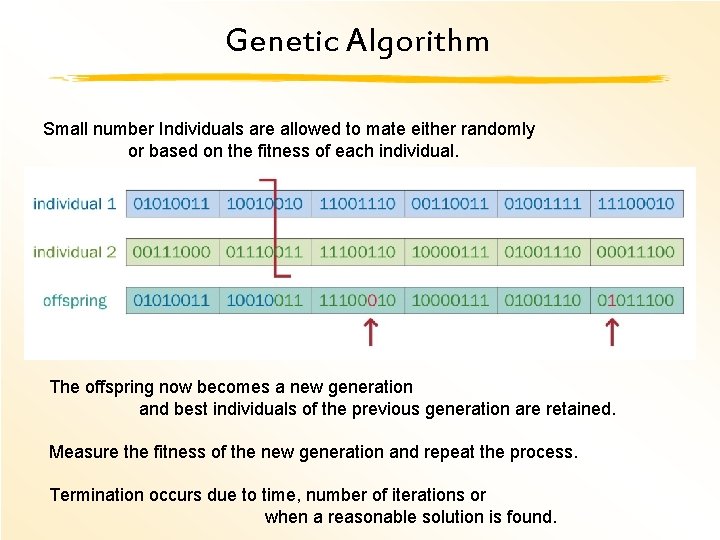

Genetic Algorithm

Individuals are allowed to mate, either randomly or based on each individual's fitness. The offspring become the new generation, and a small number of the best individuals from the previous generation are retained. The fitness of the new generation is measured, and the process repeats. The algorithm terminates after a time limit, after a set number of iterations, or when a reasonable solution is found.
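
A minimal real-coded genetic algorithm in Python. The operators are common textbook choices assumed here, not taken from the slides: parameters are stored as real numbers instead of bit strings, parents are chosen by tournament selection, genes are mixed by uniform crossover, mutation adds Gaussian noise, the two best individuals are retained each generation, and the run stops after a fixed number of generations. The test cost function is hypothetical:

```python
import random


def genetic_algorithm(cost, bounds, pop_size=40, generations=100,
                      n_elite=2, mutation_rate=0.1, seed=0):
    """Real-coded GA: lower cost means higher fitness."""
    rng = random.Random(seed)
    # Initial population generated from the parameter bounds
    population = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    for _ in range(generations):  # termination: fixed number of generations
        population.sort(key=cost)
        # Elitism: the best few individuals survive unchanged
        next_gen = [ind[:] for ind in population[:n_elite]]
        while len(next_gen) < pop_size:
            # Tournament selection: the fitter of two random individuals becomes a parent
            mom = min(rng.sample(population, 2), key=cost)
            dad = min(rng.sample(population, 2), key=cost)
            # Uniform crossover: each gene is inherited from one parent at random
            child = [m if rng.random() < 0.5 else d for m, d in zip(mom, dad)]
            # Mutation: occasionally add Gaussian noise, clipped to the bounds
            for j, (lo, hi) in enumerate(bounds):
                if rng.random() < mutation_rate:
                    child[j] = min(hi, max(lo, child[j] + rng.gauss(0, 0.1 * (hi - lo))))
            next_gen.append(child)
        population = next_gen
    best = min(population, key=cost)
    return best, cost(best)


def sphere(p):
    # Hypothetical test cost with its global minimum at (1, -2)
    return (p[0] - 1) ** 2 + (p[1] + 2) ** 2


best, best_cost = genetic_algorithm(sphere, bounds=[(-5, 5), (-5, 5)])
print(best, best_cost)
```

The fixed seed makes the run reproducible; other seeds give slightly different answers, as is typical of stochastic methods.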

Genetic Algorithm

Advantages:

- Likely to find a region containing the global minimum (no guarantee).
- Any cost function may be used.

Disadvantages:

- Finding the best settings is a form of art.
- The process is slow and may not even converge, although parallelization (multiple CPUs) can improve speed.
- Local minima.
- Seldom yields exact solutions.

Genetic Algorithm: Combining the Best of Both Worlds

- Start with a genetic algorithm to identify promising regions of the parameter space.
- Then use nonlinear regression to find the local minima.

Stochastic / Deterministic

- Stochastic methods generally do not yield the same result in consecutive runs (unless the random seed is fixed). Examples: genetic algorithms and simulated annealing.
- Deterministic methods always find the same solution when started from the same point. Example: nonlinear regression.
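
A small Python illustration with a hypothetical cost function and settings: a random search returns different answers on unseeded runs but repeats exactly with a fixed seed, while gradient descent from the same starting point always returns the same value:

```python
import random


def cost(x):
    # Hypothetical 1-D cost with its minimum at x = 2
    return (x - 2.0) ** 2


def random_search(n_samples=100, seed=None):
    """Stochastic: keep the best of n random samples drawn from [-10, 10]."""
    rng = random.Random(seed)  # seed=None seeds from system randomness
    return min((rng.uniform(-10, 10) for _ in range(n_samples)), key=cost)


def gradient_descent(x0, step=0.1, n_iter=100):
    """Deterministic: the same starting point always yields the same result."""
    x = x0
    for _ in range(n_iter):
        x -= step * 2.0 * (x - 2.0)  # analytic gradient of cost
    return x


print(random_search(), random_search())                # almost certainly differ
print(random_search(seed=1) == random_search(seed=1))  # True: fixed seed
print(gradient_descent(0.0) == gradient_descent(0.0))  # True
```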

Choosing the Best Strategy

- Data and model are linear: linear regression.
- Data are nonlinear but the model is linear(izable): linear regression.
- The model is nonlinear:
  - Nothing is known: start with a grid search.
  - The model is small and a good estimate exists: gradient method.
  - Medium to large model, local information available:
    - Linear or nonlinear regression for each process (bottom-up).
    - If the information is insufficient for full parameterization, use the local data for an initial guess or for parameter bounds, then apply a gradient method or global search.
  - Medium to large model, global information available: use a top-down approach with global search methods.

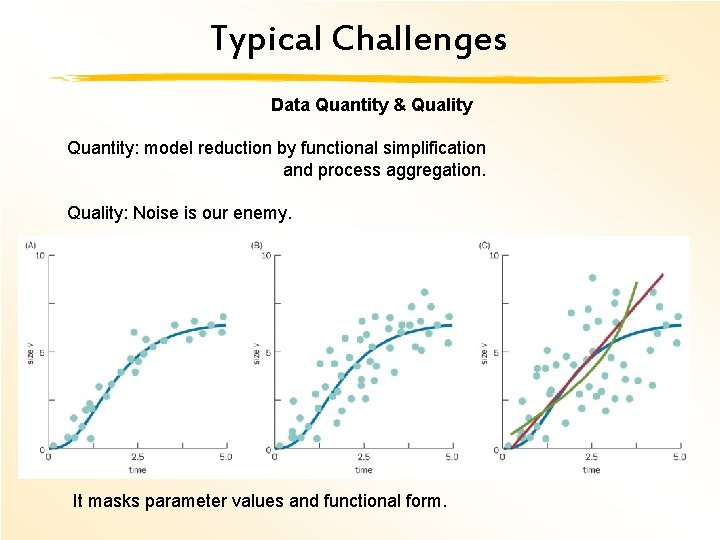

Typical Challenges: Data Quantity & Quality

- Quantity: with limited data, reduce the model by functional simplification and process aggregation.
- Quality: noise is our enemy. It masks parameter values and the functional form.

Typical Challenges: Parameter Redundancies / Dependencies

- Some parameters always enter the model together, e.g., as $p_1 + p_2$ or $p_1 p_2$, so only their combination can be estimated.
- Functional dependencies among parameters.

Example and simplification!
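
A minimal Python illustration with a hypothetical model y = p1·p2·t, in which the two parameters only appear as a product. Every pair with p1·p2 = 6 yields exactly the same SSE, so the data cannot distinguish between them; the simplification is to estimate the single combined parameter q = p1·p2 instead:

```python
def model(t, p1, p2):
    # Hypothetical model: p1 and p2 enter only as the product p1 * p2
    return p1 * p2 * t


ts = [0, 1, 2, 3]
ys = [0.0, 6.1, 11.9, 18.2]  # hypothetical observations


def sse(p1, p2):
    return sum((y - model(t, p1, p2)) ** 2 for t, y in zip(ts, ys))


for p1, p2 in [(2, 3), (3, 2), (1, 6), (0.5, 12)]:
    print(p1, p2, sse(p1, p2))  # the same SSE for every pair
```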

Typical Challenges

Numerical integration of an ODE model takes up 99% of the estimation time.

- In an analytical model, each iteration only evaluates the model and the SSE (fast).
- In an ODE model, each iteration must 1) numerically simulate Model(P) (slow) and 2) compute the SSE.

The number of iterations required for parameter estimation can range from a few hundred or thousand to millions. At one second per simulation, 1 million seconds ≈ 11.6 days.

Summary

- Parameter estimation stands between reality and a realistic model.
- It is a crucial component of modeling.
- It is an active research area.
- There is no silver bullet (yet)! Most methods work sometimes; few work always.

- Slides: 24