
Principles of Parameter Estimation

The Estimation Problem § We use the various concepts introduced and studied in earlier lectures to solve practical problems of interest. § Consider the problem of estimating an unknown parameter of interest from a few of its noisy observations. - the daily temperature in a city - the depth of a river at a particular spot § Observations (measurements) are made on data that contain the desired nonrandom parameter $\theta$ and undesired noise.

The Estimation Problem § For example, observation = signal (desired part) + noise § or, the $i$th observation can be represented as $X_i = \theta + n_i$, $i = 1, 2, \ldots, n$ § $\theta$: the unknown nonrandom desired parameter § $n_i$: random variables that may be dependent or independent from observation to observation. § The Estimation Problem: - Given $n$ observations $X_1 = x_1, X_2 = x_2, \ldots, X_n = x_n$, obtain the “best” estimator for the unknown parameter $\theta$ in terms of these observations.

Estimators § Let us denote by $\hat{\theta}(X)$ the estimator for $\theta$. § Obviously $\hat{\theta}(X)$ is a function of only the observations. § “Best estimator” in what sense? § Ideal solution: the estimate $\hat{\theta}(X)$ coincides with the unknown $\theta$. § Almost always any estimate will result in an error given by $e = \hat{\theta}(X) - \theta$ § One strategy would be to select the estimator so as to minimize some function of this error - mean square error (MMSE), - absolute value of the error - etc.

A More Fundamental Approach: Principle of Maximum Likelihood § Underlying Assumption: the available data $X_1, X_2, \ldots, X_n$ has something to do with the unknown parameter $\theta$. § We assume that the joint p.d.f. of $X_1, X_2, \ldots, X_n$, written $f_X(x_1, x_2, \ldots, x_n; \theta)$, depends on $\theta$. § This method - assumes that the given sample data set is representative of the population - chooses the value for $\theta$ that most likely caused the observed data to occur

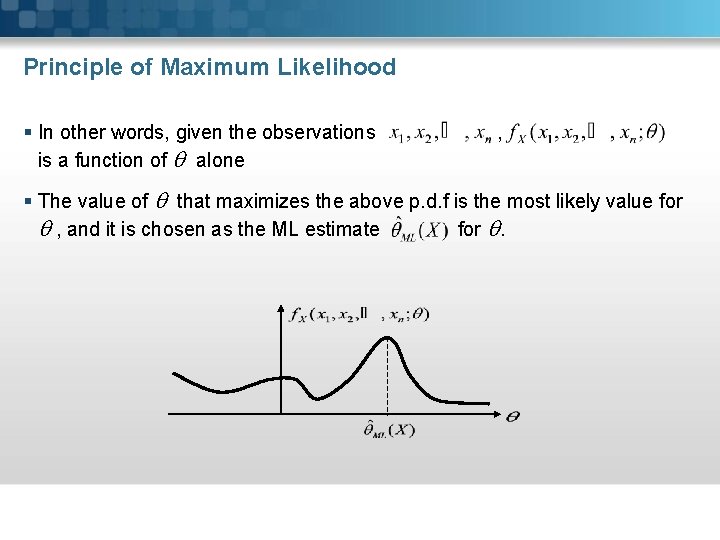

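Principle of Maximum Likelihood § In other words, given the observations $x_1, x_2, \ldots, x_n$, $f_X(x_1, x_2, \ldots, x_n; \theta)$ is a function of $\theta$ alone. § The value of $\theta$ that maximizes the above p.d.f. is the most likely value for $\theta$, and it is chosen as the ML estimate $\hat{\theta}_{ML}(X)$ for $\theta$.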

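§ Given $X_1 = x_1, X_2 = x_2, \ldots, X_n = x_n$, the joint p.d.f. $f_X(x_1, x_2, \ldots, x_n; \theta)$ represents the likelihood function § The ML estimate can be determined either from - the likelihood equation $\hat{\theta}_{ML} = \arg\sup_{\theta} f_X(x_1, x_2, \ldots, x_n; \theta)$ - or using the log-likelihood function $L(x_1, x_2, \ldots, x_n; \theta) = \ln f_X(x_1, x_2, \ldots, x_n; \theta)$ § If $L(x_1, x_2, \ldots, x_n; \theta)$ is differentiable and a supremum $\hat{\theta}_{ML}$ exists in the above equation, then that must satisfy the equation $\left. \frac{\partial \ln f_X(x_1, x_2, \ldots, x_n; \theta)}{\partial \theta} \right|_{\theta = \hat{\theta}_{ML}} = 0$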

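Example § Let $X_i = \theta + w_i$, $i = 1, 2, \ldots, n$, represent $n$ observations where $\theta$ is the unknown parameter of interest, § $w_i$, $i = 1, 2, \ldots, n$, are zero mean independent normal r.v.s with common variance $\sigma^2$. § Determine the ML estimate for $\theta$. Solution § Since the $w_i$ are independent r.v.s and $\theta$ is an unknown constant, the $X_i$ are independent normal random variables. § Thus the likelihood function takes the form $f_X(x_1, x_2, \ldots, x_n; \theta) = \prod_{i=1}^{n} f_{X_i}(x_i; \theta)$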

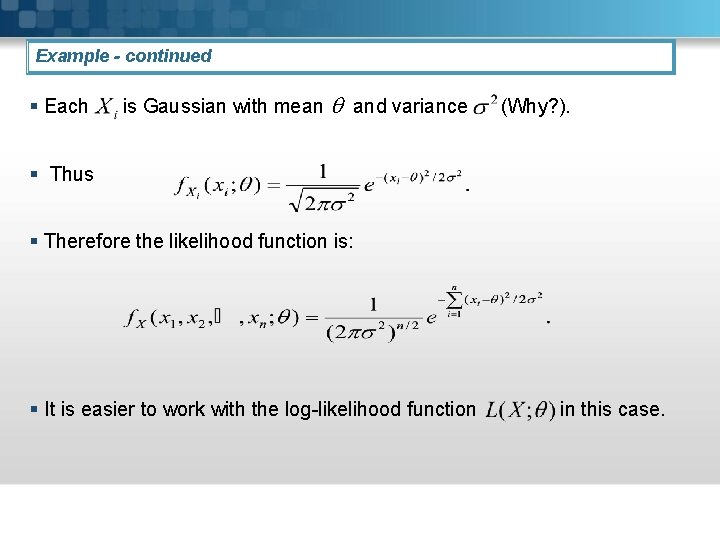

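Example - continued § Each $X_i$ is Gaussian with mean $\theta$ and variance $\sigma^2$ (Why?). § Thus $f_{X_i}(x_i; \theta) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-(x_i - \theta)^2 / 2\sigma^2}$ § Therefore the likelihood function is: $f_X(x_1, x_2, \ldots, x_n; \theta) = \frac{1}{(2\pi\sigma^2)^{n/2}} e^{-\sum_{i=1}^{n} (x_i - \theta)^2 / 2\sigma^2}$ § It is easier to work with the log-likelihood function in this case.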

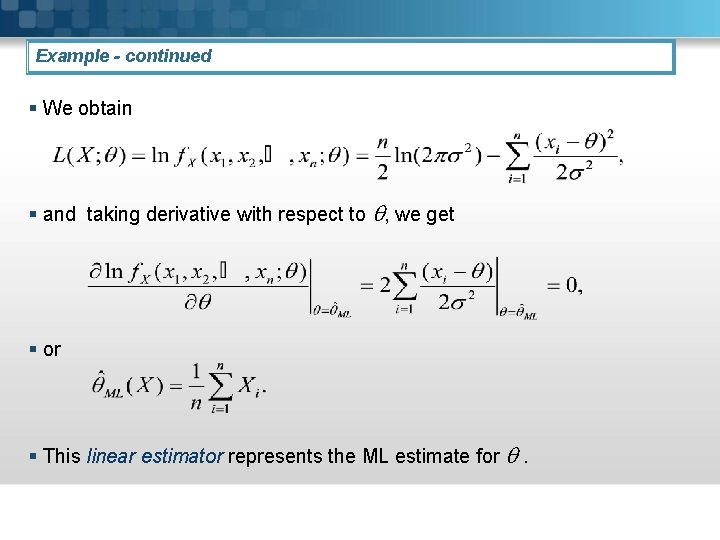

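Example - continued § We obtain $L(X; \theta) = \ln f_X(x_1, x_2, \ldots, x_n; \theta) = -\frac{n}{2} \ln(2\pi\sigma^2) - \sum_{i=1}^{n} \frac{(x_i - \theta)^2}{2\sigma^2}$ § and taking derivative with respect to $\theta$, we get $\left. \frac{\partial L}{\partial \theta} \right|_{\theta = \hat{\theta}_{ML}} = \left. \sum_{i=1}^{n} \frac{x_i - \theta}{\sigma^2} \right|_{\theta = \hat{\theta}_{ML}} = 0$ § or $\hat{\theta}_{ML}(X) = \frac{1}{n} \sum_{i=1}^{n} X_i$ § This linear estimator represents the ML estimate for $\theta$.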
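
The result of this example can be checked numerically. The following is a minimal sketch, assuming illustrative values for the true parameter, the noise standard deviation and the sample size (none of them come from the lecture). It evaluates the Gaussian log-likelihood on a grid of candidate values and compares the grid maximizer with the sample mean.

```python
import math
import random


def log_likelihood(theta, xs, sigma):
    """Gaussian log-likelihood for X_i = theta + w_i, w_i ~ N(0, sigma^2)."""
    n = len(xs)
    return (-0.5 * n * math.log(2 * math.pi * sigma ** 2)
            - sum((x - theta) ** 2 for x in xs) / (2 * sigma ** 2))


def ml_estimate(xs):
    """Closed-form ML estimate from the example: the sample mean."""
    return sum(xs) / len(xs)


# Hypothetical values, chosen only for illustration.
rng = random.Random(0)
theta_true, sigma, n = 3.0, 2.0, 50
xs = [theta_true + rng.gauss(0.0, sigma) for _ in range(n)]

theta_hat = ml_estimate(xs)

# Brute-force check: scan candidate values 0.000, 0.001, ..., 6.000
# and keep the one with the largest log-likelihood.
grid = [i / 1000 for i in range(6001)]
theta_grid = max(grid, key=lambda t: log_likelihood(t, xs, sigma))

print("sample mean:", theta_hat)
print("grid maximizer:", theta_grid)
```

The two values should agree to within the grid spacing, because the log-likelihood is a concave parabola in the parameter with its peak at the sample mean.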

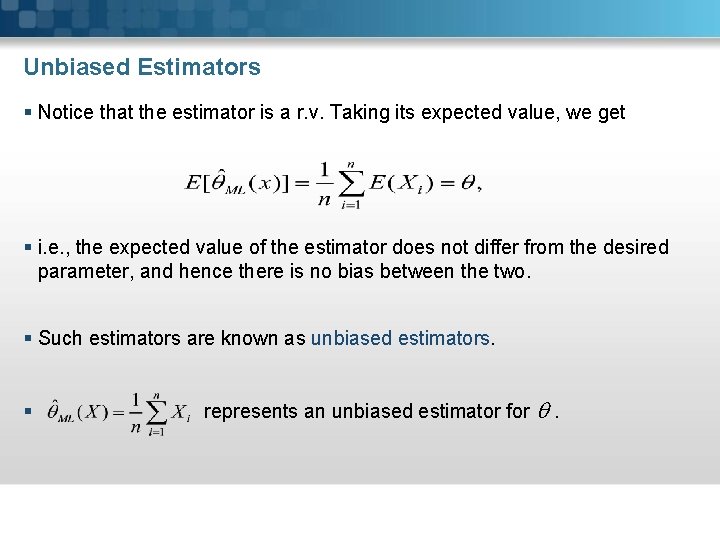

Unbiased Estimators § Notice that the estimator is a r.v. Taking its expected value, we get $E[\hat{\theta}_{ML}(X)] = \frac{1}{n} \sum_{i=1}^{n} E(X_i) = \theta$ § i.e., the expected value of the estimator does not differ from the desired parameter, and hence there is no bias between the two. § Such estimators are known as unbiased estimators. § $\hat{\theta}_{ML}(X) = \frac{1}{n} \sum_{i=1}^{n} X_i$ represents an unbiased estimator for $\theta$.

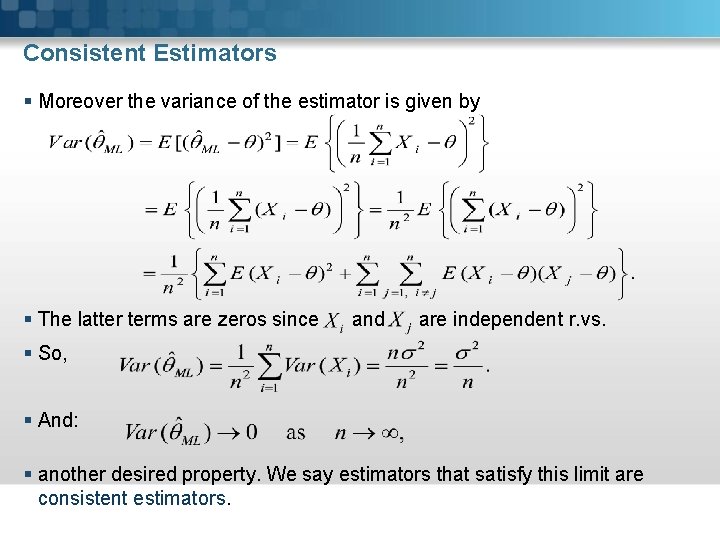

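Consistent Estimators § Moreover the variance of the estimator is given by $\mathrm{Var}(\hat{\theta}_{ML}) = E[(\hat{\theta}_{ML} - \theta)^2] = \frac{1}{n^2} E\left\{ \left[ \sum_{i=1}^{n} (X_i - \theta) \right]^2 \right\} = \frac{1}{n^2} \left\{ \sum_{i=1}^{n} E[(X_i - \theta)^2] + \sum_{i=1}^{n} \sum_{j=1, j \neq i}^{n} E[(X_i - \theta)(X_j - \theta)] \right\}$ § The latter terms are zeros since $X_i$ and $X_j$ are independent r.v.s. § So, $\mathrm{Var}(\hat{\theta}_{ML}) = \frac{1}{n^2} \sum_{i=1}^{n} \mathrm{Var}(X_i) = \frac{n\sigma^2}{n^2} = \frac{\sigma^2}{n}$ § And: $\mathrm{Var}(\hat{\theta}_{ML}) \to 0$ as $n \to \infty$, § another desired property. We say estimators that satisfy this limit are consistent estimators.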
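
A small Monte Carlo experiment can illustrate both properties of the sample mean: its average stays at the true parameter, and its variance shrinks like the noise variance divided by the number of observations. This is only a sketch; the parameter values, the seed and the number of trials are assumptions made for the illustration.

```python
import random
import statistics

# Hypothetical values, chosen only for illustration.
rng = random.Random(1)
theta_true, sigma = 3.0, 2.0
trials = 5000


def sample_mean_estimates(n):
    """Draw `trials` independent data sets of size n and return their sample means."""
    return [
        sum(theta_true + rng.gauss(0.0, sigma) for _ in range(n)) / n
        for _ in range(trials)
    ]


# results[n] = (empirical mean, empirical variance, theoretical variance sigma^2 / n)
results = {}
for n in (5, 20, 80):
    estimates = sample_mean_estimates(n)
    results[n] = (
        statistics.fmean(estimates),
        statistics.pvariance(estimates),
        sigma ** 2 / n,
    )

for n, (mean_mc, var_mc, var_theory) in results.items():
    print(n, mean_mc, var_mc, var_theory)
```

The empirical mean should stay close to the true value for every sample size (unbiasedness), while the empirical variance should track the theoretical value and fall toward zero as the sample size grows (consistency).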

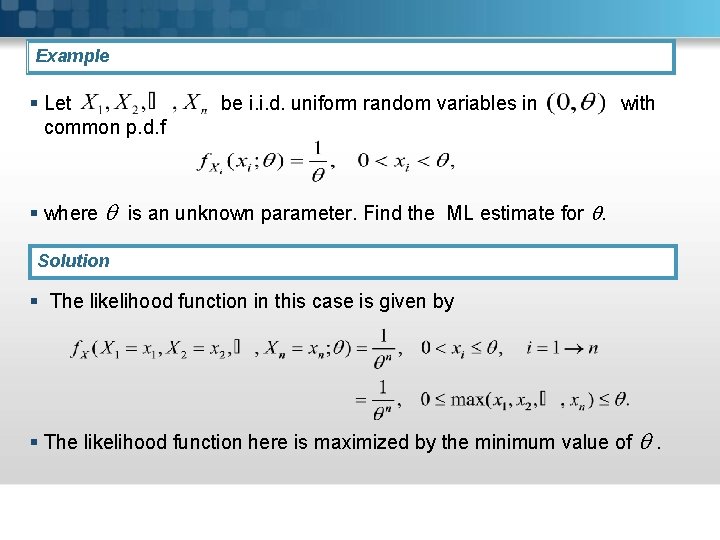

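Example § Let $X_1, X_2, \ldots, X_n$ be i.i.d. uniform random variables in $(0, \theta)$ with common p.d.f. $f_{X_i}(x_i; \theta) = \frac{1}{\theta}$, $0 < x_i < \theta$, § where $\theta$ is an unknown parameter. Find the ML estimate for $\theta$. Solution § The likelihood function in this case is given by $f_X(x_1, x_2, \ldots, x_n; \theta) = \frac{1}{\theta^n}$, $0 < x_i \le \theta$, $i = 1, \ldots, n$; equivalently, $\frac{1}{\theta^n}$ for $0 \le \max(x_1, x_2, \ldots, x_n) \le \theta$ § The likelihood function here is maximized by the minimum value of $\theta$.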

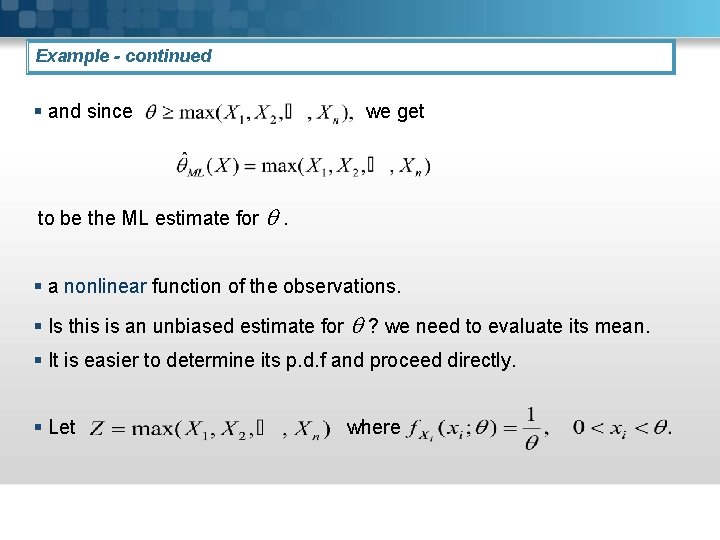

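Example - continued § and since $\theta \ge \max(X_1, X_2, \ldots, X_n)$, we get $\hat{\theta}_{ML}(X) = \max(X_1, X_2, \ldots, X_n)$ to be the ML estimate for $\theta$. § a nonlinear function of the observations. § Is this an unbiased estimate for $\theta$? We need to evaluate its mean. § It is easier to determine its p.d.f. and proceed directly. § Let $Z = \max(X_1, X_2, \ldots, X_n)$, where each $X_i$ is uniform in $(0, \theta)$.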

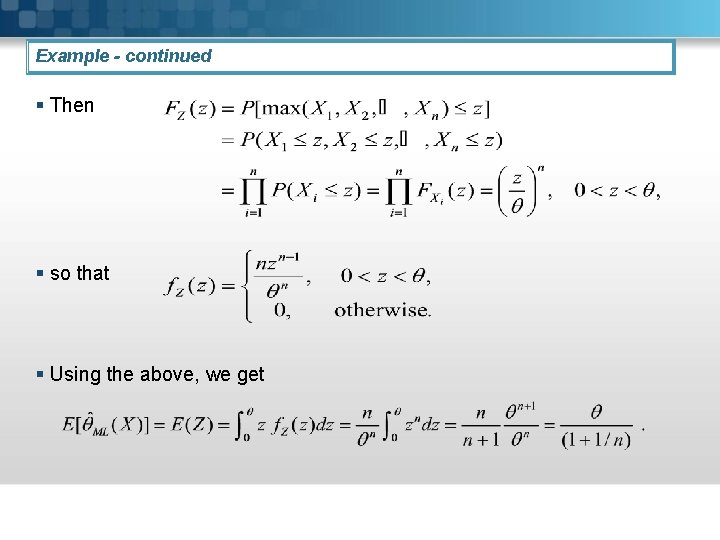

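Example - continued § Then $F_Z(z) = P[\max(X_1, X_2, \ldots, X_n) \le z] = P(X_1 \le z, X_2 \le z, \ldots, X_n \le z) = \prod_{i=1}^{n} P(X_i \le z) = \left( \frac{z}{\theta} \right)^n$, $0 < z < \theta$ § so that $f_Z(z) = \frac{n z^{n-1}}{\theta^n}$ for $0 < z < \theta$, and $0$ otherwise § Using the above, we get $E[\hat{\theta}_{ML}(X)] = E(Z) = \int_0^{\theta} z f_Z(z)\, dz = \frac{n}{\theta^n} \int_0^{\theta} z^n\, dz = \frac{n}{n+1}\, \theta = \frac{\theta}{1 + 1/n}$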

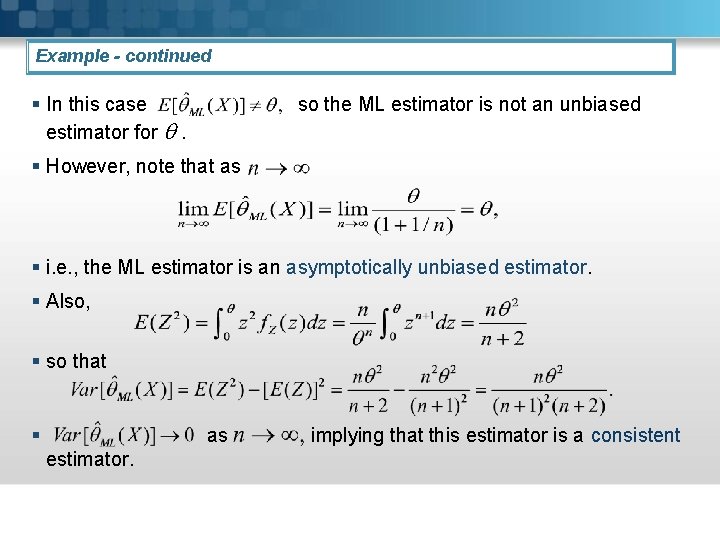

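Example - continued § In this case $E[\hat{\theta}_{ML}(X)] \ne \theta$, so the ML estimator is not an unbiased estimator for $\theta$. § However, note that as $n \to \infty$, $\lim_{n \to \infty} E[\hat{\theta}_{ML}(X)] = \lim_{n \to \infty} \frac{\theta}{1 + 1/n} = \theta$ § i.e., the ML estimator is an asymptotically unbiased estimator. § Also, $E(Z^2) = \int_0^{\theta} z^2 f_Z(z)\, dz = \frac{n}{\theta^n} \int_0^{\theta} z^{n+1}\, dz = \frac{n \theta^2}{n+2}$ § so that $\mathrm{Var}[\hat{\theta}_{ML}(X)] = E(Z^2) - [E(Z)]^2 = \frac{n\theta^2}{n+2} - \frac{n^2\theta^2}{(n+1)^2} = \frac{n\theta^2}{(n+1)^2 (n+2)}$ § $\mathrm{Var}[\hat{\theta}_{ML}(X)] \to 0$ as $n \to \infty$, implying that this estimator is a consistent estimator.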
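
The bias and variance formulas for the maximum of uniform samples can be compared against simulation. The sketch below assumes an illustrative true parameter, seed and trial count (not taken from the lecture) and uses only the Python standard library.

```python
import random
import statistics

# Hypothetical values, chosen only for illustration.
rng = random.Random(2)
theta_true = 4.0
trials = 20000


def ml_uniform(xs):
    """ML estimate for the upper end of a uniform (0, theta) sample: the largest observation."""
    return max(xs)


# results[n] = (empirical mean, theoretical mean, empirical variance, theoretical variance)
results = {}
for n in (2, 10, 50):
    estimates = [
        ml_uniform([rng.uniform(0.0, theta_true) for _ in range(n)])
        for _ in range(trials)
    ]
    mean_theory = n * theta_true / (n + 1)
    var_theory = n * theta_true ** 2 / ((n + 1) ** 2 * (n + 2))
    results[n] = (
        statistics.fmean(estimates),
        mean_theory,
        statistics.pvariance(estimates),
        var_theory,
    )

for n, row in results.items():
    print(n, row)
```

For each sample size the empirical mean should sit below the true value (the estimator is biased low) and approach it as the sample size grows, in line with asymptotic unbiasedness.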

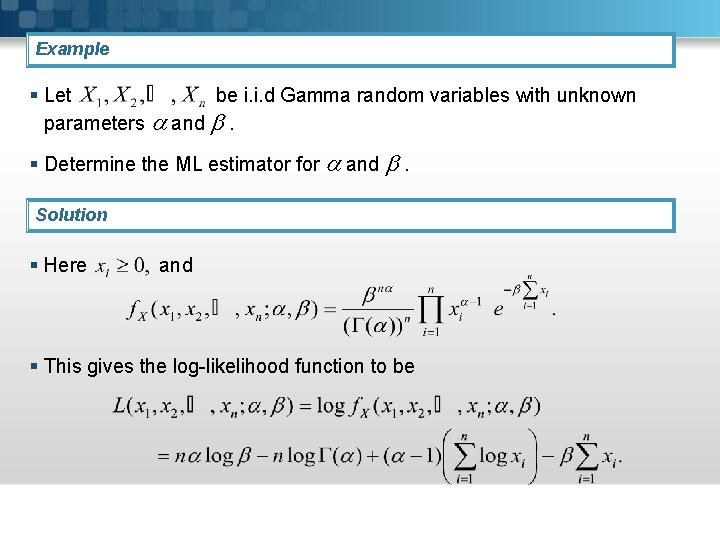

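Example § Let $X_1, X_2, \ldots, X_n$ be i.i.d. Gamma random variables with unknown parameters $\alpha$ and $\beta$. § Determine the ML estimator for $\alpha$ and $\beta$. Solution § Here $x_i \ge 0$ and $f_X(x_1, x_2, \ldots, x_n; \alpha, \beta) = \frac{\beta^{n\alpha}}{(\Gamma(\alpha))^n} \prod_{i=1}^{n} x_i^{\alpha - 1}\, e^{-\beta \sum_{i=1}^{n} x_i}$ § This gives the log-likelihood function to be $L(x_1, x_2, \ldots, x_n; \alpha, \beta) = n\alpha \ln \beta - n \ln \Gamma(\alpha) + (\alpha - 1) \sum_{i=1}^{n} \ln x_i - \beta \sum_{i=1}^{n} x_i$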

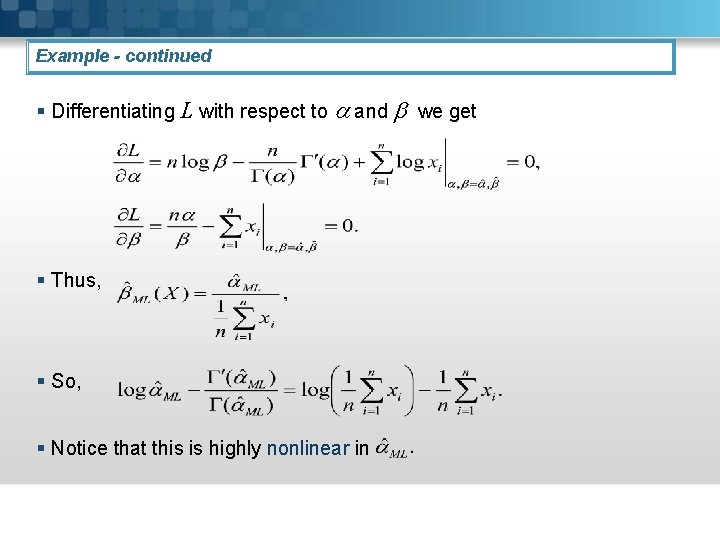

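Example - continued § Differentiating $L$ with respect to $\alpha$ and $\beta$ we get $\left. \frac{\partial L}{\partial \alpha} \right|_{\hat{\alpha}, \hat{\beta}} = n \ln \hat{\beta} - n \frac{\Gamma'(\hat{\alpha})}{\Gamma(\hat{\alpha})} + \sum_{i=1}^{n} \ln x_i = 0$ and $\left. \frac{\partial L}{\partial \beta} \right|_{\hat{\alpha}, \hat{\beta}} = \frac{n \hat{\alpha}}{\hat{\beta}} - \sum_{i=1}^{n} x_i = 0$ § Thus, $\hat{\beta}_{ML} = \frac{\hat{\alpha}_{ML}}{\frac{1}{n} \sum_{i=1}^{n} x_i}$ § So, $\ln \hat{\alpha}_{ML} - \frac{\Gamma'(\hat{\alpha}_{ML})}{\Gamma(\hat{\alpha}_{ML})} = \ln\left( \frac{1}{n} \sum_{i=1}^{n} x_i \right) - \frac{1}{n} \sum_{i=1}^{n} \ln x_i$ § Notice that this is highly nonlinear in $\hat{\alpha}_{ML}$.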
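
Since the equation for the shape parameter has no closed-form solution, it is usually solved numerically. The sketch below is one possible approach, not the lecture's method: it uses a hand-written digamma approximation (recurrence plus a truncated asymptotic series) and bisection on a log scale. The function names, true parameter values, seed and sample size are all assumptions made for the illustration. Note that Python's `random.gammavariate` takes a scale parameter, so the rate parameter used in this example enters as its reciprocal.

```python
import math
import random


def digamma(x):
    """Approximate Gamma'(x)/Gamma(x) for x > 0 (recurrence, then asymptotic series)."""
    result = 0.0
    while x < 6.0:
        # psi(x) = psi(x + 1) - 1/x shifts the argument into the asymptotic range.
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    # psi(x) ~ ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6)
    result += math.log(x) - 0.5 / x - inv2 * (1 / 12 - inv2 * (1 / 120 - inv2 / 252))
    return result


def gamma_ml(xs):
    """Solve ln(a) - psi(a) = ln(mean x) - mean(ln x) for a, then set b = a / mean x."""
    n = len(xs)
    mean_x = sum(xs) / n
    c = math.log(mean_x) - sum(math.log(x) for x in xs) / n  # positive by Jensen's inequality
    lo, hi = 1e-6, 1e6  # bracket for the shape parameter (an assumption)
    for _ in range(200):
        mid = math.sqrt(lo * hi)  # bisection on a log scale
        if math.log(mid) - digamma(mid) > c:
            lo = mid  # left side is decreasing in a, so the root lies above mid
        else:
            hi = mid
    alpha_hat = math.sqrt(lo * hi)
    return alpha_hat, alpha_hat / mean_x


# Hypothetical values, chosen only for illustration.
rng = random.Random(3)
alpha_true, beta_true, n = 2.5, 1.5, 5000
xs = [rng.gammavariate(alpha_true, 1.0 / beta_true) for _ in range(n)]  # scale = 1 / rate

alpha_hat, beta_hat = gamma_ml(xs)
print(alpha_hat, beta_hat)
```

Bisection is used here because the left-hand side of the equation is monotonically decreasing in the shape parameter, so a bracketing method cannot miss the root; Newton's method would converge faster but needs the trigamma function as well.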

Conclusion § In general the (log)-likelihood equation - can have more than one solution, or no solutions at all - may involve a (log)-likelihood function that is not even differentiable - can be extremely complicated to solve explicitly

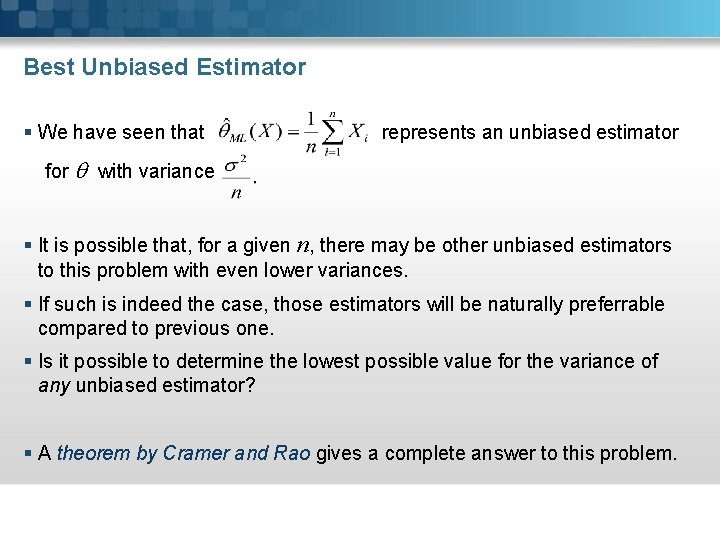

Best Unbiased Estimator § We have seen that $\hat{\theta}_{ML}(X) = \frac{1}{n} \sum_{i=1}^{n} X_i$ represents an unbiased estimator for $\theta$ with variance $\sigma^2 / n$. § It is possible that, for a given $n$, there may be other unbiased estimators to this problem with even lower variances. § If such is indeed the case, those estimators will be naturally preferable compared to the previous one. § Is it possible to determine the lowest possible value for the variance of any unbiased estimator? § A theorem by Cramer and Rao gives a complete answer to this problem.

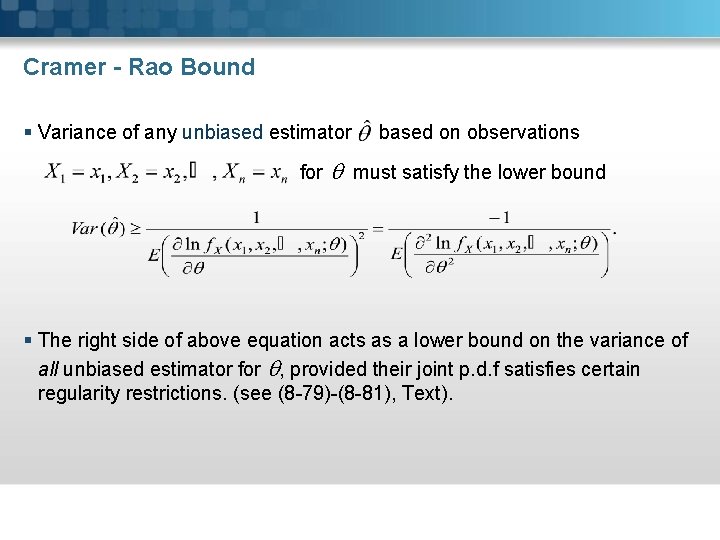

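Cramer-Rao Bound § Variance of any unbiased estimator $\hat{\theta}$ for $\theta$ based on observations $X_1 = x_1, X_2 = x_2, \ldots, X_n = x_n$ must satisfy the lower bound $\mathrm{Var}(\hat{\theta}) \ge \frac{1}{E\left[ \left( \frac{\partial \ln f_X(x_1, x_2, \ldots, x_n; \theta)}{\partial \theta} \right)^2 \right]} = \frac{-1}{E\left[ \frac{\partial^2 \ln f_X(x_1, x_2, \ldots, x_n; \theta)}{\partial \theta^2} \right]}$ § The right side of the above equation acts as a lower bound on the variance of all unbiased estimators for $\theta$, provided their joint p.d.f. satisfies certain regularity restrictions (see (8-79)-(8-81), Text).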

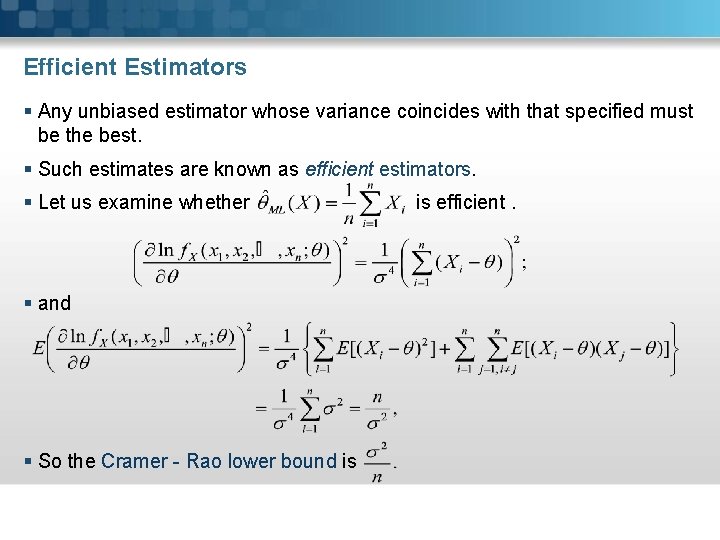

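Efficient Estimators § Any unbiased estimator whose variance coincides with that specified by the Cramer-Rao bound must be the best. § Such estimates are known as efficient estimators. § Let us examine whether $\hat{\theta}_{ML}(X) = \frac{1}{n} \sum_{i=1}^{n} X_i$ from the Gaussian example is efficient. § $\left( \frac{\partial \ln f_X(x_1, x_2, \ldots, x_n; \theta)}{\partial \theta} \right)^2 = \frac{1}{\sigma^4} \left( \sum_{i=1}^{n} (X_i - \theta) \right)^2$ § and $E\left[ \left( \frac{\partial \ln f_X(x_1, x_2, \ldots, x_n; \theta)}{\partial \theta} \right)^2 \right] = \frac{1}{\sigma^4} \left\{ \sum_{i=1}^{n} E[(X_i - \theta)^2] + \sum_{i=1}^{n} \sum_{j=1, j \neq i}^{n} E[(X_i - \theta)(X_j - \theta)] \right\} = \frac{1}{\sigma^4} \sum_{i=1}^{n} \sigma^2 = \frac{n}{\sigma^2}$ § So the Cramer-Rao lower bound is $\sigma^2 / n$.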
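
The efficiency check for the Gaussian-mean example can also be carried out by simulation: estimate the expected squared score, invert it to get the Cramer-Rao bound, and compare with the empirical variance of the sample mean. This is a sketch under assumed parameter values, seed and trial count; none of these numbers come from the lecture.

```python
import random
import statistics

# Hypothetical values, chosen only for illustration.
rng = random.Random(4)
theta_true, sigma, n = 3.0, 2.0, 10
trials = 20000


def score(xs, theta, sigma):
    """Derivative of the Gaussian log-likelihood in theta: sum(x_i - theta) / sigma^2."""
    return sum(x - theta for x in xs) / sigma ** 2


squared_scores = []
estimates = []
for _ in range(trials):
    xs = [theta_true + rng.gauss(0.0, sigma) for _ in range(n)]
    squared_scores.append(score(xs, theta_true, sigma) ** 2)
    estimates.append(sum(xs) / n)  # sample mean, the ML estimate in this model

fisher_mc = statistics.fmean(squared_scores)   # should be near n / sigma^2
cr_bound_mc = 1.0 / fisher_mc                  # Monte Carlo Cramer-Rao bound
cr_bound_theory = sigma ** 2 / n
var_mc = statistics.pvariance(estimates)       # empirical variance of the estimator

print(fisher_mc, cr_bound_mc, cr_bound_theory, var_mc)
```

If the sample mean is efficient, its empirical variance and the two versions of the bound should all agree up to Monte Carlo error.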

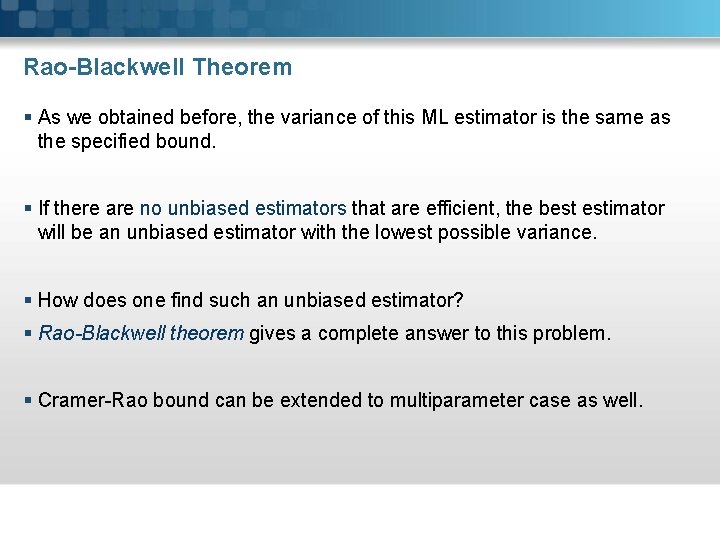

Rao-Blackwell Theorem § As we obtained before, the variance of this ML estimator, $\sigma^2 / n$, is the same as the specified bound, so the estimator is efficient. § If there are no unbiased estimators that are efficient, the best estimator will be an unbiased estimator with the lowest possible variance. § How does one find such an unbiased estimator? § The Rao-Blackwell theorem gives a complete answer to this problem. § The Cramer-Rao bound can be extended to the multiparameter case as well.

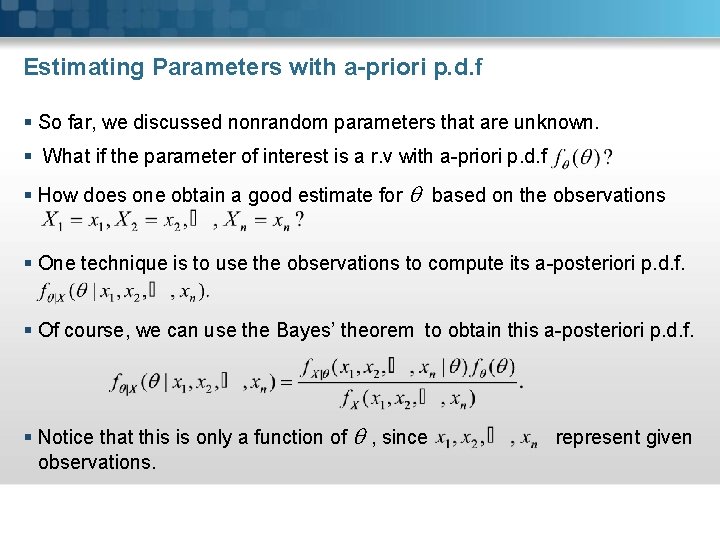

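Estimating Parameters with a-priori p.d.f. § So far, we discussed nonrandom parameters that are unknown. § What if the parameter of interest $\theta$ is a r.v. with a-priori p.d.f. $f_{\theta}(\theta)$? § How does one obtain a good estimate for $\theta$ based on the observations $X_1 = x_1, X_2 = x_2, \ldots, X_n = x_n$? § One technique is to use the observations to compute its a-posteriori p.d.f. $f_{\theta | X}(\theta \mid x_1, x_2, \ldots, x_n)$. § Of course, we can use Bayes’ theorem to obtain this a-posteriori p.d.f.: $f_{\theta | X}(\theta \mid x_1, x_2, \ldots, x_n) = \frac{f_{X | \theta}(x_1, x_2, \ldots, x_n \mid \theta)\, f_{\theta}(\theta)}{f_X(x_1, x_2, \ldots, x_n)}$ § Notice that this is only a function of $\theta$, since $x_1, x_2, \ldots, x_n$ represent given observations.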

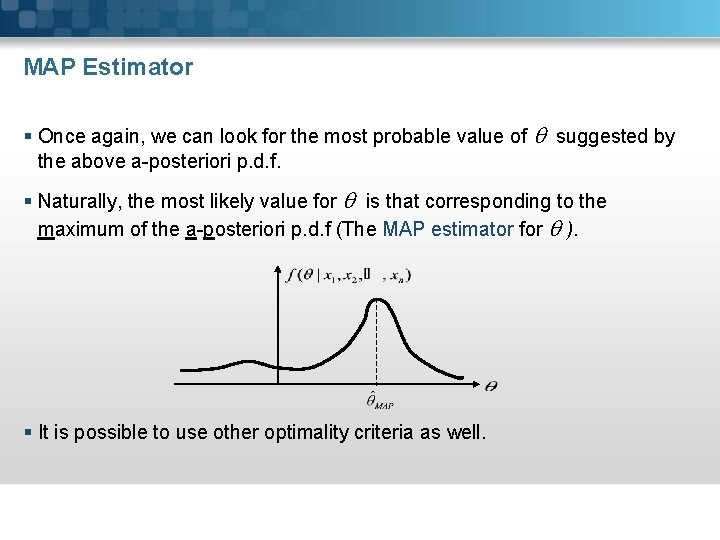

MAP Estimator § Once again, we can look for the most probable value of $\theta$ suggested by the above a-posteriori p.d.f. § Naturally, the most likely value for $\theta$ is that corresponding to the maximum of the a-posteriori p.d.f. (the MAP estimator $\hat{\theta}_{MAP}$ for $\theta$). § It is possible to use other optimality criteria as well.
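
As an illustration of the MAP idea, consider a case the lecture does not work out: Gaussian observations with known noise variance and a Gaussian a-priori p.d.f. on the parameter. Everything in the sketch below (the prior mean and standard deviation, the noise level, the sample size, the seed and the search grid) is an assumption made for the example. The grid maximizer of the unnormalized log-posterior is compared with the known closed form for this Gaussian-Gaussian case.

```python
import random


def log_posterior_unnormalized(theta, xs, sigma, mu0, tau):
    """ln f(x | theta) + ln f(theta), dropping terms that do not depend on theta."""
    log_likelihood = -sum((x - theta) ** 2 for x in xs) / (2 * sigma ** 2)
    log_prior = -(theta - mu0) ** 2 / (2 * tau ** 2)
    return log_likelihood + log_prior


# Hypothetical values, chosen only for illustration.
rng = random.Random(5)
mu0, tau = 0.0, 1.0          # assumed Gaussian prior: theta ~ N(mu0, tau^2)
sigma, n = 2.0, 10           # assumed noise standard deviation and sample size

theta_drawn = rng.gauss(mu0, tau)
xs = [theta_drawn + rng.gauss(0.0, sigma) for _ in range(n)]

# Grid search over candidate values -5.000, -4.999, ..., 5.000.
grid = [-5.0 + i / 1000 for i in range(10001)]
theta_map_grid = max(
    grid, key=lambda t: log_posterior_unnormalized(t, xs, sigma, mu0, tau)
)

# Closed form for this Gaussian-Gaussian case: a weighted average of the
# sample mean and the prior mean.
x_bar = sum(xs) / n
theta_map_closed = (n * tau ** 2 * x_bar + sigma ** 2 * mu0) / (n * tau ** 2 + sigma ** 2)
theta_ml = x_bar

print(theta_ml, theta_map_closed, theta_map_grid)
```

Compared with the ML estimate, the MAP estimate in this setting is pulled toward the prior mean, and the pull weakens as the number of observations grows.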
