Attention By: Merav Mazouz Lecturer: Prof. Hagit Hel-Or

Outline: Background; Fully connected; CNN (Computer Vision); RNN (seq2seq); Attention; Examples; Usages of attention

Resource: https://towardsdatascience.com/cousins-of-artificial-intelligence-dda4edc27b55

What is Machine Learning? Learning is an essential human property. Machine learning is the study of algorithms that improve their performance at some task with experience.

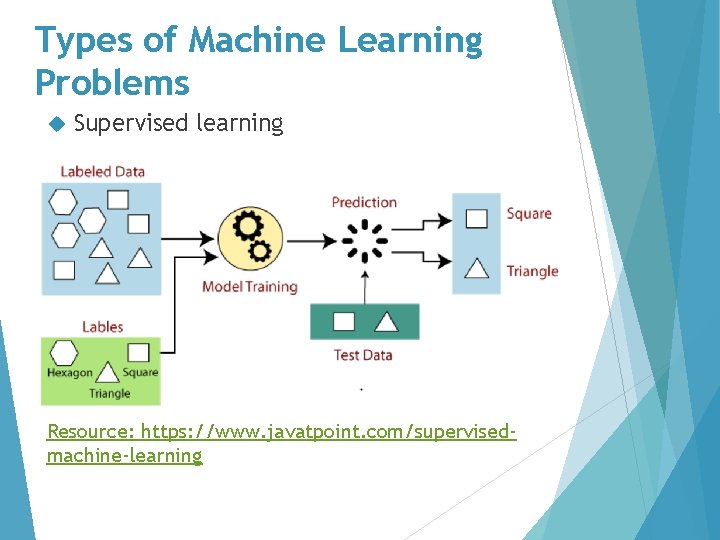

Types of Machine Learning Problems Supervised learning Resource: https://www.javatpoint.com/supervised-machine-learning

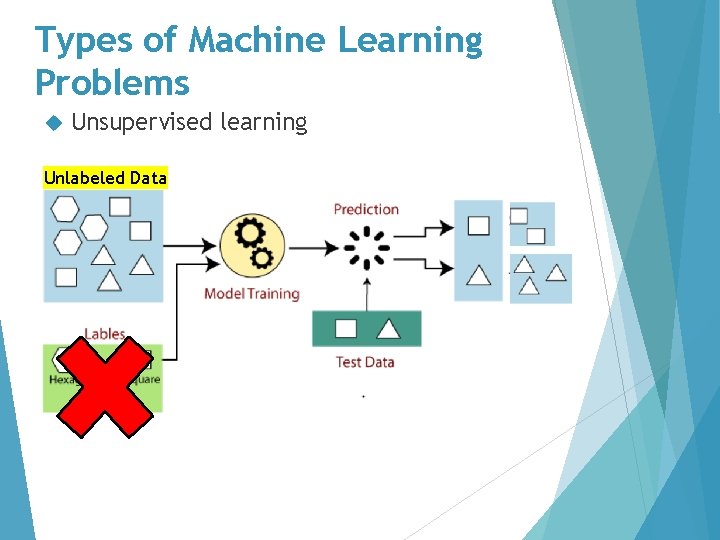

Types of Machine Learning Problems Unsupervised learning Unlabeled Data

What are the features?

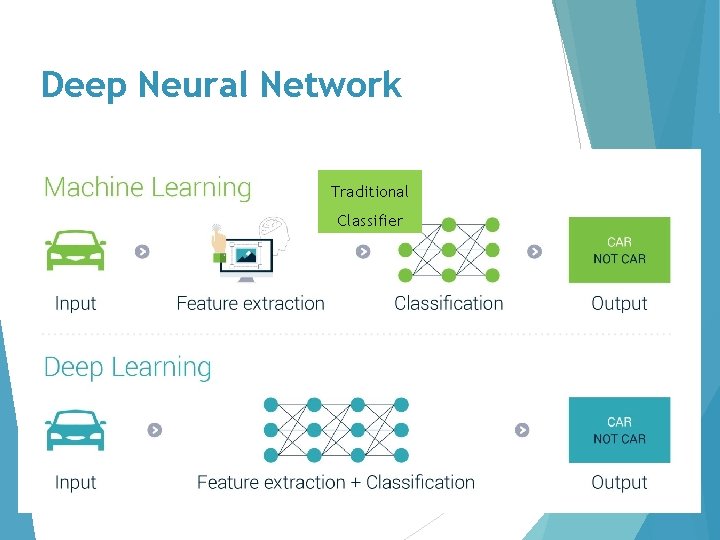

Deep Neural Network Traditional Classifier

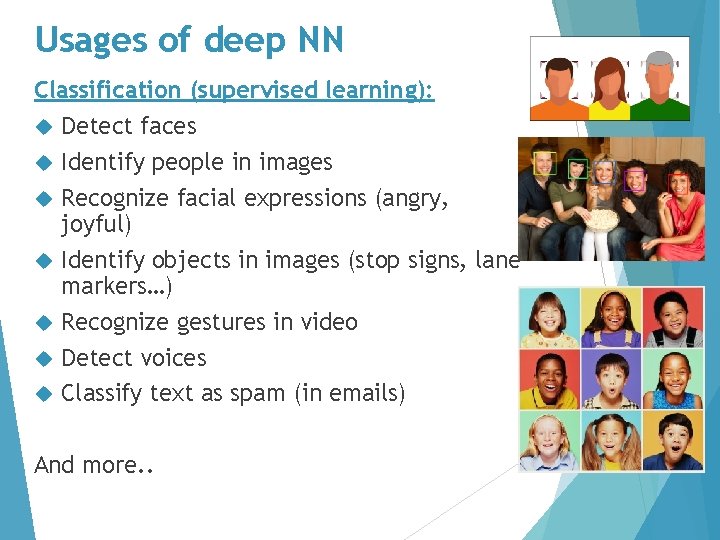

Usages of deep NN Classification (supervised learning): detect faces; identify people in images; recognize facial expressions (angry, joyful); identify objects in images (stop signs, lane markers, …); recognize gestures in video; detect voices; classify text as spam (in emails); and more.

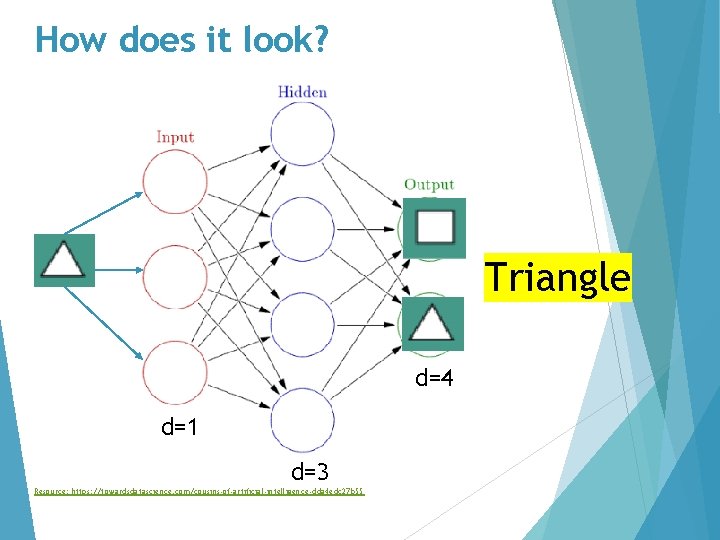

How does it look? Triangle d=4 d=1 d=3 Resource: https://towardsdatascience.com/cousins-of-artificial-intelligence-dda4edc27b55

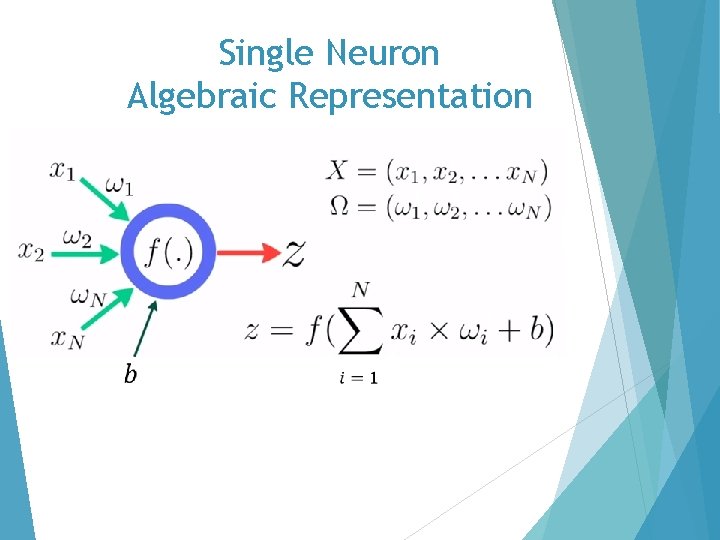

Single Neuron Algebraic Representation

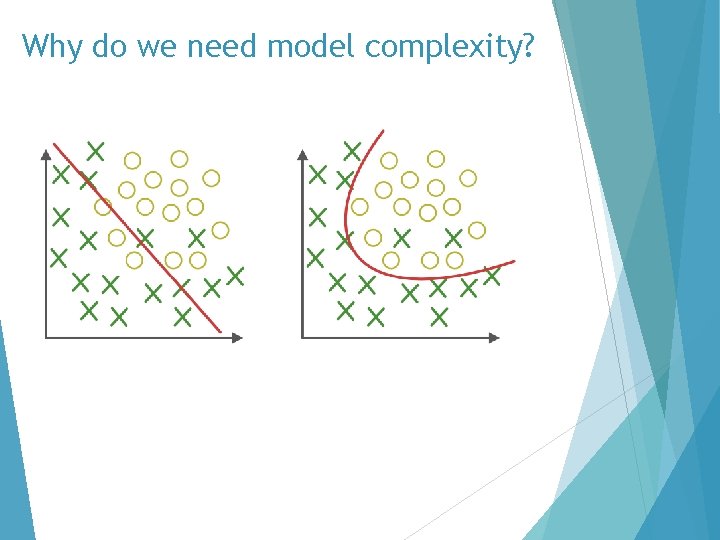
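The algebraic form of a single neuron is usually written as y = f(w · x + b): a weighted sum of the inputs plus a bias, passed through an activation function f. The sketch below is a minimal illustration of that formula, not the slide's own code. The function name `neuron`, the sample numbers, and the choice of a sigmoid for f are assumptions made for the example.

```python
import math

def neuron(inputs, weights, bias):
    """Single neuron: weighted sum of the inputs plus a bias, then a sigmoid.

    The sigmoid is an assumed choice of activation; any other
    activation function could be plugged in instead.
    """
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-z))

x = [1.0, 2.0, 3.0]      # illustrative input features
w = [0.5, -0.25, 0.0]    # illustrative weights, one per input
b = 0.0                  # illustrative bias

# z = 0.5*1 - 0.25*2 + 0*3 + 0 = 0, so the output is sigmoid(0) = 0.5
y = neuron(x, w, b)
print(y)
```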

Why do we need model complexity?

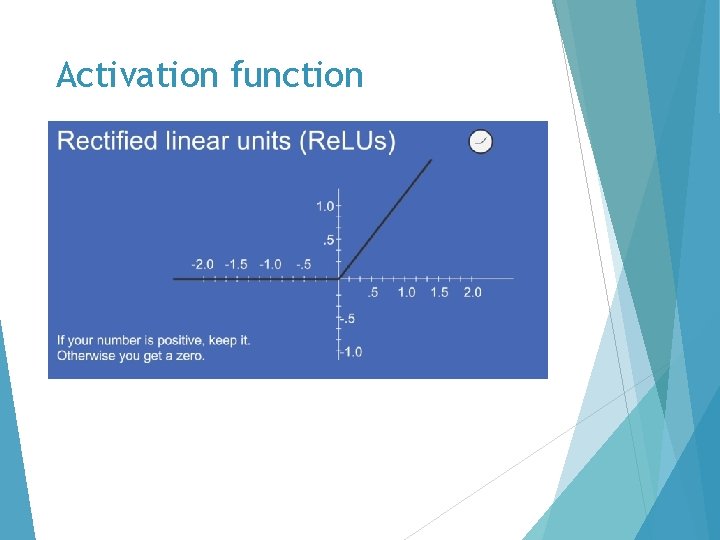

Activation function

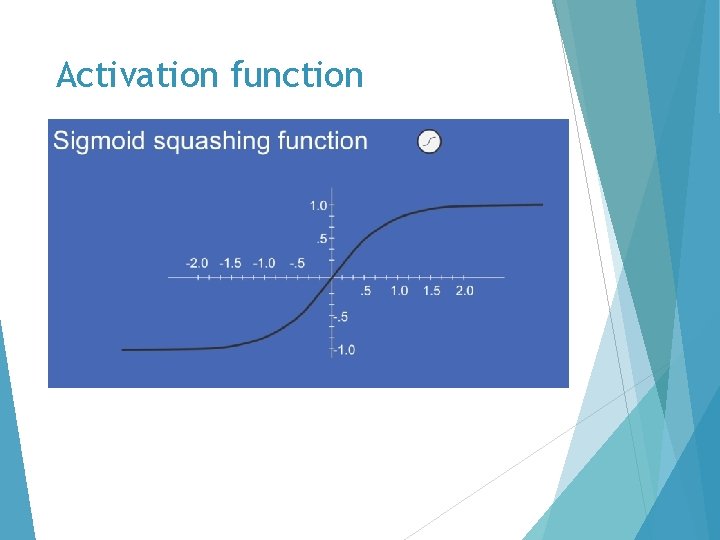

Activation function

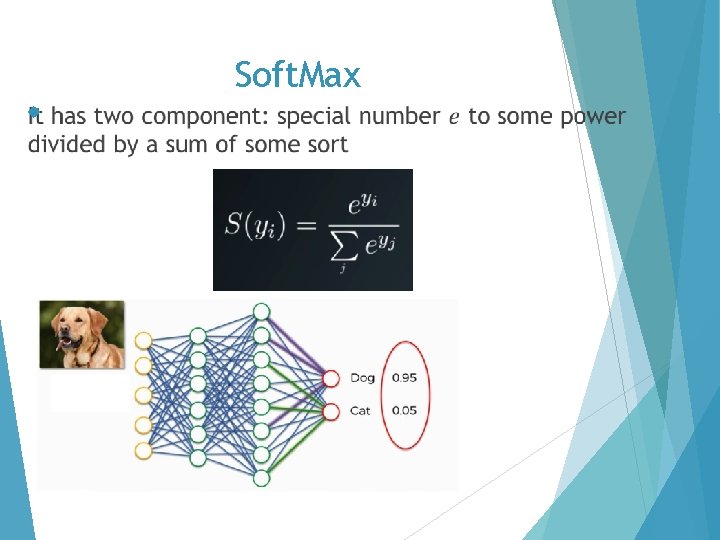
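The extracted slides do not say which activation functions the figures show. As a hedged illustration, here are three commonly used ones (sigmoid, tanh, ReLU) in plain Python. The function names are chosen for this example.

```python
import math

def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(z)

def relu(z):
    """Passes positive values through and clips negatives to 0."""
    return max(0.0, z)

# Compare the three functions on a few sample pre-activations.
for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), relu(z))
```

All three are non-linear, which is what lets stacked layers represent more than a single linear map.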

Softmax

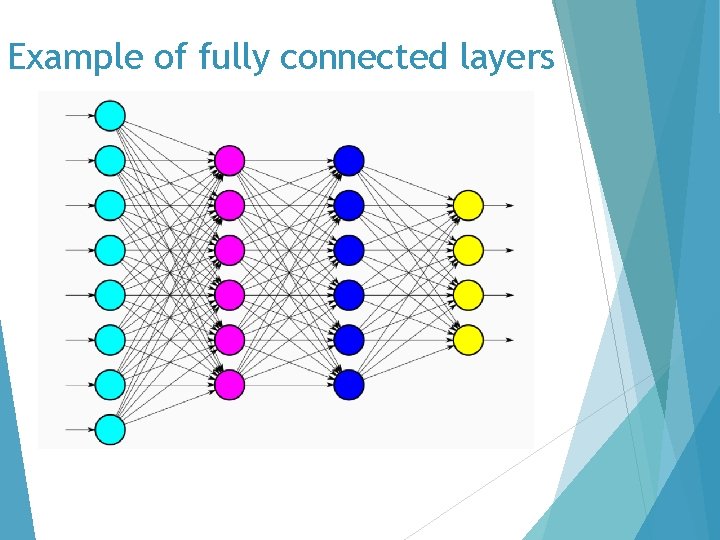
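Softmax turns a list of raw scores into probabilities that are positive and sum to 1: softmax(z)_i = exp(z_i) / Σ_j exp(z_j). A minimal sketch follows. Subtracting the maximum score before exponentiating is a standard numerical-stability trick added here, and the sample scores are illustrative.

```python
import math

def softmax(scores):
    """Convert raw scores into a probability distribution."""
    m = max(scores)                       # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])          # illustrative class scores
print(probs)                              # largest score gets the largest probability
print(sum(probs))
```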

Example of fully connected layers

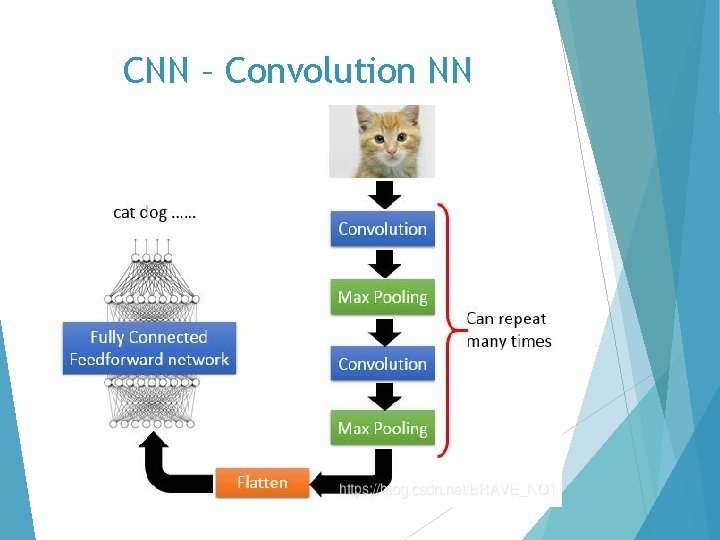

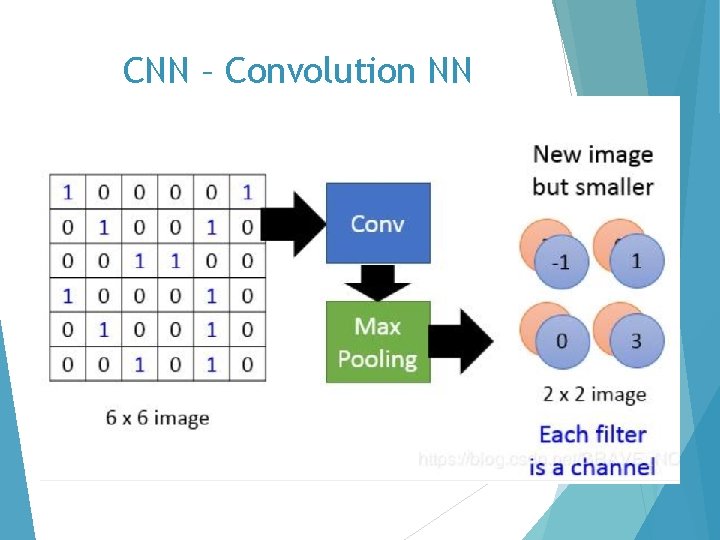

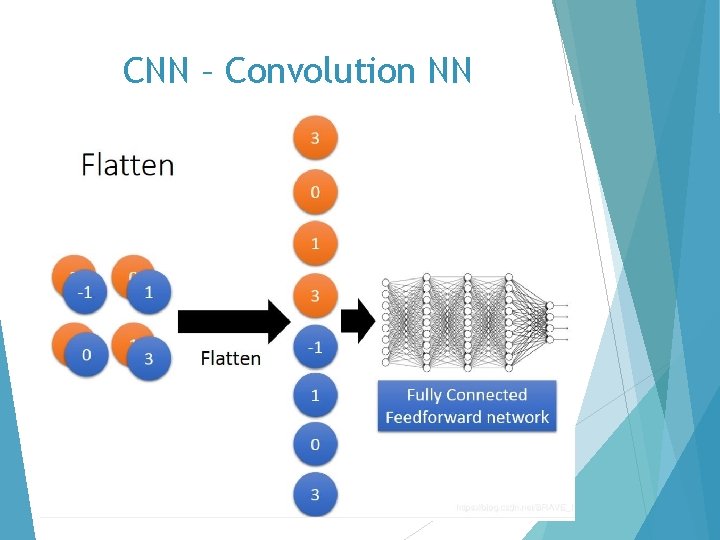
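In a fully connected layer every output unit is connected to every input. The sketch below pushes one input through two such layers. The layer sizes, weights, and the helper name `dense` are all made up for illustration and are not taken from the figures.

```python
def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def dense(inputs, weights, biases, activation):
    """One fully connected layer.

    weights has one row per output unit; each row holds one weight
    per input, so every output unit sees every input.
    """
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

x = [1.0, 2.0]                                  # 2 input features

W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]      # hidden layer: 3 units
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 1.0, 1.0]]                          # output layer: 1 unit
b2 = [0.5]

hidden = dense(x, W1, b1, relu)        # [1.0, 2.0, 0.0] (1 - 2 = -1 is clipped by ReLU)
output = dense(hidden, W2, b2, identity)  # [3.5]
print(hidden, output)
```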

CNN – Convolutional NN

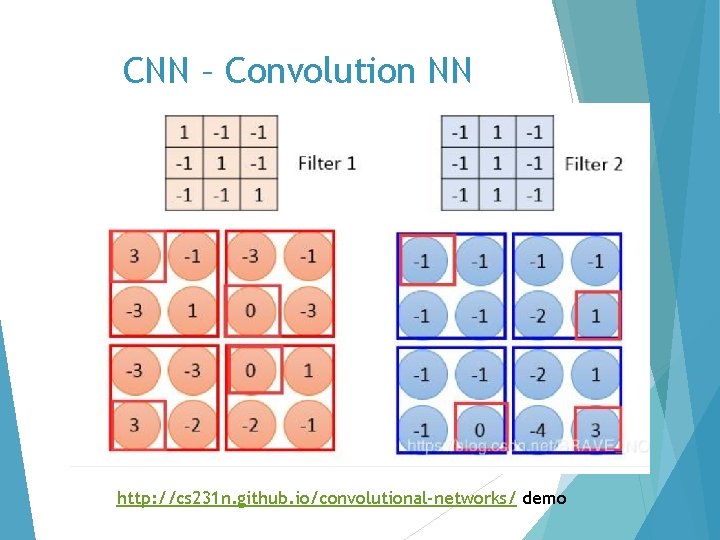

CNN – Convolutional NN Demo: http://cs231n.github.io/convolutional-networks/

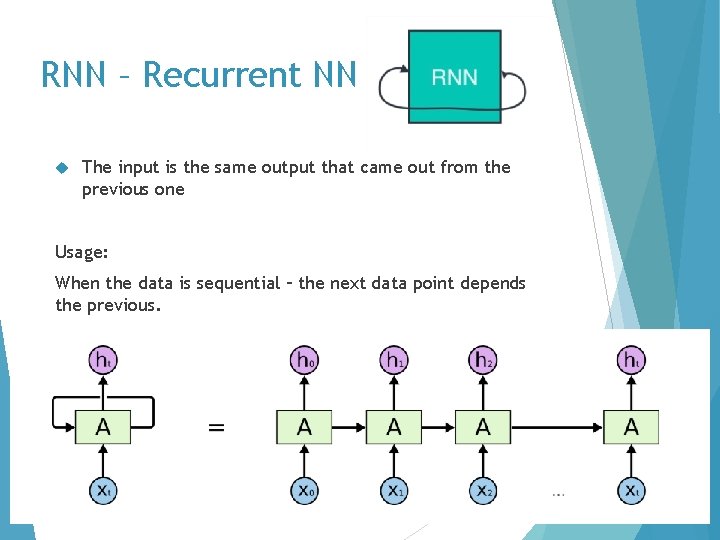
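The core operation of a convolutional layer is sliding a small kernel over the image and taking a weighted sum at each position. Below is a minimal sketch under stated assumptions: stride 1, no padding, a single channel, and no kernel flipping (the cross-correlation form that deep learning libraries typically call convolution). The image and kernel values are illustrative.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out

# A 3x4 image that is dark on the left and bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A 2x2 kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, kernel)
print(feature_map)   # [[0.0, 2.0, 0.0], [0.0, 2.0, 0.0]]
```

The same four kernel weights are reused at every position, which is why a convolutional layer needs far fewer parameters than a fully connected one on the same image.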

RNN – Recurrent NN. The output of the previous step is fed back in as part of the input to the next step. Usage: when the data is sequential, i.e. the next data point depends on the previous ones.

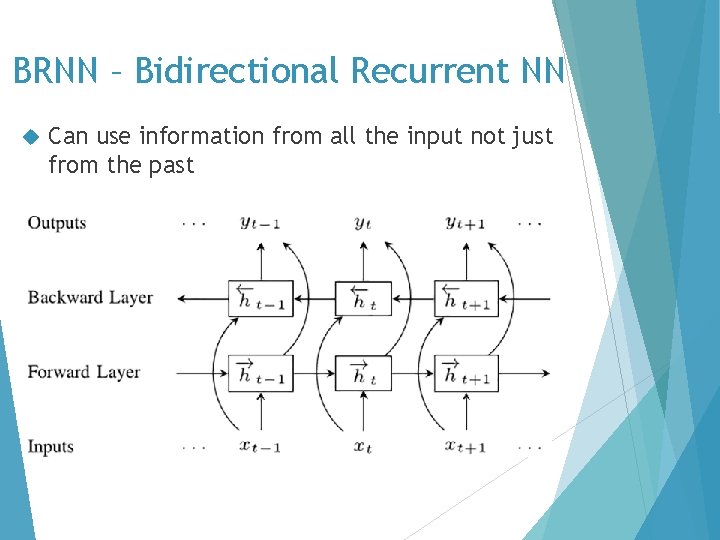

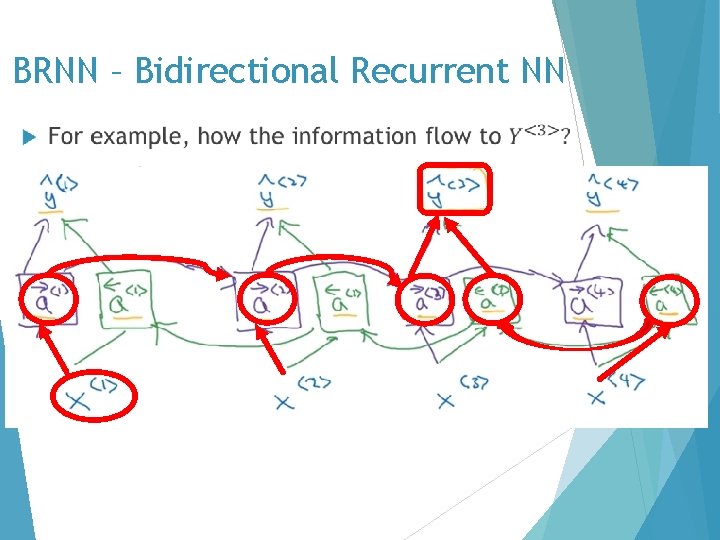
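The recurrence can be sketched as h_t = tanh(W_x x_t + W_h h_{t-1} + b), where h_{t-1} is the state carried over from the previous step. This is the common simple (Elman-style) RNN form, assumed here because the slides do not give the exact equations. The names and all weights are illustrative.

```python
import math

def rnn_step(x_t, h_prev, W_x, W_h, b):
    """One time step: combine the current input with the previous state."""
    h_t = []
    for i in range(len(b)):
        z = b[i]
        z += sum(W_x[i][k] * x_t[k] for k in range(len(x_t)))
        z += sum(W_h[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_t.append(math.tanh(z))
    return h_t

def run_rnn(sequence, h0, W_x, W_h, b):
    """Apply the same step function, with the same weights, along the sequence."""
    states = []
    h = h0
    for x_t in sequence:
        h = rnn_step(x_t, h, W_x, W_h, b)
        states.append(h)
    return states

# Illustrative setup: 1 input feature, 2 hidden units.
W_x = [[0.5], [-0.5]]
W_h = [[0.1, 0.0], [0.0, 0.1]]
b = [0.0, 0.0]
sequence = [[1.0], [0.0], [0.0]]   # a single impulse followed by zeros

states = run_rnn(sequence, [0.0, 0.0], W_x, W_h, b)
for t, h in enumerate(states):
    print(t, h)
```

The inputs at steps 1 and 2 are zero, yet the hidden state stays non-zero: the network remembers the earlier input through h_{t-1}.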

BRNN – Bidirectional Recurrent NN. Can use information from the whole input sequence, not just from the past.

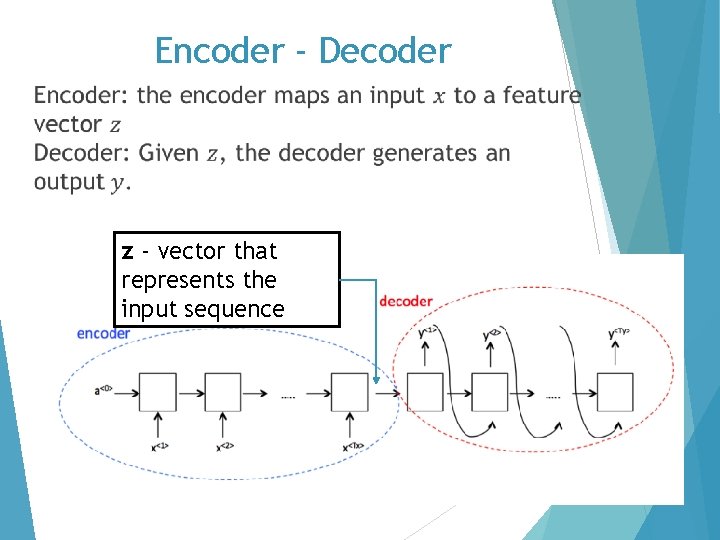
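A bidirectional RNN can be sketched as two independent recurrences, one reading the sequence left to right and one right to left, with the two states at each position paired up. The version below uses a scalar hidden state and made-up weights to keep it short. It is an illustration of the idea, not the architecture in the figure.

```python
import math

def run_direction(xs, w_x, w_h):
    """Simple scalar RNN over xs, returning the state after each step."""
    h, states = 0.0, []
    for x in xs:
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states

def birnn(xs, fwd=(1.0, 0.5), bwd=(1.0, 0.5)):
    """Pair a forward pass with a backward pass at every position."""
    forward = run_direction(xs, *fwd)
    # Run on the reversed sequence, then flip back so index t lines up.
    backward = run_direction(xs[::-1], *bwd)[::-1]
    return list(zip(forward, backward))

states = birnn([0.0, 0.0, 1.0])   # the only non-zero input is at the end
for t, (f, b) in enumerate(states):
    print(t, f, b)
```

At position 0 the forward state is still 0.0 because it has only seen zeros, while the backward state is already non-zero because it has seen the later input.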

Encoder-Decoder z: a vector that represents the input sequence

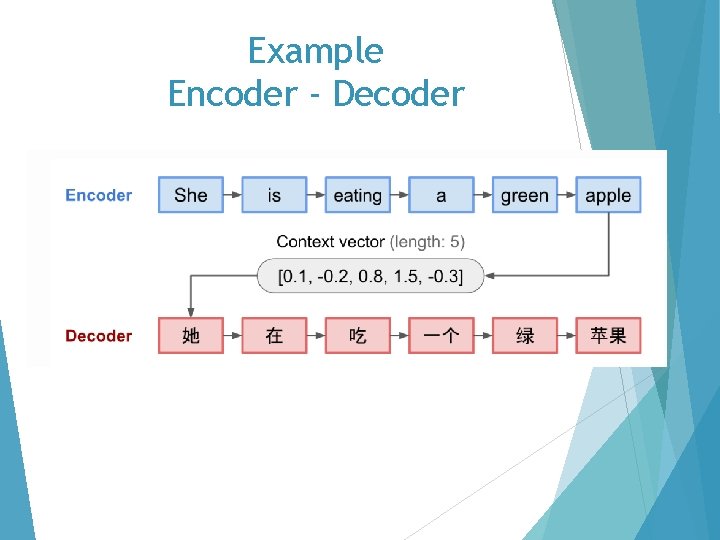

Example: Encoder-Decoder

Disadvantage of encoder-decoder: a fixed-length context vector. The whole input sequence, however long, has to be compressed into that one vector. And to solve this problem…

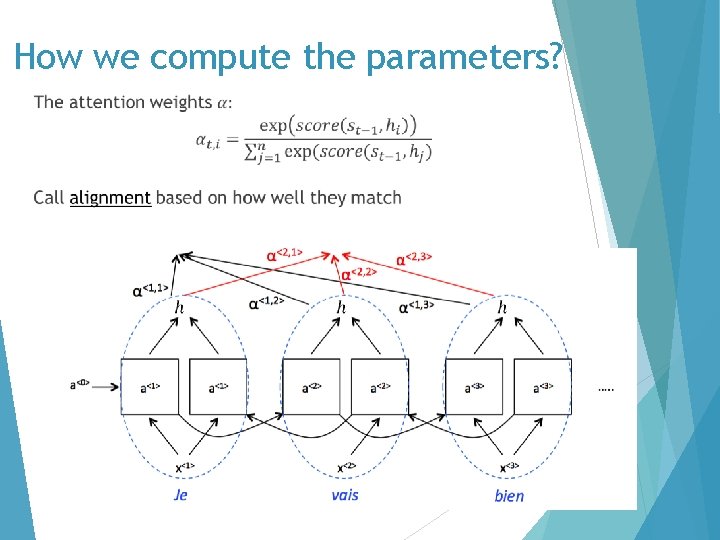

Attention seq2seq model. Attention is motivated by how we pay attention to different regions of an image or correlate words in one sentence.

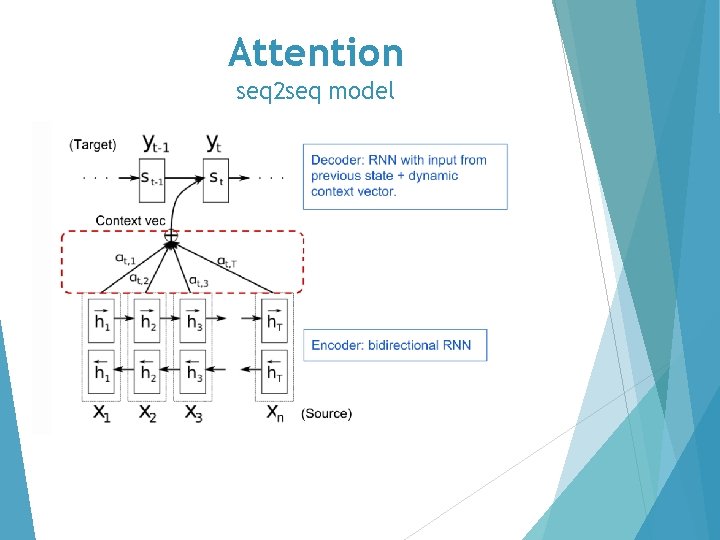

Attention seq2seq model

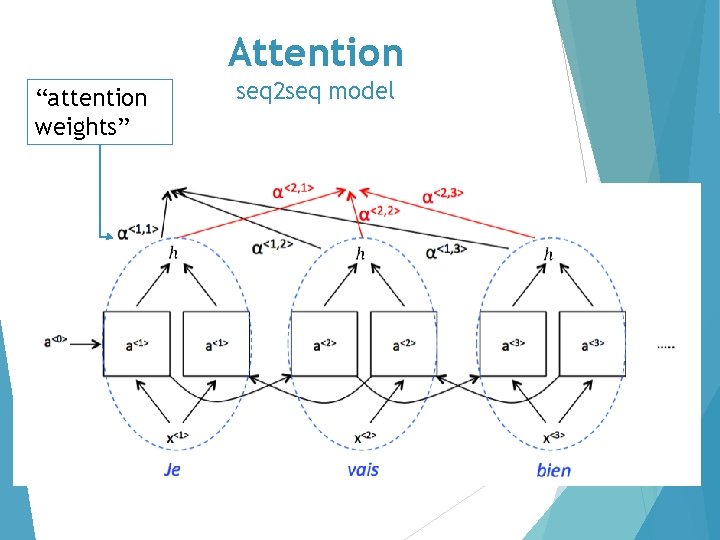

Attention seq2seq model: “attention weights”

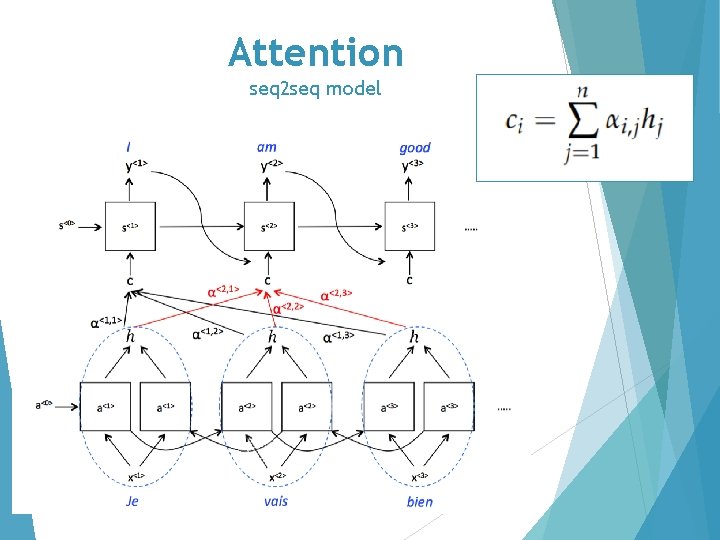

Attention seq2seq model

How do we compute the parameters?

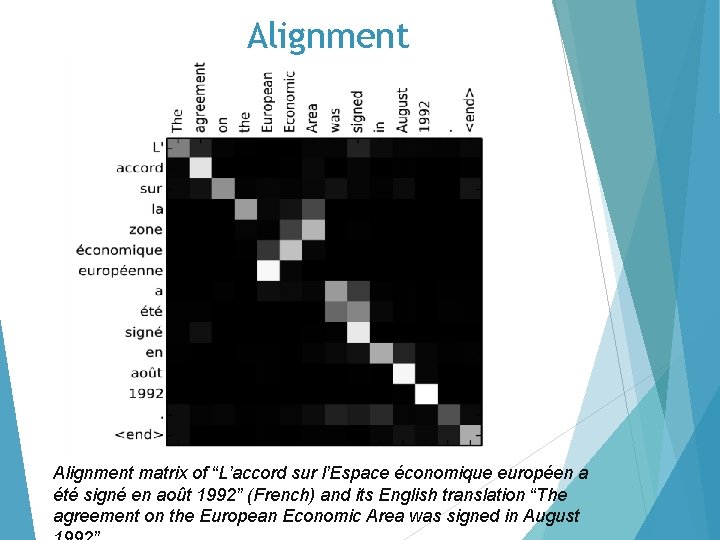
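One common way to compute the attention weights for a single decoder step: score each encoder state against the current decoder state, turn the scores into weights with a softmax, and take the weighted sum of the encoder states as the context vector. The sketch below assumes a plain dot product as the scoring function, which the slides do not specify. A small learned scoring network is another common choice. All names and numbers are illustrative.

```python
import math

def softmax(scores):
    m = max(scores)                       # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(decoder_state, encoder_states):
    """Return (attention weights, context vector) for one decoder step.

    Assumption: dot-product scoring between the decoder state and
    each encoder state.
    """
    scores = [
        sum(s * h for s, h in zip(decoder_state, h_j))
        for h_j in encoder_states
    ]
    weights = softmax(scores)             # one weight per input position
    dim = len(encoder_states[0])
    context = [
        sum(w * h_j[d] for w, h_j in zip(weights, encoder_states))
        for d in range(dim)
    ]
    return weights, context

encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # one vector per input word
decoder_state = [2.0, 0.0]

weights, context = attention(decoder_state, encoder_states)
print(weights)   # positions 0 and 2 get equal, larger weights than position 1
print(context)
```

Because the context vector is recomputed at every decoder step, the decoder is no longer limited to one fixed-length summary of the input.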

Alignment matrix of “L’accord sur l’Espace économique européen a été signé en août 1992” (French) and its English translation “The agreement on the European Economic Area was signed in August

Attention Examples: changing the format of a date

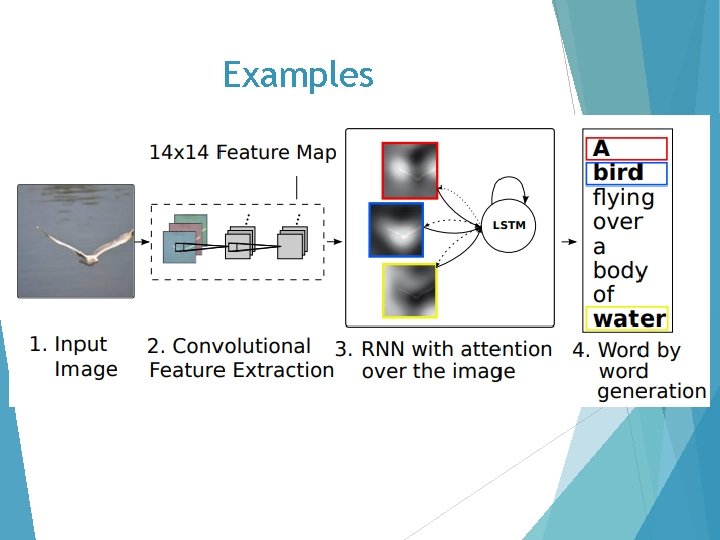

Examples

Examples of mistakes

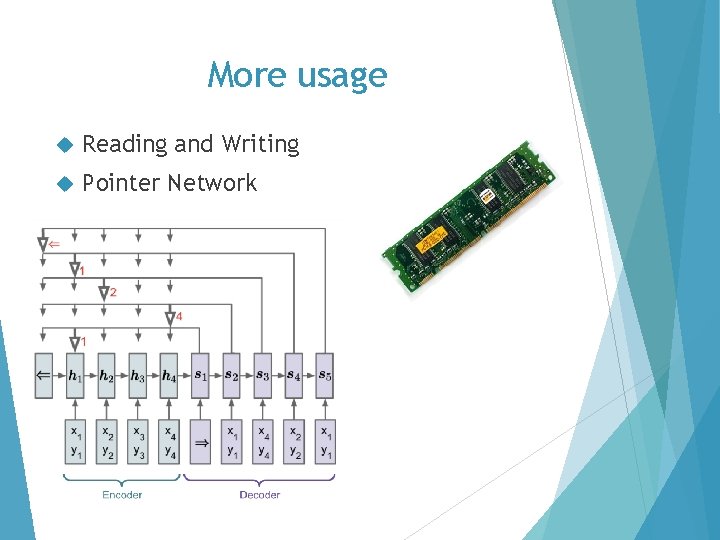

More usages: Reading and Writing; Pointer Network

Resources
https://papers.nips.cc/paper/7181-attention-is-all-you-need.pdf
https://www.youtube.com/watch?v=ILsA4nyG7I0
https://www.youtube.com/watch?v=UNmqTiOnRfg
https://medium.com/machine-learning-bites/deeplearning-series-attention-model-and-speech-recognition-deeb50632152
https://medium.com/machine-learning-bites/deeplearning-series-sequence-to-sequence-architectures-4c4ca89e5654
https://www.wolfram.com/mathematica/new-in-9/legends/identify-people-in-a-photo.html
https://medium.com/data-science-bootcamp/understand-the-softmax-function-in-minutes-f3a59641e86d
http://www.programmersought.com/article/8238112623/
https://lilianweng.github.io/lil-log/2018/06/24/attention.html#born-for-translation
http://proceedings.mlr.press/v37/xuc15.pdf

Thank you
