Agent-Based Modeling & Simulation
CAS 2 Group, DIS@ANL
Veena S. Mellarkod

Develops Computational Modeling Techniques
The mission of the Center for Complex Adaptive Agent Systems Simulation (CAS 2) is to:
- Research and develop agent-based modeling and simulation (ABMS) and other computational techniques for understanding complex adaptive systems
- Apply these techniques to problems of interest and importance

DIS CAS 2 believes that the development and application of these techniques will lead to better-informed decision making.

Copyright 2004 Argonne National Laboratory

Members of CAS 2
- Dr. Charles M. Macal, Director
- Dr. David L. Sallach, Associate Director
- Michael J. North, Deputy Director
- Tom Howe
- Intern students
- Programmers

Topics
- Agent-Based Modeling and Simulation (ABMS)
- ABMS examples
- An Occupation Dynamics Model
- Interpretive Mechanisms in ABMS

What is ABMS?
- Given a set of agents and environments, and their behaviors, ABMS creates electronic laboratories that simulate the agents’ decisions and interactions
- Individual and environment behavior is given as rules
- ABMS can show how a system evolves through time, using only the behaviors of the individual agents (local interactions)
- The global consequence is called the emergent behavior

Complex Adaptive Systems
- ABMS can be used to build complex adaptive systems
- A Complex Adaptive System (CAS) is made up of agents that interact and reproduce while adapting to a changing environment
  - Economic markets with producers, distributors, and consumers
  - Social systems with people, groups, factions, and countries
  - Ecosystems with species, individuals, hives, and flocks

Features of CAS
- Nonlinearity Property:
  - Components or agents exchange resources or information in ways that are not simply additive
- Diversity Property:
  - Agents/groups of agents differentiate from one another over time
- Aggregation Property:
  - A group of agents is treated as a single agent at a higher level
- Flows Mechanism:
  - Resources/information are exchanged between agents such that the resources can be repeatedly forwarded from agent to agent

Features of CAS – cont.
- Tagging Mechanism:
  - The presence of identifiable flags that let agents identify the traits of other agents
- Internal Models Mechanism:
  - Formal, informal, or implicit representations of the world that are embedded within agents
- Building Blocks Mechanism:
  - Used when an agent participates in more than one kind of interaction where each interaction is a building block for larger activities

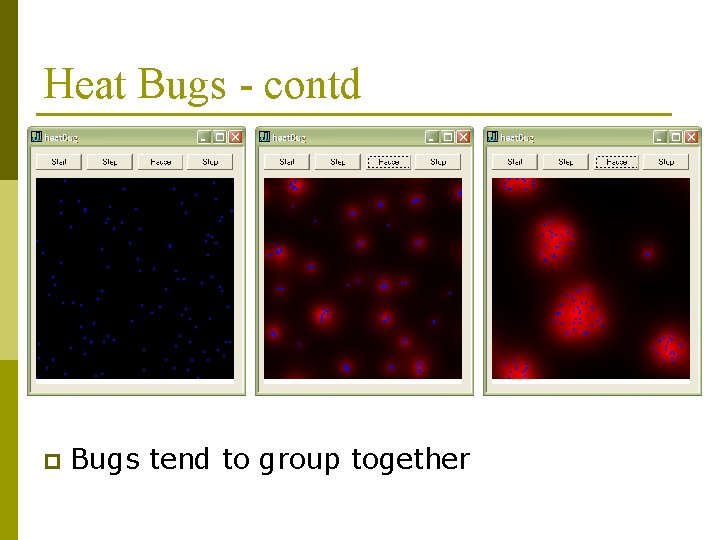

Heat Bug Example
- Agents: heat bugs
- Environment: cells/grids
- Agents occupy cells
- Environment behavior: heat dissipation
- Agent behaviors:
  - Emit constant heat
  - Sense the temperature of their own cell and of neighboring cells
  - Move to a neighboring cell if it is unoccupied and more comfortable
- At every tick (time step), bugs emit heat, heat diffuses in the environment, and bugs move within their neighborhood to a more comfortable place
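
The tick described above can be sketched in a few lines of Python. This is only an illustration, not the Swarm or Repast heat-bugs code: the wrap-around grid, the four-cell neighborhood, the `ideal` temperature, and the `output` and `diffusion` values are all assumptions made for the example.

```python
NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))  # assumed 4-cell neighborhood

def step(heat, bugs, ideal=10.0, output=5.0, diffusion=0.5):
    """Advance the world by one tick; returns (new_heat, new_bugs).

    heat is a square list-of-lists of temperatures (emission is added to it
    in place), bugs is a list of distinct (row, col) positions.
    """
    n = len(heat)
    # 1. Each bug emits a constant amount of heat into its own cell.
    for r, c in bugs:
        heat[r][c] += output
    # 2. Heat diffuses: every cell keeps part of its heat and shares the rest
    #    equally with its four neighbors (the grid wraps at the edges).
    new_heat = [[0.0] * n for _ in range(n)]
    for r in range(n):
        for c in range(n):
            new_heat[r][c] += heat[r][c] * (1 - diffusion)
            share = heat[r][c] * diffusion / 4
            for dr, dc in NEIGHBORS:
                new_heat[(r + dr) % n][(c + dc) % n] += share
    # 3. Each bug moves to the unoccupied neighboring cell whose temperature
    #    is closest to its ideal, but only if that beats its current cell.
    occupied = set(bugs)
    new_bugs = []
    for r, c in bugs:
        best = (r, c)
        for dr, dc in NEIGHBORS:
            cand = ((r + dr) % n, (c + dc) % n)
            if cand in occupied:
                continue
            if (abs(new_heat[cand[0]][cand[1]] - ideal)
                    < abs(new_heat[best[0]][best[1]] - ideal)):
                best = cand
        occupied.discard((r, c))
        occupied.add(best)
        new_bugs.append(best)
    return new_heat, new_bugs
```

Calling `step` repeatedly on a grid of zeros with a handful of bugs is enough to watch the positions change over time; whether and how quickly bugs cluster depends on the assumed parameters.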

Heat Bugs – cont.
- Bugs tend to group together

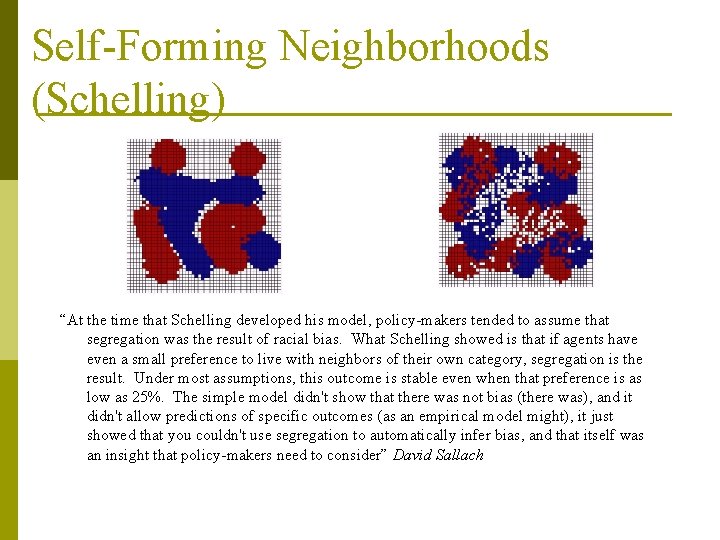

An early exemplar from Thomas Schelling: Segregation Model
- Two categories of people (e.g., red, blue) on a grid
  - Each category has a preference for a minimum percentage of neighbors of the same kind
  - If the current location doesn’t meet an individual’s preferences, s/he migrates to a (randomly selected) adjacent empty square
- What is the lowest point that will produce an (artificial) society composed of discrete clusters?
  - Clusters occur even when individuals of both types are happy to be in a significant minority
- Policy implications:
  - Segregation as an aggregate effect can be produced without bias
  - Macro effects from micro interaction may manifest a “tipping point”
  - Even in an extremely simple model, individual interaction can produce counterintuitive macroscopic effects

Self-Forming Neighborhoods (Schelling)

“At the time that Schelling developed his model, policy-makers tended to assume that segregation was the result of racial bias. What Schelling showed is that if agents have even a small preference to live with neighbors of their own category, segregation is the result. Under most assumptions, this outcome is stable even when that preference is as low as 25%. The simple model didn't show that there was not bias (there was), and it didn't allow predictions of specific outcomes (as an empirical model might), it just showed that you couldn't use segregation to automatically infer bias, and that itself was an insight that policy-makers need to consider.”

David Sallach
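
A minimal Python sketch of the segregation model follows, for readers who want to experiment with the preference threshold. It is not Schelling's original procedure or any toolkit's demo: the eight-cell neighborhood, the non-wrapping grid, the treatment of isolated agents as content, and all function names are assumptions chosen for the example.

```python
import random

MOORE = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]

def neighbors(grid, r, c):
    """Coordinates of the up-to-eight cells adjacent to (r, c)."""
    n = len(grid)
    return [(r + dr, c + dc) for dr, dc in MOORE
            if 0 <= r + dr < n and 0 <= c + dc < n]

def like_fraction(grid, r, c):
    """Share of occupied neighboring cells that hold the same category."""
    occupied = [grid[rr][cc] for rr, cc in neighbors(grid, r, c) if grid[rr][cc]]
    if not occupied:
        return 1.0  # assumption: an agent with no neighbors is content
    return sum(1 for x in occupied if x == grid[r][c]) / len(occupied)

def make_grid(size=20, empty_frac=0.2, seed=0):
    """Random grid of 'R', 'B', and None (empty) cells."""
    rng = random.Random(seed)
    return [[None if rng.random() < empty_frac else rng.choice("RB")
             for _ in range(size)] for _ in range(size)]

def sweep(grid, threshold, rng):
    """Visit agents in random order; unhappy ones move to a random adjacent empty cell."""
    n = len(grid)
    cells = [(r, c) for r in range(n) for c in range(n) if grid[r][c]]
    rng.shuffle(cells)
    moves = 0
    for r, c in cells:
        if like_fraction(grid, r, c) >= threshold:
            continue  # preference met, stay put
        empties = [(rr, cc) for rr, cc in neighbors(grid, r, c)
                   if grid[rr][cc] is None]
        if empties:
            rr, cc = rng.choice(empties)
            grid[rr][cc], grid[r][c] = grid[r][c], None
            moves += 1
    return moves

def simulate(grid, threshold=0.25, sweeps=20, seed=0):
    """Run a fixed number of sweeps in place and return the grid."""
    rng = random.Random(seed)
    for _ in range(sweeps):
        sweep(grid, threshold, rng)
    return grid

def mean_like(grid):
    """Average like-neighbor fraction over all agents, a crude clustering index."""
    n = len(grid)
    vals = [like_fraction(grid, r, c)
            for r in range(n) for c in range(n) if grid[r][c]]
    return sum(vals) / len(vals) if vals else 1.0
```

Comparing `mean_like` before and after `simulate` for several `threshold` values is one way to look for the tipping-point behavior the slides describe; the numbers obtained will depend on the assumptions listed above.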

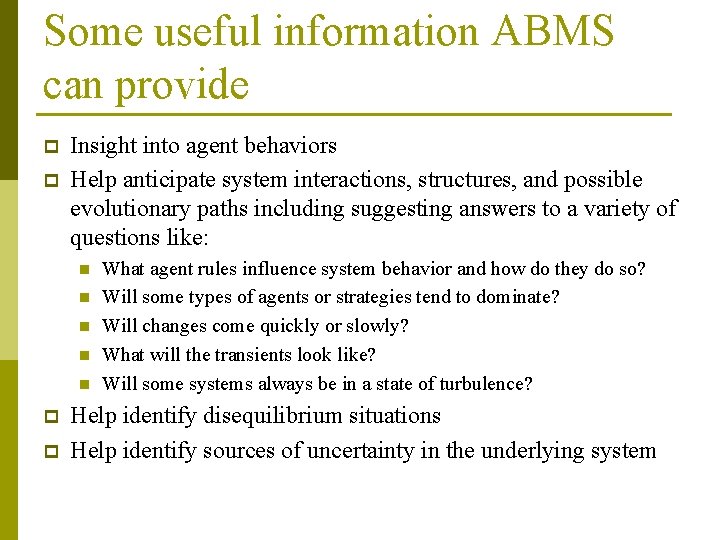

Some useful information ABMS can provide
- Insight into agent behaviors
- Help anticipate system interactions, structures, and possible evolutionary paths, including suggesting answers to a variety of questions like:
  - What agent rules influence system behavior and how do they do so?
  - Will some types of agents or strategies tend to dominate?
  - Will changes come quickly or slowly? What will the transients look like? Will some systems always be in a state of turbulence?
- Help identify disequilibrium situations
- Help identify sources of uncertainty in the underlying system

Other ABMS examples
- Simulate growth and behavior of bacteria
- Analyze parameters that influence the growth of forests
- Role of forest fire in species diversity
- Simulate public group behaviors to study emergency situations and the placement of exits in public places
- Simulate road traffic to plan new policies or build highways
- SIMPDEL: a model developed to analyze and predict the effects of alternative water management scenarios in South Florida on the long-term populations of white-tailed deer and Florida panther
- AgentCell: an environment with digital, virtual Escherichia coli (a single-celled bacterium) equipped with all the virtual components necessary to search for food. These digital E. coli contain their own chemotaxis system, which transmits the biochemical signals responsible for cellular locomotion. They also have flagella, the whiplike appendages that cells use for propulsion, and the motors to drive them.

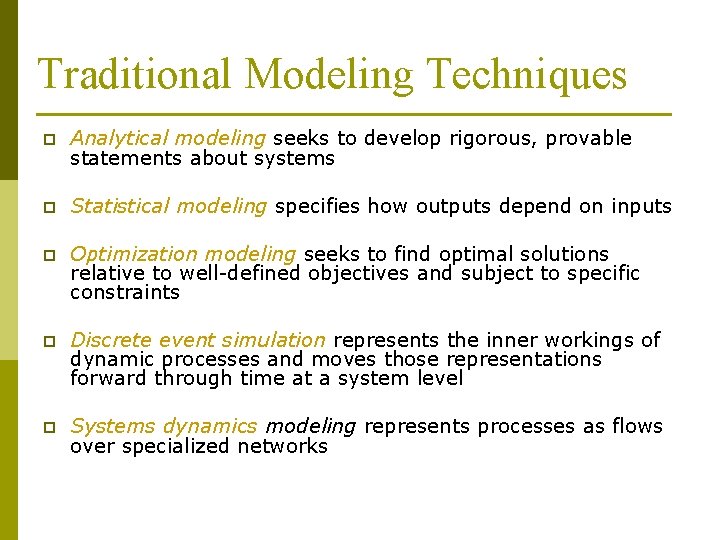

Traditional Modeling Techniques
- Analytical modeling seeks to develop rigorous, provable statements about systems
- Statistical modeling specifies how outputs depend on inputs
- Optimization modeling seeks to find optimal solutions relative to well-defined objectives and subject to specific constraints
- Discrete event simulation represents the inner workings of dynamic processes and moves those representations forward through time at a system level
- System dynamics modeling represents processes as flows over specialized networks

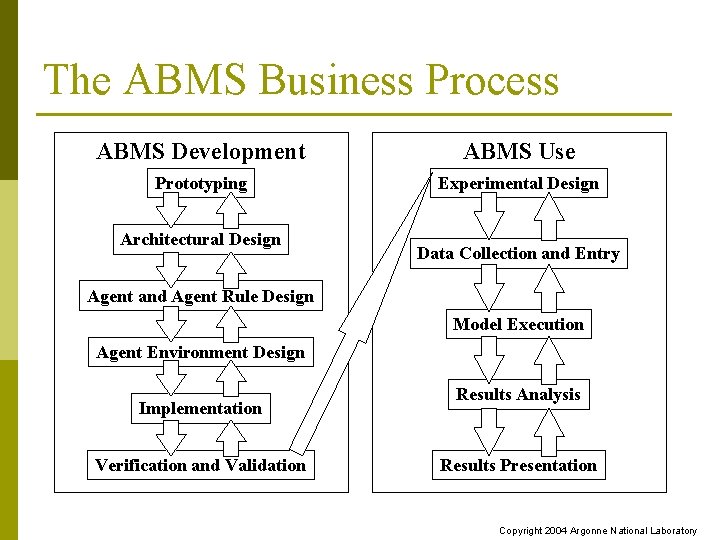

The ABMS Business Process
- ABMS Development
  - Prototyping
  - Architectural Design
  - Agent and Agent Rule Design
  - Agent Environment Design
  - Implementation
  - Verification and Validation
- ABMS Use
  - Experimental Design
  - Data Collection and Entry
  - Model Execution
  - Results Analysis
  - Results Presentation

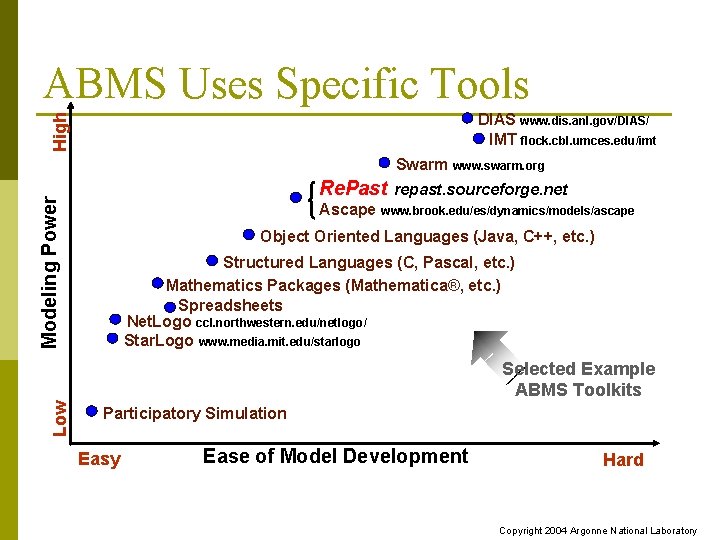

ABMS Uses Specific Tools
Selected example ABMS toolkits (figure; axes: Modeling Power, Low to High, and Ease of Model Development, Easy to Hard):
- DIAS: www.dis.anl.gov/DIAS/
- IMT: flock.cbl.umces.edu/imt
- Swarm: www.swarm.org
- RePast: repast.sourceforge.net
- Ascape: www.brook.edu/es/dynamics/models/ascape
- Object-oriented languages (Java, C++, etc.)
- Structured languages (C, Pascal, etc.)
- Mathematics packages (Mathematica®, etc.)
- Spreadsheets
- NetLogo: ccl.northwestern.edu/netlogo/
- StarLogo: www.media.mit.edu/starlogo
- Participatory simulation

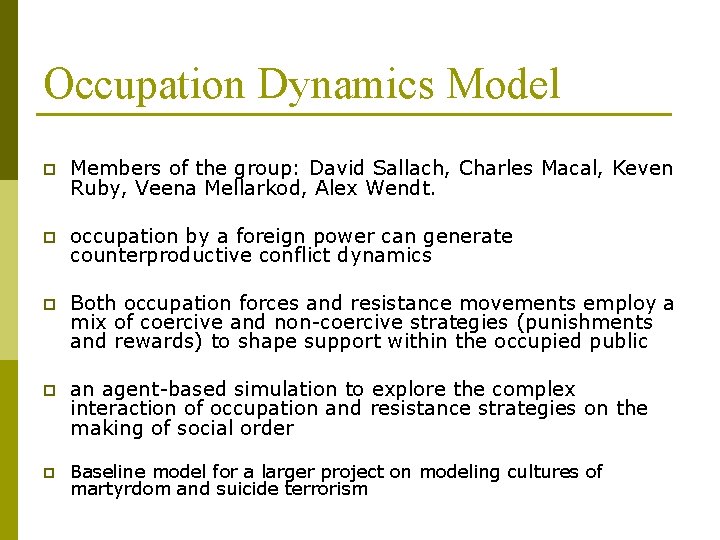

Occupation Dynamics Model
- Members of the group: David Sallach, Charles Macal, Keven Ruby, Veena Mellarkod, Alex Wendt
- Occupation by a foreign power can generate counterproductive conflict dynamics
- Both occupation forces and resistance movements employ a mix of coercive and non-coercive strategies (punishments and rewards) to shape support within the occupied public
- The model is an agent-based simulation that explores how the interaction of occupation and resistance strategies shapes social order
- It serves as the baseline model for a larger project on modeling cultures of martyrdom and suicide terrorism

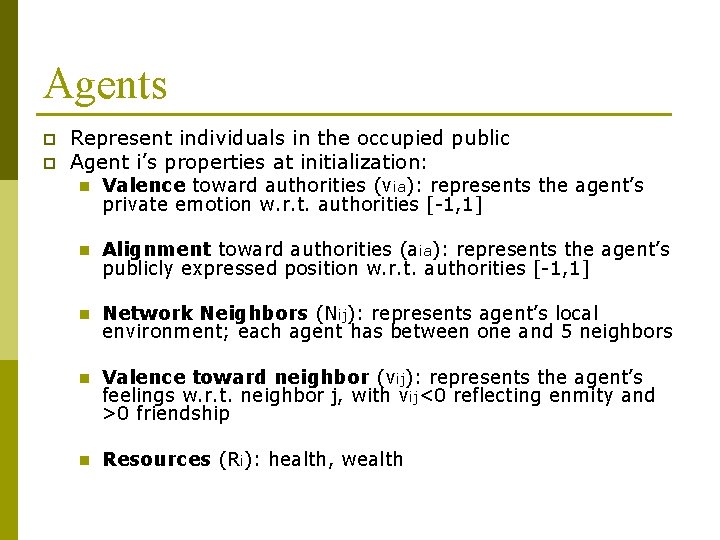

Agents
- Represent individuals in the occupied public
- Agent i’s properties at initialization:
  - Valence toward authorities (v_ia): represents the agent’s private emotion with respect to authorities, in [-1, 1]
  - Alignment toward authorities (a_ia): represents the agent’s publicly expressed position with respect to authorities, in [-1, 1]
  - Network neighbors (N_ij): represents the agent’s local environment; each agent has between one and five neighbors
  - Valence toward neighbor (v_ij): represents the agent’s feelings with respect to neighbor j, with v_ij < 0 reflecting enmity and v_ij > 0 friendship
  - Resources (R_i): health, wealth
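
The agent properties listed above map naturally onto a small record type. The Python sketch below is illustrative only (the baseline implementation mentioned later in the deck is in J): the uniform random initialization, the starting resource values of 1.0, the one-directional neighbor lists, and the names `Agent` and `init_population` are assumptions, not details taken from the model.

```python
import random
from dataclasses import dataclass

AUTHORITIES = ("OA", "CA")  # occupation authority and counter authority

@dataclass
class Agent:
    valence: dict           # v_ia: private feeling toward each authority, in [-1, 1]
    alignment: dict         # a_ia: publicly expressed position, in [-1, 1]
    neighbor_valence: dict  # v_ij keyed by neighbor index j; the keys form N_ij
    resources: dict         # R_i: health and wealth

def init_population(n, seed=0):
    """Create n agents (n >= 2), each with one to five neighbors."""
    rng = random.Random(seed)
    agents = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        k = rng.randint(1, min(5, len(others)))  # between one and five neighbors
        agents.append(Agent(
            valence={a: rng.uniform(-1, 1) for a in AUTHORITIES},
            alignment={a: rng.uniform(-1, 1) for a in AUTHORITIES},
            neighbor_valence={j: rng.uniform(-1, 1) for j in rng.sample(others, k)},
            resources={"health": 1.0, "wealth": 1.0},  # placeholder starting values
        ))
    return agents
```

Keeping valence and alignment as separate fields reflects the distinction the slide draws between what an agent privately feels and what it publicly expresses.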

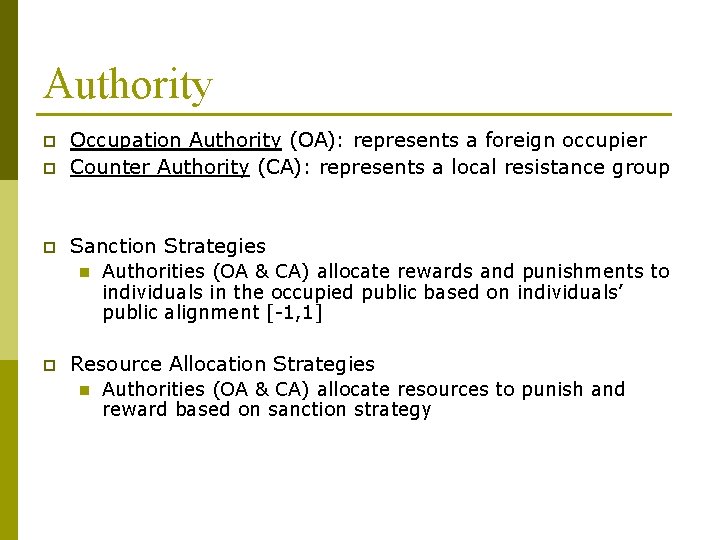

Authority
- Occupation Authority (OA): represents a foreign occupier
- Counter Authority (CA): represents a local resistance group
- Sanction Strategies
  - Authorities (OA & CA) allocate rewards and punishments to individuals in the occupied public based on individuals’ public alignment [-1, 1]
- Resource Allocation Strategies
  - Authorities (OA & CA) allocate resources to punish and reward based on sanction strategy

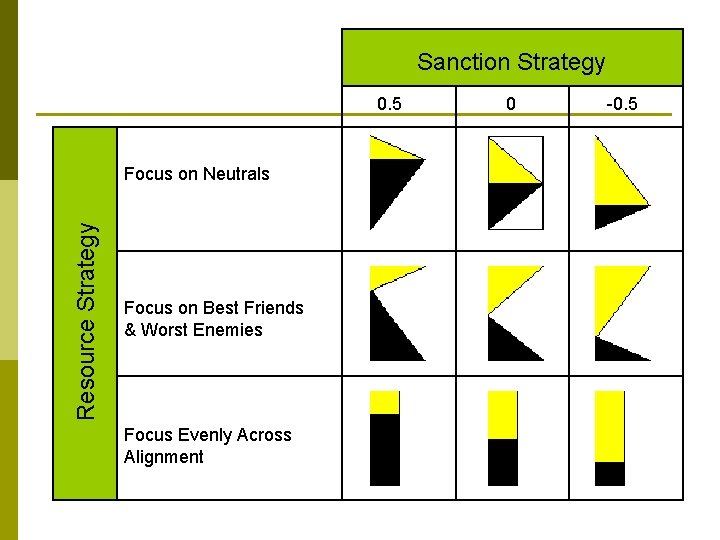

Sanction Strategy and Resource Strategy (figure; axis ticks at 0.5, 0, and -0.5). Resource strategies shown: Focus on Neutrals; Focus on Best Friends & Worst Enemies; Focus Evenly Across Alignment.

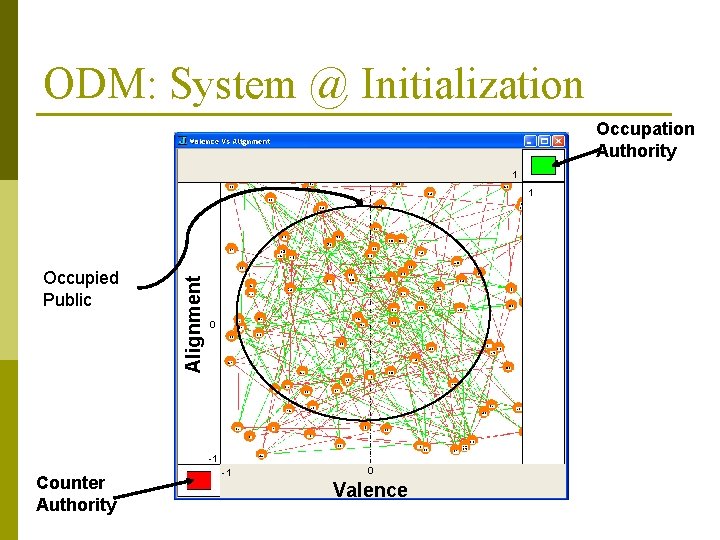

ODM: System @ Initialization (figure; the Occupation Authority, the Occupied Public, and the Counter Authority plotted by Valence and Alignment, each axis running from -1 to 1)

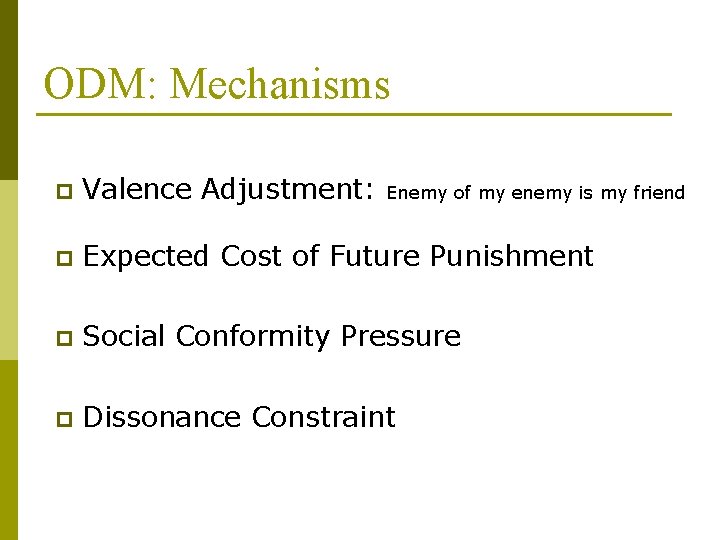

ODM: Mechanisms
- Valence Adjustment: Enemy of my enemy is my friend
- Expected Cost of Future Punishment
- Social Conformity Pressure
- Dissonance Constraint

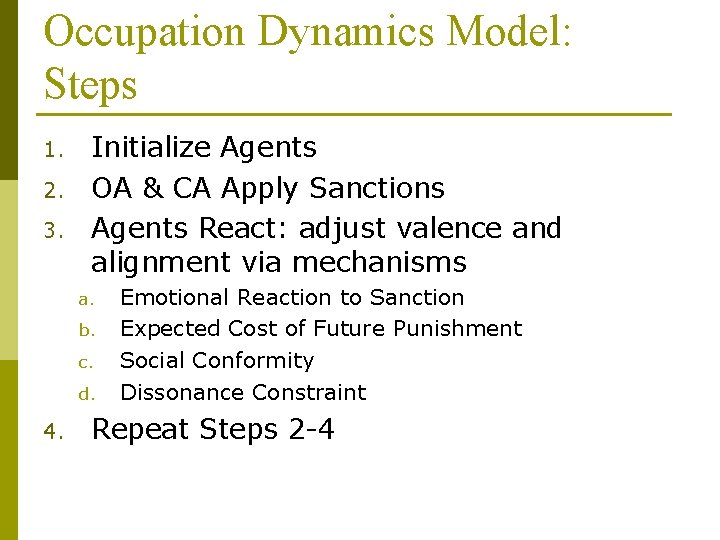

Occupation Dynamics Model: Steps
1. Initialize Agents
2. OA & CA Apply Sanctions
3. Agents React: adjust valence and alignment via mechanisms
   a. Emotional Reaction to Sanction
   b. Expected Cost of Future Punishment
   c. Social Conformity
   d. Dissonance Constraint
4. Repeat Steps 2-3
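
The control flow of these steps can be sketched as a short Python loop. The slides do not give the model's update equations, so every rule below (the sanction rule, the step sizes, the `max_gap` bound, and the single-authority simplification) is a hypothetical placeholder intended only to show how the four mechanisms chain together within a tick.

```python
import random

def clamp(x):
    """Keep valence and alignment inside [-1, 1]."""
    return max(-1.0, min(1.0, x))

def make_agents(n, seed=0):
    """Step 1: initialize agents (n >= 3) with random state and two neighbors each."""
    rng = random.Random(seed)
    return [{"valence": rng.uniform(-1, 1),
             "alignment": rng.uniform(-1, 1),
             "neighbors": rng.sample([j for j in range(n) if j != i], 2)}
            for i in range(n)]

def sanction(agent):
    """Hypothetical rule: reward public supporters, punish public opponents."""
    return 0.1 if agent["alignment"] >= 0 else -0.1

# Placeholder mechanisms; each receives (agent, sanction_received, all_agents).
def emotional_reaction(agent, s, agents):
    agent["valence"] = clamp(agent["valence"] + s)

def expected_cost(agent, s, agents):
    if s < 0:  # a punished agent nudges its public stance toward the authority
        agent["alignment"] = clamp(agent["alignment"] + 0.05)

def social_conformity(agent, s, agents):
    peers = [agents[j]["alignment"] for j in agent["neighbors"]]
    mean = sum(peers) / len(peers)
    agent["alignment"] = clamp(agent["alignment"] + 0.1 * (mean - agent["alignment"]))

def dissonance_constraint(agent, s, agents, max_gap=0.5):
    gap = agent["alignment"] - agent["valence"]
    if abs(gap) > max_gap:  # public stance may not drift too far from private feeling
        sign = 1 if gap > 0 else -1
        agent["alignment"] = clamp(agent["valence"] + sign * max_gap)

MECHANISMS = (emotional_reaction, expected_cost, social_conformity, dissonance_constraint)

def run(agents, ticks):
    for _ in range(ticks):                         # step 4: repeat
        sanctions = [sanction(a) for a in agents]  # step 2: authority applies sanctions
        for agent, s in zip(agents, sanctions):    # step 3: agents react
            for mechanism in MECHANISMS:
                mechanism(agent, s, agents)
    return agents
```

Computing all sanctions before any agent reacts keeps the tick synchronous with respect to the authority; whether the actual model does this is not stated in the slides.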

Implementation
- An initial prototype implementation by Charles Macal in Mathematica
- The baseline implementation by Veena in J (an array programming language)

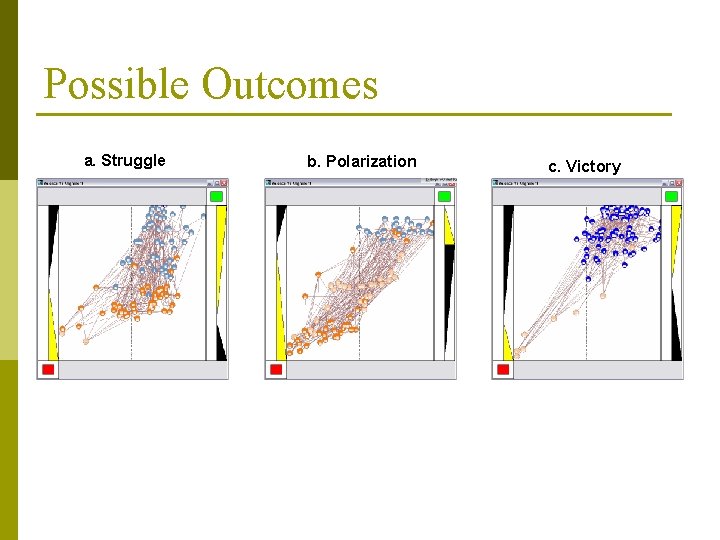

Possible Outcomes
a. Struggle
b. Polarization
c. Victory

Future Directions with ODM
- Analyze all 81 possible combinations of OA and CA strategies
- Introduce geo-cultural social structure
- Implement spatial models
- Introduce dynamic networks of neighbors (kinship, friends, enemies, spatial)
- Study the influence of multiple counter authorities

Interpretive Mechanisms in ABMS
- As of now, agents in ABMS have simple behaviors. More specifically, similar actions by two agents are interpreted in the same way.
- There can be more than one interpretation of an action. These interpretations depend on the situation in which the action is performed. Different situations give rise to different interpretations.
- To introduce, work with, understand, and reason flexibly about several interpretations of actions, we introduce interpretive mechanisms in ABMS.
- Meaning is what actually drives human action. Not only can actions be interpreted in different ways; two humans could (hypothetically) be in completely identical situations, facing the same actions by another, but because they interpret those actions differently, they will feel, reason, and respond differently. It is the meaning that they find in (or attribute to) a situation or (series of) events that determines how they will respond.

Three IA Assumptions
1. Agent simulation is a productive domain for experimentation with interpretive models
2. Natural agents constantly translate from continuous settings to discrete models
3. Agents dynamically maintain an orientation field with an emotional valence for every relevant actor, object and resource

Three IA Mechanisms
1. Prototype inference
2. Orientation accounting
3. Situation definition

- Based on specified assumptions, researchers can use these mechanisms, working in conjunction, to simulate situated meaning

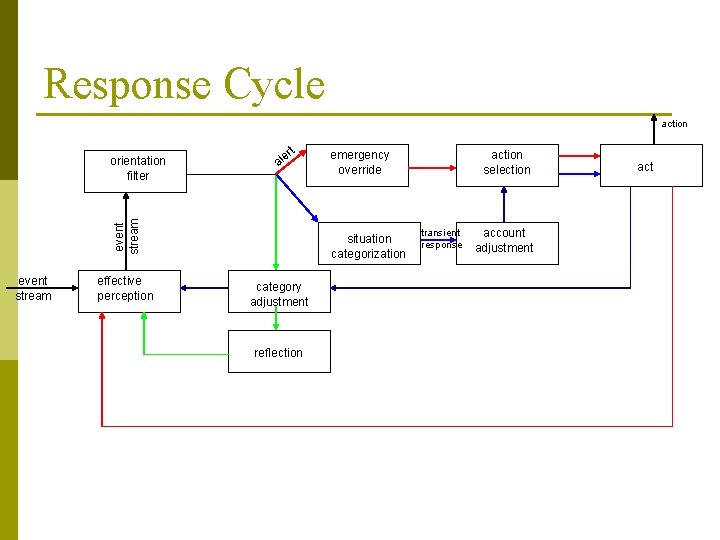

Response Cycle (figure; labeled elements: event stream, orientation filter, effective perception, emergency override, situation categorization, category adjustment, reflection, action selection, transient response, account adjustment, act)

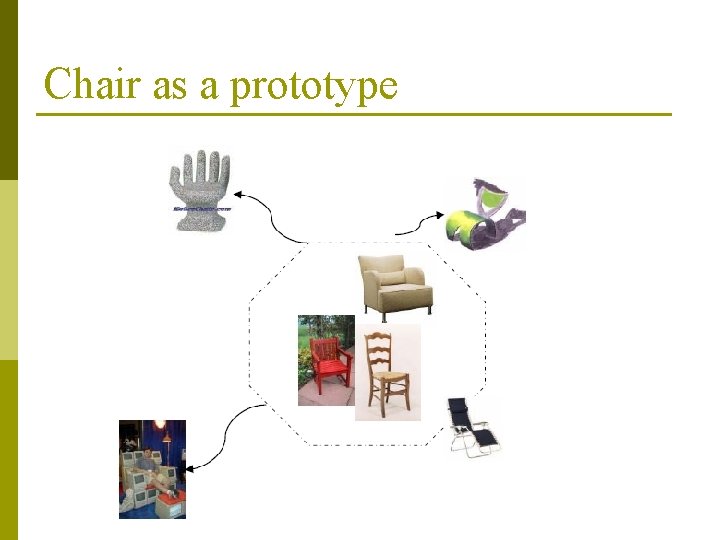

Prototype Concepts: A Radial Structure
- Prototype concepts are an empirical discovery of cognitive science
- Prototype structure is multidimensional and radial, with an (idealized) exemplar at the core and more idiosyncratic representatives along the radii
- Prototypes can refer to divergent types of phenomena, including objects, animals, people, emotions, circumstances, and abstract entities such as numbers

Chair as a prototype

Prototype Structure
- A prototype is composed of entities, events and/or relationships, where at least one is present
- Prototype structure is represented using Codd’s RM/T relational model

A Hypothetical Social Structure
- Agents have a religion and ethnicity
  - They can perceive others’ ethnicity and religion by interacting with them for some period of time
- Their actions depend, in part, on religious values, ethnic clusivity, and their own aggressiveness
- At each step, agents choose among three actions (request, help, deceive), or they can choose to do nothing

Actions: Requesting Resources
- When depleted, agents request resources
- They use simple rules to decide from whom to seek resources
- The rules depend on the prototype structures the agents maintain:
  - ask for resources from agents who normally help
  - ask for resources from agents who are #similar
  - ask for resources from agents who received help from self

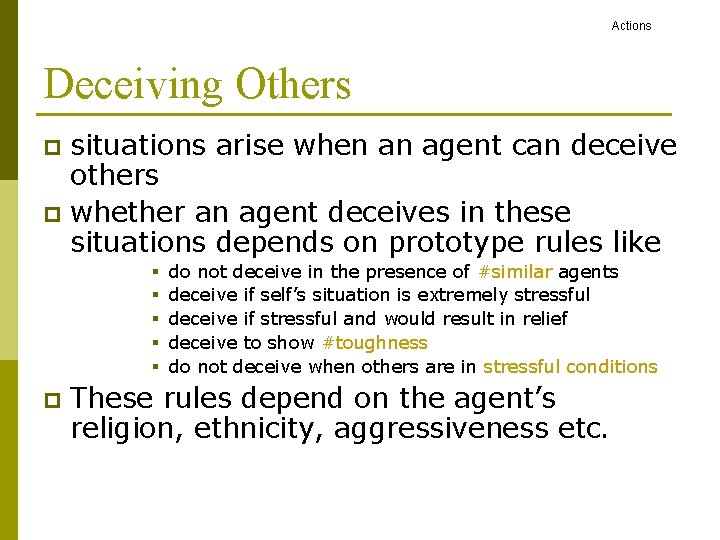

Actions: Deceiving Others
- Situations arise in which an agent can deceive others
- Whether an agent deceives in these situations depends on prototype rules like:
  - do not deceive in the presence of #similar agents
  - deceive if self’s situation is extremely stressful
  - deceive if stressful and would result in relief
  - deceive to show #toughness
  - do not deceive when others are in stressful conditions
- These rules depend on the agent’s religion, ethnicity, aggressiveness, etc.

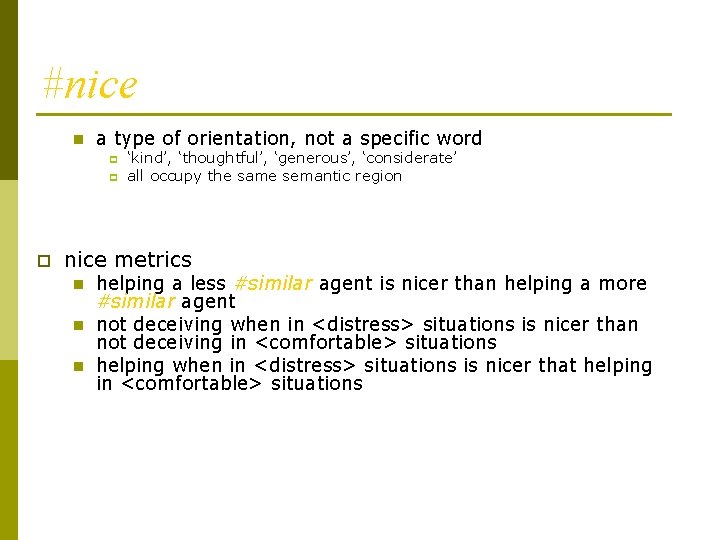

#nice
- a type of orientation, not a specific word
  - ‘kind’, ‘thoughtful’, ‘generous’, ‘considerate’ all occupy the same semantic region
- nice metrics
  - helping a less #similar agent is nicer than helping a more #similar agent
  - not deceiving when in <distress> situations is nicer than not deceiving in <comfortable> situations
  - helping when in <distress> situations is nicer than helping in <comfortable> situations

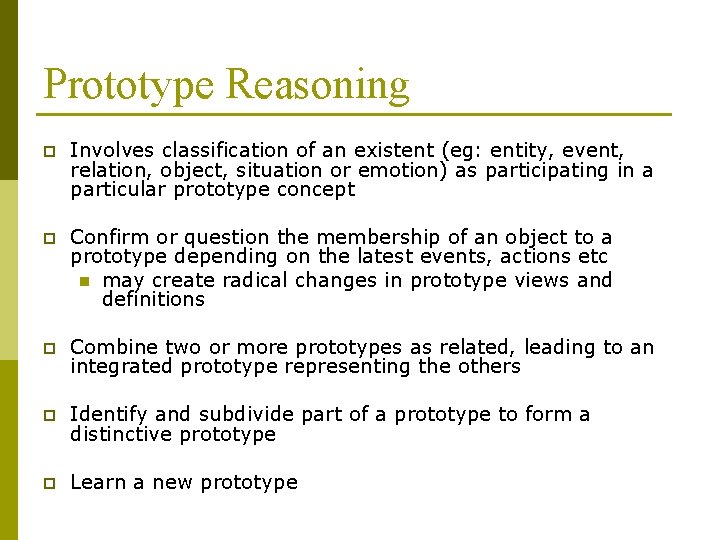

Prototype Reasoning
- Involves classification of an existent (e.g., entity, event, relation, object, situation or emotion) as participating in a particular prototype concept
- Confirm or question the membership of an object in a prototype depending on the latest events, actions, etc.
  - may create radical changes in prototype views and definitions
- Combine two or more prototypes as related, leading to an integrated prototype representing the others
- Identify and subdivide part of a prototype to form a distinctive prototype
- Learn a new prototype

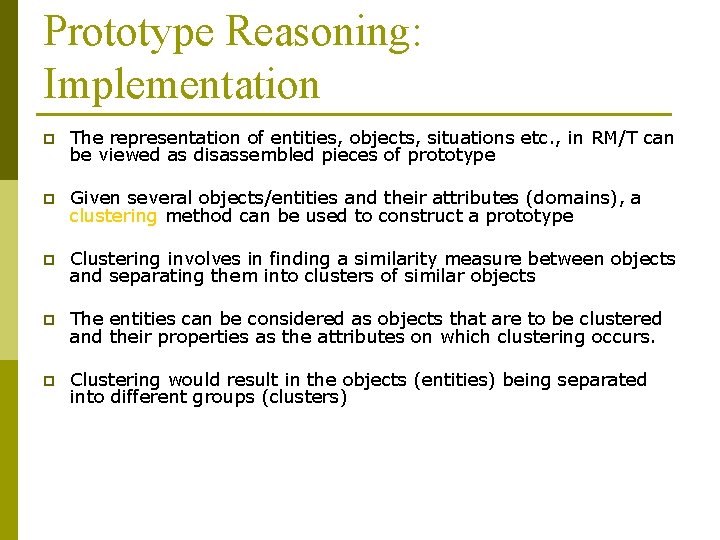

Prototype Reasoning: Implementation
- The representation of entities, objects, situations, etc., in RM/T can be viewed as disassembled pieces of a prototype
- Given several objects/entities and their attributes (domains), a clustering method can be used to construct a prototype
- Clustering involves finding a similarity measure between objects and separating them into clusters of similar objects
- The entities can be considered as objects that are to be clustered and their properties as the attributes on which clustering occurs
- Clustering would result in the objects (entities) being separated into different groups (clusters)

Prototype Clusters
- A set of objects that are similar in some respects
- Several clustering methods can be used
- Hierarchical clusters are considered
  - Initially every object is regarded as a separate cluster
  - At each step, the clustering merges two similar clusters
  - The similarity measure used is Euclidean distance
  - Alternatives are nearest- and farthest-neighbor measures
  - Gower’s coefficient handles multiple data types
  - Weighted measures express salience across domains
- A dynamic (floating) definition of a prototype core will be used
  - core and peripheral definitions change with the situation
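
Because agents in this deck carry both numeric traits (aggressiveness) and categorical ones (religion, ethnicity), a mixed-type similarity such as Gower's coefficient is worth seeing concretely. The sketch below is a basic unweighted form: numeric attributes score one minus the absolute difference divided by the attribute's range, categorical attributes score 1 on a match and 0 otherwise, and the per-attribute scores are averaged. The attribute layout and the function name are assumptions for the example.

```python
def gower_similarity(x, y, kinds, spans):
    """Unweighted Gower similarity between two records, in [0, 1].

    kinds[i] is "num" or "cat"; spans[i] is the range (max - min) of a
    numeric attribute over the data set and is ignored for categorical ones.
    """
    total = 0.0
    for xi, yi, kind, span in zip(x, y, kinds, spans):
        if kind == "num":
            # Numeric: 1 minus the range-normalized absolute difference.
            total += (1.0 - abs(xi - yi) / span) if span else 1.0
        else:
            # Categorical: 1 on an exact match, 0 otherwise.
            total += 1.0 if xi == yi else 0.0
    return total / len(x)

# Hypothetical agents: (aggressiveness, religion, ethnicity)
kinds = ("num", "cat", "cat")
spans = (1.0, None, None)
a = (0.2, "A", "X")
b = (0.7, "A", "Y")
print(gower_similarity(a, b, kinds, spans))  # (0.5 + 1 + 0) / 3 = 0.5
```

A dissimilarity for clustering can be taken as one minus this value.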

When to stop clustering?
- Prototype structure has a core and periphery represented by clusters of objects
- The clustering algorithm should return the objects separated into a finite number of clusters
- At what level do we stop the algorithm: how many clusters should the algorithm return?
- Use a heuristic consistent with bounded rationality
  - “The span of immediate memory imposes severe limitations on the amount of information that we are able to receive, process and remember” (George A. Miller)
- Clustering stops when the number of clusters reaches Miller’s magic number, 7 ± 2
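
The stopping rule can be made concrete with a small bottom-up clustering routine. This Python sketch assumes numeric feature vectors, Euclidean distance, and nearest-neighbor (single) linkage by default; the default target of 7 clusters is simply the midpoint of the 7 ± 2 range, and the function names are illustrative.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two equal-length numeric vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest_neighbor(c1, c2):
    """Single linkage: distance between the closest pair of members."""
    return min(euclidean(a, b) for a in c1 for b in c2)

def farthest_neighbor(c1, c2):
    """Complete linkage: distance between the most distant pair of members."""
    return max(euclidean(a, b) for a in c1 for b in c2)

def agglomerate(points, max_clusters=7, linkage=nearest_neighbor):
    """Merge the two closest clusters until at most max_clusters remain."""
    clusters = [[p] for p in points]  # initially every object is its own cluster
    while len(clusters) > max_clusters:
        pairs = ((i, j) for i in range(len(clusters))
                 for j in range(i + 1, len(clusters)))
        i, j = min(pairs, key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]]))
        merged = clusters.pop(j)      # j > i, so index i is unaffected by the pop
        clusters[i].extend(merged)
    return clusters
```

If fewer objects than `max_clusters` are supplied, no merging happens and each object is returned as its own cluster. This exhaustive pair search is quadratic per merge, which is fine for small agent memories but would need a smarter structure for large data sets.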

Prototype Blends
- Concepts blend to derive/define new objects, events, goals and situations
- When a single prototype cannot be used to describe a particular object, event or situation, blending of prototypes results
  - Two or more prototypes are combined to form a new prototype
  - Examples: green apple, respectful enemy
- The resulting prototype is a blend of the parent prototypes and represents a new object, event, emotion or situation

Detailed Blends vs. Fast Blends
- Detailed blends are performed by clustering the integrated data sets that result from applying RM/T join operators to several prototype structures
  - require longer periods of time
  - can be done during reflection
  - offer greater flexibility and expressiveness
- Fast blends involve merging at the cluster level; implementation involves intersecting two types of clusters according to a criterion
  - e.g., similar and nice
  - fast and dirty (sometimes)
  - transient responses use fast blends
  - not everything can be blended
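
A fast blend, read as an intersection of existing clusters, is easy to sketch in Python. The cluster labels and agent identifiers below are made up for the illustration, and representing clusters as sets is an assumption; an empty intersection is treated here as a pair that cannot be blended.

```python
def fast_blends(clusters_a, clusters_b):
    """Intersect every cluster of one prototype with every cluster of another.

    Each argument maps a cluster label to a set of member ids. Pairs with no
    members in common are omitted from the result.
    """
    blends = {}
    for label_a, members_a in clusters_a.items():
        for label_b, members_b in clusters_b.items():
            common = members_a & members_b
            if common:
                blends[(label_a, label_b)] = common
    return blends

# Hypothetical clusters over agents a1..a5
by_similarity = {"similar": {"a1", "a3", "a4"}, "dissimilar": {"a2", "a5"}}
by_niceness = {"nice": {"a3", "a4"}, "not_nice": {"a1", "a2", "a5"}}

blends = fast_blends(by_similarity, by_niceness)
print(blends[("similar", "nice")])  # the agents that are both similar and nice
```

No re-clustering is involved, which is why this style of blend suits transient responses, at the cost of being limited to combinations the existing clusters already support.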

Weighted Clusters
- Depending on the situation, some dimensions of a prototype are given more preference or salience than others
- Larger weights can be given to preferred dimensions, so that clustering groups together objects with higher similarity in those dimensions
  - e.g., similar in religion, similar in niceness
- Weights are functions of the agent’s preferences and impending situations and/or goals
- Weighted clusters can be blended; detailed joins of the prototypes allow flexibility
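
One common way to express salience is a weighted Euclidean distance, in which each dimension's squared difference is multiplied by a weight before summing. The Python sketch below shows how changing the weights changes which object counts as most similar; the two dimensions, the sample values, and the function names are assumptions for the example.

```python
import math

def weighted_distance(a, b, weights):
    """Euclidean distance with a non-negative salience weight per dimension."""
    return math.sqrt(sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights)))

def nearest(target, objects, weights):
    """Name of the object closest to target under the given weights."""
    return min(objects, key=lambda name: weighted_distance(target, objects[name], weights))

# Hypothetical dimensions: (religious difference, niceness difference)
me = (0.0, 0.0)
others = {"q": (0.1, 0.9), "r": (0.9, 0.1)}

print(nearest(me, others, weights=(1.0, 0.1)))  # religion salient: prints q
print(nearest(me, others, weights=(0.1, 1.0)))  # niceness salient: prints r
```

Passing a distance like this to a clustering routine in place of the unweighted one yields clusters that are tighter along the salient dimensions.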

Interpretive Heat Bugs
- The ordinary heat bugs example is extended to introduce interpretive mechanisms
- The example acts as a reference example for IA mechanisms
- I am currently working on an implementation of IHB in J

Conclusion
- A very nice experience

- Slides: 47