Advances in Metric Embedding Theory

Ofer Neiman, Ittai Abraham, Yair Bartal (Hebrew University)

Talk Outline

Current results:
- New method of embedding.
- New partition techniques.
- Constant average distortion.
- Extend notions of distortion.
- Optimal results for scaling embeddings.
- Tradeoff between distortion and dimension.

Work in progress:
- Low dimension embedding for doubling metrics.
- Scaling distortion into a single tree.
- Nearest neighbors preserving embedding.

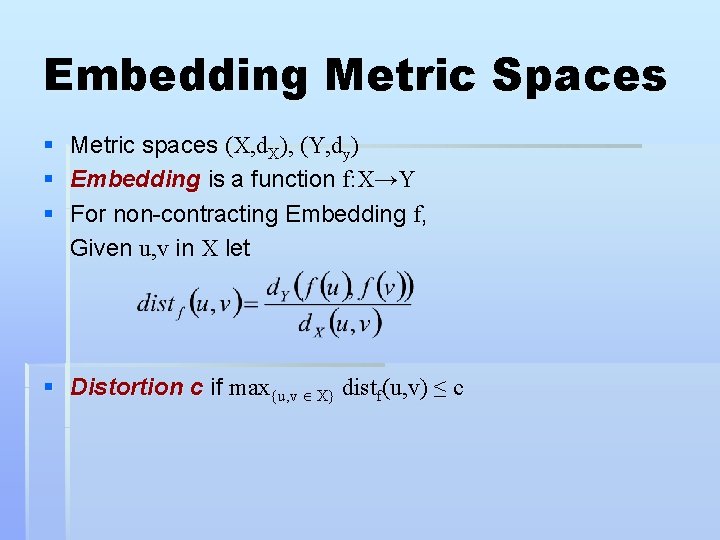

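Embedding Metric Spaces

- Metric spaces $(X, d_X)$, $(Y, d_Y)$.
- An embedding is a function $f: X \to Y$.
- For a non-contracting embedding $f$ and $u, v \in X$, let $\mathrm{dist}_f(u, v) = \frac{d_Y(f(u), f(v))}{d_X(u, v)}$.
- $f$ has distortion $c$ if $\max_{u, v \in X} \mathrm{dist}_f(u, v) \le c$.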
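The distortion definition can be checked numerically on a small example. The sketch below is illustrative only: the function name `distortion` and the 4-cycle example are assumptions, not part of the talk. It rescales the map so that it is non-contracting and then reports the largest pairwise ratio.

```python
from itertools import combinations

def distortion(points, d_X, d_Y, f):
    """Worst-case distortion of f, after rescaling f to be non-contracting."""
    ratios = [d_Y(f(u), f(v)) / d_X(u, v) for u, v in combinations(points, 2)]
    # Dividing by the smallest ratio rescales f so that no distance shrinks.
    return max(ratios) / min(ratios)

# Hypothetical example: the 4-cycle (shortest-path metric) mapped onto a line.
points = [0, 1, 2, 3]
d_cycle = lambda u, v: min(abs(u - v), 4 - abs(u - v))
d_line = lambda a, b: abs(a - b)
print(distortion(points, d_cycle, d_line, lambda u: float(u)))
```

Only the pair (0, 3) is stretched here: its cycle distance is 1 but its line distance is 3.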

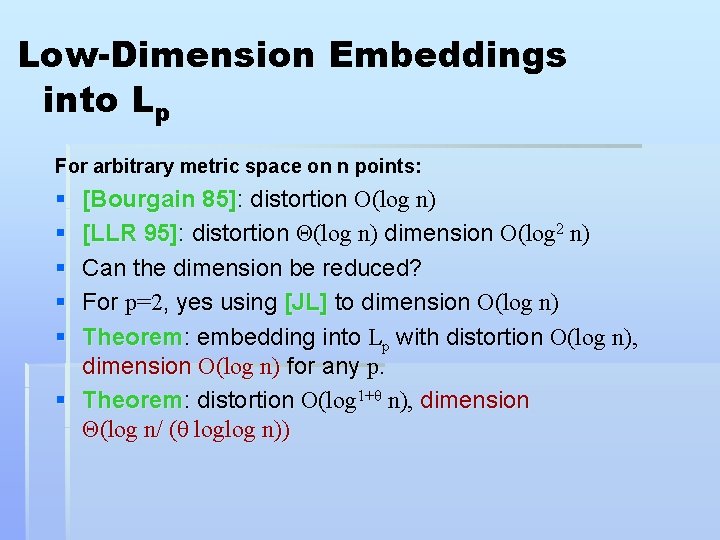

Low-Dimension Embeddings into $L_p$

For an arbitrary metric space on $n$ points:
- [Bourgain 85]: distortion $O(\log n)$.
- [LLR 95]: distortion $\Theta(\log n)$, dimension $O(\log^2 n)$.
- Can the dimension be reduced? For $p = 2$, yes: using [JL], to dimension $O(\log n)$.
- Theorem: embedding into $L_p$ with distortion $O(\log n)$ and dimension $O(\log n)$, for any $p$.
- Theorem: distortion $O(\log^{1+\theta} n)$, dimension $\Theta(\log n / (\theta \log\log n))$.

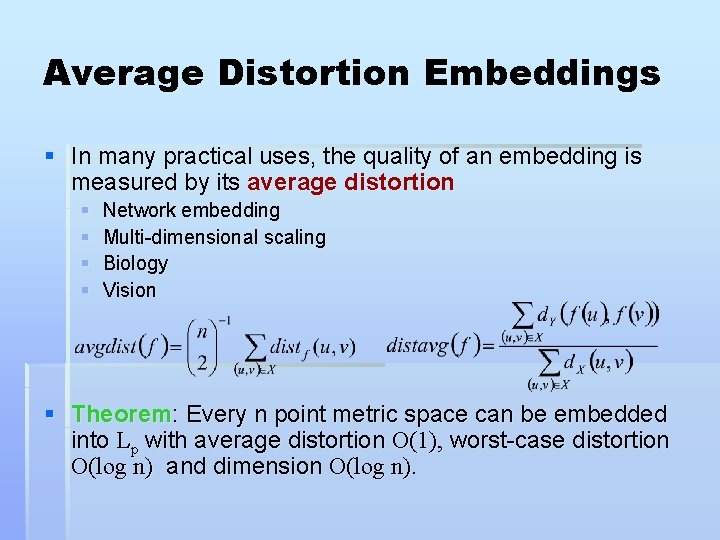

Average Distortion Embeddings

- In many practical uses, the quality of an embedding is measured by its average distortion:
  - Network embedding
  - Multi-dimensional scaling
  - Biology
  - Vision
- Theorem: Every $n$-point metric space can be embedded into $L_p$ with average distortion $O(1)$, worst-case distortion $O(\log n)$ and dimension $O(\log n)$.

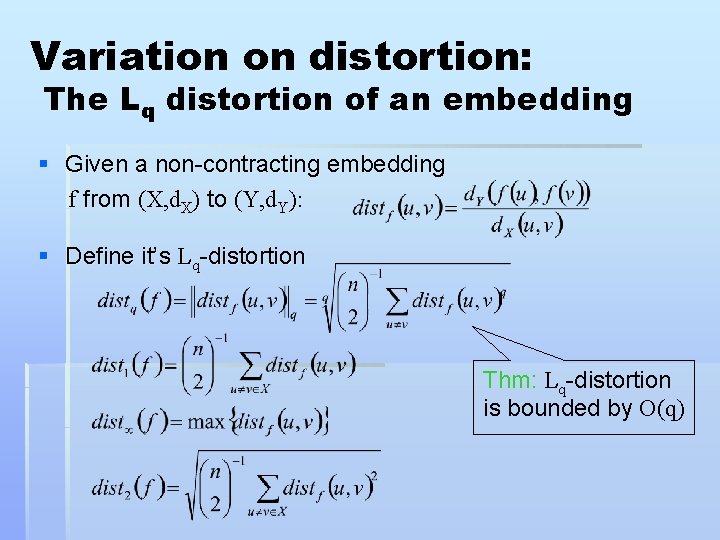

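Variation on Distortion: the $L_q$-Distortion of an Embedding

- Given a non-contracting embedding $f$ from $(X, d_X)$ to $(Y, d_Y)$, define its $L_q$-distortion as $\mathrm{dist}_q(f) = \left( \mathbb{E}\left[ \mathrm{dist}_f(u, v)^q \right] \right)^{1/q}$, where the expectation is over a uniformly random pair $u, v \in X$.
- Thm: The $L_q$-distortion is bounded by $O(q)$.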
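A minimal sketch of how an $L_q$-distortion could be computed. It assumes the usual reading of the definition (the $q$-th root of the average $q$-th power of the pairwise distortions, over uniformly chosen pairs) and assumes that `f` is already non-contracting. The function name and the example metric are hypothetical.

```python
from itertools import combinations

def lq_distortion(points, d_X, d_Y, f, q):
    """q-th moment of the pairwise distortions of a non-contracting map f."""
    vals = [d_Y(f(u), f(v)) / d_X(u, v) for u, v in combinations(points, 2)]
    if q == float("inf"):
        return max(vals)                      # worst-case distortion
    return (sum(v ** q for v in vals) / len(vals)) ** (1.0 / q)

# Hypothetical example: the 4-cycle mapped onto a line.
points = [0, 1, 2, 3]
d_cycle = lambda u, v: min(abs(u - v), 4 - abs(u - v))
d_line = lambda a, b: abs(a - b)
for q in (1, 2, float("inf")):
    print(q, lq_distortion(points, d_cycle, d_line, float, q))
```

With $q = 1$ this is the average distortion and with $q = \infty$ it is the worst-case distortion, so a single parameter interpolates between the two measures.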

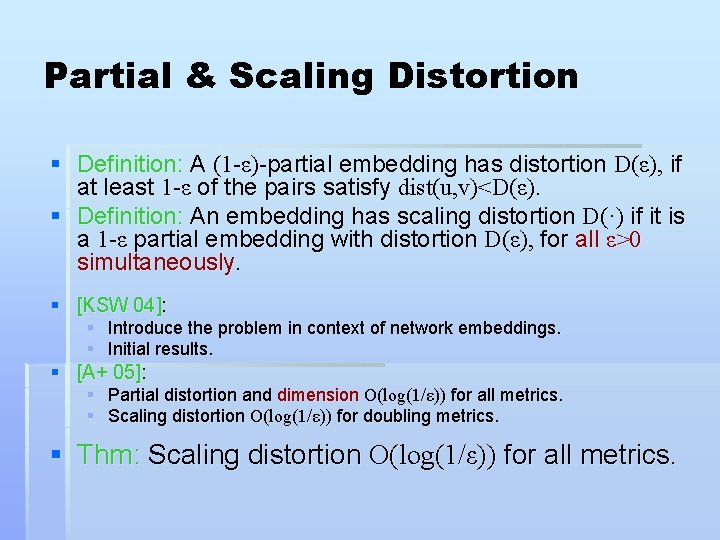

Partial & Scaling Distortion

- Definition: A $(1-\varepsilon)$-partial embedding has distortion $D(\varepsilon)$ if at least a $1-\varepsilon$ fraction of the pairs satisfy $\mathrm{dist}_f(u, v) < D(\varepsilon)$.
- Definition: An embedding has scaling distortion $D(\cdot)$ if it is a $(1-\varepsilon)$-partial embedding with distortion $D(\varepsilon)$, for all $\varepsilon > 0$ simultaneously.
- [KSW 04]:
  - Introduced the problem in the context of network embeddings.
  - Initial results.
- [A+ 05]:
  - Partial distortion and dimension $O(\log(1/\varepsilon))$ for all metrics.
  - Scaling distortion $O(\log(1/\varepsilon))$ for doubling metrics.
- Thm: Scaling distortion $O(\log(1/\varepsilon))$ for all metrics.
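To make the partial-distortion definition concrete, the sketch below computes, for a given list of pairwise distortions, the smallest bound that holds for all but an $\varepsilon$ fraction of the pairs. It uses a non-strict bound for simplicity. The function name and the sample values are assumptions for illustration only.

```python
def partial_distortion(pair_distortions, eps):
    """Smallest D such that all but an eps fraction of pairs have distortion <= D."""
    vals = sorted(pair_distortions)
    ignored = int(eps * len(vals))        # number of pairs we may ignore
    kept = max(1, len(vals) - ignored)    # always keep at least one pair
    return vals[kept - 1]

# Hypothetical pairwise distortions of some embedding.
pair_distortions = [1, 1, 3, 1, 1, 1]
for eps in (0.5, 0.2, 0.1):
    print(eps, partial_distortion(pair_distortions, eps))
```

Evaluating this function over a range of $\varepsilon$ values gives the empirical scaling-distortion profile of one fixed embedding.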

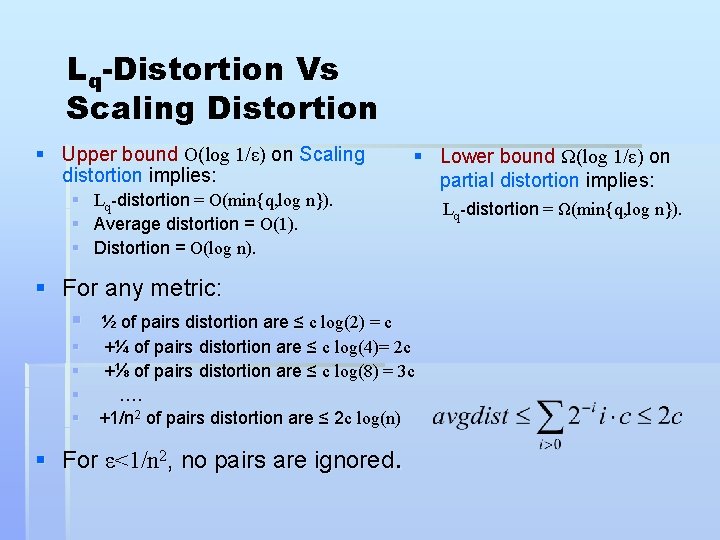

$L_q$-Distortion vs. Scaling Distortion

- An upper bound of $O(\log(1/\varepsilon))$ on scaling distortion implies:
  - $L_q$-distortion $= O(\min\{q, \log n\})$.
  - Average distortion $= O(1)$.
  - Distortion $= O(\log n)$.
- For any metric:
  - $\frac{1}{2}$ of the pairs have distortion $\le c \log 2 = c$,
  - a further $\frac{1}{4}$ of the pairs have distortion $\le c \log 4 = 2c$,
  - a further $\frac{1}{8}$ of the pairs have distortion $\le c \log 8 = 3c$,
  - and so on, down to
  - a further $1/n^2$ of the pairs, which have distortion $\le 2c \log n$.
  - For $\varepsilon < 1/n^2$, no pairs are ignored.
- A lower bound of $\Omega(\log(1/\varepsilon))$ on partial distortion implies:
  - $L_q$-distortion $= \Omega(\min\{q, \log n\})$.
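The constant average distortion follows by summing the layers listed above. A short derivation, assuming logarithms to base 2 and the constant $c$ from the scaling bound:

```latex
\[
\frac{1}{\binom{n}{2}} \sum_{\{u, v\}} \mathrm{dist}_f(u, v)
\;\le\; \sum_{i \ge 1} 2^{-i} \cdot c \log\left(2^{i}\right)
\;=\; c \sum_{i \ge 1} \frac{i}{2^{i}}
\;=\; 2c \;=\; O(1).
\]
```

The layer containing a $2^{-i}$ fraction of the pairs contributes at most $c \cdot i$ per pair, and the series $\sum_{i \ge 1} i / 2^i$ converges to 2, so the number of points does not enter the bound.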

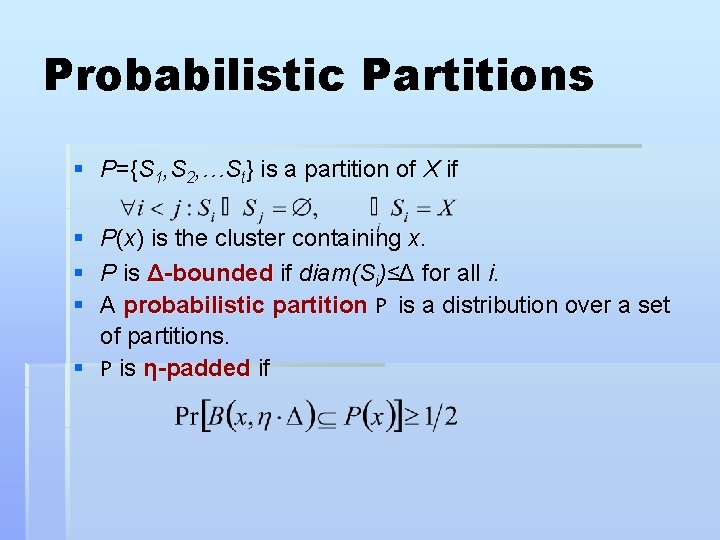

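Probabilistic Partitions

- $P = \{S_1, S_2, \ldots, S_t\}$ is a partition of $X$ if $\bigcup_i S_i = X$ and $S_i \cap S_j = \emptyset$ for all $i \ne j$.
- $P(x)$ is the cluster containing $x$.
- $P$ is $\Delta$-bounded if $\mathrm{diam}(S_i) \le \Delta$ for all $i$.
- A probabilistic partition $\mathcal{P}$ is a distribution over a set of partitions.
- $\mathcal{P}$ is $\eta$-padded if $\Pr[B(x, \eta\Delta) \subseteq P(x)] \ge \frac{1}{2}$ for all $x \in X$.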

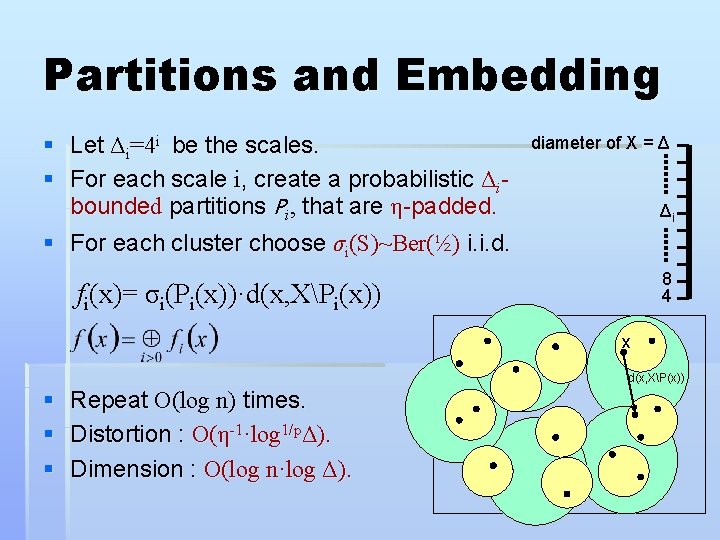

Partitions and Embedding

- Let $\Delta_i = 4^i$ be the scales, up to the diameter $\Delta$ of $X$.
- For each scale $i$, create a probabilistic $\Delta_i$-bounded partition $P_i$ that is $\eta$-padded.
- For each cluster $S$, choose $\sigma_i(S) \sim \mathrm{Ber}(\frac{1}{2})$ i.i.d.
- $f_i(x) = \sigma_i(P_i(x)) \cdot d(x, X \setminus P_i(x))$.
- Repeat $O(\log n)$ times.
- Distortion: $O(\eta^{-1} \cdot \log^{1/p} \Delta)$.
- Dimension: $O(\log n \cdot \log \Delta)$.
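A single coordinate $f_i$ can be sketched as follows. The sketch takes the partition as given: how to sample a $\Delta_i$-bounded padded partition is not shown here, and the function name `scale_coordinate`, the line metric and the two-cluster partition are assumptions for illustration.

```python
import random

def scale_coordinate(points, d, partition, rng):
    """One coordinate f_i(x) = sigma(P(x)) * d(x, X minus P(x)) for a given partition."""
    # partition: list of disjoint sets covering `points` (assumed to be supplied).
    sigma = [rng.randint(0, 1) for _ in partition]   # sigma(S) ~ Ber(1/2), i.i.d.
    f = {}
    for idx, cluster in enumerate(partition):
        outside = [y for y in points if y not in cluster]
        for x in cluster:
            # Distance from x to the complement of its cluster (0 if the cluster is all of X).
            dist_out = min((d(x, y) for y in outside), default=0.0)
            f[x] = sigma[idx] * dist_out
    return f

# Hypothetical example: six points on a line, split into two clusters.
points = list(range(6))
d = lambda a, b: abs(a - b)
partition = [{0, 1, 2}, {3, 4, 5}]
print(scale_coordinate(points, d, partition, random.Random(0)))
```

Each coordinate built this way never expands a distance, whichever values the coin flips take; the padding property is what is needed for the matching lower bound.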

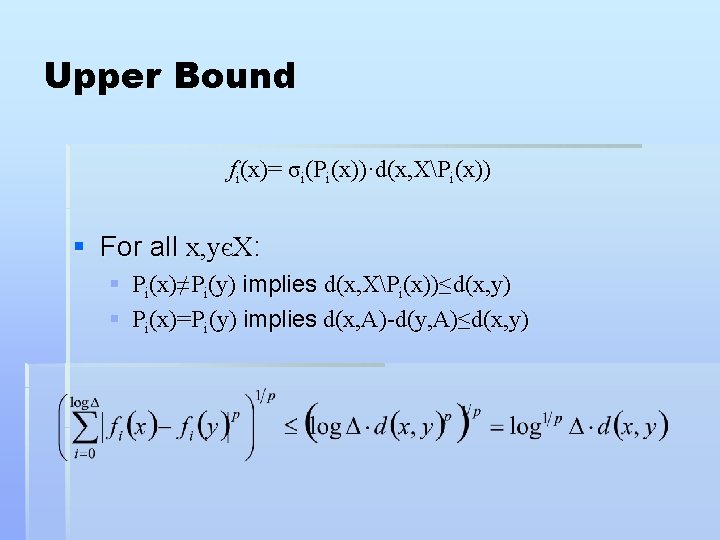

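Upper Bound

$f_i(x) = \sigma_i(P_i(x)) \cdot d(x, X \setminus P_i(x))$

For all $x, y \in X$:
- $P_i(x) \ne P_i(y)$ implies $d(x, X \setminus P_i(x)) \le d(x, y)$.
- $P_i(x) = P_i(y)$ implies $d(x, A) - d(y, A) \le d(x, y)$, for $A = X \setminus P_i(x)$.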

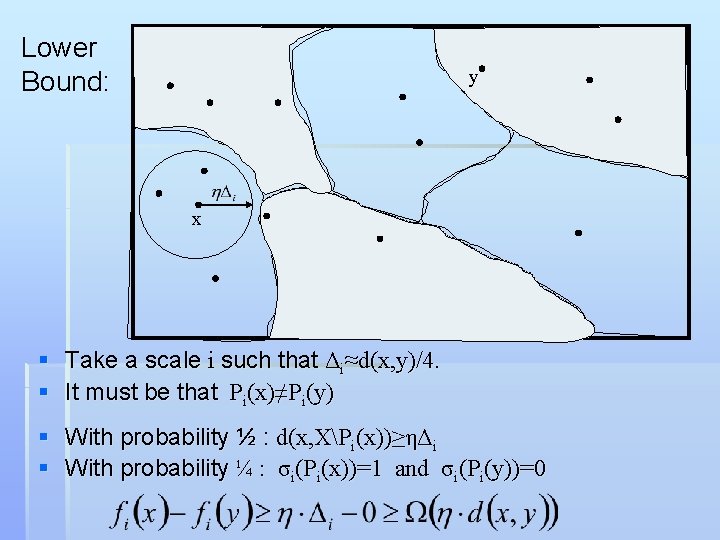

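Lower Bound

- Take a scale $i$ such that $\Delta_i \approx d(x, y)/4$.
- It must be that $P_i(x) \ne P_i(y)$, since clusters have diameter at most $\Delta_i < d(x, y)$.
- With probability $\frac{1}{2}$: $d(x, X \setminus P_i(x)) \ge \eta\Delta_i$.
- With probability $\frac{1}{4}$: $\sigma_i(P_i(x)) = 1$ and $\sigma_i(P_i(y)) = 0$.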

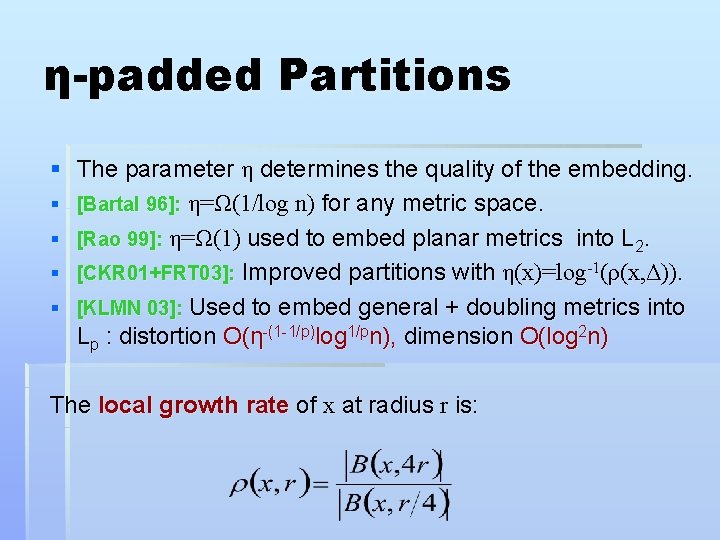

$\eta$-padded Partitions

- The parameter $\eta$ determines the quality of the embedding.
- [Bartal 96]: $\eta = \Omega(1/\log n)$ for any metric space.
- [Rao 99]: $\eta = \Omega(1)$, used to embed planar metrics into $L_2$.
- [CKR 01 + FRT 03]: Improved partitions with $\eta(x) = \log^{-1}(\rho(x, \Delta))$.
- [KLMN 03]: Used to embed general and doubling metrics into $L_p$: distortion $O(\eta^{-(1-1/p)} \log^{1/p} n)$, dimension $O(\log^2 n)$.
- The local growth rate of $x$ at radius $r$ is denoted $\rho(x, r)$.

![Uniform Probabilistic Partitions](http://slidetodoc.com/presentation_image_h/93a2952445e0a4989b2105ca9777635f/image-14.jpg)

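Uniform Probabilistic Partitions

- In a uniform probabilistic partition, $\eta: X \to [0, 1]$.
- All points in a cluster have the same padding parameter.
- Uniform partition lemma: There exists a uniform probabilistic $\Delta$-bounded partition such that for any $x \in X$, $\eta(x) = \log^{-1} \rho(v, \Delta)$, where $v$ minimizes $\rho(u, \Delta)$ over $u \in P(x)$.

(Figure: clusters $C_1$, $C_2$ containing points $v_1$, $v_2$, $v_3$, with per-cluster padding parameters $\eta(C_1)$, $\eta(C_2)$.)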

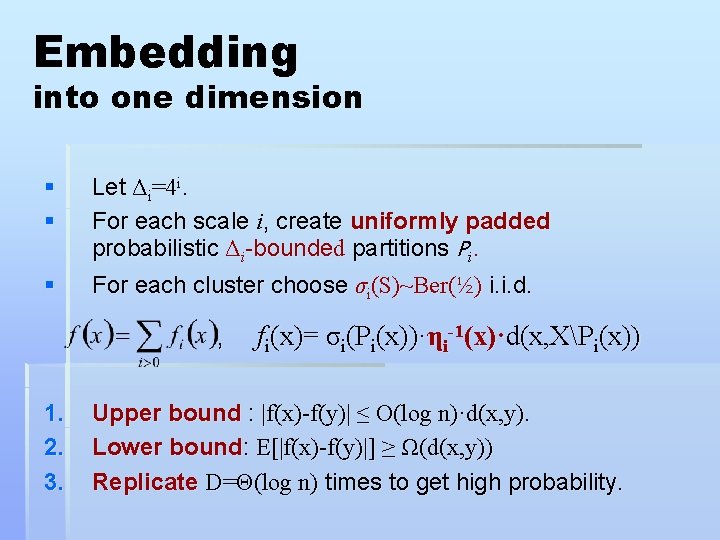

Embedding into One Dimension

- Let $\Delta_i = 4^i$.
- For each scale $i$, create uniformly padded probabilistic $\Delta_i$-bounded partitions $P_i$.
- For each cluster $S$, choose $\sigma_i(S) \sim \mathrm{Ber}(\frac{1}{2})$ i.i.d., and set $f_i(x) = \sigma_i(P_i(x)) \cdot \eta_i^{-1}(x) \cdot d(x, X \setminus P_i(x))$, with $f = \sum_i f_i$.

1. Upper bound: $|f(x) - f(y)| \le O(\log n) \cdot d(x, y)$.
2. Lower bound: $E[|f(x) - f(y)|] \ge \Omega(d(x, y))$.
3. Replicate $D = \Theta(\log n)$ times to get high probability.

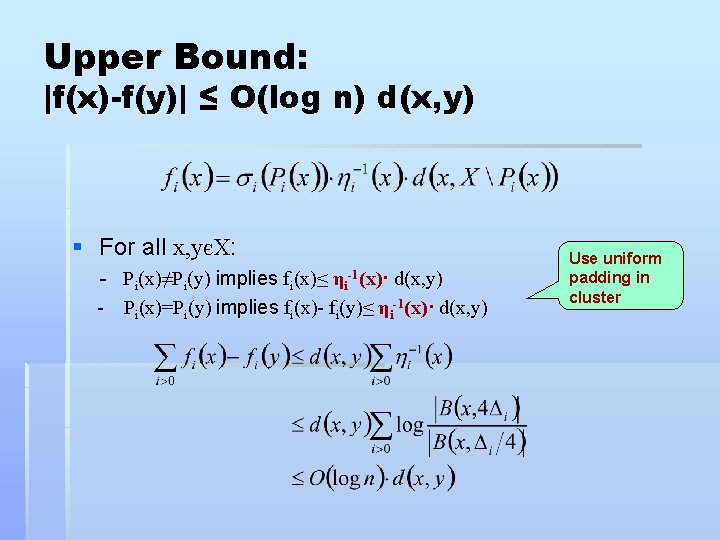

Upper Bound: $|f(x) - f(y)| \le O(\log n) \cdot d(x, y)$

For all $x, y \in X$:
- $P_i(x) \ne P_i(y)$ implies $f_i(x) \le \eta_i^{-1}(x) \cdot d(x, y)$.
- $P_i(x) = P_i(y)$ implies $f_i(x) - f_i(y) \le \eta_i^{-1}(x) \cdot d(x, y)$. (Use uniform padding in the cluster.)

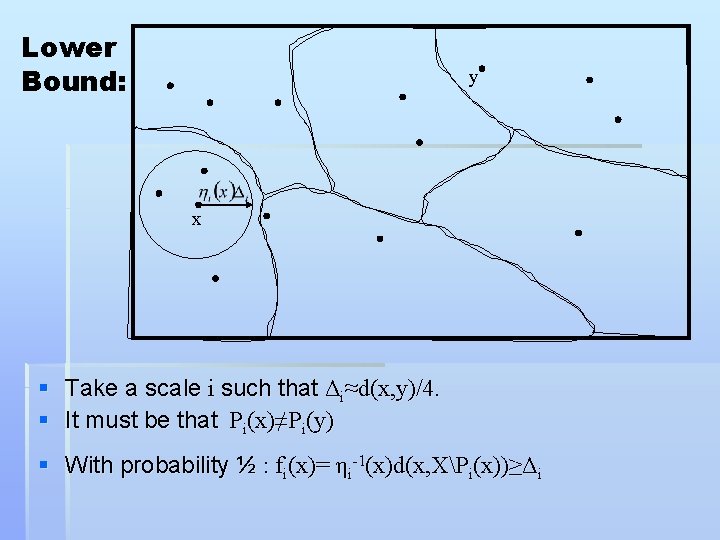

Lower Bound

- Take a scale $i$ such that $\Delta_i \approx d(x, y)/4$.
- It must be that $P_i(x) \ne P_i(y)$.
- With probability $\frac{1}{2}$: $f_i(x) = \eta_i^{-1}(x) \cdot d(x, X \setminus P_i(x)) \ge \Delta_i$.

![Lower bound](http://slidetodoc.com/presentation_image_h/93a2952445e0a4989b2105ca9777635f/image-18.jpg)

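Lower Bound: $E[|f(x) - f(y)|] \ge \Omega(d(x, y))$

Two cases, where $R$ denotes the contribution of the other scales to $|f(x) - f(y)|$:

1. $R < \Delta_i/2$:
   - With probability $\frac{1}{8}$: $\sigma_i(P_i(x)) = 1$ and $\sigma_i(P_i(y)) = 0$.
   - Then $f_i(x) \ge \Delta_i$ and $f_i(y) = 0$.
   - $|f(x) - f(y)| \ge \Delta_i/2 = \Omega(d(x, y))$.
2. $R \ge \Delta_i/2$:
   - With probability $\frac{1}{4}$: $\sigma_i(P_i(x)) = 0$ and $\sigma_i(P_i(y)) = 0$.
   - Then $f_i(x) = f_i(y) = 0$.
   - $|f(x) - f(y)| \ge \Delta_i/2 = \Omega(d(x, y))$.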

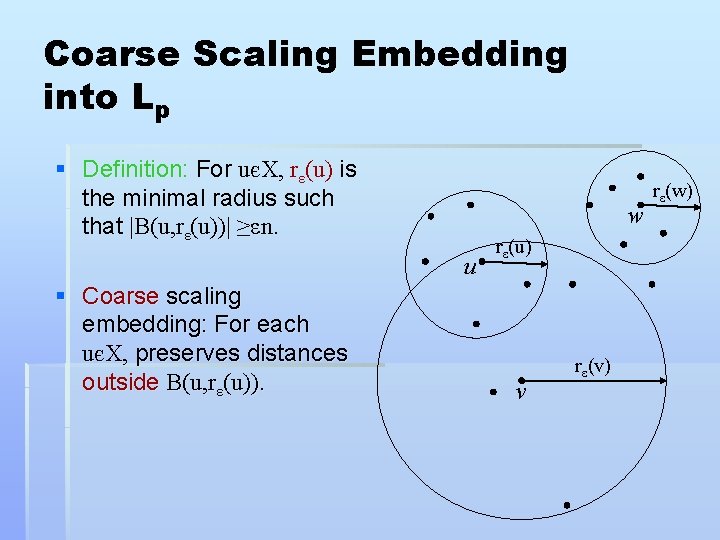

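Coarse Scaling Embedding into $L_p$

- Definition: For $u \in X$, $r_\varepsilon(u)$ is the minimal radius such that $|B(u, r_\varepsilon(u))| \ge \varepsilon n$.
- Coarse scaling embedding: For each $u \in X$, preserves distances outside $B(u, r_\varepsilon(u))$.

(Figure: points $u$, $v$, $w$ with balls of radii $r_\varepsilon(u)$, $r_\varepsilon(v)$, $r_\varepsilon(w)$.)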
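The radius $r_\varepsilon(u)$ is simply an order statistic of the distances from $u$. A minimal sketch, assuming closed balls and counting $u$ itself as a member of its ball; the function name `r_eps` and the line metric are hypothetical.

```python
import math

def r_eps(u, points, d, eps):
    """Minimal radius r such that the closed ball B(u, r) holds at least eps * n points."""
    n = len(points)
    need = max(1, math.ceil(eps * n))           # number of points the ball must contain
    dists = sorted(d(u, v) for v in points)     # includes d(u, u) = 0
    return dists[need - 1]

# Hypothetical example: eight points on a line.
points = list(range(8))
d = lambda a, b: abs(a - b)
print(r_eps(0, points, d, 0.5), r_eps(4, points, d, 0.5))
```

Points in dense regions get small radii and points in sparse regions get large ones, so the set of pairs covered by the guarantee adapts to the local density.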

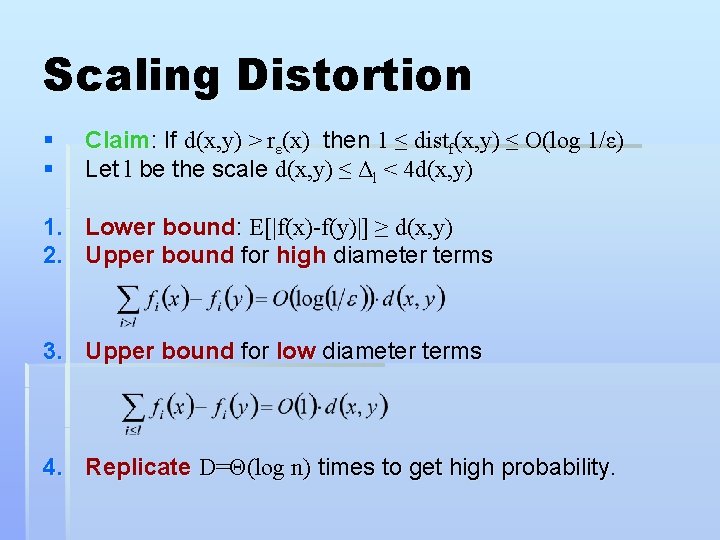

Scaling Distortion

- Claim: If $d(x, y) > r_\varepsilon(x)$ then $1 \le \mathrm{dist}_f(x, y) \le O(\log(1/\varepsilon))$.
- Let $l$ be the scale with $d(x, y) \le \Delta_l < 4 d(x, y)$.

1. Lower bound: $E[|f(x) - f(y)|] \ge \Omega(d(x, y))$.
2. Upper bound for high diameter terms.
3. Upper bound for low diameter terms.
4. Replicate $D = \Theta(\log n)$ times to get high probability.

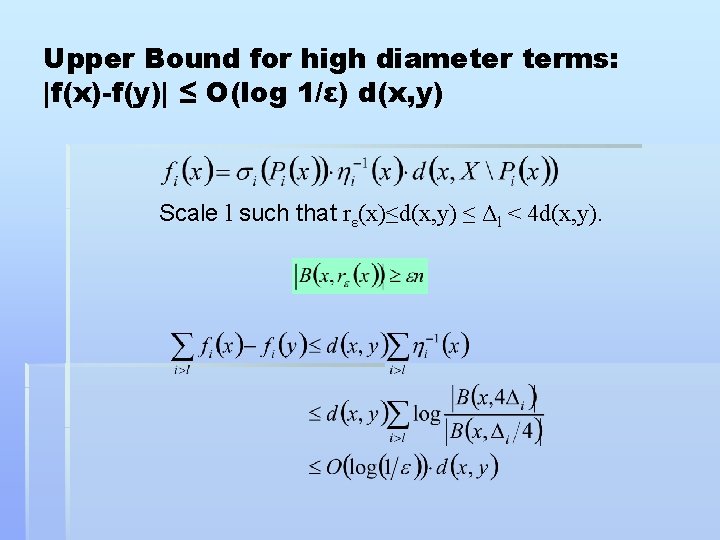

Upper Bound for High Diameter Terms: $|f(x) - f(y)| \le O(\log(1/\varepsilon)) \cdot d(x, y)$

Scale $l$ such that $r_\varepsilon(x) \le d(x, y) \le \Delta_l < 4 d(x, y)$.

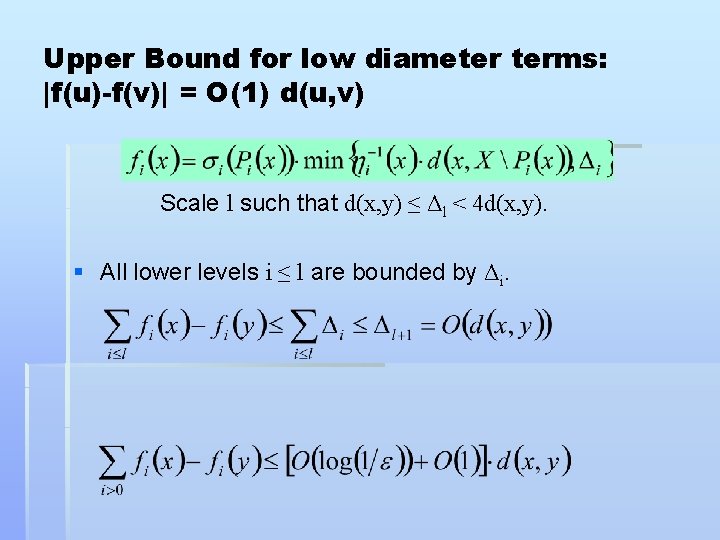

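Upper Bound for Low Diameter Terms: $|f(x) - f(y)| = O(1) \cdot d(x, y)$

- Scale $l$ such that $d(x, y) \le \Delta_l < 4 d(x, y)$.
- All lower levels $i \le l$ are bounded by $\Delta_i$, so their total is a geometric series: $\sum_{i \le l} \Delta_i \le \frac{4}{3}\Delta_l = O(d(x, y))$.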

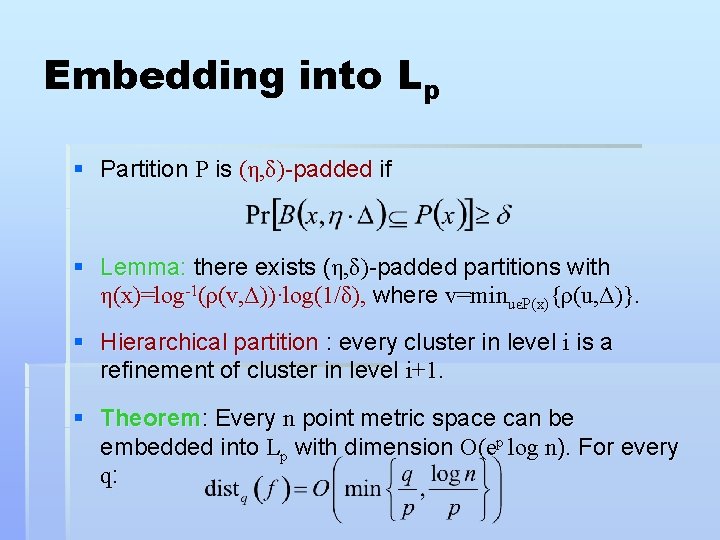

Embedding into $L_p$

- A partition $P$ is $(\eta, \delta)$-padded if $\Pr[B(x, \eta(x)\Delta) \subseteq P(x)] \ge \delta$.
- Lemma: There exist $(\eta, \delta)$-padded partitions with $\eta(x) = \log^{-1}(\rho(v, \Delta)) \cdot \log(1/\delta)$, where $v = \arg\min_{u \in P(x)} \rho(u, \Delta)$.
- Hierarchical partition: every cluster in level $i$ is a refinement of a cluster in level $i+1$.
- Theorem: Every $n$-point metric space can be embedded into $L_p$ with dimension $O(e^p \log n)$. For every $q$:

Embedding into $L_p$

Embedding into $L_p$ with scaling distortion:
- Use partitions with small probability of padding: $\delta = e^{-p}$.
- Hierarchical uniform partitions.
- Combination with Matoušek's sampling techniques.

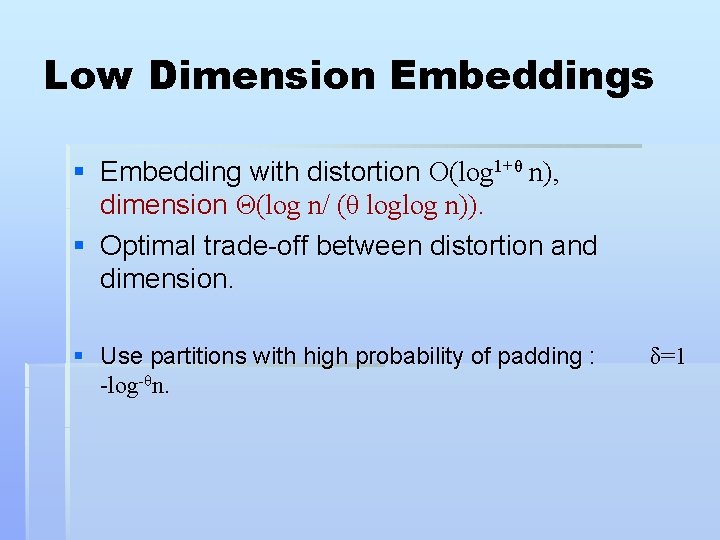

Low Dimension Embeddings

- Embedding with distortion $O(\log^{1+\theta} n)$, dimension $\Theta(\log n / (\theta \log\log n))$.
- Optimal trade-off between distortion and dimension.
- Use partitions with high probability of padding: $\delta = 1 - \log^{-\theta} n$.

Additional Results: Weighted Averages

- Embedding with weighted average distortion $O(\log \Psi)$ for weights with aspect ratio $\Psi$.
- Algorithmic applications:
  - Sparsest cut
  - Uncapacitated quadratic assignment
  - Multiple sequence alignment

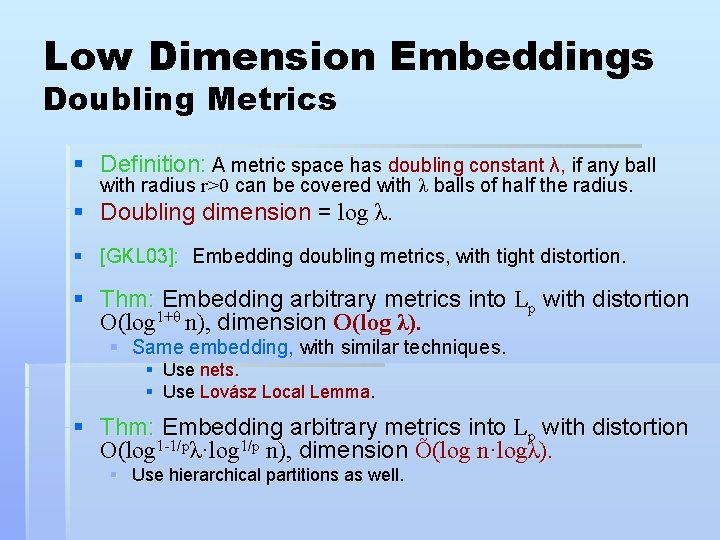

Low Dimension Embeddings: Doubling Metrics

- Definition: A metric space has doubling constant $\lambda$ if any ball with radius $r > 0$ can be covered with $\lambda$ balls of half the radius.
- Doubling dimension $= \log \lambda$.
- [GKL 03]: Embedding doubling metrics, with tight distortion.
- Thm: Embedding arbitrary metrics into $L_p$ with distortion $O(\log^{1+\theta} n)$, dimension $O(\log \lambda)$.
  - Same embedding, with similar techniques.
  - Use nets.
  - Use the Lovász Local Lemma.
- Thm: Embedding arbitrary metrics into $L_p$ with distortion $O(\log^{1-1/p} \lambda \cdot \log^{1/p} n)$, dimension $\tilde{O}(\log n \cdot \log \lambda)$.
  - Use hierarchical partitions as well.
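The doubling definition can be probed on a finite point set. The sketch below is a greedy heuristic, not an exact computation: it returns an upper bound on the number of half-radius balls needed to cover one given ball, assuming closed balls whose centers are restricted to points of the set. The function name is hypothetical.

```python
def half_radius_cover_size(center, r, points, d):
    """Greedy upper bound on the number of radius-r/2 balls covering B(center, r)."""
    uncovered = {x for x in points if d(center, x) <= r}
    count = 0
    while uncovered:
        # Pick the candidate center that covers the most still-uncovered points.
        best = max(points, key=lambda c: sum(1 for x in uncovered if d(c, x) <= r / 2))
        uncovered -= {x for x in uncovered if d(best, x) <= r / 2}
        count += 1
    return count

# Hypothetical example: nine points on a line, ball of radius 4 around the middle.
points = list(range(9))
d = lambda a, b: abs(a - b)
print(half_radius_cover_size(4, 4, points, d))
```

Taking the maximum of this quantity over all centers and radii gives an upper estimate of $\lambda$ for the point set. The loop always terminates because every uncovered point covers at least itself.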

![Scaling Distortion into trees](http://slidetodoc.com/presentation_image_h/93a2952445e0a4989b2105ca9777635f/image-28.jpg)

Scaling Distortion into Trees

- [A+ 05]: Probabilistic embedding into a distribution of ultrametrics with scaling distortion $O(\log(1/\varepsilon))$.
- Thm: Embedding into an ultrametric with scaling distortion.
- Thm: Every graph contains a spanning tree with scaling distortion.
- These imply:
  - Average distortion $= O(1)$.
  - $L_2$-distortion =
- Can be viewed as a network design objective.
- Thm: Probabilistic embedding into a distribution of spanning trees with scaling distortion $\tilde{O}(\log^2(1/\varepsilon))$.

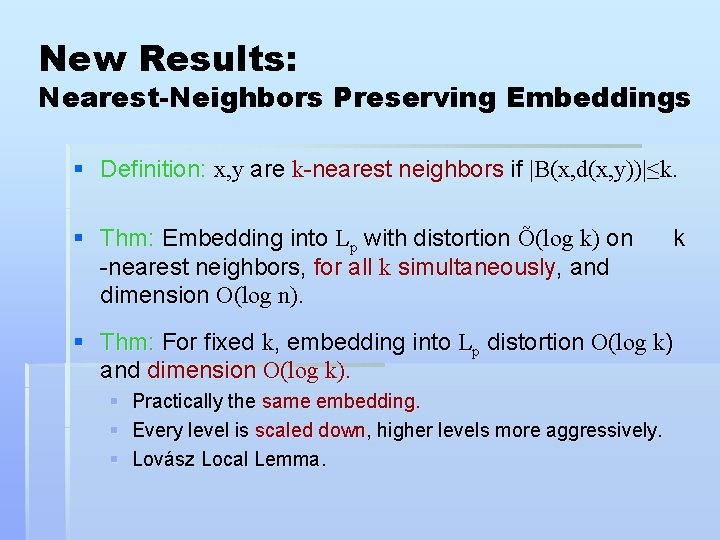

New Results: Nearest-Neighbors Preserving Embeddings

- Definition: $x, y$ are $k$-nearest neighbors if $|B(x, d(x, y))| \le k$.
- Thm: Embedding into $L_p$ with distortion $\tilde{O}(\log k)$ on $k$-nearest neighbors, for all $k$ simultaneously, and dimension $O(\log n)$.
- Thm: For fixed $k$, embedding into $L_p$ with distortion $O(\log k)$ and dimension $O(\log k)$.
  - Practically the same embedding.
  - Every level is scaled down, higher levels more aggressively.
  - Lovász Local Lemma.
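The $k$-nearest-neighbor condition is a ball-counting test. A minimal sketch, assuming closed balls (so $x$ itself is counted) and a hypothetical function name:

```python
def are_k_nearest_neighbors(x, y, points, d, k):
    """True if the closed ball around x of radius d(x, y) contains at most k points."""
    radius = d(x, y)
    return sum(1 for z in points if d(x, z) <= radius) <= k

# Hypothetical example: six points on a line.
points = list(range(6))
d = lambda a, b: abs(a - b)
print(are_k_nearest_neighbors(0, 2, points, d, 3))   # ball holds the points 0, 1, 2
print(are_k_nearest_neighbors(0, 2, points, d, 2))
```

The condition is not symmetric in general: the ball around $x$ and the ball around $y$ of the same radius can contain different numbers of points.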

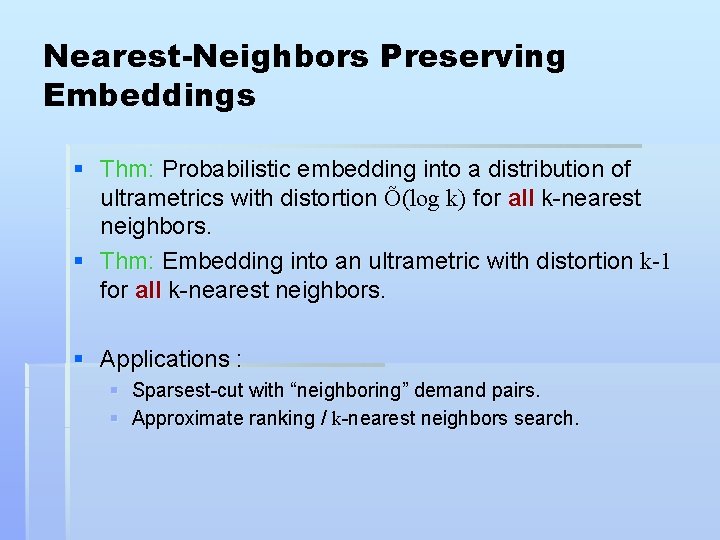

Nearest-Neighbors Preserving Embeddings

- Thm: Probabilistic embedding into a distribution of ultrametrics with distortion $\tilde{O}(\log k)$ for all $k$-nearest neighbors.
- Thm: Embedding into an ultrametric with distortion $k - 1$ for all $k$-nearest neighbors.
- Applications:
  - Sparsest-cut with "neighboring" demand pairs.
  - Approximate ranking / $k$-nearest neighbors search.

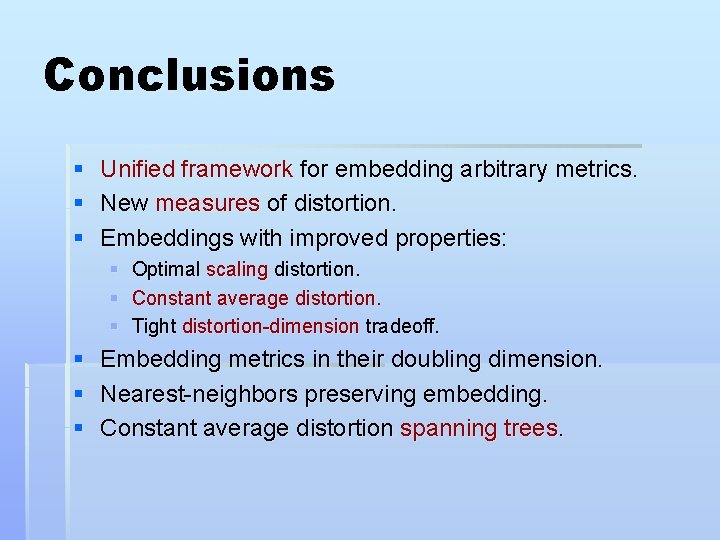

Conclusions

- Unified framework for embedding arbitrary metrics.
- New measures of distortion.
- Embeddings with improved properties:
  - Optimal scaling distortion.
  - Constant average distortion.
  - Tight distortion-dimension tradeoff.
- Embedding metrics in their doubling dimension.
- Nearest-neighbors preserving embedding.
- Constant average distortion spanning trees.

- Slides: 31