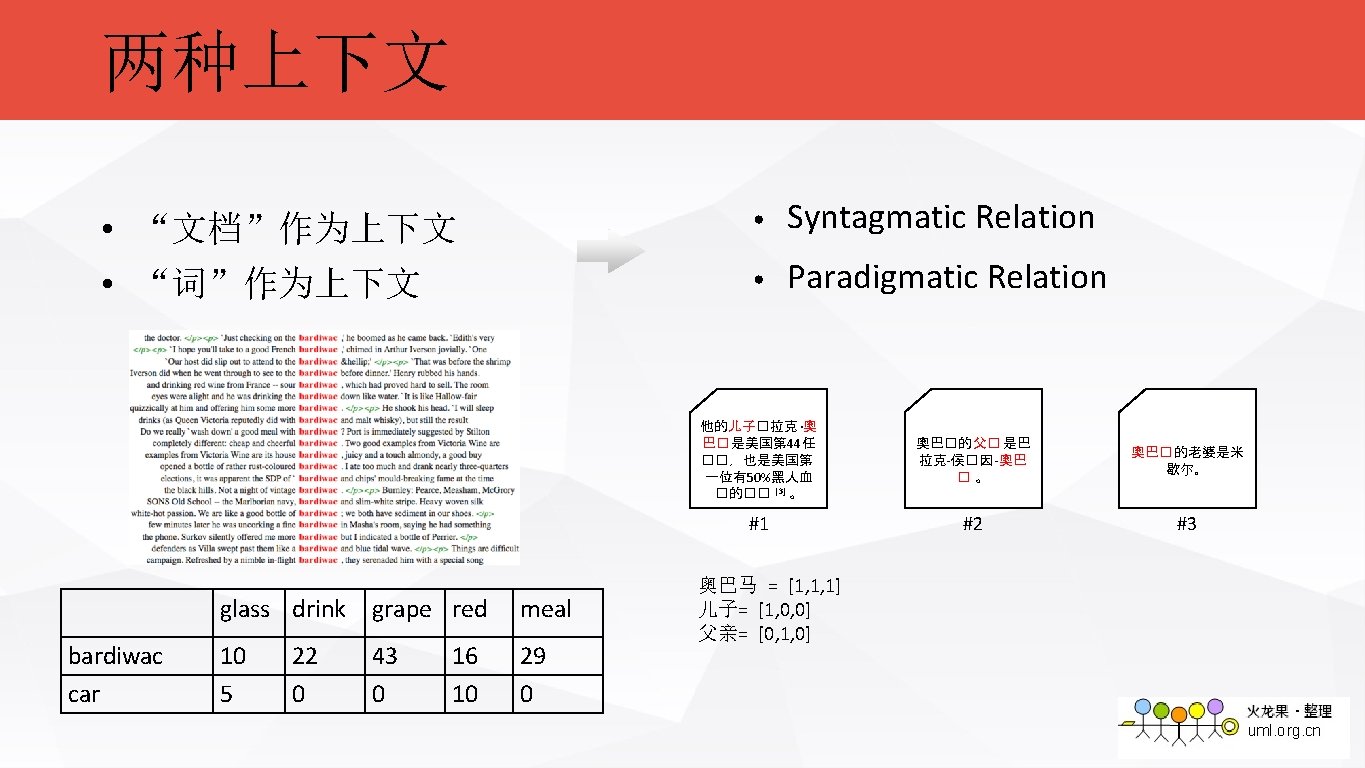


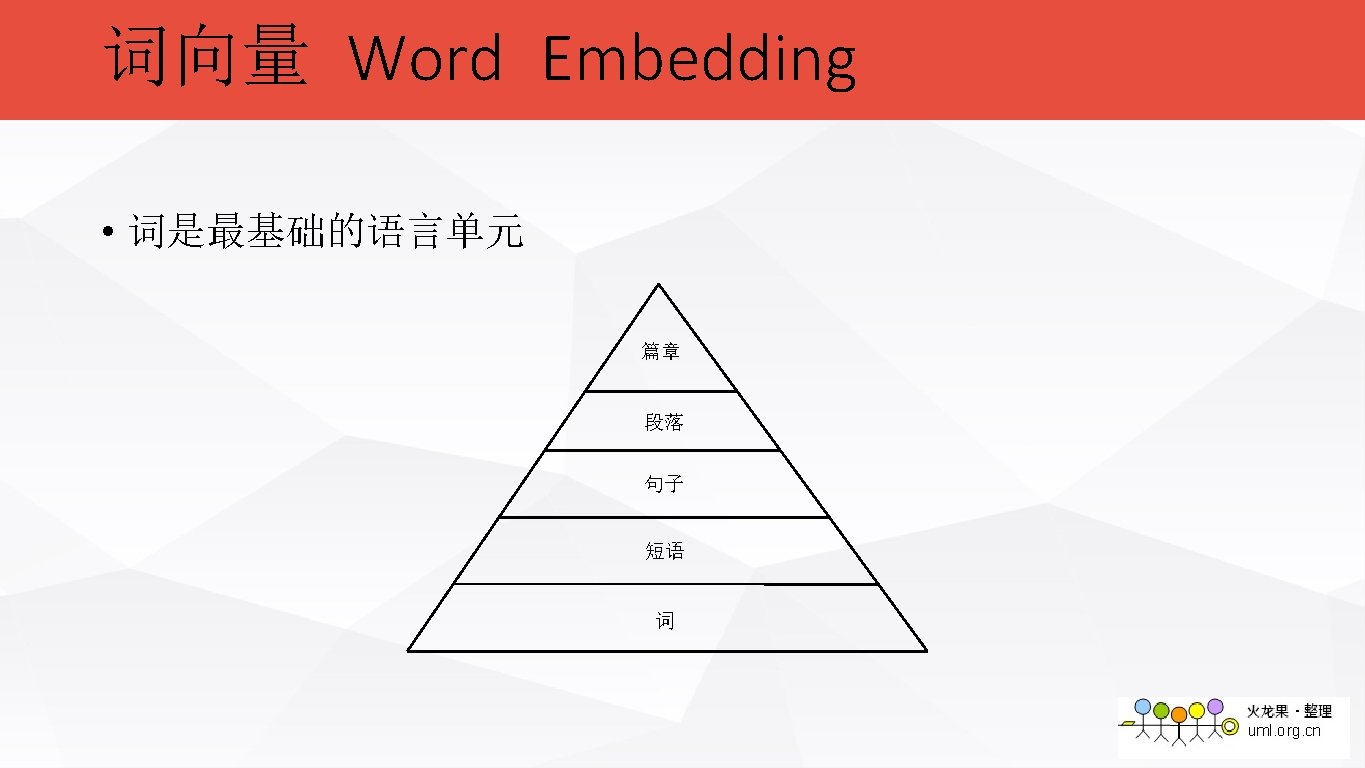

Word Embedding

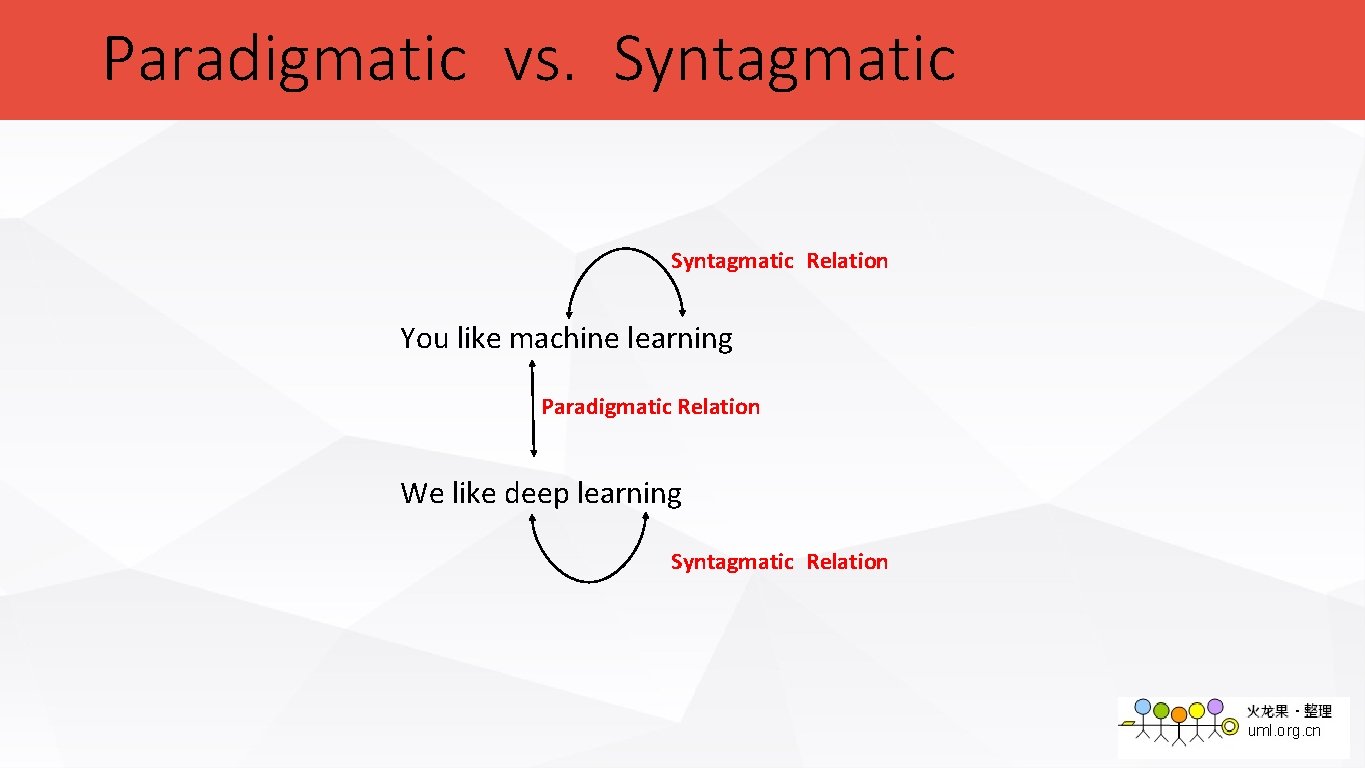

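Paradigmatic vs. Syntagmatic Relation
• Example sentences: "You like machine learning", "We like deep learning"
• Paradigmatic relation: words that can substitute for each other in the same position (machine ↔ deep)
• Syntagmatic relation: words that occur together (machine → learning, deep → learning)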

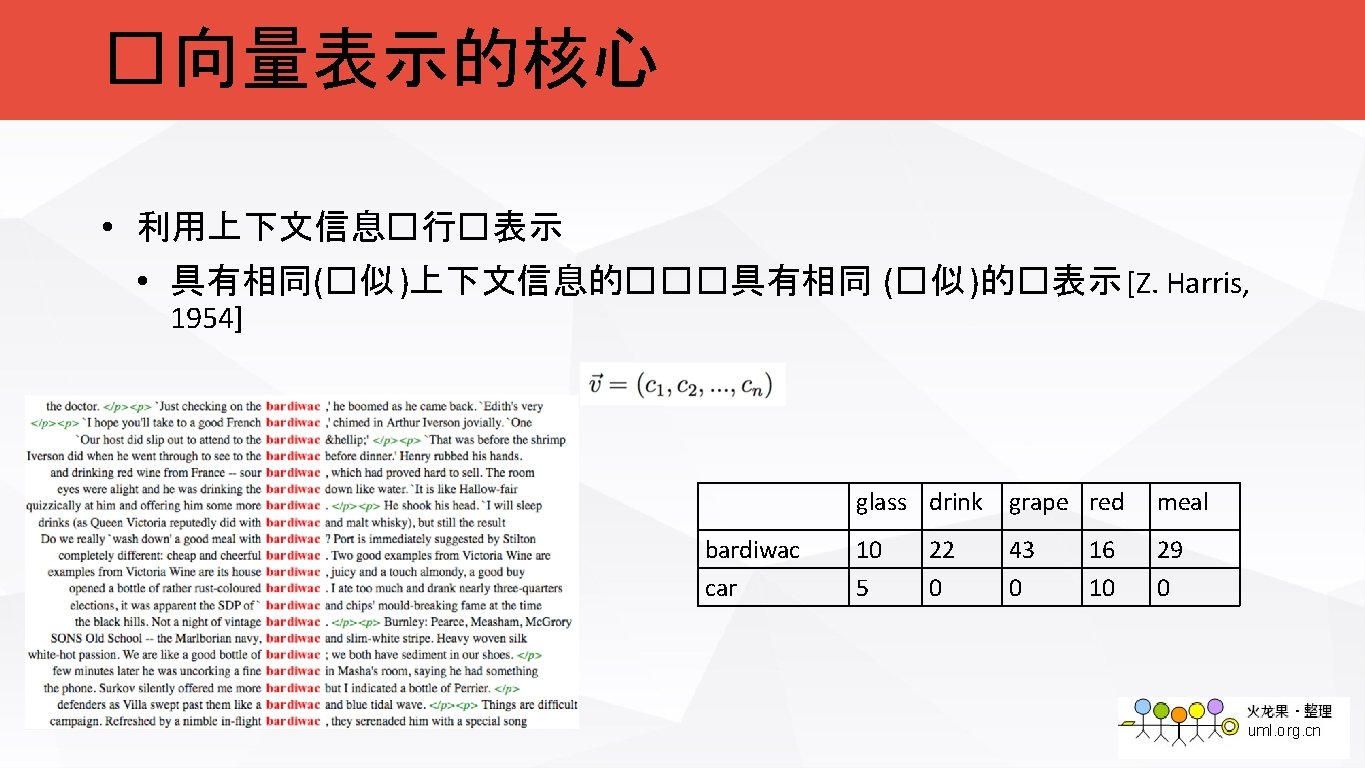

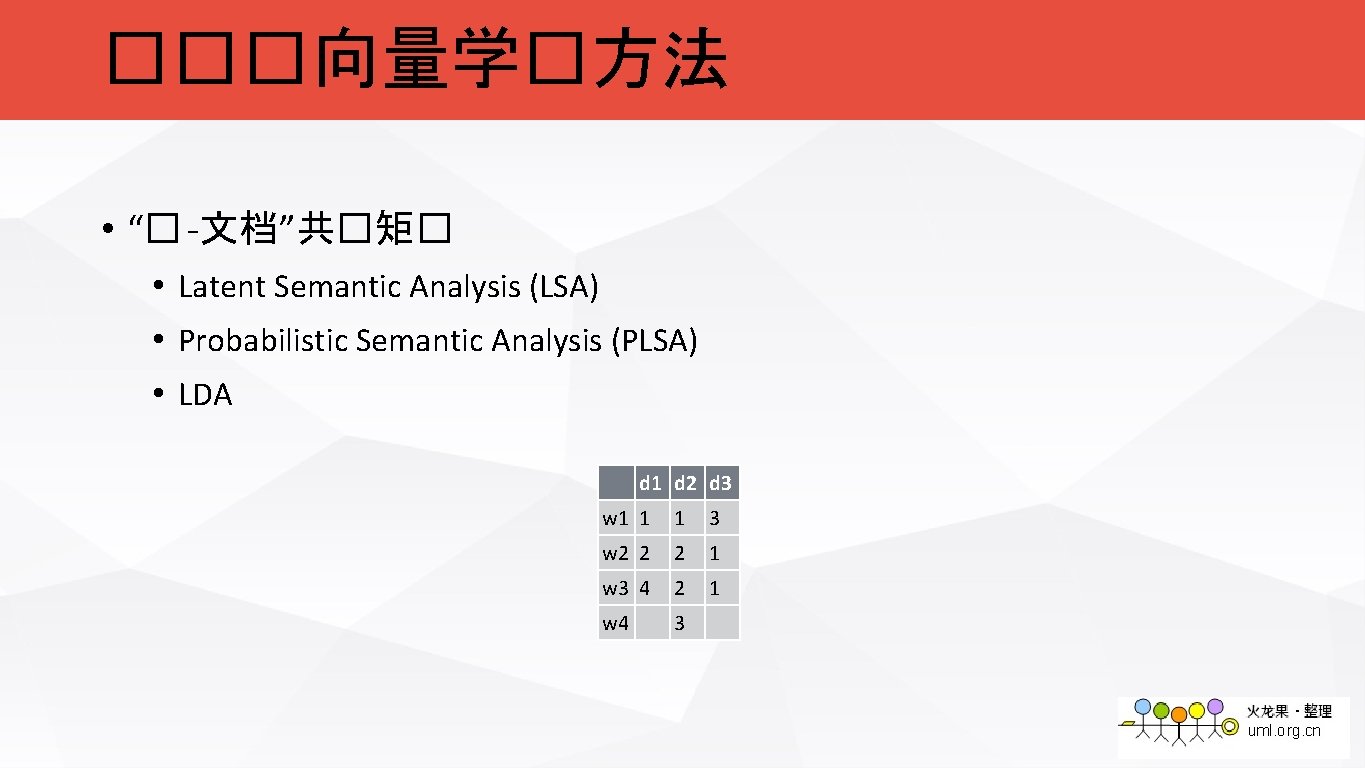

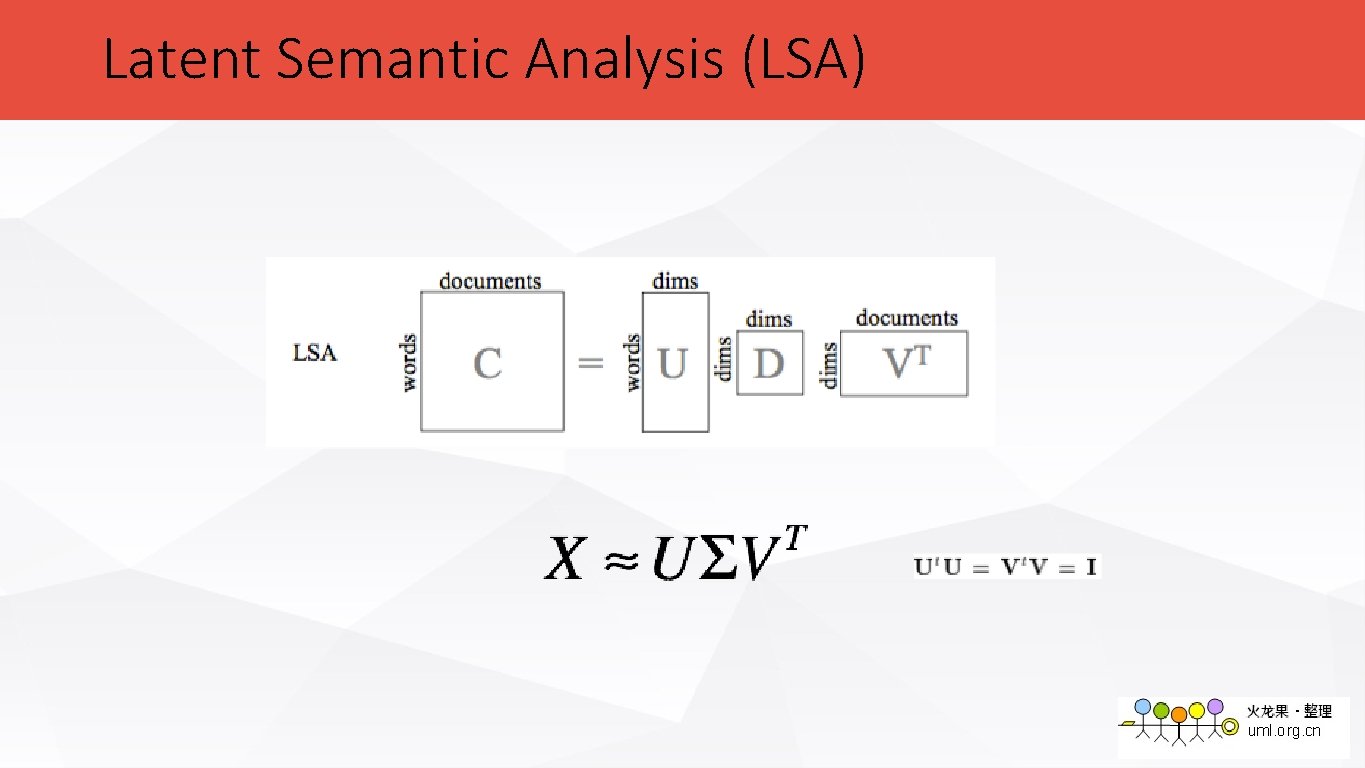

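Word vector learning methods
• "Word-document" co-occurrence matrix
• Latent Semantic Analysis (LSA)
• Probabilistic Latent Semantic Analysis (PLSA)
• Latent Dirichlet Allocation (LDA)

|    | d1 | d2 | d3 |
|----|----|----|----|
| w1 | 1  | 1  | 3  |
| w2 | 2  | 2  | 1  |
| w3 | 4  | 2  | 1  |
| w4 | 3  |    |    |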

Latent Semantic Analysis (LSA)
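As a rough illustration of LSA, the sketch below applies a truncated SVD to a small word-document count matrix. The matrix reuses the w1 to w3 rows of the example word-document matrix shown earlier (the w4 row is incomplete in the source, so it is left out). The function name `lsa` and the choice `k=2` are assumptions made for this example only.

```python
import numpy as np

# Word-document counts for w1..w3 over d1..d3 (w4 omitted: its row is incomplete).
X = np.array([
    [1.0, 1.0, 3.0],
    [2.0, 2.0, 1.0],
    [4.0, 2.0, 1.0],
])

def lsa(matrix, k):
    """Return k-dimensional word vectors, document vectors and all singular values."""
    U, s, Vt = np.linalg.svd(matrix, full_matrices=False)
    word_vecs = U[:, :k] * s[:k]      # one row per word
    doc_vecs = Vt[:k, :].T * s[:k]    # one row per document
    return word_vecs, doc_vecs, s

word_vecs, doc_vecs, singular_values = lsa(X, k=2)
print(word_vecs)
print(doc_vecs)
```

Keeping only the largest singular values gives each word and each document a dense low-dimensional vector in the same latent space.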

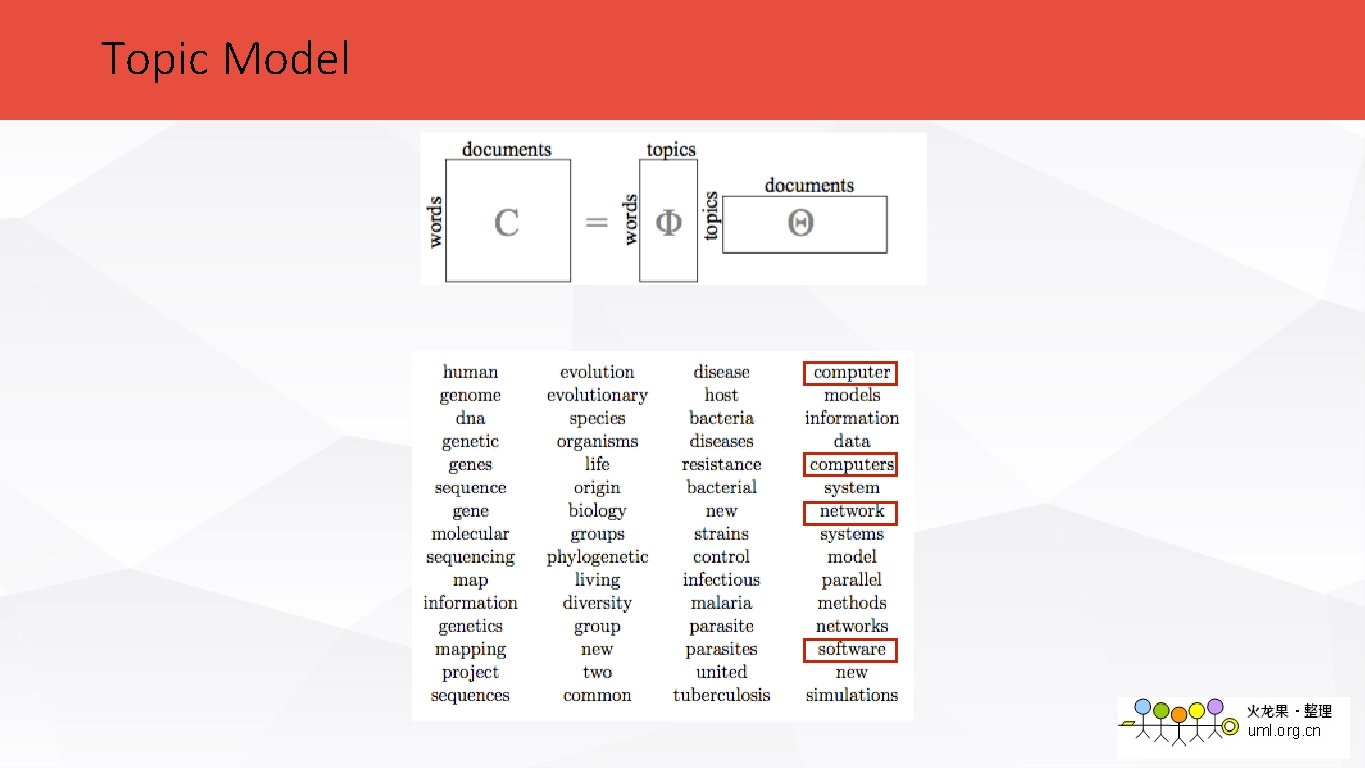

Topic Model

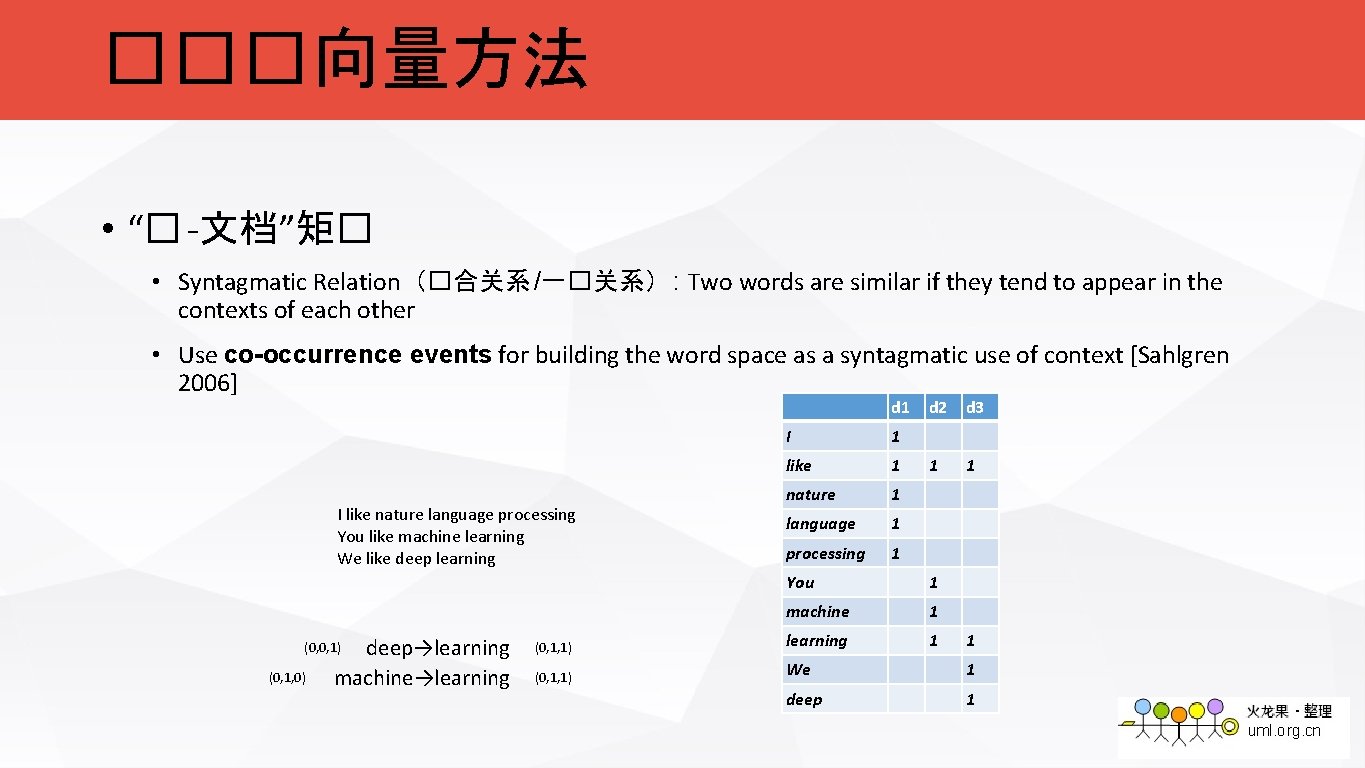

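Word vector methods
• "Word-document" matrix
• Syntagmatic relation (combinatorial relation / first-order relation): two words are similar if they tend to appear in the contexts of each other
• Use co-occurrence events for building the word space as a syntagmatic use of context [Sahlgren 2006]

Documents:
• d1: I like natural language processing
• d2: You like machine learning
• d3: We like deep learning

|            | d1 | d2 | d3 |
|------------|----|----|----|
| I          | 1  | 0  | 0  |
| like       | 1  | 1  | 1  |
| natural    | 1  | 0  | 0  |
| language   | 1  | 0  | 0  |
| processing | 1  | 0  | 0  |
| You        | 0  | 1  | 0  |
| machine    | 0  | 1  | 0  |
| learning   | 0  | 1  | 1  |
| We         | 0  | 0  | 1  |
| deep       | 0  | 0  | 1  |

• deep → learning, machine → learning: deep = (0, 0, 1), machine = (0, 1, 0), learning = (0, 1, 1)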
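The word-document vectors of this slide can be reproduced with a few lines of Python. This is a minimal sketch: the helper names `word_document_vectors` and `shared_documents` are made up for the example, and tokenization is plain whitespace splitting.

```python
docs = [
    "I like natural language processing",
    "You like machine learning",
    "We like deep learning",
]

def word_document_vectors(documents):
    """Map each word to a binary vector marking the documents it occurs in."""
    vectors = {}
    for j, doc in enumerate(documents):
        for word in doc.split():
            vec = vectors.setdefault(word, [0] * len(documents))
            vec[j] = 1
    return vectors

def shared_documents(u, v):
    """Count the documents in which both words occur (first-order co-occurrence)."""
    return sum(a * b for a, b in zip(u, v))

vectors = word_document_vectors(docs)
print(vectors["deep"], vectors["machine"], vectors["learning"])
print(shared_documents(vectors["deep"], vectors["learning"]))
print(shared_documents(vectors["deep"], vectors["machine"]))
```

Under this representation "deep" and "learning" overlap because they share a document, while "deep" and "machine" do not overlap at all, which is the syntagmatic view of similarity.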

![Word vector methods: "word-word" co-occurrence matrix](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-16.jpg)

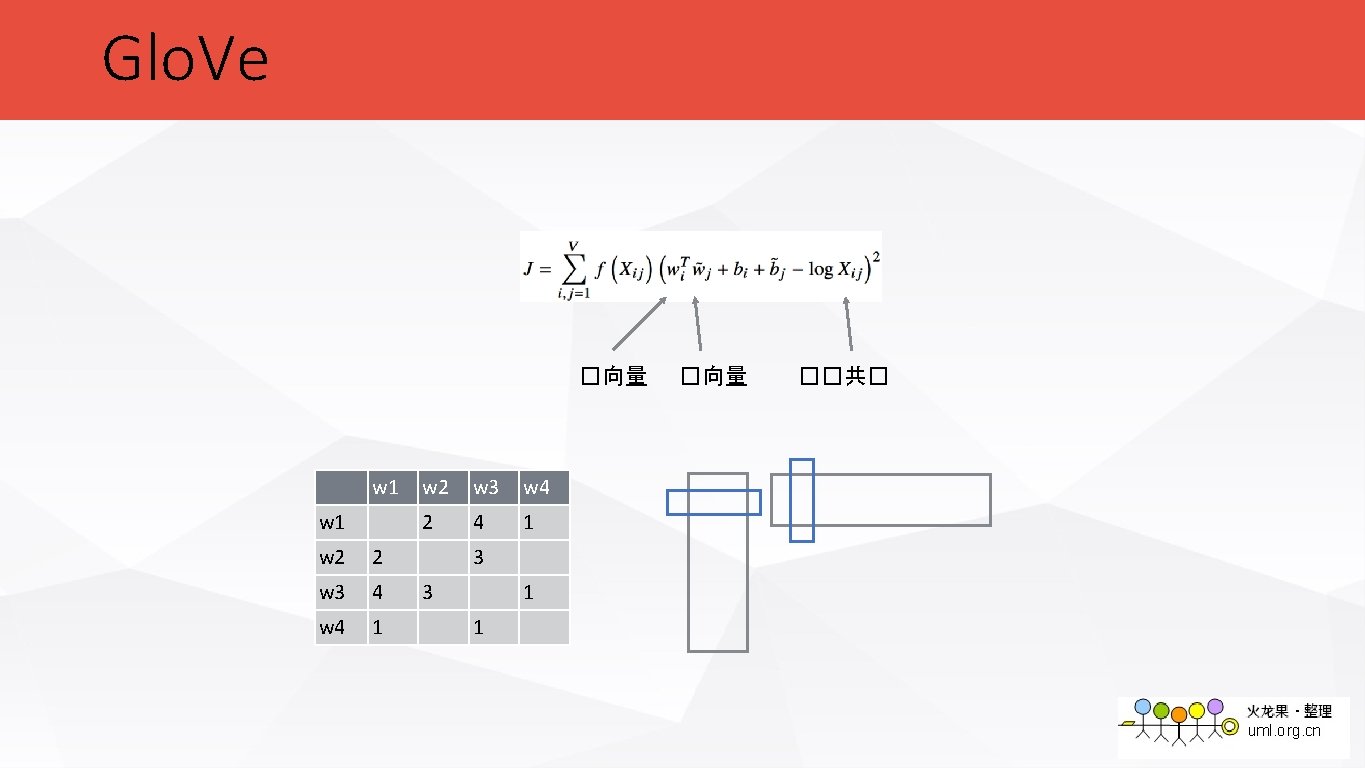

Word vector methods
• "Word-word" co-occurrence matrix
• Brown Clustering [Brown et al. 1992]
• Hyperspace Analogue to Language, HAL [Lund et al. 1996]
• GloVe [Pennington et al. 2014]

Example corpus:
• I like natural language processing
• You like machine learning
• We like deep learning

[Figure: word-word co-occurrence matrix over w1 to w4; the counts of w1 with w2, w3 and w4 are 2, 4 and 1]

GloVe

[Figure: the word-word co-occurrence matrix over w1 to w4 shown alongside two sets of word vectors; labels: word vectors, word vectors, word-word co-occurrence]
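To make the link between word vectors and co-occurrence counts concrete, the sketch below evaluates the weighted least-squares objective from the GloVe paper, J = Σ f(X_ij) (w_i · w̃_j + b_i + b̃_j − log X_ij)², on a toy matrix. The 3×3 count matrix, the vector dimension and the random initialization are hypothetical values picked for illustration; `x_max = 100` and `alpha = 0.75` are the defaults reported by Pennington et al.

```python
import numpy as np

def glove_weight(x, x_max=100.0, alpha=0.75):
    """Weighting function f(x): down-weights rare pairs and caps frequent ones at 1."""
    return np.where(x < x_max, (x / x_max) ** alpha, 1.0)

def glove_loss(X, W, W_ctx, b, b_ctx):
    """Weighted squared error between w_i . w_j + biases and log X_ij over nonzero counts."""
    i, j = np.nonzero(X)
    pred = np.sum(W[i] * W_ctx[j], axis=1) + b[i] + b_ctx[j]
    err = pred - np.log(X[i, j])
    return float(np.sum(glove_weight(X[i, j]) * err ** 2))

# Hypothetical symmetric co-occurrence counts, for illustration only.
X = np.array([
    [0.0, 2.0, 4.0],
    [2.0, 0.0, 3.0],
    [4.0, 3.0, 0.0],
])

rng = np.random.default_rng(0)
dim = 2
W = rng.normal(scale=0.1, size=(3, dim))      # word vectors
W_ctx = rng.normal(scale=0.1, size=(3, dim))  # context word vectors
b = np.zeros(3)
b_ctx = np.zeros(3)

print(glove_loss(X, W, W_ctx, b, b_ctx))
```

Training would minimize this loss with respect to `W`, `W_ctx`, `b` and `b_ctx`; only the objective is shown here.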

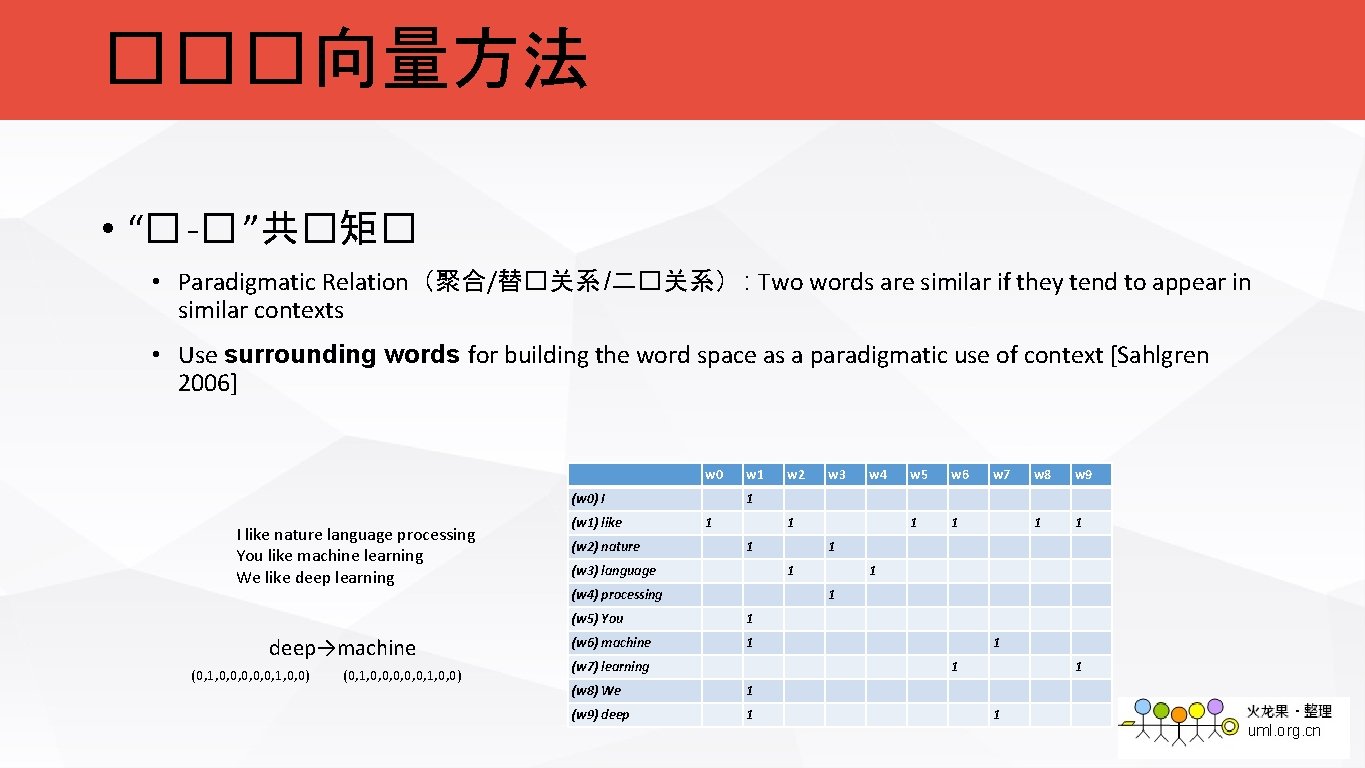

Word vector methods
• "Word-word" co-occurrence matrix
• Paradigmatic relation (substitutional relation / second-order relation): two words are similar if they tend to appear in similar contexts
• Use surrounding words for building the word space as a paradigmatic use of context [Sahlgren 2006]

Example corpus:
• I like natural language processing
• You like machine learning
• We like deep learning

Vocabulary index: (w0) I, (w1) like, (w2) natural, (w3) language, (w4) processing, (w5) You, (w6) machine, (w7) learning, (w8) We, (w9) deep

[Figure: word-by-context-word co-occurrence matrix over w0 to w9; deep → machine: (0, 1, 0, 0, 0, 1, 0, 0)]
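The paradigmatic view can be sketched by describing each word by the words around it and then comparing those context vectors. The window size of 1 and the helper names below are assumptions for this example; the slide does not state which window it uses.

```python
import math
from collections import Counter, defaultdict

sentences = [
    "I like natural language processing",
    "You like machine learning",
    "We like deep learning",
]

def context_vectors(sents, window=1):
    """For every word, count the words that occur within `window` positions of it."""
    contexts = defaultdict(Counter)
    for sent in sents:
        tokens = sent.split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    contexts[word][tokens[j]] += 1
    return contexts

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v)

ctx = context_vectors(sentences)
print(cosine(ctx["deep"], ctx["machine"]))   # same neighbours: like, learning
print(cosine(ctx["deep"], ctx["learning"]))  # no shared neighbours
```

Here "deep" and "machine" never co-occur, yet they get near-identical context vectors because both appear between "like" and "learning". "deep" and "learning", which do co-occur, share no context words.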

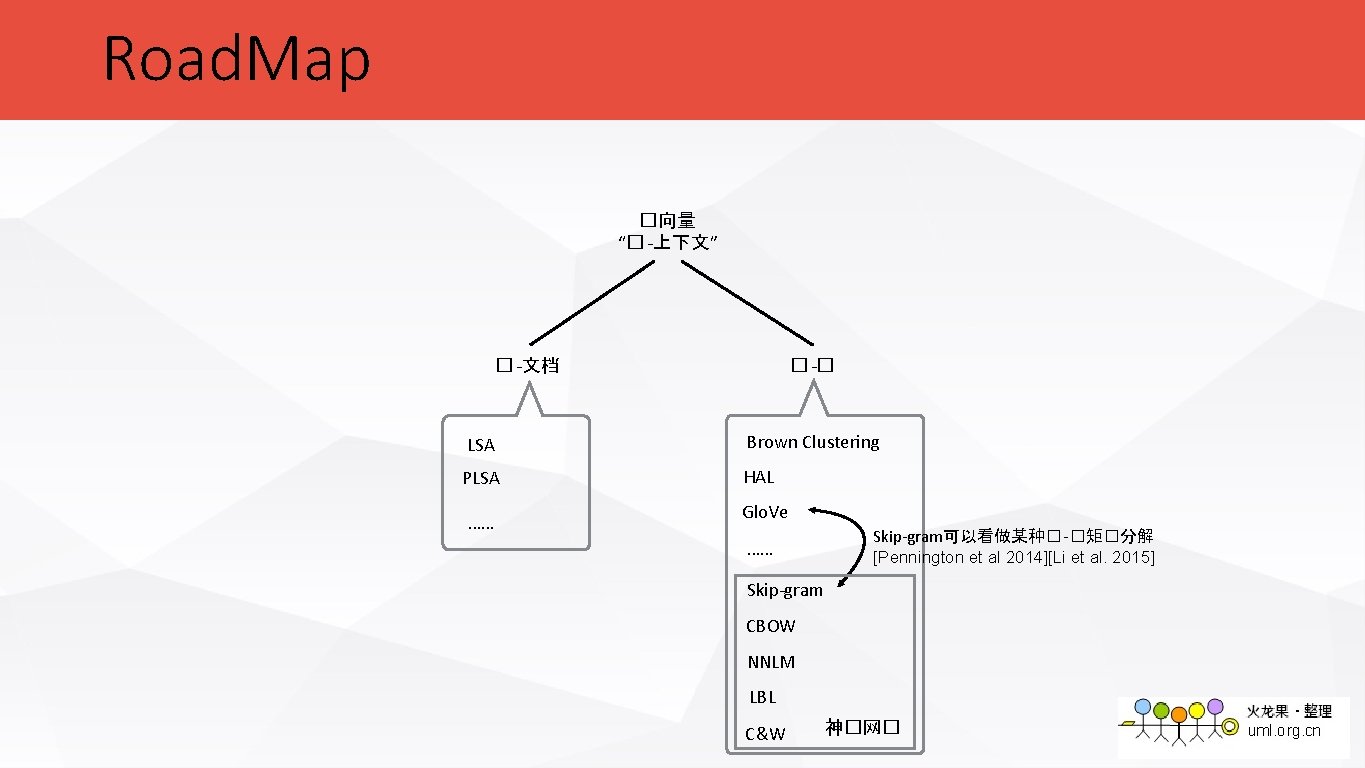

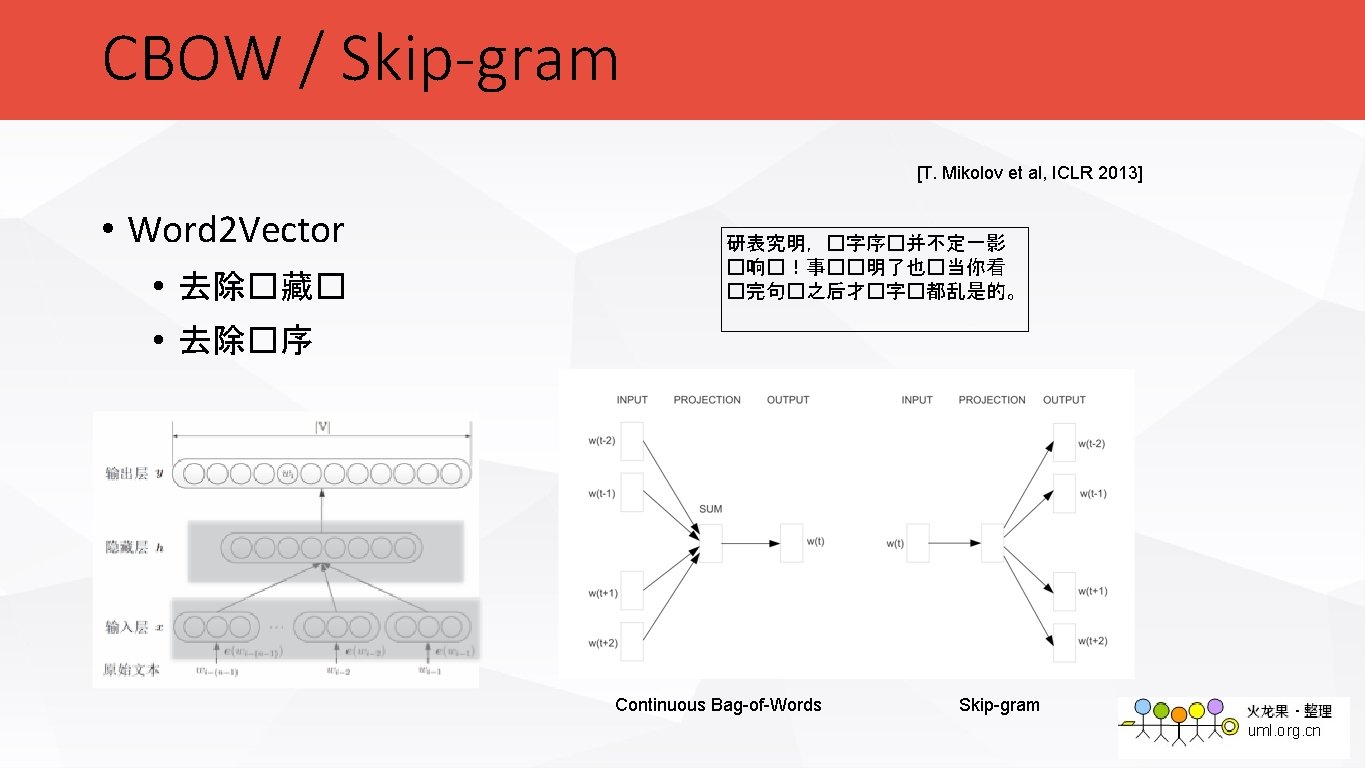

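Roadmap
• Word vectors
  • "Word-context" matrix
    • Word-document: LSA, PLSA, ...
    • Word-word: Brown Clustering, HAL, GloVe, ...
  • Neural networks: Skip-gram, CBOW, NNLM, LBL, C&W
• Skip-gram can be viewed as a kind of word-word matrix factorization [Pennington et al. 2014][Li et al. 2015]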

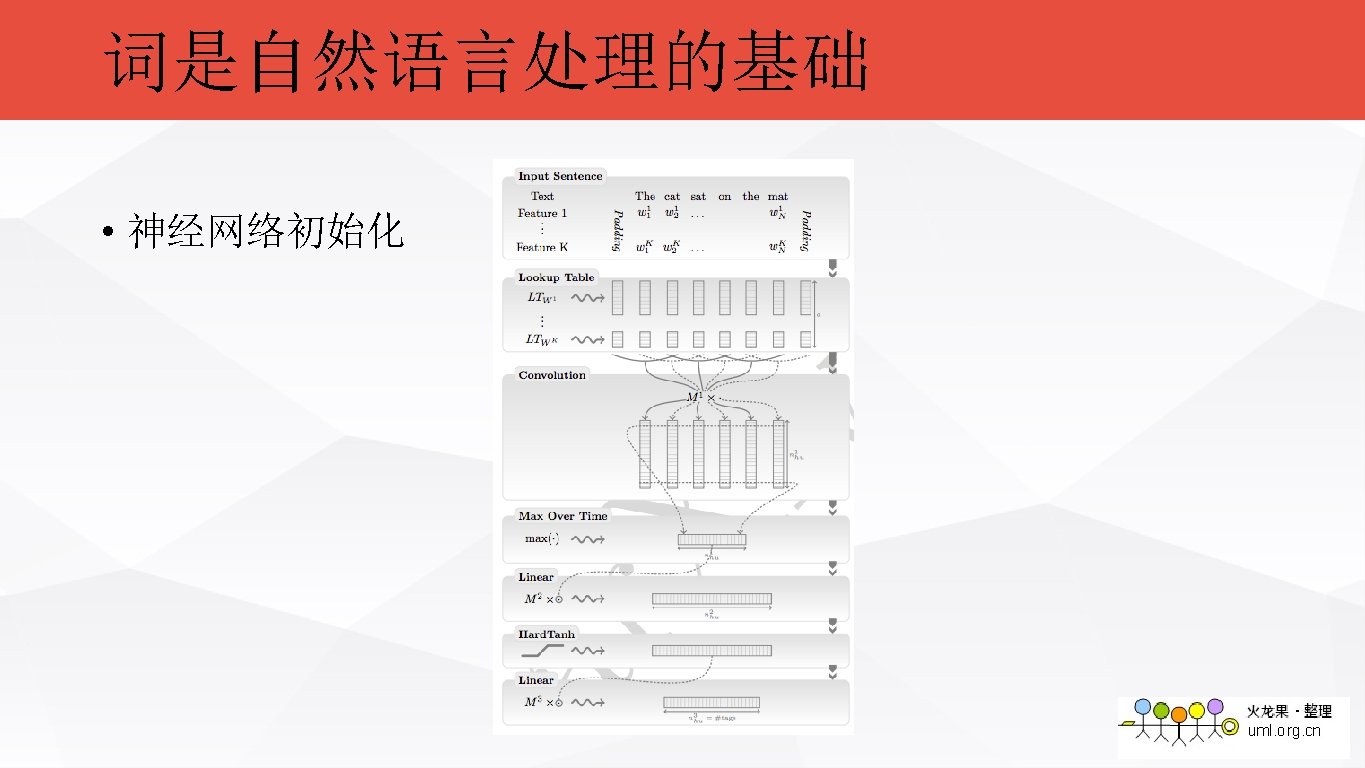

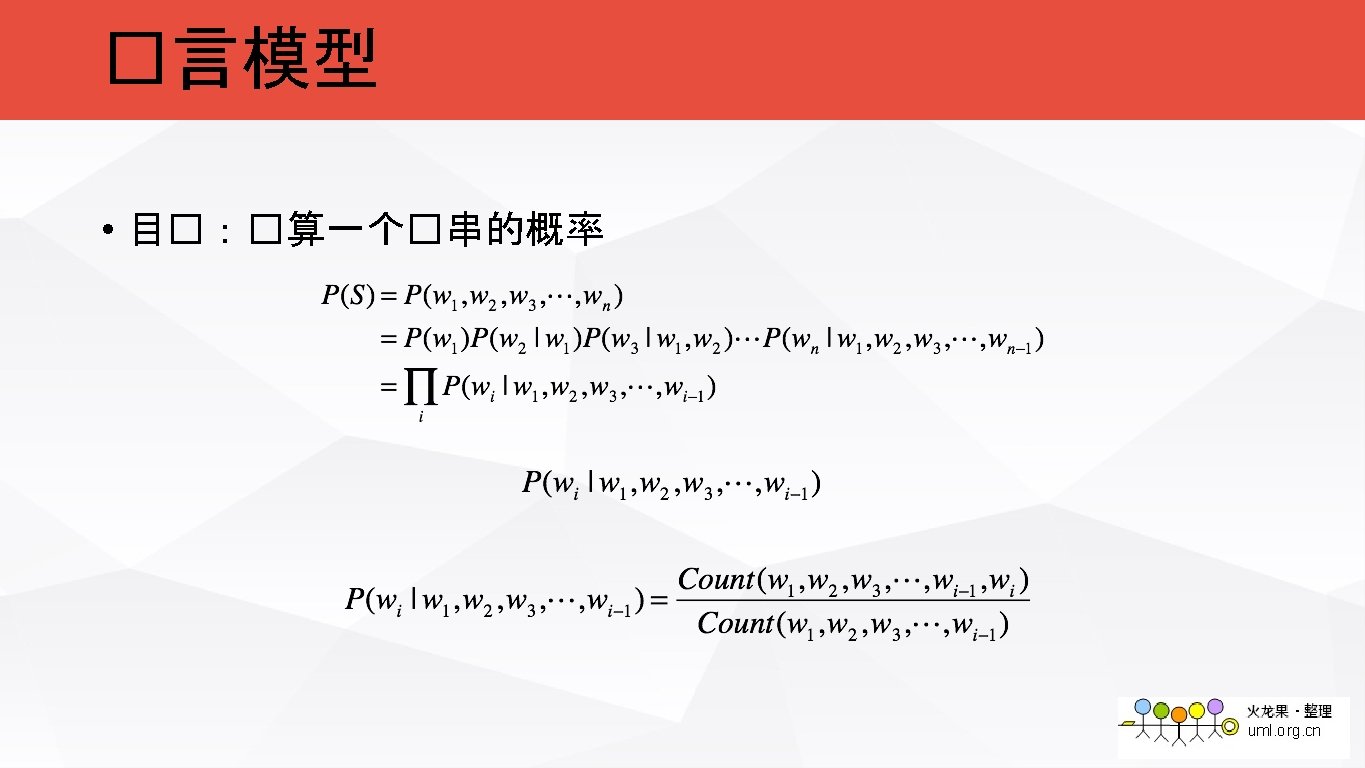

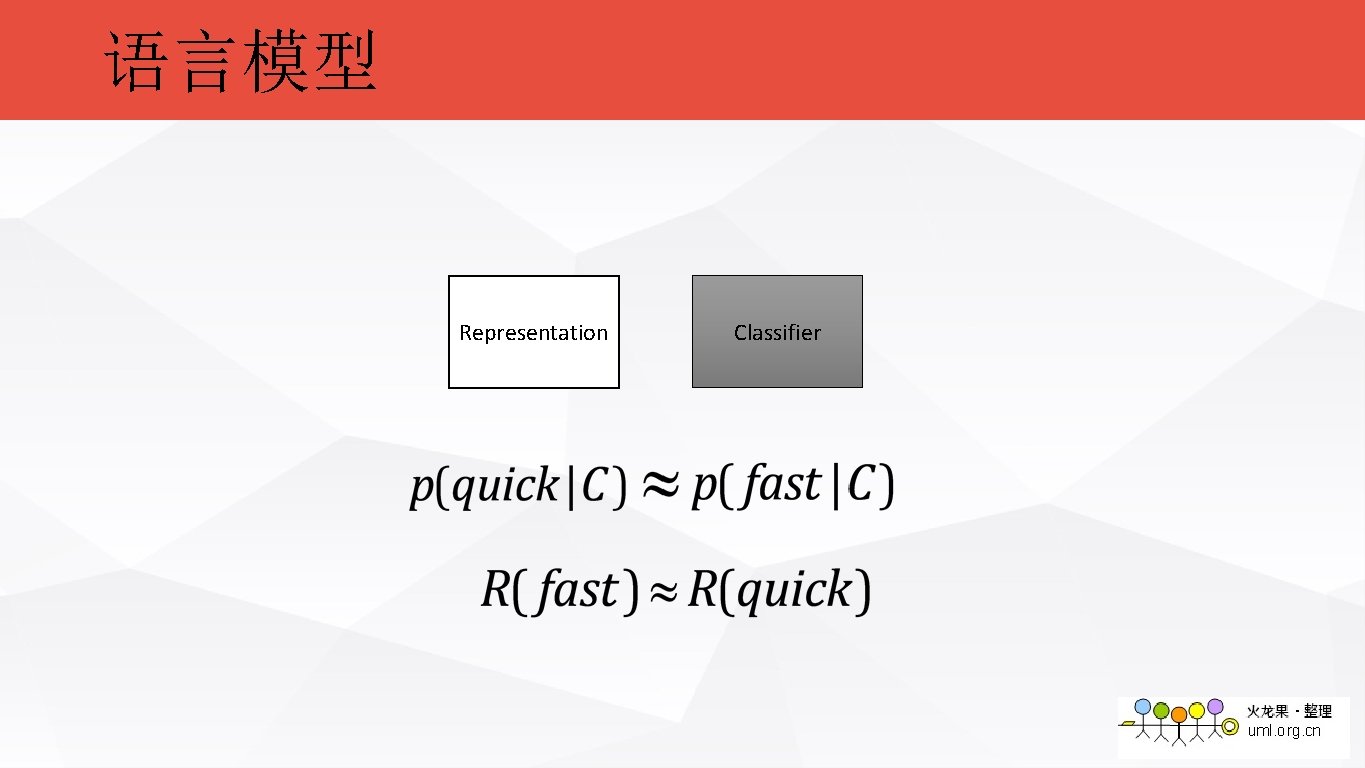

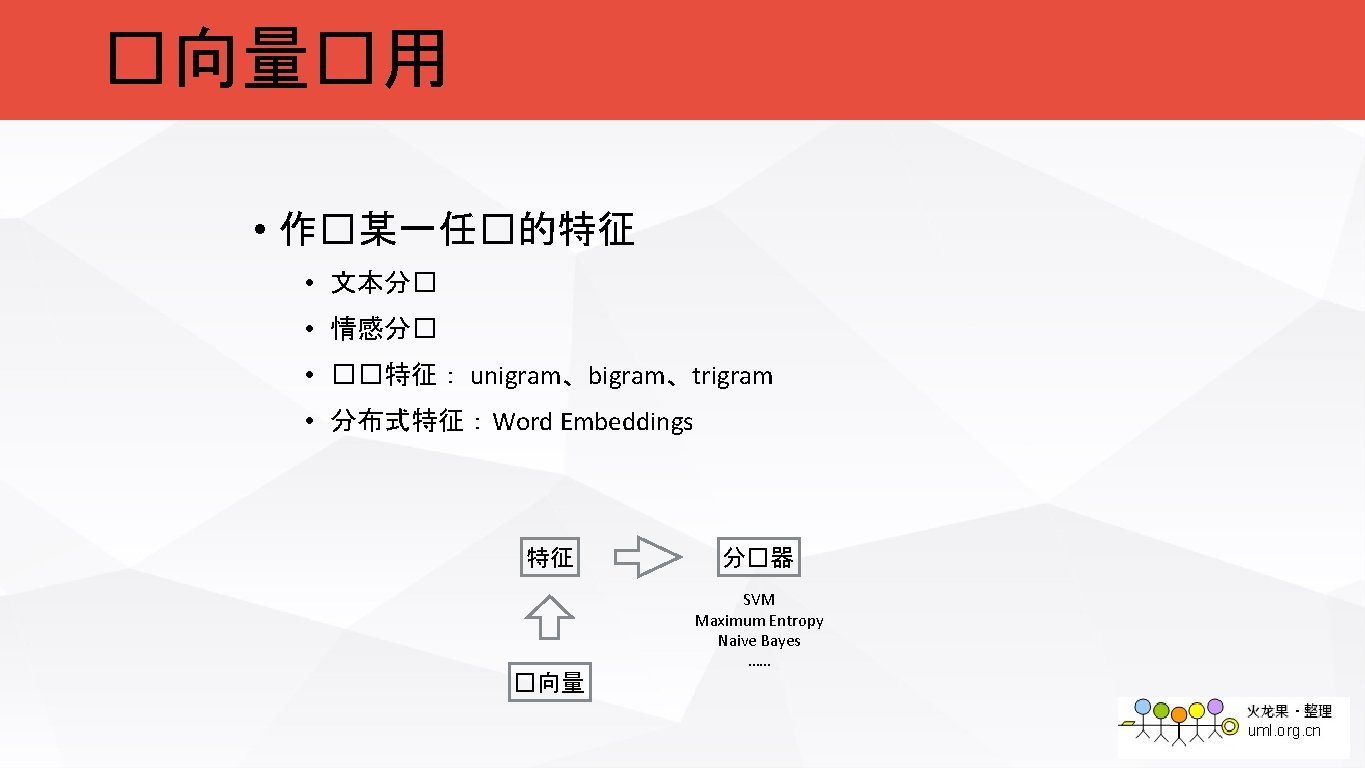

Language model
• Representation
• Classifier

![NNLM: Neural Network Language Model](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-26.jpg)

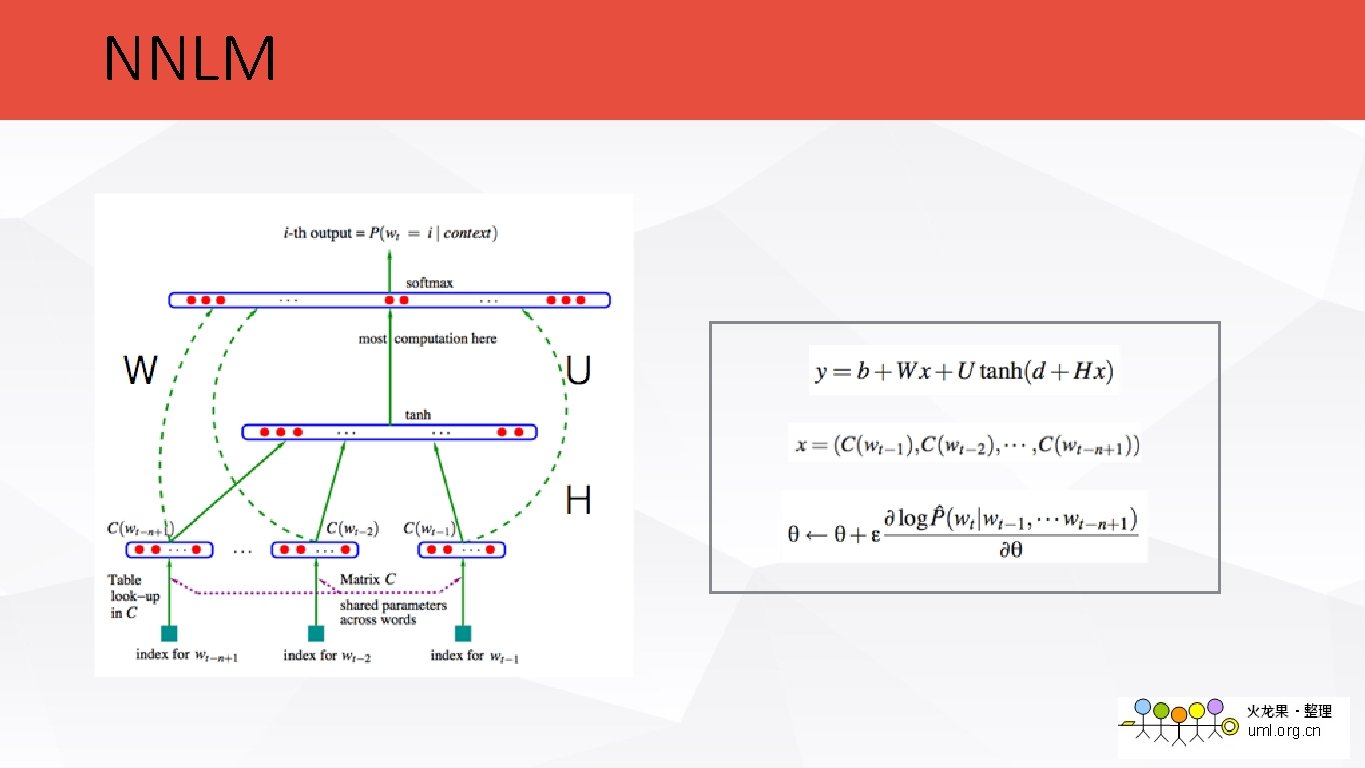

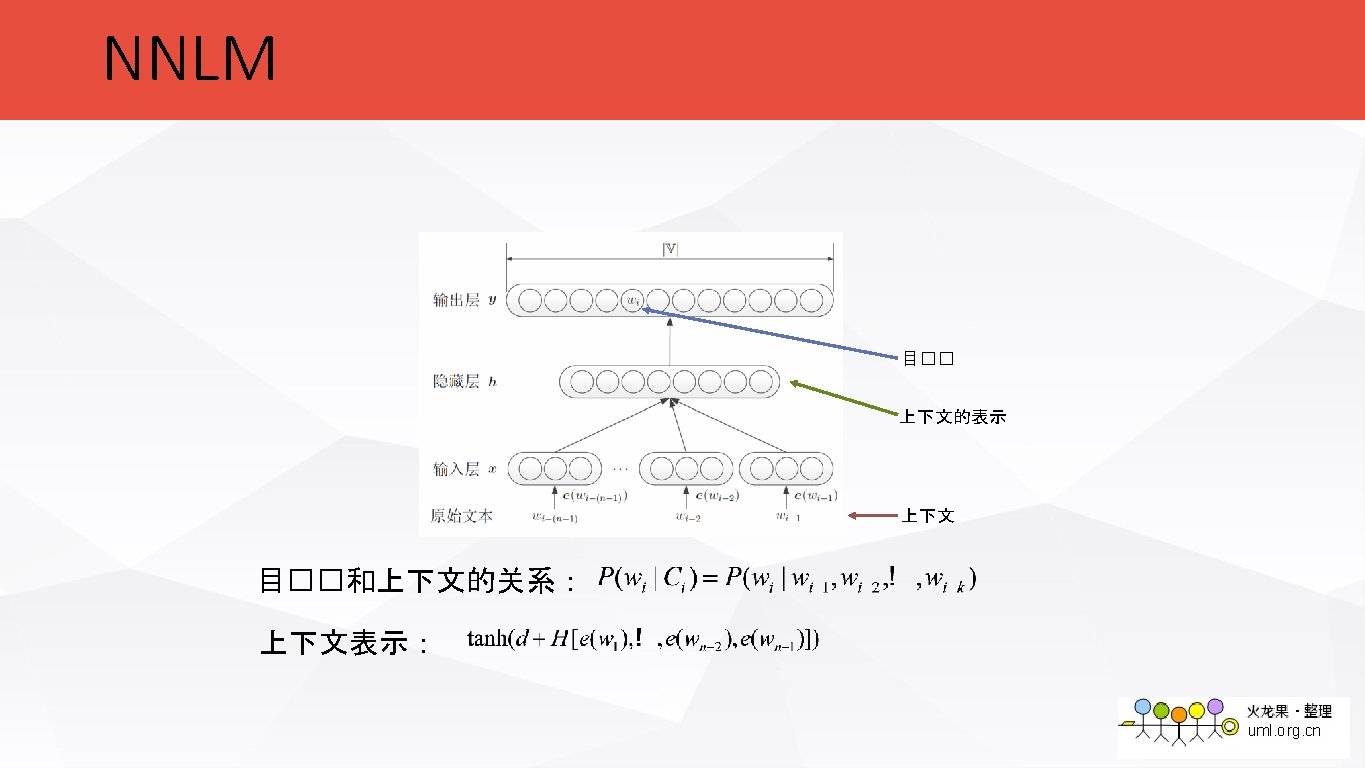

NNLM • Neural Network Language Model [Y. Bengio et al. 2003]

NNLM

![LBL: Log-bilinear Language Model](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-28.jpg)

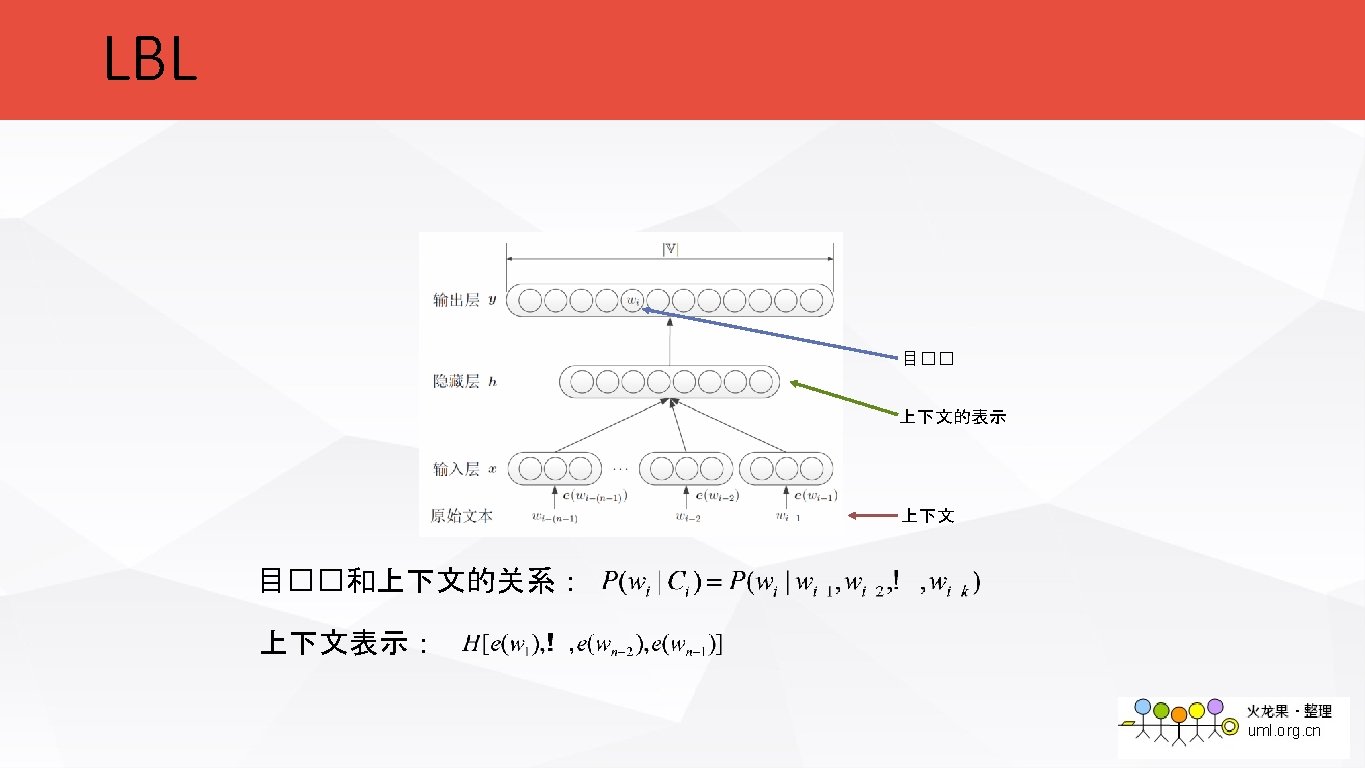

LBL
• Log-bilinear Language Model [A. Mnih & G. Hinton, 2007]
• Figure labels: word vector matrix, vocabulary

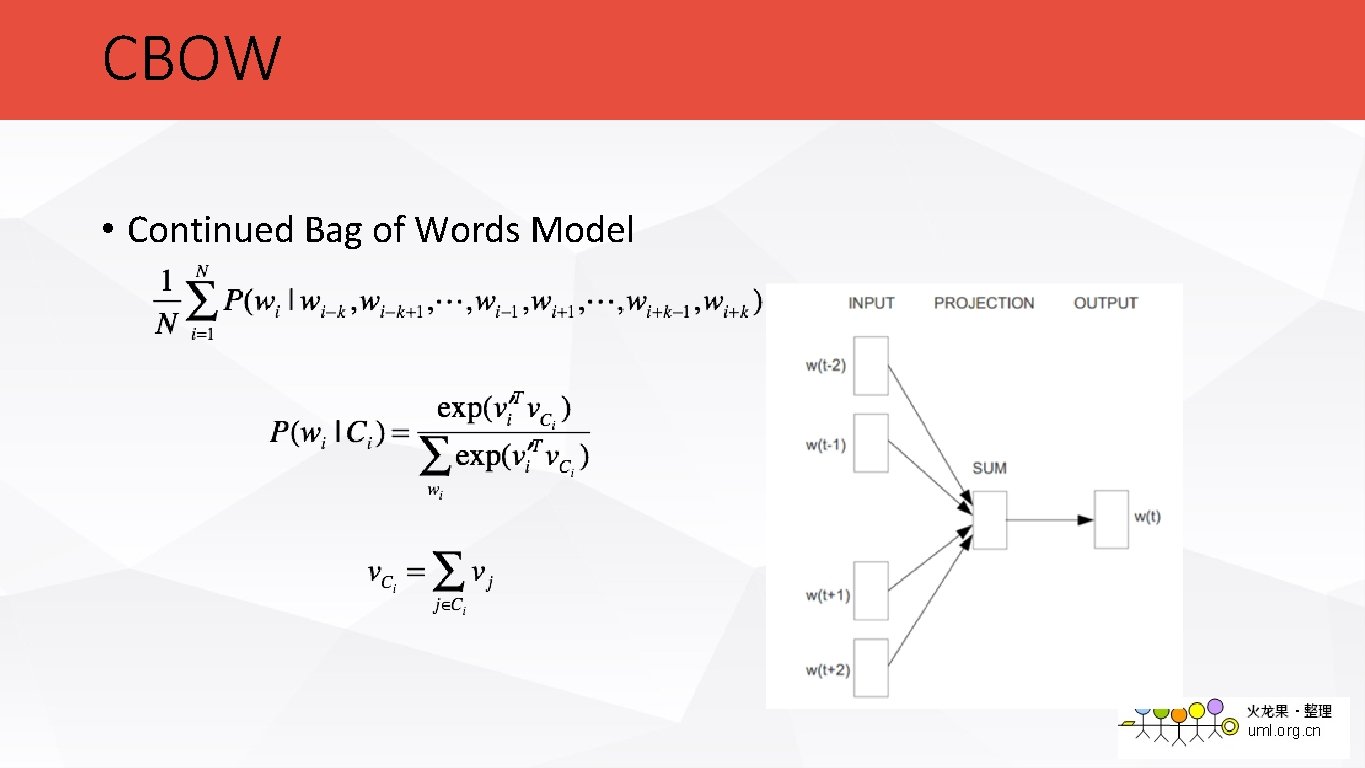

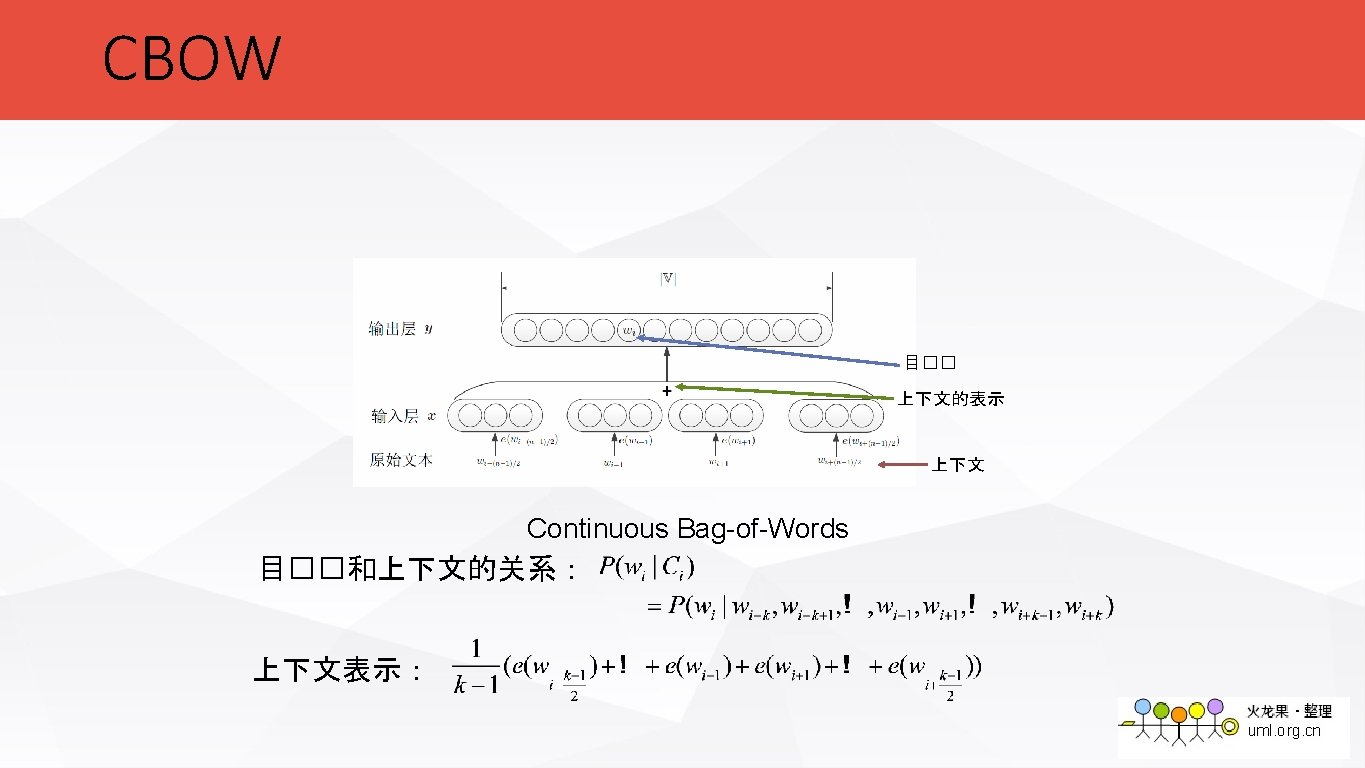

CBOW
• Continuous Bag-of-Words Model

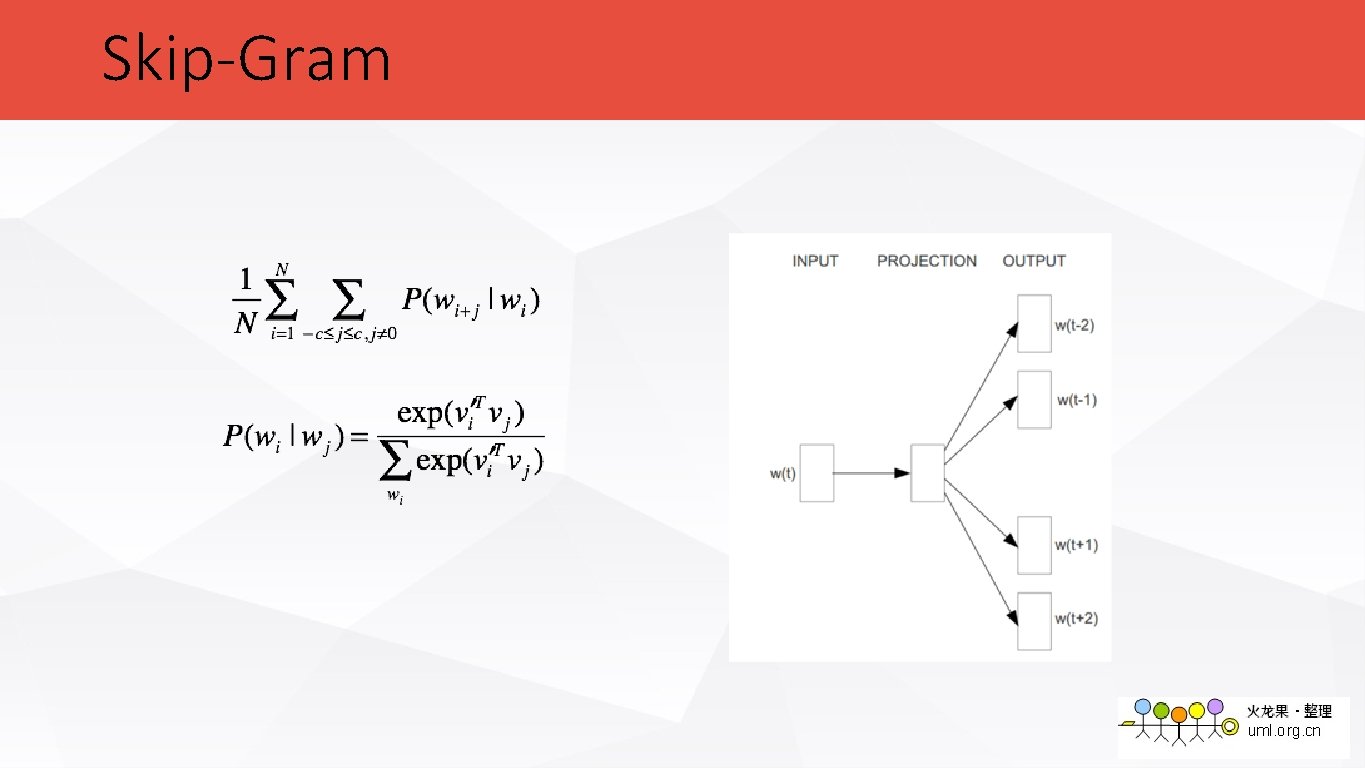

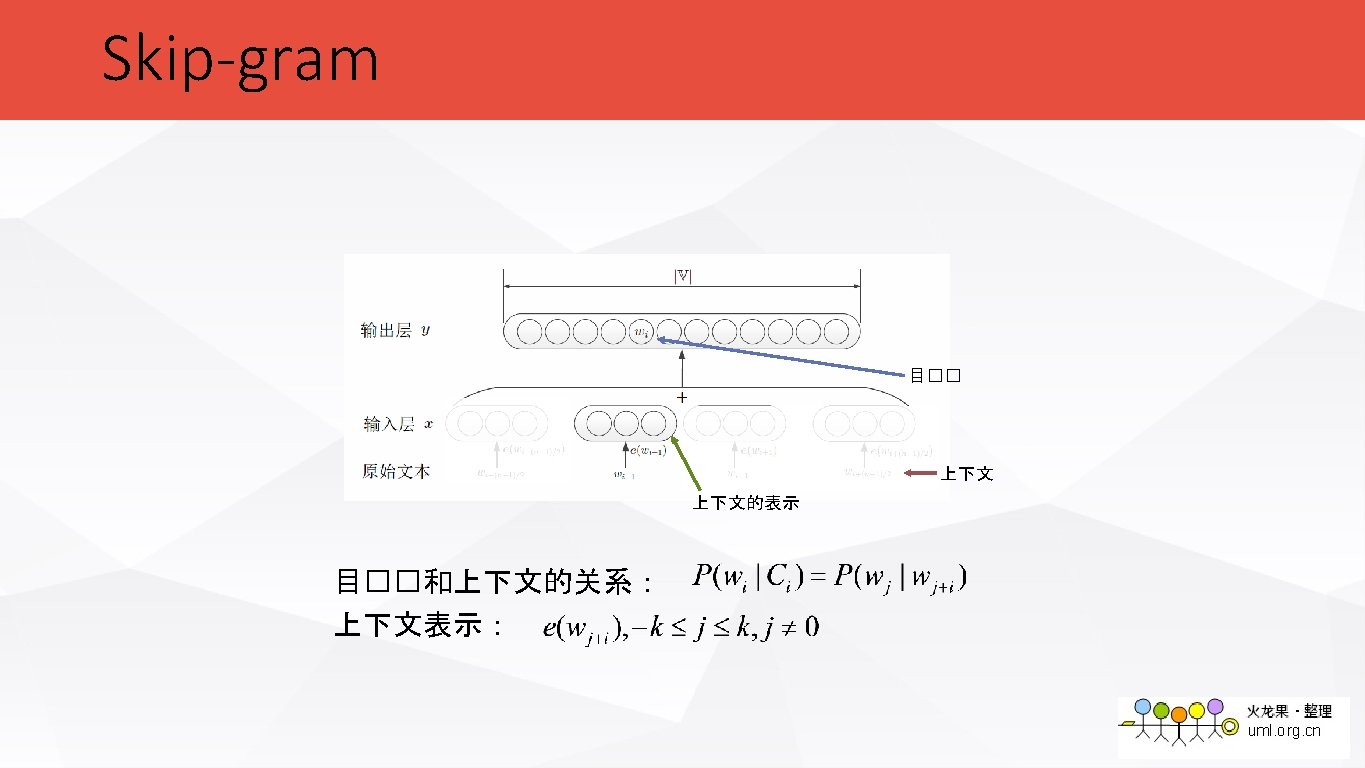

Skip-Gram

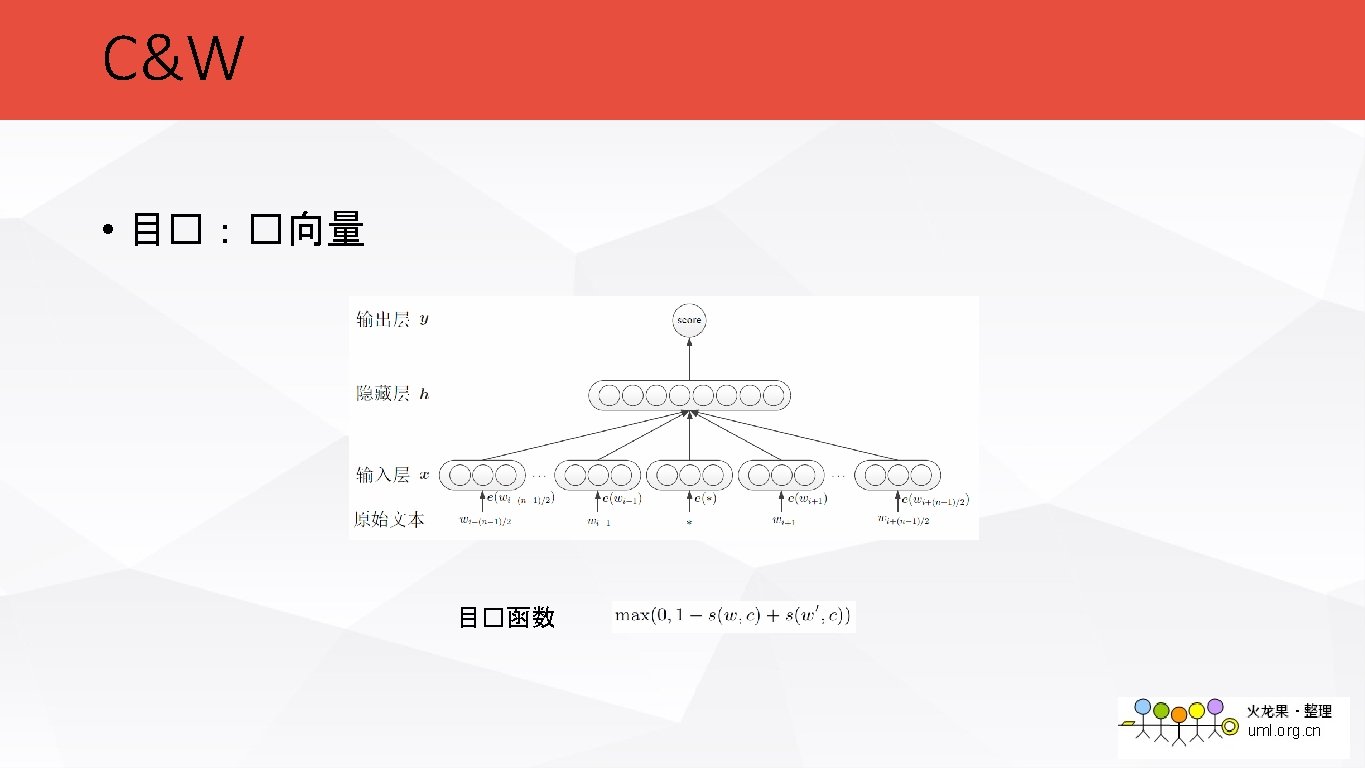

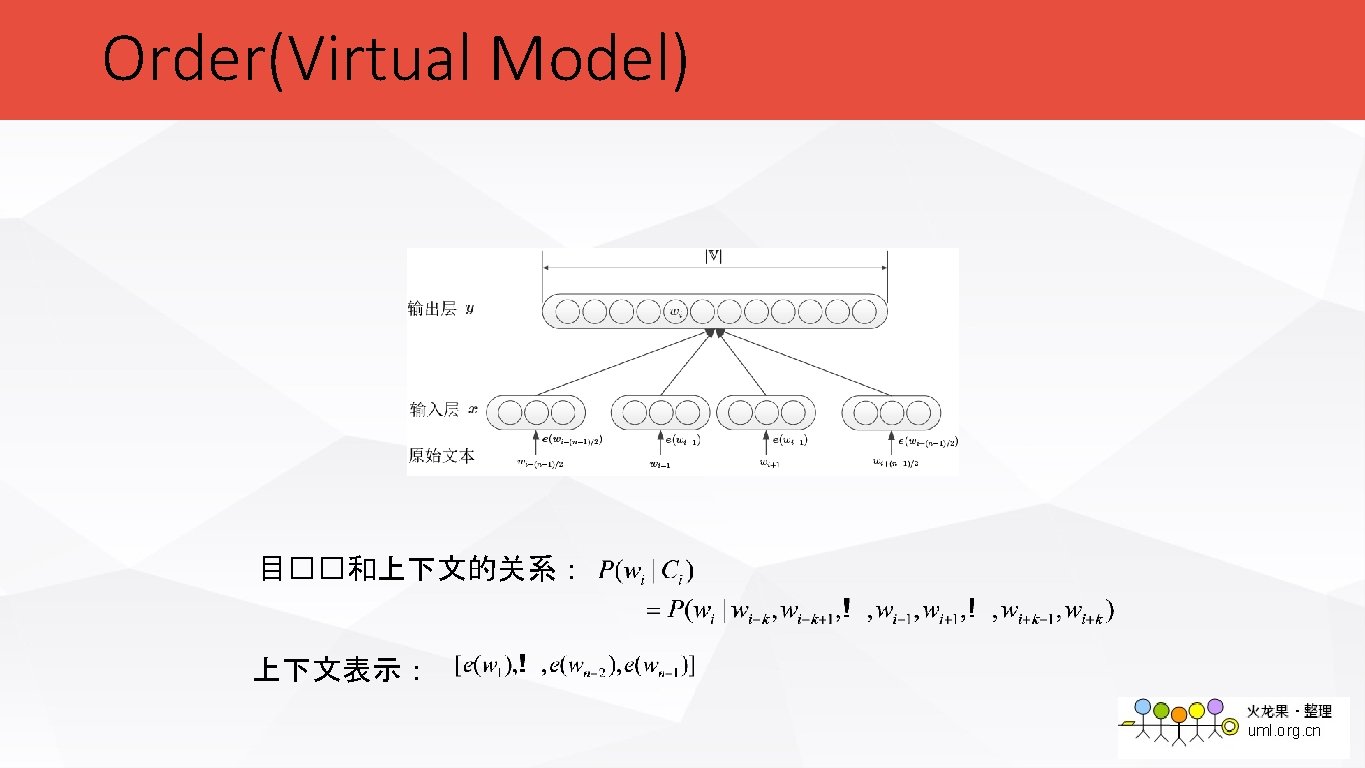

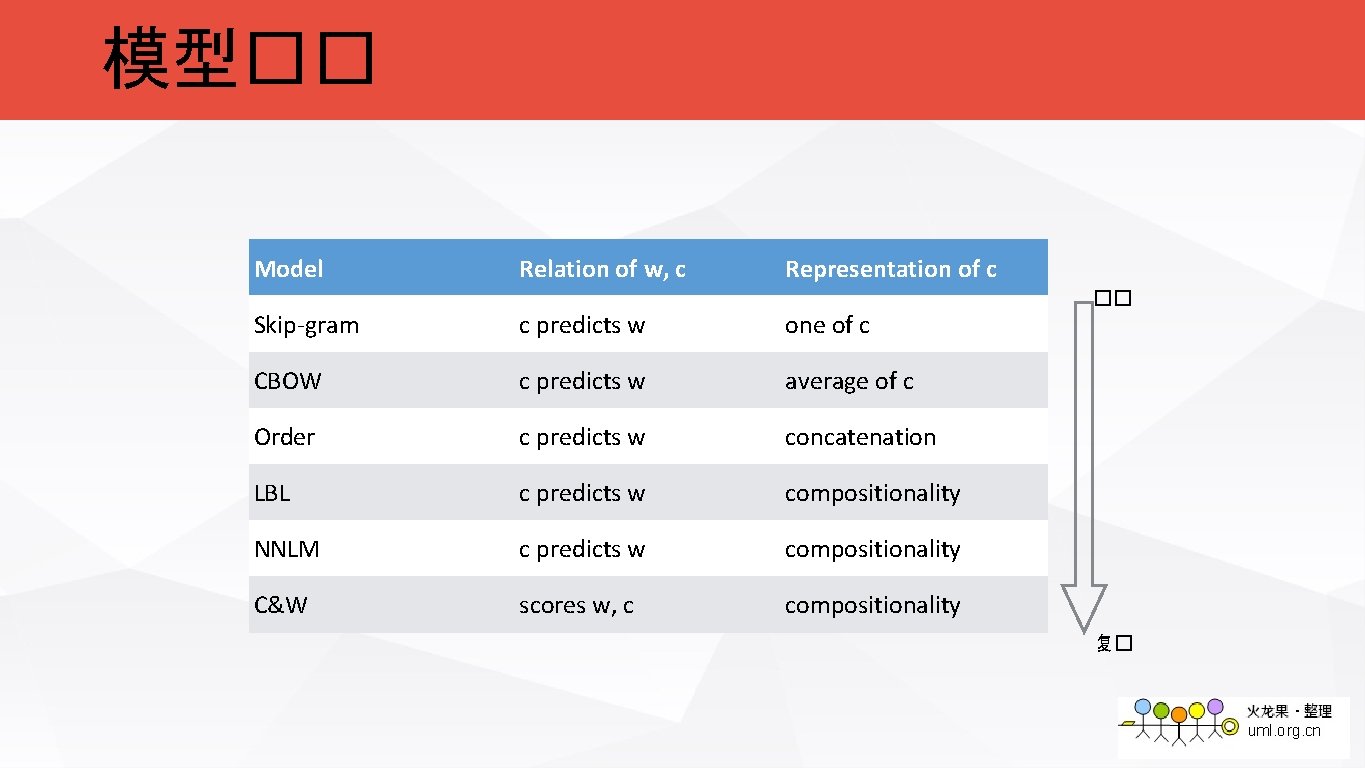

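Model summary (ordered from simple to complex)

| Model     | Relation of w, c | Representation of c |
|-----------|------------------|---------------------|
| Skip-gram | c predicts w     | one of c            |
| CBOW      | c predicts w     | average of c        |
| Order     | c predicts w     | concatenation       |
| LBL       | c predicts w     | compositionality    |
| NNLM      | c predicts w     | compositionality    |
| C&W       | scores w, c      | compositionality    |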
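The three simplest context representations in the summary (one of c, average of c, concatenation) can be sketched with NumPy as follows. The embedding matrix, vocabulary and function names are hypothetical and exist only for this example. The compositional representations used by LBL, NNLM and C&W involve additional learned layers and are not shown.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = ["we", "like", "deep", "learning"]
dim = 4
E = rng.normal(size=(len(vocab), dim))  # hypothetical embedding matrix, one row per word

def skipgram_context(E, context_ids, pick=0):
    """Skip-gram: a single context word represents the context."""
    return E[context_ids[pick]]

def cbow_context(E, context_ids):
    """CBOW: the context is the average of its word vectors (word order is lost)."""
    return E[context_ids].mean(axis=0)

def order_context(E, context_ids):
    """Order: the context is the concatenation of its word vectors (word order is kept)."""
    return E[context_ids].reshape(-1)

# Context of the target word "deep" in "we like deep learning".
context_ids = [0, 1, 3]
print(skipgram_context(E, context_ids).shape)
print(cbow_context(E, context_ids).shape)
print(order_context(E, context_ids).shape)
```

The averaged representation keeps the embedding dimension fixed regardless of context size, while the concatenated one grows with the number of context words.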

![Evaluation task: analogy](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-47.jpg)

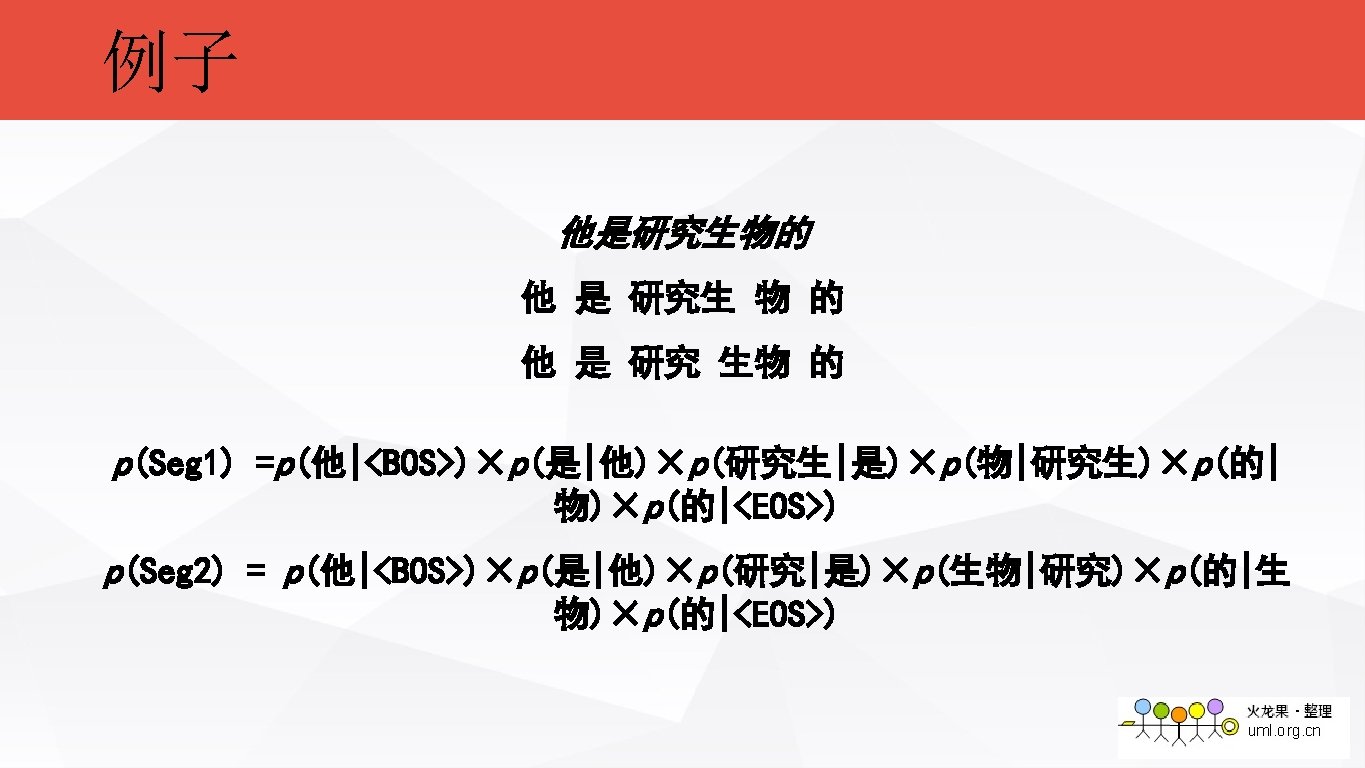

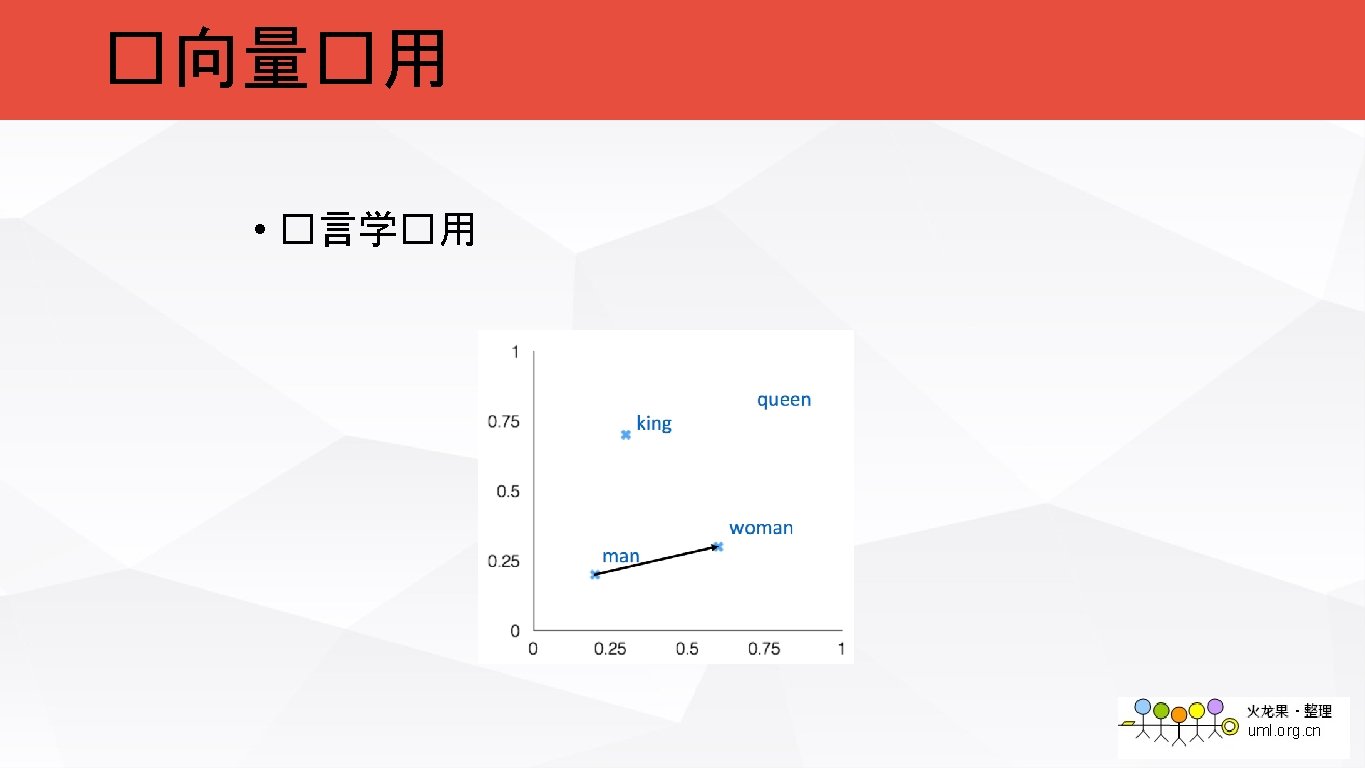

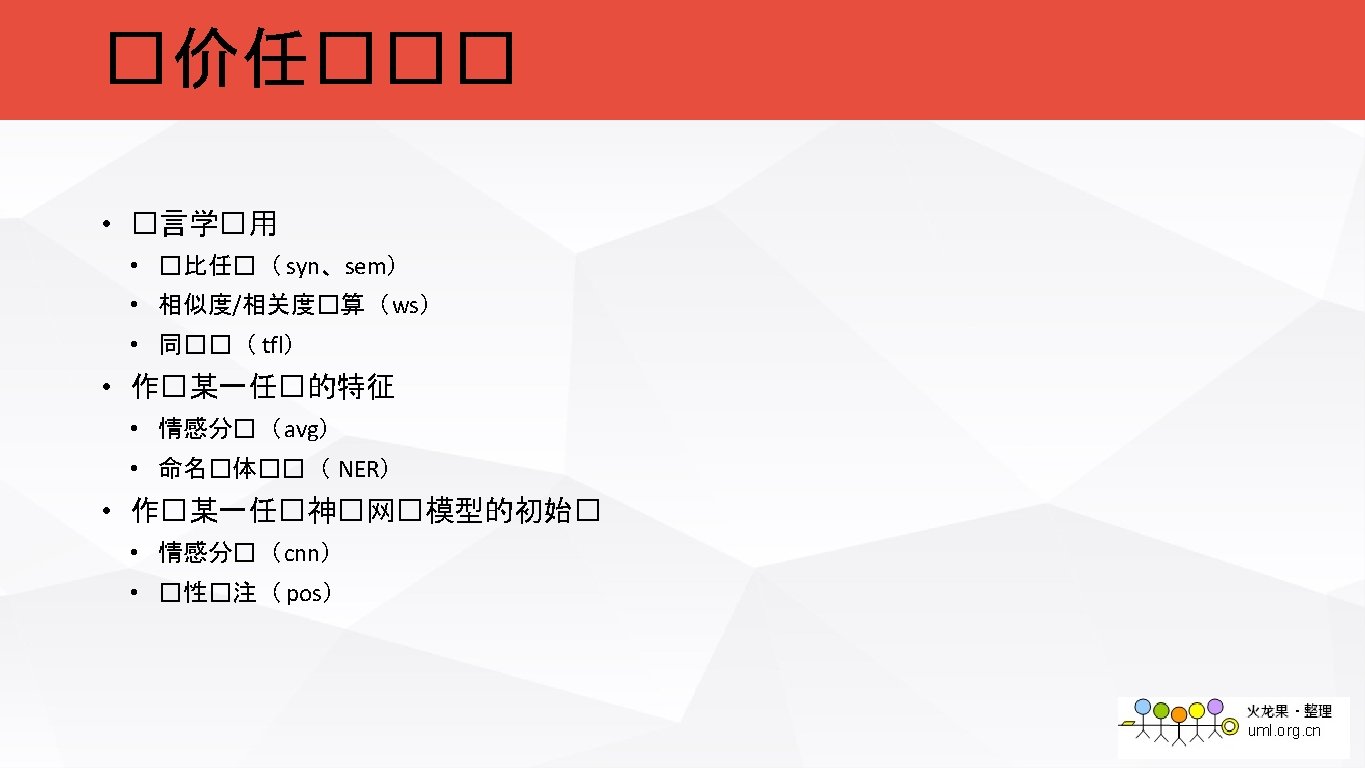

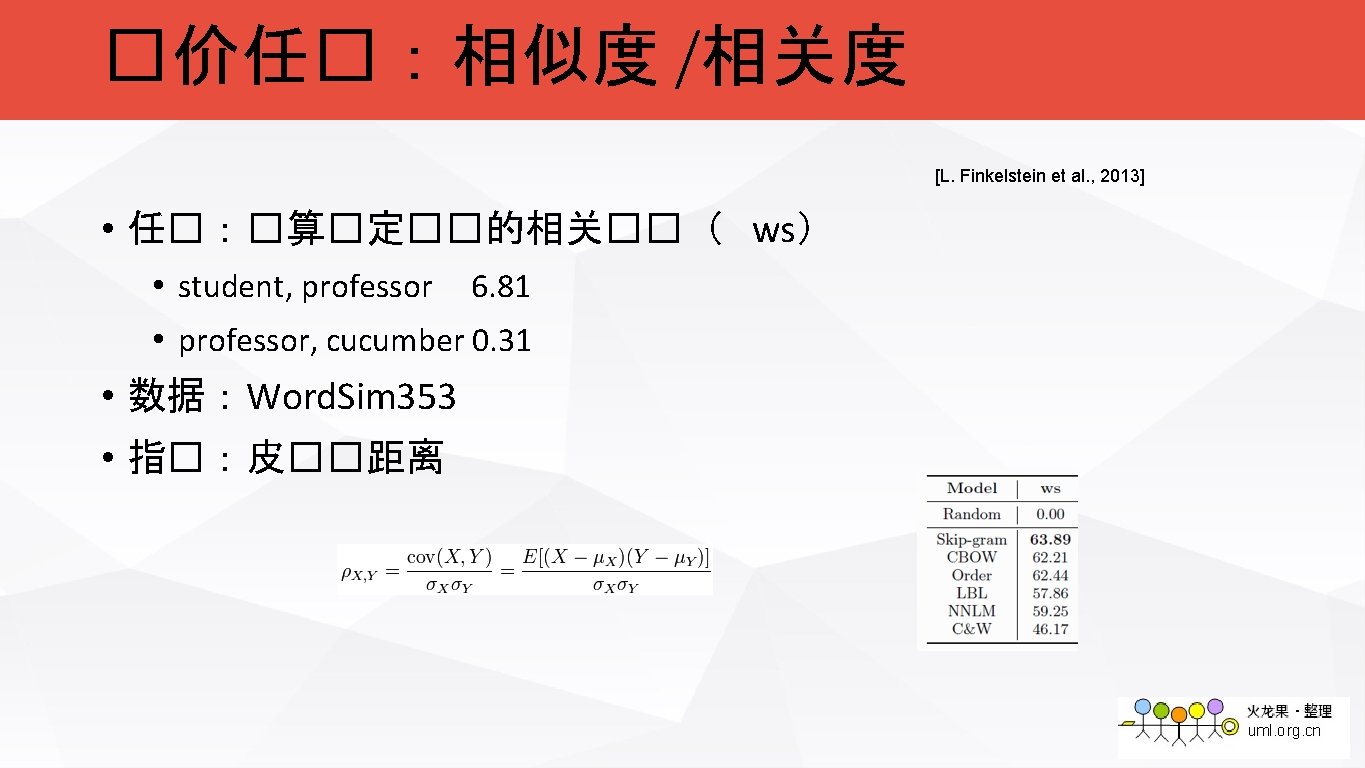

Evaluation task: analogy [Mikolov et al. 2013]
• Syntactic similarity (syn), 10.5k
  • predict – predicting ≈ dance – dancing
• Analogy relations, semantic (sem), 9k
  • king – queen ≈ man – woman
• Prediction
  • man – woman + queen → king
  • predict – dance + dancing → predicting
• Evaluation metric
  • Accuracy
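A minimal sketch of how such an analogy question can be answered and scored: add and subtract the vectors, then pick the nearest remaining word by cosine similarity. The 2-d vectors below are hand-made toy values (not trained embeddings), and the function names are assumptions for the example.

```python
import numpy as np

def analogy(vectors, a, b, c):
    """Return the word closest (by cosine) to a - b + c, excluding a, b and c."""
    target = vectors[a] - vectors[b] + vectors[c]
    target = target / np.linalg.norm(target)
    best_word, best_score = None, -np.inf
    for word, vec in vectors.items():
        if word in (a, b, c):
            continue
        score = float(vec @ target / np.linalg.norm(vec))
        if score > best_score:
            best_word, best_score = word, score
    return best_word

def accuracy(vectors, questions):
    """Fraction of (a, b, c, expected) questions answered correctly."""
    correct = sum(analogy(vectors, a, b, c) == d for a, b, c, d in questions)
    return correct / len(questions)

# Hypothetical toy vectors: axis 0 loosely encodes royalty, axis 1 gender.
vectors = {
    "king": np.array([1.0, 1.0]),
    "queen": np.array([1.0, -1.0]),
    "man": np.array([0.0, 1.0]),
    "woman": np.array([0.0, -1.0]),
    "apple": np.array([-1.0, 0.0]),
}

questions = [
    ("man", "woman", "queen", "king"),   # man - woman + queen -> king
    ("king", "queen", "woman", "man"),   # king - queen + woman -> man
]
print(analogy(vectors, "man", "woman", "queen"))
print(accuracy(vectors, questions))
```

Excluding the three question words from the candidates is the usual convention for this task, since the nearest vector to the target is otherwise often one of the inputs.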

![Evaluation task: synonyms](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-49.jpg)

Evaluation task: synonyms [T. Landauer & S. Dumais, 1997]
• Task: find the synonym of a given word (tfl), 80 multiple-choice questions
  • levied: A) imposed B) believed C) requested D) correlated
• Data: TOEFL synonym questions
• Metric: Accuracy
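One common way to answer such a multiple-choice question with word vectors is to choose the option with the highest cosine similarity to the question word. The sketch below assumes that approach; the 3-d vectors are invented toy values arranged so that "imposed" lies closest to "levied", and the function names are hypothetical.

```python
import numpy as np

def cosine(u, v):
    """Cosine similarity between two dense vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def choose_synonym(vectors, question, options):
    """Pick the option whose vector is most similar to the question word."""
    return max(options, key=lambda w: cosine(vectors[question], vectors[w]))

# Hypothetical toy vectors, for illustration only.
vectors = {
    "levied": np.array([0.9, 0.1, 0.0]),
    "imposed": np.array([0.8, 0.2, 0.1]),
    "believed": np.array([0.0, 1.0, 0.0]),
    "requested": np.array([0.3, 0.3, 0.9]),
    "correlated": np.array([0.0, 0.2, 1.0]),
}

options = ["imposed", "believed", "requested", "correlated"]
print(choose_synonym(vectors, "levied", options))
```

Accuracy on the task is then the fraction of the 80 questions for which the chosen option matches the answer key.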

![Evaluation task: sentiment classification](http://slidetodoc.com/presentation_image_h2/3f932abd0109d43fbd540d1f65c6bf9c/image-52.jpg)

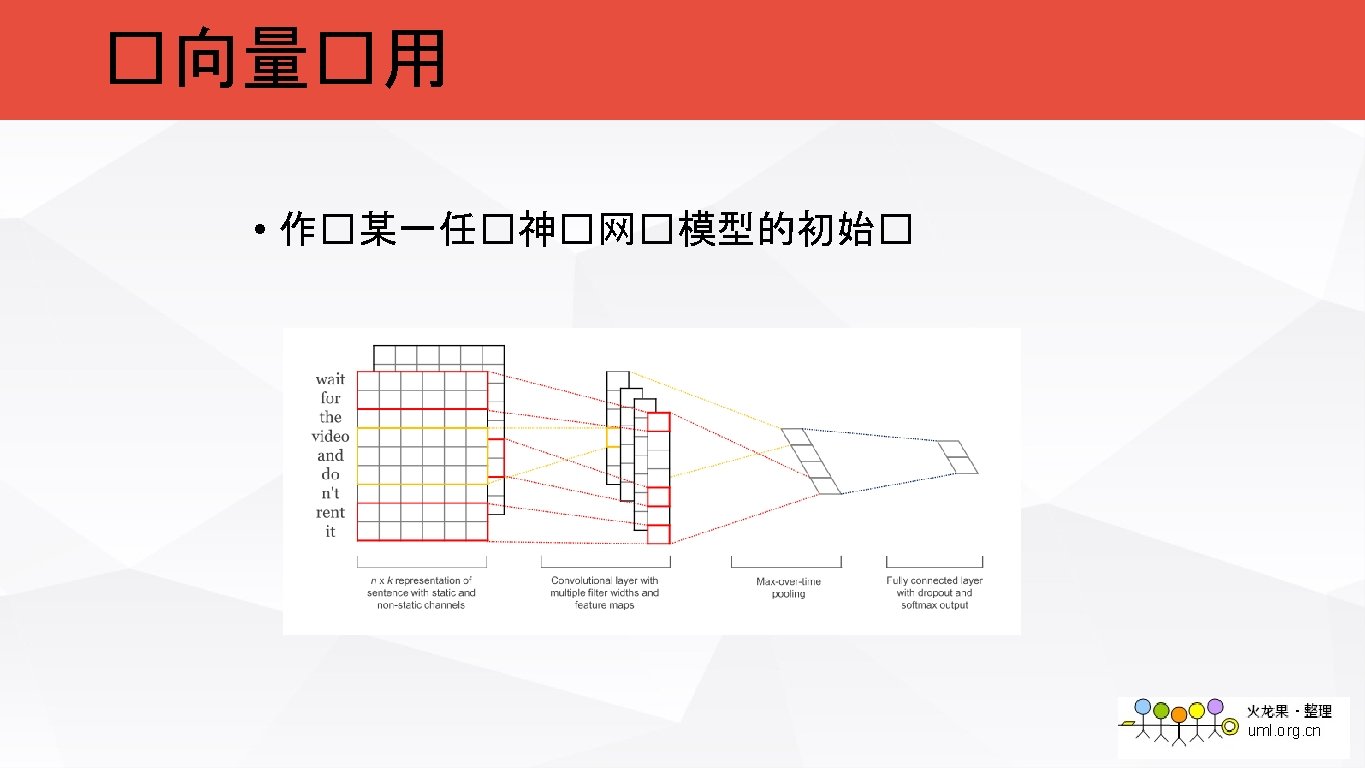

Evaluation task: sentiment classification [Y. Kim, 2014]
• Task: sentiment classification, 5 classes (cnn)
• Model: Convolutional Neural Network
• Data: Stanford Sentiment Treebank
  • 6920 Train, 872 Dev, 1821 Test
• Metric: Accuracy

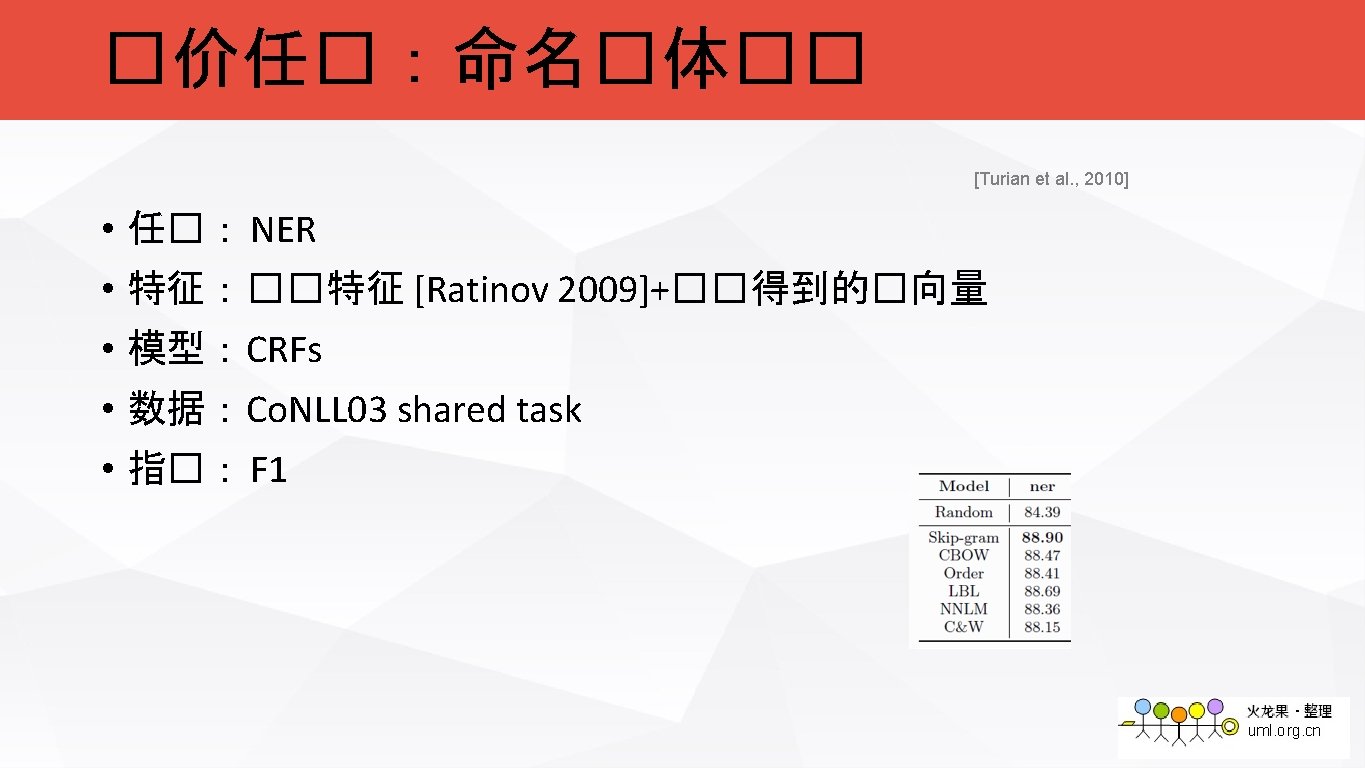

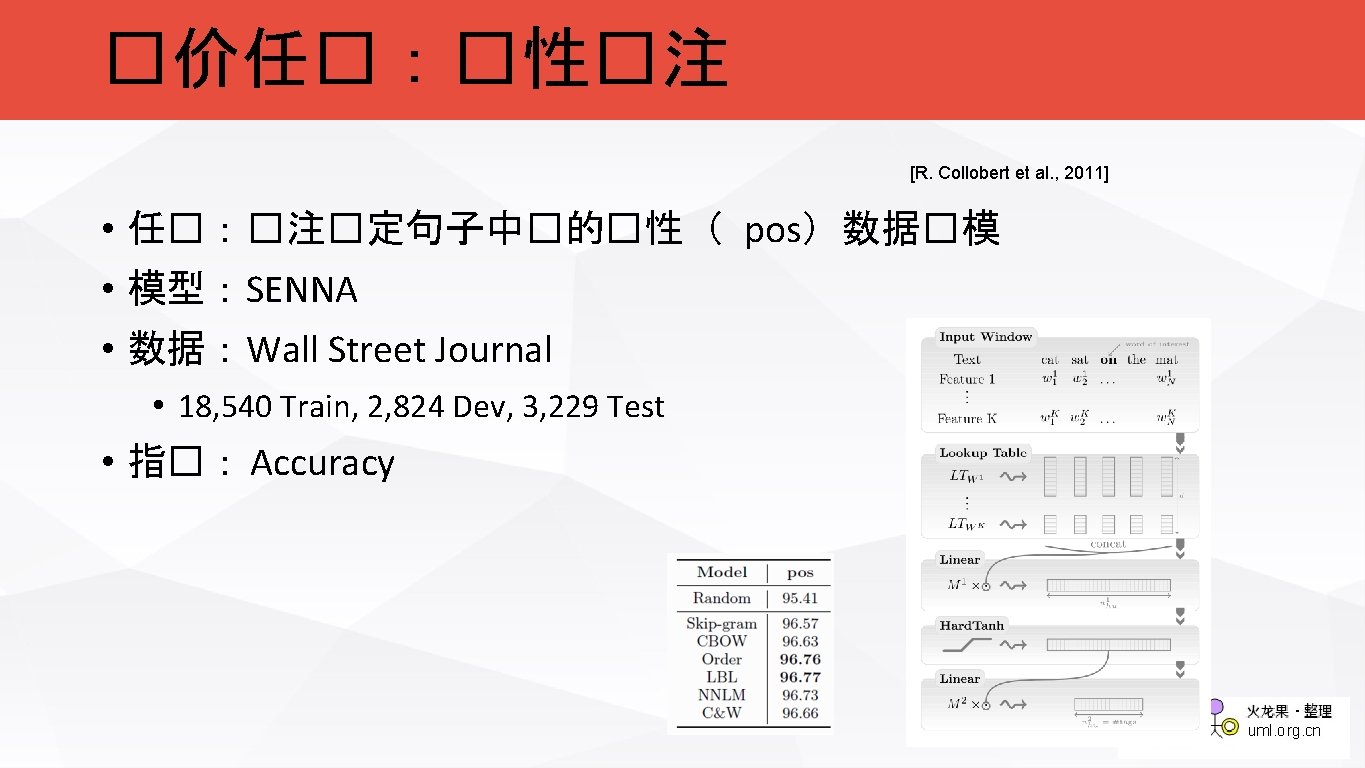

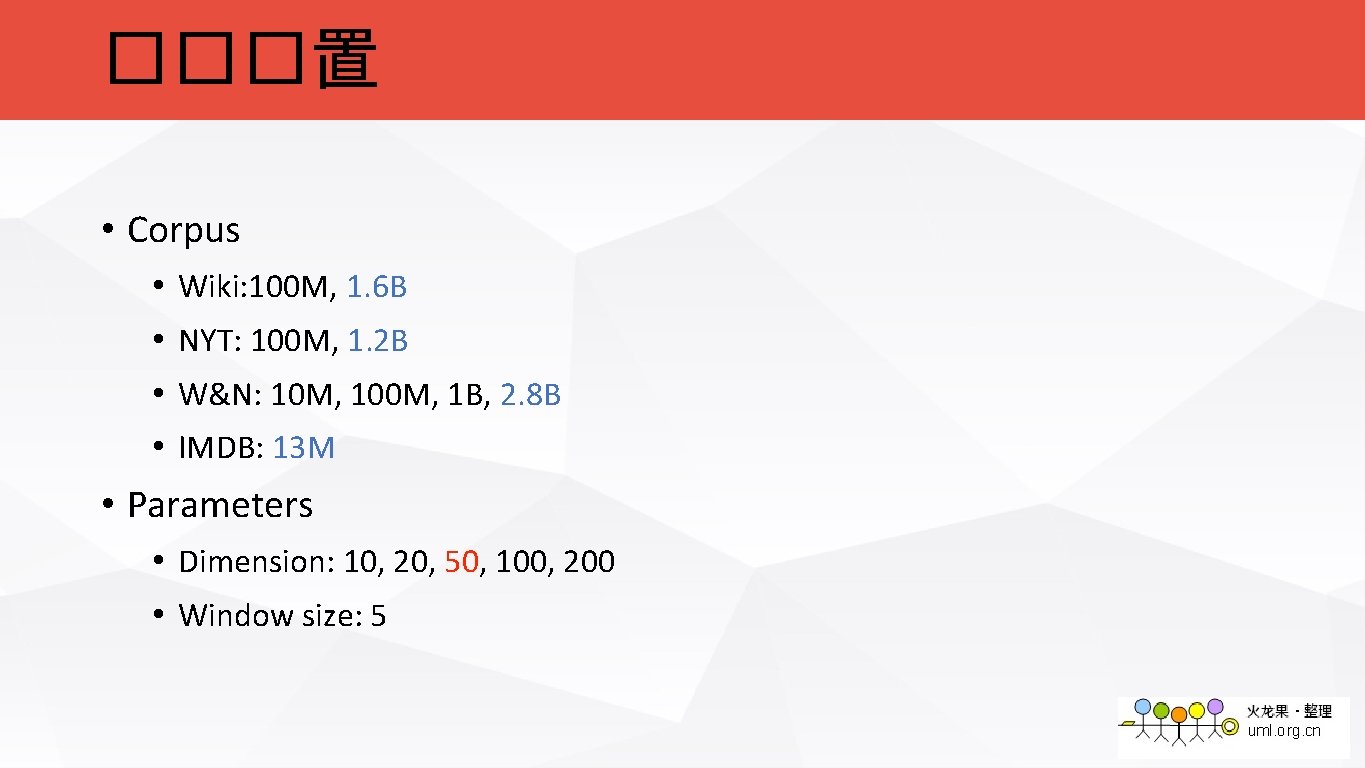

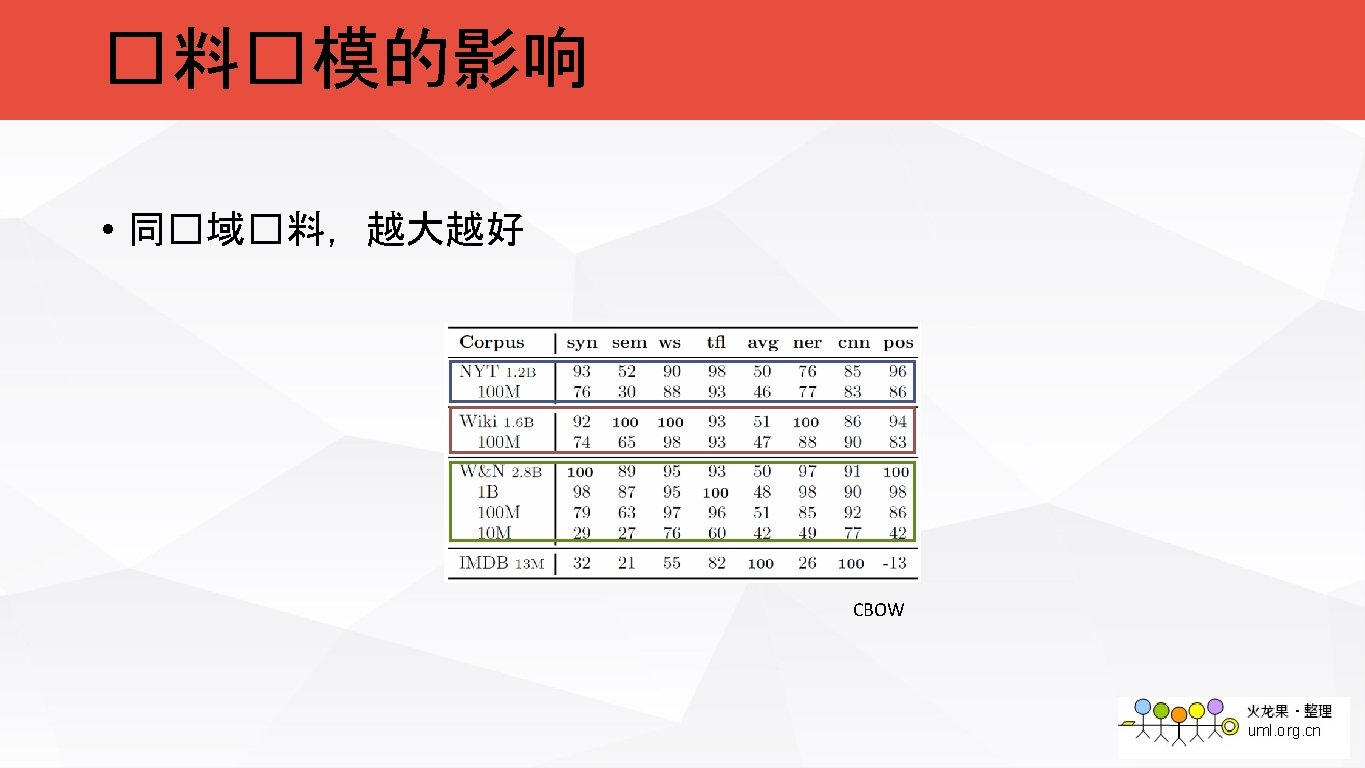

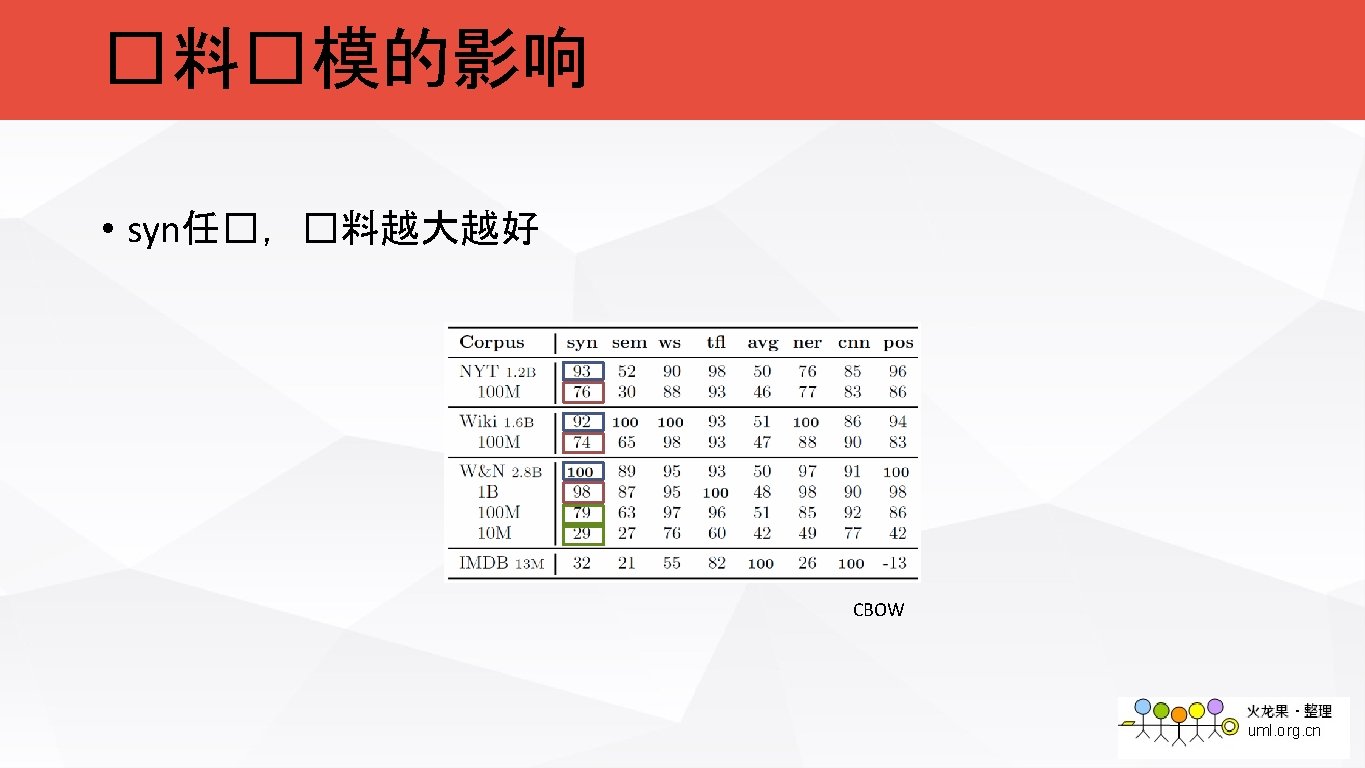

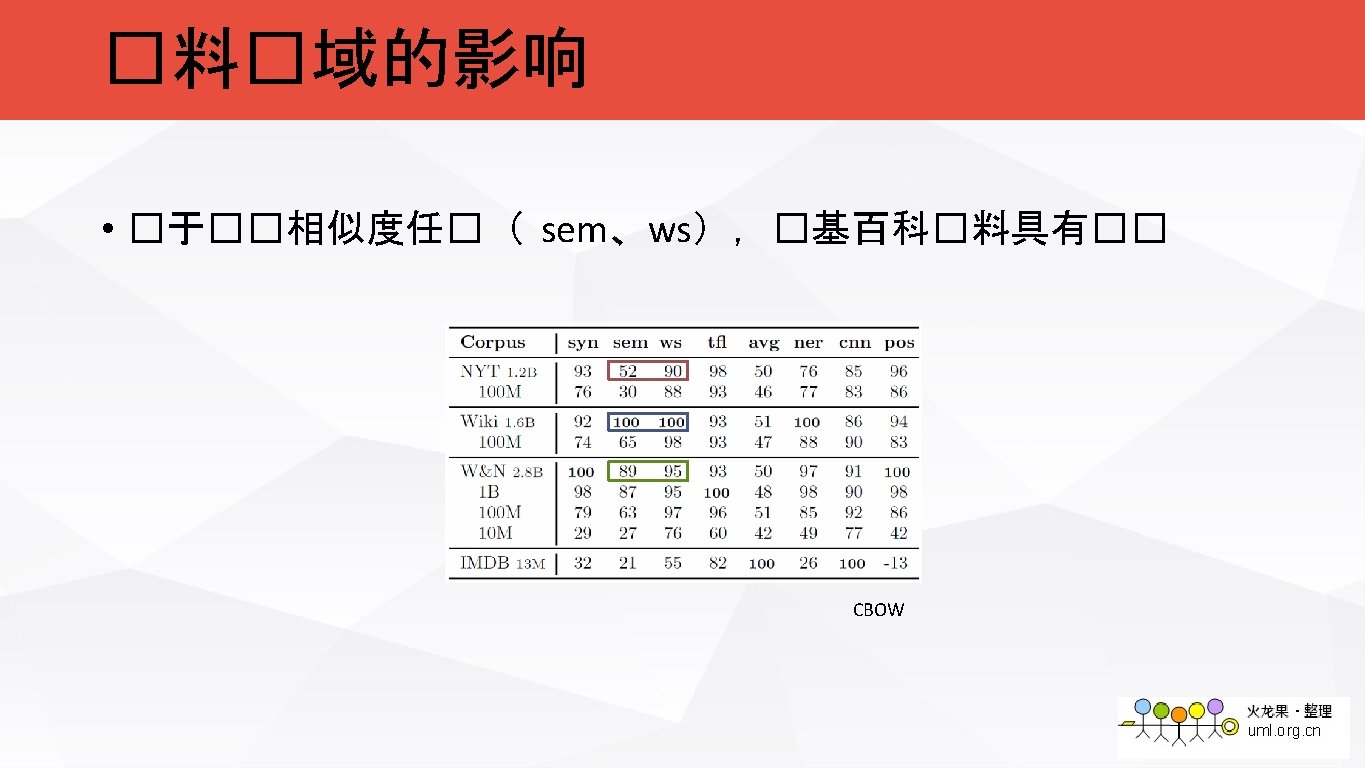

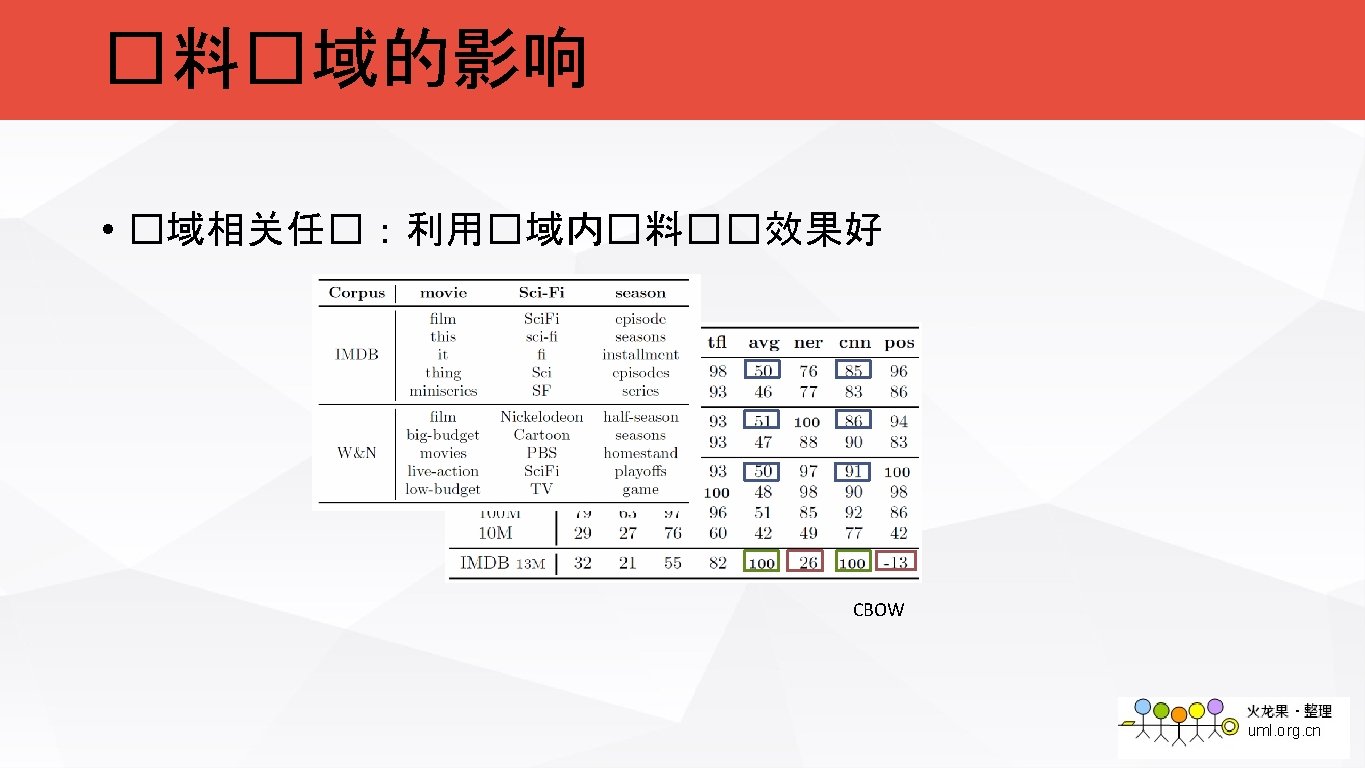

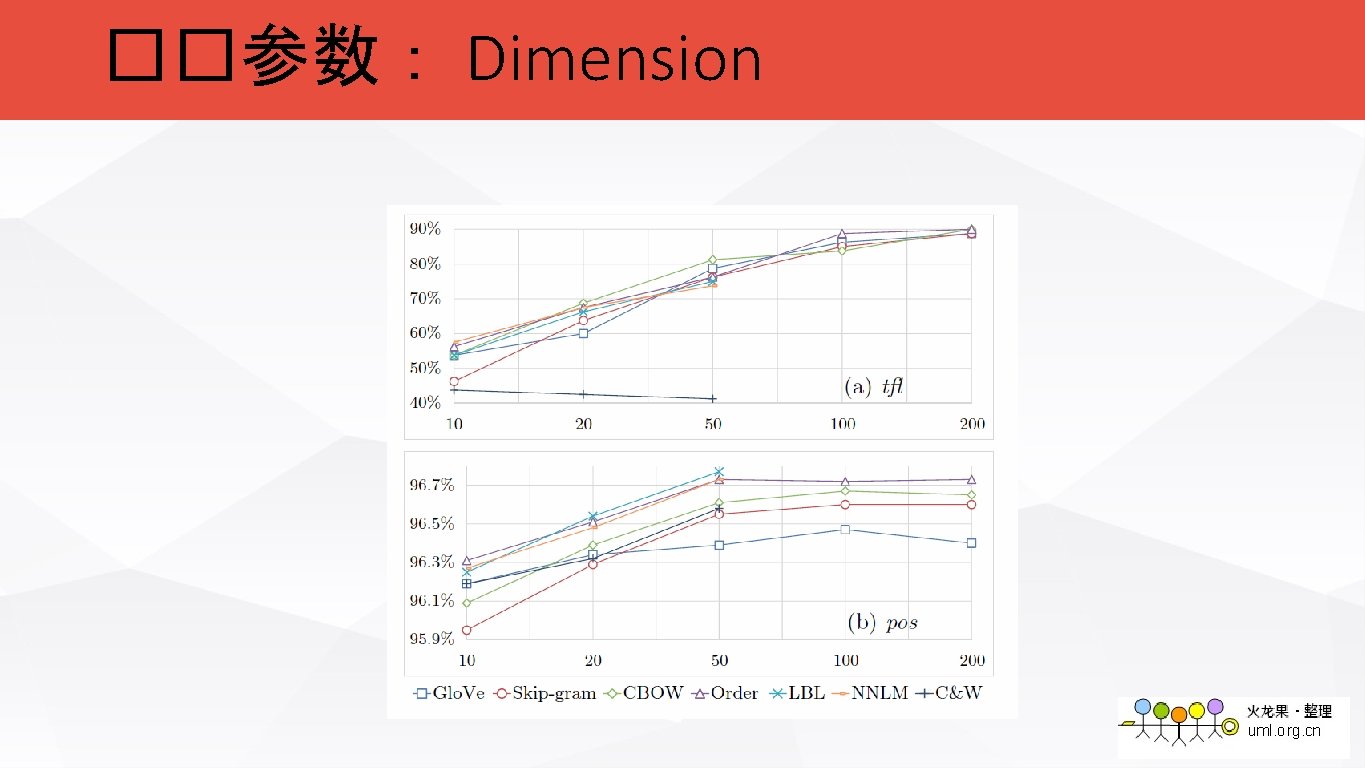

Experimental setup
• Corpus
  • Wiki: 100M, 1.6B
  • NYT: 100M, 1.2B
  • W&N: 10M, 100M, 1B, 2.8B
  • IMDB: 13M
• Parameters
  • Dimension: 10, 20, 50, 100, 200
  • Window size: 5

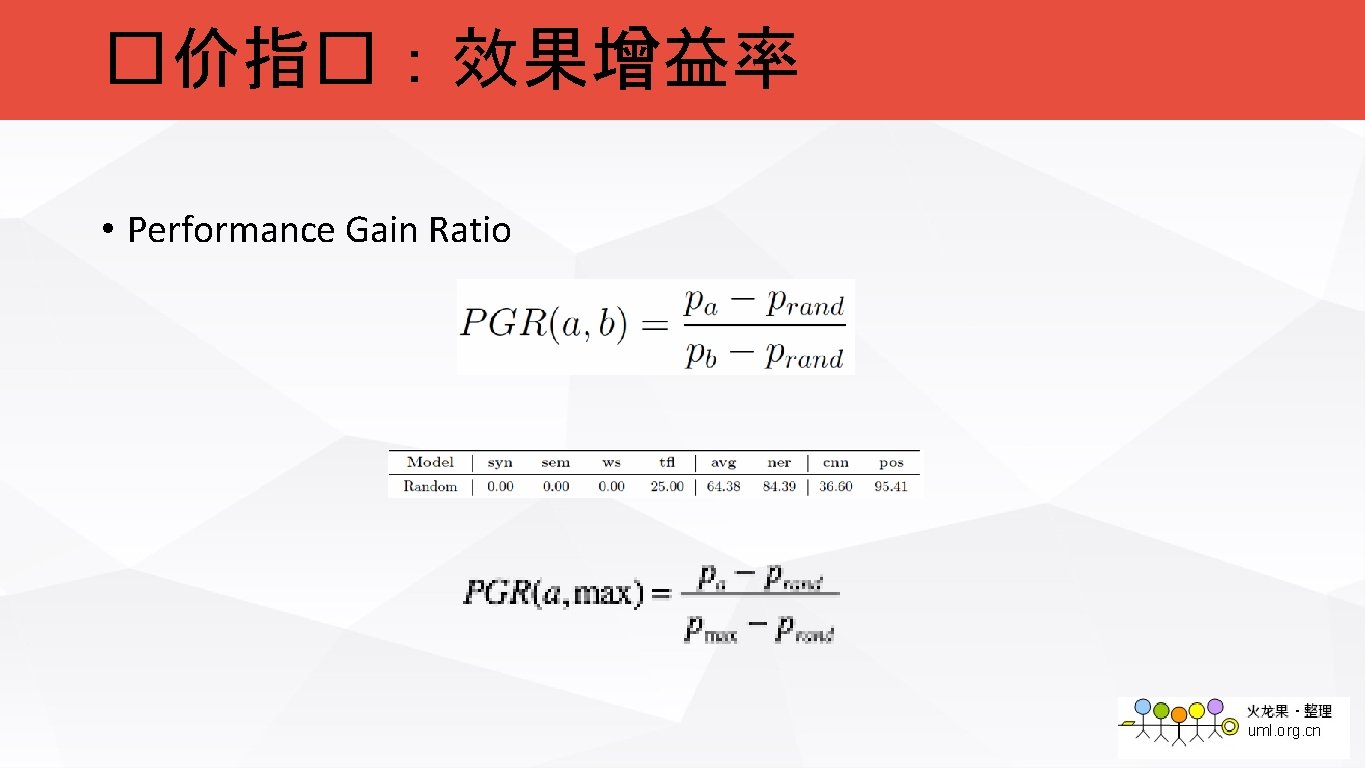

Evaluation metric: Performance Gain Ratio

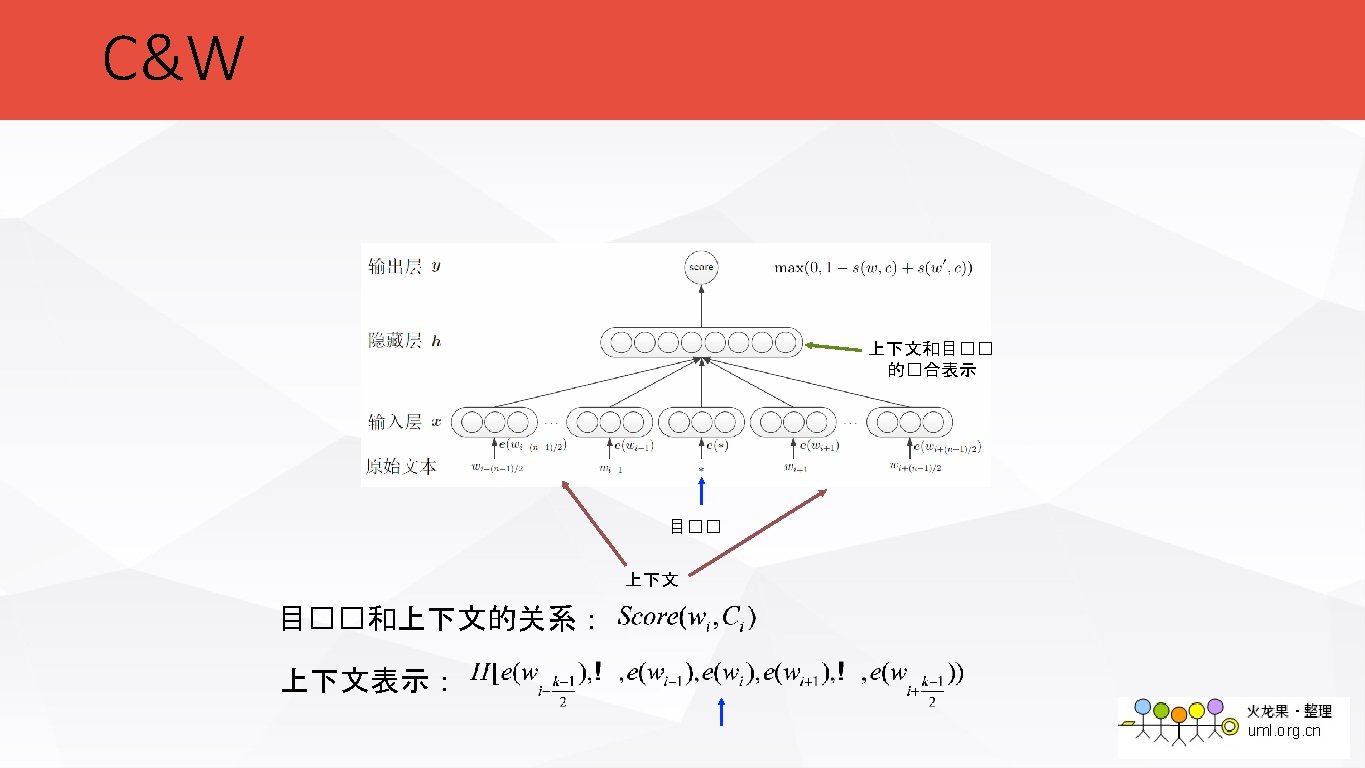

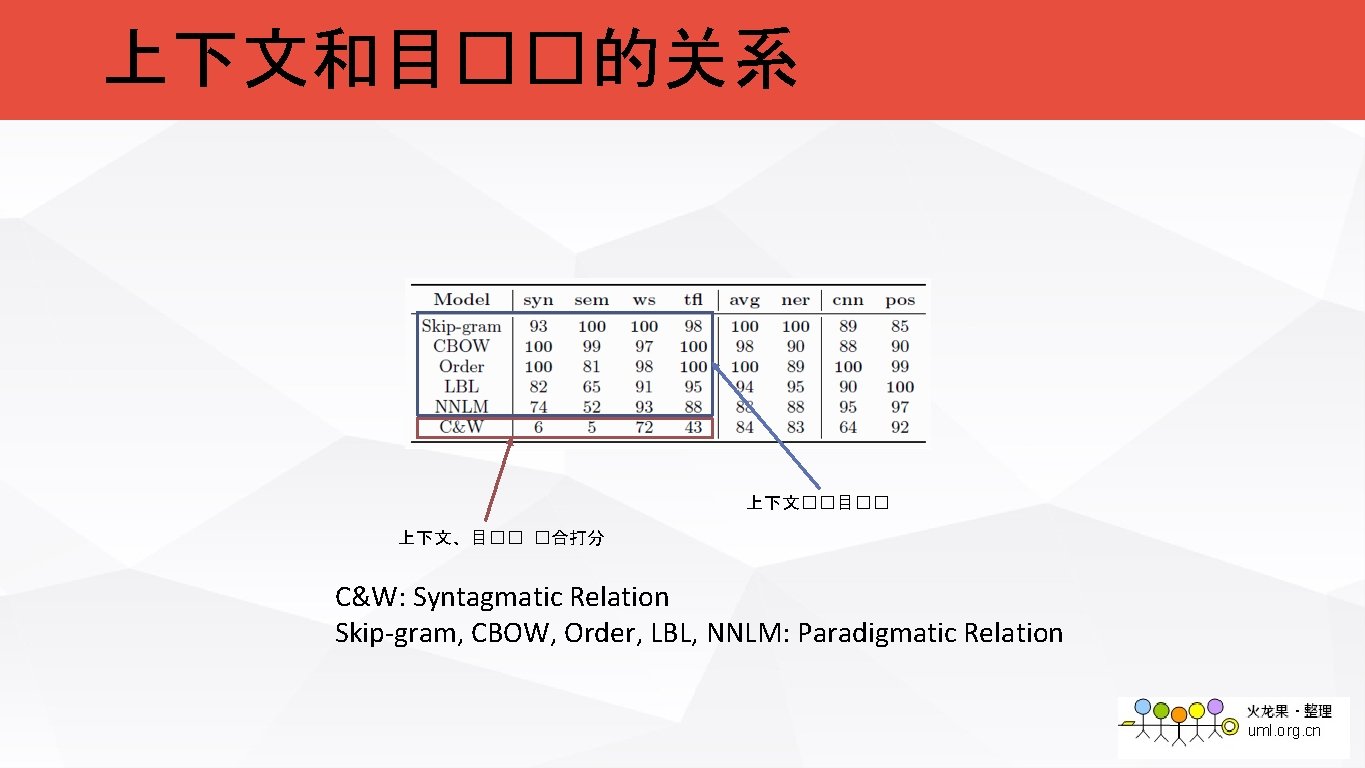

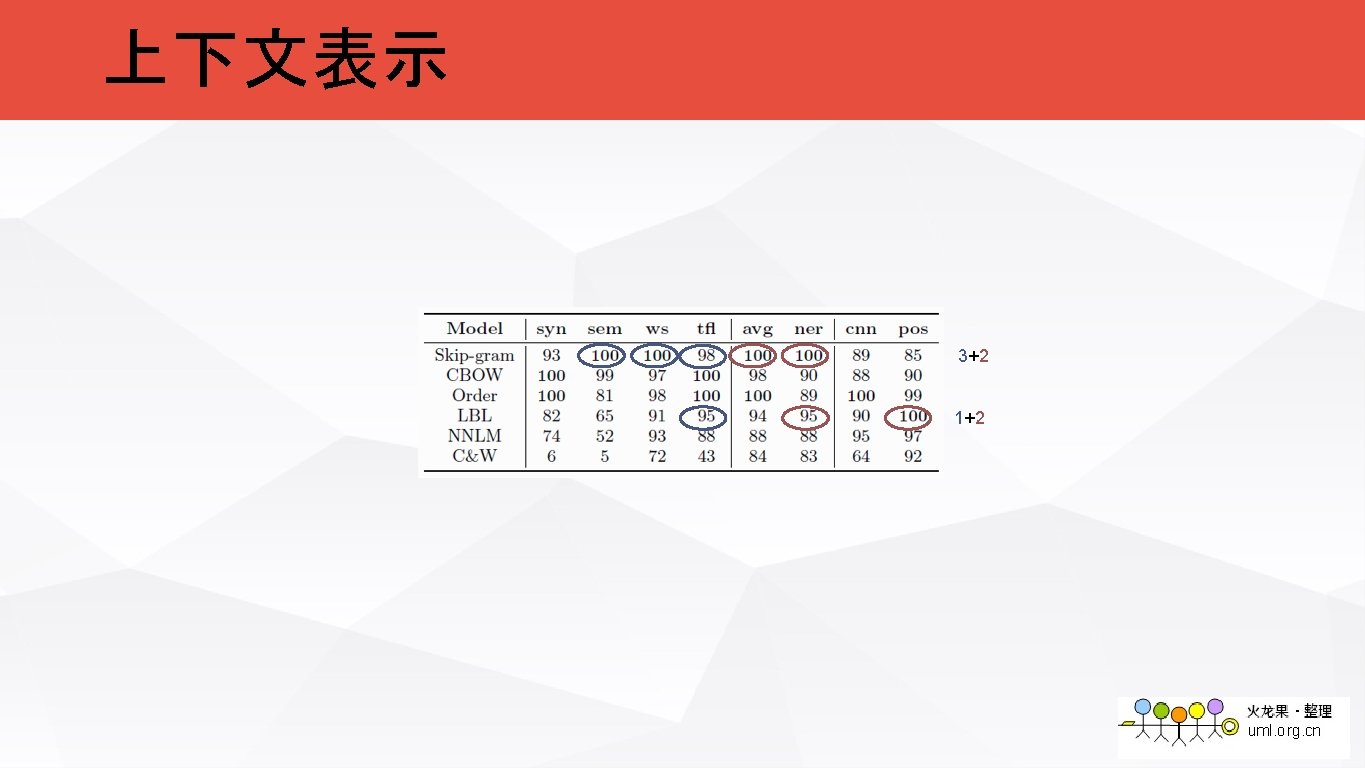

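Relation between context and target word
• Context predicts the target word (Skip-gram, CBOW, Order, LBL, NNLM): Paradigmatic Relation
• Context and target word are scored jointly (C&W): Syntagmatic Relation

Relation between context and target word
• Paradigmatic relation
• Syntagmatic relation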

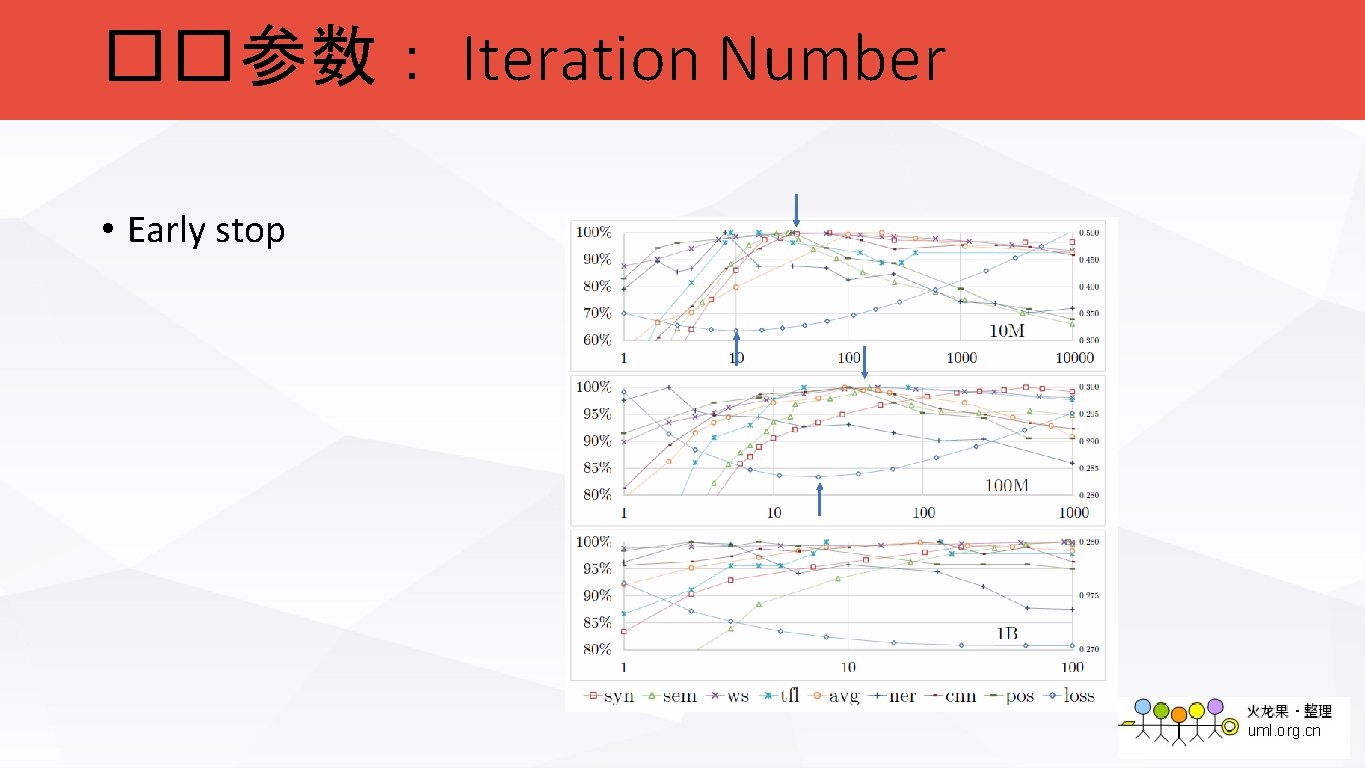

Training parameters: number of iterations
• Early stopping

Training parameters: dimension

Thanks
