wav2letter++: Facebook’s fast open-source speech recognition system

Vineel Pratap, Awni Hannun, Qiantong Xu, Jeff Cai, Jacob Kahn, Gabriel Synnaeve, Vitaliy Liptchinsky, Ronan Collobert
Facebook AI Research
Several slides borrowed from Ronan Collobert, who was not hurt in the process

Agenda
Research
• Automatic Speech Recognition: overview and how it works
• Acoustic model architectures
• Training, ASG loss vs CTC loss (criteria)
• Language models, decoding
Toolkit
• Overview, design
• Flashlight
Benchmarks
• Word Error Rate
• Training/decoding speed

research

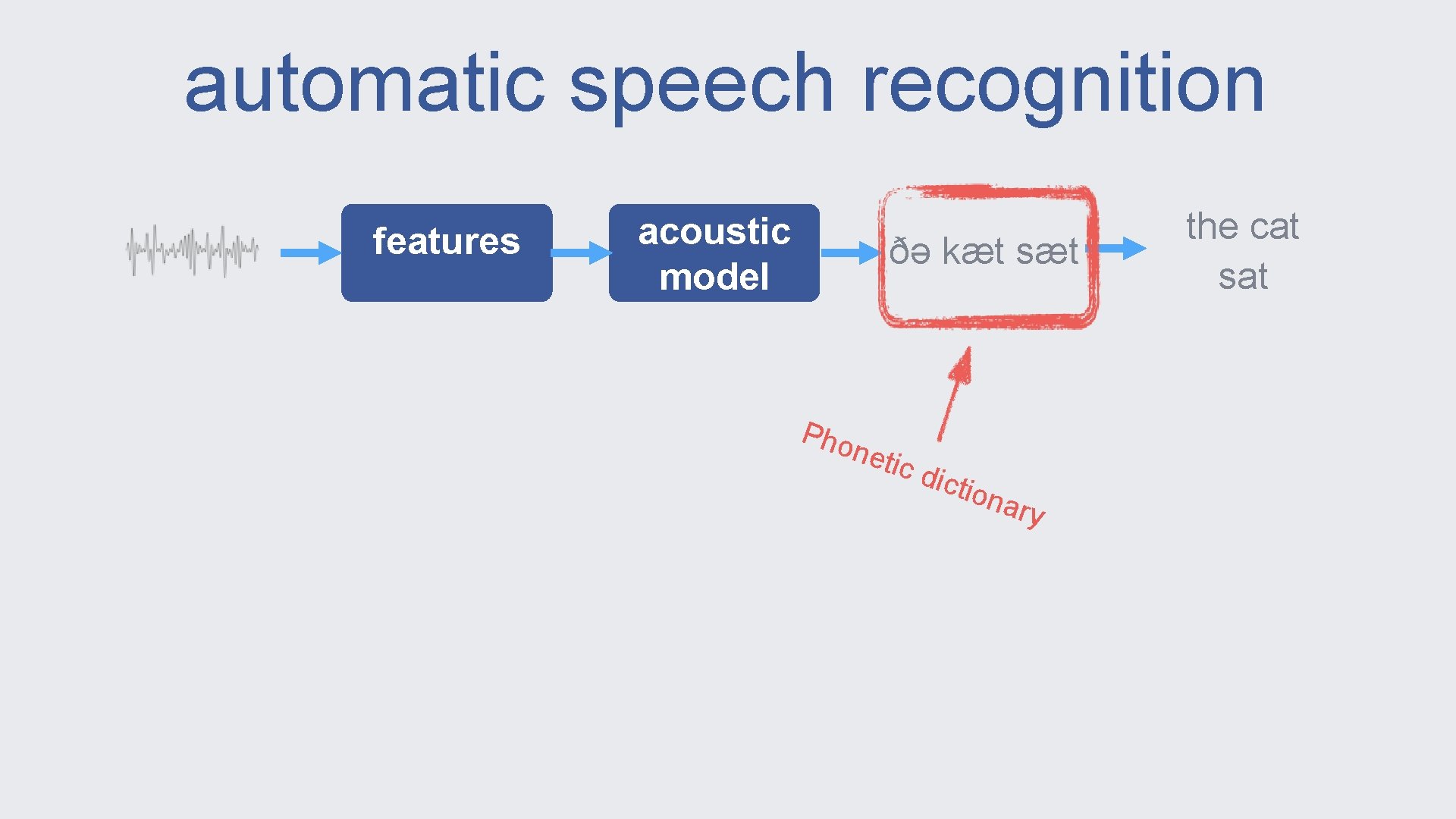

automatic speech recognition
features → acoustic model → ðə kæt sæt → phonetic dictionary → the cat sat

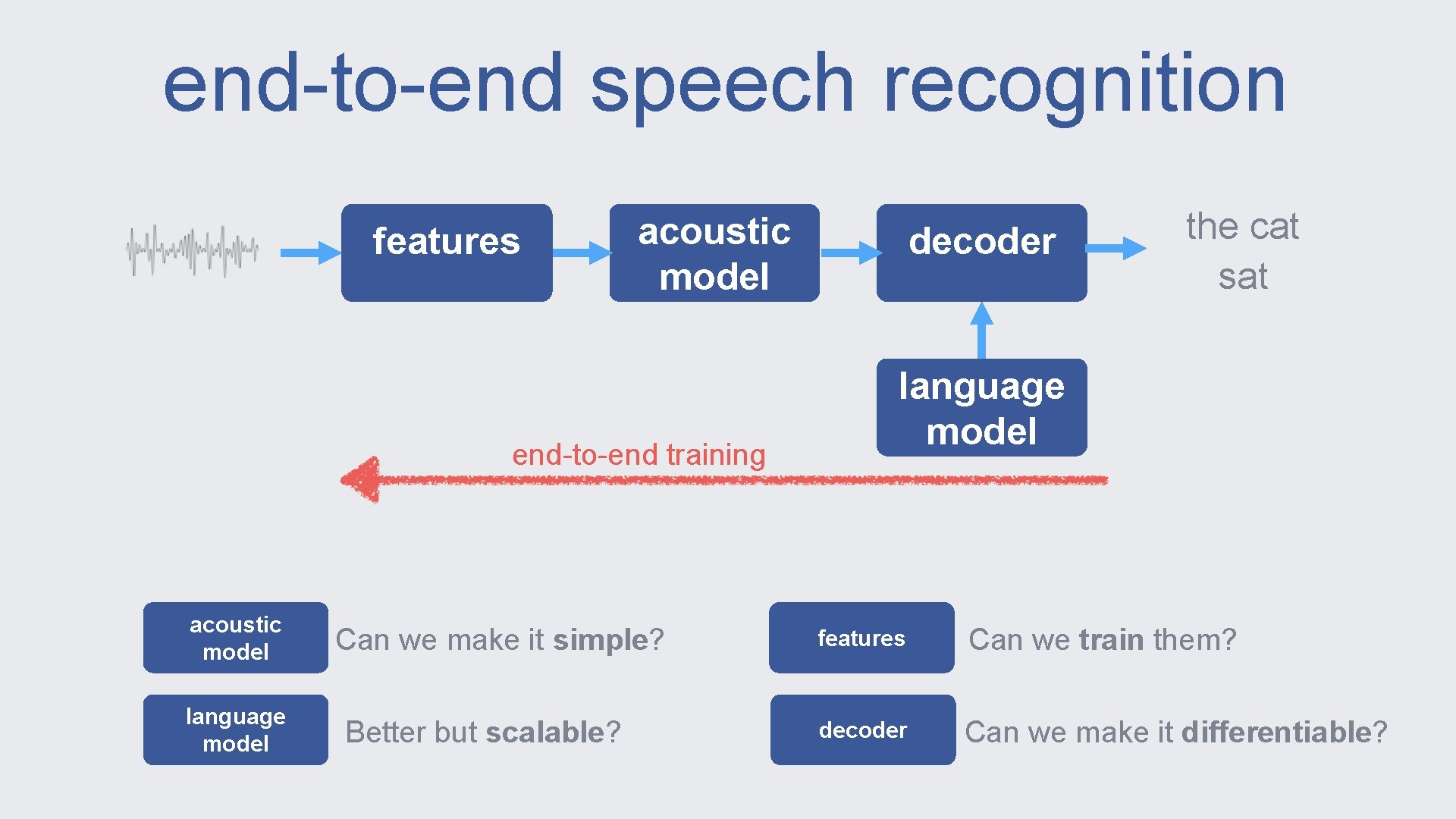

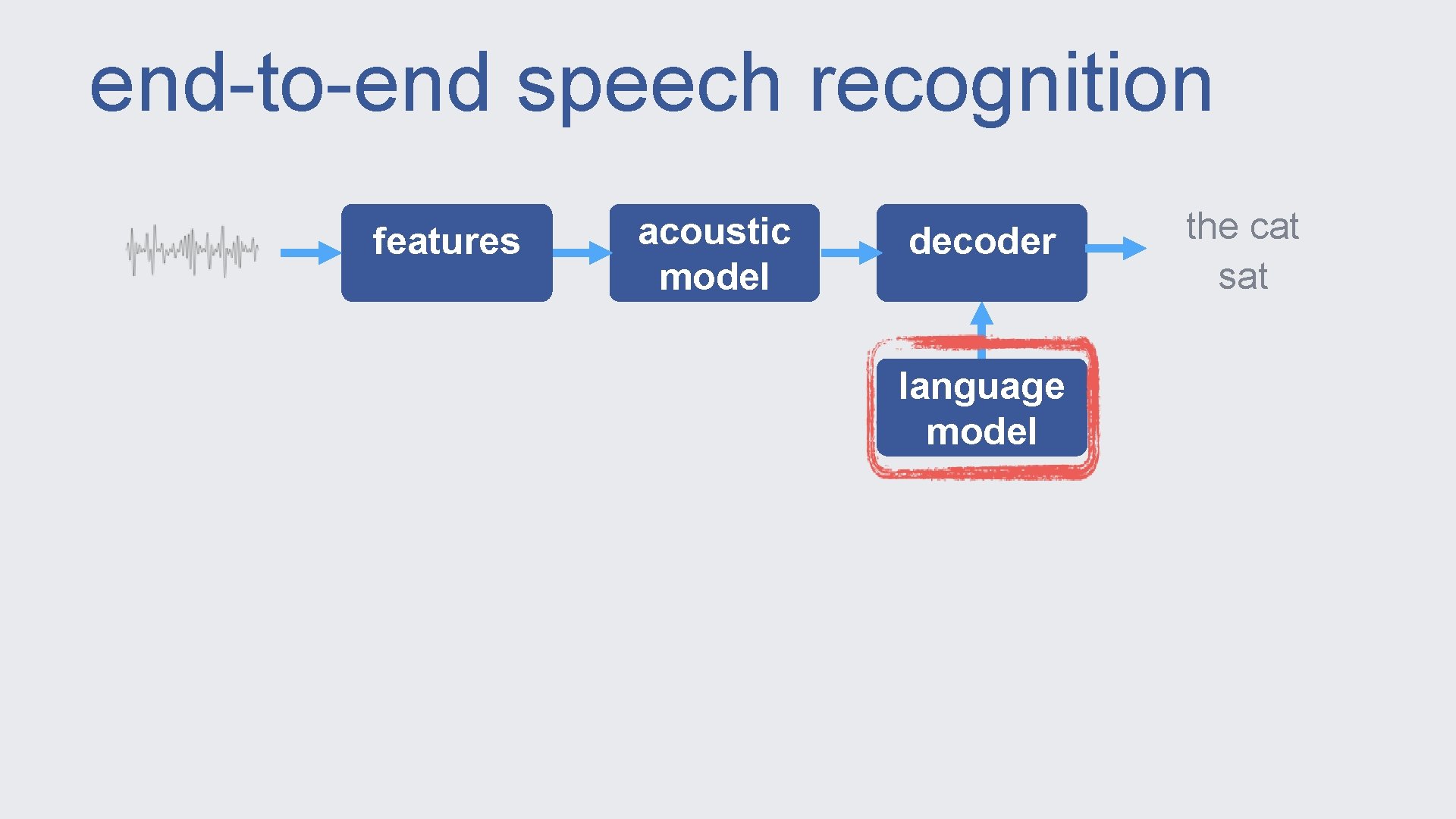

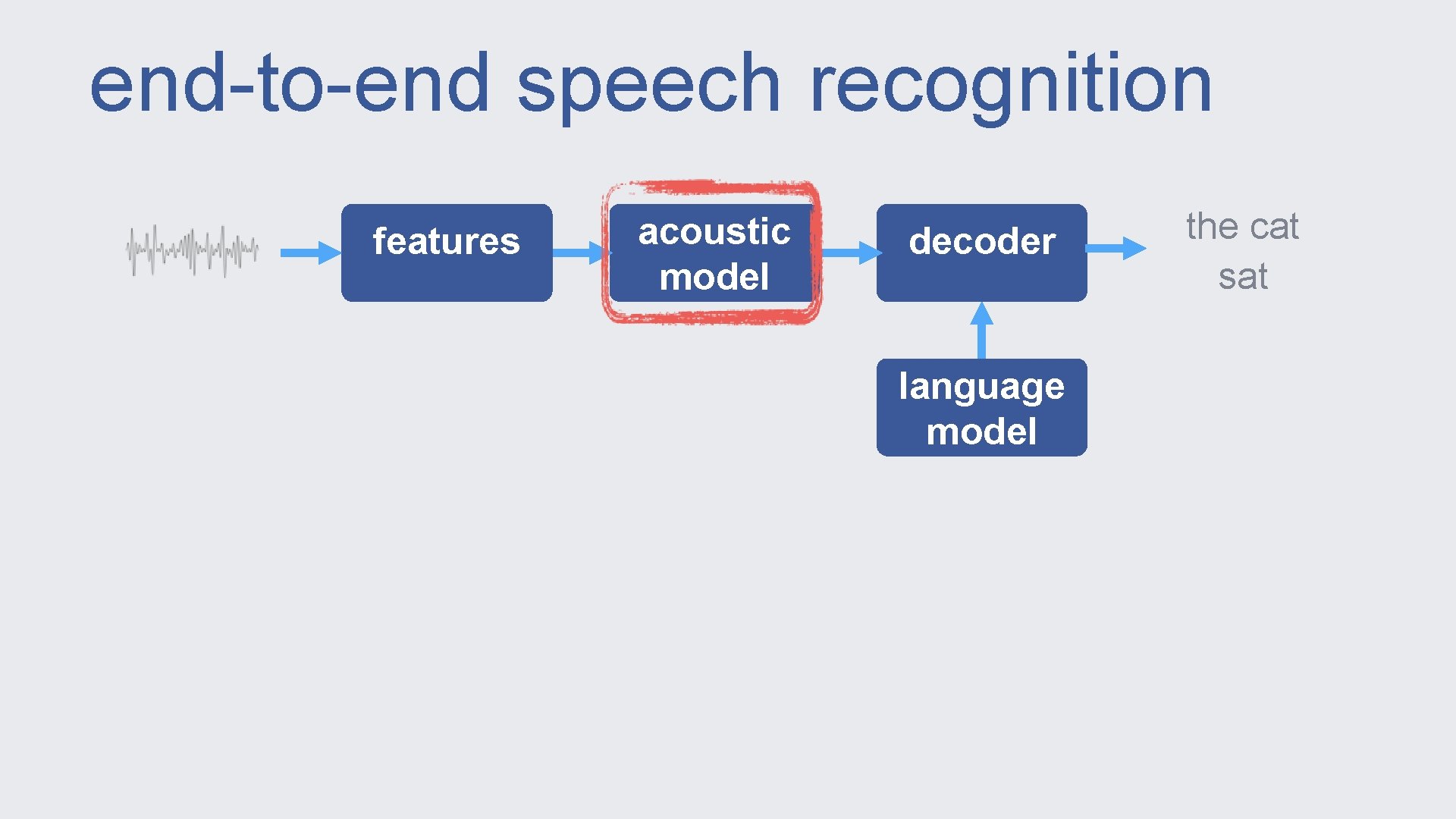

end-to-end speech recognition
features → acoustic model → decoder (with language model) → the cat sat
• features: Can we train them?
• acoustic model: Can we make it simple?
• language model: Better but scalable?
• decoder: Can we make it differentiable?
• acoustic model, language model, decoder: end-to-end training

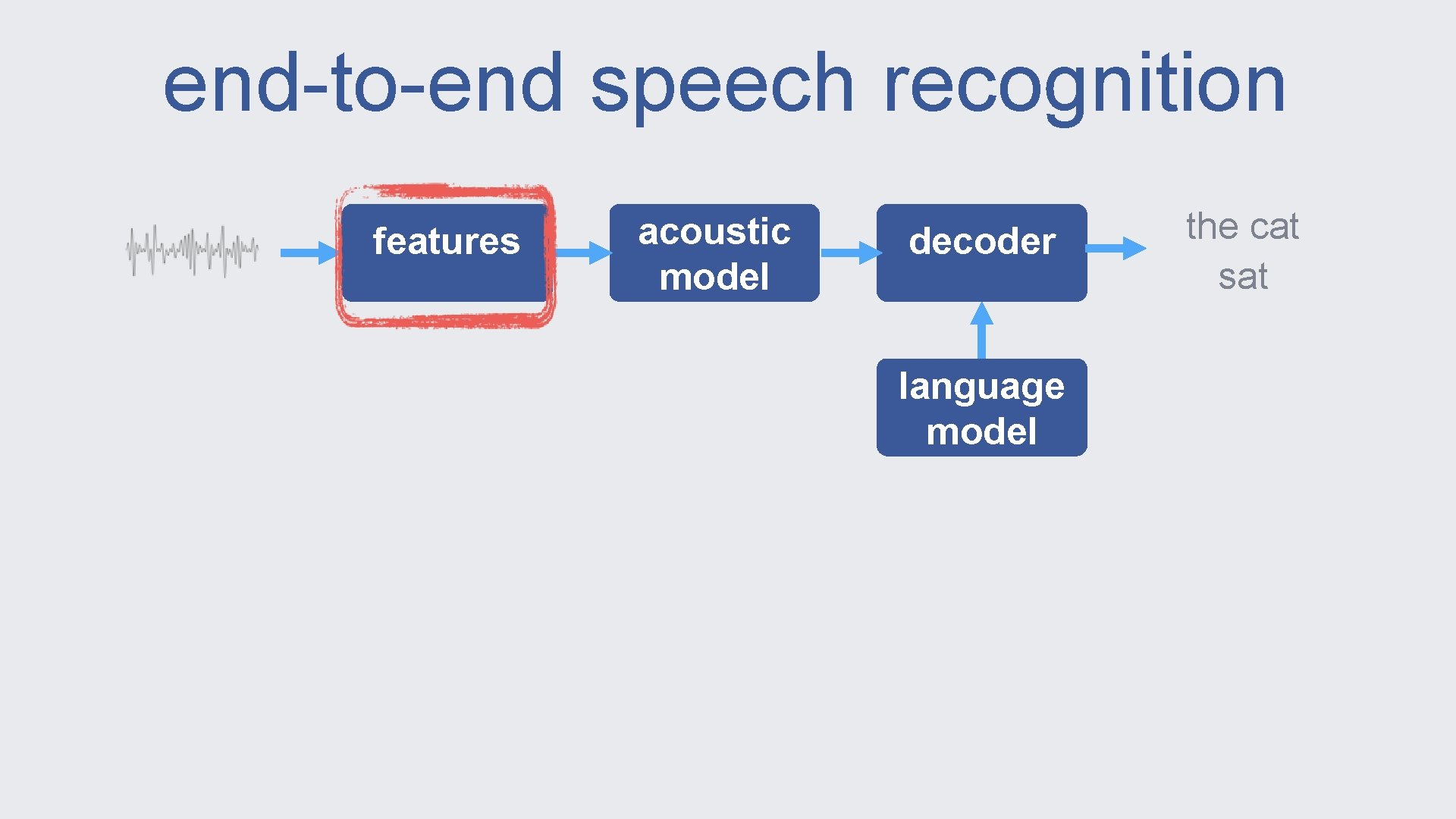

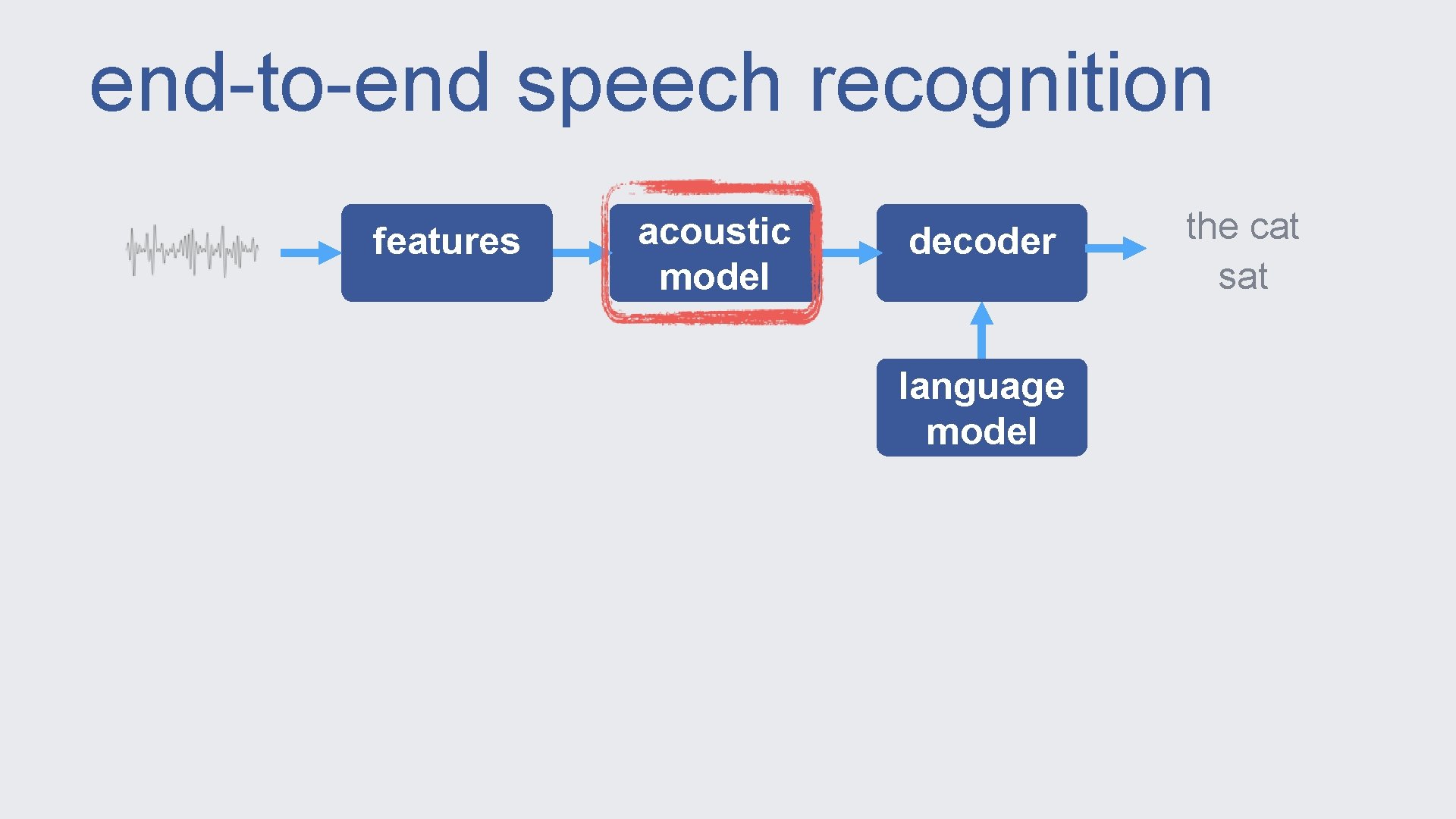

end-to-end speech recognition
features → acoustic model → decoder (with language model) → the cat sat

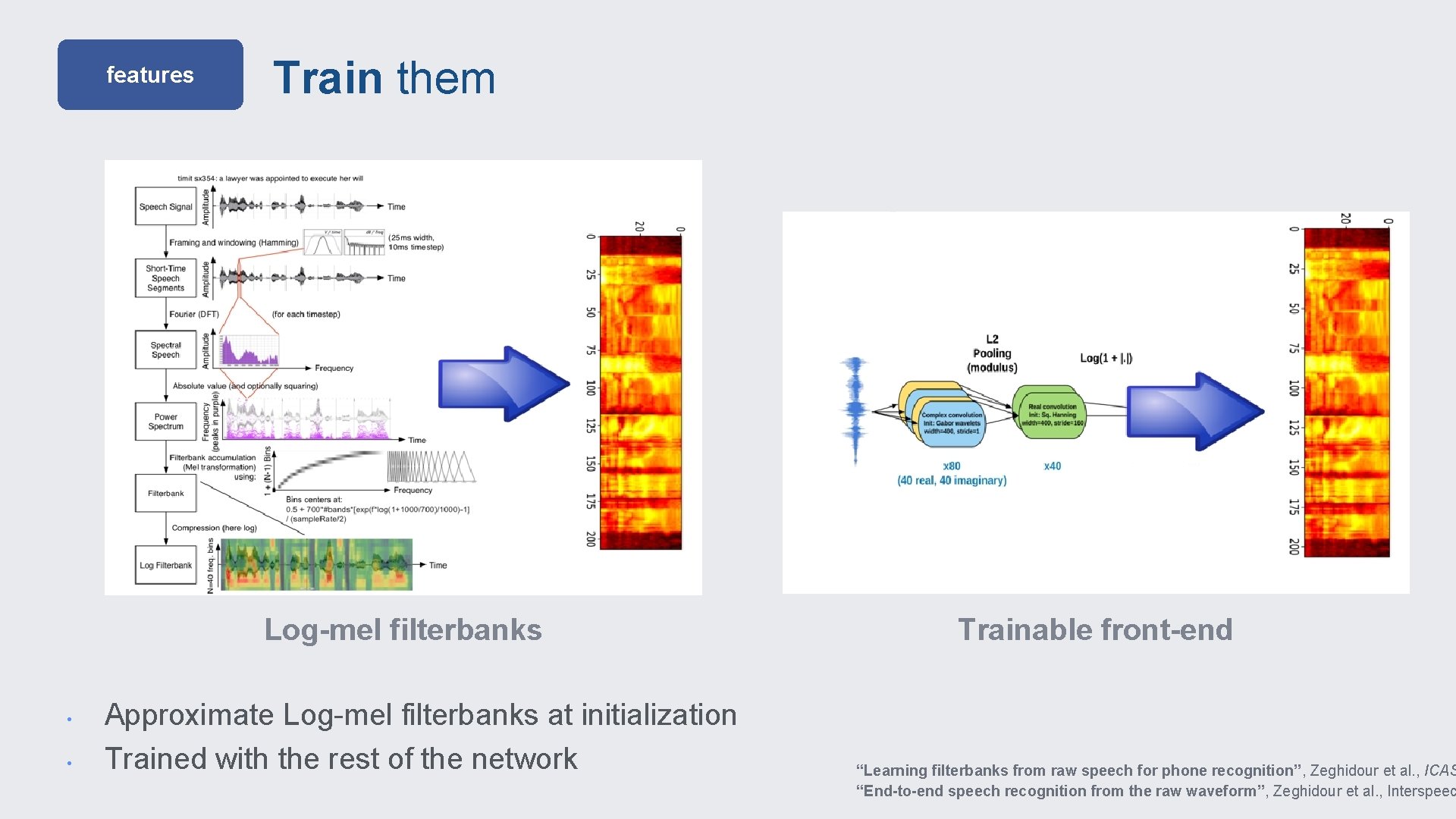

features: Train them
• Log-mel filterbanks
• Trainable front-end
  • Approximates log-mel filterbanks at initialization
  • Trained with the rest of the network
“Learning filterbanks from raw speech for phone recognition”, Zeghidour et al., ICAS
“End-to-end speech recognition from the raw waveform”, Zeghidour et al., Interspeec

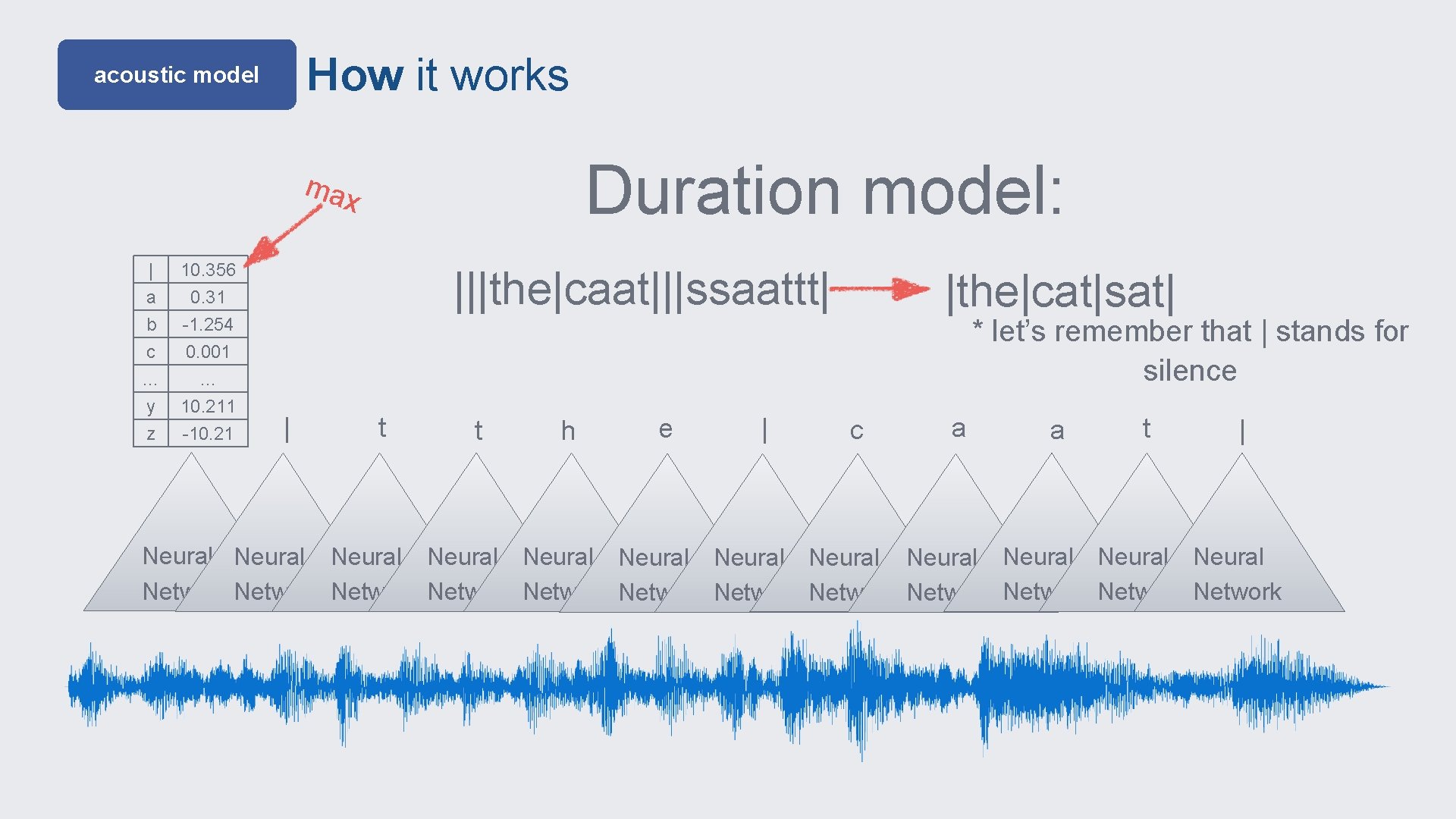

acoustic model: How it works
• A neural network scores every letter for each frame of features, e.g. | = 10.356, a = 0.31, b = -1.254, c = 0.001, …, y = 10.211, z = -10.21
• max over the letter scores of each frame: | t t h e | c a a t | …
• Duration model: |||the|caat|||ssaattt| → |the|cat|sat|
* let’s remember that | stands for silence
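The per-frame max and the collapsing of repeated letters shown on this slide can be sketched in a few lines. This is a minimal illustration, not the wav2letter++ implementation: the function names `greedy_letters` and `collapse_repeats` are hypothetical, and frame scores are assumed to arrive as one dictionary per frame.

```python
def greedy_letters(frame_scores):
    """Pick the highest-scoring letter for every frame (the 'max' step)."""
    return "".join(max(scores, key=scores.get) for scores in frame_scores)


def collapse_repeats(frame_letters):
    """Merge runs of the same letter, so a letter held over several frames counts once."""
    out = []
    for ch in frame_letters:
        if not out or out[-1] != ch:
            out.append(ch)
    return "".join(out)
```

Applied to the slide's example, `collapse_repeats("|||the|caat|||ssaattt|")` yields `|the|cat|sat|`. Plain collapsing cannot tell a long letter from a doubled one, which is one reason the training criteria discussed later need a blank or repetition mechanism.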

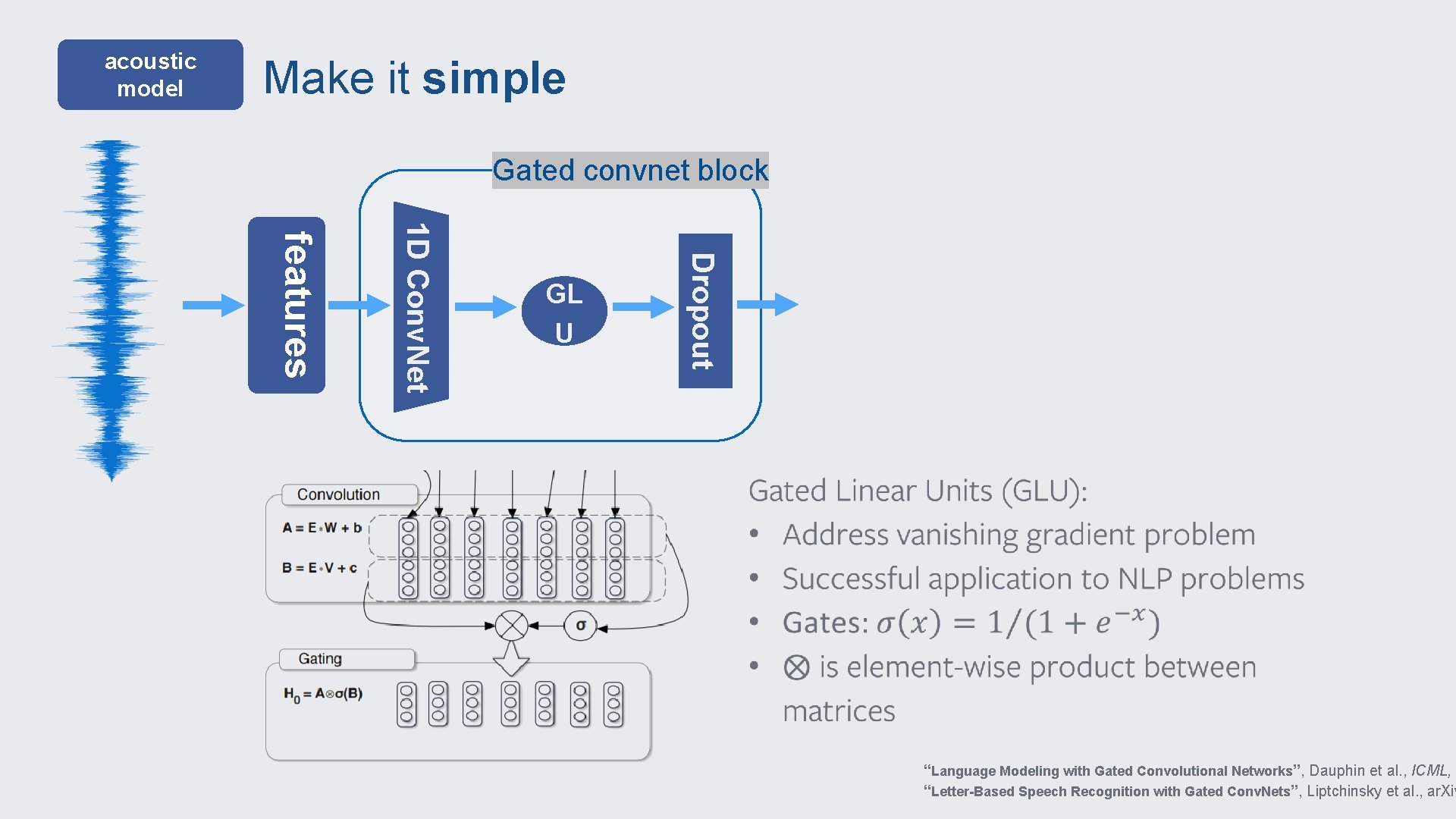

acoustic model: Make it simple
Gated convnet block: features → 1D ConvNet → GLU → Dropout
“Language Modeling with Gated Convolutional Networks”, Dauphin et al., ICML, 2
“Letter-Based Speech Recognition with Gated ConvNets”, Liptchinsky et al., arXiv
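The gating step in the block above can be sketched as follows. This is a rough illustration that assumes the common convention of splitting the convolution output channels into two halves, one carrying values and one carrying gates; the function name `glu` is hypothetical and the slides do not show the actual implementation.

```python
import math


def glu(channels):
    """Gated Linear Unit: first half of the channels times sigmoid of the second half."""
    half = len(channels) // 2
    if len(channels) != 2 * half:
        raise ValueError("GLU needs an even number of channels")
    values, gates = channels[:half], channels[half:]
    # Each gate lies in (0, 1) and scales how much of its value passes through.
    return [v * (1.0 / (1.0 + math.exp(-g))) for v, g in zip(values, gates)]
```

With gates of 0 the sigmoid is 0.5, so `glu([2.0, -4.0, 0.0, 0.0])` returns `[1.0, -2.0]`. Note that the output has half as many channels as the input.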

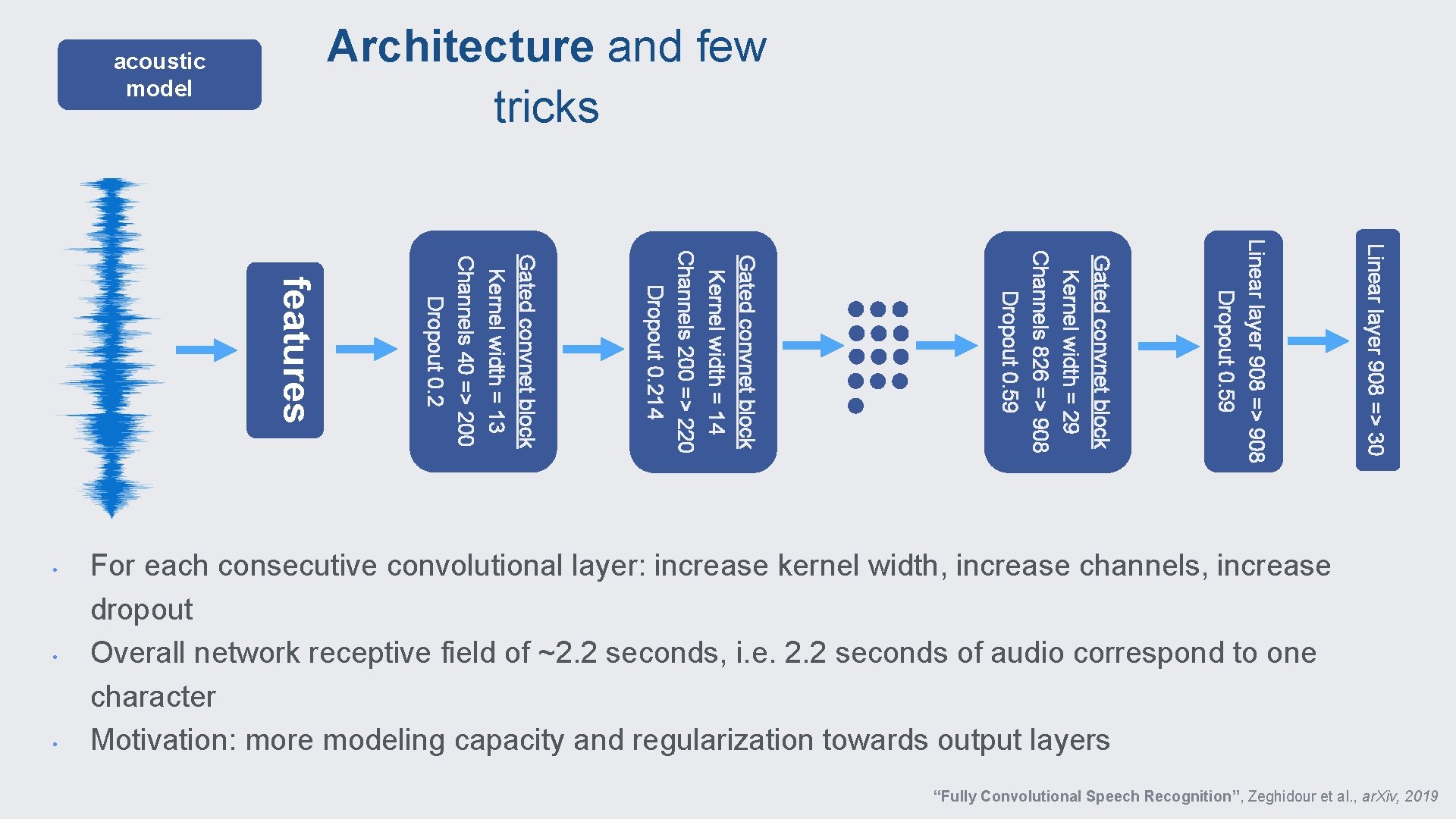

acoustic model: Architecture and a few tricks
Layers, from the output down to the features:
• Linear layer 908 => 30
• Linear layer 908 => 908
• Dropout 0.59
• Gated convnet block: kernel width = 29, channels 826 => 908, dropout 0.59
• …
• Gated convnet block: kernel width = 14, channels 200 => 220, dropout 0.214
• Gated convnet block: kernel width = 13, channels 40 => 200, dropout 0.2
• features
Tricks:
• For each consecutive convolutional layer: increase kernel width, increase channels, increase dropout
• Overall network receptive field of ~2.2 seconds, i.e. 2.2 seconds of audio correspond to one character
• Motivation: more modeling capacity and regularization towards output layers
“Fully Convolutional Speech Recognition”, Zeghidour et al., arXiv, 2019

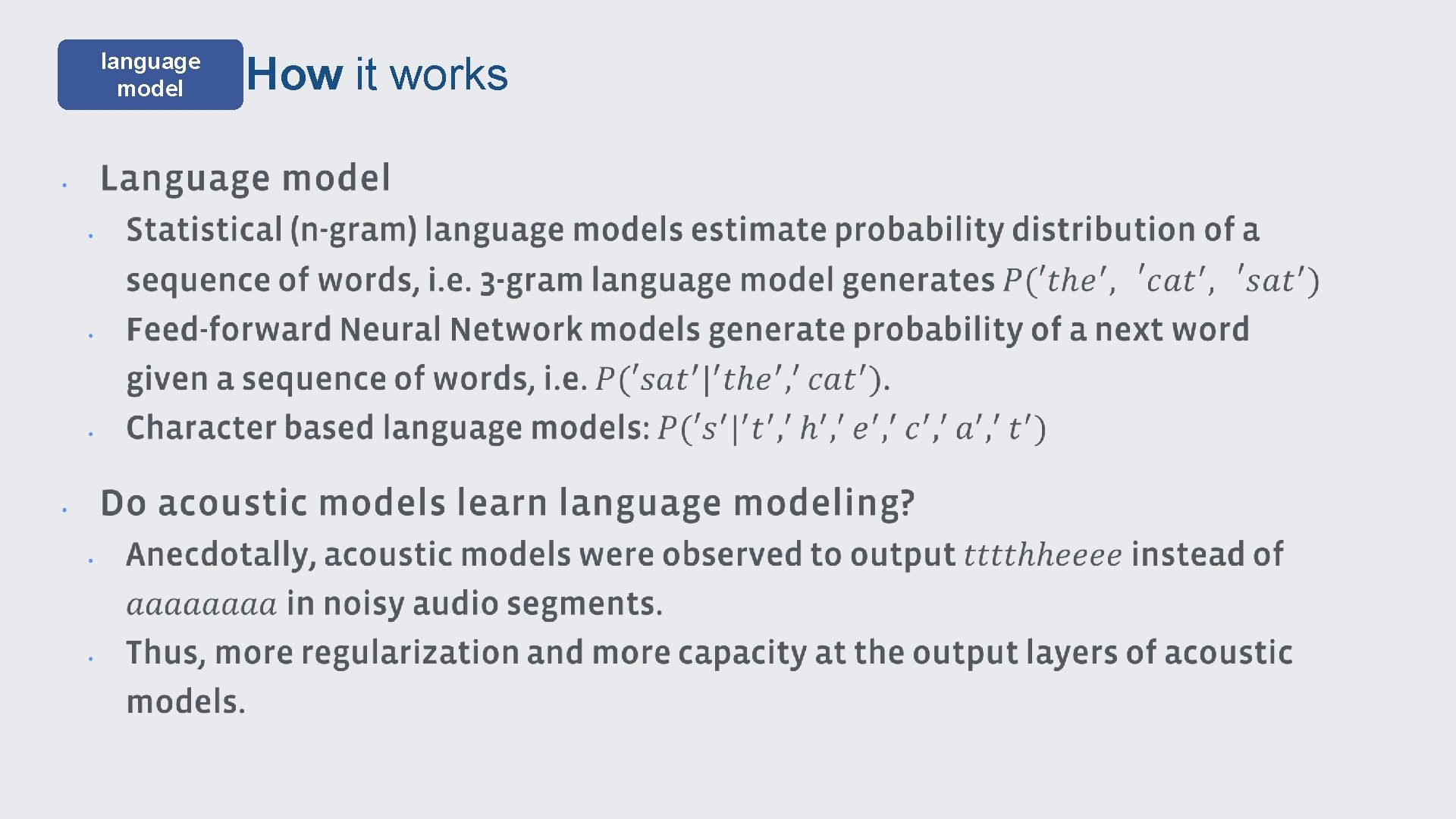

language model How it works

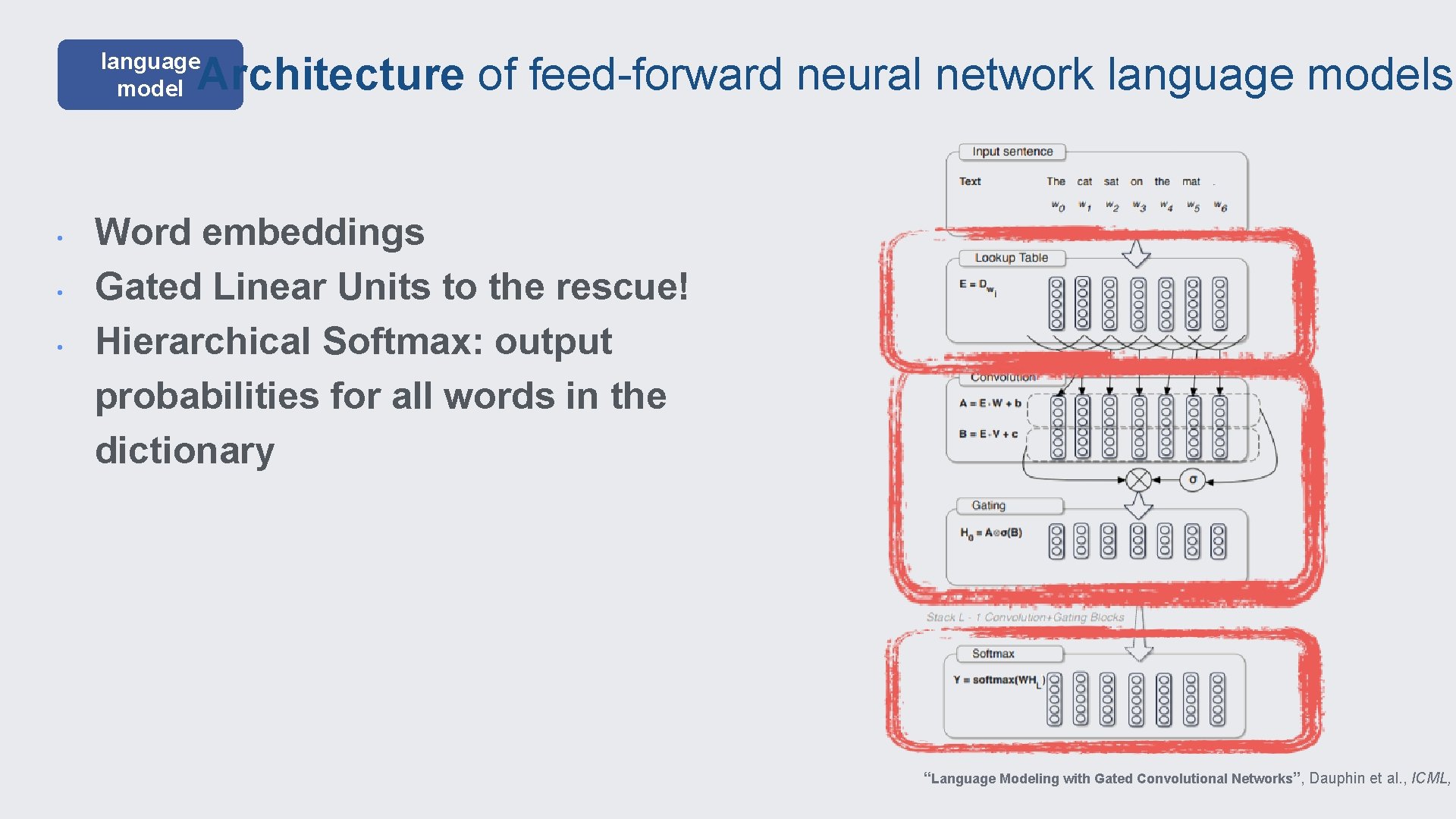

language model: Architecture of feed-forward neural network language models
• Word embeddings
• Gated Linear Units to the rescue!
• Hierarchical Softmax: output probabilities for all words in the dictionary
“Language Modeling with Gated Convolutional Networks”, Dauphin et al., ICML, 2

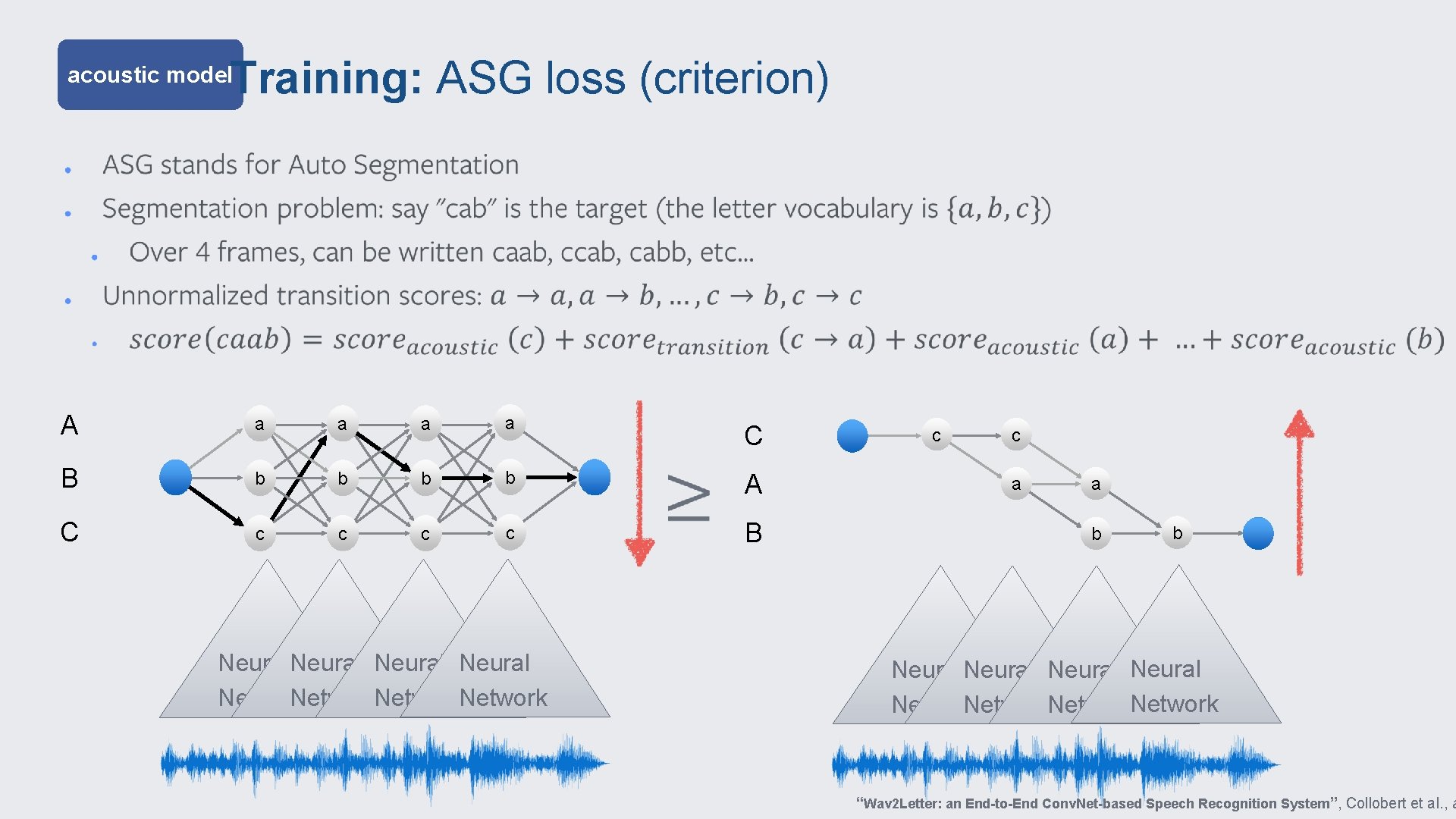

acoustic model: Training: ASG loss (criterion)
[Diagram: neural network outputs for each frame, aligned through a graph over the letters A, B, C]
“Wav2Letter: an End-to-End ConvNet-based Speech Recognition System”, Collobert et al., a

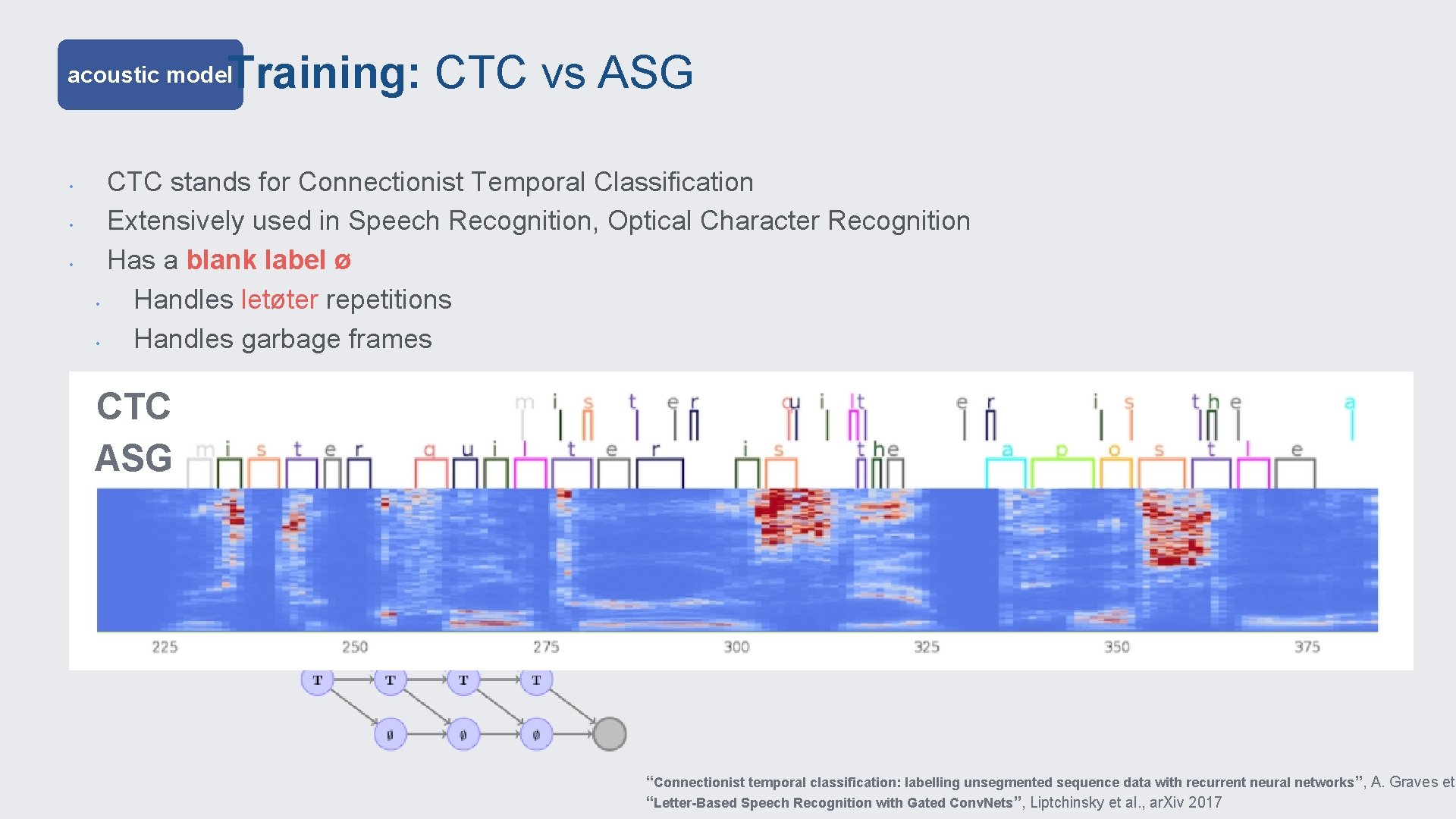

acoustic model: Training: CTC vs ASG
• CTC stands for Connectionist Temporal Classification
• Extensively used in Speech Recognition, Optical Character Recognition
• Has a blank label ø
  • Handles letter repetitions: letøter
  • Handles garbage frames
[Diagram: CTC vs ASG]
“Connectionist temporal classification: labelling unsegmented sequence data with recurrent neural networks”, A. Graves et
“Letter-Based Speech Recognition with Gated ConvNets”, Liptchinsky et al., arXiv 2017
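The role of the blank label can be illustrated with the standard CTC collapsing rule: merge repeated labels first, then drop blanks. This is a small sketch of that rule only, not of the CTC loss itself; the function name `ctc_collapse` is hypothetical.

```python
def ctc_collapse(frame_labels, blank="ø"):
    """Map a per-frame label string to its transcription under the CTC rule."""
    out = []
    prev = None
    for label in frame_labels:
        # A label is emitted only when it differs from the previous frame
        # and is not the blank.
        if label != prev and label != blank:
            out.append(label)
        prev = label
    return "".join(out)
```

The blank between the two t's is what keeps the double letter: `ctc_collapse("lløeøtøtteørr")` gives `letter`, whereas `ctc_collapse("lleettteerr")` gives `leter`.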

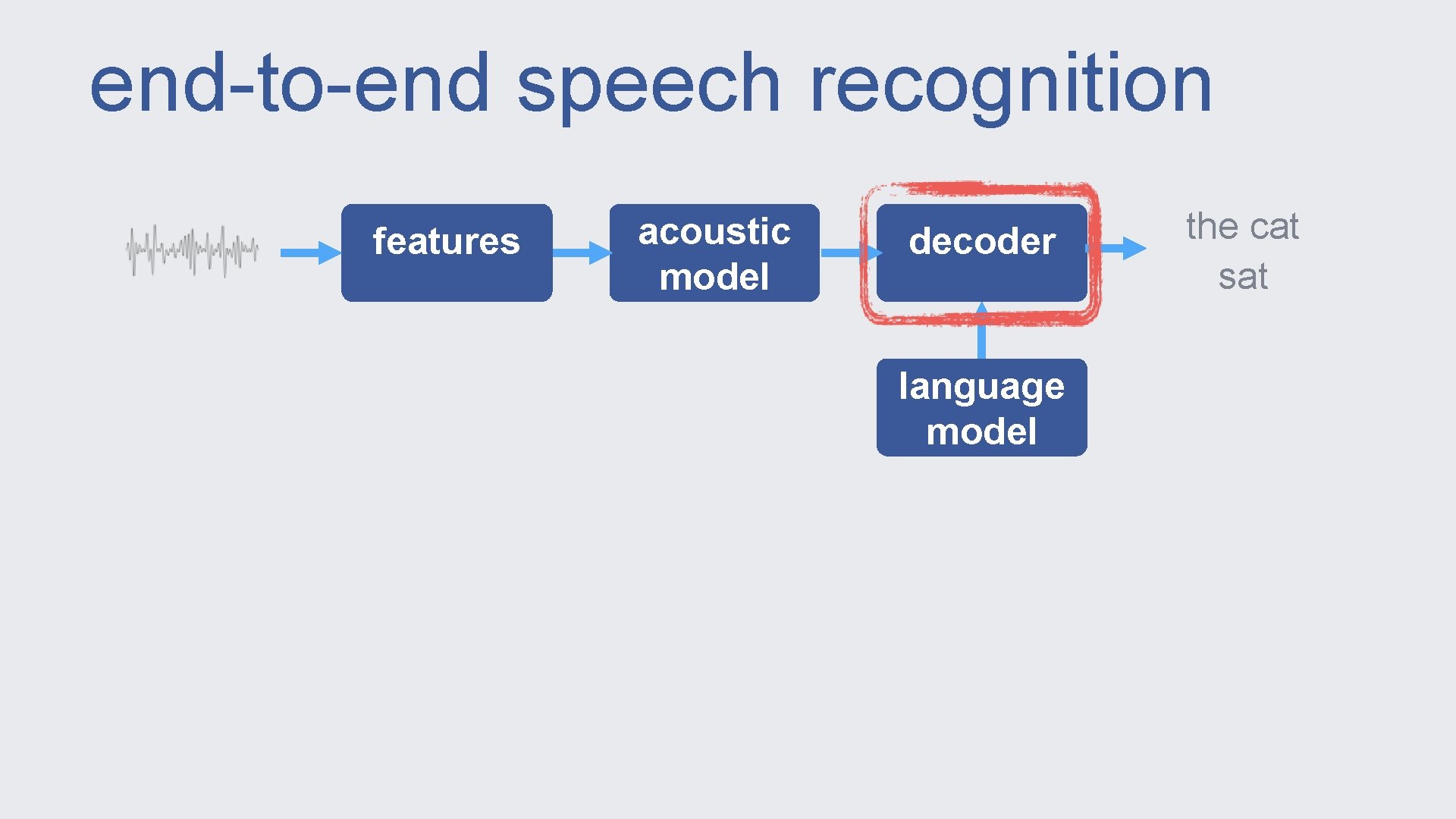

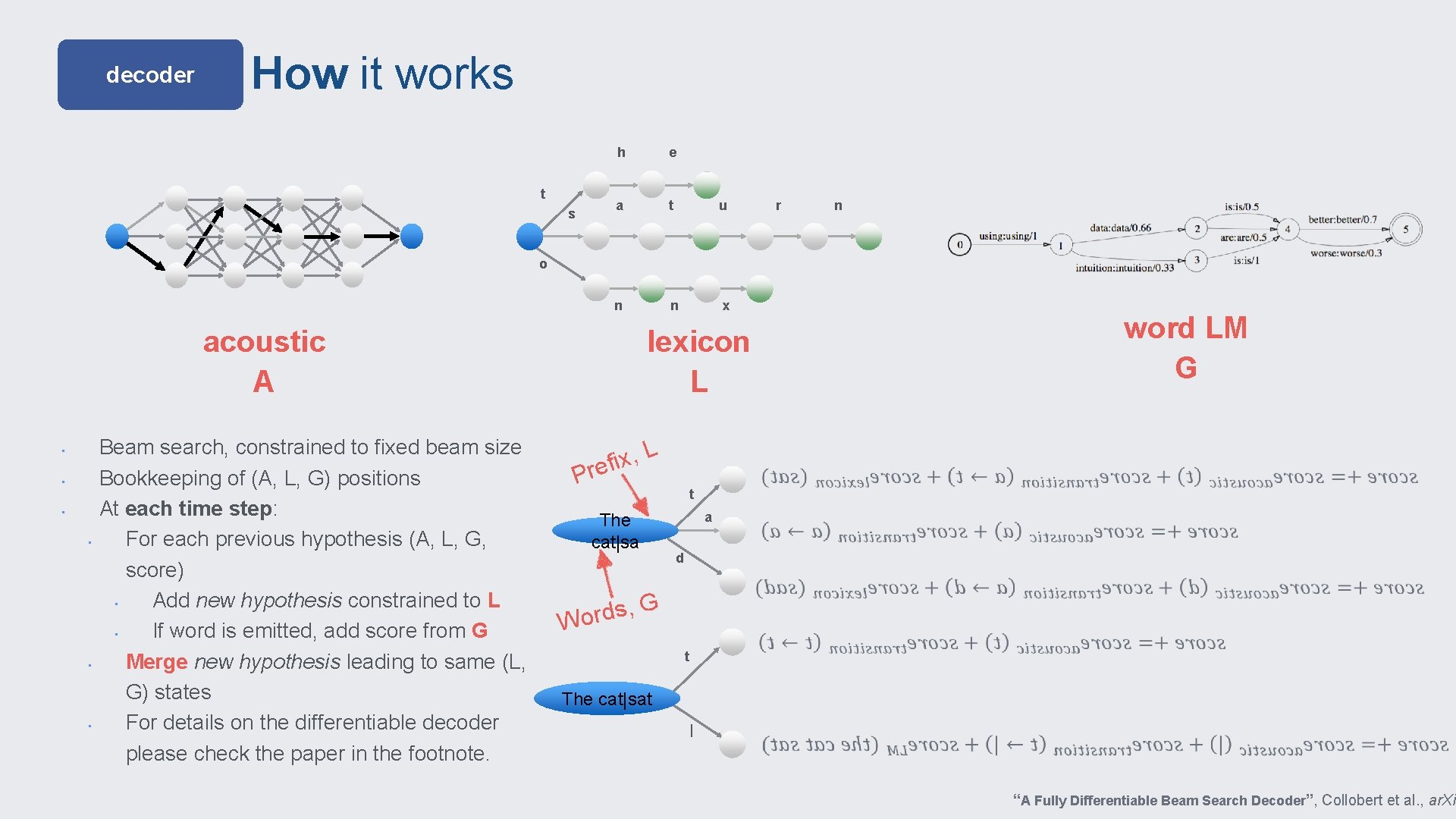

decoder: How it works
• Beam search, constrained to fixed beam size
• Bookkeeping of (A, L, G) positions
• At each time step:
  • For each previous hypothesis (A, L, G, score)
    • Add new hypothesis constrained to L
    • If word is emitted, add score from G
  • Merge new hypotheses leading to the same (L, G) states
For details on the differentiable decoder please check the paper in the footnote.
[Diagram: acoustic A, lexicon L (prefix tree over letters), word LM G; example hypotheses "The cat|sa" and "The cat|sat|"]
“A Fully Differentiable Beam Search Decoder”, Collobert et al., arXiv
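The bookkeeping listed above can be sketched as a toy lexicon-constrained beam search. This is a minimal sketch under stated assumptions, not the wav2letter++ decoder: the names `build_trie`, `decode`, `one_hot_frames`, `bigram` and `unk` are hypothetical, the language model is a plain table of bigram scores, and the differentiable variant from the cited paper is not shown. A letter seen on consecutive frames may be read either as a longer duration or as a new letter, whichever the lexicon allows.

```python
NEG = -1e9  # score used when a frame does not list a token


def build_trie(words):
    """Lexicon L as a prefix tree; the key "$" marks a complete word."""
    root = {}
    for word in words:
        node = root
        for ch in word:
            node = node.setdefault(ch, {})
        node["$"] = word
    return root


def one_hot_frames(path, alphabet="|abcdefghijklmnopqrstuvwxyz"):
    """Toy emissions A: the letter on the path scores 1.0, all others -5.0."""
    return [{a: (1.0 if a == c else -5.0) for a in alphabet} for c in path]


def decode(emissions, words, bigram, beam_size=5, sil="|", unk=-1.0):
    root = build_trie(words)
    # Key: (L position, G state = previous word, last token).
    # Value: (score, trie node, words emitted so far).
    beam = {(id(root), None, sil): (0.0, root, [])}
    for frame in emissions:
        merged = {}

        def add(node, g, tok, out, score):
            key = (id(node), g, tok)
            # Merge hypotheses that reach the same state, keeping the best.
            if key not in merged or score > merged[key][0]:
                merged[key] = (score, node, out)

        for (_, g, last), (score, node, out) in beam.items():
            # Stay on the same token for one more frame.
            add(node, g, last, out, score + frame.get(last, NEG))
            # Advance to a letter the lexicon allows from this position.
            for ch, child in node.items():
                if ch != "$":
                    add(child, g, ch, out, score + frame.get(ch, NEG))
            # Emit a word on silence and add the language model score.
            if "$" in node:
                word = node["$"]
                lm = bigram.get((g, word), unk)
                add(root, word, sil, out + [word],
                    score + frame.get(sil, NEG) + lm)
        best = sorted(merged.items(), key=lambda kv: kv[1][0], reverse=True)
        beam = dict(best[:beam_size])  # prune to the fixed beam size
    finished = [h for h in beam.values() if h[1] is root]
    pool = finished or list(beam.values())
    return max(pool, key=lambda h: h[0])[2]
```

For example, `decode(one_hot_frames("||thhe|caat|ssat|"), ["the", "cat", "sat", "bat"], {("the", "cat"): 0.0})` returns `["the", "cat", "sat"]`. Merging on the (L, G, last token) state rather than on the full history is what keeps the beam small.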

Toolkit

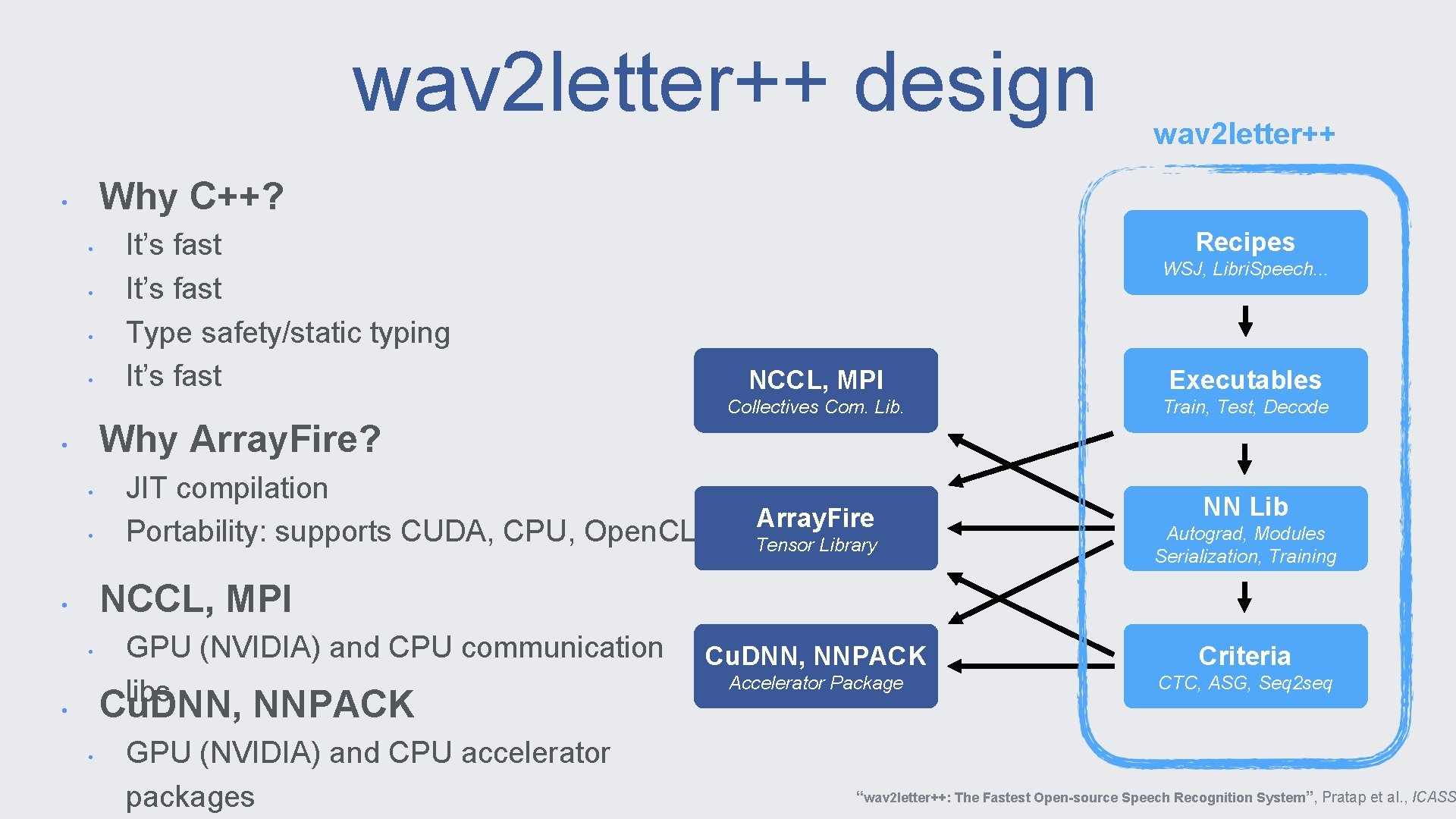

wav2letter++ design
Why C++?
• It’s fast
• Type safety/static typing
• It’s fast
Why ArrayFire?
• JIT compilation
• Portability: supports CUDA, CPU, OpenCL
NCCL, MPI
• GPU (NVIDIA) and CPU communication libs
CuDNN, NNPACK
• GPU (NVIDIA) and CPU accelerator packages
Architecture diagram:
• wav2letter++: Recipes (WSJ, LibriSpeech...), Executables (Train, Test, Decode)
• NN Lib (Autograd, Modules, Serialization, Training), Criteria (CTC, ASG, Seq2seq), Com. Lib. (Collectives)
• Tensor Library (ArrayFire), Accelerator Package (CuDNN, NNPACK), NCCL, MPI
“wav2letter++: The Fastest Open-source Speech Recognition System”, Pratap et al., ICASS

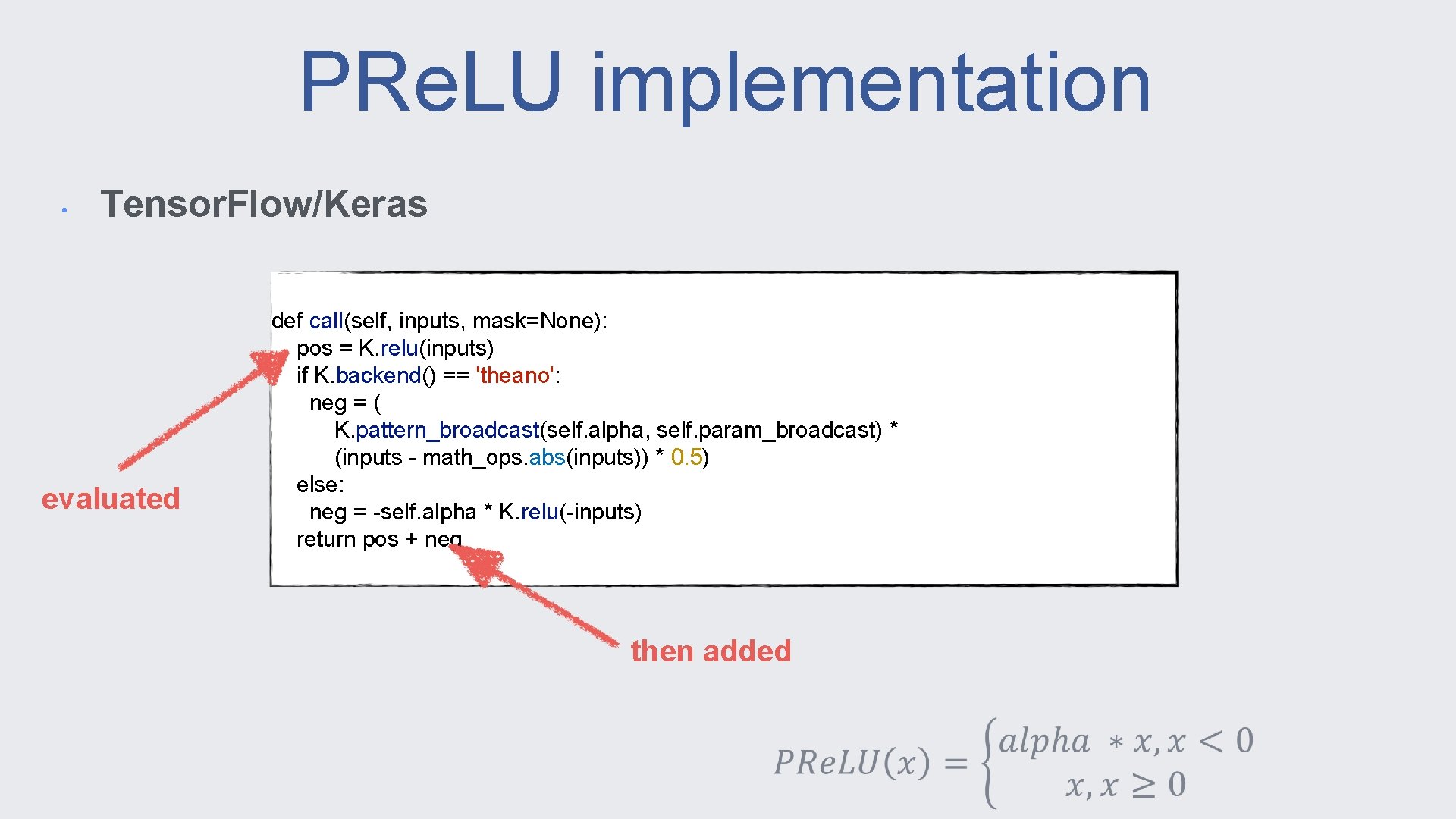

PReLU implementation
• TensorFlow/Keras

    def call(self, inputs, mask=None):
        pos = K.relu(inputs)
        if K.backend() == 'theano':
            neg = (
                K.pattern_broadcast(self.alpha, self.param_broadcast) *
                (inputs - math_ops.abs(inputs)) * 0.5)
        else:
            neg = -self.alpha * K.relu(-inputs)
        return pos + neg

Slide annotations: the intermediate results are evaluated, then added.

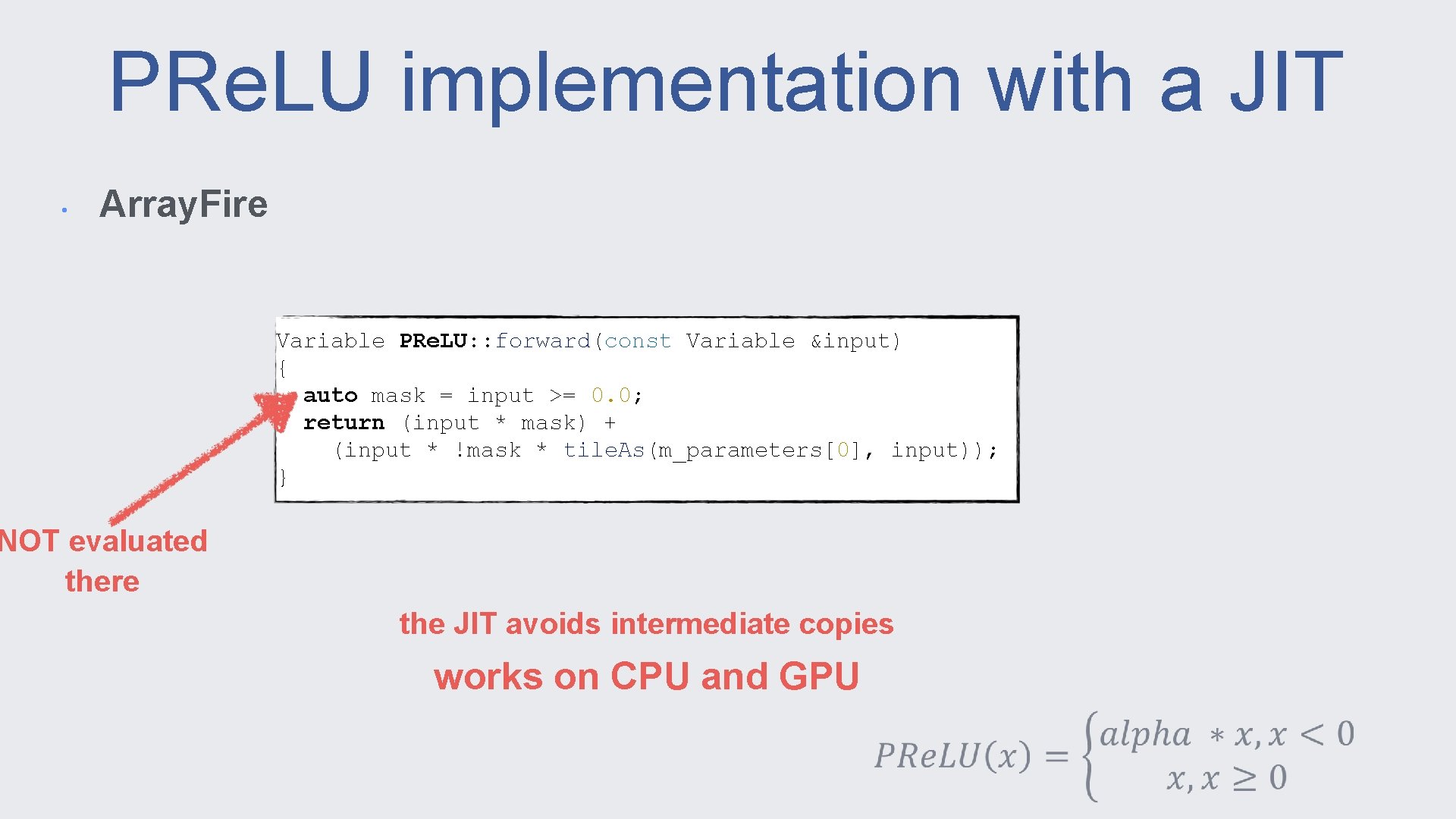

PReLU implementation with a JIT
• ArrayFire

    Variable PReLU::forward(const Variable &input) {
      auto mask = input >= 0.0;
      return (input * mask) + (input * !mask * tileAs(m_parameters[0], input));
    }

Slide annotations:
• The intermediate expressions are NOT evaluated there
• The JIT avoids intermediate copies
• Works on CPU and GPU
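Both PReLU formulations on these slides compute the same function, which a scalar sketch makes easy to check. This is a plain-Python illustration with hypothetical function names; it says nothing about evaluation order or JIT fusion, which is the actual point of the comparison.

```python
def prelu_relu_form(x, alpha):
    """Keras-style formulation: relu(x) - alpha * relu(-x)."""
    def relu(v):
        return max(v, 0.0)
    pos = relu(x)
    neg = -alpha * relu(-x)
    return pos + neg


def prelu_mask_form(x, alpha):
    """Mask-style formulation: x * mask + x * (not mask) * alpha."""
    mask = 1.0 if x >= 0.0 else 0.0
    return x * mask + x * (1.0 - mask) * alpha
```

For a negative input both give the scaled value, e.g. `prelu_mask_form(-2.0, 0.25)` is `-0.5`, and for non-negative inputs both return the input unchanged.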

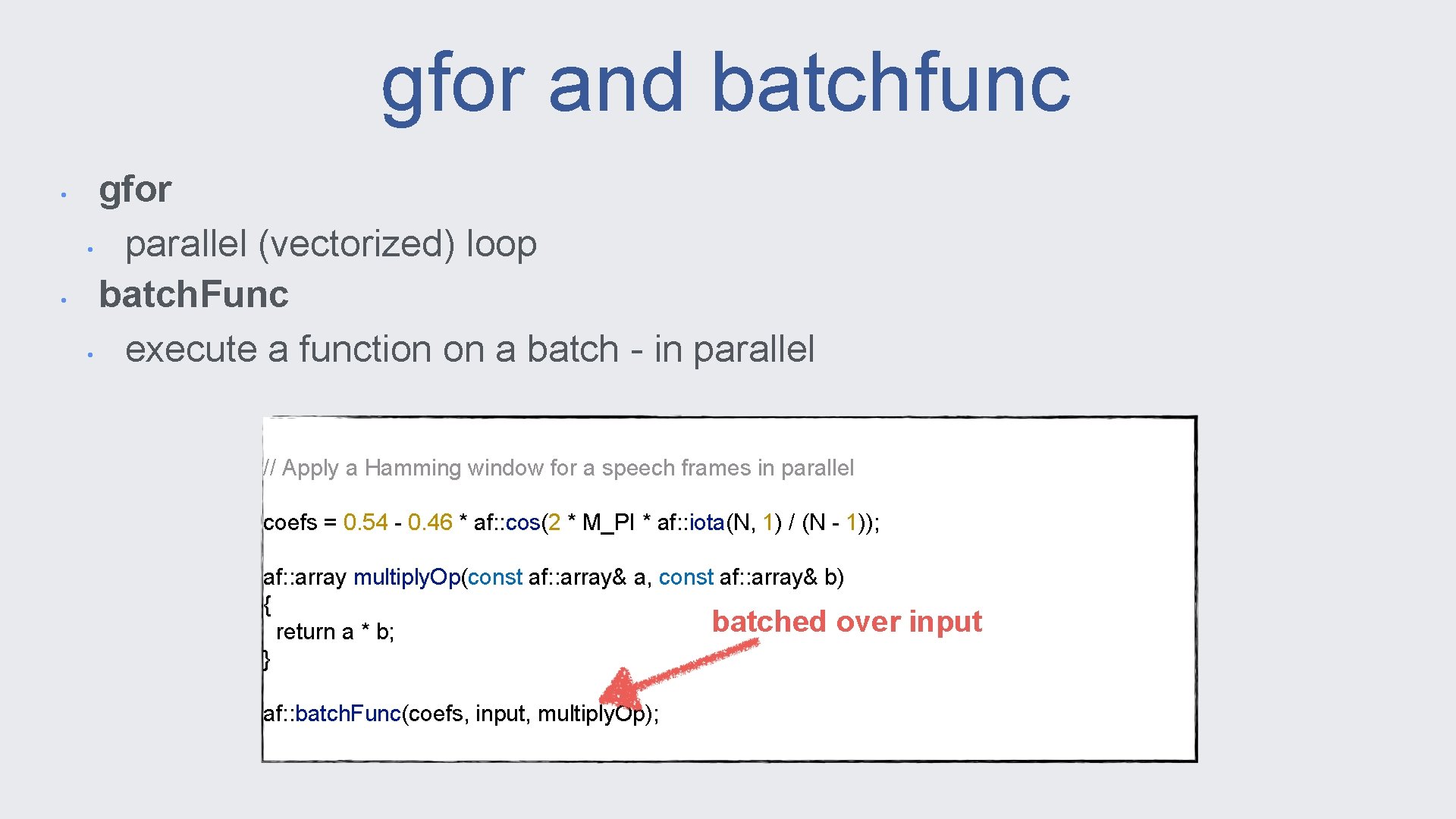

gfor and batchFunc
• gfor
  • parallel (vectorized) loop
• batchFunc
  • execute a function on a batch, in parallel

    // Apply a Hamming window to the speech frames in parallel
    coefs = 0.54 - 0.46 * af::cos(2 * M_PI * af::iota(N, 1) / (N - 1));
    af::array multiplyOp(const af::array& a, const af::array& b) {
      return a * b;
    }
    af::batchFunc(coefs, input, multiplyOp);  // batched over input
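The window coefficients in the snippet above follow the Hamming formula w[n] = 0.54 - 0.46 cos(2πn / (N - 1)). A plain-Python sketch of the same computation, applied to several frames in sequence rather than in parallel, looks like this; the function names `hamming` and `apply_window` are hypothetical.

```python
import math


def hamming(n):
    """Hamming window coefficients for a frame of n samples (n > 1)."""
    return [0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def apply_window(frames, window):
    """Multiply every frame element-wise by the same window."""
    return [[s * w for s, w in zip(frame, window)] for frame in frames]
```

The window is symmetric, starts and ends near 0.08, and peaks at 1.0 in the middle of an odd-length frame. The batched ArrayFire call does the same multiplication for all frames at once.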

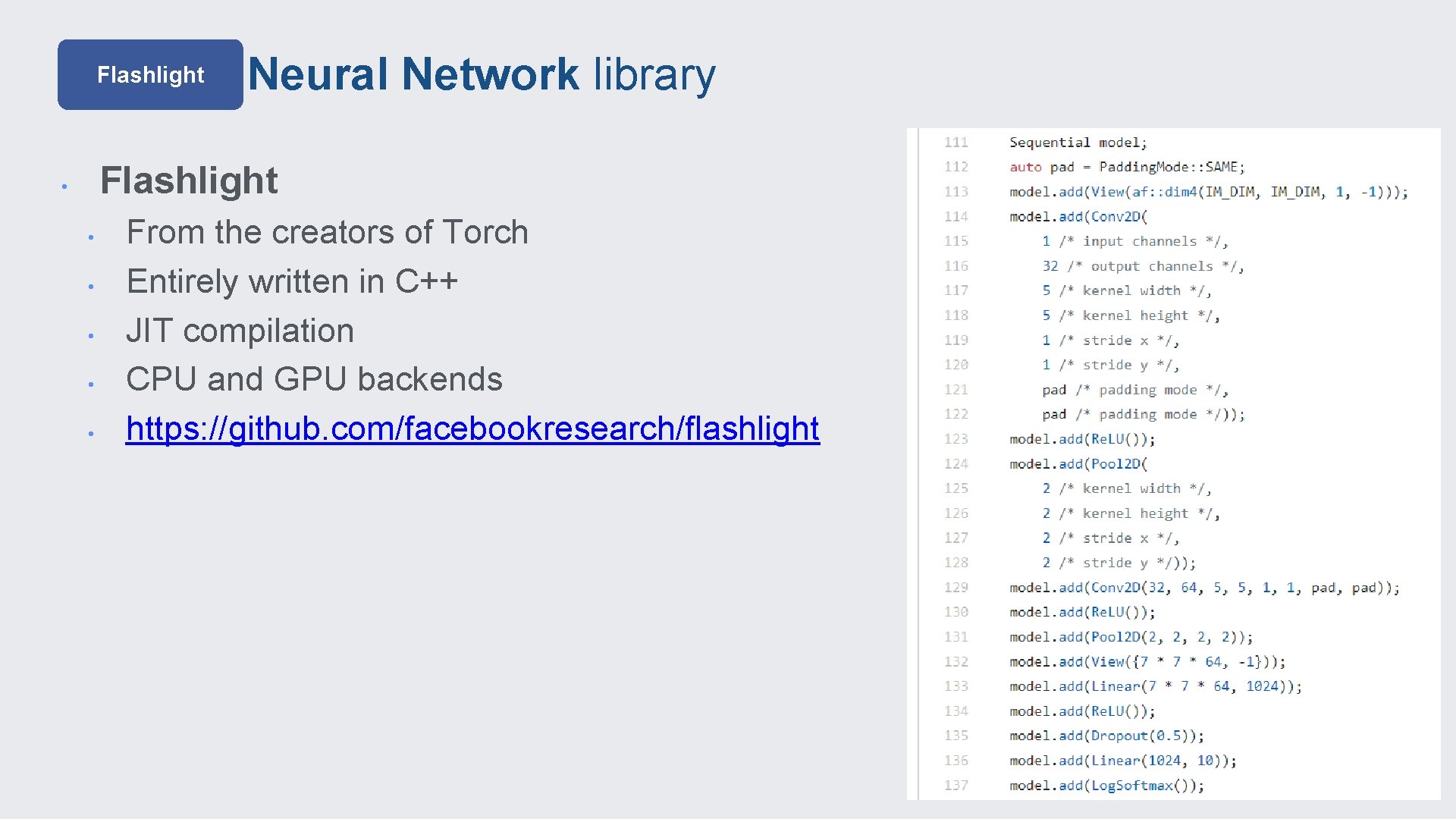

Flashlight
Flashlight Neural Network library
• From the creators of Torch
• Entirely written in C++
• JIT compilation
• CPU and GPU backends
https://github.com/facebookresearch/flashlight

benchmarks

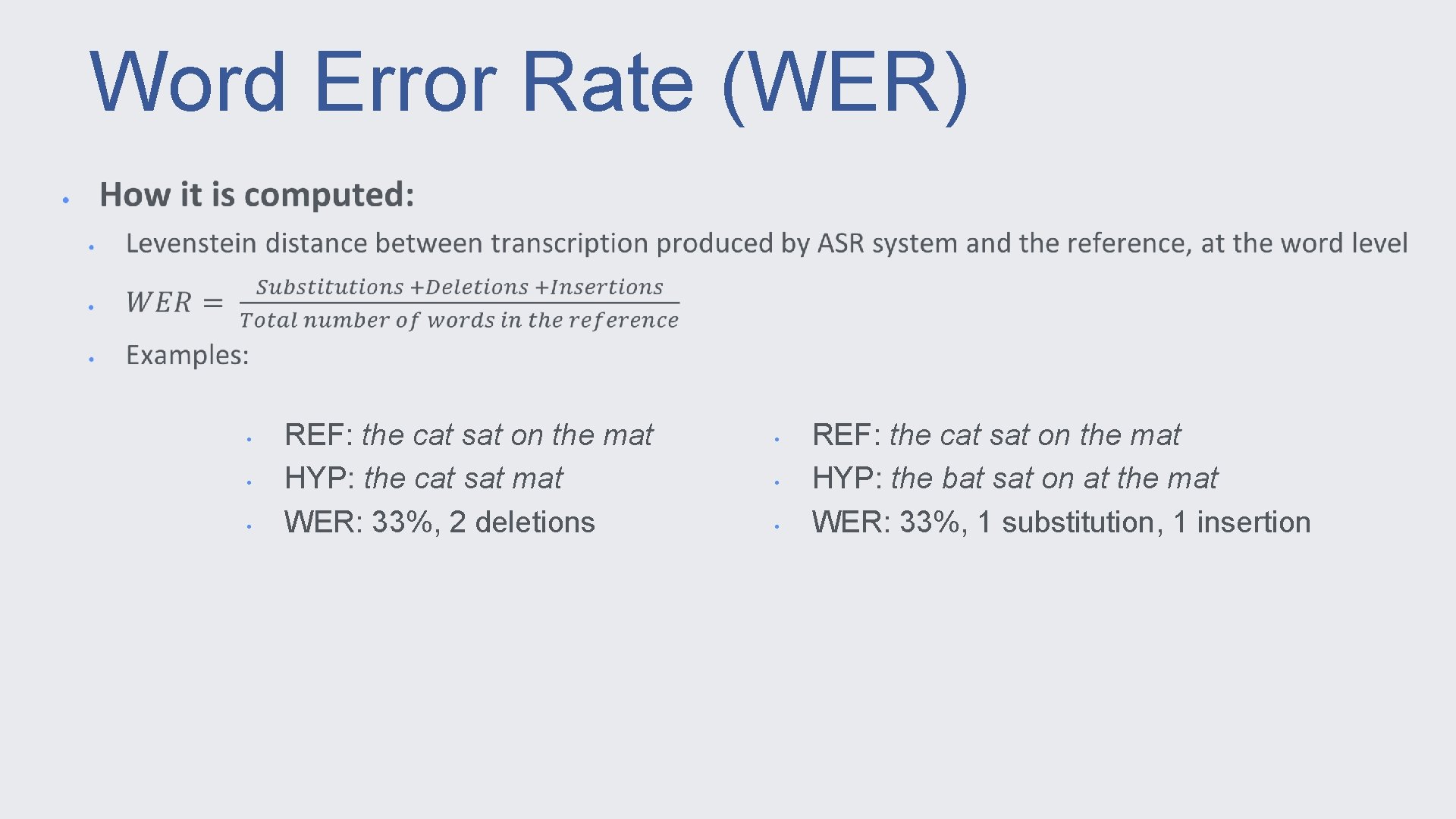

Word Error Rate (WER)
• REF: the cat sat on the mat
• HYP: the cat sat mat
• WER: 33%, 2 deletions

• REF: the cat sat on the mat
• HYP: the bat sat on at the mat
• WER: 33%, 1 substitution, 1 insertion
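Both examples can be reproduced with the usual definition of WER: the word-level edit distance between reference and hypothesis, divided by the number of reference words. The sketch below assumes whitespace tokenization and unit cost for every substitution, insertion and deletion; the function name `wer` is hypothetical.

```python
def wer(ref, hyp):
    """Word error rate: word-level edit distance / number of reference words."""
    r, h = ref.split(), hyp.split()
    prev = list(range(len(h) + 1))  # distance from an empty reference
    for i, ref_word in enumerate(r, 1):
        cur = [i]
        for j, hyp_word in enumerate(h, 1):
            cur.append(min(
                prev[j] + 1,                           # deletion
                cur[j - 1] + 1,                        # insertion
                prev[j - 1] + (ref_word != hyp_word),  # substitution or match
            ))
        prev = cur
    return prev[-1] / len(r)
```

Both slide examples have two errors against six reference words, so `wer("the cat sat on the mat", "the cat sat mat")` and `wer("the cat sat on the mat", "the bat sat on at the mat")` each come out at 2/6, which rounds to 33%.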

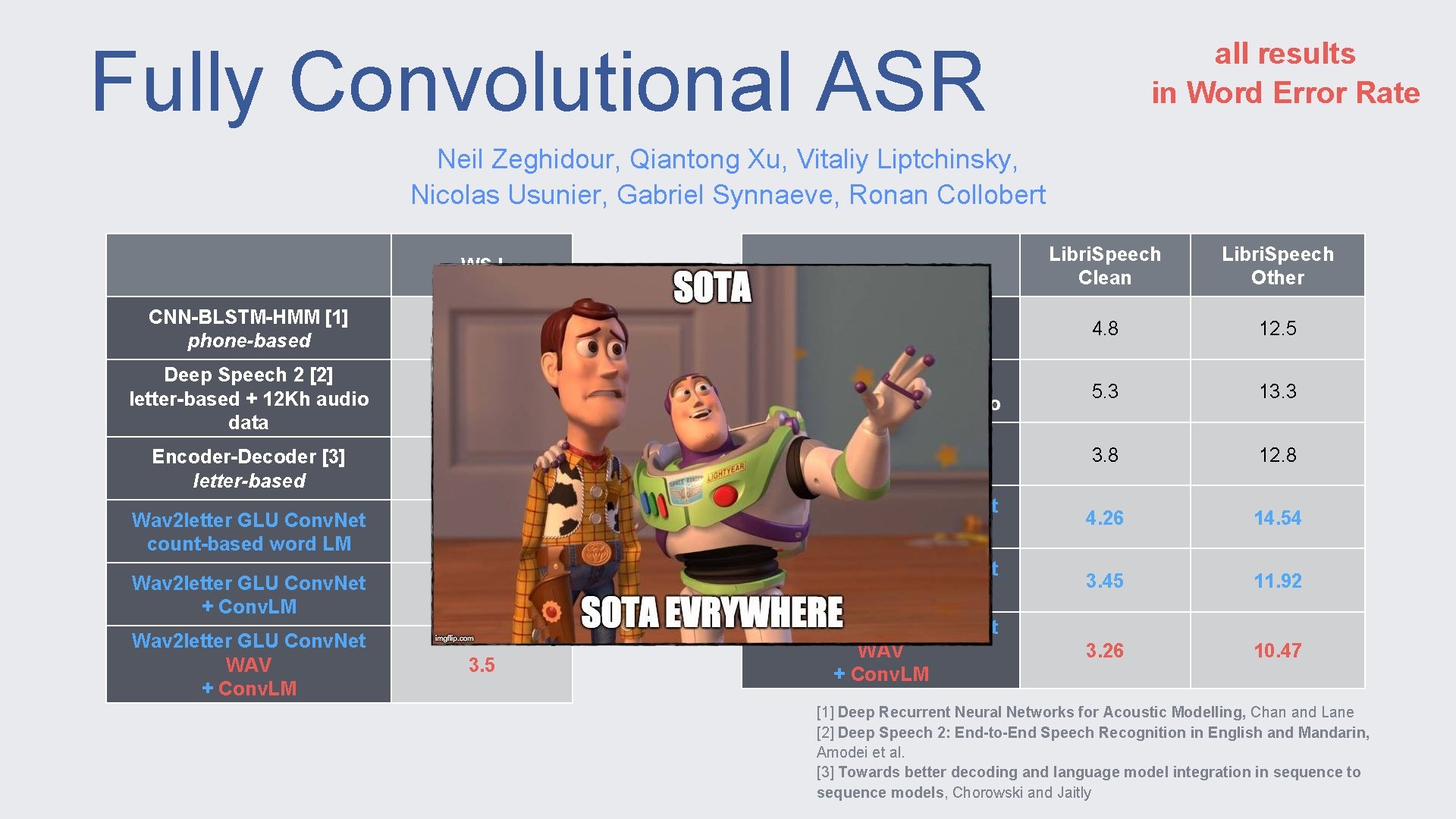

Fully Convolutional ASR
Neil Zeghidour, Qiantong Xu, Vitaliy Liptchinsky, Nicolas Usunier, Gabriel Synnaeve, Ronan Collobert
All results in Word Error Rate.

| LibriSpeech | Clean | Other |
|---|---|---|
| HMM+CNN+iVectors [1], phone-based | 4.8 | 12.5 |
| Deep Speech 2 [2], letter-based + 12 Kh audio | 5.3 | 13.3 |
| Attention Model [3], letter-based | 3.8 | 12.8 |
| Wav2letter GLU ConvNet, count-based word LM | 4.26 | 14.54 |
| Wav2letter GLU ConvNet + ConvLM | 3.45 | 11.92 |
| Wav2letter GLU ConvNet WAV + ConvLM | 3.26 | 10.47 |

| WSJ | WER |
|---|---|
| CNN-BLSTM-HMM [1], phone-based | 3.7 |
| Deep Speech 2 [2], letter-based + 12 Kh audio data | 3.6 |
| Encoder-Decoder [3], letter-based | 6.7 |
| Wav2letter GLU ConvNet, count-based word LM | 5.6 |
| Wav2letter GLU ConvNet + ConvLM | 4.1 |
| Wav2letter GLU ConvNet WAV + ConvLM | 3.5 |

[1] Deep Recurrent Neural Networks for Acoustic Modelling, Chan and Lane
[2] Deep Speech 2: End-to-End Speech Recognition in English and Mandarin, Amodei et al.
[3] Towards better decoding and language model integration in sequence to sequence models, Chorowski and Jaitly

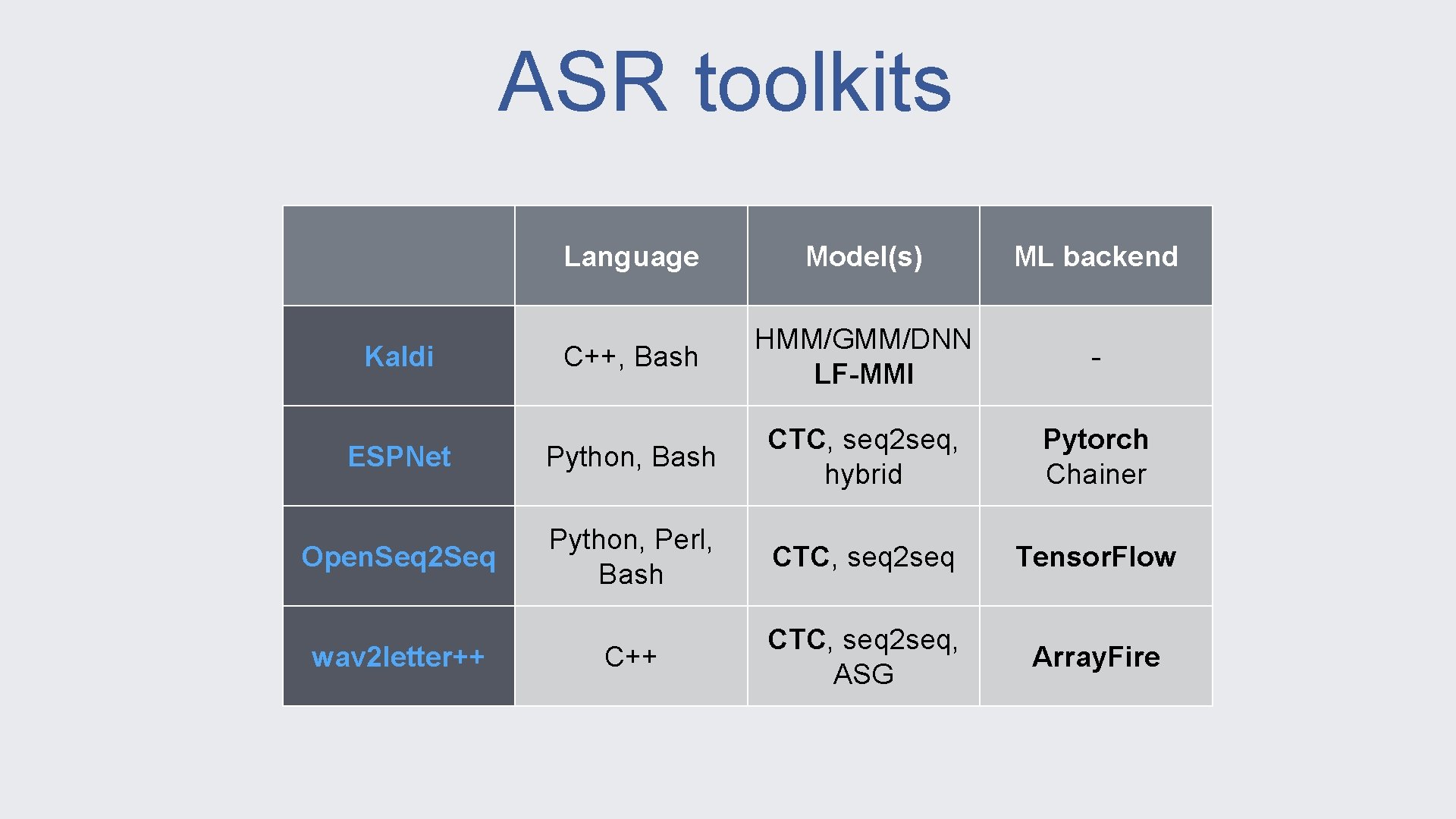

ASR toolkits

| Toolkit | Language | Model(s) | ML backend |
|---|---|---|---|
| Kaldi | C++, Bash | HMM/GMM/DNN, LF-MMI | - |
| ESPNet | Python, Bash | CTC, seq2seq, hybrid | PyTorch, Chainer |
| OpenSeq2Seq | Python, Perl, Bash | CTC, seq2seq | TensorFlow |
| wav2letter++ | C++ | CTC, seq2seq, ASG | ArrayFire |

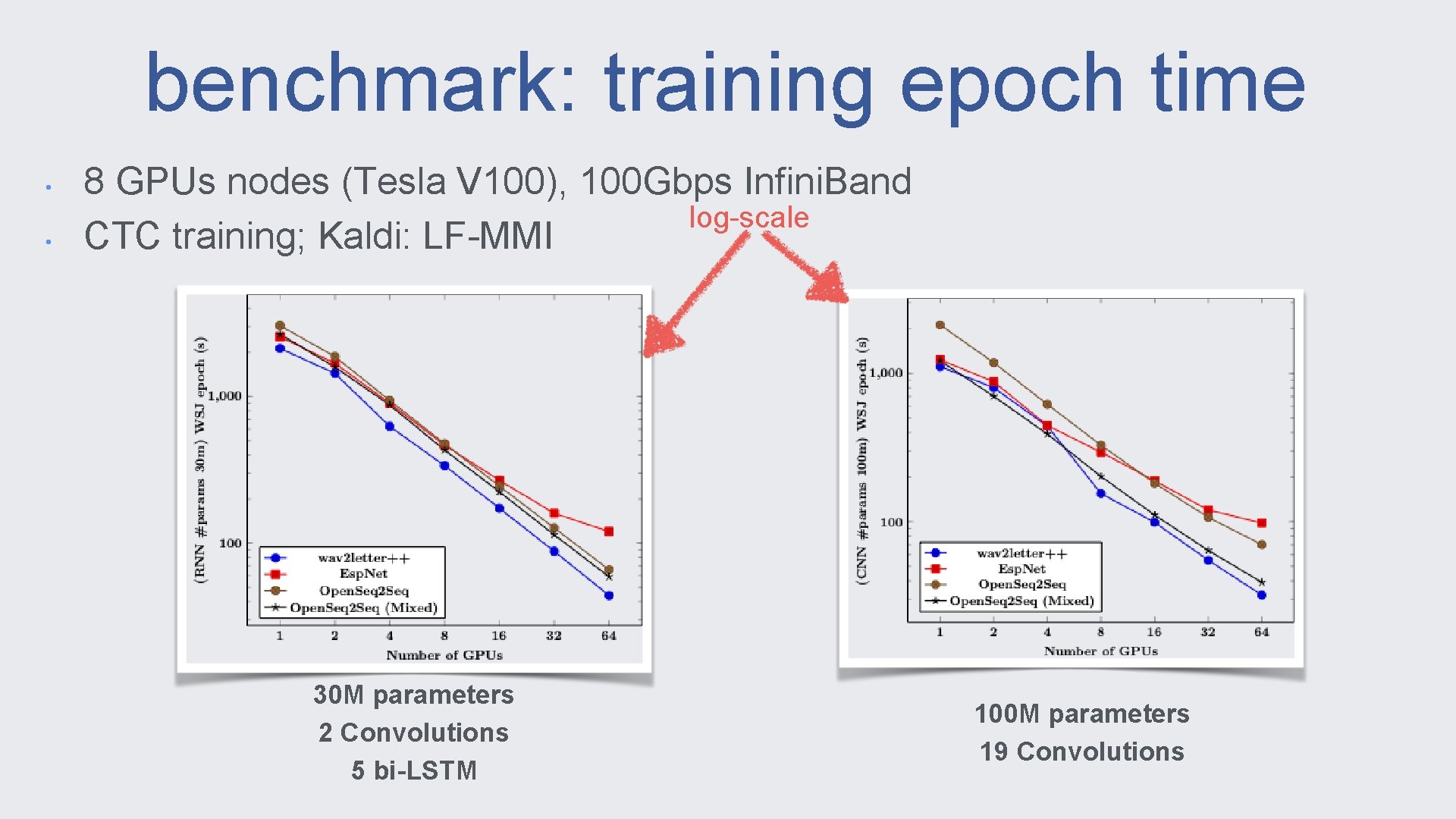

benchmark: training epoch time
• 8-GPU nodes (Tesla V100), 100 Gbps InfiniBand
• CTC training; Kaldi: LF-MMI
[Chart, log-scale: 30M parameters (2 convolutions, 5 bi-LSTM) and 100M parameters (19 convolutions)]

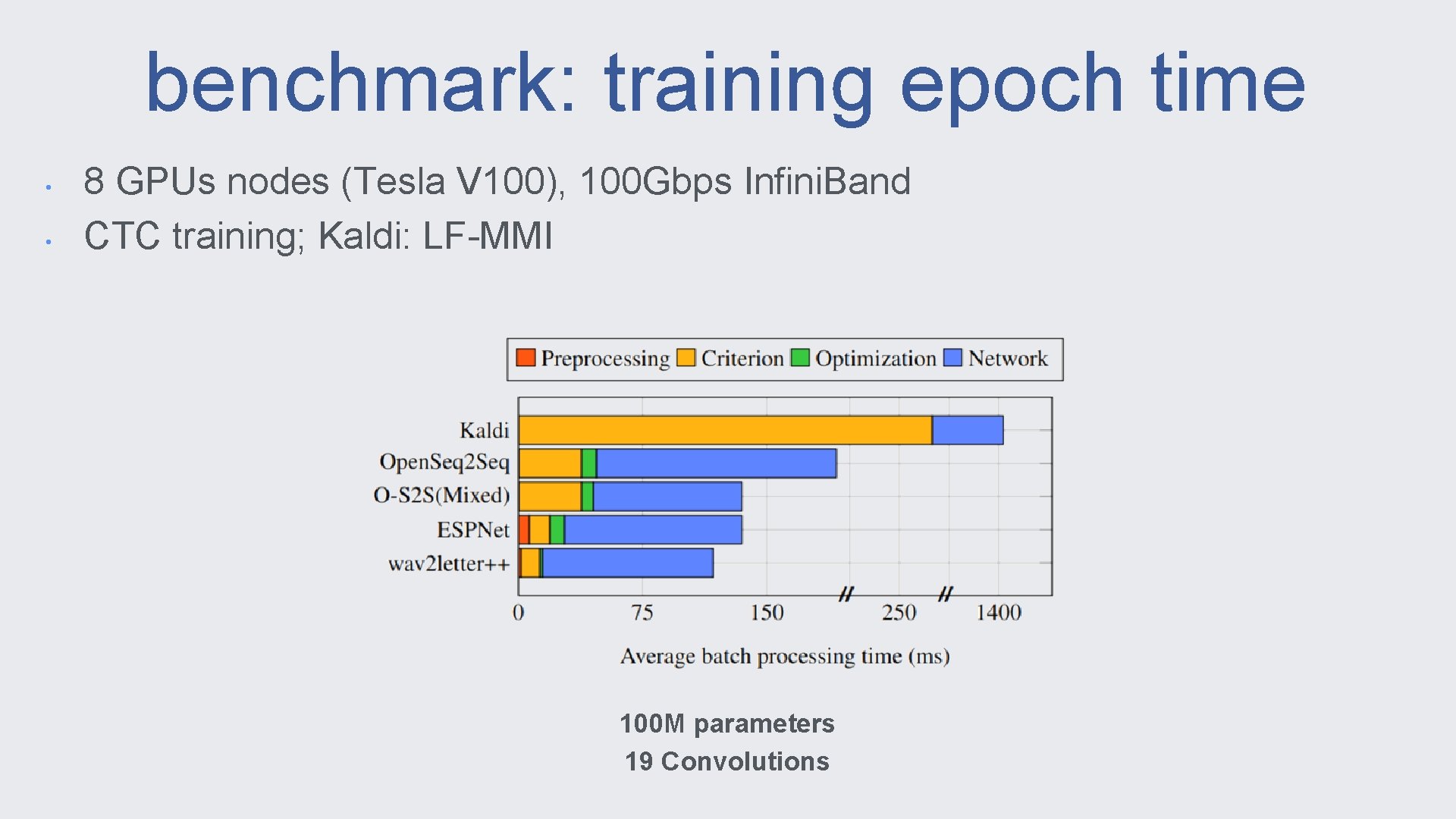

benchmark: training epoch time
• 8-GPU nodes (Tesla V100), 100 Gbps InfiniBand
• CTC training; Kaldi: LF-MMI
[Chart: 100M parameters (19 convolutions)]

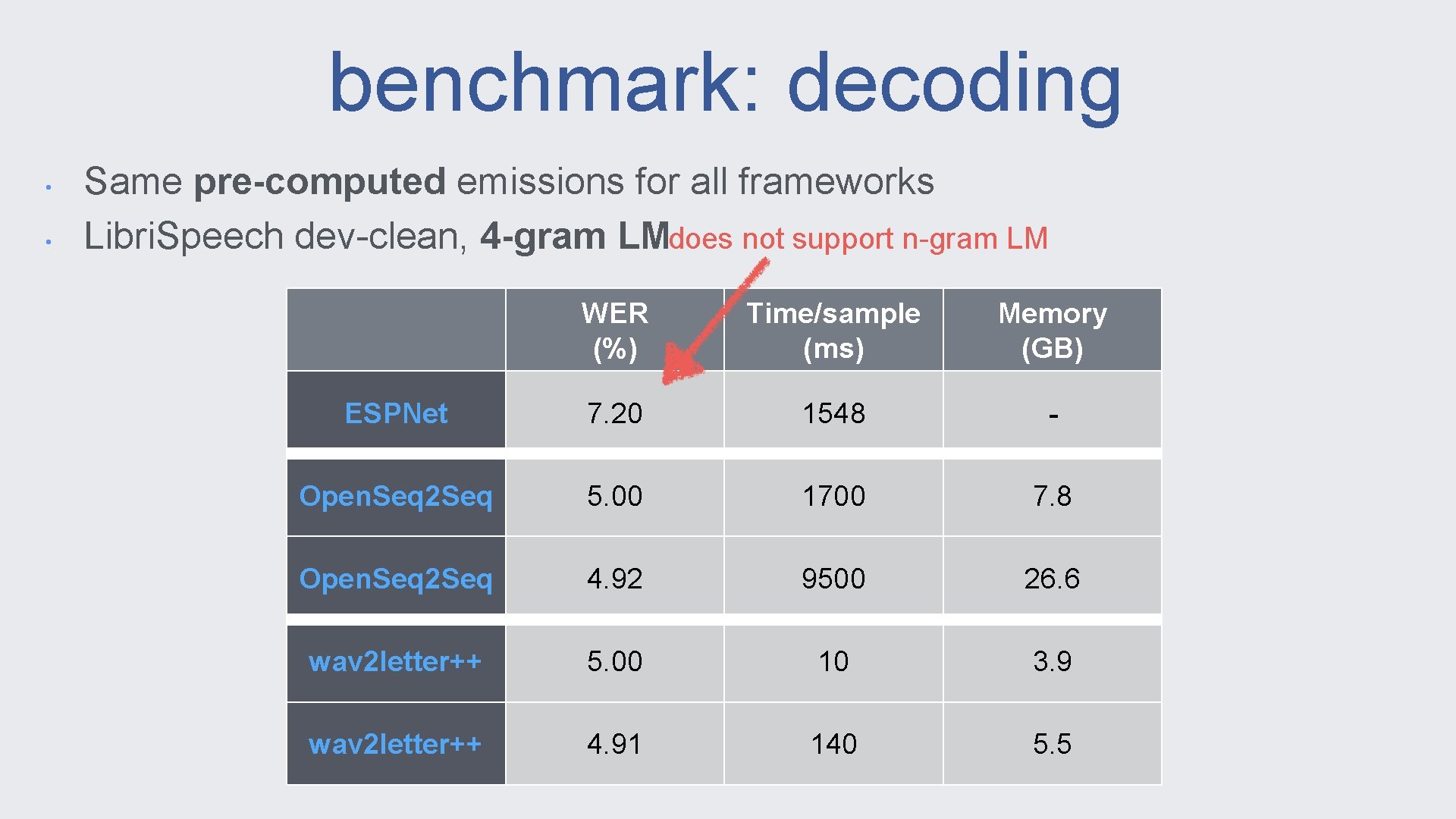

benchmark: decoding
• Same pre-computed emissions for all frameworks
• LibriSpeech dev-clean, 4-gram LM

| Framework | WER (%) | Time/sample (ms) | Memory (GB) |
|---|---|---|---|
| ESPNet* | 7.20 | 1548 | - |
| OpenSeq2Seq | 5.00 | 1700 | 7.8 |
| OpenSeq2Seq | 4.92 | 9500 | 26.6 |
| wav2letter++ | 5.00 | 10 | 3.9 |
| wav2letter++ | 4.91 | 140 | 5.5 |

* does not support n-gram LM
