Wang Xiaoyu et al Regionlets for Generic Object

Wang, Xiaoyu, et al. “Regionlets for Generic Object Detection.” ICCV 2013

2014. 5. 8. Mooyeol Baek

Motivation

Limitations of current generic object detectors
• Hand-crafted parameters to handle different degrees of deformation
• Sub-optimal handling of multiple scales and viewpoints

A flexible and general object-level representation
• Data-driven deformation handling
• Multiple scales and viewpoints handled by a single model
• Fast and easy to extend with different features

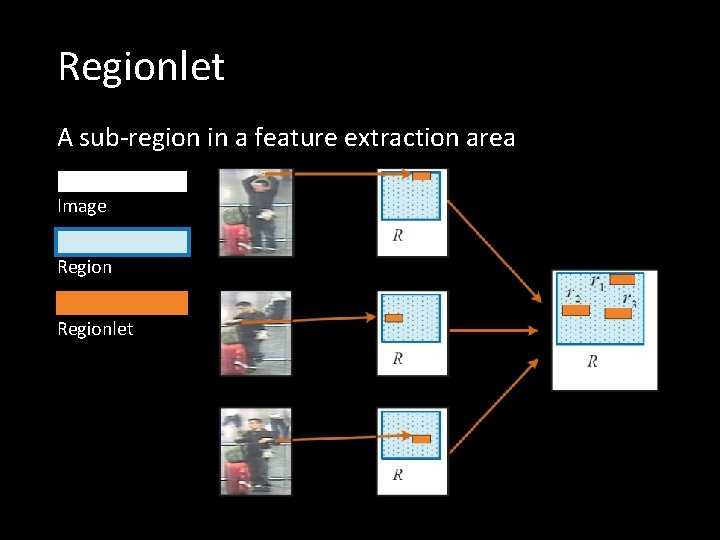

Regionlet

A sub-region in a feature extraction area

(Figure labels: Image, Regionlet)

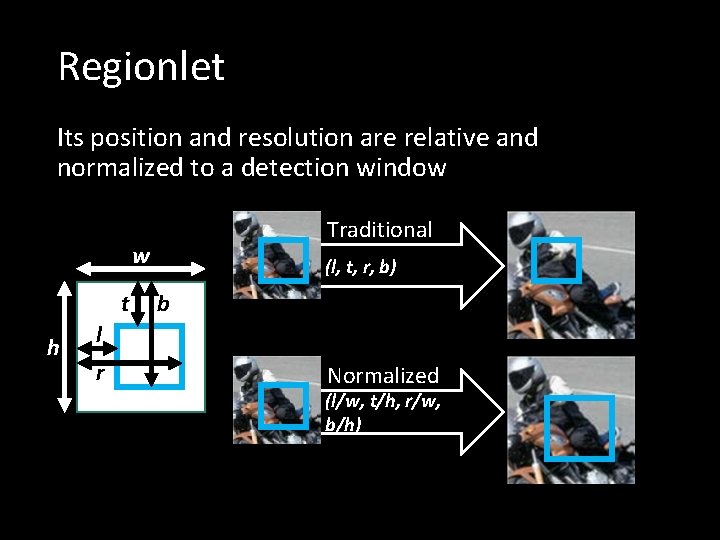

Regionlet

Its position and resolution are relative and normalized to a detection window of width w and height h.
• Traditional: (l, t, r, b)
• Normalized: (l/w, t/h, r/w, b/h)
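The normalization on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: the `Box` type and the function names `normalize` and `to_absolute` are hypothetical, and the regionlet is assumed to be given in pixels relative to the top-left corner of the detection window.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box as (left, top, right, bottom). Hypothetical helper type."""
    l: float
    t: float
    r: float
    b: float


def normalize(regionlet: Box, w: float, h: float) -> Box:
    """Turn window-relative pixel coordinates into fractions of the window size."""
    return Box(regionlet.l / w, regionlet.t / h, regionlet.r / w, regionlet.b / h)


def to_absolute(norm: Box, window: Box) -> Box:
    """Map a normalized regionlet into image coordinates for a given window."""
    w = window.r - window.l
    h = window.b - window.t
    return Box(
        window.l + norm.l * w,
        window.t + norm.t * h,
        window.l + norm.r * w,
        window.t + norm.b * h,
    )
```

Because only the fractions are stored in the model, the same regionlet can be placed inside a candidate window of any size or aspect ratio by calling `to_absolute` with that window.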

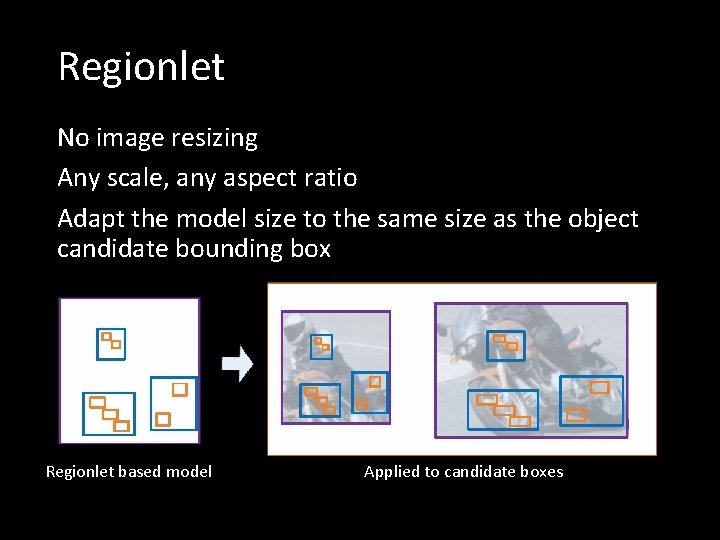

Regionlet

• No image resizing
• Any scale, any aspect ratio
• Adapt the model size to the same size as the object candidate bounding box

(Figure: regionlet-based model applied to candidate boxes)

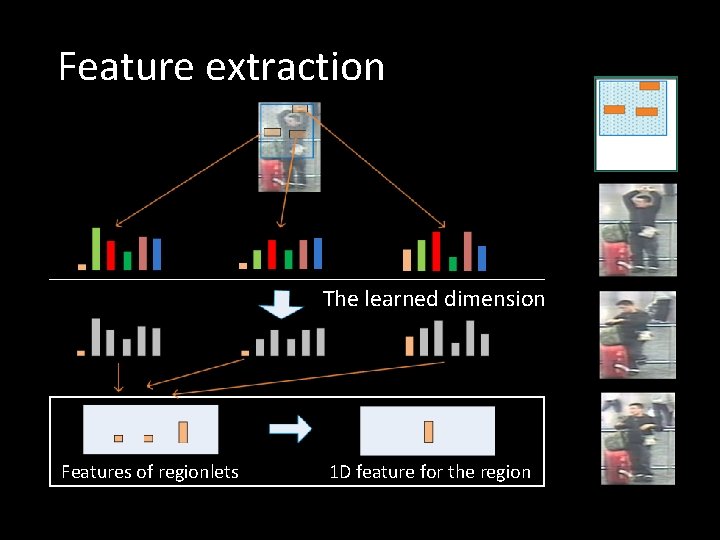

Feature extraction

(Figure labels: features of regionlets; the learned dimension; 1-D feature for the region)
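One way to read the labels above, together with the max-pooling mentioned on later slides: each regionlet yields a feature vector, one dimension is selected during learning, and the region's 1-D feature is the maximum of that dimension over its regionlets. The sketch below assumes the per-regionlet feature vectors are already computed; the function name `pool_region_feature` is hypothetical.

```python
def pool_region_feature(regionlet_features, dim):
    """Max-pool one feature dimension across all regionlets of a region.

    regionlet_features: list of equal-length feature vectors, one per regionlet
                        (assumed to be precomputed elsewhere).
    dim: index of the selected ("learned") feature dimension.
    Returns a single scalar, the 1-D feature for the region.
    """
    return max(features[dim] for features in regionlet_features)
```

Since `max` ignores the order of its inputs, the pooled value does not change when the regionlets are permuted.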

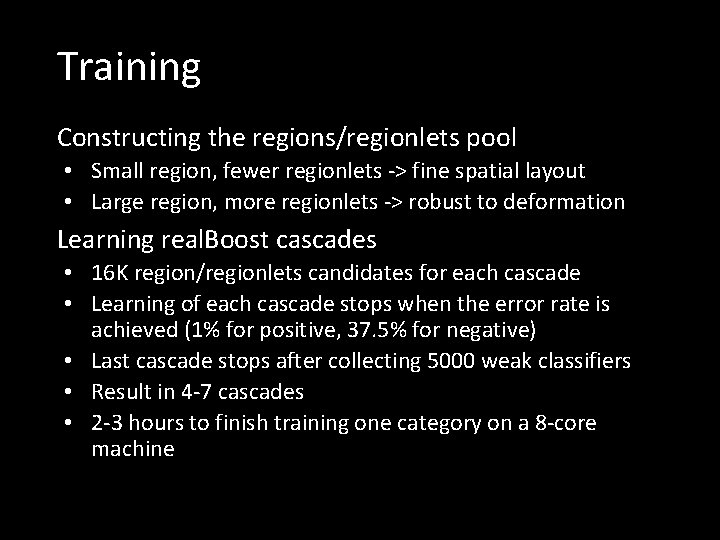

Training

Constructing the regions/regionlets pool
• Small region, fewer regionlets -> fine spatial layout
• Large region, more regionlets -> robust to deformation

Learning RealBoost cascades
• 16K region/regionlet candidates for each cascade
• Learning of each cascade stops when the target error rate is reached (1% for positive, 37.5% for negative)
• The last cascade stops after collecting 5000 weak classifiers
• Results in 4-7 cascades
• 2-3 hours to train one category on an 8-core machine
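The per-cascade stopping rule can be illustrated with a small check. This is a sketch under assumptions the slide does not spell out: the 1% figure is read as the fraction of positives a cascade may reject, the 37.5% figure as the fraction of negatives it may let through, and the function name `stage_done` and its score inputs are hypothetical. Both score lists are assumed to be non-empty.

```python
import math


def stage_done(pos_scores, neg_scores, max_pos_err=0.01, max_neg_err=0.375):
    """Decide whether the current cascade has reached its target error rates.

    pos_scores / neg_scores: classifier scores of positive / negative samples.
    Returns (done, threshold); samples scoring >= threshold pass the cascade.
    """
    ranked = sorted(pos_scores)
    # Number of positives that may be rejected while staying within max_pos_err.
    n_reject = math.floor(max_pos_err * len(ranked))
    threshold = ranked[n_reject]
    # Fraction of negatives that still pass at this threshold.
    neg_err = sum(s >= threshold for s in neg_scores) / len(neg_scores)
    return neg_err <= max_neg_err, threshold
```

In a training loop of this shape, weak classifiers would keep being added to a cascade until `stage_done` returns `True`, after which the next cascade is started.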

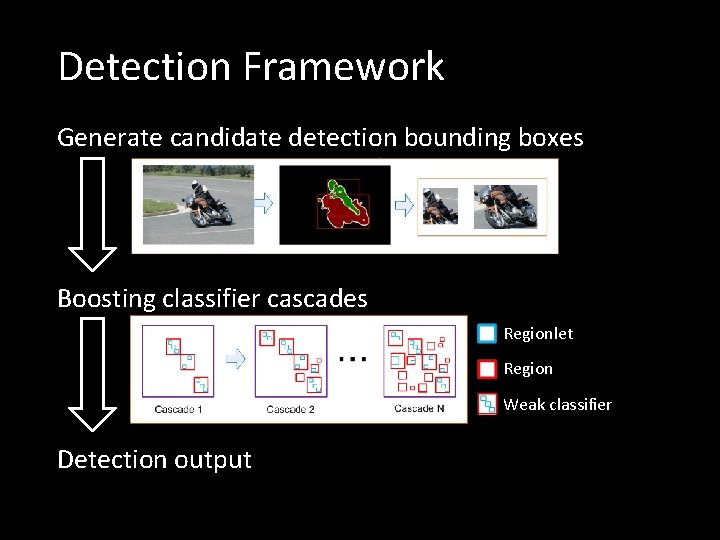

Detection Framework

Generate candidate detection bounding boxes -> Boosting classifier cascades -> Detection output

(Figure labels: Regionlet, Region, Weak classifier)
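The pipeline above can be sketched as a loop over candidate boxes with early rejection. This is an illustrative outline only: the function name `detect`, the representation of a cascade as a `(weak_classifiers, threshold)` pair, and the use of a plain sum of weak classifier outputs as the cascade score are all assumptions made for the example.

```python
def detect(candidates, cascades):
    """Run each candidate box through the classifier cascades in order.

    candidates: list of boxes, e.g. (l, t, r, b) tuples.
    cascades: list of (weak_classifiers, threshold) pairs, where each weak
              classifier is a callable taking a box and returning a float.
    Returns a list of (box, score) for boxes that pass every cascade.
    """
    detections = []
    for box in candidates:
        score = 0.0
        accepted = True
        for weak_classifiers, threshold in cascades:
            # Assumed scoring rule: sum of weak classifier responses.
            score = sum(wc(box) for wc in weak_classifiers)
            if score < threshold:
                accepted = False  # rejected early, later cascades are skipped
                break
        if accepted:
            detections.append((box, score))
    return detections
```

Early rejection is what keeps the per-image cost low: most candidates fail in the first cascades and never reach the later, larger ones.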

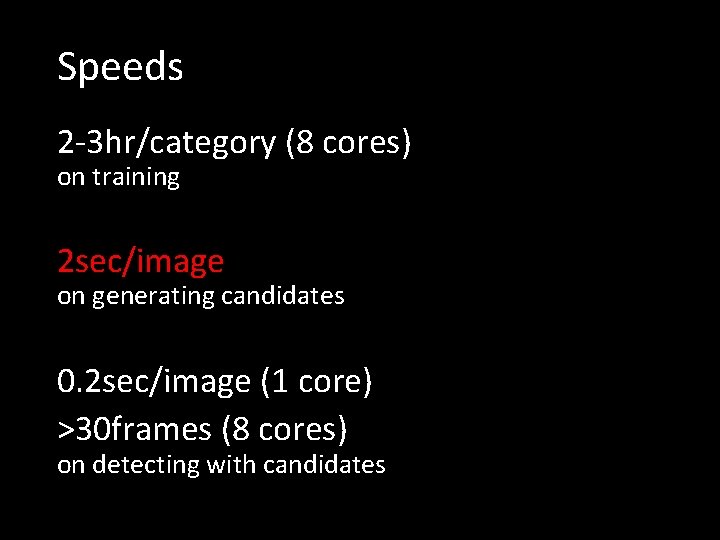

Speeds

• Training: 2-3 hr/category (8 cores)
• Generating candidates: 2 sec/image
• Detecting with candidates: 0.2 sec/image (1 core), >30 frames (8 cores)

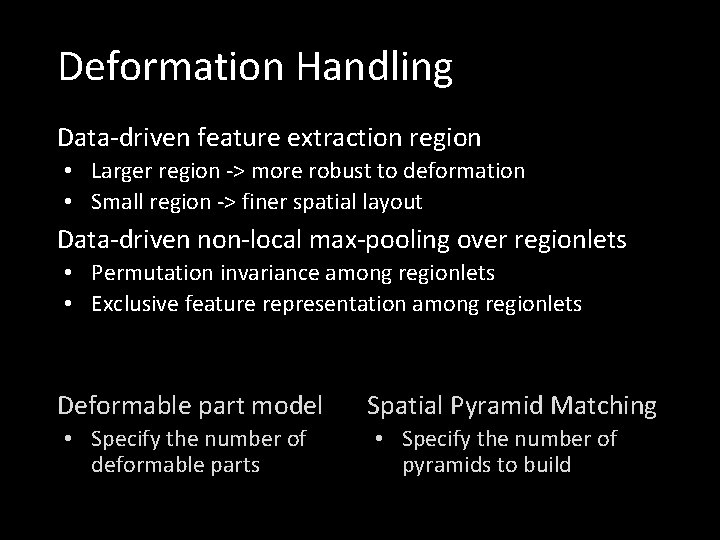

Deformation Handling

Data-driven feature extraction region
• Larger region -> more robust to deformation
• Small region -> finer spatial layout

Data-driven non-local max-pooling over regionlets
• Permutation invariance among regionlets
• Exclusive feature representation among regionlets

Deformable part model
• Specify the number of deformable parts

Spatial Pyramid Matching
• Specify the number of pyramids to build

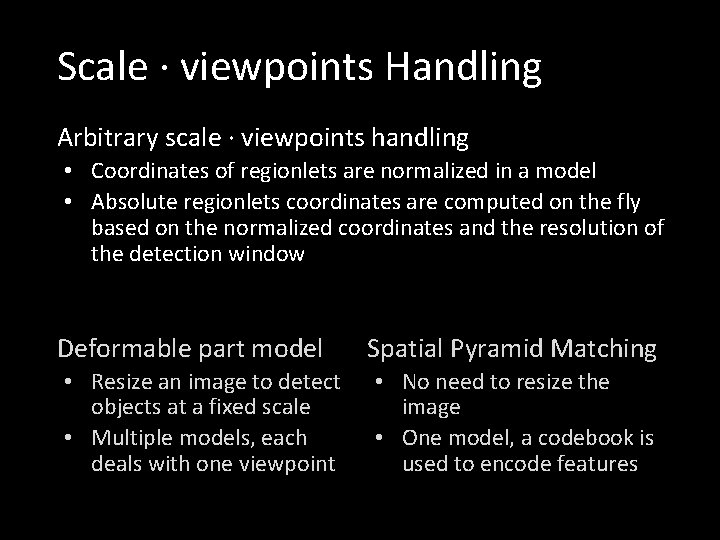

Scale and Viewpoint Handling

Arbitrary scale and viewpoint handling
• Coordinates of regionlets are normalized in a model
• Absolute regionlet coordinates are computed on the fly from the normalized coordinates and the resolution of the detection window

Deformable part model
• Resize an image to detect objects at a fixed scale
• Multiple models, each dealing with one viewpoint

Spatial Pyramid Matching
• No need to resize the image
• One model; a codebook is used to encode features

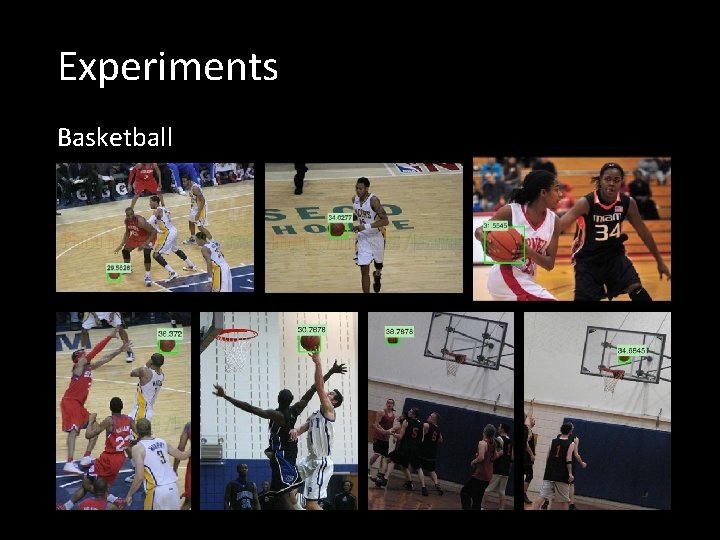

Experiments Basketball

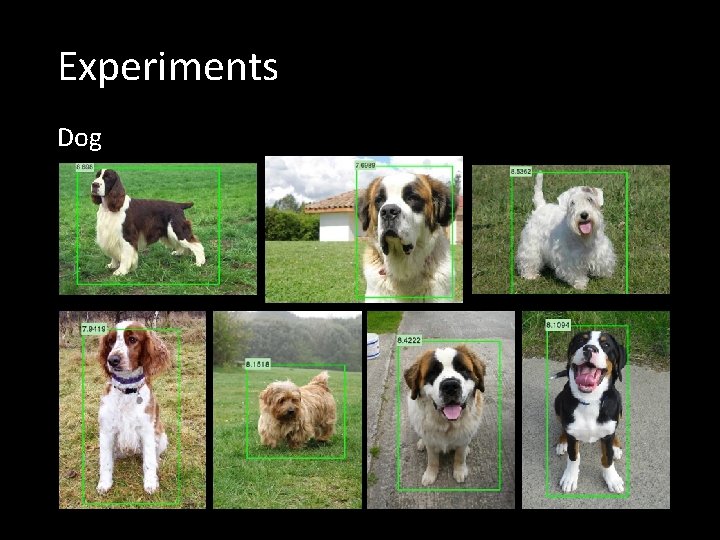

Experiments Dog

Experiments Backpack

Experiments Spatula

Conclusions

A new object representation for object detection
• Non-local max-pooling of regionlets
• Relative normalized locations of regionlets
• Flexibility to incorporate various types of features

A principled data-driven detection framework, effective in handling deformation, multiple scales, and multiple viewpoints

Superior performance with a fast running speed

- Slides: 16