
VIBES
Variational Inference Engine For Bayesian Networks

John Winn, Inference Group, Cavendish Laboratory

in association with Chris Bishop (Microsoft Research, Cambridge) and David Spiegelhalter (MRC Biostatistics Unit, Cambridge)

30th October 2002

Overview

• Bayesian Networks
• Variational Inference
• VIBES
• Digit data demo

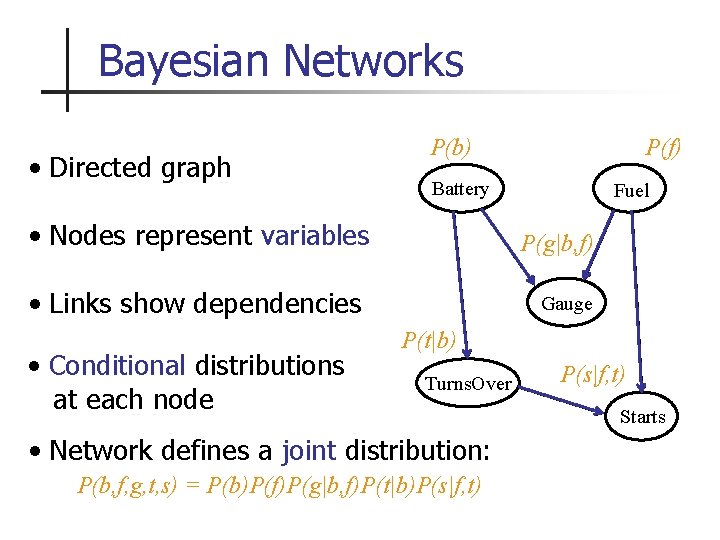

Bayesian Networks

• Directed graph
• Nodes represent variables
• Links show dependencies
• Conditional distributions at each node
• Network defines a joint distribution: P(b, f, g, t, s) = P(b)P(f)P(g|b, f)P(t|b)P(s|f, t)

[Figure: car network. Battery P(b) and Fuel P(f) have no parents; Gauge P(g|b, f) depends on Battery and Fuel; Turns Over P(t|b) depends on Battery; Starts P(s|f, t) depends on Fuel and Turns Over.]

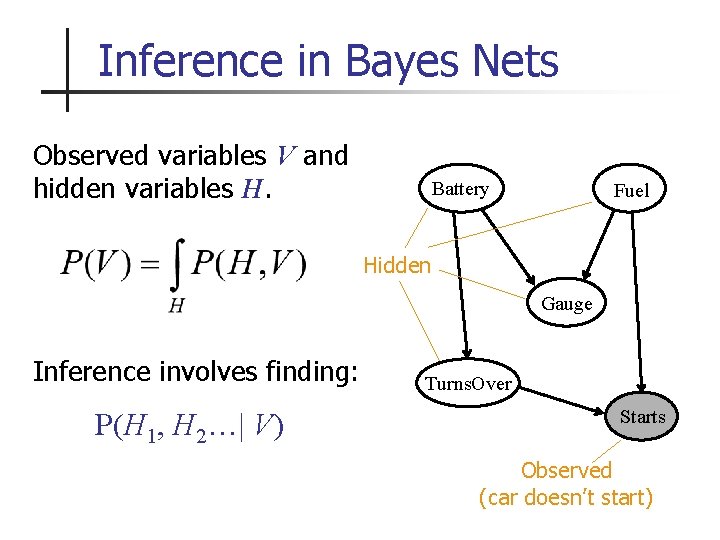

Inference in Bayes Nets

Observed variables V and hidden variables H.

Inference involves finding: P(H1, H2, … | V)

[Figure: the car network (Battery, Fuel, Gauge, Turns Over, Starts) with nodes marked "Hidden" or "Observed (car doesn't start)".]

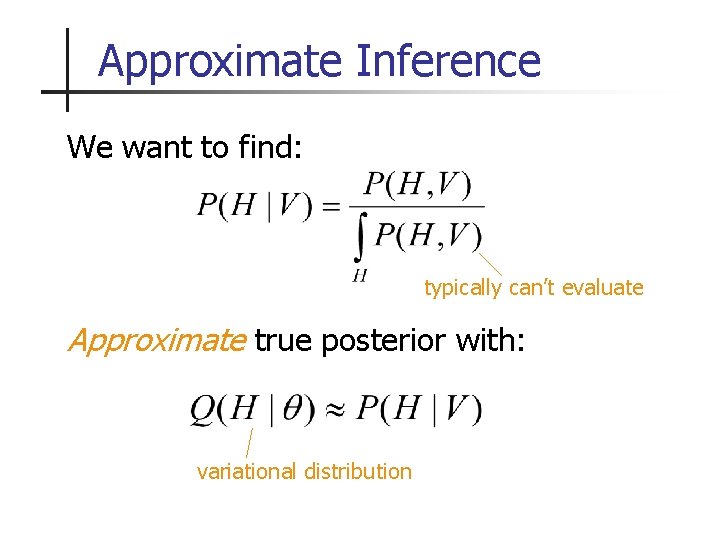
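For a network this small, the posterior over hidden variables can be computed exactly by enumerating the joint distribution. The sketch below does this for the car network. All probability values are hypothetical (the slides give none), and it assumes that only Starts is observed, with Gauge and Turns Over summed out.

```python
from itertools import product

# Hypothetical conditional probabilities, chosen only for illustration.
P_B = {True: 0.9, False: 0.1}   # P(b): battery is charged
P_F = {True: 0.9, False: 0.1}   # P(f): there is fuel


def bern(p_true, value):
    """Probability of a Boolean value, given the probability that it is True."""
    return p_true if value else 1.0 - p_true


def joint(b, f, g, t, s):
    """P(b, f, g, t, s) = P(b) P(f) P(g|b, f) P(t|b) P(s|f, t)."""
    p_g = bern(0.95 if (b and f) else 0.05, g)   # hypothetical P(g|b, f)
    p_t = bern(0.97 if b else 0.02, t)           # hypothetical P(t|b)
    p_s = bern(0.99 if (f and t) else 0.0, s)    # hypothetical P(s|f, t)
    return P_B[b] * P_F[f] * p_g * p_t * p_s


def posterior_battery_fuel(s_obs):
    """P(b, f | s = s_obs), summing out the unobserved g and t."""
    values = [True, False]
    unnorm = {
        (b, f): sum(joint(b, f, g, t, s_obs) for g, t in product(values, repeat=2))
        for b, f in product(values, repeat=2)
    }
    evidence = sum(unnorm.values())              # P(s = s_obs)
    return {k: v / evidence for k, v in unnorm.items()}


post = posterior_battery_fuel(False)             # car doesn't start
p_battery_flat = post[(False, True)] + post[(False, False)]
```

Enumeration costs time exponential in the number of hidden variables, which is why larger models need approximate inference.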

Approximate Inference

We want to find the true posterior P(H | V), which we typically can't evaluate.

Approximate the true posterior with a variational distribution Q(H).

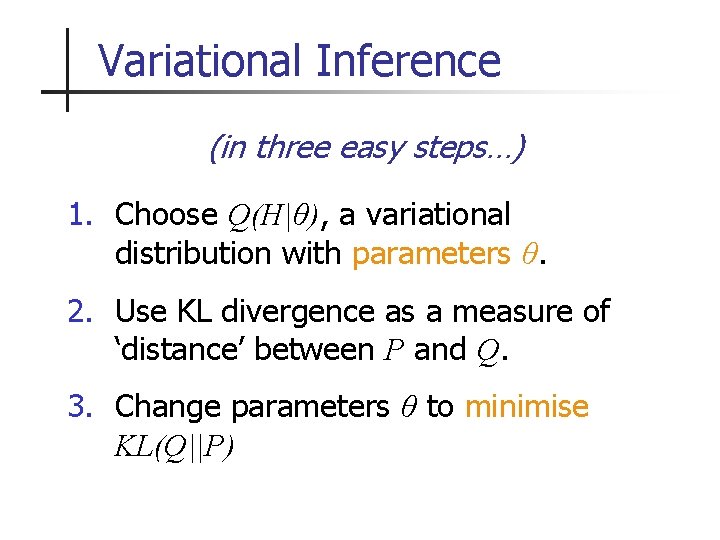

Variational Inference (in three easy steps…)

1. Choose Q(H|θ), a variational distribution with parameters θ.
2. Use KL divergence as a measure of 'distance' between P and Q.
3. Change parameters θ to minimise KL(Q||P).

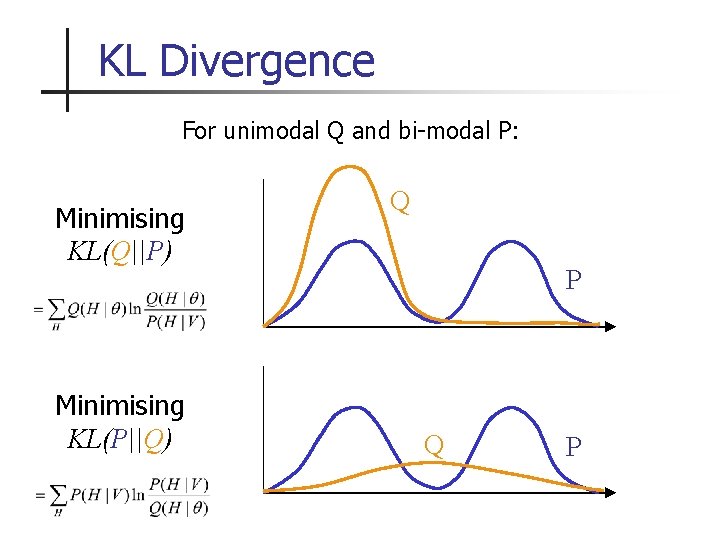

KL Divergence

For unimodal Q and bi-modal P:

[Figure: two panels, "Minimising KL(Q||P)" and "Minimising KL(P||Q)", each showing the curves Q and P.]

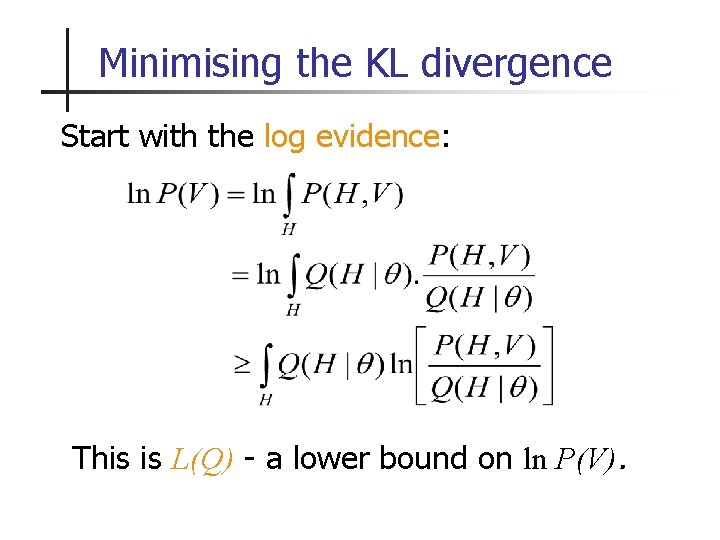

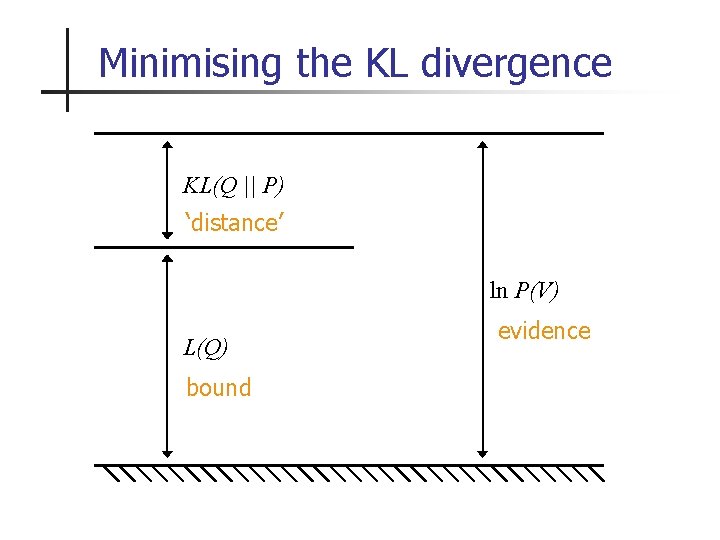
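The difference between the two directions of the KL divergence can be checked numerically. The sketch below is an illustration under stated assumptions, not the computation behind the figures: P is taken to be an equal mixture of N(-3, 1) and N(3, 1), Q is a single Gaussian, the integrals are approximated on a grid, and the best (mean, standard deviation) pair is found by brute-force search.

```python
import numpy as np

x = np.linspace(-12.0, 12.0, 2401)   # integration grid
dx = x[1] - x[0]


def log_gauss(x, mu, sigma):
    """Log density of N(mu, sigma^2)."""
    return -0.5 * np.log(2.0 * np.pi * sigma**2) - 0.5 * ((x - mu) / sigma) ** 2


# Assumed bi-modal P: equal mixture of N(-3, 1) and N(3, 1).
log_p = np.logaddexp(log_gauss(x, -3.0, 1.0), log_gauss(x, 3.0, 1.0)) + np.log(0.5)


def kl(log_a, log_b):
    """Grid approximation of KL(A||B) = integral of a(x) ln(a(x)/b(x)) dx."""
    return float(np.sum(np.exp(log_a) * (log_a - log_b)) * dx)


def best_gaussian(direction):
    """Brute-force search for the Gaussian Q minimising the chosen divergence."""
    best = None
    for mu in np.linspace(-5.0, 5.0, 101):
        for sigma in np.linspace(0.5, 5.0, 46):
            log_q = log_gauss(x, mu, sigma)
            d = kl(log_q, log_p) if direction == "QP" else kl(log_p, log_q)
            if best is None or d < best[0]:
                best = (d, mu, sigma)
    return best


_, mu_qp, sigma_qp = best_gaussian("QP")   # minimise KL(Q||P)
_, mu_pq, sigma_pq = best_gaussian("PQ")   # minimise KL(P||Q)
```

Under these assumptions, minimising KL(Q||P) places Q on one of the two modes, while minimising KL(P||Q) gives a broad Q centred between them, matching the means and variances of P.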

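Minimising the KL divergence

Start with the log evidence and introduce Q(H):

$$\ln P(V) = \ln \sum_H P(H, V) = \ln \sum_H Q(H)\,\frac{P(H, V)}{Q(H)} \;\ge\; \sum_H Q(H) \ln \frac{P(H, V)}{Q(H)}$$

This is L(Q), a lower bound on ln P(V).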

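Minimising the KL divergence

ln P(V) = L(Q) + KL(Q || P)

Here ln P(V) is the log evidence, L(Q) is the bound and KL(Q || P) is the 'distance'. Because ln P(V) does not depend on Q, maximising L(Q) is equivalent to minimising KL(Q || P).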

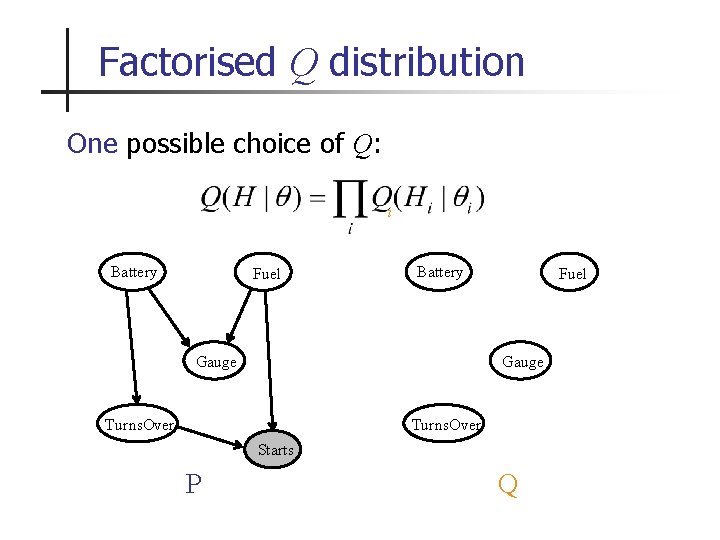
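The relationship between the evidence, the bound and the divergence can be verified in a few lines. This is the standard derivation written for discrete H (replace sums by integrals for continuous variables); the notation is an assumption, since the slide's own equations are not reproduced in the text.

$$
\begin{aligned}
L(Q) &= \sum_H Q(H) \ln \frac{P(H, V)}{Q(H)}, \qquad
\mathrm{KL}(Q\,\|\,P) = -\sum_H Q(H) \ln \frac{P(H \mid V)}{Q(H)} \\
L(Q) + \mathrm{KL}(Q\,\|\,P) &= \sum_H Q(H) \left[ \ln P(H, V) - \ln P(H \mid V) \right]
= \sum_H Q(H) \ln P(V) = \ln P(V)
\end{aligned}
$$

Since KL(Q||P) ≥ 0, with equality only when Q(H) = P(H|V), L(Q) never exceeds ln P(V).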

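Factorised Q distribution

One possible choice of Q: Q(H) = ∏_i Q_i(H_i)

[Figure: two graphs labelled P and Q. P is the car network over Battery, Fuel, Gauge, Turns Over and Starts; Q is drawn over Battery, Fuel and Gauge.]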

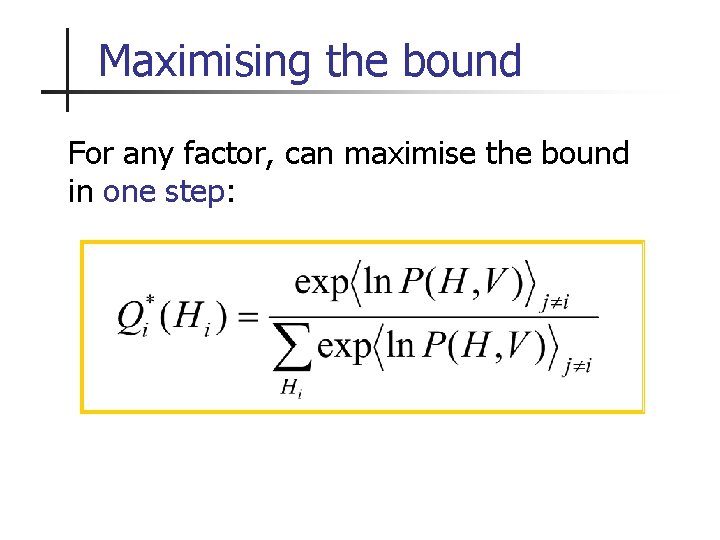

Maximising the bound

For any factor of Q, the bound can be maximised in one step:

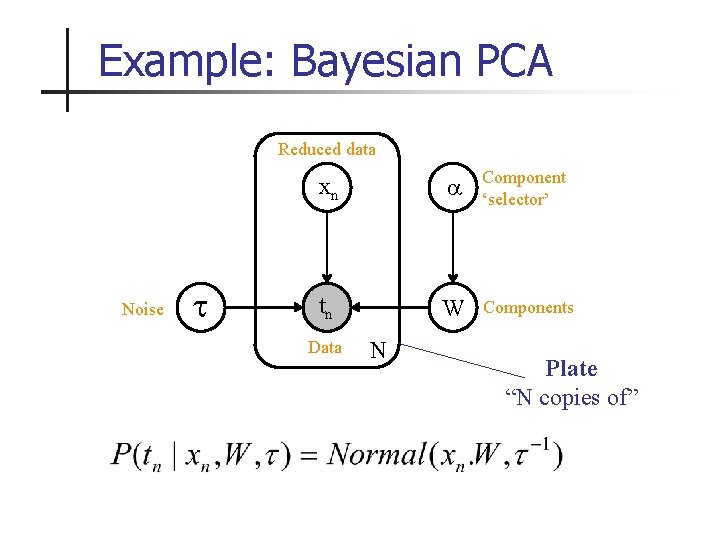
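For reference, the usual form of this one-step update for a fully factorised Q is given below. It is the standard mean-field result, written in assumed notation where the angle brackets denote an expectation under all factors except Q_i:

$$
\ln Q_i^{*}(H_i) = \big\langle \ln P(H, V) \big\rangle_{\prod_{j \ne i} Q_j(H_j)} + \text{const}
$$

The constant is fixed by normalising Q_i. Each such update can only increase L(Q) or leave it unchanged, so cycling through the factors converges to a local maximum of the bound.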

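Example: Bayesian PCA

[Figure: graphical model with nodes x_n (reduced data), W (components) and t_n (data), together with a noise node and a component 'selector' node. The plate labelled N means "N copies of".]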

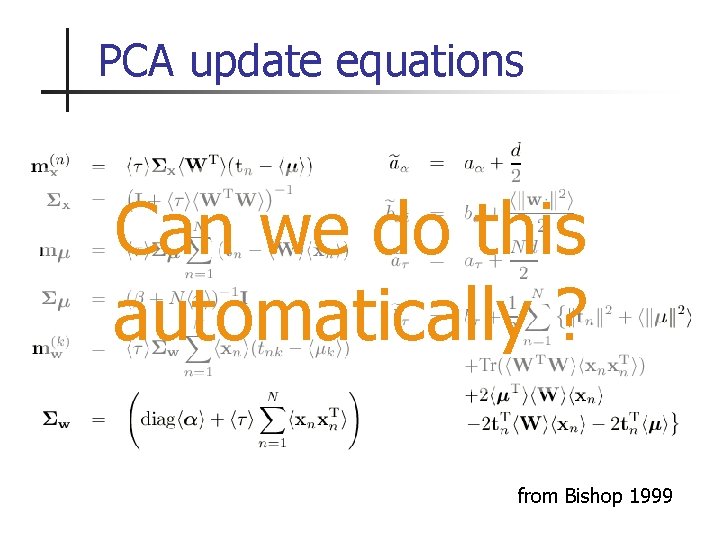

PCA update equations (from Bishop 1999)

Can we do this automatically?

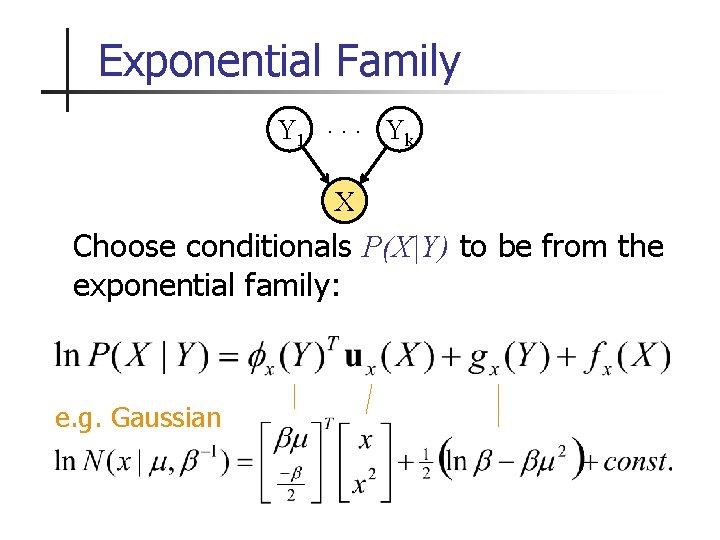

Exponential Family

Choose conditionals P(X|Y) to be from the exponential family, e.g. Gaussian.

[Figure: node X with parents Y1 … Yk, and the exponential-family form of P(X|Y) with its natural parameter vector and u function labelled.]

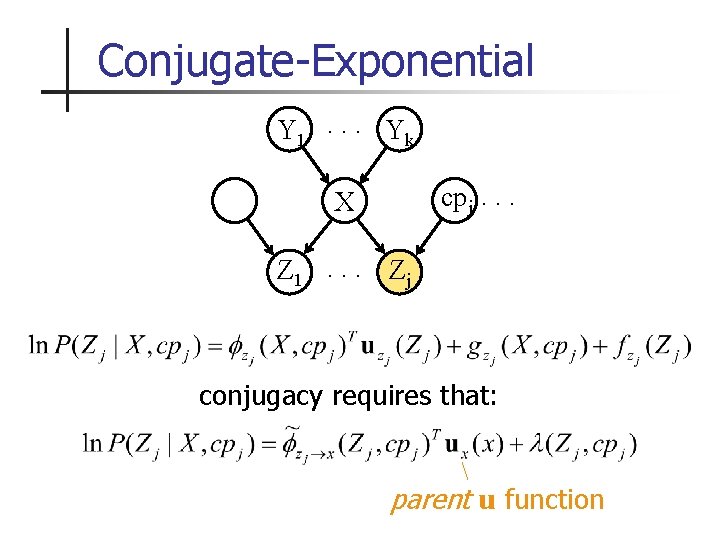
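As a worked instance, the exponential-family form commonly used in variational message passing is written out below, followed by the Gaussian case. The symbols φ, u, f and g are assumed notation; the slide's own equation is in the figure.

$$
\ln P(X \mid Y) = \phi(Y)^{\mathsf T} u(X) + f(X) + g(Y)
$$

$$
\ln \mathcal{N}(x \mid \mu, \beta^{-1}) =
\begin{bmatrix} \beta\mu \\ -\beta/2 \end{bmatrix}^{\mathsf T}
\begin{bmatrix} x \\ x^2 \end{bmatrix}
+ \tfrac{1}{2}\left( \ln\beta - \beta\mu^2 - \ln 2\pi \right)
$$

For the Gaussian, the natural parameter vector is φ(μ, β) = (βμ, −β/2), the u function is u(x) = (x, x²), f(x) = 0, and the remaining term is g(μ, β).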

Conjugate-Exponential

Conjugacy requires that:

[Figure: node X with parents Y1 … Yk, children Z1 … Zj and co-parents cpj; the conjugacy condition is shown with the parent u function labelled.]

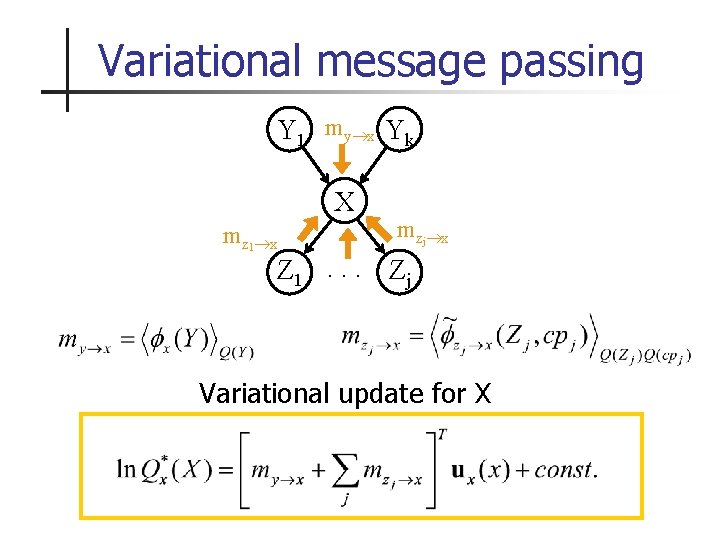

Variational message passing

Variational update for X:

[Figure: node X receives messages m_{Y→X} from its parents Y1 … Yk and messages m_{Z1→X} … m_{Zj→X} from its children Z1 … Zj.]

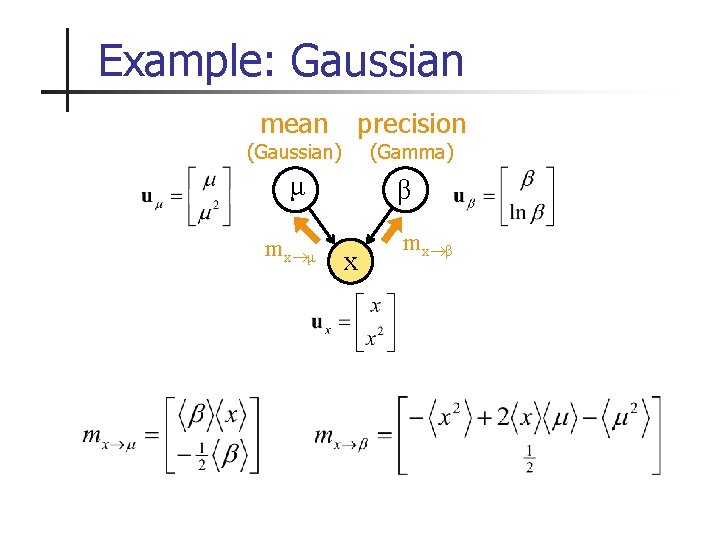
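The update can be summarised as follows, in the standard variational message passing formulation. The notation (φ_X, φ_XZ, λ, cp for co-parents) is assumed here and may differ from the figure.

$$
\begin{aligned}
\text{conjugacy:}\quad & \ln P(Z \mid X, \mathrm{cp}) = \phi_{XZ}(Z, \mathrm{cp})^{\mathsf T} u_X(X) + \lambda(Z, \mathrm{cp}) \\
\text{parent to child:}\quad & m_{Y \to X} = \langle u_Y(Y) \rangle \\
\text{child to parent:}\quad & m_{Z \to X} = \big\langle \phi_{XZ}(Z, \mathrm{cp}) \big\rangle \\
\text{update:}\quad & \phi_X^{*} = \big\langle \phi_X(Y) \big\rangle + \sum_{j} m_{Z_j \to X}
\end{aligned}
$$

The expectation of φ_X(Y) is computed from the parent messages, and each child message is computed from the child's own expectation of u_Z and the messages it has received from its co-parents. The updated Q(X) is in the same family as P(X|Y), with natural parameter vector φ_X*.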

Example: Gaussian

[Figure: node x with parents μ (mean, Gaussian) and β (precision, Gamma); x sends the messages m_{x→μ} and m_{x→β}.]

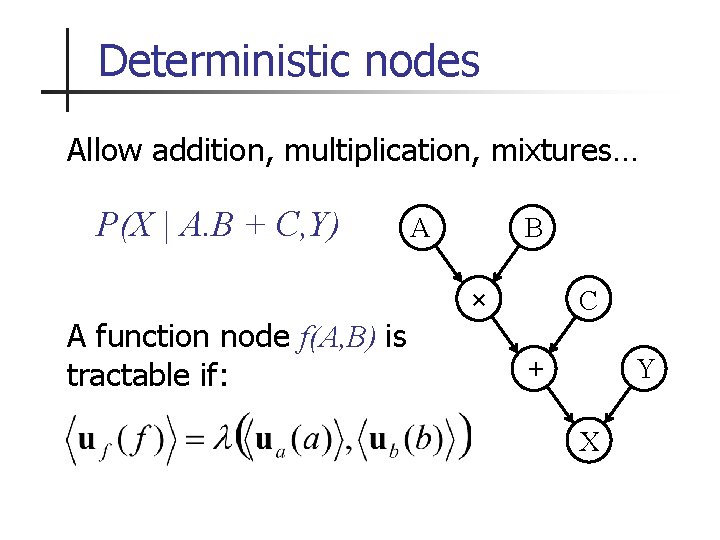
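The Gaussian example can be sketched in a few lines of NumPy. This is a minimal illustration, not VIBES code: it assumes data x_n ~ N(μ, 1/β), a Gaussian prior on μ with mean `m0` and precision `b0`, a Gamma prior on β with shape `a0` and rate `c0`, and a factorised Q(μ)Q(β). The function name, the prior values and the fixed iteration count are all hypothetical choices.

```python
import numpy as np


def vmp_gaussian(x, m0=0.0, b0=1e-3, a0=1e-3, c0=1e-3, iters=20):
    """Variational message passing for x_n ~ N(mu, 1/beta).

    Returns (m, b, a, c), where Q(mu) = N(m, 1/b) and Q(beta) = Gamma(a, rate=c).
    """
    x = np.asarray(x, dtype=float)
    n = x.size

    # Prior natural parameters.
    prior_mu = np.array([b0 * m0, -0.5 * b0])   # pairs with u(mu) = [mu, mu^2]
    prior_beta = np.array([-c0, a0 - 1.0])      # pairs with u(beta) = [beta, ln beta]

    e_beta = a0 / c0                            # initial <beta>
    for _ in range(iters):
        # Sum of messages x_n -> mu: [<beta> x_n, -<beta>/2].
        msg_to_mu = np.array([e_beta * x.sum(), -0.5 * e_beta * n])
        phi_mu = prior_mu + msg_to_mu
        b = -2.0 * phi_mu[1]
        m = phi_mu[0] / b
        e_mu, e_mu2 = m, m * m + 1.0 / b        # <mu>, <mu^2>

        # Sum of messages x_n -> beta: [-(x_n^2 - 2 x_n <mu> + <mu^2>)/2, 1/2].
        msg_to_beta = np.array(
            [-0.5 * np.sum(x**2 - 2.0 * x * e_mu + e_mu2), 0.5 * n]
        )
        phi_beta = prior_beta + msg_to_beta
        a, c = phi_beta[1] + 1.0, -phi_beta[0]
        e_beta = a / c                          # <beta>
    return m, b, a, c


# Usage on synthetic data (true mean 5, true standard deviation 0.5).
rng = np.random.default_rng(0)
data = rng.normal(5.0, 0.5, size=1000)
m, b, a, c = vmp_gaussian(data)
```

Each update is just the prior natural parameter vector plus the sum of incoming messages, so the same loop structure applies to any conjugate-exponential node.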

Deterministic nodes

Allow addition, multiplication, mixtures…

P(X | A·B + C, Y)

A function node f(A, B) is tractable if:

[Figure: A and B feed a × node; its output and C feed a + node; the result and Y are the parents of X.]

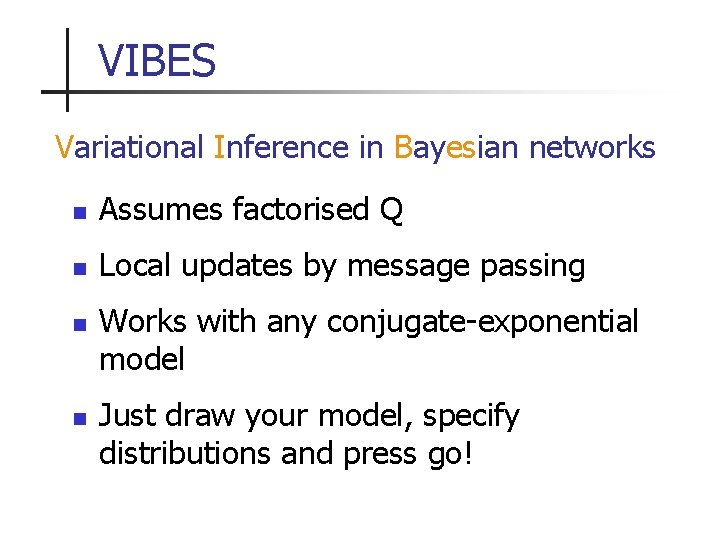

VIBES

Variational Inference in Bayesian networks

• Assumes factorised Q
• Local updates by message passing
• Works with any conjugate-exponential model
• Just draw your model, specify distributions and press go!

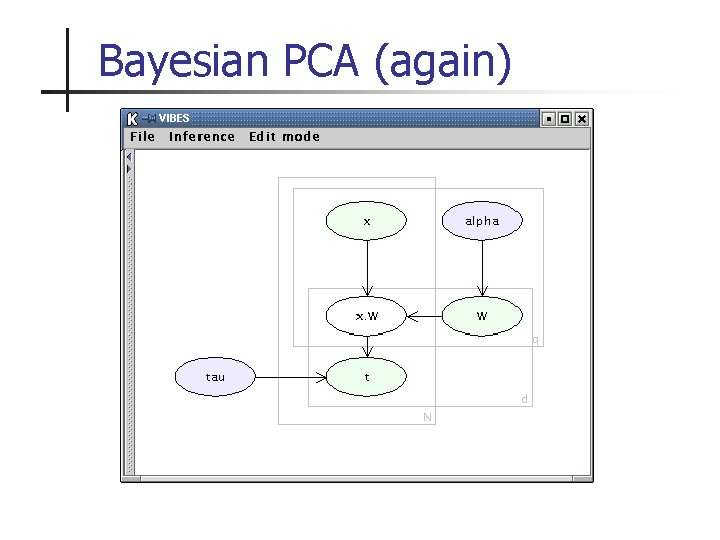

Bayesian PCA (again)

[Figure: the Bayesian PCA model, with nodes x_n, W and t_n and the plate N.]

Digits demo

Work in progress

• Allowing structure in the Q distribution (no longer fully factorised)
• First release version of VIBES
