An Introduction to Variational Methods for Graphical Models

- Slides: 25

An Introduction to Variational Methods for Graphical Models
Michael I. Jordan, Zoubin Ghahramani, Tommi S. Jaakkola and Lawrence K. Saul
Presenter: 邱炫盛, NTNU Speech Lab

Outline
• Introduction
• Exact Inference
• Basics of Variational Methodology
• …

Introduction
• The problem of probabilistic inference in graphical models is the problem of computing a conditional probability distribution over the hidden nodes H given the values of the evidence nodes E: $P(H \mid E) = P(H, E) / P(E)$

Exact Inference
• Junction Tree Algorithm
  – Moralization
  – Triangulation
• Graphical models
  – Directed (& Acyclic)
    • Bayesian Network
    • Local conditional probabilities
  – Undirected
    • Markov random field
    • Potentials associated with the cliques

Exact Inference
• Directed Graphical Model
  – Specified numerically by associating local conditional probabilities with each node in the graph
• The conditional probability
  – The probability of a node given the values of its parents, $P(S_i \mid S_{\pi(i)})$

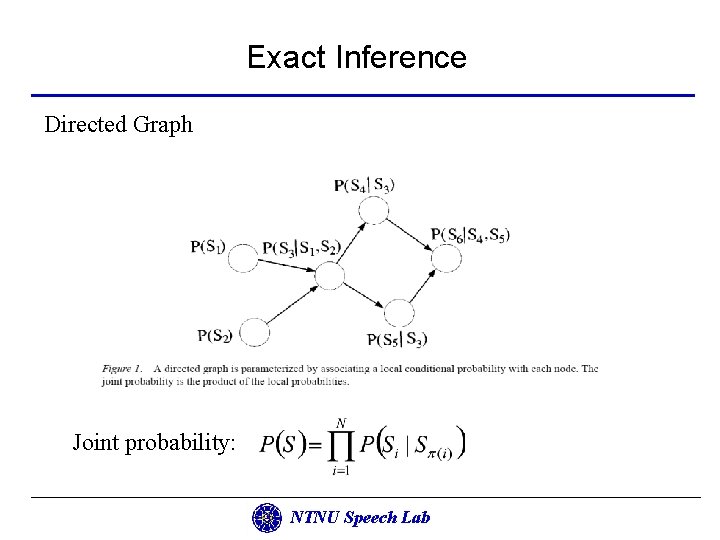

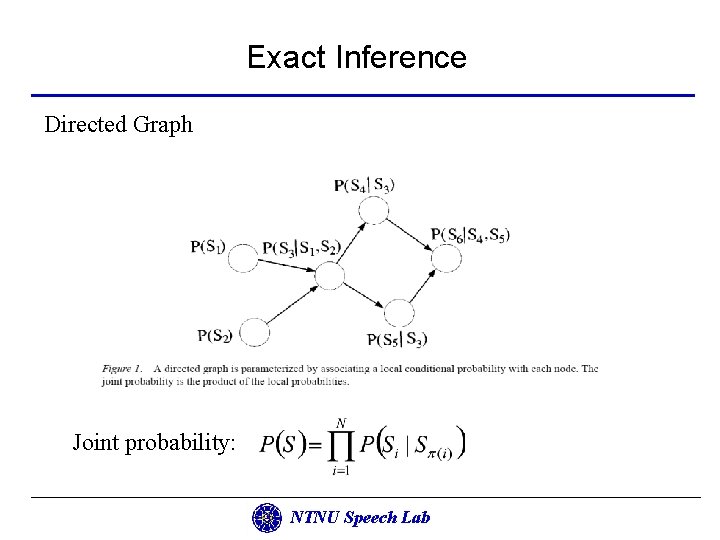

Exact Inference
• Directed Graph
• Joint probability: $P(S) = \prod_i P(S_i \mid S_{\pi(i)})$, where $S_{\pi(i)}$ denotes the parents of node $S_i$
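
As a minimal sketch of the factorization above, the joint probability of a directed model can be computed as a product of local conditional probabilities, and a conditional probability can then be obtained by brute-force summation. The three-node chain A -> B -> C, its numbers, and the helper names (`joint`, `conditional`) are assumptions made up for illustration; they do not come from the slides.

```python
from itertools import product

# Hypothetical chain A -> B -> C with binary nodes (illustrative numbers only).
parents = {"A": (), "B": ("A",), "C": ("B",)}

# cpt[node][parent_values] = P(node = 1 | parents = parent_values)
cpt = {
    "A": {(): 0.3},
    "B": {(0,): 0.2, (1,): 0.9},
    "C": {(0,): 0.5, (1,): 0.1},
}

def joint(assignment):
    """P(S) as the product of the local conditional probabilities."""
    p = 1.0
    for node, pa in parents.items():
        p_one = cpt[node][tuple(assignment[q] for q in pa)]
        p *= p_one if assignment[node] == 1 else 1.0 - p_one
    return p

def all_assignments():
    """Enumerate every joint configuration of the binary nodes."""
    names = list(parents)
    for values in product((0, 1), repeat=len(names)):
        yield dict(zip(names, values))

def conditional(query, evidence):
    """P(query | evidence) = P(query, evidence) / P(evidence), by enumeration."""
    both = {**query, **evidence}
    num = sum(joint(s) for s in all_assignments()
              if all(s[k] == v for k, v in both.items()))
    den = sum(joint(s) for s in all_assignments()
              if all(s[k] == v for k, v in evidence.items()))
    return num / den

total = sum(joint(s) for s in all_assignments())
```

Enumeration is exponential in the number of nodes, which is the cost that the junction tree algorithm and the variational methods in later slides try to avoid.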

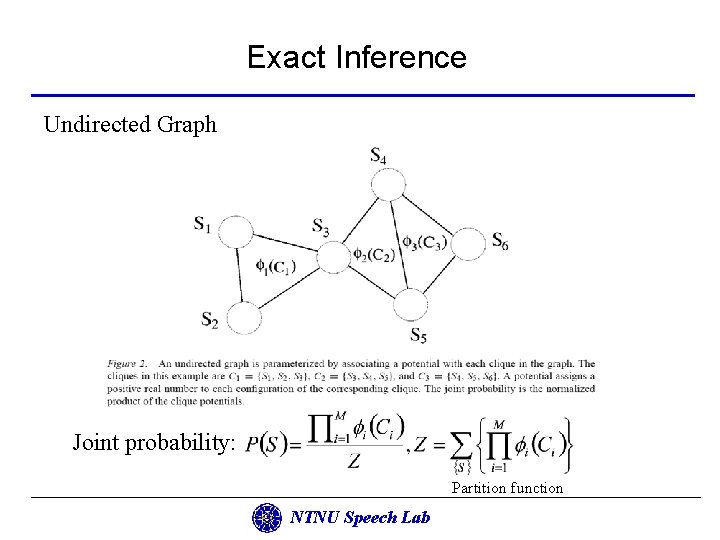

Exact Inference
• Undirected Graphical Model
  – Specified numerically by associating “potentials” with the cliques of the graph
• Potential
  – A function on the set of configurations of a clique (that is, a setting of values for all of the nodes in the clique)
• Clique
  – (Maximal) complete subgraph

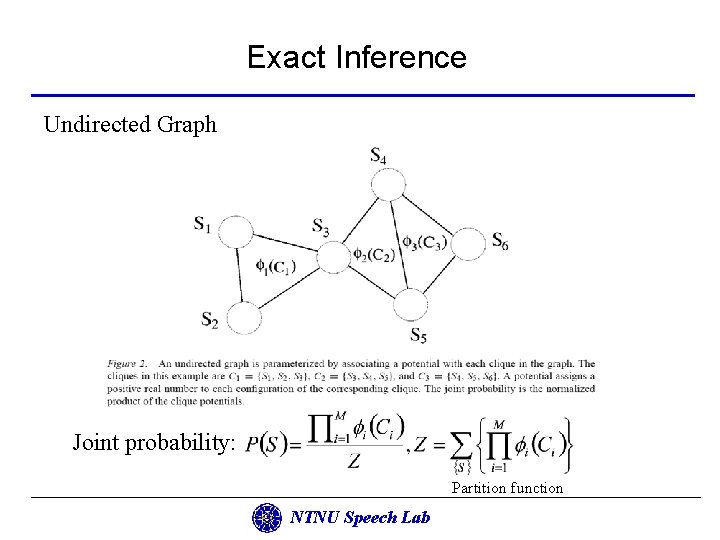

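Exact Inference
• Undirected Graph
• Joint probability: $P(S) = \frac{1}{Z} \prod_i \phi_i(C_i)$, where $\phi_i(C_i)$ is the potential on clique $C_i$
• Partition function: $Z = \sum_{S} \prod_i \phi_i(C_i)$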
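
The role of the partition function can be illustrated with a small sketch: the product of clique potentials is normalized by its sum over all configurations. The chain A - B - C, the potential values, and the function names are hypothetical choices for illustration only.

```python
from itertools import product

# Hypothetical undirected chain A - B - C of binary nodes.
# Its maximal cliques are {A, B} and {B, C}.
nodes = ["A", "B", "C"]
cliques = [("A", "B"), ("B", "C")]

def phi_ab(a, b):
    """Illustrative potential that favours A == B."""
    return 2.0 if a == b else 1.0

def phi_bc(b, c):
    """Illustrative potential that favours B == C."""
    return 3.0 if b == c else 1.0

potentials = [phi_ab, phi_bc]

def unnormalized(s):
    """Product of the clique potentials for configuration s."""
    p = 1.0
    for clique, phi in zip(cliques, potentials):
        p *= phi(*(s[n] for n in clique))
    return p

configs = [dict(zip(nodes, v)) for v in product((0, 1), repeat=len(nodes))]

# Partition function: sum of the unnormalized products over all configurations.
Z = sum(unnormalized(s) for s in configs)

def joint(s):
    """Normalized joint probability P(S)."""
    return unnormalized(s) / Z
```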

Exact Inference
• The junction tree algorithm compiles directed graphical models into undirected graphical models
  – Moralization
  – Triangulation
• Moralization
  – Converts the directed graph into an undirected graph (skipped when the graph is already undirected)
  – In a directed graph, a node and its parents do not always appear together within a clique
  – “Marry” the parents of each node by linking them with undirected edges, then drop the arrows (the result is the moral graph)
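
The moralization step can be sketched in a few lines, assuming the directed graph is given as a mapping from each node to its list of parents. The function name `moralize` and the example graph are hypothetical.

```python
from itertools import combinations

def moralize(parents):
    """Return the moral graph as a set of undirected edges (frozensets).

    `parents` maps each node to the list of its parents in the directed graph.
    """
    edges = set()
    for child, pa in parents.items():
        for p in pa:
            edges.add(frozenset((p, child)))   # drop the arrow
        for p, q in combinations(pa, 2):
            edges.add(frozenset((p, q)))       # "marry" the parents
    return edges

# Hypothetical example: A and B are both parents of C, and C is the parent of D.
parents = {"A": [], "B": [], "C": ["A", "B"], "D": ["C"]}
moral = moralize(parents)
```

After moralization, each node and its parents form a complete subgraph, so every local conditional probability can be assigned to some clique as a potential.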

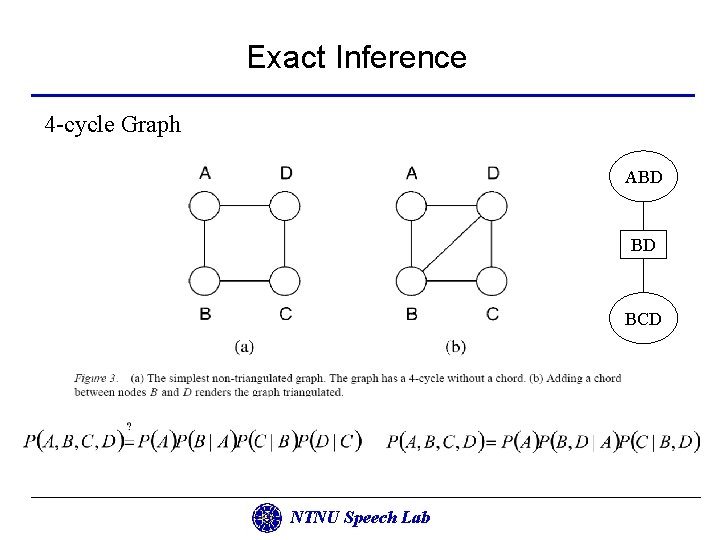

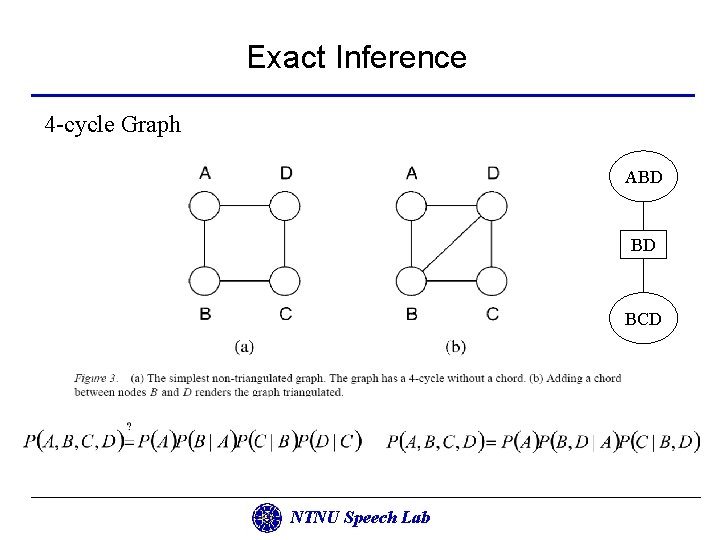

Exact Inference
• Triangulation
  – Takes a moral graph as input and produces as output an undirected graph to which additional edges have (possibly) been added; this allows recursive calculation of probabilities
• A graph is not triangulated if there are 4-cycles which do not have a chord
• Chord
  – An edge between non-neighboring nodes of the cycle

Exact Inference
• Figure: a 4-cycle graph and its junction tree, ABD – BD – BCD (cliques ABD and BCD, separator BD)
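
One common way to triangulate a graph is node elimination: remove nodes one at a time and connect the remaining neighbours of each removed node. The sketch below uses that technique, which is an assumption here since the slides do not say which triangulation procedure is intended. The node names and the elimination order are also illustrative.

```python
from itertools import combinations

def triangulate(edges, order):
    """Triangulate by node elimination.

    Returns (fill_in_edges, cliques), where cliques are the maximal
    cliques of the triangulated graph as frozensets.
    """
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    fill_in, cliques = [], []
    for node in order:
        nbrs = adj[node]
        # Connect all remaining neighbours of the node being eliminated.
        for u, v in combinations(sorted(nbrs), 2):
            if v not in adj[u]:
                adj[u].add(v)
                adj[v].add(u)
                fill_in.append((u, v))
        # The node plus its neighbours is a clique; keep it if not already covered.
        candidate = frozenset(nbrs | {node})
        if not any(candidate <= c for c in cliques):
            cliques.append(candidate)
        # Remove the node from the graph.
        for u in nbrs:
            adj[u].discard(node)
        del adj[node]
    return fill_in, cliques

# A chordless 4-cycle A - B - C - D - A.
cycle = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "A")]
fill_in, cliques = triangulate(cycle, order=["A", "B", "C", "D"])
```

With this elimination order the single added chord is B - D, and the resulting cliques are ABD and BCD. Different orders can add different chords.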

Exact Inference
• Once a graph has been triangulated, it is possible to arrange the cliques of the graph into a data structure known as a junction tree
• Running intersection property
  – If a node appears in any two cliques in the tree, it appears in all cliques that lie on the path between the two cliques
• Local consistency
  – Neighboring cliques assign the same marginal probability to the nodes that they have in common
• Local consistency implies global consistency in a junction tree because of the running intersection property
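
The running intersection property can be checked mechanically: in a tree of cliques, it holds exactly when, for every node, the cliques containing that node form a connected subtree. The following is a sketch under that formulation; the function name and the example trees are hypothetical.

```python
def has_running_intersection(cliques, tree_edges):
    """Check the running intersection property of a clique tree.

    cliques: list of sets of node names.
    tree_edges: pairs of clique indices that form a tree over the cliques.
    """
    adj = {i: set() for i in range(len(cliques))}
    for i, j in tree_edges:
        adj[i].add(j)
        adj[j].add(i)
    for node in set().union(*cliques):
        holders = {i for i, c in enumerate(cliques) if node in c}
        # The cliques containing `node` must form a connected subtree.
        start = next(iter(holders))
        seen, stack = {start}, [start]
        while stack:
            i = stack.pop()
            for j in adj[i] & holders:
                if j not in seen:
                    seen.add(j)
                    stack.append(j)
        if seen != holders:
            return False
    return True

# Clique tree ABD - BCD: B and D are shared by the two adjacent cliques.
good = has_running_intersection([{"A", "B", "D"}, {"B", "C", "D"}], [(0, 1)])

# Chain {A,B} - {C,D} - {B,E}: B appears at both ends but not in the middle.
bad = has_running_intersection([{"A", "B"}, {"C", "D"}, {"B", "E"}],
                               [(0, 1), (1, 2)])
```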

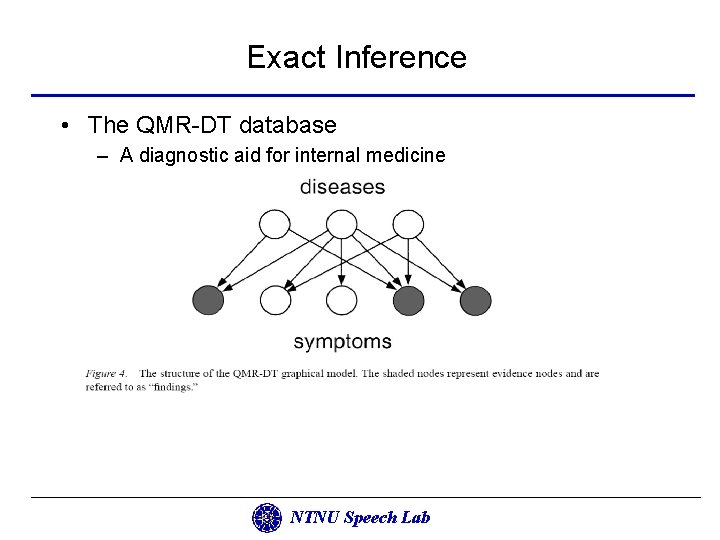

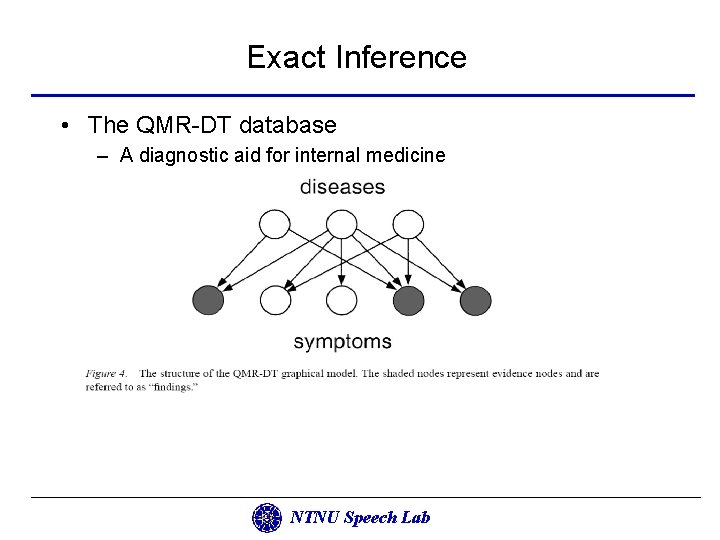

Exact Inference
• The QMR-DT database
  – A diagnostic aid for internal medicine

Basics of variational methodology
• Variational methods
  – Used as approximation methods
  – Convert a complex problem into a simpler problem
  – The decoupling is achieved via an expansion of the problem to include additional parameters (the variational parameters)
• The terminology “variational” comes from the roots of the techniques in the calculus of variations

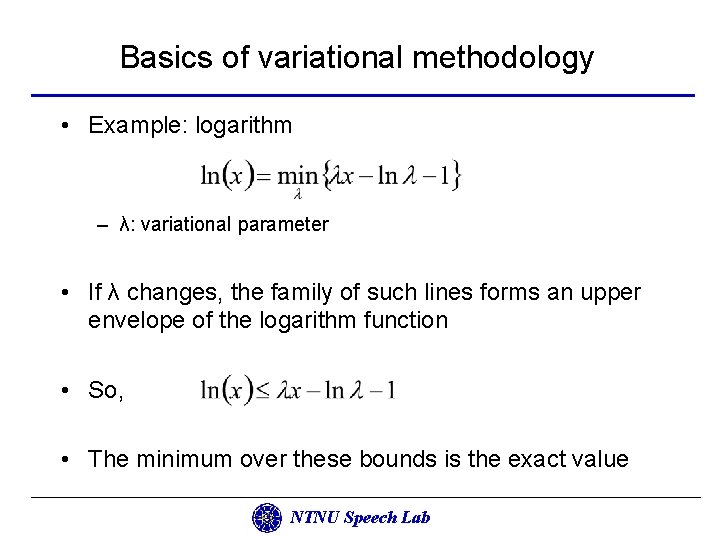

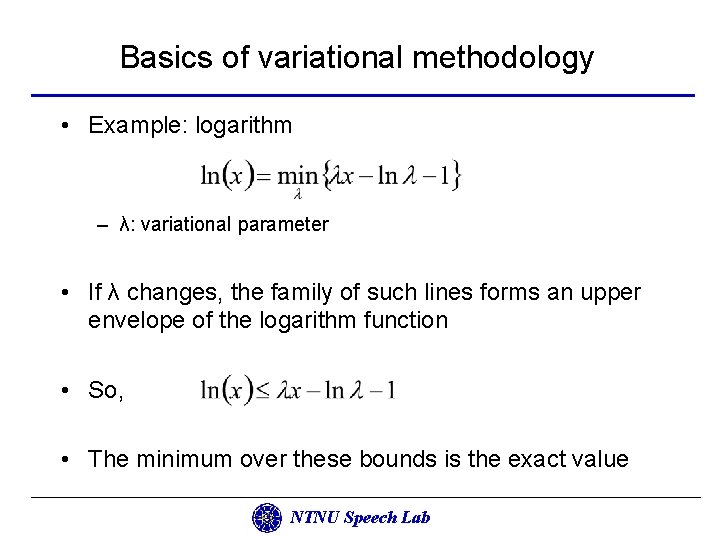

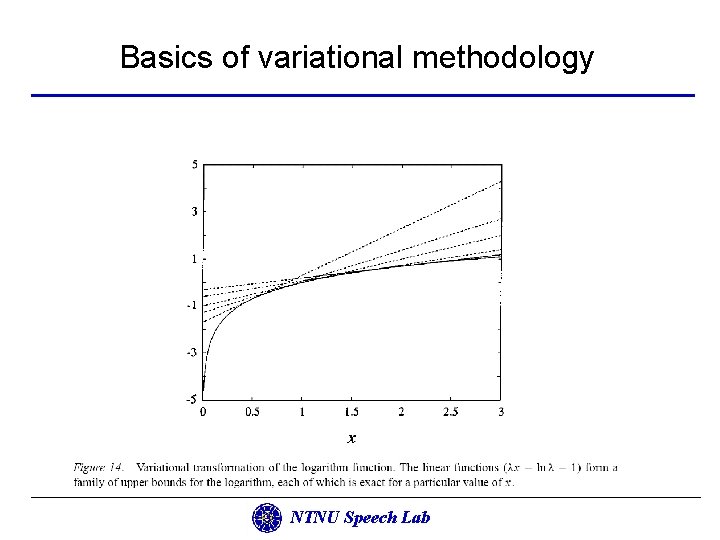

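Basics of variational methodology
• Example: logarithm, $\ln(x) = \min_{\lambda} \{\lambda x - \ln \lambda - 1\}$
  – λ: variational parameter
• For each fixed λ the right-hand side is a line in x; as λ changes, the family of such lines forms an upper envelope of the logarithm function
• So, $\ln(x) \le \lambda x - \ln \lambda - 1$ for every $\lambda > 0$
• The minimum over these bounds is the exact value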
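
The linear upper bound on the logarithm, ln(x) ≤ λx − ln λ − 1, can be checked numerically. This small sketch evaluates the bound for a few values of λ and at the minimizing value λ = 1/x; the test point and the λ values are arbitrary choices for illustration.

```python
import math

def log_upper_bound(x, lam):
    """Linear-in-x upper bound on ln(x), valid for any lam > 0."""
    return lam * x - math.log(lam) - 1.0

x = 2.5
# Each value of the variational parameter gives a valid upper bound.
bounds = [log_upper_bound(x, lam) for lam in (0.1, 0.2, 0.4, 0.8, 1.6)]

# The bound is tight when lam = 1 / x, where the line is tangent to ln(x).
tight = log_upper_bound(x, 1.0 / x)
```

Here `tight` agrees with `math.log(x)` up to rounding, while every entry of `bounds` is at least as large.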

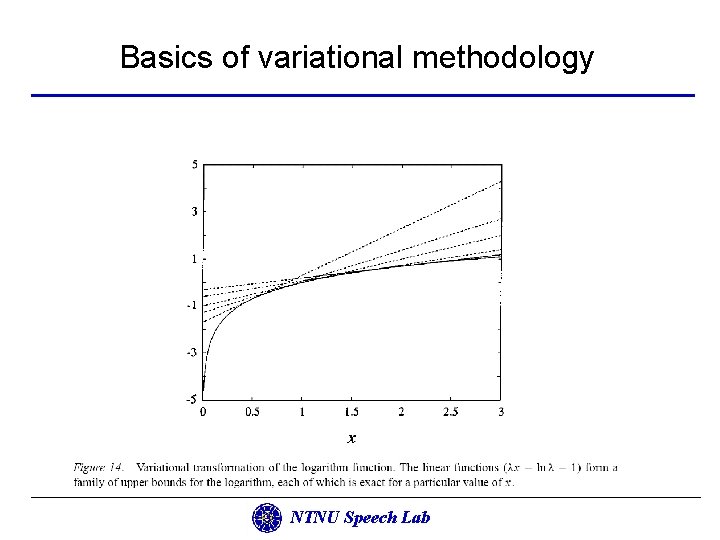

Basics of variational methodology

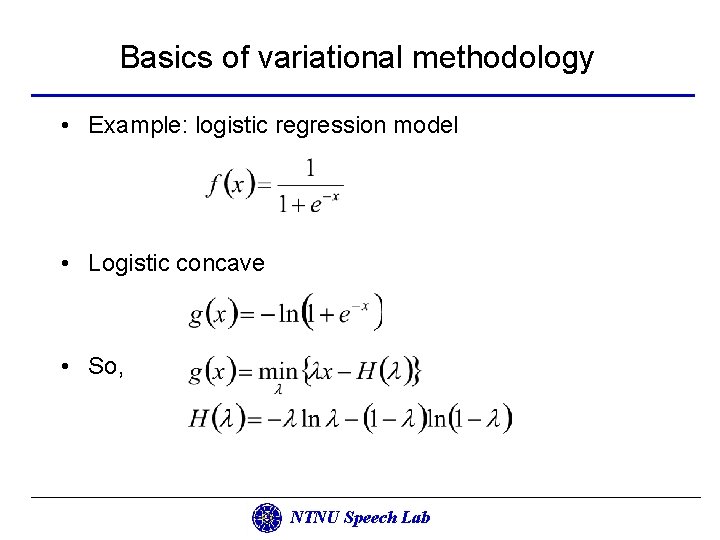

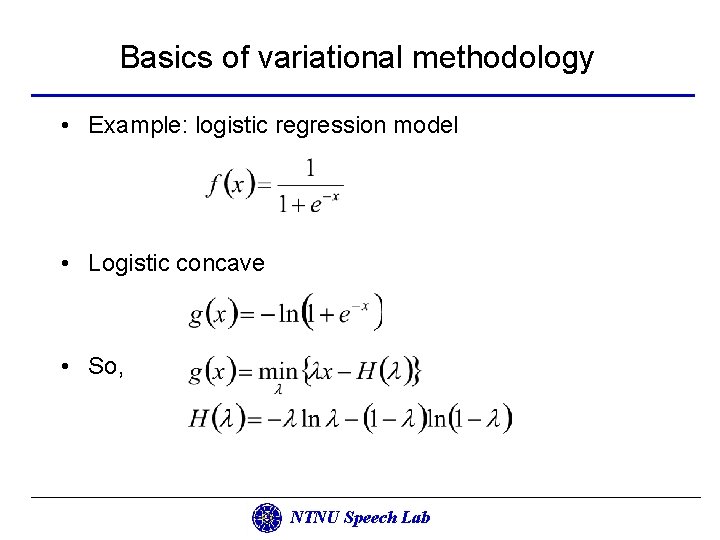

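Basics of variational methodology
• Example: logistic regression model, $f(x) = \frac{1}{1 + e^{-x}}$
• The logistic function is neither convex nor concave, but its logarithm $g(x) = -\ln(1 + e^{-x})$ is concave
• So, $g(x) = \min_{\lambda} \{\lambda x - H(\lambda)\}$, where $H(\lambda) = -\lambda \ln \lambda - (1 - \lambda) \ln(1 - \lambda)$ is the binary entropy function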

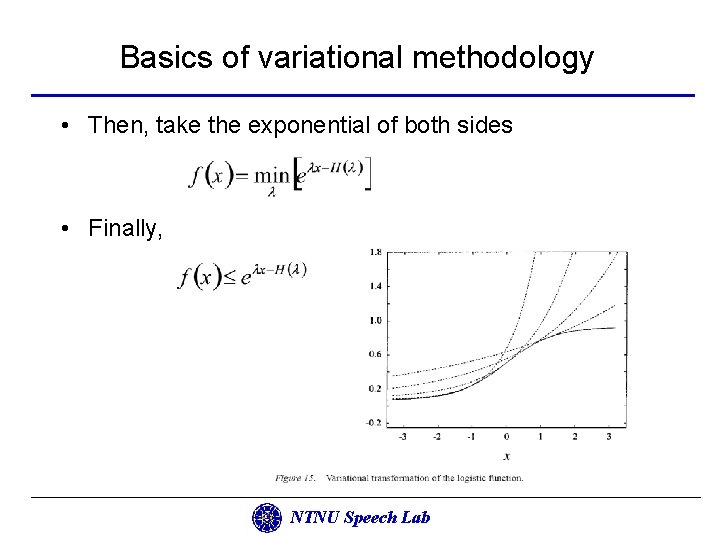

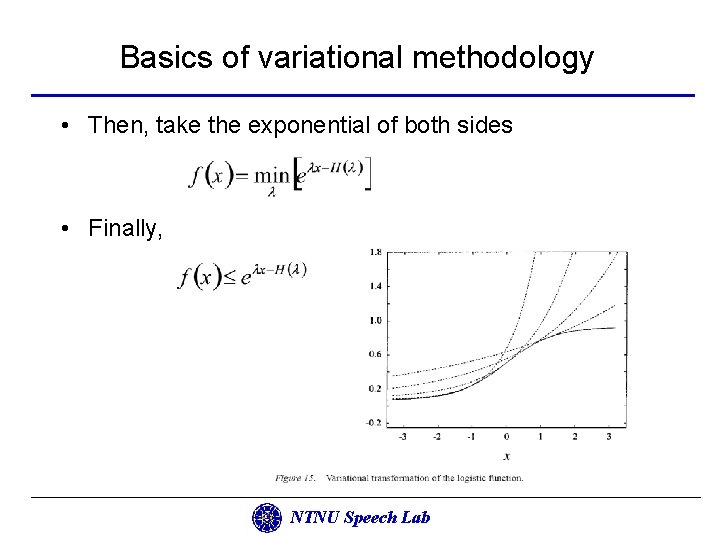

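Basics of variational methodology
• Then, take the exponential of both sides: $f(x) = \min_{\lambda} e^{\lambda x - H(\lambda)}$, where f is the logistic function and H is the binary entropy function
• Finally, $f(x) \le e^{\lambda x - H(\lambda)}$ for every $\lambda \in (0, 1)$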
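
The variational bound on the logistic function, f(x) ≤ exp(λx − H(λ)) with H the binary entropy, can also be checked numerically. In this sketch the tight value of λ is taken to be 1 − f(x), which is the slope of ln f at x; the test point and the grid of λ values are arbitrary.

```python
import math

def logistic(x):
    return 1.0 / (1.0 + math.exp(-x))

def binary_entropy(lam):
    """H(lam) = -lam ln lam - (1 - lam) ln(1 - lam), for 0 < lam < 1."""
    return -lam * math.log(lam) - (1.0 - lam) * math.log(1.0 - lam)

def logistic_upper_bound(x, lam):
    """Exponential of the linear bound on the log-logistic function."""
    return math.exp(lam * x - binary_entropy(lam))

x = 1.0
grid = [i / 100.0 for i in range(1, 100)]
bounds = [logistic_upper_bound(x, lam) for lam in grid]

# The bound touches the logistic function when lam equals the slope of
# ln f at x, which is 1 - f(x).
tight = logistic_upper_bound(x, 1.0 - logistic(x))
```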

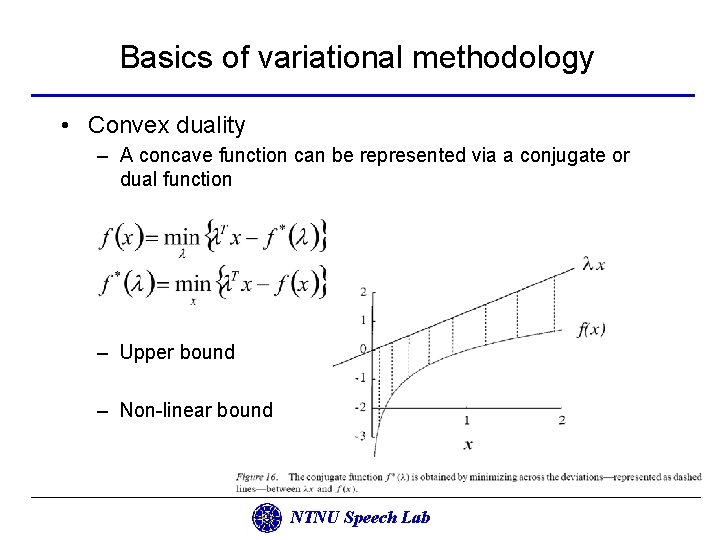

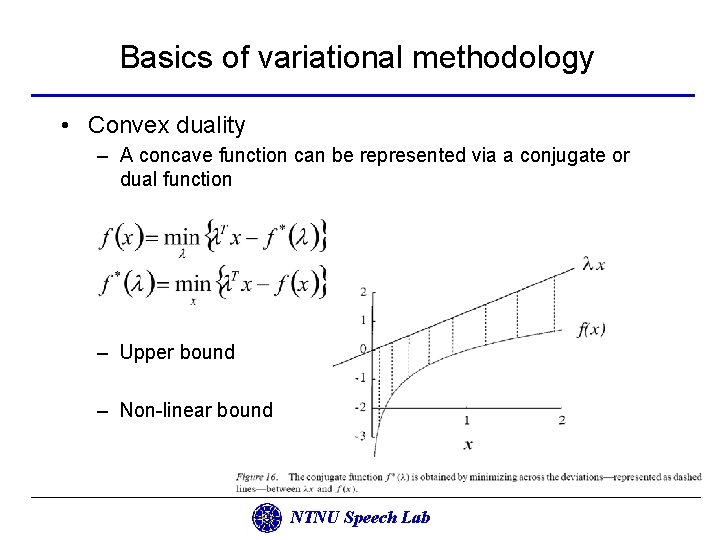

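Basics of variational methodology
• Convex duality
  – A concave function can be represented via a conjugate or dual function: $f(x) = \min_{\lambda} \{\lambda^T x - f^*(\lambda)\}$, with $f^*(\lambda) = \min_{x} \{\lambda^T x - f(x)\}$
  – Upper bound: $f(x) \le \lambda^T x - f^*(\lambda)$ for any λ
  – Non-linear bound: obtained by transforming the argument of the function before applying the linear bound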
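
As a worked instance of convex duality, the conjugate of the logarithm can be computed by hand. The derivation below assumes the scalar case with λ > 0 and recovers the linear bound on ln(x) used in the logarithm example.

```latex
\begin{align*}
f(x) &= \ln x, \qquad f^*(\lambda) = \min_{x} \{\lambda x - \ln x\} \\
0 &= \frac{d}{dx}\left(\lambda x - \ln x\right) = \lambda - \frac{1}{x}
  \;\Rightarrow\; x = \frac{1}{\lambda} \\
f^*(\lambda) &= \lambda \cdot \frac{1}{\lambda} - \ln \frac{1}{\lambda} = 1 + \ln \lambda \\
\ln x &= \min_{\lambda} \{\lambda x - f^*(\lambda)\}
       = \min_{\lambda} \{\lambda x - \ln \lambda - 1\}
\end{align*}
```

The stationary point is a minimum because λx − ln x is convex in x for λ > 0.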

Basics of variational methodology
• To summarize: if the function is already convex or concave, we simply calculate the conjugate function; if it is not, we look for an invertible transformation that renders the function convex or concave, and calculate the conjugate function in the transformed space

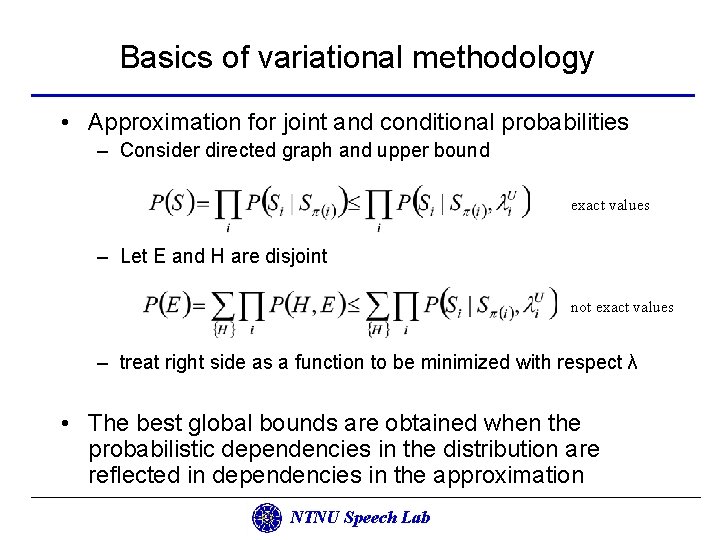

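Basics of variational methodology
• Approximation for joint and conditional probabilities
  – Consider a directed graph and upper-bound each local conditional probability: $P(S) = \prod_i P(S_i \mid S_{\pi(i)}) \le \prod_i P^U(S_i \mid S_{\pi(i)}, \lambda_i^U)$; for a given configuration S, the bound can be made exact by optimizing λ
  – Let E and H be disjoint subsets of S: $P(E) = \sum_{H} P(H, E) \le \sum_{H} \prod_i P^U(S_i \mid S_{\pi(i)}, \lambda_i^U)$; after summing over H, the bound is in general no longer exact
  – Treat the right-hand side as a function to be minimized with respect to λ
• The best global bounds are obtained when the probabilistic dependencies in the distribution are reflected in dependencies in the approximation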
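
The effect of bounding and then marginalizing can be illustrated with a toy model. The sketch below assumes a two-node network H -> E in which P(E = 1 | H) is a logistic function, bounds that conditional with exp(λx − H(λ)), sums over the hidden node, and minimizes the result over λ on a grid. The network, its parameters (`p_h`, `theta`, `bias`), and the grid search are all assumptions for illustration, not the method used in the paper.

```python
import math

def logistic(x):
    return 1.0 / (1.0 + math.exp(-x))

def binary_entropy(lam):
    return -lam * math.log(lam) - (1.0 - lam) * math.log(1.0 - lam)

# Hypothetical two-node network H -> E with binary nodes (illustrative numbers).
p_h = 0.5                # P(H = 1)
theta, bias = 2.0, 0.0   # P(E = 1 | H) = logistic(theta * H + bias)
prior = ((0, 1.0 - p_h), (1, p_h))

def exact_evidence():
    """Exact P(E = 1), summing over the hidden node."""
    return sum(p * logistic(theta * h + bias) for h, p in prior)

def bound_on_evidence(lam):
    """Upper bound on P(E = 1): bound the conditional, then sum over H."""
    return sum(p * math.exp(lam * (theta * h + bias) - binary_entropy(lam))
               for h, p in prior)

# Treat the bound as a function of lam and minimize it (coarse grid search).
grid = [i / 1000.0 for i in range(1, 1000)]
best_lam = min(grid, key=bound_on_evidence)
exact = exact_evidence()
best_bound = bound_on_evidence(best_lam)
```

Because a single λ has to serve both values of H, the minimized bound stays strictly above the exact P(E = 1) in this example, which illustrates why the bound is generally not exact after marginalization.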

Basics of variational methodology
• Obtain a lower bound on the likelihood P(E) by fitting variational parameters
• Substitute these parameters into the parameterized variational form for P(H, E)
• Utilize the variational form as an efficient inference engine in calculating an approximation to P(H|E)

Basics of variational methodology
• Sequential approach
  – Introduce variational transformations for the nodes in a particular order
  – The goal is to transform the network until the resulting transformed network is amenable to exact methods
  – Begin with the untransformed graph and introduce variational transformations one node at a time
  – Or begin with a completely transformed graph and reintroduce exact conditional probabilities

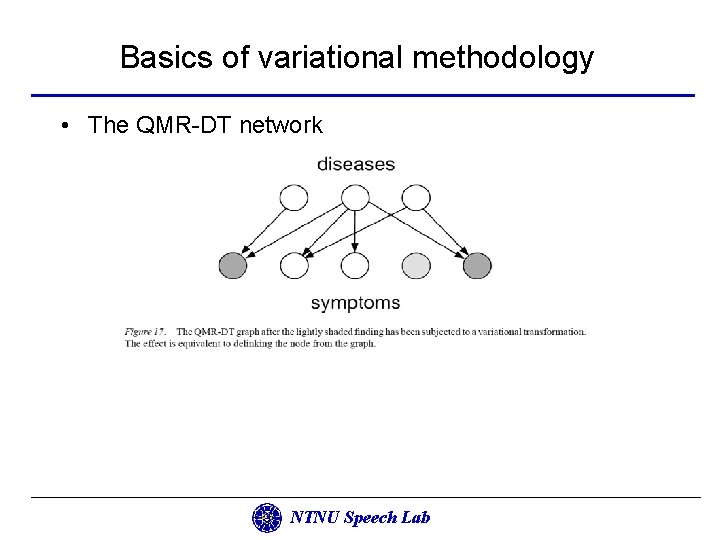

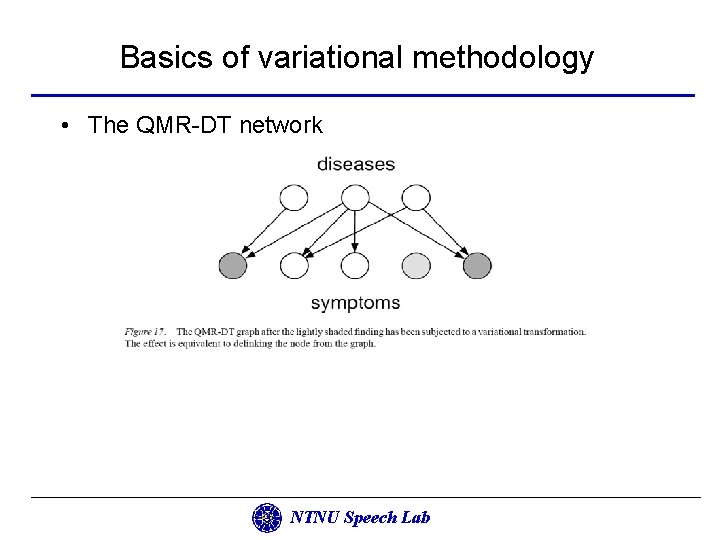

Basics of variational methodology
• The QMR-DT network

Basics of variational methodology
• Block approach
• …