From Bayesian Inference to Logical Bayesian Inference

From Bayesian Inference to Logical Bayesian Inference: A New Mathematical Frame for Semantic Communication and Machine Learning

Chenguang Lu (鲁晨光), College of Intelligence Engineering and Mathematics, Liaoning Engineering and Technology University, Fuxin, Liaoning, China. Email: lcguang@foxmail.com

A more detailed Chinese version is available at http: \survivor 99. comlcgrecent

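Research Experience

- In the 1990s, I studied semantic information theory, color vision, and portfolio theory.
- My earliest work was on philosophical problems such as color vision and aesthetics. Because the color vision model involved fuzzy mathematics, I became a visiting scholar under Professor Peizhuang Wang (汪培庄) and completed the book "A Generalized Information Theory" (《广义信息论》). I later studied portfolio theory and left academia to work in investment.
- Recently, encouraged by Professor Wang, I returned to research and combined the semantic information method with the likelihood method for machine learning:
  - Maximum mutual information classification
  - Mixture models
  - Multi-label learning and classification (also presented at this conference, session B1)
  - Improving Bayesian Inference to Logical Bayesian Inference (session A1)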

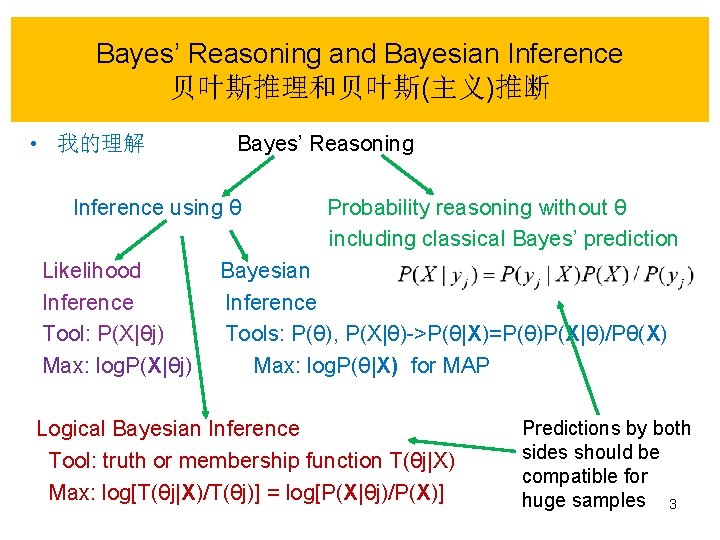

Bayes' Reasoning and Bayesian Inference

My understanding of how the methods relate:

- Bayes' reasoning has two branches:
  - Probability reasoning without θ, which includes classical Bayes' prediction.
  - Inference using θ, which takes three forms:
    - Likelihood inference. Tool: P(X|θj). Criterion: maximize log P(X|θj).
    - Bayesian Inference. Tools: P(θ) and P(X|θ), which give P(θ|X) = P(θ)P(X|θ)/Pθ(X). Criterion: maximize log P(θ|X) for MAP.
    - Logical Bayesian Inference. Tool: the truth or membership function T(θj|X). Criterion: maximize log[T(θj|X)/T(θj)] = log[P(X|θj)/P(X)].
- For huge samples, the predictions made by both branches should be compatible.

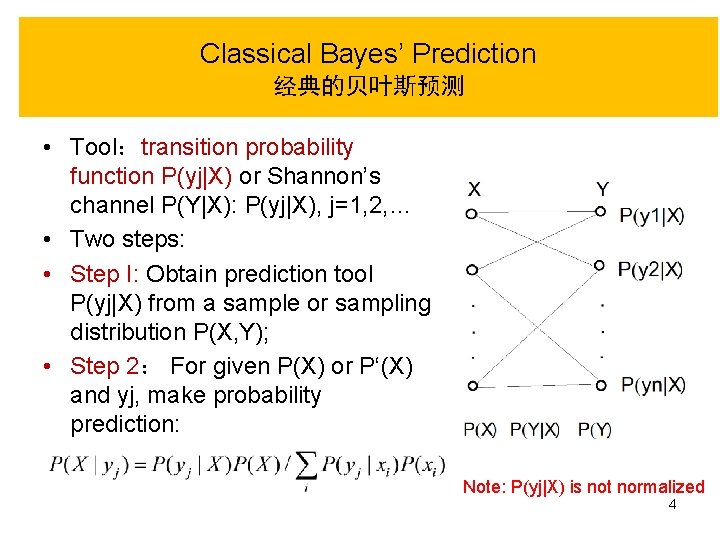

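Classical Bayes' Prediction

- Tool: the transition probability function P(yj|X), or Shannon's channel P(Y|X), which consists of P(yj|X), j = 1, 2, …
- Two steps:
  - Step 1: Obtain the prediction tool P(yj|X) from a sample or a sampling distribution P(X, Y).
  - Step 2: For a given P(X) or P'(X) and a given yj, make the probability prediction with Bayes' formula: P(X|yj) = P(X)P(yj|X)/P(yj), where P(yj) = ∑i P(xi)P(yj|xi).
- Note: as a function of X, P(yj|X) is not normalized; its values need not sum to 1 over X.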

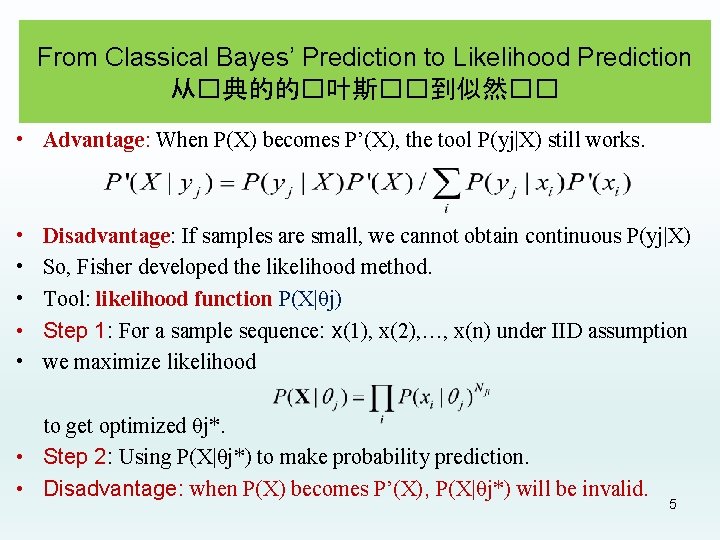
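The two-step prediction above can be sketched in a few lines of Python. This is only an illustration: the function name `bayes_predict` and all numbers are assumptions made for this example, not values from the talk. It shows that the same tool P(yj|X) yields a valid prediction under both P(X) and a changed prior P'(X).

```python
import numpy as np

def bayes_predict(prior_x, p_yj_given_x):
    """Classical Bayes' prediction: P(X|yj) = P(X) P(yj|X) / P(yj)."""
    prior_x = np.asarray(prior_x, dtype=float)
    p_yj_given_x = np.asarray(p_yj_given_x, dtype=float)
    joint = prior_x * p_yj_given_x      # P(xi) P(yj|xi) for every xi
    return joint / joint.sum()          # divide by P(yj) = sum_i P(xi) P(yj|xi)

# Hypothetical numbers: X takes three values; one column of Shannon's channel.
p_yj_given_x = np.array([0.1, 0.5, 0.9])   # not normalized over X
prior = np.array([0.5, 0.3, 0.2])          # P(X)
new_prior = np.array([0.2, 0.3, 0.5])      # P'(X)

posterior = bayes_predict(prior, p_yj_given_x)          # prediction under P(X)
new_posterior = bayes_predict(new_prior, p_yj_given_x)  # same tool under P'(X)
print(posterior, new_posterior)
```

The tool is reused unchanged when the prior changes; only the normalizing constant P(yj) is recomputed.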

From Classical Bayes' Prediction to Likelihood Prediction

- Advantage of classical Bayes' prediction: when P(X) becomes P'(X), the tool P(yj|X) still works.
- Disadvantage: if samples are small, we cannot obtain a continuous P(yj|X). So Fisher developed the likelihood method.
- Tool: the likelihood function P(X|θj).
- Step 1: For a sample sequence x(1), x(2), …, x(n), under the IID assumption, maximize the likelihood to get the optimized θj*.
- Step 2: Use P(X|θj*) to make the probability prediction.
- Disadvantage of the likelihood method: when P(X) becomes P'(X), P(X|θj*) becomes invalid.

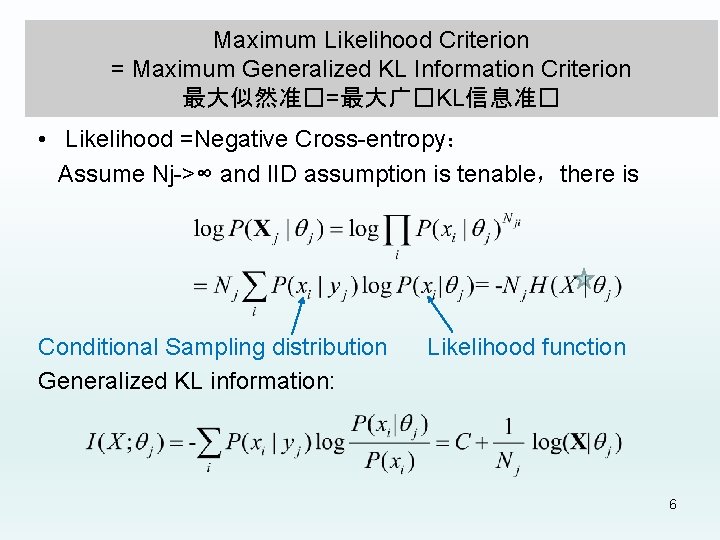

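Maximum Likelihood Criterion = Maximum Generalized KL Information Criterion

- Likelihood = negative cross-entropy: assuming Nj → ∞ and that the IID assumption holds, the log-likelihood of the Nj examples labeled yj equals Nj ∑i P(xi|yj) log P(xi|θj), where P(X|yj) is the conditional sampling distribution and P(X|θj) is the likelihood function. This is -Nj times the cross-entropy between the two.
- Generalized KL information: I(X; θj) = ∑i P(xi|yj) log[P(xi|θj)/P(xi)]. It differs from the average log-likelihood only by a term that does not depend on θj, so maximizing one maximizes the other.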
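The equivalence between the two criteria can be checked numerically. The sketch below uses hypothetical distributions over three values of X and a generalized KL information of the form ∑i P(xi|yj) log[P(xi|θj)/P(xi)], which is assumed here from the log[P(X|θj)/P(X)] criterion given earlier in the talk.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical distributions over three values of X.
model = np.array([0.2, 0.5, 0.3])    # likelihood function P(X|theta_j)
prior = np.array([0.4, 0.4, 0.2])    # prior P(X)
sample = rng.choice(3, size=1000, p=[0.25, 0.45, 0.30])  # examples labeled yj

counts = np.bincount(sample, minlength=3)
n = counts.sum()
sampling = counts / n                # conditional sampling distribution P(X|yj)

log_likelihood = np.log(model[sample]).sum()               # log of the IID likelihood
cross_entropy = -(sampling * np.log(model)).sum()          # H(P(X|yj), P(X|theta_j))
generalized_kl = (sampling * np.log(model / prior)).sum()  # I(X; theta_j)
prior_term = -(sampling * np.log(prior)).sum()             # does not depend on theta_j

print(log_likelihood, -n * cross_entropy)
print(generalized_kl, log_likelihood / n + prior_term)
```

Because `prior_term` is fixed by the sample and the prior, any θj that raises the log-likelihood raises the generalized KL information by the same amount per example.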

Bayesian Inference: Advantages and Disadvantages

- Tool: the Bayesian posterior P(θ|X) = P(θ)P(X|θ)/Pθ(X).
- Advantages:
  - 1) It takes the prior knowledge P(θ) into account.
  - 2) As the sample size increases, the distribution P(θ|X) concentrates around the MAP estimate θj*.
  - 3) …
- Disadvantages:
  - 1) It does not use P(X), the prior of X.
  - 2) Its probability prediction is not compatible with classical Bayes' prediction.
  - 3) …

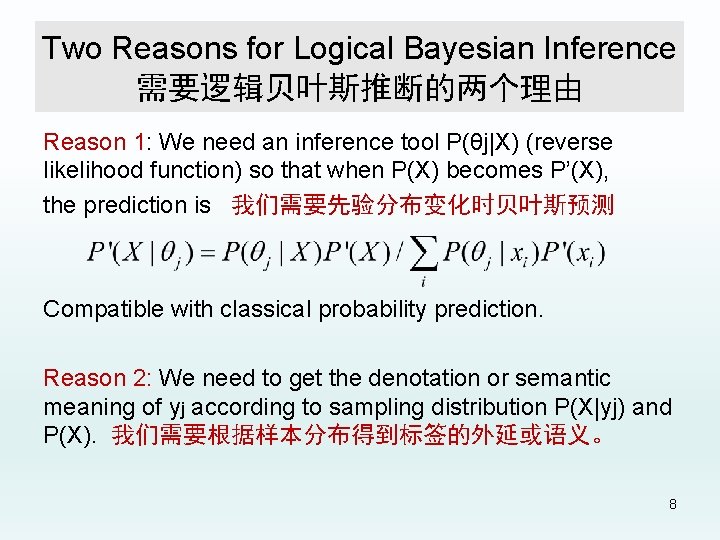

Two Reasons for Logical Bayesian Inference

- Reason 1: We need an inference tool like P(θj|X) (a reverse likelihood function) so that, when the prior P(X) becomes P'(X), the prediction remains compatible with classical probability prediction.
- Reason 2: We need to obtain the denotation, or semantic meaning, of a label yj from the sampling distributions P(X|yj) and P(X).

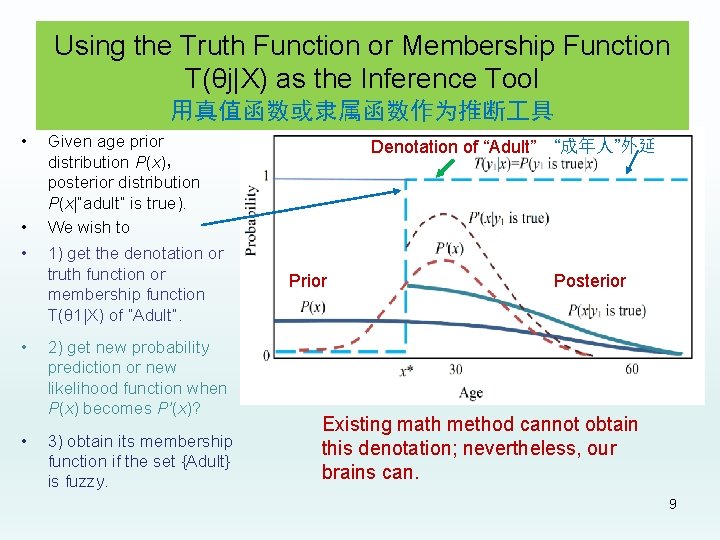

Using the Truth Function or Membership Function T(θj|X) as the Inference Tool

Suppose we are given the prior distribution of age, P(x), and the posterior distribution P(x|"adult" is true). We wish to:

- 1) get the denotation, that is, the truth function or membership function T(θ1|X), of "Adult";
- 2) get a new probability prediction, or a new likelihood function, when P(x) becomes P'(x);
- 3) obtain the membership function if the set {Adult} is fuzzy.

Figure labels: prior, posterior, and the denotation of "Adult".

Existing mathematical methods cannot obtain this denotation; nevertheless, our brains can.

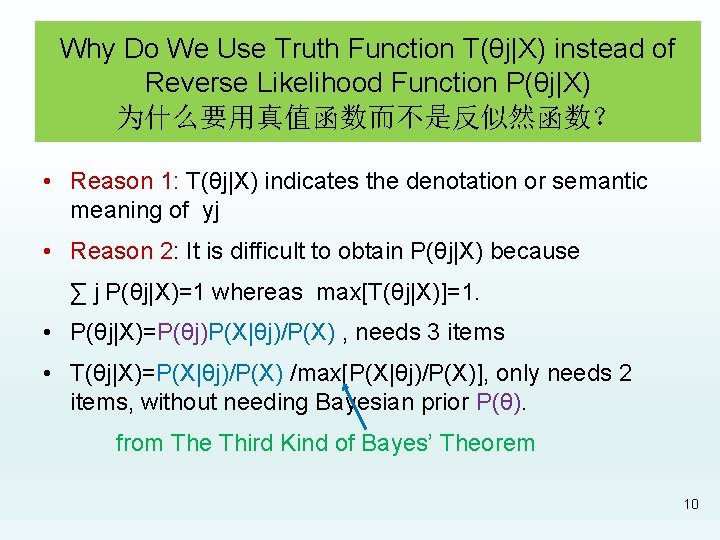

Why Do We Use the Truth Function T(θj|X) Instead of the Reverse Likelihood Function P(θj|X)?

- Reason 1: T(θj|X) indicates the denotation, or semantic meaning, of yj.
- Reason 2: P(θj|X) is difficult to obtain because it must satisfy ∑j P(θj|X) = 1, whereas T(θj|X) only needs max[T(θj|X)] = 1.
  - P(θj|X) = P(θj)P(X|θj)/P(X) needs three items.
  - T(θj|X) = [P(X|θj)/P(X)] / max[P(X|θj)/P(X)] needs only two items and does not need the Bayesian prior P(θ). This formula comes from the third kind of Bayes' theorem.

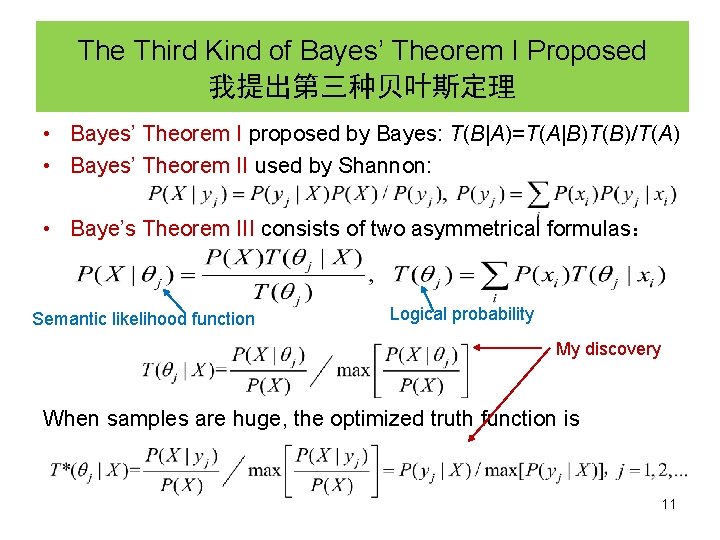
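The two-item formula T(θj|X) = [P(X|θj)/P(X)] / max[P(X|θj)/P(X)] can be tried on the "Adult" example. The sketch below is a toy setup under stated assumptions: the age prior, the logistic membership curve used to generate the posterior, and the function name `truth_function` are all hypothetical.

```python
import numpy as np

def truth_function(p_x_given_theta, p_x):
    """T(theta_j|X) = [P(X|theta_j)/P(X)] / max[P(X|theta_j)/P(X)]."""
    ratio = np.asarray(p_x_given_theta, dtype=float) / np.asarray(p_x, dtype=float)
    return ratio / ratio.max()

ages = np.arange(100)

# Hypothetical age prior P(x): younger ages are more frequent.
prior = np.exp(-ages / 40.0)
prior /= prior.sum()

# Hypothetical fuzzy denotation of "adult", used only to generate a posterior.
membership = 1.0 / (1.0 + np.exp(-(ages - 18) / 2.0))
posterior = prior * membership
posterior /= posterior.sum()          # P(x | "adult" is true)

# Recover the denotation from the two distributions alone.
truth = truth_function(posterior, prior)
print(truth[[5, 18, 40]])
```

Only the prior and the posterior enter the computation; no prior over θ is needed, and the recovered curve matches the generating membership function up to its maximum.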

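The Third Kind of Bayes' Theorem I Proposed

- Bayes' Theorem I, proposed by Bayes: T(B|A) = T(A|B)T(B)/T(A).
- Bayes' Theorem II, used by Shannon, relates probability distributions, for example P(X|yj) = P(X)P(yj|X)/P(yj).
- Bayes' Theorem III consists of two asymmetrical formulas:
  - Semantic likelihood function: P(X|θj) = P(X)T(θj|X)/T(θj), where the logical probability is T(θj) = ∑i P(xi)T(θj|xi).
  - Truth function (my discovery): T(θj|X) = T(θj)P(X|θj)/P(X), where T(θj) = 1/max[P(X|θj)/P(X)].
- When samples are huge, the optimized truth function is T*(θj|X) = P(yj|X)/max[P(yj|X)].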

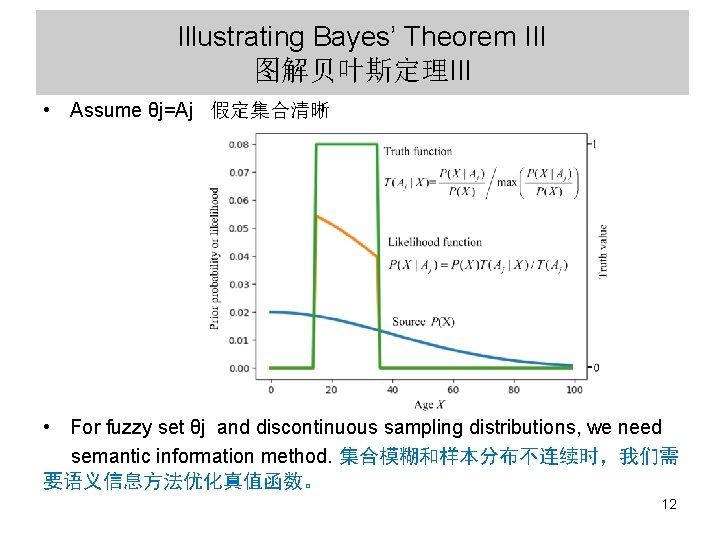
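A minimal sketch of how the semantic likelihood function behaves when the prior changes, assuming the forms P(X|θj) = P(X)T(θj|X)/T(θj) with T(θj) = ∑i P(xi)T(θj|xi), and a truth function matched to a hypothetical channel column by T*(θj|X) = P(yj|X)/max[P(yj|X)]. The numbers and function names are illustrative only.

```python
import numpy as np

def logical_probability(p_x, truth):
    """T(theta_j) = sum_i P(xi) T(theta_j|xi)."""
    return float(np.dot(p_x, truth))

def semantic_likelihood(p_x, truth):
    """P(X|theta_j) = P(X) T(theta_j|X) / T(theta_j)."""
    return p_x * truth / logical_probability(p_x, truth)

# Hypothetical column of Shannon's channel and the truth function matched to it.
p_yj_given_x = np.array([0.1, 0.5, 0.9])
truth = p_yj_given_x / p_yj_given_x.max()

new_prior = np.array([0.2, 0.3, 0.5])     # P'(X), a changed prior

semantic = semantic_likelihood(new_prior, truth)
classical = new_prior * p_yj_given_x / np.dot(new_prior, p_yj_given_x)
print(semantic, classical)
```

The constant max[P(yj|X)] cancels between T(θj|X) and T(θj), which is why the matched truth function reproduces the classical Bayes' prediction under any prior.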

Illustrating Bayes' Theorem III

- Assume θj = Aj, that is, the set is crisp.
- When the set θj is fuzzy and the sampling distributions are discontinuous, we need the semantic information method to optimize the truth function.

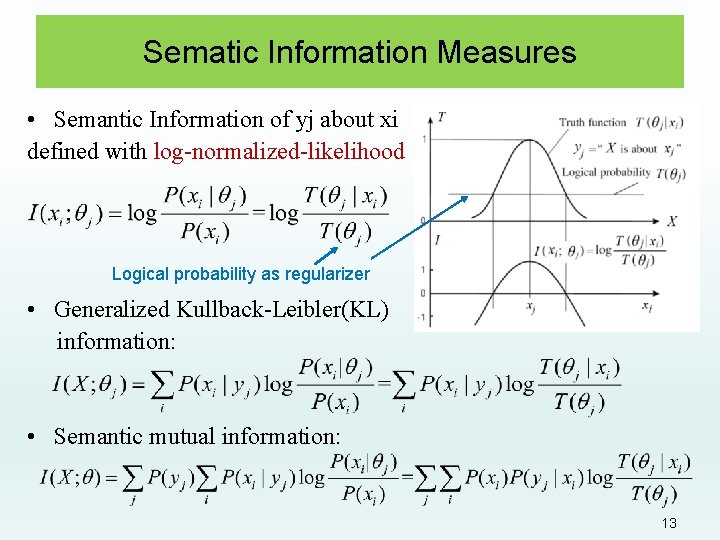

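Semantic Information Measures

- Semantic information of yj about xi, defined with the log-normalized likelihood: I(xi; θj) = log[T(θj|xi)/T(θj)] = log[P(xi|θj)/P(xi)]. The logical probability T(θj) acts as a regularizer.
- Generalized Kullback-Leibler (KL) information: I(X; θj) = ∑i P(xi|yj) log[T(θj|xi)/T(θj)].
- Semantic mutual information: I(X; θ) = ∑j P(yj) ∑i P(xi|yj) log[T(θj|xi)/T(θj)].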

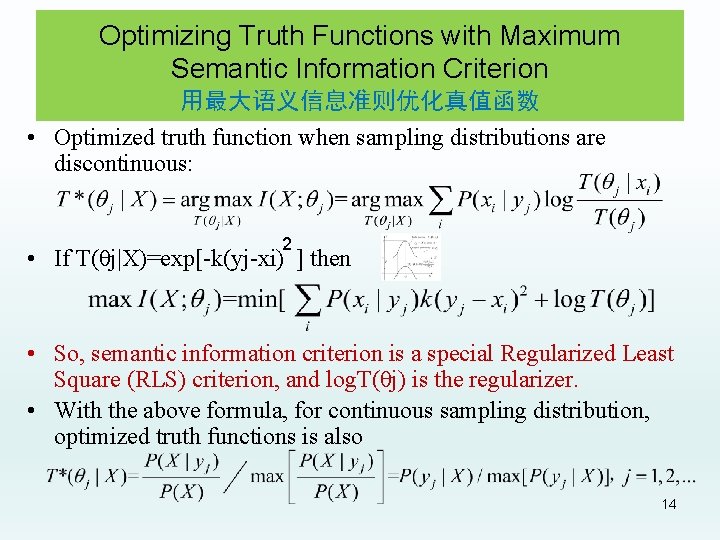
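The three measures can be computed directly from a joint distribution and a set of truth functions. The sketch below assumes the averaged forms I(X; θj) = ∑i P(xi|yj) log[T(θj|xi)/T(θj)] and I(X; θ) = ∑j P(yj) I(X; θj); the joint distribution, the mismatched truth functions, and the function names are hypothetical.

```python
import numpy as np

def semantic_mutual_information(joint, truth):
    """joint[i, j] = P(xi, yj); truth[i, j] = T(theta_j|xi). Results in bits."""
    p_x = joint.sum(axis=1)
    p_y = joint.sum(axis=0)
    logical = p_x @ truth                      # T(theta_j) for every label
    info = np.log2(truth / logical)            # I(xi; theta_j)
    p_x_given_y = joint / p_y                  # P(xi|yj), column by column
    generalized_kl = (p_x_given_y * info).sum(axis=0)   # I(X; theta_j)
    return float(p_y @ generalized_kl), generalized_kl

def shannon_mutual_information(joint):
    p_x = joint.sum(axis=1, keepdims=True)
    p_y = joint.sum(axis=0, keepdims=True)
    return float((joint * np.log2(joint / (p_x * p_y))).sum())

# Hypothetical joint distribution over three instances and two labels.
joint = np.array([[0.30, 0.05],
                  [0.15, 0.15],
                  [0.05, 0.30]])
channel = joint / joint.sum(axis=1, keepdims=True)        # P(yj|xi)

matched = channel / channel.max(axis=0)                    # truth functions matched to the channel
mismatched = np.array([[1.0, 0.5], [0.5, 0.5], [0.5, 1.0]])

g_matched, _ = semantic_mutual_information(joint, matched)
g_mismatched, _ = semantic_mutual_information(joint, mismatched)
print(g_matched, g_mismatched, shannon_mutual_information(joint))
```

With matched truth functions the semantic mutual information reaches the Shannon mutual information; with any other truth functions it falls below it.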

Optimizing Truth Functions with the Maximum Semantic Information Criterion

- When sampling distributions are discontinuous, the optimized truth function T*(θj|X) is the T(θj|X) that maximizes the generalized KL information I(X; θj).
- If T(θj|X) = exp[-k(yj-xi)^2], then I(xi; θj) = log[1/T(θj)] - k(yj-xi)^2.
- So the semantic information criterion is a special Regularized Least Squares (RLS) criterion, and log T(θj) is the regularizer.
- For continuous sampling distributions, the same criterion gives the optimized truth function T*(θj|X) = [P(X|yj)/P(X)] / max[P(X|yj)/P(X)].

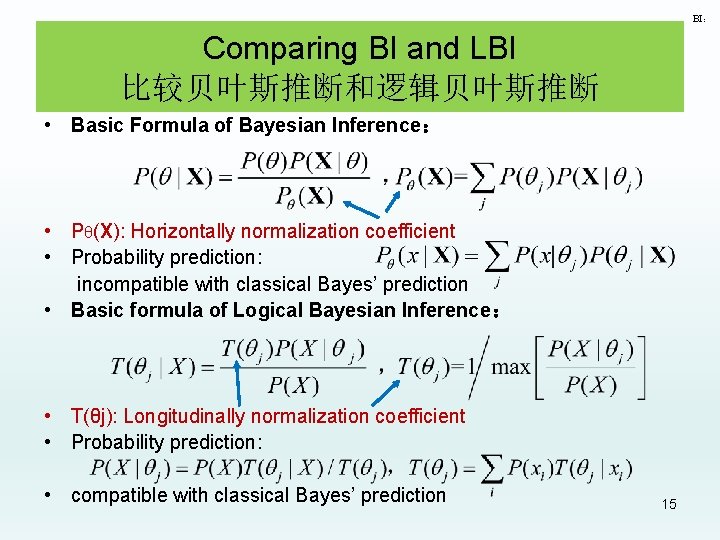
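The link to regularized least squares follows from log[T(θj|xi)/T(θj)] with a Gaussian-shaped truth function. The sketch below checks the identity numerically in natural-log units; the grid, the prior, and the values of `center` (standing in for yj) and `k` are hypothetical.

```python
import numpy as np

def gaussian_truth(x, center, k):
    """Truth function T(theta_j|x) = exp[-k (center - x)^2]."""
    return np.exp(-k * (center - x) ** 2)

x = np.linspace(-5.0, 5.0, 201)

# Hypothetical prior P(X) on the grid.
p_x = np.exp(-x ** 2 / 8.0)
p_x /= p_x.sum()

center, k = 1.0, 0.5                     # center plays the role of yj
truth = gaussian_truth(x, center, k)
logical = float(p_x @ truth)             # logical probability T(theta_j)

info = np.log(truth / logical)                        # semantic information I(xi; theta_j)
rls_form = np.log(1.0 / logical) - k * (center - x) ** 2
print(np.abs(info - rls_form).max())
```

The squared-error term penalizes predictions far from x, while log[1/T(θj)] rewards truth functions with a small logical probability, which is the regularizing effect.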

Comparing Bayesian Inference (BI) and Logical Bayesian Inference (LBI)

- Basic formula of Bayesian Inference: P(θ|X) = P(θ)P(X|θ)/Pθ(X).
  - Pθ(X) is the horizontal normalization coefficient.
  - Probability prediction: incompatible with classical Bayes' prediction.
- Basic formula of Logical Bayesian Inference: T(θj|X) = T(θj)P(X|θj)/P(X).
  - T(θj) is the longitudinal normalization coefficient.
  - Probability prediction: P(X|θj) = P(X)T(θj|X)/T(θj), which is compatible with classical Bayes' prediction.

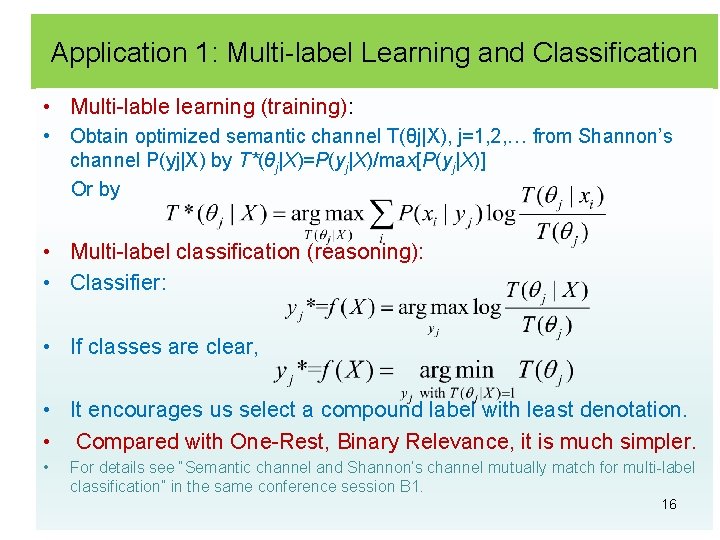

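Application 1: Multi-label Learning and Classification

- Multi-label learning (training):
  - Obtain the optimized semantic channel T(θj|X), j = 1, 2, …, from Shannon's channel P(yj|X) by T*(θj|X) = P(yj|X)/max[P(yj|X)], or from the sampling distributions by T*(θj|X) = [P(X|yj)/P(X)] / max[P(X|yj)/P(X)].
- Multi-label classification (reasoning):
  - Classifier: select the label with the maximum semantic information, yj* = argmax over j of log[T(θj|X)/T(θj)].
  - If classes are clear (crisp), this reduces to choosing, among the labels that are true for X, the one with the smallest logical probability T(θj).
  - It therefore encourages us to select a compound label with the least denotation.
- Compared with One-vs-Rest and Binary Relevance, this approach is much simpler.
- For details, see "Semantic channel and Shannon's channel mutually match for multi-label classification" in conference session B1.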

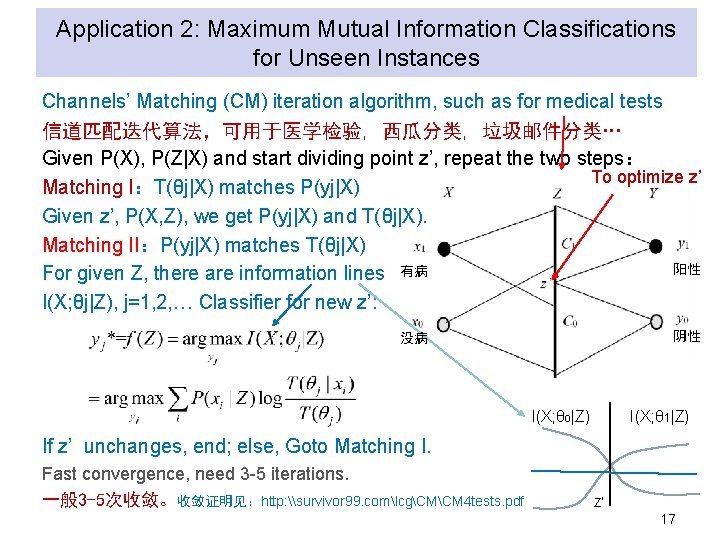

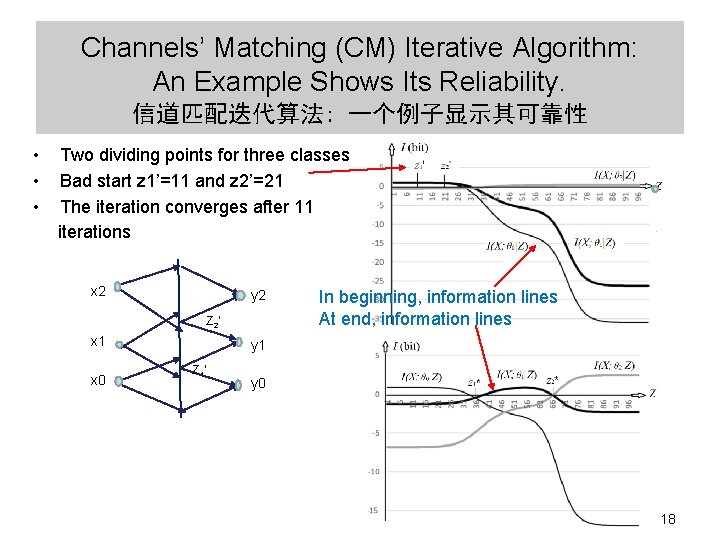
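The training and reasoning steps above can be sketched as follows, assuming the classifier picks the label with the largest log[T(θj|x)/T(θj)]. The four age groups, the three labels, and the function names are hypothetical and only illustrate the crisp-class case.

```python
import numpy as np

def learn_truth_functions(channel):
    """T*(theta_j|X) = P(yj|X) / max[P(yj|X)], one column per label."""
    return channel / channel.max(axis=0, keepdims=True)

def classify(x_index, truth, p_x):
    """Return the index of the label with maximum semantic information."""
    logical = p_x @ truth                               # T(theta_j)
    with np.errstate(divide="ignore"):                  # log(0) = -inf for false labels
        info = np.log(truth[x_index] / logical)
    return int(np.argmax(info))

# Hypothetical crisp example: four age groups and three labels.
# Columns: "person", "adult", "elder"; rows: child, youth, middle-aged, old.
channel = 0.9 * np.array([[1.0, 0.0, 0.0],
                          [1.0, 1.0, 0.0],
                          [1.0, 1.0, 0.0],
                          [1.0, 1.0, 1.0]])
p_x = np.array([0.2, 0.3, 0.3, 0.2])

truth = learn_truth_functions(channel)
labels = [classify(i, truth, p_x) for i in range(4)]
print(labels)
```

For an old person all three labels are true, and the classifier prefers "elder" because it has the smallest logical probability, that is, the least denotation.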

Application 2: Maximum Mutual Information Classification for Unseen Instances

The Channels' Matching (CM) iteration algorithm can be used for medical tests, watermelon classification, spam classification, and similar tasks.

Given P(X), P(Z|X), and a starting dividing point z', repeat the following two steps to optimize z':

- Matching I: T(θj|X) matches P(yj|X). Given z' and P(X, Z), we get P(yj|X) and then T(θj|X).
- Matching II: P(yj|X) matches T(θj|X). For a given Z, there are information lines I(X; θj|Z), j = 1, 2, … The classifier for the new z' selects, for each Z, the label with the largest I(X; θj|Z).
- If z' no longer changes, end; otherwise go to Matching I.

Figure labels: the information lines I(X; θ0|Z) and I(X; θ1|Z), the dividing point z', and the labels positive (diseased) and negative (not diseased).

Convergence is fast and usually needs 3 to 5 iterations. For the convergence proof, see http: \survivor 99. comlcgCMCM 4 tests. pdf
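The two matching steps can be sketched for a binary medical test. This is a simplified reading of the algorithm, not the author's implementation: it assumes Z is discretized on a grid, represents the classifier as one label per grid value rather than as a single number z', and uses hypothetical Gaussian test-value distributions. All function names are illustrative.

```python
import numpy as np

def channel_from_labels(labels, p_z_given_x, n_labels):
    """Shannon's channel P(yj|x): total P(z|x) over the z values assigned to yj."""
    return np.stack([p_z_given_x[:, labels == j].sum(axis=1)
                     for j in range(n_labels)], axis=1)

def mutual_information(p_x, channel):
    joint = p_x[:, None] * channel
    return float((joint * np.log(channel / joint.sum(axis=0))).sum())

def cm_iteration(p_x, p_z_given_x, labels, n_labels=2, max_iter=50):
    p_z = p_x @ p_z_given_x
    p_x_given_z = p_x[:, None] * p_z_given_x / p_z          # P(x|z)
    for step in range(1, max_iter + 1):
        # Matching I: the semantic channel matches Shannon's channel.
        channel = np.clip(channel_from_labels(labels, p_z_given_x, n_labels), 1e-12, None)
        truth = channel / channel.max(axis=0)                # T(theta_j|x)
        logical = p_x @ truth                                # T(theta_j)
        # Matching II: information lines I(X; theta_j|z) give the new classifier.
        info = p_x_given_z.T @ np.log(truth / logical)       # shape (n_z, n_labels)
        new_labels = info.argmax(axis=1)
        if np.array_equal(new_labels, labels):
            return labels, step, True
        labels = new_labels
    return labels, max_iter, False

# Hypothetical medical test: x0 = not diseased, x1 = diseased, Z = test value.
z = np.arange(101)

def discretized_gaussian(mean, sd):
    density = np.exp(-0.5 * ((z - mean) / sd) ** 2)
    return density / density.sum()

p_x = np.array([0.8, 0.2])
p_z_given_x = np.stack([discretized_gaussian(40, 10), discretized_gaussian(65, 10)])

start = (z >= 30).astype(int)                 # deliberately poor starting point z' = 30
labels, steps, converged = cm_iteration(p_x, p_z_given_x, start)
mi_start = mutual_information(p_x, channel_from_labels(start, p_z_given_x, 2))
mi_end = mutual_information(p_x, channel_from_labels(labels, p_z_given_x, 2))
print(steps, converged, int(labels.argmax()), mi_start, mi_end)
```

In this setup `labels.argmax()` is the first grid value classified as positive, which plays the role of the dividing point z'.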

Channels' Matching (CM) Iterative Algorithm: An Example Shows Its Reliability

- Two dividing points for three classes.
- Bad starting points: z1' = 11 and z2' = 21.
- The iteration converges after 11 iterations.

Figure panels: information lines at the beginning and at the end of the iteration, with classes y0, y1, y2, instances x0, x1, x2, and dividing points z1' and z2'.

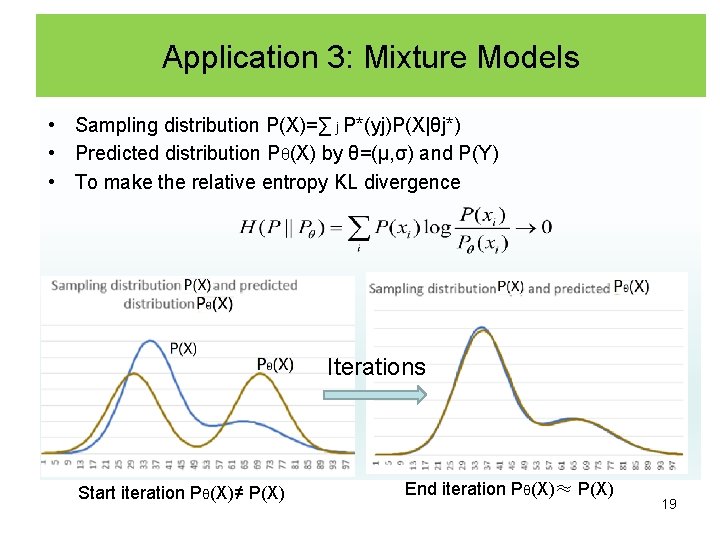

Application 3: Mixture Models

- Sampling distribution: P(X) = ∑j P*(yj)P(X|θj*).
- Predicted distribution: Pθ(X), determined by θ = (μ, σ) and P(Y).
- Goal: iterate to minimize the relative entropy (KL divergence) between P(X) and Pθ(X).
- At the start of the iteration, Pθ(X) ≠ P(X); at the end, Pθ(X) ≈ P(X).

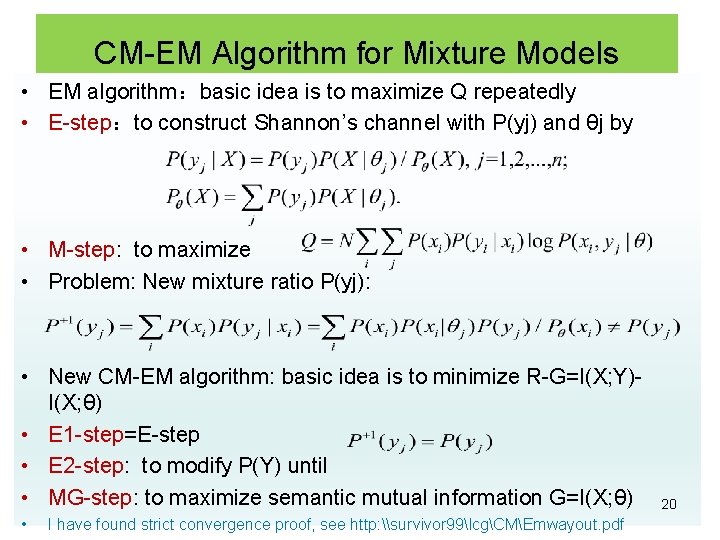

CM-EM Algorithm for Mixture Models

- EM algorithm: the basic idea is to maximize Q repeatedly.
  - E-step: construct Shannon's channel from P(yj) and θj by P(yj|X) = P(yj)P(X|θj)/Pθ(X).
  - M-step: maximize Q.
  - Problem: the new mixture ratio ∑i P(xi)P(yj|xi) generally differs from the P(yj) used in the E-step.
- New CM-EM algorithm: the basic idea is to minimize R - G = I(X; Y) - I(X; θ).
  - E1-step: the same as the E-step.
  - E2-step: modify P(Y) repeatedly until it stops changing.
  - MG-step: maximize the semantic mutual information G = I(X; θ).
- I have found a strict convergence proof; see http: \survivor 99lcgCMEmwayout. pdf

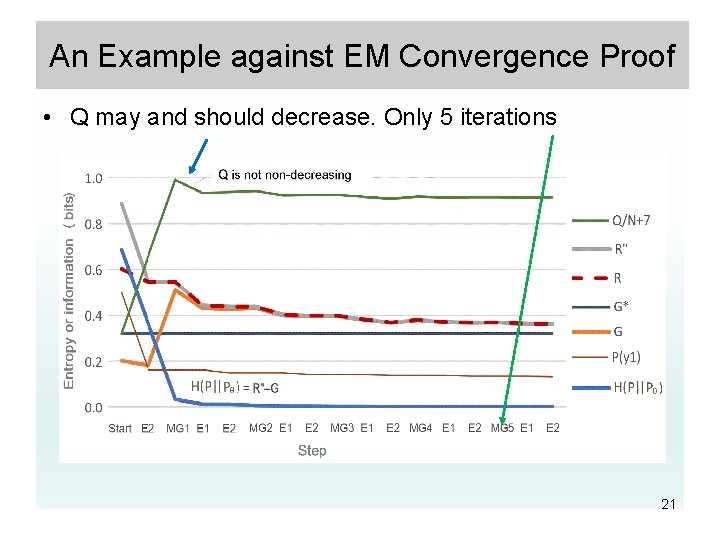
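A rough sketch of the E1, E2, and MG steps for a two-component Gaussian mixture on a discretized X. Several choices here are assumptions made for illustration, not details confirmed by the talk: the E2-step is implemented as repeating the E1-step with the updated P(Y) until P(Y) stops changing, and the MG-step is implemented as weighted moment matching, which maximizes G over (μ, σ) only approximately on a grid. All names and numbers are hypothetical.

```python
import numpy as np

x = np.arange(101, dtype=float)

def component(mean, sd):
    density = np.exp(-0.5 * ((x - mean) / sd) ** 2)
    return density / density.sum()                      # P(X|theta_j) on the grid

def mixture(p_y, means, sds):
    comps = np.stack([component(m, s) for m, s in zip(means, sds)], axis=1)
    return comps @ p_y, comps                           # P_theta(X) and components

def kl_divergence(p, q):
    return float((p * np.log(p / q)).sum())

def cm_em(p_x, p_y, means, sds, n_outer=50, tol=1e-6, max_inner=200):
    p_y, means, sds = (np.array(a, dtype=float) for a in (p_y, means, sds))
    for _ in range(n_outer):
        _, comps = mixture(p_y, means, sds)
        for _ in range(max_inner):
            joint = comps * p_y
            resp = joint / joint.sum(axis=1, keepdims=True)   # E1-step: P(yj|xi)
            new_p_y = p_x @ resp                              # E2-step: updated P(Y)
            done = np.abs(new_p_y - p_y).max() < tol
            p_y = new_p_y
            if done:
                break
        # MG-step (assumed form): weighted moment matching for each component.
        weights = p_x[:, None] * resp / (p_x @ resp)
        means = x @ weights
        sds = np.sqrt((((x[:, None] - means) ** 2) * weights).sum(axis=0))
    return p_y, means, sds

# Hypothetical true mixture and a poor starting guess.
p_x, _ = mixture(np.array([0.7, 0.3]), [35.0, 65.0], [8.0, 8.0])
start = (np.array([0.5, 0.5]), [30.0, 70.0], [15.0, 15.0])

kl_start = kl_divergence(p_x, mixture(*start)[0])
p_y_fit, means_fit, sds_fit = cm_em(p_x, *start)
kl_end = kl_divergence(p_x, mixture(p_y_fit, means_fit, sds_fit)[0])
print(kl_start, kl_end, p_y_fit, means_fit, sds_fit)
```

The quantity tracked here is the KL divergence between P(X) and Pθ(X), which the iteration is meant to drive toward zero.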

An Example against the EM Convergence Proof

- Q may, and should, decrease.
- Only 5 iterations are needed.

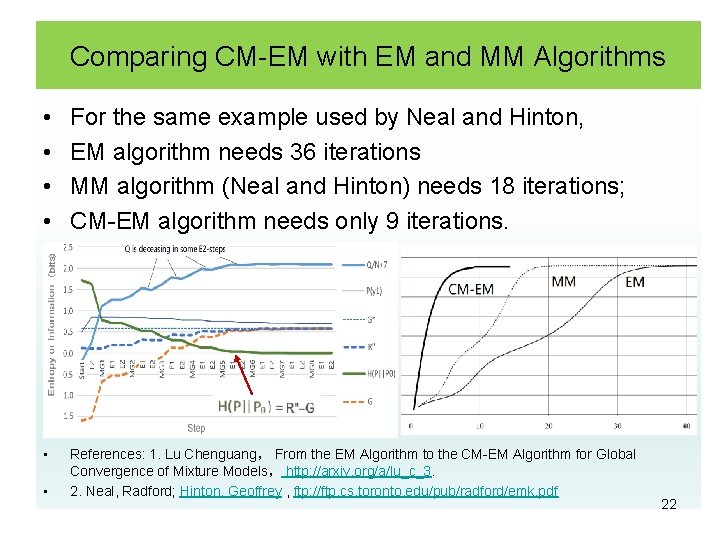

Comparing CM-EM with the EM and MM Algorithms

For the same example used by Neal and Hinton:

- The EM algorithm needs 36 iterations.
- The MM algorithm (Neal and Hinton) needs 18 iterations.
- The CM-EM algorithm needs only 9 iterations.

References:

1. Lu, Chenguang: From the EM Algorithm to the CM-EM Algorithm for Global Convergence of Mixture Models, http://arxiv.org/a/lu_c_3
2. Neal, Radford; Hinton, Geoffrey: ftp://ftp.cs.toronto.edu/pub/radford/emk.pdf

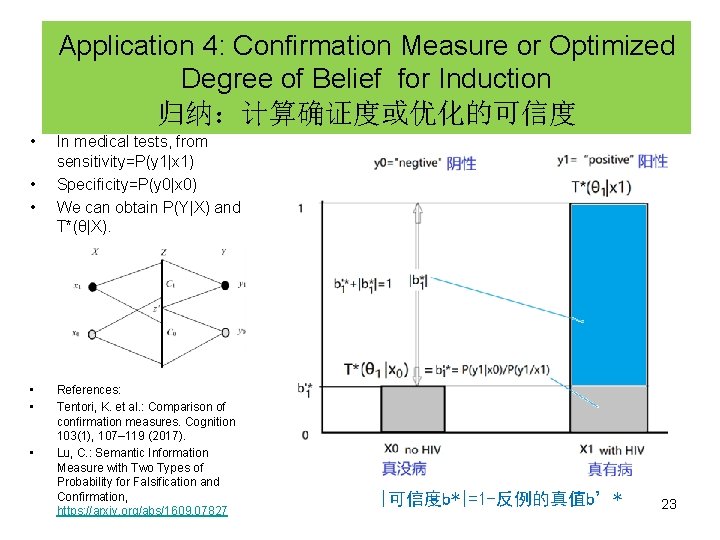

Application 4: Confirmation Measure or Optimized Degree of Belief for Induction

- In medical tests, from sensitivity = P(y1|x1) and specificity = P(y0|x0), we can obtain P(Y|X) and T*(θ|X).
- |Degree of belief b*| = 1 - b'*, where b'* is the truth value of counterexamples.

References:

- Tentori, K. et al.: Comparison of confirmation measures. Cognition 103(1), 107–119 (2007).
- Lu, C.: Semantic Information Measure with Two Types of Probability for Falsification and Confirmation, https://arxiv.org/abs/1609.07827

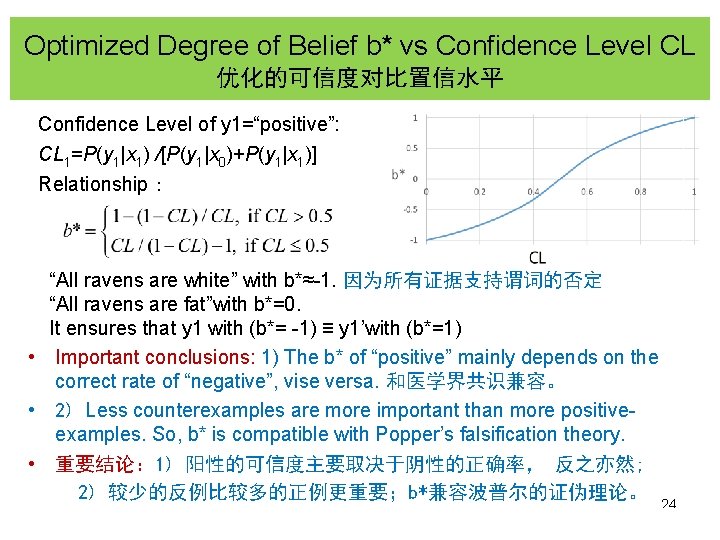

Optimized Degree of Belief b* vs Confidence Level CL

- Confidence level of y1 = "positive": CL1 = P(y1|x1)/[P(y1|x0) + P(y1|x1)].
- Relationship for CL1 > 0.5, which follows from the two definitions: b1* = 1 - (1 - CL1)/CL1.
- "All ravens are white" has b* ≈ -1, because all evidence supports the negation of the predicate. "All ravens are fat" has b* = 0.
- The measure ensures that y1 with b* = -1 is equivalent to y1' with b* = 1.

Important conclusions:

- 1) The b* of "positive" mainly depends on the correct rate of "negative", and vice versa. This is compatible with the consensus in the medical community.
- 2) Fewer counterexamples matter more than more positive examples. So b* is compatible with Popper's falsification theory.
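The degree of belief for a medical test can be computed from sensitivity and specificity. The sketch below uses b* = 1 - counterexample-ratio / positive-example-ratio, as stated in the summary of this talk. The branch for negative b* (when counterexamples dominate) is an assumed symmetric extension chosen so that b* stays in [-1, 1]. Function names and test figures are hypothetical.

```python
def degree_of_belief(positive_ratio, counterexample_ratio):
    """b* = 1 - counterexample_ratio / positive_ratio when positive examples dominate.

    The negative branch is an assumed mirror image for the opposite case.
    """
    if positive_ratio == counterexample_ratio:
        return 0.0
    if positive_ratio > counterexample_ratio:
        return 1.0 - counterexample_ratio / positive_ratio
    return positive_ratio / counterexample_ratio - 1.0

def confidence_level(positive_ratio, counterexample_ratio):
    """CL = positive_ratio / (positive_ratio + counterexample_ratio)."""
    return positive_ratio / (positive_ratio + counterexample_ratio)

def medical_test_beliefs(sensitivity, specificity):
    """Degrees of belief for 'positive' (y1) and 'negative' (y0)."""
    b_positive = degree_of_belief(sensitivity, 1.0 - specificity)   # P(y1|x1) vs P(y1|x0)
    b_negative = degree_of_belief(specificity, 1.0 - sensitivity)   # P(y0|x0) vs P(y0|x1)
    return b_positive, b_negative

# Hypothetical tests: low sensitivity but high specificity, and the reverse.
print(medical_test_beliefs(0.5, 0.99))
print(medical_test_beliefs(0.9, 0.5))
```

Comparing the two printed results shows the first conclusion above: the belief in "positive" is high when specificity is high, even if sensitivity is modest, and drops sharply when specificity is poor.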

Summary

- Logical Bayesian Inference uses the truth function or membership function T(θj|X) as the inference tool.
- It is compatible with classical Bayes' prediction.
- It uses the prior P(X) or P'(X) instead of P(θ).
- Label learning (training): T(θj|X) matches P(yj|X) to maximize I(X; θ).
- Label selection (reasoning): P(yj|X) matches T(θj|X) to maximize I(X; θ).
- Maximum mutual information classification: repeat the two matching steps.
- Mixture models: minimize I(X; Y) - I(X; θ) repeatedly.
- The confirmation measure is compatible with Popper's falsification theory: b* = 1 - counterexample-ratio / positive-example-ratio.

Thank you for listening! Exchanges of ideas are welcome. For more papers about semantic information theory and machine learning, see http://survivor99.com/lcg/books/GIT/index.htm or http://arxiv.org/a/lu_c_3

- Slides: 25