
Unsupervised Learning with Permuted Data

Sergey Kirshner, Sridevi Parise, Padhraic Smyth

School of Information and Computer Science, University of California, Irvine

www.datalab.uci.edu

ICML 2003 © Sergey Kirshner, UC Irvine

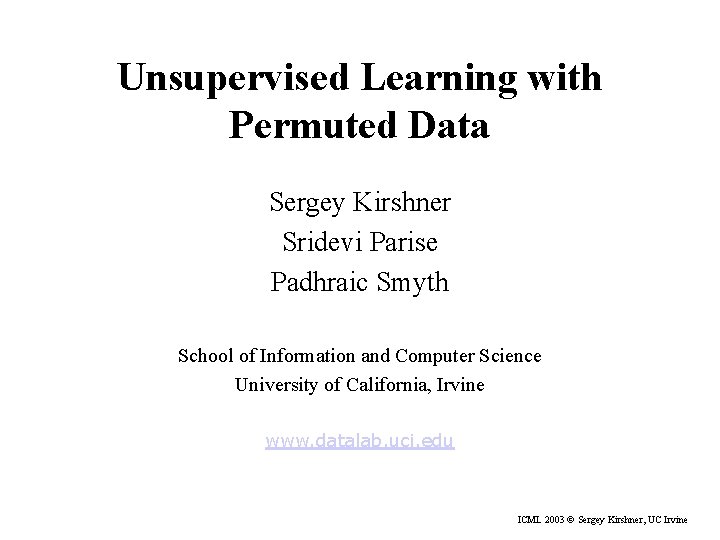

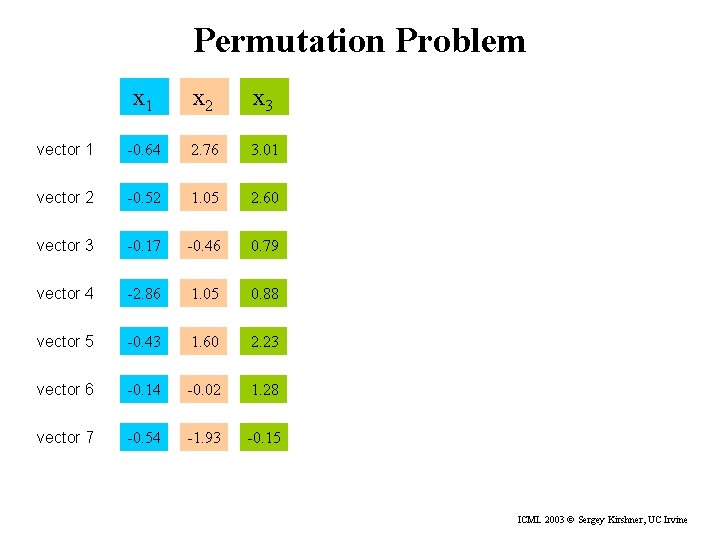

Permutation Problem

| | $x_1$ | $x_2$ | $x_3$ |
|---|---|---|---|
| vector 1 | -0.64 | 2.76 | 3.01 |
| vector 2 | -0.52 | 1.05 | 2.60 |
| vector 3 | -0.17 | -0.46 | 0.79 |
| vector 4 | -2.86 | 1.05 | 0.88 |
| vector 5 | -0.43 | 1.60 | 2.23 |
| vector 6 | -0.14 | -0.02 | 1.28 |
| vector 7 | -0.54 | -1.93 | -0.15 |

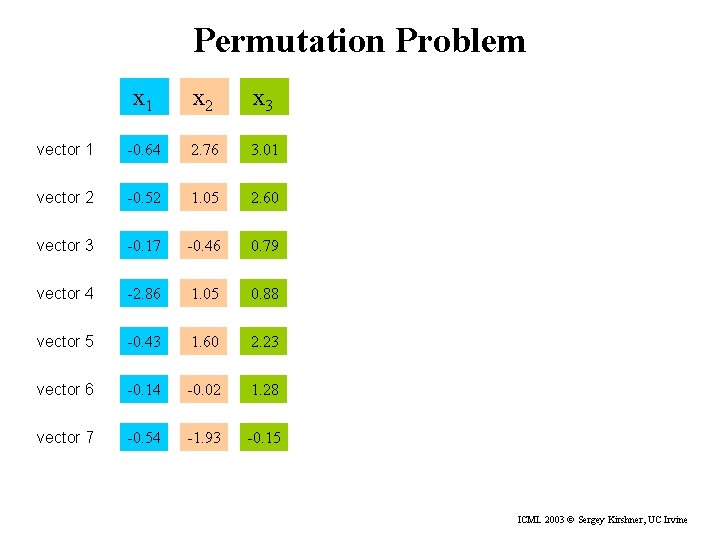

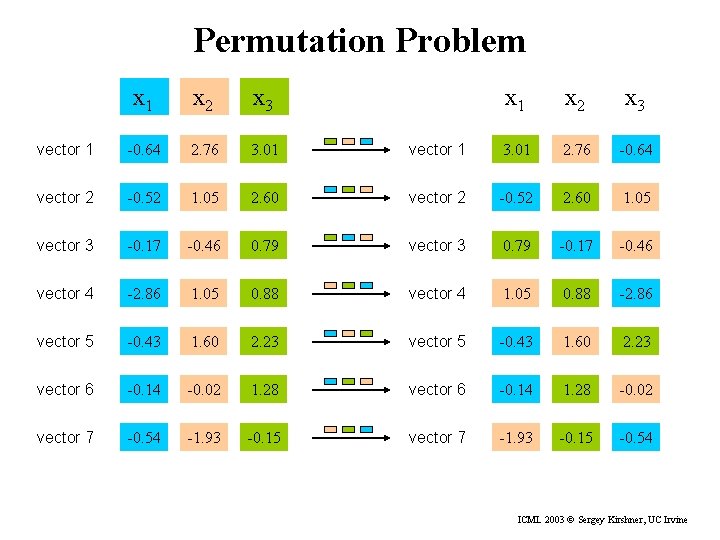

Permutation Problem

Original and permuted vectors:

| | original ($x_1, x_2, x_3$) | permuted |
|---|---|---|
| vector 1 | -0.64, 2.76, 3.01 | 3.01, 2.76, -0.64 |
| vector 2 | -0.52, 1.05, 2.60 | -0.52, 2.60, 1.05 |
| vector 3 | -0.17, -0.46, 0.79 | 0.79, -0.17, -0.46 |
| vector 4 | -2.86, 1.05, 0.88 | 1.05, 0.88, -2.86 |
| vector 5 | -0.43, 1.60, 2.23 | -0.43, 1.60, 2.23 |
| vector 6 | -0.14, -0.02, 1.28 | -0.14, 1.28, -0.02 |
| vector 7 | -0.54, -1.93, -0.15 | -1.93, -0.15, -0.54 |
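When both the original and the permuted vectors are available (as in this illustration, but not in the actual unsupervised problem), the permutation applied to each vector can be read off directly. A small sketch using the values from the example; the convention that position $j$ of the permuted vector receives $x_{s(j)}$, and the function names, are our own hypothetical choices:

```python
# Values copied from the example: each row is one vector (x1, x2, x3).
ORIGINAL = [
    (-0.64, 2.76, 3.01),
    (-0.52, 1.05, 2.60),
    (-0.17, -0.46, 0.79),
    (-2.86, 1.05, 0.88),
    (-0.43, 1.60, 2.23),
    (-0.14, -0.02, 1.28),
    (-0.54, -1.93, -0.15),
]
PERMUTED = [
    (3.01, 2.76, -0.64),
    (-0.52, 2.60, 1.05),
    (0.79, -0.17, -0.46),
    (1.05, 0.88, -2.86),
    (-0.43, 1.60, 2.23),
    (-0.14, 1.28, -0.02),
    (-1.93, -0.15, -0.54),
]


def recover_permutation(x, y):
    """Return s such that y[j] == x[s[j]] for every position j.

    Assumes the entries of x are distinct; otherwise the answer is ambiguous.
    """
    return tuple(x.index(v) for v in y)


def apply_permutation(x, s):
    """Reorder x so that position j receives x[s[j]]."""
    return tuple(x[k] for k in s)


if __name__ == "__main__":
    for i, (x, y) in enumerate(zip(ORIGINAL, PERMUTED), start=1):
        s = recover_permutation(x, y)
        assert apply_permutation(x, s) == y  # round trip reproduces the table
        print(f"vector {i}: s = {s}")
```

Vectors 2 and 6 received the same swap of their last two components, and vector 5 was left unchanged. In the unsupervised setting only `PERMUTED` is observed, which is what makes the problem hard.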

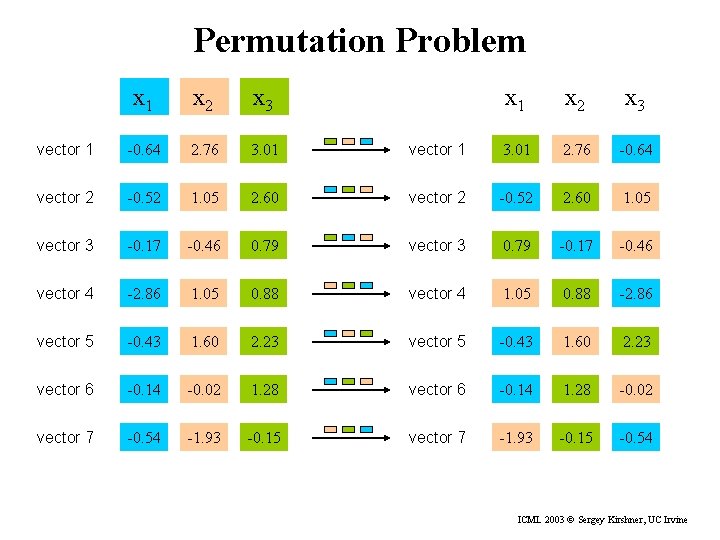

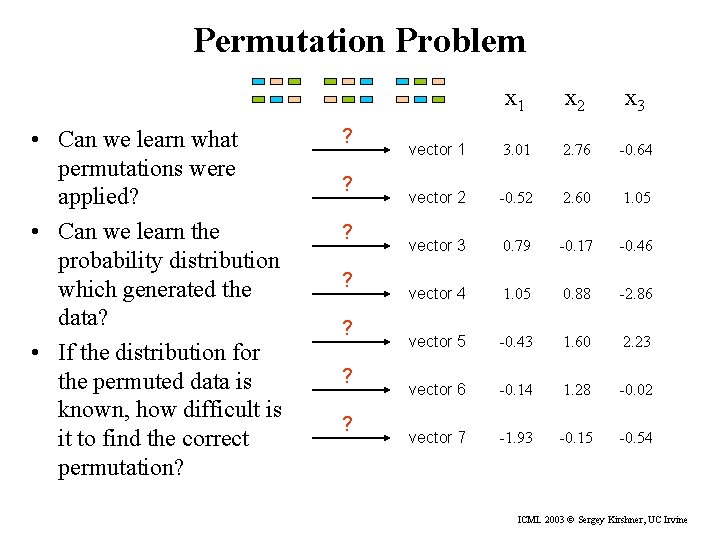

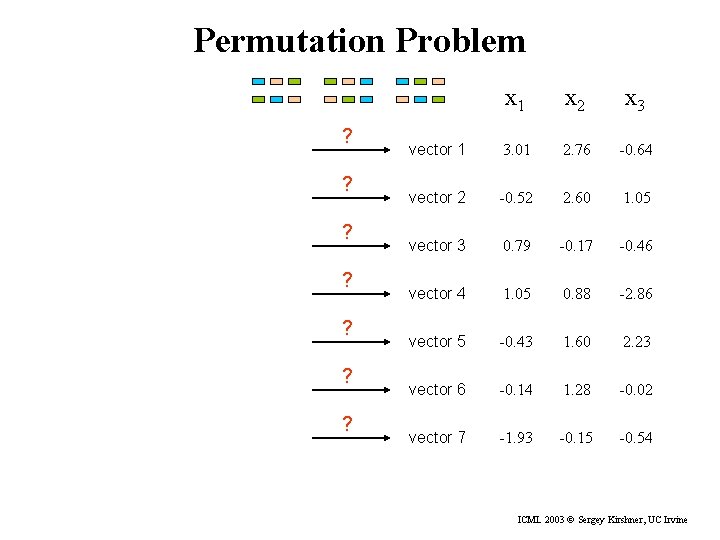

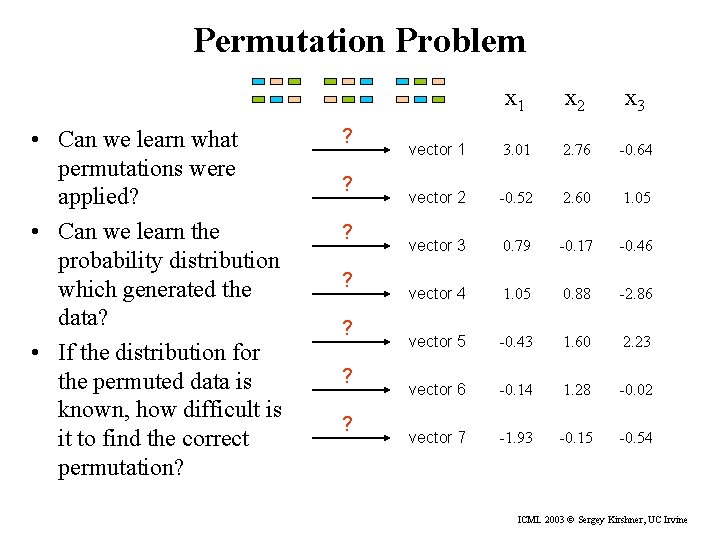

Permutation Problem

Only the permuted vectors are observed; for each vector it is unknown which component is $x_1$, $x_2$ or $x_3$:

| | ? | ? | ? |
|---|---|---|---|
| vector 1 | 3.01 | 2.76 | -0.64 |
| vector 2 | -0.52 | 2.60 | 1.05 |
| vector 3 | 0.79 | -0.17 | -0.46 |
| vector 4 | 1.05 | 0.88 | -2.86 |
| vector 5 | -0.43 | 1.60 | 2.23 |
| vector 6 | -0.14 | 1.28 | -0.02 |
| vector 7 | -1.93 | -0.15 | -0.54 |

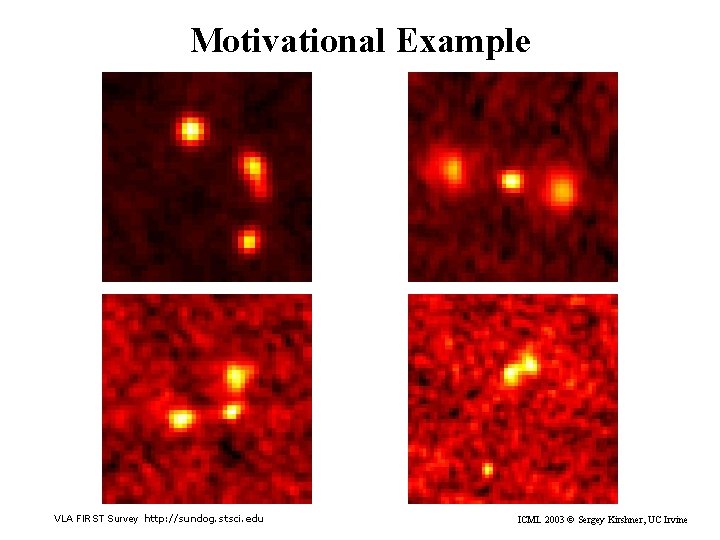

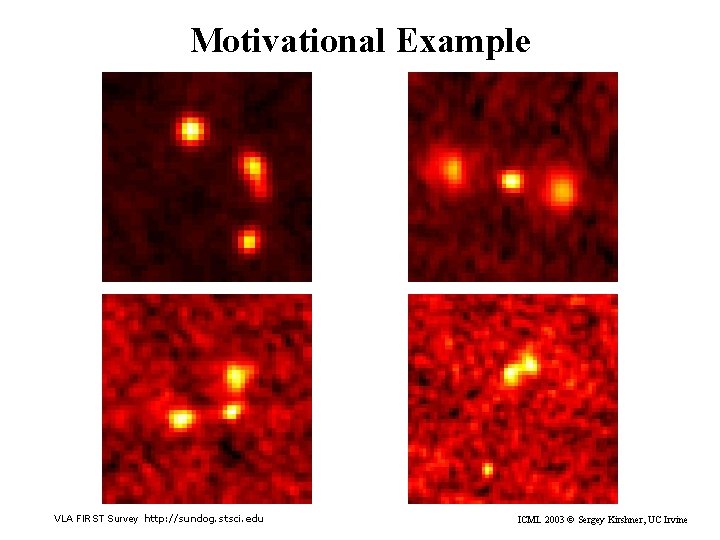

Motivational Example

VLA FIRST Survey (http://sundog.stsci.edu)

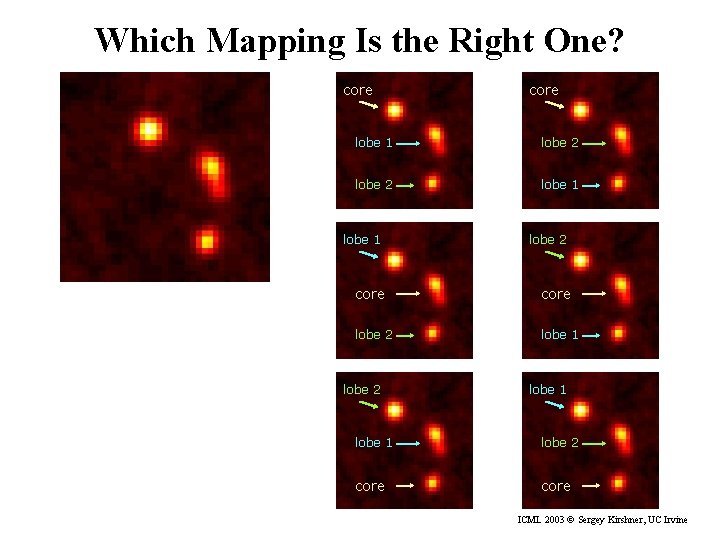

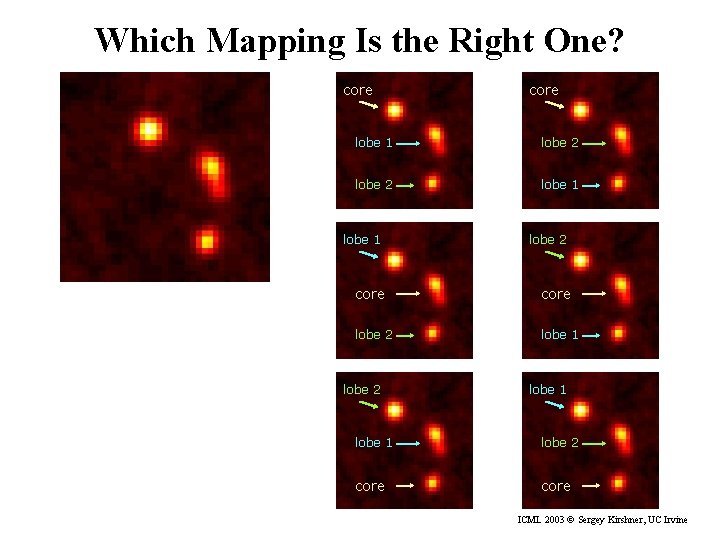

Which Mapping Is the Right One?

[Figure: candidate assignments of the labels core, lobe 1 and lobe 2 to the observed components]

Permutation Problem

- Can we learn what permutations were applied?
- Can we learn the probability distribution which generated the data?
- If the distribution for the permuted data is known, how difficult is it to find the correct permutation?

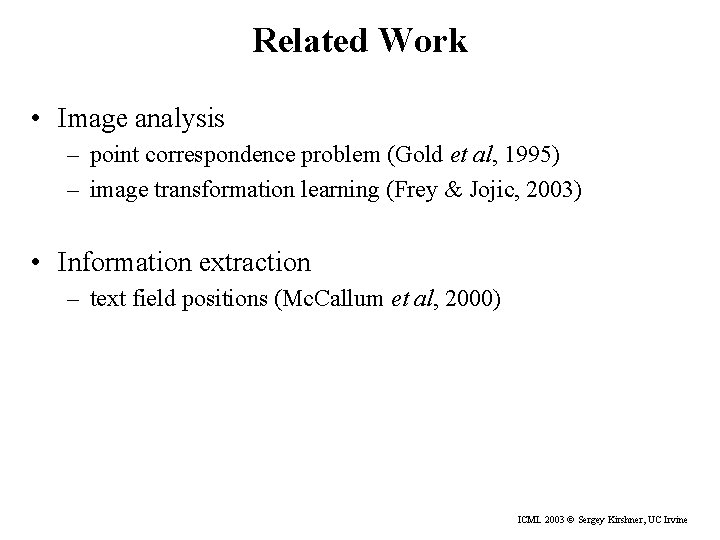

Related Work

- Image analysis
  - point correspondence problem (Gold et al., 1995)
  - image transformation learning (Frey & Jojic, 2003)
- Information extraction
  - text field positions (McCallum et al., 2000)

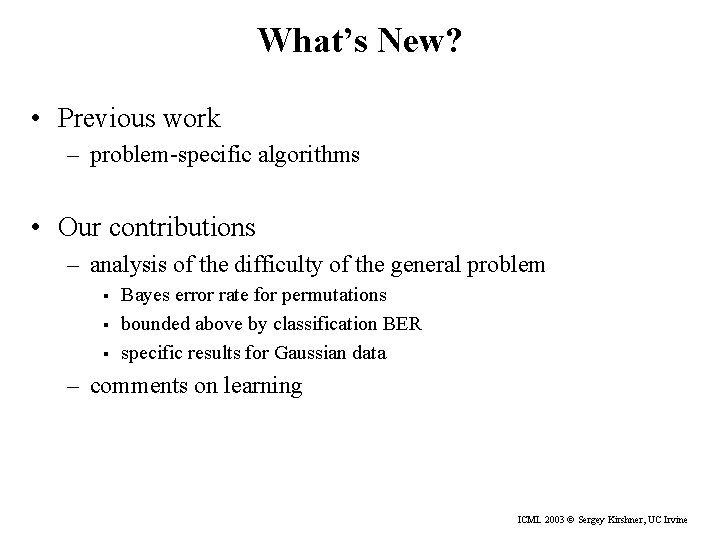

What’s New?

- Previous work
  - problem-specific algorithms
- Our contributions
  - analysis of the difficulty of the general problem
    - Bayes error rate for permutations
    - bounded above by the classification Bayes error rate (BER)
    - specific results for Gaussian data
  - comments on learning

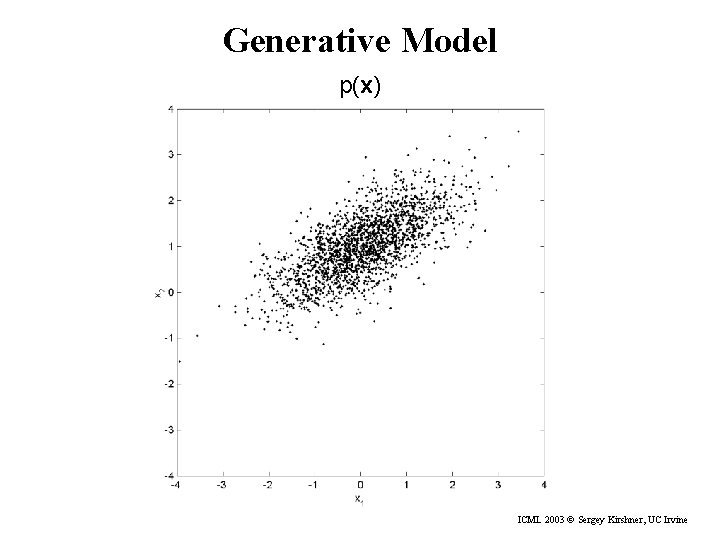

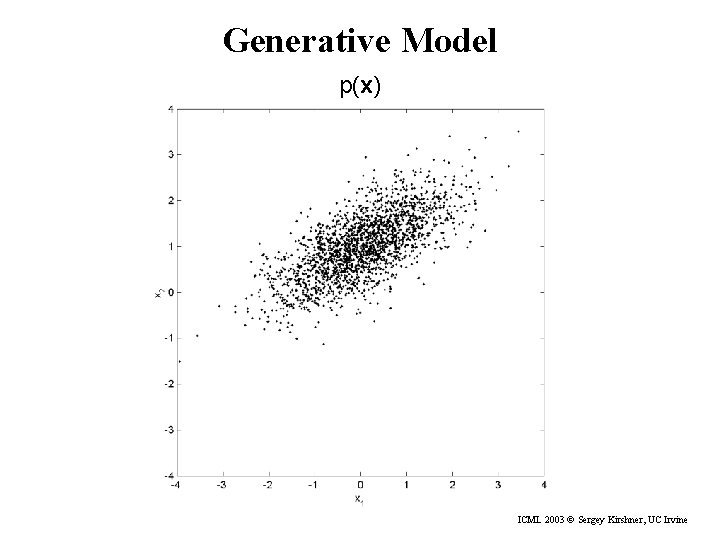

Generative Model p(x)

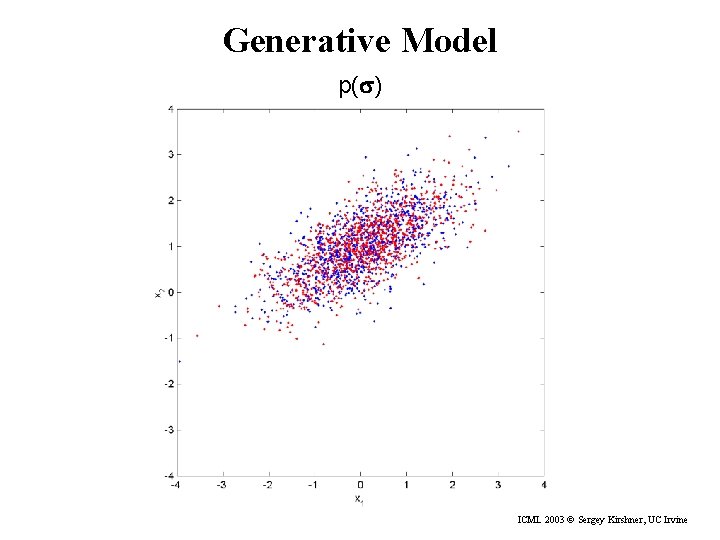

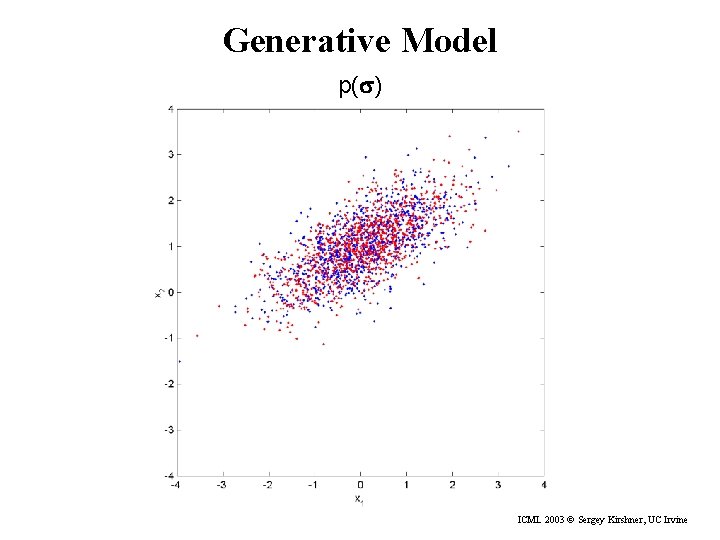

Generative Model p(s)

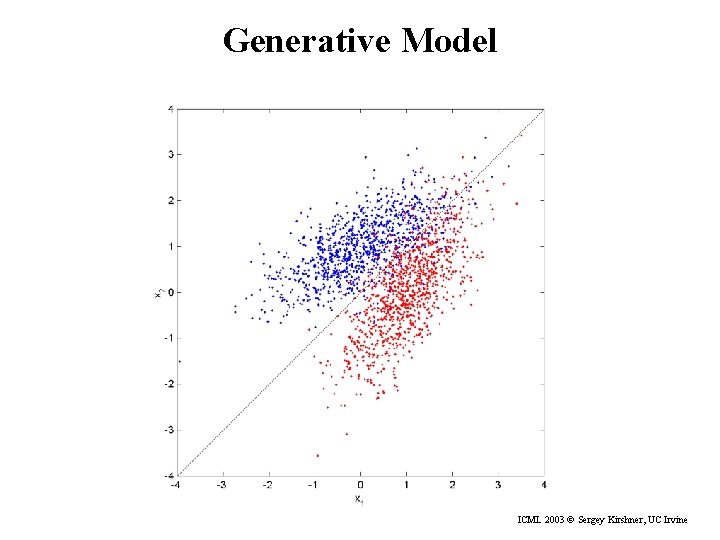

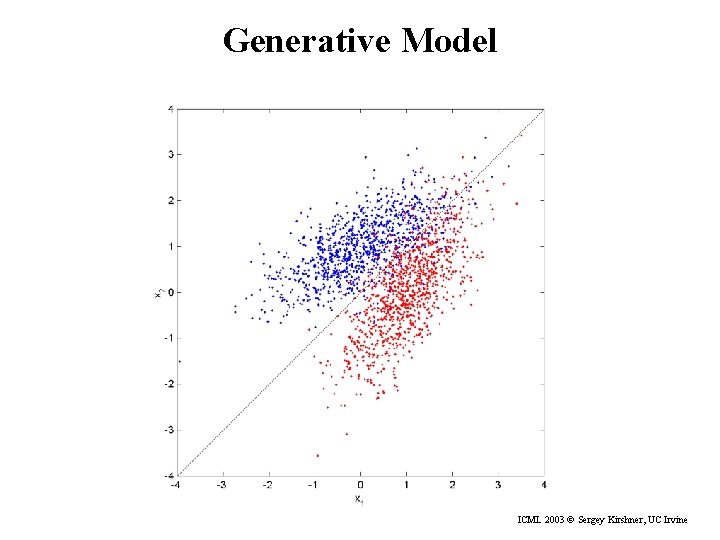

Generative Model

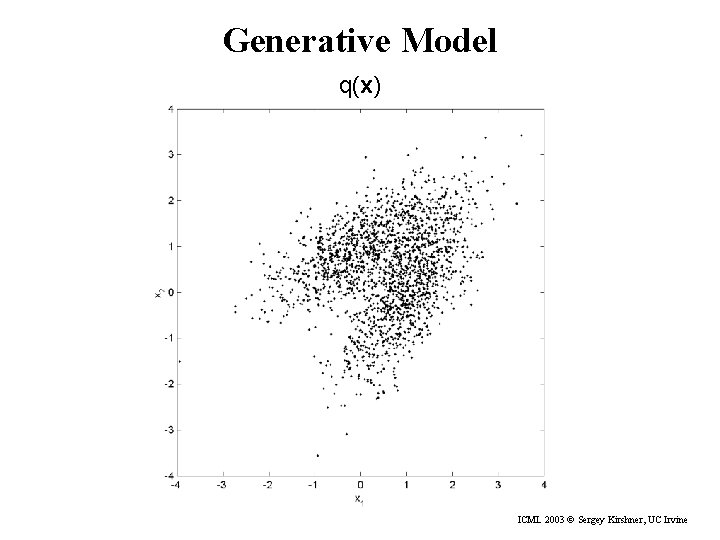

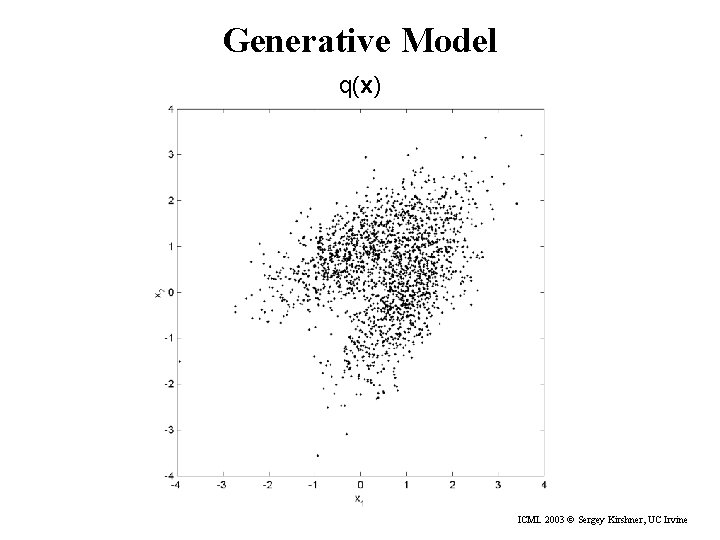

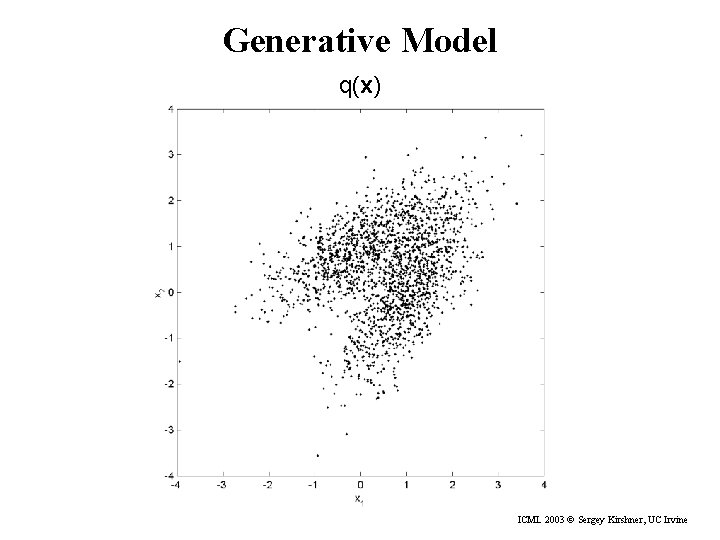

Generative Model q(x)

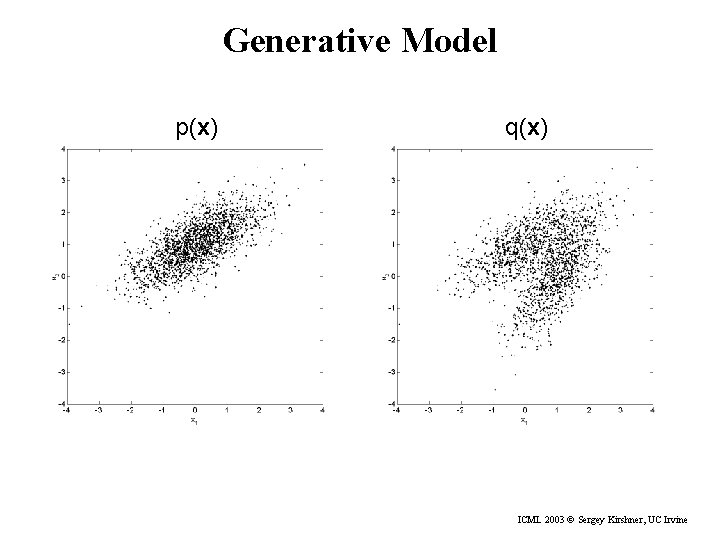

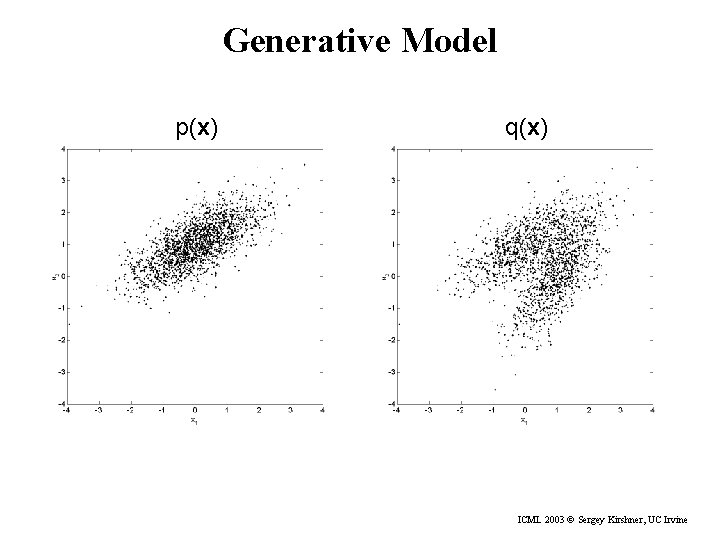

Generative Model p(x) q(x)
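One way to write the density of an observed vector, assuming $s$ denotes the permutation drawn from $p(s)$, $\mathcal{P}$ is the set of allowed permutations, and $s$ acts by reordering coordinates so that $y_j = x_{s(j)}$ (this notation is ours, for illustration; the slides write the observed density as $q(x)$), is as a finite mixture of reordered copies of $p(\mathbf{x})$:

$$
q(\mathbf{y}) = \sum_{s \in \mathcal{P}} p(s)\, p\big(s^{-1}(\mathbf{y})\big), \qquad \big[s^{-1}(\mathbf{y})\big]_{s(j)} = y_j .
$$

No Jacobian factor appears because reordering coordinates is a volume-preserving linear map. For example, with $d = 2$ and the identity and swap each chosen with probability $1/2$, $q(y_1, y_2) = \tfrac12 p(y_1, y_2) + \tfrac12 p(y_2, y_1)$.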

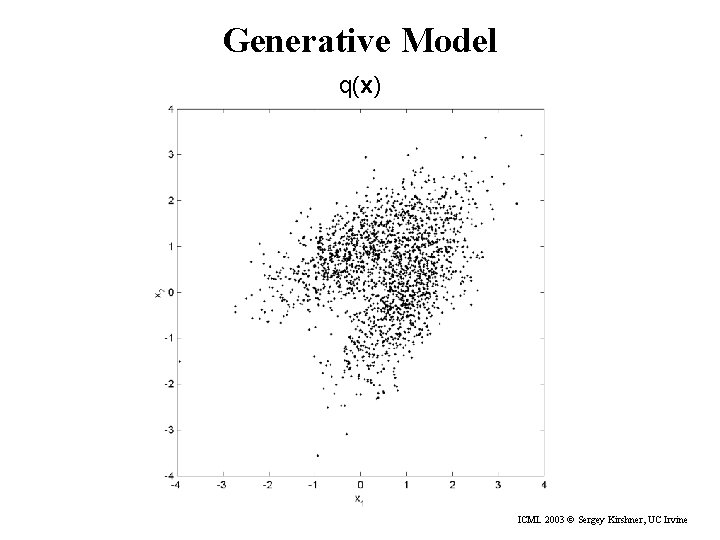

Generative Model q(x)

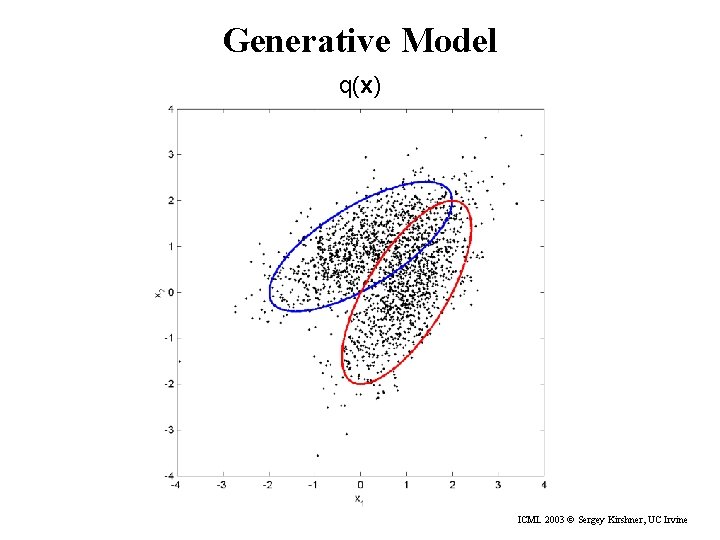

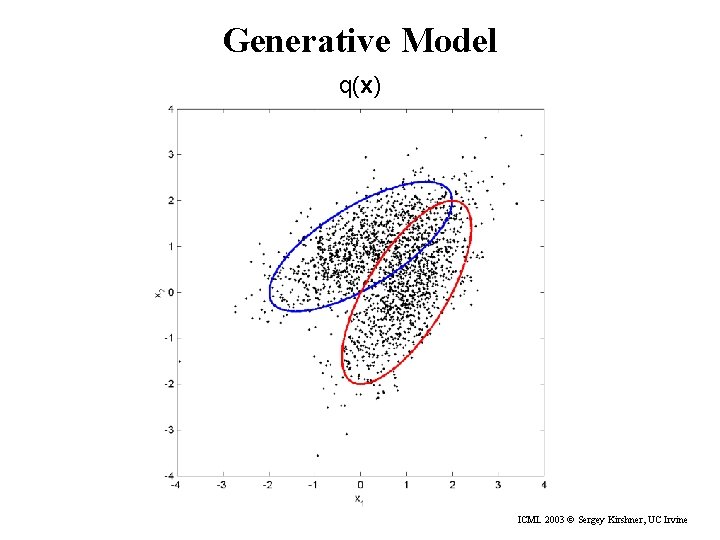

Generative Model q(x)

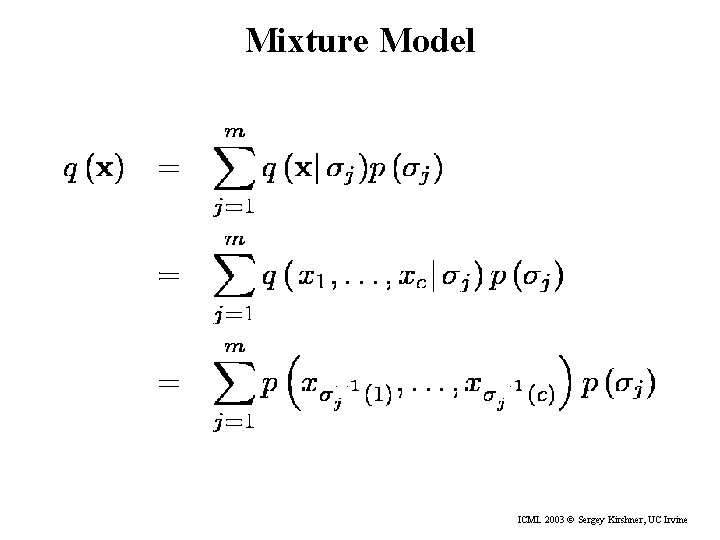

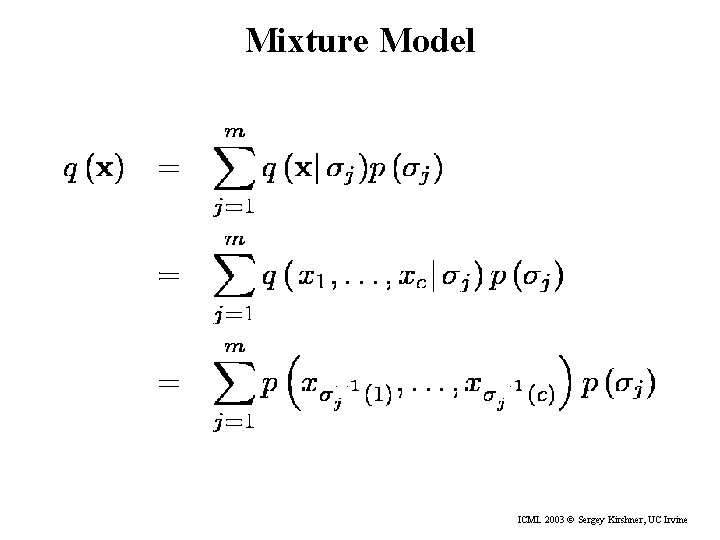

Mixture Model
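The generative process and the resulting mixture can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical $p(\mathbf{x})$ with independent Gaussian coordinates and a caller-supplied list of allowed permutations with probabilities $p(s)$; the convention $y_j = x_{s(j)}$ and all function names are our own. Sampling draws $\mathbf{x}$, then $s$, and keeps only the permuted vector; `q_density` evaluates the density of what is kept as a mixture over permutations.

```python
import itertools
import math
import random


def diag_gauss_pdf(x, means, sds):
    """Hypothetical p(x): independent Gaussian coordinates."""
    out = 1.0
    for xi, m, sd in zip(x, means, sds):
        out *= math.exp(-0.5 * ((xi - m) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))
    return out


def apply_perm(x, s):
    """Observed vector y with y[j] = x[s[j]]."""
    return [x[k] for k in s]


def unpermute(y, s):
    """Undo apply_perm: return x with x[s[j]] = y[j]."""
    x = [0.0] * len(y)
    for j, k in enumerate(s):
        x[k] = y[j]
    return x


def sample_permuted(n, means, sds, perms, perm_probs, rng):
    """Draw x ~ p(x), then s ~ p(s); only the permuted vector is kept."""
    data = []
    for _ in range(n):
        x = [rng.gauss(m, sd) for m, sd in zip(means, sds)]
        s = rng.choices(perms, weights=perm_probs)[0]
        data.append(apply_perm(x, s))
    return data


def q_density(y, means, sds, perms, perm_probs):
    """Mixture density q(y) = sum over s of p(s) * p(unpermute(y, s))."""
    return sum(w * diag_gauss_pdf(unpermute(y, s), means, sds)
               for s, w in zip(perms, perm_probs))


if __name__ == "__main__":
    perms = list(itertools.permutations(range(3)))  # all 3! orderings
    probs = [1.0 / len(perms)] * len(perms)          # uniform p(s)
    means, sds = (0.0, 2.0, 4.0), (1.0, 1.0, 1.0)
    for y in sample_permuted(5, means, sds, perms, probs, random.Random(0)):
        print([round(v, 2) for v in y], q_density(y, means, sds, perms, probs))
```

With all six permutations equally likely, `q_density` returns the same value for any reordering of its input, since a reordering only permutes the terms of the sum. With the identity as the only allowed permutation, it reduces to $p(\mathbf{x})$.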

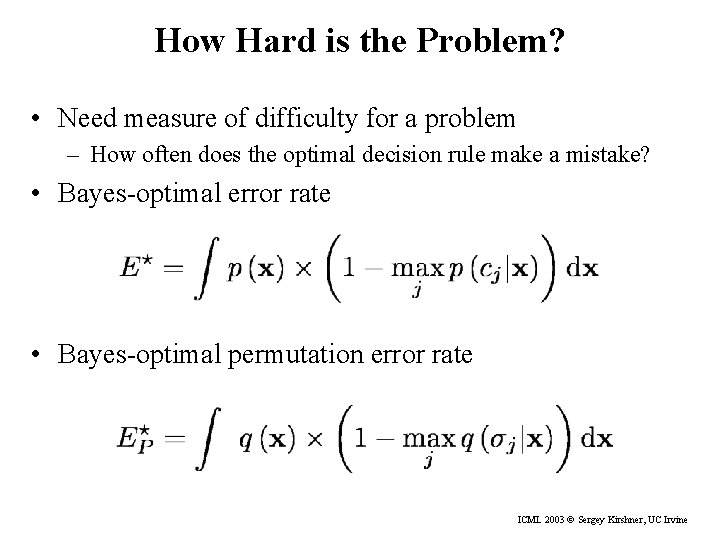

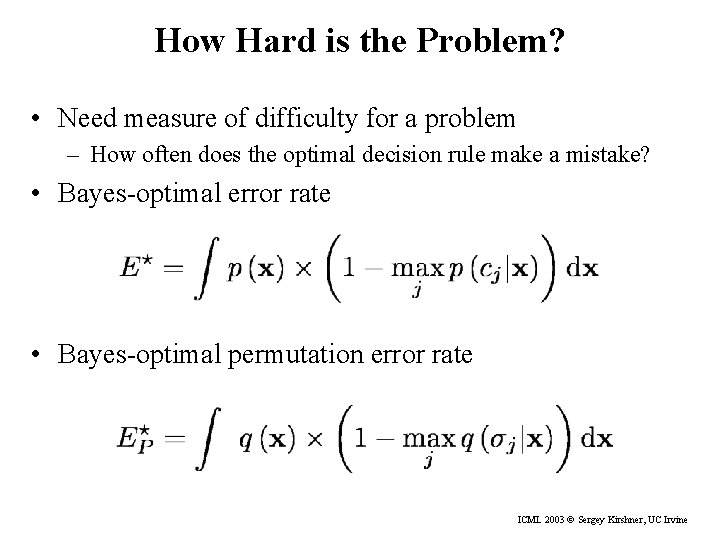

How Hard Is the Problem?

- Need a measure of difficulty for a problem
  - How often does the optimal decision rule make a mistake?
- Bayes-optimal error rate
- Bayes-optimal permutation error rate
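The Bayes-optimal permutation error rate is the probability that the rule choosing the most probable permutation for an observed vector is wrong, $1 - \mathbb{E}_{\mathbf{y}}\big[\max_s P(s \mid \mathbf{y})\big]$. A minimal Monte Carlo sketch, assuming a hypothetical $p(\mathbf{x})$ with independent Gaussian coordinates and all $d!$ permutations equally likely; the function names are illustrative, not from the paper:

```python
import itertools
import math
import random


def log_diag_gauss(x, means, sds):
    """Log density of independent Gaussian coordinates (hypothetical p(x))."""
    return sum(-0.5 * ((xi - m) / sd) ** 2 - math.log(sd * math.sqrt(2.0 * math.pi))
               for xi, m, sd in zip(x, means, sds))


def unpermute(y, s):
    """Return x with x[s[j]] = y[j], i.e. undo y[j] = x[s[j]]."""
    x = [0.0] * len(y)
    for j, k in enumerate(s):
        x[k] = y[j]
    return x


def permutation_bayes_error(means, sds, n=50000, seed=0):
    """Estimate 1 - E[max_s P(s | y)] with uniform p(s) over all permutations."""
    rng = random.Random(seed)
    perms = list(itertools.permutations(range(len(means))))
    total = 0.0
    for _ in range(n):
        x = [rng.gauss(m, sd) for m, sd in zip(means, sds)]
        s_true = rng.choice(perms)
        y = [x[k] for k in s_true]  # only y is seen by the decision rule
        # Uniform prior: the posterior over s is proportional to p(unpermute(y, s)).
        logs = [log_diag_gauss(unpermute(y, s), means, sds) for s in perms]
        top = max(logs)
        weights = [math.exp(v - top) for v in logs]
        total += 1.0 - max(weights) / sum(weights)  # P(optimal rule errs | y)
    return total / n


if __name__ == "__main__":
    print(permutation_bayes_error((0.0, 1.0), (1.0, 1.0)))
```

Averaging $1 - \max_s P(s \mid \mathbf{y})$ rather than counting mistakes gives a lower-variance estimate of the same quantity. For two independent unit-variance coordinates with means 0 and 1, the estimate comes out near $\Phi(-1/\sqrt{2}) \approx 0.24$, since only $y_1 - y_2 \sim \mathcal{N}(\pm 1, 2)$ distinguishes the two orderings.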

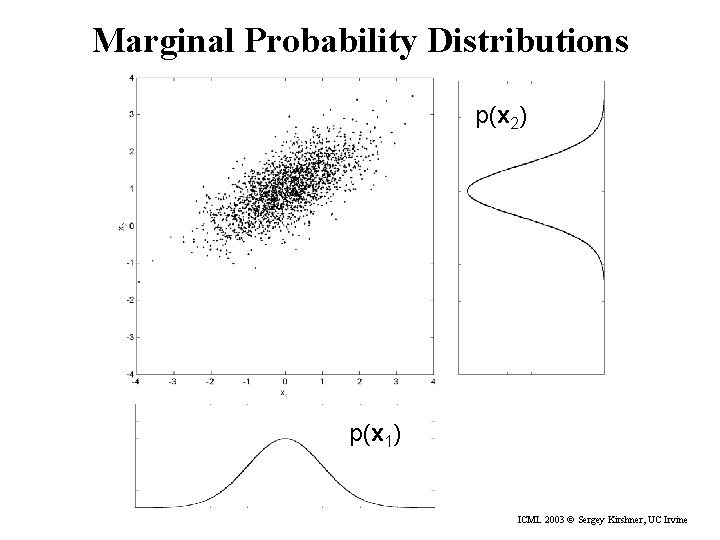

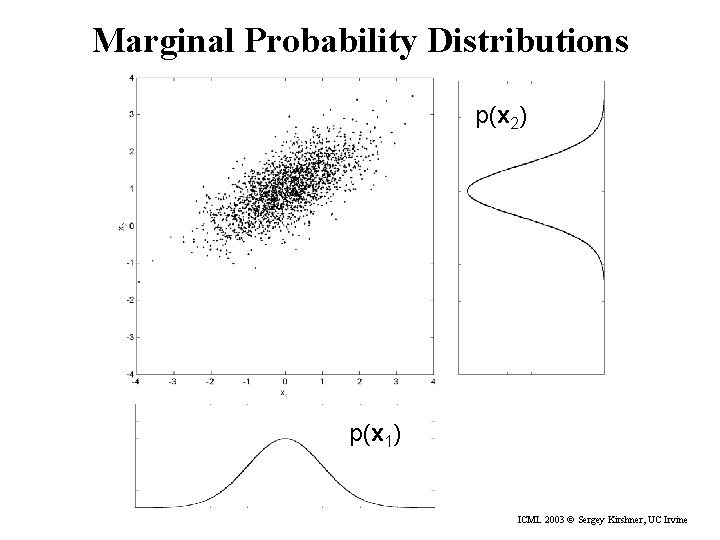

Marginal Probability Distributions

[Figure: marginal densities $p(x_1)$ and $p(x_2)$]

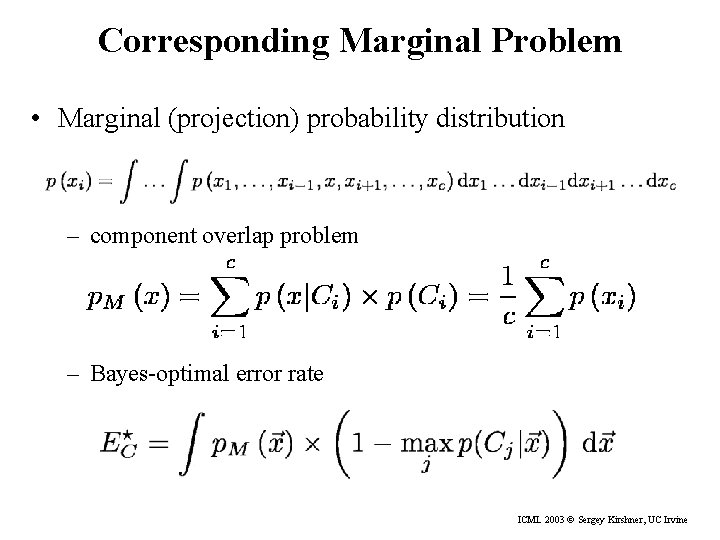

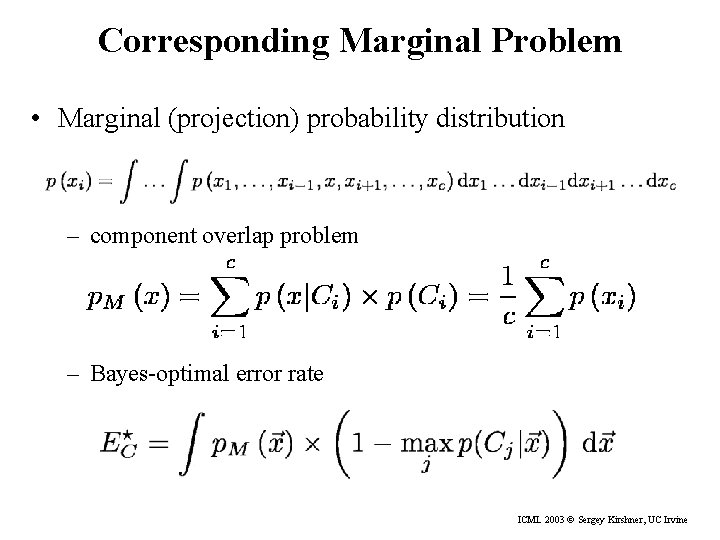

Corresponding Marginal Problem

- Marginal (projection) probability distribution
  - component overlap problem
  - Bayes-optimal error rate
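The marginal (component overlap) problem asks which coordinate a single value came from. Assuming each of the $d$ coordinates is equally likely to be the source, its Bayes-optimal error rate is $1 - \frac{1}{d}\int \max_i p_i(t)\,dt$, where $p_i$ is the marginal of $x_i$. A sketch with hypothetical Gaussian marginals and a midpoint-rule integral (function names are ours):

```python
import math


def normal_pdf(t, mean, sd):
    return math.exp(-0.5 * ((t - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))


def overlap_bayes_error(means, sds, steps=20000):
    """Bayes error of guessing which coordinate a value came from (priors 1/d)."""
    d = len(means)
    lo = min(m - 10.0 * sd for m, sd in zip(means, sds))
    hi = max(m + 10.0 * sd for m, sd in zip(means, sds))
    h = (hi - lo) / steps
    mass = 0.0
    for i in range(steps):
        t = lo + (i + 0.5) * h  # midpoint rule
        mass += max(normal_pdf(t, m, sd) for m, sd in zip(means, sds))
    # The optimal rule picks the largest marginal density; it is correct with
    # probability (1/d) * integral of max_i p_i(t).
    return 1.0 - mass * h / d


if __name__ == "__main__":
    print(overlap_bayes_error((0.0, 1.0), (1.0, 1.0)))
```

For two unit-variance marginals with means 0 and 1 this is $\Phi(-1/2) \approx 0.31$; identical marginals give the worst case $1 - 1/d$.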

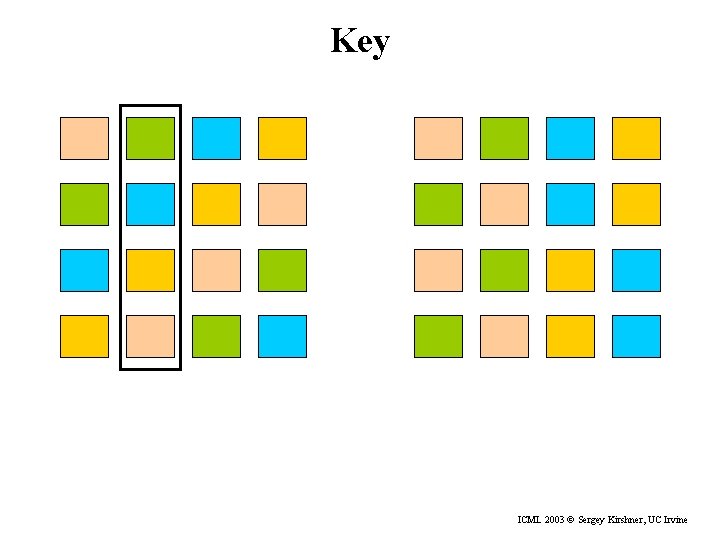

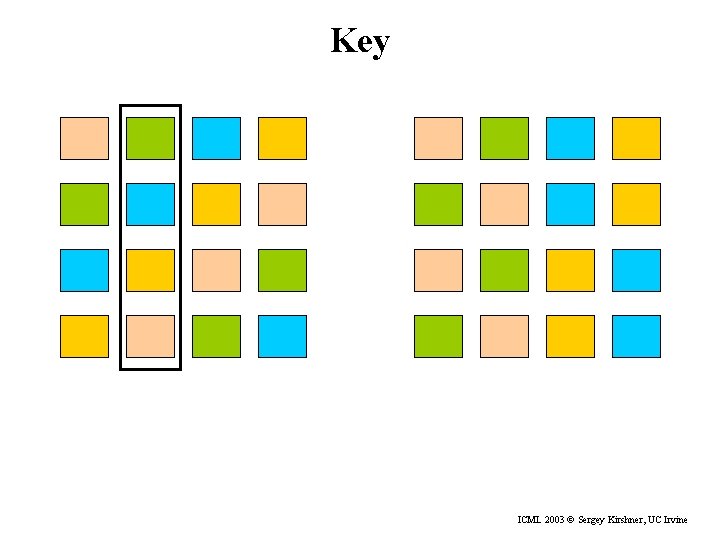

Key

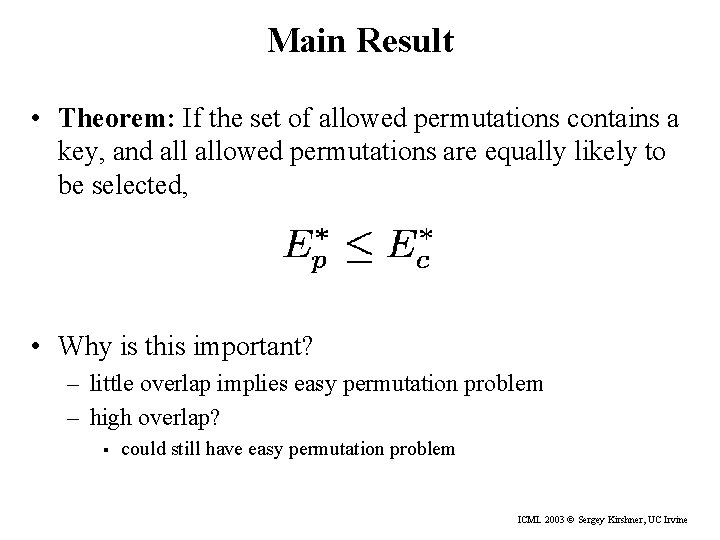

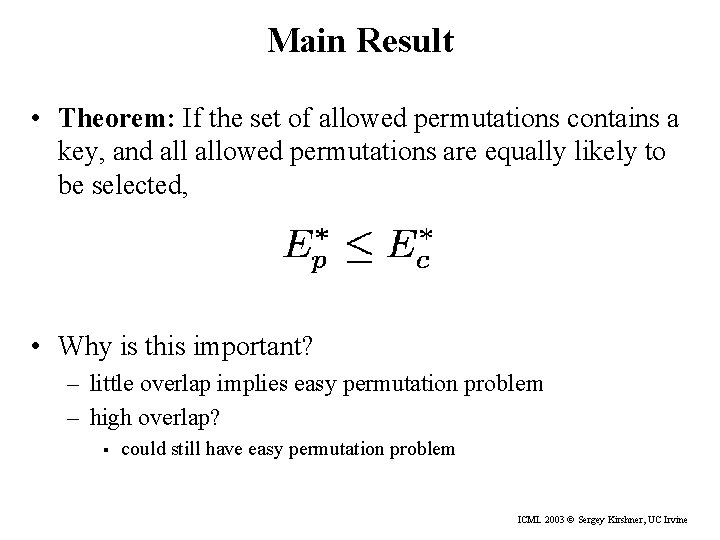

Main Result

- Theorem: If the set of allowed permutations contains a key, and allowed permutations are equally likely to be selected, then the Bayes-optimal permutation error rate is bounded above by the Bayes-optimal error rate of the corresponding marginal (component overlap) problem.
- Why is this important?
  - little overlap implies an easy permutation problem
  - high overlap?
    - could still have an easy permutation problem
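For the smallest case the bound can be checked directly. Assume $d = 2$, the allowed permutations are the identity $e$ and the swap $\tau$, each with probability $1/2$, and write $p_1, p_2$ for the marginals of $x_1, x_2$ and $E_P$, $E_M$ for the permutation and marginal Bayes error rates (notation ours; this is a sketch of one special case, not the general proof):

$$
E_M = \tfrac12 \int \min\{p_1(t),\, p_2(t)\}\, dt .
$$

Consider the rule that looks only at $y_1$ and decides $e$ when $p_1(y_1) \ge p_2(y_1)$, otherwise $\tau$. Under $e$, $y_1 = x_1 \sim p_1$; under $\tau$, $y_1 = x_2 \sim p_2$. So this rule faces exactly the marginal problem and errs with probability $E_M$. The Bayes-optimal rule sees all of $\mathbf{y}$ and can do no worse than any other rule, so

$$
E_P \le E_M .
$$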

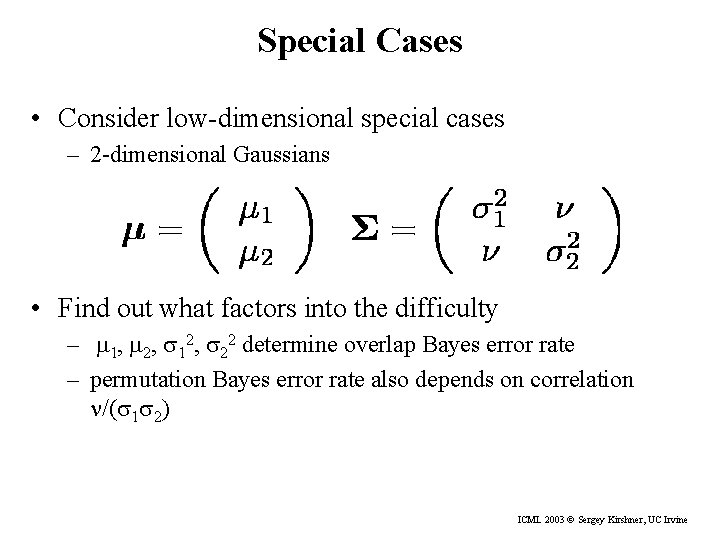

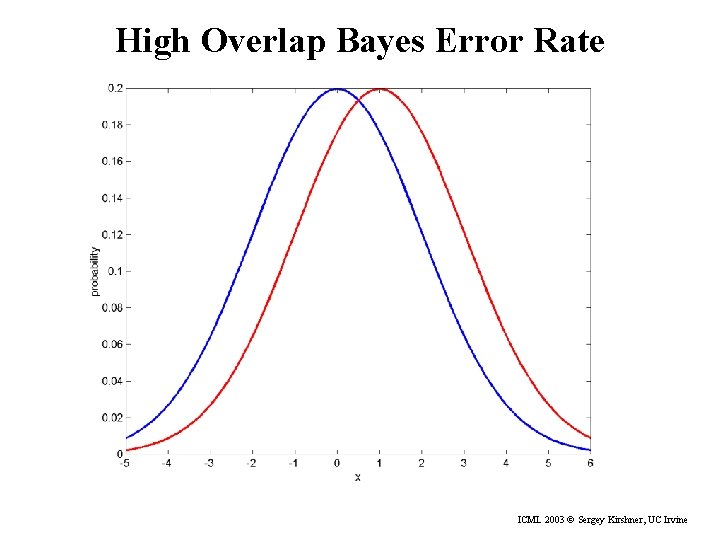

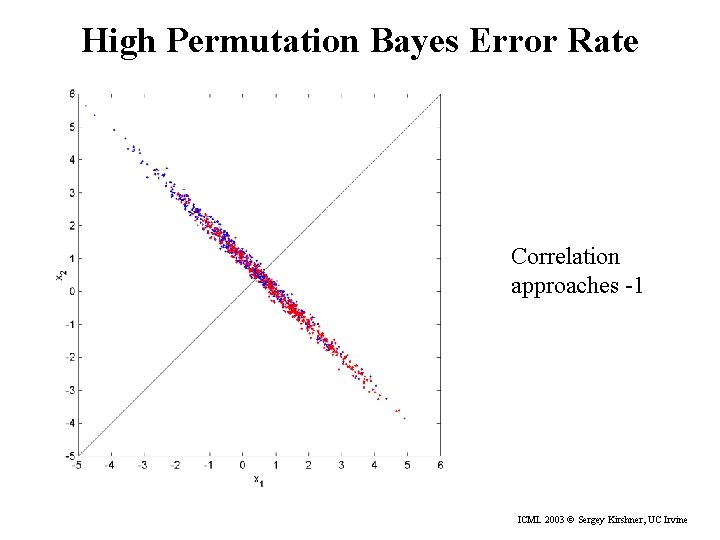

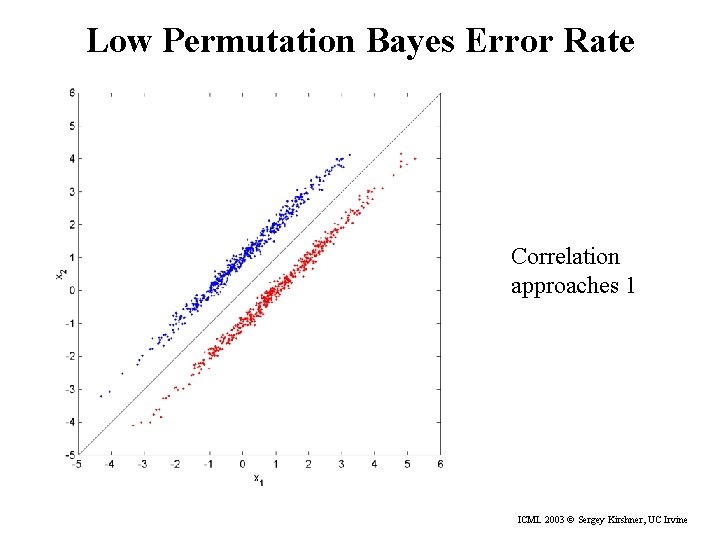

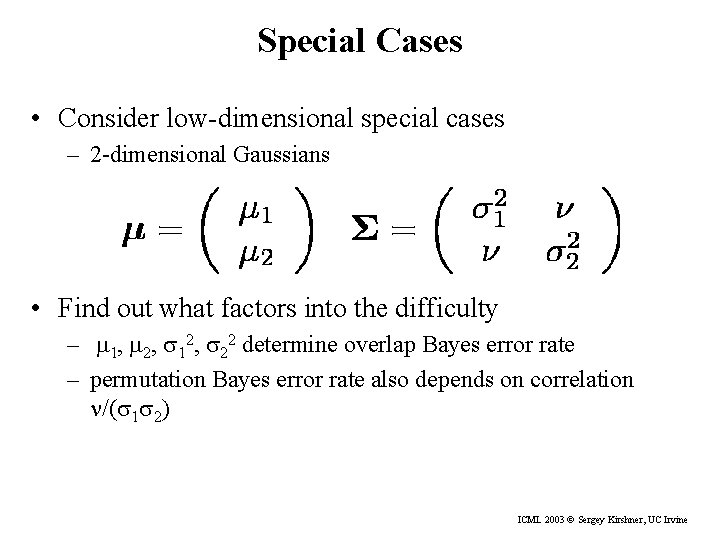

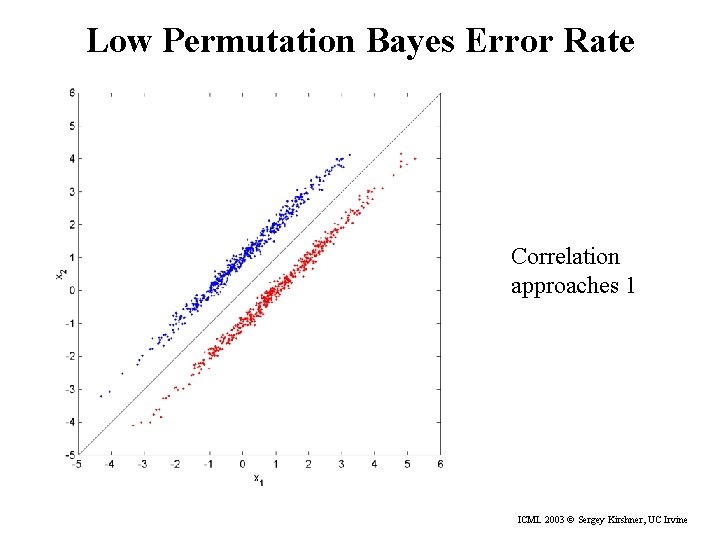

Special Cases

- Consider low-dimensional special cases
  - 2-dimensional Gaussians
- Find out what factors into the difficulty
  - $\mu_1, \mu_2, \sigma_1^2, \sigma_2^2$ determine the overlap Bayes error rate
  - the permutation Bayes error rate also depends on the correlation $\nu/(\sigma_1 \sigma_2)$, where $\nu$ is the covariance of $x_1$ and $x_2$
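A worked case, derived here under extra assumptions (equal variances $\sigma_1^2 = \sigma_2^2 = \sigma^2$, correlation $r = \nu/\sigma^2 < 1$, identity and swap each chosen with probability $1/2$); the paper's closed-form expressions may be stated differently. The sum $y_1 + y_2 = x_1 + x_2$ is the same under both permutations, and it is uncorrelated with, hence (being jointly Gaussian) independent of, the difference $D = y_1 - y_2$, because $\operatorname{Cov}(x_1 + x_2,\, x_1 - x_2) = \sigma_1^2 - \sigma_2^2 = 0$. So only $D$ carries information, with $D \sim \mathcal{N}\big(\pm(\mu_1 - \mu_2),\, 2\sigma^2(1 - r)\big)$ under the identity ($+$) and the swap ($-$). Writing $\Phi$ for the standard normal CDF:

$$
E_M = \Phi\!\left(-\frac{|\mu_1 - \mu_2|}{2\sigma}\right), \qquad
E_P = \Phi\!\left(-\frac{|\mu_1 - \mu_2|}{\sigma\sqrt{2(1 - r)}}\right).
$$

As $r \to -1$, $\sigma\sqrt{2(1-r)} \to 2\sigma$ and $E_P \to E_M$; as $r \to 1$ (with $\mu_1 \ne \mu_2$), $E_P \to 0$. This agrees with the high and low permutation error cases for correlation near $-1$ and $1$.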

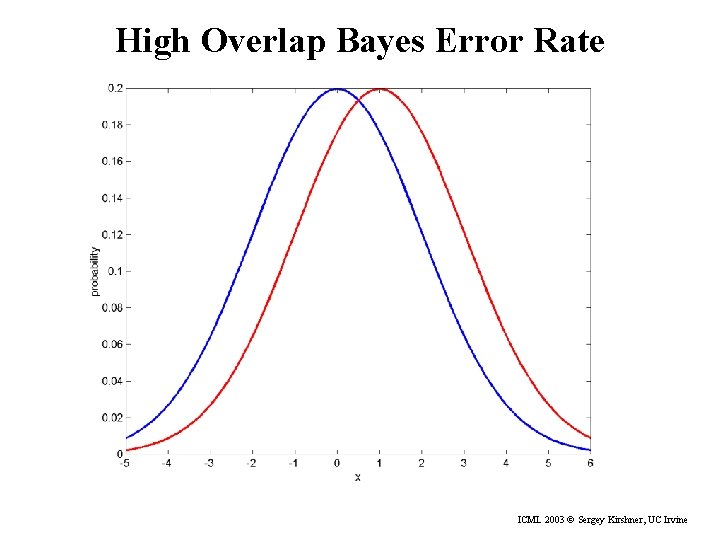

High Overlap Bayes Error Rate

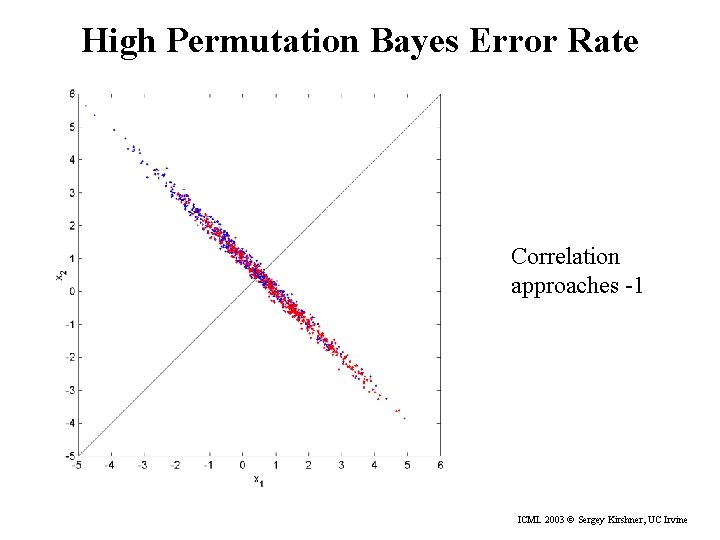

High Permutation Bayes Error Rate

Correlation approaches -1

Low Permutation Bayes Error Rate

Correlation approaches 1
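The trend across these two cases can be checked numerically. A minimal sketch, assuming two-dimensional Gaussians with equal variances, the identity and swap equally likely, and the closed forms $E_P = \Phi\big(-|\mu_1-\mu_2| / (\sigma\sqrt{2(1-r)})\big)$ and $E_M = \Phi\big(-|\mu_1-\mu_2|/(2\sigma)\big)$, which we derived under those assumptions rather than took from the paper. A Monte Carlo run of the Bayes-optimal rule is compared against the closed form; all names are hypothetical.

```python
import math
import random


def phi(z):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def overlap_ber(mu1, mu2, sigma):
    return phi(-abs(mu1 - mu2) / (2.0 * sigma))


def permutation_ber(mu1, mu2, sigma, r):
    """Closed form for equal variances and -1 <= r < 1."""
    return phi(-abs(mu1 - mu2) / (sigma * math.sqrt(2.0 * (1.0 - r))))


def mc_permutation_ber(mu1, mu2, sigma, r, n=100000, seed=1):
    """Error rate of the Bayes rule (equal priors) on simulated data, |r| < 1."""
    rng = random.Random(seed)
    var, cov = sigma ** 2, r * sigma ** 2
    det = var * var - cov * cov

    def logp(a, b):
        # Bivariate Gaussian log density up to a constant that cancels.
        da, db = a - mu1, b - mu2
        return -0.5 * (var * da * da - 2.0 * cov * da * db + var * db * db) / det

    errors = 0
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        x1 = mu1 + sigma * z1
        x2 = mu2 + sigma * (r * z1 + math.sqrt(1.0 - r * r) * z2)
        swapped = rng.random() < 0.5
        y1, y2 = (x2, x1) if swapped else (x1, x2)
        guess_swapped = logp(y2, y1) > logp(y1, y2)  # pick the likelier ordering
        errors += guess_swapped != swapped
    return errors / n


if __name__ == "__main__":
    for r in (-0.9, 0.0, 0.9):
        print(r, permutation_ber(0.0, 1.0, 1.0, r), mc_permutation_ber(0.0, 1.0, 1.0, r))
    print("overlap", overlap_ber(0.0, 1.0, 1.0))
```

With $\mu_1 = 0$, $\mu_2 = 1$, $\sigma = 1$ the overlap error stays fixed near 0.31 while the permutation error falls from about 0.30 at $r = -0.9$ to about 0.01 at $r = 0.9$.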

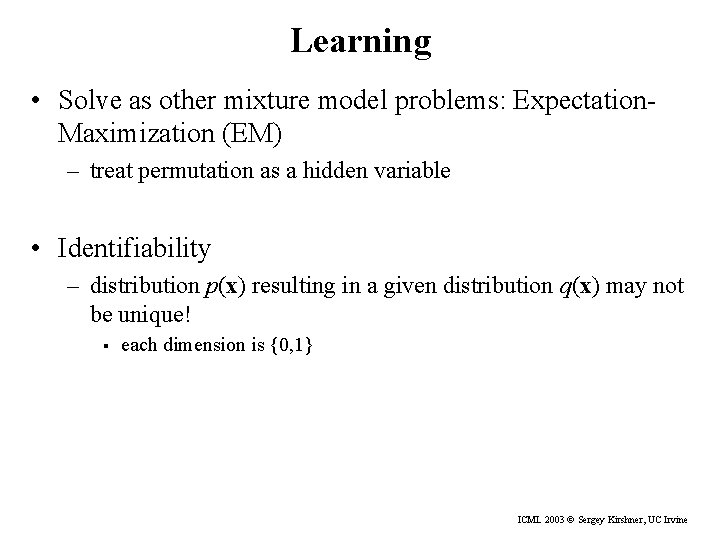

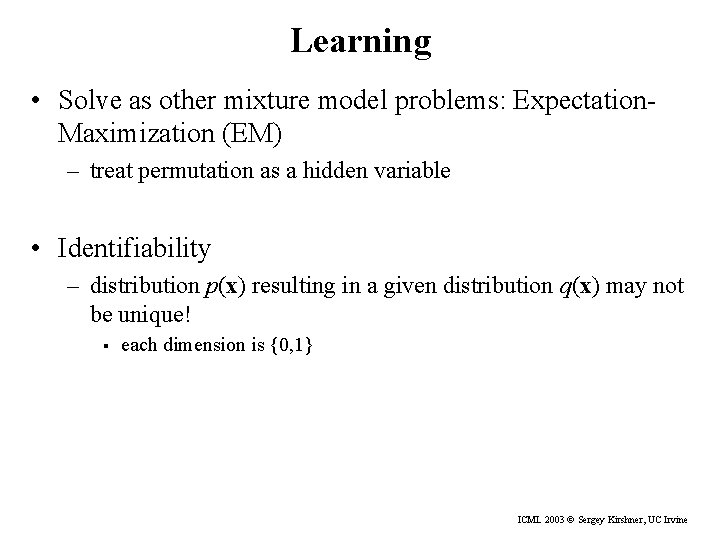

Learning

- Solve as for other mixture model problems: Expectation-Maximization (EM)
  - treat the permutation as a hidden variable
- Identifiability
  - the distribution $p(x)$ resulting in a given distribution $q(x)$ may not be unique!
    - e.g., when each dimension takes values in {0, 1}
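A minimal EM sketch for the two-dimensional case, assuming a single full-covariance Gaussian $p(\mathbf{x})$, allowed permutations {identity, swap} with a fixed uniform $p(s)$, and hypothetical names throughout. The E-step computes each vector's posterior over permutations; the M-step refits the mean and covariance to the un-permuted candidates, weighted by those posteriors. Swapping $\mu_1$ and $\mu_2$ (together with the matching variances) leaves $q$ unchanged here, so the estimate is identifiable only up to that relabeling. A symmetric start such as $\mu = (a, a)$ is a fixed point, so the initial means must differ.

```python
import math
import random

PERMS = [(0, 1), (1, 0)]  # identity and swap; observed y[j] = x[s[j]]


def unpermute(y, s):
    """Return x with x[s[j]] = y[j] (undo the permutation s)."""
    x = [0.0, 0.0]
    for j, k in enumerate(s):
        x[k] = y[j]
    return x


def gauss2_logpdf(x, mu, cov):
    """Log density of a bivariate Gaussian with a symmetric 2x2 covariance."""
    a, b, d = cov[0][0], cov[0][1], cov[1][1]
    det = a * d - b * b
    u, v = x[0] - mu[0], x[1] - mu[1]
    quad = (d * u * u - 2.0 * b * u * v + a * v * v) / det
    return -0.5 * (quad + math.log(det)) - math.log(2.0 * math.pi)


def em_permuted_gaussian(ys, iters=50, mu_init=(-1.0, 1.0)):
    """EM with the permutation as a hidden variable and p(s) fixed to uniform."""
    mu = list(mu_init)
    cov = [[1.0, 0.0], [0.0, 1.0]]
    n = len(ys)
    for _ in range(iters):
        # E-step: posterior over the two permutations for every vector.
        cands, resp = [], []
        for y in ys:
            xs = [unpermute(y, s) for s in PERMS]
            logs = [gauss2_logpdf(x, mu, cov) for x in xs]
            top = max(logs)
            w = [math.exp(v - top) for v in logs]
            tot = sum(w)
            cands.append(xs)
            resp.append([wi / tot for wi in w])
        # M-step: weighted mean and covariance of the un-permuted candidates.
        mu = [sum(r[k] * xs[k][j] for xs, r in zip(cands, resp) for k in range(2)) / n
              for j in range(2)]
        cov = [[sum(r[k] * (xs[k][i] - mu[i]) * (xs[k][j] - mu[j])
                    for xs, r in zip(cands, resp) for k in range(2)) / n
                for j in range(2)] for i in range(2)]
    return mu, cov


def sample_data(n, mu, sigma, rho, seed=0):
    """Hypothetical test data: correlated Gaussian x, then a random permutation."""
    rng = random.Random(seed)
    ys = []
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        x = [mu[0] + sigma * z1,
             mu[1] + sigma * (rho * z1 + math.sqrt(1.0 - rho * rho) * z2)]
        s = rng.choice(PERMS)
        ys.append([x[k] for k in s])
    return ys


if __name__ == "__main__":
    ys = sample_data(2000, mu=(0.0, 2.0), sigma=1.0, rho=0.5)
    mu_hat, cov_hat = em_permuted_gaussian(ys)
    print(mu_hat, cov_hat)
```

Letting $p(s)$ also be re-estimated (as the average posterior per permutation) would be a one-line change in the M-step; it is kept fixed here to stay close to the equally-likely setting of the main result.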

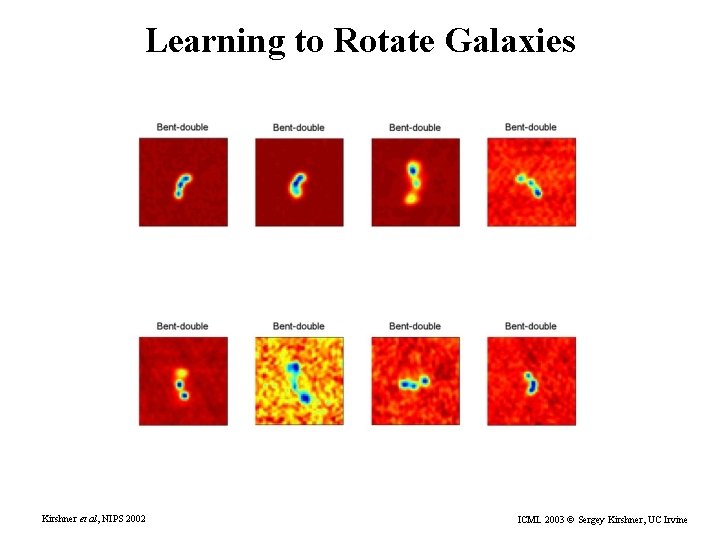

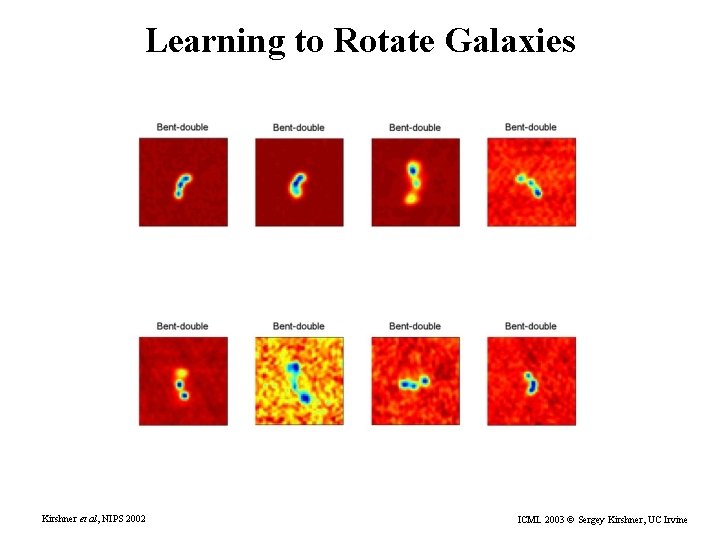

Learning to Rotate Galaxies

Kirshner et al., NIPS 2002

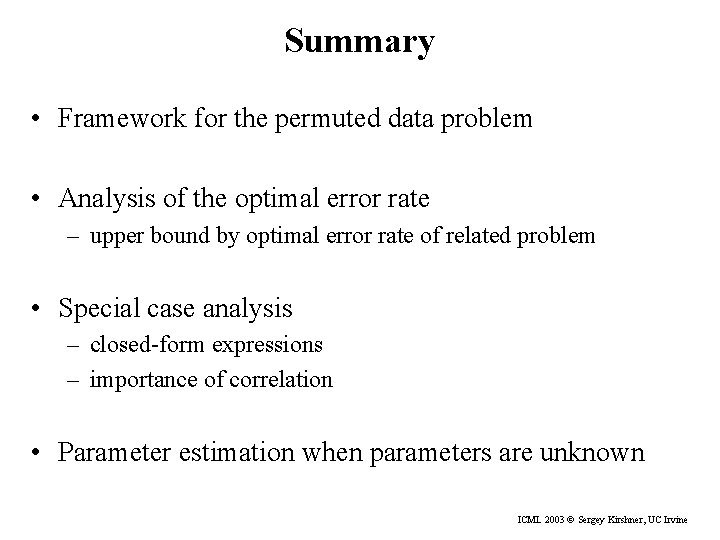

Summary

- Framework for the permuted data problem
- Analysis of the optimal error rate
  - upper bound by the optimal error rate of a related problem
- Special case analysis
  - closed-form expressions
  - importance of correlation
- Parameter estimation when parameters are unknown

Future Work

- Identifiability
  - What distributions are identifiable?
  - Do permutations make the identifiability problem different from that of ordinary mixtures?
- What to do with a large number of permutations?
- Other applications

Acknowledgements

- Funding
  - NSF
  - DOE
- Datalab @ UCI: http://www.datalab.uci.edu
- Sapphire Group at LLNL
  - Chandrika Kamath
  - Erick Cantú-Paz