
Tracking by Sampling Trackers
Junseok Kwon* and Kyoung Mu Lee
Computer Vision Lab., Dept. of EECS, Seoul National University, Korea
Homepage: http://cv.snu.ac.kr

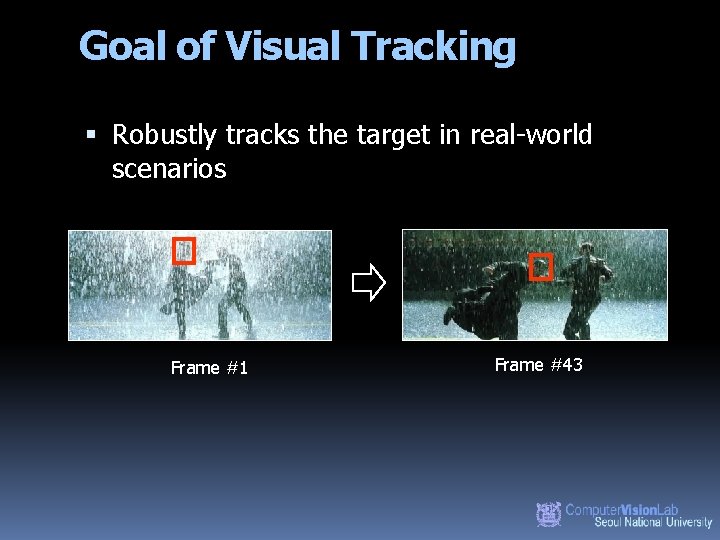

Goal of Visual Tracking: robustly track the target in real-world scenarios. (Figure: Frame #1 and Frame #43.)

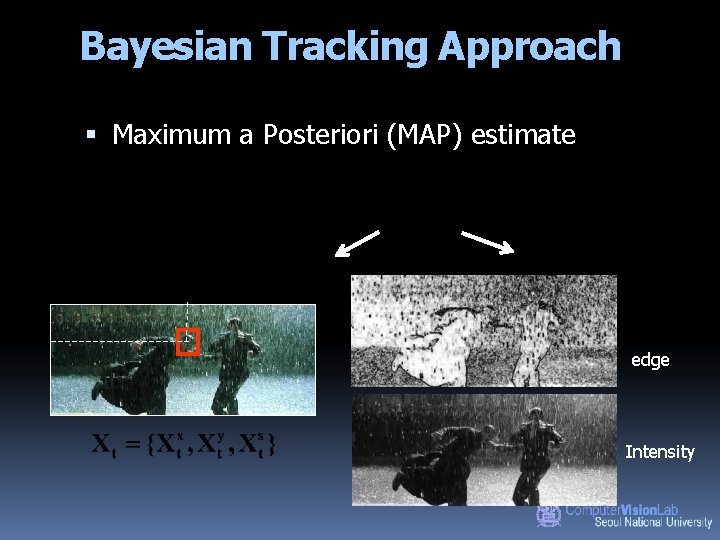

Bayesian Tracking Approach: Maximum a Posteriori (MAP) estimate. (Figure labels: edge, intensity.)

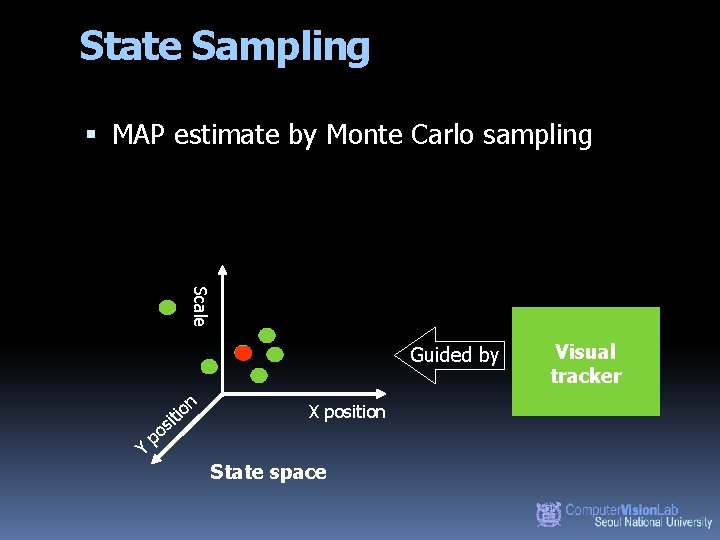

State Sampling: the MAP estimate is obtained by Monte Carlo sampling in the state space (X position, Y position, scale), guided by the visual tracker.
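As a rough illustration of MAP estimation by Monte Carlo sampling, the sketch below runs a random-walk Metropolis-Hastings chain over a state (x, y, scale) and keeps the sample with the highest posterior value. The posterior `toy_posterior`, the step sizes, and all function names are assumptions made for this example; they are not taken from the slides.

```python
import math
import random

def toy_posterior(state):
    """Hypothetical unnormalized posterior, peaked at x=50, y=30, scale=1.0."""
    x, y, s = state
    return math.exp(-((x - 50.0) ** 2 + (y - 30.0) ** 2) / 50.0
                    - (s - 1.0) ** 2 / 0.02)

def map_by_sampling(posterior, init_state, n_samples=5000,
                    step=(2.0, 2.0, 0.05), seed=0):
    """Random-walk Metropolis-Hastings; returns the best sample visited."""
    rng = random.Random(seed)
    current = tuple(init_state)
    current_p = posterior(current)
    best, best_p = current, current_p
    for _ in range(n_samples):
        # Symmetric Gaussian proposal around the current state.
        proposal = tuple(c + rng.gauss(0.0, s) for c, s in zip(current, step))
        proposal_p = posterior(proposal)
        # With a symmetric proposal the acceptance ratio is the posterior ratio.
        if current_p == 0.0 or rng.random() < min(1.0, proposal_p / current_p):
            current, current_p = proposal, proposal_p
            if current_p > best_p:
                best, best_p = current, current_p
    return best

best_state = map_by_sampling(toy_posterior, (40.0, 20.0, 1.2))
```

In a real tracker the posterior would combine an observation likelihood with a motion prior; here it is a fixed Gaussian bump so the example stays self-contained.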

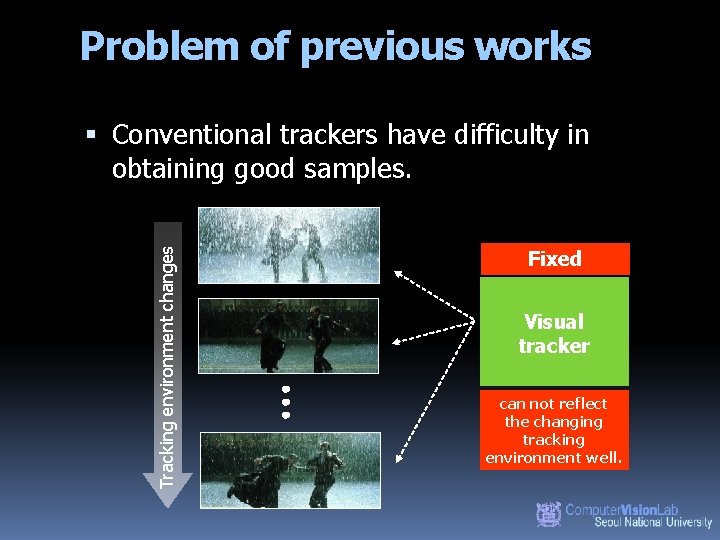

Problem of Previous Works: the tracking environment changes, so conventional trackers have difficulty obtaining good samples. A fixed visual tracker cannot reflect the changing tracking environment well.

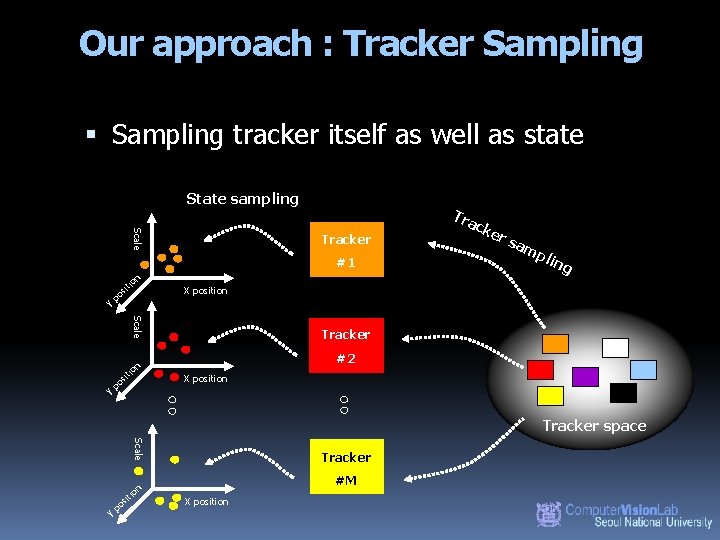

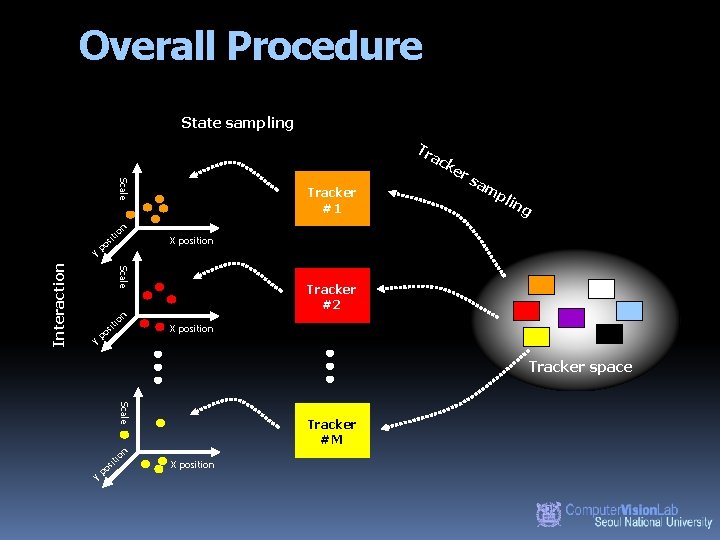

Our Approach: Tracker Sampling. Sample the tracker itself as well as the state. (Figure: tracker sampling draws Tracker #1, Tracker #2, ..., Tracker #M from the tracker space; each sampled tracker performs state sampling over X position, Y position, and scale.)

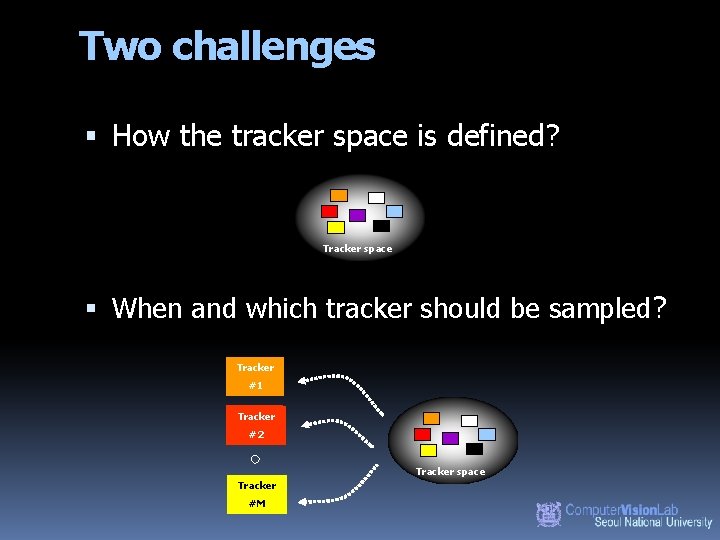

Two Challenges: (1) How is the tracker space defined? (2) When and which tracker should be sampled? (Figure: tracker space containing Tracker #1, Tracker #2, ..., Tracker #M.)

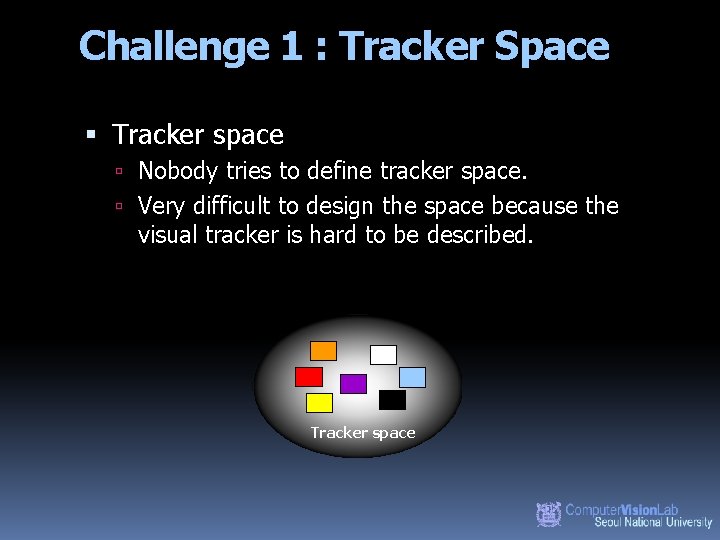

Challenge 1: Tracker Space. Nobody has tried to define a tracker space. The space is very difficult to design because a visual tracker is hard to describe.

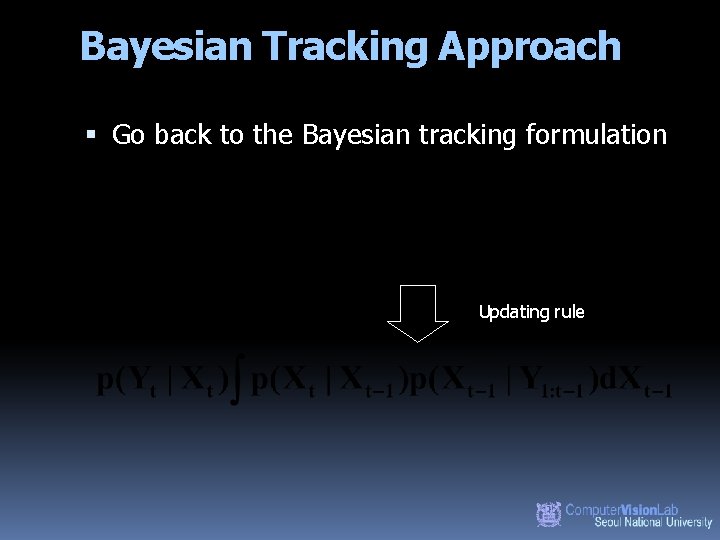

Bayesian Tracking Approach: go back to the Bayesian tracking formulation and its updating rule.
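The slide's equation survives only as an image. For reference, the standard recursive Bayesian filtering update is written below; the notation ($X_t$ for the state and $Y_{1:t}$ for the observations up to time $t$) is an assumption and may differ from the symbols on the original slide.

$$
p(X_t \mid Y_{1:t}) \;\propto\; p(Y_t \mid X_t) \int p(X_t \mid X_{t-1})\, p(X_{t-1} \mid Y_{1:t-1})\, dX_{t-1},
\qquad
\hat{X}_t = \arg\max_{X_t}\, p(X_t \mid Y_{1:t}).
$$

In this form, $p(Y_t \mid X_t)$ is the observation likelihood and $p(X_t \mid X_{t-1})$ is the motion model, and $\hat{X}_t$ is the MAP estimate.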

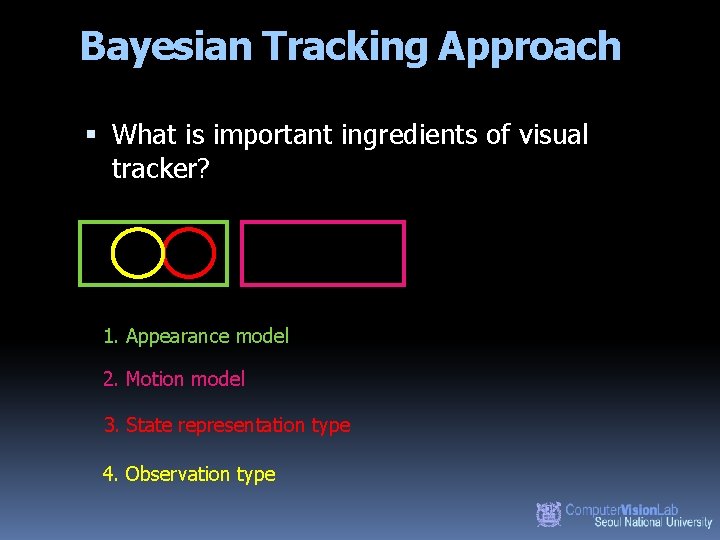

Bayesian Tracking Approach: What are the important ingredients of a visual tracker?

1. Appearance model
2. Motion model
3. State representation type
4. Observation type
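One way to picture a tracker space built from these four ingredients is as the set of all combinations of one choice per ingredient. The sketch below assumes exactly that; the class name `BasicTracker`, the function `build_tracker_space`, and the string labels are hypothetical and only stand in for real models.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class BasicTracker:
    """One point in the (assumed) tracker space: one choice per ingredient."""
    appearance_model: str
    motion_model: str
    state_representation: str
    observation_type: str

def build_tracker_space(appearance, motion, state_repr, observation):
    """Enumerate every combination of the four ingredient sets."""
    return [BasicTracker(a, m, s, o)
            for a, m, s, o in product(appearance, motion, state_repr, observation)]

trackers = build_tracker_space(["A1", "A2"], ["M1", "M2"], ["S1"], ["O1", "O2", "O3"])
print(len(trackers))  # 2 * 2 * 1 * 3 = 12
```

Whether the original method uses every combination or only a subset is not stated on the slides; the full Cartesian product is used here for simplicity.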

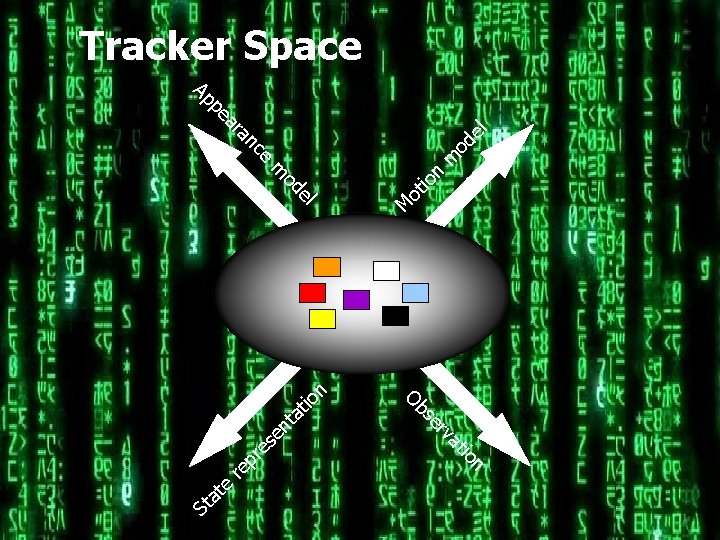

Tracker Space (figure): the four axes are appearance model, motion model, state representation, and observation.

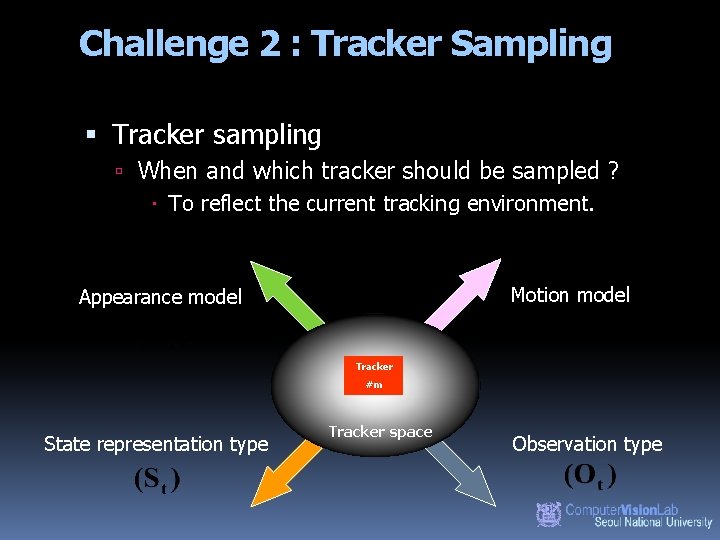

Challenge 2: Tracker Sampling. When and which tracker should be sampled? Trackers are sampled to reflect the current tracking environment. (Figure: Tracker #m in the tracker space, defined by its motion model, appearance model, state representation type, and observation type.)

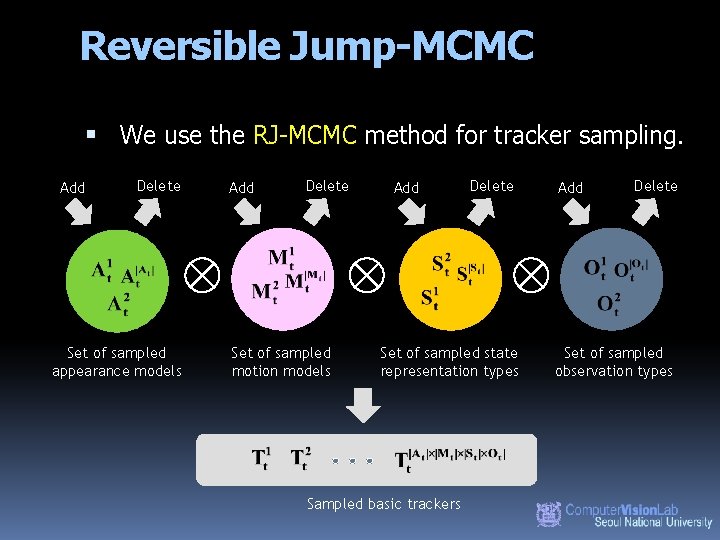

Reversible Jump-MCMC: we use the RJ-MCMC method for tracker sampling. Add and delete moves are applied to each of four sets: the sampled appearance models, the sampled motion models, the sampled state representation types, and the sampled observation types. Together these sets form the sampled basic trackers.
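The add and delete moves can be sketched as a simple stochastic search over a variable-size set of models. This is a deliberately simplified, Metropolis-style illustration: the acceptance ratio below is just a score ratio and ignores the proposal-density terms of a full RJ-MCMC sampler, and the slides' actual acceptance ratios are not recoverable from the text. The function name `sample_model_set`, the `score` callback, and the toy scores are assumptions.

```python
import random

def sample_model_set(candidates, score, max_models=4, n_iters=200, seed=0):
    """Add/delete moves over a set of models, capped at max_models entries.

    candidates: list of distinct model identifiers.
    score: hypothetical function mapping a list of models to a positive score.
    """
    rng = random.Random(seed)
    current = [candidates[0]]
    current_score = score(current)
    for _ in range(n_iters):
        can_add = len(current) < max_models and len(current) < len(candidates)
        can_delete = len(current) > 1
        if can_add and (not can_delete or rng.random() < 0.5):
            # Add move: propose one model that is not in the set yet.
            unused = [c for c in candidates if c not in current]
            proposal = current + [rng.choice(unused)]
        elif can_delete:
            # Delete move: propose removing one model from the set.
            drop = rng.randrange(len(current))
            proposal = current[:drop] + current[drop + 1:]
        else:
            continue
        proposal_score = score(proposal)
        # Simplified acceptance ratio (assumption): ratio of set scores.
        ratio = proposal_score / current_score if current_score > 0 else 1.0
        if rng.random() < min(1.0, ratio):
            current, current_score = proposal, proposal_score
    return current

# Toy usage: each model has a fixed usefulness; the set score is their sum.
usefulness = {"a": 1.0, "b": 5.0, "c": 0.5, "d": 3.0, "e": 0.1}
chosen = sample_model_set(list(usefulness),
                          lambda ms: sum(usefulness[m] for m in ms),
                          max_models=3)
```

The cap `max_models` mirrors the statement on the later slides that the method keeps a limited number of models or types in each set.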

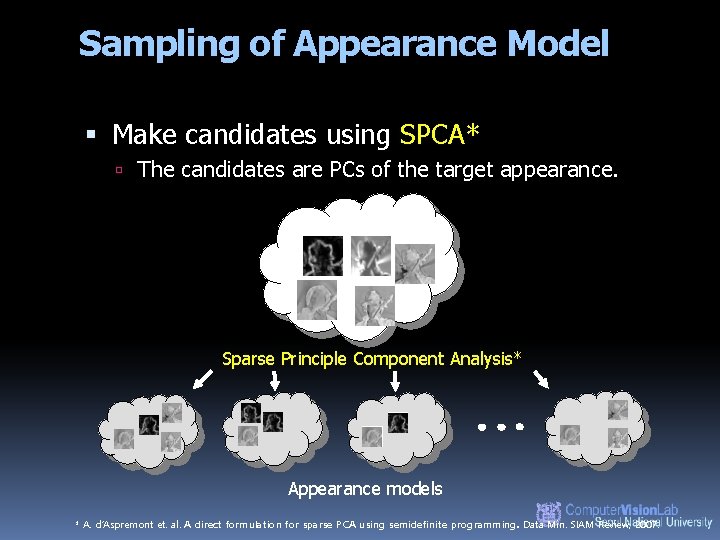

Sampling of Appearance Model: make candidates using Sparse Principal Component Analysis (SPCA)*. The candidates are principal components (PCs) of the target appearance. * A. d’Aspremont et al. A direct formulation for sparse PCA using semidefinite programming. SIAM Review, 2007.

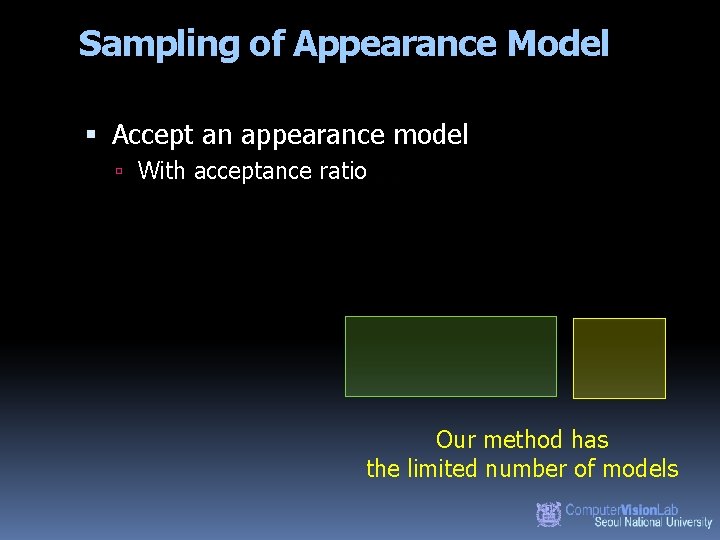

Sampling of Appearance Model: a proposed appearance model is accepted according to its acceptance ratio. Our method keeps a limited number of models.

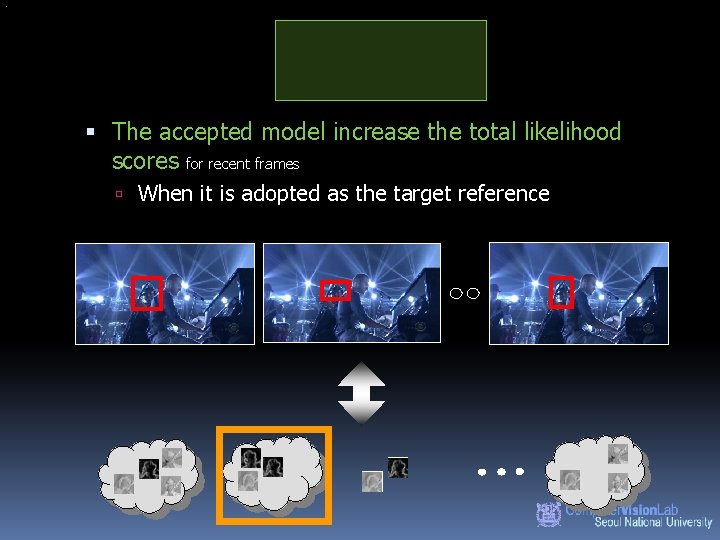

The accepted model increases the total likelihood score over recent frames when it is adopted as the target reference.

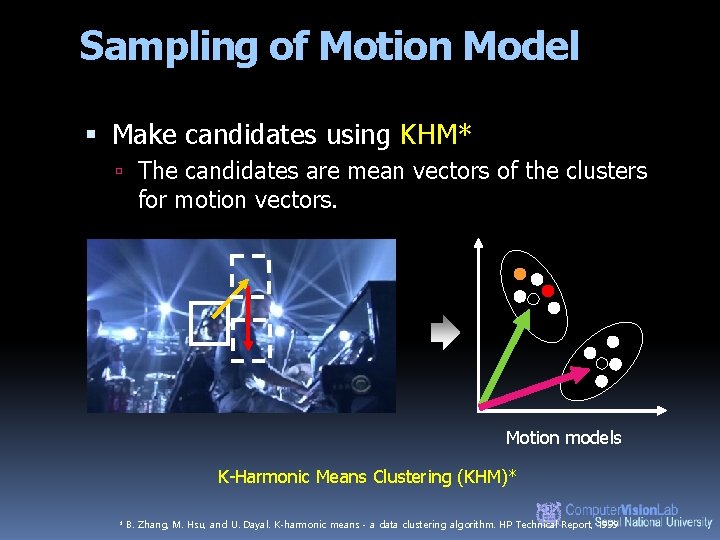

Sampling of Motion Model: make candidates using K-Harmonic Means clustering (KHM)*. The candidates are the mean vectors of the clusters of motion vectors. * B. Zhang, M. Hsu, and U. Dayal. K-harmonic means - a data clustering algorithm. HP Technical Report, 1999.
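To make the candidate-generation step concrete, the sketch below clusters 2D motion vectors with the commonly cited K-harmonic means center update, in which each point contributes to center k with weight d_k^(-p-2) / (sum_j d_j^(-p))^2. The exponent `p=3.5`, the iteration count, the initial centers, and the toy motion vectors are assumptions for illustration; how the original tracker collects motion vectors is not described on the slide.

```python
import math

def k_harmonic_means(points, centers, p=3.5, n_iters=50, eps=1e-8):
    """Cluster 2D points; returns the updated cluster centers (mean vectors)."""
    centers = [tuple(c) for c in centers]
    for _ in range(n_iters):
        sums = [[0.0, 0.0] for _ in centers]
        totals = [0.0 for _ in centers]
        for x in points:
            # Distances to every center, clamped to avoid division by zero.
            d = [max(math.dist(x, c), eps) for c in centers]
            denom = sum(di ** (-p) for di in d) ** 2
            for k, dk in enumerate(d):
                # Combined membership and weight of point x for center k.
                coeff = dk ** (-p - 2) / denom
                sums[k][0] += coeff * x[0]
                sums[k][1] += coeff * x[1]
                totals[k] += coeff
        centers = [(s[0] / t, s[1] / t) for s, t in zip(sums, totals)]
    return centers

# Toy motion vectors: one group moving right, one group moving left.
motions = [(1.1, 0.0), (0.9, 0.0), (1.0, 0.1), (1.0, -0.1),
           (-1.1, 0.0), (-0.9, 0.0), (-1.0, 0.1), (-1.0, -0.1)]
candidate_motion_models = k_harmonic_means(motions, [(0.5, 0.0), (-0.5, 0.0)])
```

Each returned center could then serve as one candidate motion model, in the sense of the slide's "mean vectors of the clusters".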

Sampling of Motion Model: a proposed motion model is accepted according to its acceptance ratio. Our method keeps a limited number of models.

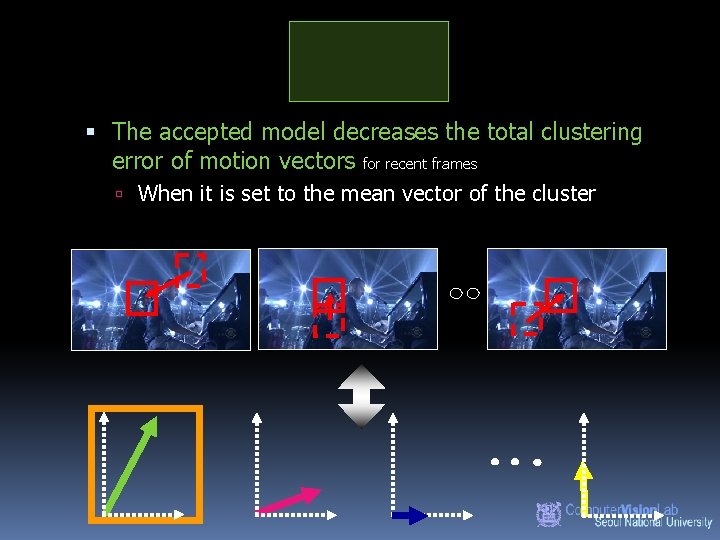

The accepted model decreases the total clustering error of the motion vectors over recent frames when it is set as the mean vector of a cluster.

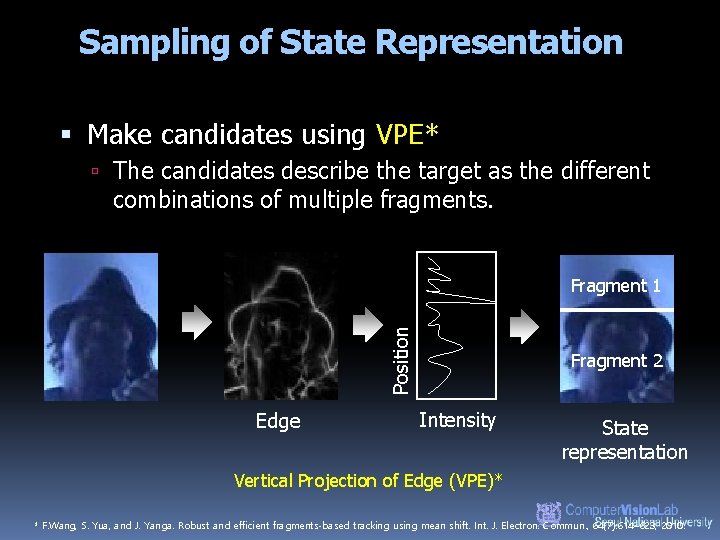

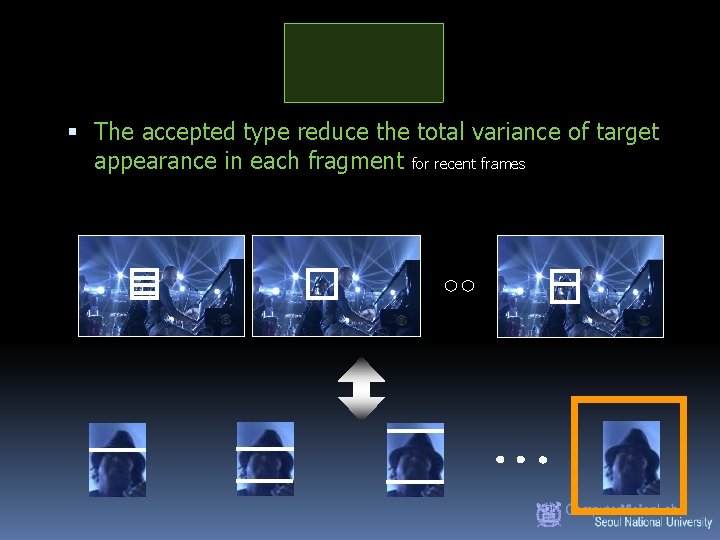

Sampling of State Representation: make candidates using Vertical Projection of Edge (VPE)*. The candidates describe the target as different combinations of multiple fragments. (Figure labels: position, edge, intensity, Fragment 1, Fragment 2.) * F. Wang, S. Yu, and J. Yang. Robust and efficient fragments-based tracking using mean shift. Int. J. Electron. Commun., 64(7):614–623, 2010.

Sampling of State Representation: a proposed state representation type is accepted according to its acceptance ratio. Our method keeps a limited number of types.

The accepted type reduces the total variance of the target appearance within each fragment over recent frames.

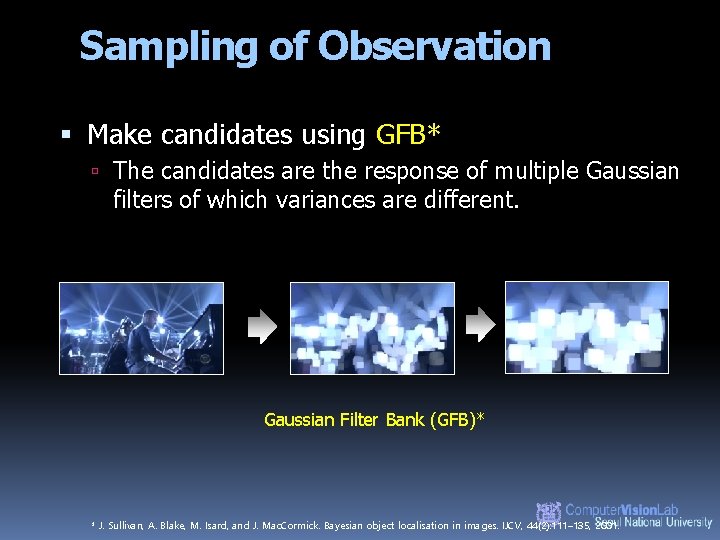

Sampling of Observation: make candidates using a Gaussian Filter Bank (GFB)*. The candidates are the responses of multiple Gaussian filters with different variances. * J. Sullivan, A. Blake, M. Isard, and J. MacCormick. Bayesian object localisation in images. IJCV, 44(2):111–135, 2001.
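A Gaussian filter bank can be sketched as the same signal filtered by Gaussian kernels of several widths. For brevity the example below works on a 1D signal rather than an image, truncates each kernel at three standard deviations, and replicates the edge values; these choices and all function names are assumptions, not details from the slides.

```python
import math

def gaussian_kernel(sigma, radius=None):
    """Normalized 1D Gaussian kernel, truncated at about 3 sigma by default."""
    if radius is None:
        radius = max(1, int(math.ceil(3.0 * sigma)))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def filter_signal(signal, kernel):
    """Filter a 1D signal, replicating the edge values at the borders."""
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)  # clamp index to the signal
            acc += w * signal[j]
        out.append(acc)
    return out

def gaussian_filter_bank(signal, sigmas):
    """One filtered response per sigma; each response is a candidate observation."""
    return {sigma: filter_signal(signal, gaussian_kernel(sigma)) for sigma in sigmas}

row = [0.0] * 10 + [1.0] + [0.0] * 10
responses = gaussian_filter_bank(row, [1.0, 2.0, 3.0])
```

Larger variances smooth the signal more strongly, so the responses differ in how much fine detail they retain, which is what makes them distinct observation candidates.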

Sampling of Observation: a proposed observation type is accepted according to its acceptance ratio. Our method keeps a limited number of types.

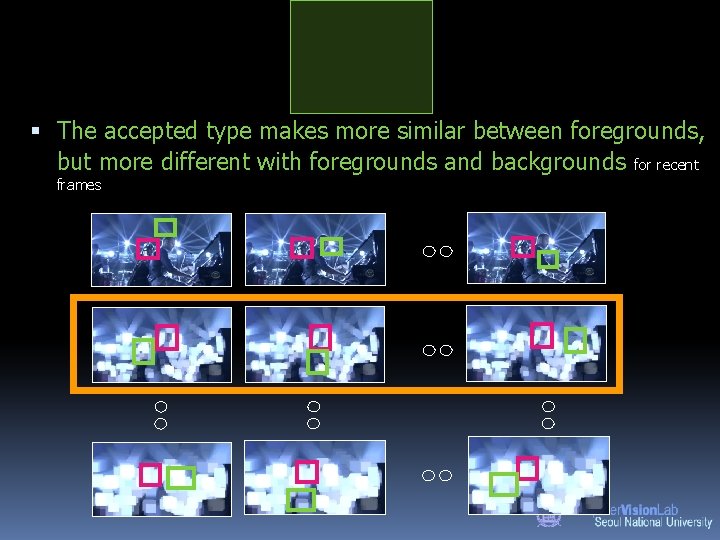

The accepted type makes the foregrounds of recent frames more similar to each other, and makes foregrounds and backgrounds more different from each other.

Overall Procedure: tracker sampling draws Tracker #1, Tracker #2, ..., Tracker #M from the tracker space. Each tracker performs state sampling in its own state space (X position, Y position, scale), and the trackers interact with one another.

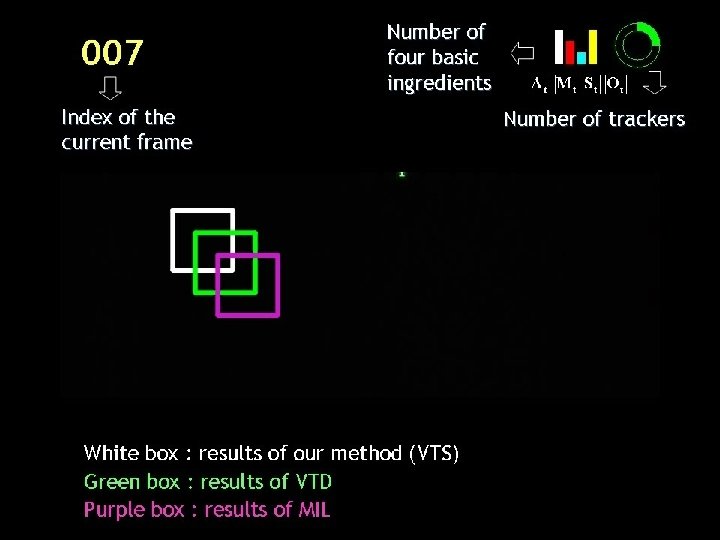

Qualitative Results

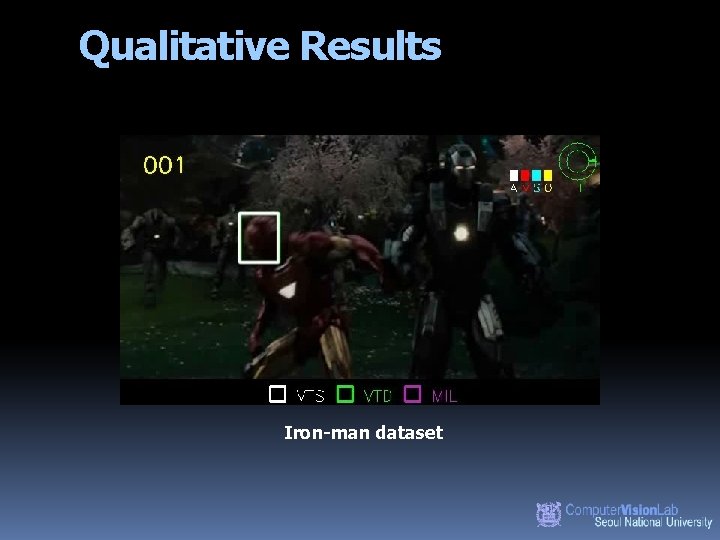

Qualitative Results: Iron-man dataset

Qualitative Results: Matrix dataset

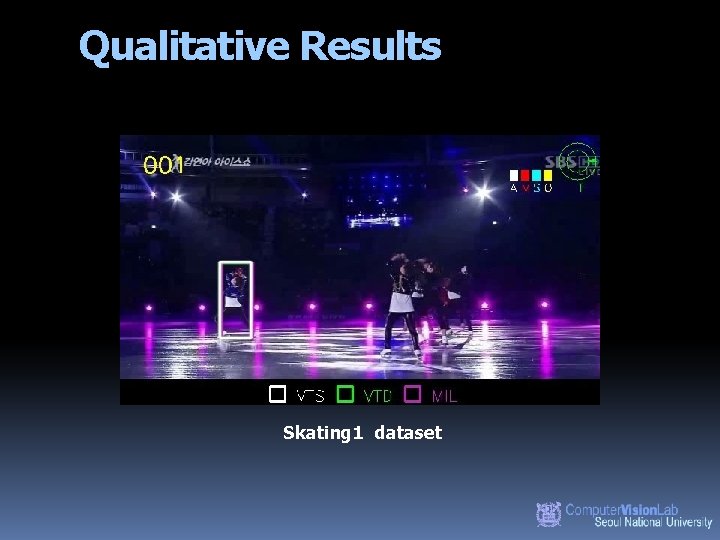

Qualitative Results: Skating 1 dataset

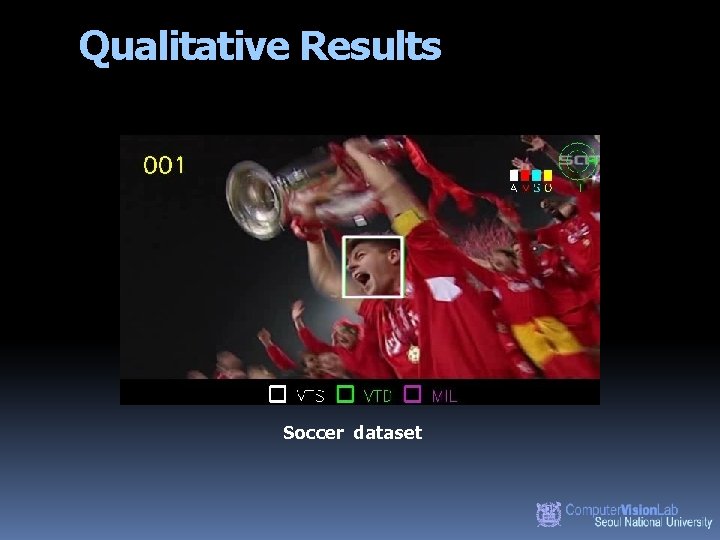

Qualitative Results: Soccer dataset

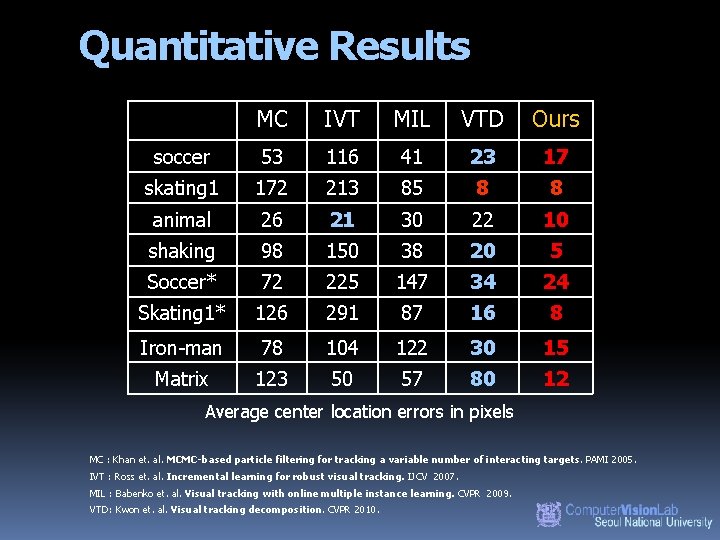

Quantitative Results: average center location errors in pixels.

| Sequence | MC | IVT | MIL | VTD | Ours |
|---|---|---|---|---|---|
| soccer | 53 | 116 | 41 | 23 | 17 |
| skating 1 | 172 | 213 | 85 | 8 | 8 |
| animal | 26 | 21 | 30 | 22 | 10 |
| shaking | 98 | 150 | 38 | 20 | 5 |
| Soccer* | 72 | 225 | 147 | 34 | 24 |
| Skating 1* | 126 | 291 | 87 | 16 | 8 |
| Iron-man | 78 | 104 | 122 | 30 | 15 |
| Matrix | 123 | 50 | 57 | 80 | 12 |

MC: Khan et al. MCMC-based particle filtering for tracking a variable number of interacting targets. PAMI 2005.
IVT: Ross et al. Incremental learning for robust visual tracking. IJCV 2007.
MIL: Babenko et al. Visual tracking with online multiple instance learning. CVPR 2009.
VTD: Kwon et al. Visual tracking decomposition. CVPR 2010.
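The slides do not spell out how the center location error is computed. A common reading, assumed in the sketch below, is the Euclidean distance in pixels between the predicted and ground-truth target centers, averaged over all frames of a sequence. The function name and the sample coordinates are hypothetical.

```python
import math

def average_center_error(predicted, ground_truth):
    """Mean Euclidean distance (pixels) between per-frame target centers."""
    if len(predicted) != len(ground_truth) or not predicted:
        raise ValueError("need two non-empty center lists of equal length")
    distances = [math.dist(p, g) for p, g in zip(predicted, ground_truth)]
    return sum(distances) / len(distances)

# Two frames: a perfect hit and a miss by (3, 4) pixels, i.e. 5 pixels.
error = average_center_error([(0.0, 0.0), (3.0, 4.0)], [(0.0, 0.0), (0.0, 0.0)])
print(error)  # 2.5
```

Under this definition, lower values in the table mean the tracked center stays closer to the annotated one.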

Summary: Visual tracker sampler. A new framework that samples the visual tracker itself as well as the state. An efficient sampling strategy for sampling the visual tracker.

http://cv.snu.ac.kr/paradiso
