String Matching

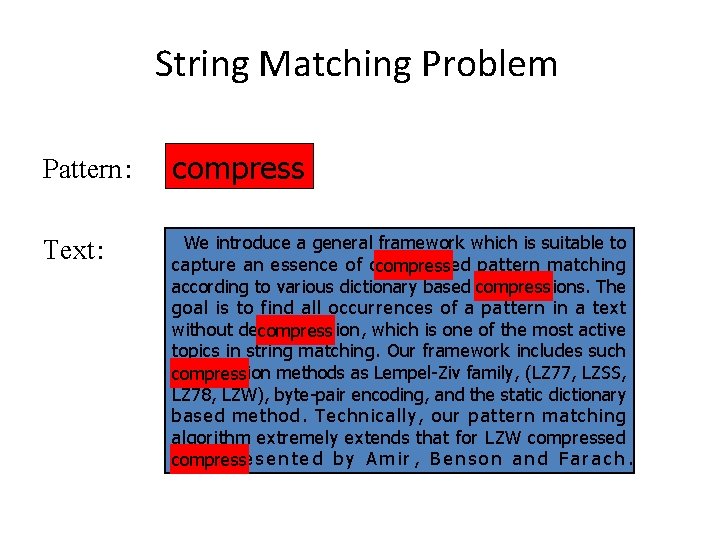

String Matching Problem • Pattern: compress • Text: We introduce a general framework which is suitable to capture an essence of compressed pattern matching according to various dictionary based compressions. The goal is to find all occurrences of a pattern in a text without decompression, which is one of the most active topics in string matching. Our framework includes such compression methods as Lempel-Ziv family (LZ77, LZSS, LZ78, LZW), byte-pair encoding, and the static dictionary based method. Technically, our pattern matching algorithm extremely extends that for LZW compressed text presented by Amir, Benson and Farach. (On the slide, every occurrence of the pattern inside the text is marked.)

![Notation & Terminology • String S: – S[1…n] • Sub-string of S : – Notation & Terminology • String S: – S[1…n] • Sub-string of S : –](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-3.jpg)

Notation & Terminology • String S: – S[1…n] • Sub-string of S: – S[i…j] • Prefix of S: – S[1…i] • Suffix of S: – S[i…n] • |S| = n (string length) • Ex: AGATCGATGGA (the slide marks a prefix, a sub-string and a suffix of this string)

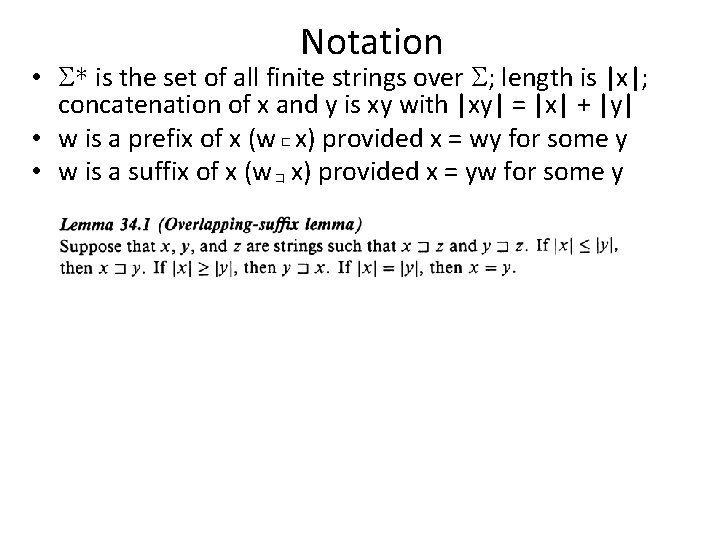

Notation • Σ* is the set of all finite strings over Σ; the length of x is |x|; the concatenation of x and y is xy, with |xy| = |x| + |y| • w is a prefix of x (w ⊏ x) provided x = wy for some y • w is a suffix of x (w ⊐ x) provided x = yw for some y

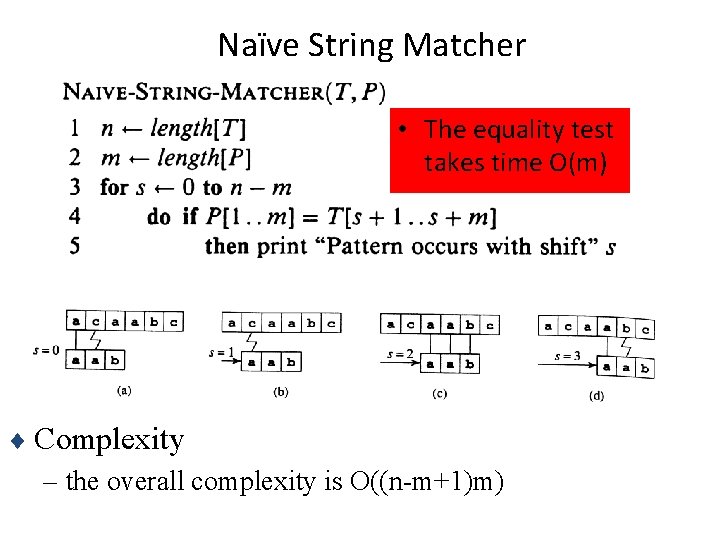

Naïve String Matcher • The equality test at each shift takes time O(m) • Complexity – the overall complexity is O((n-m+1)m)
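The bound above can be read straight off a minimal Python sketch of the naïve matcher. The function name `naive_match` and the 0-based shifts are assumptions made for this illustration; the slides use 1-based indexing.

```python
def naive_match(text: str, pattern: str) -> list[int]:
    """Return every 0-based shift at which pattern occurs in text."""
    n, m = len(text), len(pattern)
    shifts = []
    for s in range(n - m + 1):            # n - m + 1 candidate shifts
        if text[s:s + m] == pattern:      # equality test costs O(m)
            shifts.append(s)
    return shifts
```

The outer loop tries n-m+1 shifts and each slice comparison looks at up to m characters, which gives the O((n-m+1)m) total.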

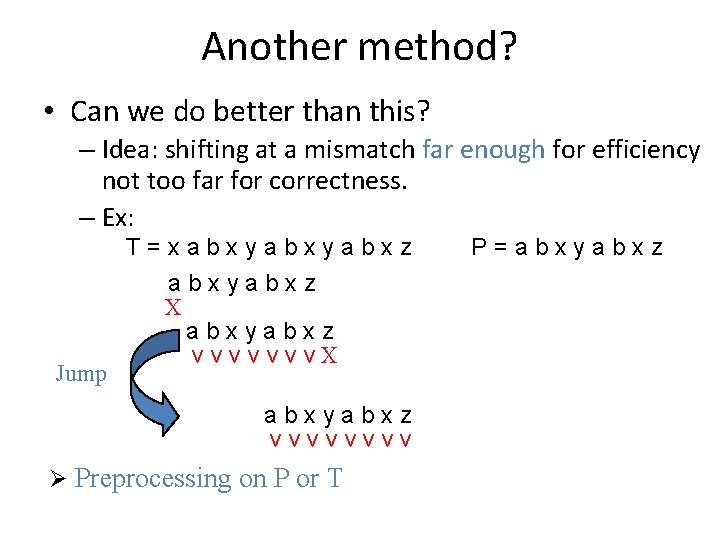

Another method? • Can we do better than this? – Idea: at a mismatch, shift far enough for efficiency but not too far for correctness. – Ex: T = xabxyabxz, P = abxyabxz (the slide's figure shows P jumping ahead after a mismatch instead of moving one position at a time) • This requires preprocessing on P or T

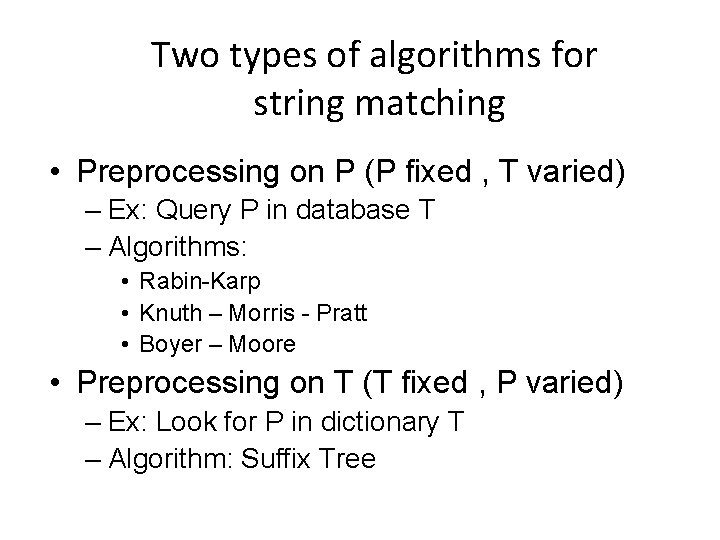

Two types of algorithms for string matching • Preprocessing on P (P fixed , T varied) – Ex: Query P in database T – Algorithms: • Rabin-Karp • Knuth – Morris - Pratt • Boyer – Moore • Preprocessing on T (T fixed , P varied) – Ex: Look for P in dictionary T – Algorithm: Suffix Tree

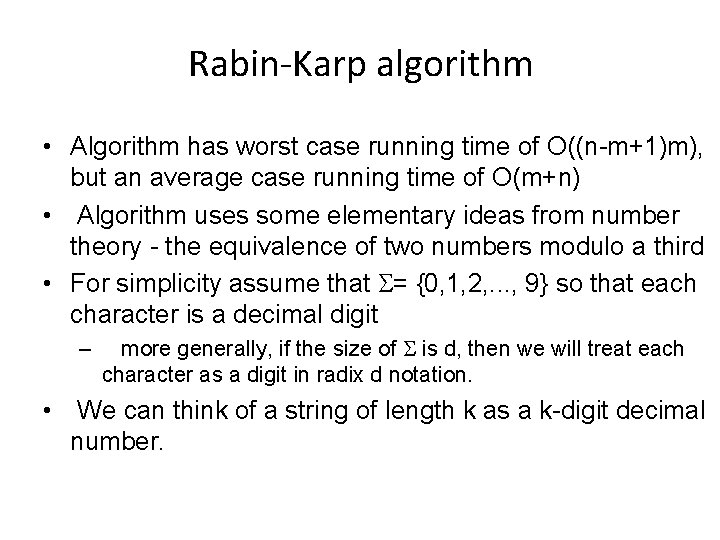

Rabin-Karp algorithm • Algorithm has worst case running time of O((n-m+1)m), but an average case running time of O(m+n) • Algorithm uses some elementary ideas from number theory - the equivalence of two numbers modulo a third • For simplicity assume that Σ = {0, 1, 2, ..., 9} so that each character is a decimal digit – more generally, if the size of Σ is d, then we will treat each character as a digit in radix d notation. • We can think of a string of length k as a k-digit decimal number.

Rabin Karp algorithm • Example - the string of characters 354123 corresponds to the number 354,123 • Given a pattern P[1..m], let p be the decimal number corresponding to the m-character pattern • Given a text T[1..n], let t_s denote the decimal value of the m-character substring T[s+1..s+m], for s = 0, 1, ..., n-m • Clearly, t_s = p if and only if P[1..m] = T[s+1..s+m] • So, if we could compute p in O(m) time and compute all of the t_s in O(n) time, then in O(n) time we can compare all of the shifts with p and find all matching locations. • Of course, the numbers may get very large, but we'll solve that problem in 2-3 slides

![P --> p • p = P[m] + 10 x P[m-1] + 10 2 P --> p • p = P[m] + 10 x P[m-1] + 10 2](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-10.jpg)

P --> p • p = P[m] + 10 × P[m-1] + 10^2 × P[m-2] + ... + 10^(m-1) × P[1] • Computing this in a brute force way would require O(m^2) operations to compute all of the powers of 10. • But notice that we can eliminate one multiplication by 10 in all terms (but the first) by factoring the 10 out: • p = P[m] + 10 (P[m-1] + 10 × P[m-2] + ... + 10^(m-2) × P[1]) • Applying this factoring rule repeatedly leads to only a linear number, in m, of multiplications.

Rabin-Karp • Computing p in O(m) time. We use what is called Horner's rule • p = P[m] + 10(P[m-1] + 10(P[m-2] + ... + 10(P[2] + 10 P[1]) ... )) • Example: P = 3276; P[4] = 6, P[3] = 7, P[2] = 2, P[1] = 3 • p = 6 + 10(7 + 10(2 + 10(3))) = 6 + 10(7 + 10(32)) = 6 + 10(327) = 6 + 3270 = 3276 • t_0 can be computed from T[1..m] using the same method
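As a small illustration of Horner's rule, the following Python sketch evaluates a digit string with one multiplication and one addition per character. The name `horner_value` and the `radix` parameter are assumptions introduced here, not part of the slides.

```python
def horner_value(digits: str, radix: int = 10) -> int:
    """Evaluate a digit string as a number using Horner's rule."""
    value = 0
    for ch in digits:                     # leftmost digit P[1] first
        value = radix * value + int(ch)   # one multiply, one add per digit
    return value
```

For the slide's example, `horner_value("3276")` goes through 3, 32, 327 and ends at 3276, which are the same intermediate values as in the nested expression.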

Rabin Karp • The big question is then how to compute the remaining t_s in O(n-m) time - that is, with just a constant number of operations for each remaining t_s. • But there is a simple relationship between t_(s+1) and t_s: t_(s+1) = 10(t_s - 10^(m-1) T[s+1]) + T[s+m+1] • Here T[s+1] is the leading digit that leaves the window and T[s+m+1] is the digit that enters it. • Example: Suppose T = 314152 and the length of the pattern is 5. Then t_0 = 31,415. What is t_1? t_1 = 10(31,415 - 10^4 × 3) + 2 = 10(1,415) + 2 = 14,152
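The update rule can be checked with a few lines of Python. This is only a sketch: `next_window` and its parameter names are hypothetical, and it works on plain integers without the modulus that is introduced later.

```python
def next_window(t_s: int, leaving: int, entering: int, m: int, radix: int = 10) -> int:
    """Slide an m-digit window one position to the right.

    leaving  -- the leading digit that drops out of the window
    entering -- the digit that comes in at the low-order end
    """
    high = radix ** (m - 1)               # the precomputed constant 10^(m-1)
    return radix * (t_s - high * leaving) + entering
```

With the slide's numbers, `next_window(31415, 3, 2, 5)` removes the leading 3, shifts the rest left by one digit and appends 2.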

Rabin Karp • We precompute the constant 10^(m-1) (which can be done in O(log m) time) so each update of a t_s takes a constant number of operations. • So, p and all of the t_s can be computed in O(m+n) time.

Rabin Karp • So - what is the problem? Well, if P is a long pattern, or if the size of the alphabet is large, then p and the t_s may be very large numbers, and each arithmetic operation will not take constant time - the numbers might require many words of memory to represent

Rabin Karp • Solution - compute p and the t_s modulo a suitable modulus q. • Horner's rule and the simple updating rule still work using mod q arithmetic • q is large and prime • q is chosen such that 10q just fits within one computer word. This allows all of the arithmetic operations to be performed using single-precision arithmetic, while keeping q as large as possible • For an alphabet with d symbols, q is chosen so that dq fits within one word.

Rabin Karp • The catch is that p ≡ t_s (mod q) is no longer a sufficient condition for deciding that P matches a substring of T. • What if two values collide? – As with hash functions, two or more different strings can produce the same “hit” value – any hit has to be tested to verify that it is not spurious and that P[1..m] = T[s+1..s+m]
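Putting the pieces together (Horner's rule, the rolling update, arithmetic mod q, and verification of every hit) might look like the Python sketch below. The defaults `d = 256` and `q = 1_000_003` are assumptions chosen for illustration, characters are mapped to numbers with `ord`, and shifts are 0-based.

```python
def rabin_karp(text: str, pattern: str, d: int = 256, q: int = 1_000_003) -> list[int]:
    """Return all 0-based shifts where pattern occurs in text."""
    n, m = len(text), len(pattern)
    if m == 0 or m > n:
        return []
    h = pow(d, m - 1, q)                  # d^(m-1) mod q, precomputed once
    p = t = 0
    for i in range(m):                    # Horner's rule, mod q
        p = (d * p + ord(pattern[i])) % q
        t = (d * t + ord(text[i])) % q
    shifts = []
    for s in range(n - m + 1):
        # Equal hashes are only a "hit"; compare the strings to rule out
        # a spurious one.
        if p == t and text[s:s + m] == pattern:
            shifts.append(s)
        if s < n - m:                     # roll the window one position
            t = (d * (t - ord(text[s]) * h) + ord(text[s + m])) % q
    return shifts
```

Python integers never overflow, so the word-size constraint on q does not apply here; in a fixed-width language q would have to be chosen as described above.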

The Calculations

What is a worst case? • Let P be a string of length m of all a's • Let T be a string of length n of all a's • Then all substrings of length m of T will have the same "key" as P, and all will have to be checked. • But we know that if a substring of T matches P, to determine if the "next" substring matches we only have to perform one additional comparison. • So, the Rabin Karp algorithm can do a lot of unnecessary work. • In practice, though, it doesn't.

The Algorithm

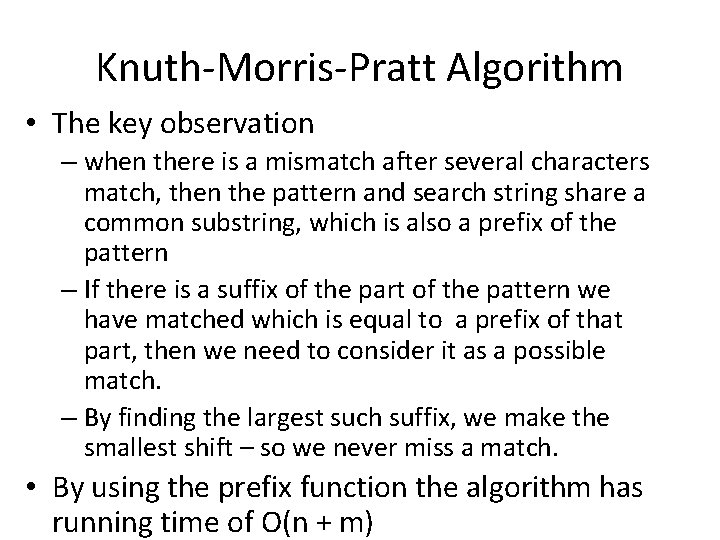

Knuth-Morris-Pratt Algorithm • The key observation – when there is a mismatch after several characters match, then the pattern and search string share a common substring, which is also a prefix of the pattern – If there is a suffix of the part of the pattern we have matched which is equal to a prefix of that part, then we need to consider it as a possible match. – By finding the largest such suffix, we make the smallest shift – so we never miss a match. • By using the prefix function the algorithm has running time of O(n + m)

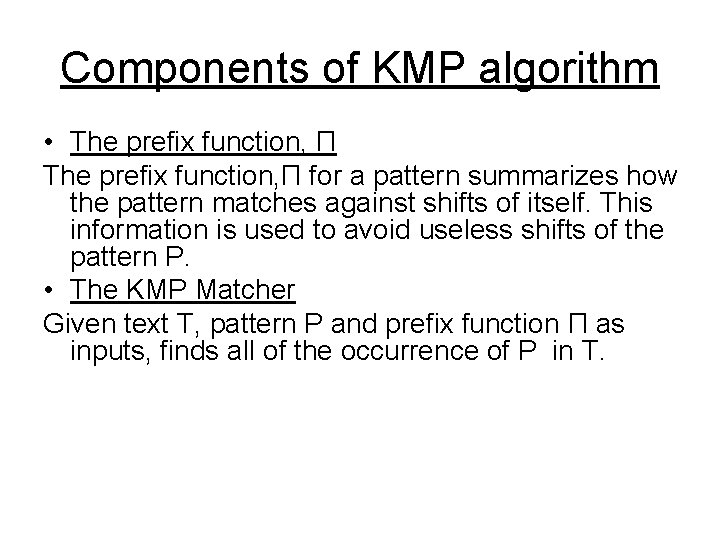

Components of KMP algorithm • The prefix function, Π: the prefix function Π for a pattern summarizes how the pattern matches against shifts of itself. This information is used to avoid useless shifts of the pattern P. • The KMP Matcher: given text T, pattern P and prefix function Π as inputs, it finds all of the occurrences of P in T.

![The prefix function, Π Compute-Prefix-Function (p) 1 m length[p] //’p’ pattern to be matched The prefix function, Π Compute-Prefix-Function (p) 1 m length[p] //’p’ pattern to be matched](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-22.jpg)

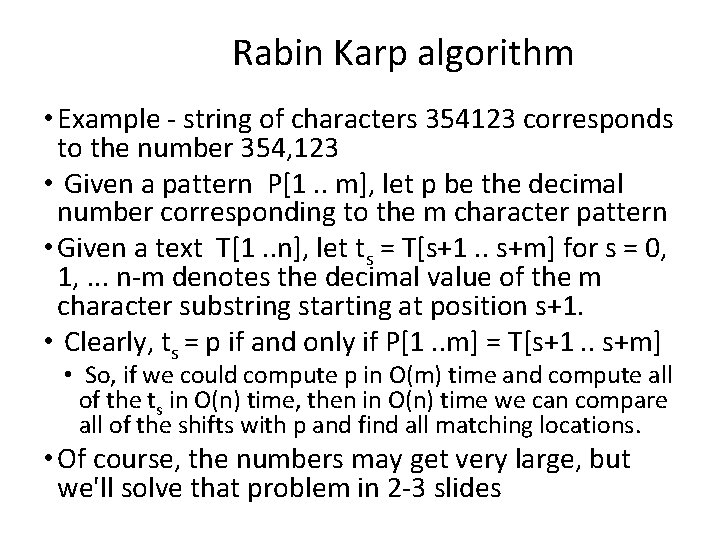

The prefix function, Π

    Compute-Prefix-Function(p)
    1  m ← length[p]          // p is the pattern to be matched
    2  Π[1] ← 0
    3  k ← 0
    4  for q ← 2 to m
    5      do while k > 0 and p[k+1] != p[q]
    6             do k ← Π[k]
    7         if p[k+1] = p[q]
    8            then k ← k + 1
    9         Π[q] ← k
    10 return Π
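A direct Python transcription of the prefix-function pseudocode is sketched below. It is 0-based, so `pi[q]` here plays the role of Π[q+1] on the slide; the function name is an assumption.

```python
def compute_prefix_function(p: str) -> list[int]:
    """pi[q] = length of the longest proper prefix of p[:q+1]
    that is also a suffix of p[:q+1]."""
    m = len(p)
    pi = [0] * m
    k = 0                                 # length of the current border
    for q in range(1, m):
        while k > 0 and p[k] != p[q]:     # fall back to a shorter border
            k = pi[k - 1]
        if p[k] == p[q]:                  # the border can be extended
            k += 1
        pi[q] = k
    return pi
```

For the pattern `ababaca` this returns `[0, 0, 1, 2, 3, 0, 1]`.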

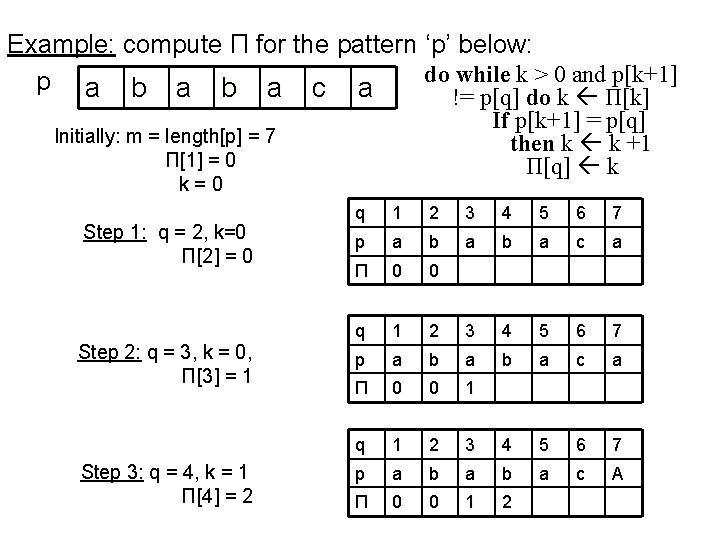

Example: compute Π for the pattern p = a b a b a c a. Initially: m = length[p] = 7, Π[1] = 0, k = 0. • Step 1: q = 2, k = 0, Π[2] = 0 • Step 2: q = 3, k = 0, Π[3] = 1 • Step 3: q = 4, k = 1, Π[4] = 2

| q | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| p | a | b | a | b | a | c | a |
| Π | 0 | 0 | 1 | 2 |   |   |   |

![do while k > 0 and p[k+1] != p[q] do k Π[k] If p[k+1] do while k > 0 and p[k+1] != p[q] do k Π[k] If p[k+1]](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-24.jpg)

• Step 4: q = 5, k = 2, Π[5] = 3 • Step 5: q = 6, k = 3; p[4] != p[6], so k falls back to Π[3] = 1 and then to Π[1] = 0, giving Π[6] = 0 • Step 6: q = 7, k = 0; p[1] = p[7], so Π[7] = 1

| q | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| p | a | b | a | b | a | c | a |
| Π | 0 | 0 | 1 | 2 | 3 | 0 | 1 |

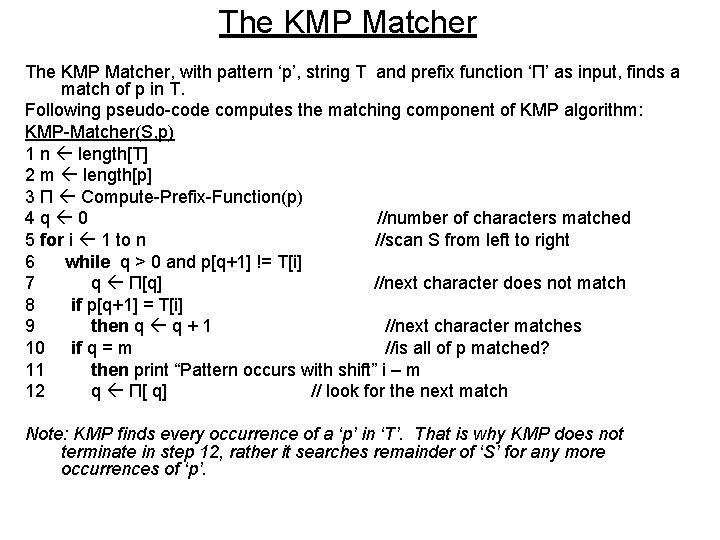

The KMP Matcher, with pattern p, text T and prefix function Π as input, finds the occurrences of p in T. The following pseudo-code computes the matching component of the KMP algorithm:

    KMP-Matcher(T, p)
    1  n ← length[T]
    2  m ← length[p]
    3  Π ← Compute-Prefix-Function(p)
    4  q ← 0                               // number of characters matched
    5  for i ← 1 to n                      // scan T from left to right
    6      do while q > 0 and p[q+1] != T[i]
    7             do q ← Π[q]              // next character does not match
    8         if p[q+1] = T[i]
    9            then q ← q + 1            // next character matches
    10        if q = m                     // is all of p matched?
    11           then print “Pattern occurs with shift” i – m
    12                q ← Π[q]             // look for the next match

Note: KMP finds every occurrence of p in T. That is why KMP does not terminate in step 12; rather, it searches the remainder of T for any more occurrences of p.
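The matcher can be sketched in Python as follows. The function name `kmp_search` is an assumption, indices are 0-based, and the prefix function is recomputed inline so that the block runs on its own.

```python
def kmp_search(text: str, pattern: str) -> list[int]:
    """Return all 0-based shifts where pattern occurs in text."""
    m = len(pattern)
    if m == 0:
        return []
    # Prefix function of the pattern (0-based).
    pi = [0] * m
    k = 0
    for j in range(1, m):
        while k > 0 and pattern[k] != pattern[j]:
            k = pi[k - 1]
        if pattern[k] == pattern[j]:
            k += 1
        pi[j] = k
    shifts = []
    q = 0                                 # number of characters matched
    for i, ch in enumerate(text):         # scan the text left to right
        while q > 0 and pattern[q] != ch:
            q = pi[q - 1]                 # next character does not match
        if pattern[q] == ch:
            q += 1                        # next character matches
        if q == m:                        # all of the pattern matched
            shifts.append(i - m + 1)
            q = pi[q - 1]                 # look for the next match
    return shifts
```

The text index `i` never moves backwards; only `q` falls back through the prefix function, which is where the linear running time comes from.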

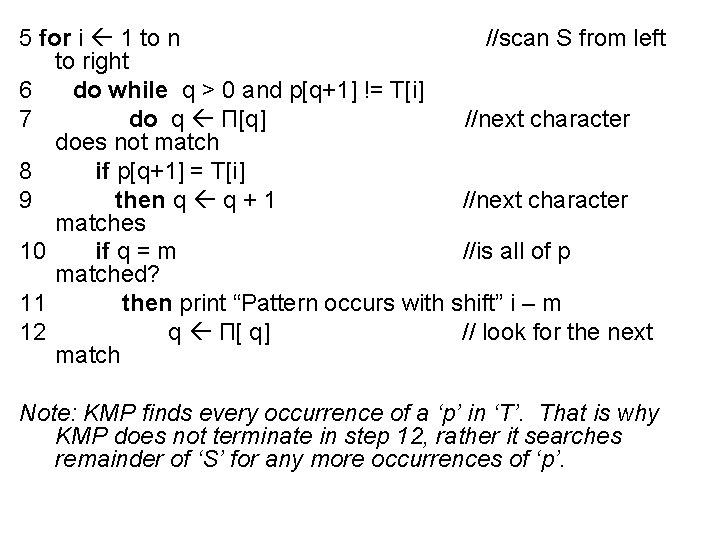

Illustration: given a string T and pattern p as follows: T = b a c b a b a b a b a c a a b, p = a b a b a c a. Simulate the KMP algorithm to find whether p occurs in T. For p the prefix function Π was computed previously and is as follows:

| q | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| p | a | b | a | b | a | c | a |
| Π | 0 | 0 | 1 | 2 | 3 | 0 | 1 |

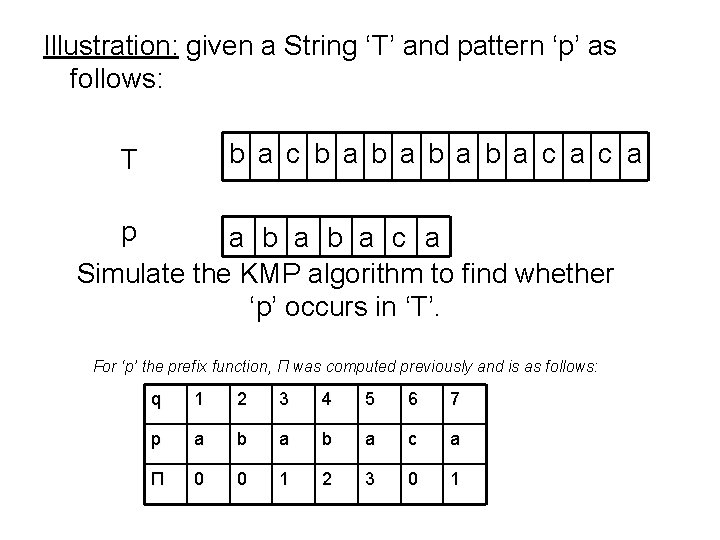

Initially: n = size of T = 15; m = size of p = 7. • Step 1: i = 1, q = 0. Comparing p[1] with T[1]: p[1] does not match T[1], so p is shifted one position to the right. • Step 2: i = 2, q = 0. Comparing p[1] with T[2]: p[1] matches T[2]. Since there is a match, p is not shifted.

![Step 3: i = 3, q = 1 Comparing p[2] with T[3] T p Step 3: i = 3, q = 1 Comparing p[2] with T[3] T p](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-29.jpg)

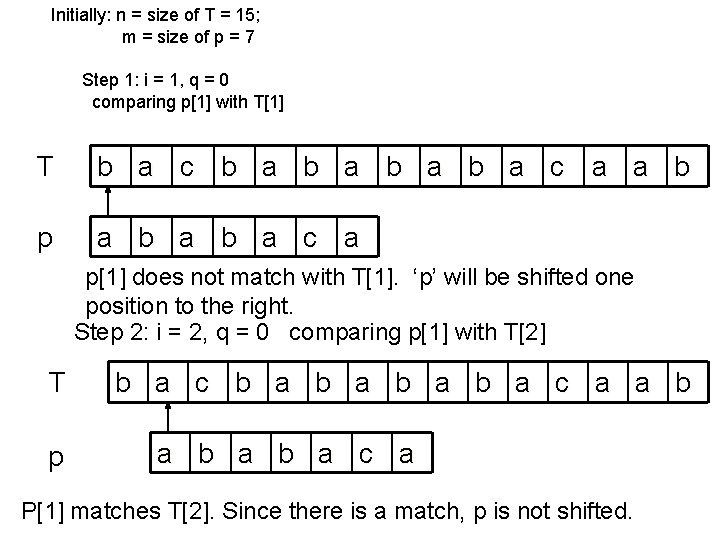

• Step 3: i = 3, q = 1. Comparing p[2] with T[3]: p[2] does not match T[3]. Backtracking on p (q = Π[1] = 0) and comparing p[1] with T[3], which does not match either. • Step 4: i = 4, q = 0. Comparing p[1] with T[4]: p[1] does not match T[4].

![Step 5: i = 5, q = 0 comparing p[1] with T[5] p[1] matches Step 5: i = 5, q = 0 comparing p[1] with T[5] p[1] matches](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-30.jpg)

• Step 5: i = 5, q = 0. Comparing p[1] with T[5]: p[1] matches T[5].

![Step 6: i = 6, q = 1 Comparing p[2] with T[6] p[2] matches Step 6: i = 6, q = 1 Comparing p[2] with T[6] p[2] matches](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-31.jpg)

• Step 6: i = 6, q = 1. Comparing p[2] with T[6]: p[2] matches T[6]. • Step 7: i = 7, q = 2. Comparing p[3] with T[7]: p[3] matches T[7]. • Step 8: i = 8, q = 3. Comparing p[4] with T[8]: p[4] matches T[8].

![Step 9: i = 9, q = 4 Comparing p[5] with T[9] p[5] matches Step 9: i = 9, q = 4 Comparing p[5] with T[9] p[5] matches](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-32.jpg)

• Step 9: i = 9, q = 4. Comparing p[5] with T[9]: p[5] matches T[9]. • Step 10: i = 10, q = 5. Comparing p[6] with T[10]: p[6] doesn’t match T[10]. Backtracking on p, compare p[4] with T[10], because after the mismatch q = Π[5] = 3. p[4] matches T[10], so q becomes 4.

![Step 11: i = 11, q = 4 Comparing p[5] with T[11] T p Step 11: i = 11, q = 4 Comparing p[5] with T[11] T p](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-33.jpg)

• Step 11: i = 11, q = 4. Comparing p[5] with T[11]: p[5] matches T[11].

![Step 12: i = 12, q = 5 Comparing p[6] with T[12] T p Step 12: i = 12, q = 5 Comparing p[6] with T[12] T p](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-34.jpg)

• Step 12: i = 12, q = 5. Comparing p[6] with T[12]: p[6] matches T[12]. • Step 13: i = 13, q = 6. Comparing p[7] with T[13]: p[7] matches T[13]. Pattern p has been found to occur completely in string T. The pattern occurs with shift i – m = 13 – 7 = 6.

![Run - time analysis • Compute-Prefix-Function (Π) 1 m length[p] //’p’ pattern to be Run - time analysis • Compute-Prefix-Function (Π) 1 m length[p] //’p’ pattern to be](http://slidetodoc.com/presentation_image_h2/cc6586d03af79ad16a7cf6b348c0fbcc/image-35.jpg)

Run-time analysis • Compute-Prefix-Function (Π): in the pseudocode for computing the prefix function, steps 1 to 3 take constant time and the for loop in steps 4 to 9 runs m – 1 times. The inner while loop only ever decreases k, and k is increased at most once per iteration of the for loop, so the while loop executes O(m) times in total. Hence the running time of Compute-Prefix-Function is Θ(m). • KMP Matcher: steps 1, 2 and 4 take constant time, and step 3 is the Θ(m) call to Compute-Prefix-Function. The for loop beginning in step 5 runs n times, i.e., once per character of the text T. By the same argument as above, q is increased at most once per iteration, so the while loop in steps 6 and 7 executes at most n times in total. Thus the running time of the matching loop is Θ(n), and the whole algorithm runs in Θ(n + m).

Boyer-Moore Algorithm • For longer patterns and a large alphabet Σ it is typically the most efficient of these algorithms in practice • What it does: – Compares characters from right to left – Uses a “bad character” rule – Adds a “good suffix” rule – These two rules generate two different shift values; the larger of the two values is chosen • In some cases Boyer-Moore can run in sub-linear time, which means it may not be necessary to check all of the characters in the search text!

Boyer and Moore

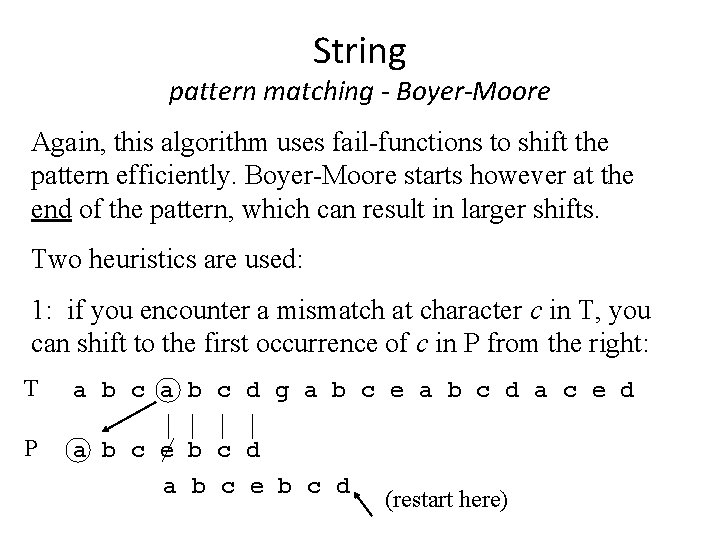

String pattern matching - Boyer-Moore • Again, this algorithm uses fail-functions to shift the pattern efficiently. However, Boyer-Moore starts comparing at the end of the pattern, which can result in larger shifts. Two heuristics are used: • 1: if you encounter a mismatch at character c in T, you can shift P so that the first occurrence of c in P, counting from the right, lines up with it. Ex: T = a b c d g a b c e a b c d a c e d, P = a b c e b c d (the slide marks where comparison restarts after the shift)
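The first heuristic alone already gives a working matcher. The Python sketch below implements only the bad-character rule, deliberately leaves out the second heuristic, and shifts by one after a full match; the name `bm_bad_character` and the 0-based shifts are assumptions.

```python
def bm_bad_character(text: str, pattern: str) -> list[int]:
    """Boyer-Moore search using only the bad-character rule."""
    n, m = len(text), len(pattern)
    if m == 0 or m > n:
        return []
    # Rightmost index of every character in the pattern.
    last = {ch: i for i, ch in enumerate(pattern)}
    shifts = []
    s = 0
    while s <= n - m:
        j = m - 1
        while j >= 0 and pattern[j] == text[s + j]:   # compare right to left
            j -= 1
        if j < 0:
            shifts.append(s)
            s += 1                        # conservative shift after a match
        else:
            # Align the rightmost occurrence of the mismatched text
            # character with position s + j; always move at least one step.
            s += max(1, j - last.get(text[s + j], -1))
    return shifts
```

When the mismatched text character does not occur in the pattern at all, the shift is j + 1, which is where the sub-linear behaviour on large alphabets comes from.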

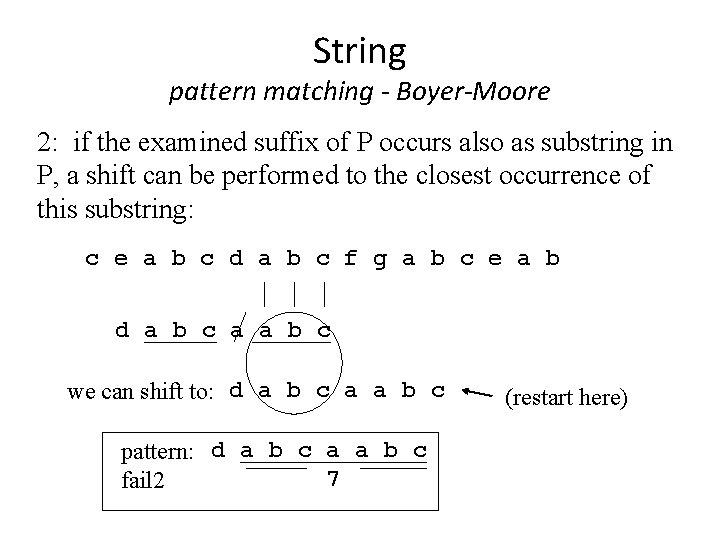

String pattern matching - Boyer-Moore • 2: if the examined suffix of P also occurs as a substring elsewhere in P, a shift can be performed to the closest occurrence of this substring. Ex: T = c e a b c d a b c f g a b c e a b, pattern P = d a b c a a b c; the slide shows the position P can be shifted to (restart here) and marks the fail2 value 7 for this pattern.

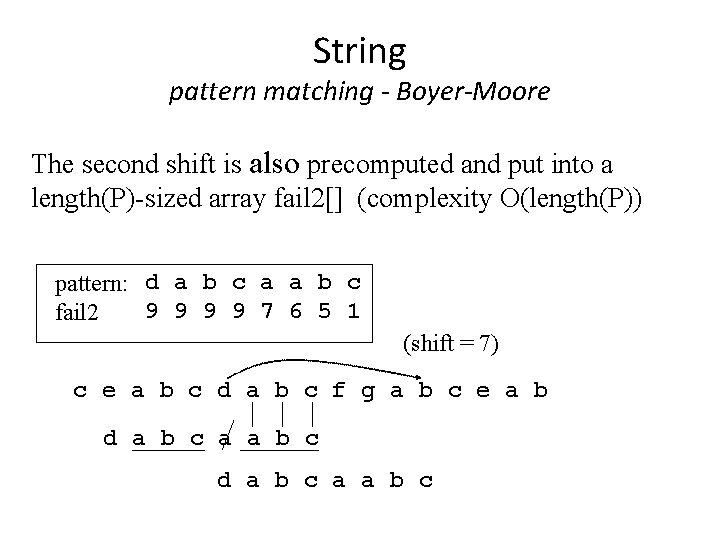

String pattern matching - Boyer-Moore • The second shift is also precomputed and put into a length(P)-sized array fail2[] (complexity O(length(P))). Ex: pattern P = d a b c a a b c, with the fail2 values 9 9 7 6 5 1 shown on the slide (shift = 7); T = c e a b c d a b c f g a b c e a b.

• If lines 12 and 13 were changed to then we would have a naïve-string matcher

Suffix trees

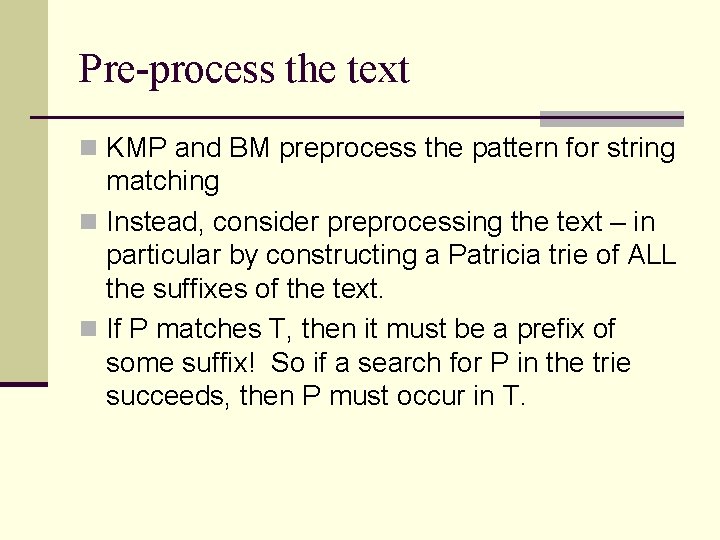

Pre-process the text • KMP and BM preprocess the pattern for string matching • Instead, consider preprocessing the text – in particular by constructing a Patricia trie of ALL the suffixes of the text. • If P matches T, then it must be a prefix of some suffix! So if a search for P in the trie succeeds, then P must occur in T.

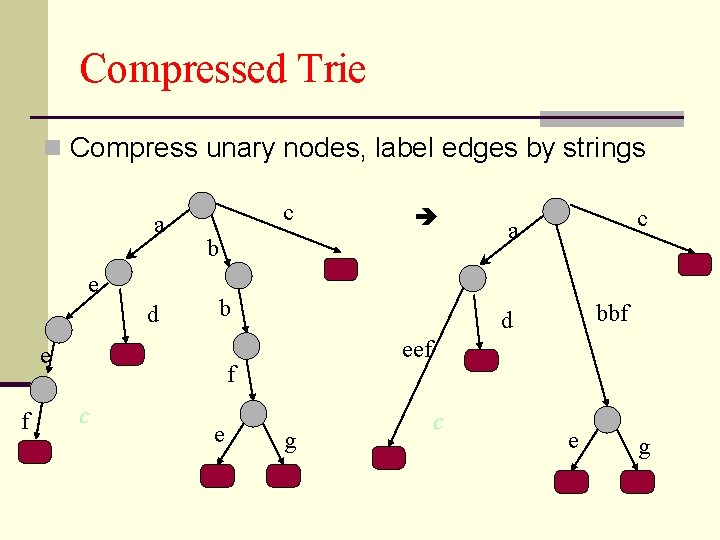

Compressed Trie • Compress unary nodes, label edges by strings (Figure: an example trie and its compressed form, whose edges carry string labels such as bbf and eef)

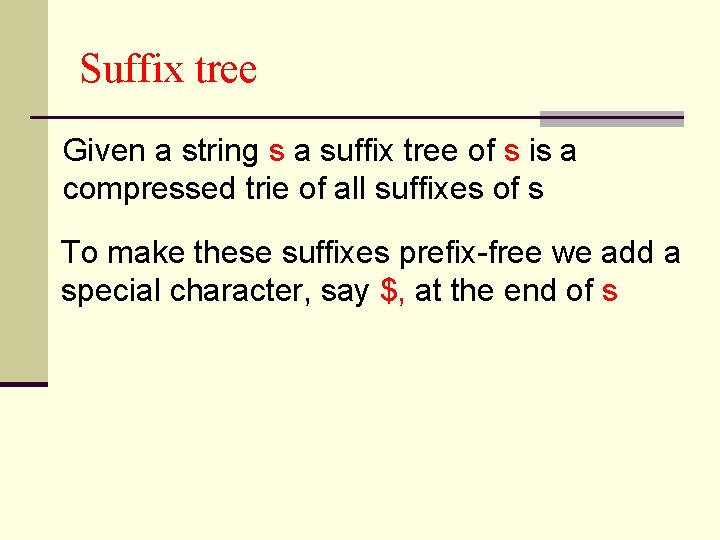

Suffix tree • Given a string s, a suffix tree of s is a compressed trie of all suffixes of s • To make these suffixes prefix-free we add a special character, say $, at the end of s

Suffix tree (Example) • Let s = abab. A suffix tree of s is a compressed trie of all suffixes of s$ = abab$: { $, b$, ab$, bab$, abab$ }

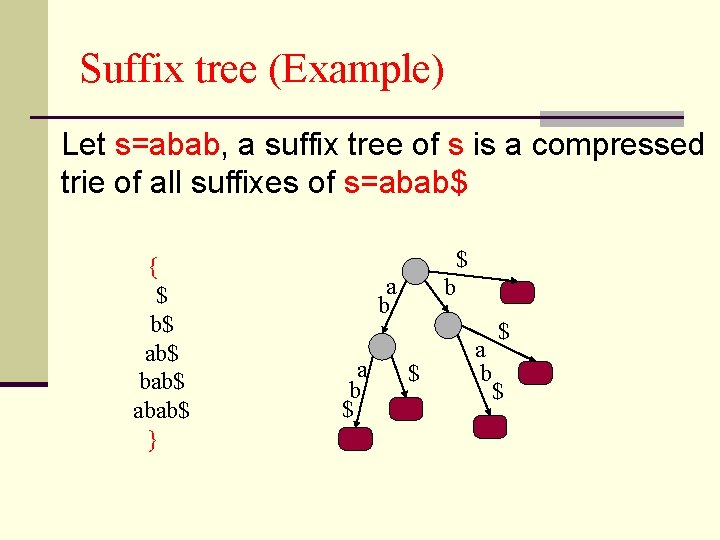

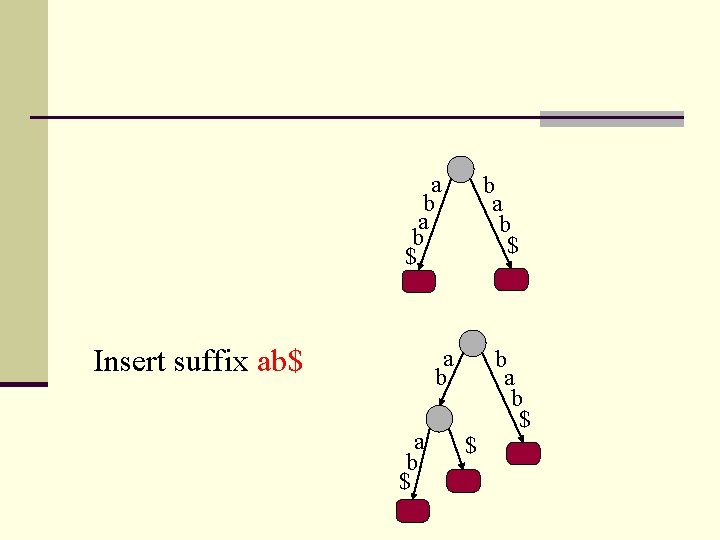

Trivial algorithm to build a Suffix tree • Insert the longest suffix, abab$ • Insert the suffix bab$ • Insert the suffix ab$

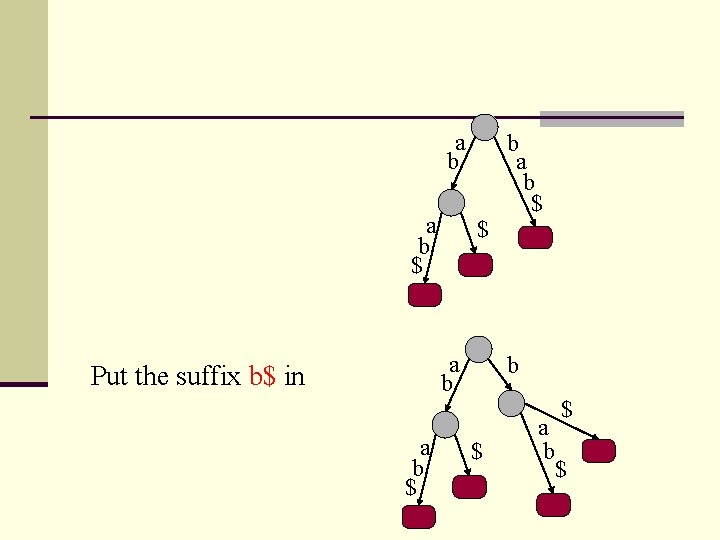

• Put the suffix b$ in

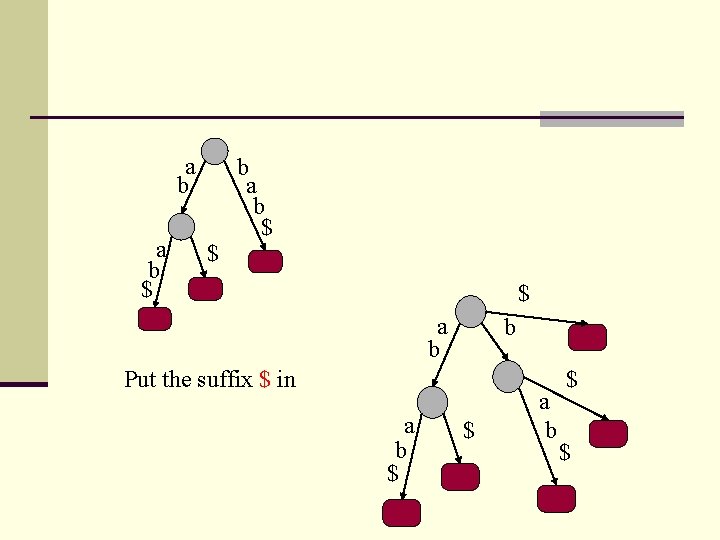

• Put the suffix $ in

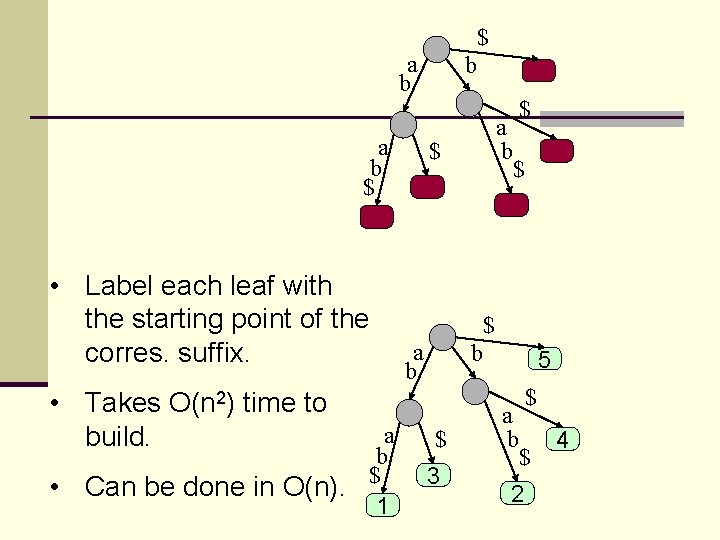

• Label each leaf with the starting point of the corresponding suffix (here the leaves are labeled 1 to 5). • The trivial algorithm takes O(n^2) time to build the tree. • It can be done in O(n).

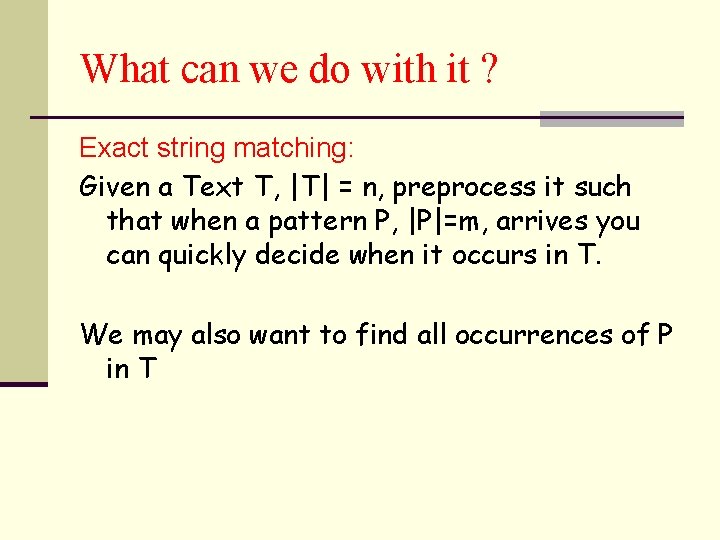

What can we do with it? • Exact string matching: given a text T, |T| = n, preprocess it such that when a pattern P, |P| = m, arrives you can quickly decide whether it occurs in T. • We may also want to find all occurrences of P in T

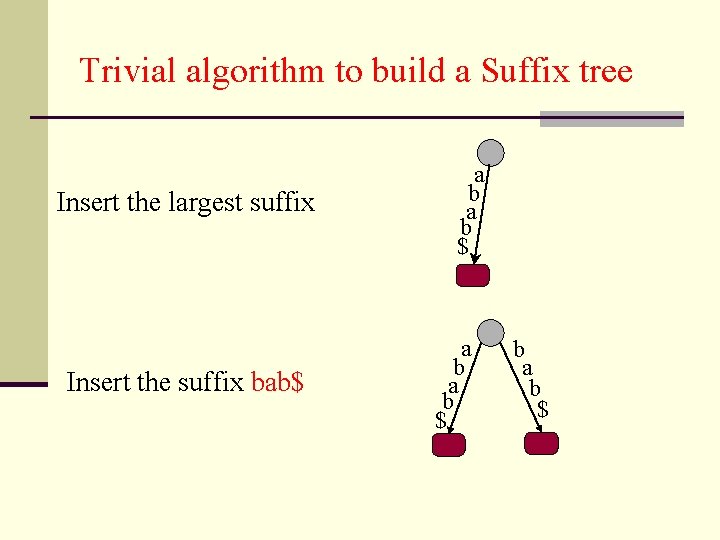

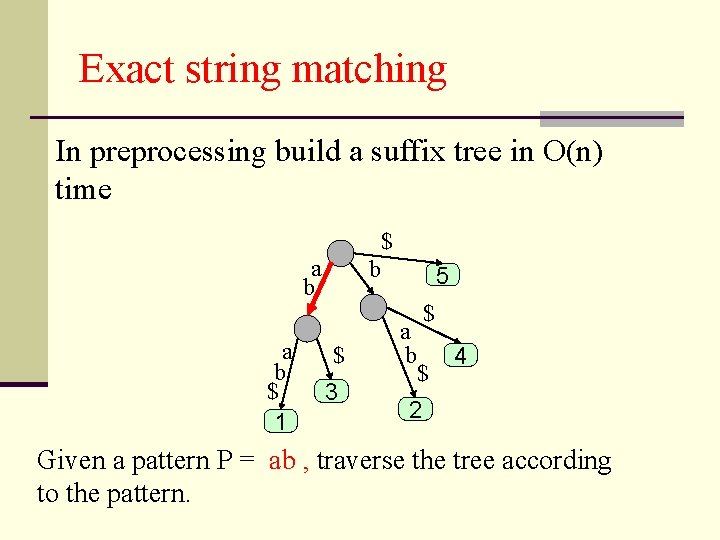

Exact string matching • In preprocessing, build a suffix tree in O(n) time • Given a pattern P = ab, traverse the tree according to the pattern.

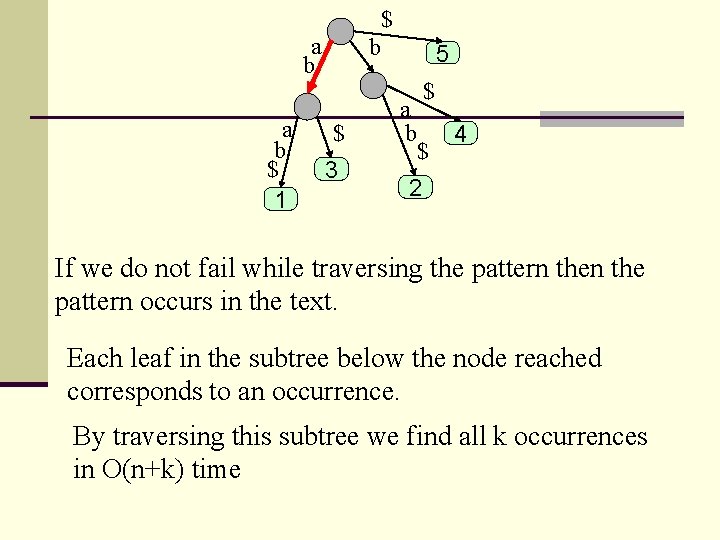

• If we do not fail while traversing, the pattern occurs in the text. • Each leaf in the subtree below the node reached corresponds to an occurrence. • By traversing this subtree we find all k occurrences in O(m+k) time, where m = |P|
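The search procedure can be tried out on a simplified structure. The Python sketch below builds an uncompressed suffix trie with the trivial quadratic algorithm, not a linear-time compressed suffix tree, and the names `build_suffix_trie`, `occurrences` and the `"leaf"` key are assumptions made for this illustration. It also assumes the text does not contain `$`.

```python
def build_suffix_trie(s: str) -> dict:
    """Uncompressed trie of all suffixes of s + '$' (nested dicts)."""
    s += "$"
    root: dict = {}
    for start in range(len(s)):           # insert each suffix in turn
        node = root
        for ch in s[start:]:
            node = node.setdefault(ch, {})
        node["leaf"] = start + 1          # 1-based starting point
    return root


def occurrences(root: dict, pattern: str) -> list[int]:
    """1-based starting points of every occurrence of pattern."""
    node = root
    for ch in pattern:                    # traverse according to the pattern
        if ch not in node:
            return []                     # we failed: no occurrence
        node = node[ch]
    found, stack = [], [node]
    while stack:                          # collect leaves below the node
        cur = stack.pop()
        for key, child in cur.items():
            if key == "leaf":
                found.append(child)
            else:
                stack.append(child)
    return sorted(found)
```

For s = abab and P = ab the traversal ends at a node with two leaves below it, labeled 1 and 3.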

Drawbacks of suffix trees • Suffix trees consume a lot of space • The space is O(n), but the constant is quite big • Recall that if we want to traverse an edge in O(1) time then we need an array of pointers of size |Σ| in each node

Suffix array • We lose some of the functionality but save space. • Let s = abab • Sort the suffixes lexicographically: ab, abab, b, bab • The suffix array gives the indices of the suffixes in sorted order: 3, 1, 4, 2 (1-based starting positions)

Suffix array construction – naïve algorithm • Naive in-place construction • Similar to insertion sort • Insert all the suffixes into the array one by one • Running time complexity: O(n^2) suffix comparisons, where n is the length of the string

How do we build it fast? • Build a suffix tree • Traverse the tree using DFS, following the outgoing edges of each node in lexicographic order and filling the suffix array • O(n) time

How do we search for a pattern? • If P occurs in T then all its occurrences are consecutive in the suffix array. • Do a binary search on the suffix array • Takes O(m log n) time
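A compact Python sketch of both steps follows. It builds the array by sorting the suffixes directly (a naive construction, not the linear-time one via a suffix tree) and then runs two binary searches to find the consecutive block of matching suffixes. The function names are assumptions, indices are 0-based, and no `$` terminator is appended.

```python
def build_suffix_array(s: str) -> list[int]:
    """0-based start positions of the suffixes of s in sorted order."""
    return sorted(range(len(s)), key=lambda i: s[i:])


def find_occurrences(s: str, sa: list[int], pattern: str) -> list[int]:
    """0-based start positions of pattern in s, via binary search on sa."""
    m = len(pattern)
    lo, hi = 0, len(sa)
    while lo < hi:                        # first suffix with m-prefix >= pattern
        mid = (lo + hi) // 2
        if s[sa[mid]:sa[mid] + m] < pattern:
            lo = mid + 1
        else:
            hi = mid
    first = lo
    hi = len(sa)
    while lo < hi:                        # first suffix with m-prefix > pattern
        mid = (lo + hi) // 2
        if s[sa[mid]:sa[mid] + m] <= pattern:
            lo = mid + 1
        else:
            hi = mid
    return sorted(sa[first:lo])           # the matches are sa[first:lo]
```

Each binary search makes O(log n) comparisons of at most m characters, which matches the O(m log n) bound. For s = abab the array is `[2, 0, 3, 1]`, the 0-based form of 3, 1, 4, 2.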

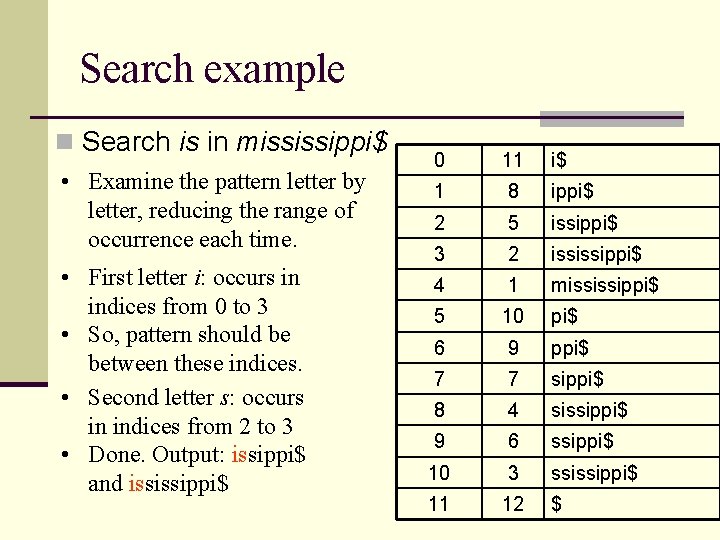

Search example • Search for the pattern is in mississippi$ • Examine the pattern letter by letter, reducing the range of occurrence each time.

| Index | Suffix start | Suffix |
|---|---|---|
| 0 | 11 | i$ |
| 1 | 8 | ippi$ |
| 2 | 5 | issippi$ |
| 3 | 2 | ississippi$ |
| 4 | 1 | mississippi$ |
| 5 | 10 | pi$ |
| 6 | 9 | ppi$ |
| 7 | 7 | sippi$ |
| 8 | 4 | sissippi$ |
| 9 | 6 | ssippi$ |
| 10 | 3 | ssissippi$ |
| 11 | 12 | $ |

• First letter i: occurs in indices from 0 to 3 • So, the pattern should be between these indices. • Second letter s: occurs in indices from 2 to 3 • Done. Output: issippi$ and ississippi$
