- Slides: 19

Segmentation via Maximum Entropy Model

Goals

- Is it possible to learn the segmentation problem automatically?
- Use a model that is frequently used in NLP tasks: the Maximum Entropy model.
- Find features suited to the segmentation task.

Segmentation as a tagging problem

- Assign each pixel one of two tags: it either lies on an edge between segments or it does not.
- Use a tagged set of images to build a statistical model.
- Given a new image, use this model to find the most probable tag for each pixel.
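The tagging step can be illustrated with a minimal sketch. It assumes the tagged images come as segmentation maps with one segment label per pixel, and that a pixel counts as an edge when its right or lower neighbor belongs to a different segment. Both the rule and the name `edge_tags` are assumptions made for illustration, not necessarily the procedure used in this work.

```python
def edge_tags(labels):
    """Tag a pixel 1 if its right or lower neighbor lies in a different segment, else 0.

    `labels` is a list of rows, each row a list of segment ids (hypothetical input format).
    """
    rows, cols = len(labels), len(labels[0])
    tags = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            right_differs = c + 1 < cols and labels[r][c + 1] != labels[r][c]
            below_differs = r + 1 < rows and labels[r + 1][c] != labels[r][c]
            tags[r][c] = int(right_differs or below_differs)
    return tags


# A 3x3 segmentation map with three segments (0, 1 and 2).
segments = [[0, 0, 1],
            [0, 0, 1],
            [2, 2, 1]]
tags = edge_tags(segments)
```

Each (pixel, tag) pair produced this way is one training event for the statistical model.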

Maximum Entropy

- We want to build a statistical model that makes as few assumptions as possible.
- Entropy is the uncertainty of a distribution: its level of “surprise”.
- So we need the statistical model with the highest possible entropy.
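As a small illustration of entropy as uncertainty, the sketch below computes the Shannon entropy (in bits) of three distributions over the two tags. The distributions are made-up examples. The uniform one, which assumes nothing about the tag, has the highest entropy.

```python
import math


def entropy(dist):
    """Shannon entropy in bits of a discrete distribution given as a list of probabilities."""
    return sum(-p * math.log2(p) for p in dist if p > 0)


h_uniform = entropy([0.5, 0.5])   # no preference between "edge" and "not edge"
h_skewed = entropy([0.9, 0.1])    # strong preference for one tag
h_certain = entropy([1.0, 0.0])   # no uncertainty at all
```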

Maximum Entropy - Definitions

- Event: a pair (p, t), where p is a pixel and t is its tag.
- Feature: an elementary piece of evidence that links p to t. A feature has a real value.
- The decision about a pixel is based only on the features active at that pixel.
- We look for the conditional probability P(t | p) of a tag t given the pixel p, computed from the features active at p.

Maximum Entropy – building the model

- We use a tagged training set to build the statistical distribution.
- Constraint on the distribution: the expectation of each feature under the model should equal its expectation in the training set.
- Objective: among all distributions that satisfy the constraints, choose the one with maximum entropy.
- This is an optimization problem that can be solved using Lagrange multipliers, which become the feature weights.
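The constraint can be checked on a toy problem. The sketch below is only an illustration under stated assumptions. The training values are invented. Each pixel is described by a hypothetical feature vector [bias, normalized gradient magnitude]. The weights are fitted by plain gradient ascent, which is a swapped-in technique and not necessarily the training procedure of the package used here. At the optimum, the model expectation of every feature matches its expectation in the training set.

```python
import math

# Toy tagged training set: (feature vector, tag); tag 1 = edge, tag 0 = not an edge.
# Feature vector = [bias, normalized gradient magnitude] (illustrative values only).
train = [([1.0, 0.1], 0), ([1.0, 0.2], 0), ([1.0, 0.3], 0), ([1.0, 0.4], 1),
         ([1.0, 0.6], 0), ([1.0, 0.7], 1), ([1.0, 0.8], 1), ([1.0, 0.9], 1)]
TAGS = (0, 1)
N_FEATS = 2


def tag_probs(weights, phi):
    """P(t | pixel), proportional to exp(sum_j weights[t][j] * phi[j])."""
    scores = [math.exp(sum(w * x for w, x in zip(weights[t], phi))) for t in TAGS]
    z = sum(scores)
    return [s / z for s in scores]


def empirical_expectation(data):
    """Average value of each (tag, feature) pair over the tagged training set."""
    expect = [[0.0] * N_FEATS for _ in TAGS]
    for phi, tag in data:
        for j in range(N_FEATS):
            expect[tag][j] += phi[j] / len(data)
    return expect


def model_expectation(weights, data):
    """Average value of each (tag, feature) pair when tags follow the model."""
    expect = [[0.0] * N_FEATS for _ in TAGS]
    for phi, _ in data:
        probs = tag_probs(weights, phi)
        for t in TAGS:
            for j in range(N_FEATS):
                expect[t][j] += probs[t] * phi[j] / len(data)
    return expect


def train_maxent(data, steps=5000, rate=1.0):
    """Gradient ascent on the log-likelihood; the gradient is empirical minus model expectation."""
    weights = [[0.0] * N_FEATS for _ in TAGS]
    target = empirical_expectation(data)
    for _ in range(steps):
        current = model_expectation(weights, data)
        for t in TAGS:
            for j in range(N_FEATS):
                weights[t][j] += rate * (target[t][j] - current[t][j])
    return weights


weights = train_maxent(train)
# Largest remaining mismatch between the training-set and model expectations.
gap = max(abs(e - m)
          for e_row, m_row in zip(empirical_expectation(train),
                                  model_expectation(weights, train))
          for e, m in zip(e_row, m_row))
p_edge_weak = tag_probs(weights, [1.0, 0.1])[1]     # pixel with a weak gradient
p_edge_strong = tag_probs(weights, [1.0, 0.9])[1]   # pixel with a strong gradient
```

The learned weights play the role of the Lagrange multipliers: one weight per (tag, feature) pair.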

opennlp maxent

- A machine learning package designed for NLP tasks.
- Used for training maximum entropy models.
- All that is left is to adapt it to work with images and to find a set of features suited to the segmentation task.

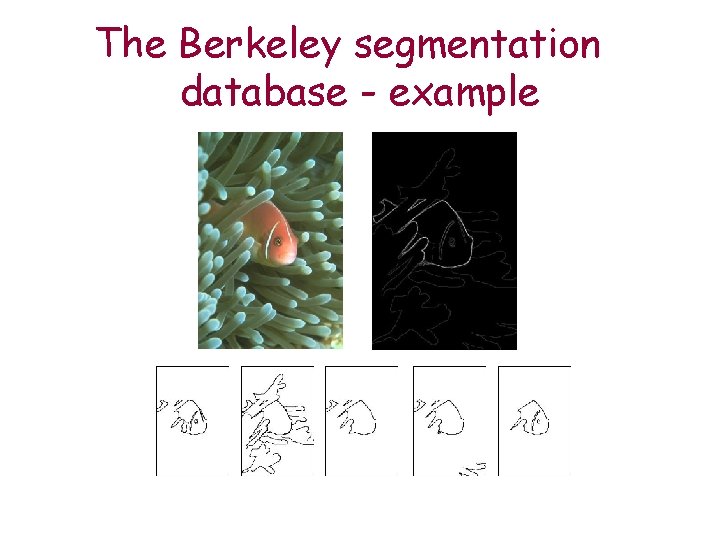

The Berkeley segmentation database

- Consists of 300 images and the corresponding segmentation maps.
- The segmentation maps were constructed by humans.
- The database is divided into a training set (200 images) and a test set (100 images).

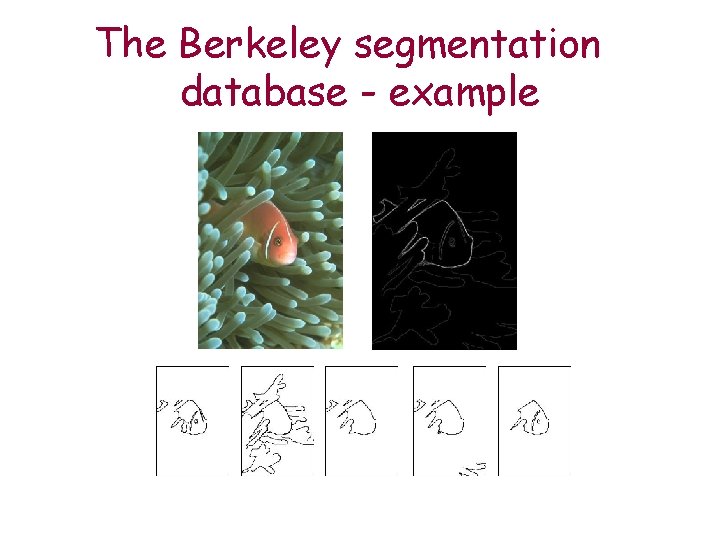

The Berkeley segmentation database - example

Features

- I focused on brightness and texture features (due to technical problems):
  - Brightness difference with the surrounding pixels.
  - Gradient magnitude using the Prewitt operators.
  - Canny edge detection results.
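The first two brightness features can be sketched for a single interior pixel as follows. The Prewitt masks are the standard ones. Defining the brightness difference as the mean absolute difference to the 8 neighbors is an assumption, since the slides do not give the exact formula. The helper names are hypothetical.

```python
import math

# Standard 3x3 Prewitt masks for horizontal and vertical brightness changes.
PREWITT_X = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
PREWITT_Y = [[-1, -1, -1], [0, 0, 0], [1, 1, 1]]


def neighborhood(img, r, c):
    """3x3 patch centered on (r, c); valid for interior pixels only."""
    return [[img[r + dr][c + dc] for dc in (-1, 0, 1)] for dr in (-1, 0, 1)]


def apply_mask(patch, mask):
    """Sum of element-wise products of a 3x3 patch and a 3x3 mask."""
    return sum(patch[i][j] * mask[i][j] for i in range(3) for j in range(3))


def prewitt_magnitude(img, r, c):
    """Gradient magnitude sqrt(gx^2 + gy^2) from the two Prewitt responses."""
    patch = neighborhood(img, r, c)
    return math.hypot(apply_mask(patch, PREWITT_X), apply_mask(patch, PREWITT_Y))


def brightness_difference(img, r, c):
    """Mean absolute brightness difference to the 8 neighbors (assumed definition)."""
    patch = neighborhood(img, r, c)
    center = patch[1][1]
    return sum(abs(v - center) for row in patch for v in row) / 8.0


# A dark region with a bright column on the right: a vertical edge.
image = [[10, 10, 200],
         [10, 10, 200],
         [10, 10, 200]]
grad = prewitt_magnitude(image, 1, 1)
diff = brightness_difference(image, 1, 1)
```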

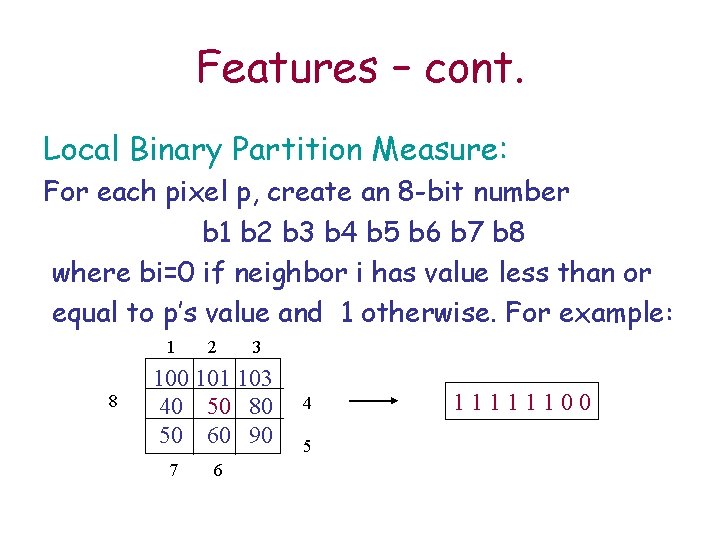

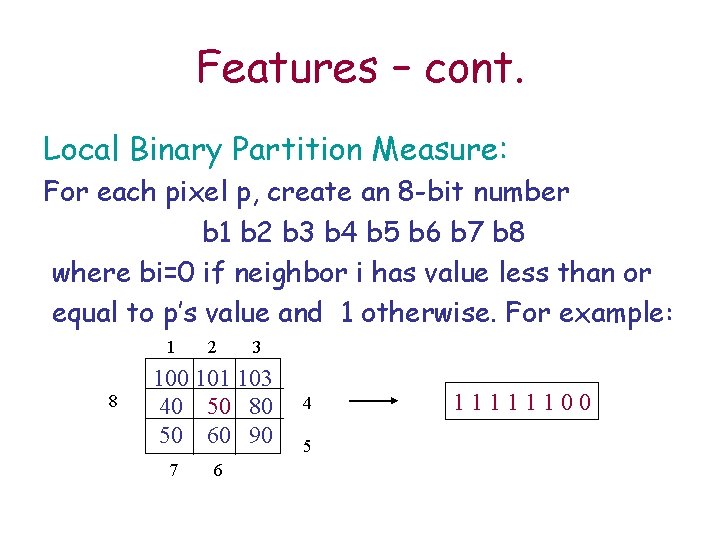

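Features – cont.

Local Binary Partition Measure: for each pixel p, create an 8-bit number b1 b2 b3 b4 b5 b6 b7 b8, where bi = 0 if neighbor i has a value less than or equal to p’s value and 1 otherwise.

For example, with the neighbors numbered clockwise from the top-left corner (top row 1 2 3, middle row 8 p 4, bottom row 7 6 5), the neighborhood with rows 100 101 103 / 40 50 80 / 50 60 90 around p = 50 gives 11111100.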
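The measure can be sketched in a few lines. The neighbor ordering, clockwise from the top-left corner, is inferred from the example on the slide. The function name `lbp_code` is hypothetical.

```python
# Neighbor offsets (row, col), clockwise from the top-left corner: positions 1..8.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_code(img, r, c):
    """8-bit string: bit i is 0 if neighbor i is <= the center value, 1 otherwise."""
    center = img[r][c]
    return "".join("0" if img[r + dr][c + dc] <= center else "1"
                   for dr, dc in OFFSETS)


# The neighborhood from the slide's example; the center pixel has value 50.
patch = [[100, 101, 103],
         [40, 50, 80],
         [50, 60, 90]]
code = lbp_code(patch, 1, 1)   # "11111100", as on the slide
```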

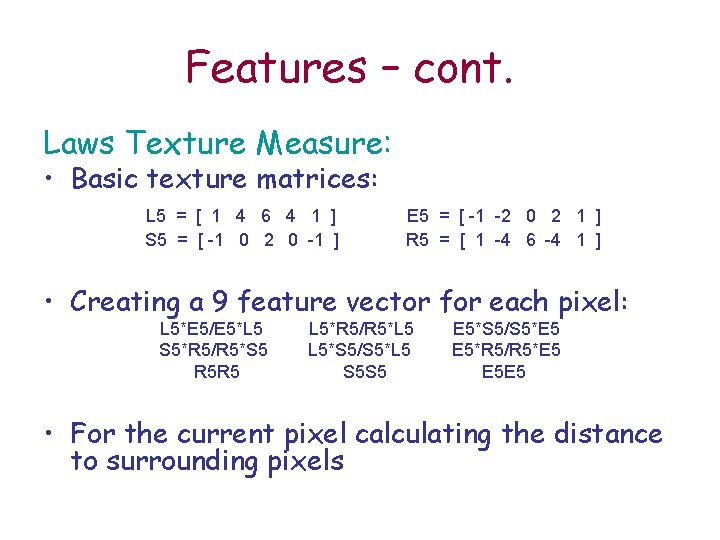

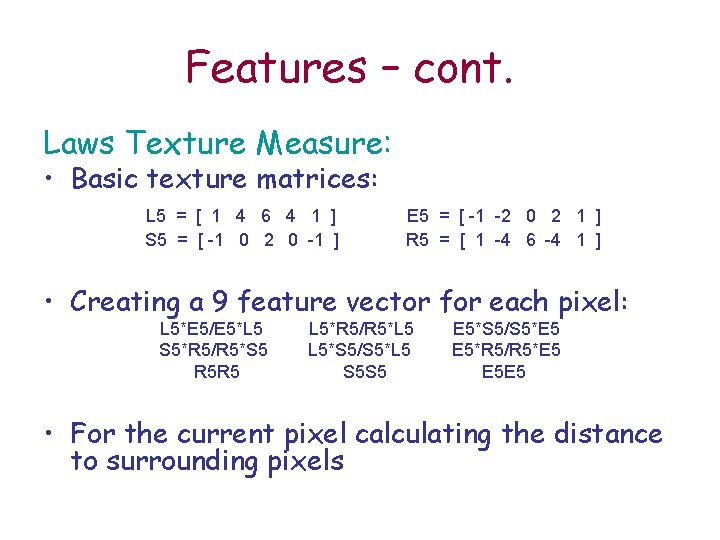

Features – cont.

Laws Texture Measure:

- Basic texture vectors (1-D masks):
  - L5 = [ 1 4 6 4 1 ]
  - E5 = [ -1 -2 0 2 1 ]
  - S5 = [ -1 0 2 0 -1 ]
  - R5 = [ 1 -4 6 -4 1 ]
- Create a vector of 9 features for each pixel:
  - L5*E5 / E5*L5
  - S5*R5 / R5*S5
  - R5*R5
  - L5*R5 / R5*L5
  - L5*S5 / S5*L5
  - S5*S5
  - E5*S5 / S5*E5
  - E5*R5 / R5*E5
  - E5*E5
- For the current pixel, calculate the distance to the surrounding pixels.
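A minimal sketch of the 9-feature vector for one pixel, computed from its 5x5 neighborhood. Each 2-D mask is the outer product of two of the vectors above. Averaging the two masks of a pair (for example L5*E5 and E5*L5) is an assumption about what the "/" notation means. The sketch uses the raw mask response and skips the energy computation that a full Laws pipeline would normally add.

```python
L5 = [1, 4, 6, 4, 1]      # level
E5 = [-1, -2, 0, 2, 1]    # edge
S5 = [-1, 0, 2, 0, -1]    # spot
R5 = [1, -4, 6, -4, 1]    # ripple

# The nine mask pairs, in the order they appear on the slide.
PAIRS = [(L5, E5), (S5, R5), (R5, R5), (L5, R5), (L5, S5),
         (S5, S5), (E5, S5), (E5, R5), (E5, E5)]


def outer(vertical, horizontal):
    """5x5 mask: entry (i, j) is vertical[i] * horizontal[j]."""
    return [[a * b for b in horizontal] for a in vertical]


def apply_mask(patch, mask):
    """Sum of element-wise products of a 5x5 patch and a 5x5 mask."""
    return sum(patch[i][j] * mask[i][j] for i in range(5) for j in range(5))


def laws_features(patch):
    """9 responses for the pixel at the center of a 5x5 patch (pair responses averaged)."""
    return [(apply_mask(patch, outer(a, b)) + apply_mask(patch, outer(b, a))) / 2.0
            for a, b in PAIRS]


# A 5x5 patch containing a vertical edge between dark (0) and bright (10) columns.
edge_patch = [[0, 0, 0, 10, 10] for _ in range(5)]
features = laws_features(edge_patch)
```

Because E5, S5 and R5 each sum to zero, a patch of constant brightness gives a zero response for all nine features.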

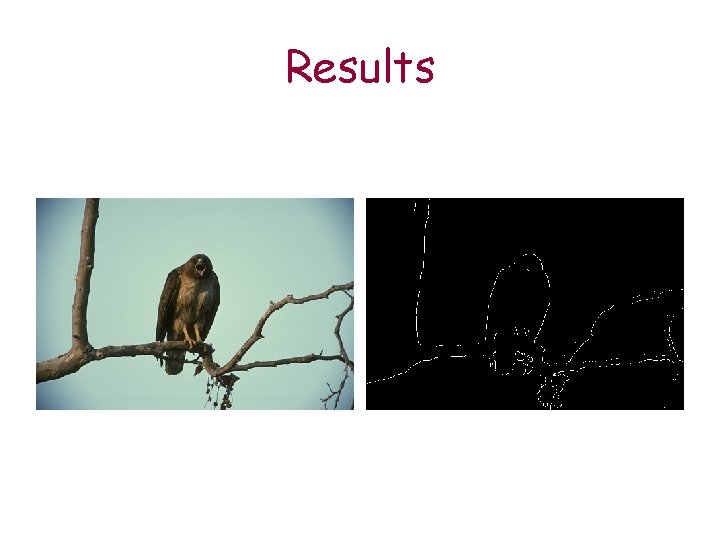

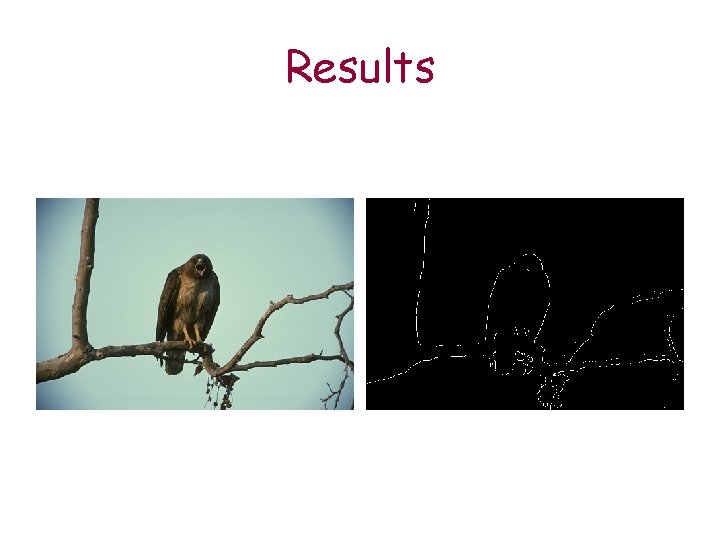

Results

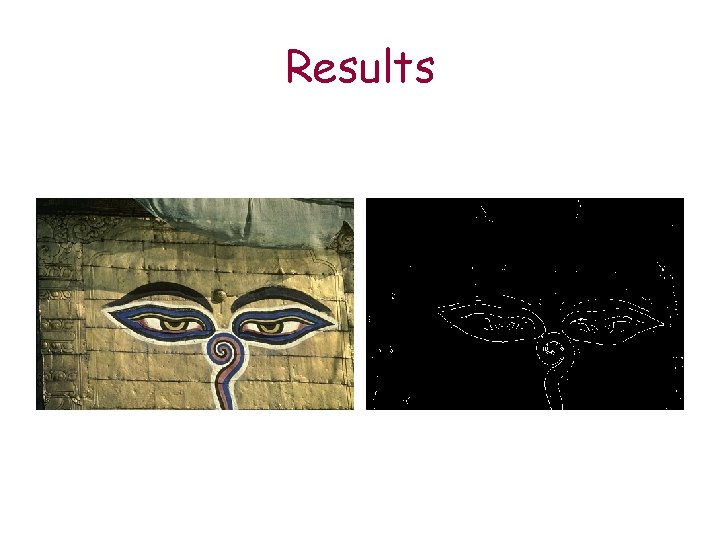

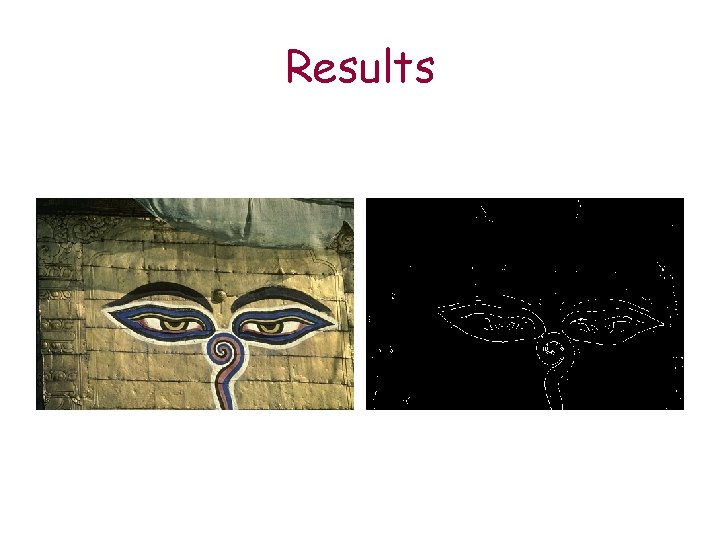

Results

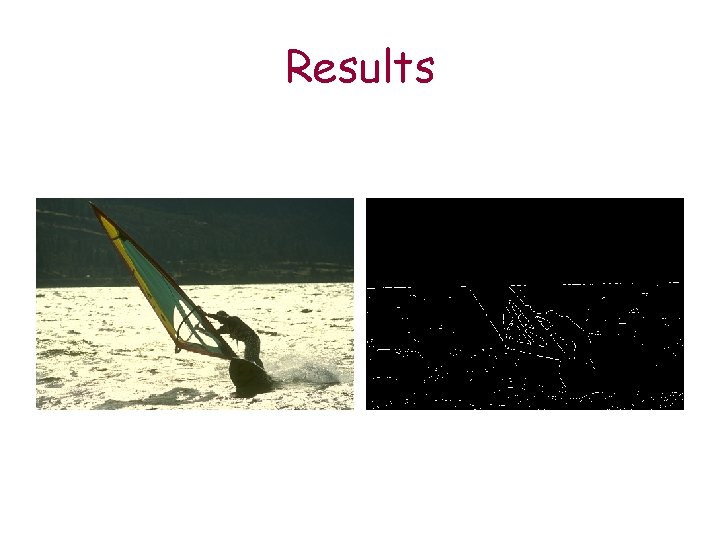

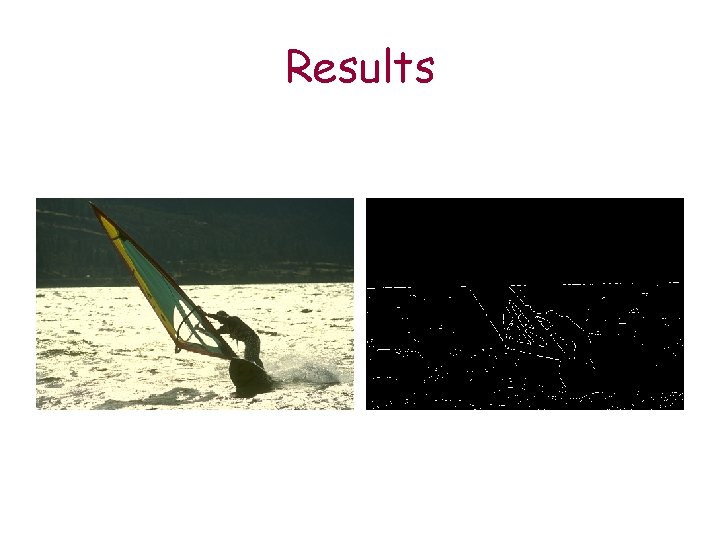

Discussion

- The model is basic, but it managed to find segments at some level.
- The best results are found where an object stands against a contrasting background or where there is a sharp difference in texture.
- The model succeeded in filtering out edges that are not segment boundaries. For example:

Discussion

Future Work

- More features
- A package better suited to images
- A search algorithm for finding the best sequence
- Working with color images
- Specialized learning: by one person, by category, etc.