
Semantic Role Labeling using Maximum Entropy Model Joon-Ho Lim NLP Lab. Korea Univ.

Contents

- Previous work
  - Review the previously studied ML approaches for SRL
- Semantic Role Labeling using ME
  - Explain a probabilistic model for the semantic role sequence, and an incremental approach
- Feature Sets for Semantic Role Labeling
- Experiments
- Conclusion

![Previous work](http://slidetodoc.com/presentation_image_h2/49bf745c0d1c468bfa03c3349711a03e/image-3.jpg)

Previous work

- Machine learning approaches for SRL
  - [Gildea 2002]: a probabilistic discriminative model.
    - It needs a complex interpolation for smoothing because of the data sparseness problem.
  - [Pradhan 2003]: applied an SVM to SRL.
    - It has high computational complexity because of the polynomial kernel function.
    - Because the SVM is a binary classifier, a one-vs-rest or pairwise method is required.
  - [Thompson 2003]: a probabilistic generative model.
    - This model assumes that a constituent is generated by a semantic role, so it is called a generative model.
    - Because each constituent depends only on the role that generated it, and constituents are independent of each other, this model can't exploit rich features.

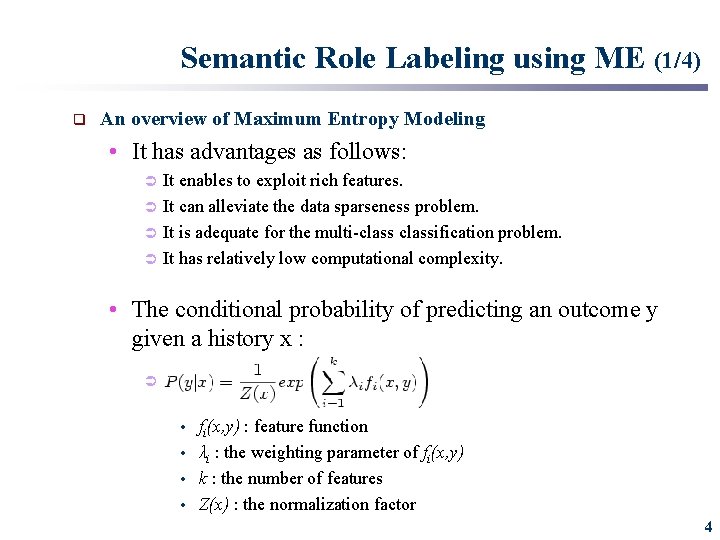

Semantic Role Labeling using ME (1/4)

- An overview of Maximum Entropy Modeling
  - It has the following advantages:
    - It can exploit rich features.
    - It can alleviate the data sparseness problem.
    - It is suitable for multi-class classification.
    - It has relatively low computational complexity.
  - The conditional probability of predicting an outcome $y$ given a history $x$:

    $$p(y \mid x) = \frac{1}{Z(x)} \exp\left(\sum_{i=1}^{k} \lambda_i f_i(x, y)\right)$$

    - $f_i(x, y)$: the $i$-th feature function
    - $\lambda_i$: the weighting parameter of $f_i(x, y)$
    - $k$: the number of features
    - $Z(x)$: the normalization factor
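As a minimal sketch of the conditional probability on this slide, the snippet below scores each outcome by the weighted sum of its feature functions and normalizes by Z(x). The function name `maxent_prob`, the two toy feature functions, and the weights are illustrative assumptions, not values from the talk.

```python
import math

def maxent_prob(x, labels, features, weights):
    """Return {y: p(y | x)} for a log-linear (maximum entropy) model."""
    # Score of each outcome: sum_i lambda_i * f_i(x, y)
    scores = {y: sum(w * f(x, y) for f, w in zip(features, weights))
              for y in labels}
    # Subtract the max score before exponentiating for numerical stability.
    top = max(scores.values())
    exp_scores = {y: math.exp(s - top) for y, s in scores.items()}
    z = sum(exp_scores.values())  # normalization factor Z(x), up to the shift
    return {y: e / z for y, e in exp_scores.items()}

# Hypothetical binary feature functions and weights, for illustration only.
features = [
    lambda x, y: 1.0 if x["tag"] == "NP" and y == "B-A0" else 0.0,
    lambda x, y: 1.0 if x["position"] == "before" and y == "B-A0" else 0.0,
]
weights = [1.2, 0.8]

probs = maxent_prob({"tag": "NP", "position": "before"},
                    ["B-A0", "B-A1", "O"], features, weights)
```

Because every outcome is normalized by the same Z(x), the model handles any number of labels directly, which is the multi-class property listed among the advantages.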

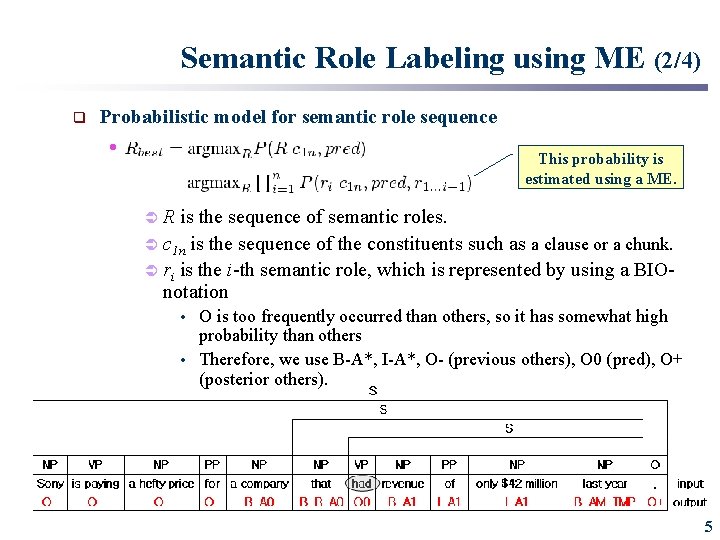

Semantic Role Labeling using ME (2/4)

- Probabilistic model for the semantic role sequence
  - The probability of the role sequence $R$ given the constituent sequence $c_1^n$ is estimated using an ME model.
    - $R$ is the sequence of semantic roles.
    - $c_1^n$ is the sequence of constituents, such as clauses or chunks.
    - $r_i$ is the $i$-th semantic role, represented in BIO notation.
  - The O label occurs much more frequently than the other labels, so it receives a somewhat higher probability than they do.
  - Therefore, we use B-A*, I-A*, O- (others before the predicate), O0 (the predicate), and O+ (others after the predicate).
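The refinement of the O label can be sketched as follows. This is an illustrative helper, not code from the talk: the function name `split_outside_labels` and the assumption that the predicate sits at a single known index are ours.

```python
def split_outside_labels(labels, pred_index):
    """Replace plain 'O' with 'O-', 'O0', or 'O+' by position relative to the predicate."""
    refined = []
    for i, label in enumerate(labels):
        if label != "O":
            refined.append(label)   # B-A* and I-A* labels are kept as-is
        elif i < pred_index:
            refined.append("O-")    # outside label before the predicate
        elif i == pred_index:
            refined.append("O0")    # the predicate itself
        else:
            refined.append("O+")    # outside label after the predicate
    return refined

labels = ["O", "B-A0", "I-A0", "O", "B-A1", "O"]
refined = split_outside_labels(labels, pred_index=3)
```

Splitting O into three labels spreads its probability mass, so a single very frequent label no longer dominates the others.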

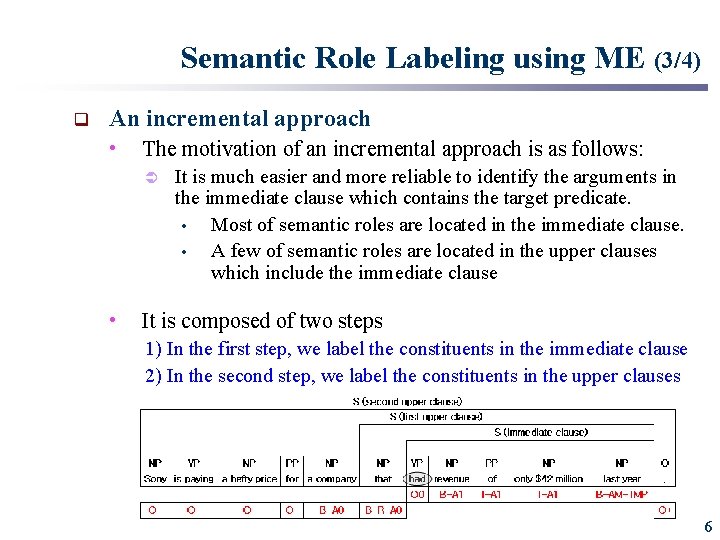

Semantic Role Labeling using ME (3/4)

- An incremental approach
  - The motivation for an incremental approach is as follows:
    - It is much easier and more reliable to identify the arguments in the immediate clause, which contains the target predicate.
    - Most semantic roles are located in the immediate clause.
    - A few semantic roles are located in the upper clauses, which include the immediate clause.
  - It is composed of two steps:
    1. In the first step, we label the constituents in the immediate clause.
    2. In the second step, we label the constituents in the upper clauses.
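The two steps presuppose that the constituents can be divided into those inside the immediate clause and those in the upper clauses. A minimal sketch of that division is shown below, under assumptions that are ours rather than the talk's: constituents are indexed in a flat sequence, clauses are given as inclusive `(start, end)` index spans, and the immediate clause is the smallest span containing the predicate.

```python
def partition_by_immediate_clause(n_constituents, clause_spans, pred_index):
    """Split constituent indices into (immediate, upper) groups.

    clause_spans: list of inclusive (start, end) index ranges, possibly nested.
    The immediate clause is taken to be the smallest span containing the predicate.
    """
    containing = [(s, e) for s, e in clause_spans if s <= pred_index <= e]
    if not containing:
        raise ValueError("no clause contains the predicate")
    start, end = min(containing, key=lambda span: span[1] - span[0])
    immediate = list(range(start, end + 1))          # labeled in the first step
    upper = [i for i in range(n_constituents)
             if i < start or i > end]                # labeled in the second step
    return immediate, upper

# Hypothetical sentence with 8 constituents: an outer clause (0..7)
# and an embedded clause (3..6) that contains the predicate at index 4.
immediate, upper = partition_by_immediate_clause(
    n_constituents=8, clause_spans=[(0, 7), (3, 6)], pred_index=4)
```

A labeler would then run over `immediate` first and over `upper` second, which is the order the two steps describe.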

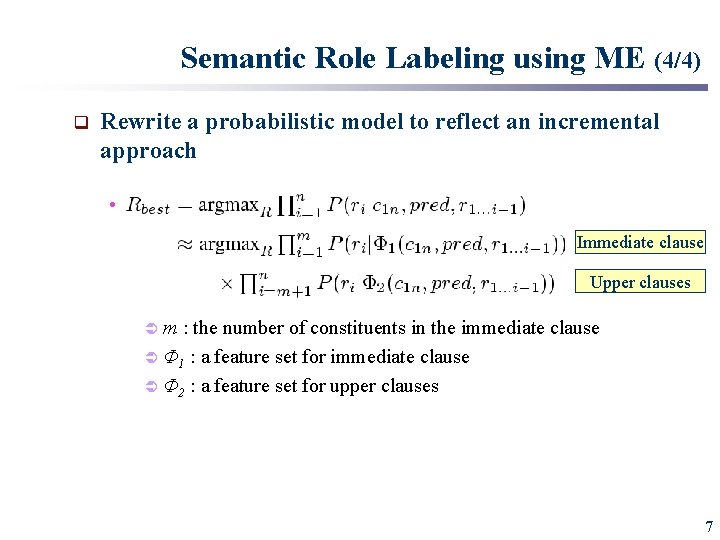

Semantic Role Labeling using ME (4/4)

- Rewrite the probabilistic model to reflect the incremental approach
  - The model is split into an immediate clause term and an upper clauses term.
    - $m$: the number of constituents in the immediate clause
    - $\Phi_1$: the feature set for the immediate clause
    - $\Phi_2$: the feature set for the upper clauses

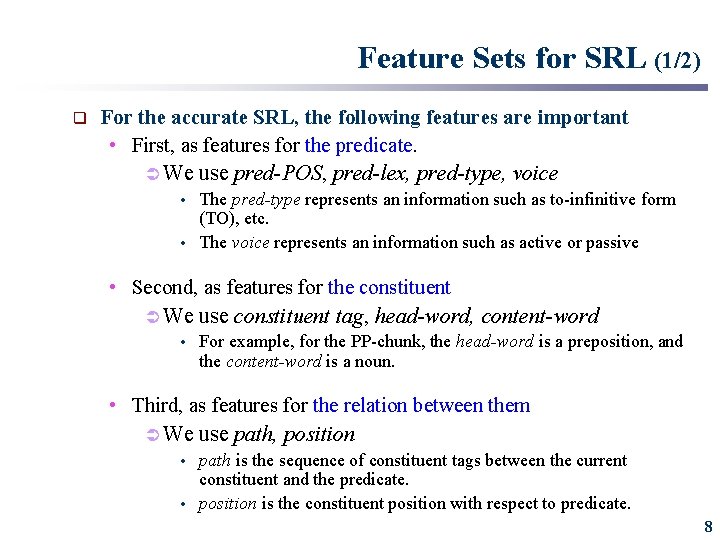

Feature Sets for SRL (1/2)

- For accurate SRL, the following features are important:
  - First, features for the predicate
    - We use pred-POS, pred-lex, pred-type, and voice.
      - pred-type represents information such as the to-infinitive form (TO).
      - voice represents whether the predicate is active or passive.
  - Second, features for the constituent
    - We use constituent tag, head-word, and content-word.
      - For example, for a PP chunk, the head-word is the preposition and the content-word is the noun.
  - Third, features for the relation between them
    - We use path and position.
      - path is the sequence of constituent tags between the current constituent and the predicate.
      - position is the constituent's position with respect to the predicate.
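The path and position features can be sketched for a flat sequence of constituent tags. The details here are assumptions for illustration: the path covers only the tags strictly between the two items, it is written in sentence order joined by `/`, and position takes the values `before`, `after`, or `pred`.

```python
def path_and_position(tags, const_index, pred_index):
    """Return (path, position) of a constituent relative to the predicate."""
    lo, hi = sorted((const_index, pred_index))
    # Tags strictly between the constituent and the predicate (assumption).
    path = "/".join(tags[lo + 1:hi])
    if const_index < pred_index:
        position = "before"
    elif const_index > pred_index:
        position = "after"
    else:
        position = "pred"
    return path, position

# Hypothetical chunk tags; the predicate is the VP at index 2.
tags = ["NP", "ADVP", "VP", "NP", "PP"]
subject_feats = path_and_position(tags, const_index=0, pred_index=2)
pp_feats = path_and_position(tags, const_index=4, pred_index=2)
```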

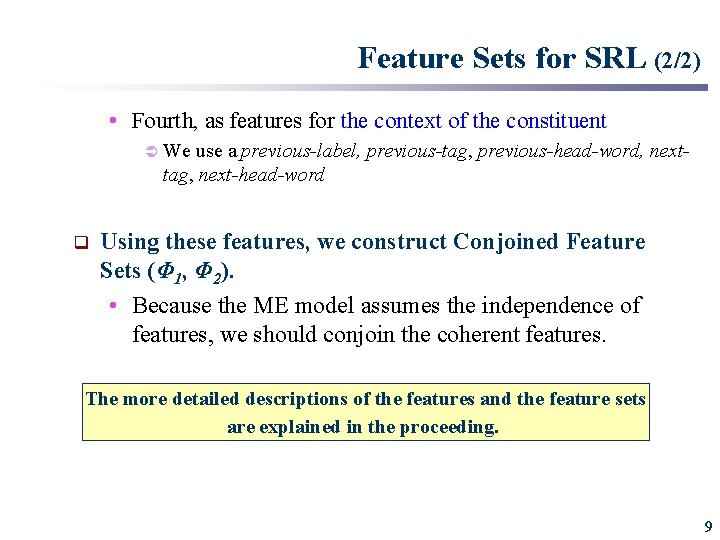

Feature Sets for SRL (2/2)

- Fourth, features for the context of the constituent
  - We use previous-label, previous-tag, previous-head-word, next-tag, and next-head-word.
- Using these features, we construct the conjoined feature sets ($\Phi_1$, $\Phi_2$).
  - Because the ME model assumes that features are independent, we should conjoin coherent features.
  - More detailed descriptions of the features and the feature sets are given in the proceedings.
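Conjoining features amounts to treating a combination of atomic feature values as one feature. The sketch below shows one way to do that with string templates. The helper `conjoin`, the example values, and the particular templates are hypothetical; the talk does not list which features are conjoined in either feature set.

```python
def conjoin(atomic, names):
    """Build one conjoined feature string from several atomic feature values."""
    key = "&".join(names)
    value = "|".join(str(atomic[name]) for name in names)
    return f"{key}={value}"

# Atomic features of one constituent (illustrative values).
atomic = {"pred-lex": "give", "voice": "active", "position": "before", "tag": "NP"}

# Hypothetical conjunction templates, not the talk's actual feature sets.
templates = [("pred-lex", "position"), ("tag", "voice", "position")]
conjoined = [conjoin(atomic, t) for t in templates]
```

Each conjoined string becomes a single feature for the ME model, so the interaction between, for example, voice and position is captured explicitly rather than left to independently weighted features.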

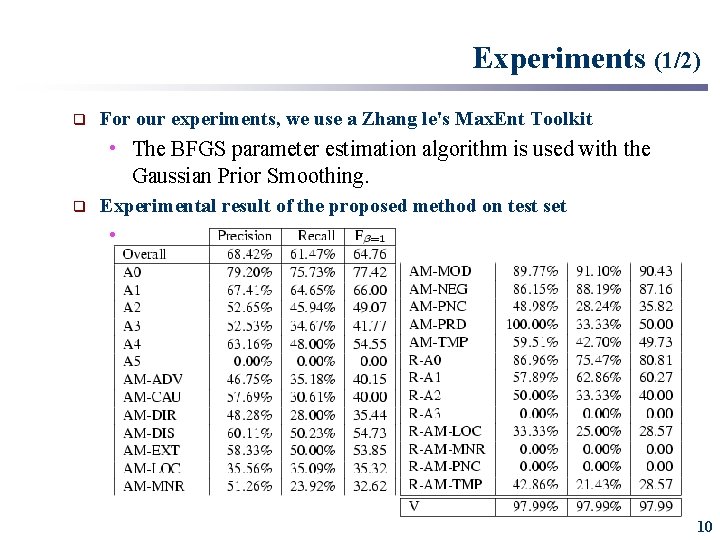

Experiments (1/2)

- For our experiments, we use Zhang Le's Maximum Entropy Toolkit.
  - The BFGS parameter estimation algorithm is used with Gaussian prior smoothing.
- Experimental results of the proposed method on the test set

Experiments (2/2)

- From the experimental results, we can see that:
  - The proposed method performs relatively well on the labels related to A0 and A1, and relatively poorly on the other labels.
  - We think this may have the following two causes:
    - First, enough instances of A0 and A1 are provided for accurate semantic role labeling.
    - Second, the thematic roles of A0 and A1 are clearer than those of the other core semantic roles.
      - For example, an agent is mainly labeled A0, while a benefactive can be labeled A2 or A3.
  - Therefore, the ME model generalizes well for A0 and A1, but not for the other cases.

Conclusion

- Semantic role labeling method using an ME model
  - We use an incremental approach.
    - It is a kind of divide-and-conquer strategy.
      - It is much easier and more reliable to identify the arguments in the immediate clause first.
      - Then, we label the semantic roles in the upper clauses.
  - As feature sets
    - We use features that capture the characteristics of the predicate, the constituent, the relationship between them, and the context.

Thank you.
