Regression

Prof. Sahebgouda R Patil, Dr. Anil Maheshwari

Learning Objectives

- To understand the application of regression analysis in data mining
  - Linear/nonlinear
  - Logistic (Logit)
- To understand the key statistical measures of fit

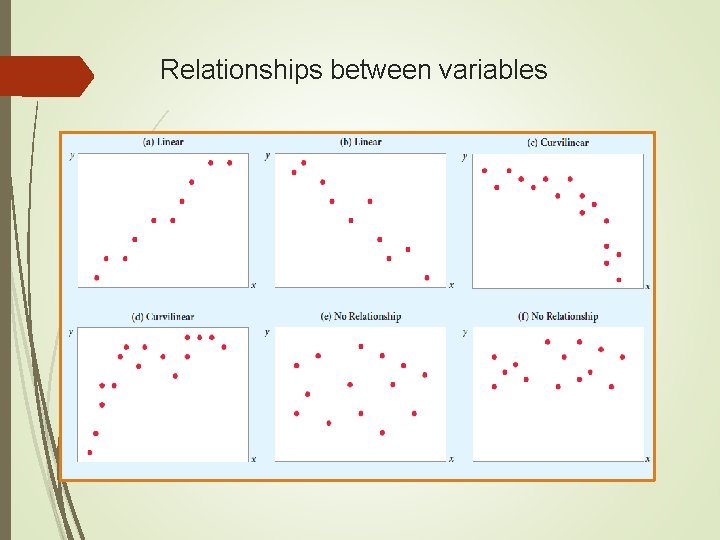

Relationships between variables

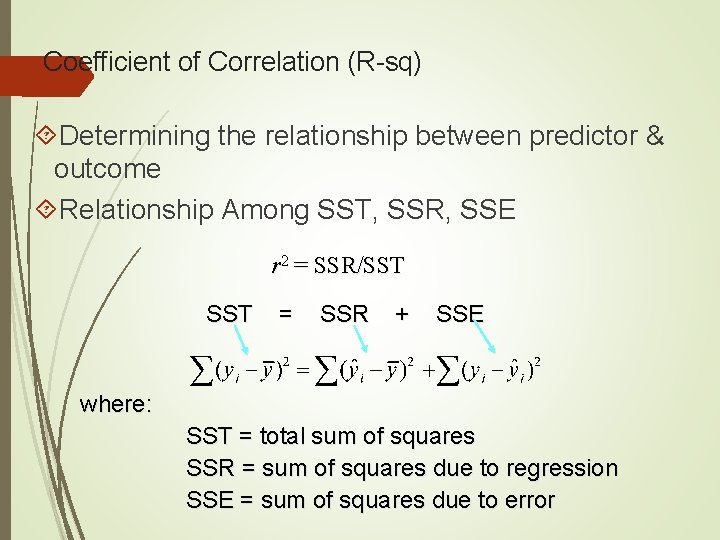

Coefficient of Determination (R-sq)

Determining the strength of the relationship between predictor and outcome.

Relationship among SST, SSR, SSE:

$$SST = SSR + SSE$$

$$r^2 = \frac{SSR}{SST}$$

where:

- SST = total sum of squares
- SSR = sum of squares due to regression
- SSE = sum of squares due to error
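The decomposition SST = SSR + SSE and the ratio r^2 = SSR/SST can be checked numerically. The sketch below fits a least-squares line to a small made-up data set (the `x` and `y` values are illustrative assumptions, not taken from the slides) and computes the three sums of squares.

```python
# Made-up illustrative data (not from the slides).
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

n = len(x)
x_mean = sum(x) / n
y_mean = sum(y) / n

# Least-squares line y_hat = b0 + b1 * x.
b1 = (sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
      / sum((xi - x_mean) ** 2 for xi in x))
b0 = y_mean - b1 * x_mean
y_hat = [b0 + b1 * xi for xi in x]

sst = sum((yi - y_mean) ** 2 for yi in y)              # total variation
ssr = sum((fi - y_mean) ** 2 for fi in y_hat)          # explained by the regression
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))  # left unexplained (error)
r_squared = ssr / sst

print(f"SST={sst:.2f} SSR={ssr:.2f} SSE={sse:.2f} r^2={r_squared:.2f}")
# prints: SST=6.00 SSR=3.60 SSE=2.40 r^2=0.60
```

The identity SST = SSR + SSE holds for the least-squares line specifically; for an arbitrary line the two parts need not add up to the total.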

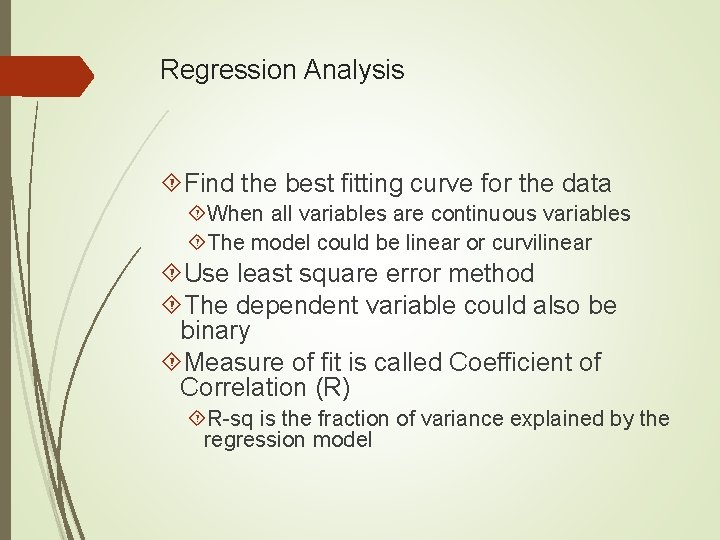

Regression Analysis

- Find the best-fitting curve for the data
- Applies when all variables are continuous
- The model can be linear or curvilinear
- Use the least-squares error method
- The dependent variable can also be binary
- The measure of fit is called the coefficient of correlation (R)
- R-sq is the fraction of variance explained by the regression model

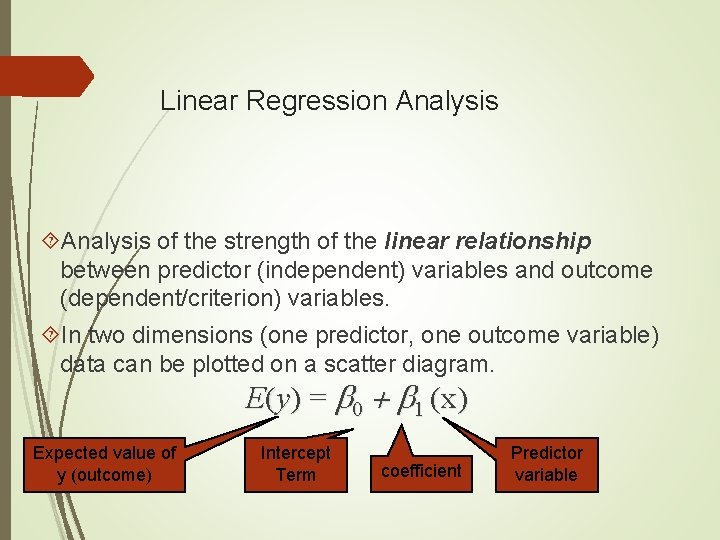

Linear Regression

Analysis of the strength of the linear relationship between predictor (independent) variables and outcome (dependent/criterion) variables. In two dimensions (one predictor, one outcome variable), the data can be plotted on a scatter diagram.

$$E(y) = b_0 + b_1 x$$

where $E(y)$ is the expected value of y (the outcome), $b_0$ is the intercept term, $b_1$ is the coefficient of the predictor, and $x$ is the predictor variable.

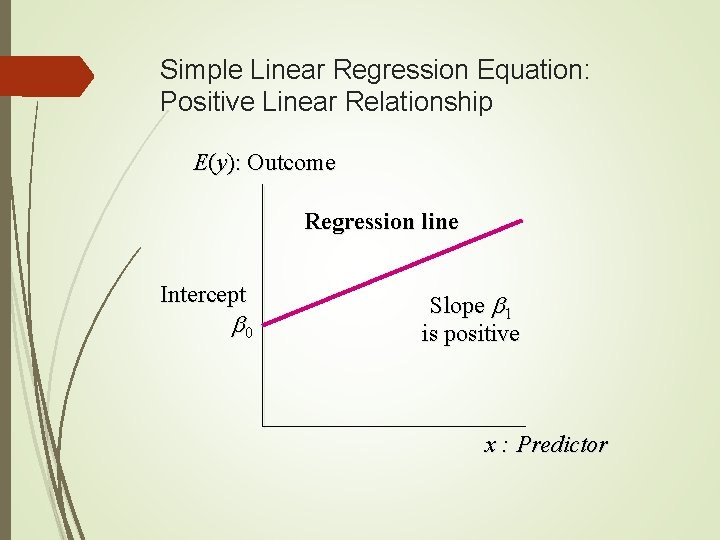

Simple Linear Regression Equation: Positive Linear Relationship

Figure: regression line with intercept $b_0$ and positive slope $b_1$; axes are x (predictor) and E(y) (outcome).

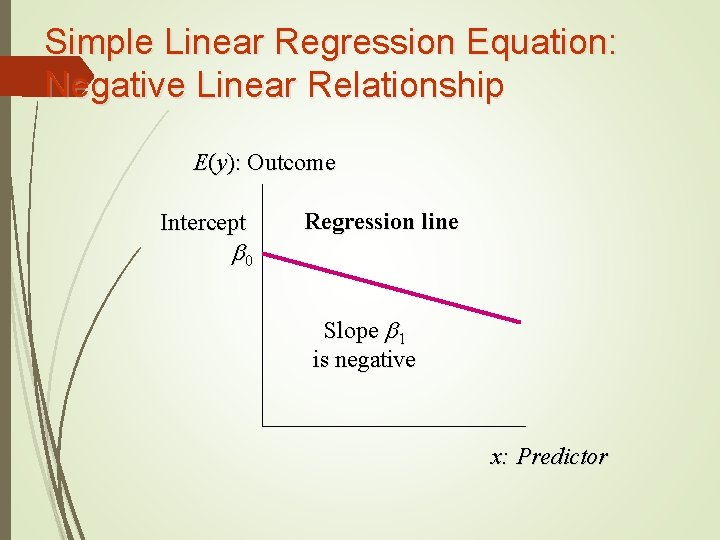

Simple Linear Regression Equation: Negative Linear Relationship

Figure: regression line with intercept $b_0$ and negative slope $b_1$; axes are x (predictor) and E(y) (outcome).

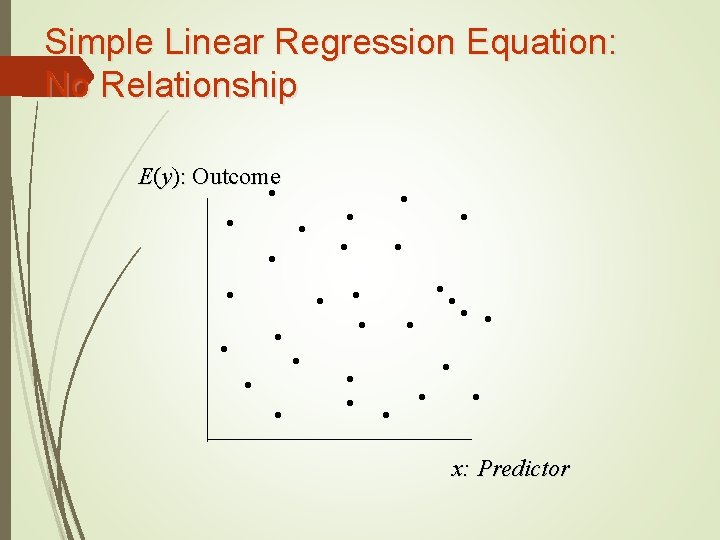

Simple Linear Regression Equation: No Relationship

Figure: scatter of data points; axes are x (predictor) and E(y) (outcome).

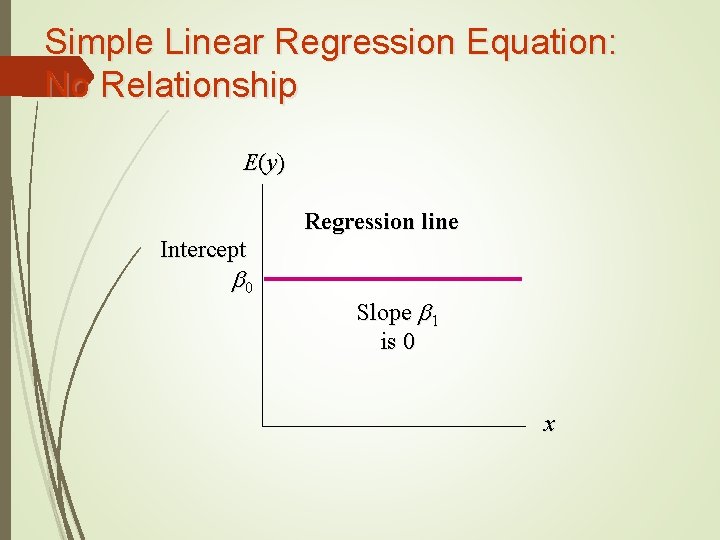

Simple Linear Regression Equation: No Relationship

Figure: regression line with intercept $b_0$ and slope $b_1 = 0$; axes are x and E(y).

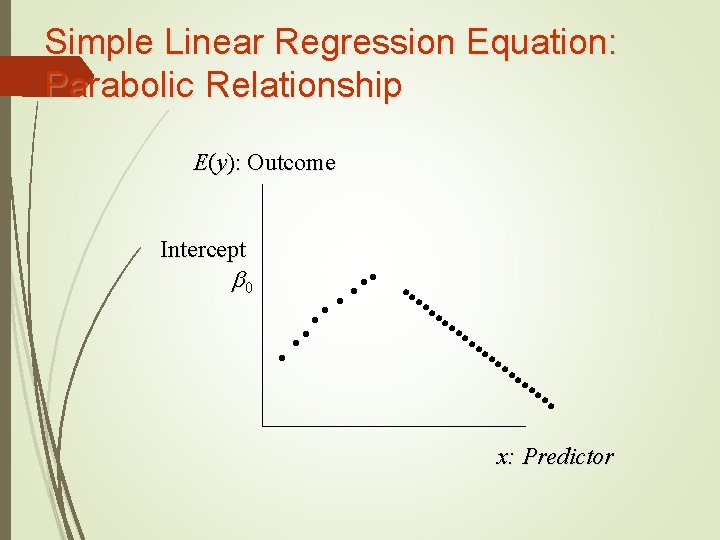

Simple Linear Regression Equation: Parabolic Relationship

Figure: scatter of data points with intercept $b_0$ marked; axes are x (predictor) and E(y) (outcome).

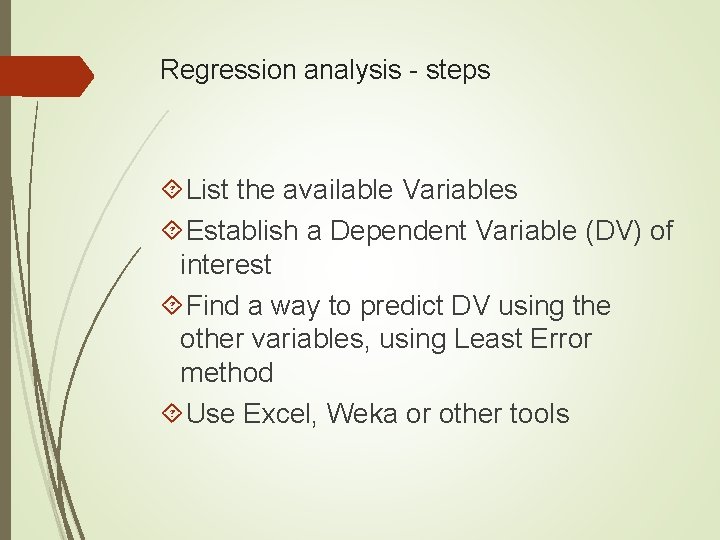

Regression analysis - steps

- List the available variables
- Establish a dependent variable (DV) of interest
- Find a way to predict the DV from the other variables, using the least-squares error method
- Use Excel, Weka or other tools

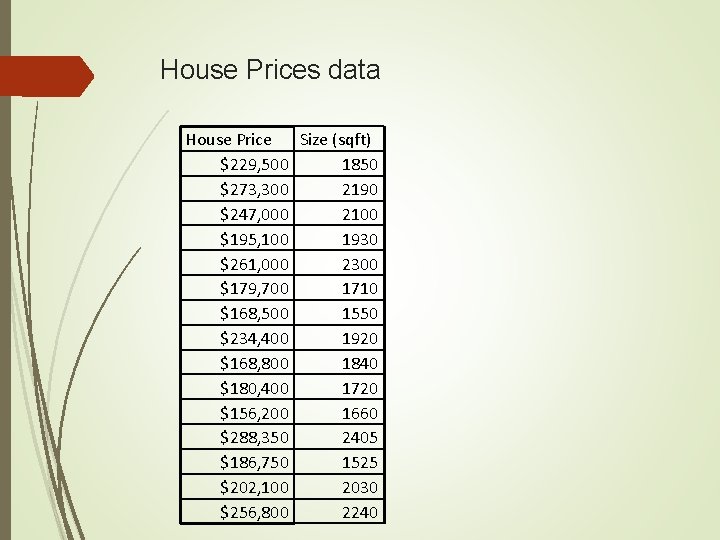

House Prices data

| House Price | Size (sqft) |
|---|---|
| $229,500 | 1850 |
| $273,300 | 2190 |
| $247,000 | 2100 |
| $195,100 | 1930 |
| $261,000 | 2300 |
| $179,700 | 1710 |
| $168,500 | 1550 |
| $234,400 | 1920 |
| $168,800 | 1840 |
| $180,400 | 1720 |
| $156,200 | 1660 |
| $288,350 | 2405 |
| $186,750 | 1525 |
| $202,100 | 2030 |
| $256,800 | 2240 |

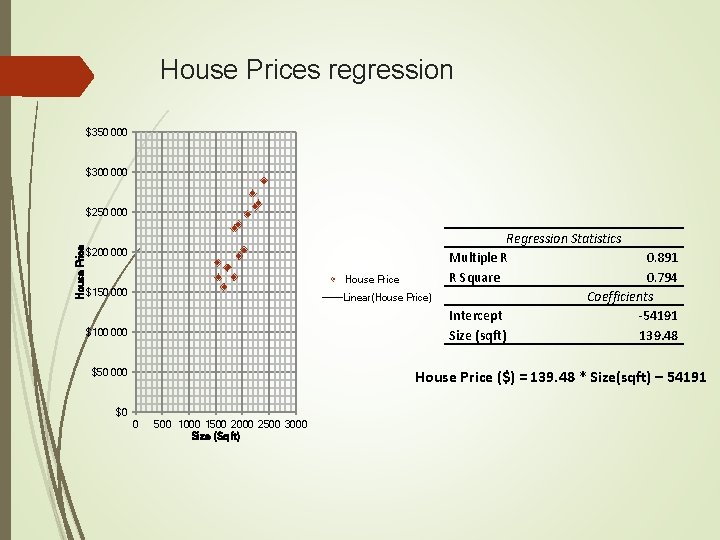

House Prices regression

Figure: scatter plot of House Price ($) against Size (sq ft), with a fitted linear trend line.

| Regression Statistics | |
|---|---|
| Multiple R | 0.891 |
| R Square | 0.794 |

| | Coefficients |
|---|---|
| Intercept | -54191 |
| Size (sqft) | 139.48 |

House Price ($) = 139.48 * Size (sqft) - 54191
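The fit can be reproduced from the house price data listed above. The following is a minimal sketch using only the Python standard library and the closed-form least-squares formulas; the variable names and the 2,000 sqft query are illustrative assumptions. The printed slope, intercept and R-squared should come out close to the values reported on the slide.

```python
# House price data from the slide above.
size_sqft = [1850, 2190, 2100, 1930, 2300, 1710, 1550, 1920,
             1840, 1720, 1660, 2405, 1525, 2030, 2240]
price = [229500, 273300, 247000, 195100, 261000, 179700, 168500, 234400,
         168800, 180400, 156200, 288350, 186750, 202100, 256800]

n = len(size_sqft)
mean_x = sum(size_sqft) / n
mean_y = sum(price) / n

# Sums of squared deviations and of cross-products.
sxx = sum((x - mean_x) ** 2 for x in size_sqft)
syy = sum((y - mean_y) ** 2 for y in price)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(size_sqft, price))

slope = sxy / sxx                      # b1
intercept = mean_y - slope * mean_x    # b0
r_squared = sxy ** 2 / (sxx * syy)     # squared correlation of x and y

print(f"slope={slope:.2f} intercept={intercept:.0f} R^2={r_squared:.3f}")

# Hypothetical query: predicted price for a 2,000 sqft house.
predicted = intercept + slope * 2000
print(f"predicted price for 2000 sqft: ${predicted:,.0f}")
```

With a single predictor, R-squared is simply the square of the correlation between size and price, which is why Multiple R and R Square on the slide are related by squaring.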

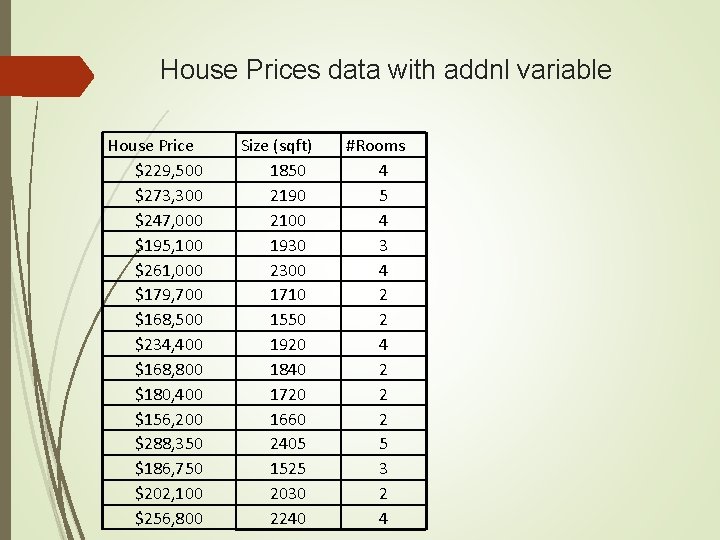

House Prices data with additional variable

- House Price: $229,500, $273,300, $247,000, $195,100, $261,000, $179,700, $168,500, $234,400, $168,800, $180,400, $156,200, $288,350, $186,750, $202,100, $256,800
- Size (sqft): 1850, 2190, 2100, 1930, 2300, 1710, 1550, 1920, 1840, 1720, 1660, 2405, 1525, 2030, 2240
- #Rooms: 4, 5, 4, 3, 4, 2, 2, 2, 5, 3, 2, 4

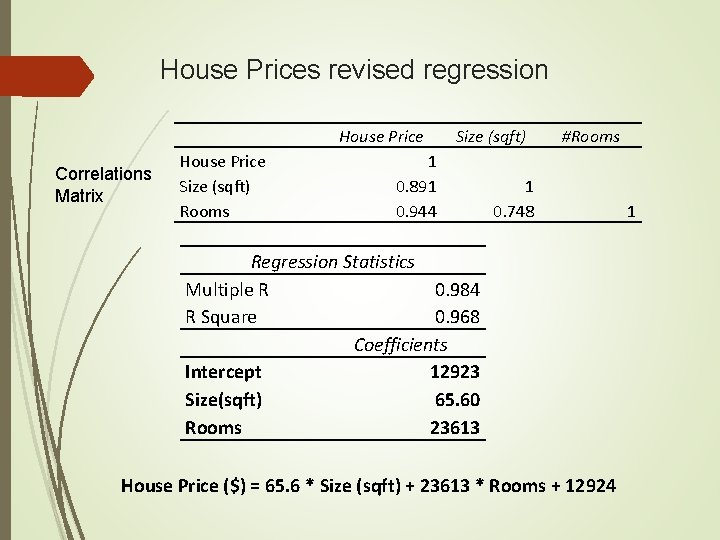

House Prices revised regression

Correlations Matrix

| | House Price | Size (sqft) | #Rooms |
|---|---|---|---|
| House Price | 1 | | |
| Size (sqft) | 0.891 | 1 | |
| #Rooms | 0.944 | 0.748 | 1 |

| Regression Statistics | |
|---|---|
| Multiple R | 0.984 |
| R Square | 0.968 |

| | Coefficients |
|---|---|
| Intercept | 12923 |
| Size (sqft) | 65.60 |
| Rooms | 23613 |

House Price ($) = 65.6 * Size (sqft) + 23613 * Rooms + 12923

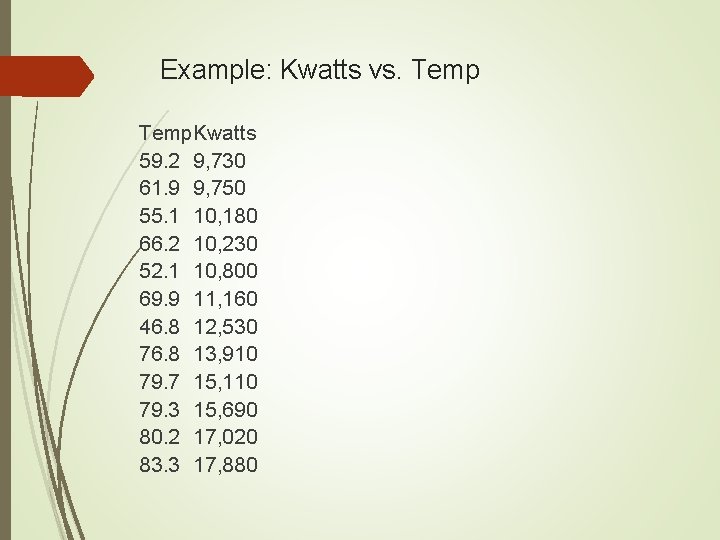

Example: Kwatts vs. Temp

| Temp | Kwatts |
|---|---|
| 59.2 | 9,730 |
| 61.9 | 9,750 |
| 55.1 | 10,180 |
| 66.2 | 10,230 |
| 52.1 | 10,800 |
| 69.9 | 11,160 |
| 46.8 | 12,530 |
| 76.8 | 13,910 |
| 79.7 | 15,110 |
| 79.3 | 15,690 |
| 80.2 | 17,020 |
| 83.3 | 17,880 |

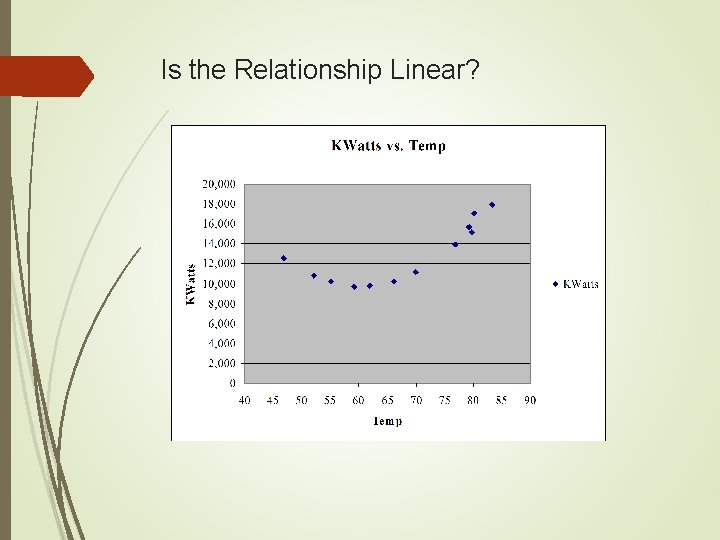

Is the Relationship Linear?

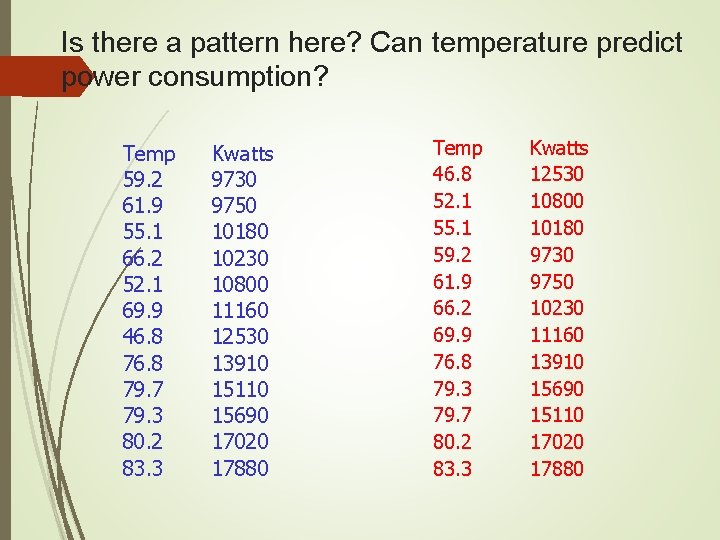

Is there a pattern here? Can temperature predict power consumption?

The same observations, sorted by temperature:

| Temp | Kwatts |
|---|---|
| 46.8 | 12530 |
| 52.1 | 10800 |
| 55.1 | 10180 |
| 59.2 | 9730 |
| 61.9 | 9750 |
| 66.2 | 10230 |
| 69.9 | 11160 |
| 76.8 | 13910 |
| 79.3 | 15690 |
| 79.7 | 15110 |
| 80.2 | 17020 |
| 83.3 | 17880 |

Testing a linear pattern

Figure: Temp line fit plot, showing Kwatts and predicted Kwatts against Temp.

| Regression Statistics | |
|---|---|
| Multiple R | 0.776552 |
| R Square | 0.603034 |
| Adjusted R Square | 0.563337 |
| Standard Error | 1967.153 |
| Observations | 12 |

| | Coefficients |
|---|---|
| Intercept | 319.0414 |
| Temp | 185.2702 |

Kwatts = 185 * Temp + 319
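One way to judge whether a straight line is adequate is to look at the residuals (actual minus predicted) in temperature order. The sketch below refits the line from the Temp/Kwatts data above with the closed-form least-squares formulas and prints the residuals; the variable names are illustrative assumptions. A run of positive, then negative, then positive residuals suggests curvature that a line cannot capture.

```python
# Temp/Kwatts observations from the example above.
temp = [59.2, 61.9, 55.1, 66.2, 52.1, 69.9,
        46.8, 76.8, 79.7, 79.3, 80.2, 83.3]
kwatts = [9730, 9750, 10180, 10230, 10800, 11160,
          12530, 13910, 15110, 15690, 17020, 17880]

n = len(temp)
mean_t = sum(temp) / n
mean_k = sum(kwatts) / n

sxx = sum((t - mean_t) ** 2 for t in temp)
syy = sum((k - mean_k) ** 2 for k in kwatts)
sxy = sum((t - mean_t) * (k - mean_k) for t, k in zip(temp, kwatts))

slope = sxy / sxx
intercept = mean_k - slope * mean_t
r_squared = sxy ** 2 / (sxx * syy)
print(f"Kwatts = {slope:.2f} * Temp + {intercept:.2f}  (R^2 = {r_squared:.3f})")

# Residuals in temperature order: actual minus predicted.
by_temp = sorted(zip(temp, kwatts))
residuals = [(t, k - (intercept + slope * t)) for t, k in by_temp]
for t, r in residuals:
    print(f"Temp {t:5.1f}  residual {r:+9.0f}")
```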

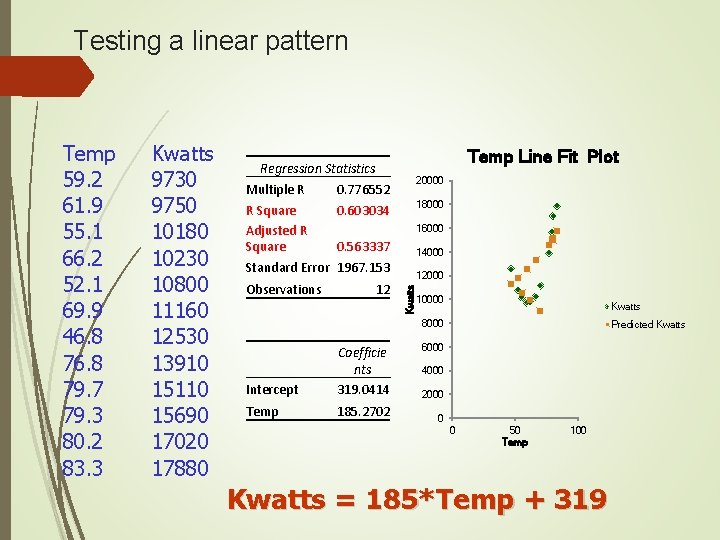

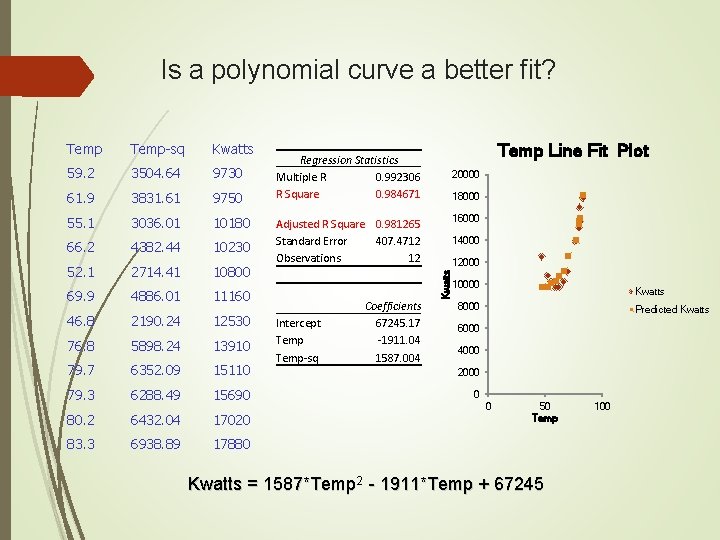

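Is a polynomial curve a better fit?

| Temp | Temp-sq | Kwatts |
|---|---|---|
| 59.2 | 3504.64 | 9730 |
| 61.9 | 3831.61 | 9750 |
| 55.1 | 3036.01 | 10180 |
| 66.2 | 4382.44 | 10230 |
| 52.1 | 2714.41 | 10800 |
| 69.9 | 4886.01 | 11160 |
| 46.8 | 2190.24 | 12530 |
| 76.8 | 5898.24 | 13910 |
| 79.7 | 6352.09 | 15110 |
| 79.3 | 6288.49 | 15690 |
| 80.2 | 6432.04 | 17020 |
| 83.3 | 6938.89 | 17880 |

Figure: Temp line fit plot, showing Kwatts and predicted Kwatts against Temp.

| Regression Statistics | |
|---|---|
| Multiple R | 0.992306 |
| R Square | 0.984671 |
| Adjusted R Square | 0.981265 |
| Standard Error | 407.4712 |
| Observations | 12 |

| | Coefficients |
|---|---|
| Intercept | 67245.17 |
| Temp | -1911.04 |
| Temp-sq | 15.87 |

$$\text{Kwatts} = 15.87 \cdot \text{Temp}^2 - 1911 \cdot \text{Temp} + 67245$$

The Temp-sq coefficient must be about 15.87 rather than 1587: at Temp = 59.2 the equation then gives roughly 9,730 Kwatts, matching the observed value, whereas a coefficient of 1587 would predict values in the millions.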
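The quadratic fit can be reproduced by treating Temp and Temp-sq as two predictors and solving the least-squares normal equations. The sketch below is one possible approach using only the Python standard library; the helper name `fit_quadratic` is hypothetical, and exact rational arithmetic is an implementation choice made here to avoid rounding issues, not something the slides prescribe. It prints the fitted coefficients and R-squared for comparison with the regression output above.

```python
from fractions import Fraction

# Temp/Kwatts observations from the example above; strings keep Fraction exact.
temp = ["59.2", "61.9", "55.1", "66.2", "52.1", "69.9",
        "46.8", "76.8", "79.7", "79.3", "80.2", "83.3"]
kwatts = [9730, 9750, 10180, 10230, 10800, 11160,
          12530, 13910, 15110, 15690, 17020, 17880]

def fit_quadratic(xs, ys):
    """Least-squares fit of y = c + b*x + a*x**2 via the normal equations."""
    rows = [[Fraction(1), x, x * x] for x in xs]
    # Normal equations: (X^T X) beta = X^T y.
    A = [[sum(row[i] * row[j] for row in rows) for j in range(3)]
         for i in range(3)]
    rhs = [sum(row[i] * y for row, y in zip(rows, ys)) for i in range(3)]
    # Gauss-Jordan elimination in exact rational arithmetic.
    for col in range(3):
        pivot = next(k for k in range(col, 3) if A[k][col] != 0)
        A[col], A[pivot] = A[pivot], A[col]
        rhs[col], rhs[pivot] = rhs[pivot], rhs[col]
        for k in range(3):
            if k != col:
                factor = A[k][col] / A[col][col]
                A[k] = [v - factor * p for v, p in zip(A[k], A[col])]
                rhs[k] -= factor * rhs[col]
    c, b, a = (float(rhs[i] / A[i][i]) for i in range(3))
    return a, b, c

xs = [Fraction(t) for t in temp]
a, b, c = fit_quadratic(xs, kwatts)

# R-squared = 1 - SSE/SST for the fitted curve.
ts = [float(x) for x in xs]
pred = [a * t * t + b * t + c for t in ts]
mean_k = sum(kwatts) / len(kwatts)
sse = sum((k - p) ** 2 for k, p in zip(kwatts, pred))
sst = sum((k - mean_k) ** 2 for k in kwatts)
r_squared = 1 - sse / sst

print(f"Kwatts = {a:.2f}*Temp^2 + ({b:.2f})*Temp + {c:.2f}")
print(f"R^2 = {r_squared:.4f}")
```

In practice a numerical library would normally do this fit; the hand-rolled solver is only meant to show that a polynomial regression is still a linear least-squares problem in its coefficients.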

Advantages of Regression Modeling

- Easy to understand
- Provides simple algebraic equations
- Goodness of fit is measured by the correlation coefficient
- Can match or beat the predictive power of other modeling techniques
- Can include any number of variables
- Regression modeling tools are pervasive, for example Excel

Disadvantages of Regression Models

- Cannot compensate for poor data quality
- Does not automatically take care of collinearity problems
- Does not automatically take care of non-linearity
- Usually works only with numeric data

Thank you!

Logistic Regression

- Works with dependent variables that take binary values
- Effectively becomes a decision model
- Logistic regression takes the natural logarithm of the odds (the logit) of the dependent variable being a case
- The logit is the continuous function on which linear regression is conducted
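To make the logit concrete, the sketch below defines the logit (log-odds) and its inverse, then applies a linear model on the logit scale to reach a yes/no decision. The coefficients `b0` and `b1`, the input `x`, and the 0.5 decision threshold are hypothetical values chosen for illustration, not fitted from any data in these slides.

```python
import math

def logit(p):
    """Natural log of the odds p / (1 - p), for 0 < p < 1."""
    return math.log(p / (1 - p))

def inverse_logit(z):
    """Map a log-odds value back to a probability between 0 and 1."""
    return 1 / (1 + math.exp(-z))

# Hypothetical coefficients of a linear model on the logit scale.
b0, b1 = -4.0, 0.05
x = 100                      # hypothetical predictor value

z = b0 + b1 * x              # linear predictor = predicted log-odds
p = inverse_logit(z)         # predicted probability of being a case
predicted_class = 1 if p >= 0.5 else 0

print(f"log-odds={z:.2f} probability={p:.3f} class={predicted_class}")
```

A probability of 0.5 corresponds to log-odds of 0, so thresholding the probability at 0.5 is the same as checking the sign of the linear predictor.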

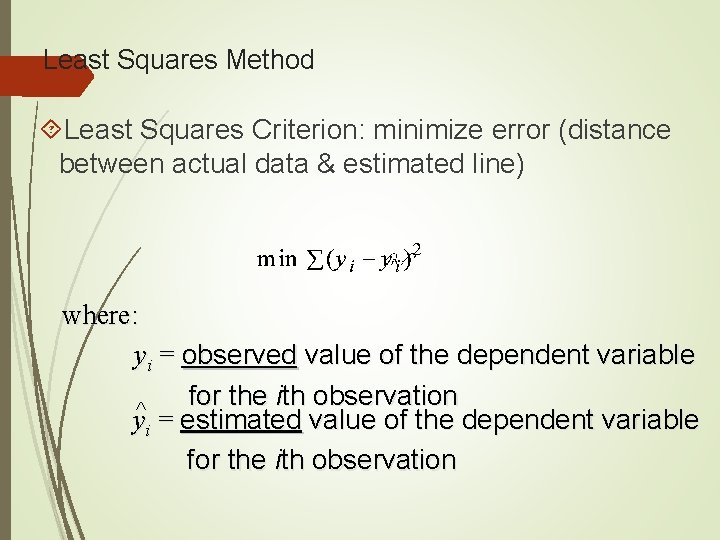

Least Squares Method

Least Squares Criterion: minimize the error (the distance between the actual data and the estimated line):

$$\min \sum_i (y_i - \hat{y}_i)^2$$

where:

- $y_i$ = observed value of the dependent variable for the ith observation
- $\hat{y}_i$ = estimated value of the dependent variable for the ith observation

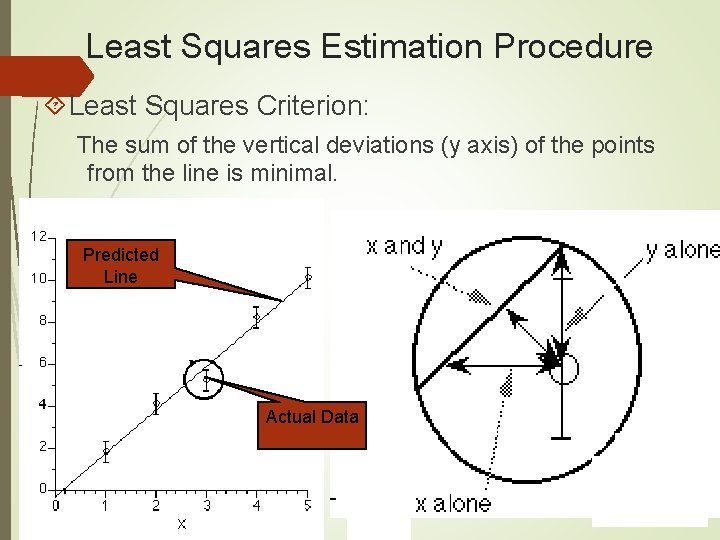

Least Squares Estimation Procedure

Least Squares Criterion: the sum of the squared vertical deviations (along the y axis) of the points from the line is minimal.

Figure: predicted line plotted against the actual data.

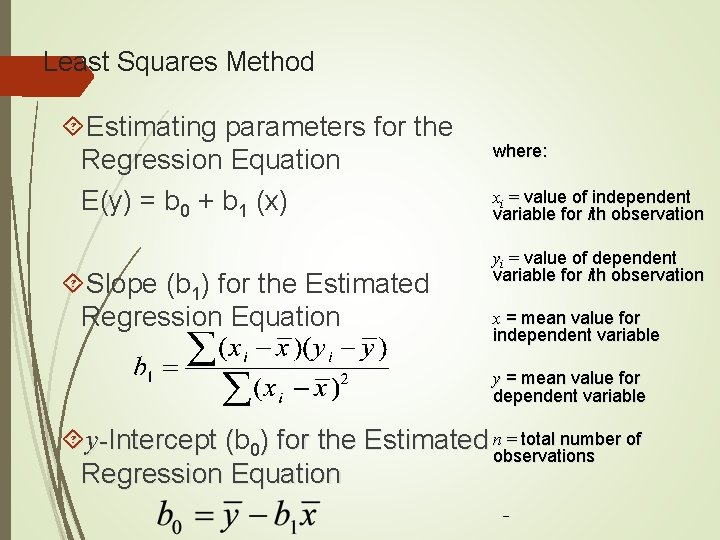

Least Squares Method

Estimating the parameters of the regression equation:

$$E(y) = b_0 + b_1 x$$

Slope ($b_1$) for the estimated regression equation:

$$b_1 = \frac{\sum x_i y_i - \frac{\sum x_i \sum y_i}{n}}{\sum x_i^2 - \frac{\left(\sum x_i\right)^2}{n}}$$

y-intercept ($b_0$) for the estimated regression equation:

$$b_0 = \bar{y} - b_1 \bar{x}$$

where:

- $x_i$ = value of the independent variable for the ith observation
- $y_i$ = value of the dependent variable for the ith observation
- $\bar{x}$ = mean value of the independent variable
- $\bar{y}$ = mean value of the dependent variable
- $n$ = total number of observations
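A minimal sketch of the closed-form least-squares estimates, with slope b1 = (Σxy − ΣxΣy/n) / (Σx² − (Σx)²/n) and intercept b0 = ȳ − b1·x̄. The function name `least_squares_line` and the four data points are made up for illustration and do not come from the slides.

```python
def least_squares_line(x, y):
    """Return (b0, b1) for the least-squares line y = b0 + b1 * x."""
    n = len(x)
    sum_x = sum(x)
    sum_y = sum(y)
    sum_xy = sum(xi * yi for xi, yi in zip(x, y))
    sum_x2 = sum(xi * xi for xi in x)

    # Slope: cross-product sum over squared-x sum, both corrected for the means.
    b1 = (sum_xy - sum_x * sum_y / n) / (sum_x2 - sum_x ** 2 / n)
    # Intercept: the fitted line passes through the point of means.
    b0 = sum_y / n - b1 * (sum_x / n)
    return b0, b1

# Made-up illustrative data.
x = [2, 4, 6, 8]
y = [3, 7, 5, 10]
b0, b1 = least_squares_line(x, y)
print(f"y = {b0:.2f} + {b1:.2f} * x")
```

For these four points the sums are Σx = 20, Σy = 25, Σxy = 144 and Σx² = 120, so the slope is 19/20 = 0.95 and the intercept is 6.25 − 0.95 × 5 = 1.5.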
