
Neural Subgraph Isomorphism Counting

Xin Liu¹, Haojie Pan¹, Mutian He¹, Yangqiu Song¹, Xin Jiang², Lifeng Shang²
¹ The Hong Kong University of Science and Technology
² Huawei Noah’s Ark Lab

Graph Representation Learning
Input: Karate Graph
Output: Representation
• Downstream tasks
– Node classification
– Link prediction
Local decisions

Bryan Perozzi, Rami Al-Rfou, and Steven Skiena. 2014. DeepWalk: Online learning of social representations. In SIGKDD. 701–710.

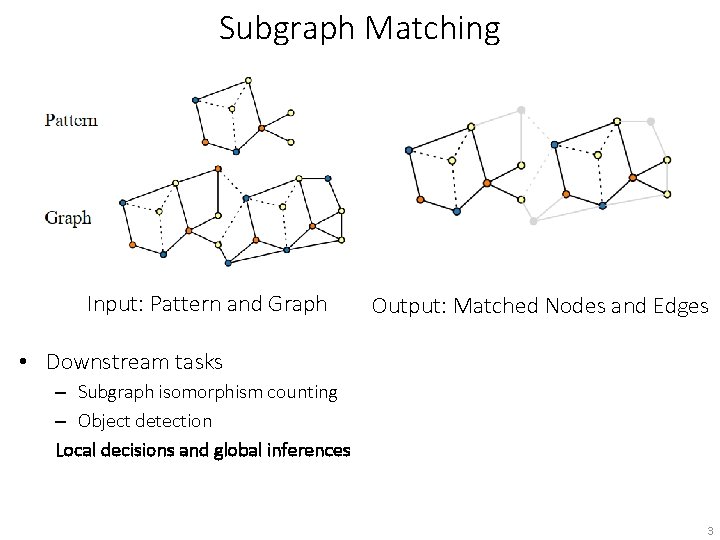

Subgraph Matching
Input: Pattern and Graph
Output: Matched Nodes and Edges
• Downstream tasks
– Subgraph isomorphism counting
– Object detection
Local decisions and global inferences

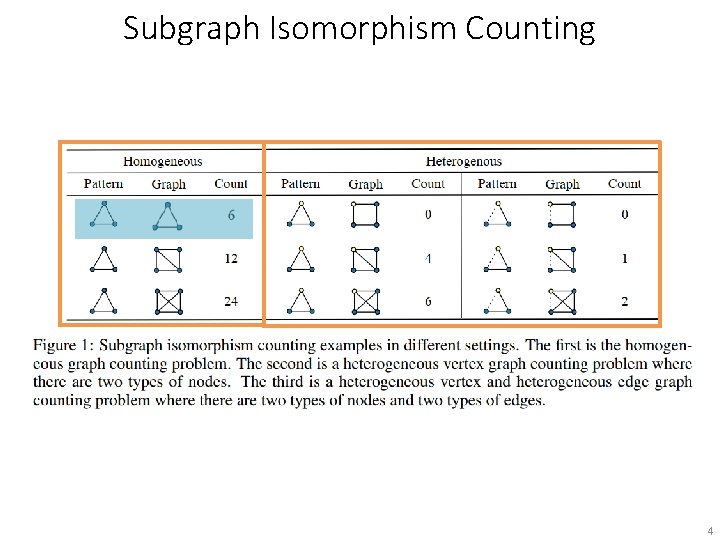

Subgraph Isomorphism Counting

Cases in Research and Life
NP-complete!

Christian Borgelt and Michael R. Berthold. 2002. Mining molecular fragments: Finding relevant substructures of molecules. In ICDM. 51–58.
Will Hamilton, Payal Bajaj, Marinka Zitnik, Dan Jurafsky, and Jure Leskovec. 2018. Embedding logical queries on knowledge graphs. In NeurIPS. 2026–2037.
Stephen A. Cook. 1971. The complexity of theorem-proving procedures. In STOC. 151–158.

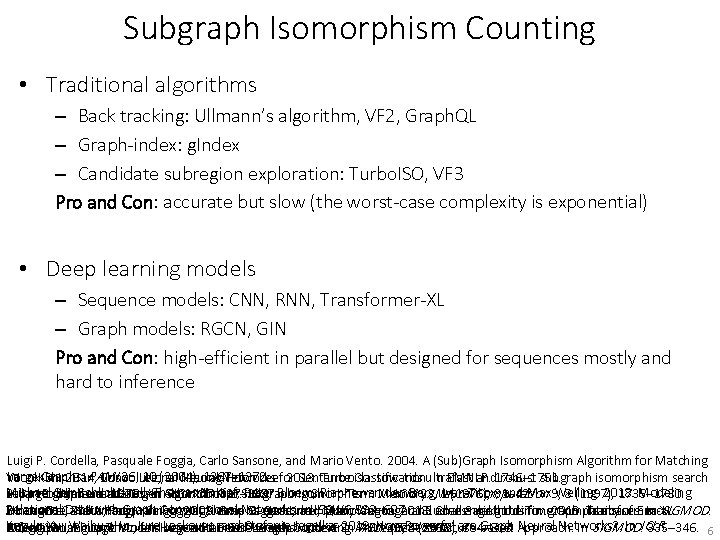

Subgraph Isomorphism Counting
• Traditional algorithms
– Backtracking: Ullmann’s algorithm, VF2, GraphQL
– Graph-index: gIndex
– Candidate subregion exploration: TurboISO, VF3
Pros and cons: accurate but slow (the worst-case complexity is exponential)
• Deep learning models
– Sequence models: CNN, RNN, Transformer-XL
– Graph models: RGCN, GIN
Pros and cons: highly efficient when run in parallel, but mostly designed for sequences, and inference is hard

Julian R. Ullmann. 1976. An Algorithm for Subgraph Isomorphism. J. ACM 23, 1 (1976), 31–42.
Luigi P. Cordella, Pasquale Foggia, Carlo Sansone, and Mario Vento. 2004. A (Sub)Graph Isomorphism Algorithm for Matching Large Graphs. PAMI 26, 10 (2004), 1367–1372.
Huahai He and Ambuj K. Singh. 2008. Graphs-at-a-time: query language and access methods for graph databases. In SIGMOD. 405–418.
Xifeng Yan, Philip S. Yu, and Jiawei Han. 2004. Graph Indexing: A Frequent Structure-based Approach. In SIGMOD. 335–346.
Wook-Shin Han, Jinsoo Lee, and Jeong-Hoon Lee. 2013. Turboiso: towards ultrafast and robust subgraph isomorphism search in large graph databases. In SIGMOD. 337–348.
Vincenzo Carletti, Pasquale Foggia, Alessia Saggese, and Mario Vento. 2018. Challenging the Time Complexity of Exact Subgraph Isomorphism for Huge and Dense Graphs with VF3. PAMI 40, 4 (2018), 804–818.
Yoon Kim. 2014. Convolutional Neural Networks for Sentence Classification. In EMNLP. 1746–1751.
Sepp Hochreiter and Jürgen Schmidhuber. 1997. Long Short-Term Memory. Neural Computation 9, 8 (1997), 1735–1780.
Zihang Dai, Zhilin Yang, Yiming Yang, Jaime G. Carbonell, Quoc Viet Le, and Ruslan Salakhutdinov. 2019. Transformer-XL: Attentive Language Models beyond a Fixed-Length Context. In ACL. 2978–2988.
Michael Sejr Schlichtkrull, Thomas N. Kipf, Peter Bloem, Rianne van den Berg, Ivan Titov, and Max Welling. 2018. Modeling Relational Data with Graph Convolutional Networks. In ESWC. 593–607.
Keyulu Xu, Weihua Hu, Jure Leskovec, and Stefanie Jegelka. 2019. How Powerful are Graph Neural Networks? In ICLR.
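To make the "accurate but slow" trade-off of backtracking concrete, here is a minimal sketch of a brute-force counter. It is not Ullmann's algorithm or VF2 (it has none of their pruning rules); it assumes directed, unlabeled graphs and counts injective node mappings that send every pattern edge to a graph edge, so automorphic matches are counted separately. The function name and argument layout are illustrative assumptions.

```python
from typing import Dict, List, Set, Tuple

Edge = Tuple[int, int]


def count_subgraph_isomorphisms(
    pattern_edges: List[Edge],
    num_pattern_nodes: int,
    graph_edges: List[Edge],
    num_graph_nodes: int,
) -> int:
    """Count injective mappings pattern -> graph that preserve every pattern edge."""
    graph_edge_set: Set[Edge] = set(graph_edges)
    mapping: Dict[int, int] = {}  # pattern node -> graph node
    used: Set[int] = set()        # graph nodes already taken

    def consistent(p_node: int, g_node: int) -> bool:
        # Only edges touching p_node can become newly violated.
        trial = {**mapping, p_node: g_node}
        for u, v in pattern_edges:
            if (u == p_node or v == p_node) and u in trial and v in trial:
                if (trial[u], trial[v]) not in graph_edge_set:
                    return False
        return True

    def backtrack(p_node: int) -> int:
        if p_node == num_pattern_nodes:
            return 1  # every pattern node is mapped: one match found
        total = 0
        for g_node in range(num_graph_nodes):
            if g_node in used or not consistent(p_node, g_node):
                continue
            mapping[p_node] = g_node
            used.add(g_node)
            total += backtrack(p_node + 1)
            used.remove(g_node)
            del mapping[p_node]
        return total

    return backtrack(0)


if __name__ == "__main__":
    triangle = [(0, 1), (1, 2), (2, 0)]
    # A single directed edge matches each of the three triangle edges.
    print(count_subgraph_isomorphisms([(0, 1)], 2, triangle, 3))
```

The search tries up to one graph node per pattern node at every level, which is where the exponential worst case comes from.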

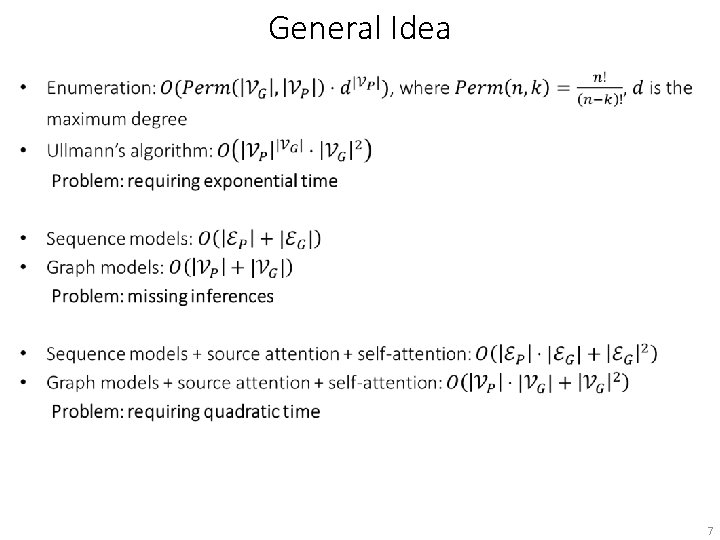

General Idea

General Idea
[Figure: model overview. Labels: pattern, graph, pattern-attention, graph-attention, memory, Memory + Attention, Pred. FFN, counting]
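A minimal sketch of how a fixed-size memory could read from the pattern and the graph through attention and then feed a prediction layer. Everything here is an illustrative assumption: the function names, the residual updates, the number of steps, and the single linear output that stands in for the prediction FFN. It is not the authors' exact formulation. Because the number of memory slots is fixed, each attention call costs time linear in the number of pattern or graph elements.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attend(queries: np.ndarray, keys_values: np.ndarray) -> np.ndarray:
    """Scaled dot-product attention: each query row reads from keys_values."""
    d = queries.shape[-1]
    scores = queries @ keys_values.T / np.sqrt(d)   # (num_queries, num_items)
    return softmax(scores, axis=-1) @ keys_values   # (num_queries, d)


def memory_count_sketch(
    pattern_repr: np.ndarray,  # (|P|, d) pattern representation
    graph_repr: np.ndarray,    # (|G|, d) graph representation
    memory: np.ndarray,        # (M, d) fixed number of memory slots
    w_out: np.ndarray,         # (M * d,) hypothetical linear prediction layer
    num_steps: int = 3,
) -> float:
    for _ in range(num_steps):
        memory = memory + attend(memory, pattern_repr)  # pattern-attention
        memory = memory + attend(memory, graph_repr)    # graph-attention
    return float(memory.reshape(-1) @ w_out)            # predicted count (scalar)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pattern = rng.normal(size=(4, 8))
    graph = rng.normal(size=(50, 8))
    mem = rng.normal(size=(3, 8))
    w = rng.normal(size=(24,))
    print(memory_count_sketch(pattern, graph, mem, w))
```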

General Idea

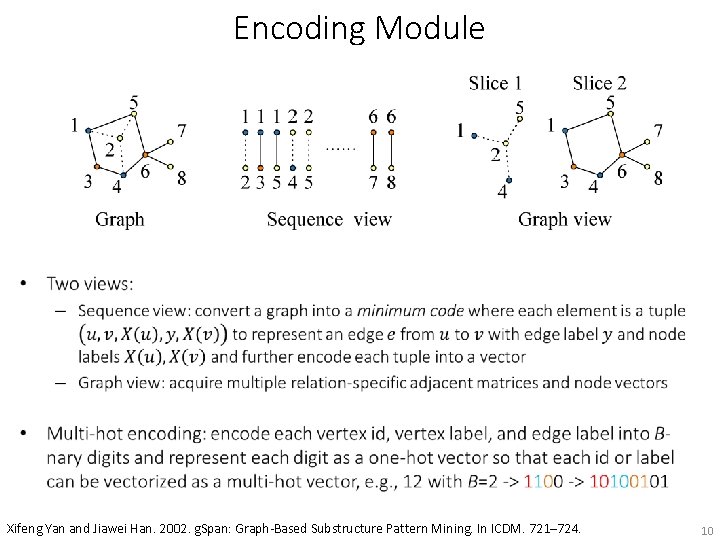

Encoding Module

Xifeng Yan and Jiawei Han. 2002. gSpan: Graph-Based Substructure Pattern Mining. In ICDM. 721–724.
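Sequence models need the pattern and the graph as a sequence. One possible encoding, sketched below, writes each edge as a tuple and sorts the tuples into a fixed order. The 5-tuple layout `(u, v, label_u, label_e, label_v)` and the function name are illustrative assumptions and may differ from the encoding shown on this slide.

```python
from typing import List, Sequence, Tuple

LabeledEdge = Tuple[int, int, int]            # (source, target, edge label)
EdgeCode = Tuple[int, int, int, int, int]     # (u, v, label_u, label_e, label_v)


def graph_to_edge_sequence(
    edges: Sequence[LabeledEdge], node_labels: Sequence[int]
) -> List[EdgeCode]:
    """Turn a labeled directed graph into a lexicographically ordered edge sequence."""
    codes = [
        (u, v, node_labels[u], e_label, node_labels[v])
        for u, v, e_label in edges
    ]
    return sorted(codes)  # tuples compare field by field, so the order is deterministic


if __name__ == "__main__":
    edges = [(1, 0, 7), (0, 1, 5)]
    node_labels = [2, 3]
    print(graph_to_edge_sequence(edges, node_labels))
```

Sorting makes the sequence independent of the order in which edges were listed, though it still depends on how the nodes are numbered.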

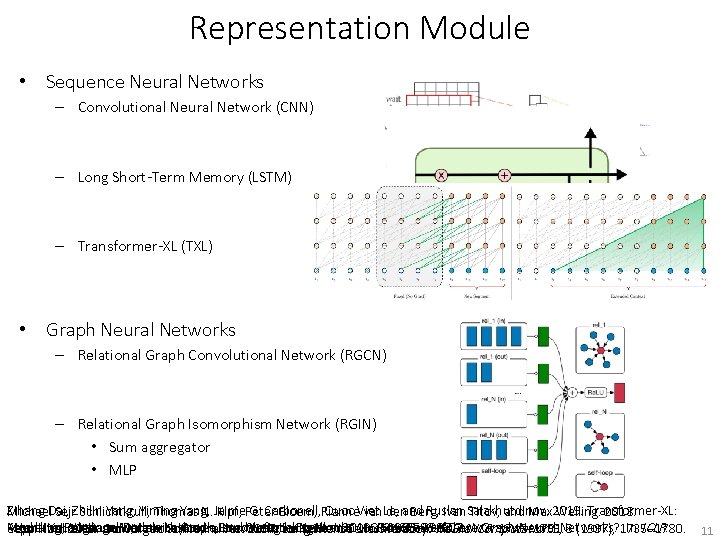

Representation Module
• Sequence Neural Networks
– Convolutional Neural Network (CNN)
– Long Short-Term Memory (LSTM)
– Transformer-XL (TXL)
• Graph Neural Networks
– Relational Graph Convolutional Network (RGCN)
– Relational Graph Isomorphism Network (RGIN)
    • Sum aggregator
    • MLP

Yoon Kim. 2014. Convolutional Neural Networks for Sentence Classification. In EMNLP. 1746–1751.
Sepp Hochreiter and Jürgen Schmidhuber. 1997. Long Short-Term Memory. Neural Computation 9, 8 (1997), 1735–1780.
Zihang Dai, Zhilin Yang, Yiming Yang, Jaime G. Carbonell, Quoc Viet Le, and Ruslan Salakhutdinov. 2019. Transformer-XL: Attentive Language Models beyond a Fixed-Length Context. In ACL. 2978–2988.
Michael Sejr Schlichtkrull, Thomas N. Kipf, Peter Bloem, Rianne van den Berg, Ivan Titov, and Max Welling. 2018. Modeling Relational Data with Graph Convolutional Networks. In ESWC. 593–607.
Keyulu Xu, Weihua Hu, Jure Leskovec, and Stefanie Jegelka. 2019. How Powerful are Graph Neural Networks? In ICLR.
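The "sum aggregator" and "MLP" items correspond to the GIN update of Xu et al., in which a node sums its neighbors' features and passes the result through an MLP. The sketch below adds one weight matrix per edge type to that update. How the relational weights are combined with the GIN update here is an assumption for illustration, as are the function name and the two-layer MLP without biases.

```python
import numpy as np
from typing import List, Tuple


def rgin_layer_sketch(
    h: np.ndarray,                        # (N, d) node features
    edges: List[Tuple[int, int, int]],    # (source, target, relation id)
    rel_weights: np.ndarray,              # (R, d, d) one matrix per relation (assumed)
    w1: np.ndarray,                       # (d, hidden) first MLP layer
    w2: np.ndarray,                       # (hidden, d_out) second MLP layer
    eps: float = 0.0,
) -> np.ndarray:
    agg = np.zeros_like(h)
    for src, dst, rel in edges:
        # Sum aggregator: add the relation-transformed message to the target node.
        agg[dst] += h[src] @ rel_weights[rel]
    z = (1.0 + eps) * h + agg             # GIN-style combination with the node itself
    return np.maximum(z @ w1, 0.0) @ w2   # two-layer MLP with ReLU


if __name__ == "__main__":
    h = np.eye(2)
    out = rgin_layer_sketch(h, [(0, 1, 0)], np.eye(2)[None], np.eye(2), np.eye(2))
    print(out)
```

Summation, unlike mean or max pooling, keeps the multiplicity of identical neighbor features, which matters when the target is a count.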

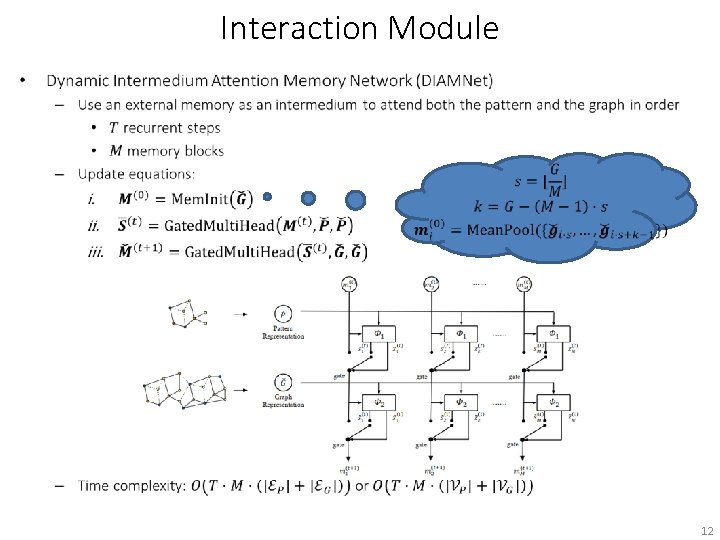

Interaction Module

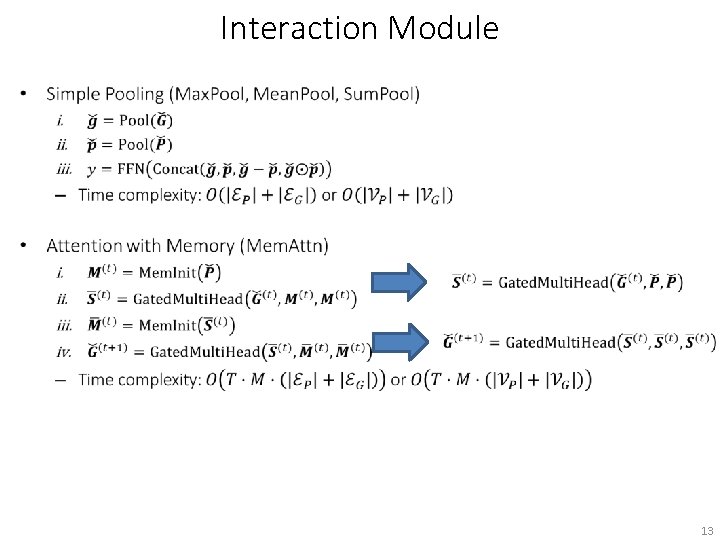

Interaction Module

Datasets
• Two synthetic datasets and one real-life dataset

Synthetic Datasets
• Synthetic dataset generation
– Random graph generation would be preferable, but the running time of traditional algorithms cannot be guaranteed on random graphs
– Given a pattern, add nodes and edges to a graph while controlling whether each newly formed subgraph is isomorphic to the pattern, with the help of the neighborhood equivalence class (NEC) from TurboISO. Highly efficient traditional algorithms can then search the candidate regions in parallel to obtain the ground truth.

| Setting | Small | Large |
|---|---|---|
| Pattern nodes | {3, 4, 8} | {3, 4, 8, 16} |
| Pattern edges | {2, 4, 8} | {2, 4, 8, 16} |
| Pattern node labels | {2, 4, 8} | {2, 4, 8, 16} |
| Pattern edge labels | {2, 4, 8} | {2, 4, 8, 16} |
| Graph nodes | {8, 16, 32, 64} | {64, 128, 256, 512} |
| Graph edges | {8, 16, …, 256} | {64, 128, …, 2048} |
| Graph node labels | {4, 8, 16} | {16, 32, 64} |
| Graph edge labels | {4, 8, 16} | {16, 32, 64} |

Wook-Shin Han, Jinsoo Lee, and Jeong-Hoon Lee. 2013. Turboiso: towards ultrafast and robust subgraph isomorphism search in large graph databases. In SIGMOD. 337–348.

Results on the small dataset
• For each model, bars of the same color show the performance of Pool, MemAttn, and DIAMNet
[Figure: bar chart. Legend: Pool, MemAttn, DIAMNet]

MaxPool vs. DIAMNet
• Orange bars are averages of the ground truth; blue bars are averages of the predictions

MaxPool vs. DIAMNet
[Figure: two panels, MaxPool and DIAMNet]

Results on the large dataset

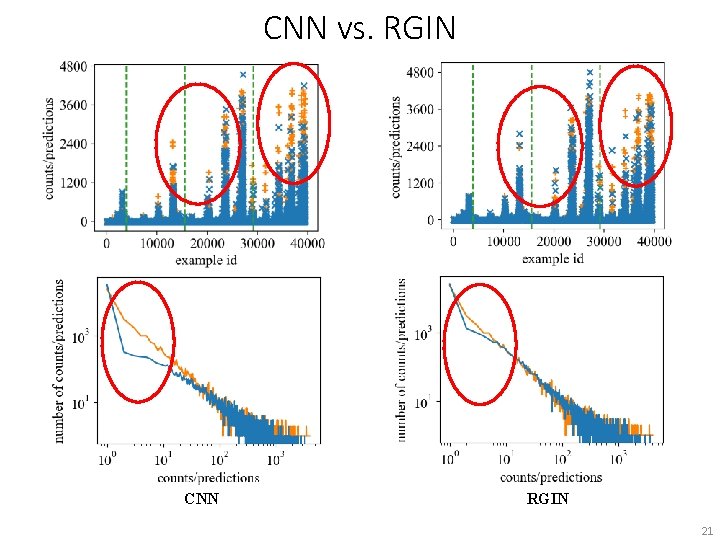

CNN vs. RGIN

CNN vs. RGIN
[Figure: two panels, CNN and RGIN]

Time Cost
• When the pattern becomes two times larger and the graph becomes 7.4 times larger on average, the time cost of the neural models increases linearly, while that of VF2 increases exponentially, from ~110 seconds to ~5000 seconds.
• 10–1,000× faster

Luigi P. Cordella, Pasquale Foggia, Carlo Sansone, and Mario Vento. 2004. A (Sub)Graph Isomorphism Algorithm for Matching Large Graphs. PAMI 26, 10 (2004), 1367–1372.

MUTAG Dataset
• Randomly generate 6 different homogeneous patterns and 18 heterogeneous patterns with three or four nodes
• training : valid : test = 1,488 : 1,512

Transfer Learning from Synthetic Data to MUTAG
• Pretraining neural models on synthetic data further improves performance and speeds up convergence
• DIAMNet-Transfer ends up as the best model, achieving 1.307 RMSE and 0.440 MAE
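For reference, the two error metrics reported here can be computed as follows. This is a plain-Python sketch of the standard definitions of RMSE and MAE; the function names and the toy numbers in the usage example are illustrative and are not results from the experiments.

```python
import math
from typing import Sequence


def rmse(pred: Sequence[float], true: Sequence[float]) -> float:
    """Root mean squared error: penalizes large count errors more heavily."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true))


def mae(pred: Sequence[float], true: Sequence[float]) -> float:
    """Mean absolute error: average size of the count error."""
    return sum(abs(p - t) for p, t in zip(pred, true)) / len(true)


if __name__ == "__main__":
    predicted_counts = [1.0, 2.0, 3.0]
    true_counts = [1.0, 2.0, 5.0]
    print(rmse(predicted_counts, true_counts), mae(predicted_counts, true_counts))
```

RMSE is never smaller than MAE on the same data, so a large gap between the two points to a few badly mispredicted pairs.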

Conclusion
• This is the first work to model subgraph isomorphism counting as a learning task in which both training and inference have linear time complexity.
• We exploit the representation power of different deep neural network architectures under an end-to-end learning framework, including CNN, RNN, Transformer-XL, RGCN, and the more powerful RGIN.
• We design the Dynamic Intermedium Attention Memory Network (DIAMNet), which uses attention for inference while keeping the time cost linear. It outperforms both simple pooling and attention with memory, and the idea can be generalized to many other tasks.
• We conduct extensive experiments on two synthetic datasets and a well-known real benchmark dataset. They demonstrate that our framework achieves good results on relatively large graphs and patterns, and that transfer learning from synthetic data helps on real-life data.

Thanks
Email: xliucr@cse.ust.hk
Paper: https://arxiv.org/abs/1912.11589
Code: https://github.com/HKUSTKnowComp/NeuralSubgraphCounting
