MPI (Message Passing Interface): message passing library standard

MPI (Message Passing Interface)
• Message passing library standard developed by a group of academic and industrial partners to foster more widespread use and portability.
• Defines routines, not implementation.
• Several free implementations exist.
COMPE 472 Parallel Computing 2.1

MPI (Message Passing Interface) Implementations
• MVAPICH
• MPICH2
• IBM MPI Library
• Open MPI (http://www.open-mpi.org/)

MPI Process Creation and Execution
• Only static process creation is supported in MPI version 1. All processes must be defined prior to execution and started together.
• Originally based on the SPMD (single program, multiple data) model of computation.
• MPMD (multiple program, multiple data) is also possible with static creation: each program to be started together is specified.

Communicators
• A communicator defines the scope of a communication operation.
• Processes have ranks associated with a communicator.
• Initially, all processes are enrolled in a “universe” called MPI_COMM_WORLD, and each process is given a unique rank, a number from 0 to p - 1, with p processes.

![Using SPMD Computational Model](http://slidetodoc.com/presentation_image_h2/318465dc81cc82963de3b52fb2665a34/image-5.jpg)

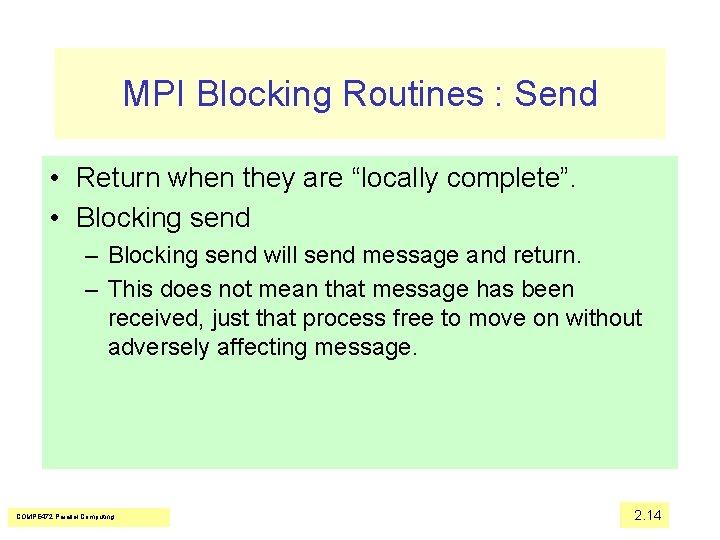

Using SPMD Computational Model

    #include <mpi.h>

    void master(void), slave(void);   /* defined elsewhere */

    int main(int argc, char *argv[])
    {
        int myrank;
        MPI_Init(&argc, &argv);
        /* . . . */
        MPI_Comm_rank(MPI_COMM_WORLD, &myrank);   /* find process rank */
        if (myrank == 0)
            master();
        else
            slave();
        /* . . . */
        MPI_Finalize();
        return 0;
    }

where master() and slave() are to be executed by the master process and the slave processes, respectively.

Global and Local Variables
• Any global declarations of variables will be duplicated in each process.
• Variables that are not to be duplicated need to be declared within code executed only by that process.

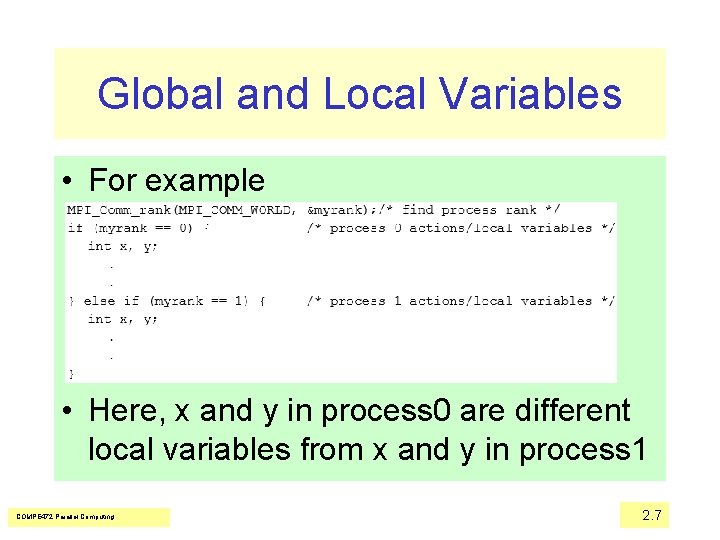

Global and Local Variables
• In the example shown in the figure, x and y in process 0 are different local variables from x and y in process 1.
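The distinction can be sketched in plain C. This is an illustration only, not the slide's own figure: the function local_sum, the global calls, and all values are hypothetical, and the rank is passed in as a parameter so the sketch runs without MPI.

```c
int calls = 0;   /* global: under MPI, every process holds its own copy */

/* Hypothetical example: returns x + y as computed by the process
   with the given rank. */
int local_sum(int myrank)
{
    calls++;                    /* changes only the calling process's copy */
    if (myrank == 0) {
        int x = 1, y = 2;       /* exist only in the branch run by process 0 */
        return x + y;
    } else if (myrank == 1) {
        int x = 10, y = 20;     /* separate variables, private to process 1 */
        return x + y;
    }
    return 0;                   /* other ranks declare no x or y */
}
```

In a real MPI program, myrank would come from MPI_Comm_rank(), and each process would execute only its own branch.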

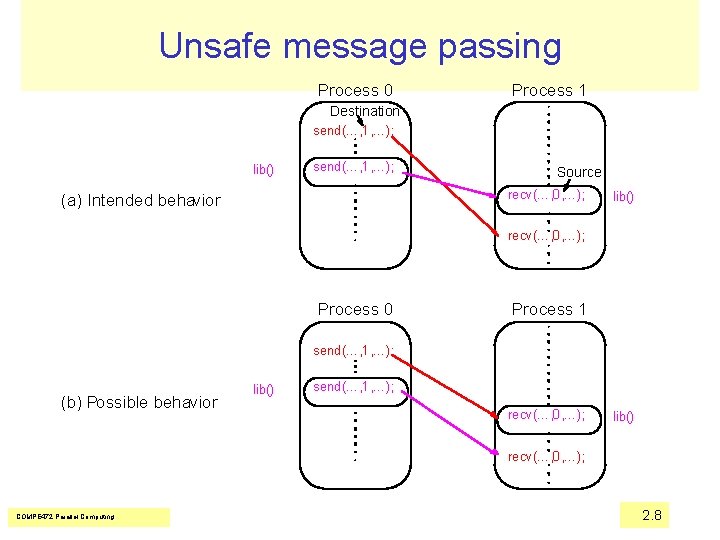

Unsafe message passing
Figure: process 0 issues send(…, 1, …) both directly and inside the library routine lib(); process 1 issues recv(…, 0, …) both directly and inside lib().
(a) Intended behavior: the user-level send is matched by the user-level recv, and the send inside lib() by the recv inside lib().
(b) Possible behavior: the user-level message is matched by the recv inside lib(), and the library message by the user-level recv.

Unsafe message passing
• In this figure, process 0 wishes to send a message to process 1, but there is also message passing between library routines as shown.
• Even though each send/recv pair has matching source and destination, incorrect message passing can occur, because nothing distinguishes the user's messages from the library's.

MPI Solution: “Communicators”
• A communicator defines a communication domain: a set of processes that are allowed to communicate among themselves.
• Communication domains of libraries can be separated from that of a user program.
• Used in all point-to-point and collective MPI message-passing communications.
• Each process has a rank within the communicator, an integer from 0 to n - 1, where there are n processes.

Communicator Types
• Intracommunicator – for communication within a group.
• Intercommunicator – for communication between groups.
• A group defines a collection of processes for these purposes. A process has a unique rank in a group.
• A process can be a member of more than one group.

Default Communicator MPI_COMM_WORLD
• Exists as the first communicator for all processes in the application.
• New communicators are created from existing communicators.
• A set of MPI routines exists for forming communicators from existing communicators.

MPI Point-to-Point Communication
• Message tags are present, and wild cards can be used in receive routines in place of the tag (MPI_ANY_TAG) and in place of the source (MPI_ANY_SOURCE).

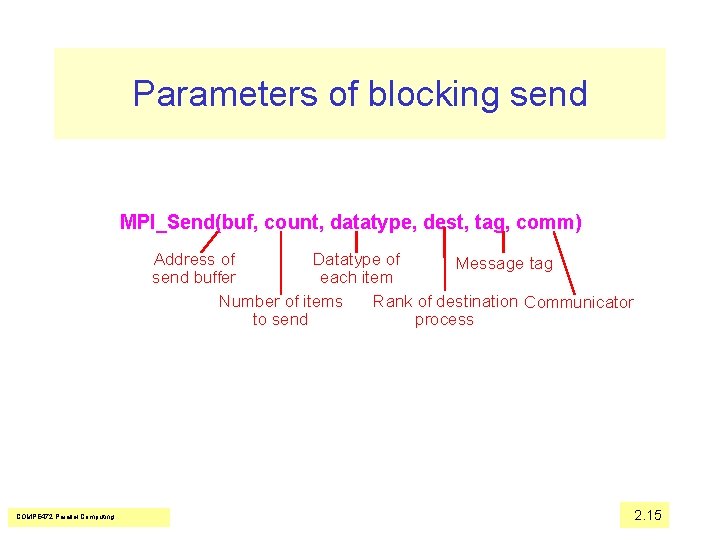

MPI Blocking Routines: Send
• Blocking routines return when they are “locally complete”.
• Blocking send
  – A blocking send will send the message and return.
  – This does not mean that the message has been received, just that the process is free to move on without adversely affecting the message.

Parameters of blocking send
MPI_Send(buf, count, datatype, dest, tag, comm)
• buf: address of send buffer
• count: number of items to send
• datatype: datatype of each item
• dest: rank of destination process
• tag: message tag
• comm: communicator

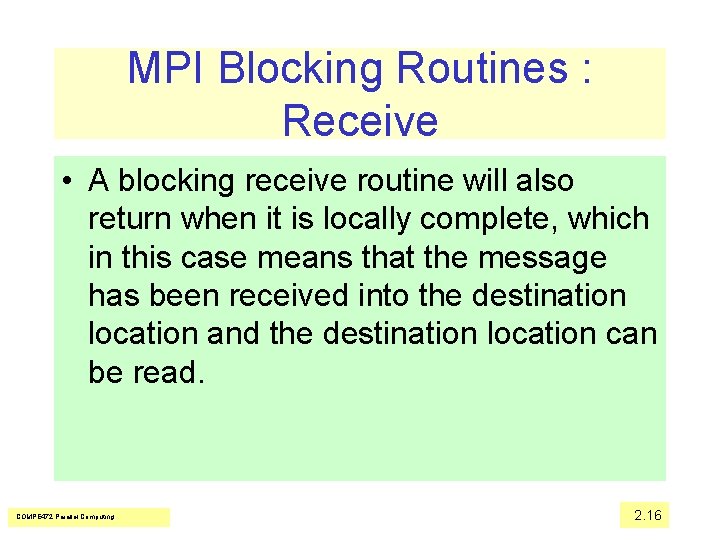

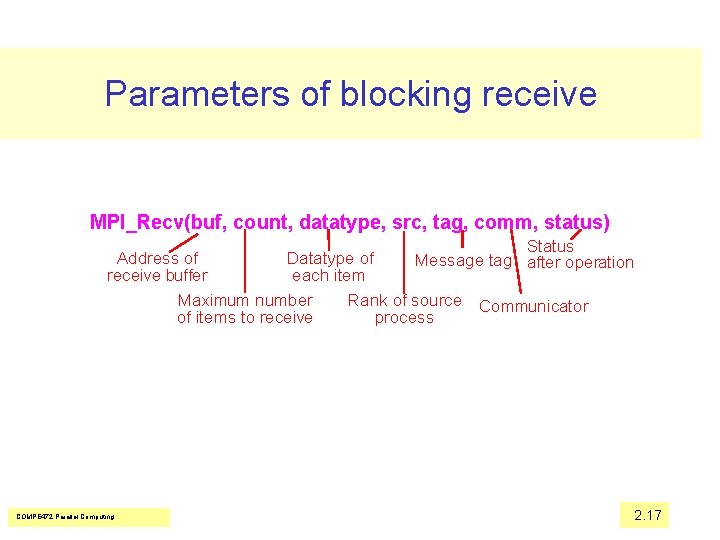

MPI Blocking Routines: Receive
• A blocking receive routine also returns when it is locally complete, which in this case means that the message has been received into the destination location and the destination location can be read.

Parameters of blocking receive
MPI_Recv(buf, count, datatype, src, tag, comm, status)
• buf: address of receive buffer
• count: maximum number of items to receive
• datatype: datatype of each item
• src: rank of source process
• tag: message tag
• comm: communicator
• status: status after operation

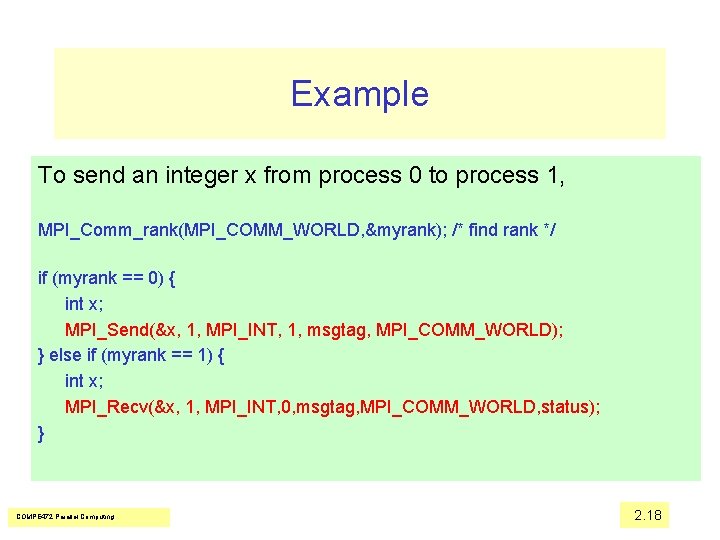

Example
To send an integer x from process 0 to process 1:

    MPI_Comm_rank(MPI_COMM_WORLD, &myrank);   /* find rank */
    if (myrank == 0) {
        int x;   /* value to send; assumed to be assigned before the call */
        MPI_Send(&x, 1, MPI_INT, 1, msgtag, MPI_COMM_WORLD);
    } else if (myrank == 1) {
        int x;
        MPI_Status status;
        MPI_Recv(&x, 1, MPI_INT, 0, msgtag, MPI_COMM_WORLD, &status);
    }

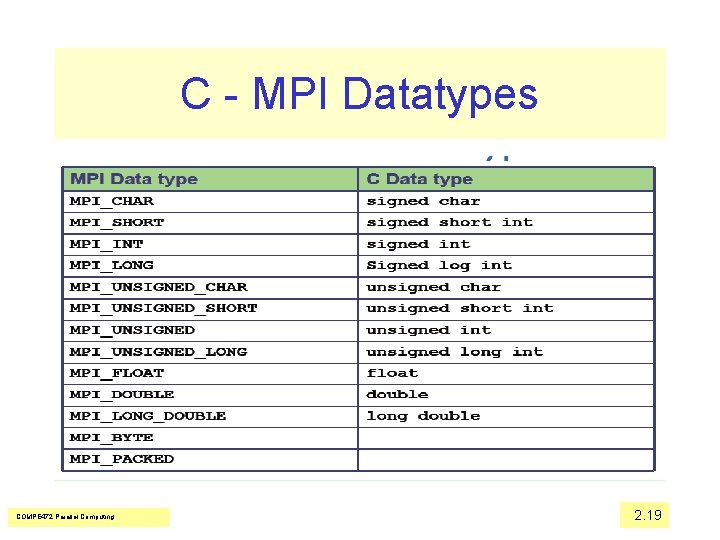

C - MPI Datatypes

MPI Nonblocking Routines
• A nonblocking routine returns immediately; that is, it allows the next statement to execute, whether or not the routine is locally complete.
  – Nonblocking send, MPI_Isend(), will return “immediately”, even before the source location is safe to be altered.
  – Nonblocking receive, MPI_Irecv(), will return even if there is no message to accept.

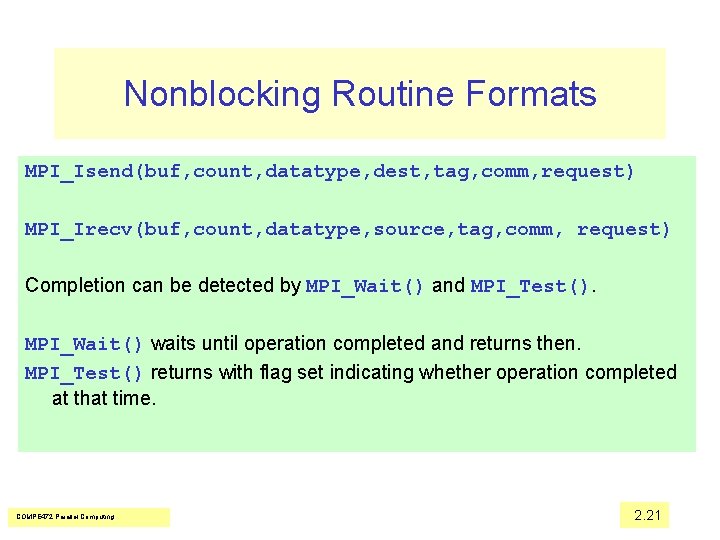

Nonblocking Routine Formats
MPI_Isend(buf, count, datatype, dest, tag, comm, request)
MPI_Irecv(buf, count, datatype, source, tag, comm, request)
• Completion can be detected by MPI_Wait() and MPI_Test().
• MPI_Wait() waits until the operation has completed and then returns.
• MPI_Test() returns immediately with a flag set indicating whether the operation had completed at that time.

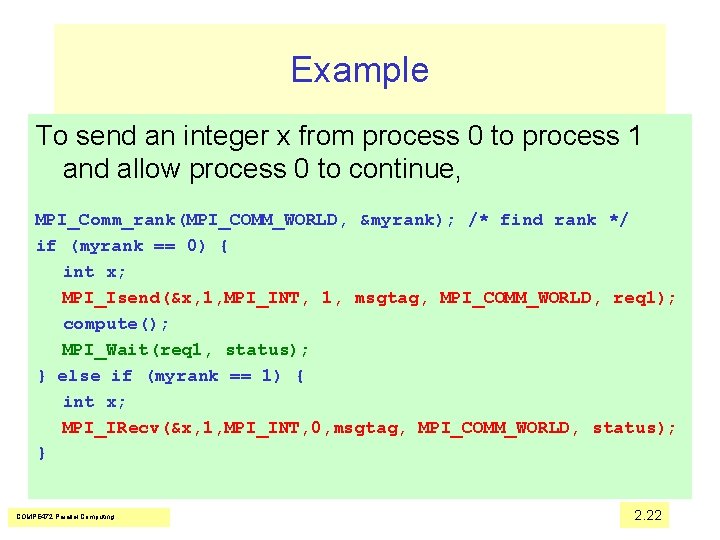

Example
To send an integer x from process 0 to process 1 and allow process 0 to continue:

    MPI_Comm_rank(MPI_COMM_WORLD, &myrank);   /* find rank */
    if (myrank == 0) {
        int x;   /* assumed assigned before the call; do not modify until MPI_Wait() returns */
        MPI_Request req1;
        MPI_Status status;
        MPI_Isend(&x, 1, MPI_INT, 1, msgtag, MPI_COMM_WORLD, &req1);
        compute();                  /* overlaps with the send */
        MPI_Wait(&req1, &status);   /* x may be reused after this returns */
    } else if (myrank == 1) {
        int x;
        MPI_Status status;
        MPI_Recv(&x, 1, MPI_INT, 0, msgtag, MPI_COMM_WORLD, &status);   /* blocking receive */
    }
