Monitoring Status and Progress
Alberto AIMAR, CERN (for the IT-CM Monitoring and Messaging section)

DC and Grid Monitoring
“Grid” and “WLCG” are synonyms at CERN

Outline
Grid Testing
• HammerCloud
• Experiments Test Framework
• Network Transfers and Throughput Monitoring
• Data Centres and Grid Monitoring

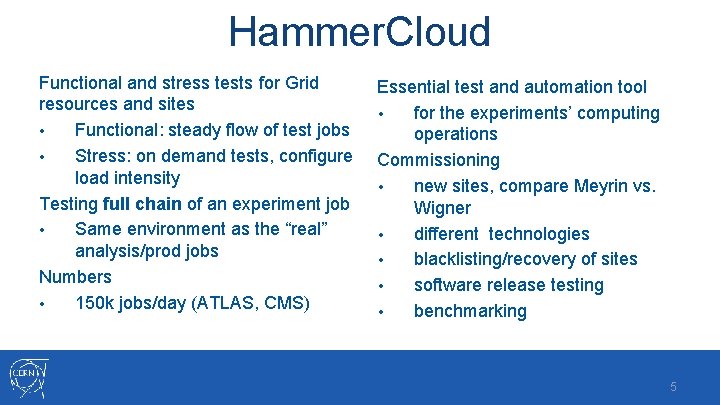

HammerCloud
Functional and stress tests for Grid resources and sites
• Functional: steady flow of test jobs
• Stress: on-demand tests with configurable load intensity
Testing the full chain of an experiment job
• Same environment as the “real” analysis/production jobs
Numbers
• 150k jobs/day (ATLAS, CMS)
Essential test and automation tool
• for the experiments’ computing operations
Commissioning
• new sites, compare Meyrin vs. Wigner
• different technologies
• blacklisting/recovery of sites
• software release testing
• benchmarking

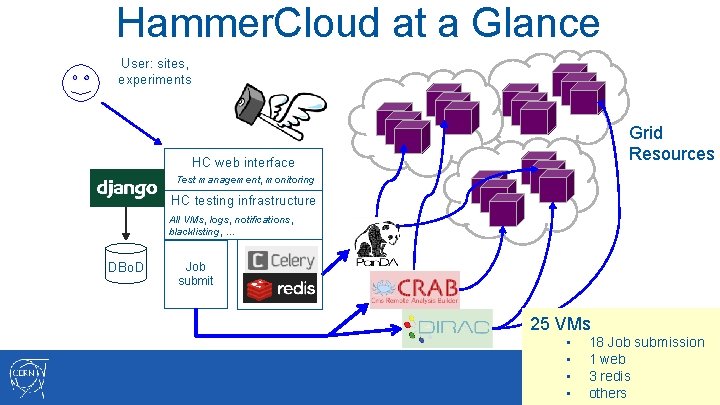

HammerCloud at a Glance
• Users: sites, experiments
• Grid resources
• HC web interface: test management, monitoring
• HC testing infrastructure: all VMs, logs, notifications, blacklisting, …
• DBoD
• Job submit
25 VMs
• 18 job submission
• 1 web
• 3 redis
• 6 others

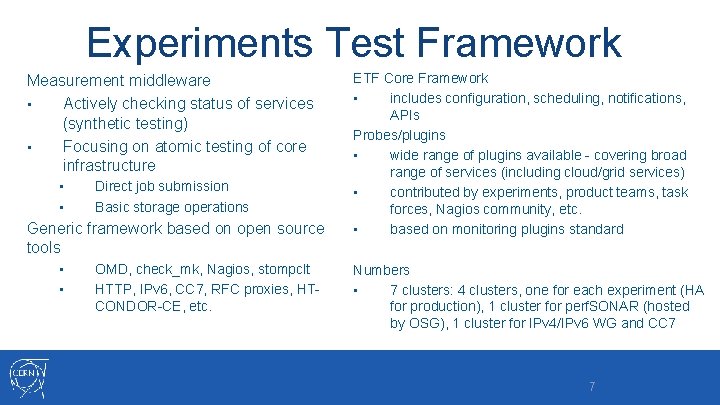

Experiments Test Framework
Measurement middleware
• Actively checking status of services (synthetic testing)
• Focusing on atomic testing of core infrastructure: direct job submission, basic storage operations
Generic framework based on open source tools
• OMD, check_mk, Nagios, stompclt
• HTTP, IPv6, CC7, RFC proxies, HTCondor-CE, etc.
ETF Core Framework
• includes configuration, scheduling, notifications, APIs
Probes/plugins
• wide range of plugins available, covering a broad range of services (including cloud/grid services)
• contributed by experiments, product teams, task forces, Nagios community, etc.
• based on the monitoring plugins standard
Numbers
• 7 clusters: 4 clusters, one for each experiment (HA for production), 1 cluster for perfSONAR (hosted by OSG), 1 cluster for IPv4/IPv6 WG and CC7
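The "monitoring plugins standard" mentioned above fixes a small contract: a probe prints one status line (optionally followed by `|` and performance data) and reports its result through the exit code (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN). A minimal sketch of such a probe is shown below. The function name `check_tcp`, the thresholds and the injectable `connect`/`clock` parameters are assumptions made for illustration; this is not one of the actual ETF probes.

```python
import socket
import time

# Exit codes defined by the monitoring plugins convention.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def check_tcp(host, port, warn_s=1.0, timeout_s=5.0,
              connect=socket.create_connection, clock=time.monotonic):
    """Hypothetical probe: return (exit_code, status_line) for a TCP connect test.

    `connect` and `clock` are injectable so the probe can be tested offline.
    """
    start = clock()
    try:
        conn = connect((host, port), timeout=timeout_s)
    except OSError as exc:
        # Refused, unreachable, DNS failure or timeout: the service is down.
        return CRITICAL, f"CRITICAL - cannot connect to {host}:{port} ({exc})"
    conn.close()
    elapsed = clock() - start
    code = WARNING if elapsed > warn_s else OK
    # Text after '|' is performance data in the plugin output format.
    line = (f"{LABELS[code]} - connected to {host}:{port} in {elapsed:.3f}s"
            f" | time={elapsed:.3f}s")
    return code, line
```

A wrapper script would print the returned line and call `sys.exit` with the returned code, which is what a Nagios-compatible scheduler reads.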

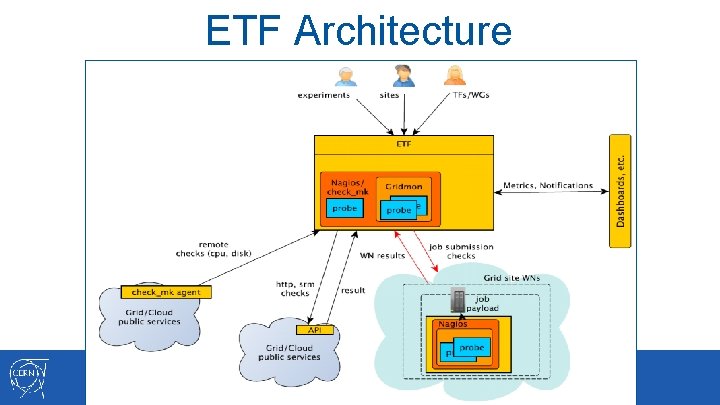

ETF Architecture

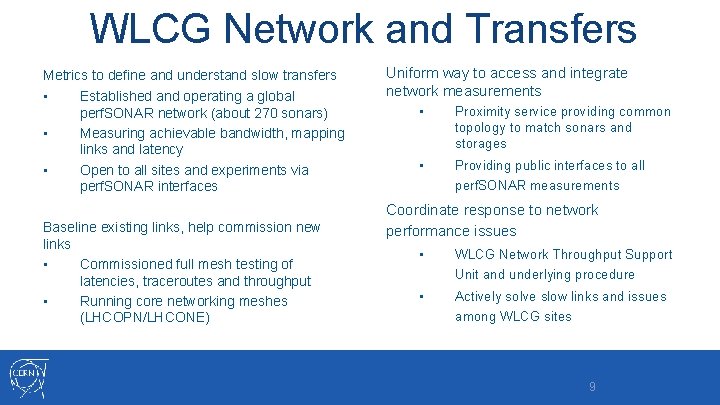

WLCG Network and Transfers
Metrics to define and understand slow transfers
• Established and operating a global perfSONAR network (about 270 sonars)
• Measuring achievable bandwidth, mapping links and latency
• Open to all sites and experiments via perfSONAR interfaces
Baseline existing links, help commission new links
• Commissioned full-mesh testing of latencies, traceroutes and throughput
• Running core networking meshes (LHCOPN/LHCONE)
Uniform way to access and integrate network measurements
• Proximity service providing common topology to match sonars and storages
• Providing public interfaces to all perfSONAR measurements
Coordinate response to network performance issues
• WLCG Network Throughput Support Unit and underlying procedure
• Actively solve slow links and issues among WLCG sites

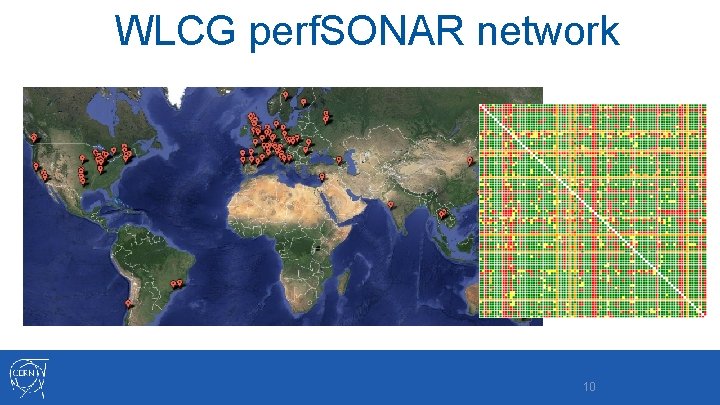

WLCG perfSONAR network

Outline
Grid Testing
• HammerCloud
• Experiments Test Framework
• Network Throughput Monitoring
• Data Centres and Grid Monitoring

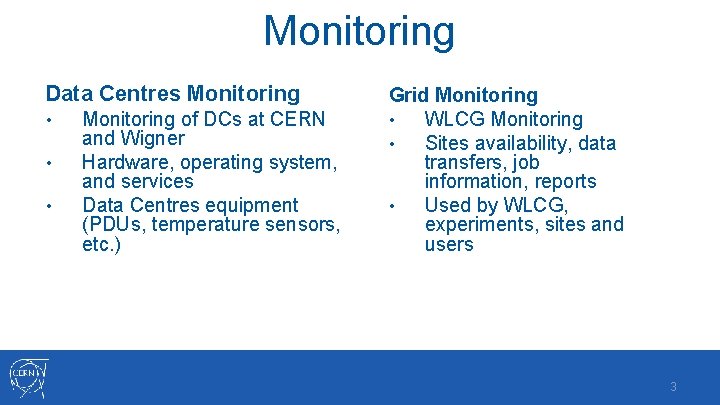

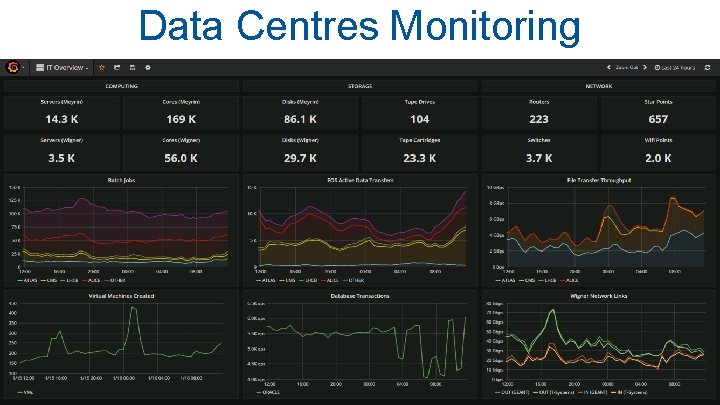

Monitoring
Data Centres Monitoring
• Monitoring of DCs at CERN and Wigner
• Hardware, operating system, and services
• Data Centres equipment (PDUs, temperature sensors, etc.)
Grid Monitoring
• WLCG Monitoring
• Sites availability, data transfers, job information, reports
• Used by WLCG, experiments, sites and users

Data Centres Monitoring

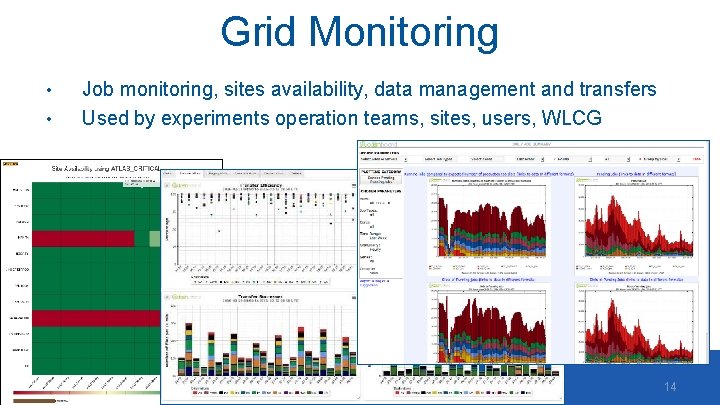

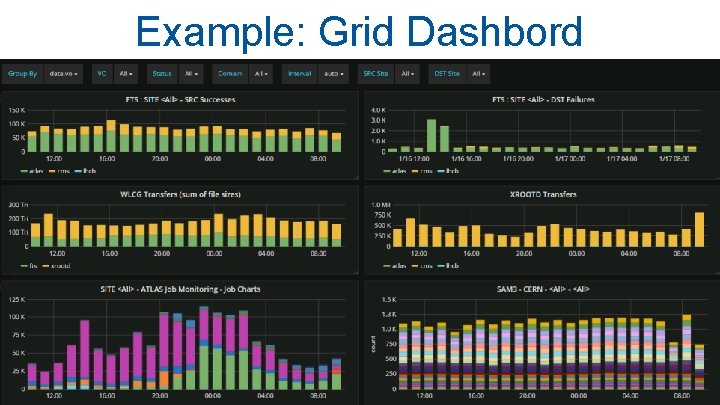

Grid Monitoring
• Job monitoring, sites availability, data management and transfers
• Used by experiments operation teams, sites, users, WLCG

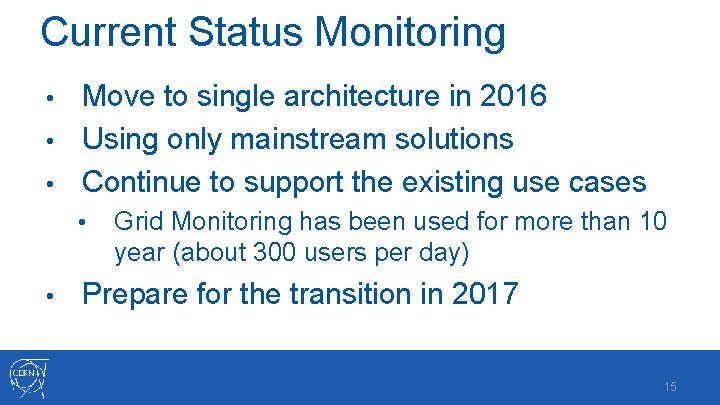

Current Status
Monitoring
• Move to a single architecture in 2016
• Using only mainstream solutions
• Continue to support the existing use cases
• Grid Monitoring has been used for more than 10 years (about 300 users per day)
• Prepare for the transition in 2017

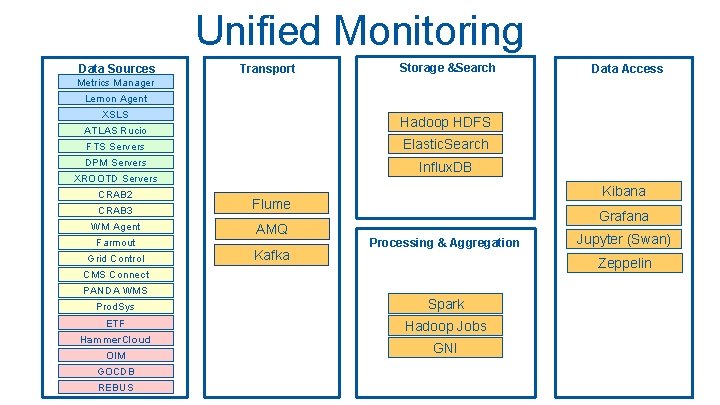

Unified Monitoring
Data Sources
• Metrics Manager, Lemon Agent, XSLS
• ATLAS Rucio, FTS Servers, DPM Servers, XROOTD Servers
• CRAB2, CRAB3, WM Agent, Farmout, Grid Control, CMS Connect, PANDA WMS, ProdSys
• ETF, HammerCloud, OIM, GOCDB, REBUS
Transport
• Flume, AMQ, Kafka
Storage & Search
• Hadoop HDFS, Elasticsearch, InfluxDB
Processing & Aggregation
• Spark, Hadoop Jobs, GNI
Data Access
• Kibana, Grafana, Zeppelin, Jupyter (Swan)

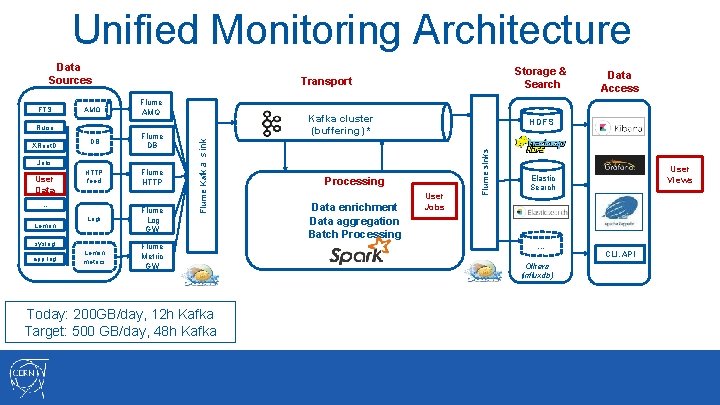

Unified Monitoring Architecture
Data Sources
• FTS, Rucio, XRootD, Jobs, User Data, …
• Lemon, syslog, app log
• Inputs: AMQ, DB, HTTP feed, Logs, Lemon metrics
Transport
• Flume gateways: Flume AMQ, Flume DB, Flume HTTP, Flume Log GW, Flume Metric GW
• Flume Kafka sink
• Kafka cluster (buffering) *
• Today: 200 GB/day, 12 h Kafka
• Target: 500 GB/day, 48 h Kafka
Processing
• Data enrichment
• Data aggregation
• Batch processing
• User Jobs
Storage & Search
• HDFS (Flume sinks)
• Elasticsearch (Flume sinks)
• Others (InfluxDB)
Data Access
• User Views
• CLI, API
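The "today" and "target" figures above imply a rough size for the Kafka buffer. The sketch below does the arithmetic under stated assumptions: "12 h Kafka" and "48 h Kafka" are read as the time window the buffer keeps data, the input rate is steady over the day, and replication and compression are ignored. The function name is hypothetical.

```python
def buffered_volume_gb(daily_rate_gb, retention_hours):
    """Data held in the buffer at steady state, before replication."""
    return daily_rate_gb * retention_hours / 24.0


today_gb = buffered_volume_gb(200, 12)    # 200 GB/day kept for 12 h -> 100.0 GB
target_gb = buffered_volume_gb(500, 48)   # 500 GB/day kept for 48 h -> 1000.0 GB
growth = target_gb / today_gb             # -> 10.0
```

Under these assumptions the target needs about ten times the buffered volume of today's setup: 2.5 times the rate multiplied by 4 times the window.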

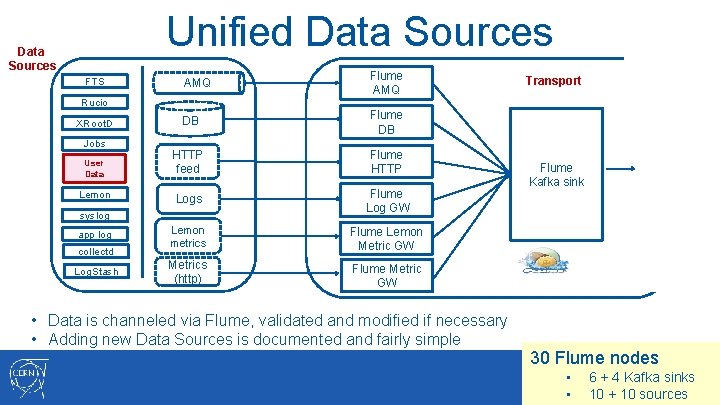

Unified Data Sources
Data Sources
• FTS, Rucio, XRootD, Jobs, User Data
• Lemon, syslog, app log, collectd, Logstash
• Inputs: AMQ, DB, HTTP feed, Logs, Lemon metrics, Metrics (http)
Transport
• Flume gateways: Flume AMQ, Flume DB, Flume HTTP, Flume Log GW, Flume Lemon Metric GW, Flume Metric GW
• Flume Kafka sink
• Data is channeled via Flume, validated and modified if necessary
• Adding new data sources is documented and fairly simple
30 Flume nodes
• 6 + 4 Kafka sinks
• 10 + 10 sources
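"Validated and modified if necessary" can be pictured as a small function applied to every record before it reaches Kafka. The sketch below is plain Python, not Flume code. The required fields (`producer`, `type`, `timestamp`) and the normalisation rules are assumptions chosen for illustration, not the schema actually enforced by the service.

```python
from datetime import datetime, timezone

# Hypothetical minimal schema; the real required fields may differ.
REQUIRED_FIELDS = ("producer", "type", "timestamp")


def validate_and_normalise(record):
    """Return (cleaned_record, None) or (None, reason_for_rejection)."""
    missing = [name for name in REQUIRED_FIELDS if name not in record]
    if missing:
        return None, "missing fields: " + ", ".join(missing)

    cleaned = dict(record)  # never modify the caller's record
    ts = cleaned["timestamp"]
    if isinstance(ts, str):
        # ISO 8601 string -> epoch milliseconds (naive times taken as UTC).
        try:
            parsed = datetime.fromisoformat(ts)
        except ValueError:
            return None, "unparseable timestamp: " + ts
        if parsed.tzinfo is None:
            parsed = parsed.replace(tzinfo=timezone.utc)
        ts = int(parsed.timestamp() * 1000)
    elif isinstance(ts, (int, float)) and not isinstance(ts, bool):
        # Heuristic: small values are epoch seconds, large ones milliseconds.
        ts = int(ts * 1000) if ts < 10**11 else int(ts)
    else:
        return None, "unsupported timestamp type"

    cleaned["timestamp"] = ts
    cleaned["producer"] = str(cleaned["producer"]).strip().lower()
    return cleaned, None
```

Rejected records come back with a reason, so a gateway could count or log them instead of dropping them silently.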

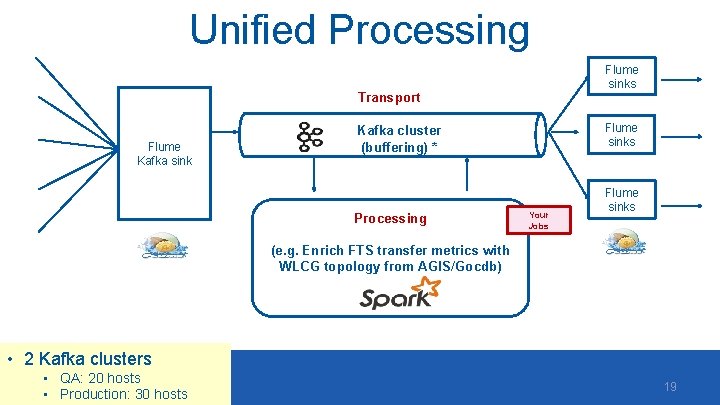

Unified Processing
Transport
• Flume Kafka sink
• Kafka cluster (buffering) *
Processing
• Your jobs (e.g. enrich FTS transfer metrics with WLCG topology from AGIS/GOCDB)
• Flume sinks
2 Kafka clusters
• QA: 20 hosts
• Production: 30 hosts
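The enrichment example above (FTS transfer metrics joined with WLCG topology) amounts to a lookup join on the site name. A minimal sketch in plain Python follows. The topology table, the site names and the `tier`/`country` attributes are invented for illustration, and only `src_site` appears elsewhere in these slides. A production job would run on the streaming platform and read the topology from AGIS/GOCDB.

```python
# Hypothetical topology extract keyed by site name.
TOPOLOGY = {
    "SITE_A": {"tier": 1, "country": "AA"},
    "SITE_B": {"tier": 2, "country": "BB"},
}


def enrich(transfer, topology):
    """Return a copy of the transfer record with topology fields added."""
    enriched = dict(transfer)
    for side in ("src", "dst"):
        site = transfer.get(side + "_site")
        info = topology.get(site, {})  # unknown sites yield None values
        enriched[side + "_tier"] = info.get("tier")
        enriched[side + "_country"] = info.get("country")
    return enriched


record = {"src_site": "SITE_A", "dst_site": "SITE_B", "bytes": 1024}
enriched = enrich(record, TOPOLOGY)
# enriched now also carries src_tier, src_country, dst_tier, dst_country
```

Unknown sites are kept with empty attributes rather than dropped, so gaps in the topology stay visible downstream.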

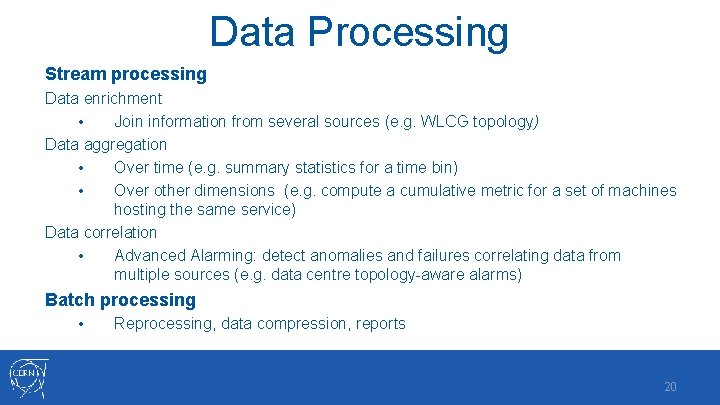

Data Processing
Stream processing
• Data enrichment: join information from several sources (e.g. WLCG topology)
• Data aggregation over time (e.g. summary statistics for a time bin)
• Data aggregation over other dimensions (e.g. compute a cumulative metric for a set of machines hosting the same service)
• Data correlation, advanced alarming: detect anomalies and failures by correlating data from multiple sources (e.g. data centre topology-aware alarms)
Batch processing
• Reprocessing, data compression, reports
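Aggregation over time, as listed above, means grouping samples into fixed time bins and computing summary statistics per bin. The sketch below shows the idea in plain Python. The input format (pairs of epoch seconds and a value) and the 60 s default bin are assumptions, and a production job would more likely express this with Spark.

```python
from collections import defaultdict


def aggregate_by_time_bin(samples, bin_seconds=60):
    """Group (epoch_seconds, value) samples into bins and summarise each bin."""
    bins = defaultdict(list)
    for ts, value in samples:
        bin_start = ts - ts % bin_seconds  # floor to the start of the bin
        bins[bin_start].append(value)
    return {
        start: {
            "count": len(values),
            "min": min(values),
            "max": max(values),
            "mean": sum(values) / len(values),
        }
        for start, values in sorted(bins.items())
    }


summary = aggregate_by_time_bin([(0, 1.0), (30, 3.0), (60, 5.0)])
# Two bins: one starting at 0 s with two samples, one at 60 s with one sample.
```

Aggregating over another dimension, such as all machines hosting the same service, follows the same pattern with the bin key replaced by the service name.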

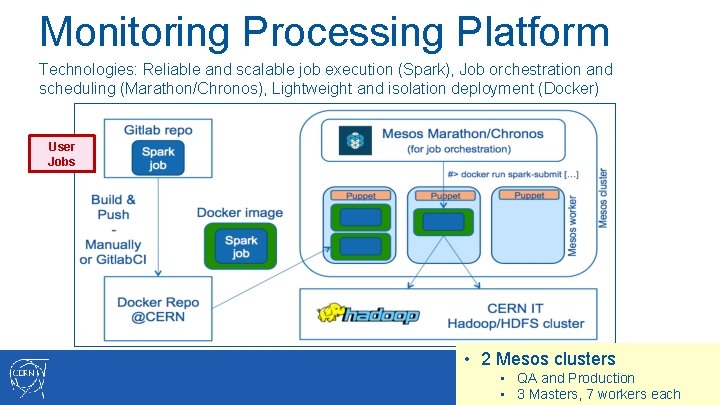

Monitoring Processing Platform
Technologies
• Reliable and scalable job execution (Spark)
• Job orchestration and scheduling (Marathon/Chronos)
• Lightweight and isolated deployment (Docker)
User Jobs
2 Mesos clusters
• QA and Production
• 3 masters, 7 workers each

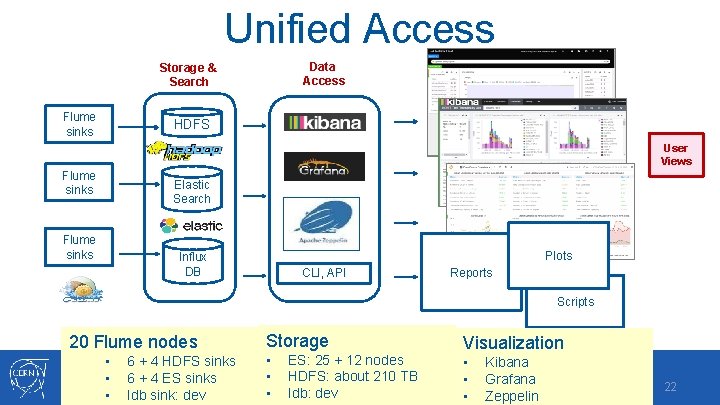

Unified Access
Storage & Search
• HDFS (Flume sinks)
• Elasticsearch (Flume sinks)
• InfluxDB (Flume sinks)
Data Access
• User Views, Plots, Reports, Scripts
• CLI, API
20 Flume nodes
• 6 + 4 HDFS sinks
• 6 + 4 ES sinks
• InfluxDB sink: dev
Storage
• ES: 25 + 12 nodes
• HDFS: about 210 TB
• InfluxDB: dev
Visualization
• Kibana
• Grafana
• Zeppelin

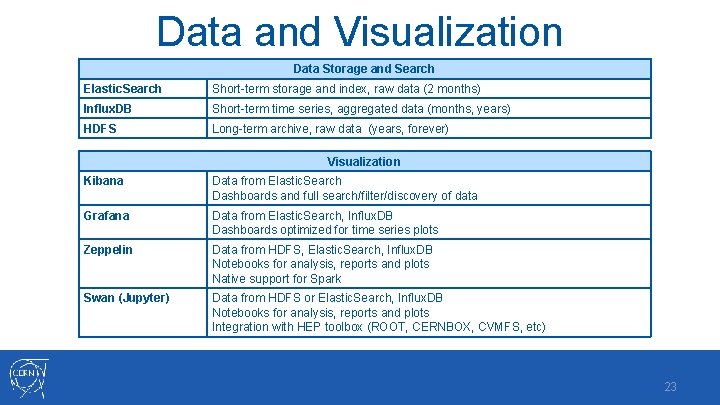

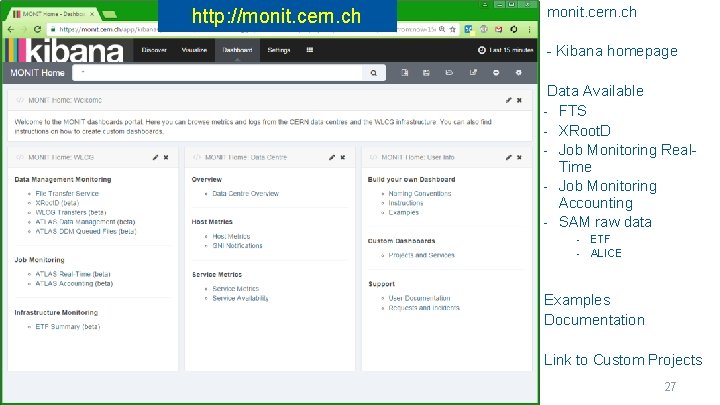

Data and Visualization
Data Storage and Search
• Elasticsearch: short-term storage and index, raw data (2 months)
• InfluxDB: short-term time series, aggregated data (months, years)
• HDFS: long-term archive, raw data (years, forever)
Visualization
• Kibana: data from Elasticsearch; dashboards and full search/filter/discovery of data
• Grafana: data from Elasticsearch, InfluxDB; dashboards optimized for time series plots
• Zeppelin: data from HDFS, Elasticsearch, InfluxDB; notebooks for analysis, reports and plots; native support for Spark
• Swan (Jupyter): data from HDFS or Elasticsearch, InfluxDB; notebooks for analysis, reports and plots; integration with HEP toolbox (ROOT, CERNBox, CVMFS, etc.)

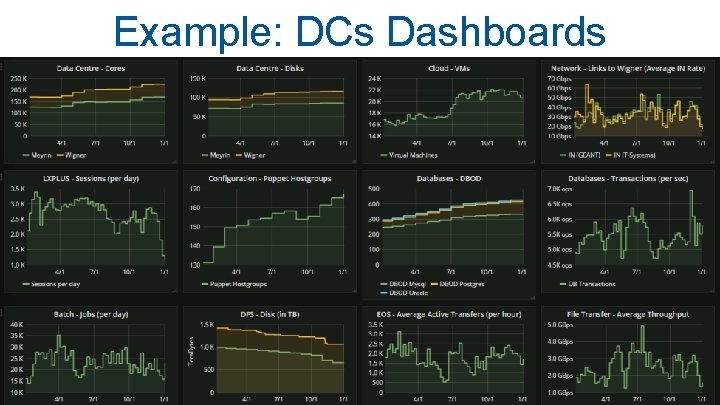

Example: DCs Dashboards

Example: Grid Dashboard

Examples
• Kibana
• Grafana
• Zeppelin

http://monit.cern.ch - Kibana homepage
Data Available
- FTS
- XRootD
- Job Monitoring RealTime
- Job Monitoring Accounting
- SAM raw data
- ETF ALICE
Examples
Documentation
Link to Custom Projects

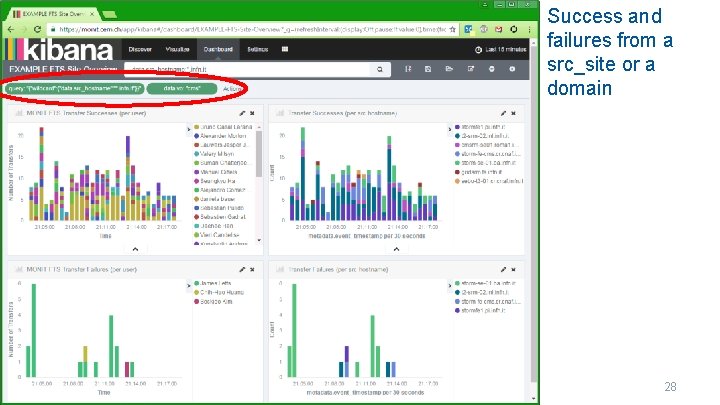

Success and failures from a src_site or a domain

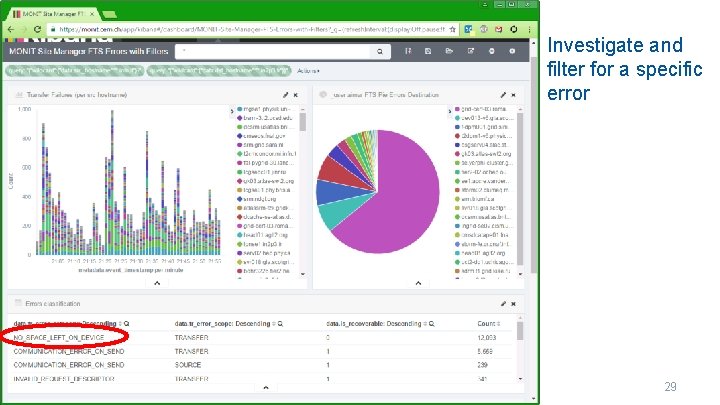

Investigate and filter for a specific error

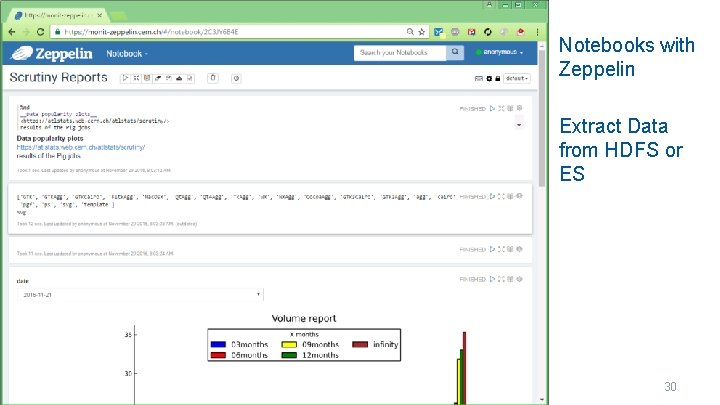

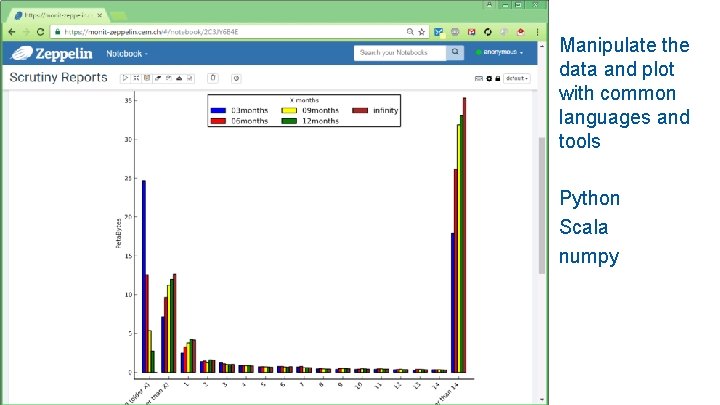

Notebooks with Zeppelin
Extract data from HDFS or ES

Manipulate the data and plot with common languages and tools: Python, Scala, numpy

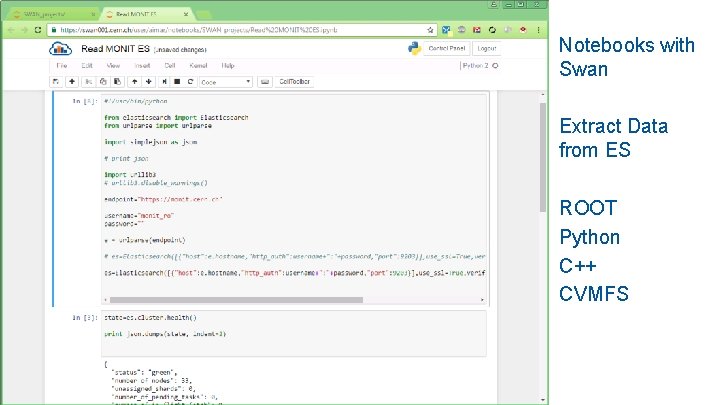

Notebooks with Swan
Extract data from ES
ROOT, Python, C++, CVMFS

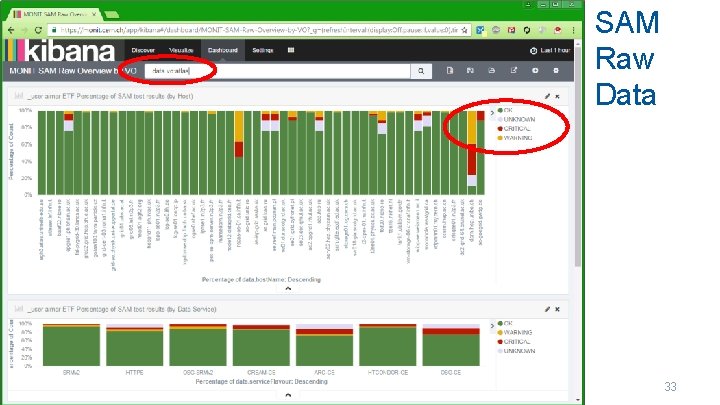

SAM Raw Data

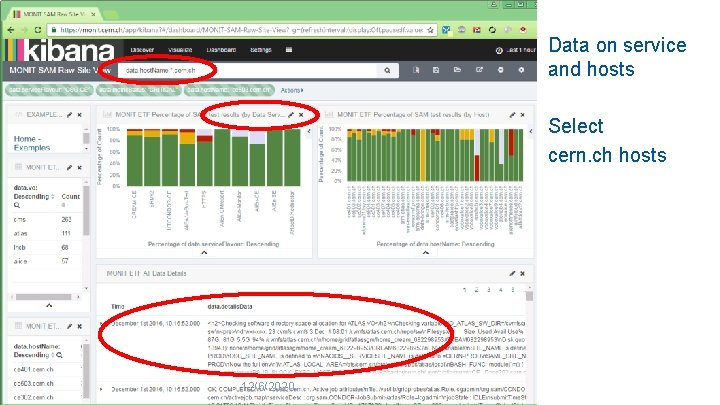

Data on service and hosts
Select cern.ch hosts

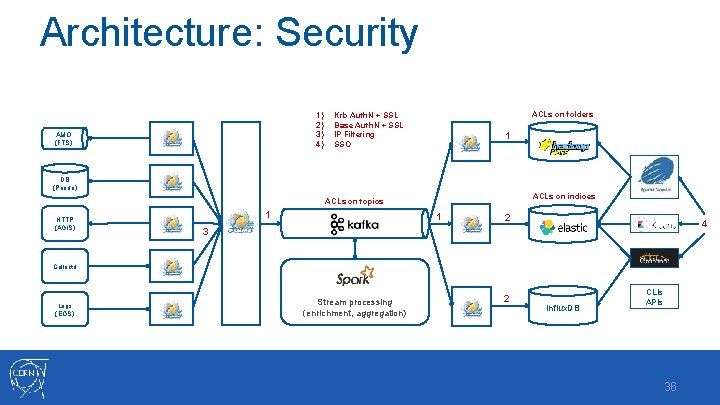

Architecture: Security
Mechanisms (numbered 1 to 4 in the diagram)
• Krb AuthN + SSL
• Base AuthN + SSL
• IP Filtering
• SSO
Access control
• ACLs on folders
• ACLs on indices
• ACLs on topics
Components shown
• AMQ (FTS), DB (Panda), HTTP (AGIS), Collectd, Logs (EOS)
• Stream processing (enrichment, aggregation)
• InfluxDB
• CLIs, APIs

- Slides: 36