HDFS on Kubernetes: Deep Dive on Security and Locality
Kimoon Kim, Pepperdata
Ilan Filonenko, Bloomberg LP

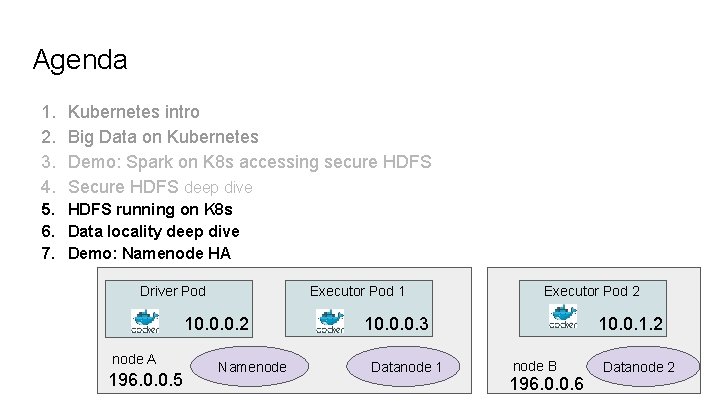

Agenda
1. Kubernetes intro
2. Big Data on Kubernetes
3. Demo: Spark on K8s accessing secure HDFS
4. Secure HDFS deep dive
5. HDFS running on K8s
6. Data locality deep dive
7. Demo: Namenode HA

Kubernetes
● New open-source cluster manager: github.com/kubernetes
● Runs programs in Linux containers.
● 1600+ contributors and 60,000+ commits.
(Diagram labels: app, app, libs, kernel.)

“My app was running fine until someone installed their software” DON’T TOUCH MY STUFF

More isolation is good
Kubernetes provides each program with:
● a lightweight virtual file system -- Docker image
  ○ an independent set of S/W packages
● a virtual network interface
  ○ a unique virtual IP address
  ○ an entire range of ports

Other isolation layers
● Separate process ID space
● Max memory limit
● CPU share throttling
● Mountable volumes
  ○ Config files -- ConfigMaps
  ○ Credentials -- Secrets
  ○ Local storage -- EmptyDir, HostPath
  ○ Network storage -- PersistentVolumes

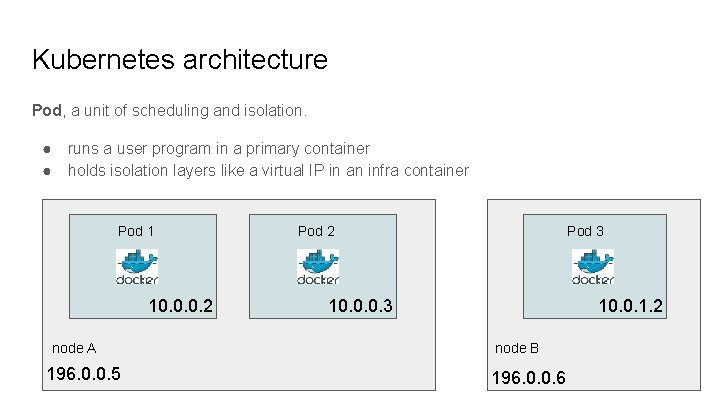

Kubernetes architecture
Pod, a unit of scheduling and isolation:
● runs a user program in a primary container
● holds isolation layers like a virtual IP in an infra container
(Diagram: Pod 1 at 10.0.0.2, Pod 2 at 10.0.0.3, and Pod 3 at 10.0.1.2, running on node A at 196.0.0.5 and node B at 196.0.0.6.)

Big Data on Kubernetes
github.com/apache-spark-on-k8s
● Bloomberg, Google, Haiwen, Hyperpilot, Intel, Palantir, Pepperdata, Red Hat, and growing
● Patching up Spark Driver and Executor code to work on Kubernetes.
● Upstreaming. Part of Spark 2.3: “Spark release 2.3.0. … Major features: Spark on Kubernetes: [SPARK-18278] A new kubernetes scheduler backend that supports native submission of spark jobs to a cluster managed by kubernetes.”
Related talk: spark-summit.org/2017/events/apache-spark-on-kubernetes/

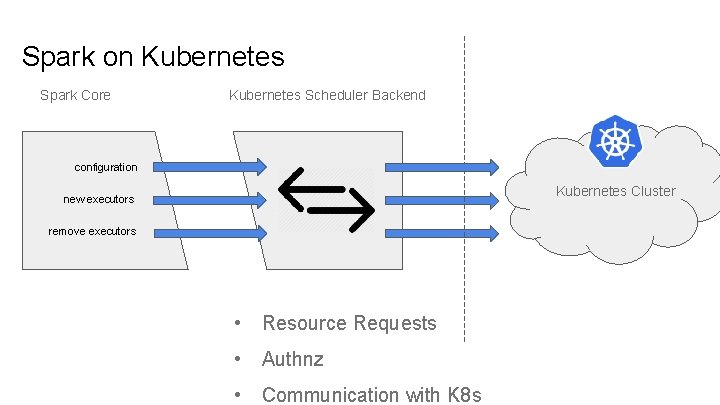

Spark on Kubernetes
(Diagram: Spark Core, the Kubernetes Scheduler Backend, and the Kubernetes Cluster, with arrows labeled "configuration", "new executors", and "remove executors".)
• Resource Requests
• Authnz
• Communication with K8s

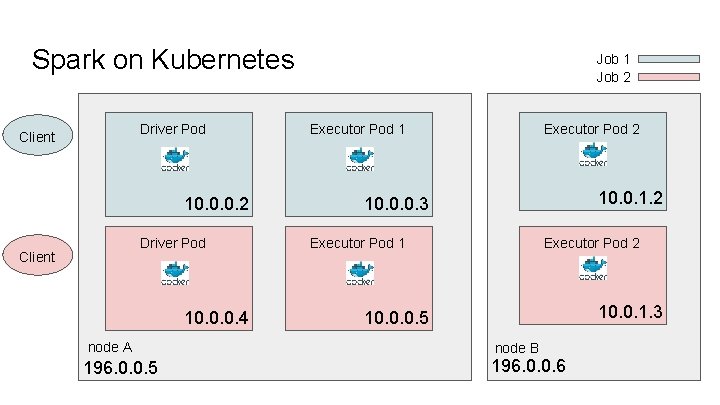

Spark on Kubernetes
(Diagram: Job 1 and Job 2, each with a client, a driver pod, and executor pods 1 and 2, on node A at 196.0.0.5 and node B at 196.0.0.6. Driver pods are at 10.0.0.2 and 10.0.0.4; executor pods are at 10.0.1.2, 10.0.0.3, 10.0.1.3, and 10.0.0.5.)

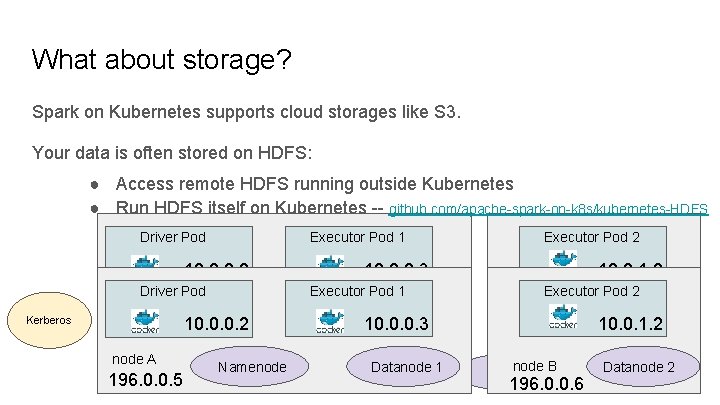

What about storage?
Spark on Kubernetes supports cloud storage such as S3.
Your data is often stored on HDFS:
● Access remote HDFS running outside Kubernetes
● Run HDFS itself on Kubernetes -- github.com/apache-spark-on-k8s/kubernetes-HDFS
(Two diagrams, one per option: a driver pod at 10.0.0.2 and executor pods at 10.0.0.3 and 10.0.1.2 on node A at 196.0.0.5 and node B at 196.0.0.6, alongside Kerberos, a namenode, and datanodes 1 and 2.)

Demo: Spark on K8s Accessing Secure HDFS
Running a Spark job on Kubernetes accessing secure HDFS
https://github.com/ifilonenko/secure-hdfs-test

Security deep dive ● Kerberos tickets ● HDFS tokens ● Long running jobs ● Access Control of Secrets

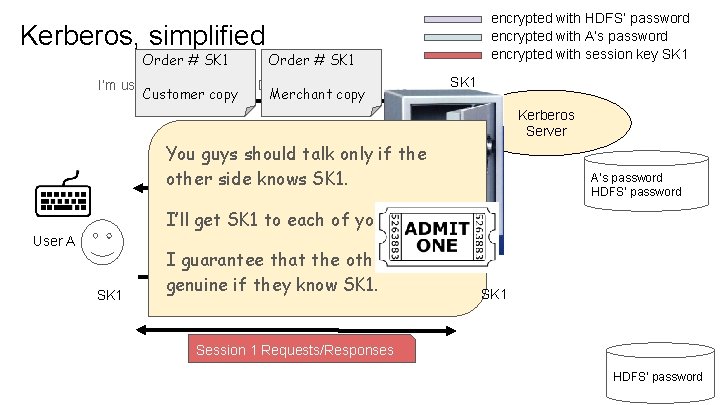

Kerberos, simplified
● User A to the Kerberos server: "I’m user A. May I talk to HDFS?"
● Kerberos server: "You guys should talk only if the other side knows SK1. I’ll get SK1 to each of you secretly. I guarantee that the other side is genuine if they know SK1."
● The Kerberos server knows A’s password and HDFS’ password, and issues session key SK1 in two copies, like the two copies of an order (Order # SK1):
  ○ Customer copy: the SK1 copy for User A, encrypted with A’s password
  ○ Merchant copy: the SK1 copy for HDFS, encrypted with HDFS’ password. This is the ticket to HDFS.
● Session 1: requests/responses between User A and HDFS, encrypted with session key SK1

HDFS Delegation Token
Kerberos ticket: no good for executors on cluster nodes.
● Stamped with the client IP.
Give tokens to driver and executors instead.
● Issued by namenode only if the client has a valid Kerberos ticket.
● No client IP stamped.
● Permit for driver and executors to use HDFS on your behalf across all cluster nodes.
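
The difference between the two credentials can be sketched as a toy model. This is not HDFS or Kerberos code: the class names, function names, and the client IP 196.0.0.9 are hypothetical, chosen only to mirror the bullets above (a ticket is tied to one client IP, a token is issued only against a valid ticket and carries no IP).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class KerberosTicket:
    user: str
    client_ip: str      # the ticket is stamped with the client IP
    valid: bool = True


@dataclass(frozen=True)
class DelegationToken:
    owner: str          # note: no client IP is recorded


def issue_delegation_token(ticket: Optional[KerberosTicket],
                           request_ip: str) -> DelegationToken:
    """Toy namenode: issue a token only to a client holding a valid ticket."""
    if ticket is None or not ticket.valid or ticket.client_ip != request_ip:
        raise PermissionError("a valid Kerberos ticket is required")
    return DelegationToken(owner=ticket.user)


def ticket_usable_from(ticket: KerberosTicket, ip: str) -> bool:
    """A ticket only works from the IP it was stamped with."""
    return ticket.valid and ticket.client_ip == ip


def token_usable_from(token: DelegationToken, ip: str) -> bool:
    """A token works from any cluster node, on behalf of its owner."""
    return bool(token.owner)


if __name__ == "__main__":
    ticket = KerberosTicket(user="userA", client_ip="196.0.0.9")
    token = issue_delegation_token(ticket, request_ip="196.0.0.9")
    for pod_ip in ("10.0.0.2", "10.0.0.3", "10.0.1.2"):
        print(pod_ip, ticket_usable_from(ticket, pod_ip),
              token_usable_from(token, pod_ip))
```

The model leaves out expiry and renewal, which real delegation tokens have.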

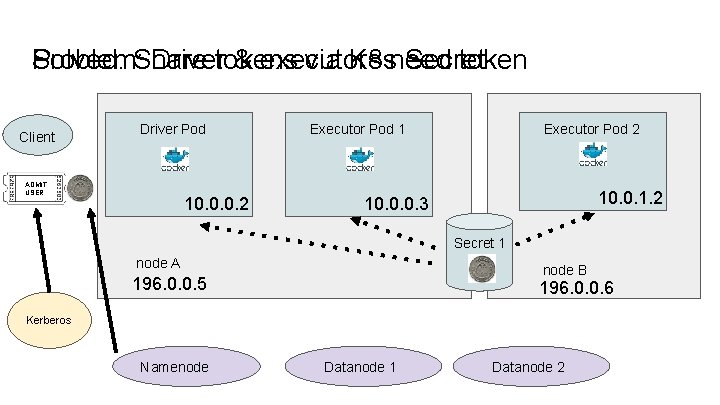

Problem: Driver & executors need token
Solved: Share tokens via K8s Secret
(Diagram: Client, Driver Pod at 10.0.0.2, Executor Pods 1 and 2 at 10.0.1.2 and 10.0.0.3, Secret 1 holding an "ADMIT USER" token, node A at 196.0.0.5, node B at 196.0.0.6, Kerberos, Namenode, Datanode 1, Datanode 2.)
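
As a rough illustration of sharing a token through a Secret, the sketch below builds a Kubernetes Secret manifest and the matching pod volume as plain Python dictionaries. The manifest fields (`apiVersion`, `kind`, `data`, `secretName`, `mountPath`) are standard Kubernetes ones; the secret name, the `hadoop-token-file` key, the mount path, and the token bytes are assumptions made up for this example, not the names Spark on Kubernetes actually uses.

```python
import base64
import json


def build_token_secret(name: str, namespace: str, token_bytes: bytes) -> dict:
    """Build a Secret manifest; Secret `data` values must be base64 strings."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # Hypothetical key name for the serialized delegation token.
        "data": {
            "hadoop-token-file": base64.b64encode(token_bytes).decode("ascii")
        },
    }


def token_volume(secret_name: str) -> tuple:
    """Return the (volume, volumeMount) pair a driver or executor pod would use."""
    volume = {"name": "hadoop-token", "secret": {"secretName": secret_name}}
    mount = {
        "name": "hadoop-token",
        "mountPath": "/mnt/secrets/hadoop",   # hypothetical mount path
        "readOnly": True,
    }
    return volume, mount


if __name__ == "__main__":
    secret = build_token_secret("secret-1", "default", b"opaque-token-bytes")
    volume, mount = token_volume("secret-1")
    print(json.dumps(secret, indent=2))
    print(json.dumps({"volumes": [volume], "volumeMounts": [mount]}, indent=2))
```

Mounting the same Secret into the driver pod and every executor pod gives each of them the token as a file, without any of them needing a Kerberos ticket.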

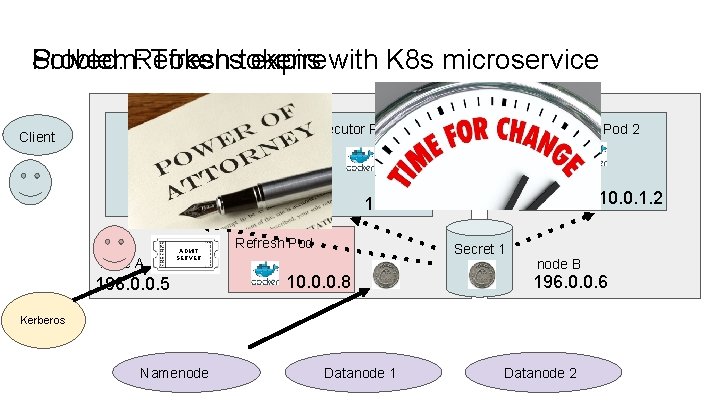

Problem: Tokens expire
Solved: Refresh tokens with K8s microservice
(Diagram: Client, Driver Pod at 10.0.0.2, Executor Pods 1 and 2 at 10.0.1.2 and 10.0.0.3, Refresh Pod at 10.0.0.8, Secret 1 holding an "ADMIT SERVER" token, node A at 196.0.0.5, node B at 196.0.0.6, Kerberos, Namenode, Datanode 1, Datanode 2.)

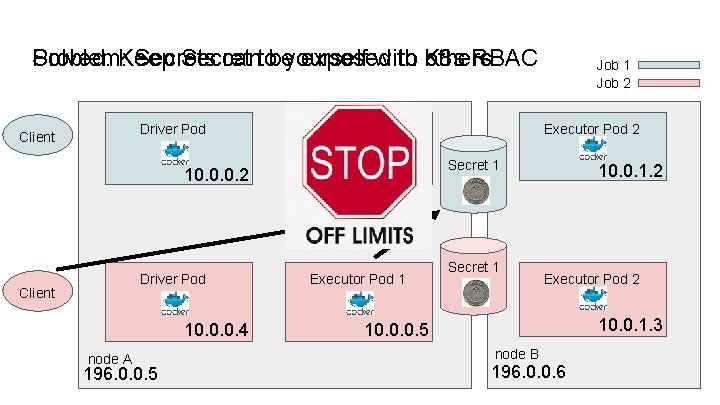

Problem: Secrets can be exposed to others
Solved: Keep Secret to yourself with K8s RBAC
(Diagram: two jobs, each with a client, a driver pod, and executor pods 1 and 2, on node A at 196.0.0.5 and node B at 196.0.0.6. Driver pods are at 10.0.0.2 and 10.0.0.4; executor pods are at 10.0.0.3, 10.0.1.2, 10.0.1.3, and 10.0.0.5. Secret 1 belongs to one job; the other is labeled Job 2.)

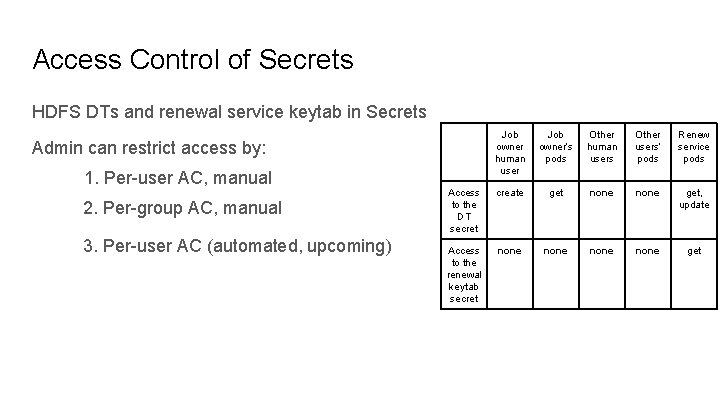

Access Control of Secrets
HDFS DTs and renewal service keytab in Secrets

| Principal | Access to the DT secret | Access to the renewal keytab secret |
| --- | --- | --- |
| Job owner human user | create | none |
| Job owner’s pods | get | none |
| Other human users | none | none |
| Other users’ pods | none | none |
| Renew service pods | get, update | get |

Admin can restrict access by:
1. Per-user AC, manual
2. Per-group AC, manual
3. Per-user AC (automated, upcoming)
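
One way to read the access matrix is as data that an admin turns into RBAC rules. The sketch below is illustrative only: the principal and secret identifiers are made up, and the values assume that "none" covers every principal the slide does not list explicitly. The rule shape (`apiGroups`, `resources`, `resourceNames`, `verbs`) is the standard one for a Kubernetes RBAC Role.

```python
# Verbs each principal may use on each secret (assumed reading of the slide).
ACCESS = {
    "dt-secret": {
        "job-owner-user": {"create"},
        "job-owner-pods": {"get"},
        "other-users": set(),
        "other-users-pods": set(),
        "renew-service-pods": {"get", "update"},
    },
    "renewal-keytab-secret": {
        "job-owner-user": set(),
        "job-owner-pods": set(),
        "other-users": set(),
        "other-users-pods": set(),
        "renew-service-pods": {"get"},
    },
}


def is_allowed(principal: str, verb: str, secret: str) -> bool:
    """Check a single request against the matrix; unknown principals get nothing."""
    return verb in ACCESS[secret].get(principal, set())


def role_rule(principal: str, secret: str) -> dict:
    """Build the RBAC rule granting `principal` its verbs on one named secret."""
    return {
        "apiGroups": [""],               # "" is the core API group
        "resources": ["secrets"],
        "resourceNames": [secret],
        "verbs": sorted(ACCESS[secret][principal]),
    }


if __name__ == "__main__":
    print(is_allowed("job-owner-pods", "get", "dt-secret"))
    print(is_allowed("other-users-pods", "get", "dt-secret"))
    print(role_rule("renew-service-pods", "dt-secret"))
```

Kubernetes cannot restrict `create` by `resourceNames`, so the job owner's `create` permission would need a rule without that field.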

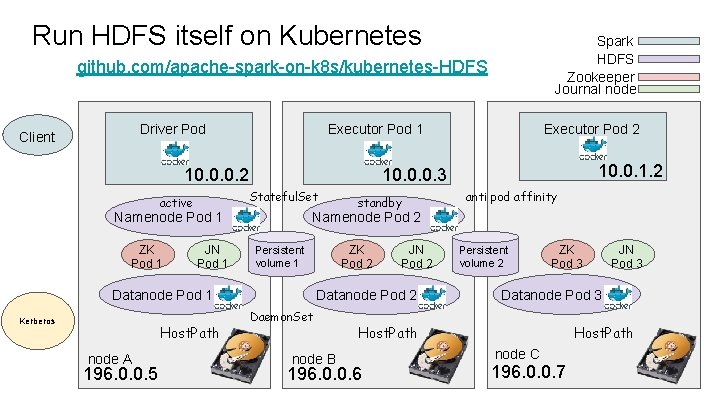

Run HDFS itself on Kubernetes
github.com/apache-spark-on-k8s/kubernetes-HDFS
(Diagram: node A at 196.0.0.5, node B at 196.0.0.6, and node C at 196.0.0.7 host four groups of pods, color-coded as Spark, HDFS, Zookeeper, and Journal node. Spark: Client, Driver Pod, Executor Pods 1 and 2, at 10.0.0.2, 10.0.0.3, and 10.0.1.2. HDFS: Namenode Pods 1 and 2, one active and one standby, with Persistent volumes 1 and 2; Datanode Pods 1 to 3. Zookeeper: ZK Pods 1 to 3. Journal node: JN Pods 1 to 3. Other labels: Kerberos, StatefulSet, DaemonSet, anti pod affinity, HostPath.)

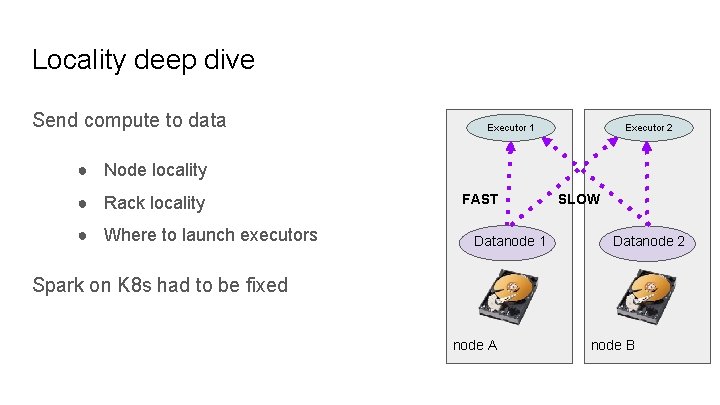

Locality deep dive
Send compute to data
● Node locality
● Rack locality
● Where to launch executors
Spark on K8s had to be fixed
(Diagram: Executor 1 reads from Datanode 1 on node A, labeled FAST; Executor 2 reads from Datanode 2 on node B, labeled SLOW.)

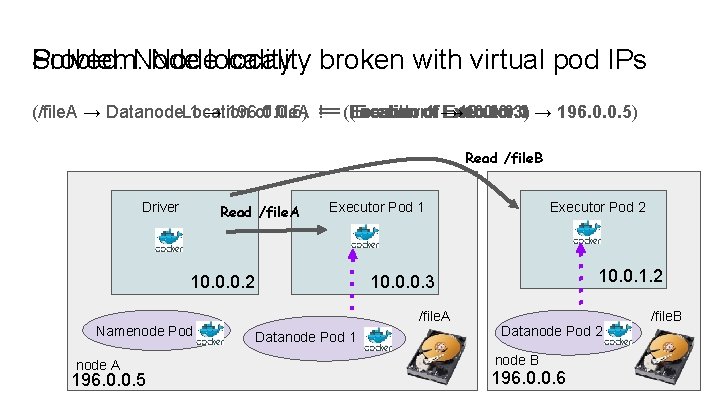

Problem: Node locality broken with virtual pod IPs
Location of fileA != Location of Executor 1
(/fileA → Datanode 1 → 196.0.0.5) != (Executor 1 → 10.0.0.3)
Solved: Node locality
Location of fileA == Location of Executor 1
(/fileA → Datanode 1 → 196.0.0.5) == (Executor 1 → 10.0.0.3 → 196.0.0.5)
(Diagram: Driver at 10.0.0.2 and Namenode Pod; Executor Pod 1 at 10.0.0.3 reads /fileA from Datanode Pod 1 on node A at 196.0.0.5; Executor Pod 2 at 10.0.1.2 reads /fileB from Datanode Pod 2 on node B at 196.0.0.6.)
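
The node locality comparison can be sketched in a few lines. This is a toy model, not Spark code: the lookup tables are hypothetical stand-ins for what the namenode and the Kubernetes API would report, the function names are invented, and the placement of /fileB and of the executor at 10.0.1.2 on node B is read off the diagram as an assumption.

```python
# Datanode address reported for each file (datanodes are seen at node IPs).
BLOCK_LOCATION = {"/fileA": "196.0.0.5", "/fileB": "196.0.0.6"}

# Which physical node hosts each executor pod IP (assumed lookup).
NODE_OF_POD = {"10.0.0.3": "196.0.0.5", "10.0.1.2": "196.0.0.6"}


def is_node_local_naive(path: str, executor_pod_ip: str) -> bool:
    """Broken check: compares a node IP against a virtual pod IP."""
    return BLOCK_LOCATION[path] == executor_pod_ip


def is_node_local_fixed(path: str, executor_pod_ip: str) -> bool:
    """Fixed check: resolve the pod IP to its node IP before comparing."""
    node_ip = NODE_OF_POD.get(executor_pod_ip)
    return node_ip is not None and BLOCK_LOCATION[path] == node_ip


if __name__ == "__main__":
    for path, pod_ip in (("/fileA", "10.0.0.3"), ("/fileA", "10.0.1.2")):
        print(path, pod_ip,
              is_node_local_naive(path, pod_ip),
              is_node_local_fixed(path, pod_ip))
```

The naive check never matches because pod IPs and node IPs come from different address ranges, so every read looks remote to the scheduler.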

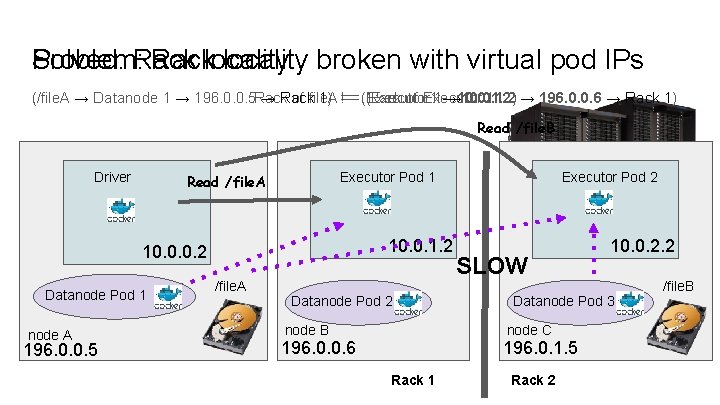

Problem: Rack locality broken with virtual pod IPs
Rack of fileA != Rack of Executor 1
(/fileA → Datanode 1 → 196.0.0.5 → Rack 1) != (Executor 1 → 10.0.1.2)
Solved: Rack locality
Rack of fileA == Rack of Executor 1
(/fileA → Datanode 1 → 196.0.0.5 → Rack 1) == (Executor 1 → 10.0.1.2 → 196.0.0.6 → Rack 1)
(Diagram: Driver at 10.0.0.2; Datanode Pod 1 with /fileA on node A at 196.0.0.5 and Datanode Pod 2 on node B at 196.0.0.6, both in Rack 1; Datanode Pod 3 with /fileB on node C at 196.0.1.5 in Rack 2; Executor Pod 1 at 10.0.1.2 reads /fileA; Executor Pod 2 at 10.0.2.2 reads /fileB; one path is labeled SLOW.)
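
The rack comparison follows the same chain with one more hop: pod IP, then node IP, then rack. A minimal sketch, assuming a static topology table; the table contents follow the slide's addresses, while the function names and the placement of the executor at 10.0.2.2 on node C are assumptions.

```python
from typing import Optional

# Executor pod IP -> node IP (assumed lookup).
NODE_OF_POD = {"10.0.1.2": "196.0.0.6", "10.0.2.2": "196.0.1.5"}

# Node IP -> rack, the kind of mapping a topology script would provide.
RACK_OF_NODE = {
    "196.0.0.5": "Rack 1",
    "196.0.0.6": "Rack 1",
    "196.0.1.5": "Rack 2",
}


def rack_of(ip: str) -> Optional[str]:
    """Resolve a pod IP or node IP to its rack; None if the node is unknown."""
    node_ip = NODE_OF_POD.get(ip, ip)   # node IPs pass through unchanged
    return RACK_OF_NODE.get(node_ip)


def is_rack_local(datanode_ip: str, executor_pod_ip: str) -> bool:
    """True when the datanode and the executor's node share a rack."""
    rack = rack_of(datanode_ip)
    return rack is not None and rack == rack_of(executor_pod_ip)


if __name__ == "__main__":
    print(rack_of("10.0.1.2"))
    print(is_rack_local("196.0.0.5", "10.0.1.2"))
    print(is_rack_local("196.0.0.5", "10.0.2.2"))
```

Looking up the raw pod IP in the rack table finds nothing, which is the broken case the slide describes.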

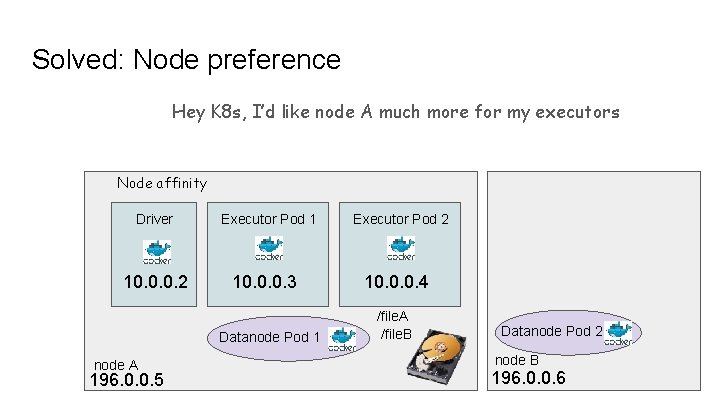

Solved: Node preference
"Hey K8s, I’d like node A much more for my executors"
Node affinity
(Diagram: Driver at 10.0.0.2; Executor Pod 1 at 10.0.0.3 and Executor Pod 2 at 10.0.0.4 next to Datanode Pod 1 on node A at 196.0.0.5; Datanode Pod 2 on node B at 196.0.0.6; files /fileA and /fileB.)
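
A soft preference like this maps onto the Kubernetes `preferredDuringSchedulingIgnoredDuringExecution` node affinity field, where each term carries a weight from 1 to 100. The sketch below builds that fragment of a pod spec as a Python dictionary. The host names `node-a` and `node-b`, the weights, and the helper name are hypothetical, and this is not necessarily how the Spark scheduler backend constructs its executor pods.

```python
import json


def preferred_node_affinity(weights: dict) -> dict:
    """Build a soft node-affinity block from {hostname: weight} preferences."""
    terms = []
    # Highest weight first, then by hostname for a stable order.
    for host, weight in sorted(weights.items(), key=lambda kv: (-kv[1], kv[0])):
        if not 1 <= weight <= 100:
            raise ValueError("Kubernetes requires weights in the range 1-100")
        terms.append({
            "weight": weight,
            "preference": {
                "matchExpressions": [{
                    "key": "kubernetes.io/hostname",   # well-known node label
                    "operator": "In",
                    "values": [host],
                }]
            },
        })
    return {
        "nodeAffinity": {"preferredDuringSchedulingIgnoredDuringExecution": terms}
    }


if __name__ == "__main__":
    # "I'd like node A much more": a high weight for node A, a low one for node B.
    affinity = preferred_node_affinity({"node-a": 100, "node-b": 10})
    print(json.dumps({"spec": {"affinity": affinity}}, indent=2))
```

Because the preference is soft, the scheduler can still place an executor elsewhere when node A has no room, so jobs are not blocked waiting for a data-local slot.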

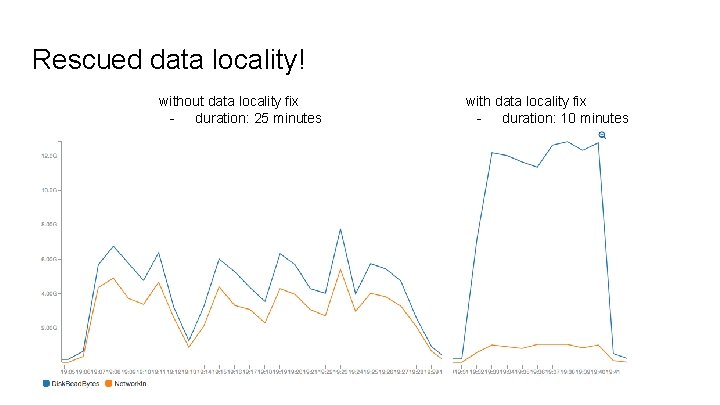

Rescued data locality!
● Without data locality fix: duration 25 minutes
● With data locality fix: duration 10 minutes

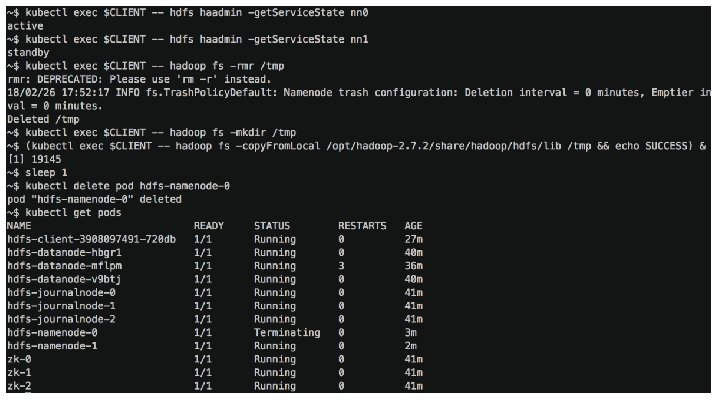

Demo: Namenode HA
Source code in github.com/apache-spark-on-k8s/kubernetes-HDFS/pull/33
- Launch HDFS pods (8x fast-forward)
- Copy data while killing a namenode (normal-speed, slow-motion)

Join us!
github.com/apache-spark-on-k8s
Kimoon Kim, Pepperdata
Ilan Filonenko, Bloomberg LP

Appendix

Hadoop Cluster Setup
Launching a single-node, pseudo-distributed, kerberized Hadoop cluster:
https://github.com/ifilonenko/hadoop-kerberos-helm

Access Control of Secrets
HDFS DTs and renewal service keytab in Secrets
Admin can restrict access by:
1. Creating a Namespace per user
2. Creating a Namespace per user-group
3. Creating a Namespace per user-group w/ prepopulated secrets per user
4. Creating a Namespace per user-group w/ authorization plugin
5. Creating a Namespace per user-group w/ a controller pod that creates label-based ACLs
