Kubernetes Modifications for GPUs Sanjeev Mehrotra

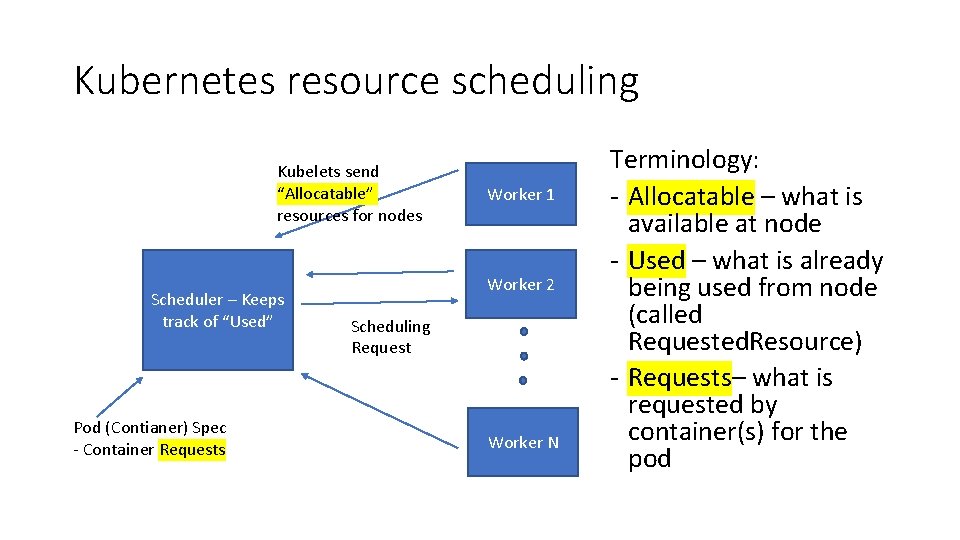

Kubernetes resource scheduling

Diagram: kubelets on Worker 1, Worker 2, ..., Worker N send “Allocatable” resources for their nodes to the scheduler. The scheduler keeps track of “Used” and handles each scheduling request, which carries the pod (container) spec with its container requests.

Terminology:
- Allocatable – what is available at the node
- Used – what is already being used from the node (called RequestedResource)
- Requests – what is requested by the container(s) for the pod

Resources
• All resources (allocatable, used, and requests) are represented as a “ResourceList”, which is simply a list of key-value pairs, e.g.

    memory: 64 GiB
    cpu: 8
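A minimal sketch of the idea, assuming quantities are plain integers. In Kubernetes itself a ResourceList maps resource names to structured quantity values; the `int64` values and the `Add` helper here are simplifications introduced only for illustration.

```go
package main

import "fmt"

// ResourceList is a simplified stand-in for the Kubernetes type:
// resource name -> quantity, stored as a plain integer (an assumption).
type ResourceList map[string]int64

// GiB is one gibibyte in bytes.
const GiB = int64(1) << 30

// Add is a hypothetical helper that returns the key-wise sum of two lists,
// e.g. to update "Used" once a pod's requests are placed on a node.
func Add(a, b ResourceList) ResourceList {
	sum := ResourceList{}
	for k, v := range a {
		sum[k] += v
	}
	for k, v := range b {
		sum[k] += v
	}
	return sum
}

func main() {
	allocatable := ResourceList{"memory": 64 * GiB, "cpu": 8}
	used := ResourceList{"memory": 16 * GiB, "cpu": 2}
	requests := ResourceList{"memory": 4 * GiB, "cpu": 1}

	used = Add(used, requests)
	fmt.Println(allocatable["cpu"], used["cpu"], used["memory"]/GiB) // 8 3 20
}
```

All three lists share one shape, which is what lets the scheduler compare them key by key.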

Simple scheduling
1. Find worker nodes that can “fit” a pod spec
   • plugin/pkg/scheduler/algorithm/predicates
2. Prioritize the list of nodes
   • plugin/pkg/scheduler/algorithm/priorities
3. Try to schedule the pod on a node – the node may have an additional admission policy, so the pod may fail
4. If it fails, try the next node on the list
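The four steps can be sketched as a filter, sort, and retry loop. This is an illustration only, not the scheduler's code: the `Node` type, the `AdmitsPods` flag (standing in for node-side admission policy), and the "most free CPU first" priority are all assumptions.

```go
package main

import (
	"errors"
	"fmt"
	"sort"
)

// Node is a hypothetical, simplified view of a worker node.
type Node struct {
	Name       string
	FreeCPU    int64 // allocatable minus used, in millicores
	AdmitsPods bool  // stands in for the node's own admission policy
}

// schedule returns the name of the first node, in priority order,
// that both fits the request and admits the pod.
func schedule(nodes []Node, requestCPU int64) (string, error) {
	// Step 1: keep only nodes that can fit the request (predicates).
	var candidates []Node
	for _, n := range nodes {
		if n.FreeCPU >= requestCPU {
			candidates = append(candidates, n)
		}
	}
	// Step 2: prioritize; here simply "most free CPU first".
	sort.SliceStable(candidates, func(i, j int) bool {
		return candidates[i].FreeCPU > candidates[j].FreeCPU
	})
	// Steps 3 and 4: try each node in order; move on if admission fails.
	for _, n := range candidates {
		if n.AdmitsPods {
			return n.Name, nil
		}
	}
	return "", errors.New("no node could run the pod")
}

func main() {
	nodes := []Node{
		{Name: "worker-1", FreeCPU: 500, AdmitsPods: true},
		{Name: "worker-2", FreeCPU: 4000, AdmitsPods: false},
		{Name: "worker-3", FreeCPU: 2000, AdmitsPods: true},
	}
	name, err := schedule(nodes, 1000)
	fmt.Println(name, err) // worker-3 <nil>
}
```

Here worker-1 is filtered out, worker-2 ranks first but rejects the pod, and worker-3 is chosen on the retry.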

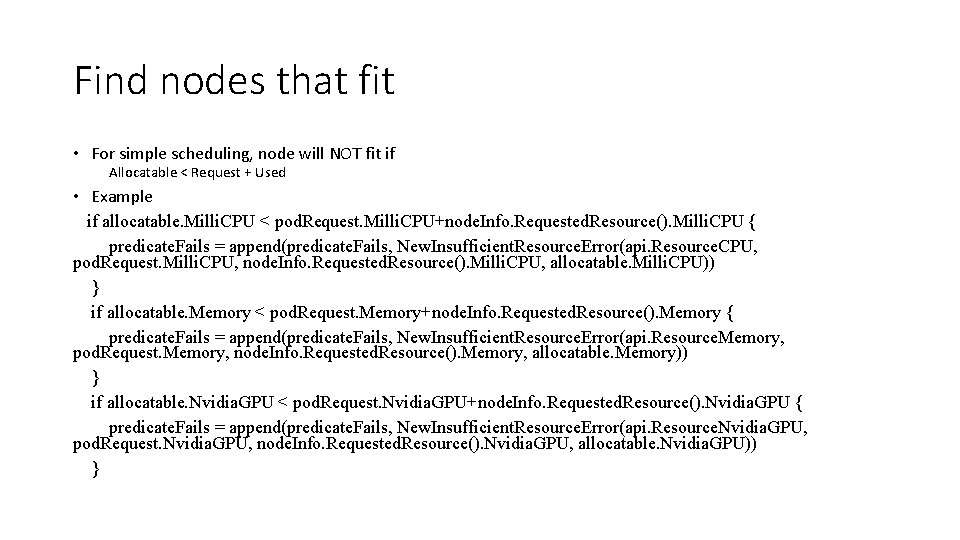

Find nodes that fit
• For simple scheduling, a node will NOT fit if Allocatable < Request + Used
• Example:

    // Each check records an error when allocatable < request + used.
    if allocatable.MilliCPU < podRequest.MilliCPU+nodeInfo.RequestedResource().MilliCPU {
        predicateFails = append(predicateFails, NewInsufficientResourceError(api.ResourceCPU, podRequest.MilliCPU, nodeInfo.RequestedResource().MilliCPU, allocatable.MilliCPU))
    }
    if allocatable.Memory < podRequest.Memory+nodeInfo.RequestedResource().Memory {
        predicateFails = append(predicateFails, NewInsufficientResourceError(api.ResourceMemory, podRequest.Memory, nodeInfo.RequestedResource().Memory, allocatable.Memory))
    }
    if allocatable.NvidiaGPU < podRequest.NvidiaGPU+nodeInfo.RequestedResource().NvidiaGPU {
        predicateFails = append(predicateFails, NewInsufficientResourceError(api.ResourceNvidiaGPU, podRequest.NvidiaGPU, nodeInfo.RequestedResource().NvidiaGPU, allocatable.NvidiaGPU))
    }
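The same rule can be written once over generic key-value lists instead of once per resource. The sketch below is a self-contained illustration under that assumption; `ResourceList` as a plain integer map and the `insufficient` helper are hypothetical names, not part of the scheduler.

```go
package main

import (
	"fmt"
	"sort"
)

// ResourceList is a simplified resource-name -> quantity map (an assumption).
type ResourceList map[string]int64

// insufficient returns the sorted names of all requested resources for which
// allocatable < request + used. An empty result means the pod fits.
func insufficient(allocatable, used, request ResourceList) []string {
	var failed []string
	for name, req := range request {
		if allocatable[name] < req+used[name] {
			failed = append(failed, name)
		}
	}
	sort.Strings(failed)
	return failed
}

func main() {
	allocatable := ResourceList{"cpu": 8000, "memory": 64 << 30, "nvidia-gpu": 4}
	used := ResourceList{"cpu": 6000, "memory": 16 << 30, "nvidia-gpu": 3}
	request := ResourceList{"cpu": 1000, "memory": 8 << 30, "nvidia-gpu": 2}

	fmt.Println(insufficient(allocatable, used, request)) // [nvidia-gpu]
}
```

This comparison only works because each key names a single pooled quantity per node.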

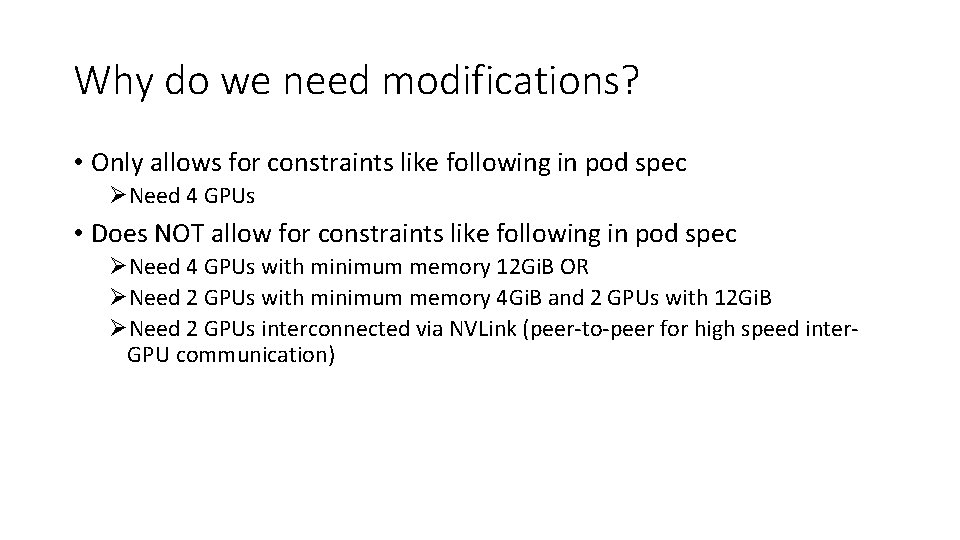

Why do we need modifications?
• Only allows for constraints like the following in the pod spec
  - Need 4 GPUs
• Does NOT allow for constraints like the following in the pod spec
  - Need 4 GPUs with minimum memory 12 GiB, OR
  - Need 2 GPUs with minimum memory 4 GiB and 2 GPUs with 12 GiB
  - Need 2 GPUs interconnected via NVLink (peer-to-peer for high-speed inter-GPU communication)

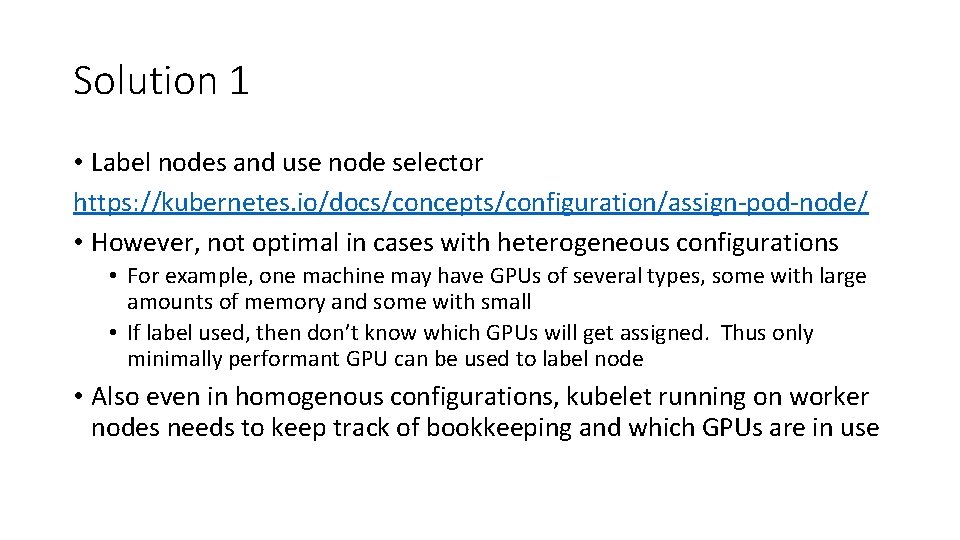

Solution 1
• Label nodes and use a node selector: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/
• However, this is not optimal for heterogeneous configurations
  - For example, one machine may have GPUs of several types, some with large amounts of memory and some with small
  - With a label, we don’t know which GPUs will get assigned, so the node can only be labeled according to its least capable GPU
• Also, even in homogeneous configurations, the kubelet running on each worker node has to do the bookkeeping of which GPUs are in use

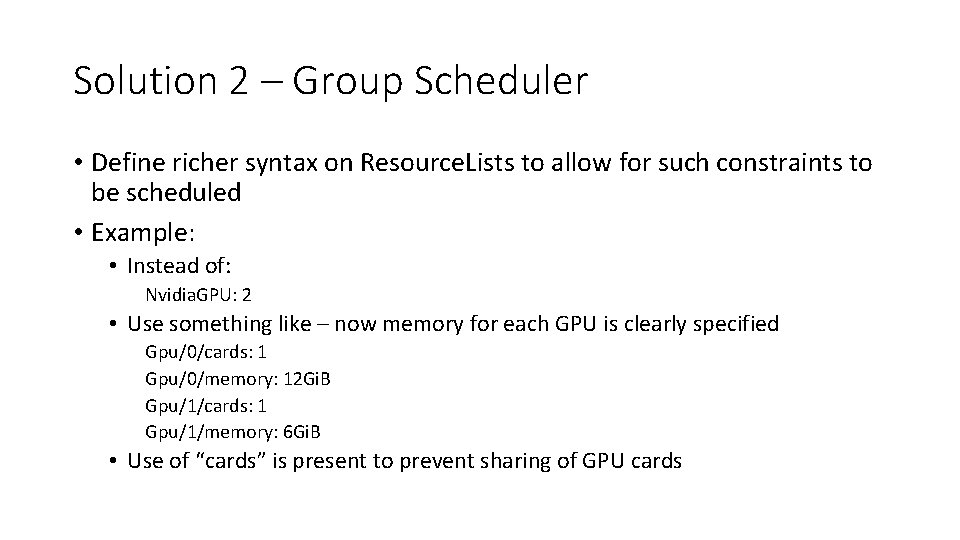

Solution 2 – Group Scheduler
• Define a richer syntax on ResourceLists to allow such constraints to be scheduled
• Example: instead of

    NvidiaGPU: 2

  use something like the following, where the memory for each GPU is clearly specified:

    Gpu/0/cards: 1
    Gpu/0/memory: 12 GiB
    Gpu/1/cards: 1
    Gpu/1/memory: 6 GiB

• “cards” is present to prevent sharing of GPU cards
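To illustrate how such path-style keys carry per-GPU information, here is a small sketch that groups a flat list by GPU index. It assumes keys of exactly the form `Gpu/<id>/<attribute>` as on the slide; the `perGPU` helper and the integer-valued `ResourceList` are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// ResourceList is a simplified resource-name -> quantity map (an assumption).
type ResourceList map[string]int64

// perGPU groups keys of the form "Gpu/<id>/<attribute>" by <id>.
// Keys with any other shape are ignored in this sketch.
func perGPU(list ResourceList) map[string]ResourceList {
	gpus := map[string]ResourceList{}
	for key, val := range list {
		parts := strings.Split(key, "/")
		if len(parts) != 3 || parts[0] != "Gpu" {
			continue
		}
		id, attr := parts[1], parts[2]
		if gpus[id] == nil {
			gpus[id] = ResourceList{}
		}
		gpus[id][attr] = val
	}
	return gpus
}

func main() {
	const GiB = int64(1) << 30
	request := ResourceList{
		"Gpu/0/cards": 1, "Gpu/0/memory": 12 * GiB,
		"Gpu/1/cards": 1, "Gpu/1/memory": 6 * GiB,
	}
	gpus := perGPU(request)
	fmt.Println(len(gpus), gpus["0"]["memory"]/GiB, gpus["1"]["memory"]/GiB) // 2 12 6
}
```

Because the list stays a flat key-value map, the richer syntax does not need a new wire format; only the interpretation of the keys changes.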

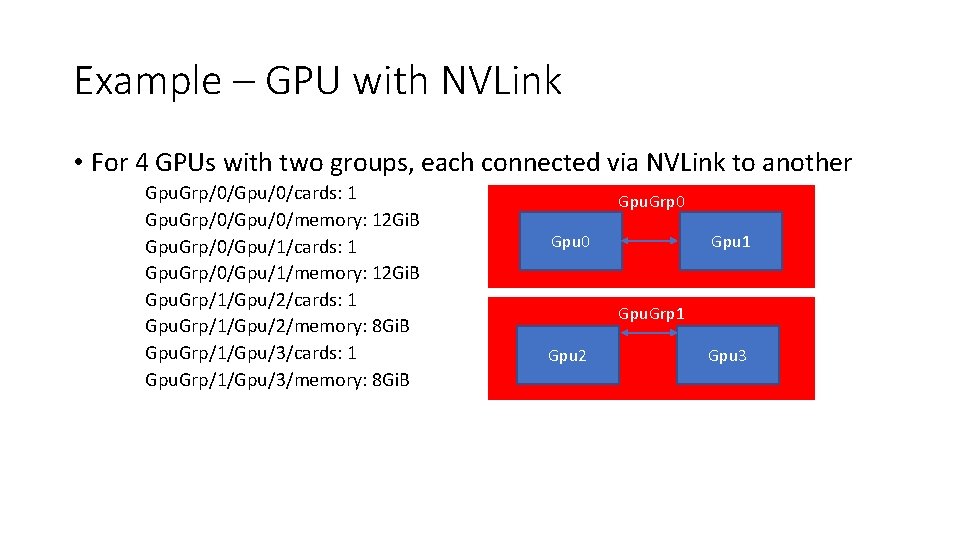

Example – GPU with NVLink
• For 4 GPUs in two groups, with the GPUs in each group connected to one another via NVLink:

    GpuGrp/0/Gpu/0/cards: 1
    GpuGrp/0/Gpu/0/memory: 12 GiB
    GpuGrp/0/Gpu/1/cards: 1
    GpuGrp/0/Gpu/1/memory: 12 GiB
    GpuGrp/1/Gpu/2/cards: 1
    GpuGrp/1/Gpu/2/memory: 8 GiB
    GpuGrp/1/Gpu/3/cards: 1
    GpuGrp/1/Gpu/3/memory: 8 GiB

Diagram: GpuGrp0 contains Gpu0 and Gpu1; GpuGrp1 contains Gpu2 and Gpu3.

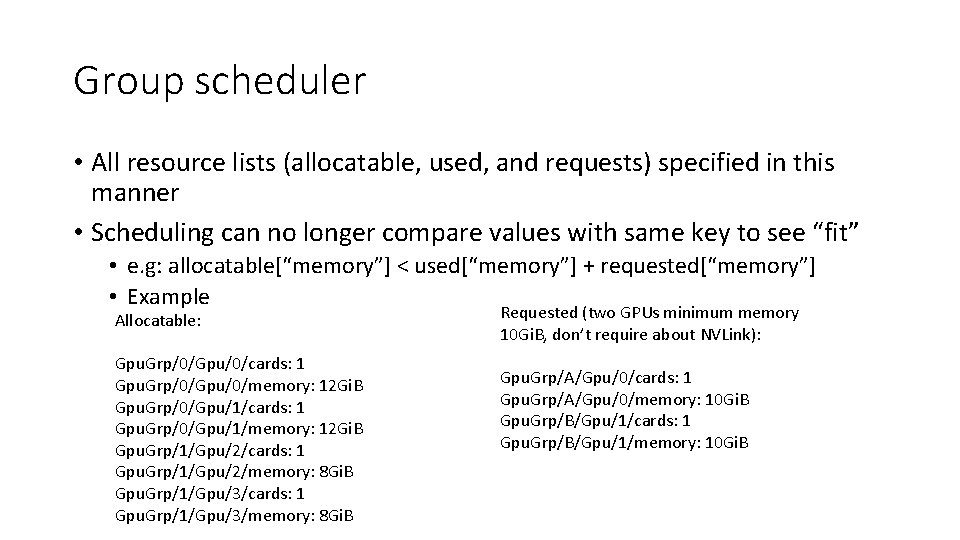

Group scheduler
• All resource lists (allocatable, used, and requests) are specified in this manner
• Scheduling can no longer compare values with the same key to check for “fit”
  - e.g. allocatable[“memory”] < used[“memory”] + requested[“memory”]
• Example

Allocatable:

    GpuGrp/0/Gpu/0/cards: 1
    GpuGrp/0/Gpu/0/memory: 12 GiB
    GpuGrp/0/Gpu/1/cards: 1
    GpuGrp/0/Gpu/1/memory: 12 GiB
    GpuGrp/1/Gpu/2/cards: 1
    GpuGrp/1/Gpu/2/memory: 8 GiB
    GpuGrp/1/Gpu/3/cards: 1
    GpuGrp/1/Gpu/3/memory: 8 GiB

Requested (two GPUs with minimum memory 10 GiB, NVLink not required):

    GpuGrp/A/Gpu/0/cards: 1
    GpuGrp/A/Gpu/0/memory: 10 GiB
    GpuGrp/B/Gpu/1/cards: 1
    GpuGrp/B/Gpu/1/memory: 10 GiB

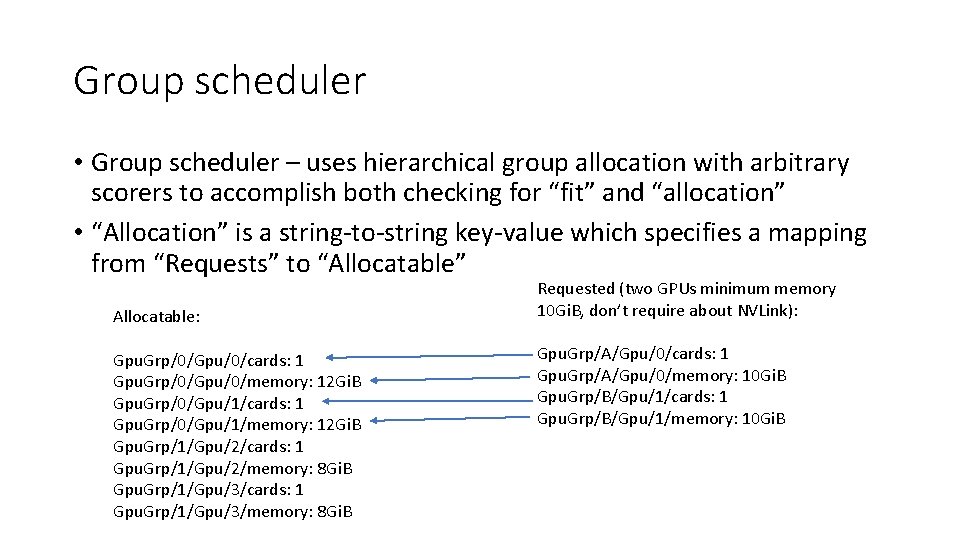

Group scheduler
• The group scheduler uses hierarchical group allocation with arbitrary scorers to accomplish both checking for “fit” and “allocation”
• “Allocation” is a string-to-string key-value map which specifies a mapping from “Requests” to “Allocatable”

Allocatable:

    GpuGrp/0/Gpu/0/cards: 1
    GpuGrp/0/Gpu/0/memory: 12 GiB
    GpuGrp/0/Gpu/1/cards: 1
    GpuGrp/0/Gpu/1/memory: 12 GiB
    GpuGrp/1/Gpu/2/cards: 1
    GpuGrp/1/Gpu/2/memory: 8 GiB
    GpuGrp/1/Gpu/3/cards: 1
    GpuGrp/1/Gpu/3/memory: 8 GiB

Requested (two GPUs with minimum memory 10 GiB, NVLink not required):

    GpuGrp/A/Gpu/0/cards: 1
    GpuGrp/A/Gpu/0/memory: 10 GiB
    GpuGrp/B/Gpu/1/cards: 1
    GpuGrp/B/Gpu/1/memory: 10 GiB
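For the flat case in this example (per-GPU memory only, no NVLink grouping), producing an allocation can be sketched as a simple greedy match. This is not the hierarchical, scorer-based allocator described above; the `GPU` type, the `allocate` function, and the use of key prefixes as map entries are assumptions made for illustration.

```go
package main

import (
	"fmt"
	"sort"
)

// GPU is one device or one requested device, identified by its key prefix,
// e.g. "GpuGrp/0/Gpu/0". Memory is in GiB.
type GPU struct {
	Prefix string
	Memory int64
}

// allocate maps each requested prefix to a free allocatable prefix with at
// least the requested memory. ok is false if any request cannot be satisfied.
func allocate(allocatable []GPU, inUse map[string]bool, requests []GPU) (map[string]string, bool) {
	free := []GPU{}
	for _, g := range allocatable {
		if !inUse[g.Prefix] {
			free = append(free, g)
		}
	}
	// Smallest GPUs first, so a request takes the smallest device that fits.
	sort.SliceStable(free, func(i, j int) bool { return free[i].Memory < free[j].Memory })
	// Largest requests first.
	reqs := append([]GPU(nil), requests...)
	sort.SliceStable(reqs, func(i, j int) bool { return reqs[i].Memory > reqs[j].Memory })

	allocateFrom := map[string]string{}
	taken := make([]bool, len(free))
	for _, r := range reqs {
		found := false
		for i, g := range free {
			if !taken[i] && g.Memory >= r.Memory {
				taken[i] = true
				allocateFrom[r.Prefix] = g.Prefix
				found = true
				break
			}
		}
		if !found {
			return nil, false
		}
	}
	return allocateFrom, true
}

func main() {
	allocatable := []GPU{
		{"GpuGrp/0/Gpu/0", 12}, {"GpuGrp/0/Gpu/1", 12},
		{"GpuGrp/1/Gpu/2", 8}, {"GpuGrp/1/Gpu/3", 8},
	}
	requests := []GPU{{"GpuGrp/A/Gpu/0", 10}, {"GpuGrp/B/Gpu/1", 10}}
	m, ok := allocate(allocatable, map[string]bool{}, requests)
	fmt.Println(ok, m["GpuGrp/A/Gpu/0"], m["GpuGrp/B/Gpu/1"])
	// true GpuGrp/0/Gpu/0 GpuGrp/0/Gpu/1
}
```

The returned map plays both roles named on the slide: a non-empty result means the pod fits, and its entries record which allocatable GPU backs each request.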

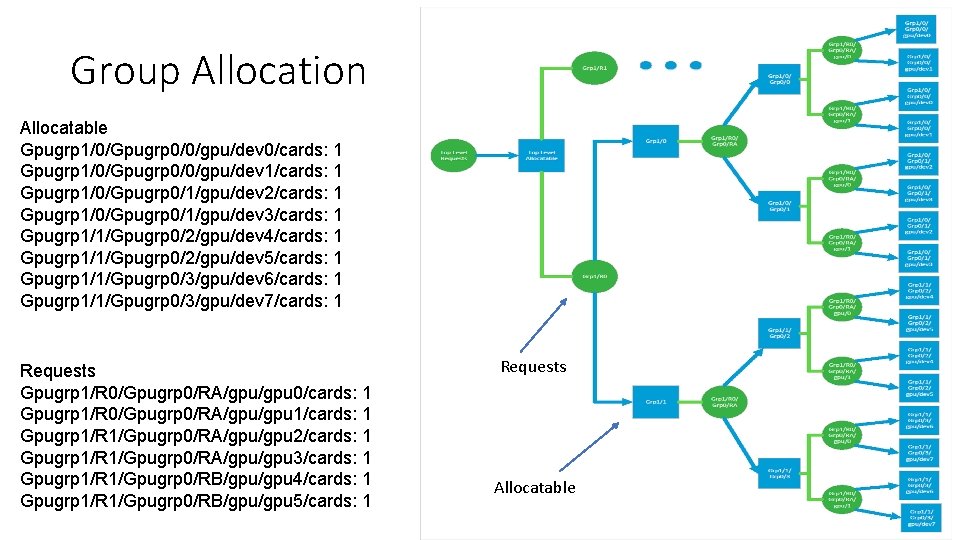

Group Allocation

Allocatable:

    Gpugrp1/0/Gpugrp0/0/gpu/dev0/cards: 1
    Gpugrp1/0/Gpugrp0/0/gpu/dev1/cards: 1
    Gpugrp1/0/Gpugrp0/1/gpu/dev2/cards: 1
    Gpugrp1/0/Gpugrp0/1/gpu/dev3/cards: 1
    Gpugrp1/1/Gpugrp0/2/gpu/dev4/cards: 1
    Gpugrp1/1/Gpugrp0/2/gpu/dev5/cards: 1
    Gpugrp1/1/Gpugrp0/3/gpu/dev6/cards: 1
    Gpugrp1/1/Gpugrp0/3/gpu/dev7/cards: 1

Requests:

    Gpugrp1/R0/Gpugrp0/RA/gpu0/cards: 1
    Gpugrp1/R0/Gpugrp0/RA/gpu1/cards: 1
    Gpugrp1/R1/Gpugrp0/RA/gpu2/cards: 1
    Gpugrp1/R1/Gpugrp0/RA/gpu3/cards: 1
    Gpugrp1/R1/Gpugrp0/RB/gpu4/cards: 1
    Gpugrp1/R1/Gpugrp0/RB/gpu5/cards: 1

Diagram: mapping from Requests to Allocatable.

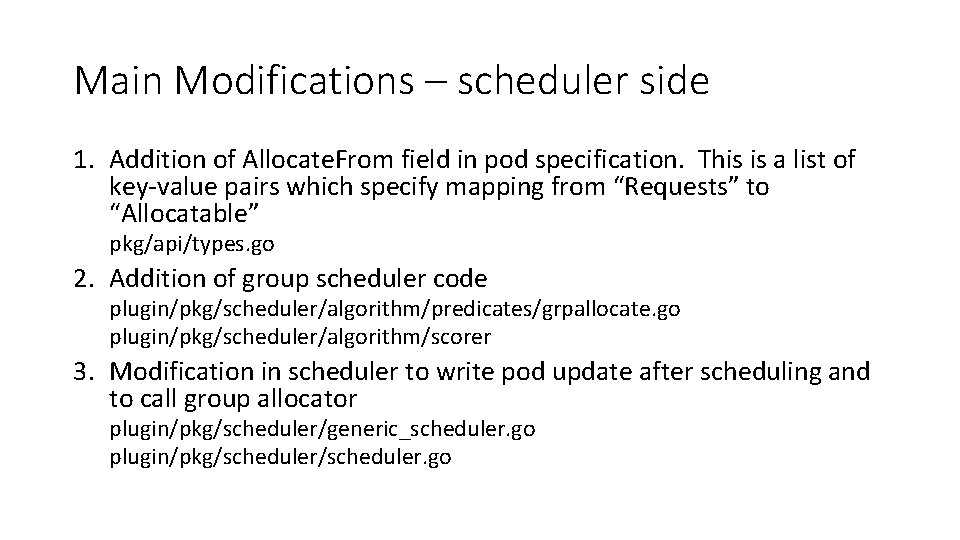

Main Modifications – scheduler side
1. Addition of an AllocateFrom field in the pod specification. This is a list of key-value pairs which specify the mapping from “Requests” to “Allocatable”
   • pkg/api/types.go
2. Addition of group scheduler code
   • plugin/pkg/scheduler/algorithm/predicates/grpallocate.go
   • plugin/pkg/scheduler/algorithm/scorer
3. Modification in the scheduler to write a pod update after scheduling and to call the group allocator
   • plugin/pkg/scheduler/generic_scheduler.go
   • plugin/pkg/scheduler.go

Kubelet modifications
• Existing multi-GPU code makes the kubelet do the work of keeping track of which GPUs are available, and uses /dev/nvidia* to see the number of devices; both of these are hacks
• With the addition of the “AllocateFrom” field, the scheduler decides which GPUs to use and keeps track of which ones are in use

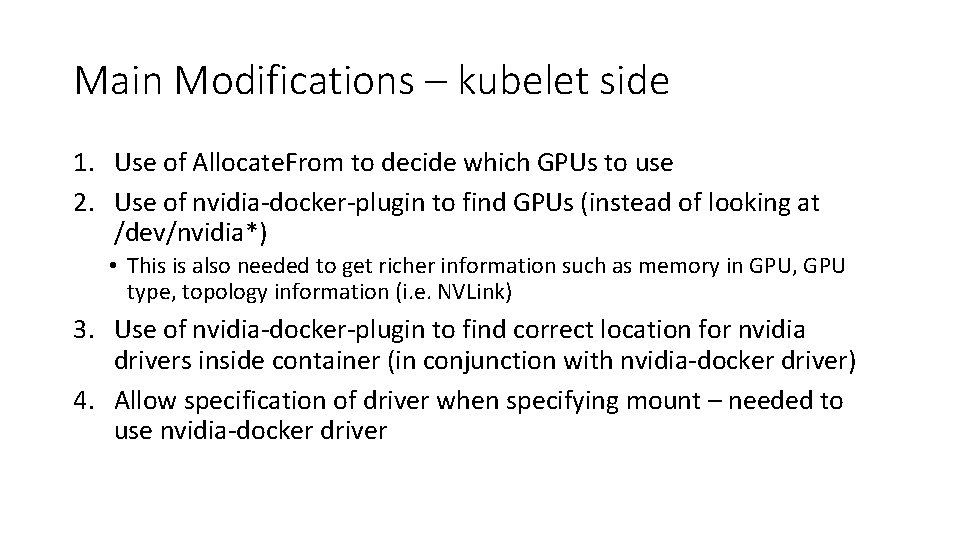

Main Modifications – kubelet side
1. Use of AllocateFrom to decide which GPUs to use
2. Use of nvidia-docker-plugin to find GPUs (instead of looking at /dev/nvidia*)
   • This is also needed to get richer information such as GPU memory, GPU type, and topology information (i.e. NVLink)
3. Use of nvidia-docker-plugin to find the correct location for the nvidia drivers inside the container (in conjunction with the nvidia-docker driver)
4. Allow specification of a driver when specifying a mount – needed to use the nvidia-docker driver
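As a rough sketch of the first item, the kubelet side could read the device names directly out of the allocation instead of tracking GPU usage itself. The key layout below is borrowed from the Group Allocation slide and the `devicesFor` helper is hypothetical; the actual format of AllocateFrom entries is an assumption here.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// devicesFor returns the sorted device names referenced by the allocatable
// side of an AllocateFrom-style map. It assumes allocatable keys contain the
// segment sequence "gpu/<device>/cards".
func devicesFor(allocateFrom map[string]string) []string {
	seen := map[string]bool{}
	for _, allocKey := range allocateFrom {
		parts := strings.Split(allocKey, "/")
		for i := 0; i+2 < len(parts); i++ {
			if parts[i] == "gpu" && parts[i+2] == "cards" {
				seen[parts[i+1]] = true
			}
		}
	}
	devices := make([]string, 0, len(seen))
	for d := range seen {
		devices = append(devices, d)
	}
	sort.Strings(devices)
	return devices
}

func main() {
	// Request key -> allocatable key, as decided by the scheduler.
	allocateFrom := map[string]string{
		"Gpugrp1/R0/Gpugrp0/RA/gpu0/cards": "Gpugrp1/0/Gpugrp0/0/gpu/dev0/cards",
		"Gpugrp1/R0/Gpugrp0/RA/gpu1/cards": "Gpugrp1/0/Gpugrp0/0/gpu/dev1/cards",
	}
	fmt.Println(devicesFor(allocateFrom)) // [dev0 dev1]
}
```

With the decision recorded in the pod spec, the kubelet needs no usage bookkeeping of its own; it only translates the chosen keys into devices to expose.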

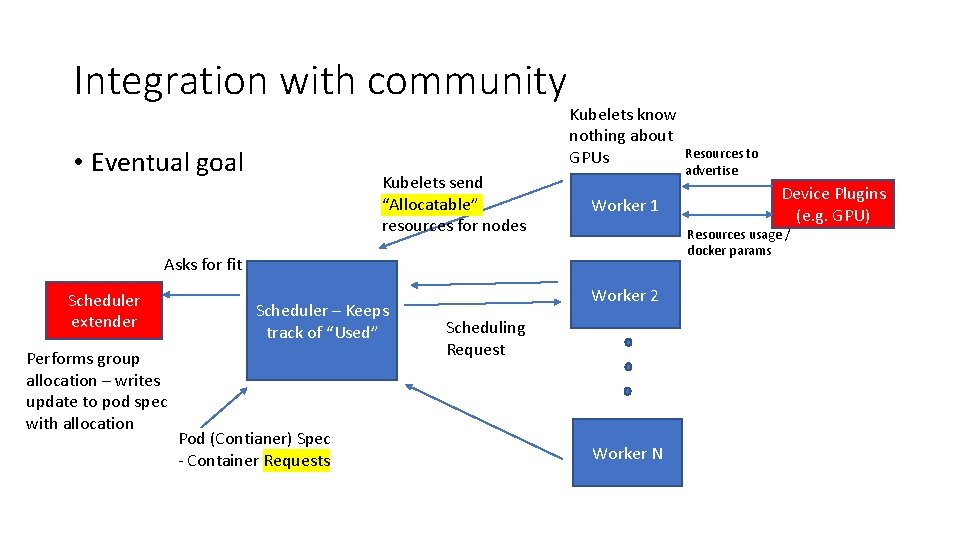

Integration with community
• Eventual goal (diagram):
  - Kubelets on Worker 1, Worker 2, ..., Worker N send “Allocatable” resources for nodes; kubelets know nothing about GPUs
  - Device plugins (e.g. GPU) supply the resources to advertise and the resource usage / docker params
  - Scheduler keeps track of “Used” and asks the scheduler extender for fit
  - Scheduler extender performs group allocation and writes an update to the pod spec with the allocation
  - Scheduling request carries the pod (container) spec with container requests

Needed in Kubernetes core
• We will need a few things in order to achieve separation from core, which will allow for directly using the latest Kubernetes binaries
• Resource Class, scheduled for v1.9, will allow for non-identity mappings between requests and allocatable
• Device plugins and native Nvidia GPU support are listed as v1.13 for now: https://docs.google.com/a/google.com/spreadsheets/d/1NWarIgtSLsq3izc5wOzV7ItdhDNRd-6oBVawmvs-LGw

Other future Kubernetes/Scheduler work
• Pod placement using other constraints, such as pod-level constraints or higher (e.g. multiple pods for distributed training)
  - For example, networking constraints for distributed training when scheduling
• Container networking for faster cross-pod communication (e.g. using RDMA / IB)
