
Factor Analysis Lua Augustin, Savannah Guo, and Blair Marquardt

Learning Outcomes

To understand:
1. What factor analysis is.
2. What its model is.
3. Latent vs. observable variables, with examples of each.
4. Potential applications of factor analysis.
5. Assumptions of factor analysis.
6. How to perform an analysis using R.

Big Picture – What is Factor Analysis?

What can we do with it?

Factor analysis originated in psychometrics as a tool to understand human thought and behavior. Some applications of factor analysis:
- Development of a scale or questionnaire
- Development or corroboration of theory
- Prediction of some other variable as a function of the modeled latent variables

Unobservable vs. Observable Variables

Latent variable: "... variables that are not directly observed but are rather inferred from other variables that are directly measured ..." (Wikipedia)

DNA exists but it is unobservable. DNA predicts observable traits.

| Unobservable | Observable |
| --- | --- |
| DNA* | eye color, hair type |
| Depression | suicidal thoughts, insomnia |

\* DNA used to be unobservable.

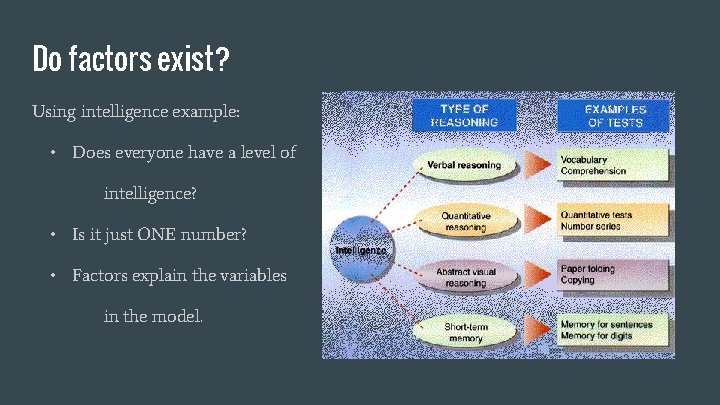

Do factors exist?

Using the intelligence example:
- Does everyone have a level of intelligence?
- Is it just ONE number?
- Factors explain the variables in the model.

Unobservable vs. Observable Variables

Our model assumes:
- Latent variables are unobservable but correlated with observable variables.
- We use data from observable variables to infer information about unobservable variables.
- We examine the covariance matrix of the observable variables.

What do the data look like?

Formula: $X = LF + \varepsilon$

Let's look at a basic matrix that applies to any example.

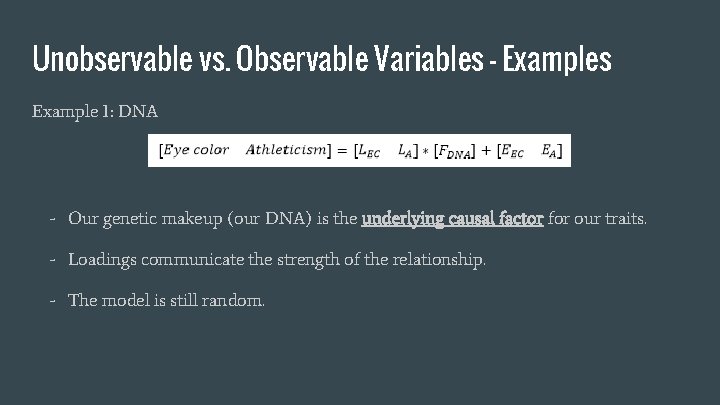

Unobservable vs. Observable Variables - Examples

Example 1: DNA
- Our genetic makeup (our DNA) is the underlying causal factor for our traits.
- Loadings communicate the strength of the relationship between the factor and each trait.
- The model is still random (each trait also includes an error term).

Unobservable vs. Observable Variables - Examples

Example 2: Boss appreciation (factor)
- Observed variables (outward manifestations of your latent appreciation): performance evaluation rating, absenteeism, survey responses.
- Unobserved variable: how much you appreciate your boss.
- When F is high, all the X's tend to be high, and vice versa (assuming positive loadings).

Unobservable vs. Observable Variables - Examples

Example 3: Depression and anxiety*
- Observed variables: insomnia, suicidal thoughts, nausea, and hyperventilation, e.g. Cov(Ins, Suic) = 0.3
- Unobserved variables: depression and anxiety (these cause the variance and covariance between the observed variables)

*Example courtesy of Ben Lambert (see References)

Unobservable vs. Observable Variables - Examples

Example 4: Student evaluation data
- Observed: faculty expertise rating, teaching ability rating
- Unobserved: satisfaction with teacher
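The pattern shared by these examples (one hidden factor driving several observed variables) can be checked with a small simulation. The sketch below is only an illustration in plain Python, not the R workflow the slides refer to; the loadings, sample size, and function names are all assumptions made up for this example. It generates data from a one-factor model and shows that the observed variables end up correlated with each other even though the factor itself is never recorded.

```python
import random


def simulate_one_factor(loadings, error_sds, n, seed=0):
    """Draw n subjects from x_j = l_j * F + e_j, with F ~ N(0, 1)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        f = rng.gauss(0.0, 1.0)  # latent score: generated here, never "observed"
        rows.append([l * f + rng.gauss(0.0, sd)
                     for l, sd in zip(loadings, error_sds)])
    return rows


def correlation(xs, ys):
    """Pearson correlation of two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical loadings; error SDs chosen so each item has unit variance.
loadings = [0.9, 0.8, 0.7]
error_sds = [(1 - l ** 2) ** 0.5 for l in loadings]

data = simulate_one_factor(loadings, error_sds, n=5000)
x1, x2, x3 = zip(*data)

r12 = correlation(x1, x2)  # model value: 0.9 * 0.8 = 0.72
r13 = correlation(x1, x3)  # model value: 0.9 * 0.7 = 0.63
r23 = correlation(x2, x3)  # model value: 0.8 * 0.7 = 0.56
print(round(r12, 2), round(r13, 2), round(r23, 2))
```

The sample correlations should land near the products of the loadings, which is the sense in which the factor "causes" the covariance between the observed variables.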

Assumptions of Factor Analysis

1. Latent factors exist.
   - Think back to our examples: this is an easier sell for some examples than for others.
   - Ex: Can we really boil depression down into a single value? That's what our model assumes!
2. The observed variables are linearly related to the factors.
   - Evident in our model: $x_i - \mu_i = l_{i1} F_1 + \dots + l_{ik} F_k + \varepsilon_i$.

Assumptions of Factor Analysis

3. The model is invariant across subjects.
   - The same loadings and error variances apply to all subjects.
   - Implies the factors affect all people equally, subject only to random error. Reasonable?
4. No association between the factors and measurement error.
   - $\mathrm{Cov}(F, \varepsilon) = 0$
   - This is automatically true if L is the regression coefficient matrix.

Assumptions of Factor Analysis

5. No association between the errors (uncorrelated errors).
   - Implies all items are "independent" measures of the common factor. Reasonable?
6. The factors (if we include more than one) are uncorrelated with each other.
   - $\mathrm{Cov}(F) = I$. Key distinction between EFA and CFA.
7. The observed variables follow a multivariate normal distribution.
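Assumptions 4 to 6 are what give the model its simple implied covariance structure. As a short worked derivation, write $\Psi = \mathrm{Cov}(\varepsilon)$, which is diagonal under assumption 5 (the symbol $\Psi$ is the one used for the error covariance on the rotation slide), and start from $X = LF + \varepsilon$:

```latex
\begin{aligned}
\operatorname{Cov}(X)
  &= \operatorname{Cov}(LF + \varepsilon) \\
  &= L\,\operatorname{Cov}(F)\,L'
     + L\,\operatorname{Cov}(F, \varepsilon)
     + \operatorname{Cov}(\varepsilon, F)\,L'
     + \operatorname{Cov}(\varepsilon) \\
  &= L\,I\,L' + 0 + 0 + \Psi \\
  &= LL' + \Psi
\end{aligned}
```

The third line uses $\mathrm{Cov}(F) = I$ (assumption 6) and $\mathrm{Cov}(F, \varepsilon) = 0$ (assumption 4).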

The Steps to Factor Analysis

1. Obtain a multivariate dataset with numeric columns.
2. Estimate (rotated) models for various pre-defined numbers of factors.
   a. Goal: make the implied covariance matrix as close as possible to the observed covariance matrix (of the Xs).
   b. Maximum likelihood methods typically work well.
3. Choose a particular model (number of factors).
   a. Based primarily on interpretability.
   b. Aided by objective statistical measures.

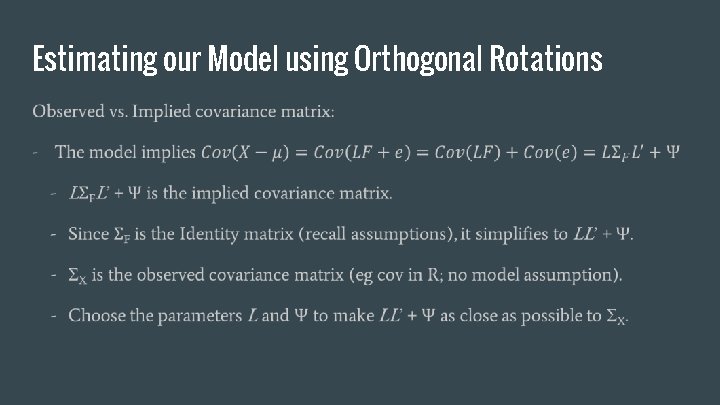

Estimating our Model using Orthogonal Rotations

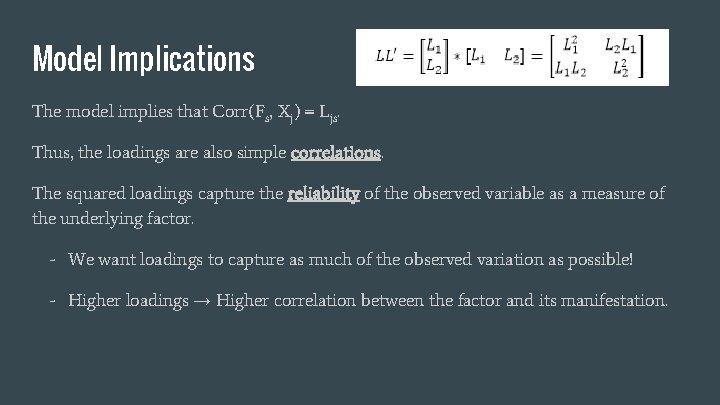

Model Implications

The model implies that $\mathrm{Corr}(F_s, X_j) = l_{js}$ when the observed variables are standardized. Thus, the loadings are also simple correlations. The squared loadings capture the reliability of the observed variable as a measure of the underlying factor.
- We want loadings to capture as much of the observed variation as possible!
- Higher loadings → higher correlation between the factor and its manifestation.

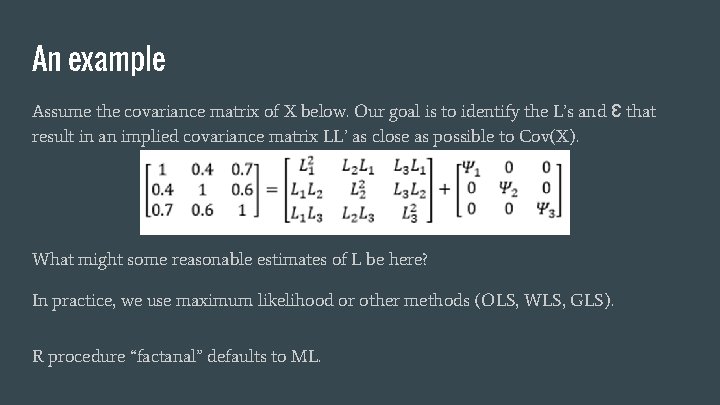
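To make the role of squared loadings concrete, the sketch below sums them per observed variable. It assumes standardized variables and uncorrelated factors; the loading values are hypothetical, and the names "communality" and "uniqueness" are the usual textbook terms rather than terms used in these slides.

```python
def communalities(loadings):
    """Sum of squared loadings per observed variable (one row per variable)."""
    return [sum(l ** 2 for l in row) for row in loadings]


# Hypothetical loadings for three standardized variables on two factors.
L = [
    [0.9, 0.1],
    [0.8, 0.2],
    [0.1, 0.7],
]

h2 = communalities(L)                # share of variance explained by the factors
uniqueness = [1.0 - h for h in h2]   # share left to the error term

for row, h, u in zip(L, h2, uniqueness):
    print(row, round(h, 2), round(u, 2))
```

A variable with a large sum of squared loadings is well explained by the factors; a variable with a small one is mostly error from the model's point of view.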

An example

Assume the covariance matrix of X below. Our goal is to identify the loadings L and the error variances Ψ that result in an implied covariance matrix LL' + Ψ as close as possible to Cov(X). What might some reasonable estimates of L be here?

In practice, we use maximum likelihood or other methods (OLS, WLS, GLS). The R function `factanal` fits the model by ML.

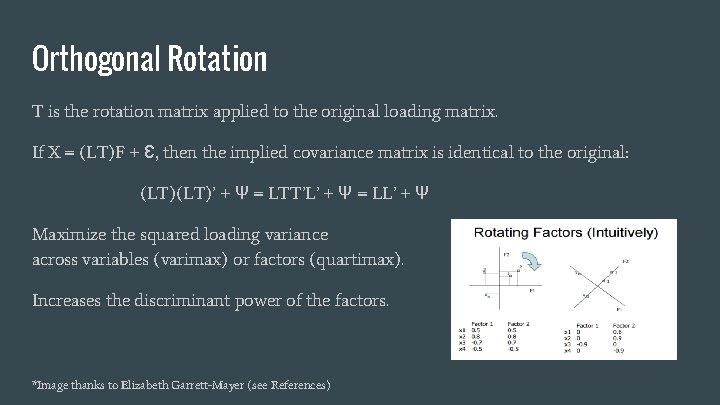
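The idea of judging candidate loadings by how closely they reproduce Cov(X) can be sketched in a few lines. The numbers in the image above are not reproduced here, so the matrix below is a hypothetical stand-in, as are the two candidate solutions and the helper names. This is a plain-Python illustration of the comparison only, not of the ML estimation that `factanal` performs.

```python
def implied_covariance(loadings, psi):
    """Return LL' + diag(psi) for a p x k loading matrix and p error variances."""
    p = len(loadings)
    sigma = [[sum(a * b for a, b in zip(loadings[i], loadings[j]))
              for j in range(p)] for i in range(p)]
    for i in range(p):
        sigma[i][i] += psi[i]
    return sigma


def max_abs_gap(observed, implied):
    """Largest absolute entrywise difference between two matrices."""
    return max(abs(o - m)
               for row_o, row_m in zip(observed, implied)
               for o, m in zip(row_o, row_m))


# Hypothetical covariance matrix of three standardized variables.
observed = [
    [1.00, 0.72, 0.63],
    [0.72, 1.00, 0.56],
    [0.63, 0.56, 1.00],
]

# Two candidate one-factor solutions (loadings, error variances).
good_L, good_psi = [[0.9], [0.8], [0.7]], [0.19, 0.36, 0.51]
poor_L, poor_psi = [[0.5], [0.5], [0.5]], [0.75, 0.75, 0.75]

good_gap = max_abs_gap(observed, implied_covariance(good_L, good_psi))
poor_gap = max_abs_gap(observed, implied_covariance(poor_L, poor_psi))
print(good_gap, poor_gap)
```

The first candidate reproduces the hypothetical matrix almost exactly, while the second leaves a large gap in the off-diagonal entries. An estimation method automates this search over L and Ψ.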

Orthogonal Rotation

T is the rotation matrix applied to the original loading matrix. Because T is orthogonal, TT' = I. If X = (LT)F + ε, then the implied covariance matrix is identical to the original:

(LT)(LT)' + Ψ = LTT'L' + Ψ = LL' + Ψ

Maximize the squared loading variance across variables (varimax) or factors (quartimax). This increases the discriminant power of the factors.

*Image thanks to Elizabeth Garrett-Mayer (see References)

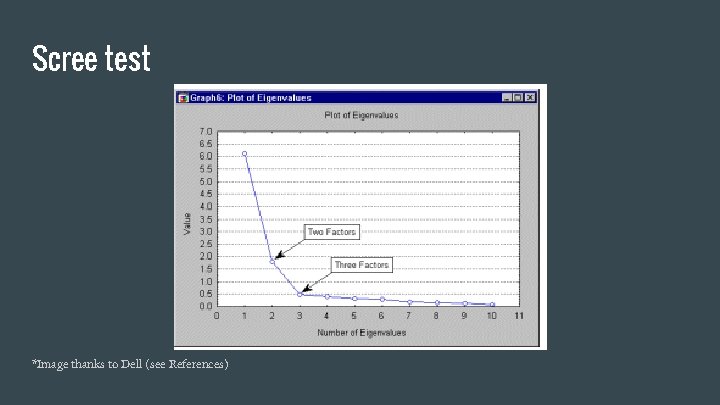
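The invariance of LL' under an orthogonal rotation can be verified numerically. The sketch below uses hypothetical two-factor loadings and a brute-force search over whole-degree angles for the largest "raw" varimax criterion (the variance of squared loadings within each factor, summed over factors, without the row normalization that statistical software often applies). The brute-force search is an assumption made for illustration, not the algorithm that R or other packages use.

```python
import math


def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def rotation(degrees):
    """2 x 2 orthogonal rotation matrix T, so that TT' = I."""
    t = math.radians(degrees)
    return [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]


def varimax_criterion(loadings):
    """Sum over factors of the variance of squared loadings across variables."""
    total = 0.0
    for col in zip(*loadings):
        sq = [l ** 2 for l in col]
        mean = sum(sq) / len(sq)
        total += sum((s - mean) ** 2 for s in sq) / len(sq)
    return total


# Hypothetical unrotated loadings: four variables, two factors.
L = [[0.7, 0.5], [0.6, 0.6], [0.5, -0.6], [0.4, -0.7]]

# Brute-force search over whole-degree angles for the largest criterion.
best_angle = max(range(90),
                 key=lambda d: varimax_criterion(matmul(L, rotation(d))))
LT = matmul(L, rotation(best_angle))

before = matmul(L, transpose(L))    # LL'
after = matmul(LT, transpose(LT))   # (LT)(LT)'
max_diff = max(abs(x - y)
               for r1, r2 in zip(before, after)
               for x, y in zip(r1, r2))
print(best_angle, max_diff)
```

The rotated loadings fit the data exactly as well as the originals (the difference between LL' and (LT)(LT)' is at rounding-error level), which is why rotation can be chosen purely for interpretability.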

Which factors do we keep?

Factor analysis is a "data reduction" method, in that we desire fewer factors than observed variables.
1. Theory basis:
   a. Theory suggests relevant factors.
   b. Use judgment to label the factors based on heavy loadings.
2. Mathematical basis:
   a. Kaiser criterion: keep factors with an eigenvalue greater than 1 (ugly rule of thumb).
   b. Scree test
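As a rough illustration of the Kaiser criterion, the sketch below computes the eigenvalues of a hypothetical correlation matrix and counts those above 1. To stay within the Python standard library it uses a small hand-written Jacobi eigenvalue routine; that routine, the matrix, and all names are assumptions for this example (in R one would more likely call `eigen` on the correlation matrix). The same sorted eigenvalues are what a scree plot displays.

```python
import math


def jacobi_eigenvalues(matrix, sweeps=50, tol=1e-12):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations."""
    a = [row[:] for row in matrix]
    n = len(a)
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:  # off-diagonal part is numerically zero: done
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                diff = a[q][q] - a[p][p]
                # Angle that zeroes the (p, q) entry.
                if diff == 0:
                    theta = math.pi / 4
                else:
                    theta = 0.5 * math.atan(2 * a[p][q] / diff)
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # update columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # update rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


# Hypothetical correlation matrix: variables 1-2 and 3-4 form two clusters.
R = [
    [1.0, 0.6, 0.1, 0.1],
    [0.6, 1.0, 0.1, 0.1],
    [0.1, 0.1, 1.0, 0.5],
    [0.1, 0.1, 0.5, 1.0],
]

eigenvalues = jacobi_eigenvalues(R)
retained = sum(1 for ev in eigenvalues if ev > 1.0)  # Kaiser criterion
print([round(ev, 3) for ev in eigenvalues], retained)
```

With two clusters of correlated variables, two eigenvalues exceed 1, so the rule suggests keeping two factors. As the slide notes, this is only a rule of thumb and should be weighed against theory and interpretability.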

Scree test

*Image thanks to Dell (see References)

Exploratory vs. Confirmatory Factor Analysis

- EFA is used when no a priori hypothesis about the factors exists:
  - All factors are uncorrelated.
- CFA is used to test whether the data are consistent with an a priori hypothesis:
  - Allows for interfactor correlations based on theory.
  - Pre-specify factors and loadings. Specify some loadings as zero, based on theory.

References

- Carpita, M., Brentari, E., & Qannari, E. M. (Eds.). (2015). Advances in Latent Variables: Methods, Models and Applications. Springer.
- Floyd, F. J., & Widaman, K. F. (1995). Factor analysis in the development and refinement of clinical assessment instruments. Psychological Assessment, 7(3), 286.
- Westfall, P. H., Henning, K. S., & Howell, R. D. (2012). The effect of error correlation on interfactor correlation in psychometric measurement. Structural Equation Modeling: A Multidisciplinary Journal, 19(1), 99-117.
- Factor analysis. (n.d.). In Wikipedia. Retrieved 22 September 2015 from https://en.wikipedia.org/wiki/Factor_analysis
- Exploratory factor analysis. (n.d.). In Wikipedia. Retrieved 22 September 2015 from https://en.wikipedia.org/wiki/Exploratory_factor_analysis
- Latent variable. (n.d.). In Wikipedia. Retrieved 22 September 2015 from https://en.wikipedia.org/wiki/Latent_variable
- Lambert, Ben. (published on Feb 20, 2014). Factor Analysis - an introduction. Retrieved 22 September 2015 from https://www.youtube.com/watch?v=WV_jcaDBZ2I
- Lambert, Ben. (published on Feb 20, 2014). Factor Analysis - model representation. Retrieved 22 September 2015 from https://www.youtube.com/watch?v=TeIx7dRedkg
- Lambert, Ben. (published on Feb 20, 2014). Factor Analysis - assumptions. Retrieved 22 September 2015 from https://www.youtube.com/watch?v=PgqiBezoAUA
- Principal Components Factor Analysis. Retrieved 22 September 2015 from http://documents.software.dell.com/Statistics/Textbook/Principal-Components-Factor-Analysis
- Garrett-Mayer, Elizabeth. Statistics in Psychosocial Research, Lecture 8: Factor Analysis I. Retrieved 22 September 2015 from http://ocw.jhsph.edu/courses/StatisticsPsychosocialResearch/PDFs/Lecture8.pdf
