Embedded Computer Architecture 5SAI0: DLP Architectures

Embedded Computer Architecture 5SAI0
DLP Architectures: Vector, SIMD, GPU
Henk Corporaal
www.ics.ele.tue.nl/~heco/courses/ECA
h.corporaal@tue.nl
TU Eindhoven, 2020-2021

Today’s program: Data-level parallel architectures
1. Vector machine
2. SIMD (Single Instruction Multiple Data) processors
   – sub-word parallelism support
3. GPU (Graphics Processing Unit); see earlier slides
• Material:
  – Book of Hennessy & Patterson
  – Study: Chapter 4: 4.1-4.7
  – (extra material: App. G: vector processors)
• But first: finish ‘what can the compiler do?’
3/11/2021

What can the compiler do?
• Loop transformations
• Code scheduling
• Special course 5LIM0 on Parallelization and Compilers
  – LLVM based
(Figure: an oldie on compilers)

Basic compiler techniques
• Dependencies limit ILP (Instruction-Level Parallelism)
  – We cannot always find sufficient independent operations to fill all the delay slots
  – May result in pipeline stalls
• Scheduling to avoid stalls (= reorder instructions)
• (Source-)code transformations to create more exploitable parallelism
  – Loop Unrolling
  – Loop Merging (Fusion)
    • see the online slide-set about loop transformations!

![Dependencies Limit ILP: Example](http://slidetodoc.com/presentation_image_h/356a34d737b8294e2941887018e8284d/image-5.jpg)

Dependencies Limit ILP: Example
C loop:

    for (i=1; i<=1000; i++)
        x[i] = x[i] + s;

MIPS assembly code (compiler choices):

          ; R1 = &x[1]
          ; R2 = &x[1000]+8
          ; F2 = s
    Loop: L.D   F0, 0(R1)      ; F0 = x[i]
          ADD.D F4, F0, F2     ; F4 = x[i]+s
          S.D   F4, 0(R1)      ; x[i] = F4
          ADDI  R1, R1, 8      ; R1 = &x[i+1]
          BNE   R1, R2, Loop   ; branch if R1 != &x[1000]+8

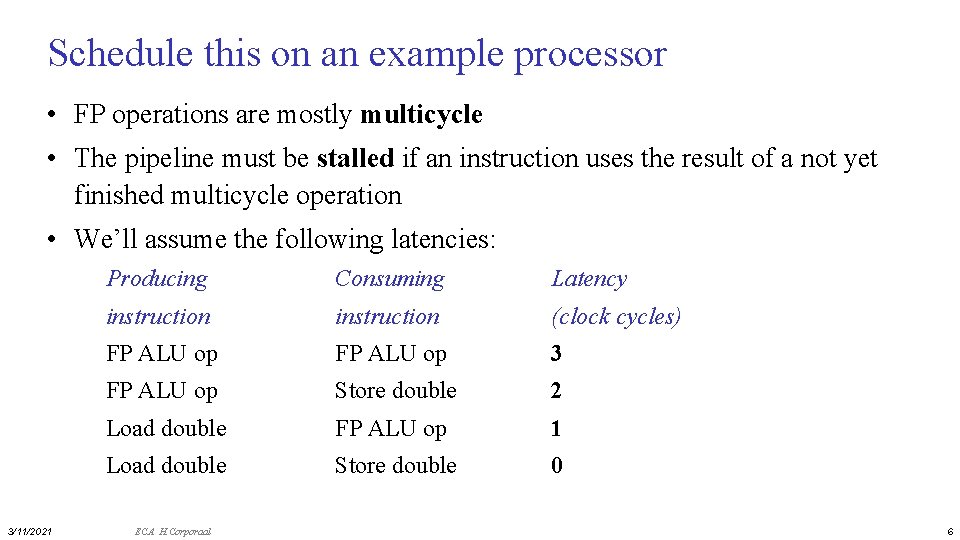

Schedule this on an example processor
• FP operations are mostly multicycle
• The pipeline must be stalled if an instruction uses the result of a not yet finished multicycle operation
• We’ll assume the following latencies:

| Producing instruction | Consuming instruction | Latency (clock cycles) |
|---|---|---|
| FP ALU op | FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |
| Load double | Store double | 0 |

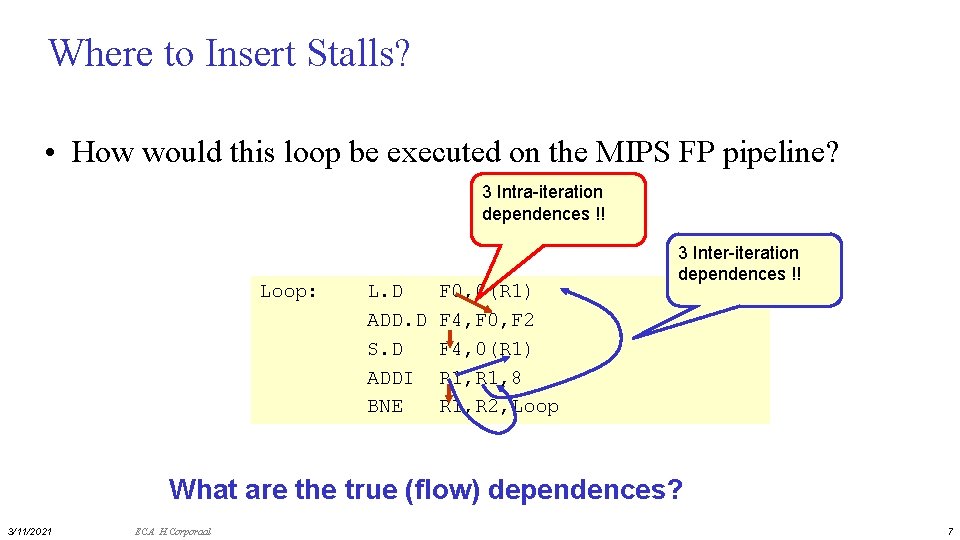

Where to Insert Stalls?
• How would this loop be executed on the MIPS FP pipeline?

    Loop: L.D   F0, 0(R1)
          ADD.D F4, F0, F2
          S.D   F4, 0(R1)
          ADDI  R1, R1, 8
          BNE   R1, R2, Loop

• 3 intra-iteration dependences!
• 3 inter-iteration dependences!
• What are the true (flow) dependences?

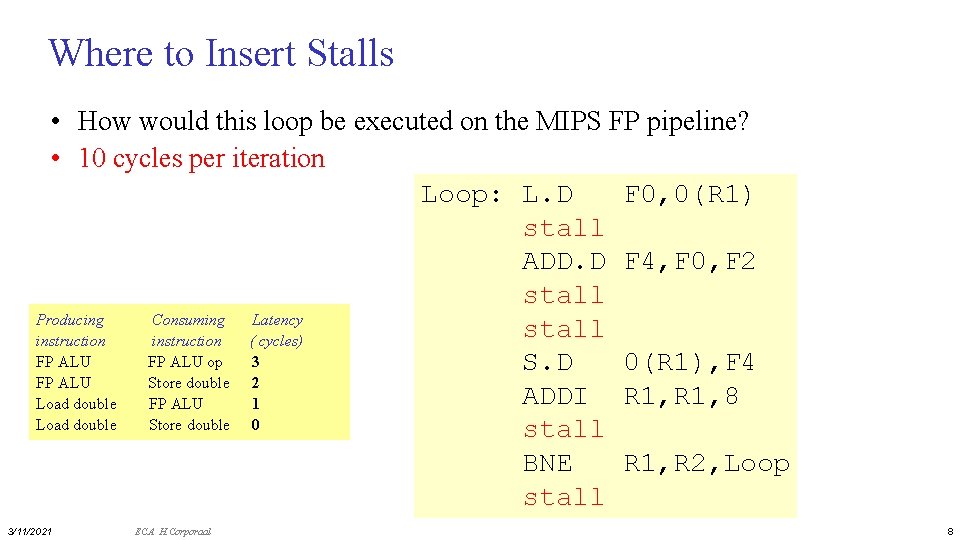

Where to Insert Stalls
• How would this loop be executed on the MIPS FP pipeline?
• 10 cycles per iteration (with the latencies assumed on the earlier slide):

    Loop: L.D   F0, 0(R1)
          stall
          ADD.D F4, F0, F2
          stall
          stall
          S.D   F4, 0(R1)
          ADDI  R1, R1, 8
          stall
          BNE   R1, R2, Loop
          stall

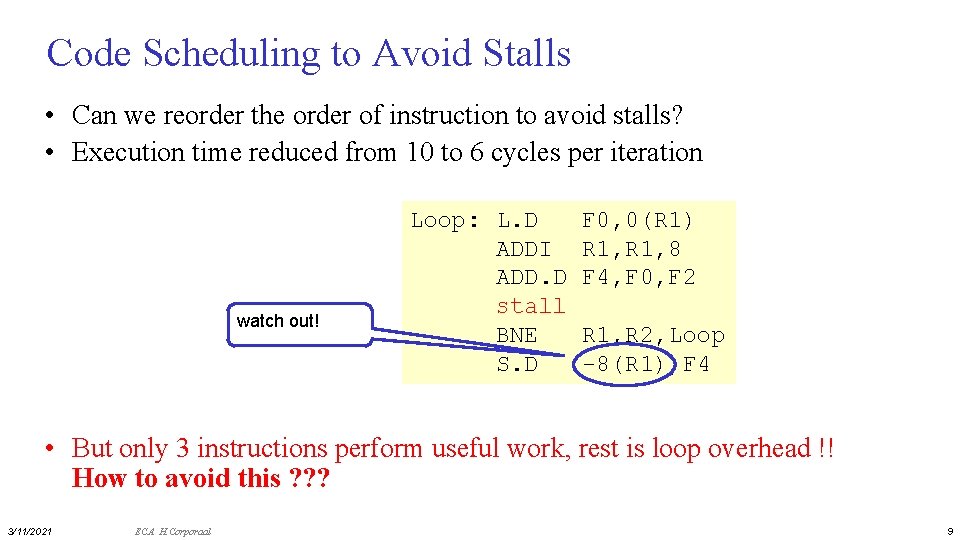

Code Scheduling to Avoid Stalls
• Can we reorder the instructions to avoid stalls?
• Execution time reduced from 10 to 6 cycles per iteration

    Loop: L.D   F0, 0(R1)
          ADDI  R1, R1, 8
          ADD.D F4, F0, F2
          stall
          BNE   R1, R2, Loop
          S.D   F4, -8(R1)     ; watch out! offset is -8 because R1 was already incremented

• But only 3 instructions perform useful work, the rest is loop overhead! How to avoid this?

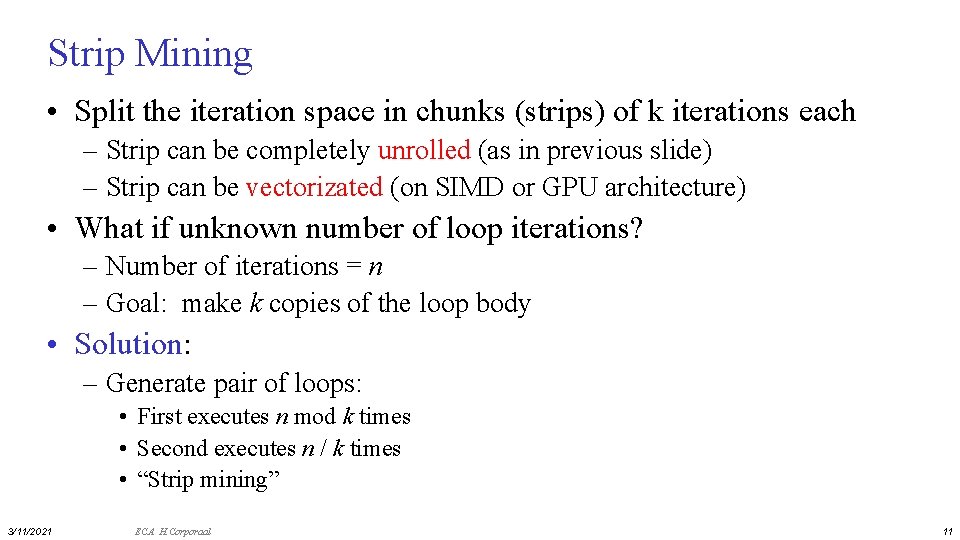

Loop Unrolling: increasing ILP
At source level:

    for (i=1; i<=1000; i++)
        x[i] = x[i] + s;

becomes

    for (i=1; i<=1000; i=i+4) {
        x[i]   = x[i]   + s;
        x[i+1] = x[i+1] + s;
        x[i+2] = x[i+2] + s;
        x[i+3] = x[i+3] + s;
    }

Code after scheduling:

    Loop: L.D   F0, 0(R1)
          L.D   F6, 8(R1)
          L.D   F10, 16(R1)
          L.D   F14, 24(R1)
          ADD.D F4, F0, F2
          ADD.D F8, F6, F2
          ADD.D F12, F10, F2
          ADD.D F16, F14, F2
          S.D   F4, 0(R1)
          S.D   F8, 8(R1)
          ADDI  R1, R1, 32
          S.D   F12, -16(R1)
          BNE   R1, R2, Loop
          S.D   F16, -8(R1)

• 14 cycles/4 iterations => 3.5 cycles/iteration
• Any drawbacks?
  – loop unrolling increases code size
  – more registers needed

Strip Mining
• Split the iteration space in chunks (strips) of k iterations each
  – Strip can be completely unrolled (as in previous slide)
  – Strip can be vectorized (on SIMD or GPU architecture)
• What if the number of loop iterations is unknown?
  – Number of iterations = n
  – Goal: make k copies of the loop body
• Solution:
  – Generate a pair of loops:
    • First executes n mod k times
    • Second executes n / k times
  – This is called “strip mining”
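
The pair of loops described above can be sketched in C as follows. This is a minimal illustration, not the compiler's actual output: the function name `add_scalar_strip_mined` and the strip size `K = 4` are assumptions chosen for the example, and the array is indexed from 0 rather than 1.

```c
#include <stddef.h>

/* Strip size k; 4 is an assumed value for this sketch. */
#define K 4

/* Strip-mined version of:
 *     for (i = 0; i < n; i++) x[i] = x[i] + s;
 * (hypothetical helper, not taken from the slides) */
void add_scalar_strip_mined(double *x, size_t n, double s)
{
    size_t i = 0;
    size_t remainder = n % K;

    /* First loop: the odd-sized piece, n mod K iterations. */
    for (; i < remainder; i++)
        x[i] = x[i] + s;

    /* Second loop: n / K strips of exactly K iterations each.
     * The body is unrolled K = 4 times by hand. */
    for (; i < n; i += K) {
        x[i]     = x[i]     + s;
        x[i + 1] = x[i + 1] + s;
        x[i + 2] = x[i + 2] + s;
        x[i + 3] = x[i + 3] + s;
    }
}
```

Because the remainder is handled first, the second loop never runs past the end of the array, whatever the value of n.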

Hardware support for compile-time scheduling
• Predication
  – see earlier slides on conditional move and if-conversion
• Speculative loads
  – Deferred exceptions

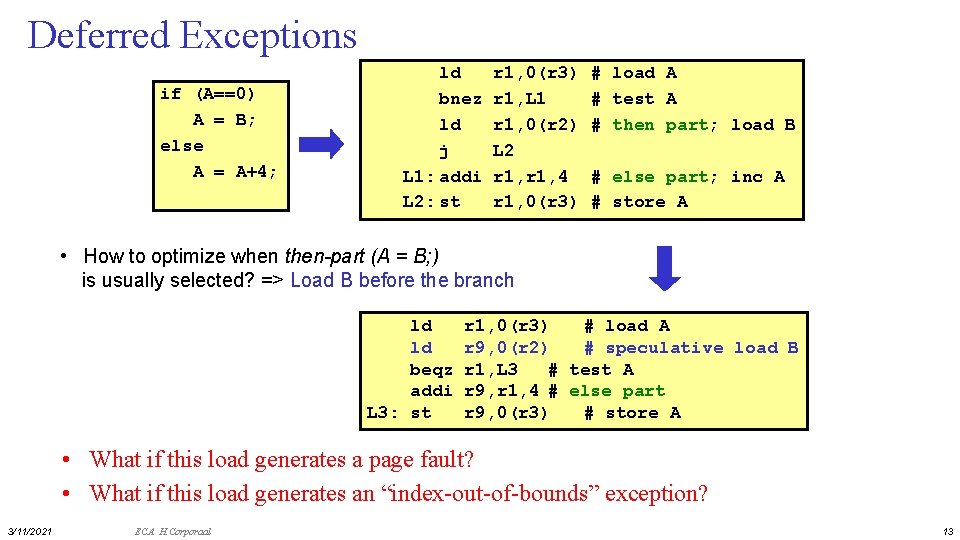

Deferred Exceptions

    if (A==0) A = B; else A = A+4;

        ld   r1, 0(r3)     # load A
        bnez r1, L1        # test A
        ld   r1, 0(r2)     # then part; load B
        j    L2
    L1: addi r1, r1, 4     # else part; inc A
    L2: st   r1, 0(r3)     # store A

• How to optimize when the then-part (A = B;) is usually selected? => Load B before the branch

        ld   r1, 0(r3)     # load A
        ld   r9, 0(r2)     # speculative load B
        beqz r1, L3        # test A
        addi r9, r1, 4     # else part
    L3: st   r9, 0(r3)     # store A

• What if this load generates a page fault?
• What if this load generates an “index-out-of-bounds” exception?

HW supporting Speculative Loads
• 2 new instructions:
  – Speculative load (sld): does not generate exceptions
  – Speculation check instruction (speck): checks for an exception. The exception occurs when this instruction is executed.

        ld    r1, 0(r3)    # load A
        sld   r9, 0(r2)    # speculative load of B
        bnez  r1, L1       # test A
        speck 0(r2)        # perform exception check
        j     L2
    L1: addi  r9, r1, 4    # else part
    L2: st    r9, 0(r3)    # store A

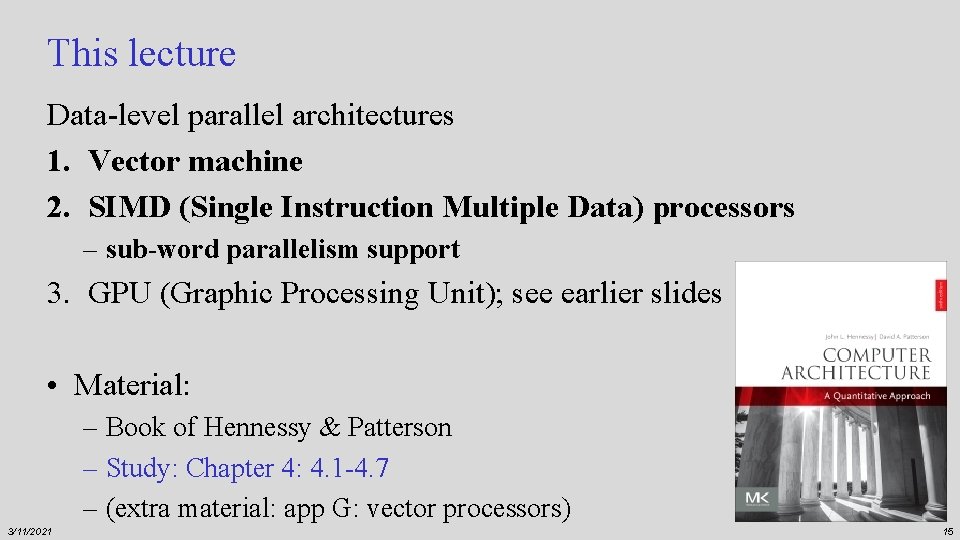

This lecture: Data-level parallel architectures
1. Vector machine
2. SIMD (Single Instruction Multiple Data) processors
   – sub-word parallelism support
3. GPU (Graphics Processing Unit); see earlier slides
• Material:
  – Book of Hennessy & Patterson
  – Study: Chapter 4: 4.1-4.7
  – (extra material: App. G: vector processors)

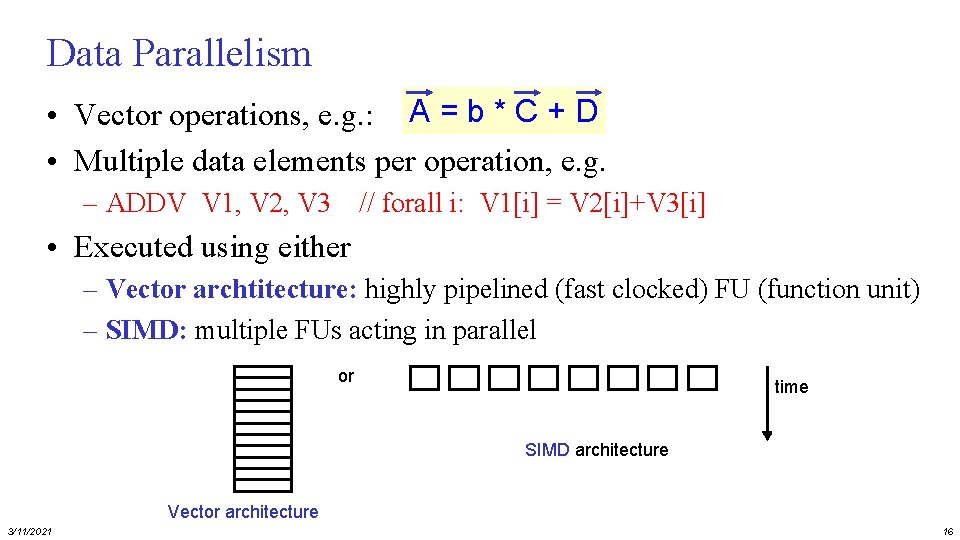

Data Parallelism
• Vector operations, e.g.: A = b * C + D
• Multiple data elements per operation, e.g.
  – ADDV V1, V2, V3 // forall i: V1[i] = V2[i]+V3[i]
• Executed using either
  – Vector architecture: highly pipelined (fast clocked) FU (function unit)
  – SIMD: multiple FUs acting in parallel
(Figure: execution over time on a SIMD architecture versus a vector architecture)

Intermezzo: SIMD vs MIMD
• SIMD architectures can exploit significant data-level parallelism for:
  – matrix-oriented: scientific computing
  – media-oriented: image, video and sound processors
• SIMD is more energy efficient than MIMD
  – Only needs to fetch and decode one instruction per data operation
  – Makes SIMD attractive for personal mobile devices
• SIMD allows the programmer to continue to think sequentially
• MIMD is more generic: why?

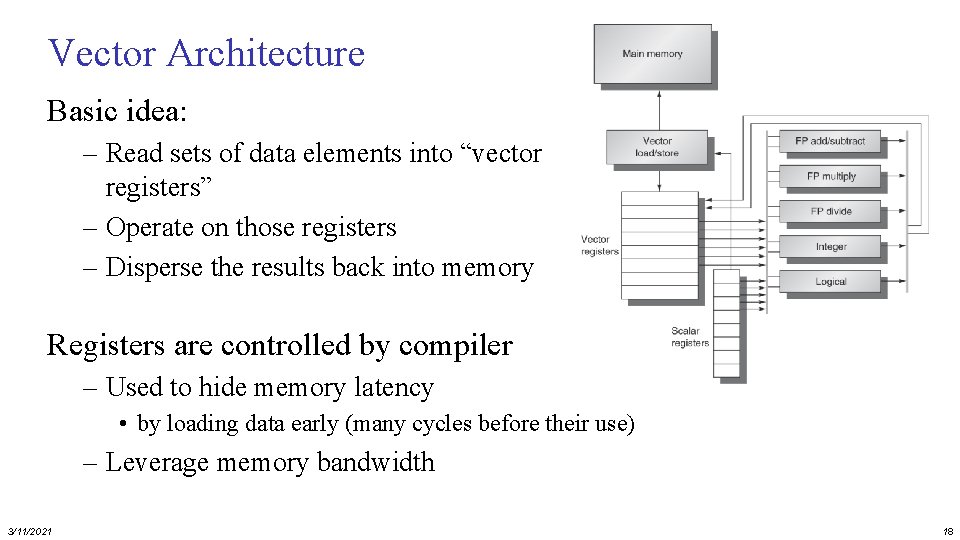

Vector Architecture
• Basic idea:
  – Read sets of data elements into “vector registers”
  – Operate on those registers
  – Disperse the results back into memory
• Registers are controlled by the compiler
  – Used to hide memory latency
    • by loading data early (many cycles before their use)
  – Leverage memory bandwidth

Example architecture: VMIPS
• Loosely based on Cray-1 (1976)
• Vector registers
  – Each register holds a 64-element vector, 64 bits/element
  – Register file has 16 read ports and 8 write ports
• Vector functional units
  – Fully pipelined; data and control hazards are detected
• Vector load-store unit
  – Fully pipelined; one word per clock cycle after initial latency
• Scalar registers
  – 32 general-purpose registers + 32 floating-point registers

VMIPS Instructions
• ADDVV.D: add two vectors (of Doubles)
• ADDVS.D: add vector to a scalar (Doubles)
• LV/SV: vector load and vector store from address
• Example: DAXPY ((double) Y = a*X + Y), inner loop of Linpack

    L.D      F0, a          ; load scalar a
    LV       V1, Rx         ; load vector X
    MULVS.D  V2, V1, F0     ; vector-scalar multiply
    LV       V3, Ry         ; load vector Y
    ADDVV.D  V4, V2, V3     ; add
    SV       Ry, V4         ; store the result

• Requires 6 instructions vs. almost 600 for MIPS
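
For reference, here is a plain C sketch of what the six vector instructions compute, together with a rough count of the scalar instructions they replace. The helper `scalar_instruction_count` is hypothetical, and the figures of 9 instructions per element plus 2 set-up instructions are an assumption based on the scalar MIPS DAXPY loop in Hennessy & Patterson.

```c
#include <stddef.h>

/* DAXPY: Y = a*X + Y, one element per loop iteration. */
void daxpy(size_t n, double a, const double *x, double *y)
{
    for (size_t i = 0; i < n; i++)
        y[i] = a * x[i] + y[i];
}

/* Hypothetical helper: dynamic instruction count of a scalar loop,
 * given the instructions per iteration and the set-up instructions. */
unsigned scalar_instruction_count(unsigned n, unsigned per_iteration,
                                  unsigned setup)
{
    return setup + n * per_iteration;
}
```

Under those assumptions, `scalar_instruction_count(64, 9, 2)` gives 578 for a 64-element vector, which is the "almost 600" on the slide.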

Vector Execution Time
• Execution time depends on three factors:
  1. Length of operand vectors
  2. Structural hazards
  3. Data dependencies
• VMIPS functional units consume one element per clock cycle
  – Execution time is approximately the vector length (VL): Texec ~ VL
• Convoy
  – Set of vector instructions that could potentially execute together

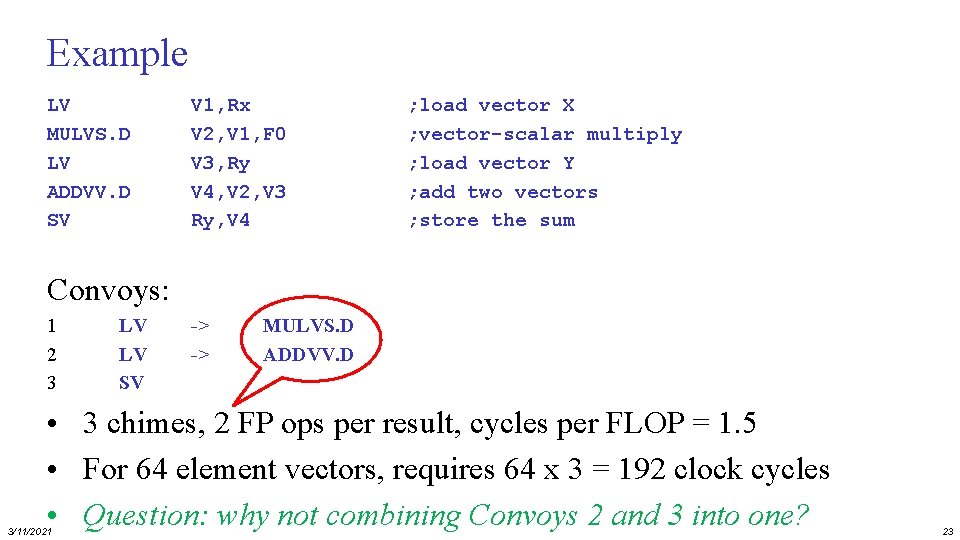

Chimes
• Sequences with read-after-write dependency hazards can be in the same convoy via chaining
• Chaining
  – Allows a vector operation to start as soon as the individual elements of its vector source operand become available
• Chime
  – Unit of time to execute one convoy
  – m convoys execute in m chimes
  – For VL = n, this requires m × n clock cycles (+ pipeline filling latency)

Example

    LV       V1, Rx         ; load vector X
    MULVS.D  V2, V1, F0     ; vector-scalar multiply
    LV       V3, Ry         ; load vector Y
    ADDVV.D  V4, V2, V3     ; add two vectors
    SV       Ry, V4         ; store the sum

Convoys:

    1  LV -> MULVS.D
    2  LV -> ADDVV.D
    3  SV

• 3 chimes, 2 FP ops per result, cycles per FLOP = 1.5
• For 64-element vectors, requires 64 × 3 = 192 clock cycles
• Question: why not combine convoys 2 and 3 into one?
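
The convoy split can be reproduced with a small greedy sketch. It assumes one functional unit of each kind, and that chaining lets dependent instructions share a convoy, so only structural hazards start a new convoy. The names `enum unit`, `count_convoys` and `chime_cycles` are made up for this illustration.

```c
#include <stddef.h>
#include <stdbool.h>

/* Functional unit used by each vector instruction (assumed: one of each). */
enum unit { UNIT_LOAD_STORE, UNIT_ADD, UNIT_MUL, UNIT_COUNT };

/* Greedy convoy formation: start a new convoy whenever the unit an
 * instruction needs is already taken in the current convoy. */
unsigned count_convoys(const enum unit *instr, size_t n)
{
    bool busy[UNIT_COUNT] = { false };
    unsigned convoys = 0;

    for (size_t i = 0; i < n; i++) {
        if (convoys == 0 || busy[instr[i]]) {
            for (int u = 0; u < UNIT_COUNT; u++)
                busy[u] = false;        /* new convoy: all units free */
            convoys++;
        }
        busy[instr[i]] = true;
    }
    return convoys;
}

/* m convoys on vectors of length n take about m * n clock cycles
 * (pipeline filling latency ignored). */
unsigned chime_cycles(unsigned convoys, unsigned vector_length)
{
    return convoys * vector_length;
}
```

For the sequence LV, MULVS.D, LV, ADDVV.D, SV this yields 3 convoys and 192 cycles for VL = 64. In this model the SV cannot join the second convoy because the single load-store unit is already occupied by the second LV.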

Challenges
• Start-up time:
  – Latency of vector functional unit
  – Assume the same as Cray-1:
    • Floating-point add => 6 clock cycles
    • Floating-point multiply => 7 clock cycles
    • Floating-point divide => 20 clock cycles
    • Vector load => 12 clock cycles
• Improvements:
  – > 1 element per clock cycle
  – Non-64 wide vectors
  – IF statements in vector code
  – Memory system optimizations to support vector processors
  – Multiple dimensional matrices
  – Sparse matrices
  – HLL support: programming a vector computer

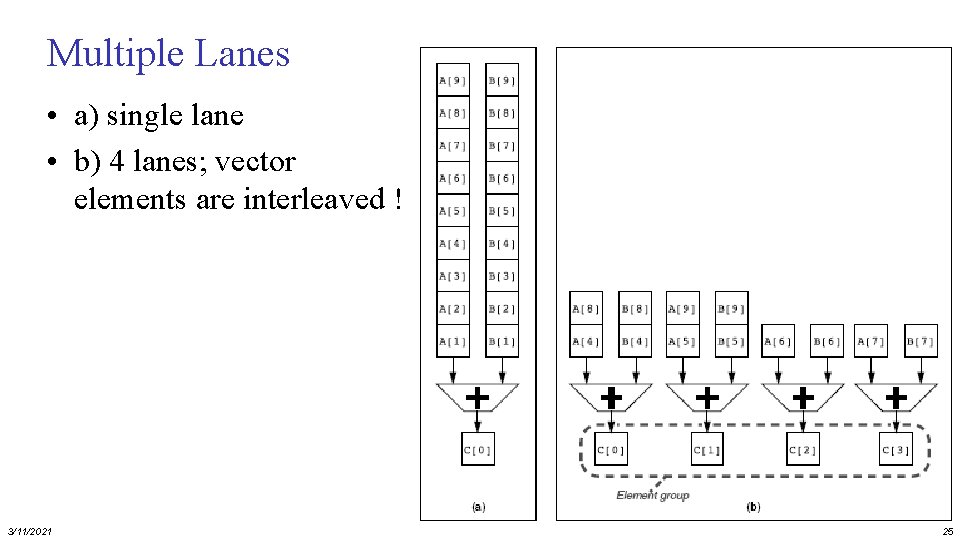

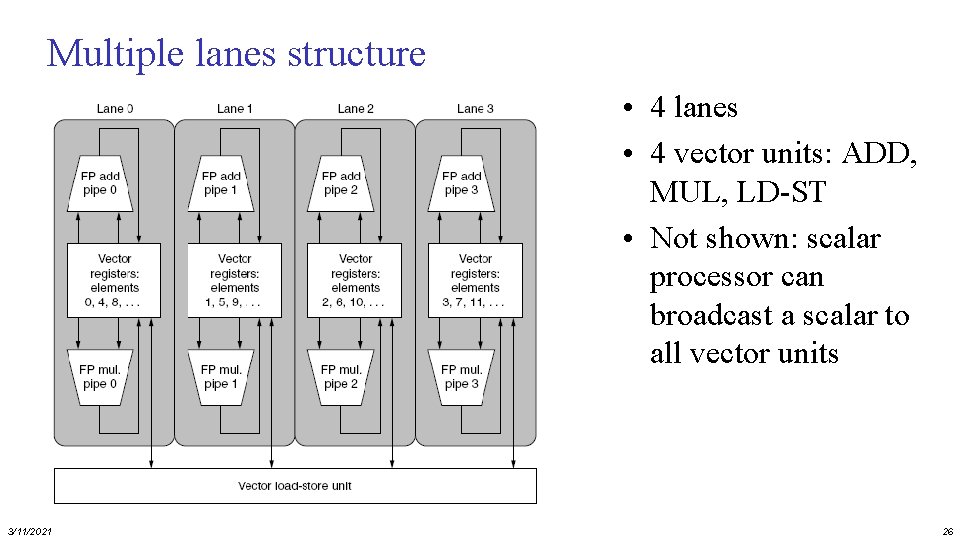

Multiple Lanes
• a) single lane
• b) 4 lanes; vector elements are interleaved!

Multiple lanes structure
• 4 lanes
• 3 vector functional units: ADD, MUL, LD-ST
• Not shown: scalar processor can broadcast a scalar to all vector units

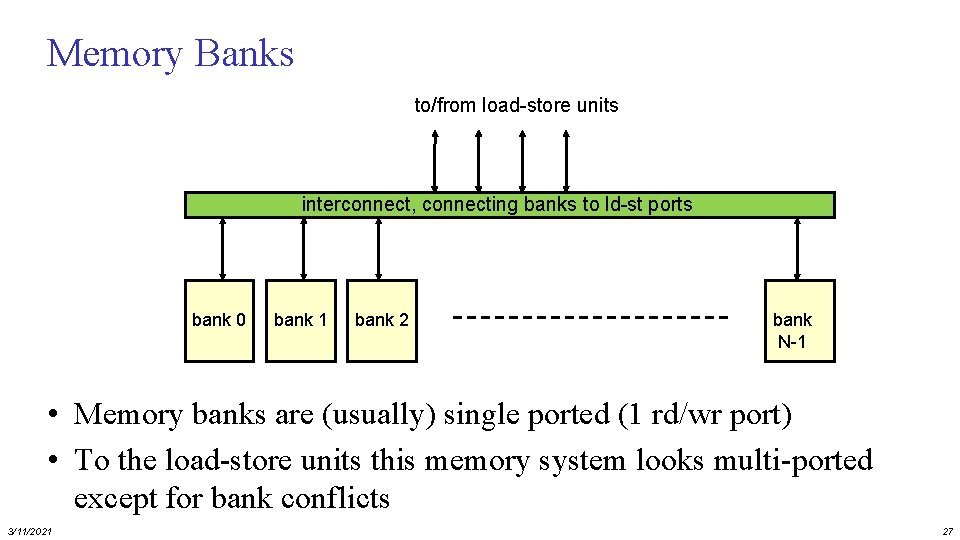

Memory Banks
(Figure: banks 0, 1, 2, ..., N-1 connected through an interconnect to the ld-st ports, to/from the load-store units)
• Memory banks are (usually) single ported (1 rd/wr port)
• To the load-store units this memory system looks multi-ported, except for bank conflicts

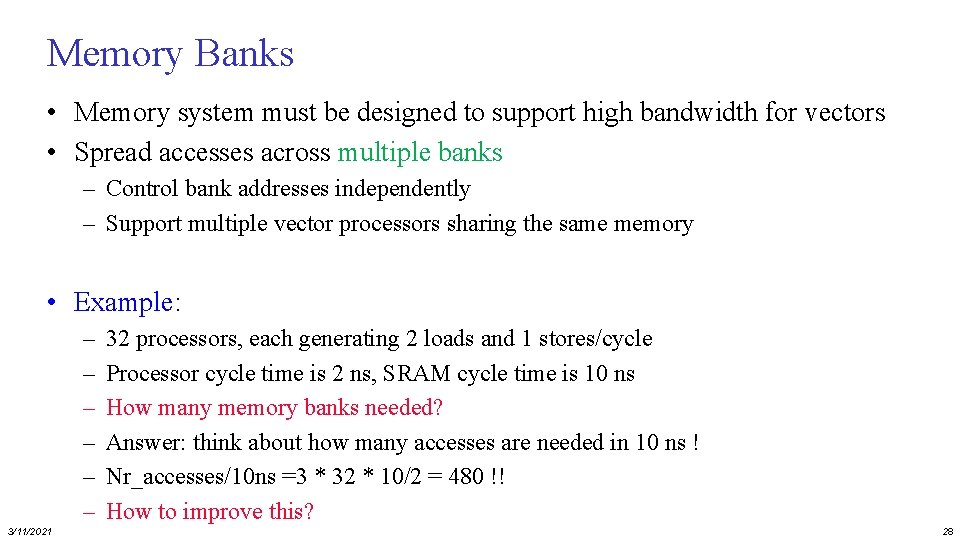

Memory Banks
• Memory system must be designed to support high bandwidth for vectors
• Spread accesses across multiple banks
  – Control bank addresses independently
  – Support multiple vector processors sharing the same memory
• Example:
  – 32 processors, each generating 2 loads and 1 store per cycle
  – Processor cycle time is 2 ns, SRAM cycle time is 10 ns
  – How many memory banks are needed?
  – Answer: think about how many accesses are needed in 10 ns!
    Nr_accesses/10 ns = 3 * 32 * 10/2 = 480
  – How to improve this?
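
The arithmetic of this example can be written out as a small C function. This is only a sketch of the slide's reasoning: the name `banks_needed` and its parameters are assumptions, and it presumes that every access occupies a bank for a full SRAM cycle.

```c
/* Number of banks needed so that every access issued during one SRAM
 * cycle can go to a different bank (hypothetical helper). */
unsigned banks_needed(unsigned processors, unsigned accesses_per_cycle,
                      unsigned cpu_cycle_ns, unsigned sram_cycle_ns)
{
    /* Processor cycles that fit in one SRAM cycle, rounded up. */
    unsigned cycles_per_bank_access =
        (sram_cycle_ns + cpu_cycle_ns - 1) / cpu_cycle_ns;

    return processors * accesses_per_cycle * cycles_per_bank_access;
}
```

With the slide's numbers, `banks_needed(32, 3, 2, 10)` is 32 * 3 * 5 = 480.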

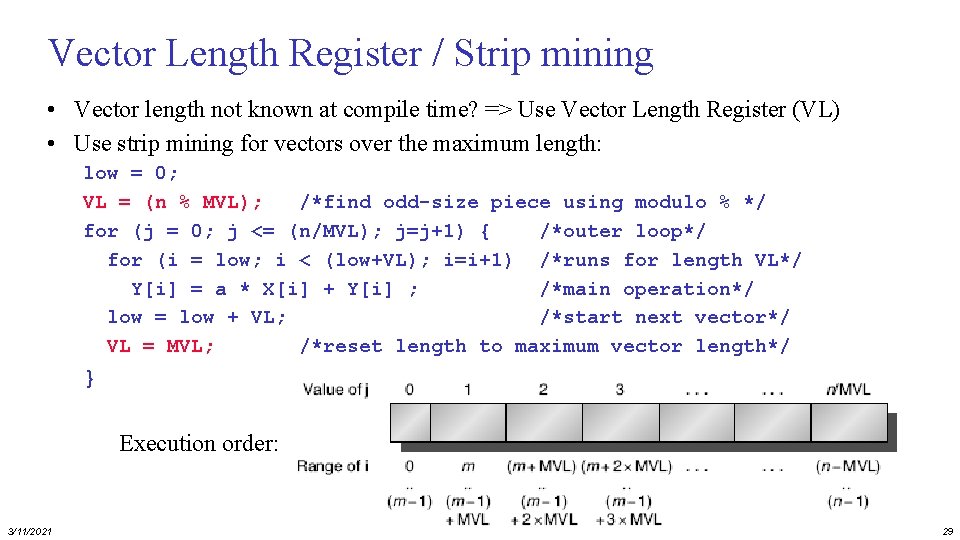

Vector Length Register / Strip mining
• Vector length not known at compile time? => Use Vector Length Register (VL)
• Use strip mining for vectors over the maximum length:

    low = 0;
    VL = (n % MVL);                         /* find odd-size piece using modulo % */
    for (j = 0; j <= (n/MVL); j=j+1) {      /* outer loop */
        for (i = low; i < (low+VL); i=i+1)  /* runs for length VL */
            Y[i] = a * X[i] + Y[i];         /* main operation */
        low = low + VL;                     /* start next vector */
        VL = MVL;                           /* reset length to maximum vector length */
    }

(Figure: execution order of the strips)

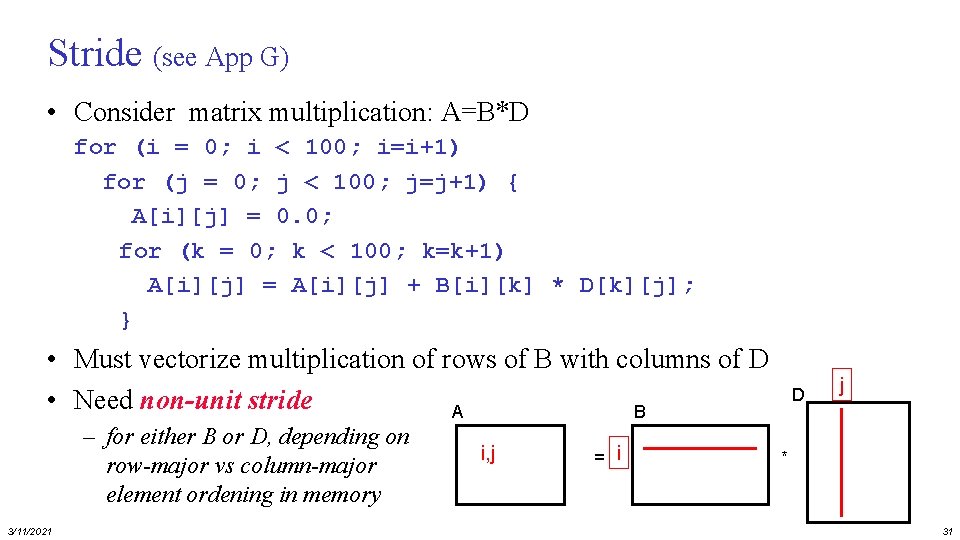

Vector Mask Registers: handling If-statements
• Consider:

    for (i = 0; i < 64; i=i+1)
        if (X[i] != 0)
            X[i] = X[i] - Y[i];

• Use vector mask register, VM, to “disable” elements:

    LV       V1, Rx         ; load vector X into V1
    LV       V2, Ry         ; load vector Y
    L.D      F0, #0         ; load FP zero into F0
    SNEVS.D  V1, F0         ; sets VM(i) to 1 if V1(i) != F0
    SUBVV.D  V1, V1, V2     ; subtract under vector mask
    SV       Rx, V1         ; store the result in X

• Q: GFLOPS rate decreases! Why?
  – Compare to GPU solution
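
The effect of the mask register can be emulated in scalar C. In this sketch the name `masked_subtract`, the `vm` array standing in for VM, and the returned count of enabled elements are all assumptions made for illustration.

```c
#include <stddef.h>
#include <stdbool.h>

#define MVL 64  /* maximum vector length, as in VMIPS */

/* Emulates SNEVS.D followed by SUBVV.D under mask.
 * Returns how many elements were enabled by the mask.
 * Only the first MVL elements are processed (one vector register). */
size_t masked_subtract(double *x, const double *y, size_t n)
{
    bool vm[MVL];
    size_t active = 0;

    if (n > MVL)
        n = MVL;

    /* SNEVS.D: set vm[i] where x[i] != 0 */
    for (size_t i = 0; i < n; i++)
        vm[i] = (x[i] != 0.0);

    /* SUBVV.D: all n element slots are stepped through,
     * but only enabled elements are written */
    for (size_t i = 0; i < n; i++) {
        if (vm[i]) {
            x[i] = x[i] - y[i];
            active++;
        }
    }
    return active;
}
```

The second loop still spends a slot on every element, enabled or not. When only `active` out of n elements do useful work, the time stays the same while the number of useful floating-point operations drops, which is one way to see why the GFLOPS rate decreases.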

Stride (see App. G)
• Consider matrix multiplication: A = B * D

    for (i = 0; i < 100; i=i+1)
        for (j = 0; j < 100; j=j+1) {
            A[i][j] = 0.0;
            for (k = 0; k < 100; k=k+1)
                A[i][j] = A[i][j] + B[i][k] * D[k][j];
        }

• Must vectorize multiplication of rows of B with columns of D
• Need non-unit stride
  – for either B or D, depending on row-major vs column-major element ordering in memory
(Figure: element (i, j) of A = row i of B * column j of D)

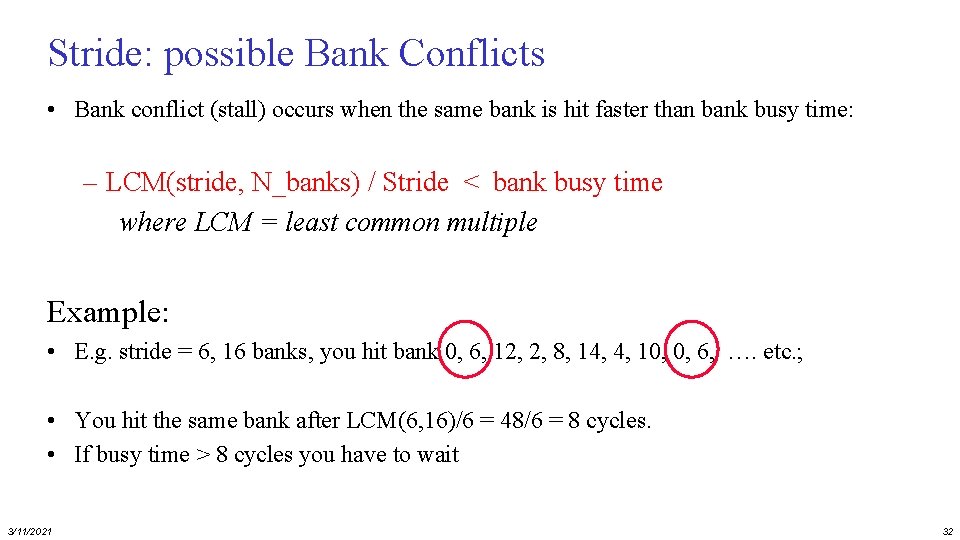

Stride: possible Bank Conflicts
• Bank conflict (stall) occurs when the same bank is hit faster than the bank busy time:
  – LCM(stride, N_banks) / stride < bank busy time, where LCM = least common multiple
• Example: stride = 6, 16 banks: you hit banks 0, 6, 12, 2, 8, 14, 4, 10, 0, 6, ... etc.
  – You hit the same bank after LCM(6, 16)/6 = 48/6 = 8 cycles
  – If busy time > 8 cycles you have to wait
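
The conflict condition can be checked with a few lines of C. The sketch uses the identity LCM(stride, N_banks) / stride = N_banks / GCD(stride, N_banks); the function names are hypothetical and one access per cycle is assumed.

```c
/* Greatest common divisor (Euclid). */
unsigned gcd_u(unsigned a, unsigned b)
{
    while (b != 0) {
        unsigned t = a % b;
        a = b;
        b = t;
    }
    return a;
}

/* Number of accesses (= cycles, at one access per cycle) before the
 * same bank is hit again: LCM(stride, n_banks) / stride. */
unsigned accesses_until_bank_reuse(unsigned stride, unsigned n_banks)
{
    return n_banks / gcd_u(stride, n_banks);
}

/* Non-zero if the strided access pattern stalls on a busy bank. */
int stride_causes_stall(unsigned stride, unsigned n_banks,
                        unsigned bank_busy_time)
{
    return accesses_until_bank_reuse(stride, n_banks) < bank_busy_time;
}
```

For stride 6 and 16 banks, `accesses_until_bank_reuse` returns 8, so a busy time of 9 cycles stalls while a busy time of 8 does not.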

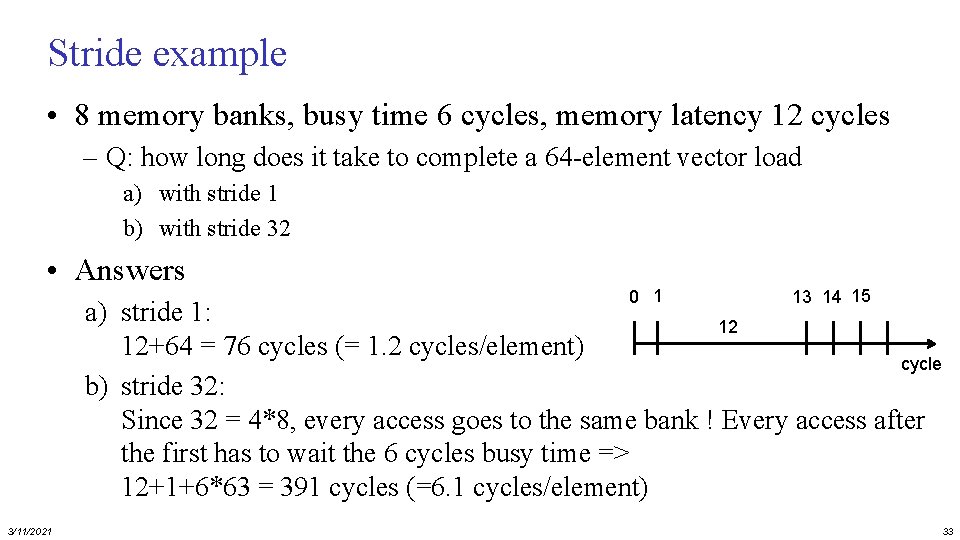

Stride example
• 8 memory banks, busy time 6 cycles, memory latency 12 cycles
  – Q: how long does it take to complete a 64-element vector load
    a) with stride 1
    b) with stride 32
• Answers
  a) stride 1: 12+64 = 76 cycles (= 1.2 cycles/element)
  b) stride 32: since 32 = 4*8, every access goes to the same bank! Every access after the first has to wait the 6 cycles busy time => 12+1+6*63 = 391 cycles (= 6.1 cycles/element)
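
Both answers follow from a simple timing model, sketched below in C. The model is an assumption made for this illustration: at most one access is issued per cycle, an access must wait until its bank is free, and the load completes `latency` cycles after the cycle in which the last access was issued. The name `vector_load_cycles` and the limit `MAX_BANKS` are hypothetical.

```c
#include <stddef.h>

#define MAX_BANKS 64  /* assumed upper bound for this sketch */

/* Cycles to complete a strided vector load under the model above.
 * Returns 0 for unsupported arguments. */
unsigned long vector_load_cycles(size_t n_elems, unsigned stride,
                                 unsigned n_banks, unsigned busy,
                                 unsigned latency)
{
    unsigned long bank_free[MAX_BANKS] = { 0 }; /* cycle each bank is free */
    unsigned long t = 0;                        /* earliest next issue cycle */
    unsigned long last_issue = 0;

    if (n_elems == 0 || n_banks == 0 || n_banks > MAX_BANKS)
        return 0;

    for (size_t i = 0; i < n_elems; i++) {
        unsigned b = (unsigned)((i * stride) % n_banks);
        unsigned long issue = (t > bank_free[b]) ? t : bank_free[b];

        bank_free[b] = issue + busy;  /* bank is occupied for 'busy' cycles */
        last_issue = issue;
        t = issue + 1;                /* at most one issue per cycle */
    }
    /* +1 for the issue cycle of the last access, then the memory latency */
    return last_issue + 1 + latency;
}
```

With 8 banks, busy time 6 and latency 12, the function returns 76 for stride 1 and 391 for stride 32, matching the two answers.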

![Scatter-Gather: Indirect Vector Access](http://slidetodoc.com/presentation_image_h/356a34d737b8294e2941887018e8284d/image-34.jpg)

Scatter-Gather: Indirect Vector Access

    for (i = 0; i < n; i=i+1)
        A[K[i]] = A[K[i]] + C[M[i]];    /* indirect memory accesses */

• Use an index vector to load e.g. only the non-zero elements of A into vector Va:

    LV       Vk, Rk           ; load K
    LVI      Va, (Ra+Vk)      ; load A[K[]]
    LV       Vm, Rm           ; load M
    LVI      Vc, (Rc+Vm)      ; load C[M[]]
    ADDVV.D  Va, Va, Vc       ; add them
    SVI      (Ra+Vk), Va      ; store A[K[]]
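
The gather, add and scatter phases can be spelled out in C. This is a sketch under stated assumptions: the function name `indexed_add` is made up, the local arrays `va` and `vc` stand in for vector registers, and the index vector K is assumed to contain no duplicates (with duplicates, the vector version and the sequential loop can give different results).

```c
#include <stddef.h>

#define MVL 64  /* maximum vector length, as in VMIPS */

/* A[K[i]] = A[K[i]] + C[M[i]] for i < n, done in vector-style phases.
 * Only the first MVL elements are processed (one vector register). */
void indexed_add(double *a, const double *c,
                 const size_t *k, const size_t *m, size_t n)
{
    double va[MVL], vc[MVL];

    if (n > MVL)
        n = MVL;

    for (size_t i = 0; i < n; i++)   /* LVI: gather A[K[]] */
        va[i] = a[k[i]];
    for (size_t i = 0; i < n; i++)   /* LVI: gather C[M[]] */
        vc[i] = c[m[i]];
    for (size_t i = 0; i < n; i++)   /* ADDVV.D */
        va[i] = va[i] + vc[i];
    for (size_t i = 0; i < n; i++)   /* SVI: scatter back to A[K[]] */
        a[k[i]] = va[i];
}
```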

SIMD architecture exploiting Sub-word Parallelism
• Divide a word into multiple parts (sub-words) and perform operations on these parts in parallel
• E.g. a VectorAdd of 16 parallel adds (figure)

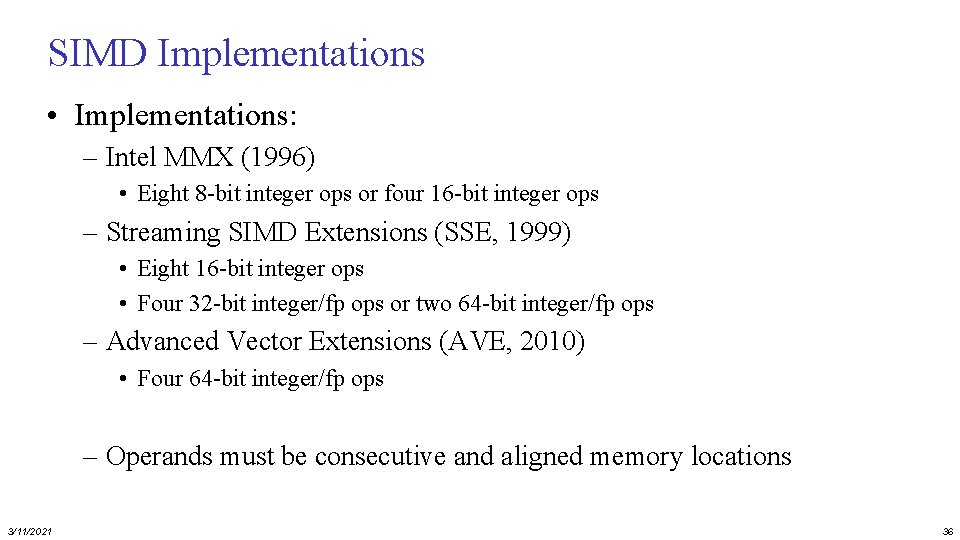

SIMD Implementations
• Implementations:
  – Intel MMX (1996)
    • Eight 8-bit integer ops or four 16-bit integer ops
  – Streaming SIMD Extensions (SSE, 1999)
    • Eight 16-bit integer ops
    • Four 32-bit integer/fp ops or two 64-bit integer/fp ops
  – Advanced Vector Extensions (AVX, 2010)
    • Four 64-bit integer/fp ops
  – Operands must be in consecutive and aligned memory locations

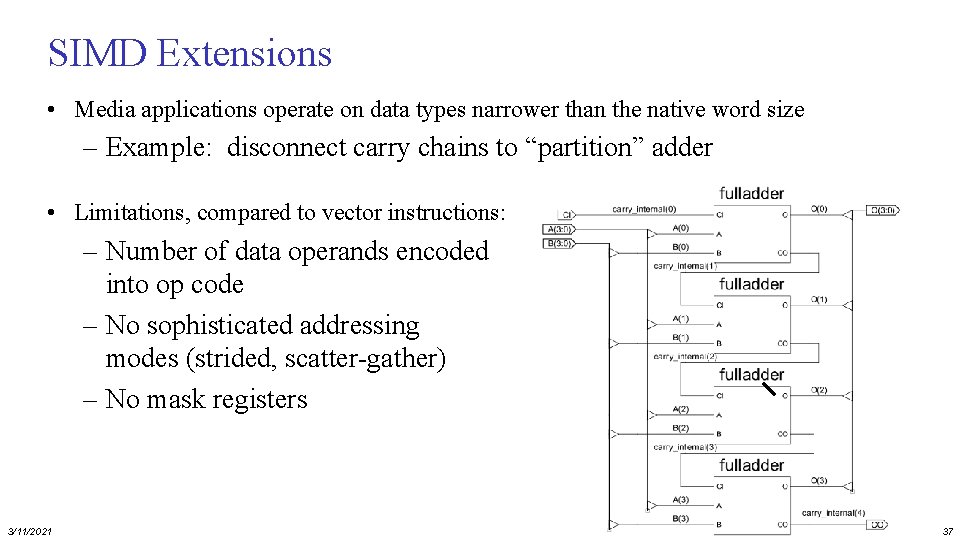

SIMD Extensions
• Media applications operate on data types narrower than the native word size
  – Example: disconnect carry chains to “partition” the adder
• Limitations, compared to vector instructions:
  – Number of data operands encoded into the op code
  – No sophisticated addressing modes (strided, scatter-gather)
  – No mask registers
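
The idea of disconnecting the carry chain can be imitated in software on an ordinary 32-bit word. The sketch below adds four 8-bit sub-words in parallel; the function name `add_4x8` is an assumption, and real SIMD hardware does this inside the adder rather than with masks.

```c
#include <stdint.h>

/* Adds four packed 8-bit lanes; each lane wraps around on overflow
 * and no carry crosses into the neighbouring lane. */
uint32_t add_4x8(uint32_t a, uint32_t b)
{
    /* Add the low 7 bits of every lane: a carry can reach bit 7 of its
     * own lane but cannot leave the lane. */
    uint32_t low = (a & 0x7F7F7F7Fu) + (b & 0x7F7F7F7Fu);

    /* The top bit of every lane is a carry-less sum (XOR). */
    uint32_t high = (a ^ b) & 0x80808080u;

    return low ^ high;
}
```

For example, `add_4x8(0x00FF00FF, 0x00010001)` gives `0x00000000`, whereas a normal 32-bit add would give `0x01000100` because the carries spill into the next byte.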

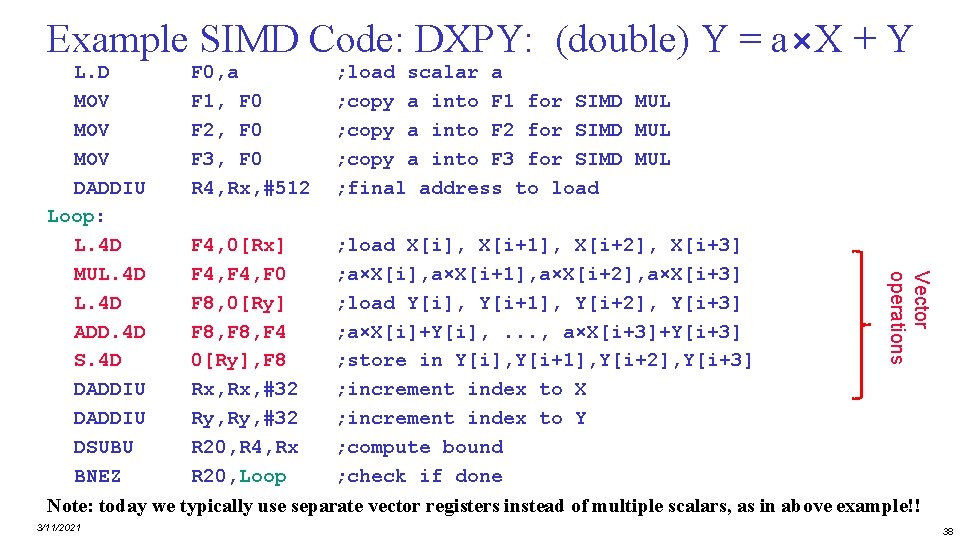

Example SIMD Code: DAXPY: (double) Y = a×X + Y

          L.D     F0, a           ; load scalar a
          MOV     F1, F0          ; copy a into F1 for SIMD MUL
          MOV     F2, F0          ; copy a into F2 for SIMD MUL
          MOV     F3, F0          ; copy a into F3 for SIMD MUL
          DADDIU  R4, Rx, #512    ; final address to load
    Loop: L.4D    F4, 0[Rx]       ; load X[i], X[i+1], X[i+2], X[i+3]
          MUL.4D  F4, F4, F0      ; a×X[i], a×X[i+1], a×X[i+2], a×X[i+3]
          L.4D    F8, 0[Ry]       ; load Y[i], Y[i+1], Y[i+2], Y[i+3]
          ADD.4D  F8, F8, F4      ; a×X[i]+Y[i], ..., a×X[i+3]+Y[i+3]
          S.4D    0[Ry], F8       ; store in Y[i], Y[i+1], Y[i+2], Y[i+3]
          DADDIU  Rx, Rx, #32     ; increment index to X
          DADDIU  Ry, Ry, #32     ; increment index to Y
          DSUBU   R20, R4, Rx     ; compute bound
          BNEZ    R20, Loop       ; check if done

• The .4D instructions are the vector operations
• Note: today we typically use separate vector registers instead of multiple scalars, as in the above example!

Performance model
• What is the peak performance of an architecture?
  – compute limited?
  – or, memory bandwidth limited?

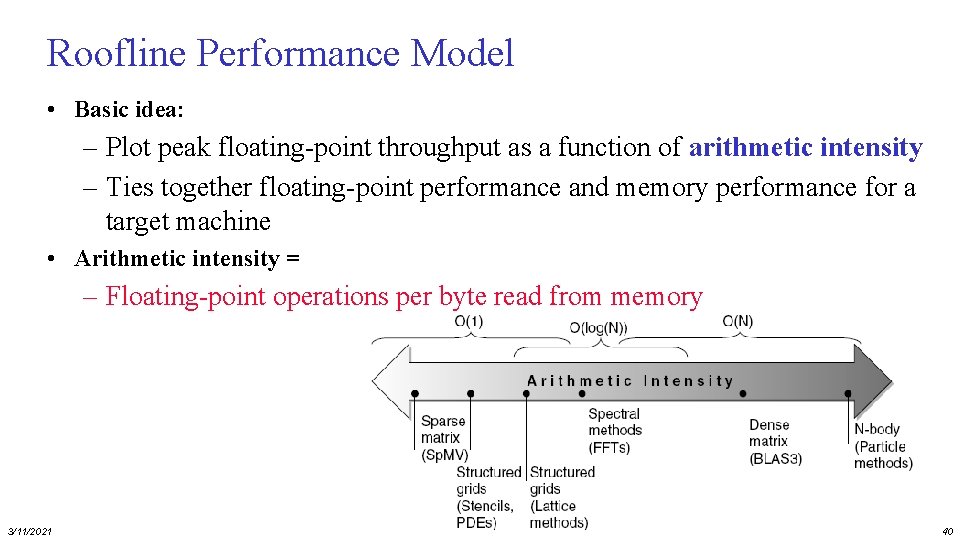

Roofline Performance Model
• Basic idea:
  – Plot peak floating-point throughput as a function of arithmetic intensity
  – Ties together floating-point performance and memory performance for a target machine
• Arithmetic intensity = floating-point operations per byte read from memory

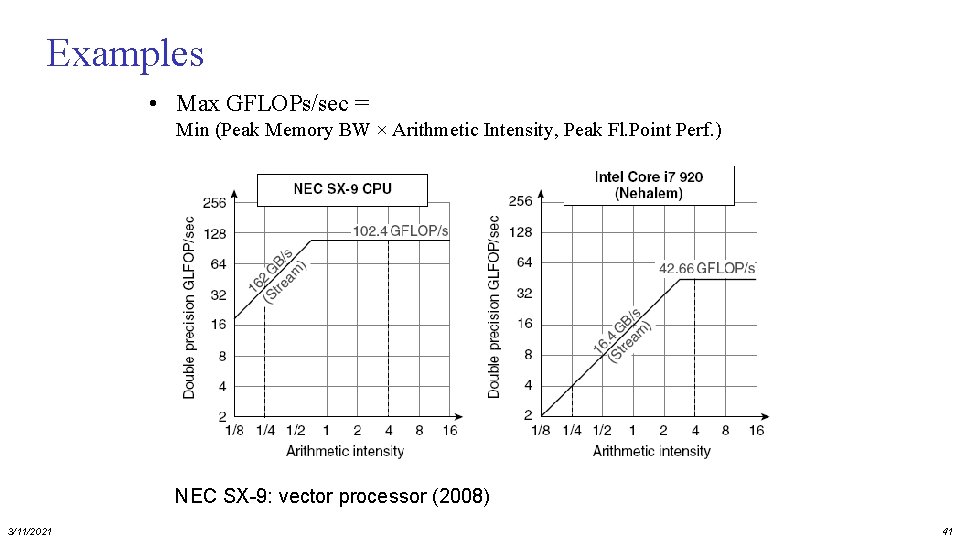

Examples
• Max GFLOPs/sec = Min(Peak Memory BW × Arithmetic Intensity, Peak Floating-Point Performance)
(Figure: NEC SX-9: vector processor (2008))
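
The roofline formula translates directly into code. In the sketch below the function names are hypothetical, and the numbers in the usage note are made up for illustration; they are not the specifications of the NEC SX-9 or any other machine.

```c
/* Attainable performance in GFLOP/s according to the roofline model:
 * min(peak memory bandwidth * arithmetic intensity, peak FP performance).
 * Bandwidth in GB/s, intensity in FLOP/byte. */
double attainable_gflops(double peak_bw_gb_per_s,
                         double arithmetic_intensity,
                         double peak_gflops)
{
    double memory_bound = peak_bw_gb_per_s * arithmetic_intensity;
    return (memory_bound < peak_gflops) ? memory_bound : peak_gflops;
}

/* Arithmetic intensity at which the two limits meet (the ridge point). */
double ridge_point(double peak_bw_gb_per_s, double peak_gflops)
{
    return peak_gflops / peak_bw_gb_per_s;
}
```

For an imaginary machine with 10 GB/s of bandwidth and a 40 GFLOP/s peak, a kernel with intensity 2 FLOP/byte is memory bound at 20 GFLOP/s, a kernel with intensity 8 reaches the 40 GFLOP/s compute roof, and the ridge point lies at 4 FLOP/byte.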

Concluding remarks
• Amount of ILP in general programs is limited
• However, there is often plenty of DLP (data-level parallelism)
  – Signal processing, Video, Imaging, Deep Learning, etc.
• Vector, SIMD and GPU architectures support DLP
  – used by almost any architecture (e.g. as sub-word parallelism)
• GPUs become mainstream in many platforms, from mobile to supercomputers
  – new SIMT programming model: supported by CUDA and OpenCL
• However:
  – CPU-GPU transfer bottleneck (traffic over PCI bus)
  – Solution: unified physical memories for CPU and GPU
• Q: Clearly explain the differences between Vector, SIMD, and GPU
• Q: Interpret the different Rooflines
