Dialogue Management Speech Recognition Maryam Habibi Sharif university

Dialogue Management
Speech Recognition
Maryam Habibi
Sharif University, Department of AI
Spring 87
12/12/2021

Dialogue Management
- Dialogue management is a mapping function.
- It is concerned with decision-making with delayed rewards.

Core of Dialogue Management
- A data structure that represents the system’s view of the world in the form of a machine state $s_m$, made up of:
  - the user’s input act $a_u$
  - an estimate of the intended user goal $s_u$
  - some record of the dialog history $s_d$
- Based on each new state estimate, a dialog policy is used to select an appropriate response in the form of a system speech act $a_m$.
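
As a rough illustration of the machine state and policy described above, the following Python sketch stores the three components in a small record and maps it to a system act. The field names, the act strings and the hand-written rules are all hypothetical; the slides do not prescribe a concrete representation or policy.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MachineState:
    """System's view of the world: user act, user goal estimate, history."""
    user_act: str                                      # last decoded user input act
    user_goal: str                                     # current estimate of the user's goal
    history: List[str] = field(default_factory=list)   # record of the dialog so far


def policy(state: MachineState) -> str:
    """Toy hand-written dialog policy: state estimate -> system speech act."""
    if state.user_goal == "unknown":
        return "ask_goal"
    if "confirmed" not in state.history:
        return f"confirm({state.user_goal})"
    return f"execute({state.user_goal})"


# Walk the toy policy through three successive state estimates.
state = MachineState(user_act="hello()", user_goal="unknown")
first_act = policy(state)

state.user_goal = "book_flight"
second_act = policy(state)

state.history.append("confirmed")
third_act = policy(state)
```

A hand-written rule table like this is the deterministic baseline; the statistical approaches on the following slides replace it with a policy optimised against a reward function.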

Problems
- The user’s state $s_u$ is unknown.
- The decoded inputs $a_u$ are prone to errors.
- Dialog optimisation requires forward planning, and this is extremely difficult in a deterministic framework.

Solution: statistical approach (MDP)
- MDPs:
  - provide a good statistical framework
  - allow forward planning
  - allow dialog policy optimisation through reinforcement learning

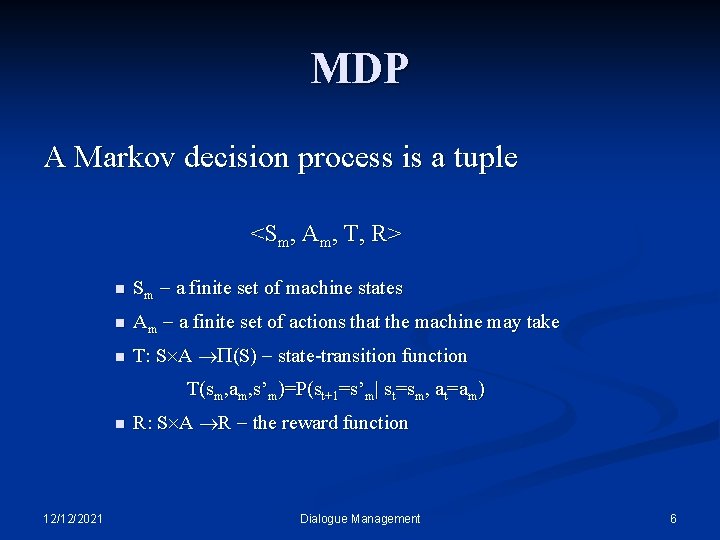

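MDP
A Markov decision process is a tuple $\langle S_m, A_m, T, R \rangle$:
- $S_m$: a finite set of machine states
- $A_m$: a finite set of actions that the machine may take
- $T: S_m \times A_m \to \Pi(S_m)$: the state-transition function, where $\Pi(S_m)$ is the set of probability distributions over $S_m$ and $T(s_m, a_m, s'_m) = P(s_{t+1} = s'_m \mid s_t = s_m, a_t = a_m)$
- $R: S_m \times A_m \to \mathbb{R}$: the reward function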
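
To make the tuple concrete, here is a minimal value-iteration sketch in Python for a toy three-state dialog MDP. The state names, action names, probabilities, rewards and the discount factor `gamma` are invented for illustration and are not taken from the slides; value iteration is used only as one standard way to do forward planning over such a model.

```python
def q_value(s, a, V, states, T, R, gamma):
    """Immediate reward plus discounted expected value of the next state."""
    return R[s][a] + gamma * sum(T[s][a][s2] * V[s2] for s2 in states)


def value_iteration(states, actions, T, R, gamma=0.95, tol=1e-6):
    """Return (V, policy) for a finite MDP with T[s][a][s2] and R[s][a]."""
    V = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            best = max(q_value(s, a, V, states, T, R, gamma) for a in actions)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break
    policy = {
        s: max(actions, key=lambda a: q_value(s, a, V, states, T, R, gamma))
        for s in states
    }
    return V, policy


# Hypothetical toy problem: ask until sure of the goal, then submit.
states = ["unsure", "sure", "done"]
actions = ["ask", "submit"]
T = {
    "unsure": {"ask": {"unsure": 0.2, "sure": 0.8, "done": 0.0},
               "submit": {"unsure": 0.0, "sure": 0.0, "done": 1.0}},
    "sure": {"ask": {"unsure": 0.0, "sure": 1.0, "done": 0.0},
             "submit": {"unsure": 0.0, "sure": 0.0, "done": 1.0}},
    "done": {"ask": {"unsure": 0.0, "sure": 0.0, "done": 1.0},
             "submit": {"unsure": 0.0, "sure": 0.0, "done": 1.0}},
}
R = {
    "unsure": {"ask": -1.0, "submit": -10.0},  # submitting too early is penalised
    "sure": {"ask": -1.0, "submit": 10.0},     # reward arrives only at the end
    "done": {"ask": 0.0, "submit": 0.0},
}

V, policy = value_iteration(states, actions, T, R)
```

The reward for a correct submission is delayed, yet the resulting policy still prefers to pay the small cost of asking while unsure, which is the forward-planning behaviour the MDP framework is meant to provide.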

Complete Observability
MDPs assume that the entire state is observable. Such a model is therefore called a CO-MDP (completely observable MDP).

Partial Observability
Instead of directly measuring the current state, the agent makes an observation to get a hint about what state it is in.

Why use a POMDP?
- POMDPs provide a rich framework for sequential decision-making, which can model:
  - varying rewards across actions and goals
  - actions with random effects
  - uncertainty in the state of the world

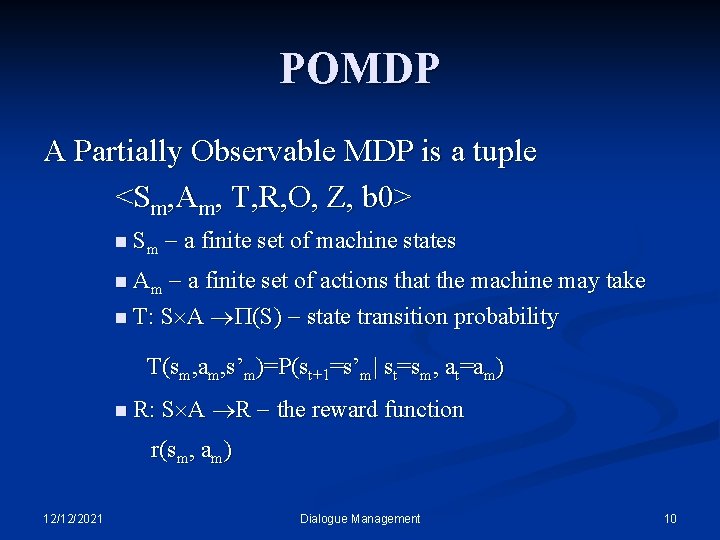

POMDP
A partially observable MDP is a tuple $\langle S_m, A_m, T, R, O, Z, b_0 \rangle$:
- $S_m$: a finite set of machine states
- $A_m$: a finite set of actions that the machine may take
- $T: S_m \times A_m \to \Pi(S_m)$: the state-transition probability, $T(s_m, a_m, s'_m) = P(s_{t+1} = s'_m \mid s_t = s_m, a_t = a_m)$
- $R: S_m \times A_m \to \mathbb{R}$: the reward function $r(s_m, a_m)$

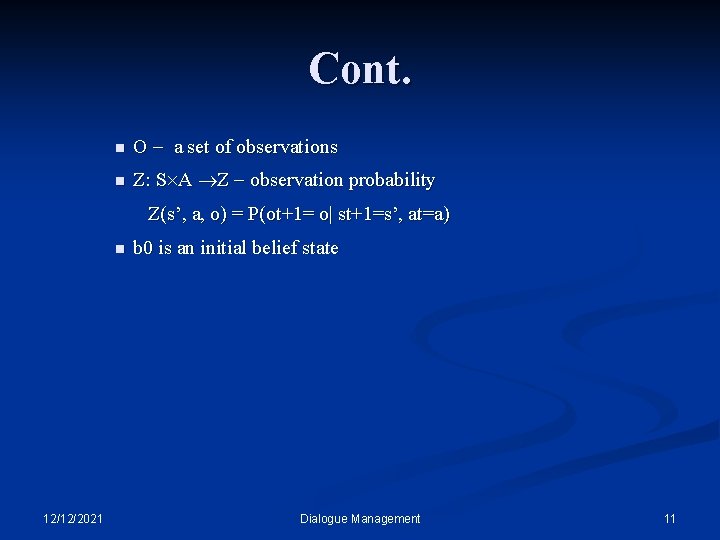

Cont.
- $O$: a set of observations
- $Z: S_m \times A_m \to \Pi(O)$: the observation probability, $Z(s', a, o) = P(o_{t+1} = o \mid s_{t+1} = s', a_t = a)$
- $b_0$: an initial belief state
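
Given these definitions, the belief state is typically maintained with the standard Bayes-filter update $b'(s') \propto Z(s', a, o) \sum_{s} T(s, a, s')\, b(s)$. The Python sketch below implements that update for dictionaries of probabilities; the two user goals, the single `ask` action and the confusion probabilities are made-up values for illustration, not figures from the slides.

```python
def belief_update(b, a, o, states, T, Z):
    """Return the new belief after taking action a and observing o.

    b[s]        : current belief over states
    T[s][a][s2] : P(next state s2 | state s, action a)
    Z[s2][a][o] : P(observation o | next state s2, action a)
    """
    unnormalised = {
        s2: Z[s2][a][o] * sum(T[s][a][s2] * b[s] for s in states)
        for s2 in states
    }
    norm = sum(unnormalised.values())
    if norm == 0.0:
        raise ValueError("observation has zero probability under this belief")
    return {s2: p / norm for s2, p in unnormalised.items()}


# Hypothetical example: the user goal does not change when the system asks,
# but the speech recogniser sometimes confuses the two goals.
states = ["hotel", "flight"]
T = {
    "hotel": {"ask": {"hotel": 1.0, "flight": 0.0}},
    "flight": {"ask": {"hotel": 0.0, "flight": 1.0}},
}
Z = {
    "hotel": {"ask": {"heard_hotel": 0.8, "heard_flight": 0.2}},
    "flight": {"ask": {"heard_hotel": 0.3, "heard_flight": 0.7}},
}

b0 = {"hotel": 0.5, "flight": 0.5}                              # initial belief
b1 = belief_update(b0, "ask", "heard_hotel", states, T, Z)
b2 = belief_update(b1, "ask", "heard_hotel", states, T, Z)
```

Each consistent observation shifts more probability mass toward `hotel` without ever committing to it outright, which is how the belief absorbs recognition errors.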

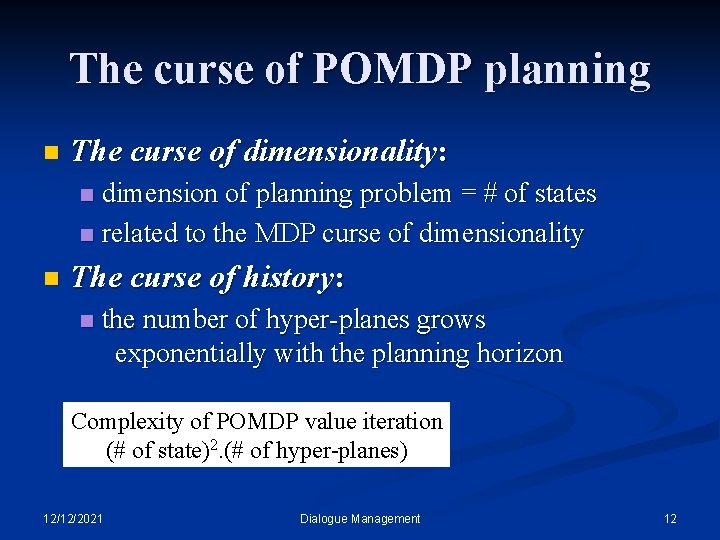

The curse of POMDP planning
- The curse of dimensionality: the dimension of the planning problem equals the number of states.
  - related to the MDP curse of dimensionality
- The curse of history:
  - the number of hyper-planes grows exponentially with the planning horizon
- Complexity of POMDP value iteration: $(\#\text{states})^2 \cdot (\#\text{hyper-planes})$
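
For background on where the hyper-planes come from (a standard result from the POMDP literature, stated here as context rather than taken from the slides): the finite-horizon value function is piecewise linear and convex in the belief, so it can be written as a maximum over a finite set $\Gamma_t$ of hyper-planes, usually called $\alpha$-vectors:

$$
V_t(b) = \max_{\alpha \in \Gamma_t} \sum_{s \in S_m} \alpha(s)\, b(s)
$$

An exact backup can create one new vector for every action and every assignment of an existing vector to each observation, so before pruning

$$
|\Gamma_{t+1}| \le |A_m| \cdot |\Gamma_t|^{|O|}
$$

Repeating this bound over the planning horizon is what produces the exponential growth referred to as the curse of history.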

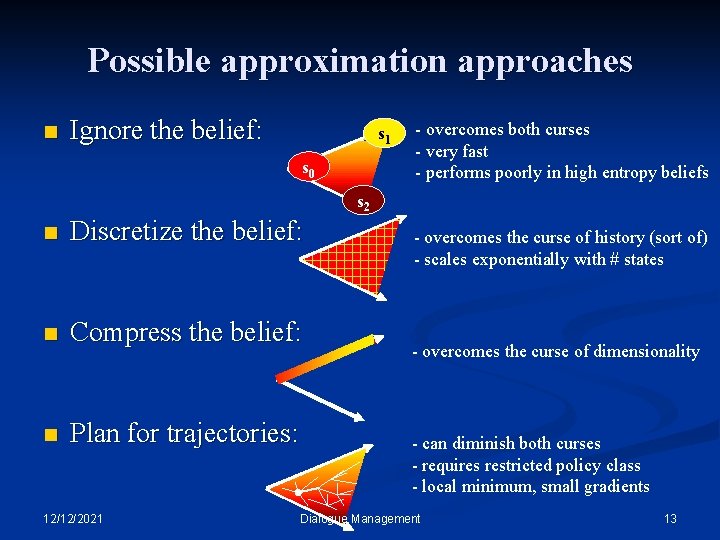

Possible approximation approaches
- Ignore the belief:
  - overcomes both curses
  - very fast
  - performs poorly in high entropy beliefs
- Discretize the belief:
  - overcomes the curse of history (sort of)
  - scales exponentially with # states
- Compress the belief:
  - overcomes the curse of dimensionality
- Plan for trajectories:
  - can diminish both curses
  - requires restricted policy class
  - local minimum, small gradients
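
One common way to ignore the belief is the most-likely-state heuristic: take the single most probable state and act as a fully observable MDP policy would. The slides do not say which variant is meant, so the Python sketch below is only an assumed reading; the state names, the `mdp_policy` table and the belief values are hypothetical.

```python
import math


def entropy_bits(belief):
    """Shannon entropy of a belief, in bits."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0.0)


def most_likely_state_action(belief, mdp_policy):
    """Discard the belief: act on its most probable state only."""
    likely_state = max(belief, key=belief.get)
    return mdp_policy[likely_state]


# Hypothetical policy of the underlying fully observable MDP.
mdp_policy = {"wants_hotel": "book_hotel", "wants_flight": "book_flight"}

confident = {"wants_hotel": 0.95, "wants_flight": 0.05}
uncertain = {"wants_hotel": 0.51, "wants_flight": 0.49}

action_confident = most_likely_state_action(confident, mdp_policy)
action_uncertain = most_likely_state_action(uncertain, mdp_policy)
```

Both beliefs lead to the same booking action, although the second is close to a coin flip. The heuristic has no way to prefer a clarifying question, which is why it performs poorly when the belief has high entropy.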

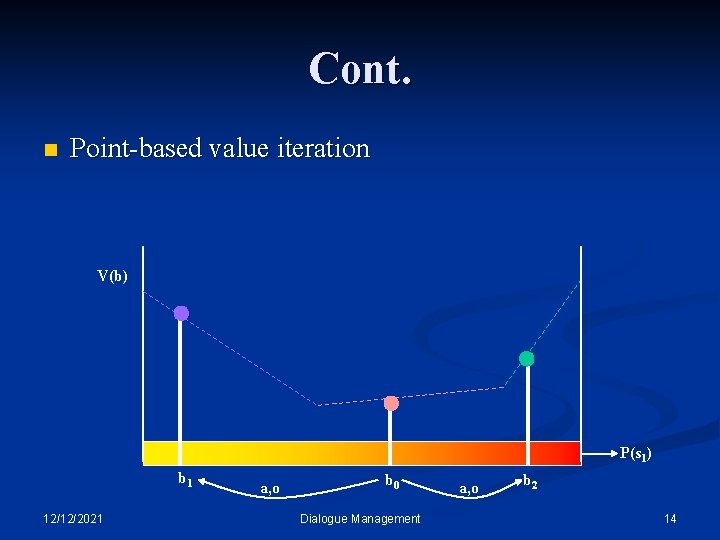

Cont.
- Point-based value iteration

[Figure: value function V(b) plotted over the belief P(s1), with belief points b0, b1 and b2 linked by (a, o) transitions]
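
The core operation of point-based value iteration is a backup performed at one belief point rather than over the whole belief space. The sketch below shows that backup in plain Python under assumed array layouts (`T[a][s][s2]`, `Z[a][s2][o]`, `R[a][s]`); the two-state model and its numbers are invented for illustration, and a full planner would also need belief-point selection and repeated sweeps, which are omitted here.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(u, v))


def point_based_backup(b, Gamma, T, Z, R, gamma=0.95):
    """Return the alpha-vector that is optimal at belief b after one backup.

    Gamma is the current list of alpha-vectors (one value per state).
    """
    n_states = len(b)
    best_alpha, best_value = None, float("-inf")
    for a in range(len(T)):
        alpha_a = list(R[a])
        for o in range(len(Z[a][0])):
            # Project every alpha-vector back through action a and observation o.
            projected = [
                [sum(T[a][s][s2] * Z[a][s2][o] * alpha[s2] for s2 in range(n_states))
                 for s in range(n_states)]
                for alpha in Gamma
            ]
            # Keep only the projection that is best at this belief point.
            best_proj = max(projected, key=lambda g: dot(g, b))
            alpha_a = [x + gamma * y for x, y in zip(alpha_a, best_proj)]
        value = dot(alpha_a, b)
        if value > best_value:
            best_alpha, best_value = alpha_a, value
    return best_alpha


# Hypothetical model: 2 states, 2 actions, 2 observations.
T = [[[1.0, 0.0], [0.0, 1.0]],   # action 0 leaves the state unchanged
     [[1.0, 0.0], [0.0, 1.0]]]   # action 1 leaves the state unchanged
Z = [[[0.8, 0.2], [0.3, 0.7]],
     [[0.8, 0.2], [0.3, 0.7]]]
R = [[1.0, 0.0],                 # action 0 pays off in state 0
     [0.0, 2.0]]                 # action 1 pays off in state 1

Gamma0 = [[0.0, 0.0]]            # start from a zero value function
belief_points = [[0.8, 0.2], [0.2, 0.8]]

# One sweep: a single new alpha-vector per belief point.
Gamma1 = [point_based_backup(b, Gamma0, T, Z, R) for b in belief_points]
# A second sweep keeps the set at the same size instead of letting it explode.
Gamma2 = [point_based_backup(b, Gamma1, T, Z, R) for b in belief_points]
```

Because each sweep produces at most one vector per belief point, the number of hyper-planes is bounded by the number of points, which is how the method sidesteps the exponential growth of exact value iteration.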

Cont.
- H-POMDP
  - Action set partitioning
  - Break the problem into many “related” POMDPs.
  - Each smaller POMDP has only a subset of $A$.
- M-POMDP
  - Multi-agent POMDP

Challenges
- The complexity of a POMDP grows with the number of user goals, and optimization quickly becomes intractable.
- The choice of appropriate reward functions and their relationship to established metrics of user performance.
- How models of user behaviour should be created and evaluated.
- Automatic generation of good action partitioning.

