Course: COMP 6227 - Artificial Intelligence
Effective Period: September 2016

Learning from Examples II
Session 19

Learning Outcomes
At the end of this session, students will be able to:
» LO 5: Apply various techniques to an agent when acting under certainty
» LO 6: Apply how to process natural language and other perceptual signs in order that an agent can interact intelligently with the world

Outline
1. Theory of Learning
2. Regression and Classification with Linear Models
3. Artificial Neural Networks
4. Practical Machine Learning
5. Summary

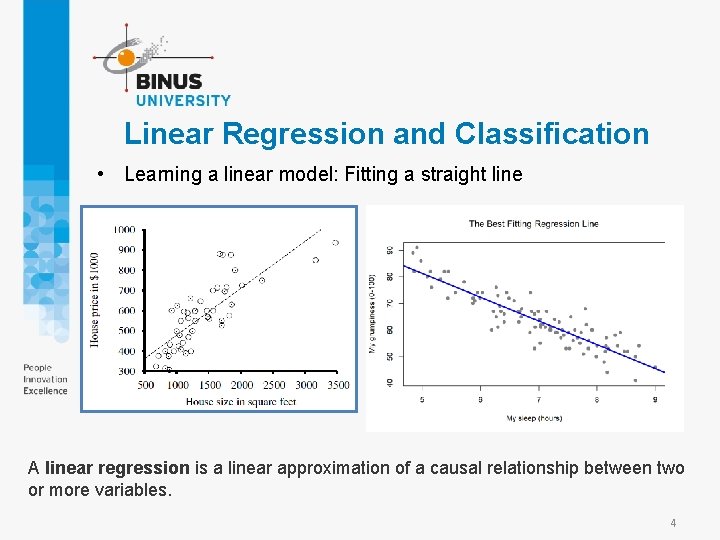

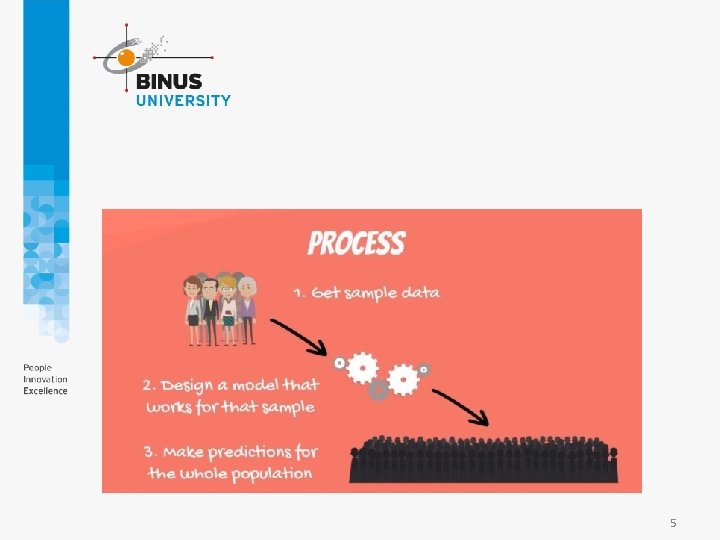

Linear Regression and Classification
• Learning a linear model: fitting a straight line
A linear regression is a linear approximation of a causal relationship between two or more variables.


Example: $y = 2x$ (the value of y is 2 times the value of x)

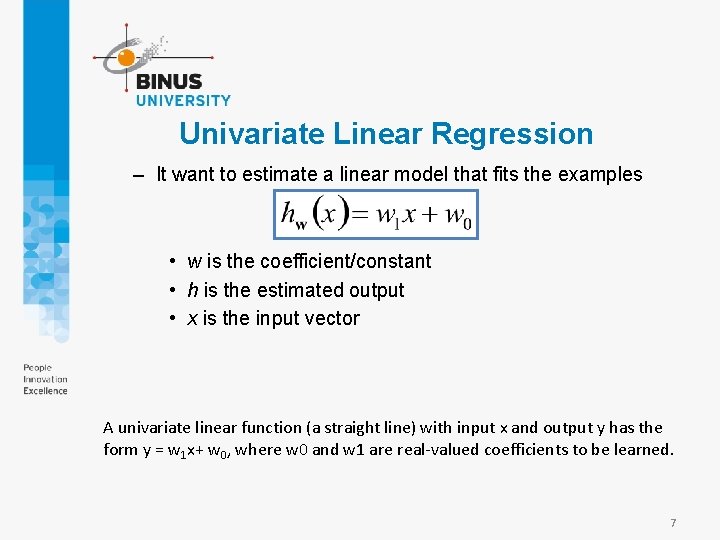

Univariate Linear Regression
– We want to estimate a linear model that fits the examples
• w holds the coefficients (weights)
• h is the estimated output
• x is the input
A univariate linear function (a straight line) with input x and output y has the form $y = w_1 x + w_0$, where $w_0$ and $w_1$ are real-valued coefficients to be learned.

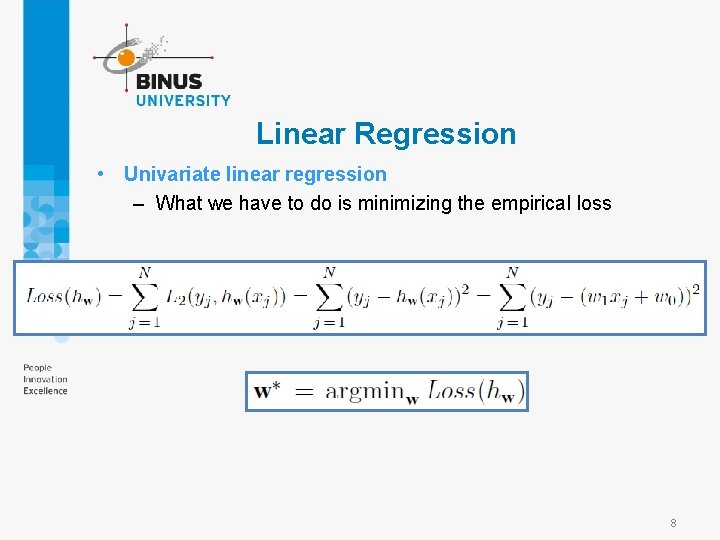

Linear Regression
• Univariate linear regression
  – The task is to find the weights that minimize the empirical loss

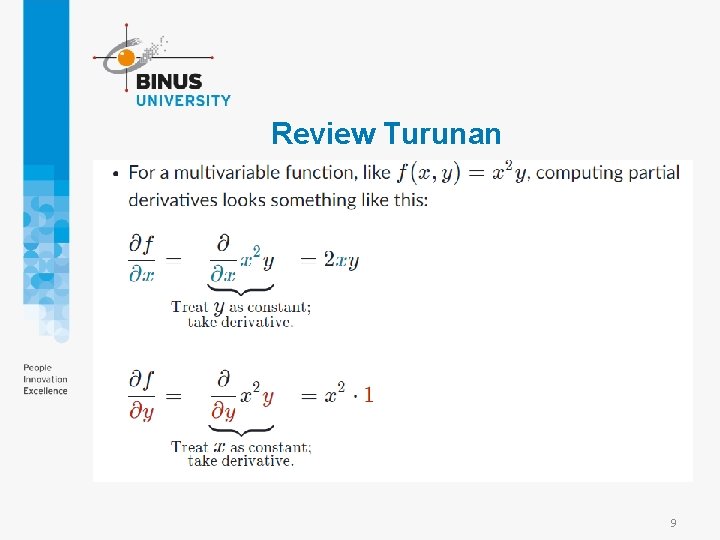
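A minimal sketch of this minimization, assuming the squared-error (L2) loss $Loss(h_w) = \sum_j (y_j - (w_1 x_j + w_0))^2$. The data follow the y = 2x relationship from the earlier example and are hypothetical; the helper names `fit_line` and `empirical_loss` are illustrative and do not come from the slides. Setting both partial derivatives of the loss to zero gives the weights in closed form:

```python
def fit_line(xs, ys):
    """Closed-form least-squares fit of y = w1 * x + w0 (squared-error loss)."""
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    w1 = (n * sum_xy - sum_x * sum_y) / (n * sum_xx - sum_x ** 2)
    w0 = (sum_y - w1 * sum_x) / n
    return w0, w1


def empirical_loss(w0, w1, xs, ys):
    """Sum of squared differences between targets and the line's predictions."""
    return sum((y - (w1 * x + w0)) ** 2 for x, y in zip(xs, ys))


# Hypothetical data that follow y = 2x exactly
xs = [1, 2, 3, 4, 5]
ys = [2 * x for x in xs]

w0, w1 = fit_line(xs, ys)
print(w0, w1, empirical_loss(w0, w1, xs, ys))
```

Because the data lie exactly on a line, the fitted slope is 2, the intercept is 0, and the loss is 0.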

Review: Derivatives

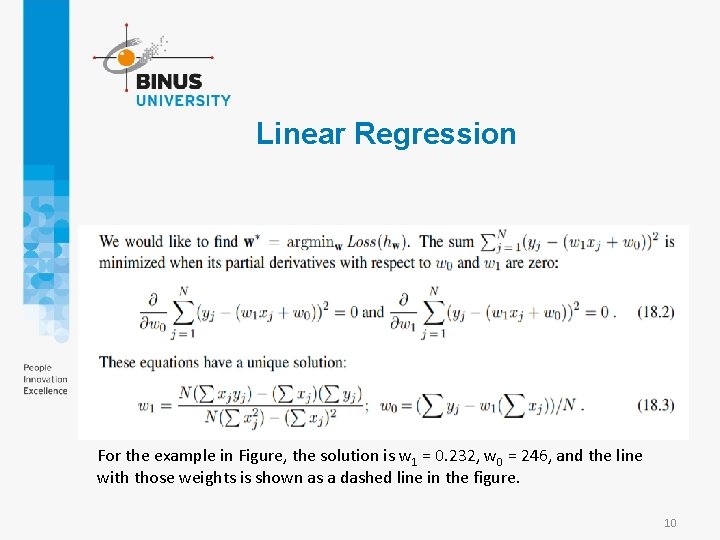

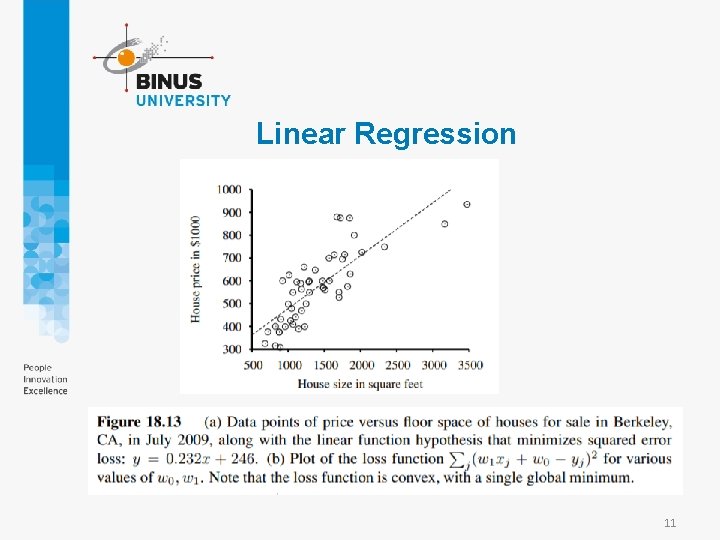

Linear Regression
For the example in the figure, the solution is $w_1 = 0.232$, $w_0 = 246$, and the line with those weights is shown as a dashed line in the figure.

Linear Regression

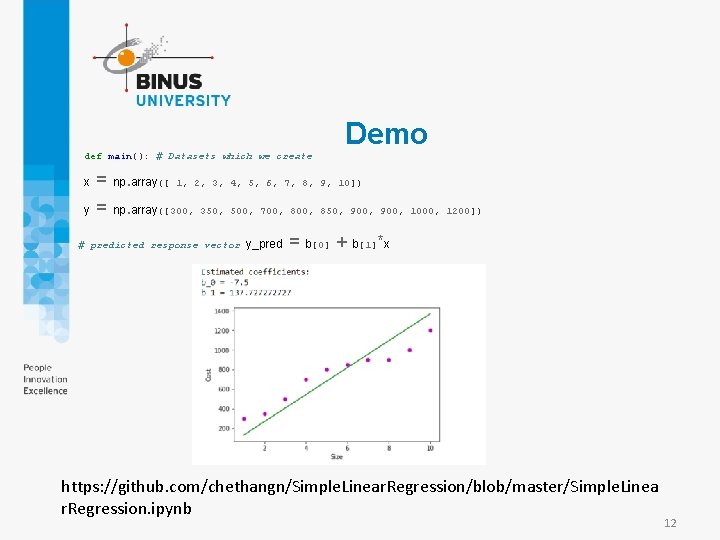

Demo

    import numpy as np

    def main():
        # Datasets which we create
        x = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 10])
        y = np.array([300, 350, 500, 700, 850, 900, 1000, 1200])
        …
        # predicted response vector
        y_pred = b[0] + b[1]*x

Source: https://github.com/chethangn/SimpleLinearRegression/blob/master/SimpleLinearRegression.ipynb

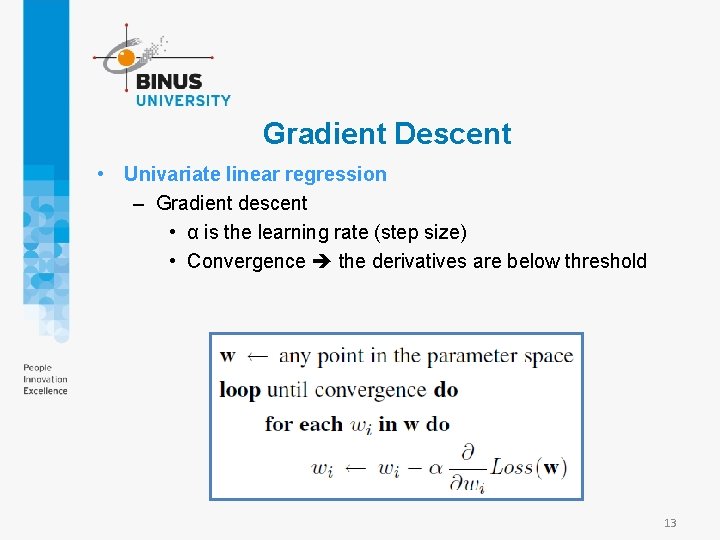

Gradient Descent
• Univariate linear regression
  – Gradient descent
    • α is the learning rate (step size)
    • Convergence: stop when the derivatives fall below a threshold

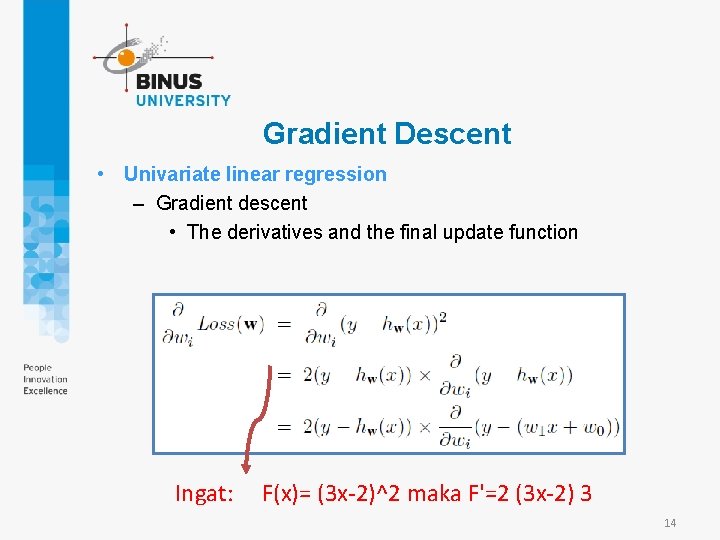

Gradient Descent
• Univariate linear regression
  – Gradient descent
    • The derivatives and the final update function
Recall the chain rule: if $F(x) = (3x - 2)^2$, then $F'(x) = 2(3x - 2) \cdot 3$.

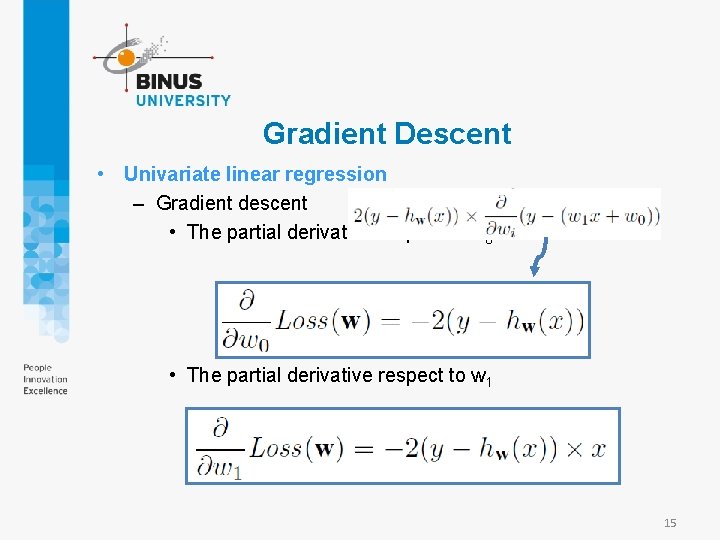

Gradient Descent
• Univariate linear regression
  – Gradient descent
    • The partial derivative with respect to $w_0$
    • The partial derivative with respect to $w_1$

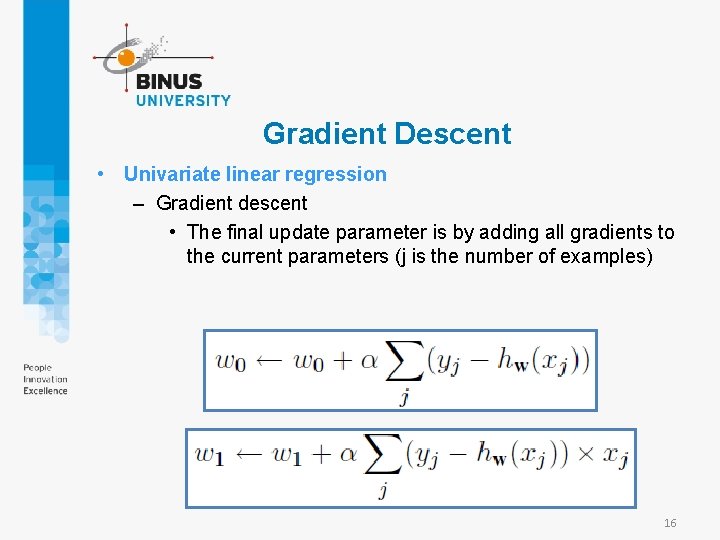

Gradient Descent
• Univariate linear regression
  – Gradient descent
    • The final update adds the gradient contributions of all examples to the current parameters (j indexes the training examples)

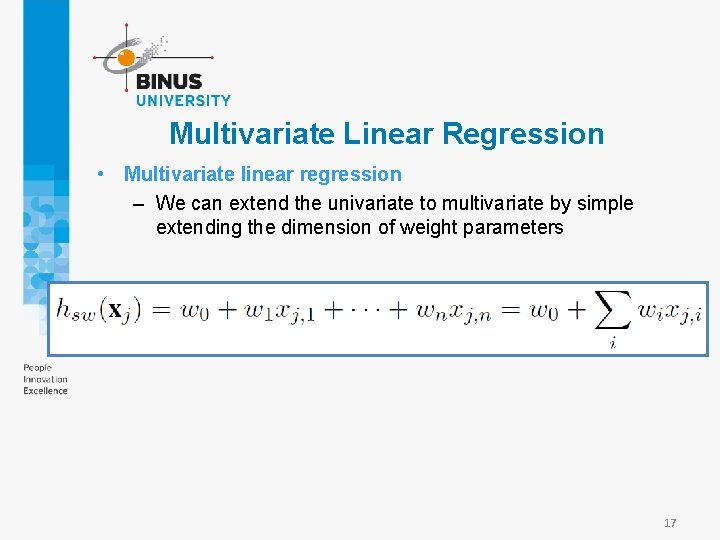
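The update can be sketched as a short batch gradient descent loop. This assumes the squared-error loss, so each step applies $w_0 \leftarrow w_0 + \alpha \sum_j (y_j - h_w(x_j))$ and $w_1 \leftarrow w_1 + \alpha \sum_j (y_j - h_w(x_j)) x_j$ (the constant factor from the derivative is folded into α). The data, the value of α, the threshold, and the function name `gradient_descent` are hypothetical choices for illustration.

```python
def gradient_descent(xs, ys, alpha=0.01, threshold=1e-9, max_steps=100_000):
    """Batch gradient descent for h(x) = w1 * x + w0 under squared-error loss."""
    w0, w1 = 0.0, 0.0
    for _ in range(max_steps):
        # Prediction error of the current line on every example
        errors = [y - (w1 * x + w0) for x, y in zip(xs, ys)]
        g0 = sum(errors)                              # summed term for w0
        g1 = sum(e * x for e, x in zip(errors, xs))   # summed term for w1
        # Convergence test: both derivatives are below the threshold
        if abs(g0) < threshold and abs(g1) < threshold:
            break
        w0 += alpha * g0
        w1 += alpha * g1
    return w0, w1


# Hypothetical data that follow y = 2x
xs = [1, 2, 3, 4, 5]
ys = [2 * x for x in xs]

w0, w1 = gradient_descent(xs, ys)
print(w0, w1)
```

If α is too large the updates overshoot and diverge; if it is too small convergence is slow, so α is usually tuned per data set.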

Multivariate Linear Regression
• Multivariate linear regression
  – We can extend the univariate case to the multivariate case by simply extending the dimension of the weight vector

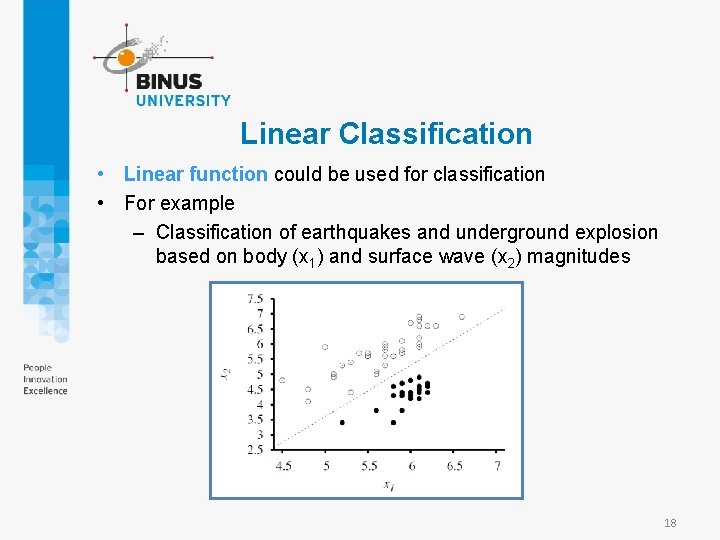
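A minimal sketch of the multivariate extension, assuming the common trick of prepending a dummy input $x_0 = 1$ so that the hypothesis becomes a dot product $h_w(x) = w \cdot x$. The data (generated from y = 1 + 2·x1 + 3·x2), the learning rate, and the function names are hypothetical.

```python
def predict(w, x):
    """Dot product of the weight vector and an input that includes x0 = 1."""
    return sum(wi * xi for wi, xi in zip(w, x))


def fit_multivariate(X, ys, alpha=0.01, steps=20_000):
    """Batch gradient descent; the same update rule is applied to every weight."""
    rows = [[1.0] + list(x) for x in X]   # prepend the dummy input x0 = 1
    w = [0.0] * len(rows[0])
    for _ in range(steps):
        errors = [y - predict(w, r) for r, y in zip(rows, ys)]
        for i in range(len(w)):
            w[i] += alpha * sum(e * r[i] for e, r in zip(errors, rows))
    return w


# Hypothetical data generated from y = 1 + 2*x1 + 3*x2
X = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1)]
ys = [1 + 2 * a + 3 * b for a, b in X]

w = fit_multivariate(X, ys)
print(w)
print(predict(w, [1.0, 3, 2]))   # prediction for x1 = 3, x2 = 2
```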

Linear Classification
• Linear functions can also be used for classification
• For example
  – Classification of earthquakes and underground explosions based on body wave ($x_1$) and surface wave ($x_2$) magnitudes

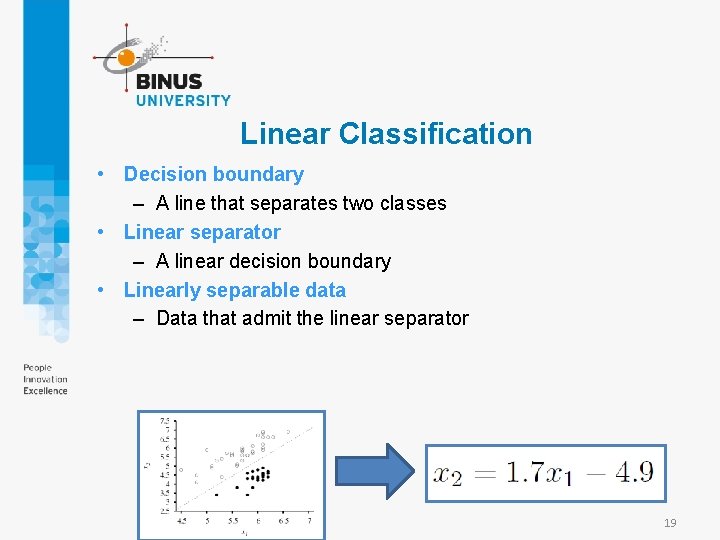

Linear Classification
• Decision boundary
  – A line that separates two classes
• Linear separator
  – A linear decision boundary
• Linearly separable data
  – Data that admit a linear separator

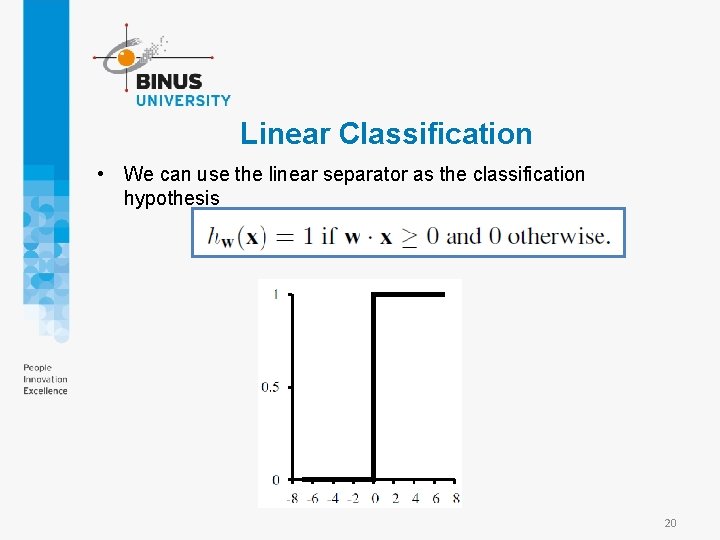

Linear Classification
• We can use the linear separator as the classification hypothesis

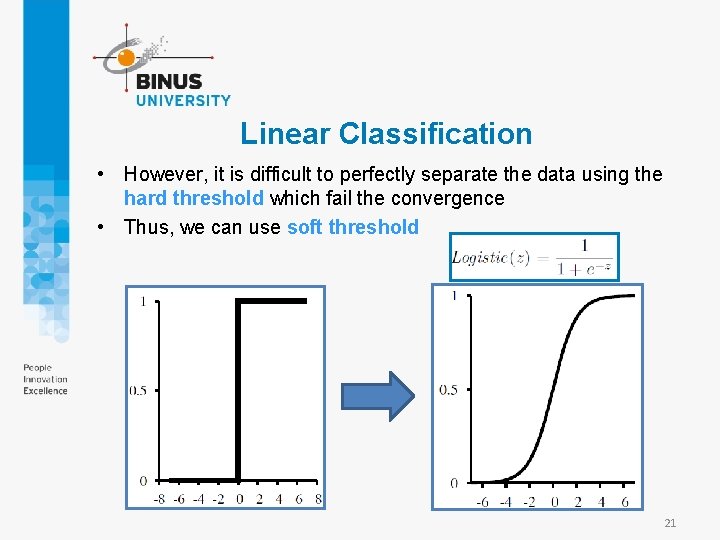

Linear Classification
• However, it is difficult to separate the data perfectly with a hard threshold, and learning fails to converge when the data are not linearly separable
• Thus, we can use a soft threshold instead

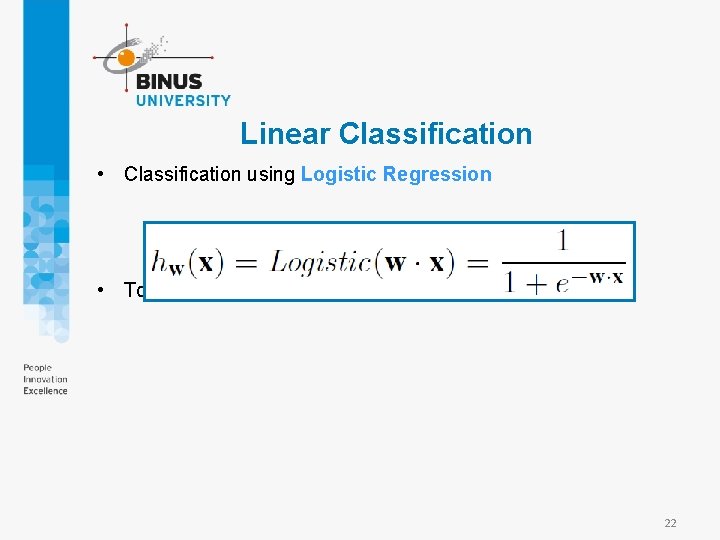

Linear Classification
• Classification using logistic regression
• To estimate the weights, gradient descent is used

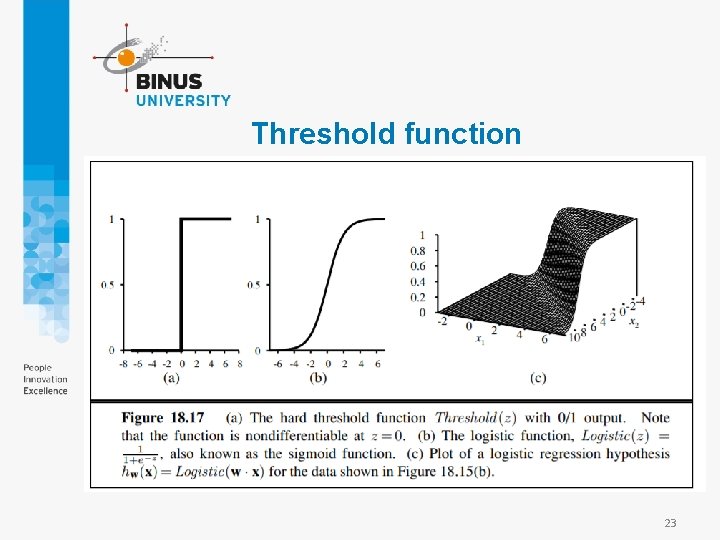
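A minimal sketch of logistic regression trained by gradient descent. The slide's exact update rule is in the figure and is not reproduced here; this sketch assumes the log-loss update $w_i \leftarrow w_i + \alpha (y - h_w(x)) x_i$ with $h_w(x) = 1 / (1 + e^{-w \cdot x})$ (the squared-loss variant additionally multiplies by $h_w(x)(1 - h_w(x))$). The data, α, and the number of epochs are hypothetical.

```python
import math


def logistic(z):
    """Soft threshold: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def h(w, x):
    """Hypothesis for an input x that already includes the dummy x0 = 1."""
    return logistic(sum(wi * xi for wi, xi in zip(w, x)))


def train(X, ys, alpha=0.1, epochs=2000):
    """Stochastic gradient descent with the (assumed) log-loss update."""
    rows = [[1.0] + list(x) for x in X]
    w = [0.0] * len(rows[0])
    for _ in range(epochs):
        for r, y in zip(rows, ys):
            err = y - h(w, r)
            for i in range(len(w)):
                w[i] += alpha * err * r[i]
    return w


def classify(w, x):
    """Predict class 1 when the estimated probability is at least 0.5."""
    return 1 if h(w, [1.0] + list(x)) >= 0.5 else 0


# Hypothetical, linearly separable two-class data
X = [(1, 1), (2, 1), (1, 2), (4, 4), (5, 4), (4, 5)]
ys = [0, 0, 0, 1, 1, 1]

w = train(X, ys)
print([classify(w, x) for x in X])
```

Unlike the hard threshold, the logistic output is differentiable everywhere, which is what makes the gradient update well defined.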

Threshold function

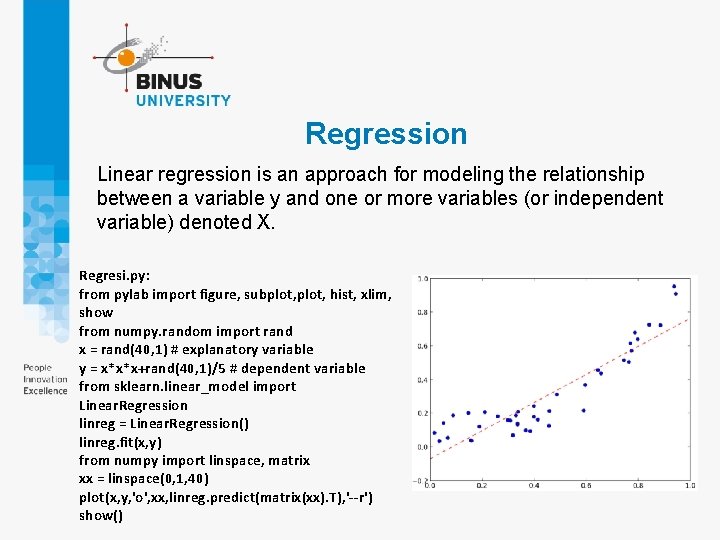

Regression
Linear regression is an approach for modeling the relationship between a dependent variable y and one or more explanatory (independent) variables denoted X.

Regresi.py:

    from numpy import linspace
    from numpy.random import rand
    from pylab import plot, show
    from sklearn.linear_model import LinearRegression

    x = rand(40, 1)              # explanatory variable
    y = x*x*x + rand(40, 1)/5    # dependent variable

    linreg = LinearRegression()
    linreg.fit(x, y)

    # evaluate the fitted line on an evenly spaced grid (as a column vector)
    xx = linspace(0, 1, 40)
    plot(x, y, 'o', xx, linreg.predict(xx.reshape(-1, 1)), '--r')
    show()

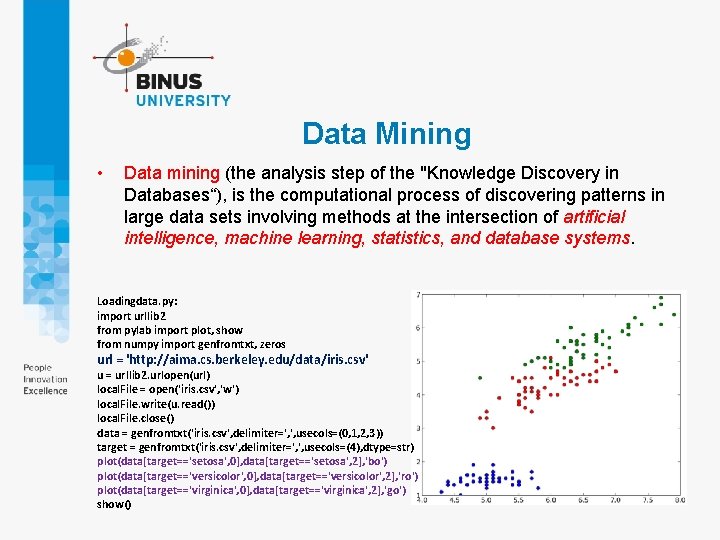

Data Mining
• Data mining (the analysis step of "Knowledge Discovery in Databases") is the computational process of discovering patterns in large data sets, involving methods at the intersection of artificial intelligence, machine learning, statistics, and database systems.

Loadingdata.py:

    from urllib.request import urlopen   # Python 3 replacement for urllib2
    from pylab import plot, show
    from numpy import genfromtxt

    # download the iris data set and keep a local copy
    url = 'http://aima.cs.berkeley.edu/data/iris.csv'
    u = urlopen(url)
    with open('iris.csv', 'wb') as local_file:
        local_file.write(u.read())

    # columns 0-3 hold the numeric features, column 4 holds the class label
    data = genfromtxt('iris.csv', delimiter=',', usecols=(0, 1, 2, 3))
    target = genfromtxt('iris.csv', delimiter=',', usecols=4, dtype=str)

    # plot feature 0 against feature 2, one colour per class
    plot(data[target=='setosa', 0], data[target=='setosa', 2], 'bo')
    plot(data[target=='versicolor', 0], data[target=='versicolor', 2], 'ro')
    plot(data[target=='virginica', 0], data[target=='virginica', 2], 'go')
    show()

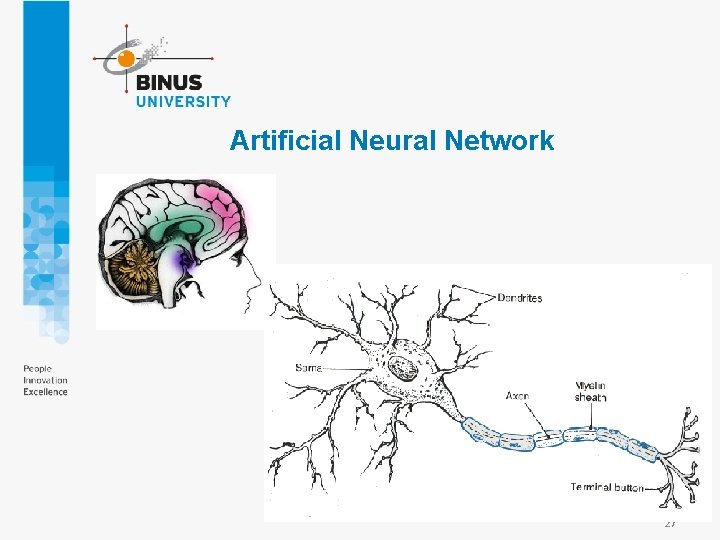

Artificial Neural Network

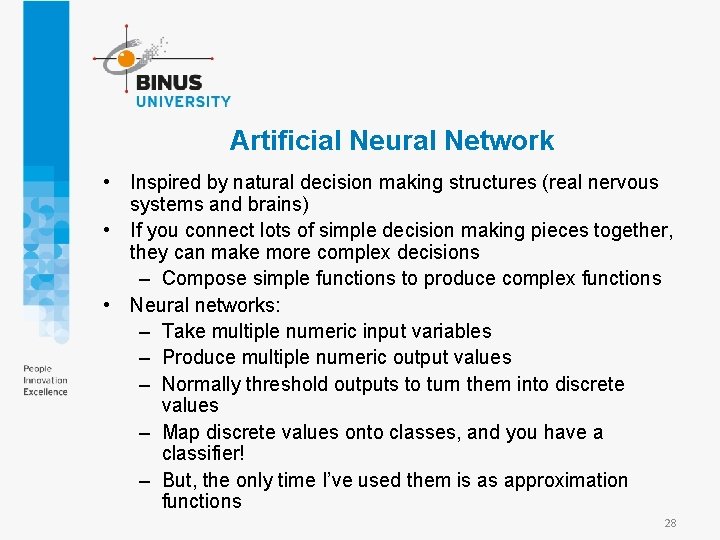

Artificial Neural Network
• Inspired by natural decision-making structures (real nervous systems and brains)
• If you connect lots of simple decision-making pieces together, they can make more complex decisions
  – Compose simple functions to produce complex functions
• Neural networks:
  – Take multiple numeric input variables
  – Produce multiple numeric output values
  – Normally threshold outputs to turn them into discrete values
  – Map discrete values onto classes, and you have a classifier!
  – But, the only time I’ve used them is as approximation functions

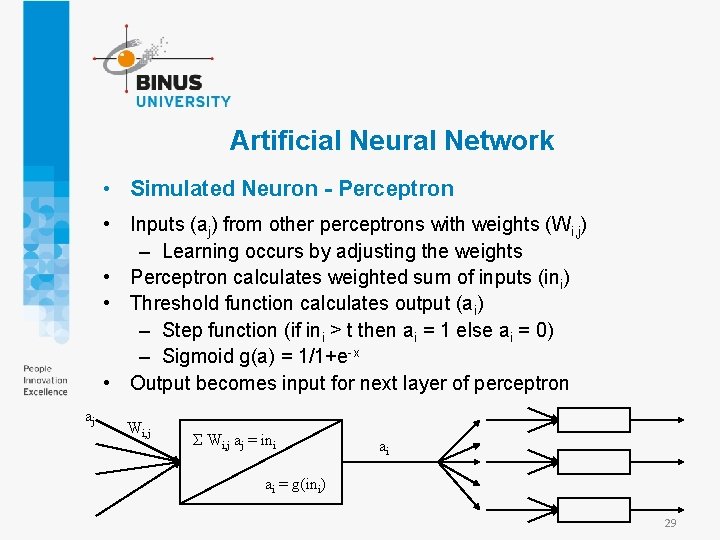

Artificial Neural Network
• Simulated neuron: the perceptron
• Inputs ($a_j$) from other perceptrons with weights ($W_{i,j}$)
  – Learning occurs by adjusting the weights
• The perceptron calculates the weighted sum of its inputs: $in_i = \sum_j W_{i,j} a_j$
• A threshold function calculates the output: $a_i = g(in_i)$
  – Step function (if $in_i > t$ then $a_i = 1$ else $a_i = 0$)
  – Sigmoid: $g(x) = 1 / (1 + e^{-x})$
• The output becomes an input for the next layer of perceptrons
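The computation of a single simulated neuron can be sketched as follows. The weights, inputs, and threshold below are hypothetical values chosen only to exercise the two activation functions; the function names are illustrative.

```python
import math


def weighted_sum(weights, inputs):
    """in_i: the sum over j of W_ij * a_j."""
    return sum(w * a for w, a in zip(weights, inputs))


def step(in_i, t=0.0):
    """Hard threshold: 1 if the weighted sum exceeds t, else 0."""
    return 1 if in_i > t else 0


def sigmoid(in_i):
    """Soft threshold: g(x) = 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-in_i))


weights = [0.1, 0.6]   # hypothetical weights
inputs = [1, 0]        # hypothetical activations from the previous layer

in_i = weighted_sum(weights, inputs)
print(in_i, step(in_i, t=0.5), sigmoid(in_i))
```

The step function gives a discrete output, while the sigmoid gives a graded value between 0 and 1 that can be differentiated during learning.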

Artificial Neural Network
• Neural Network Structure
• A single perceptron can represent AND and OR, but not XOR
  – Combinations of perceptrons are more powerful
• Perceptrons are usually organized in layers
  – Input layer: takes external input
  – Hidden layer(s)
  – Output layer: external output
• Feed-forward vs. recurrent
  – Feed-forward: outputs only connect to later layers
    • Learning is easier
  – Recurrent: outputs can connect to earlier layers or the same layer
    • Internal state

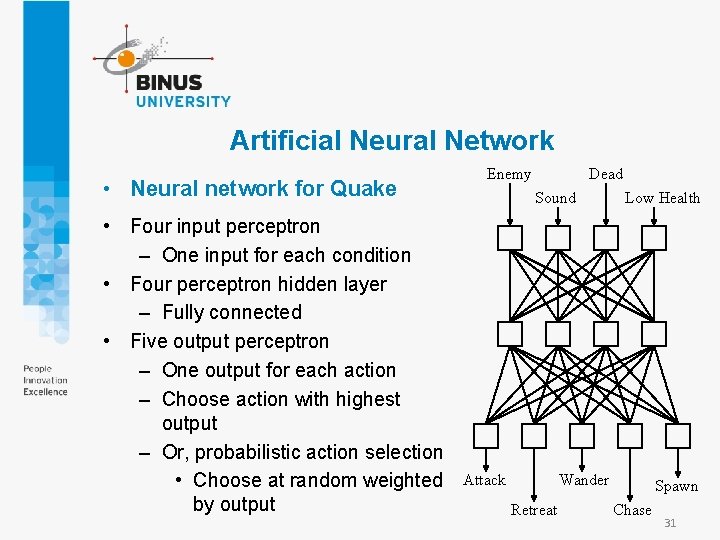

Artificial Neural Network
• Neural network for Quake
• Four input perceptrons
  – One input for each condition
• Four-perceptron hidden layer
  – Fully connected
• Five output perceptrons
  – One output for each action
  – Choose the action with the highest output
  – Or, probabilistic action selection
    • Choose at random, weighted by output
Figure labels: inputs Enemy, Dead, Sound, Low Health; outputs Attack, Wander, Retreat, Spawn, Chase

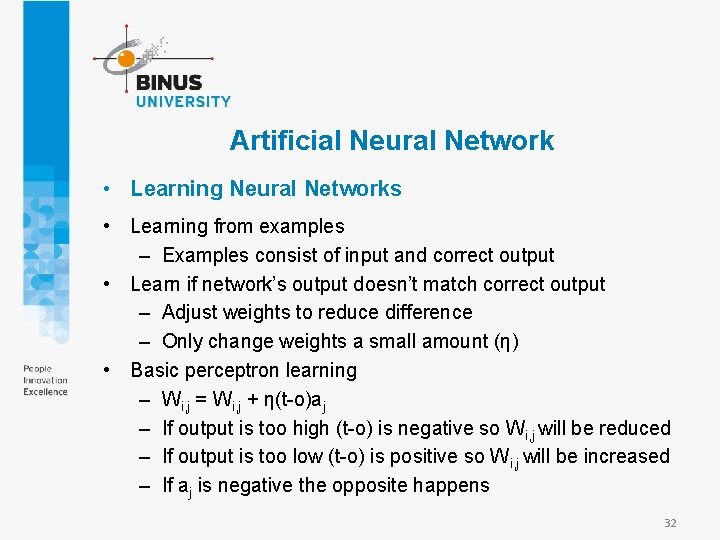
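The two action-selection strategies can be sketched as follows. The output values are hypothetical, and the assignment of the five action names to output positions is an assumption made for illustration.

```python
import random

# Assumed order of the five output perceptrons
ACTIONS = ["Attack", "Wander", "Retreat", "Spawn", "Chase"]


def choose_greedy(outputs):
    """Pick the action whose output perceptron has the highest value."""
    best = max(range(len(outputs)), key=lambda i: outputs[i])
    return ACTIONS[best]


def choose_weighted(outputs, rng=random):
    """Pick an action at random, with probability proportional to its output."""
    return rng.choices(ACTIONS, weights=outputs, k=1)[0]


outputs = [0.10, 0.20, 0.05, 0.00, 0.65]   # hypothetical network outputs

print(choose_greedy(outputs))
print(choose_weighted(outputs, random.Random(0)))
```

Greedy selection is deterministic and predictable; weighted selection makes the agent's behaviour vary while still favouring high-scoring actions.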

Artificial Neural Network
• Learning Neural Networks
• Learning from examples
  – Examples consist of an input and the correct output
• Learn if the network’s output doesn’t match the correct output
  – Adjust the weights to reduce the difference
  – Only change the weights by a small amount (η)
• Basic perceptron learning
  – $W_{i,j} \leftarrow W_{i,j} + \eta (t - o) a_j$, where t is the correct (target) output and o is the network’s output
  – If the output is too high, (t − o) is negative, so $W_{i,j}$ will be reduced
  – If the output is too low, (t − o) is positive, so $W_{i,j}$ will be increased
  – If $a_j$ is negative, the opposite happens

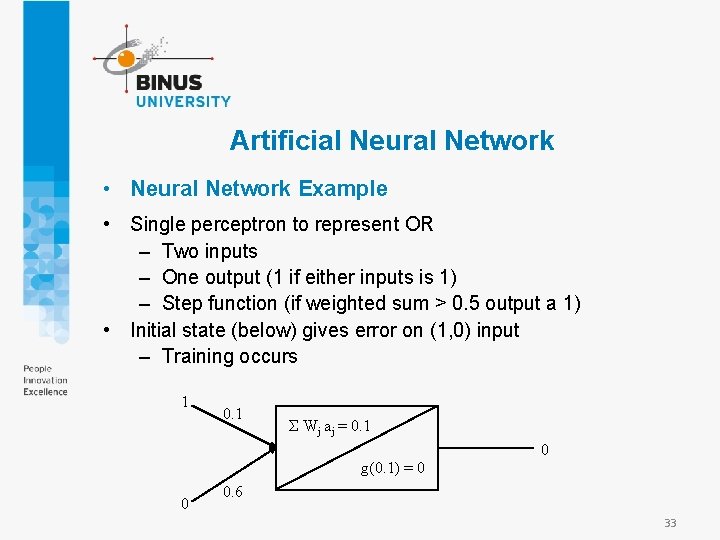

Artificial Neural Network
• Neural Network Example
• Single perceptron to represent OR
  – Two inputs
  – One output (1 if either input is 1)
  – Step function (output 1 if the weighted sum > 0.5)
• The initial state gives an error on the (1, 0) input
  – Training occurs
Initial state: inputs (1, 0), weights $W_1 = 0.1$, $W_2 = 0.6$; $\sum_j W_j a_j = 0.1$, so $g(0.1) = 0$.

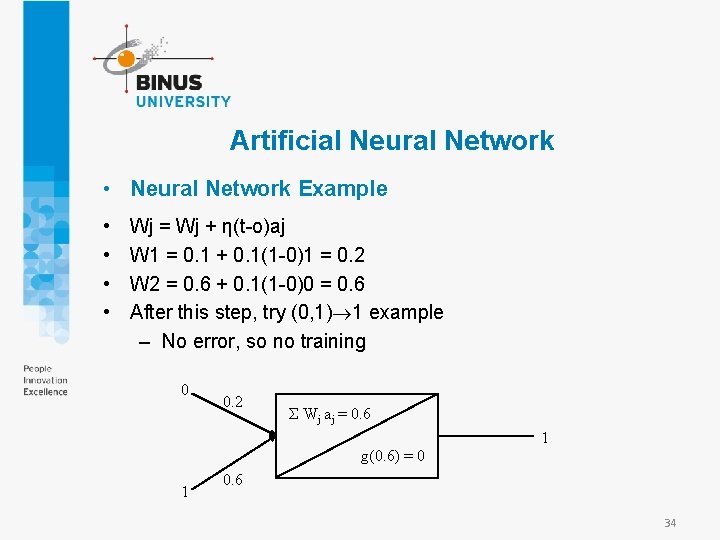

Artificial Neural Network
• Neural Network Example
• $W_j \leftarrow W_j + \eta (t - o) a_j$
• $W_1 = 0.1 + 0.1(1 - 0) \cdot 1 = 0.2$
• $W_2 = 0.6 + 0.1(1 - 0) \cdot 0 = 0.6$
• After this step, try the (0, 1) → 1 example
  – No error, so no training
State: inputs (0, 1), weights $W_1 = 0.2$, $W_2 = 0.6$; $\sum_j W_j a_j = 0.6$, so $g(0.6) = 1$.

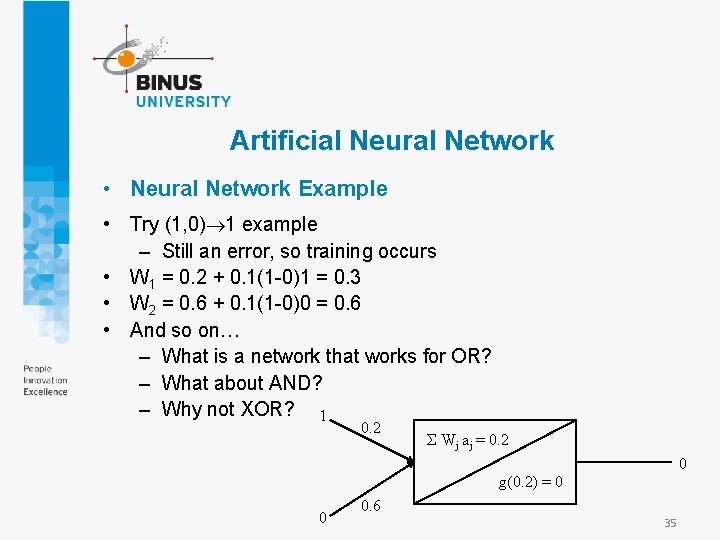

Artificial Neural Network
• Neural Network Example
• Try the (1, 0) → 1 example
  – Still an error, so training occurs
• $W_1 = 0.2 + 0.1(1 - 0) \cdot 1 = 0.3$
• $W_2 = 0.6 + 0.1(1 - 0) \cdot 0 = 0.6$
• And so on…
  – What is a network that works for OR?
  – What about AND?
  – Why not XOR?
State before this update: inputs (1, 0), weights $W_1 = 0.2$, $W_2 = 0.6$; $\sum_j W_j a_j = 0.2$, so $g(0.2) = 0$.

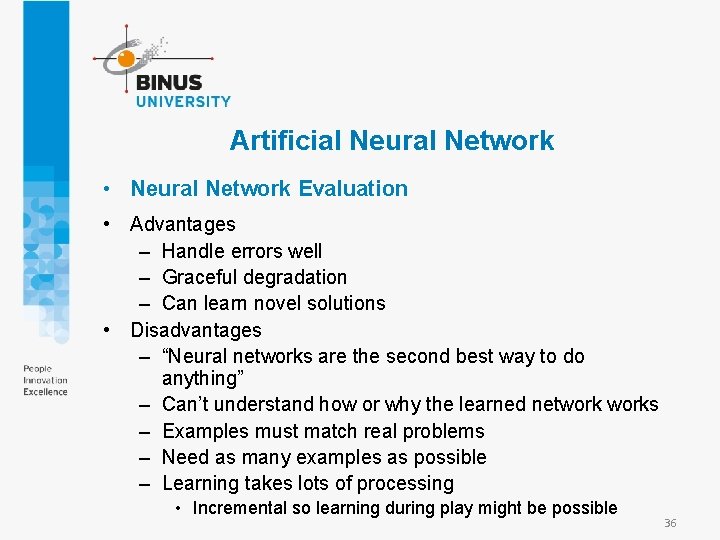
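The worked example can be continued in code until no errors remain. This is a minimal sketch that reuses the slide's values (initial weights 0.1 and 0.6, η = 0.1, step threshold 0.5); the order in which examples are presented and the function names are assumptions.

```python
def step(weighted_sum, threshold=0.5):
    """Output 1 if the weighted sum exceeds the threshold, else 0."""
    return 1 if weighted_sum > threshold else 0


def output(weights, inputs):
    return step(sum(w * a for w, a in zip(weights, inputs)))


def train_perceptron(weights, examples, eta=0.1, max_epochs=100):
    """Apply W_j <- W_j + eta * (t - o) * a_j until an epoch has no errors."""
    weights = list(weights)
    for _ in range(max_epochs):
        errors = 0
        for inputs, target in examples:
            o = output(weights, inputs)
            if o != target:
                errors += 1
                for j, a in enumerate(inputs):
                    weights[j] += eta * (target - o) * a
        if errors == 0:
            break
    return weights


OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights = train_perceptron([0.1, 0.6], OR_EXAMPLES)
print(weights)
print([output(weights, inputs) for inputs, _ in OR_EXAMPLES])
```

Only the first weight changes: it grows in steps of 0.1 until the weighted sum for the (1, 0) input exceeds 0.5. Running the same loop on XOR examples never reaches an error-free epoch, because XOR is not linearly separable.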

Artificial Neural Network
• Neural Network Evaluation
• Advantages
  – Handle errors well
  – Graceful degradation
  – Can learn novel solutions
• Disadvantages
  – “Neural networks are the second best way to do anything”
  – Can’t understand how or why the learned network works
  – Examples must match real problems
  – Need as many examples as possible
  – Learning takes lots of processing
    • Incremental, so learning during play might be possible

Practical Machine Learning
• Case Study
• Character recognition neural networks
  Recognition of both printed and handwritten characters is a typical domain where neural networks have been successfully applied
• Optical character recognition systems were among the first commercial applications of neural networks

Practical Machine Learning
• Case Study
• We demonstrate an application of a multilayer feed-forward network for printed character recognition.
• For simplicity, we can limit our task to the recognition of digits from 0 to 9. Each digit is represented by a 5 × 9 bit map.
• In commercial applications, where a better resolution is required, at least 16 × 16 bit maps are used.

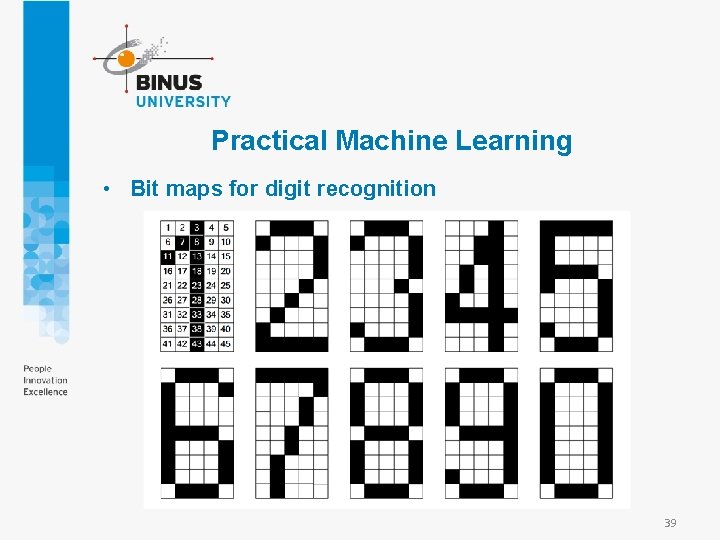

Practical Machine Learning
• Bit maps for digit recognition

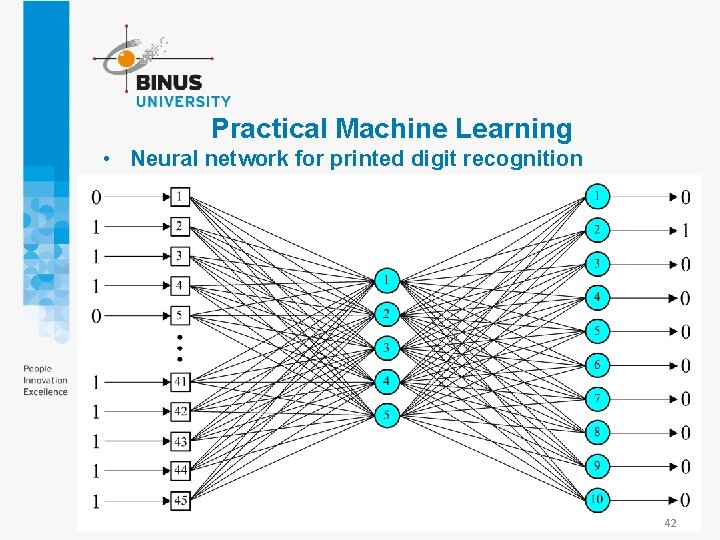

Practical Machine Learning
• How do we choose the architecture of a neural network?
• The number of neurons in the input layer is decided by the number of pixels in the bit map. The bit map in our example consists of 45 pixels, and thus we need 45 input neurons.
• The output layer has 10 neurons, one for each digit to be recognized.

Practical Machine Learning
• How do we determine an optimal number of hidden neurons?
• Complex patterns cannot be detected by a small number of hidden neurons; however, too many of them can dramatically increase the computational burden.
• Another problem is overfitting. The greater the number of hidden neurons, the greater the ability of the network to recognize existing patterns. However, if the number of hidden neurons is too large, the network might simply memorize all training examples.

Practical Machine Learning
• Neural network for printed digit recognition

Practical Machine Learning
• What are the test examples for character recognition?
• A test set has to be strictly independent from the training examples.
• To test the character recognition network, we present it with examples that include “noise”, that is, distortion of the input patterns.
• We evaluate the performance of the printed digit recognition networks with 1000 test examples (100 for each digit to be recognized).
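One way such noisy test examples could be generated is sketched below. It assumes that noise means flipping each pixel independently with a fixed probability, which the slides do not specify; the 5 × 9 bit map of the digit 1 and the function name `add_noise` are hypothetical.

```python
import random


def add_noise(bitmap, flip_prob, rng):
    """Return a copy of a flat 0/1 bit map with each pixel flipped with probability flip_prob."""
    return [1 - bit if rng.random() < flip_prob else bit for bit in bitmap]


# Hypothetical 5 x 9 bit map of the digit 1, written row by row
ROWS = [
    "00100",
    "01100",
    "00100",
    "00100",
    "00100",
    "00100",
    "00100",
    "00100",
    "01110",
]
DIGIT_ONE = [int(c) for row in ROWS for c in row]   # 45 input values

rng = random.Random(0)   # fixed seed so the test set is reproducible
test_examples = [add_noise(DIGIT_ONE, 0.1, rng) for _ in range(100)]
print(len(test_examples), len(test_examples[0]))
```

Generating the test set separately from the training bit maps keeps it independent of the training examples, as the slide requires.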

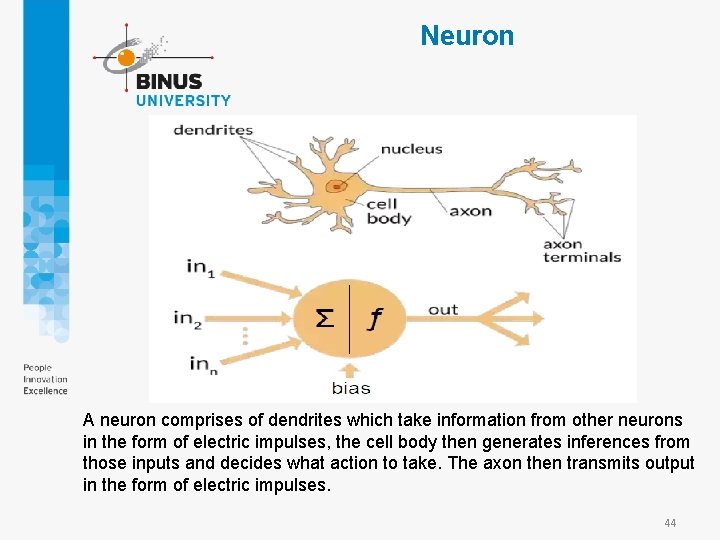

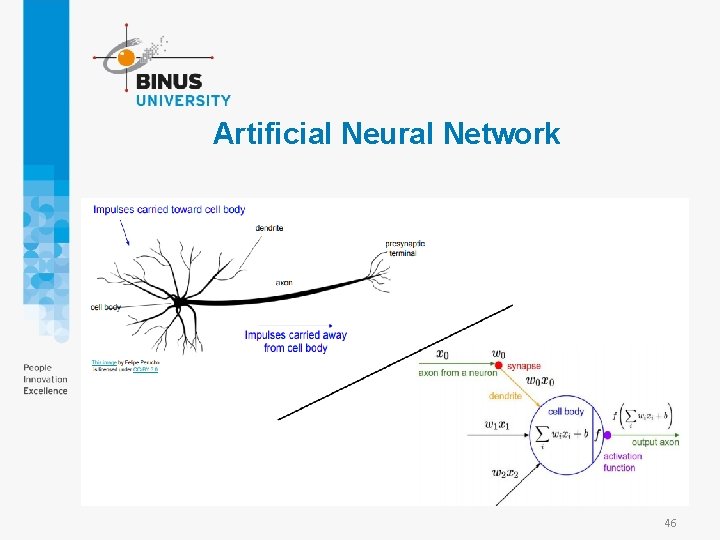

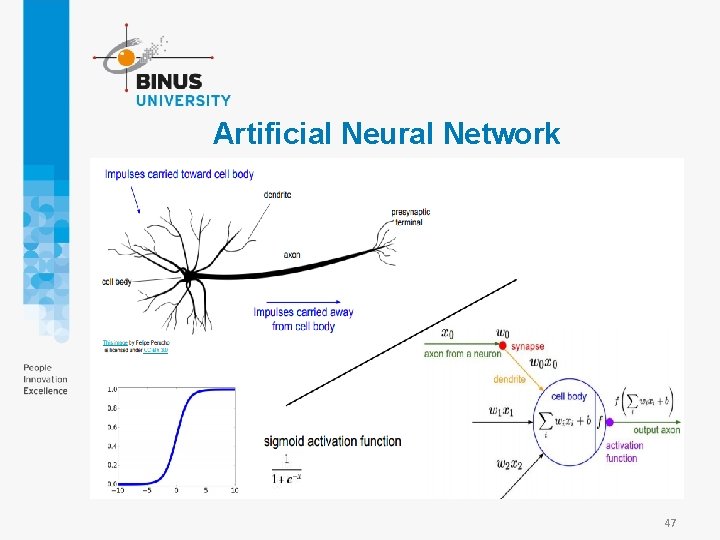

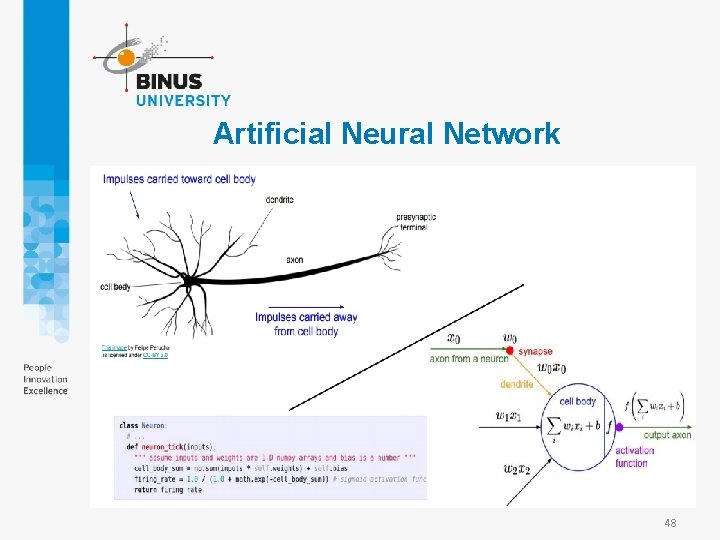

Neuron
A neuron comprises dendrites, which take in information from other neurons in the form of electrical impulses; the cell body then generates inferences from those inputs and decides what action to take. The axon then transmits the output in the form of electrical impulses.

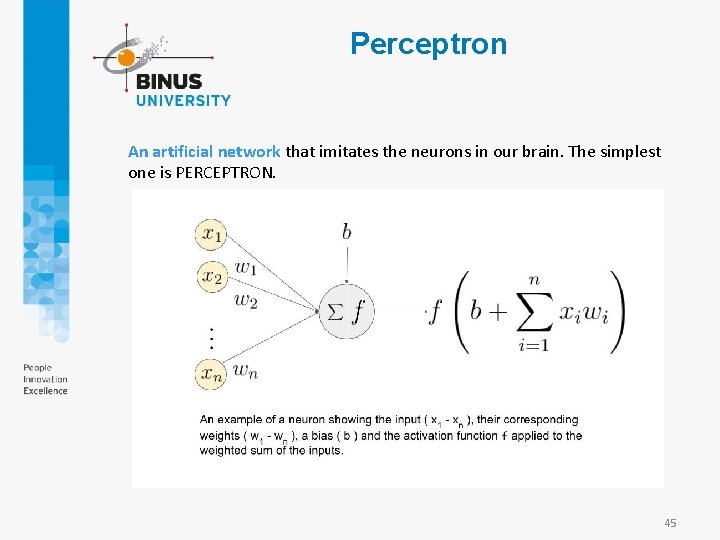

Perceptron
An artificial neural network imitates the neurons in our brain. The simplest one is the perceptron.

Artificial Neural Network

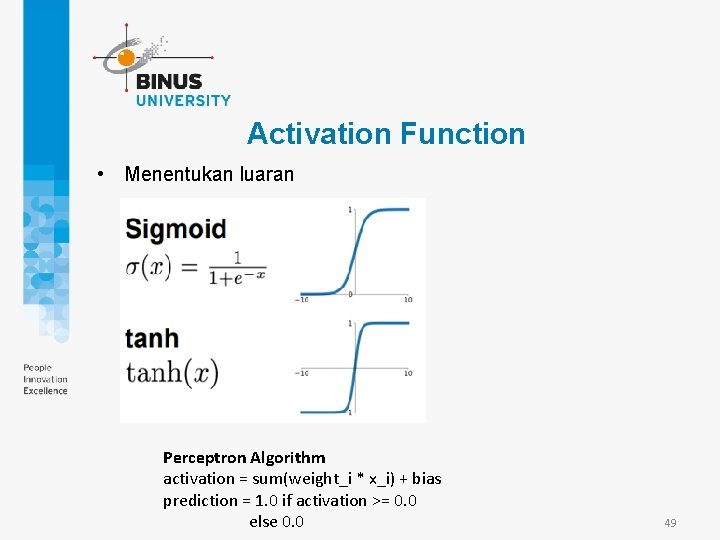

Activation Function
• Determines the output of the perceptron

Perceptron Algorithm

    activation = sum(weight_i * x_i) + bias
    prediction = 1.0 if activation >= 0.0 else 0.0

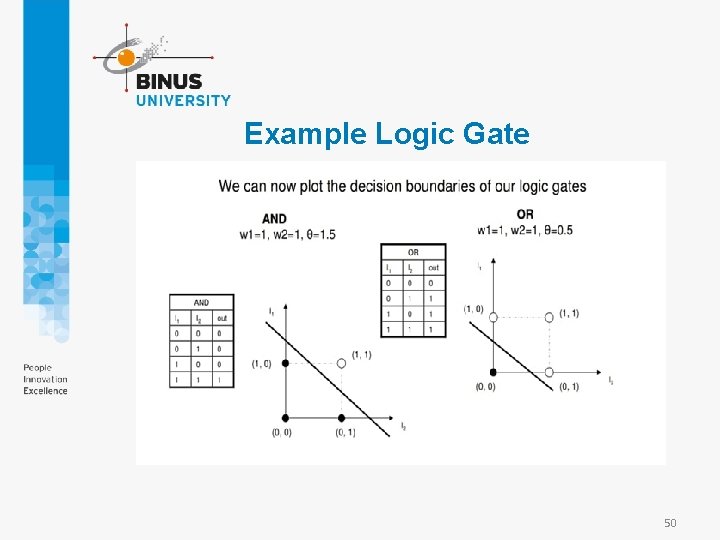
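The activation rule can be made runnable and checked against simple logic gates. The weights and biases below are hand-picked, hypothetical values that realize each gate; they are not taken from the slides.

```python
def predict(weights, bias, inputs):
    """Weighted sum plus bias, followed by a hard threshold at 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 if activation >= 0.0 else 0.0


# Hand-picked (weights, bias) pairs, one per gate
GATES = {
    "AND": ([1.0, 1.0], -1.5),
    "OR": ([1.0, 1.0], -0.5),
    "NAND": ([-1.0, -1.0], 1.5),
}

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]

for name, (weights, bias) in GATES.items():
    print(name, [predict(weights, bias, x) for x in INPUTS])
```

The bias plays the role of a movable threshold: shifting it from -0.5 to -1.5 turns the same pair of weights from an OR gate into an AND gate.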

Example: Logic Gate

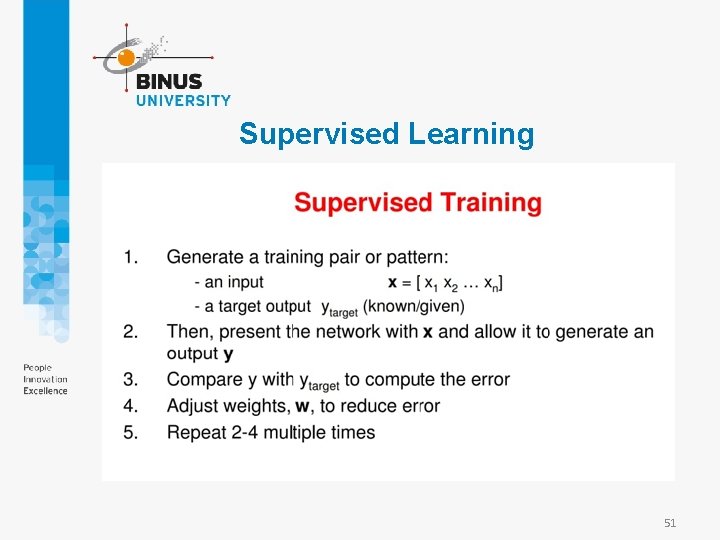

Supervised Learning

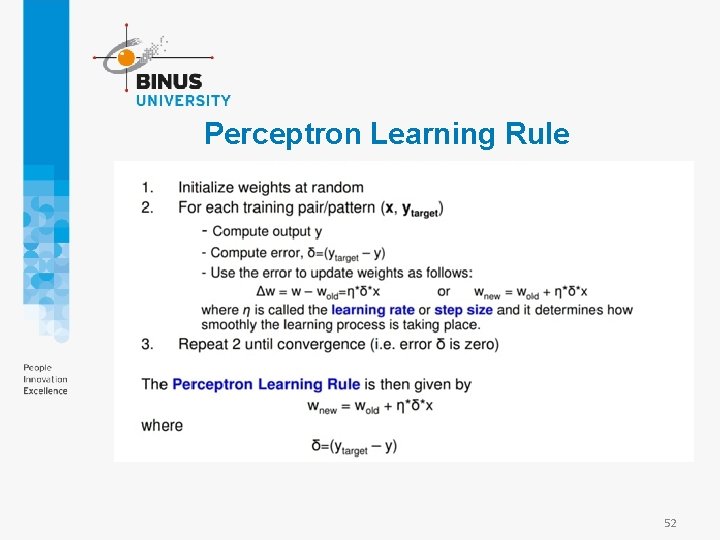

Perceptron Learning Rule

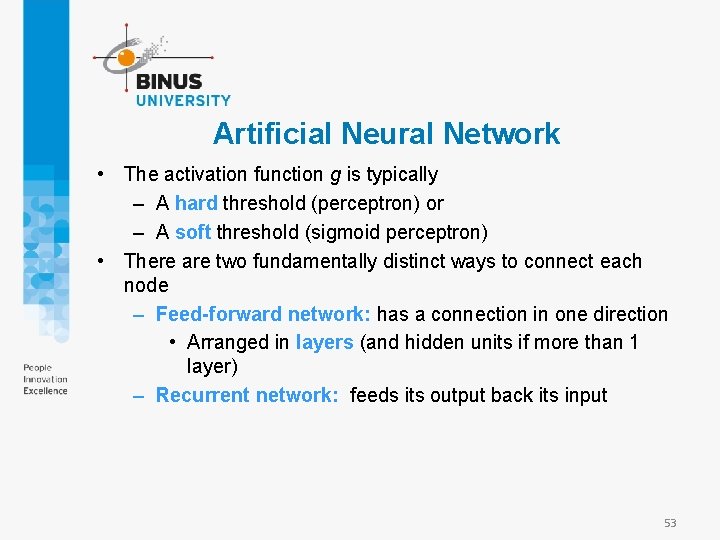

Artificial Neural Network
• The activation function g is typically
  – A hard threshold (perceptron), or
  – A soft threshold (sigmoid perceptron)
• There are two fundamentally distinct ways to connect the nodes
  – Feed-forward network: has connections in one direction only
    • Arranged in layers (with hidden units if there is more than one layer)
  – Recurrent network: feeds its outputs back into its own inputs

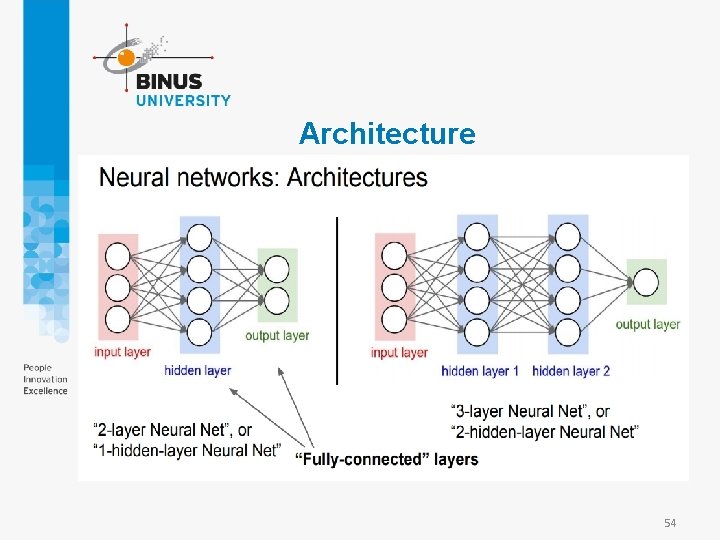

Architecture

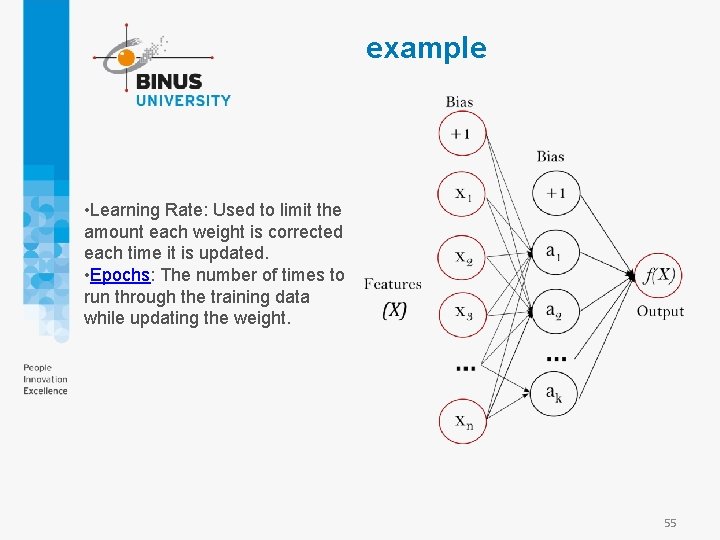

Example
• Learning rate: limits how much each weight is corrected each time it is updated.
• Epochs: the number of passes through the training data while updating the weights.

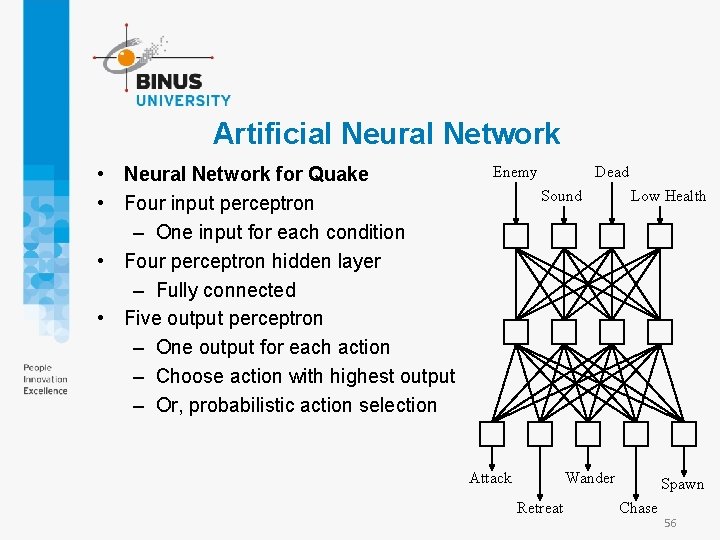

Artificial Neural Network • Neural Network for Quake • Four input perceptron – One input for each condition • Four perceptron hidden layer – Fully connected • Five output perceptron – One output for each action – Choose action with highest output – Or, probabilistic action selection Enemy Dead Sound Attack Low Health Wander Retreat Spawn Chase 56

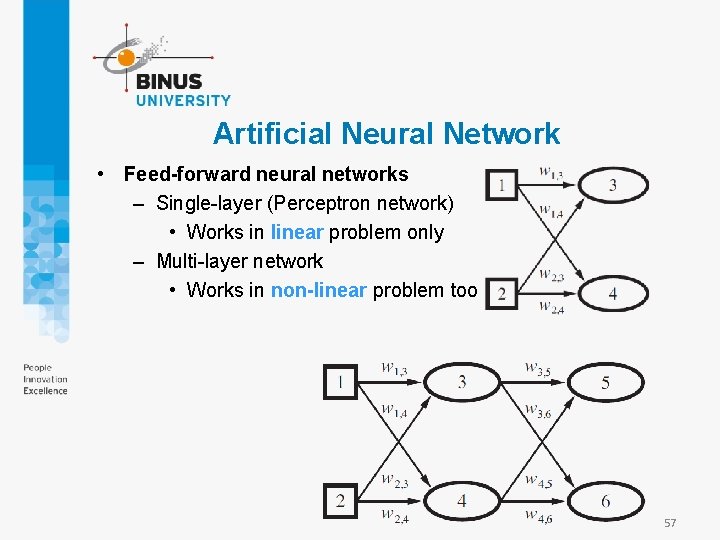

Artificial Neural Network
• Feed-forward neural networks
  – Single-layer (perceptron network)
    • Works for linearly separable problems only
  – Multi-layer network
    • Works for non-linear problems too

Backpropagation
• The back-propagation process can be summarized as follows
  – Compute the derivative values for the output units, using the observed error
  – Starting with the output layer, repeat the following for each layer until the earliest hidden layer is reached
    • Propagate the derivative values back to the previous layer
    • Update the weights between the two layers
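These steps can be sketched for a tiny network with two inputs, two sigmoid hidden units, and one sigmoid output. The sketch assumes the loss $\frac{1}{2}(o - y)^2$ for a single example; the initial weights, the example, η, and all function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Return the hidden activations and the network output."""
    W1, b1, W2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    out = sigmoid(sum(w * h for w, h in zip(W2, hidden)) + b2)
    return hidden, out


def loss(params, x, y):
    _, out = forward(params, x)
    return 0.5 * (out - y) ** 2


def backprop(params, x, y):
    """Gradients of the loss with respect to W1, b1, W2, b2."""
    W1, b1, W2, b2 = params
    hidden, out = forward(params, x)
    # Step 1: derivative value for the output unit, from the observed error
    delta_out = (out - y) * out * (1.0 - out)
    # Step 2: propagate the derivative values back to the hidden layer
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(W2, hidden)]
    grad_W1 = [[d * xi for xi in x] for d in delta_hidden]
    grad_W2 = [delta_out * h for h in hidden]
    return grad_W1, delta_hidden, grad_W2, delta_out


def update(params, grads, eta):
    """Step 3: move every weight against its gradient (returns new parameters)."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    new_W1 = [[w - eta * g for w, g in zip(row, grow)]
              for row, grow in zip(W1, gW1)]
    new_b1 = [b - eta * g for b, g in zip(b1, gb1)]
    new_W2 = [w - eta * g for w, g in zip(W2, gW2)]
    return new_W1, new_b1, new_W2, b2 - eta * gb2


# Hypothetical initial parameters and a single training example
params0 = ([[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2], [0.7, -0.6], 0.05)
x, y = [1.0, 0.0], 1.0

grads0 = backprop(params0, x, y)
params1 = update(params0, grads0, eta=0.5)
loss_before, loss_after = loss(params0, x, y), loss(params1, x, y)
print(loss_before, loss_after)
```

All gradients are computed from the old weights before any weight is changed, so the hidden-layer deltas are not affected by the output-layer update.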

Example
The class MLPClassifier implements a multi-layer perceptron (MLP) algorithm that trains using backpropagation. An MLP trains on two arrays: array X of size (n_samples, n_features), which holds the training samples represented as floating-point feature vectors, and array y of size (n_samples,), which holds the target values (class labels) for the training samples:


Example

    from sklearn.neural_network import MLPClassifier

    X = [[0, 0], [1.5, 1.5], [2, 2]]
    y = [0, 1, 2]
    clf = MLPClassifier(solver='lbfgs', alpha=1e-5, hidden_layer_sizes=(5, 2), random_state=1)
    clf.fit(X, y)
    # fit returns the estimator:
    # MLPClassifier(alpha=1e-05, hidden_layer_sizes=(5, 2), random_state=1, solver='lbfgs')
    print(clf.predict([[0, 0], [1.2, 1.5], [2.1, 2.2]]))

Output shown on the slide: [0 1 2]

https://repl.it/languages/python3

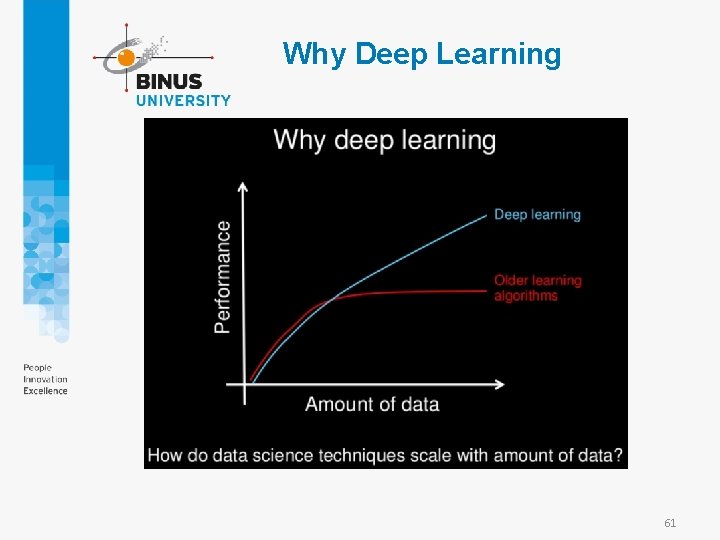

Why Deep Learning

Implementation: Autonomous Car

Summary
• Learning is needed for unknown environments and lazy designers
• Learning agent = performance element + learning element
• For supervised learning, the aim is to find a simple hypothesis approximately consistent with the training examples
• Decision tree learning using information gain
• Learning performance = prediction accuracy measured on a test set

References
• Stuart Russell, Peter Norvig. 2010. Artificial Intelligence: A Modern Approach. PE. New Jersey. ISBN: 9780132071482. Chapter 18
• Elaine Rich, Kevin Knight, Shivashankar B. Nair. 2010. Artificial Intelligence. MHE. New York. Chapter 17
• Learning System: http://www.myreaders.info/06_Learning_Systems.pdf
• Machine Learning: http://www.langbein.org/publish/ai/V/AI-18-V_1.pdf

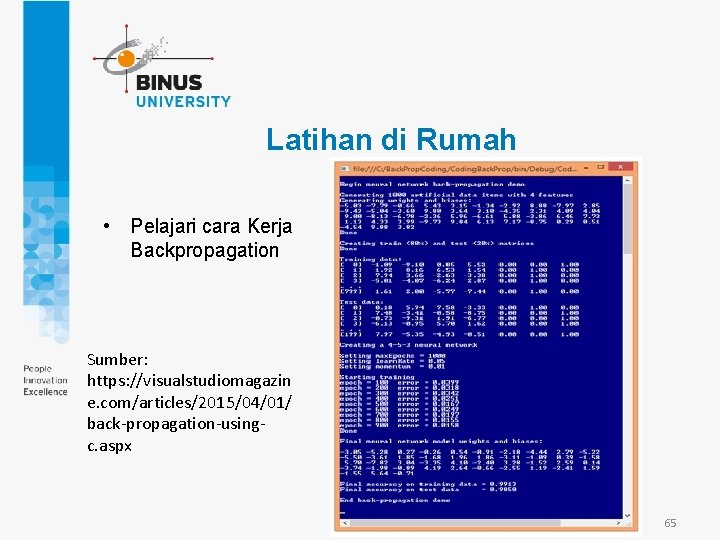

Homework
• Study how backpropagation works
Source: https://visualstudiomagazine.com/articles/2015/04/01/back-propagation-using-c.aspx
