Course: Artificial Intelligence
Effective Period: September 2018
Natural Language Processing
Session 22

Learning Outcomes
At the end of this session, students will be able to:
• LO 6: Apply AI algorithms to various applications such as Game AI, Natural Language Processing, and Computer Vision

Outline
1. Language Models
2. Text Classification
3. Information Retrieval
4. Information Extraction

Natural Language Processing
• An agent that wants to acquire information needs to understand human (natural) language, at least partially
  – To communicate with humans
  – To acquire information from written language
• There are 3 ways to acquire information:
  – Text Classification
  – Information Retrieval
  – Information Extraction

Knowledge and Information
Data: unorganized and unprocessed facts; static; a set of discrete facts about events
Information: an aggregation of data that makes decision making easier
Knowledge: a combination of experience and important information

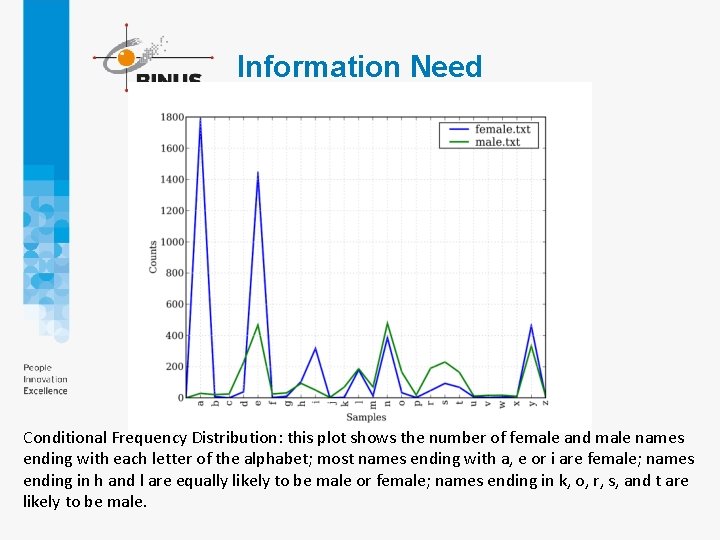

Information Need
Conditional Frequency Distribution: this plot shows the number of female and male names ending with each letter of the alphabet. Most names ending with a, e, or i are female; names ending in h and l are equally likely to be male or female; names ending in k, o, r, s, and t are likely to be male.
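
The idea of a conditional frequency distribution can be sketched with the standard library alone. This is a minimal illustration, assuming a tiny hypothetical sample of names (`SAMPLE`) instead of a real name corpus; the helper name `last_letter_cfd` is also an assumption made for this example.

```python
from collections import Counter, defaultdict

# Hypothetical toy sample of (gender, name) pairs; a real study would use
# a full list of names, not these eight entries.
SAMPLE = [
    ("female", "Anna"), ("female", "Maria"), ("female", "Sophie"),
    ("female", "Sarah"), ("male", "Mark"), ("male", "Leo"),
    ("male", "Peter"), ("male", "Noah"),
]

def last_letter_cfd(pairs):
    """Count, for each gender (the condition), how often each final letter occurs."""
    cfd = defaultdict(Counter)
    for gender, name in pairs:
        cfd[gender][name[-1].lower()] += 1
    return cfd

cfd = last_letter_cfd(SAMPLE)
print(cfd["female"]["a"])                    # count of female names ending in "a"
print(cfd["male"]["h"], cfd["female"]["h"])  # counts for names ending in "h"
```

Each condition (here the gender) gets its own frequency table, which is what the plot visualizes letter by letter.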

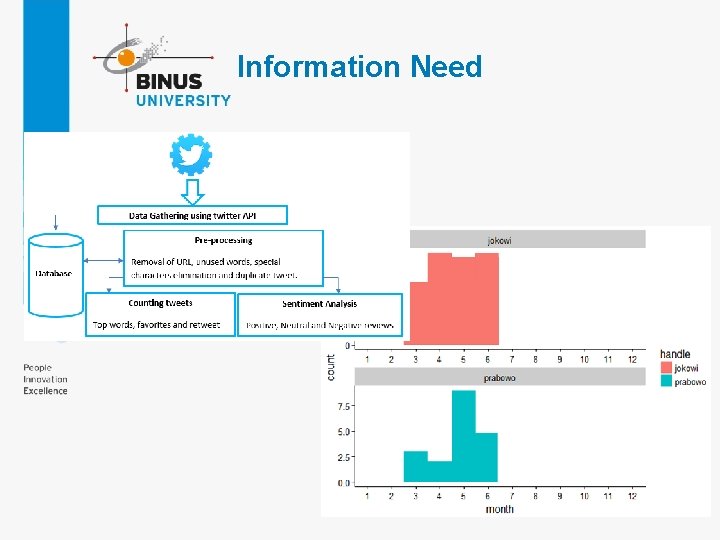

Information Need

Language Models
• Language models are a common factor in searching for information
• Formal languages have rules that define the meaning, or semantics, of a program
  – For example, the rules say that the "meaning" of "2 + 2" is 4, and the meaning of "1 / 0" is that an error is signaled
• Natural languages are ambiguous and difficult to deal with (they are large and constantly changing)

Text Classification
• Given a text of some kind, decide which of a predefined set of classes it belongs to (categorization)
  – E.g., spam detection (classes: spam and ham)
• Uses training data (texts already labeled with their class)

Information Retrieval
• Information retrieval (e.g., Googling) is the task of finding documents that are relevant to a user’s need for information
• An information retrieval (IR) system can be characterized by
  – A corpus of documents
  – Queries posed in a query language
  – A result set
  – A presentation of the result set
• The earliest IR systems worked on a Boolean keyword model

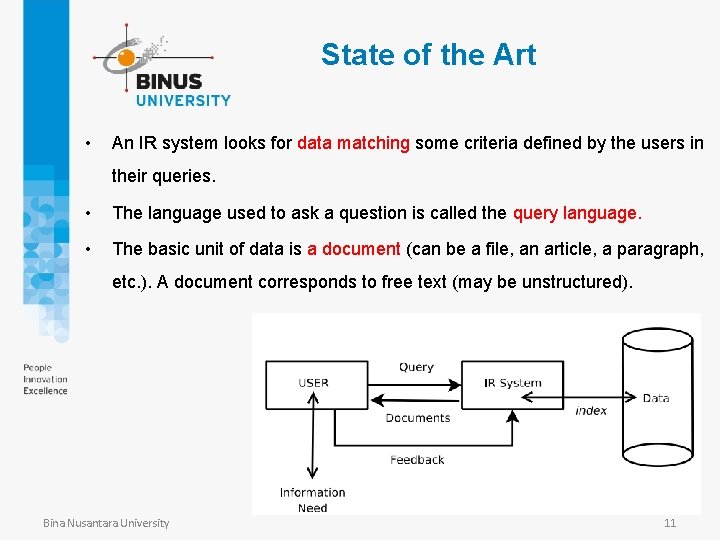

State of the Art
• An IR system looks for data matching some criteria defined by the users in their queries.
• The language used to ask a question is called the query language.
• The basic unit of data is a document (can be a file, an article, a paragraph, etc.). A document corresponds to free text (may be unstructured).
Bina Nusantara University

Size of information
• These queries use keywords.
• All the documents are gathered into a collection (or corpus).
Example: 1 million documents of about 1000 words each, with each word encoded using 6 bytes: 10^6 × 1000 × 6 bytes = 6 × 10^9 bytes ≃ 6 GB
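
The size estimate can be checked with a few lines of arithmetic. This is a minimal sketch using the figures from the example (1 million documents, 1000 words each, 6 bytes per word); the variable names are chosen for this illustration only.

```python
# Figures taken from the example: 1 million documents, ~1000 words each,
# 6 bytes per word.
num_docs = 10**6
words_per_doc = 1000
bytes_per_word = 6

total_bytes = num_docs * words_per_doc * bytes_per_word
print(total_bytes)             # 6000000000 bytes, i.e. 6 * 10**9
print(total_bytes / 10**9)     # size in decimal gigabytes (GB)
print(total_bytes / 1024**3)   # size in binary gibibytes (GiB), a little under 6
```

Whether the result is quoted as 6 GB or as roughly 5.6 GiB depends only on whether a gigabyte is taken as 10^9 or 1024^3 bytes.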

Learning NLP
• NLTK (Natural Language Toolkit): https://www.nltk.org/
• Python
• Speech recognition
• pyttsx

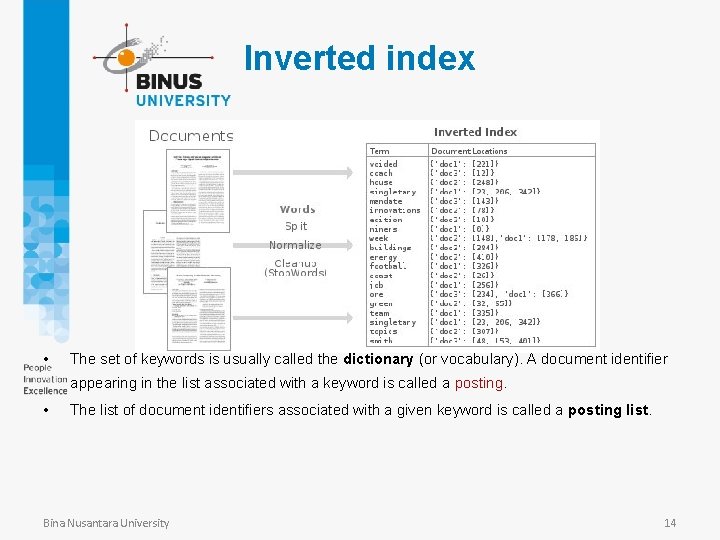

Inverted index
• The set of keywords is usually called the dictionary (or vocabulary). A document identifier appearing in the list associated with a keyword is called a posting.
• The list of document identifiers associated with a given keyword is called a posting list.

Index
• How do we relate the user’s information need to the content of some documents? By using an index to refer to documents.
• Usually an index is a list of terms that appear in a document. The kind of index used here maps each keyword to the list of documents that contain it; this is called an inverted index.

IR Technique
• A first IR technique is to build an index and apply queries to this index.
• Example of input collection (Shakespeare’s plays):
  Doc 1: I did enact Julius Caesar: I was killed i’ the Capitol; Brutus killed me.
  Doc 2: So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious

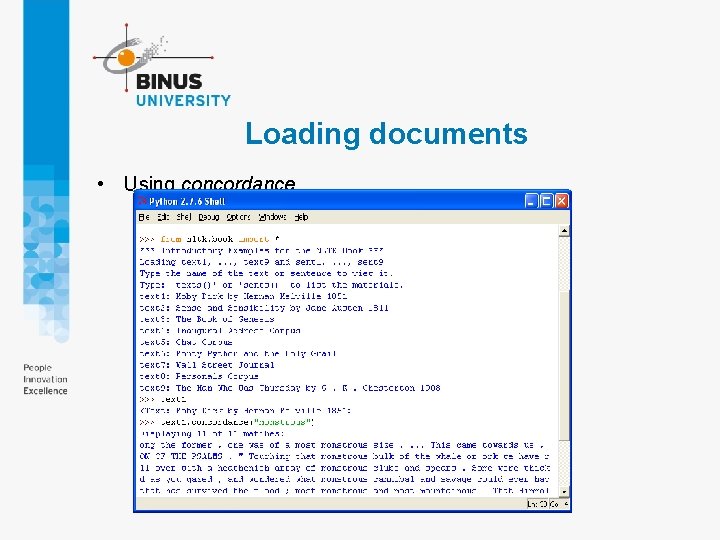

Loading documents • Using concordance

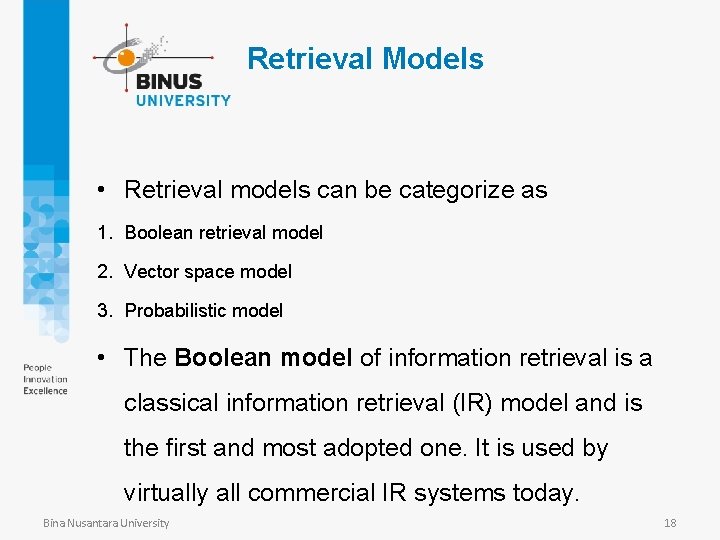

Retrieval Models
• Retrieval models can be categorized as
  1. Boolean retrieval model
  2. Vector space model
  3. Probabilistic model
• The Boolean model is a classical information retrieval (IR) model and is the first and most widely adopted one. It is used by virtually all commercial IR systems today.

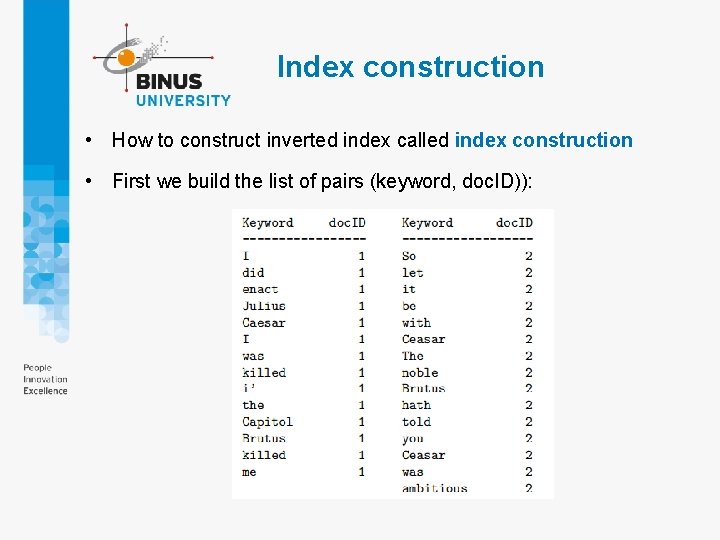

Index construction
• The process of building an inverted index is called index construction
• First we build the list of (keyword, docID) pairs:

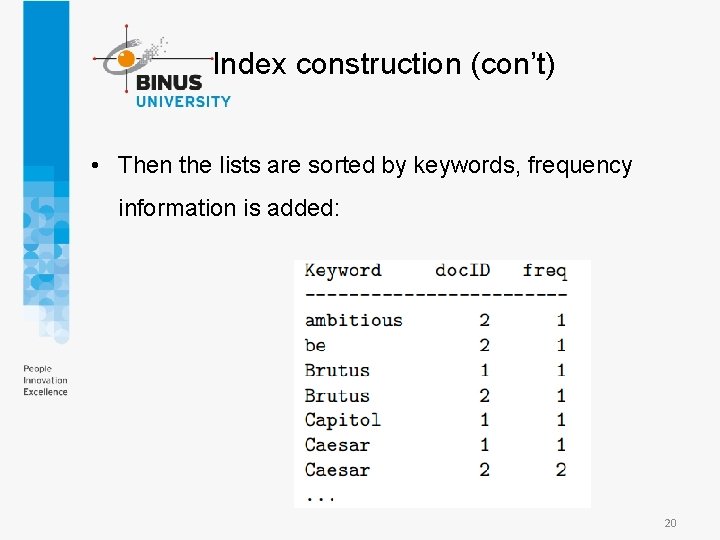

Index construction (cont.)
• Then the list is sorted by keyword, and frequency information is added:

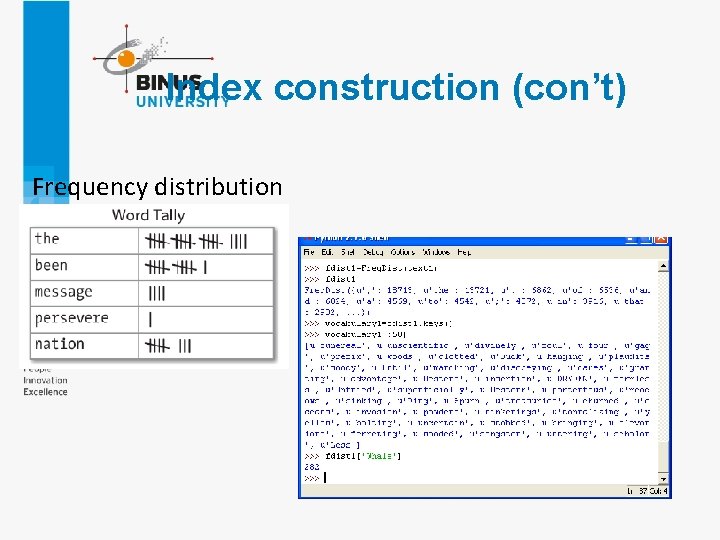

Index construction (cont.)
Frequency distribution

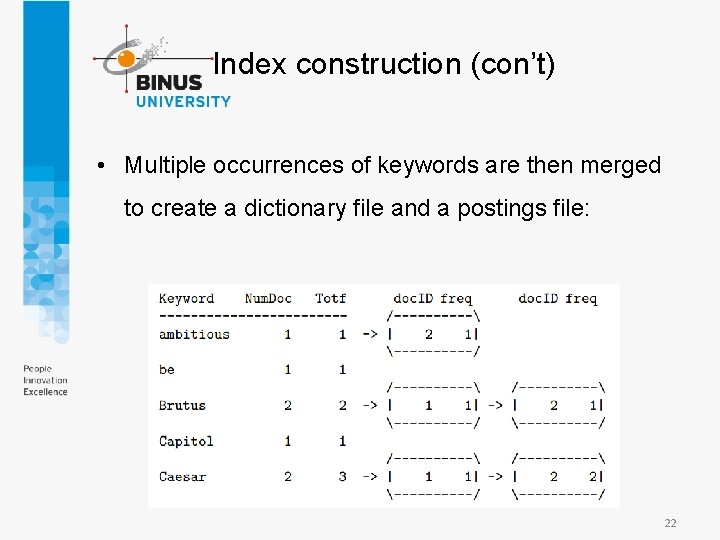

Index construction (cont.)
• Multiple occurrences of keywords are then merged to create a dictionary file and a postings file:
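
The three construction steps (build pairs, sort, merge) can be sketched on the two example documents. This is a minimal in-memory illustration, not a production indexer; the `tokenize` helper and its simple lowercase-and-split rule are assumptions made for this example.

```python
import re
from collections import defaultdict

# The two example documents from the input collection.
docs = {
    1: "I did enact Julius Caesar: I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}

def tokenize(text):
    """Assumed tokenizer: lowercase, keep runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", text.lower())

# Step 1: build the list of (keyword, docID) pairs.
pairs = [(term, doc_id) for doc_id, text in docs.items() for term in tokenize(text)]

# Step 2: sort the pairs by keyword, then by docID.
pairs.sort()

# Step 3: merge repeated pairs, keeping a per-document frequency.
postings = defaultdict(dict)  # keyword -> {docID: frequency in that document}
for term, doc_id in pairs:
    postings[term][doc_id] = postings[term].get(doc_id, 0) + 1

# The dictionary is the set of keywords; each posting list is a sorted list of docIDs.
index = {term: sorted(freqs) for term, freqs in postings.items()}

print(index["brutus"])     # [1, 2]
print(index["capitol"])    # [1]
print(postings["caesar"])  # {1: 1, 2: 2}
```

Real systems write the dictionary and the postings to separate files; here both live in ordinary Python dictionaries to keep the steps visible.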

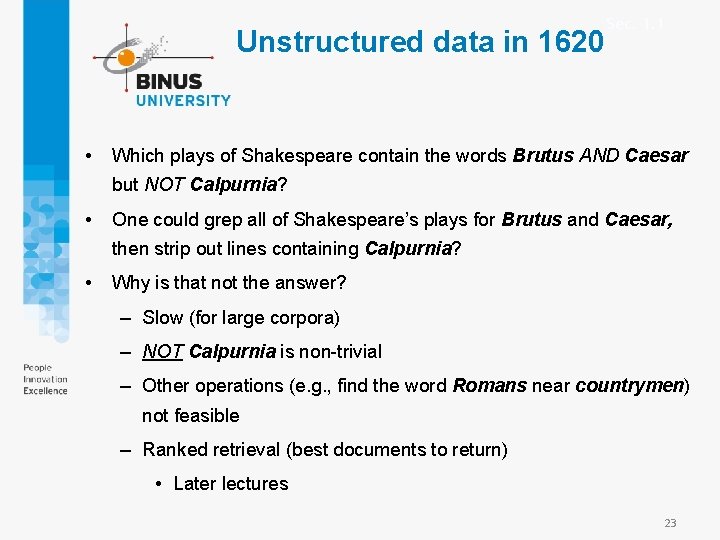

Unstructured data in 1620
• Which plays of Shakespeare contain the words Brutus AND Caesar but NOT Calpurnia?
• One could grep all of Shakespeare’s plays for Brutus and Caesar, then strip out lines containing Calpurnia.
• Why is that not the answer?
  – Slow (for large corpora)
  – NOT Calpurnia is non-trivial
  – Other operations (e.g., find the word Romans near countrymen) are not feasible
  – Ranked retrieval (best documents to return) is not supported; this is covered in later lectures

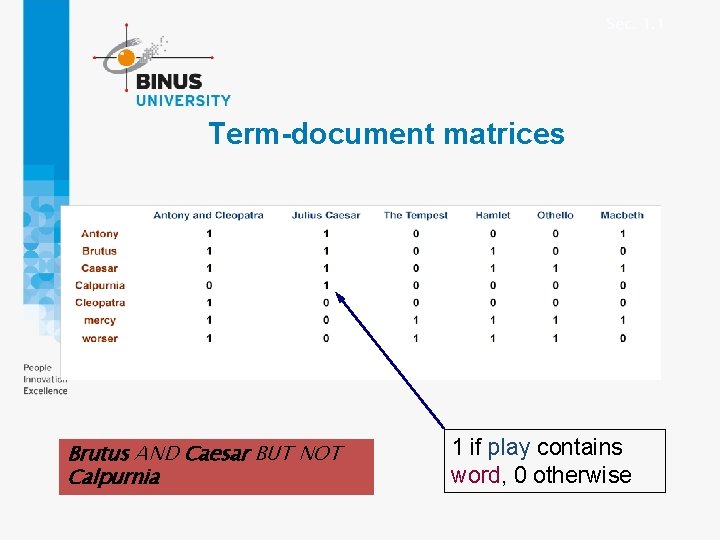

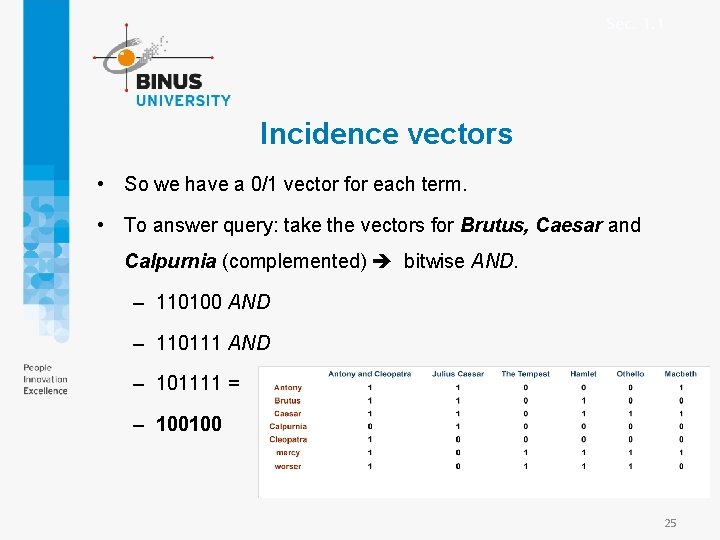

Term-document matrices
Query: Brutus AND Caesar BUT NOT Calpurnia
Each entry is 1 if the play contains the word, 0 otherwise

Incidence vectors
• So we have a 0/1 vector for each term.
• To answer the query: take the vectors for Brutus, Caesar, and Calpurnia (complemented), then combine them with a bitwise AND.
  110100 AND 110111 AND 101111 = 100100
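
The bitwise AND above can be reproduced with Python integers. This is a minimal sketch; the order of the plays in `plays` is an assumption (the column order of the usual textbook version of this example), while the bit vectors are the ones given on the slide.

```python
# Incidence vectors from the slide, one bit per play (leftmost bit = first play).
brutus = 0b110100
caesar = 0b110111
calpurnia = 0b010000   # its complement over six plays is 101111

NUM_PLAYS = 6
mask = (1 << NUM_PLAYS) - 1  # 0b111111, keeps the complement to six bits

# Brutus AND Caesar AND NOT Calpurnia
result = brutus & caesar & (~calpurnia & mask)
bits = format(result, "06b")
print(bits)  # 100100

# Assumed column order of the term-document matrix.
plays = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]
matches = [play for play, bit in zip(plays, bits) if bit == "1"]
print(matches)  # ['Antony and Cleopatra', 'Hamlet']
```

The mask matters because `~` on a Python integer produces a negative number; masking restricts the complement to the six plays.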

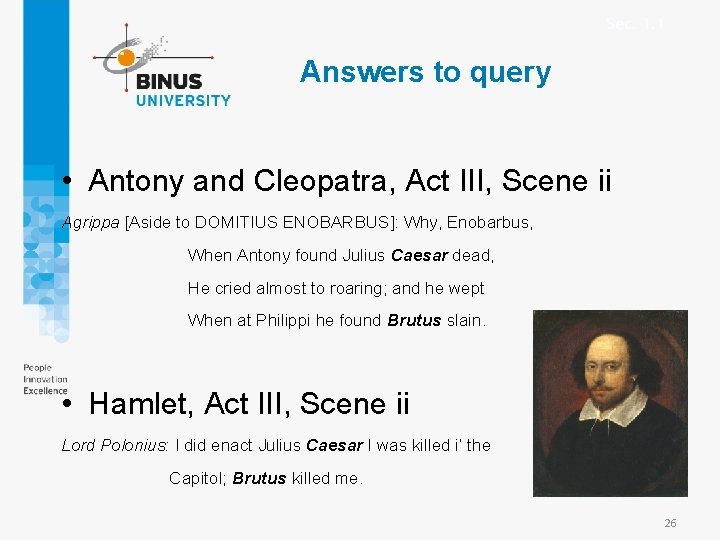

Answers to query
• Antony and Cleopatra, Act III, Scene ii
  Agrippa [Aside to DOMITIUS ENOBARBUS]: Why, Enobarbus, When Antony found Julius Caesar dead, He cried almost to roaring; and he wept When at Philippi he found Brutus slain.
• Hamlet, Act III, Scene ii
  Lord Polonius: I did enact Julius Caesar I was killed i’ the Capitol; Brutus killed me.

Information Retrieval
• IR scoring functions
  – Instead of using the Boolean model, most IR systems use models based on the statistics of word counts
    • E.g., the BM25 scoring function
  – A scoring function takes a document and a query and returns a numeric score (relevance score)
  – In the BM25 function, the score is a linear weighted combination of scores for each of the words in the query

Information Retrieval
• BM25 function
  – Three factors affect the weight of a query term
    • The frequency with which the query term appears in the document (TF = term frequency)
    • The inverse document frequency of the term (IDF)
    • The length of the document
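
How these factors combine can be sketched in code. This is a minimal illustration of the commonly published BM25 form, with term frequency, inverse document frequency, and a document-length normalization; the parameter values `k=2.0` and `b=0.75` are typical defaults assumed here, and the toy corpus is hypothetical.

```python
import math

def bm25_score(query_terms, doc_tokens, corpus, k=2.0, b=0.75):
    """Score one tokenized document against a query (sketch; k and b are assumed defaults)."""
    n_docs = len(corpus)
    avg_len = sum(len(d) for d in corpus) / n_docs
    score = 0.0
    for term in query_terms:
        tf = doc_tokens.count(term)                      # term frequency in this document
        df = sum(1 for d in corpus if term in d)         # number of documents containing the term
        idf = math.log((n_docs - df + 0.5) / (df + 0.5)) # can be negative for very common terms
        length_norm = 1 - b + b * len(doc_tokens) / avg_len
        score += idf * tf * (k + 1) / (tf + k * length_norm)
    return score

# Hypothetical toy corpus of tokenized documents.
corpus = [
    ["brutus", "killed", "caesar"],
    ["caesar", "was", "ambitious"],
    ["the", "noble", "brutus"],
    ["full", "fathom", "five"],
    ["double", "double", "toil", "and", "trouble"],
]
scores = [bm25_score(["brutus"], doc, corpus) for doc in corpus]
print(scores)
```

Documents that do not contain the query term score zero, and among documents with the same term frequency a longer document scores lower because of the length normalization.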

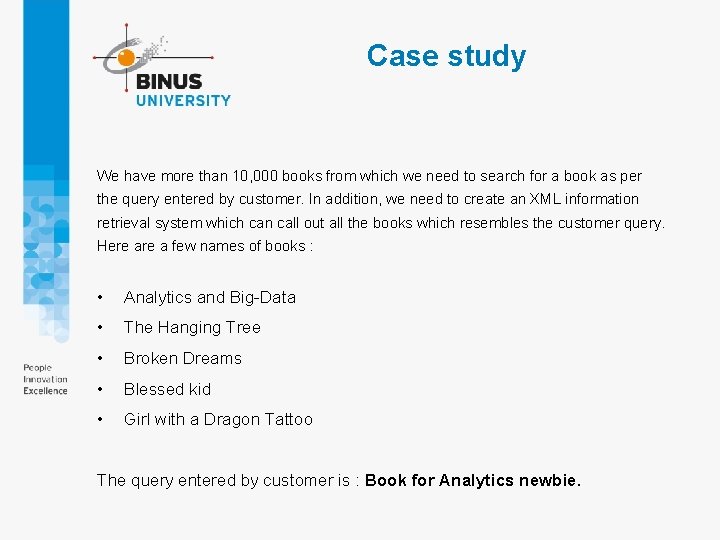

Case study
We have more than 10,000 books, and we need to find a book that matches the query entered by a customer. In addition, we need to create an XML information retrieval system that can return all the books that resemble the customer query. Here are a few of the book titles:
• Analytics and Big-Data
• The Hanging Tree
• Broken Dreams
• Blessed kid
• Girl with a Dragon Tattoo
The query entered by the customer is: Book for Analytics newbie.

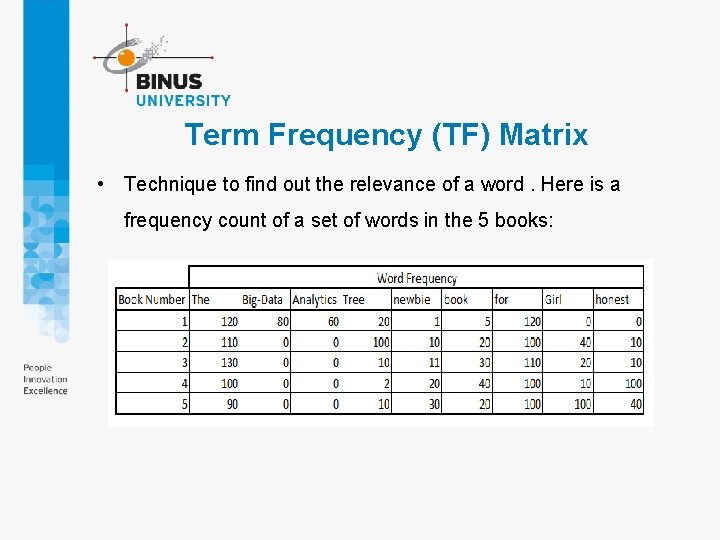

Term Frequency (TF) Matrix
• A technique for estimating how relevant a word is to a document. Here is a frequency count of a set of words in the 5 books:

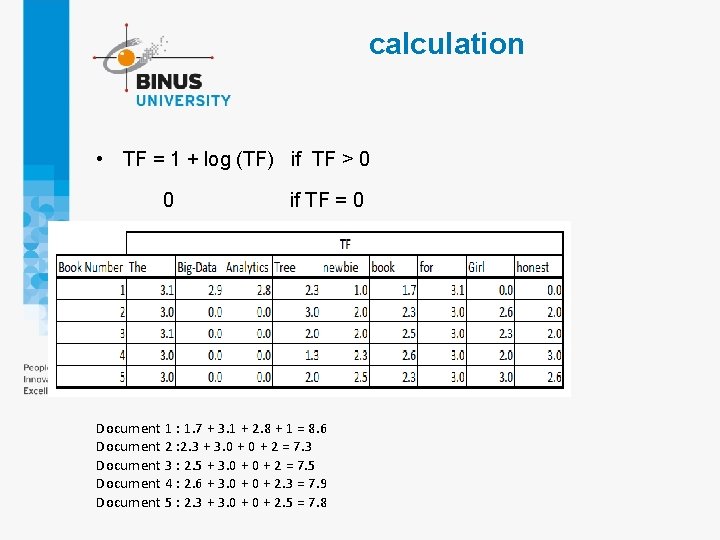

Calculation
• Weighted TF = 1 + log(TF) if TF > 0, and 0 if TF = 0
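
The log-scaled term frequency can be written as a small function. This is a minimal sketch; the slide does not state the base of the logarithm, so base 10 is an assumption here, and `tf_weight` is a name chosen for this example.

```python
import math

def tf_weight(tf):
    """Return 1 + log10(tf) for tf > 0 and 0 for tf == 0 (base 10 is an assumption)."""
    return 1 + math.log10(tf) if tf > 0 else 0.0

print(tf_weight(0))    # 0.0
print(tf_weight(1))    # 1.0
print(tf_weight(10))   # 2.0
print(tf_weight(100))  # 3.0
```

The logarithm dampens raw counts: a word that occurs 100 times is treated as three times as important as a word that occurs once, not 100 times as important.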

Calculation
Document 1: 1.7 + 3.1 + 2.8 + 1 = 8.6
Document 2: 2.3 + 3.0 + 2 = 7.3
Document 3: 2.5 + 3.0 + 2 = 7.5
Document 4: 2.6 + 3.0 + 2.3 = 7.9
Document 5: 2.3 + 3.0 + 2.5 = 7.8
The result suggests that Document 1 is the most relevant to display for the query, but we still cannot draw a firm conclusion: Documents 4 and 5 score close to Document 1, so they might turn out to be relevant too.

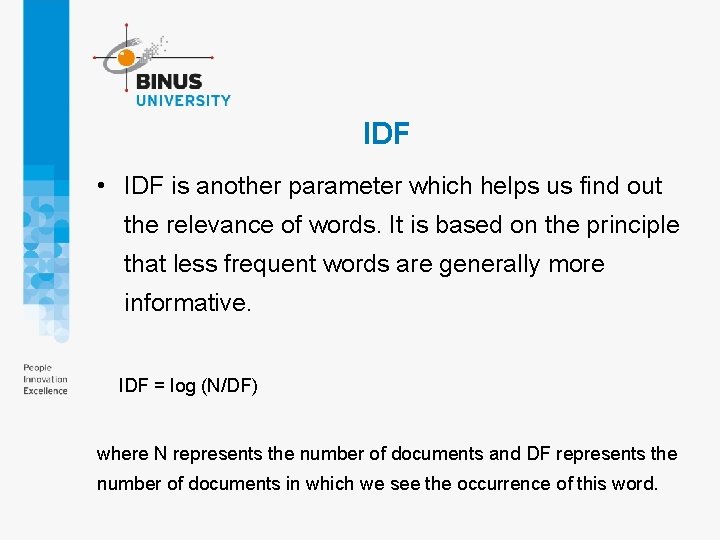

IDF
• IDF is another parameter that helps us find out the relevance of words. It is based on the principle that less frequent words are generally more informative.
  IDF = log(N/DF)
  where N is the number of documents and DF is the number of documents in which the word occurs.
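
The IDF formula can be sketched directly. This is a minimal illustration, assuming a base-10 logarithm (the slide does not state the base) and a collection of N = 5 documents as in the case study; the function name `idf` is chosen for this example.

```python
import math

def idf(num_docs, doc_freq):
    """IDF = log(N / DF); base 10 is an assumption."""
    return math.log10(num_docs / doc_freq)

N = 5  # five books in the case study
print(idf(N, 5))            # 0.0: a word found in every document carries no information
print(round(idf(N, 1), 3))  # 0.699: a word found in only one document
```

A word that appears in every document gets a weight of zero, which is why very common words contribute nothing to the ranking.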

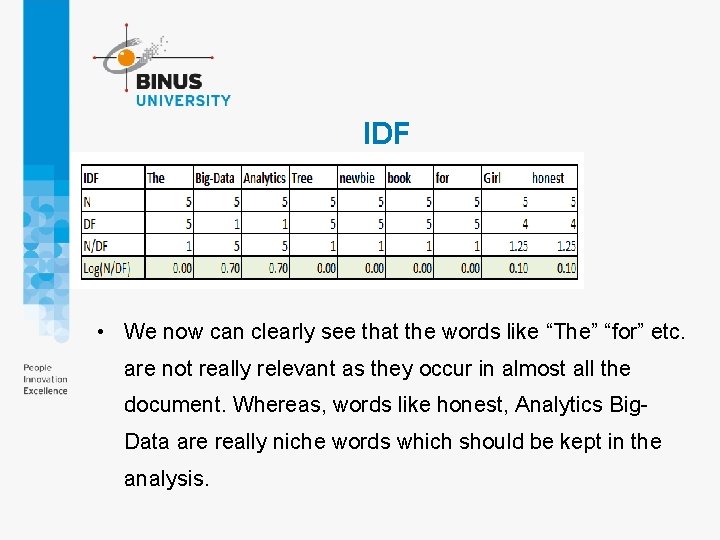

IDF
• We can now clearly see that words like “The”, “for”, etc. are not really relevant, as they occur in almost all the documents, whereas words like honest, Analytics, and Big-Data are niche words that should be kept in the analysis.

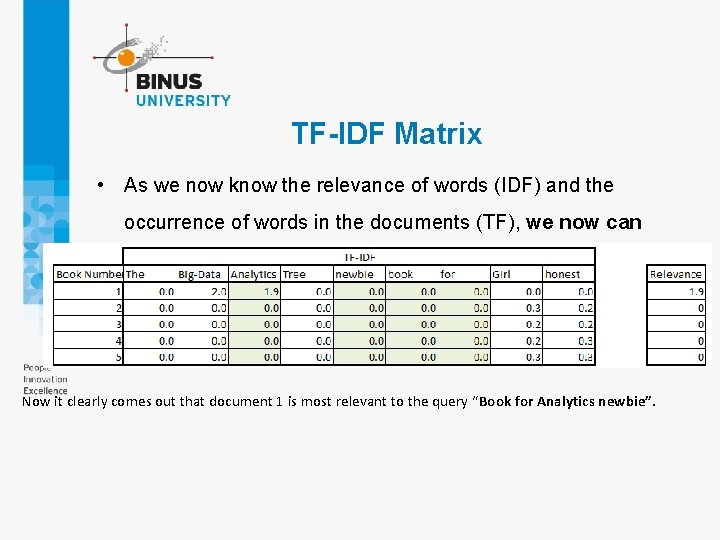

TF-IDF Matrix
• Now that we know the relevance of words (IDF) and the occurrence of words in the documents (TF), we can multiply the two. From the product we can find the subject of each document, and then the similarity of the query to the document. It now comes out clearly that Document 1 is the most relevant to the query “Book for Analytics newbie”.
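
The multiplication of TF and IDF can be sketched end to end. This is a minimal illustration under stated assumptions: the five book titles stand in for the full book texts (which are not available here), the logarithm is base 10, and the helper names `tokenize`, `tf_weight`, `idf`, and `score` are chosen for this example.

```python
import math
from collections import Counter

titles = [
    "Analytics and Big-Data",
    "The Hanging Tree",
    "Broken Dreams",
    "Blessed kid",
    "Girl with a Dragon Tattoo",
]
query = "Book for Analytics newbie"

def tokenize(text):
    """Assumed tokenizer: lowercase, treat hyphens as spaces, split on whitespace."""
    return text.lower().replace("-", " ").split()

docs = [Counter(tokenize(title)) for title in titles]  # term counts per document
N = len(docs)

def tf_weight(tf):
    return 1 + math.log10(tf) if tf > 0 else 0.0

def idf(term):
    df = sum(1 for doc in docs if term in doc)
    return math.log10(N / df) if df else 0.0  # unseen terms contribute nothing

def score(query_text, doc):
    """Sum of TF weight times IDF over the query terms."""
    return sum(tf_weight(doc[term]) * idf(term) for term in tokenize(query_text))

scores = [score(query, doc) for doc in docs]
best = titles[scores.index(max(scores))]
print(best)  # Analytics and Big-Data
```

With titles only, "analytics" is the single query term that matches any document, so the first title is the only one with a non-zero score.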

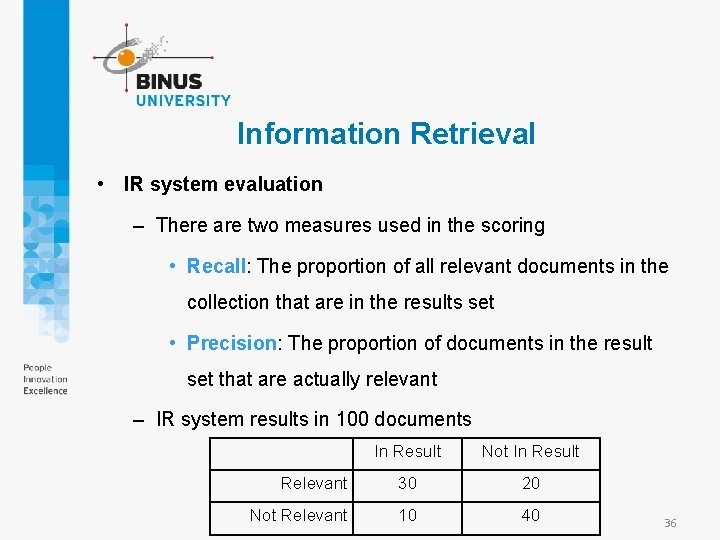

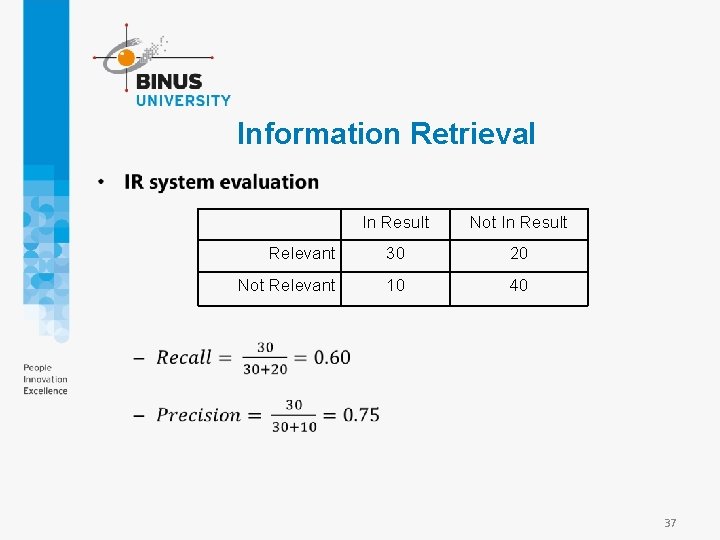

Information Retrieval
• IR system evaluation
  – Two measures are used in the scoring
    • Recall: the proportion of all relevant documents in the collection that are in the result set
    • Precision: the proportion of documents in the result set that are actually relevant
  – Example: an IR system evaluated on a collection of 100 documents

| | In Result | Not In Result |
|---|---|---|
| Relevant | 30 | 20 |
| Not Relevant | 10 | 40 |

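Information Retrieval
• With 30 relevant and 10 non-relevant documents in the result set, and 20 relevant documents left out of it:
  – Precision = 30 / (30 + 10) = 0.75
  – Recall = 30 / (30 + 20) = 0.60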
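
Precision and recall for the example counts can be computed as follows. This is a minimal sketch; the variable names are chosen for this illustration, and the four counts are the ones from the example table.

```python
# Counts from the example: rows are relevant / not relevant,
# columns are in result / not in result.
relevant_in_result = 30
relevant_not_in_result = 20
nonrelevant_in_result = 10
nonrelevant_not_in_result = 40

# Precision: share of the result set that is relevant.
precision = relevant_in_result / (relevant_in_result + nonrelevant_in_result)

# Recall: share of all relevant documents that made it into the result set.
recall = relevant_in_result / (relevant_in_result + relevant_not_in_result)

print(precision)  # 0.75
print(recall)     # 0.6
```

The 40 non-relevant documents that were correctly left out do not enter either measure.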

Information Retrieval
• PageRank algorithm
  – It was one of the two original ideas that set Google’s search apart from other Web search engines (1997)
  – For the query [IBM], how do we ensure that the IBM home page (ibm.com) is the first result, even if other pages mention the word IBM more frequently?
  – The idea is that ibm.com has many in-links (links pointing to ibm.com), so it should be ranked near the top of the results.

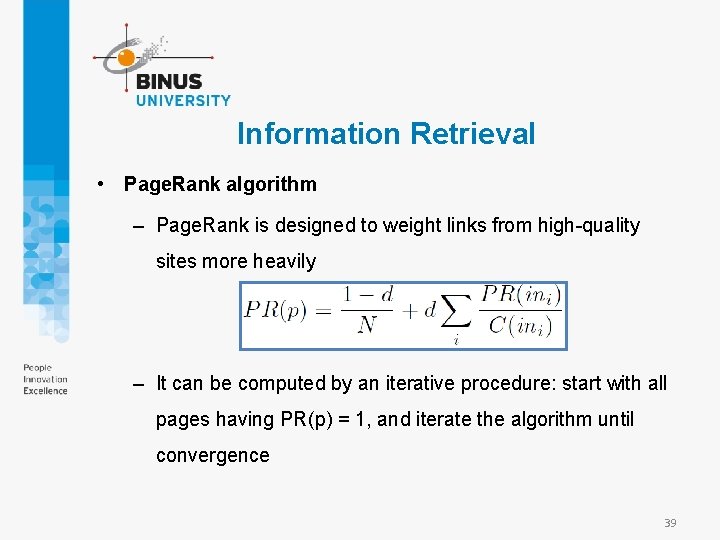

Information Retrieval
• PageRank algorithm
  – PageRank is designed to weight links from high-quality sites more heavily
  – It can be computed by an iterative procedure: start with all pages having PR(p) = 1, and iterate the algorithm until convergence
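
The iterative procedure can be sketched as follows. This is a minimal illustration, assuming the common update rule PR(p) = (1 − d)/N + d · Σ PR(q)/C(q) over the pages q that link to p, where C(q) is the number of out-links of q; the damping factor `d=0.85`, the tolerance, and the tiny link graph are assumptions made for this example.

```python
def pagerank(links, d=0.85, tol=1e-9, max_iter=200):
    """Iterate PR(p) = (1 - d)/N + d * sum(PR(q)/C(q)) until the values stop changing.

    `links` maps each page to the list of pages it links to. Sketch only:
    pages without out-links are not given any special treatment.
    """
    pages = sorted(set(links) | {q for outs in links.values() for q in outs})
    n = len(pages)
    out_count = {p: len(links.get(p, ())) for p in pages}
    pr = {p: 1.0 for p in pages}  # start with PR(p) = 1 for every page
    for _ in range(max_iter):
        new_pr = {}
        for p in pages:
            incoming = sum(pr[q] / out_count[q] for q in pages if p in links.get(q, ()))
            new_pr[p] = (1 - d) / n + d * incoming
        converged = max(abs(new_pr[p] - pr[p]) for p in pages) < tol
        pr = new_pr
        if converged:
            break
    return pr

# Hypothetical link graph: three pages link to ibm.com, two link to page "a".
links = {
    "ibm.com": ["a"],
    "a": ["ibm.com"],
    "b": ["ibm.com"],
    "c": ["ibm.com", "a"],
}
ranks = pagerank(links)
print(max(ranks, key=ranks.get))  # ibm.com
```

A page passes its own rank on to the pages it links to, divided by its number of out-links, so a link from a highly ranked page counts for more than a link from an obscure one.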

Information Retrieval
• Question answering
  – A somewhat different task, in which the query really is a question, and the answer is not a ranked list of documents but rather a short response
  – Based on the premise that the question is likely to be answered on many web pages, question answering is treated as a problem of precision (accuracy), not recall (completeness)
    • We only have to find the answer once

Information Extraction
• Information extraction is the process of acquiring knowledge by skimming a text and looking for occurrences of a particular class of object and for relationships among objects
  – E.g., extracting instances of addresses from web pages
• In a limited domain, it can be done with high accuracy
• In a general domain, more complex linguistic models and learning techniques are necessary

Information Extraction
• The simplest type of information extraction system is an attribute-based extraction system
  – It assumes that the entire text refers to a single object
  – E.g., the problem of extracting from the text “IBM ThinkBook 970. Our price: $399.00” the attributes {Manufacturer=IBM, Model=ThinkBook 970, Price=$399.00}
• We can address the problem by defining a template for each attribute
  – A template is defined by a finite state automaton, for example a regex (regular expression)
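
A template for one attribute can be sketched with a regular expression. This is a minimal illustration for the price attribute only; the pattern (a dollar sign, digits, and optional cents) is an assumption chosen to match the example text, not necessarily the template used in the course.

```python
import re

# Assumed price template: "$", one or more digits, optionally "." and two digits.
PRICE = re.compile(r"[$][0-9]+([.][0-9][0-9])?")

text = "IBM ThinkBook 970. Our price: $399.00"

match = PRICE.search(text)
attributes = {"Price": match.group(0)} if match else {}
print(attributes)  # {'Price': '$399.00'}
```

Templates for the manufacturer and the model would need their own patterns, for example a list of known manufacturer names.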

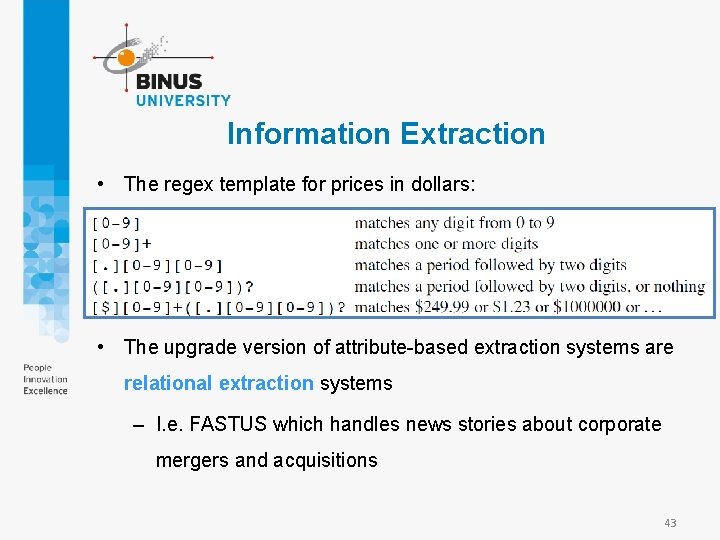

Information Extraction
• The regex template for prices in dollars:
• A step up from attribute-based extraction systems are relational extraction systems
  – E.g., FASTUS, which handles news stories about corporate mergers and acquisitions

Information Extraction
• FASTUS consists of five stages:
  – Tokenization
  – Complex-word handling
  – Basic-group handling
  – Complex-phrase handling
  – Structure merging

Information Extraction
• A different application of extraction technology is building a large knowledge base of facts from a corpus
• This is different in three ways:
  – First, it is open-ended: we want to acquire facts about all types of domains, not just one specific domain
  – Second, with a large corpus, this task is dominated by precision, not recall
  – Third, the results can be statistical aggregates gathered from multiple sources

Information Extraction
• Machine reading
  – A machine that behaves more like a human reader who learns from the text itself
  – A representative machine-reading system is TEXTRUNNER (Banko and Etzioni, 2008)
    • E.g., from the parse of the sentence “Einstein received the Nobel Prize in 1921,” TEXTRUNNER is able to extract the relation (“Einstein”, “received”, “Nobel Prize”)

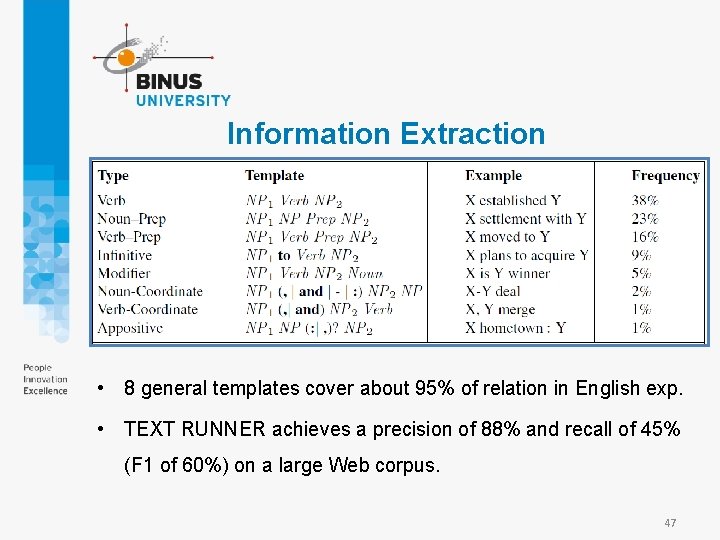

Information Extraction
• 8 general templates cover about 95% of the ways relations are expressed in English.
• TEXTRUNNER achieves a precision of 88% and a recall of 45% (F1 of 60%) on a large Web corpus.
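
The F1 figure follows from the precision and recall values. This is a minimal sketch, assuming the standard definition of F1 as the harmonic mean of precision and recall; the function name `f1_score` is chosen for this example.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Precision 88% and recall 45%, as reported for TEXTRUNNER.
print(round(f1_score(0.88, 0.45), 2))  # 0.6
```

The harmonic mean sits closer to the lower of the two values, so the modest recall pulls F1 well below the precision.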

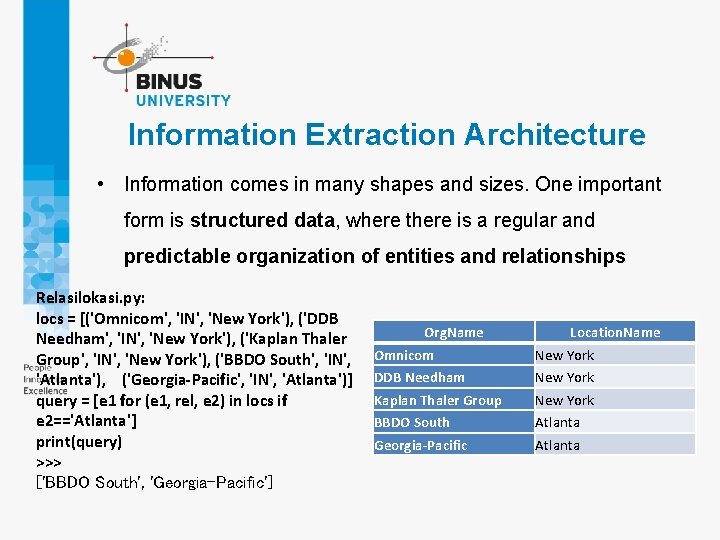

Information Extraction Architecture
• Information comes in many shapes and sizes. One important form is structured data, where there is a regular and predictable organization of entities and relationships

Relasilokasi.py:

    # Each tuple is (organization, relation, location)
    locs = [('Omnicom', 'IN', 'New York'),
            ('DDB Needham', 'IN', 'New York'),
            ('Kaplan Thaler Group', 'IN', 'New York'),
            ('BBDO South', 'IN', 'Atlanta'),
            ('Georgia-Pacific', 'IN', 'Atlanta')]
    # Select the organizations located in Atlanta
    query = [e1 for (e1, rel, e2) in locs if e2 == 'Atlanta']
    print(query)

    >>> ['BBDO South', 'Georgia-Pacific']

| OrgName | LocationName |
|---|---|
| Omnicom | New York |
| DDB Needham | New York |
| Kaplan Thaler Group | New York |
| BBDO South | Atlanta |
| Georgia-Pacific | Atlanta |

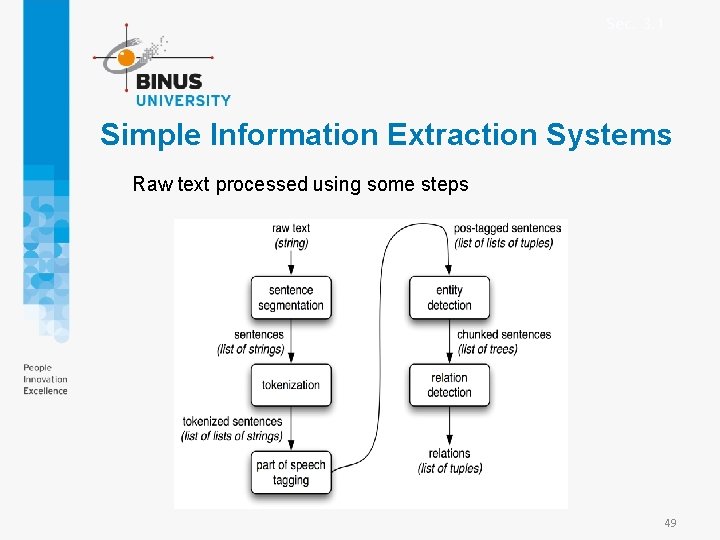

Simple Information Extraction Systems
Raw text is processed in a sequence of steps

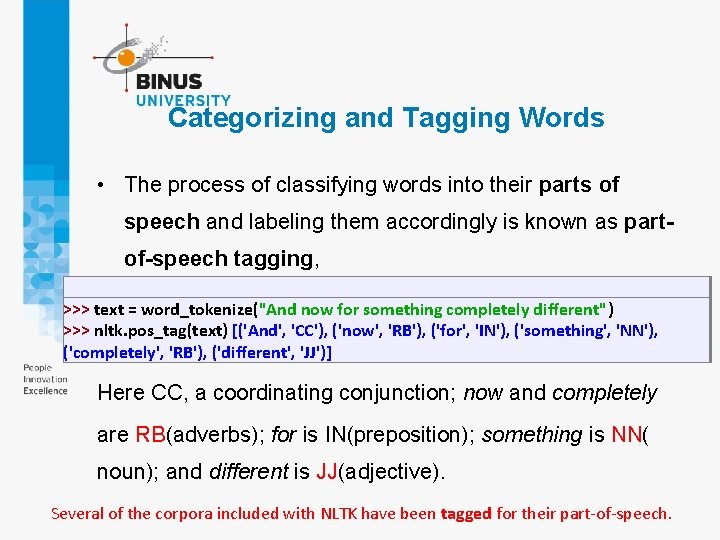

Categorizing and Tagging Words
• The process of classifying words into their parts of speech and labeling them accordingly is known as part-of-speech tagging

    >>> import nltk
    >>> from nltk import word_tokenize
    >>> text = word_tokenize("And now for something completely different")
    >>> nltk.pos_tag(text)
    [('And', 'CC'), ('now', 'RB'), ('for', 'IN'), ('something', 'NN'), ('completely', 'RB'), ('different', 'JJ')]

Here And is CC, a coordinating conjunction; now and completely are RB (adverbs); for is IN (a preposition); something is NN (a noun); and different is JJ (an adjective). Several of the corpora included with NLTK have been tagged for their part of speech.

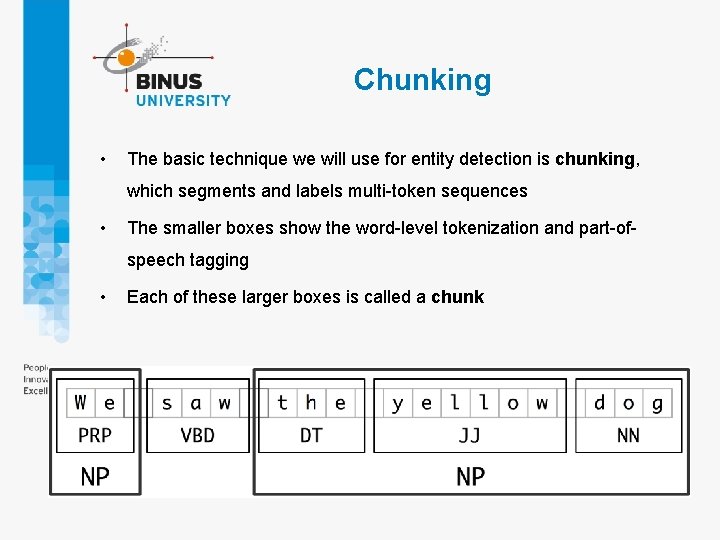

Chunking
• The basic technique we will use for entity detection is chunking, which segments and labels multi-token sequences
• The smaller boxes show the word-level tokenization and part-of-speech tagging
• Each of the larger boxes is called a chunk

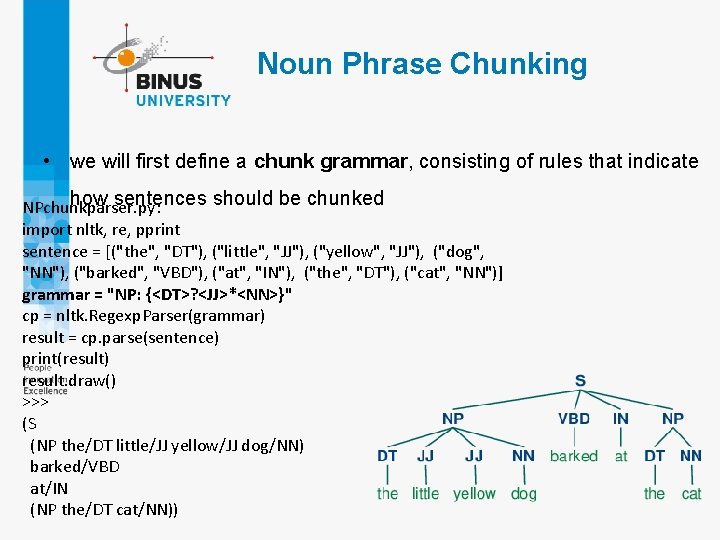

Noun Phrase Chunking
• We will first define a chunk grammar, consisting of rules that indicate how sentences should be chunked

NPchunkparser.py:

    import nltk

    # A sentence that is already tokenized and part-of-speech tagged
    sentence = [("the", "DT"), ("little", "JJ"), ("yellow", "JJ"), ("dog", "NN"),
                ("barked", "VBD"), ("at", "IN"), ("the", "DT"), ("cat", "NN")]
    # An NP chunk is an optional determiner, any number of adjectives, then a noun
    grammar = "NP: {<DT>?<JJ>*<NN>}"
    cp = nltk.RegexpParser(grammar)
    result = cp.parse(sentence)
    print(result)
    result.draw()  # opens a window showing the chunk tree

    >>> (S
          (NP the/DT little/JJ yellow/JJ dog/NN)
          barked/VBD
          at/IN
          (NP the/DT cat/NN))

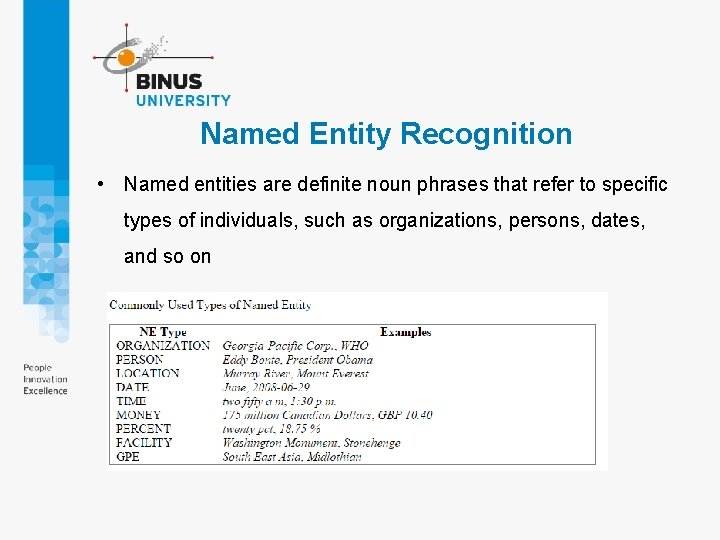

Named Entity Recognition
• Named entities are definite noun phrases that refer to specific types of individuals, such as organizations, persons, dates, and so on.

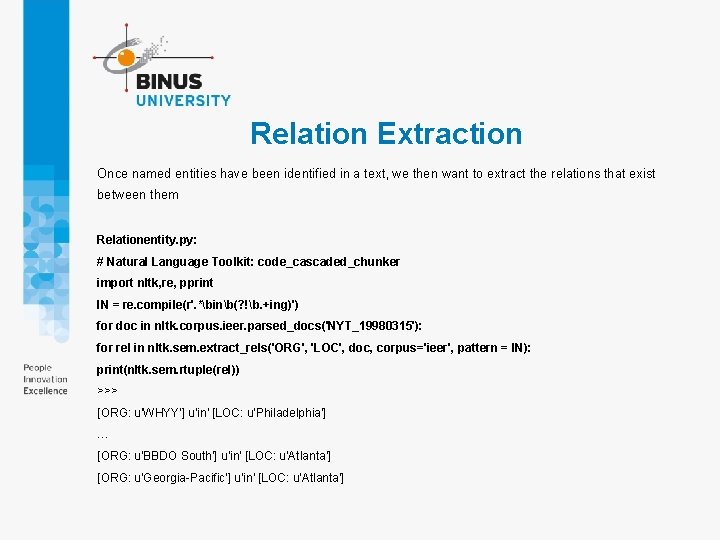

Relation Extraction
Once named entities have been identified in a text, we then want to extract the relations that exist between them.

Relationentity.py:

    # Natural Language Toolkit: code_cascaded_chunker
    import re
    import nltk

    # Match the word "in", unless it is followed by a gerund (e.g. "in supervising")
    IN = re.compile(r'.*\bin\b(?!\b.+ing)')
    for doc in nltk.corpus.ieer.parsed_docs('NYT_19980315'):
        for rel in nltk.sem.extract_rels('ORG', 'LOC', doc, corpus='ieer', pattern=IN):
            print(nltk.sem.rtuple(rel))

    >>> [ORG: 'WHYY'] 'in' [LOC: 'Philadelphia']
    …
    [ORG: 'BBDO South'] 'in' [LOC: 'Atlanta']
    [ORG: 'Georgia-Pacific'] 'in' [LOC: 'Atlanta']

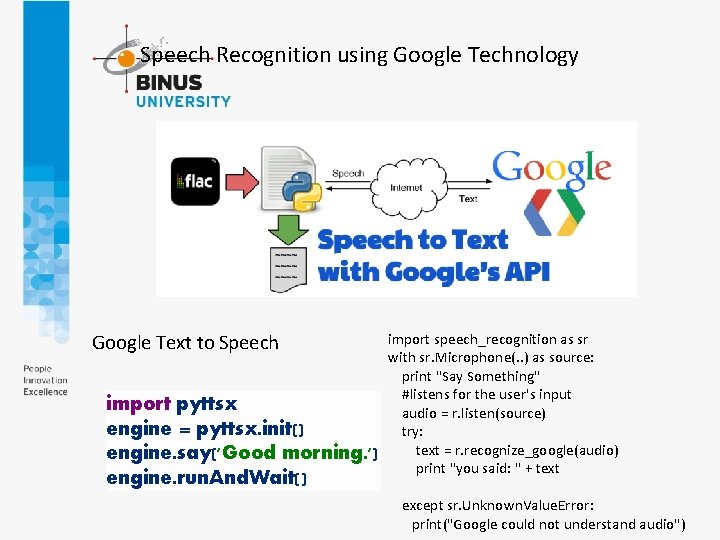

Speech Recognition using Google Technology

Text to speech:

    import pyttsx  # on Python 3, the pyttsx3 fork provides the same calls

    engine = pyttsx.init()
    engine.say('Good morning.')
    engine.runAndWait()

Speech to text:

    import speech_recognition as sr

    r = sr.Recognizer()  # not shown in the original snippet, but required by r.listen
    with sr.Microphone() as source:  # microphone arguments were elided in the original
        print("Say something")
        audio = r.listen(source)  # listens for the user's input
    try:
        text = r.recognize_google(audio)
        print("You said: " + text)
    except sr.UnknownValueError:
        print("Google could not understand audio")

References
• Stuart Russell, Peter Norvig. 2010. Artificial Intelligence: A Modern Approach. Pearson Education. New Jersey. ISBN: 9780132071482
