
Course: Artificial Intelligence
Effective Period: September 2018
Linear Regression and Classification
Session 16

Learning Outcomes
At the end of this session, students will be able to:
• LO 5: Apply various learning algorithms to solve problems

Outline
1. Linear Regression
2. Gradient Descent
3. Linear Classification

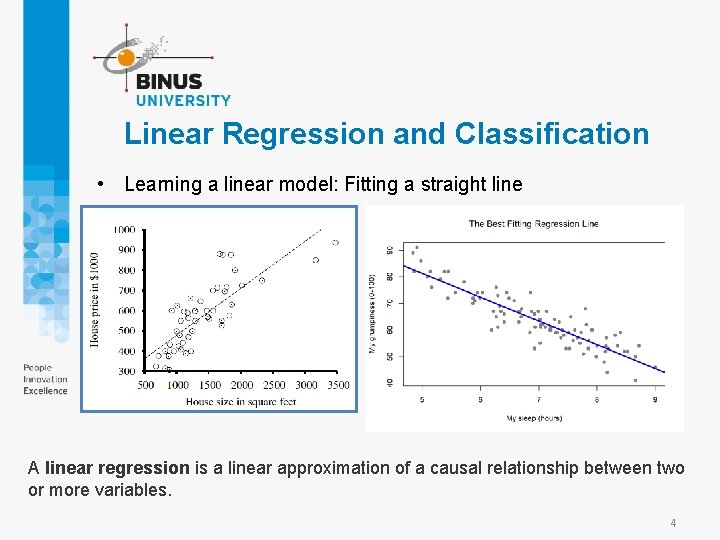

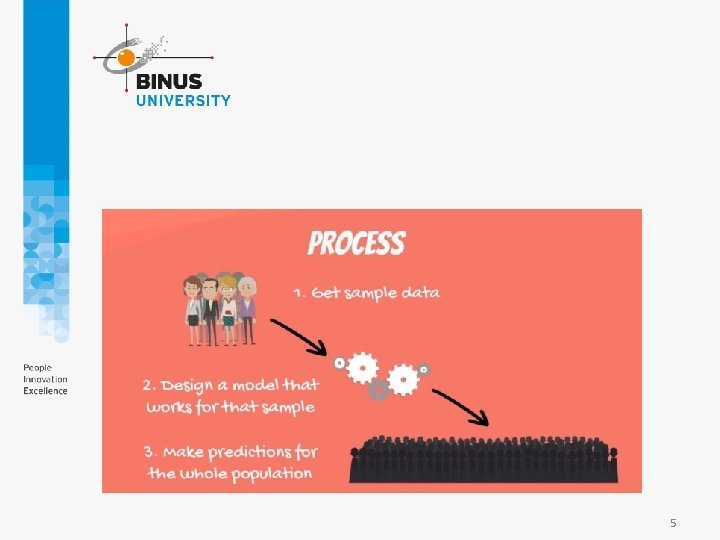

Linear Regression and Classification
• Learning a linear model: fitting a straight line
Linear regression is a linear approximation of the relationship between a dependent variable and one or more explanatory variables.


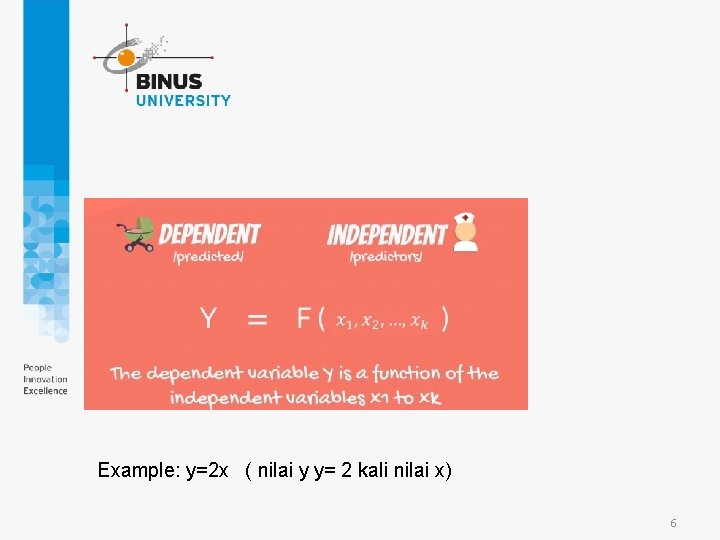

Example: $y = 2x$ (the value of y is 2 times the value of x)

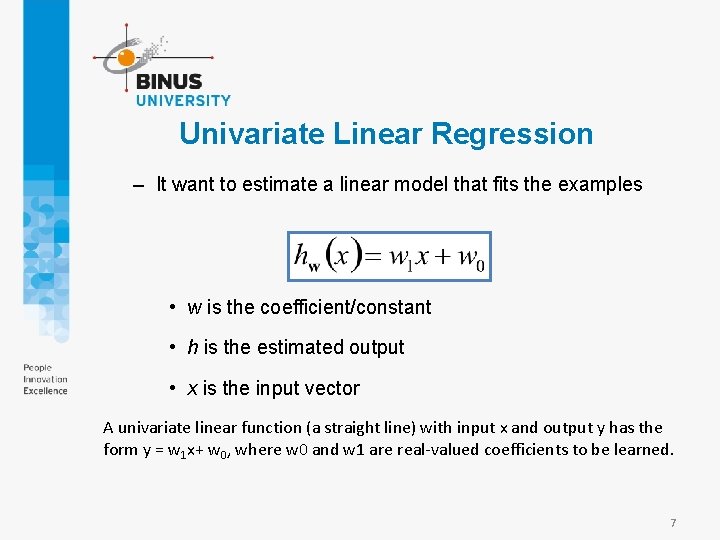

Univariate Linear Regression
– We want to estimate a linear model that fits the examples
• w holds the coefficients (weights)
• h is the estimated output
• x is the input
A univariate linear function (a straight line) with input x and output y has the form $y = w_1 x + w_0$, where $w_0$ and $w_1$ are real-valued coefficients to be learned.

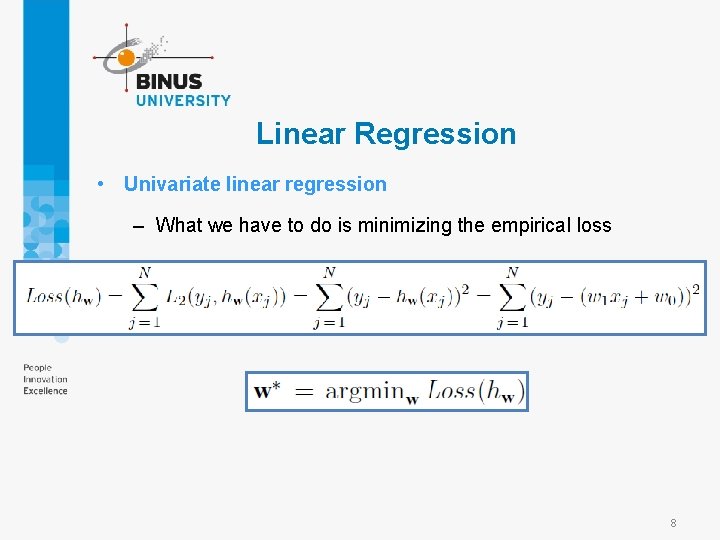

Linear Regression
• Univariate linear regression
  – The goal is to minimize the empirical loss
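A minimal sketch of the quantity being minimized, assuming the empirical loss is the squared error summed over the training examples (the usual choice for univariate linear regression). The function name `empirical_loss` and the sample data are illustrative and do not come from the slides.

```python
import numpy as np

def empirical_loss(w0, w1, x, y):
    """Sum of squared errors of the line h(x) = w1*x + w0 on the examples (x, y)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    residuals = y - (w1 * x + w0)
    return float(np.sum(residuals ** 2))

# Hypothetical data lying exactly on y = 2x
x = [1, 2, 3, 4]
y = [2, 4, 6, 8]
loss_exact = empirical_loss(0.0, 2.0, x, y)  # the line that generated the data
loss_off = empirical_loss(0.0, 1.0, x, y)    # a line with the wrong slope
```

The better the line fits, the smaller the loss; learning amounts to searching for the weights with the smallest value.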

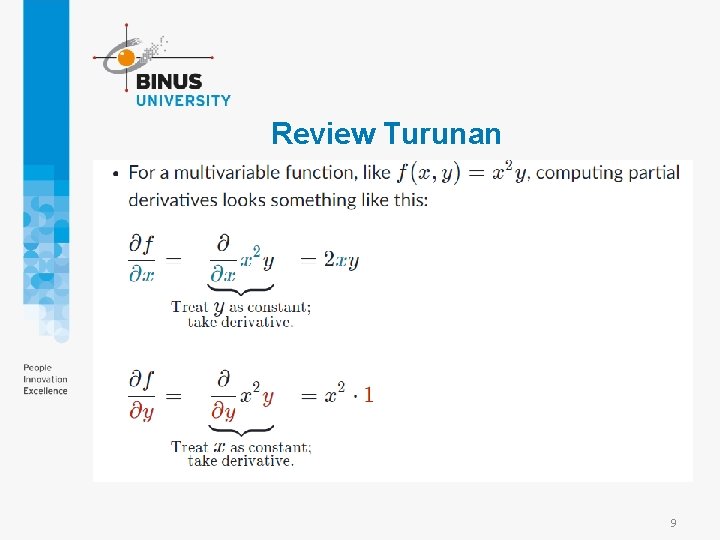

Review: Derivatives

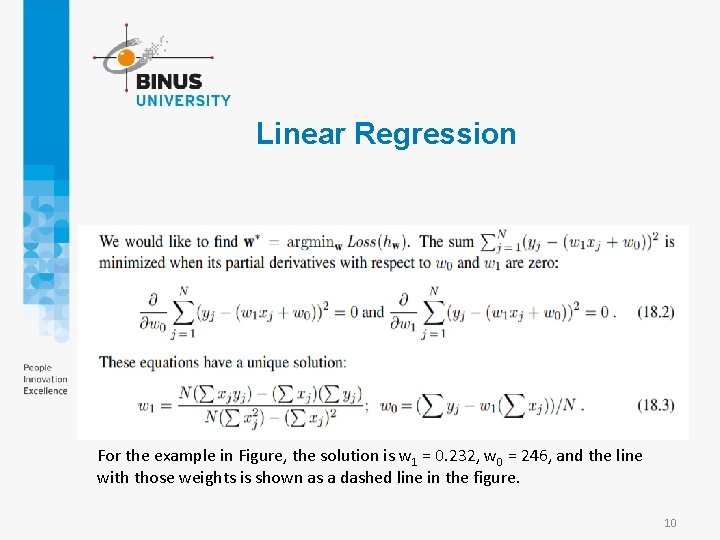

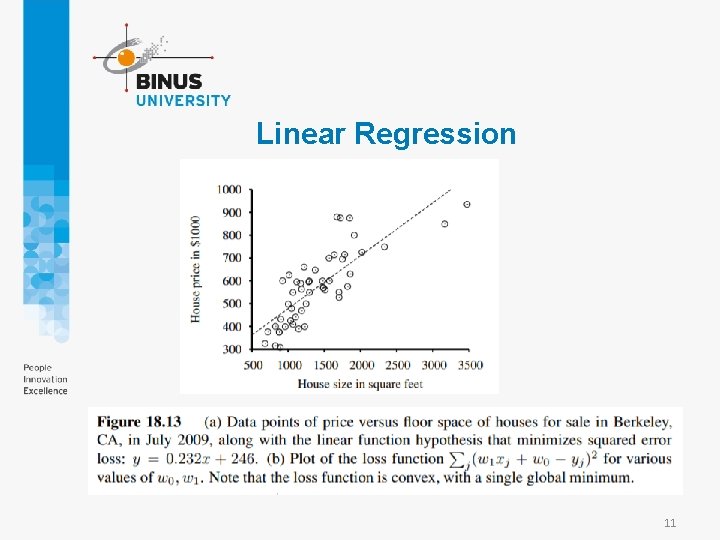

Linear Regression
For the example in the figure, the solution is $w_1 = 0.232$, $w_0 = 246$, and the line with those weights is shown as a dashed line in the figure.
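Weights like these can be obtained in closed form by setting the partial derivatives of the squared-error loss to zero. The sketch below assumes that standard least-squares solution; the helper name `fit_line` and the data are hypothetical and are not the data behind the figure.

```python
import numpy as np

def fit_line(x, y):
    """Closed-form least-squares fit of y = w1*x + w0."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    # Slope: (N*sum(xy) - sum(x)*sum(y)) / (N*sum(x^2) - (sum(x))^2)
    w1 = (n * np.sum(x * y) - np.sum(x) * np.sum(y)) / (n * np.sum(x ** 2) - np.sum(x) ** 2)
    # Intercept: (sum(y) - w1*sum(x)) / N
    w0 = (np.sum(y) - w1 * np.sum(x)) / n
    return w0, w1

# Hypothetical data lying exactly on y = 2x + 1
x = [0, 1, 2, 3]
y = [1, 3, 5, 7]
w0, w1 = fit_line(x, y)
```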

Linear Regression

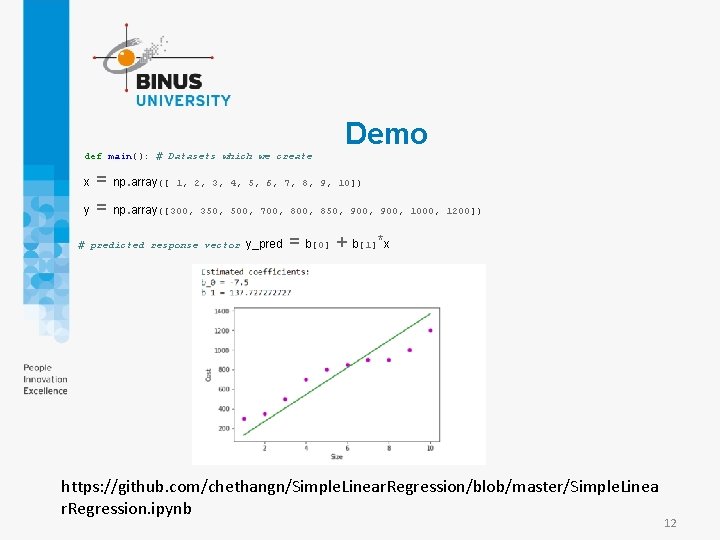

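Demo

    import numpy as np

    def main():
        # Dataset created for the demo.
        # Note: as shown here, x has 10 values but y has only 8. The two arrays
        # must have equal length; see the linked notebook for the full data.
        x = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 10])
        y = np.array([300, 350, 500, 700, 850, 900, 1000, 1200])
        # … (steps elided on the slide; b holds the estimated coefficients)
        # Predicted response vector: b[0] is the intercept, b[1] is the slope
        y_pred = b[0] + b[1]*x

https://github.com/chethangn/SimpleLinearRegression/blob/master/SimpleLinearRegression.ipynb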

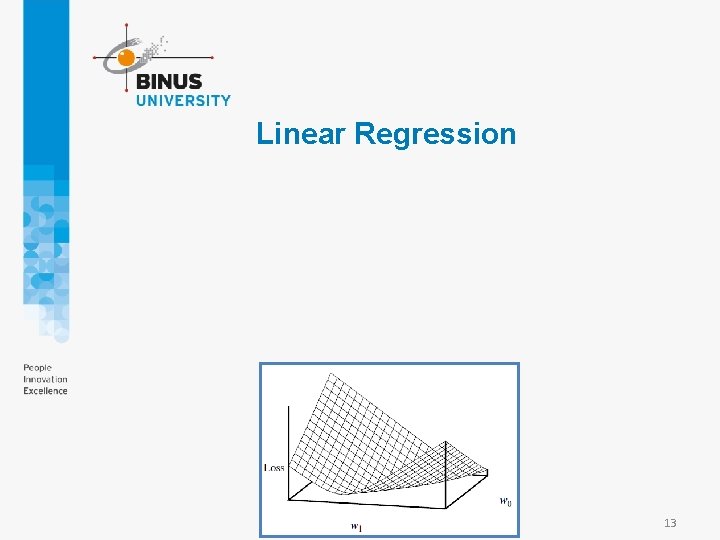

Linear Regression

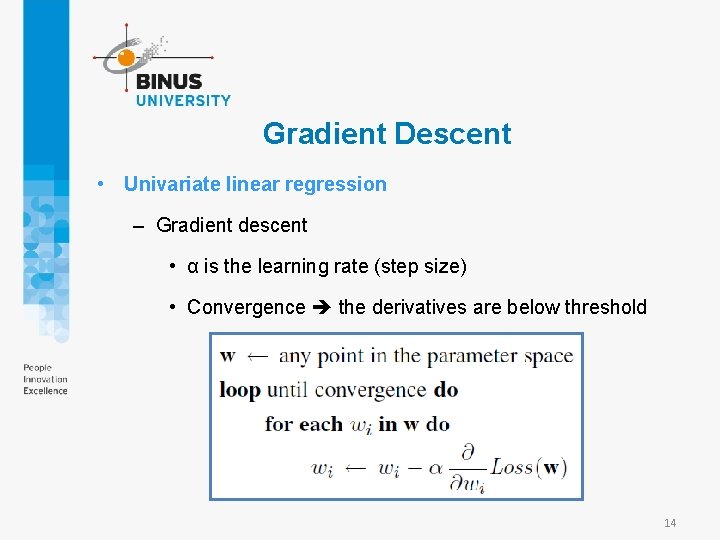

Gradient Descent
• Univariate linear regression
  – Gradient descent
• α is the learning rate (step size)
• Convergence: stop when the derivatives fall below a threshold

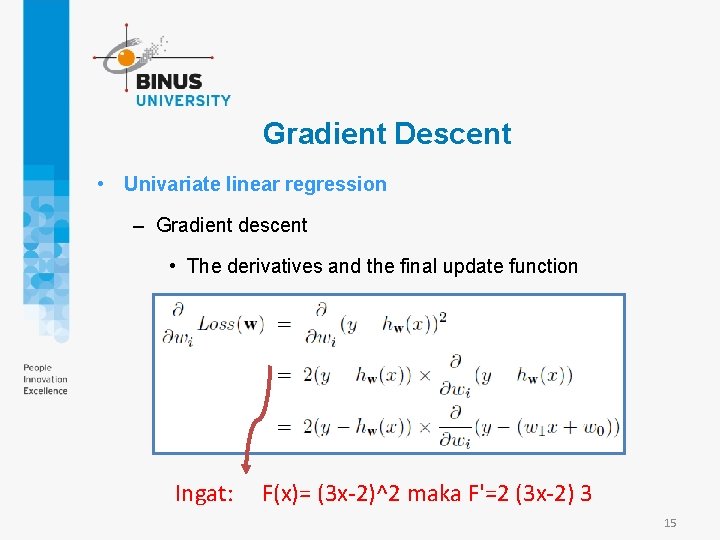

Gradient Descent
• Univariate linear regression
  – Gradient descent
• The derivatives and the final update function
Recall: if $F(x) = (3x - 2)^2$, then $F'(x) = 2(3x - 2) \cdot 3$ (chain rule).

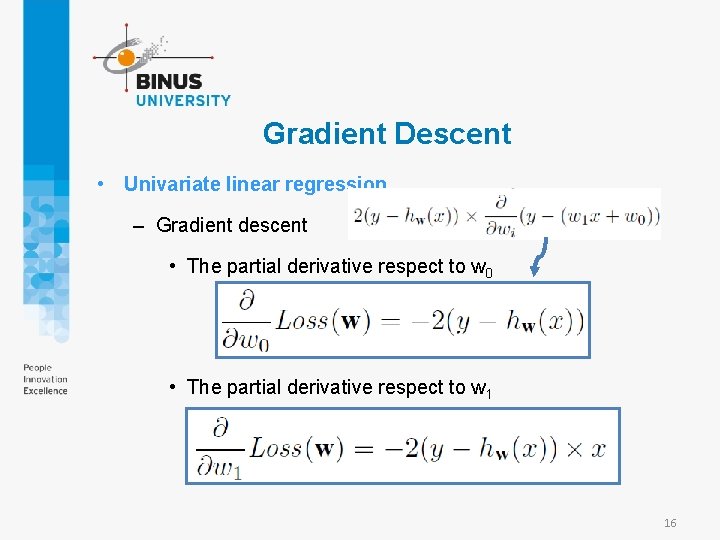

Gradient Descent
• Univariate linear regression
  – Gradient descent
• The partial derivative with respect to $w_0$
• The partial derivative with respect to $w_1$
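As a worked sketch, assuming the squared-error loss on a single example $(x, y)$ and the hypothesis $h_w(x) = w_1 x + w_0$, the chain rule gives the two partial derivatives:

$$
\begin{aligned}
\frac{\partial}{\partial w_0}\,\bigl(y - h_w(x)\bigr)^2 &= 2\,\bigl(y - h_w(x)\bigr)\cdot\frac{\partial}{\partial w_0}\bigl(y - (w_1 x + w_0)\bigr) = -2\,\bigl(y - h_w(x)\bigr) \\
\frac{\partial}{\partial w_1}\,\bigl(y - h_w(x)\bigr)^2 &= 2\,\bigl(y - h_w(x)\bigr)\cdot\frac{\partial}{\partial w_1}\bigl(y - (w_1 x + w_0)\bigr) = -2\,\bigl(y - h_w(x)\bigr)\,x
\end{aligned}
$$

Moving against the gradient therefore increases each weight in proportion to the error $y - h_w(x)$; the constant factor 2 is commonly folded into the learning rate α.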

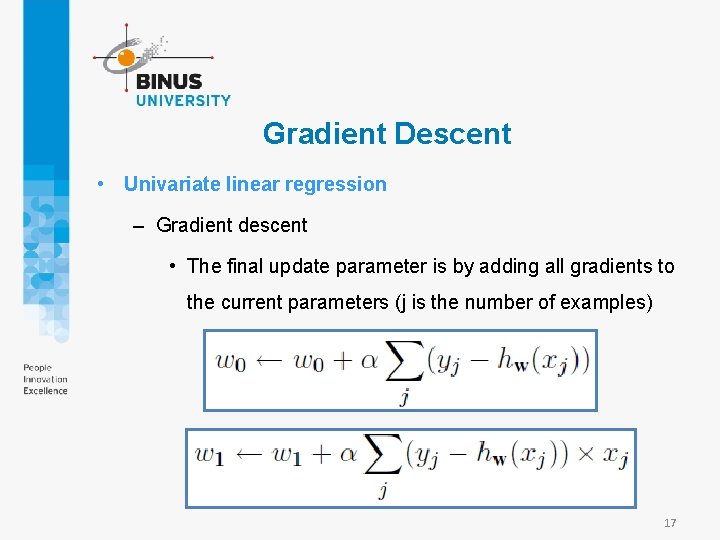

Gradient Descent
• Univariate linear regression
  – Gradient descent
• The final update adds the gradient contributions of all examples to the current parameters (j indexes the training examples)
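The batch update can be sketched as follows, assuming the squared-error loss with the constant factor 2 folded into α. The function name, default learning rate, tolerance, and data are illustrative choices and are not taken from the slides.

```python
import numpy as np

def gradient_descent(x, y, alpha=0.01, tol=1e-6, max_iters=100_000):
    """Batch gradient descent for the line h(x) = w1*x + w0."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    w0, w1 = 0.0, 0.0
    for _ in range(max_iters):
        error = y - (w1 * x + w0)      # y_j - h_w(x_j) for every example j
        grad0 = np.sum(error)          # summed contribution for w0
        grad1 = np.sum(error * x)      # summed contribution for w1
        w0 += alpha * grad0
        w1 += alpha * grad1
        # Converged when both derivatives are below the threshold
        if abs(grad0) < tol and abs(grad1) < tol:
            break
    return w0, w1

# Hypothetical data lying exactly on y = 2x + 1
x = [0, 1, 2, 3]
y = [1, 3, 5, 7]
w0, w1 = gradient_descent(x, y)
```

If α is too large the updates overshoot and diverge; if it is too small convergence is slow, so in practice α is tuned per data set.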

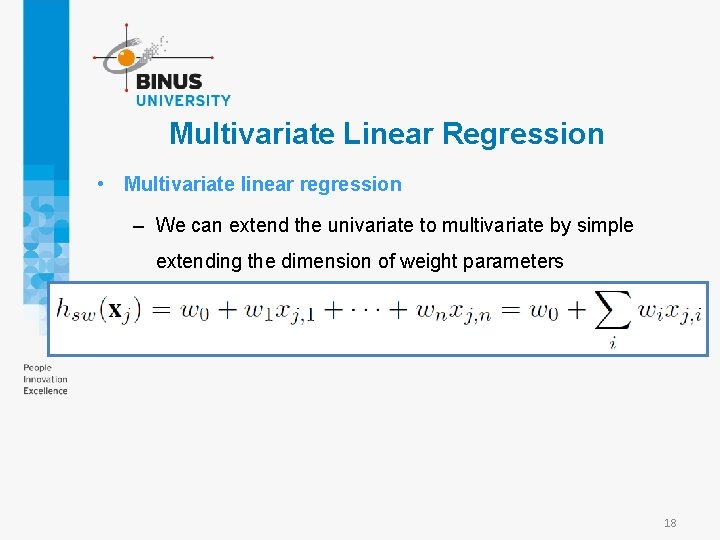

Multivariate Linear Regression
• Multivariate linear regression
  – We can extend the univariate case to the multivariate case by simply extending the dimension of the weight vector
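A minimal sketch of that extension, assuming each input is given a dummy feature fixed at 1 so that the intercept becomes just another weight and the hypothesis is the dot product of weights and inputs. The helper names and data are hypothetical.

```python
import numpy as np

def add_bias(X):
    """Prepend a column of ones so that w[0] acts as the intercept."""
    X = np.asarray(X, dtype=float)
    return np.hstack([np.ones((X.shape[0], 1)), X])

def fit_multivariate(X, y, alpha=0.1, iters=5000):
    """Batch gradient descent on squared error for h_w(x) = w . x."""
    X1 = add_bias(X)
    y = np.asarray(y, dtype=float)
    w = np.zeros(X1.shape[1])
    for _ in range(iters):
        error = y - X1 @ w                 # one error per example
        w = w + alpha * (X1.T @ error)     # same update rule, one entry per weight
    return w

# Hypothetical data generated by y = 1 + 2*x1 + 3*x2
X = [[0, 0], [1, 0], [0, 1], [1, 1]]
y = [1, 3, 4, 6]
w = fit_multivariate(X, y)
```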

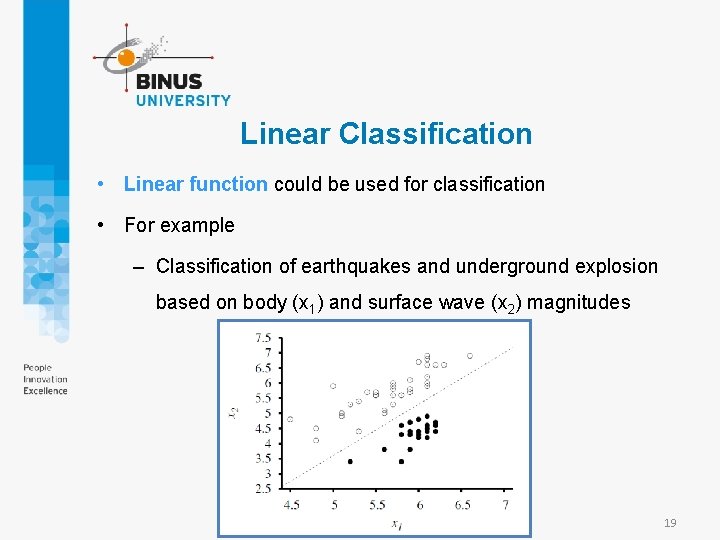

Linear Classification
• A linear function can also be used for classification
• For example
  – Classification of earthquakes and underground explosions based on body wave ($x_1$) and surface wave ($x_2$) magnitudes

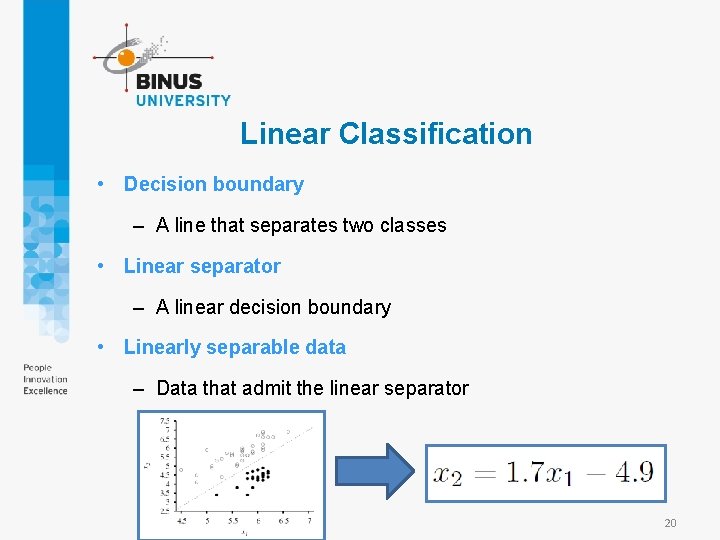

Linear Classification
• Decision boundary
  – A line that separates two classes
• Linear separator
  – A linear decision boundary
• Linearly separable data
  – Data that admit a linear separator

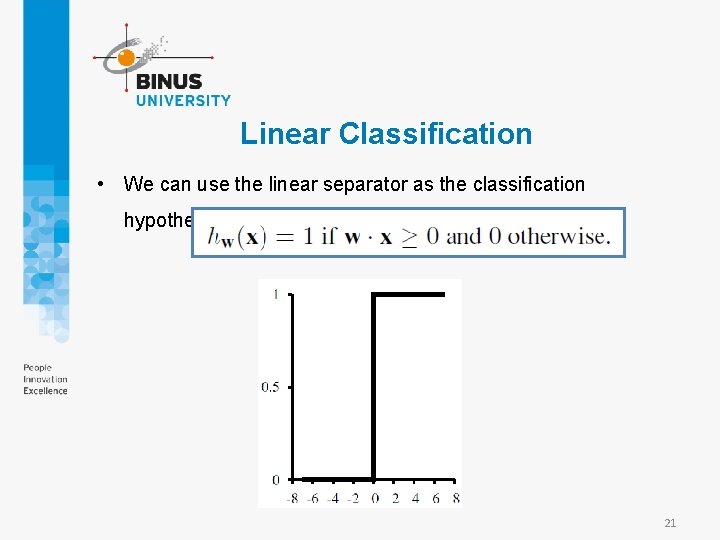

Linear Classification
• We can use the linear separator as the classification hypothesis
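A minimal sketch of such a hypothesis, assuming the usual hard-threshold form: output 1 when the weighted sum of the inputs is non-negative and 0 otherwise. The weights and points below are hypothetical and are not the separator from the earthquake example.

```python
import numpy as np

def classify(w, X):
    """Return 1 where w . x >= 0 and 0 otherwise, using a dummy input x0 = 1."""
    X = np.asarray(X, dtype=float)
    X1 = np.hstack([np.ones((X.shape[0], 1)), X])
    return (X1 @ w >= 0).astype(int)

# Hypothetical separator x2 = x1: points on or above the line get class 1
w = np.array([0.0, -1.0, 1.0])
points = [[1.0, 3.0], [4.0, 2.0], [2.0, 2.0]]
labels = classify(w, points)
```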

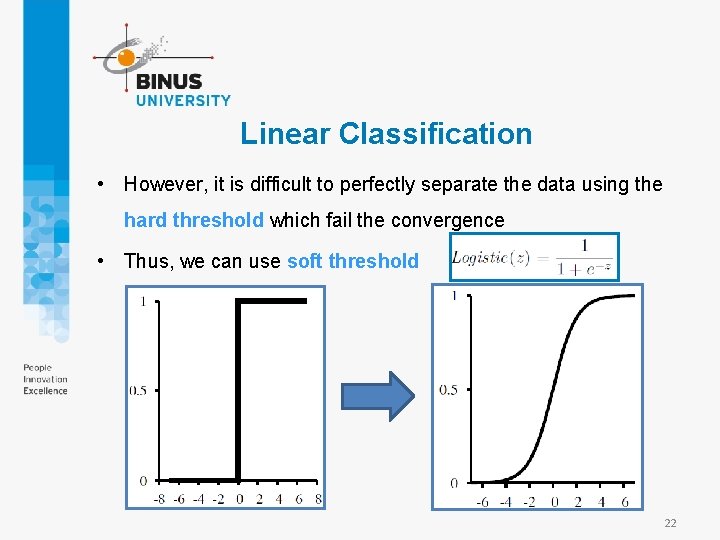

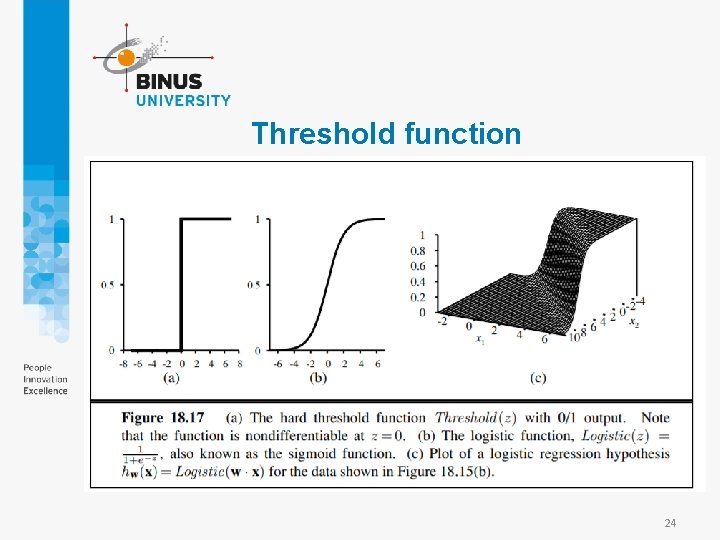

Linear Classification
• However, real data often cannot be separated perfectly, and with a hard threshold the weight updates may then fail to converge
• Thus, we can use a soft threshold instead

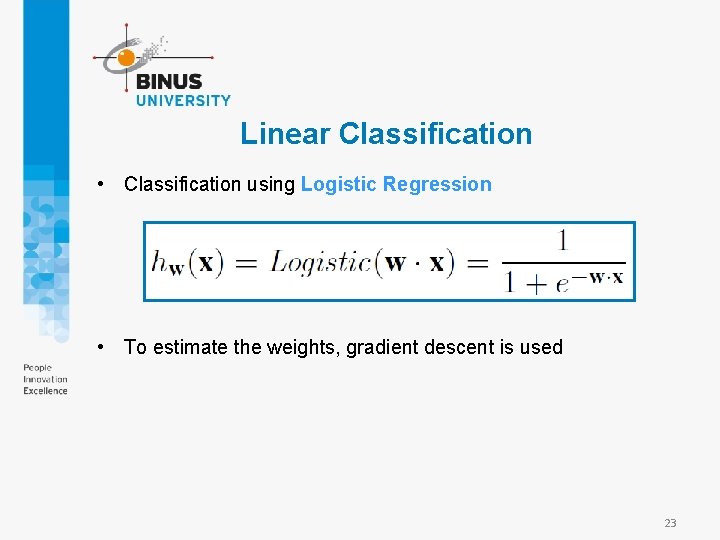

Linear Classification
• Classification using logistic regression
• To estimate the weights, gradient descent is used
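A minimal sketch of logistic regression trained by gradient descent. It assumes the log-loss (cross-entropy) form of the update, which is a swapped-in choice: a squared-error variant carries an extra factor $h_w(x)(1 - h_w(x))$ in each step. Function names, learning rate, epoch count, and data are illustrative.

```python
import numpy as np

def sigmoid(z):
    """Soft threshold: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def add_bias(X):
    X = np.asarray(X, dtype=float)
    return np.hstack([np.ones((X.shape[0], 1)), X])

def train_logistic(X, y, alpha=0.1, epochs=5000):
    """Batch gradient descent on the log-loss (an assumption of this sketch)."""
    X1 = add_bias(X)
    y = np.asarray(y, dtype=float)
    w = np.zeros(X1.shape[1])
    for _ in range(epochs):
        p = sigmoid(X1 @ w)               # predicted probability of class 1
        w = w + alpha * (X1.T @ (y - p))  # move weights toward lower loss
    return w

def predict(w, X):
    """Class 1 when the predicted probability is at least 0.5."""
    return (sigmoid(add_bias(X) @ w) >= 0.5).astype(int)

# Hypothetical one-feature data: small values are class 0, large values class 1
X = [[0.0], [1.0], [2.0], [3.0]]
y = [0, 0, 1, 1]
w = train_logistic(X, y)
predicted = predict(w, X)
```

Because the sigmoid is differentiable everywhere, the updates shrink smoothly as predictions improve, unlike the all-or-nothing corrections of a hard threshold.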

Threshold function

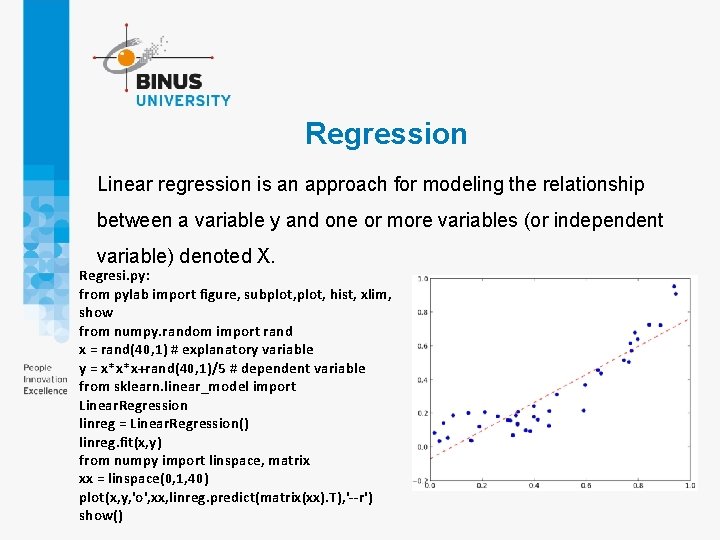

Regression
Linear regression is an approach for modeling the relationship between a dependent variable y and one or more explanatory (independent) variables denoted X.

Regresi.py:

    from pylab import plot, show
    from numpy import linspace
    from numpy.random import rand
    from sklearn.linear_model import LinearRegression

    x = rand(40, 1)                # explanatory variable
    y = x*x*x + rand(40, 1)/5      # dependent variable (cubic plus noise)

    linreg = LinearRegression()
    linreg.fit(x, y)

    # Plot the data and the fitted line; predict() expects a 2-D column of inputs
    xx = linspace(0, 1, 40)
    plot(x, y, 'o', xx, linreg.predict(xx.reshape(-1, 1)), '--r')
    show()

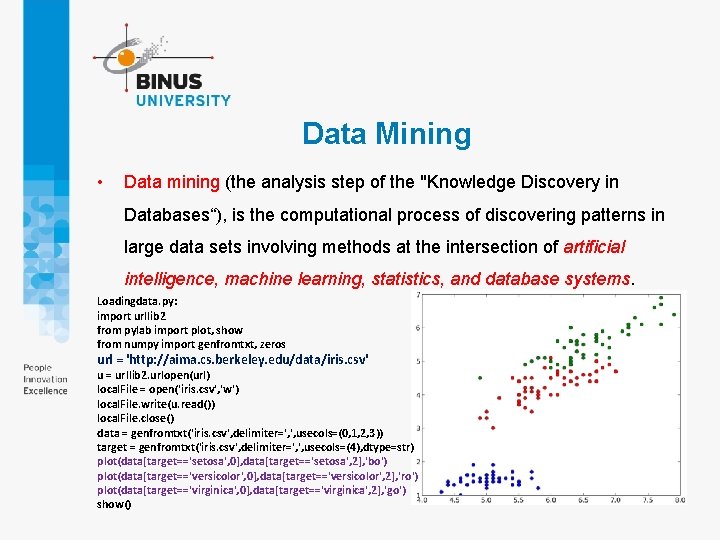

Data Mining
• Data mining (the analysis step of "Knowledge Discovery in Databases") is the computational process of discovering patterns in large data sets, using methods at the intersection of artificial intelligence, machine learning, statistics, and database systems.

Loadingdata.py:

    # Python 3 version: urllib2 (Python 2 only) is replaced by urllib.request
    from urllib.request import urlopen
    from pylab import plot, show
    from numpy import genfromtxt

    # Download the iris data set and save a local copy
    url = 'http://aima.cs.berkeley.edu/data/iris.csv'
    u = urlopen(url)
    localFile = open('iris.csv', 'wb')   # u.read() returns bytes
    localFile.write(u.read())
    localFile.close()

    # Columns 0-3 hold the numeric features; column 4 holds the class name
    data = genfromtxt('iris.csv', delimiter=',', usecols=(0, 1, 2, 3))
    target = genfromtxt('iris.csv', delimiter=',', usecols=(4), dtype=str)

    # Plot column 0 against column 2, one color per class
    plot(data[target=='setosa', 0], data[target=='setosa', 2], 'bo')
    plot(data[target=='versicolor', 0], data[target=='versicolor', 2], 'ro')
    plot(data[target=='virginica', 0], data[target=='virginica', 2], 'go')
    show()

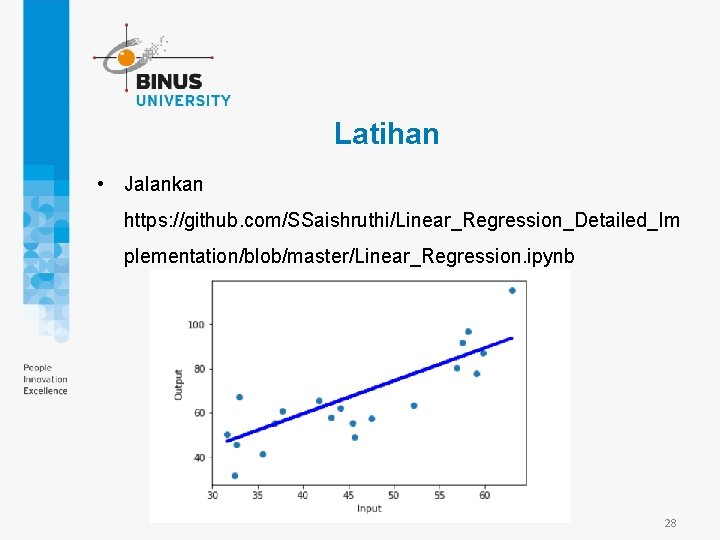

Exercise
• Run https://github.com/SSaishruthi/Linear_Regression_Detailed_Implementation/blob/master/Linear_Regression.ipynb

References
• Stuart Russell, Peter Norvig. 2010. Artificial Intelligence: A Modern Approach. Pearson Education, New Jersey. ISBN: 9780132071482
