
Course: Artificial Intelligence
Effective Period: September 2018

Artificial Neural Network
Session 19

Learning Outcomes

At the end of this session, students will be able to:
• LO 5: Apply various learning algorithms to solve problems
• LO 6: Apply AI algorithms to various applications such as Game AI, Natural Language Processing, and Computer Vision

Outline
1. Introduction
2. Single/Multi Layer Perceptron
3. Backpropagation
4. Exercise

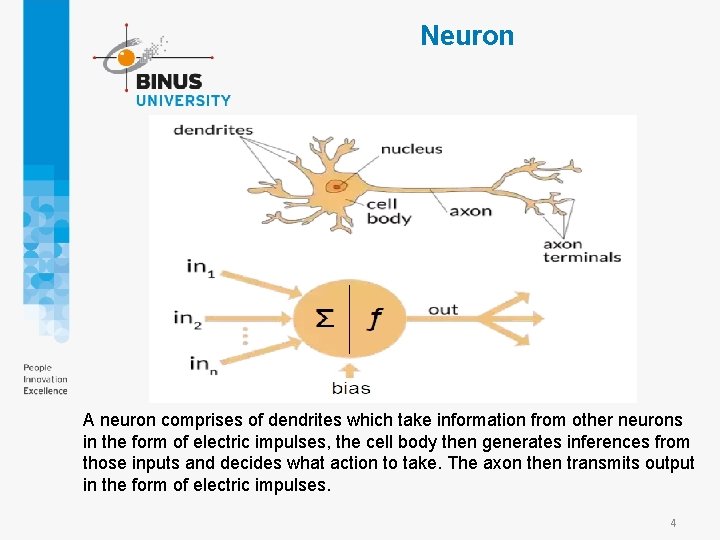

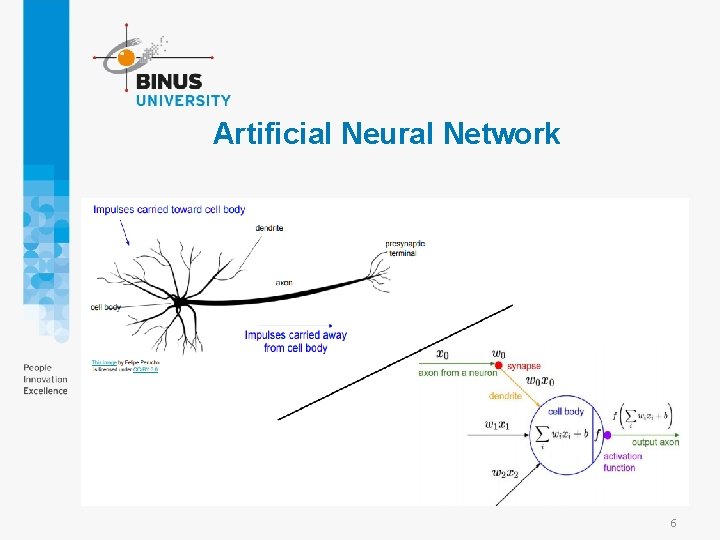

Neuron

A neuron consists of dendrites, which take in information from other neurons in the form of electric impulses. The cell body then draws inferences from those inputs and decides what action to take. The axon then transmits the output, again in the form of electric impulses.

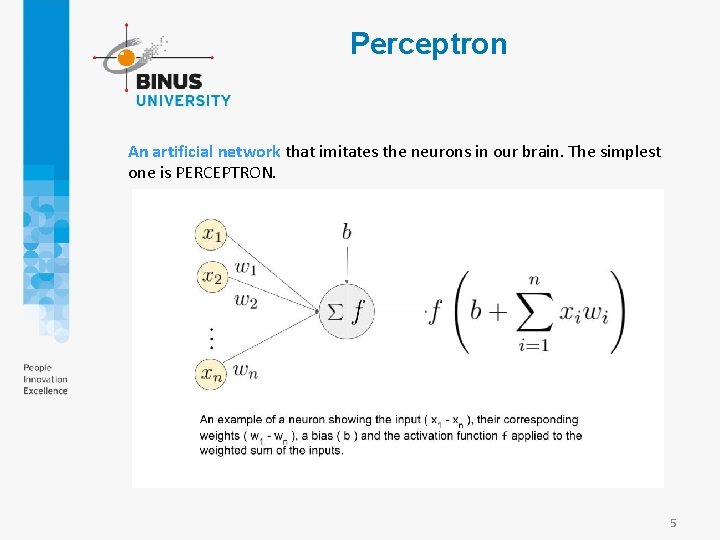

Perceptron

An artificial neural network imitates the neurons in our brain. The simplest one is the perceptron.

Artificial Neural Network

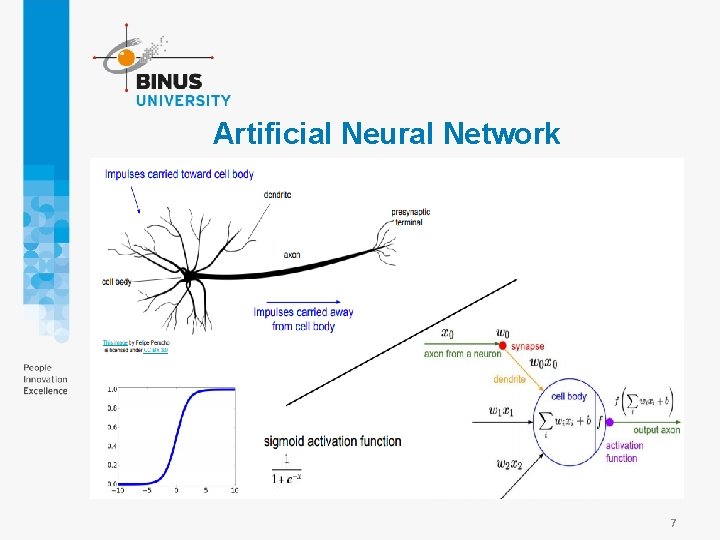

Artificial Neural Network

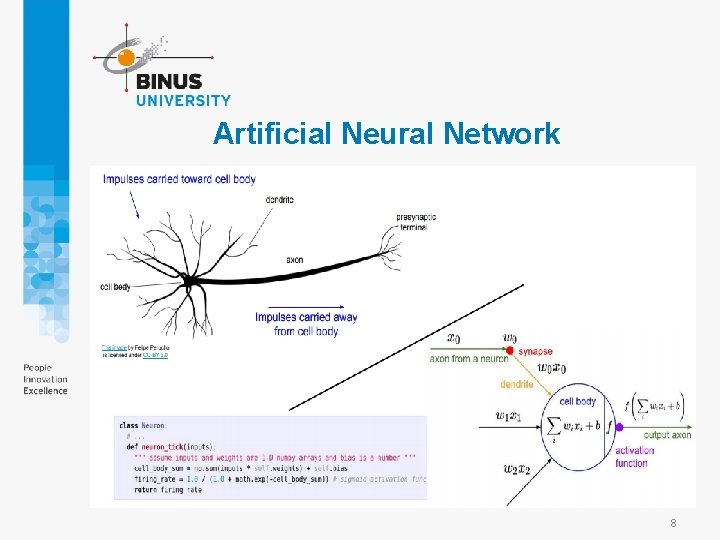

Artificial Neural Network

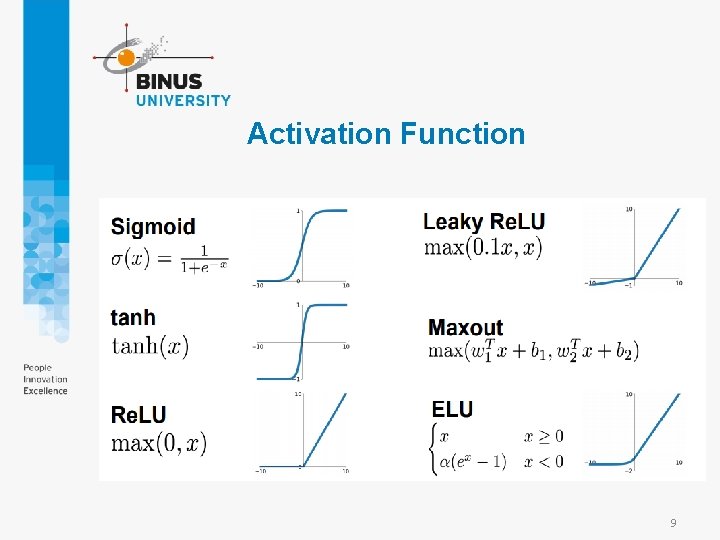

Activation Function

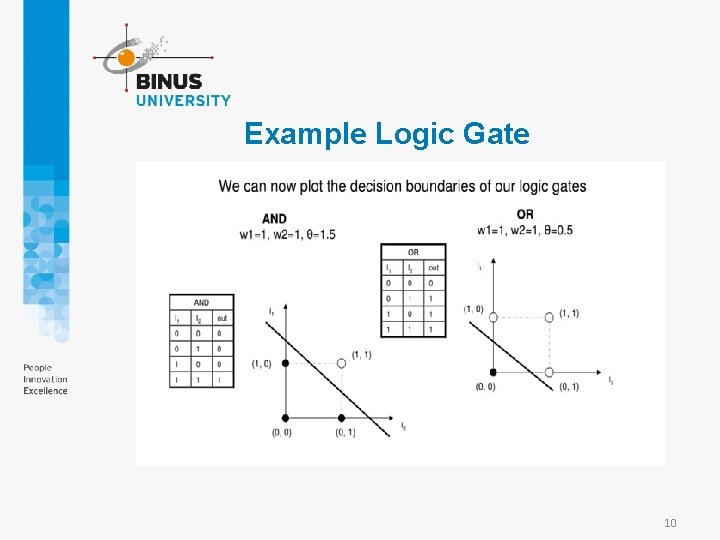

Example Logic Gate

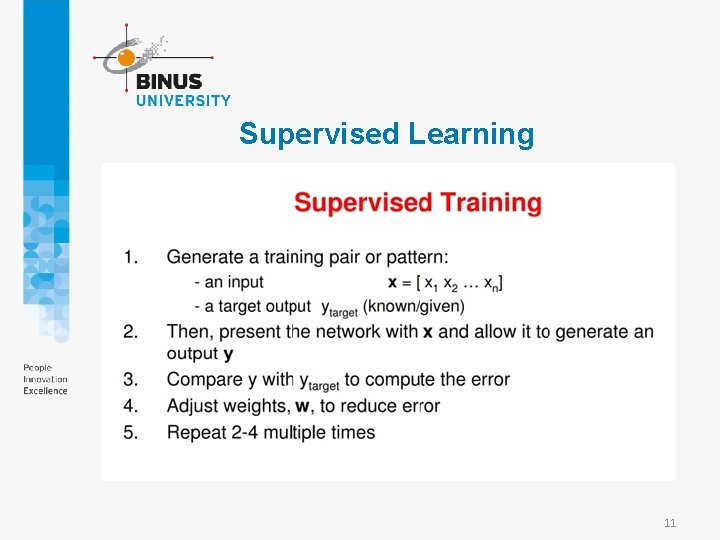

Supervised Learning

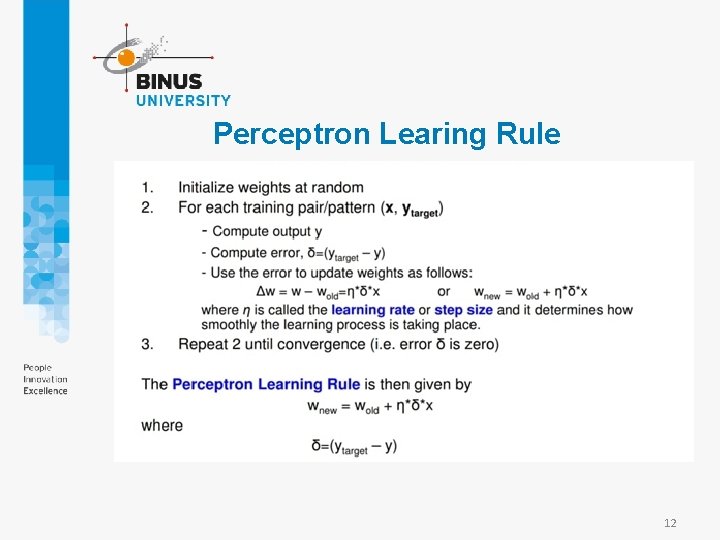

Perceptron Learning Rule

Exercise
• Run the Perceptron demo
• https://machinelearningmastery.com/implement-perceptron-algorithm-scratch-python/

Output of the demo:

    >epoch=0, lrate=0.100, error=2.000
    >epoch=1, lrate=0.100, error=1.000
    >epoch=2, lrate=0.100, error=0.000
    >epoch=3, lrate=0.100, error=0.000
    >epoch=4, lrate=0.100, error=0.000
    [-0.1, 0.20653640140000007, -0.23418117710000003]

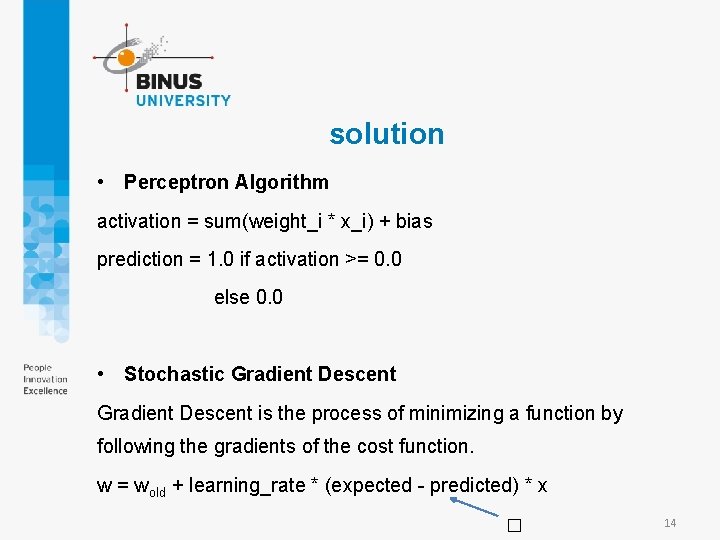

Solution
• Perceptron Algorithm

    activation = sum(weight_i * x_i) + bias
    prediction = 1.0 if activation >= 0.0 else 0.0

• Stochastic Gradient Descent is the process of minimizing a function by following the gradients of the cost function.

    w = w_old + learning_rate * (expected - predicted) * x
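The prediction rule and a single weight update can be sketched as below. This is a minimal illustration, not the demo's own code: the function names `predict` and `update` are hypothetical, the starting weights of zero are an assumption, and the bias is treated as a weight on a constant input of 1.

```python
def predict(inputs, weights, bias):
    """Hard-threshold perceptron: fire (1.0) when the weighted sum is >= 0."""
    activation = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1.0 if activation >= 0.0 else 0.0


def update(inputs, expected, weights, bias, learning_rate):
    """Apply w = w_old + learning_rate * (expected - predicted) * x once."""
    error = expected - predict(inputs, weights, bias)
    new_weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
    new_bias = bias + learning_rate * error  # bias has no input attached
    return new_weights, new_bias


# One update on a single sample with label 0, starting from zero weights
# (the zero start is an assumption made for this sketch).
weights, bias = update([2.7810836, 2.550537003], 0.0, [0.0, 0.0], 0.0, 0.1)
print(weights, bias)
```

Because the all-zero perceptron predicts 1 for a sample labelled 0, the error is -1 and every weight moves against its input.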

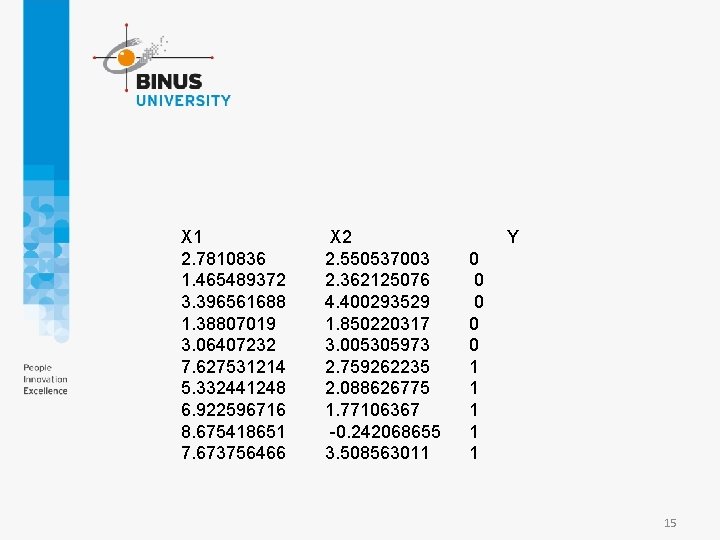

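| X1 | X2 | Y |
|---|---|---|
| 2.7810836 | 2.550537003 | 0 |
| 1.465489372 | 2.362125076 | 0 |
| 3.396561688 | 4.400293529 | 0 |
| 1.38807019 | 1.850220317 | 0 |
| 3.06407232 | 3.005305973 | 0 |
| 7.627531214 | 2.759262235 | 1 |
| 5.332441248 | 2.088626775 | 1 |
| 6.922596716 | 1.77106367 | 1 |
| 8.675418651 | -0.242068655 | 1 |
| 7.673756466 | 3.508563011 | 1 |

The Y labels follow the dataset of the perceptron tutorial linked in the exercise: five rows of class 0 followed by five rows of class 1.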

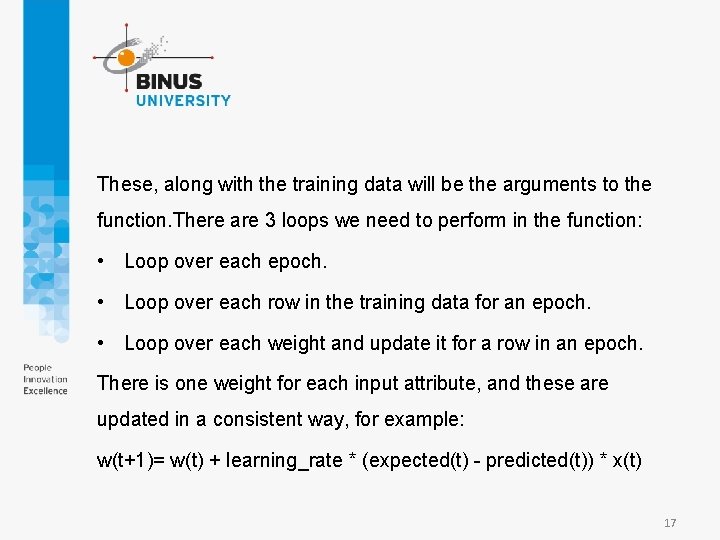

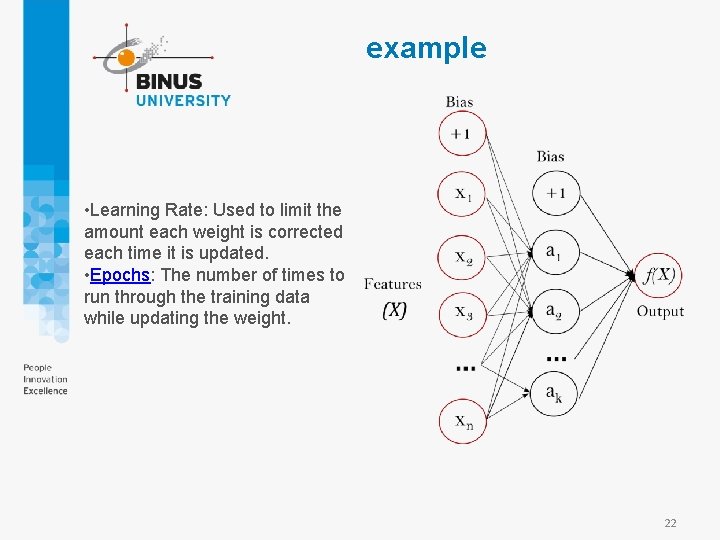

Training Network Weights
• We can estimate the weight values for our training data using stochastic gradient descent.
• Stochastic gradient descent requires two parameters:
  – Learning Rate: Used to limit the amount each weight is corrected each time it is updated.
  – Epochs: The number of times to run through the training data while updating the weights.

These, along with the training data, will be the arguments to the function. There are 3 loops we need to perform in the function:
• Loop over each epoch.
• Loop over each row in the training data for an epoch.
• Loop over each weight and update it for a row in an epoch.

There is one weight for each input attribute, and these are updated in a consistent way, for example:

    w(t+1) = w(t) + learning_rate * (expected(t) - predicted(t)) * x(t)

The bias is updated in a similar way, except without an input, as it is not associated with a specific input value:

    bias(t+1) = bias(t) + learning_rate * (expected(t) - predicted(t))

Output of the training run:

    >epoch=0, lrate=0.100, error=2.000
    >epoch=1, lrate=0.100, error=1.000
    >epoch=2, lrate=0.100, error=0.000
    >epoch=3, lrate=0.100, error=0.000
    >epoch=4, lrate=0.100, error=0.000
    [-0.1, 0.20653640140000007, -0.23418117710000003]
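The three loops and the two update rules can be put together roughly as follows. This is a sketch in the spirit of the linked tutorial rather than a copy of it: the class labels in `DATASET` are assumed to be five 0s followed by five 1s, weights start at zero, and `weights[0]` plays the role of the bias.

```python
# Each row is [x1, x2, label]; the labels are an assumption (see lead-in).
DATASET = [
    [2.7810836, 2.550537003, 0],
    [1.465489372, 2.362125076, 0],
    [3.396561688, 4.400293529, 0],
    [1.38807019, 1.850220317, 0],
    [3.06407232, 3.005305973, 0],
    [7.627531214, 2.759262235, 1],
    [5.332441248, 2.088626775, 1],
    [6.922596716, 1.77106367, 1],
    [8.675418651, -0.242068655, 1],
    [7.673756466, 3.508563011, 1],
]


def predict(row, weights):
    """weights[0] is the bias; the last element of row is the label."""
    activation = weights[0]
    for i in range(len(row) - 1):
        activation += weights[i + 1] * row[i]
    return 1.0 if activation >= 0.0 else 0.0


def train_weights(train, l_rate, n_epoch):
    weights = [0.0] * len(train[0])
    errors = []
    for epoch in range(n_epoch):              # loop 1: epochs
        sum_error = 0.0
        for row in train:                     # loop 2: training rows
            error = row[-1] - predict(row, weights)
            sum_error += error ** 2
            weights[0] += l_rate * error      # bias update, no input term
            for i in range(len(row) - 1):     # loop 3: one weight per input
                weights[i + 1] += l_rate * error * row[i]
        errors.append(sum_error)
        print(">epoch=%d, lrate=%.3f, error=%.3f" % (epoch, l_rate, sum_error))
    return weights, errors


weights, errors = train_weights(DATASET, l_rate=0.1, n_epoch=5)
print(weights)
```

Under these assumptions the summed squared error falls from 2 to 1 to 0 over the first three epochs, and the learned weights agree with the slide's output up to floating-point rounding.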

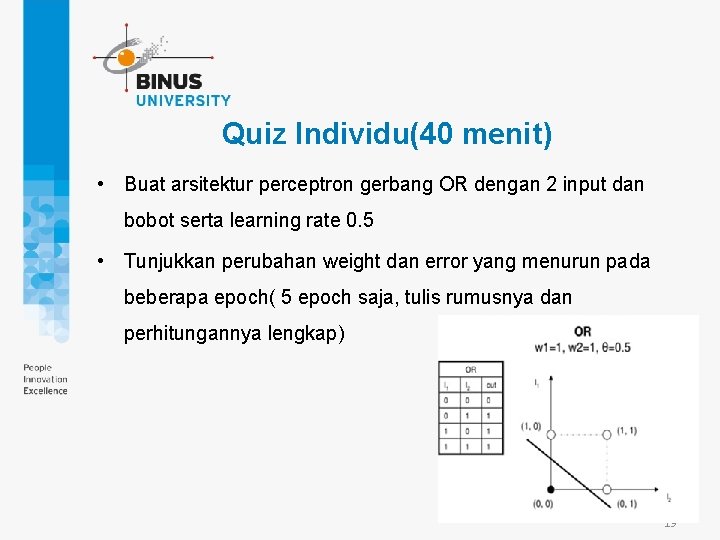

Individual Quiz (40 minutes)
• Design a perceptron architecture for the OR gate with 2 inputs, with weights and a learning rate of 0.5.
• Show how the weights change and the error decreases over several epochs (5 epochs only; write out the formulas and the complete calculations).

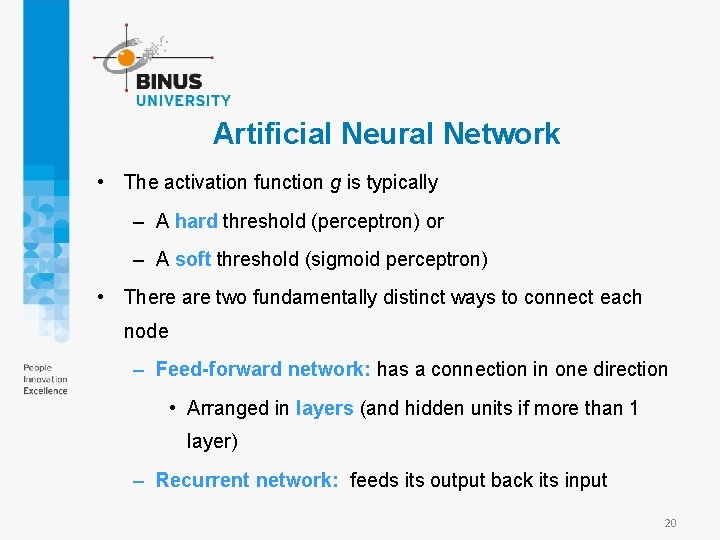

Artificial Neural Network
• The activation function g is typically
  – A hard threshold (perceptron), or
  – A soft threshold (sigmoid perceptron)
• There are two fundamentally distinct ways to connect the nodes
  – Feed-forward network: has connections in only one direction
    • Arranged in layers (with hidden units if there is more than one layer)
  – Recurrent network: feeds its outputs back into its own inputs
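The two kinds of activation function can be compared with a few lines of Python. The function names are illustrative, and putting the hard threshold at 0 is an assumption that matches the perceptron rule used earlier in this session.

```python
import math


def hard_threshold(x):
    """Step activation: output jumps from 0 to 1 at x = 0."""
    return 1.0 if x >= 0.0 else 0.0


def sigmoid(x):
    """Soft threshold: a smooth, differentiable curve between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


for x in (-2.0, 0.0, 2.0):
    print(x, hard_threshold(x), round(sigmoid(x), 4))
```

The sigmoid matters for gradient-based training because, unlike the step function, it has a non-zero derivative everywhere.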

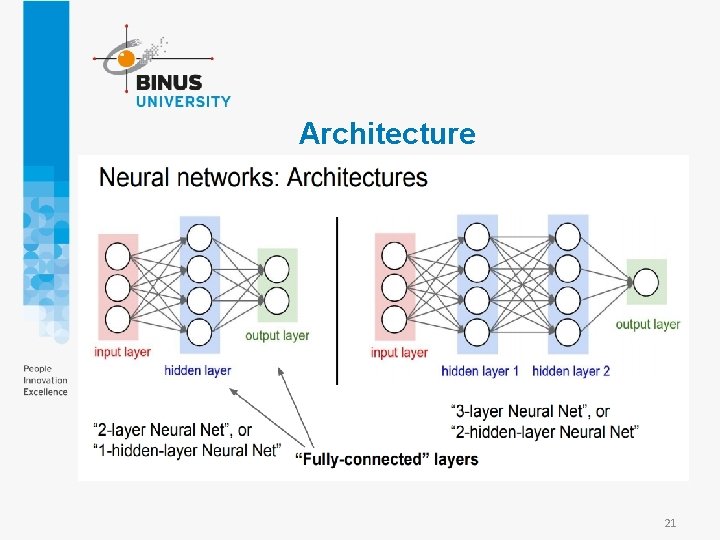

Architecture


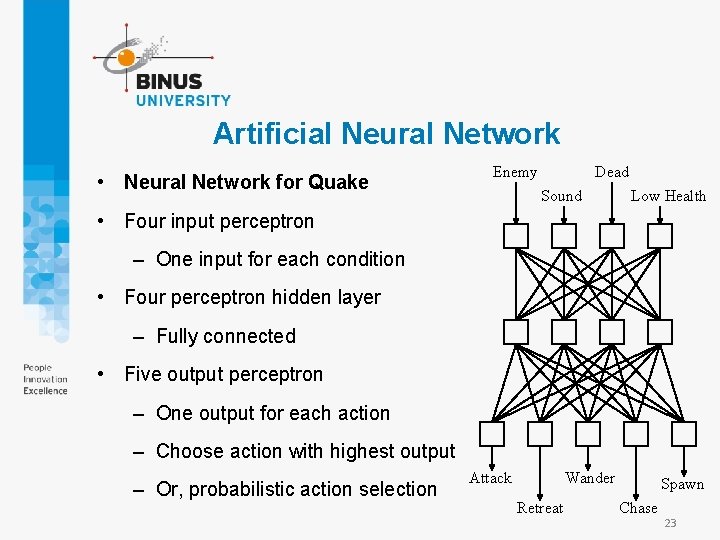

Artificial Neural Network
• Neural network for Quake
• Four input perceptrons
  – One input for each condition: Enemy, Dead, Sound, Low Health
• Four-perceptron hidden layer
  – Fully connected
• Five output perceptrons
  – One output for each action: Attack, Wander, Retreat, Spawn, Chase
  – Choose the action with the highest output
  – Or use probabilistic action selection
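A forward pass through a 4-4-5 network of this shape might look like the sketch below. Everything numeric here is an assumption: the weights are random placeholders rather than trained values, sigmoid units are used throughout, and the helper names (`layer`, `random_layer`, `action_scores`) are hypothetical.

```python
import math
import random

CONDITIONS = ["Enemy", "Dead", "Sound", "Low Health"]
ACTIONS = ["Attack", "Wander", "Retreat", "Spawn", "Chase"]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases):
    """Fully connected layer: every unit sees every input."""
    return [
        sigmoid(b + sum(w * x for w, x in zip(unit_weights, inputs)))
        for unit_weights, b in zip(weights, biases)
    ]


def random_layer(n_in, n_out, rng):
    """Placeholder weights; a real bot would use trained values."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-1, 1) for _ in range(n_out)]
    return weights, biases


rng = random.Random(0)
hidden_w, hidden_b = random_layer(len(CONDITIONS), 4, rng)
output_w, output_b = random_layer(4, len(ACTIONS), rng)


def action_scores(conditions):
    hidden = layer(conditions, hidden_w, hidden_b)
    return layer(hidden, output_w, output_b)


# Example situation: an enemy is visible and a sound was heard.
scores = action_scores([1.0, 0.0, 1.0, 0.0])

# Option 1: choose the action with the highest output.
greedy_action = ACTIONS[scores.index(max(scores))]

# Option 2: probabilistic selection, sampling in proportion to the outputs.
sampled_action = rng.choices(ACTIONS, weights=scores, k=1)[0]

print(greedy_action, sampled_action)
```

Probabilistic selection makes the agent less predictable, since lower-scoring actions are still chosen occasionally.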

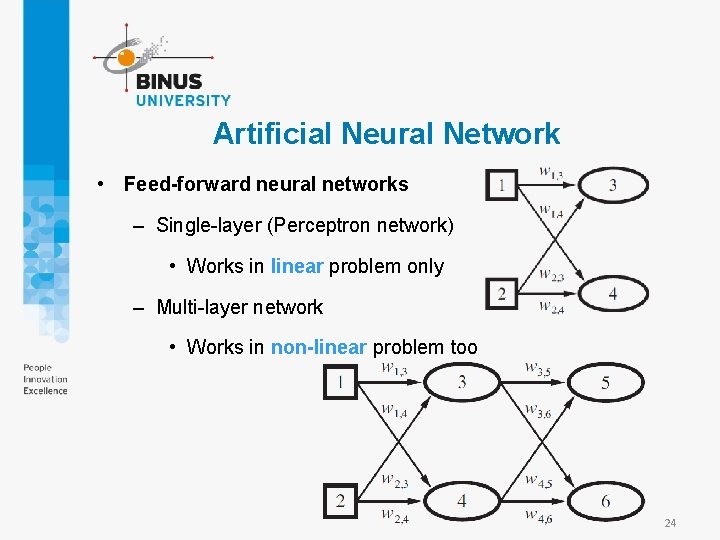

Artificial Neural Network
• Feed-forward neural networks
  – Single-layer (perceptron network)
    • Works only on linearly separable problems
  – Multi-layer network
    • Works on non-linear problems too

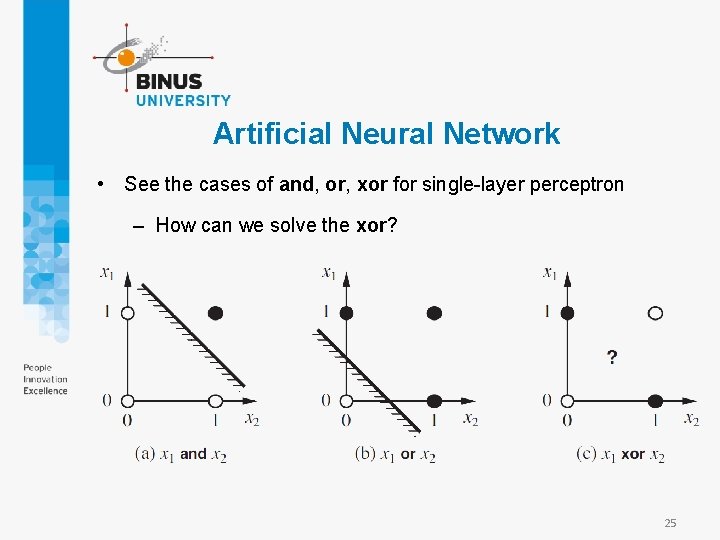

Artificial Neural Network
• Consider the cases of AND, OR, and XOR for a single-layer perceptron
  – How can we solve XOR?
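The difference between the three gates can be checked empirically by training the same perceptron on each truth table. This is a sketch under assumptions chosen for illustration: zero initial weights, a learning rate of 0.5, and 20 epochs.

```python
GATES = {
    "AND": [(0, 0, 0), (0, 1, 0), (1, 0, 0), (1, 1, 1)],
    "OR": [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 1)],
    "XOR": [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)],
}


def predict(inputs, weights):
    """weights = [bias, w1, w2]; hard threshold at 0."""
    activation = weights[0] + weights[1] * inputs[0] + weights[2] * inputs[1]
    return 1.0 if activation >= 0.0 else 0.0


def train(rows, learning_rate=0.5, epochs=20):
    weights = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x1, x2, expected in rows:
            error = expected - predict((x1, x2), weights)
            weights[0] += learning_rate * error
            weights[1] += learning_rate * error * x1
            weights[2] += learning_rate * error * x2
    return weights


def learned(rows):
    """True when the trained perceptron reproduces the whole truth table."""
    weights = train(rows)
    return all(predict((x1, x2), weights) == expected for x1, x2, expected in rows)


results = {name: learned(rows) for name, rows in GATES.items()}
print(results)
```

AND and OR are linearly separable, so the perceptron finds a separating line. No single line separates the XOR outputs, so no choice of weights can classify all four rows correctly, however long training runs.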

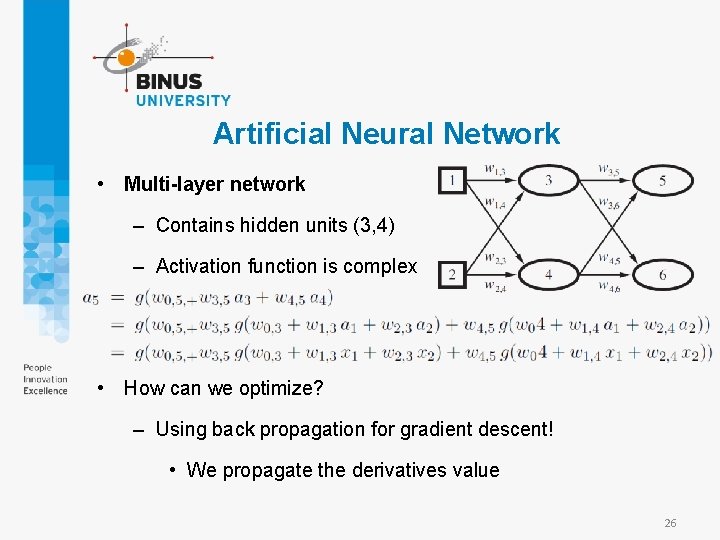

Artificial Neural Network
• Multi-layer network
  – Contains hidden units (3, 4)
  – Activation function is complex
• How can we optimize?
  – Using backpropagation for gradient descent!
    • We propagate the derivative values backward
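To see why hidden units help, here is a hand-wired network with two hidden units that computes XOR. The weights are chosen by hand for illustration and are not learned: one hidden unit acts as OR, the other as AND, and the output fires when OR is on but AND is off.

```python
def step(x):
    """Hard threshold at 0."""
    return 1 if x >= 0 else 0


def xor_network(x1, x2):
    # Hidden layer: hand-picked weights (an assumption for this sketch).
    h_or = step(x1 + x2 - 0.5)    # fires when at least one input is 1
    h_and = step(x1 + x2 - 1.5)   # fires only when both inputs are 1
    # Output unit: OR and not AND.
    return step(h_or - h_and - 0.5)


for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, xor_network(x1, x2))
```

In practice such weights are not set by hand, which is where backpropagation comes in.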

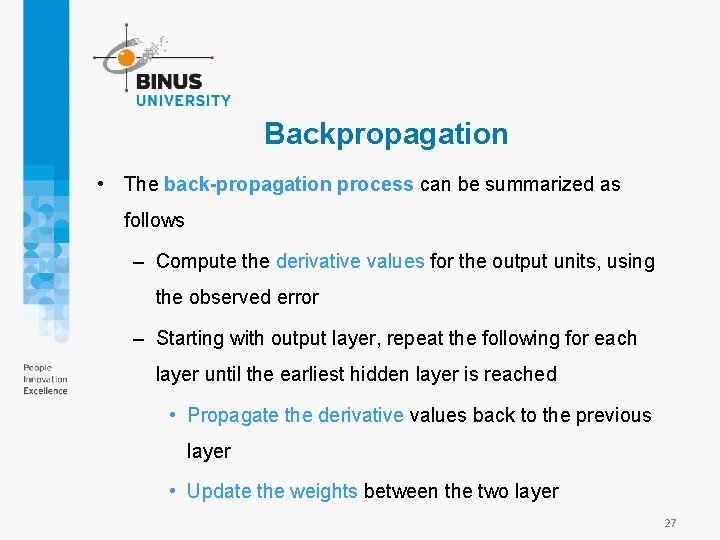

Backpropagation
• The back-propagation process can be summarized as follows
  – Compute the derivative values for the output units, using the observed error
  – Starting with the output layer, repeat the following for each layer until the earliest hidden layer is reached
    • Propagate the derivative values back to the previous layer
    • Update the weights between the two layers
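The steps above can be traced on a tiny 2-2-1 sigmoid network. This is a minimal sketch, not a general implementation: the initial weights, the single training example, the squared-error loss, and the learning rate of 0.5 are all assumptions picked to keep the arithmetic easy to follow.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# One training example and hand-picked starting weights (assumptions).
x, target = [1.0, 0.0], 1.0
w_hidden = [[0.15, 0.20], [0.25, 0.30]]   # one row of weights per hidden unit
b_hidden = [0.35, 0.35]
w_out = [0.40, 0.45]
b_out = 0.60
learning_rate = 0.5

losses = []
for step in range(50):
    # Forward pass.
    hidden = [
        sigmoid(b + sum(w * xi for w, xi in zip(ws, x)))
        for ws, b in zip(w_hidden, b_hidden)
    ]
    output = sigmoid(b_out + sum(w * h for w, h in zip(w_out, hidden)))
    losses.append(0.5 * (target - output) ** 2)

    # 1. Derivative at the output unit, from the observed error.
    delta_out = (output - target) * output * (1.0 - output)

    # 2. Propagate the derivative back to the hidden layer
    #    (uses the output weights from before their update).
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w_out, hidden)]

    # 3. Update the weights between hidden and output layers.
    w_out = [w - learning_rate * delta_out * h for w, h in zip(w_out, hidden)]
    b_out -= learning_rate * delta_out

    # 4. Update the weights between input and hidden layers.
    w_hidden = [
        [w - learning_rate * d * xi for w, xi in zip(ws, x)]
        for ws, d in zip(w_hidden, delta_hidden)
    ]
    b_hidden = [b - learning_rate * d for b, d in zip(b_hidden, delta_hidden)]

print("first loss: %.5f, last loss: %.5f" % (losses[0], losses[-1]))
```

Each pass does a forward computation followed by a backward sweep, and the recorded loss shrinks as the output moves toward the target.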

Example

Class MLPClassifier implements a multi-layer perceptron (MLP) algorithm that trains using backpropagation. MLP trains on two arrays: array X of size (n_samples, n_features), which holds the training samples represented as floating point feature vectors; and array y of size (n_samples,), which holds the target values (class labels) for the training samples:


Example

    from sklearn.neural_network import MLPClassifier

    X = [[0, 0], [1.5, 1.5], [2, 2]]
    y = [0, 1, 2]
    clf = MLPClassifier(solver='lbfgs', alpha=1e-5, hidden_layer_sizes=(5, 2), random_state=1)
    clf.fit(X, y)
    # MLPClassifier(alpha=1e-05, hidden_layer_sizes=(5, 2), random_state=1, solver='lbfgs')
    print(clf.predict([[0, 0], [1.2, 1.5], [2.1, 2.2]]))
    # [0 1 2]

https://repl.it/languages/python3

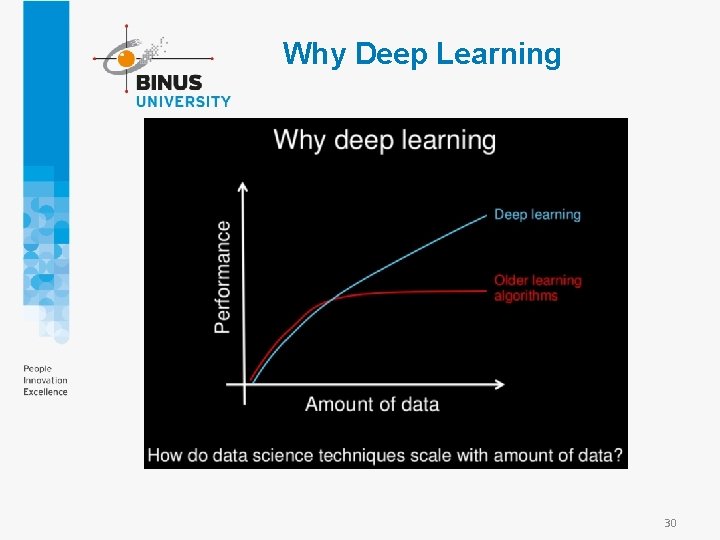

Why Deep Learning


Exercise
• https://www.springboard.com/blog/beginners-guide-neural-network-in-python-scikit-learn-0-18/

Run the demo above and study the concepts behind it.

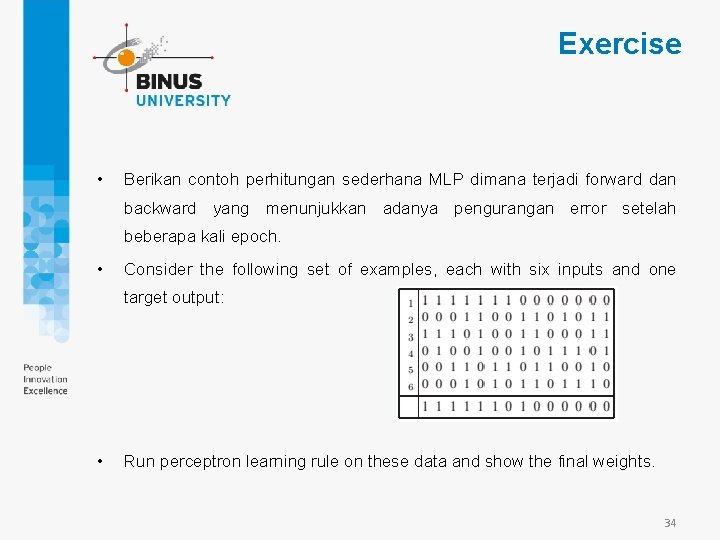

Exercise
• Give a simple worked MLP calculation that includes both a forward and a backward pass and shows the error decreasing after several epochs.
• Consider the following set of examples, each with six inputs and one target output:
• Run the perceptron learning rule on these data and show the final weights.
