Chapter 10 Virtual Memory Operating System Concepts 10

- Slides: 47

Chapter 10: Virtual Memory. Operating System Concepts, 10th Edition. Silberschatz, Galvin and Gagne © 2018

Chapter 10: Virtual Memory

- Background
- Demand Paging
- Copy-on-Write
- Page Replacement
- Other Considerations
- Allocation of Frames
- Thrashing
- Memory-Mapped Files
- Allocating Kernel Memory
- Operating-System Examples

Objectives

- Define virtual memory and describe its benefits.
- Illustrate how pages are loaded into memory using demand paging.
- Apply the FIFO, optimal, and LRU page-replacement algorithms.
- Describe the working set of a process, and explain how it is related to program locality.
- Describe how Linux, Windows 10, and Solaris manage virtual memory.
- Design a virtual memory manager simulation in the C programming language.

Background

- Code needs to be in memory to execute, but the entire program is rarely used
  - e.g., error code, unusual routines, large data structures
- The entire program code might not be needed at the same time
- Consider the ability to execute a partially loaded program
  - Program no longer constrained by limits of physical memory
  - Each program takes less memory while running
    - More programs can run at the same time
    - This increases CPU utilization and throughput with no increase in response time or turnaround time
  - Less I/O needed to load or swap programs into memory
    - Each user program runs faster

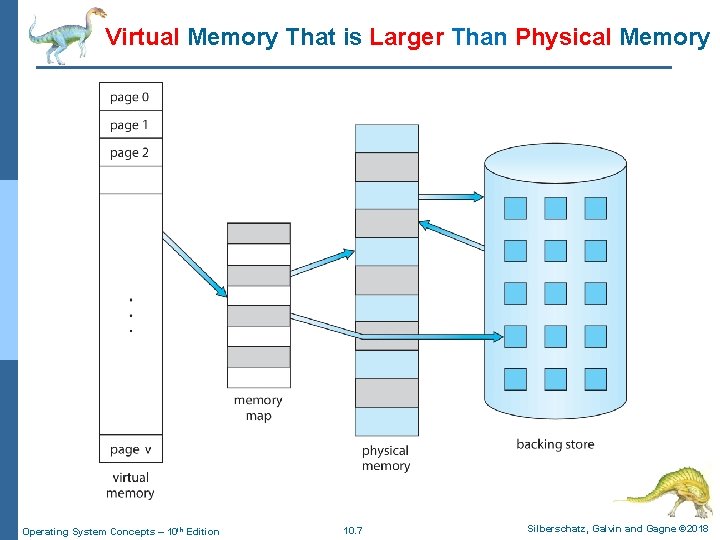

Virtual memory

- Virtual memory: separation of user logical memory from physical memory
- Only part of the program needs to be in memory for execution, therefore
  - Logical address space can be much larger than physical address space
  - Allows address spaces to be shared by several processes
  - Allows for more efficient process creation
  - More programs running concurrently
  - Less I/O needed to load or swap processes

Virtual memory (Cont.)

- Virtual address space: logical view of how a process is stored in memory
  - Usually starts at address 0, with contiguous addresses until the end of the space
  - Meanwhile, physical memory is organized in frames (physical page frames)
  - MMU must map logical to physical
- Virtual memory can be implemented via:
  - Demand paging
  - Demand segmentation

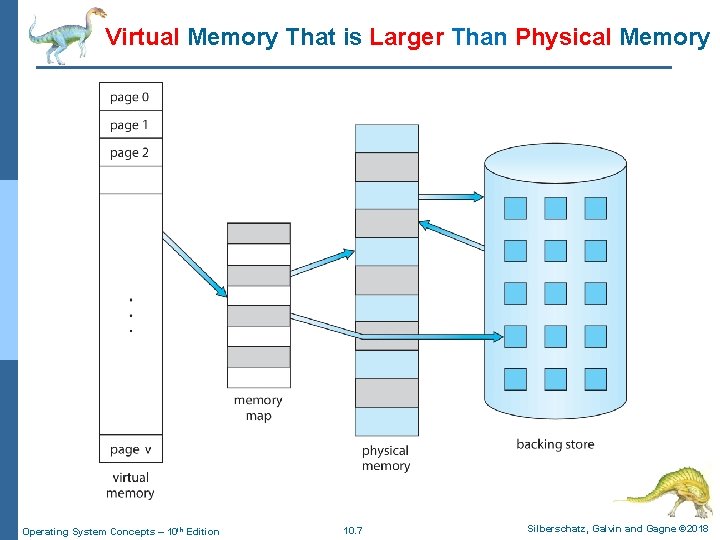

Virtual Memory That is Larger Than Physical Memory

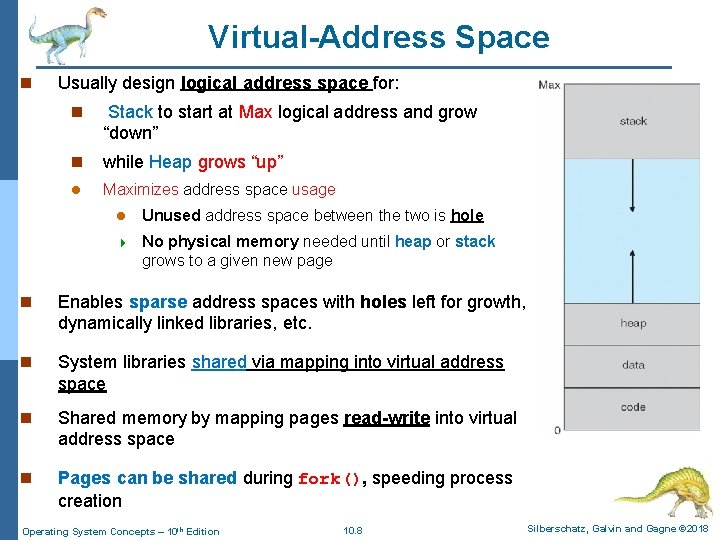

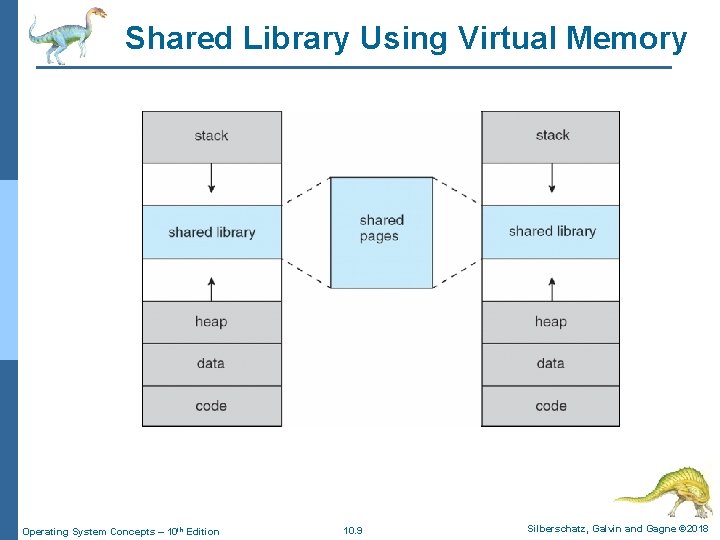

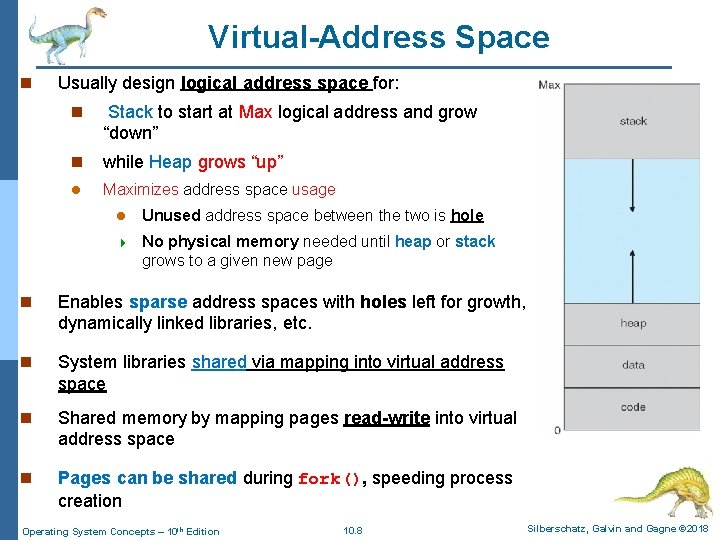

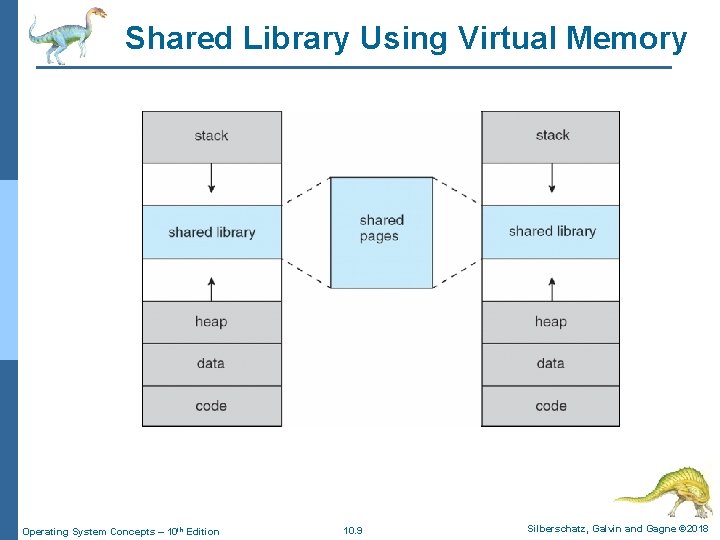

Virtual-Address Space

- Usually design the logical address space so that the stack starts at the maximum logical address and grows “down” while the heap grows “up”
  - Maximizes address space usage
  - Unused address space between the two is a hole
    - No physical memory needed until the heap or stack grows to a given new page
- Enables sparse address spaces with holes left for growth, dynamically linked libraries, etc.
- System libraries shared via mapping into virtual address space
- Shared memory by mapping pages read-write into virtual address space
- Pages can be shared during fork(), speeding process creation

Shared Library Using Virtual Memory

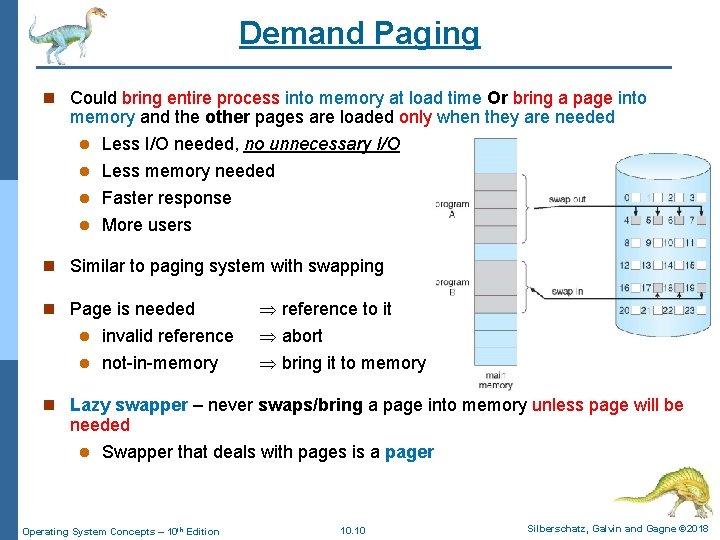

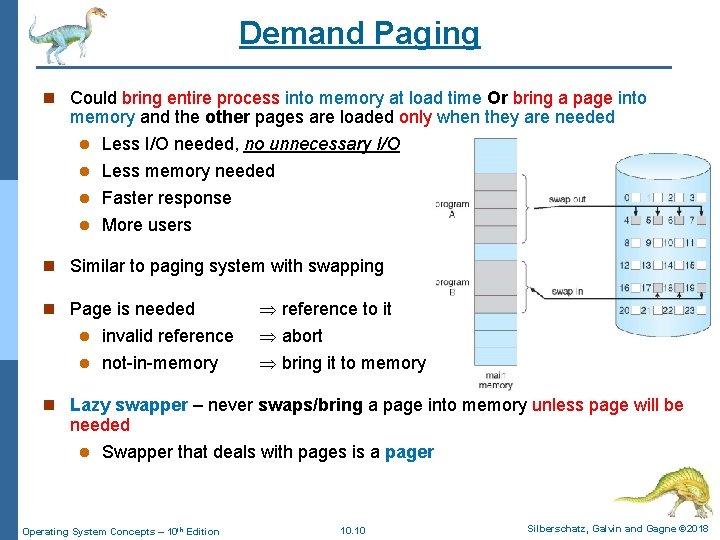

Demand Paging

- Could bring the entire process into memory at load time
- Or bring pages into memory only when they are needed
  - Less I/O needed, no unnecessary I/O
  - Less memory needed
  - Faster response
  - More users
- Similar to a paging system with swapping
- Page is needed ⇒ reference to it
  - Invalid reference ⇒ abort
  - Not in memory ⇒ bring it to memory
- Lazy swapper: never swaps a page into memory unless the page will be needed
  - A swapper that deals with pages is a pager

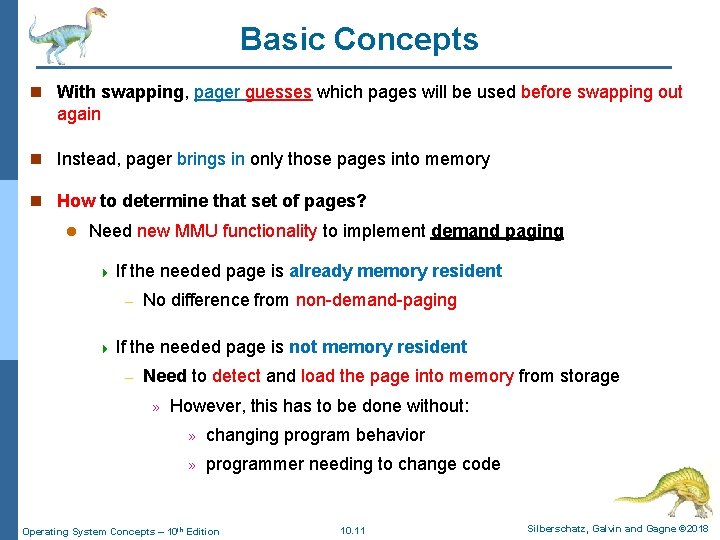

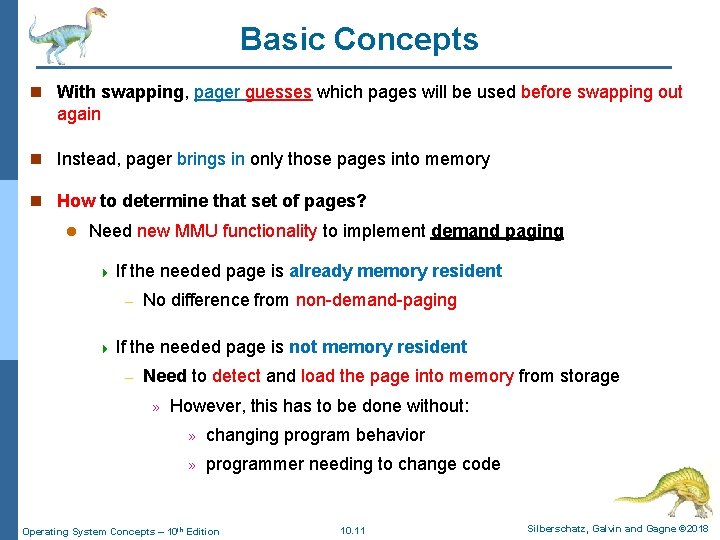

Basic Concepts

- With swapping, the pager guesses which pages will be used before the process is swapped out again
- Instead, the pager brings only those pages into memory
- How to determine that set of pages?
  - Need new MMU functionality to implement demand paging
    - If the needed page is already memory resident: no difference from non-demand paging
    - If the needed page is not memory resident: need to detect this and load the page into memory from storage
      - However, this has to be done without:
        - changing program behavior
        - the programmer needing to change code

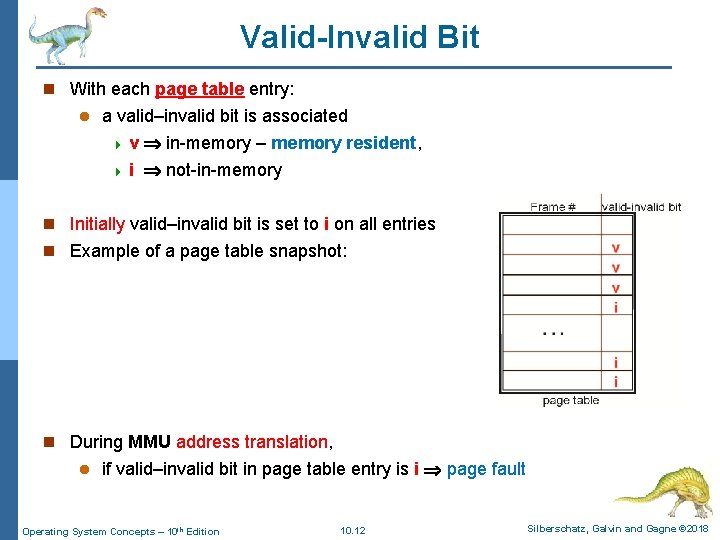

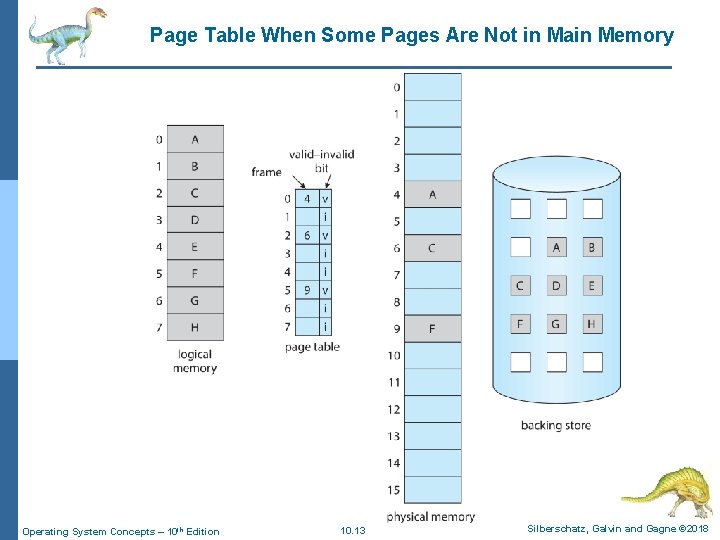

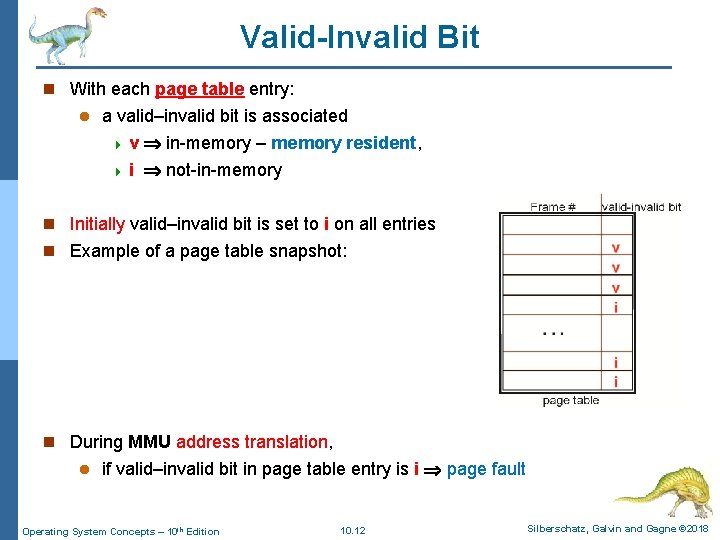

Valid-Invalid Bit

- With each page table entry a valid-invalid bit is associated
  - v ⇒ in memory (memory resident)
  - i ⇒ not in memory
- Initially the valid-invalid bit is set to i on all entries
- During MMU address translation, if the valid-invalid bit in the page table entry is i ⇒ page fault
- Example of a page table snapshot:
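The check the MMU performs during translation can be sketched in a few lines of Python. This is only an illustration: the 4 KB page size, the `PageFault` exception, and the sample `page_table` contents are assumptions, not values from the slides.

```python
PAGE_SIZE = 4096  # assumed page size in bytes (not given in the slides)


class PageFault(Exception):
    """Raised when the referenced page is marked invalid (i)."""


def translate(page_table, virtual_address):
    """Map a virtual address to a physical address, as an MMU would."""
    page, offset = divmod(virtual_address, PAGE_SIZE)
    frame, valid = page_table[page]
    if not valid:                       # bit is i: trap to the OS
        raise PageFault(page)
    return frame * PAGE_SIZE + offset   # bit is v: normal translation


# Hypothetical snapshot: page number -> (frame number, valid bit)
page_table = {0: (4, True), 1: (None, False), 2: (6, True)}
```

With this snapshot, an address inside page 0 or page 2 translates normally, while any address inside page 1 raises `PageFault`.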

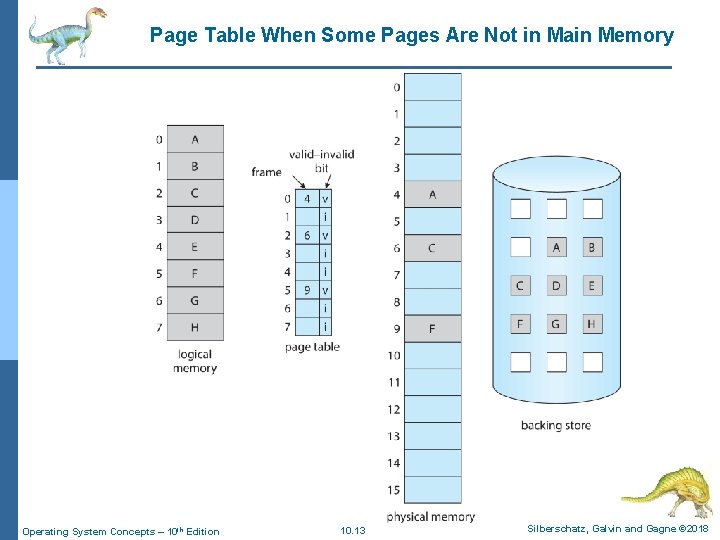

Page Table When Some Pages Are Not in Main Memory

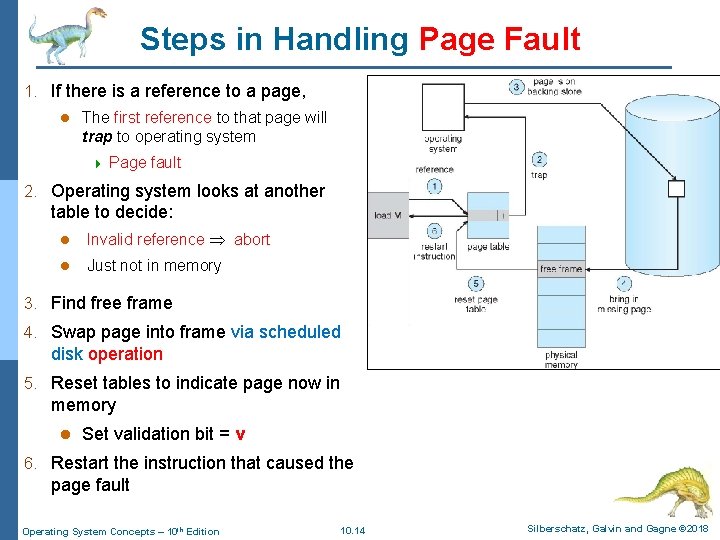

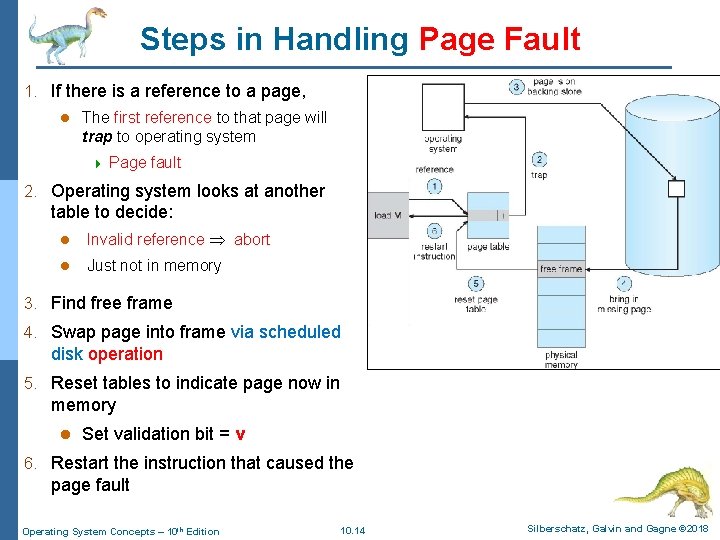

Steps in Handling Page Fault

1. If there is a reference to a page, the first reference to that page will trap to the operating system
   - Page fault
2. Operating system looks at another table to decide:
   - Invalid reference ⇒ abort
   - Just not in memory
3. Find a free frame
4. Swap the page into the frame via a scheduled disk operation
5. Reset tables to indicate the page is now in memory
   - Set valid-invalid bit = v
6. Restart the instruction that caused the page fault
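The six steps above can be walked through with a toy model. The class and attribute names below are illustrative only, and the sketch assumes a free frame is always available (replacement is a separate topic); the disk read in step 4 is left as a comment.

```python
class DemandPager:
    """Toy model of the six page-fault steps; not a real OS interface."""

    def __init__(self, valid_pages, num_frames):
        self.valid_pages = set(valid_pages)          # the OS's "other table"
        self.free_frames = list(range(num_frames))   # frames not yet in use
        self.page_table = {}                         # page -> frame (present means v)
        self.faults = 0

    def access(self, page):
        if page in self.page_table:                  # bit is v: no trap
            return self.page_table[page]
        self.faults += 1                             # step 1: trap (page fault)
        if page not in self.valid_pages:             # step 2: invalid reference
            raise MemoryError(f"invalid reference to page {page}: abort")
        frame = self.free_frames.pop(0)              # step 3: find a free frame
        # step 4: the disk read into `frame` would be scheduled here
        self.page_table[page] = frame                # step 5: mark the entry valid
        return self.access(page)                     # step 6: restart the access
```

A second access to the same page returns the frame without another fault, which is the behavior the valid-invalid bit is meant to provide.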

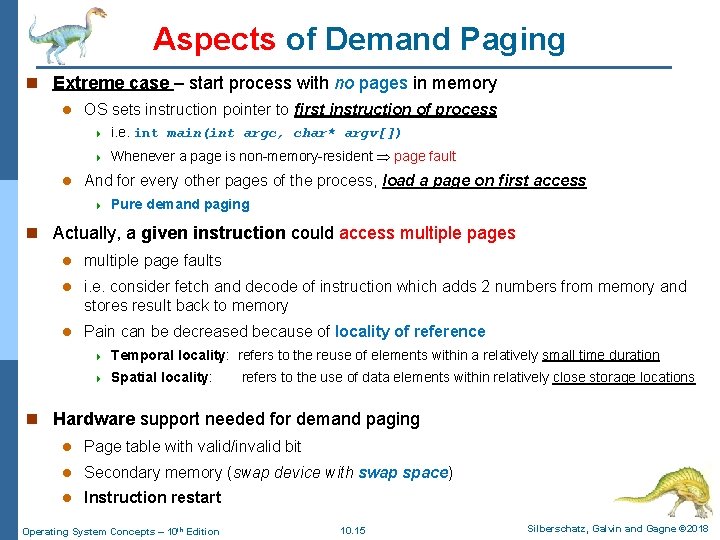

Aspects of Demand Paging

- Extreme case: start process with no pages in memory
  - OS sets instruction pointer to first instruction of process
    - e.g., int main(int argc, char* argv[])
    - Whenever a page is non-memory-resident ⇒ page fault
  - And for every other page of the process, load the page on first access
    - Pure demand paging
- Actually, a given instruction could access multiple pages
  - ⇒ multiple page faults
  - e.g., consider fetch and decode of an instruction which adds 2 numbers from memory and stores the result back to memory
  - Pain can be decreased because of locality of reference
    - Temporal locality: refers to the reuse of elements within a relatively small time duration
    - Spatial locality: refers to the use of data elements within relatively close storage locations
- Hardware support needed for demand paging
  - Page table with valid/invalid bit
  - Secondary memory (swap device with swap space)
  - Instruction restart

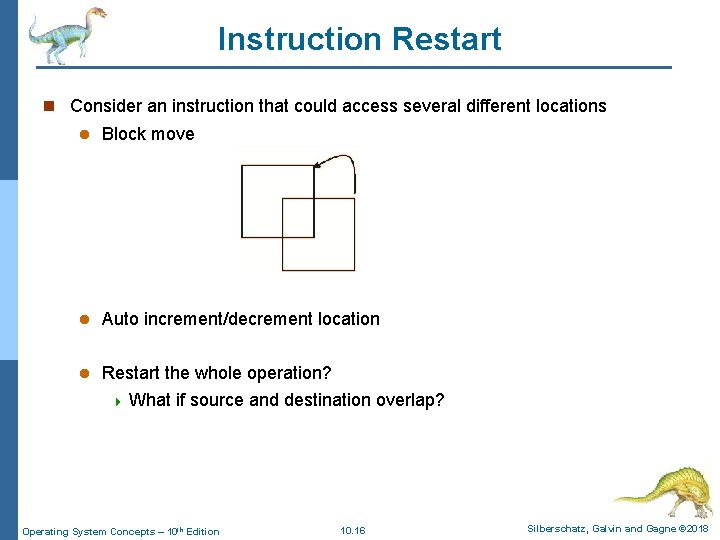

Instruction Restart

- Consider an instruction that could access several different locations
  - Block move
  - Auto increment/decrement location
  - Restart the whole operation?
    - What if source and destination overlap?

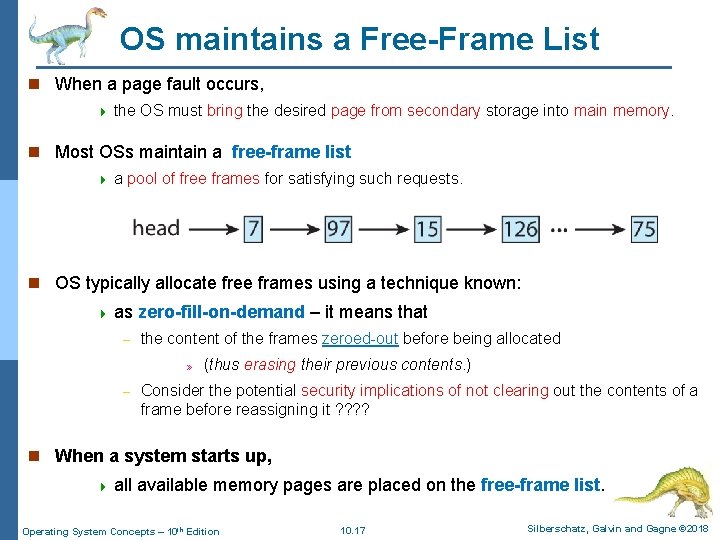

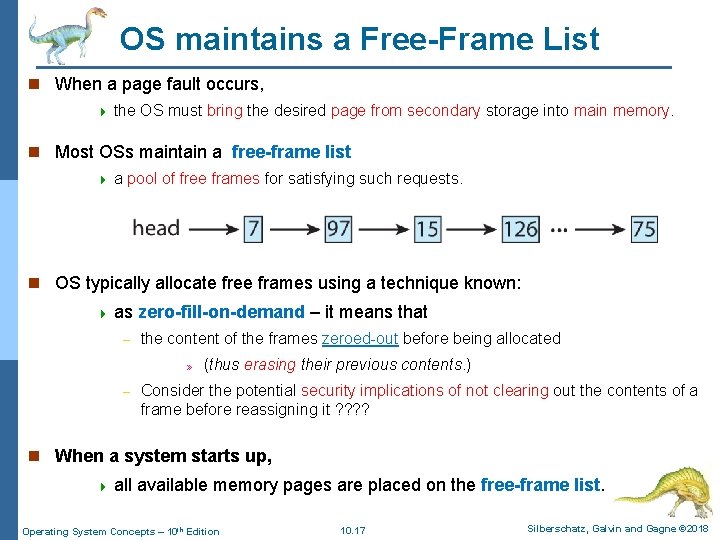

OS maintains a Free-Frame List

- When a page fault occurs, the OS must bring the desired page from secondary storage into main memory
- Most OSs maintain a free-frame list: a pool of free frames for satisfying such requests
- The OS typically allocates free frames using a technique known as zero-fill-on-demand
  - The contents of a frame are zeroed out before it is allocated, thus erasing its previous contents
  - Consider the potential security implications of not clearing out the contents of a frame before reassigning it
- When a system starts up, all available memory pages are placed on the free-frame list
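A minimal sketch of a free-frame list with zero-fill-on-demand follows. The `FreeFrameList` class and the 8-byte frame size are assumptions chosen to keep the example easy to inspect; real frames are page-sized.

```python
FRAME_SIZE = 8  # tiny assumed frame size, for illustration only


class FreeFrameList:
    def __init__(self, num_frames):
        # At startup, every available frame goes on the free list.
        self.memory = [bytearray(FRAME_SIZE) for _ in range(num_frames)]
        self.free = list(range(num_frames))

    def allocate(self):
        frame = self.free.pop(0)
        self.memory[frame][:] = bytes(FRAME_SIZE)   # zero-fill before handing out
        return frame

    def release(self, frame):
        # The old contents stay in place until the frame is allocated again.
        self.free.append(frame)
```

If `allocate` skipped the zero-fill, whatever the previous owner left in the frame would be readable by the next process, which is the security concern raised above.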

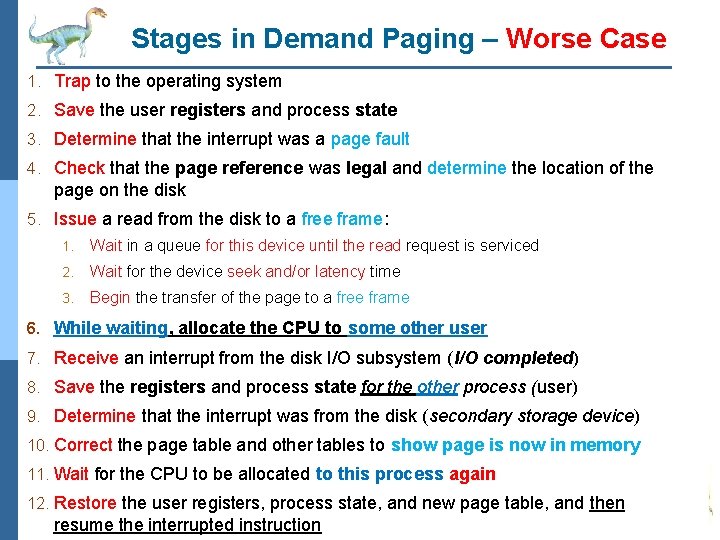

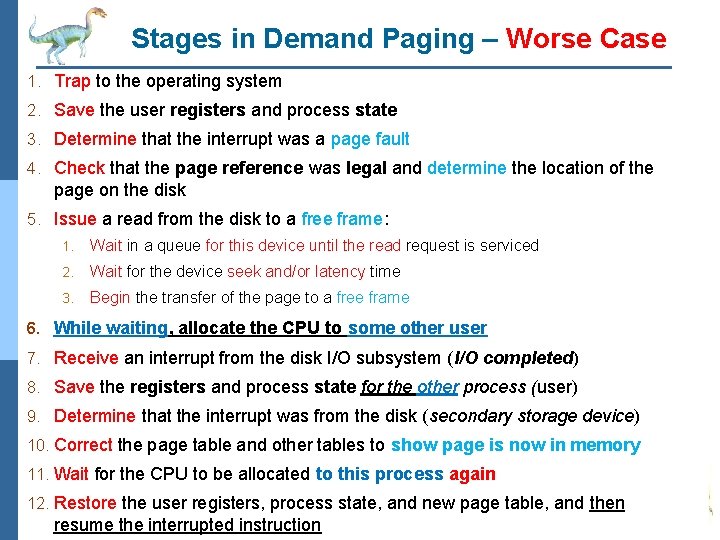

Stages in Demand Paging (Worst Case)

1. Trap to the operating system
2. Save the user registers and process state
3. Determine that the interrupt was a page fault
4. Check that the page reference was legal and determine the location of the page on the disk
5. Issue a read from the disk to a free frame:
   1. Wait in a queue for this device until the read request is serviced
   2. Wait for the device seek and/or latency time
   3. Begin the transfer of the page to a free frame
6. While waiting, allocate the CPU to some other user
7. Receive an interrupt from the disk I/O subsystem (I/O completed)
8. Save the registers and process state for the other process (user)
9. Determine that the interrupt was from the disk (secondary storage device)
10. Correct the page table and other tables to show the page is now in memory
11. Wait for the CPU to be allocated to this process again
12. Restore the user registers, process state, and new page table, and then resume the interrupted instruction

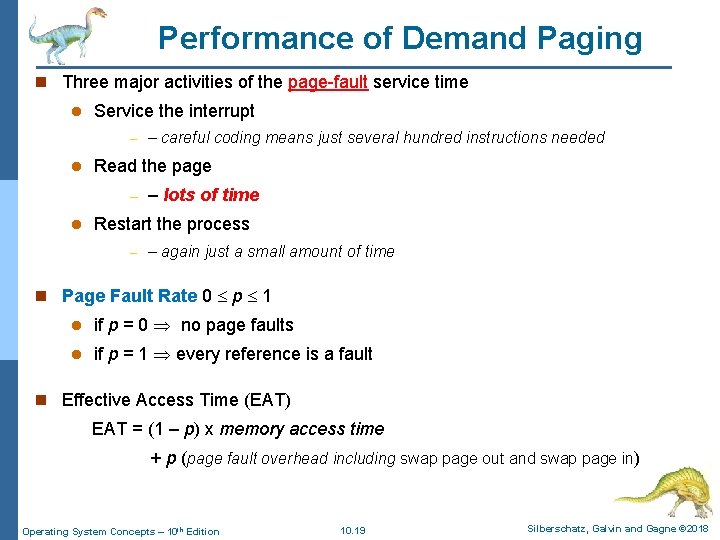

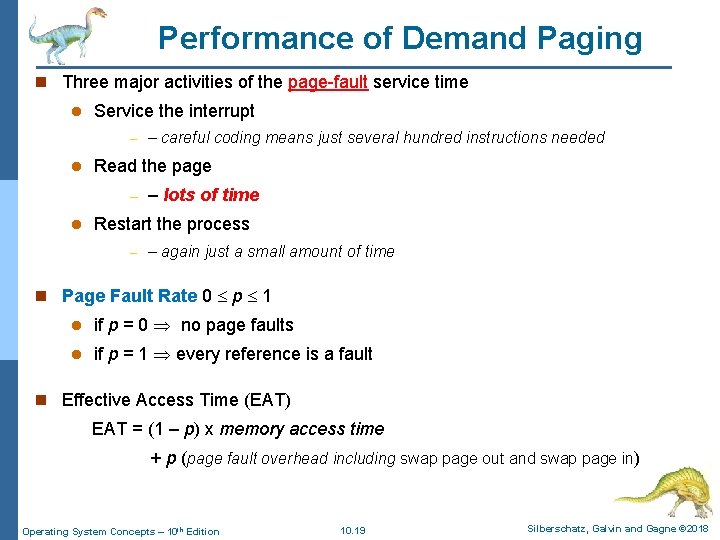

Performance of Demand Paging

- Three major activities of the page-fault service time
  - Service the interrupt: careful coding means just several hundred instructions needed
  - Read the page: lots of time
  - Restart the process: again just a small amount of time
- Page-fault rate $0 \le p \le 1$
  - If $p = 0$, no page faults
  - If $p = 1$, every reference is a fault
- Effective Access Time (EAT)

  $$\text{EAT} = (1 - p) \times \text{memory access time} + p \times \text{page-fault overhead}$$

  where the page-fault overhead includes swapping a page out and swapping a page in
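To get a feel for how sensitive EAT is to the page-fault rate, the formula can be evaluated directly. The 200 ns memory access time and 8 ms fault service time below are assumed figures for illustration; the slide itself gives no numbers.

```python
def effective_access_time(p, memory_access_ns, fault_service_ns):
    """EAT = (1 - p) * memory access time + p * page-fault service time."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("page-fault rate p must be in [0, 1]")
    return (1 - p) * memory_access_ns + p * fault_service_ns


# Assumed figures: 200 ns per memory access, 8 ms to service one fault.
MEMORY_NS = 200
FAULT_NS = 8_000_000

eat = effective_access_time(1 / 1000, MEMORY_NS, FAULT_NS)
slowdown = eat / MEMORY_NS
```

Under these assumptions, one fault per 1,000 references gives an EAT of about 8,200 ns, roughly 41 times slower than a plain memory access, so the fault rate has to be kept very low.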

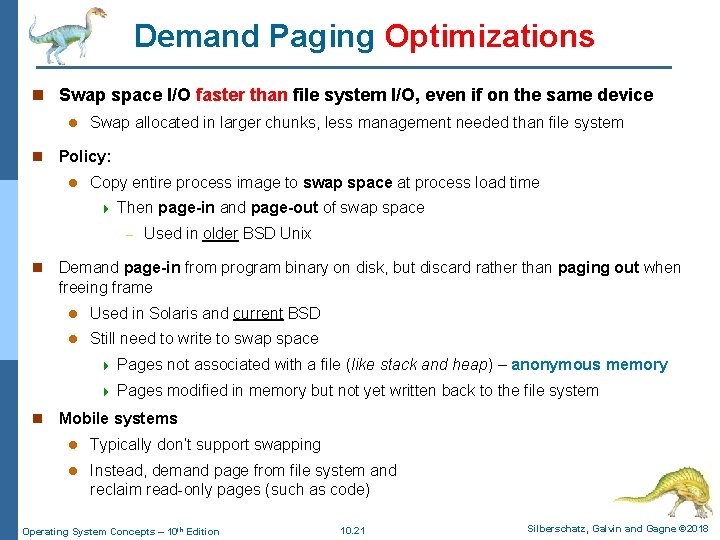

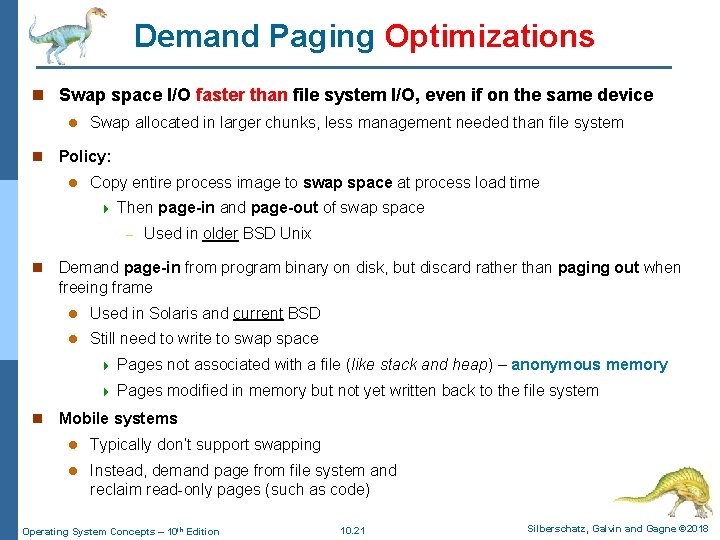

Demand Paging Optimizations

- Swap space I/O is faster than file system I/O, even if on the same device
  - Swap is allocated in larger chunks, so less management is needed than for the file system
- Policy: copy the entire process image to swap space at process load time
  - Then page in and page out of swap space
  - Used in older BSD Unix
- Policy: demand page in from the program binary on disk, but discard rather than page out when freeing a frame
  - Used in Solaris and current BSD
  - Still need to write to swap space
    - Pages not associated with a file (like stack and heap): anonymous memory
    - Pages modified in memory but not yet written back to the file system
- Mobile systems
  - Typically don’t support swapping
  - Instead, demand page from the file system and reclaim read-only pages (such as code)

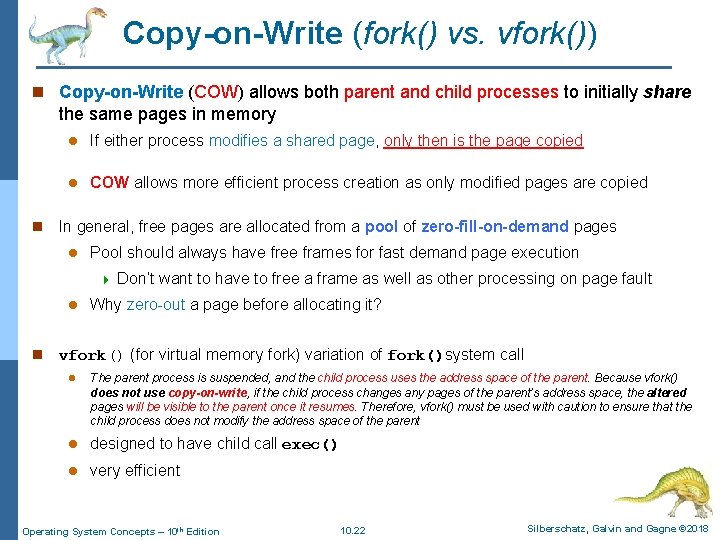

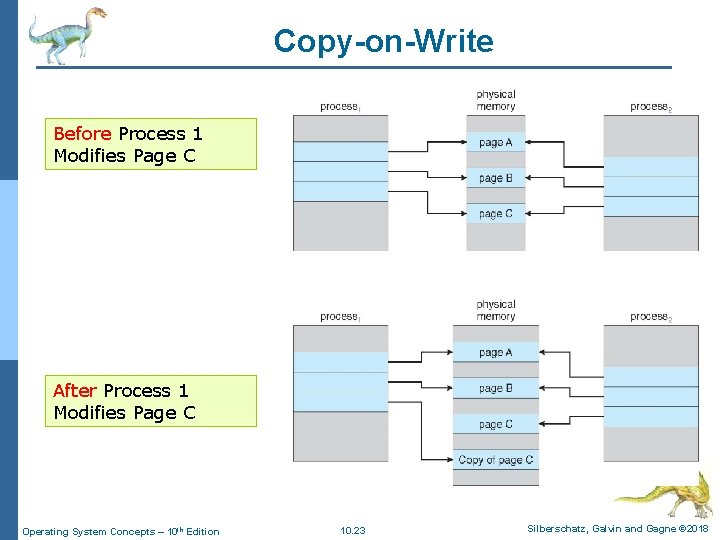

Copy-on-Write (fork() vs. vfork())

- Copy-on-Write (COW) allows both parent and child processes to initially share the same pages in memory
  - If either process modifies a shared page, only then is the page copied
  - COW allows more efficient process creation as only modified pages are copied
- In general, free pages are allocated from a pool of zero-fill-on-demand pages
  - The pool should always have free frames for fast demand page execution
    - Don’t want to have to free a frame as well as other processing on page fault
  - Why zero-out a page before allocating it?
- vfork() (for virtual memory fork) is a variation of the fork() system call
  - The parent process is suspended, and the child process uses the address space of the parent
  - Because vfork() does not use copy-on-write, if the child process changes any pages of the parent’s address space, the altered pages will be visible to the parent once it resumes
  - Therefore, vfork() must be used with caution to ensure that the child process does not modify the address space of the parent
  - Designed to have the child call exec()
  - Very efficient
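The sharing-then-copying behavior of COW can be modeled with reference counts on frames. This is a simulation only: the `Frame` and `Process` classes are hypothetical, and a real kernel does this with page-table protection bits rather than Python objects.

```python
class Frame:
    def __init__(self, data):
        self.data = data
        self.refs = 1              # number of page tables pointing at this frame


class Process:
    def __init__(self, pages):
        self.pages = pages         # page name -> Frame

    def fork(self):
        # The child shares every frame with the parent; nothing is copied yet.
        for frame in self.pages.values():
            frame.refs += 1
        return Process(dict(self.pages))

    def write(self, page, data):
        frame = self.pages[page]
        if frame.refs > 1:         # shared page: copy it before modifying
            frame.refs -= 1
            frame = Frame(frame.data)
            self.pages[page] = frame
        frame.data = data
```

After `child = parent.fork()`, a call such as `child.write("C", ...)` gives the child its own copy of page C, while every untouched page is still shared with the parent.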

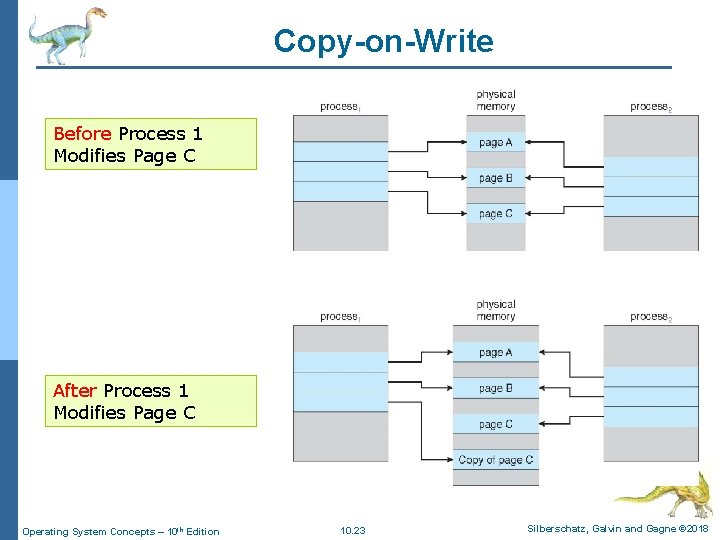

Copy-on-Write

- Before Process 1 Modifies Page C
- After Process 1 Modifies Page C

Page Replacement Algorithms

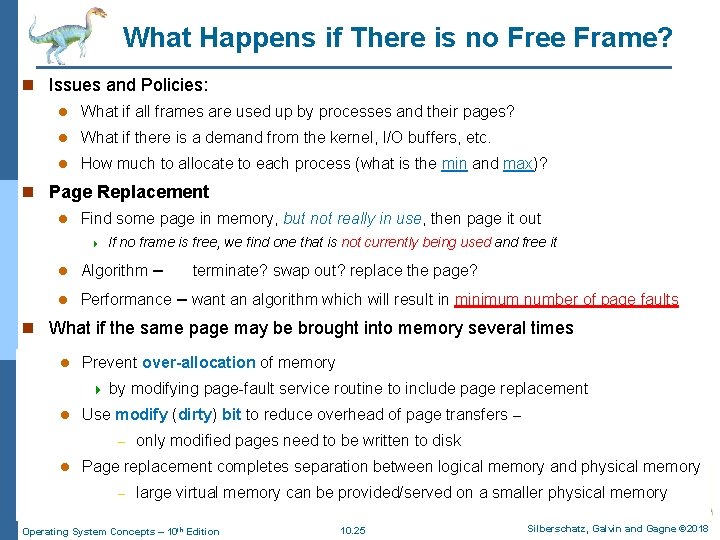

What Happens if There is no Free Frame?

- Issues and policies:
  - What if all frames are used up by processes and their pages?
  - What if there is also demand from the kernel, I/O buffers, etc.?
  - How much to allocate to each process (what is the min and max)?
- Page replacement
  - Find some page in memory that is not really in use, then page it out
    - If no frame is free, we find one that is not currently being used and free it
  - Algorithm: terminate? swap out? replace the page?
  - Performance: want an algorithm which will result in the minimum number of page faults
- What if the same page is brought into memory several times?
  - Prevent over-allocation of memory
    - by modifying the page-fault service routine to include page replacement
  - Use the modify (dirty) bit to reduce the overhead of page transfers
    - only modified pages need to be written to disk
  - Page replacement completes the separation between logical memory and physical memory
    - a large virtual memory can be provided on a smaller physical memory

Need For Page Replacement

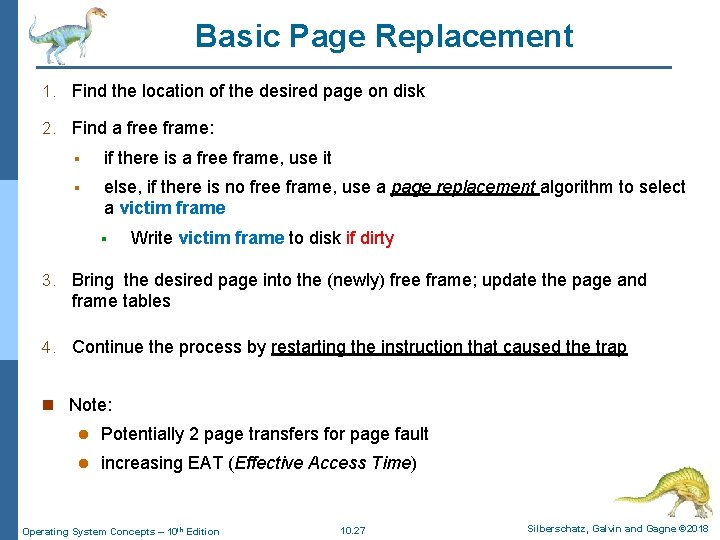

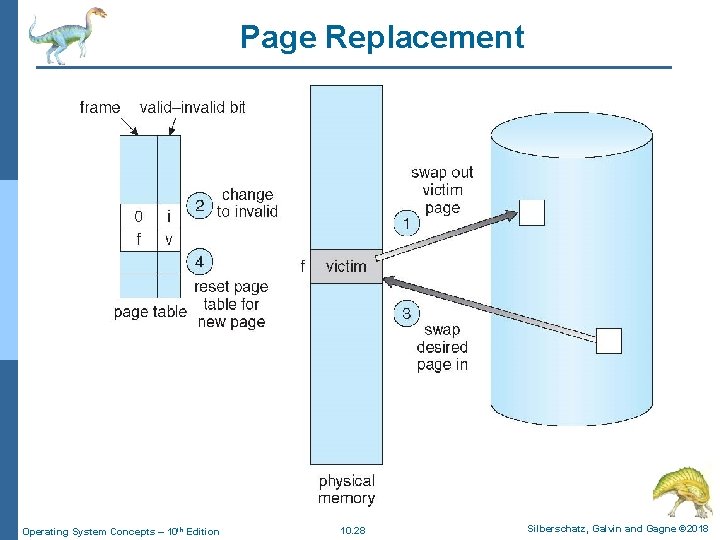

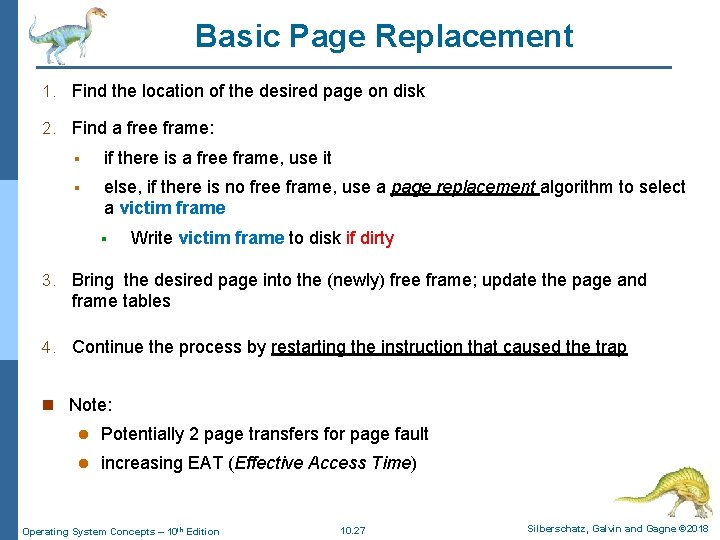

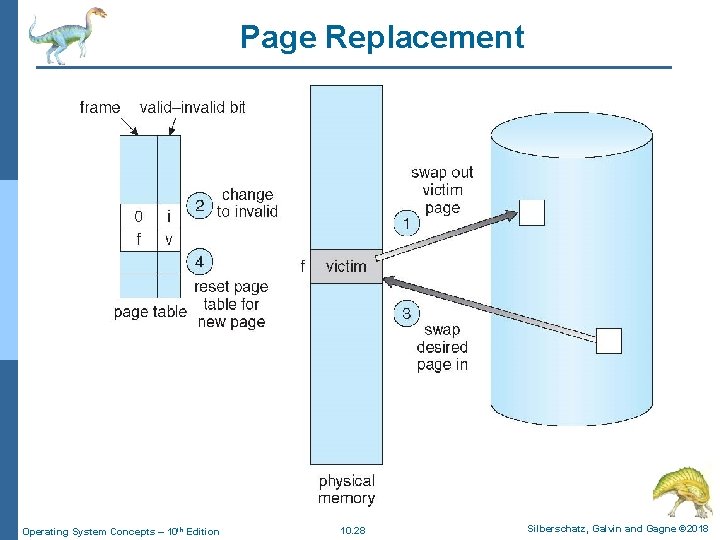

Basic Page Replacement

1. Find the location of the desired page on disk
2. Find a free frame:
   - If there is a free frame, use it
   - If there is no free frame, use a page-replacement algorithm to select a victim frame
   - Write the victim frame to disk if dirty
3. Bring the desired page into the (newly) free frame; update the page and frame tables
4. Continue the process by restarting the instruction that caused the trap

- Note: potentially 2 page transfers per page fault, increasing EAT (Effective Access Time)
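The effect of the dirty bit on the number of page transfers can be seen in a small simulation of the steps above. The `run` function is a hypothetical helper, and victim selection uses plain FIFO order purely as a placeholder for whichever replacement algorithm is in use.

```python
from collections import deque


def run(references, num_frames):
    """references: (page, is_write) pairs. Returns (faults, disk_transfers)."""
    resident = deque()    # pages in memory, oldest first (placeholder victim choice)
    dirty = set()         # resident pages modified since they were loaded
    faults = transfers = 0
    for page, is_write in references:
        if page not in resident:
            faults += 1
            if len(resident) == num_frames:     # no free frame
                victim = resident.popleft()     # select a victim frame
                if victim in dirty:             # write back only if dirty
                    dirty.discard(victim)
                    transfers += 1
            resident.append(page)               # read the desired page in
            transfers += 1
        if is_write:
            dirty.add(page)
    return faults, transfers
```

With two frames, the references `[(1, True), (2, False), (3, False)]` cause three faults and four transfers, because evicting the modified page 1 costs an extra write; if page 1 is only read, the same three faults cost three transfers.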

Page Replacement

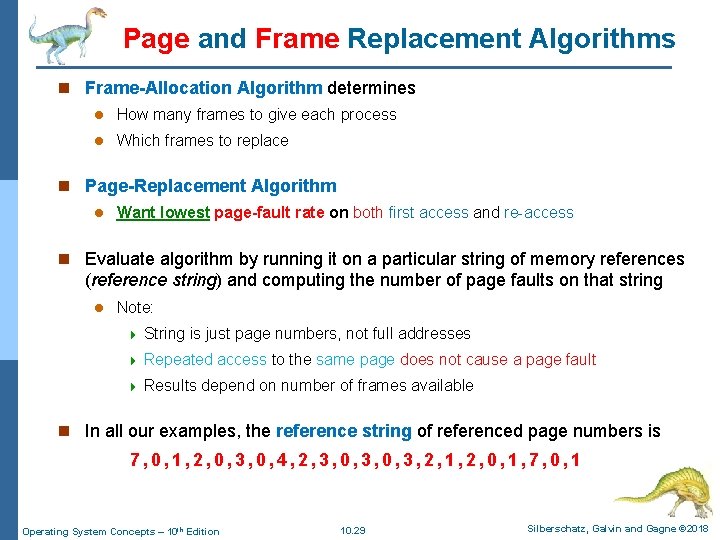

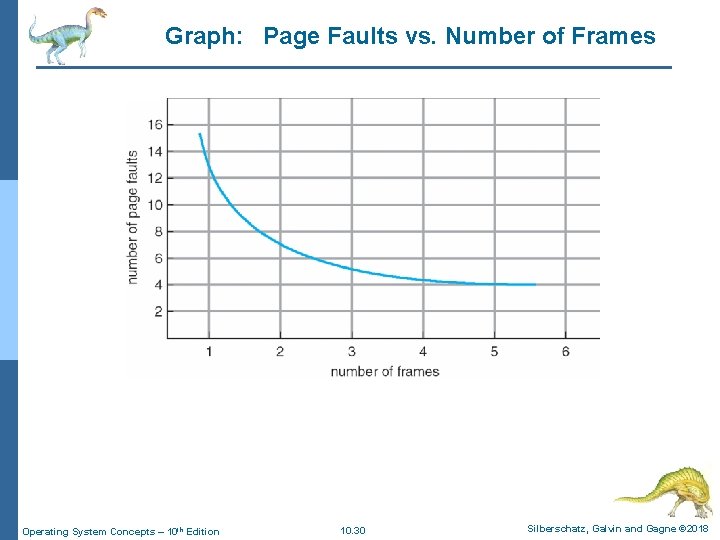

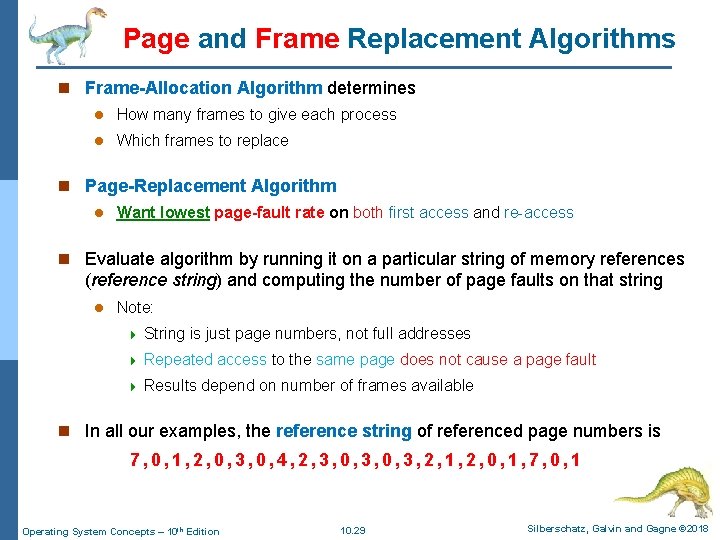

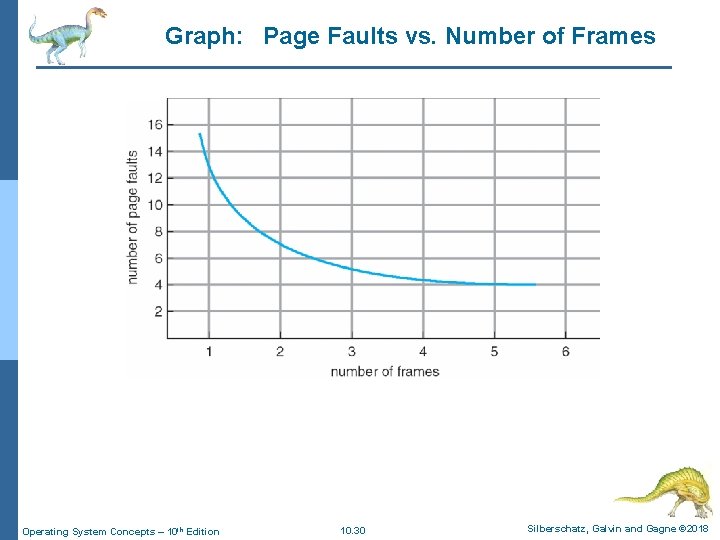

Page and Frame Replacement Algorithms

- Frame-allocation algorithm determines
  - How many frames to give each process
  - Which frames to replace
- Page-replacement algorithm
  - Want the lowest page-fault rate on both first access and re-access
- Evaluate an algorithm by running it on a particular string of memory references (reference string) and computing the number of page faults on that string
  - Note:
    - The string is just page numbers, not full addresses
    - An immediately repeated access to the same page does not cause a page fault
    - Results depend on the number of frames available
- In all our examples, the reference string of referenced page numbers is 7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1

Graph: Page Faults vs. Number of Frames

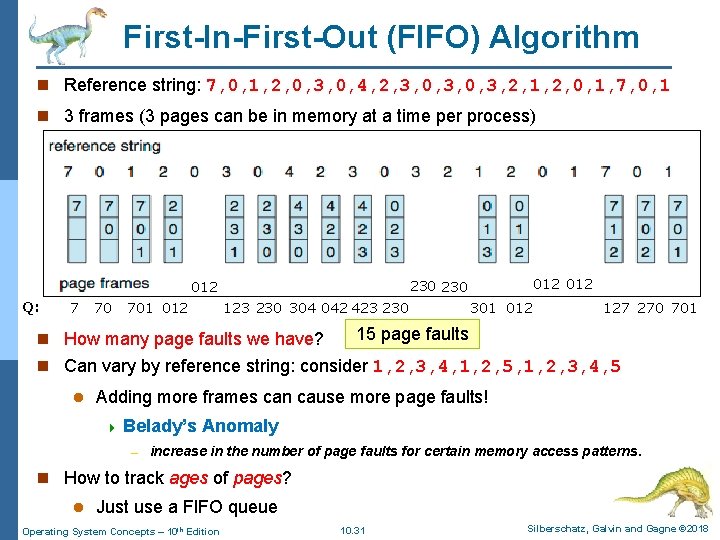

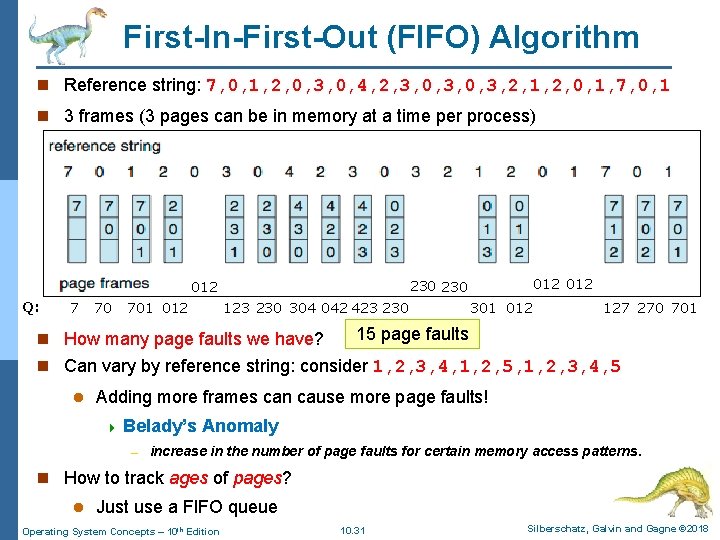

First-In-First-Out (FIFO) Algorithm

- Reference string: 7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1
- 3 frames (3 pages can be in memory at a time per process)
- Queue contents after each page fault (oldest page first): 7, 70, 701, 012, 123, 230, 304, 042, 423, 230, 301, 012, 127, 270, 701
- How many page faults do we have? 15 page faults
- Can vary by reference string: consider 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5
  - Adding more frames can cause more page faults!
    - Belady’s Anomaly: for certain memory access patterns, the number of page faults increases when more frames are allocated
- How to track ages of pages?
  - Just use a FIFO queue
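The fault counts above are easy to reproduce with a short simulation. The function name `fifo_faults` and the constants are illustrative; the two reference strings are the ones given on the slide.

```python
from collections import deque


def fifo_faults(reference_string, num_frames):
    queue = deque()                 # oldest resident page at the left
    faults = 0
    for page in reference_string:
        if page in queue:
            continue                # hit: FIFO order is unchanged
        faults += 1
        if len(queue) == num_frames:
            queue.popleft()         # evict the page that arrived first
        queue.append(page)
    return faults


REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
BELADY = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
```

`fifo_faults(REFS, 3)` returns 15. For the second string, 3 frames give 9 faults while 4 frames give 10, which is Belady's Anomaly in action.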

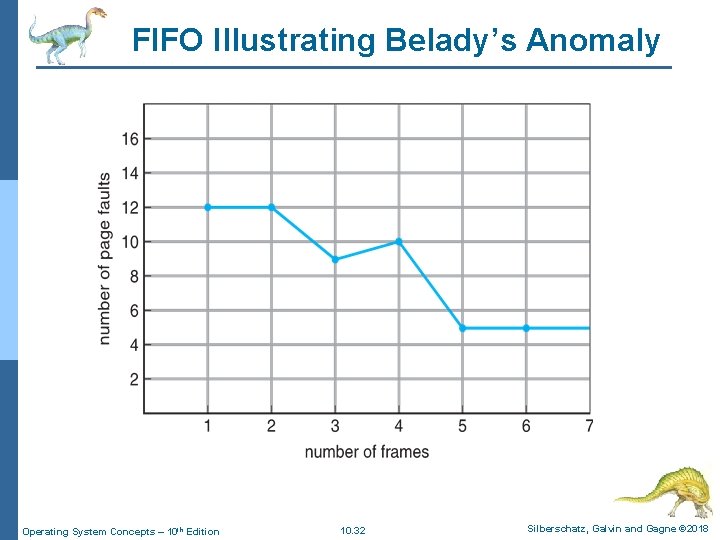

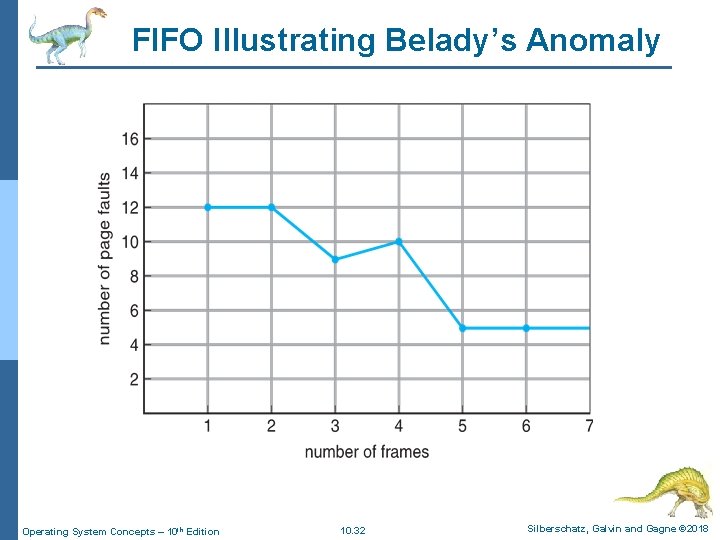

FIFO Illustrating Belady’s Anomaly

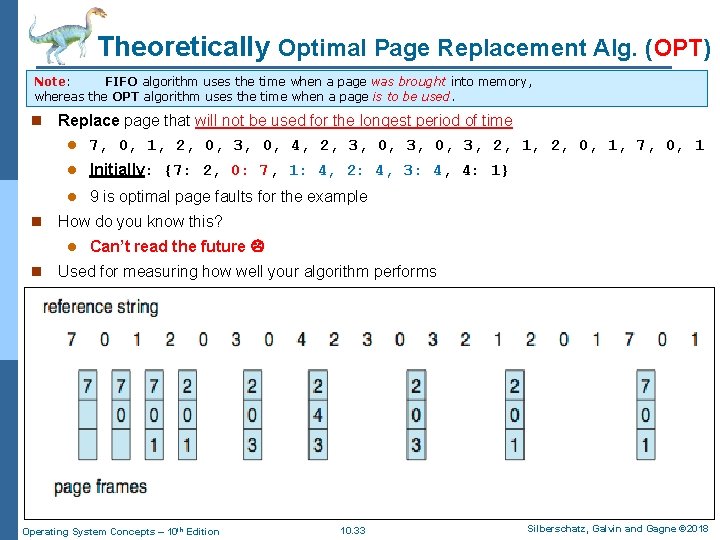

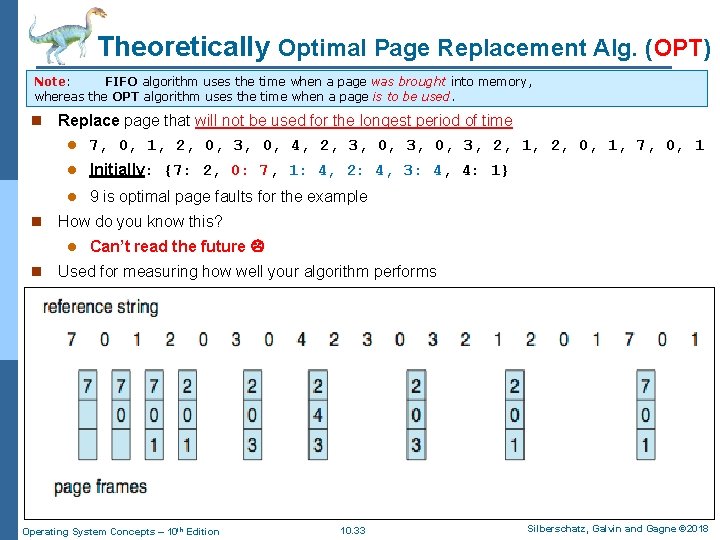

Theoretically Optimal Page Replacement Alg. (OPT)

- Note: the FIFO algorithm uses the time when a page was brought into memory, whereas the OPT algorithm uses the time when a page is next to be used
- Replace the page that will not be used for the longest period of time
  - 7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1
  - Initially: {7: 2, 0: 7, 1: 4, 2: 4, 3: 4, 4: 1}
  - 9 page faults is optimal for the example
- How do you know this?
  - Can’t read the future
- Used for measuring how well your algorithm performs
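Although OPT cannot be implemented in a running system, it can be simulated offline when the whole reference string is known in advance. The sketch below is one straightforward way to do that; `opt_faults` is a hypothetical name, and the linear scan for each page's next use favors clarity over speed.

```python
def opt_faults(reference_string, num_frames):
    frames = []
    faults = 0
    for i, page in enumerate(reference_string):
        if page in frames:
            continue
        faults += 1
        if len(frames) < num_frames:
            frames.append(page)
            continue
        future = reference_string[i + 1:]

        def next_use(p):
            # Distance to the next reference; never-used-again sorts as farthest.
            return future.index(p) if p in future else len(future)

        victim = max(frames, key=next_use)      # used farthest in the future
        frames[frames.index(victim)] = page
    return faults


REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
```

On the example reference string with 3 frames this yields 9 faults, the figure quoted above, so it can serve as the baseline against which other algorithms are measured.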

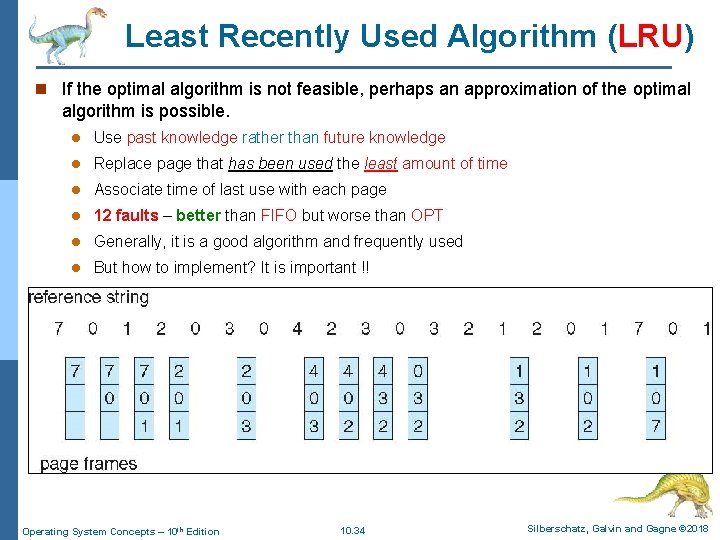

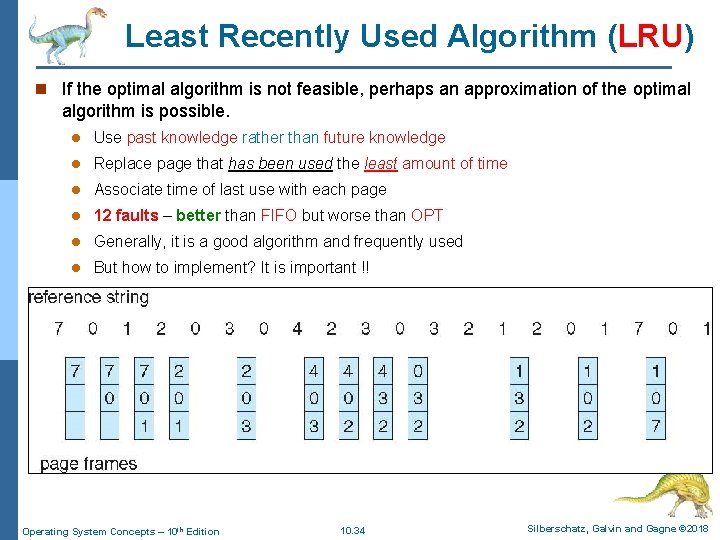

Least Recently Used Algorithm (LRU)

- If the optimal algorithm is not feasible, perhaps an approximation of the optimal algorithm is possible
  - Use past knowledge rather than future knowledge
  - Replace the page that has not been used for the longest period of time
  - Associate time of last use with each page
  - 12 faults on the example reference string: better than FIFO but worse than OPT
  - Generally, it is a good algorithm and frequently used
  - But how to implement it? This is important!
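A direct way to simulate LRU is to record the time of last use for each resident page and evict the page with the smallest timestamp. The function below is a sketch of that idea; using the index into the reference string as the clock is an assumption made for simplicity.

```python
def lru_faults(reference_string, num_frames):
    last_use = {}                   # resident page -> time of last reference
    faults = 0
    for clock, page in enumerate(reference_string):
        if page not in last_use:
            faults += 1
            if len(last_use) == num_frames:
                victim = min(last_use, key=last_use.get)   # oldest timestamp
                del last_use[victim]
        last_use[page] = clock      # record the time of this use
    return faults


REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
```

With 3 frames this gives 12 faults on the example string, between OPT (9) and FIFO (15). Note that finding the victim requires a search over all resident pages.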

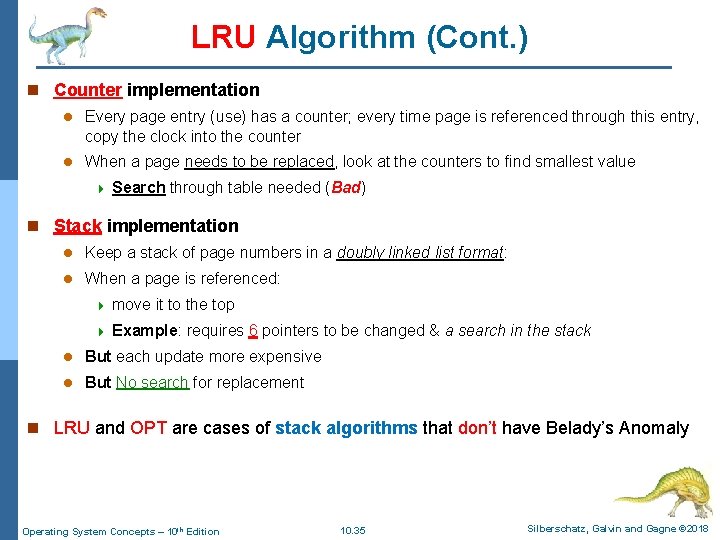

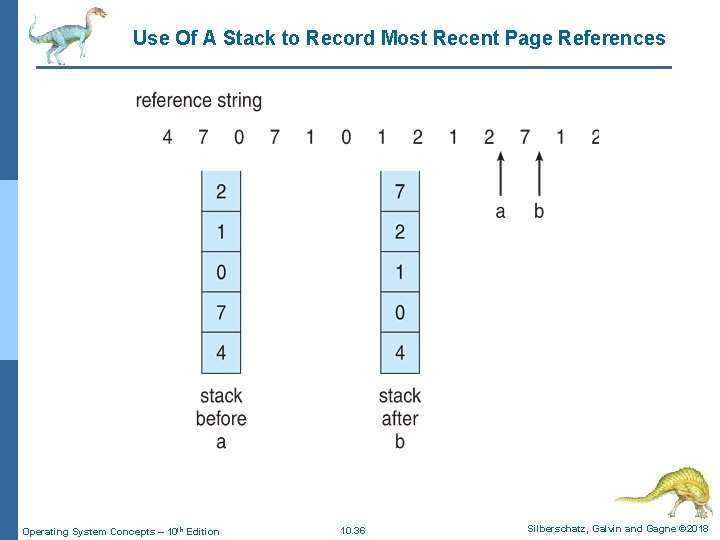

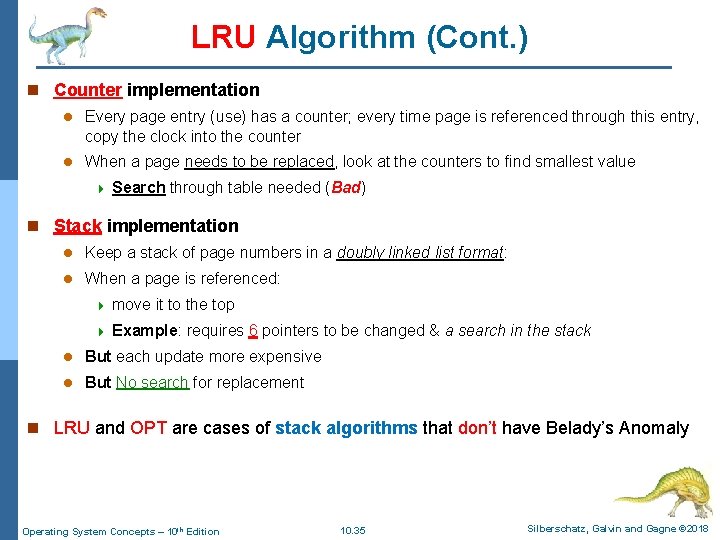

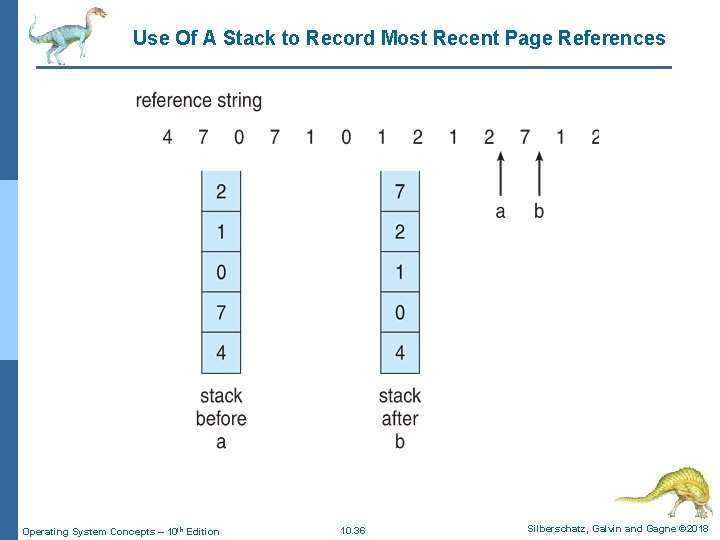

LRU Algorithm (Cont.)

- Counter implementation
  - Every page entry has a counter; every time the page is referenced through this entry, copy the clock into the counter
  - When a page needs to be replaced, look at the counters to find the smallest value
    - Search through the table needed (bad)
- Stack implementation
  - Keep a stack of page numbers in a doubly linked list
  - When a page is referenced:
    - Move it to the top
    - Example: requires 6 pointers to be changed and a search in the stack
  - Each update is more expensive
  - But no search for replacement
- LRU and OPT are cases of stack algorithms that don’t have Belady’s Anomaly
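The stack implementation can be sketched with Python's `collections.OrderedDict`, which keeps keys in order and can move a key to the end cheaply. The `LRUStack` class is a hypothetical illustration, with the end of the dictionary playing the role of the top of the stack.

```python
from collections import OrderedDict


class LRUStack:
    def __init__(self, num_frames):
        self.num_frames = num_frames
        self.stack = OrderedDict()  # last key = top = most recently used
        self.faults = 0

    def reference(self, page):
        if page in self.stack:
            self.stack.move_to_end(page)        # move the page to the top
            return
        self.faults += 1
        if len(self.stack) == self.num_frames:
            self.stack.popitem(last=False)      # bottom of the stack is the LRU page
        self.stack[page] = True
```

The victim is always at the bottom, so no search is needed at replacement time; the cost is paid on every reference instead, when the page is moved to the top.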

Use Of A Stack to Record Most Recent Page References

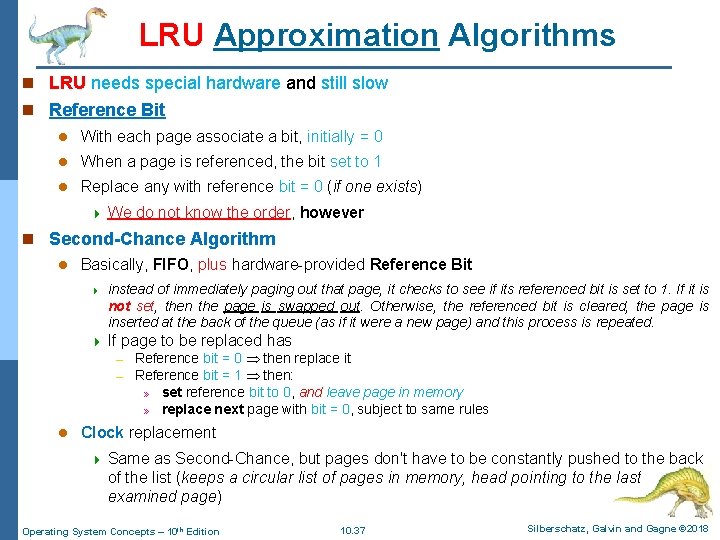

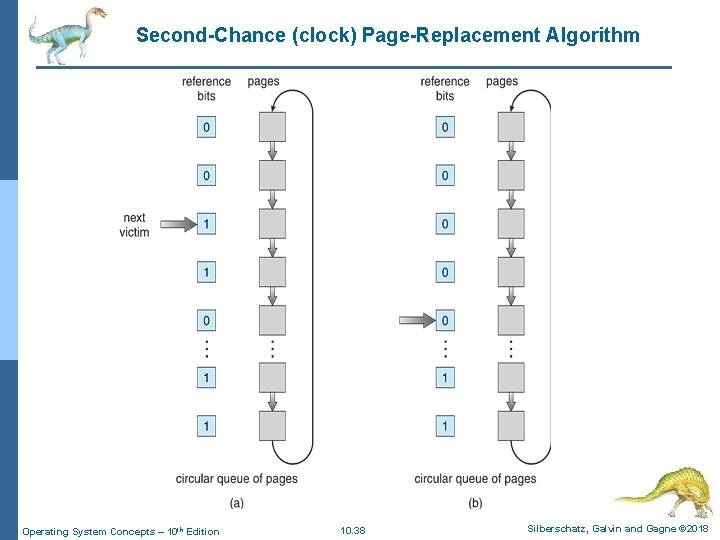

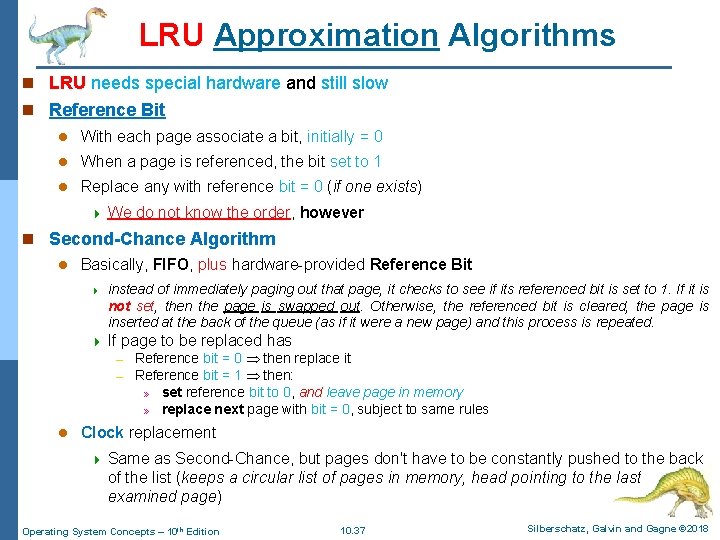

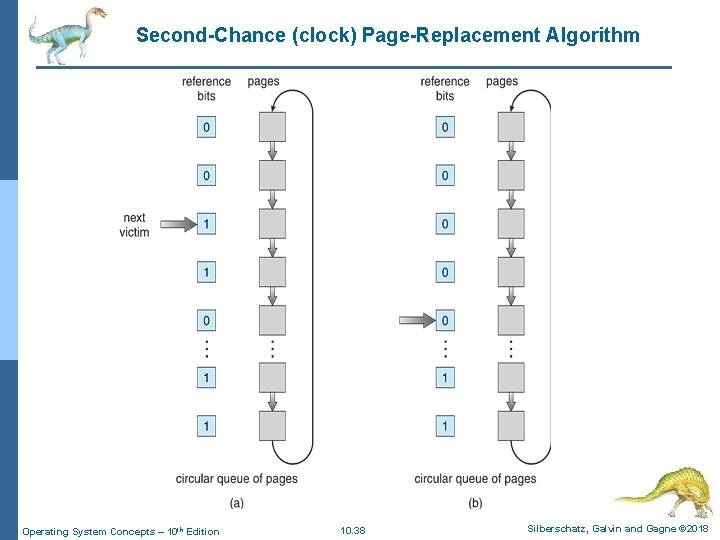

LRU Approximation Algorithms

- LRU needs special hardware and is still slow
- Reference bit
  - With each page associate a bit, initially = 0
  - When a page is referenced, the bit is set to 1
  - Replace any page with reference bit = 0 (if one exists)
    - We do not know the order, however
- Second-chance algorithm
  - Basically FIFO, plus a hardware-provided reference bit
    - Instead of immediately paging out the page at the front of the queue, it checks whether that page's reference bit is set to 1. If it is not set, the page is swapped out. Otherwise, the reference bit is cleared, the page is inserted at the back of the queue (as if it were a new page), and this process is repeated.
  - If the page to be replaced has
    - Reference bit = 0, then replace it
    - Reference bit = 1, then:
      - set the reference bit to 0, and leave the page in memory
      - replace the next page with bit = 0, subject to the same rules
- Clock replacement
  - Same as second-chance, but pages don't have to be constantly pushed to the back of the list (keeps a circular list of pages in memory, with the head pointing to the last examined page)
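The clock variant can be simulated with a fixed list of frames and a hand that advances around it. This is a sketch under two assumptions not stated on the slide: a newly loaded page starts with its reference bit set to 1 (it was just referenced), and the hand is stored as the index of the next frame to examine.

```python
def clock_faults(reference_string, num_frames):
    frames = [None] * num_frames    # circular list of resident pages
    ref_bit = [0] * num_frames
    hand = 0                        # index of the next frame to examine
    faults = 0
    for page in reference_string:
        if page in frames:
            ref_bit[frames.index(page)] = 1     # hardware sets the bit on use
            continue
        faults += 1
        while ref_bit[hand] == 1:               # give the page a second chance
            ref_bit[hand] = 0
            hand = (hand + 1) % num_frames
        frames[hand] = page                     # replace the victim
        ref_bit[hand] = 1                       # assumed: a new page counts as referenced
        hand = (hand + 1) % num_frames
    return faults
```

On the string 1, 2, 3, 4, 2, 5, 2 with 3 frames, page 2 is referenced again after its bit was cleared and so survives the next sweep, giving 5 faults where plain FIFO would incur 6.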

Second-Chance (clock) Page-Replacement Algorithm

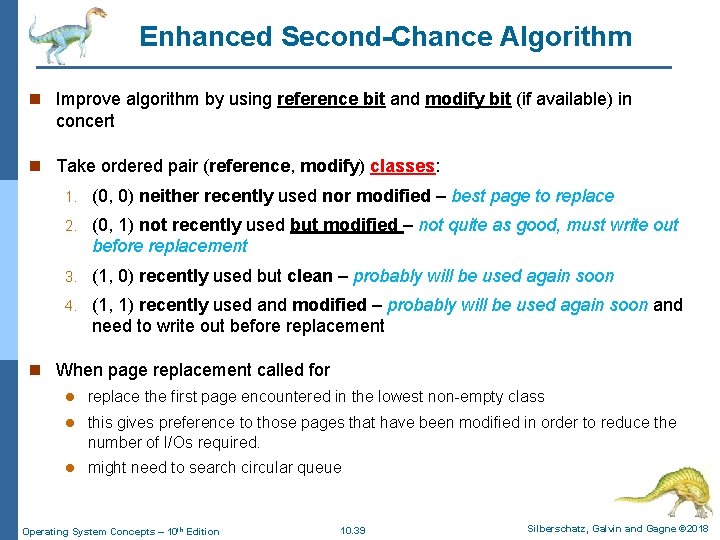

Enhanced Second-Chance Algorithm

- Improve the algorithm by using the reference bit and modify bit (if available) in concert
- Take the ordered pair (reference, modify), which gives four classes:
  1. (0, 0) neither recently used nor modified: best page to replace
  2. (0, 1) not recently used but modified: not quite as good, must write out before replacement
  3. (1, 0) recently used but clean: probably will be used again soon
  4. (1, 1) recently used and modified: probably will be used again soon and need to write out before replacement
- When page replacement is called for
  - Replace the first page encountered in the lowest non-empty class
  - Among pages with the same reference bit, clean pages are replaced before modified ones, which reduces the number of I/Os required
  - Might need to search the circular queue several times
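The class ordering maps neatly onto tuple comparison. The helper below shows only the victim-selection rule; it is a simplification that omits the circular scan and the clearing of reference bits, and `pick_victim` is a hypothetical name.

```python
def pick_victim(pages):
    """pages: list of (name, reference_bit, modify_bit) in circular-queue order."""
    # Tuples compare lexicographically, so (0, 0) < (0, 1) < (1, 0) < (1, 1)
    # matches classes 1 to 4; min() returns the first page in the lowest class.
    return min(pages, key=lambda p: (p[1], p[2]))[0]
```

For example, given pages A (1, 1), B (0, 1), C (1, 0), and D (0, 1) in that order, the lowest non-empty class is (0, 1), and B is chosen because it is encountered first.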

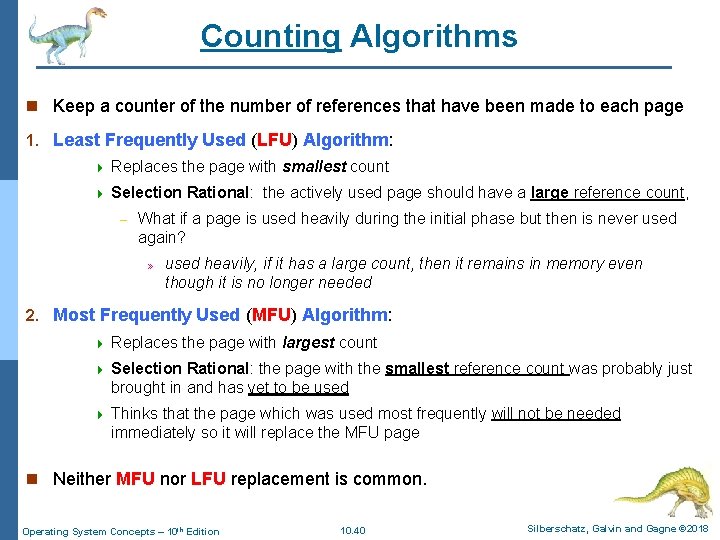

Counting Algorithms

- Keep a counter of the number of references that have been made to each page

1. Least Frequently Used (LFU) algorithm:
   - Replaces the page with the smallest count
   - Selection rationale: an actively used page should have a large reference count
     - What if a page is used heavily during the initial phase but then is never used again?
       - Because it has a large count, it remains in memory even though it is no longer needed
2. Most Frequently Used (MFU) algorithm:
   - Replaces the page with the largest count
   - Selection rationale: the page with the smallest reference count was probably just brought in and has yet to be used
   - Assumes that the page which was used most frequently will not be needed immediately, so it replaces the MFU page

- Neither MFU nor LFU replacement is common
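Both counting policies can be simulated with one function that differs only in whether it evicts the smallest or the largest count. This sketch makes two assumptions the slide leaves open: a page's count is forgotten when it is evicted, and ties are broken in favor of the page loaded earliest.

```python
from collections import Counter


def counting_faults(reference_string, num_frames, policy="LFU"):
    counts = Counter()              # reference count per resident page
    faults = 0
    for page in reference_string:
        if page not in counts:
            faults += 1
            if len(counts) == num_frames:
                choose = min if policy == "LFU" else max
                victim = choose(counts, key=counts.get)
                del counts[victim]  # assumed: the count is dropped on eviction
        counts[page] += 1
    return faults
```

The string 1, 1, 1, 1, 2, 3, 4, 3, 4 with 2 frames shows the LFU weakness described above: page 1 keeps its high count and stays resident while pages 3 and 4 evict each other, for 6 faults, whereas MFU incurs 4.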

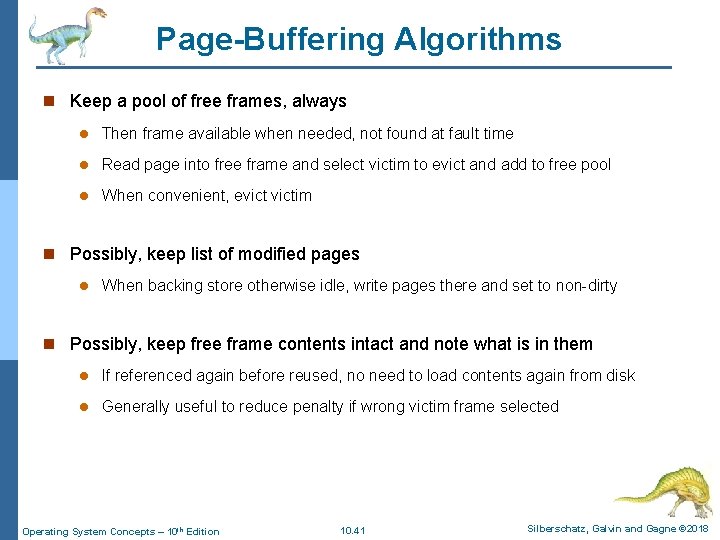

Page-Buffering Algorithms

- Keep a pool of free frames, always
  - Then a frame is available when needed, not found at fault time
  - Read the page into a free frame and select a victim to evict and add to the free pool
  - When convenient, evict the victim
- Possibly, keep a list of modified pages
  - When the backing store is otherwise idle, write pages there and set them to non-dirty
- Possibly, keep free frame contents intact and note what is in them
  - If referenced again before reused, no need to load contents again from disk
  - Generally useful to reduce the penalty if the wrong victim frame was selected

Applications and Page Replacement

- All of these algorithms have the OS guessing about future page access
- Some applications have better knowledge, e.g., databases
- Memory-intensive applications can cause double buffering
  - OS keeps a copy of the page in memory as an I/O buffer
  - Application keeps the page in memory for its own work
- The operating system can give direct access to the disk, getting out of the way of the applications
  - Raw disk mode
- Bypasses buffering, locking, etc.

Thrashing

Thrashing

- If a process does not have “enough” pages, the page-fault rate is very high
  - Page fault to get page
  - Replace existing frame
  - But quickly need the replaced frame back: a constant state of paging and page faults
  - This (thrashing) leads to:
    - Low CPU utilization
    - Operating system thinking that it needs to increase the degree of multiprogramming
    - Another process added to the system

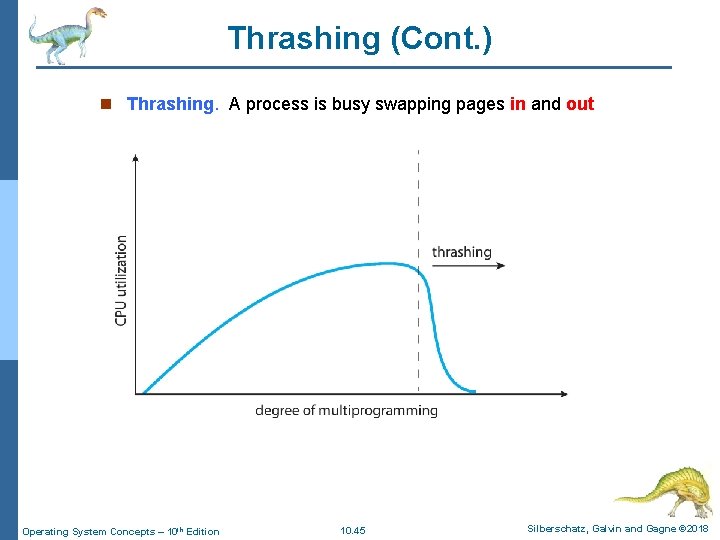

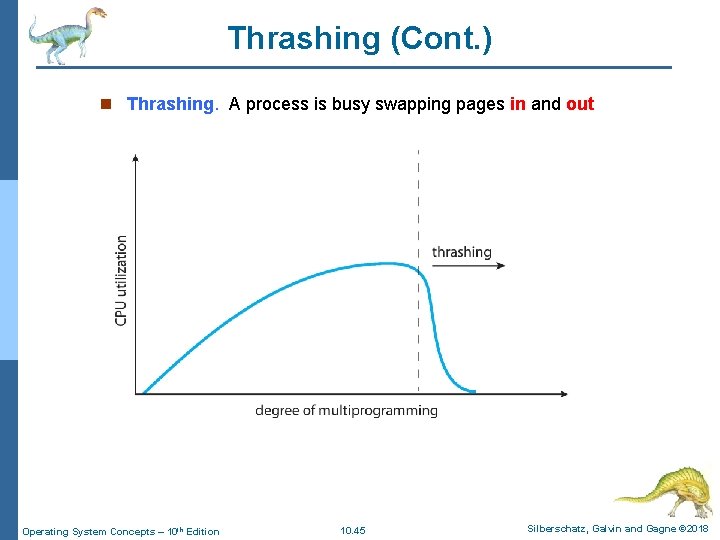

Thrashing (Cont.)

- Thrashing: a process is busy swapping pages in and out

End of Chapter 10

Demand Paging and Thrashing

- Why does demand paging work? Locality model
  - Process migrates from one locality to another
  - Localities may overlap
- Why does thrashing occur? Σ size of locality > total memory size
- Limit effects by using local or priority page replacement
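The relationship between locality size and fault rate can be demonstrated with a small experiment. The sketch assumes LRU replacement and a hypothetical process that loops over a locality of 4 pages; neither choice comes from the slide.

```python
from collections import OrderedDict


def fault_rate(reference_string, num_frames):
    """Fraction of references that fault under LRU replacement."""
    resident = OrderedDict()        # last key = most recently used
    faults = 0
    for page in reference_string:
        if page in resident:
            resident.move_to_end(page)
        else:
            faults += 1
            if len(resident) == num_frames:
                resident.popitem(last=False)    # evict the least recently used
            resident[page] = True
    return faults / len(reference_string)


# Hypothetical process looping over a locality of 4 pages, 100 times.
loop = [0, 1, 2, 3] * 100
```

With 4 frames the locality fits and only the first 4 of 400 references fault (a rate of 0.01). With 3 frames every reference faults (a rate of 1.0), because each page is evicted just before it is needed again.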

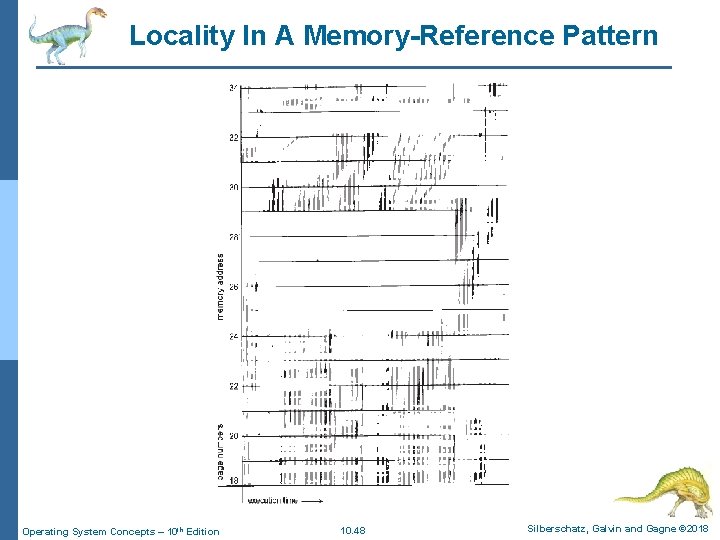

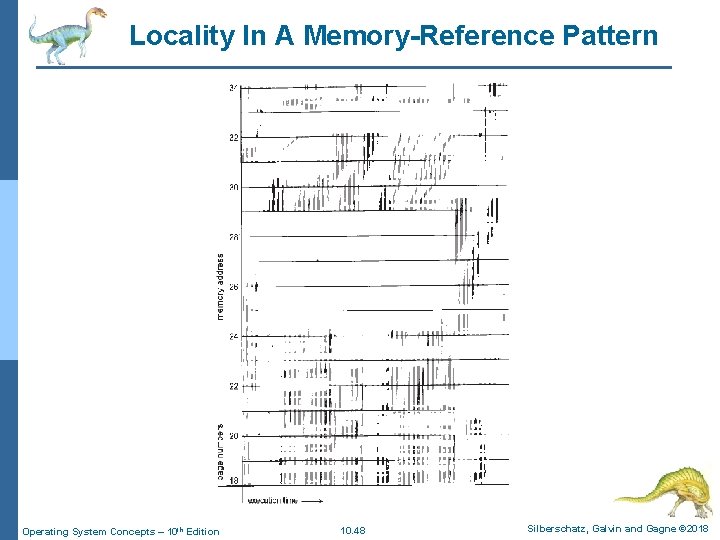

Locality In A Memory-Reference Pattern