
Microsoft BARC: Bay Area Research Center
Tom Barclay, Tyler Beam (U VA)*, Gordon Bell, Joe Barrera, Josh Coates (UCB)*, Jim Gemmell, Jim Gray, Steve Lucco, Erik Riedel (CMU)*, Eve Schooler (Cal Tech), Don Slutz, Catherine Van Ingen (NTFS)*
http://www.research.Microsoft.com/barc/
1

Overview
• Telepresence
» Goals
» Prototypes
• Rags: automating software testing
• Scaleable Systems.
» Goals
» Prototypes
• Misc.
2

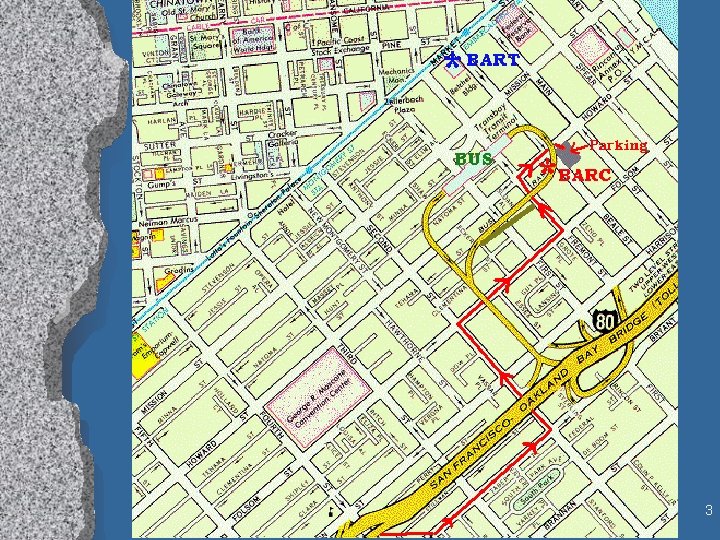

3

Telepresence: The next Killer App
• Space shifting:
» Reduce travel
• Time shifting:
» Retrospectives
» Condensations
» Just in time meetings.
• Example: ACM 97
» http://research.Microsoft.com/barc/acm97/
» NetShow and Web site.
» More web visitors than attendees
4

What We Are Doing
• Scalable Reliable Multicast (SRM)
» used by WB (white board) of Mbone
» Nack suppression (backoff)
» N² message traffic to set up
• Error Correcting SRM (EC SRM)
» Do not resend lost packets.
» Send Error Correction in addition to regular
» (or) Send Error Correction in response to NACK
» One EC packet repairs any of k lost packets
» Improved scaleability (millions of subscribers).
5

Telepresence Prototypes
• PowerCast: multicast PowerPoint
» Streaming - pre-sends next anticipated slide
» Send slides and voice rather than talking head and voice
» Uses ECSRM for reliable multicast
» 1000’s of receivers can join and leave any time.
» No server needed; no pre-load of slides.
» Cooperating with NetShow
• FileCast: multicast file transfer.
» Erasure encodes all packets
» Receivers only need to receive as many bytes as the length of the file
» Multicast IE to solve Midnight-Madness problem
• NT SRM: reliable IP multicast library for NT
• Spatialized Teleconference Station
» Texture map faces onto spheres
» Space map voices
6

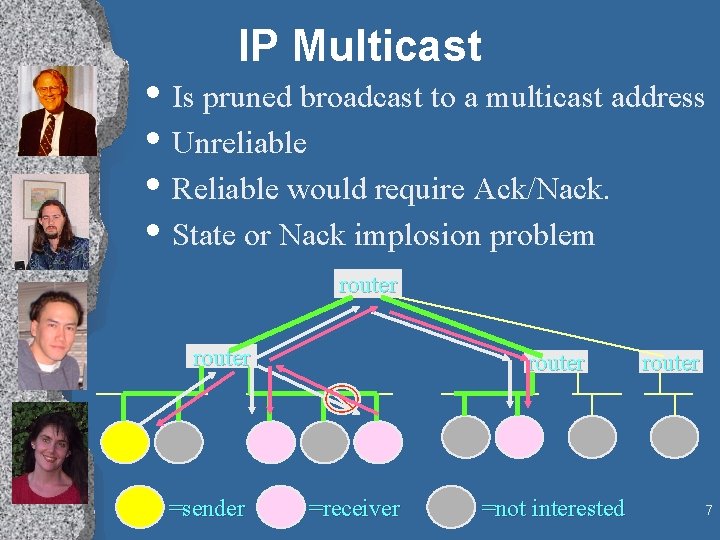

IP Multicast
• Is pruned broadcast to a multicast address
• Unreliable
• Reliable would require Ack/Nack.
• State or Nack implosion problem
[Figure legend: sender, receiver, not interested, router]
7

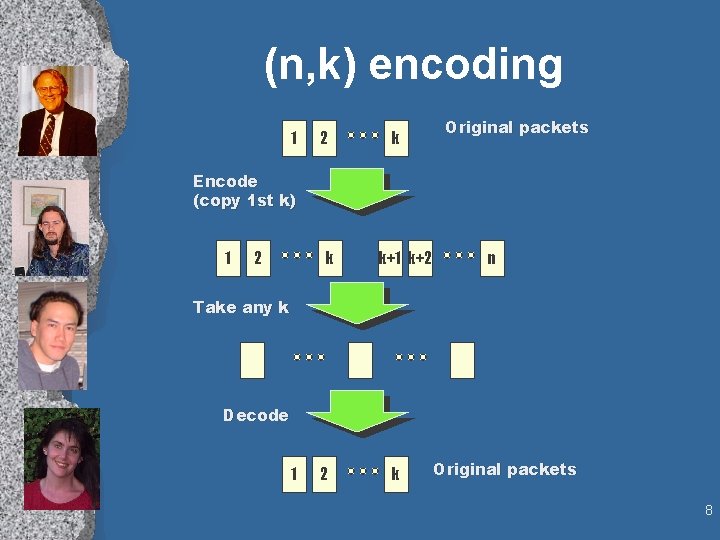

(n, k) encoding
[Figure: k original packets (1, 2, …, k) are encoded into n packets: copies of the first k, plus packets k+1, k+2, …, n. Take any k of the n packets and decode to recover the k original packets.]
8
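The "take any k" property can be illustrated with a toy code. This is only a sketch under stated assumptions, not the encoder Fcast or ECSRM actually uses: each "packet" is a single symbol, and the helper names (`encode`, `decode`, `_interpolate`) and the prime field GF(257) are choices made here for illustration. The k originals are treated as values of a polynomial at x = 0 … k-1, the extra packets are its values at x = k … n-1, and any k points recover the polynomial.

```python
"""Toy systematic (n, k) erasure code over the prime field GF(257) (illustrative only)."""

P = 257  # prime modulus (assumption); parity symbols may take the value 256


def _interpolate(points, x):
    """Evaluate at x the unique degree < k polynomial through k (xi, yi) points, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(originals, n):
    """Return n (index, symbol) packets; the first k are copies of the originals."""
    k = len(originals)
    base = list(enumerate(originals))
    return base + [(x, _interpolate(base, x)) for x in range(k, n)]


def decode(received, k):
    """Recover the k original symbols from any k distinct received packets."""
    points = received[:k]
    return [_interpolate(points, x) for x in range(k)]
```

With k = 4 and n = 7, for example, any three of the seven packets can be lost and the receiver still decodes the four originals.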

Fcast
• File transfer protocol
• FEC-only
• Files transmitted in parallel
9

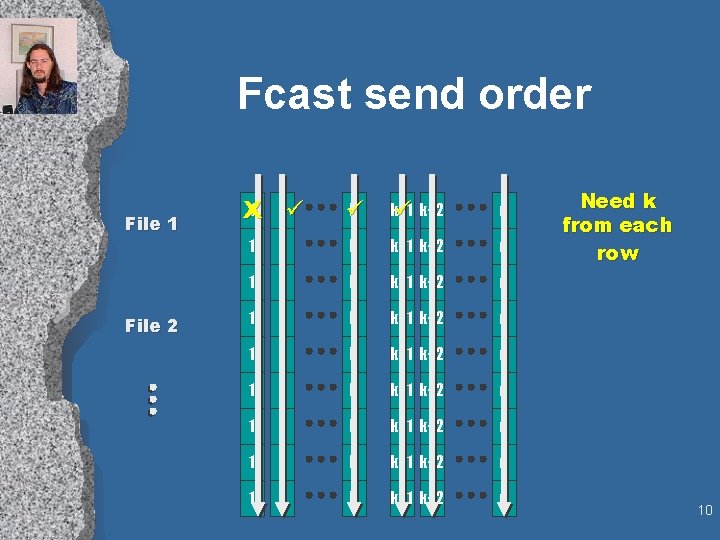

Fcast send order
[Figure: File 1 and File 2 are split into rows of packets 1, 2, …, k, k+1, k+2, …, n; check marks show packets received and X marks a lost packet.]
Need k from each row
10

ECSRM: Erasure Correcting SRM
• Combines:
» suppression
» erasure correction
11

Suppression
• Delay a NACK or repair in the hopes that someone else will do it.
• NACKs are multicast
• After NACKing, re-set timer and wait for repair
• If you hear a NACK that you were waiting to send, then re-set your timer as if you did send it.
12

ECSRM - adding FEC to suppression
• Assign each packet to an EC group of size k
• NACK: (group, # missing)
• NACK of (g, c) suppresses all (g, x ≤ c).
• Don’t re-send originals; send EC packets using (n, k) encoding
13
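The suppression rule above can be sketched in a few lines. This is a minimal illustration, reading the rule as "a heard NACK (g, c) suppresses a pending NACK (g, x) whenever x ≤ c"; the `Nack` type and both function names are hypothetical. The second function assumes the sender answers each group with as many EC packets as the largest loss count it heard, which follows from the (n, k) property that any EC packet repairs any missing original.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nack:
    group: int    # EC group the receiver is reporting on
    missing: int  # number of packets it is missing from that group


def suppresses(heard: Nack, pending: Nack) -> bool:
    """A heard NACK (g, c) suppresses a pending NACK (g, x) when x <= c."""
    return heard.group == pending.group and pending.missing <= heard.missing


def ec_packets_to_send(nacks):
    """Per group, the largest loss count heard (assumed to be the number of EC packets multicast)."""
    need = {}
    for nack in nacks:
        need[nack.group] = max(need.get(nack.group, 0), nack.missing)
    return need
```

A receiver missing one packet of group 3 stays silent after hearing (3, 2); a receiver missing three packets of group 3 still has to send its own NACK.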

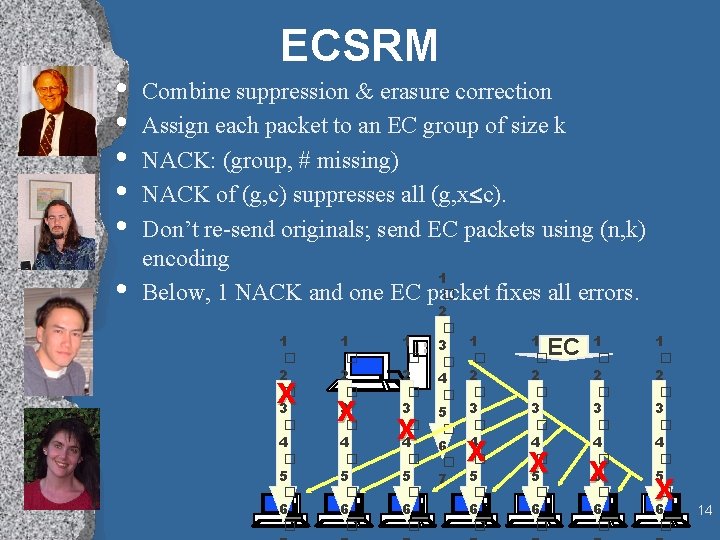

ECSRM
• Combine suppression & erasure correction
• Assign each packet to an EC group of size k
• NACK: (group, # missing)
• NACK of (g, c) suppresses all (g, x ≤ c).
• Don’t re-send originals; send EC packets using (n, k) encoding
• Below, 1 NACK and one EC packet fixes all errors.
[Figure: several receivers each lose a different packet (marked X) from a group of packets 1 to 7; a single EC packet repairs all of them.]
14

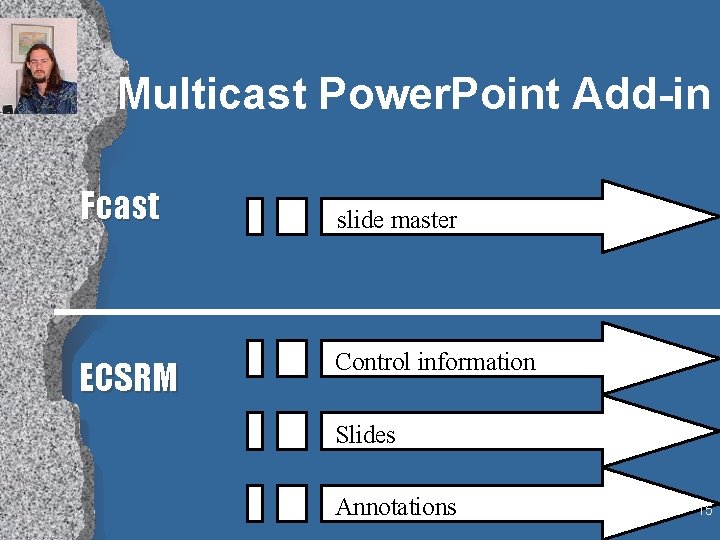

Multicast PowerPoint Add-in
[Figure labels: Fcast, ECSRM, slide master, Control information, Slides, Annotations]
15

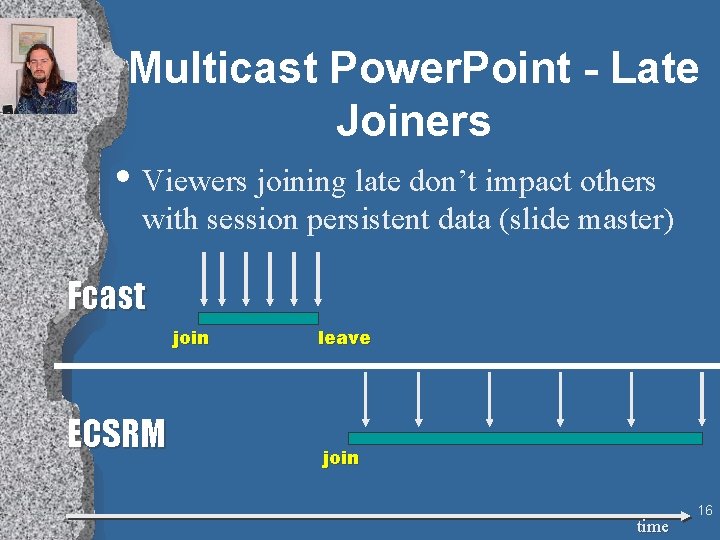

Multicast PowerPoint - Late Joiners
• Viewers joining late don’t impact others with session persistent data (slide master)
[Figure labels: Fcast, ECSRM; join, leave, join events along a time axis]
16

Future Work
• Adding hierarchy (e.g. PGM by Cisco)
• Do we need 2 protocols?
17

Spatialized Teleconferences
• Map heads to “Eggs”
• Project voices in stereo using “nose vector”
18

RAGS: RAndom SQL test Generator
• Microsoft spends a LOT of money on testing. (60% of development according to one source).
• Idea: test SQL by
» generating random correct queries
» executing queries against database
» comparing results with SQL 6.5, DB2, Oracle, Sybase
• Being used in SQL 7.0 testing.
» 375 unique bugs found (since 2/97)
» Very productive test tool
19
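The generate / execute / compare loop can be sketched on a very small scale. This is not RAGS: it is a hedged illustration in which an in-memory SQLite database stands in for the system under test and Python's own evaluator stands in for the second "vendor". It only generates integer expressions with +, - and * (division is left out because the two evaluators round differently), and the names `random_expr` and `find_suspects` are made up here.

```python
import random
import sqlite3


def random_expr(rng, depth):
    """Build a random, syntactically correct integer expression as text."""
    if depth == 0 or rng.random() < 0.3:
        return str(rng.randint(0, 9))
    if rng.random() < 0.2:
        return f"(-({random_expr(rng, depth - 1)}))"
    op = rng.choice(["+", "-", "*"])
    return f"({random_expr(rng, depth - 1)} {op} {random_expr(rng, depth - 1)})"


def find_suspects(count, seed=0, depth=4):
    """Run `count` random statements on both evaluators; return those that disagree."""
    rng = random.Random(seed)
    conn = sqlite3.connect(":memory:")
    suspects = []
    for _ in range(count):
        expr = random_expr(rng, depth)
        sql_value = conn.execute(f"SELECT {expr}").fetchone()[0]
        py_value = eval(expr, {"__builtins__": {}})  # second opinion on the same text
        if sql_value != py_value:
            suspects.append((expr, sql_value, py_value))
    conn.close()
    return suspects
```

A disagreement is only a suspect, as on the slides: someone still has to examine it to decide which side is wrong and whether it duplicates a known bug.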

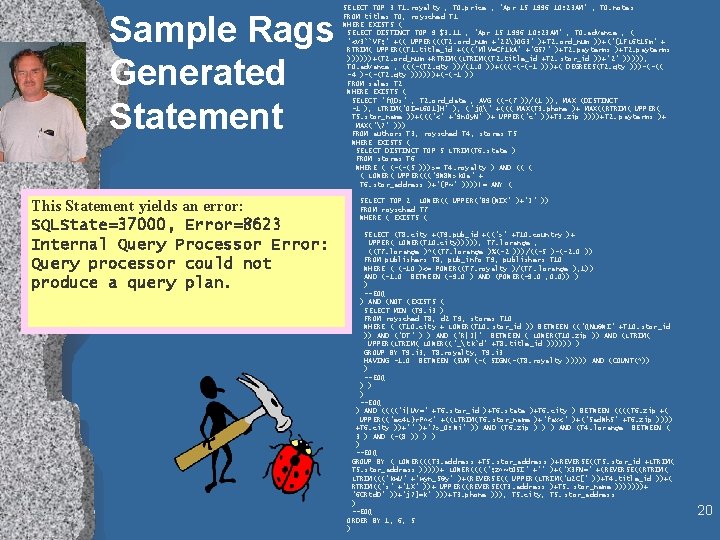

Sample Rags Generated Statement This Statement yields an error: SQLState=37000, Error=8623 Internal Query Processor Error: Query processor could not produce a query plan. SELECT TOP 3 T 1. royalty , T 0. price , "Apr 15 1996 10: 23 AM" , T 0. notes FROM titles T 0, roysched T 1 WHERE EXISTS ( SELECT DISTINCT TOP 9 $3. 11 , "Apr 15 1996 10: 23 AM" , T 0. advance , ( "<v 3``VF; " +(( UPPER(((T 2. ord_num +"22}0 G 3" )+T 2. ord_num ))+("{1 FL 6 t 15 m" + RTRIM( UPPER((T 1. title_id +(((" Ml. V=Cf 1 k. A" +"GS? " )+T 2. payterms ))))))+(T 2. ord_num +RTRIM((LTRIM((T 2. title_id +T 2. stor_id ))+"2" ))))), T 0. advance , (((-(T 2. qty ))/(1. 0 ))+(((-(-(-1 )))+( DEGREES(T 2. qty )))-(-(( -4 )-(-(T 2. qty ))))))+(-(-1 )) FROM sales T 2 WHERE EXISTS ( SELECT "f. QDs" , T 2. ord_date , AVG ((-(7 ))/(1 )), MAX (DISTINCT -1 ), LTRIM("0 I=L 601]H" ), (" j. Q" +((( MAX(T 3. phone )+ MAX((RTRIM( UPPER( T 5. stor_name ))+((("<" +"9 n 0 y. N" )+ UPPER("c" ))+T 3. zip ))))+T 2. payterms )+ MAX("? " ))) FROM authors T 3, roysched T 4, stores T 5 WHERE EXISTS ( SELECT DISTINCT TOP 5 LTRIM(T 6. state ) FROM stores T 6 WHERE ( (-(-(5 )))>= T 4. royalty ) AND (( ( ( LOWER( UPPER((("9 W 8 W> k. Oa" + T 6. stor_address )+"{P~" ))))!= ANY ( SELECT TOP 2 LOWER(( UPPER("B 9{WIX" )+"J" )) FROM roysched T 7 WHERE ( EXISTS ( SELECT (T 8. city +(T 9. pub_id +((">" +T 10. country )+ UPPER( LOWER(T 10. city))))), T 7. lorange , ((T 7. lorange )*((T 7. lorange )%(-2 )))/((-5 )-(-2. 0 )) FROM publishers T 8, pub_info T 9, publishers T 10 WHERE ( (-10 )<= POWER((T 7. royalty )/(T 7. lorange ), 1)) AND (-1. 0 BETWEEN (-9. 0 ) AND (POWER(-9. 0 , 0. 0)) ) ) --EOQ ) AND (NOT (EXISTS ( SELECT MIN (T 9. i 3 ) FROM roysched T 8, d 2 T 9, stores T 10 WHERE ( (T 10. city + LOWER(T 10. stor_id )) BETWEEN (("QNu@WI" +T 10. stor_id )) AND ("DT" ) ) AND ("R|J|" BETWEEN ( LOWER(T 10. zip )) AND (LTRIM( UPPER(LTRIM( LOWER(("_ tk`d" +T 8. title_id )))))) ) GROUP BY T 9. i 3, T 8. royalty, T 9. i 3 HAVING -1. 
0 BETWEEN (SUM (-( SIGN(-(T 8. royalty ))))) AND (COUNT(*)) ) --EOQ ) AND (((("i|Uv=" +T 6. stor_id )+T 6. state )+T 6. city ) BETWEEN ((((T 6. zip +( UPPER(("ec 4 L}r. P^<" +((LTRIM(T 6. stor_name )+"fax<" )+("5 ad. Wh. S" +T 6. zip )))) +T 6. city ))+"" )+"? >_0: Wi" )) AND (T 6. zip ) ) ) AND (T 4. lorange BETWEEN ( 3 ) AND (-(8 )) ) --EOQ GROUP BY ( LOWER(((T 3. address +T 5. stor_address )+REVERSE((T 5. stor_id +LTRIM( T 5. stor_address )))))+ LOWER(((("; z^~t. O 5 I" +"" )+("X 3 FN=" +(REVERSE((RTRIM( LTRIM((("kw. U" +"wyn_S@y" )+(REVERSE(( UPPER(LTRIM("u 2 C[" ))+T 4. title_id ))+( RTRIM(("s" +"1 X" ))+ UPPER((REVERSE(T 3. address )+T 5. stor_name )))))))+ "6 CRtd. D" ))+"j? ]=k" )))+T 3. phone ))), T 5. city, T 5. stor_address ) --EOQ ORDER BY 1, 6, 5 ) 20

Automation
• Simpler Statement with same error
SELECT roysched.royalty FROM titles, roysched WHERE EXISTS ( SELECT DISTINCT TOP 1 titles.advance FROM sales ORDER BY 1)
• Control statement attributes
» complexity, kind, depth, ...
• Multi-user stress tests
» tests concurrency, allocation, recovery
21

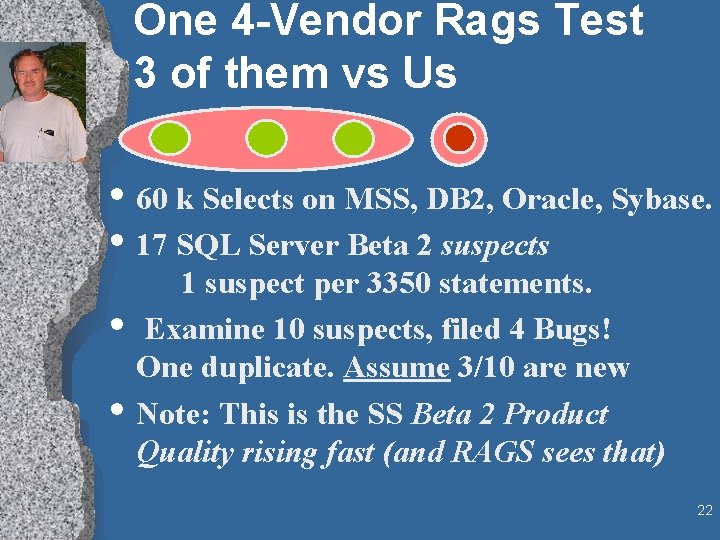

One 4-Vendor Rags Test: 3 of them vs Us
• 60k Selects on MSS, DB2, Oracle, Sybase.
• 17 SQL Server Beta 2 suspects
» 1 suspect per 3350 statements.
• Examine 10 suspects, filed 4 Bugs! One duplicate. Assume 3/10 are new
• Note: This is the SS Beta 2 Product
• Quality rising fast (and RAGS sees that)
22

RAGS Next Steps
• Done:
» Patents, Papers, Talks
» tech transfer to development
  • SQL 7 (over 400 bugs), FoxPro, OLE DB.
• Next steps:
» Make even more automatic
» Extend to other parts of SQL and Tsql
» “Crawl” the config space (look for new holes)
» Apply ideas to other domains (ole db).
23

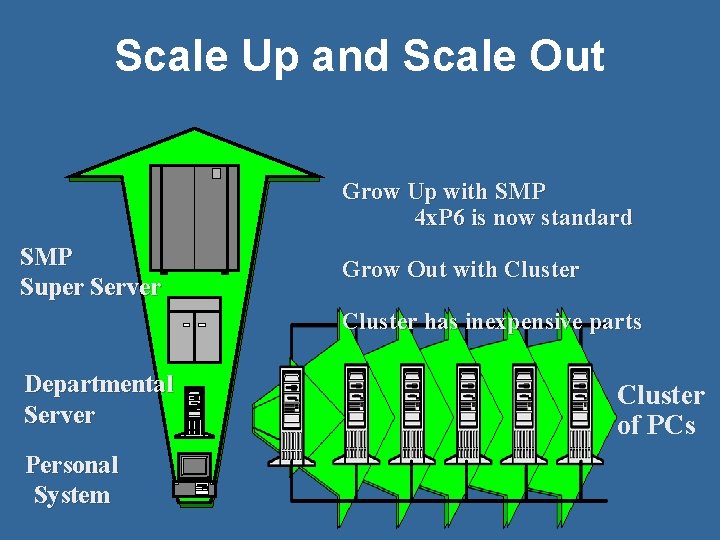

Scale Up and Scale Out
• Grow Up with SMP: 4xP6 is now standard
• Grow Out with Cluster: Cluster has inexpensive parts
[Figure labels: Personal System, Departmental Server, SMP Super Server, Cluster of PCs]

Billions Of Clients
• Every device will be “intelligent”
• Doors, rooms, cars…
• Computing will be ubiquitous

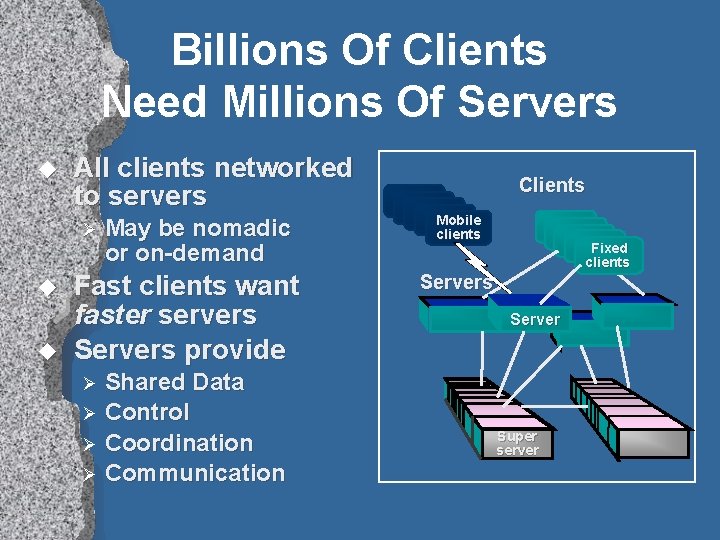

Billions Of Clients Need Millions Of Servers
• All clients networked to servers
» May be nomadic or on-demand
• Fast clients want faster servers
• Servers provide
» Shared Data
» Control
» Coordination
» Communication
[Figure labels: Clients (Mobile clients, Fixed clients), Server, Super server]

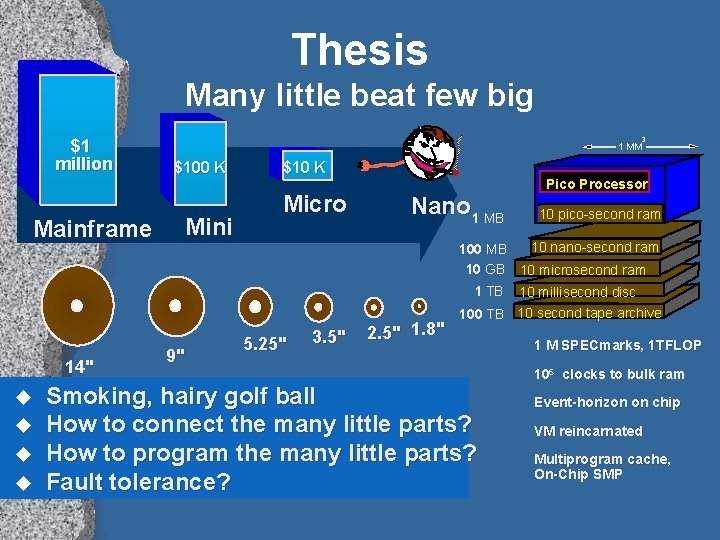

Thesis: Many little beat few big
[Figure: machine classes $1 million Mainframe, $100 K Mini, $10 K Micro, Nano, Pico Processor (1 MM³); disk form factors 14", 9", 5.25", 3.5", 2.5", 1.8"; storage levels 1 MB 10 pico-second ram, 100 MB 10 nano-second ram, 10 GB 10 microsecond ram, 1 TB 10 millisecond disc, 100 TB 10 second tape archive]
• Smoking, hairy golf ball
• How to connect the many little parts?
• How to program the many little parts?
• Fault tolerance?
[Figure notes: 1 M SPECmarks, 1 TFLOP; 10^6 clocks to bulk ram; Event-horizon on chip; VM reincarnated; Multiprogram cache, On-Chip SMP]

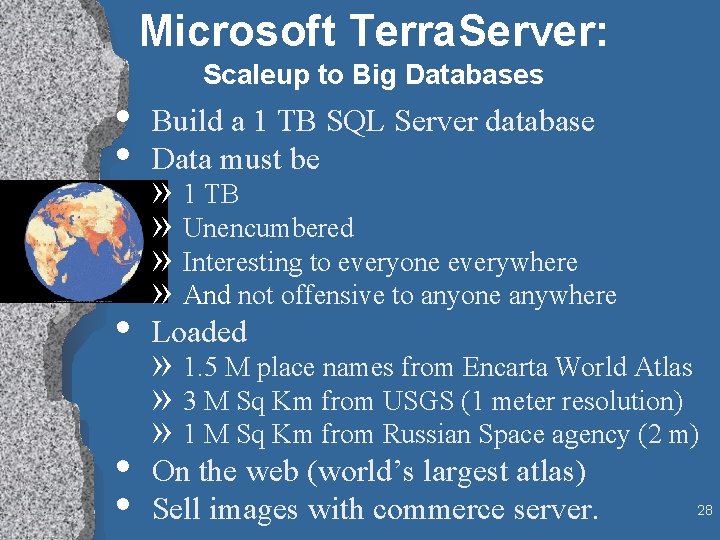

Microsoft TerraServer: Scaleup to Big Databases
• Build a 1 TB SQL Server database
• Data must be
» 1 TB
» Unencumbered
» Interesting to everyone everywhere
» And not offensive to anyone anywhere
• Loaded
» 1.5 M place names from Encarta World Atlas
» 3 M Sq Km from USGS (1 meter resolution)
» 1 M Sq Km from Russian Space agency (2 m)
• On the web (world’s largest atlas)
• Sell images with commerce server.
28

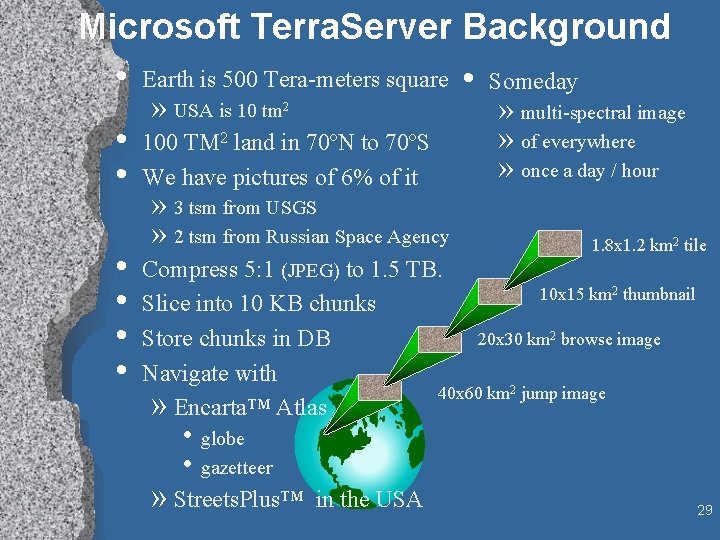

Microsoft TerraServer Background
• Earth is 500 Tera-meters square
» USA is 10 tm²
• 100 TM² land in 70ºN to 70ºS
• We have pictures of 6% of it
» 3 tsm from USGS
» 2 tsm from Russian Space Agency
• Compress 5:1 (JPEG) to 1.5 TB.
• Slice into 10 KB chunks
• Store chunks in DB
• Navigate with
» Encarta™ Atlas
  • globe
  • gazetteer
» StreetsPlus™ in the USA
• Someday
» multi-spectral image
» of everywhere
» once a day / hour
[Figure labels: 1.8 x 1.2 km² tile; 10 x 15 km² thumbnail; 20 x 30 km² browse image; 40 x 60 km² jump image]
29

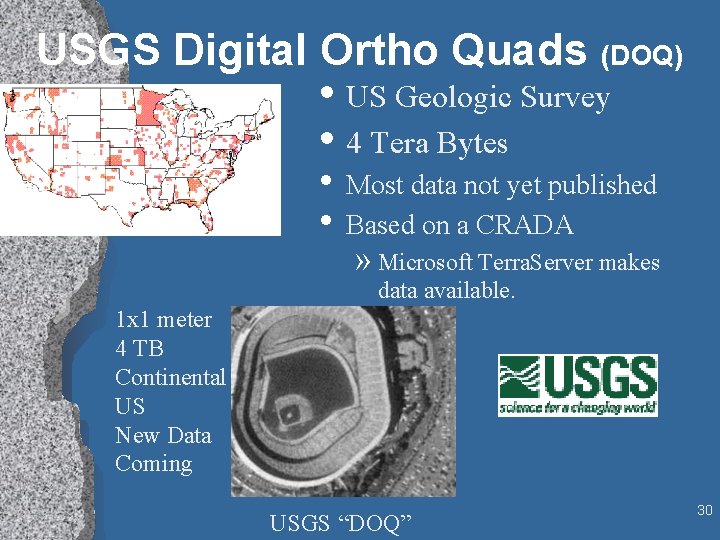

USGS Digital Ortho Quads (DOQ)
• US Geologic Survey
• 4 Tera Bytes
• Most data not yet published
• Based on a CRADA
» Microsoft TerraServer makes data available.
[Figure labels: USGS “DOQ”; 1 x 1 meter; 4 TB; Continental US; New Data Coming]
30

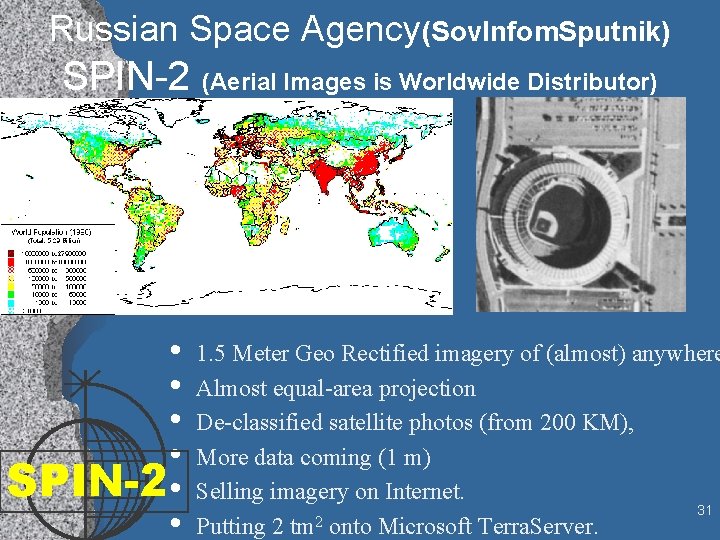

Russian Space Agency (SovInformSputnik) SPIN-2 (Aerial Images is Worldwide Distributor)
• SPIN-2
» 1.5 Meter Geo Rectified imagery of (almost) anywhere
» Almost equal-area projection
» De-classified satellite photos (from 200 KM),
» More data coming (1 m)
» Selling imagery on Internet.
• Putting 2 tm² onto Microsoft TerraServer.
31

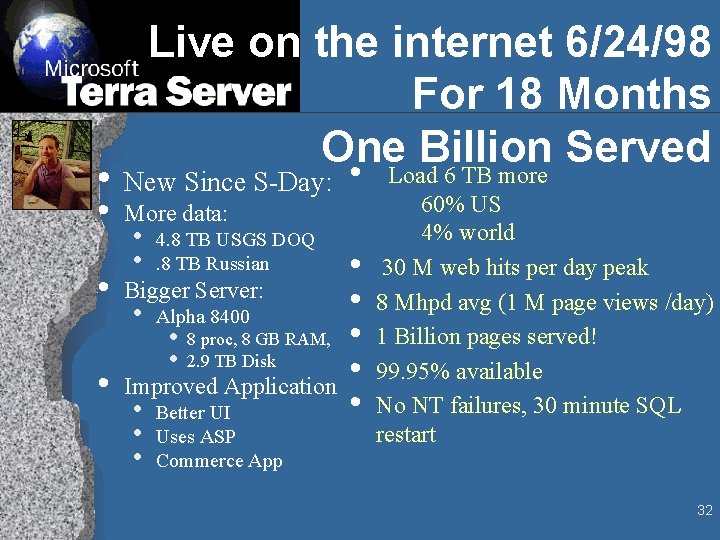

Live on the internet 6/24/98: For 18 Months, One Billion Served
• New Since S-Day:
» More data: 4.8 TB USGS DOQ, .8 TB Russian
» Bigger Server: Alpha 8400, 8 proc, 8 GB RAM, 2.9 TB Disk
» Improved Application: Better UI, Uses ASP, Commerce App
• Load 6 TB more
• 60% US, 4% world
• 30 M web hits per day peak, 8 Mhpd avg (1 M page views/day)
• 1 Billion pages served!
• 99.95% available
• No NT failures, 30 minute SQL restart
32

Demo
http://www.TerraServer.Microsoft.com/
[Figure labels: Microsoft BackOffice, SPIN-2]
33

Demo
• navigate by coverage map to White House
• Download image
• buy imagery from USGS
• navigate by name to Venice
• buy SPIN 2 image & Kodak photo
• Pop out to Expedia street map of Venice
• Mention that DB will double in next 18 months (2x USGS, 2x SPIN 2)
34

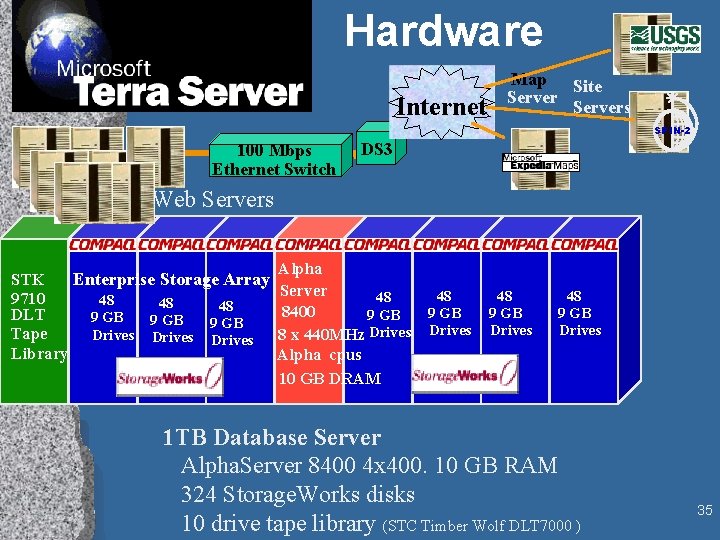

Hardware
[Figure labels: Internet; Map Site Servers; SPIN-2; DS3; 100 Mbps Ethernet Switch; Web Servers; Enterprise Storage Array (groups of 48 9 GB Drives); STK 9710 DLT Tape Library; AlphaServer 8400 (8 x 440 MHz Alpha cpus, 10 GB DRAM)]
1 TB Database Server: AlphaServer 8400 4 x 400, 10 GB RAM, 324 StorageWorks disks, 10 drive tape library (STC Timber Wolf DLT7000)
35

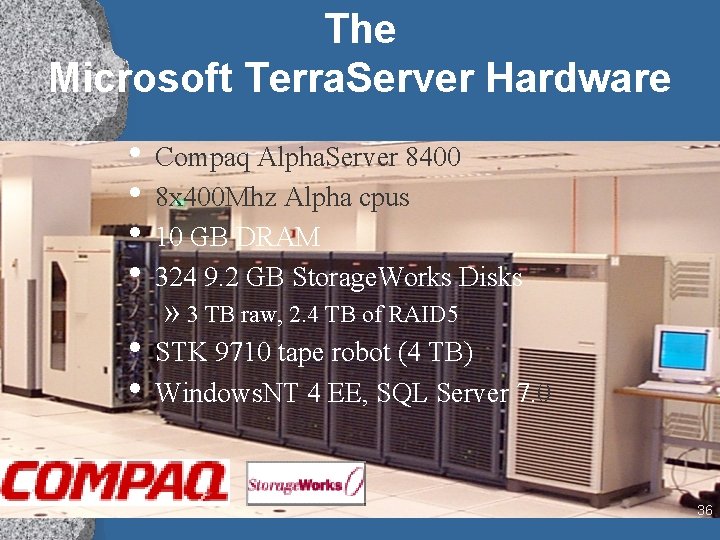

The Microsoft TerraServer Hardware
• Compaq AlphaServer 8400
• 8 x 400 Mhz Alpha cpus
• 10 GB DRAM
• 324 9.2 GB StorageWorks Disks
» 3 TB raw, 2.4 TB of RAID5
• STK 9710 tape robot (4 TB)
• WindowsNT 4 EE, SQL Server 7.0
36

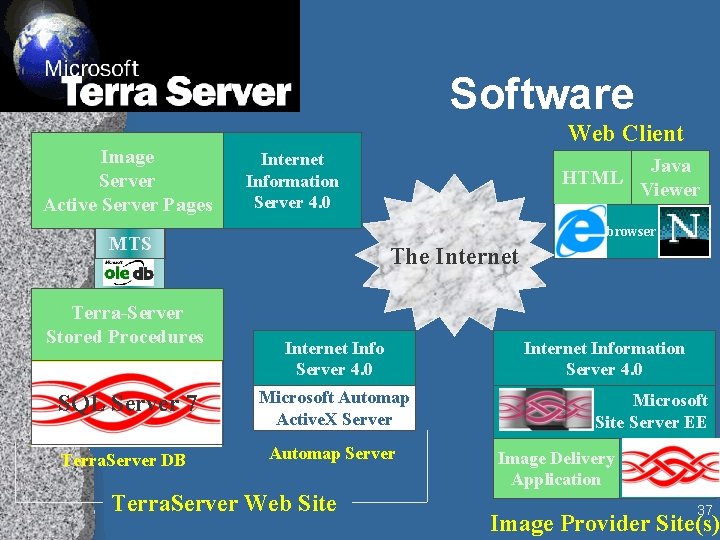

Software
[Figure labels: Web Client (Java Viewer, browser, HTML); The Internet; TerraServer Web Site; Internet Information Server 4.0; Active Server Pages; MTS; Image Server; Terra-Server Stored Procedures; SQL Server 7; TerraServer DB; Automap Server; Microsoft Automap ActiveX Server; Image Provider Site(s); Microsoft Site Server EE; Image Delivery Application]
37

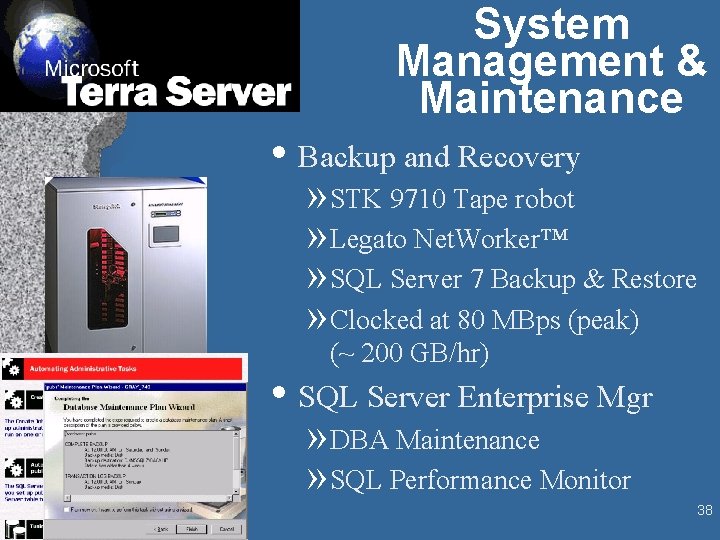

System Management & Maintenance
• Backup and Recovery
» STK 9710 Tape robot
» Legato NetWorker™
» SQL Server 7 Backup & Restore
» Clocked at 80 MBps (peak) (~200 GB/hr)
• SQL Server Enterprise Mgr
» DBA Maintenance
» SQL Performance Monitor
38

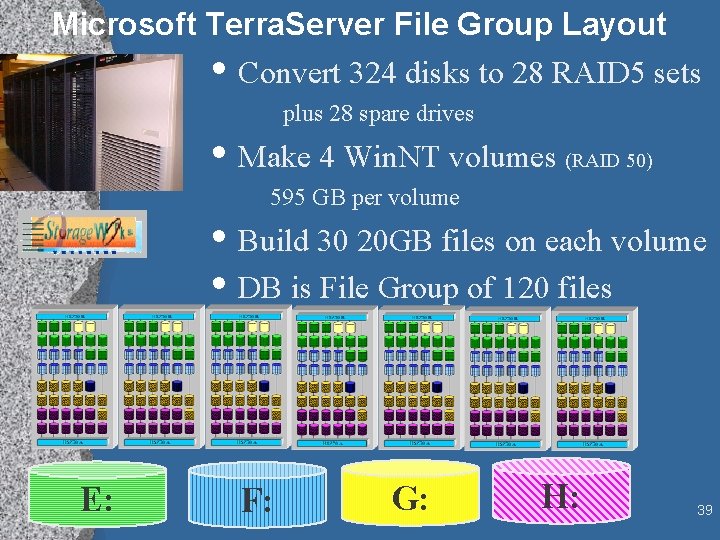

Microsoft TerraServer File Group Layout
• Convert 324 disks to 28 RAID5 sets plus 28 spare drives
• Make 4 WinNT volumes (RAID 50), 595 GB per volume
• Build 30 20GB files on each volume
• DB is File Group of 120 files
[Figure labels: volumes E:, F:, G:, H:]
39

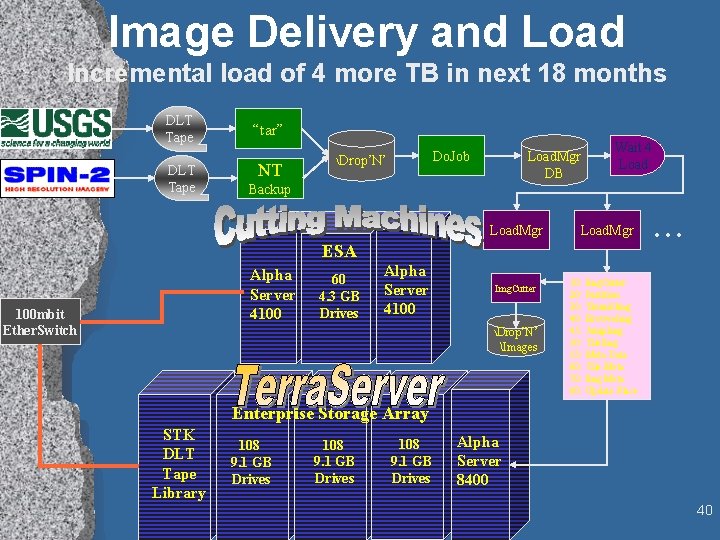

Image Delivery and Load
Incremental load of 4 more TB in next 18 months
[Figure labels: DLT Tape “tar”; NT DoJob; Drop’N’ Images; ImgCutter; LoadMgr; Wait 4 Load; DB; Backup; ESA; AlphaServer 4100 (x2); 60 4.3 GB Drives; 100 mbit EtherSwitch; Enterprise Storage Array; 108 9.1 GB Drives; STK DLT Tape Library; AlphaServer 8400]
Steps: 10: ImgCutter, 20: Partition, 30: ThumbImg, 40: BrowseImg, 45: JumpImg, 50: TileImg, 55: Meta Data, 60: Tile Meta, 70: Img Meta, 80: Update Place
40

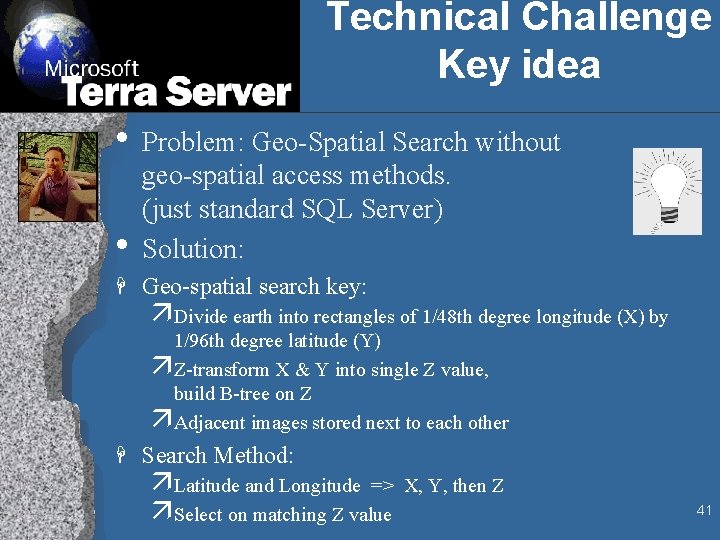

Technical Challenge: Key idea
• Problem: Geo-Spatial Search without geo-spatial access methods. (just standard SQL Server)
• Solution:
» Geo-spatial search key:
  • Divide earth into rectangles of 1/48th degree longitude (X) by 1/96th degree latitude (Y)
  • Z-transform X & Y into single Z value, build B-tree on Z
  • Adjacent images stored next to each other
» Search Method:
  • Latitude and Longitude => X, Y, then Z
  • Select on matching Z value
41
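A minimal sketch of the search key, assuming the "Z-transform" is the usual Z-order (bit-interleaving) mapping. The slide gives only the cell sizes, so the origin at (-180°, -90°), the 16-bit width, and the function names are assumptions made here for illustration.

```python
def cell_indices(lon, lat):
    """Map longitude/latitude in degrees to integer cell indices (X, Y)."""
    x = int((lon + 180.0) * 48)  # cells of 1/48 degree longitude
    y = int((lat + 90.0) * 96)   # cells of 1/96 degree latitude
    return x, y


def z_value(x, y, bits=16):
    """Interleave the bits of x (even positions) and y (odd positions) into one key."""
    z = 0
    for i in range(bits):
        z |= ((x >> i) & 1) << (2 * i)
        z |= ((y >> i) & 1) << (2 * i + 1)
    return z


def search_key(lon, lat):
    """Latitude and longitude => X, Y, then Z: the value to select on in the B-tree."""
    return z_value(*cell_indices(lon, lat))
```

Because neighbouring cells share their high-order bits, their Z values tend to be close, which is what lets an ordinary B-tree on Z keep adjacent images near each other.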

Some Tera-Byte Databases
• The Web: 1 TB of HTML
• TerraServer 1 TB of images
• Several other 1 TB (file) servers
• Hotmail: 7 TB of email
• Sloan Digital Sky Survey: 40 TB raw, 2 TB cooked
• EOS/DIS (picture of planet each week)
» 15 PB by 2007
• Federal Clearing house: images of checks
» 15 PB by 2006 (7 year history)
• Nuclear Stockpile Stewardship Program
» 10 Exabytes (???!!)
[Scale: Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta]
42

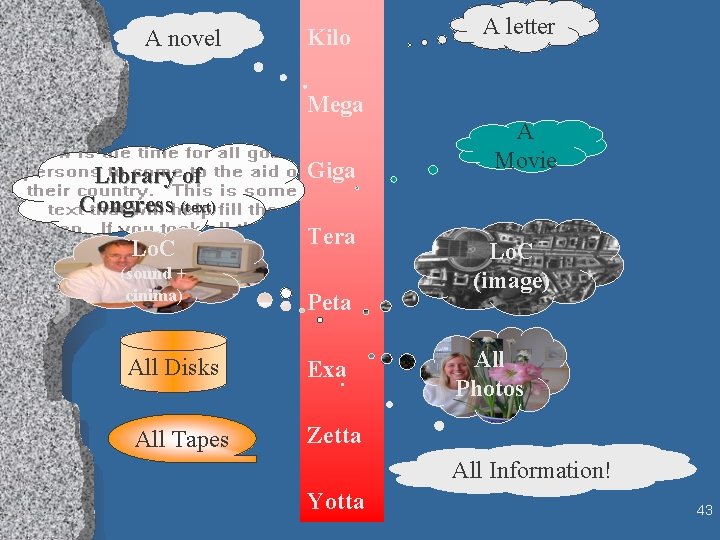

[Figure: scale from Kilo to Yotta (Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta) with examples: A letter; A novel; A Movie; Library of Congress (text); LoC (image); LoC (sound + cinema); All Photos; All Disks; All Tapes; All Information!]
43

Michael Lesk’s Points
www.lesk.com/mlesk/ksg97/ksg.html
• Soon everything can be recorded and kept
• Most data will never be seen by humans
• Precious Resource: Human attention
» Auto-Summarization and Auto-Search will be key enabling technologies.
44

Scalability
• Scale up: to large SMP nodes
• Scale out: to clusters of SMP nodes
[Figure labels: 1 billion transactions; 100 million web hits; 4 terabytes of data; 1.8 million mail messages]
45

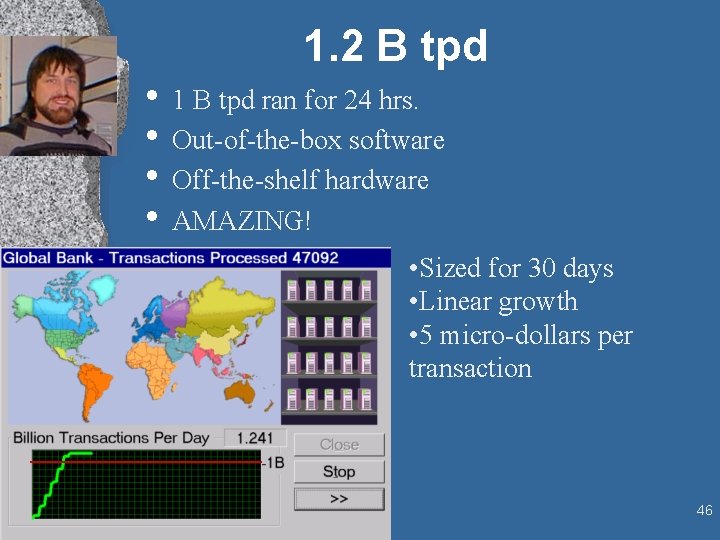

1.2 B tpd
• 1 B tpd ran for 24 hrs.
• Out-of-the-box software
• Off-the-shelf hardware
• AMAZING!
• Sized for 30 days
• Linear growth
• 5 micro-dollars per transaction
46

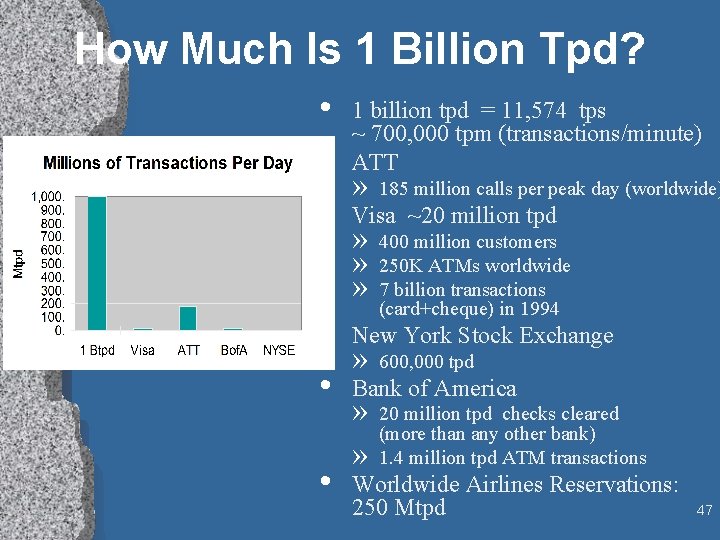

How Much Is 1 Billion Tpd?
• 1 billion tpd = 11,574 tps ~ 700,000 tpm (transactions/minute)
• ATT
» 185 million calls per peak day (worldwide)
• Visa ~20 million tpd
» 400 million customers
» 250K ATMs worldwide
» 7 billion transactions (card+cheque) in 1994
• New York Stock Exchange
» 600,000 tpd
• Bank of America
» 20 million tpd checks cleared (more than any other bank)
» 1.4 million tpd ATM transactions
• Worldwide Airlines Reservations: 250 Mtpd
47
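The rate conversion in the first bullet is plain arithmetic and can be checked directly; the function names below are illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400
MINUTES_PER_DAY = 24 * 60       # 1,440


def per_second(tpd):
    """Average transactions per second for a given transactions-per-day rate."""
    return tpd / SECONDS_PER_DAY


def per_minute(tpd):
    """Average transactions per minute for a given transactions-per-day rate."""
    return tpd / MINUTES_PER_DAY
```

For 1 billion tpd this gives about 11,574 tps and about 694,000 tpm, which the slide rounds to 700,000.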

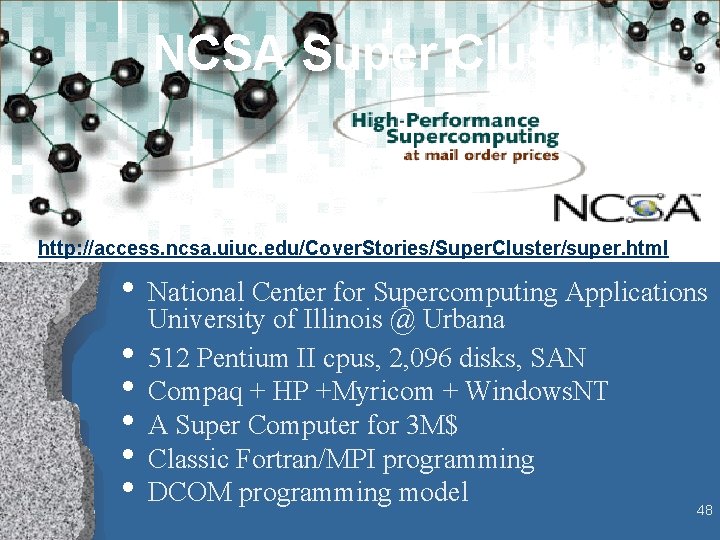

NCSA Super Cluster
http://access.ncsa.uiuc.edu/CoverStories/SuperCluster/super.html
• National Center for Supercomputing Applications, University of Illinois @ Urbana
• 512 Pentium II cpus, 2,096 disks, SAN
• Compaq + HP + Myricom + WindowsNT
• A Super Computer for 3 M$
• Classic Fortran/MPI programming
• DCOM programming model
48

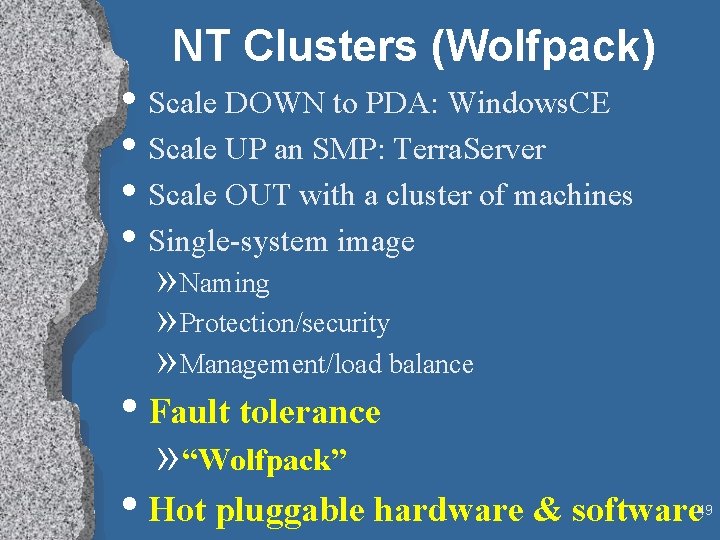

NT Clusters (Wolfpack)
• Scale DOWN to PDA: WindowsCE
• Scale UP an SMP: TerraServer
• Scale OUT with a cluster of machines
• Single-system image
» Naming
» Protection/security
» Management/load balance
• Fault tolerance
» “Wolfpack”
• Hot pluggable hardware & software
49

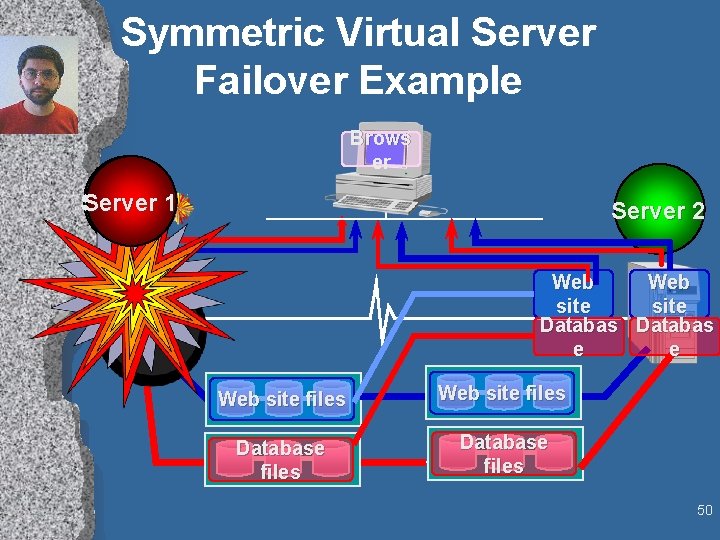

Symmetric Virtual Server Failover Example
[Figure labels: Browser; Server 1; Server 2; Web site; Database; Web site files; Database files]
50

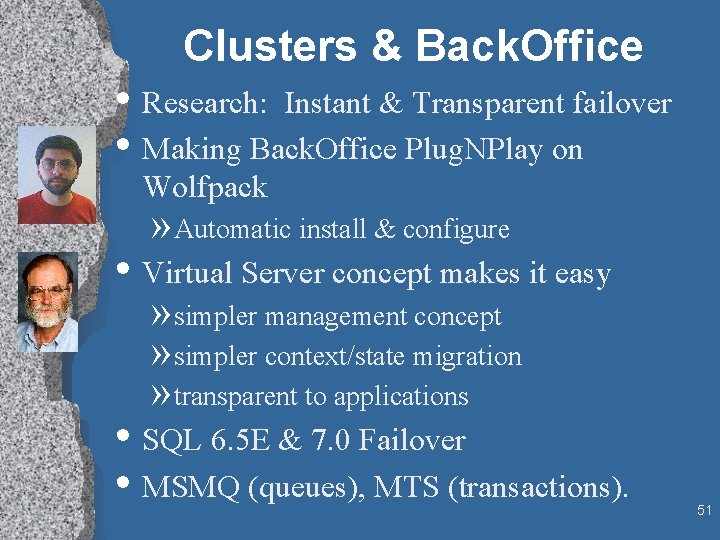

Clusters & BackOffice
• Research: Instant & Transparent failover
• Making BackOffice PlugNPlay on Wolfpack
» Automatic install & configure
• Virtual Server concept makes it easy
» simpler management concept
» simpler context/state migration
» transparent to applications
• SQL 6.5E & 7.0 Failover
• MSMQ (queues), MTS (transactions).
51

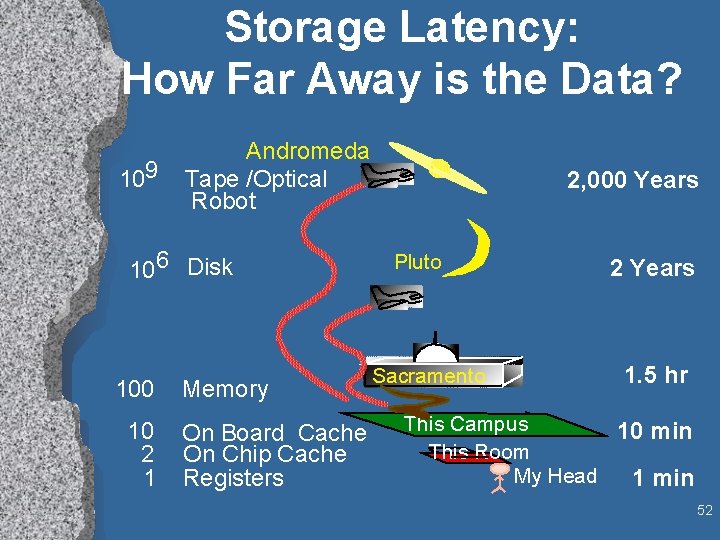

Storage Latency: How Far Away is the Data?
• Registers: 1 (My Head, 1 min)
• On Chip Cache: 2 (This Room)
• On Board Cache: 10 (This Campus, 10 min)
• Memory: 100 (Sacramento, 1.5 hr)
• Disk: 10^6 (Pluto, 2 Years)
• Tape/Optical Robot: 10^9 (Andromeda, 2,000 Years)
52
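The analogy can be reproduced with a small conversion, assuming the numbers on the slide are relative access times and that one unit (a register access) is scaled to one minute of human time. The function name and the 365-day year are choices made for this sketch.

```python
MINUTES_PER_HOUR = 60
MINUTES_PER_YEAR = 60 * 24 * 365  # 525,600


def human_scale(ticks):
    """Convert a relative access time into (value, unit), taking 1 tick = 1 minute."""
    if ticks < MINUTES_PER_HOUR:
        return ticks, "min"
    if ticks < MINUTES_PER_YEAR:
        return ticks / MINUTES_PER_HOUR, "hr"
    return ticks / MINUTES_PER_YEAR, "years"
```

Under that scaling 100 ticks is about 1.7 hours, 10^6 ticks is about 1.9 years, and 10^9 ticks is about 1,900 years, in line with the slide's rounded 1.5 hr, 2 years and 2,000 years.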

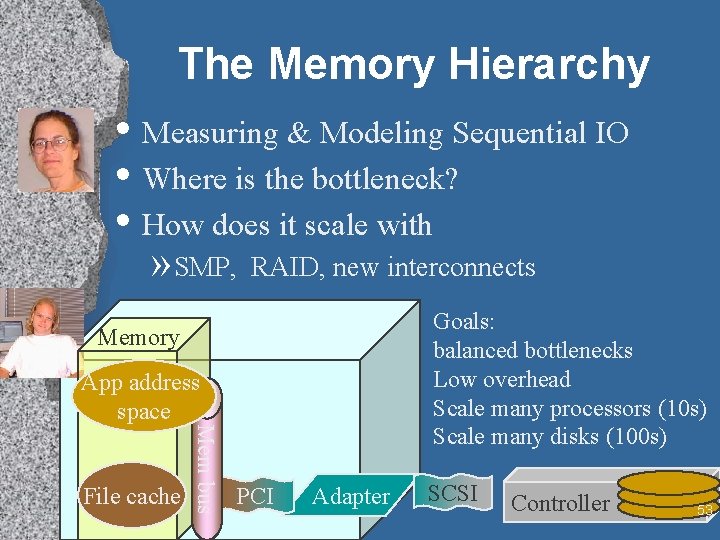

The Memory Hierarchy
• Measuring & Modeling Sequential IO
• Where is the bottleneck?
• How does it scale with
» SMP, RAID, new interconnects
Goals:
• balanced bottlenecks
• Low overhead
• Scale many processors (10s)
• Scale many disks (100s)
[Figure labels: App address space, File cache, Memory, Mem bus, PCI, Adapter, SCSI, Controller]
53

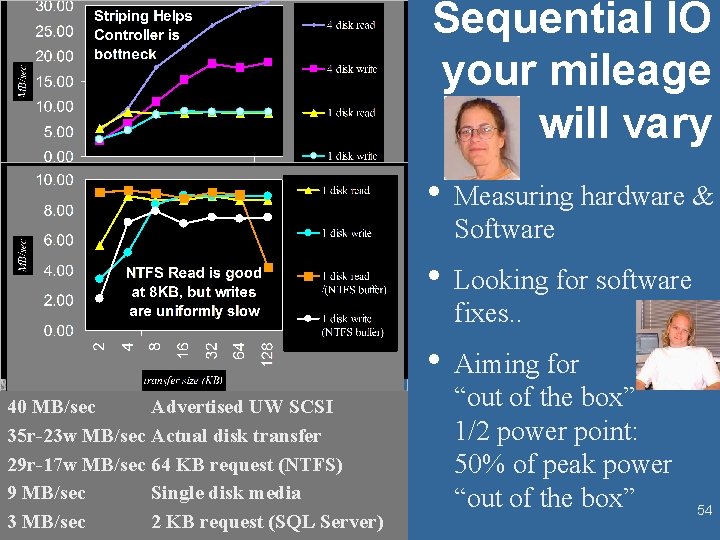

Sequential IO: your mileage will vary
• Measuring hardware & Software
• Looking for software fixes.
• Aiming for “out of the box” 1/2 power point: 50% of peak power “out of the box”
[Figure: 40 MB/sec Advertised UW SCSI; 35r-23w MB/sec Actual disk transfer; 29r-17w MB/sec 64 KB request (NTFS); 9 MB/sec Single disk media; 3 MB/sec 2 KB request (SQL Server)]
54

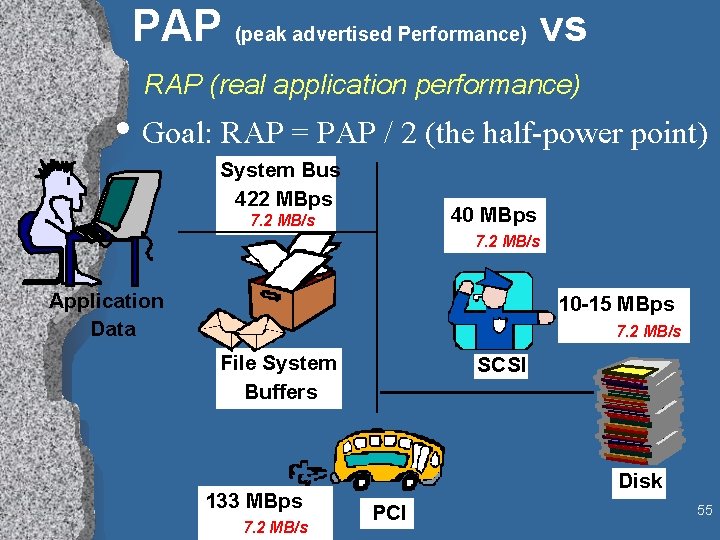

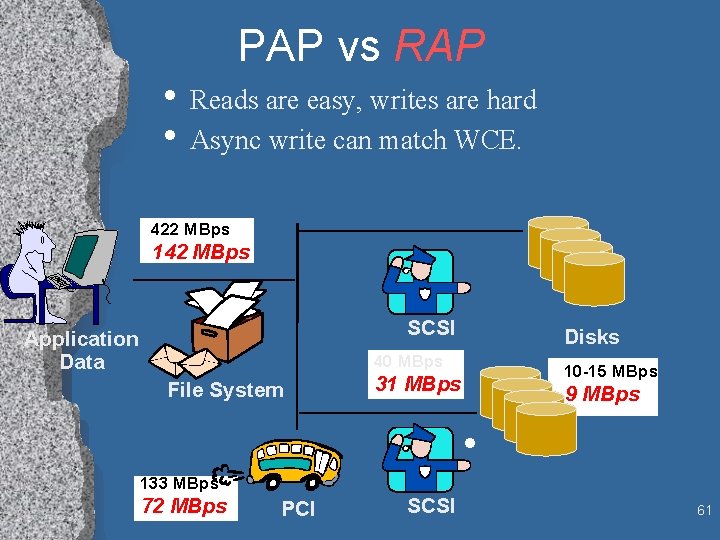

PAP (peak advertised Performance) vs RAP (real application performance)
• Goal: RAP = PAP / 2 (the half-power point)
[Figure: System Bus 422 MBps; PCI 133 MBps; SCSI 40 MBps; Disk 10-15 MBps; 7.2 MB/s shown at each stage through File System Buffers to Application Data]
55

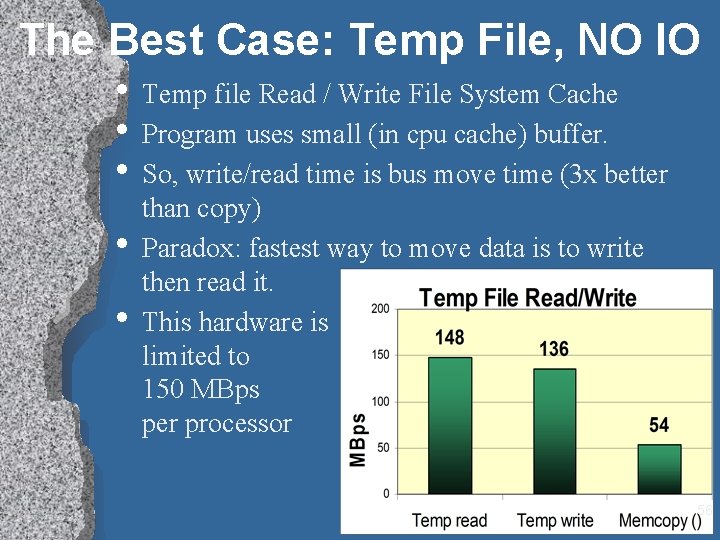

The Best Case: Temp File, NO IO
• Temp file Read / Write File System Cache
• Program uses small (in cpu cache) buffer.
• So, write/read time is bus move time (3x better than copy)
• Paradox: fastest way to move data is to write then read it.
• This hardware is limited to 150 MBps per processor
56

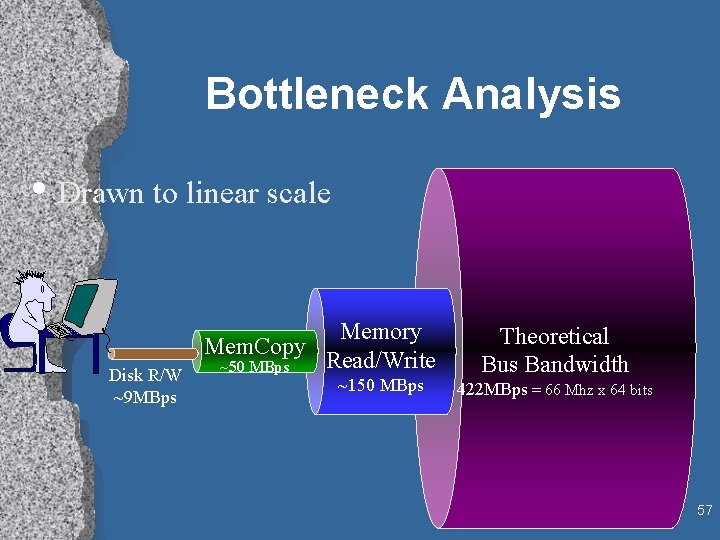

Bottleneck Analysis
• Drawn to linear scale
[Figure: Disk R/W ~9 MBps; MemCopy ~50 MBps; Memory Read/Write ~150 MBps; Theoretical Bus Bandwidth 422 MBps = 66 Mhz x 64 bits]
57

3 Stripes and You’re Out!
• 3 disks can saturate adapter
• Similar story with UltraWide
• CPU time goes down with request size
• Ftdisk (striping) is cheap
58

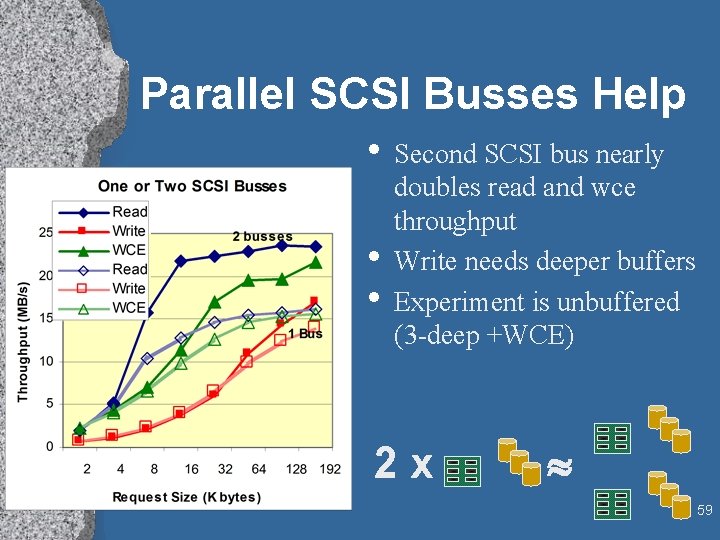

Parallel SCSI Busses Help
• Second SCSI bus nearly doubles read and wce throughput
• Write needs deeper buffers
• Experiment is unbuffered (3-deep + WCE)
[Figure annotation: 2x]
59

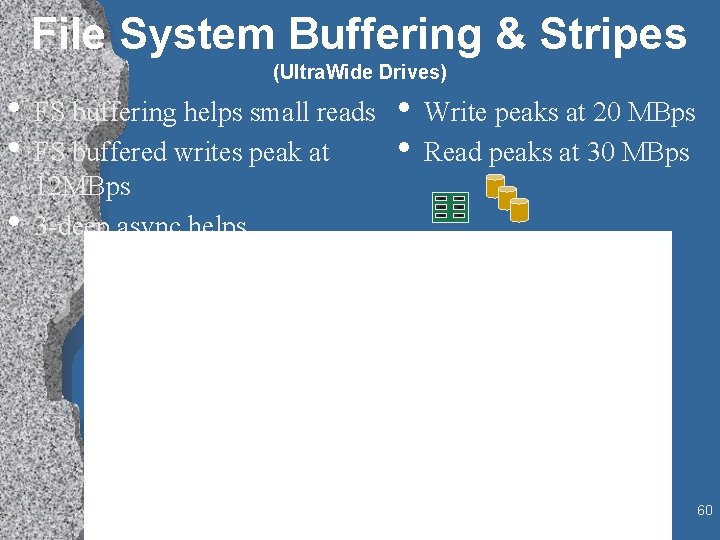

File System Buffering & Stripes (UltraWide Drives)
• FS buffering helps small reads
• Write peaks at 20 MBps
• Read peaks at 30 MBps
• FS buffered writes peak at 12 MBps
• 3-deep async helps
60

PAP vs RAP
• Reads are easy, writes are hard
• Async write can match WCE.
[Figure: 422 MBps vs 142 MBps; PCI 133 MBps vs 72 MBps; SCSI 40 MBps vs 31 MBps; Disks 10-15 MBps vs 9 MBps; File System; Application Data]
61

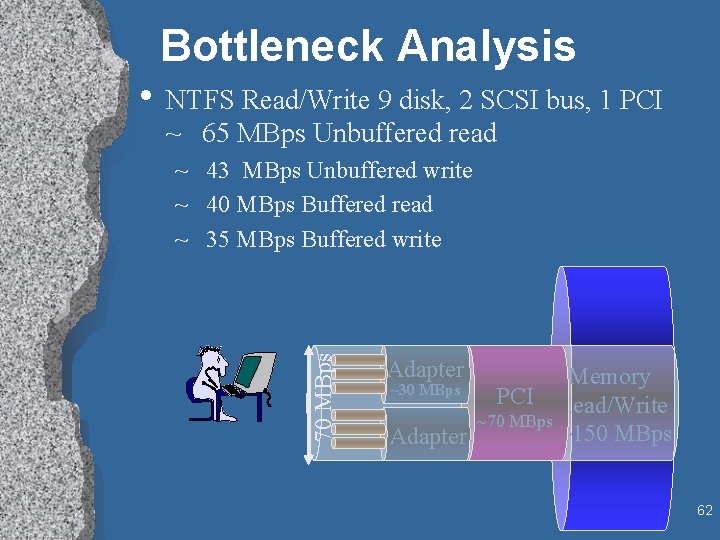

Bottleneck Analysis
• NTFS Read/Write 9 disk, 2 SCSI bus, 1 PCI
» ~65 MBps Unbuffered read
» ~43 MBps Unbuffered write
» ~40 MBps Buffered read
» ~35 MBps Buffered write
[Figure: Adapter ~30 MBps (two adapters); PCI ~70 MBps; Memory Read/Write ~150 MBps; 70 MBps]
62

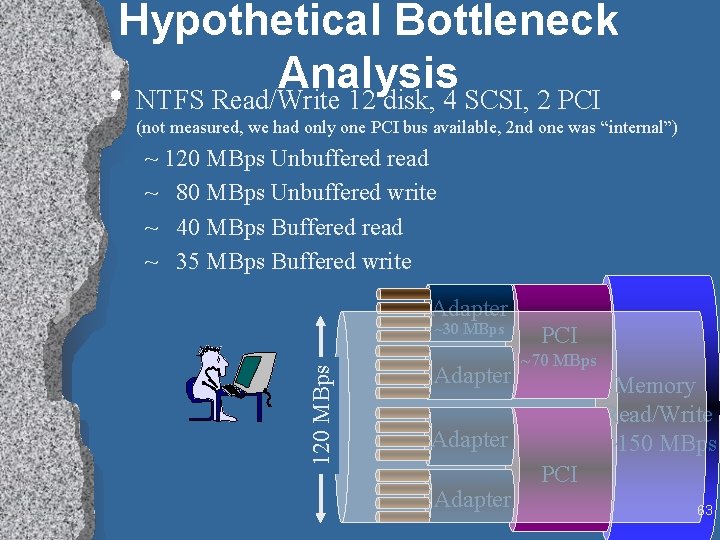

Hypothetical Bottleneck Analysis
• NTFS Read/Write 12 disk, 4 SCSI, 2 PCI (not measured, we had only one PCI bus available, 2nd one was “internal”)
» ~120 MBps Unbuffered read
» ~80 MBps Unbuffered write
» ~40 MBps Buffered read
» ~35 MBps Buffered write
[Figure: Adapters ~30 MBps each, 120 MBps total; PCI ~70 MBps; Memory Read/Write ~150 MBps]
63

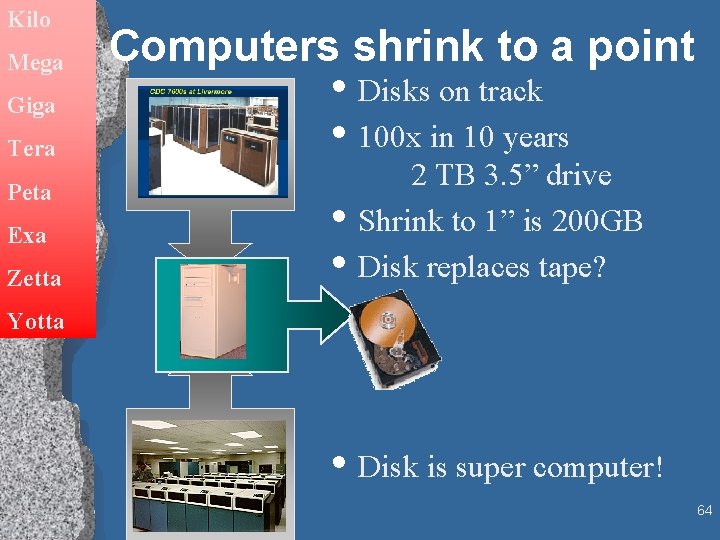

Computers shrink to a point
• Disks on track
• 100x in 10 years
» 2 TB 3.5” drive
• Shrink to 1” is 200 GB
• Disk replaces tape?
• Disk is super computer!
[Scale: Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta]
64

Data Gravity: Processing Moves to Transducers
• Move Processing to data sources
• Move to where the power (and sheet metal) is
• Processor in
» Modem
» Display
» Microphones (speech recognition) & cameras (vision)
» Storage: Data storage and analysis
65

It’s Already True of Printers: Peripheral = CyberBrick
• You buy a printer
• You get a
» several network interfaces
» A Postscript engine
  • cpu,
  • memory,
  • software,
  • a spooler (soon)
» and… a print engine.
66

Remember Your Roots 67

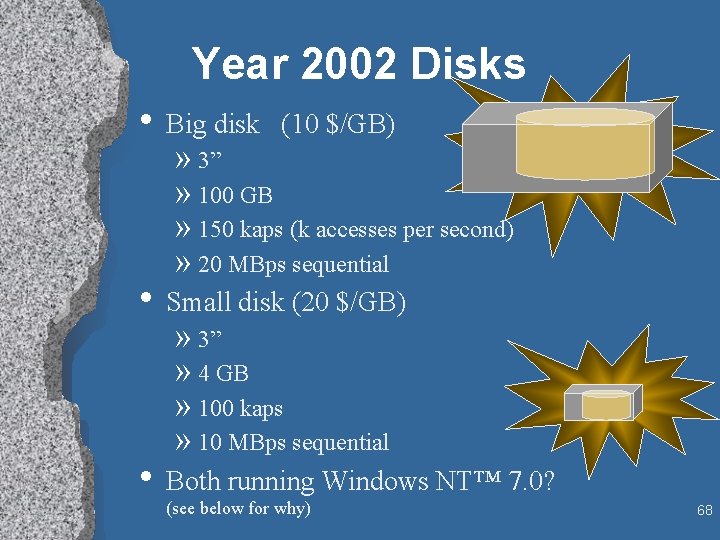

Year 2002 Disks
• Big disk (10 $/GB)
» 3”
» 100 GB
» 150 kaps (k accesses per second)
» 20 MBps sequential
• Small disk (20 $/GB)
» 3”
» 4 GB
» 100 kaps
» 10 MBps sequential
• Both running Windows NT™ 7.0? (see below for why)
68

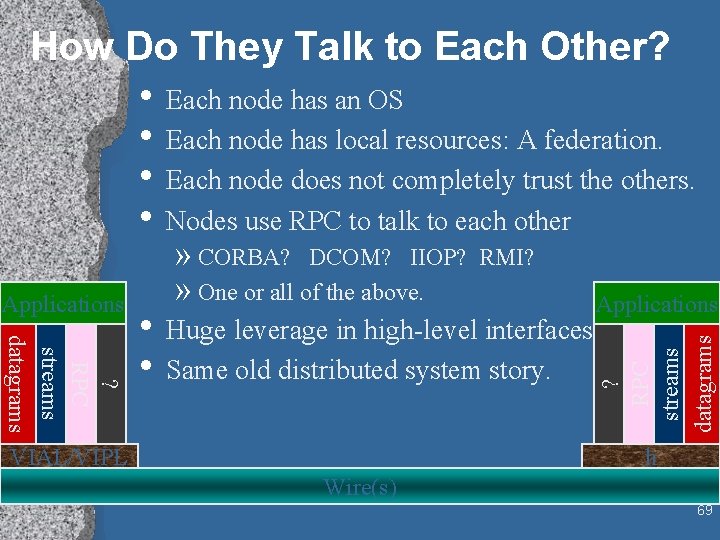

How Do They Talk to Each Other?
• Each node has an OS
• Each node has local resources: A federation.
• Each node does not completely trust the others.
• Nodes use RPC to talk to each other
» CORBA? DCOM? IIOP? RMI?
» One or all of the above.
• Huge leverage in high-level interfaces.
• Same old distributed system story.
[Figure: on each node, Applications over RPC / streams / datagrams (marked “?”) over VIAL/VIPL, joined by Wire(s)]
69

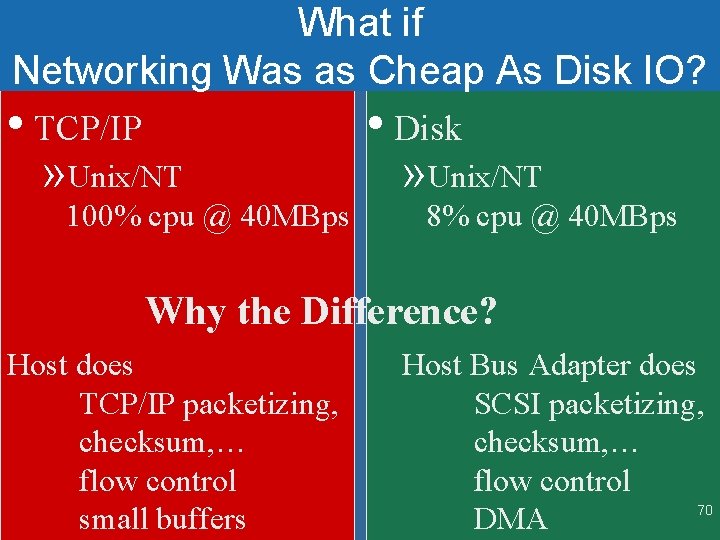

What if Networking Was as Cheap As Disk IO?
• TCP/IP
» Unix/NT 100% cpu @ 40 MBps
• Disk
» Unix/NT 8% cpu @ 40 MBps
Why the Difference?
• Host does TCP/IP packetizing, checksum, … flow control; small buffers
• Host Bus Adapter does SCSI packetizing, checksum, … flow control; DMA
70

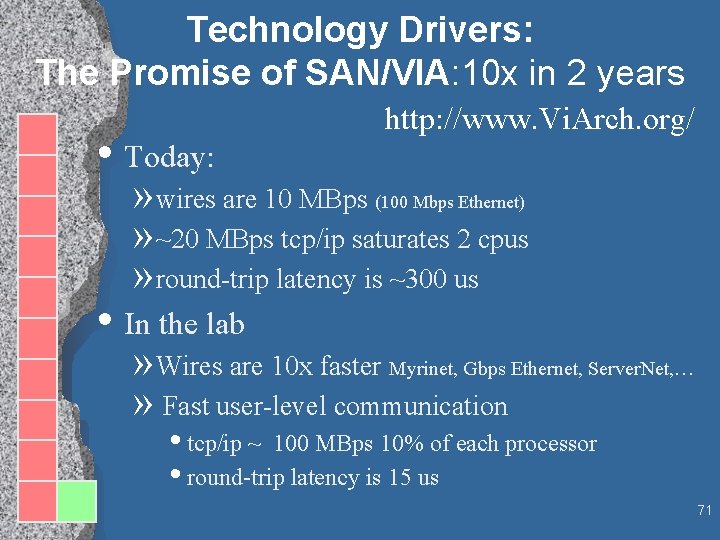

Technology Drivers: The Promise of SAN/VIA: 10x in 2 years
http://www.ViArch.org/
• Today:
» wires are 10 MBps (100 Mbps Ethernet)
» ~20 MBps tcp/ip saturates 2 cpus
» round-trip latency is ~300 us
• In the lab
» Wires are 10x faster: Myrinet, Gbps Ethernet, ServerNet, …
» Fast user-level communication
  • tcp/ip ~ 100 MBps 10% of each processor
  • round-trip latency is 15 us
71

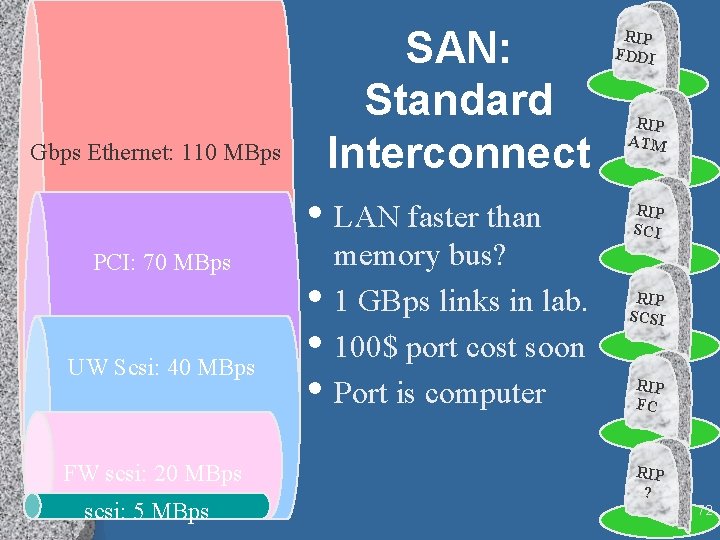

SAN: Standard Interconnect
• LAN faster than memory bus?
• 1 GBps links in lab.
• 100$ port cost soon
• Port is computer
[Figure: Gbps Ethernet: 110 MBps; PCI: 70 MBps; UW Scsi: 40 MBps; FW scsi: 20 MBps; scsi: 5 MBps. RIP FDDI, RIP ATM, RIP SCI, RIP SCSI, RIP FC, RIP ?]
72

Technology Drivers: Plug & Play Software
• RPC is standardizing: (DCOM, IIOP, HTTP)
» Gives huge TOOL LEVERAGE
» Solves the hard problems for you:
  • naming,
  • security,
  • directory service,
  • operations, ...
• Commoditized programming environments
» FreeBSD, Linux, Solaris, … + tools
» NetWare + tools
» WinCE, WinNT, … + tools
» JavaOS + tools
• Apps gravitate to data.
• General purpose OS on controller runs apps.
73

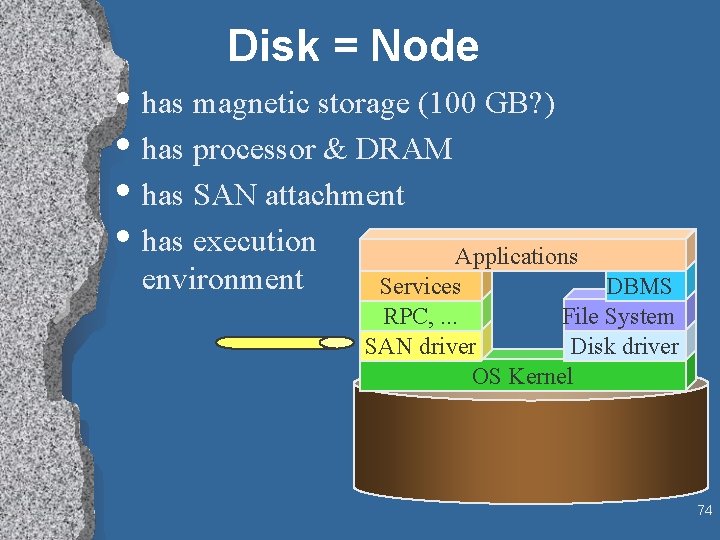

Disk = Node
• has magnetic storage (100 GB?)
• has processor & DRAM
• has SAN attachment
• has execution environment
[Figure: software stack: Applications; Services, DBMS, RPC, ...; File System; SAN driver, Disk driver; OS Kernel]
74

Penny Sort Ground Rules
http://research.microsoft.com/barc/SortBenchmark
• How much can you sort for a penny.
» Hardware and Software cost
» Depreciated over 3 years
» 1 M$ system gets about 1 second,
» 1 K$ system gets about 1,000 seconds.
» Time (seconds) = 946,080 / SystemPrice ($)
• Input and output are disk resident
• Input is
» 100-byte records (random data)
» key is first 10 bytes.
• Must create output file and fill with sorted version of input file.
• Daytona (product) and Indy (special) categories
75
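The time budget follows from the depreciation rule: three years is 94,608,000 seconds, so one penny buys 0.01 / price of that period, or 946,080 / price seconds. A small check against the two examples on the slide (the function name is illustrative, and a 365-day year is assumed):

```python
SECONDS_IN_3_YEARS = 3 * 365 * 24 * 60 * 60  # 94,608,000


def penny_seconds(system_price_dollars):
    """Seconds of machine time one penny buys with 3-year straight-line depreciation."""
    return 0.01 * SECONDS_IN_3_YEARS / system_price_dollars
```

A $1,000,000 system gets about 0.95 seconds and a $1,000 system about 946 seconds, matching "about 1 second" and "about 1,000 seconds".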

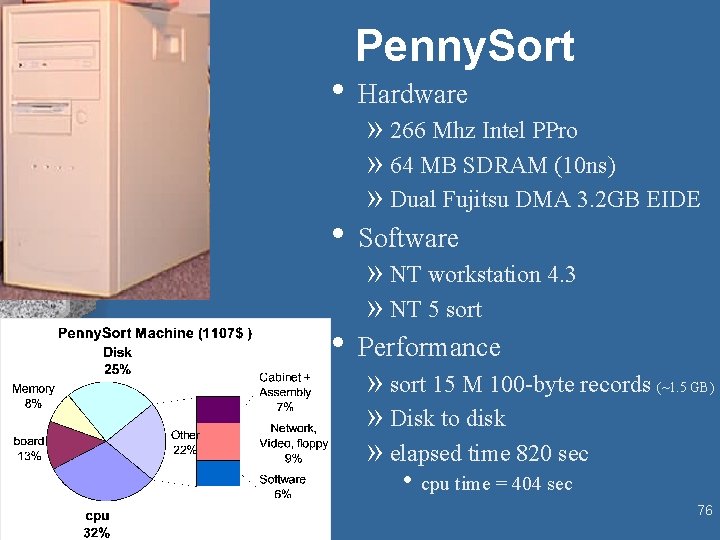

PennySort
• Hardware
» 266 Mhz Intel PPro
» 64 MB SDRAM (10 ns)
» Dual Fujitsu DMA 3.2 GB EIDE
• Software
» NT workstation 4.3
» NT 5 sort
• Performance
» sort 15 M 100-byte records (~1.5 GB)
» Disk to disk
» elapsed time 820 sec
  • cpu time = 404 sec
76

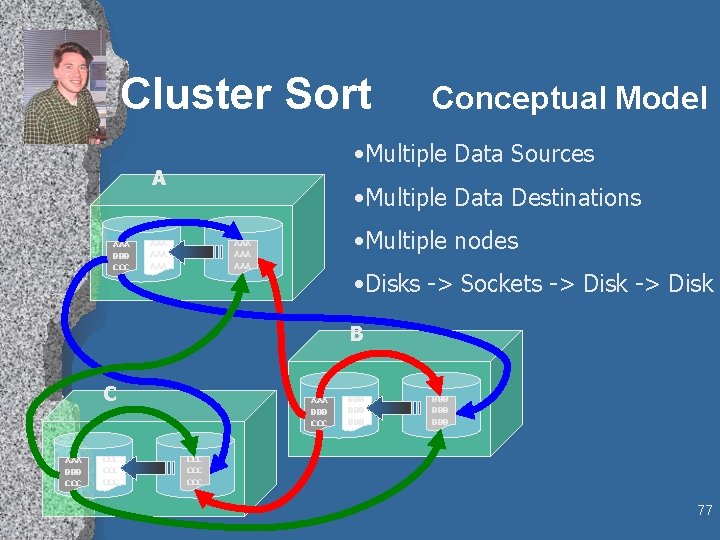

Cluster Sort: Conceptual Model
• Multiple Data Sources
• Multiple Data Destinations
• Multiple nodes
• Disks -> Sockets -> Disk
[Figure: source nodes A, B and C each hold a mix of AAA, BBB and CCC records; after the sort, one destination holds all AAA records, one all BBB, and one all CCC]
77
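The conceptual model can be sketched in a single process, with lists standing in for disks and for the sockets between nodes. This illustrates range partitioning only and is not the NTClusterSort code; the splitter keys and function names are assumptions.

```python
from bisect import bisect_right


def destination(key, splitters):
    """Index of the destination node whose key range contains `key`."""
    return bisect_right(splitters, key)


def cluster_sort(sources, splitters):
    """Route every record to its destination, then sort each destination locally."""
    inboxes = [[] for _ in range(len(splitters) + 1)]
    for records in sources:      # each source node scans its local data
        for record in records:   # appending stands in for a socket send
            inboxes[destination(record, splitters)].append(record)
    return [sorted(inbox) for inbox in inboxes]  # each destination writes sorted output
```

Because the destinations cover disjoint, ordered key ranges, concatenating their outputs in node order gives the fully sorted result.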

Cluster Install & Execute
• If this is to be used by others, it must be:
» Easy to install
» Easy to execute
• Installations of distributed systems take time and can be tedious. (AM2, GluGuard)
• Parallel Remote execution is non-trivial. (GLUnix, LSF)
How do we keep this “simple” and “built-in” to NTClusterSort?
78

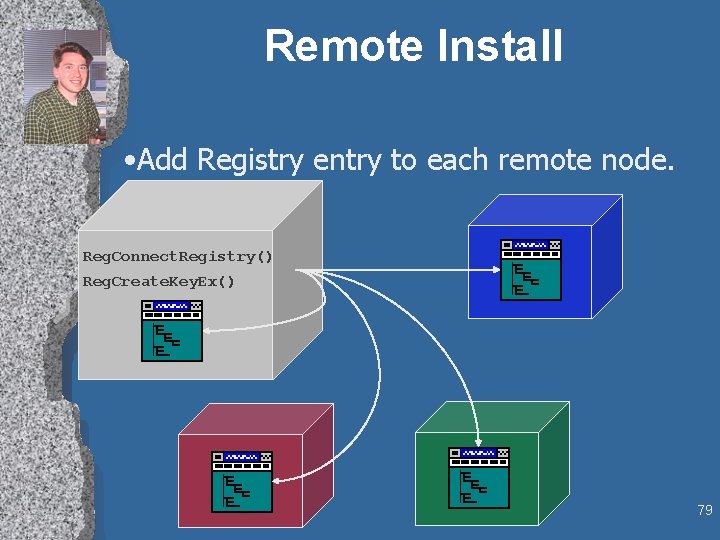

Remote Install
• Add Registry entry to each remote node.
[Figure labels: RegConnectRegistry(), RegCreateKeyEx()]
79

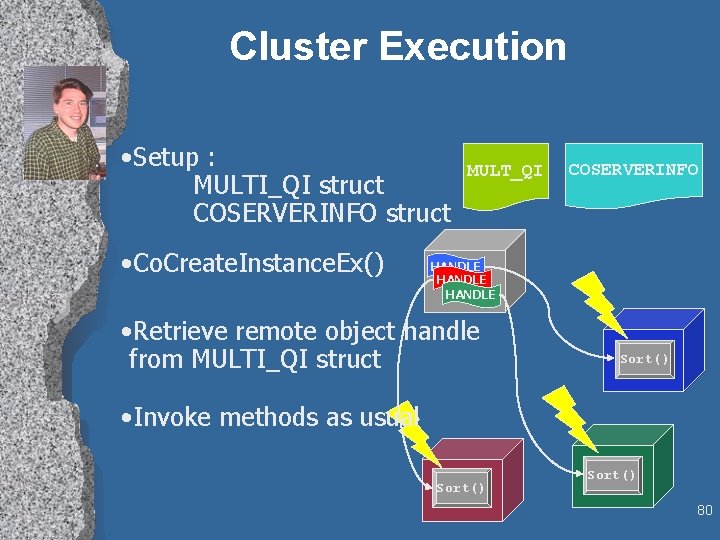

Cluster Execution
• Setup:
» MULTI_QI struct
» COSERVERINFO struct
• CoCreateInstanceEx()
• Retrieve remote object handle from MULTI_QI struct
• Invoke methods as usual
[Figure labels: MULTI_QI, COSERVERINFO, HANDLE, Sort()]
80

Public Service
• Gordon Bell
» Computer Museum
» Vanguard Group
» Edits column in CACM
• Jim Gray
» National Research Council Computer Science and Telecommunications Board
» Presidential Advisory Committee on NGI-IT-HPPC.
• Tom Barclay
» USGS and Russian cooperative research
81

A Plug for CoRR
• CoRR = Computer Science Research Repository
• All computer science literature in cyberspace
• http://xxx.lanl.gov/archive/cs
• Endorsed by CACM
• Reviewed & Refereed EJournals will evolve from this archive
• PLEASE submit articles
• Copyright issues are still problematic
82

