Advanced Artificial Intelligence Lecture 5 B: Convolutional Neural Network 2

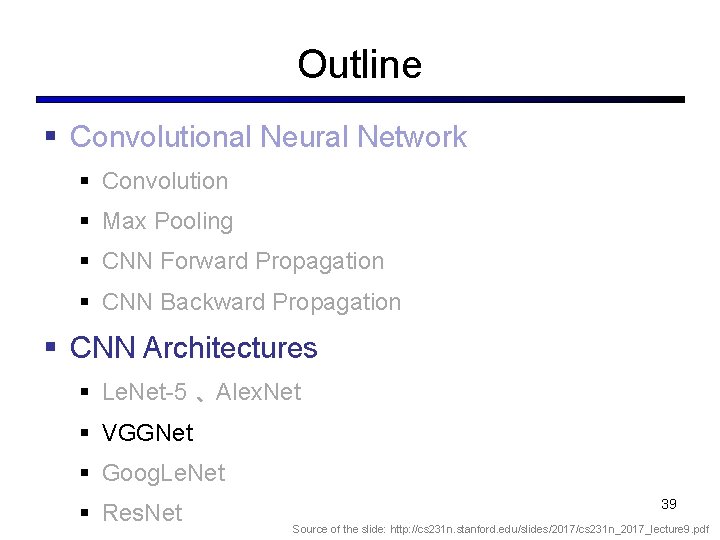

Outline

- Convolutional Neural Network
- CNN Forward Propagation
- CNN Backward Propagation
- CNN Architectures
  - LeNet-5, AlexNet
  - VGGNet
  - GoogLeNet
  - ResNet

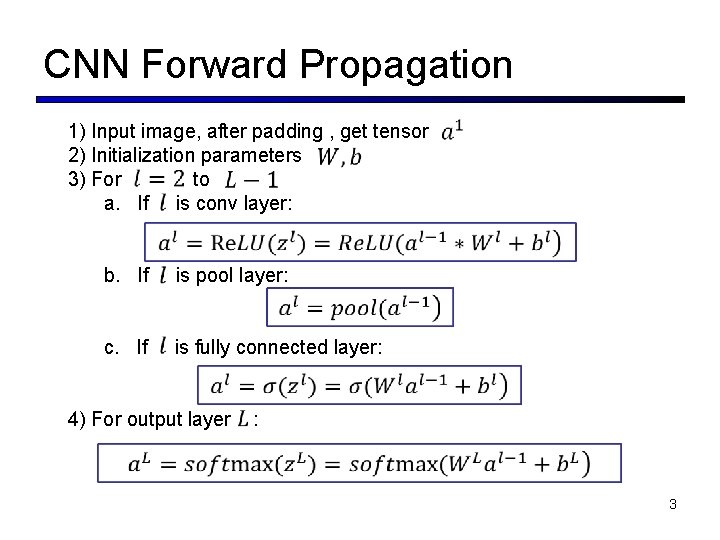

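CNN Forward Propagation

1) Pad the input image according to the padding size $P$ to get the input tensor $a^1$.
2) Initialize the parameters $W$, $b$ of all hidden layers.
3) For $l = 2$ to $L-1$:
   a. If layer $l$ is a conv layer: $a^l = \mathrm{ReLU}(z^l) = \mathrm{ReLU}(a^{l-1} * W^l + b^l)$
   b. If layer $l$ is a pool layer: $a^l = \mathrm{pool}(a^{l-1})$
   c. If layer $l$ is a fully connected layer: $a^l = \sigma(z^l) = \sigma(W^l a^{l-1} + b^l)$
4) For the output layer $L$: $a^L = \mathrm{softmax}(z^L) = \mathrm{softmax}(W^L a^{L-1} + b^L)$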
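The forward pass above can be sketched in plain Python for a single-channel image. This is a minimal illustration under stated assumptions, not the lecture's implementation: padding is skipped, there is one conv layer, one 2 x 2 max-pooling layer and a softmax output layer, and the helper names (`conv2d`, `max_pool`, `forward`) and all weights are made up for the example.

```python
import math

def conv2d(a, w, b=0.0):
    """Valid, stride-1 sliding-window product of 2-D lists a and w, plus bias b."""
    kh, kw = len(w), len(w[0])
    return [[sum(a[i + u][j + v] * w[u][v] for u in range(kh) for v in range(kw)) + b
             for j in range(len(a[0]) - kw + 1)]
            for i in range(len(a) - kh + 1)]

def relu(z):
    return [[max(0.0, x) for x in row] for row in z]

def max_pool(a, k=2):
    """Non-overlapping k x k max pooling."""
    return [[max(a[i + u][j + v] for u in range(k) for v in range(k))
             for j in range(0, len(a[0]) - k + 1, k)]
            for i in range(0, len(a) - k + 1, k)]

def softmax(z):
    m = max(z)                          # subtract the max for numerical stability
    e = [math.exp(x - m) for x in z]
    s = sum(e)
    return [x / s for x in e]

def forward(image, w_conv, b_conv, w_out, b_out):
    a = relu(conv2d(image, w_conv, b_conv))        # step 3a: conv layer
    a = max_pool(a)                                # step 3b: pool layer
    flat = [x for row in a for x in row]           # flatten before the dense layer
    z = [sum(wi * xi for wi, xi in zip(w_row, flat)) + bi
         for w_row, bi in zip(w_out, b_out)]
    return softmax(z)                              # step 4: output layer

# Hypothetical 5 x 5 input and weights, chosen only to exercise the code.
image = [[float((i + j) % 3) for j in range(5)] for i in range(5)]
w_conv = [[1.0, 0.0], [0.0, -1.0]]
probs = forward(image, w_conv, 0.0, [[0.1] * 4, [-0.1] * 4], [0.0, 0.0])
print(probs)
```

The 5 x 5 input becomes 4 x 4 after the 2 x 2 convolution and 2 x 2 after pooling, so the output layer sees 4 values.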

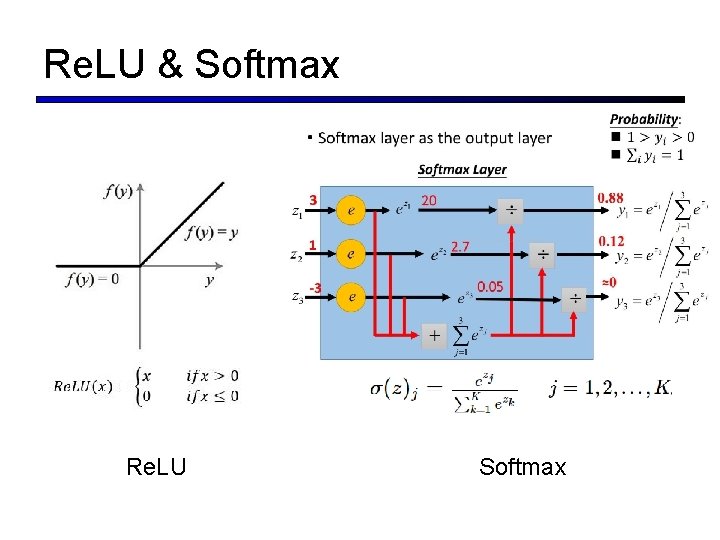

ReLU & Softmax

ReLU: $\mathrm{ReLU}(z) = \max(0, z)$

Softmax: $\mathrm{softmax}(z)_j = \dfrac{e^{z_j}}{\sum_k e^{z_k}}$

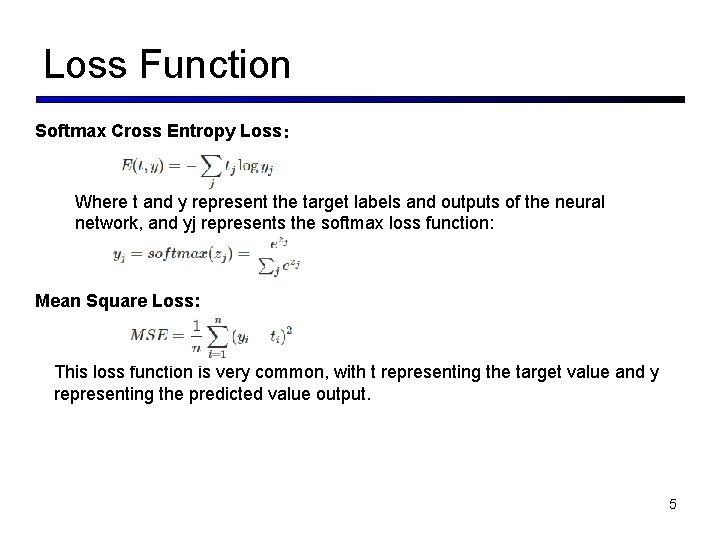

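Loss Function

Softmax Cross Entropy Loss:

$$J(t, y) = -\sum_j t_j \log y_j$$

where $t$ and $y$ represent the target labels and the outputs of the neural network, and $y_j$ is the softmax output for class $j$:

$$y_j = \frac{e^{z_j}}{\sum_k e^{z_k}}$$

Mean Square Loss:

$$J(t, y) = \frac{1}{2} \sum_j (t_j - y_j)^2$$

This loss function is very common, with $t$ representing the target value and $y$ representing the predicted output. The factor $\frac{1}{2}$ is a convention that simplifies the derivative; some texts average over the outputs instead.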
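As a small sketch of the two losses, the snippet below computes the softmax outputs for some made-up logits and evaluates both losses against a one-hot target. The function names, the `eps` guard inside the logarithm, and the $\frac{1}{2}$ scaling of the squared error are assumptions of this example.

```python
import math

def softmax(z):
    m = max(z)                               # shift by the max to avoid overflow
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(t, y, eps=1e-12):
    """-sum_j t_j * log(y_j); eps guards against log(0)."""
    return -sum(tj * math.log(yj + eps) for tj, yj in zip(t, y))

def mean_square(t, y):
    """0.5 * sum_j (t_j - y_j)^2 (the 1/2 factor is a convention)."""
    return 0.5 * sum((tj - yj) ** 2 for tj, yj in zip(t, y))

z = [2.0, 1.0, 0.1]          # hypothetical logits
t = [1.0, 0.0, 0.0]          # one-hot target
y = softmax(z)
ce = cross_entropy(t, y)
mse = mean_square(t, y)
print(y, ce, mse)
```

With a one-hot target, the cross entropy reduces to the negative log of the probability assigned to the correct class.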

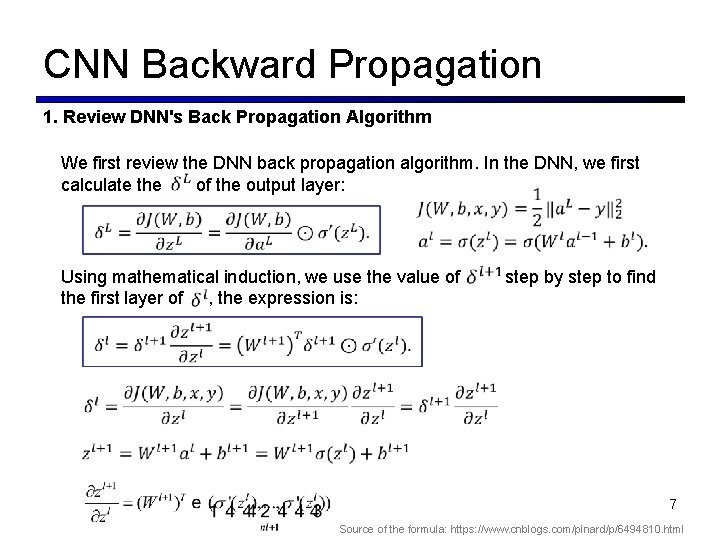

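CNN Backward Propagation

1. Review DNN's Back Propagation Algorithm

We first review the DNN back propagation algorithm. In the DNN, we first calculate $\delta^L$ of the output layer, where $J$ is the loss function:

$$\delta^L = \frac{\partial J}{\partial z^L} = \frac{\partial J}{\partial a^L} \odot \sigma'(z^L)$$

Using mathematical induction, we use the value of $\delta^{l+1}$ step by step to find $\delta^l$ of layer $l$. The expression is:

$$\delta^l = (W^{l+1})^T \delta^{l+1} \odot \sigma'(z^l)$$

Source of the formula: https://www.cnblogs.com/pinard/p/6494810.html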

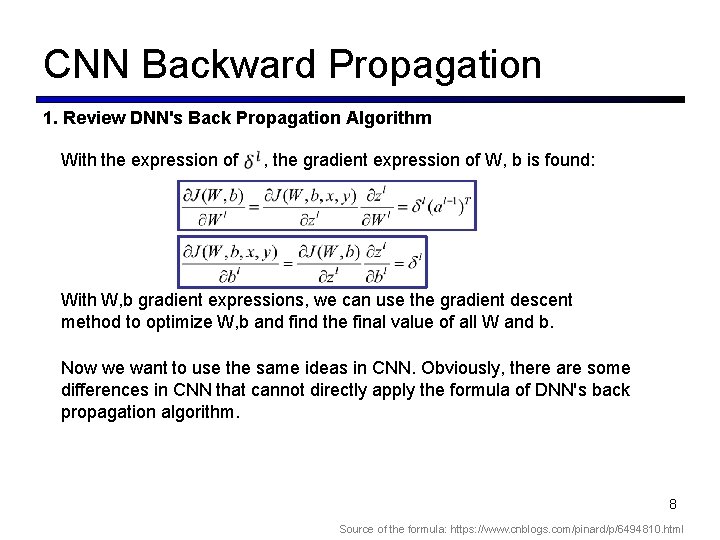

With the expression of $\delta^l$, the gradient expressions of $W$, $b$ are found:

$$\frac{\partial J}{\partial W^l} = \delta^l (a^{l-1})^T, \qquad \frac{\partial J}{\partial b^l} = \delta^l$$

With the gradient expressions of $W$, $b$, we can use the gradient descent method to optimize $W$, $b$ and find their final values.

Now we want to use the same ideas in CNN. However, some differences in CNN mean that the formulas of DNN's back propagation algorithm cannot be applied directly.
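The DNN recursion can be checked on a tiny fully connected network. The sketch below is illustrative only: it assumes sigmoid activations, a squared-error loss $J = \frac{1}{2}\lVert a^L - y\rVert^2$, and made-up weights; `forward`, `backward` and `loss` are names invented for the example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def matvec(W, x):
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def forward(Ws, bs, x):
    """Return the pre-activations z^l and the activations a^l of every layer."""
    zs, acts = [], [x]
    for W, b in zip(Ws, bs):
        z = [s + bi for s, bi in zip(matvec(W, acts[-1]), b)]
        zs.append(z)
        acts.append([sigmoid(v) for v in z])
    return zs, acts

def loss(Ws, bs, x, y):
    out = forward(Ws, bs, x)[1][-1]
    return 0.5 * sum((a - t) ** 2 for a, t in zip(out, y))

def backward(Ws, bs, x, y):
    _, acts = forward(Ws, bs, x)
    dsig = lambda a: a * (1.0 - a)        # sigma'(z) written in terms of a = sigma(z)
    # Output layer: delta^L = (a^L - y) ⊙ sigma'(z^L)
    delta = [(a - t) * dsig(a) for a, t in zip(acts[-1], y)]
    grads_W, grads_b = [], []
    for l in range(len(Ws) - 1, -1, -1):
        grads_W.insert(0, [[d * a for a in acts[l]] for d in delta])  # delta (a_prev)^T
        grads_b.insert(0, delta[:])                                    # delta
        if l > 0:
            # Previous layer: delta_prev = W^T delta ⊙ sigma'(z_prev)
            delta = [sum(Ws[l][i][j] * delta[i] for i in range(len(delta))) * dsig(acts[l][j])
                     for j in range(len(acts[l]))]
    return grads_W, grads_b

# Hypothetical 2-2-1 network.
Ws = [[[0.1, -0.2], [0.4, 0.3]], [[0.5, -0.6]]]
bs = [[0.0, 0.1], [0.2]]
x, y = [1.0, 2.0], [1.0]
gW, gb = backward(Ws, bs, x, y)
print(gW, gb)
```

Comparing an entry of `gW` with a finite-difference estimate of `loss` is a quick way to confirm that the recursion is implemented correctly.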

CNN Backward Propagation

2. CNN's Back Propagation Algorithm Idea

To apply DNN's back propagation algorithm to CNN, several issues need to be addressed:

1) The pooling layer does not have an activation function. This problem is easy to solve: we can take the activation function of the pooling layer to be $\sigma(z) = z$, that is, the output after activation is the input itself. The derivative of this activation function is 1.
2) The pooling layer compresses its input during forward propagation, so we now need to derive $\delta^{l-1}$ of the previous hidden layer backwards from $\delta^l$. This derivation is completely different from the DNN.
3) The convolutional layer obtains the output of the current layer through a tensor convolution, or the summation of several matrix convolutions. This is very different from the DNN, whose fully connected layer obtains the current layer's output directly by matrix multiplication. As a result, when the convolutional layer propagates backwards, the recursive calculation of $\delta^{l-1}$ in the previous hidden layer must be different.
4) For the convolutional layer, since the operation applied to $W$ is a convolution, the method of deriving the gradients of $W$ and $b$ for all the convolution kernels of the layer from $\delta^l$ is also different.

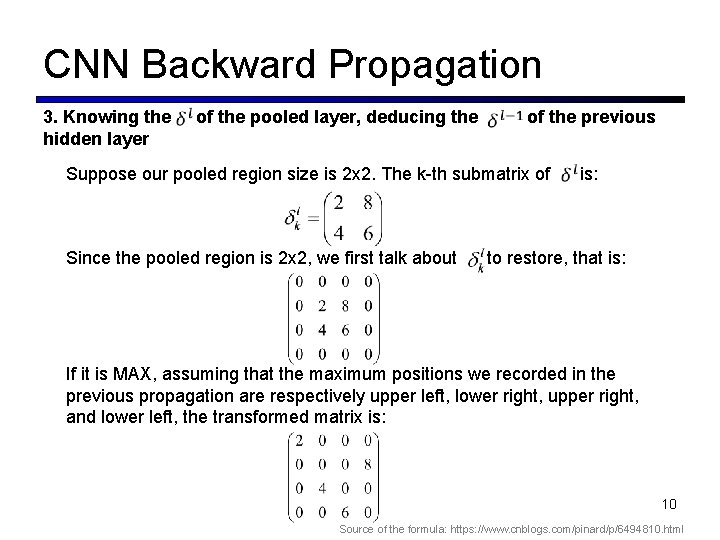

CNN Backward Propagation

3. Given $\delta^l$ of the pooling layer, derive $\delta^{l-1}$ of the previous hidden layer

Suppose our pooling region size is 2 x 2. The k-th submatrix of $\delta^l$ is:

$$\delta_k^l = \begin{pmatrix} 2 & 8 \\ 4 & 6 \end{pmatrix}$$

Since the pooling region is 2 x 2, we first restore $\delta_k^l$ to the size it had before pooling, that is:

$$\begin{pmatrix} 0 & 0 & 0 & 0 \\ 0 & 2 & 8 & 0 \\ 0 & 4 & 6 & 0 \\ 0 & 0 & 0 & 0 \end{pmatrix}$$

If the pooling is MAX, assuming that the maximum positions we recorded during forward propagation are respectively upper left, lower right, upper right, and lower left, the transformed matrix is:

$$\begin{pmatrix} 2 & 0 & 0 & 0 \\ 0 & 0 & 0 & 8 \\ 0 & 4 & 0 & 0 \\ 0 & 0 & 6 & 0 \end{pmatrix}$$

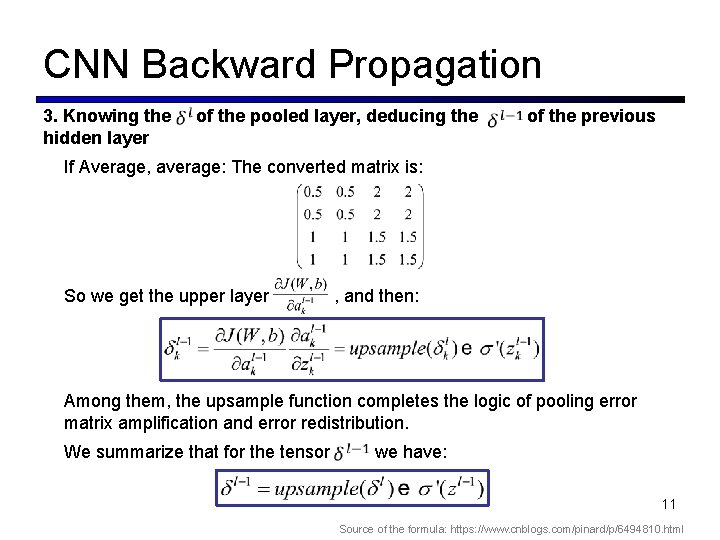

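If the pooling is Average, each value is spread evenly over its region. The converted matrix is:

$$\begin{pmatrix} 0.5 & 0.5 & 2 & 2 \\ 0.5 & 0.5 & 2 & 2 \\ 1 & 1 & 1.5 & 1.5 \\ 1 & 1 & 1.5 & 1.5 \end{pmatrix}$$

So we get $\frac{\partial J}{\partial a_k^{l-1}}$ of the previous layer, and then:

$$\delta_k^{l-1} = \left(\frac{\partial a_k^{l-1}}{\partial z_k^{l-1}}\right)^T \frac{\partial J}{\partial a_k^{l-1}} = \mathrm{upsample}(\delta_k^l) \odot \sigma'(z_k^{l-1})$$

The upsample function enlarges the pooling error matrix and redistributes the error. In summary, for the tensor $\delta^{l-1}$ we have:

$$\delta^{l-1} = \mathrm{upsample}(\delta^l) \odot \sigma'(z^{l-1})$$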
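The upsample step can be sketched as two small functions, one per pooling type. This is an illustration under assumptions: the pooled gradient values are example numbers, `argmax` is a hypothetical record of the offset of the maximum inside each 2 x 2 region (upper left, lower right, upper right, lower left, in that order), and the function names are invented here.

```python
def upsample_max(delta, argmax, k=2):
    """Route each pooled gradient to the position that held the maximum.

    argmax[i][j] = (u, v) is the offset of the maximum inside region (i, j).
    """
    rows, cols = len(delta), len(delta[0])
    out = [[0.0] * (cols * k) for _ in range(rows * k)]
    for i in range(rows):
        for j in range(cols):
            u, v = argmax[i][j]
            out[i * k + u][j * k + v] = delta[i][j]
    return out

def upsample_avg(delta, k=2):
    """Spread each pooled gradient evenly over its k x k region."""
    rows, cols = len(delta), len(delta[0])
    return [[delta[i // k][j // k] / (k * k) for j in range(cols * k)]
            for i in range(rows * k)]

delta = [[2.0, 8.0], [4.0, 6.0]]                    # example pooled gradient
argmax = [[(0, 0), (1, 1)], [(0, 1), (1, 0)]]       # recorded max positions
max_grad = upsample_max(delta, argmax)
avg_grad = upsample_avg(delta)
for row in max_grad:
    print(row)
for row in avg_grad:
    print(row)
```

Because the pooling layer is treated as having the identity activation, the elementwise factor $\sigma'(z^{l-1})$ still has to be applied to the upsampled matrix when the previous layer has a non-trivial activation.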

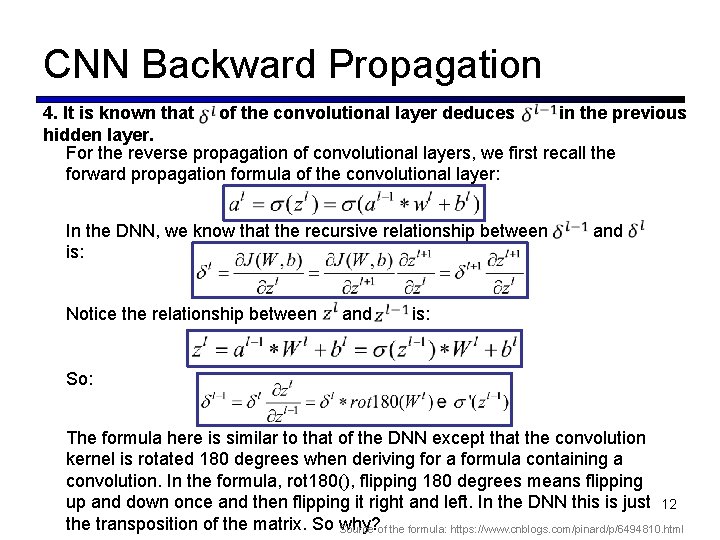

CNN Backward Propagation

4. Given $\delta^l$ of the convolutional layer, derive $\delta^{l-1}$ of the previous hidden layer

For the back propagation of convolutional layers, we first recall the forward propagation formula of the convolutional layer:

$$a^l = \sigma(z^l) = \sigma(a^{l-1} * W^l + b^l)$$

In the DNN, we know that the recursive relationship between $\delta^{l-1}$ and $\delta^l$ is:

$$\delta^{l-1} = \frac{\partial J}{\partial z^{l-1}} = \left(\frac{\partial z^l}{\partial z^{l-1}}\right)^T \delta^l$$

Notice that the relationship between $z^l$ and $z^{l-1}$ is:

$$z^l = a^{l-1} * W^l + b^l = \sigma(z^{l-1}) * W^l + b^l$$

So:

$$\delta^{l-1} = \left(\frac{\partial z^l}{\partial z^{l-1}}\right)^T \delta^l = \delta^l * \mathrm{rot180}(W^l) \odot \sigma'(z^{l-1})$$

This formula is similar to that of the DNN, except that the convolution kernel is rotated 180 degrees when differentiating an expression that contains a convolution. Here rot180() means flipping the matrix up and down once and then flipping it left and right. In the DNN this is just the transposition of the matrix. So why the rotation?

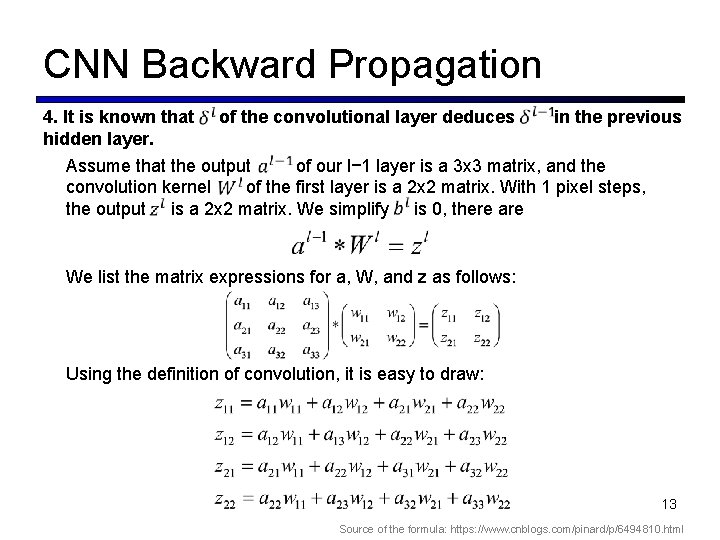

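Assume that the output $a^{l-1}$ of layer $l-1$ is a 3 x 3 matrix, and the convolution kernel $W^l$ of layer $l$ is a 2 x 2 matrix. With a stride of 1 pixel, the output $z^l$ is a 2 x 2 matrix. To simplify, we take $b^l$ to be 0, so that:

$$a^{l-1} * W^l = z^l$$

We list the matrix expressions for $a$, $W$, and $z$ as follows:

$$\begin{pmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{pmatrix} * \begin{pmatrix} w_{11} & w_{12} \\ w_{21} & w_{22} \end{pmatrix} = \begin{pmatrix} z_{11} & z_{12} \\ z_{21} & z_{22} \end{pmatrix}$$

Using the definition of convolution, it is easy to obtain:

$$\begin{aligned} z_{11} &= a_{11}w_{11} + a_{12}w_{12} + a_{21}w_{21} + a_{22}w_{22} \\ z_{12} &= a_{12}w_{11} + a_{13}w_{12} + a_{22}w_{21} + a_{23}w_{22} \\ z_{21} &= a_{21}w_{11} + a_{22}w_{12} + a_{31}w_{21} + a_{32}w_{22} \\ z_{22} &= a_{22}w_{11} + a_{23}w_{12} + a_{32}w_{21} + a_{33}w_{22} \end{aligned}$$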

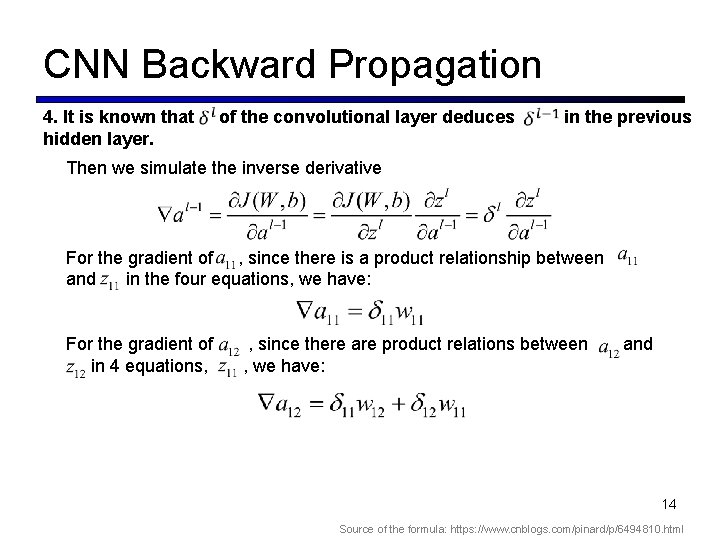

Then we simulate the backward derivation:

$$\nabla a^{l-1} = \frac{\partial J}{\partial a^{l-1}} = \left(\frac{\partial z^l}{\partial a^{l-1}}\right)^T \frac{\partial J}{\partial z^l} = \left(\frac{\partial z^l}{\partial a^{l-1}}\right)^T \delta^l$$

For the gradient of $a_{11}$, since among the four equations $a_{11}$ appears in a product only in the equation for $z_{11}$, we have:

$$\nabla a_{11} = \delta_{11} w_{11}$$

For the gradient of $a_{12}$, since among the four equations $a_{12}$ appears in products in the equations for $z_{12}$ and $z_{11}$, we have:

$$\nabla a_{12} = \delta_{11} w_{12} + \delta_{12} w_{11}$$

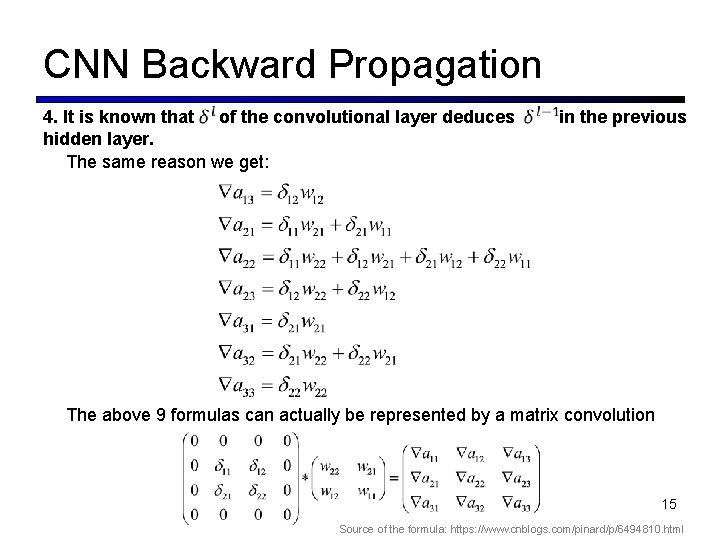

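By the same reasoning we get:

$$\begin{aligned} \nabla a_{13} &= \delta_{12} w_{12} \\ \nabla a_{21} &= \delta_{11} w_{21} + \delta_{21} w_{11} \\ \nabla a_{22} &= \delta_{11} w_{22} + \delta_{12} w_{21} + \delta_{21} w_{12} + \delta_{22} w_{11} \\ \nabla a_{23} &= \delta_{12} w_{22} + \delta_{22} w_{12} \\ \nabla a_{31} &= \delta_{21} w_{21} \\ \nabla a_{32} &= \delta_{21} w_{22} + \delta_{22} w_{21} \\ \nabla a_{33} &= \delta_{22} w_{22} \end{aligned}$$

The above 9 formulas can actually be represented by a matrix convolution:

$$\begin{pmatrix} 0 & 0 & 0 & 0 \\ 0 & \delta_{11} & \delta_{12} & 0 \\ 0 & \delta_{21} & \delta_{22} & 0 \\ 0 & 0 & 0 & 0 \end{pmatrix} * \begin{pmatrix} w_{22} & w_{21} \\ w_{12} & w_{11} \end{pmatrix} = \begin{pmatrix} \nabla a_{11} & \nabla a_{12} & \nabla a_{13} \\ \nabla a_{21} & \nabla a_{22} & \nabla a_{23} \\ \nabla a_{31} & \nabla a_{32} & \nabla a_{33} \end{pmatrix}$$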
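The 3 x 3 example can be reproduced numerically. The sketch below assumes a square kernel, stride 1 and a single channel, and uses "*" in the sliding-window sense of the example (each output is the sum of elementwise products over the window). The helper names and the numbers in `delta` and `w` are made up for illustration.

```python
def correlate_valid(a, w):
    """Slide w over a (stride 1, no padding) and sum the elementwise products."""
    kh, kw = len(w), len(w[0])
    return [[sum(a[i + u][j + v] * w[u][v] for u in range(kh) for v in range(kw))
             for j in range(len(a[0]) - kw + 1)]
            for i in range(len(a) - kh + 1)]

def rot180(w):
    """Flip up-down, then left-right."""
    return [row[::-1] for row in w[::-1]]

def zero_pad(m, p):
    """Surround m with p rows and columns of zeros on every side."""
    width = len(m[0]) + 2 * p
    return ([[0.0] * width for _ in range(p)]
            + [[0.0] * p + list(row) + [0.0] * p for row in m]
            + [[0.0] * width for _ in range(p)])

def grad_input(delta, w):
    """Gradient w.r.t. the layer input: padded delta * rot180(W), square kernel assumed."""
    return correlate_valid(zero_pad(delta, len(w) - 1), rot180(w))

delta = [[1.0, 2.0], [3.0, 4.0]]   # hypothetical delta of the conv layer
w = [[1.0, 2.0], [3.0, 4.0]]       # hypothetical 2 x 2 kernel
grad_a = grad_input(delta, w)
for row in grad_a:
    print(row)
```

Each entry of `grad_a` can be compared by hand with the nine formulas; for instance the centre entry is the four-term sum for the middle input element. Multiplying elementwise by $\sigma'(z^{l-1})$ would then give $\delta^{l-1}$.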

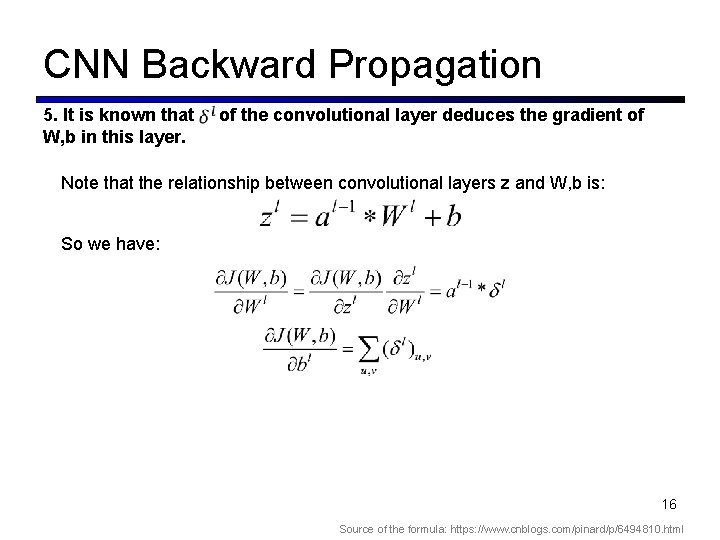

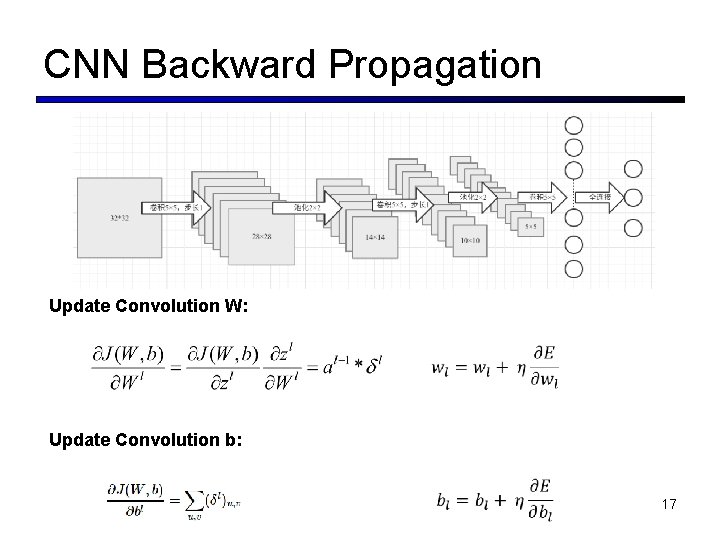

CNN Backward Propagation

5. Given $\delta^l$ of the convolutional layer, derive the gradients of $W$, $b$ in this layer

Note that the relationship between $z$ and $W$, $b$ of the convolutional layer is:

$$z^l = a^{l-1} * W^l + b^l$$

So we have:

$$\frac{\partial J}{\partial W^l} = a^{l-1} * \delta^l, \qquad \frac{\partial J}{\partial b^l} = \sum_{u,v} (\delta^l)_{u,v}$$
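A minimal sketch of the two gradients for one channel, assuming stride 1 and the same sliding-window meaning of "*" as in the forward pass: the kernel gradient slides $\delta^l$ over $a^{l-1}$ without any rotation, and the bias gradient sums all entries of $\delta^l$. The names and numbers are illustrative.

```python
def correlate_valid(a, w):
    """Slide w over a (stride 1, no padding) and sum the elementwise products."""
    kh, kw = len(w), len(w[0])
    return [[sum(a[i + u][j + v] * w[u][v] for u in range(kh) for v in range(kw))
             for j in range(len(a[0]) - kw + 1)]
            for i in range(len(a) - kh + 1)]

def conv_layer_grads(a_prev, delta):
    grad_W = correlate_valid(a_prev, delta)       # same shape as the kernel
    grad_b = sum(sum(row) for row in delta)       # sum over all positions (u, v)
    return grad_W, grad_b

a_prev = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]  # hypothetical 3 x 3 input
delta = [[1.0, 0.0], [0.0, 1.0]]                               # hypothetical 2 x 2 delta
grad_W, grad_b = conv_layer_grads(a_prev, delta)
print(grad_W, grad_b)
```

For a 3 x 3 input and a 2 x 2 delta the result is 2 x 2, matching the kernel size of the worked example.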

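CNN Backward Propagation

For a batch of $m$ samples and learning rate $\alpha$:

Update Convolution W:

$$W^l = W^l - \alpha \sum_{i=1}^{m} a^{i,l-1} * \delta^{i,l}$$

Update Convolution b:

$$b^l = b^l - \alpha \sum_{i=1}^{m} \sum_{u,v} (\delta^{i,l})_{u,v}$$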
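One gradient-descent update of a single-channel kernel and bias might look like the sketch below. It assumes the per-sample kernel gradient is the stride-1 sliding-window product of the layer input with its delta, summed over the mini-batch; `sgd_step`, the learning rate and the data are hypothetical.

```python
def correlate_valid(a, w):
    """Slide w over a (stride 1, no padding) and sum the elementwise products."""
    kh, kw = len(w), len(w[0])
    return [[sum(a[i + u][j + v] * w[u][v] for u in range(kh) for v in range(kw))
             for j in range(len(a[0]) - kw + 1)]
            for i in range(len(a) - kh + 1)]

def sgd_step(W, b, a_batch, delta_batch, alpha):
    """Return the updated (W, b) after one step over a mini-batch."""
    grad_W = [[0.0] * len(W[0]) for _ in W]
    grad_b = 0.0
    for a_prev, delta in zip(a_batch, delta_batch):
        g = correlate_valid(a_prev, delta)                 # per-sample kernel gradient
        grad_W = [[gw + gi for gw, gi in zip(rw, ri)] for rw, ri in zip(grad_W, g)]
        grad_b += sum(sum(row) for row in delta)           # per-sample bias gradient
    new_W = [[w - alpha * g for w, g in zip(rw, rg)] for rw, rg in zip(W, grad_W)]
    return new_W, b - alpha * grad_b

W = [[0.0, 0.0], [0.0, 0.0]]
a_batch = [[[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]]   # one sample
delta_batch = [[[1.0, 0.0], [0.0, 1.0]]]
new_W, new_b = sgd_step(W, 0.0, a_batch, delta_batch, alpha=0.1)
print(new_W, new_b)
```

The function returns new values instead of modifying `W` in place, which keeps the example easy to test.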

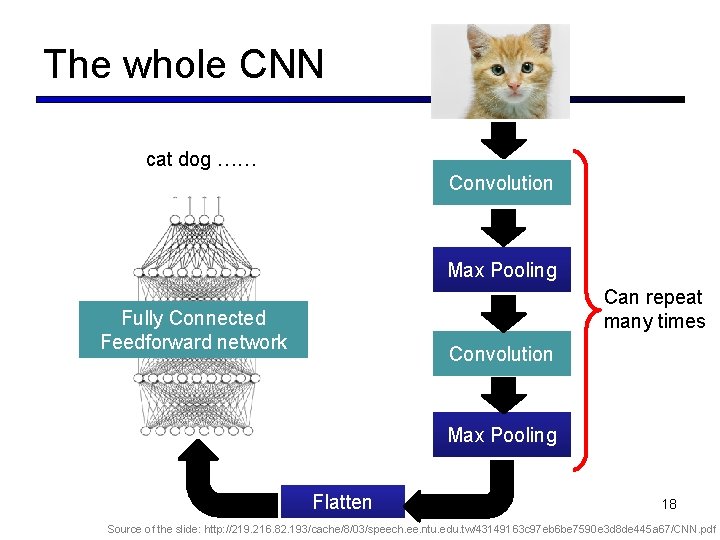

The whole CNN

Input image → Convolution → Max Pooling → Convolution → Max Pooling → Flatten → Fully Connected Feedforward network → output (cat, dog, ……). The Convolution and Max Pooling stages can repeat many times.

Source of the slide: http://219.216.82.193/cache/8/03/speech.ee.ntu.edu.tw/43149163c97eb6be7590e3d8de445a67/CNN.pdf

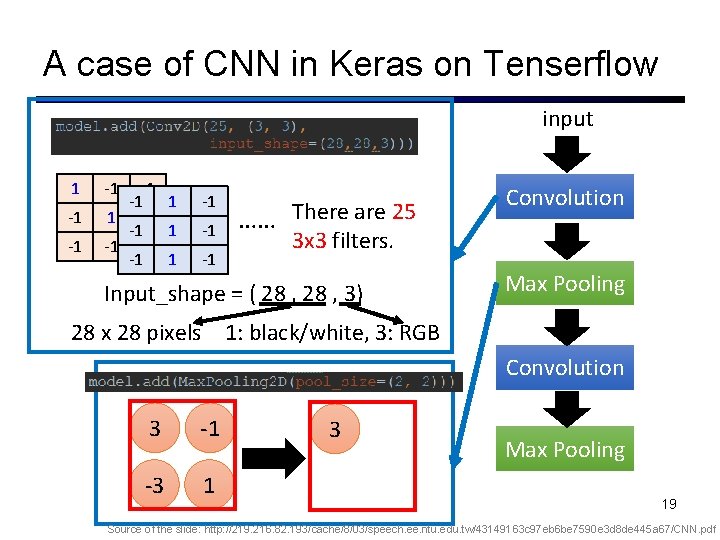

A case of CNN in Keras on TensorFlow

The first Convolution layer has 25 filters of size 3 x 3. Input_shape = (28, 28, 3): the image is 28 x 28 pixels, and the last value is the number of channels (1: black/white, 3: RGB). The layer is followed by Max Pooling, a second Convolution and a second Max Pooling.

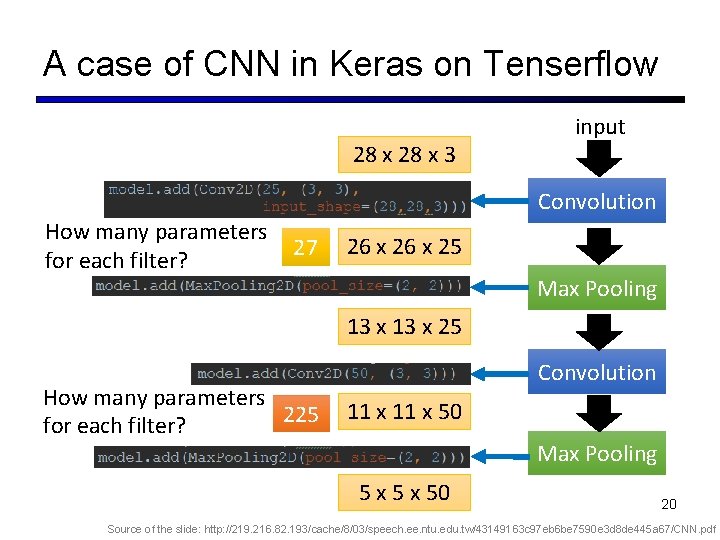

A case of CNN in Keras on TensorFlow

- Input: 28 x 28 x 3
- Convolution: output 26 x 26 x 25. How many parameters for each filter? 27 (3 x 3 x 3)
- Max Pooling: output 13 x 13 x 25
- Convolution: output 11 x 11 x 50. How many parameters for each filter? 225 (3 x 3 x 25)
- Max Pooling: output 5 x 5 x 50

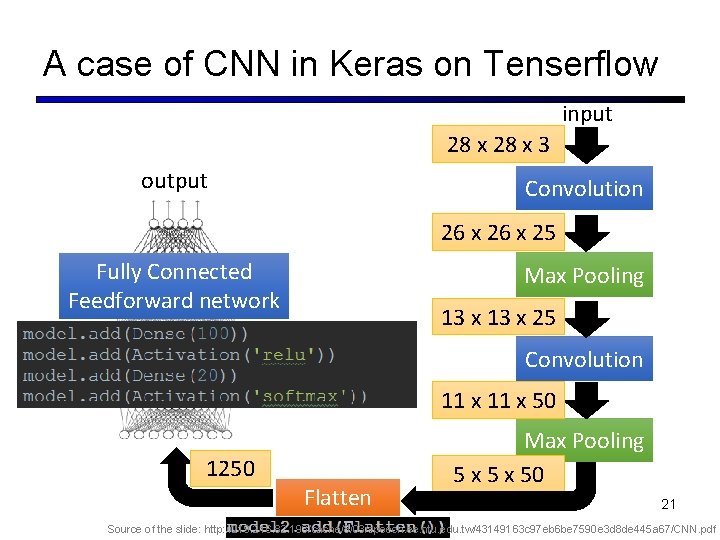

A case of CNN in Keras on TensorFlow

After the second Max Pooling, the 5 x 5 x 50 output is flattened into a vector of 1250 values (5 x 5 x 50 = 1250). This vector is the input of the Fully Connected Feedforward network, which produces the output.
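The shapes and per-filter parameter counts in this example follow from simple arithmetic, sketched below in plain Python rather than Keras. The assumptions are a 28 x 28 x 3 input, 3 x 3 kernels with stride 1 and no padding, 2 x 2 pooling, and 25 then 50 filters; biases are not counted, and the helper names are invented for the example.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution."""
    return (size + 2 * padding - kernel) // stride + 1

def pool_out(size, k):
    """Spatial output size of non-overlapping k x k pooling."""
    return size // k

size, channels = 28, 3
trace = []
for filters in (25, 50):
    weights_per_filter = 3 * 3 * channels        # kernel height x width x input channels
    size = conv_out(size, 3)
    trace.append(("conv", size, filters, weights_per_filter))
    channels = filters                            # each filter yields one output channel
    size = pool_out(size, 2)
    trace.append(("pool", size, channels, 0))

flat = size * size * channels                     # length of the flattened vector
for name, s, c, n in trace:
    print(name, f"{s} x {s} x {c}", "weights per filter:", n)
print("flatten:", flat)
```

The second convolution has more weights per filter than the first because each of its filters spans all 25 channels produced by the first convolution.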

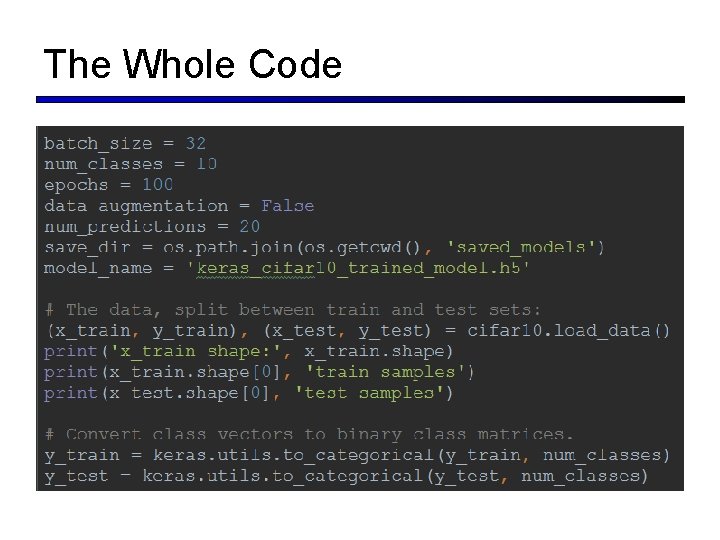

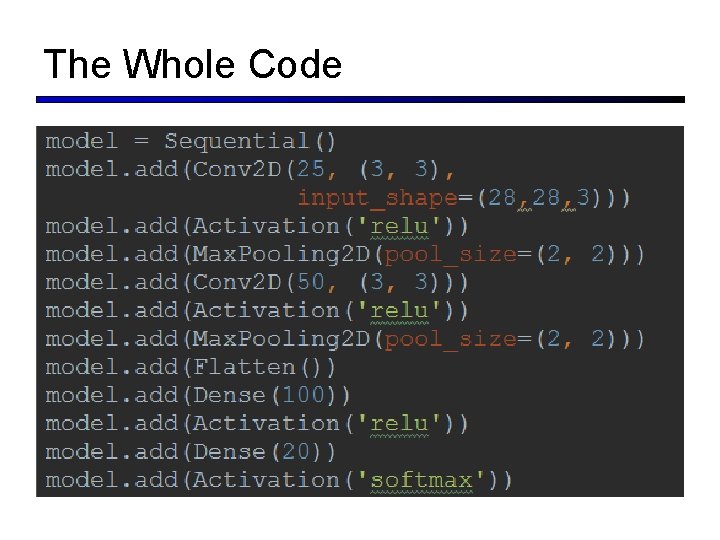

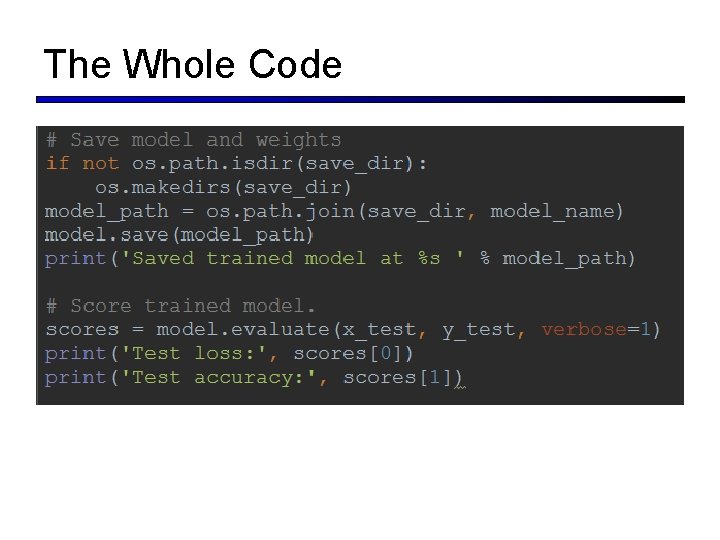

The Whole Code

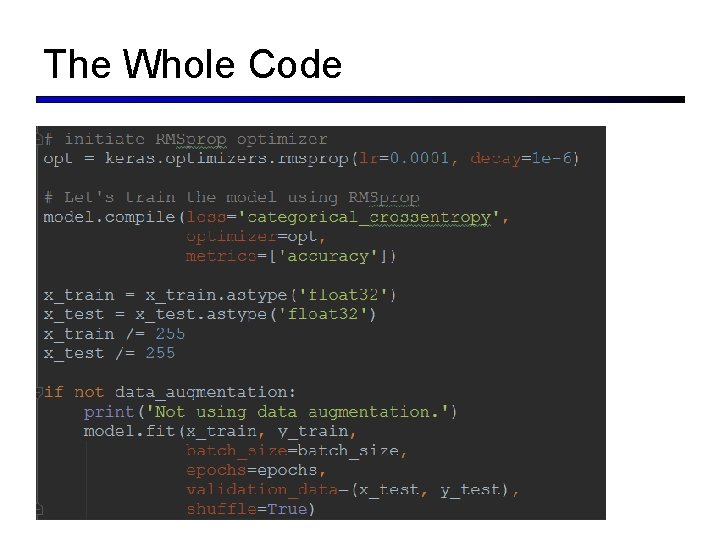

CNN Architectures

- Case Studies
  - AlexNet
  - VGG
  - GoogLeNet
  - ResNet
- Also…
  - NiN (Network in Network)
  - Wide ResNet
  - ResNeXT
  - Stochastic Depth
  - DenseNet
  - FractalNet
  - SqueezeNet

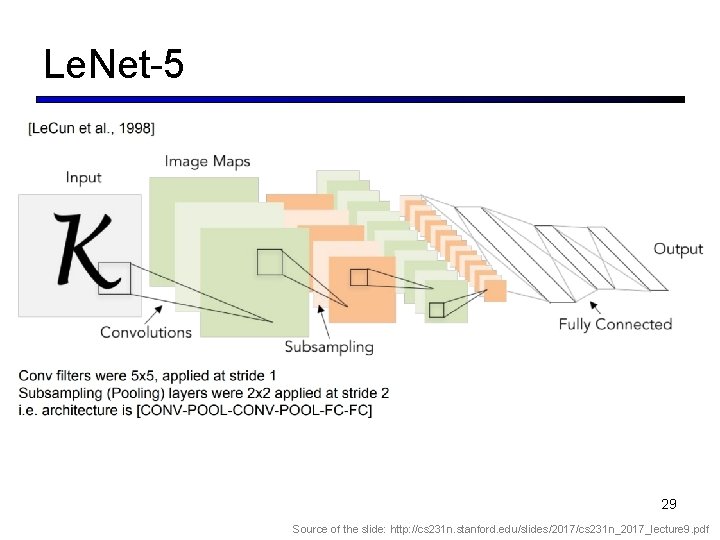

LeNet-5

Source of the slide: http://cs231n.stanford.edu/slides/2017/cs231n_2017_lecture9.pdf

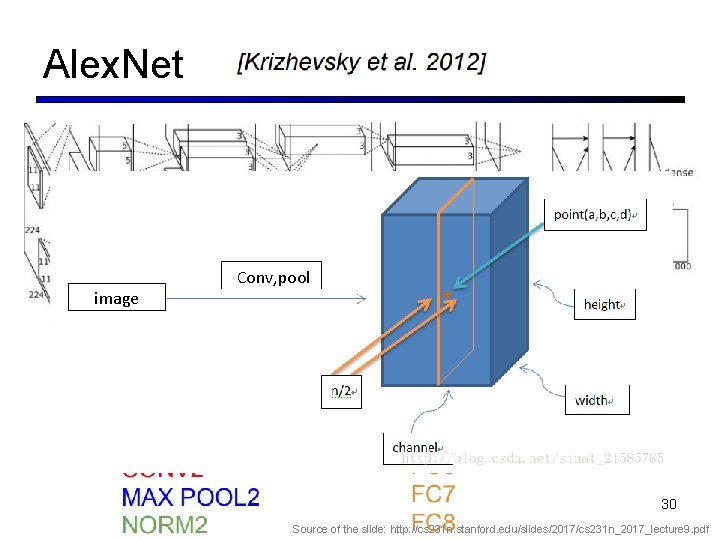

AlexNet

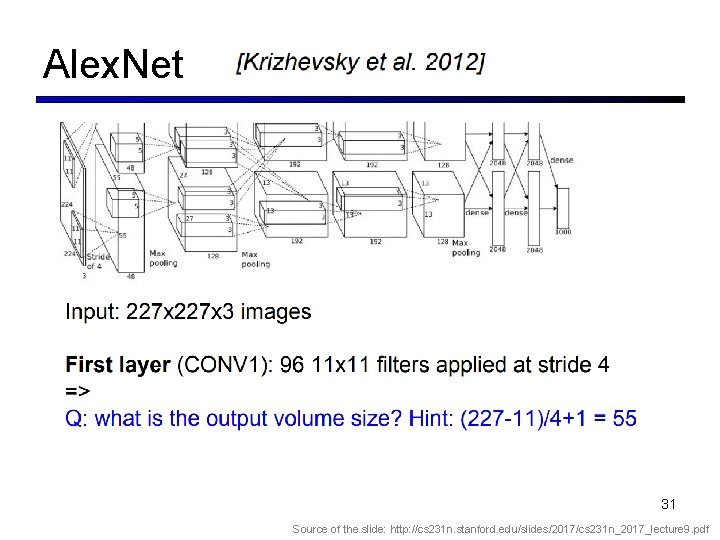

AlexNet

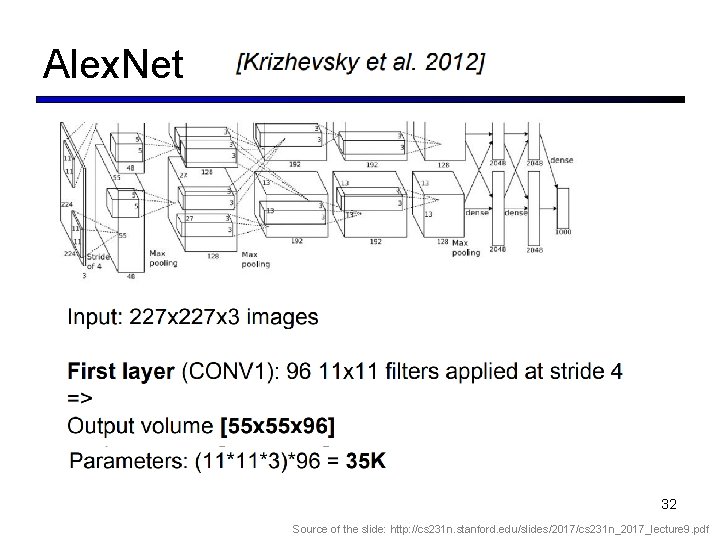

AlexNet

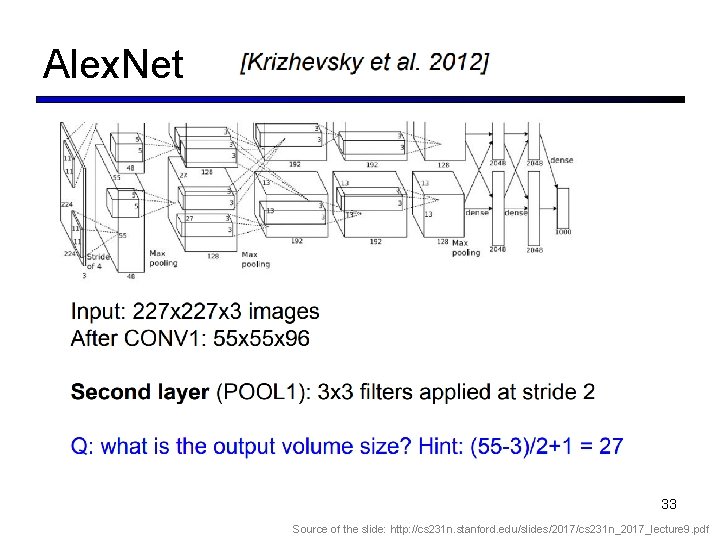

AlexNet

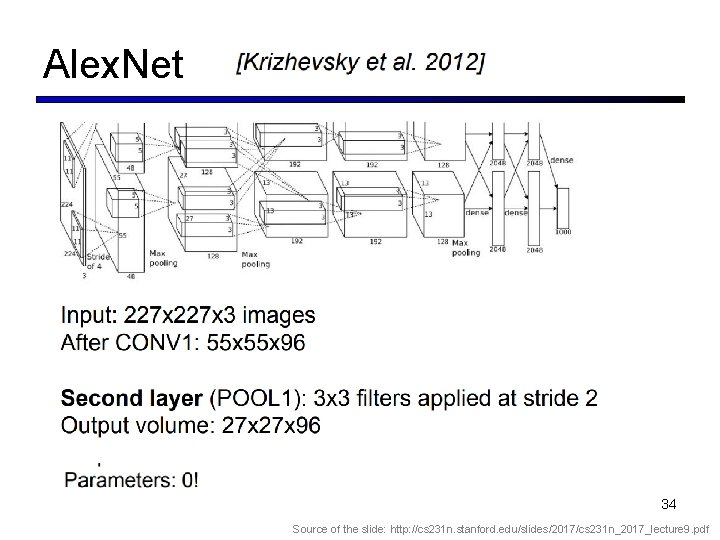

AlexNet

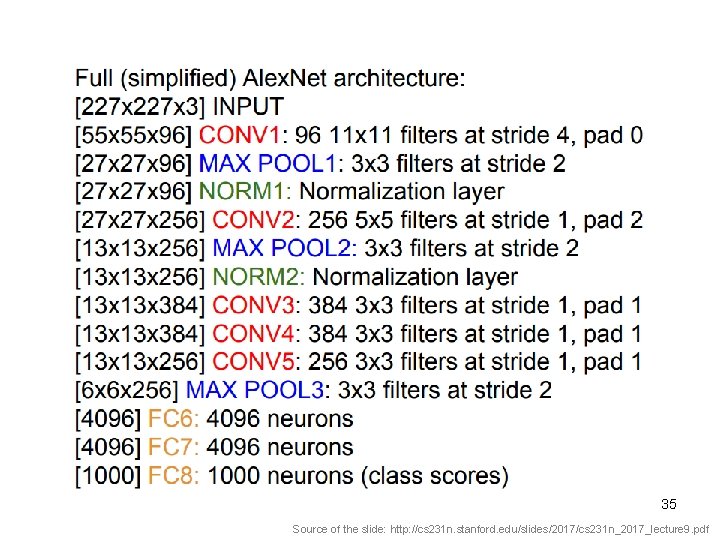

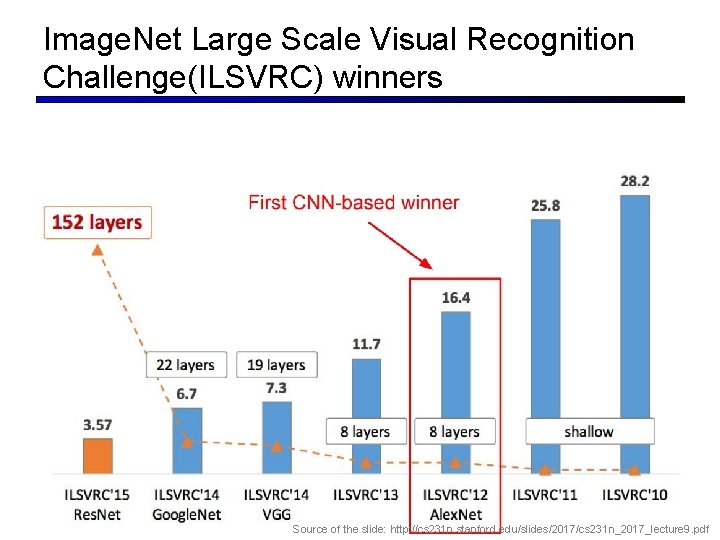

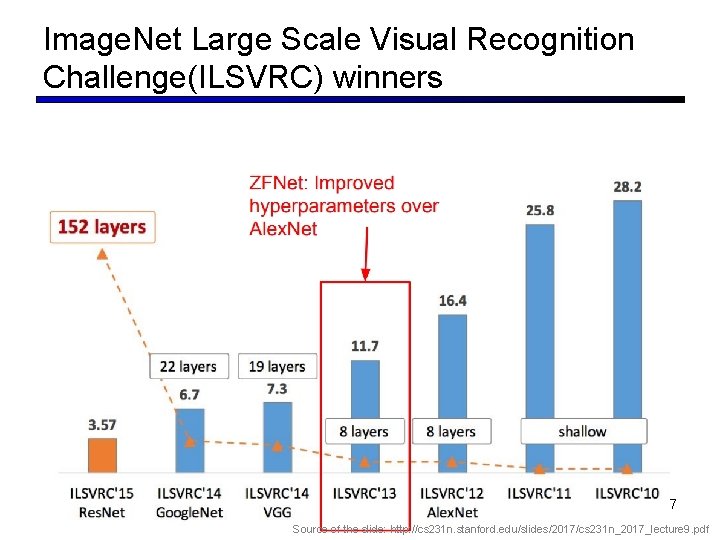

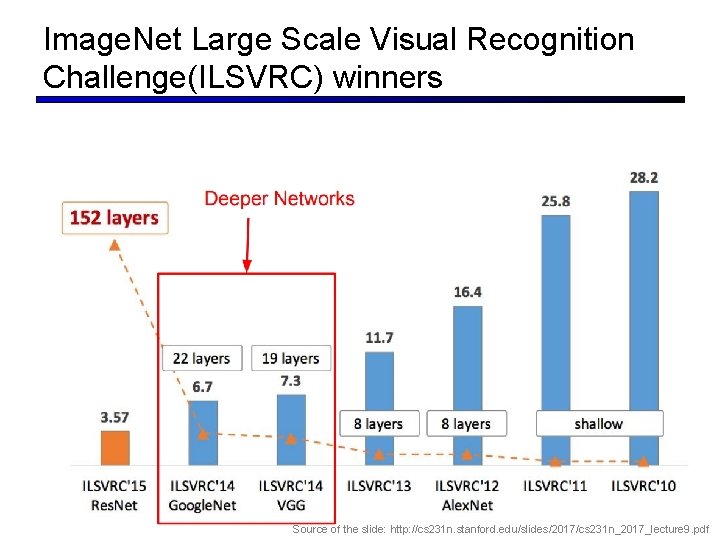

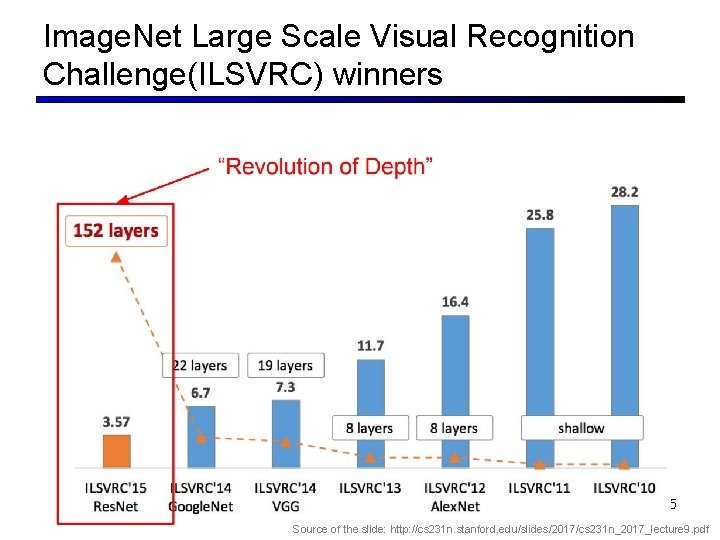

ImageNet Large Scale Visual Recognition Challenge (ILSVRC) winners

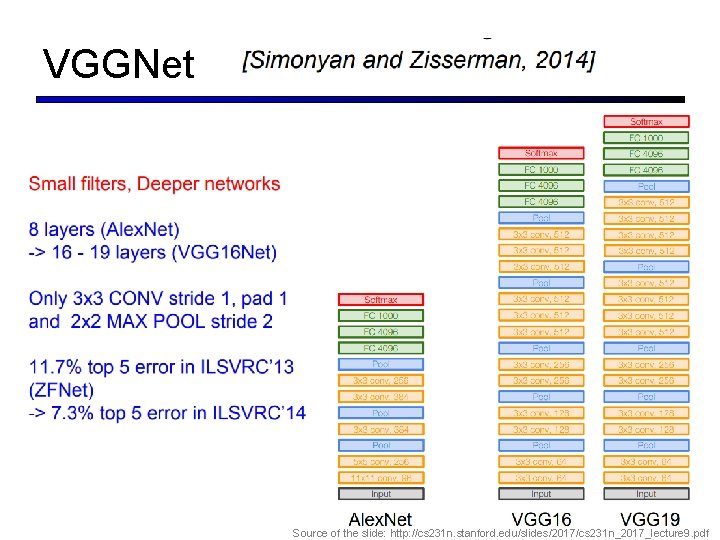

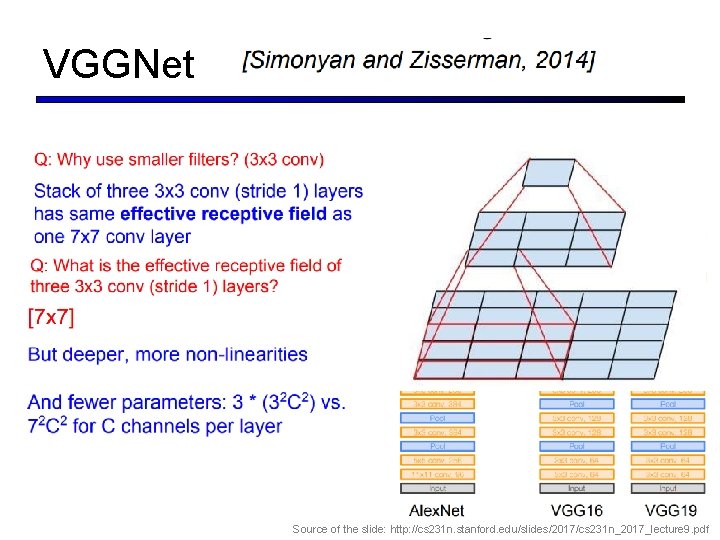

VGGNet

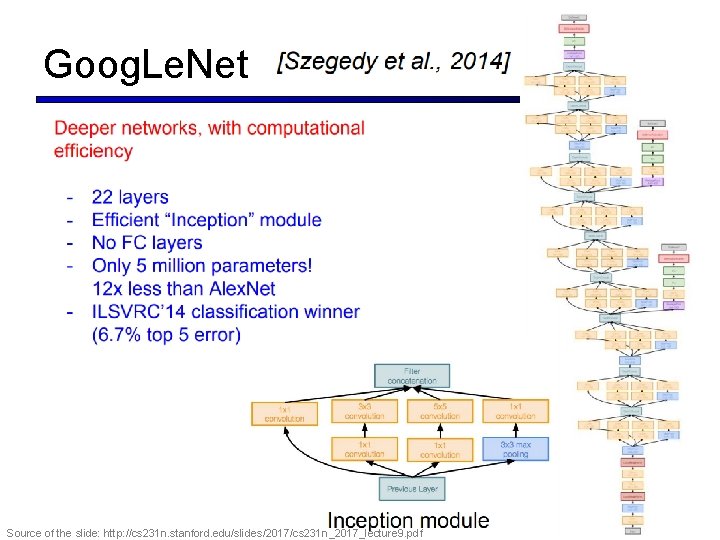

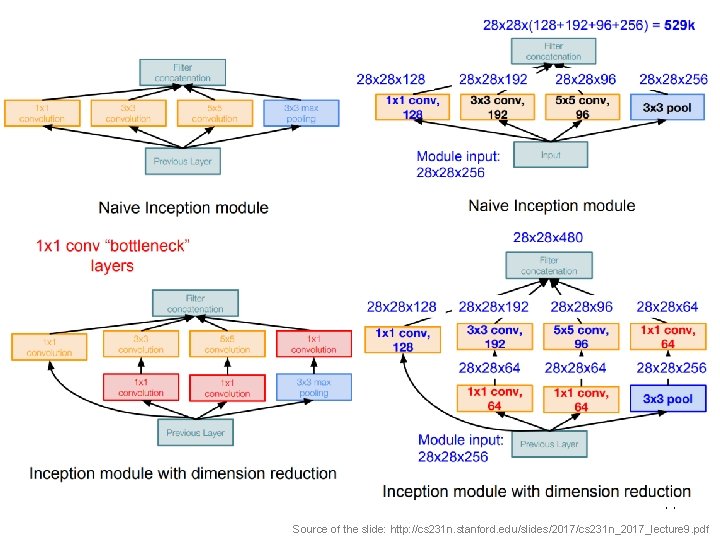

GoogLeNet

AlexNet

ImageNet Large Scale Visual Recognition Challenge (ILSVRC) winners

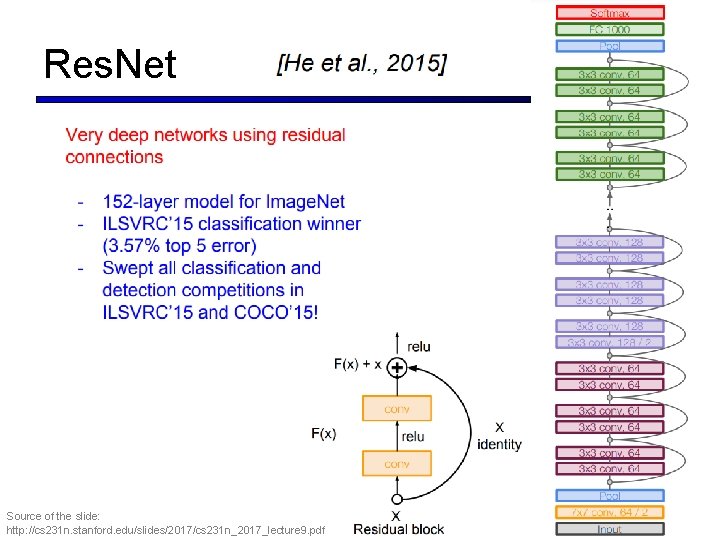

ResNet

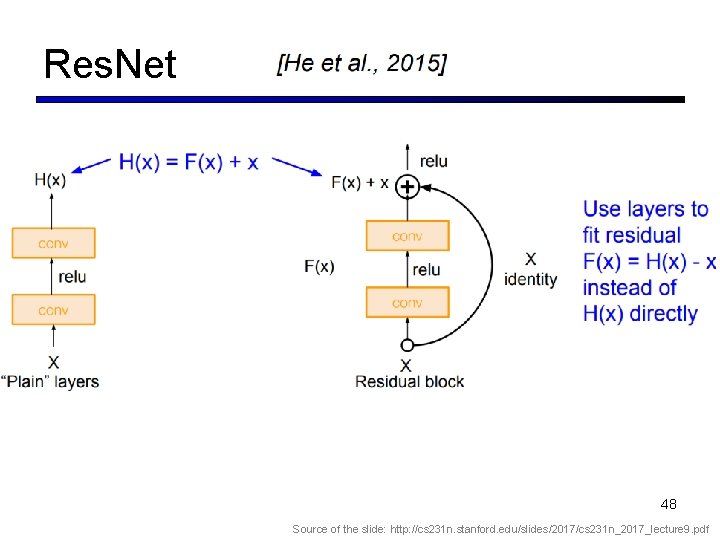

ResNet

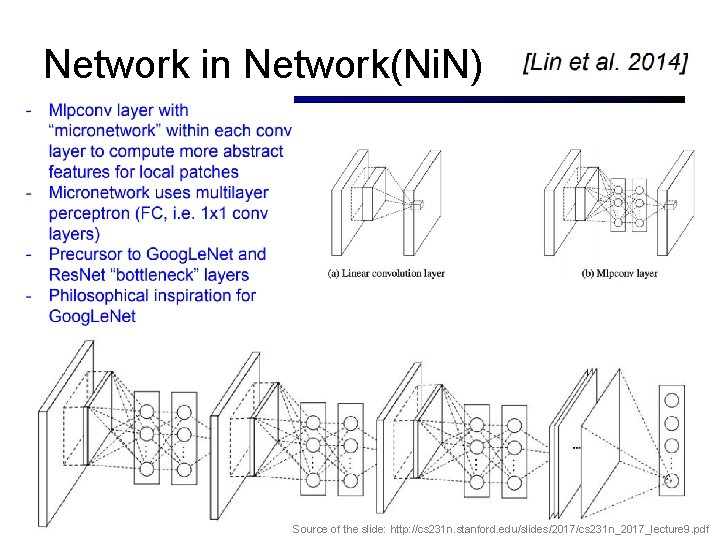

Network in Network (NiN)

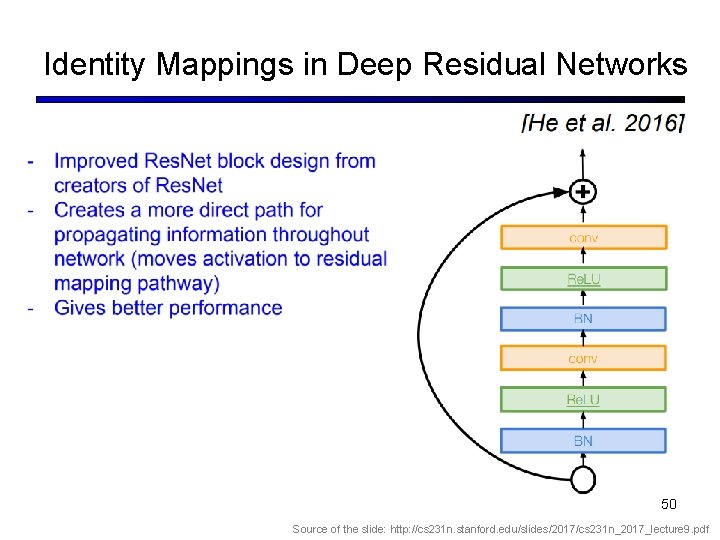

Identity Mappings in Deep Residual Networks

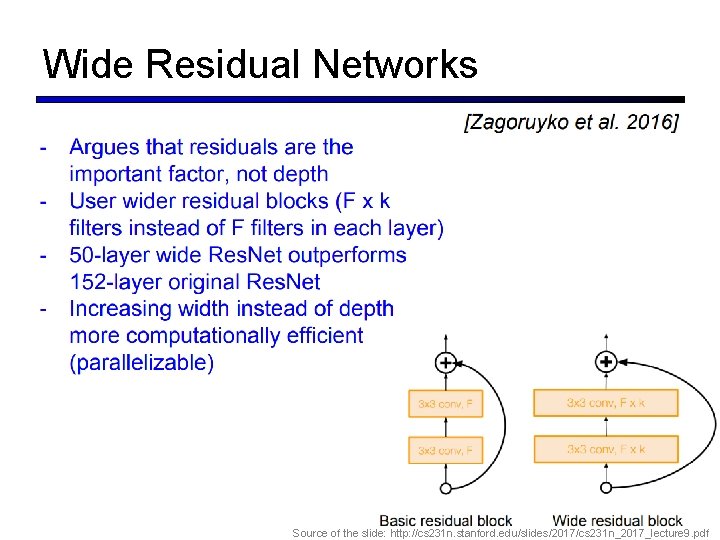

Wide Residual Networks

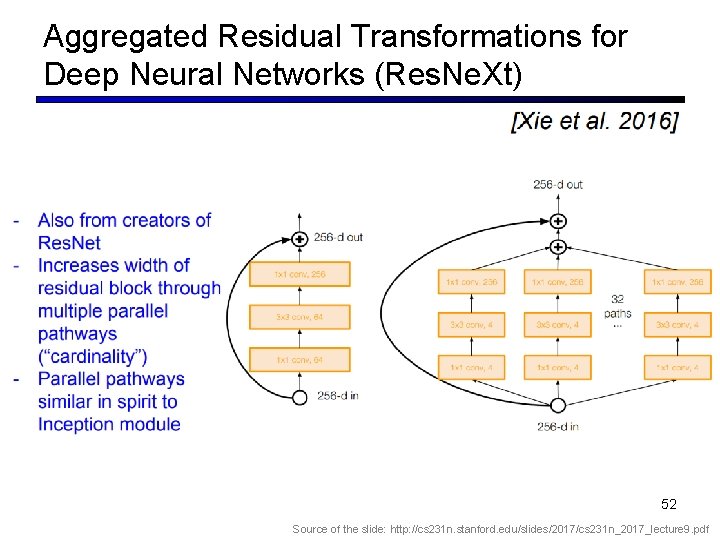

Aggregated Residual Transformations for Deep Neural Networks (ResNeXt)

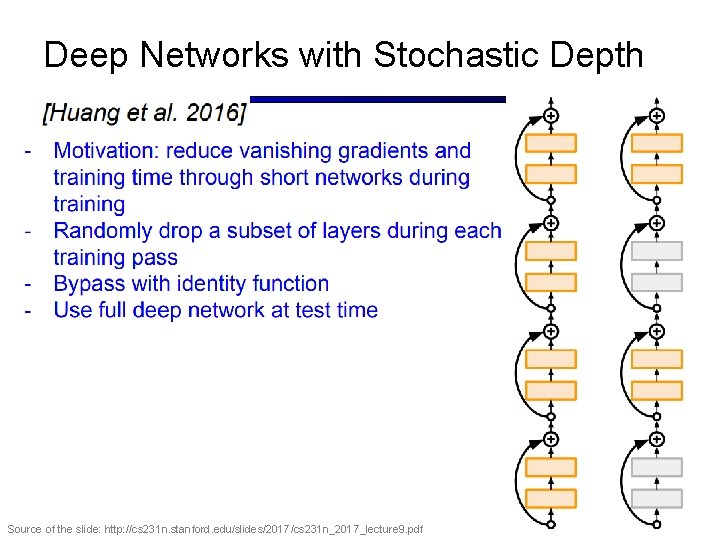

Deep Networks with Stochastic Depth

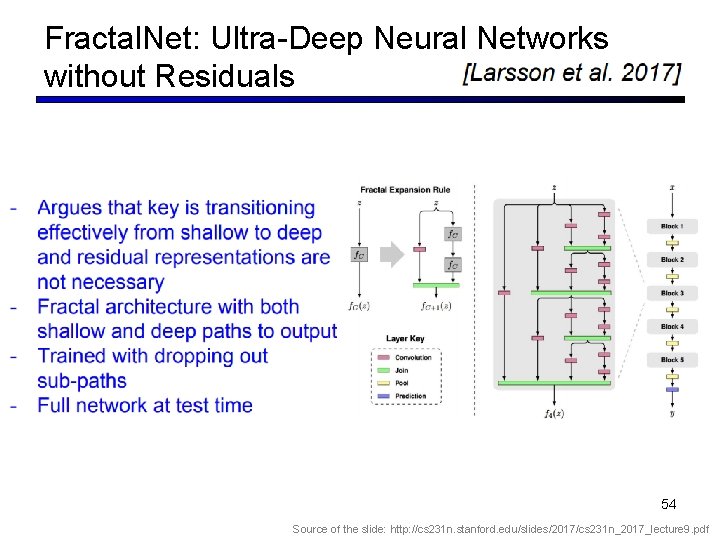

FractalNet: Ultra-Deep Neural Networks without Residuals

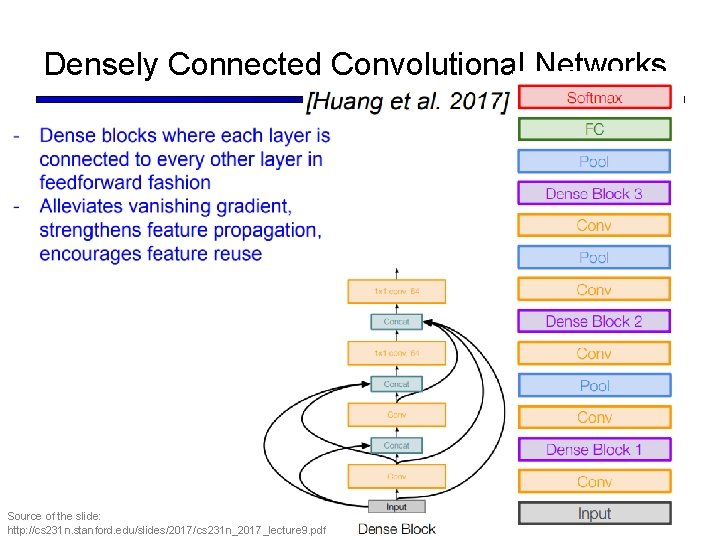

Densely Connected Convolutional Networks

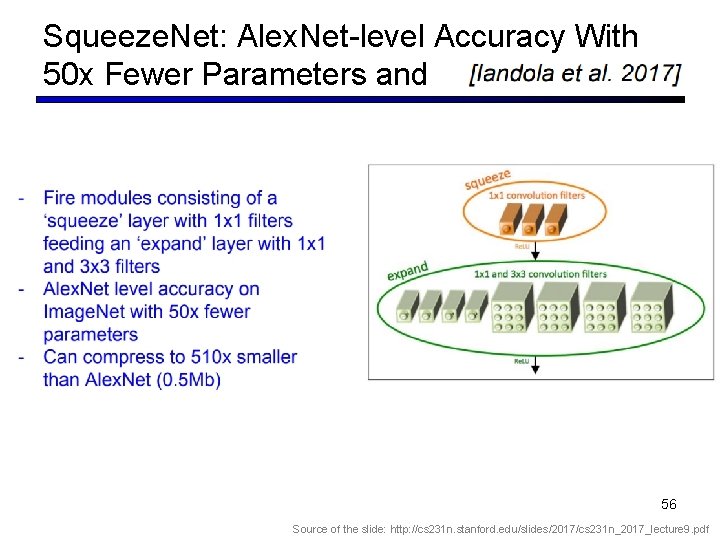

SqueezeNet: AlexNet-level Accuracy With 50x Fewer Parameters and <0.5MB Model Size
