AAAI 2014 Tutorial Latent Tree Models Nevin L

- Slides: 27

AAAI 2014 Tutorial Latent Tree Models Nevin L. Zhang Dept. of Computer Science & Engineering The Hong Kong Univ. of Sci. & Tech. http: //www. cse. ust. hk/~lzhang

HKUST 2014 HKUST 1988

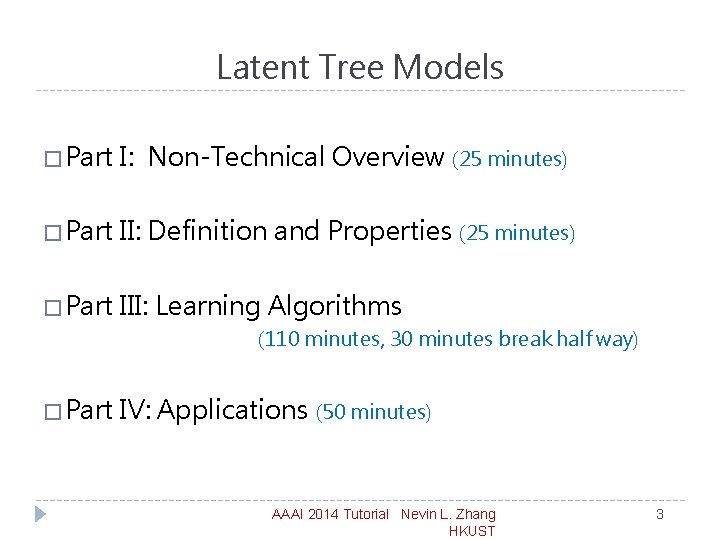

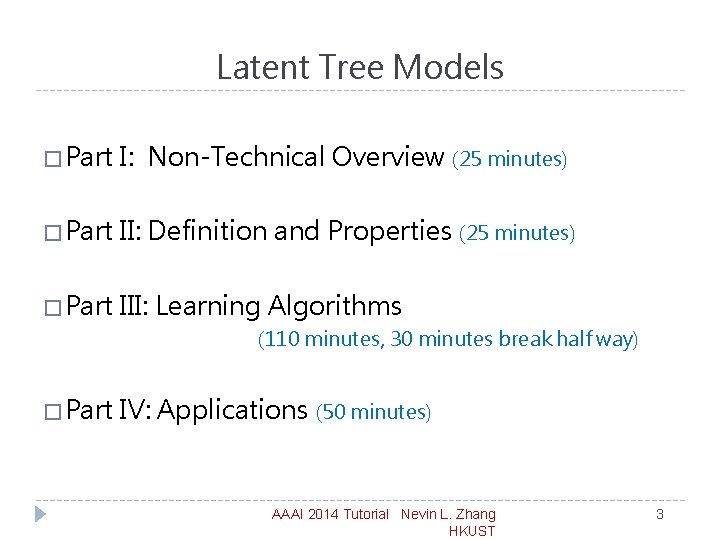

Latent Tree Models � Part I: Non-Technical Overview (25 minutes) � Part II: Definition and Properties (25 minutes) � Part III: Learning Algorithms (110 minutes, 30 minutes break half way) � Part IV: Applications (50 minutes) AAAI 2014 Tutorial Nevin L. Zhang HKUST 3

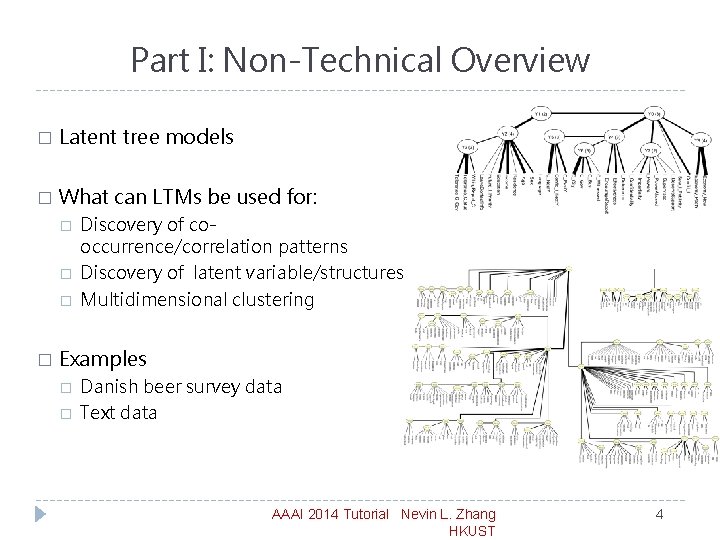

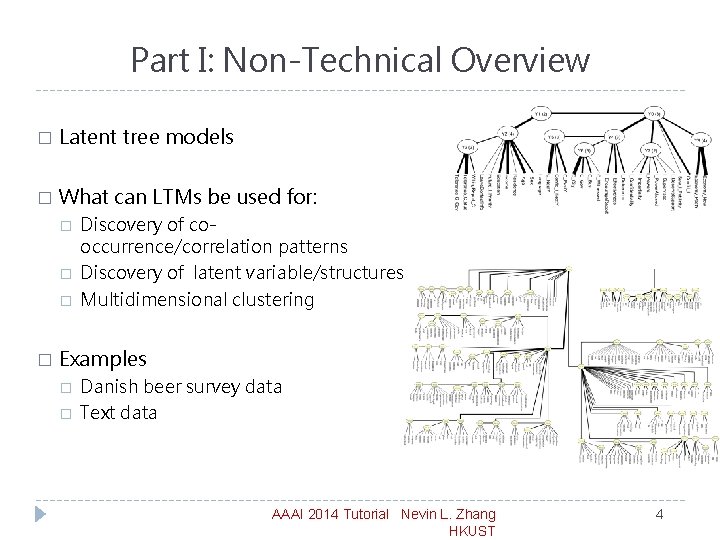

Part I: Non-Technical Overview � Latent tree models � What can LTMs be used for: � � Discovery of cooccurrence/correlation patterns Discovery of latent variable/structures Multidimensional clustering Examples � � Danish beer survey data Text data AAAI 2014 Tutorial Nevin L. Zhang HKUST 4

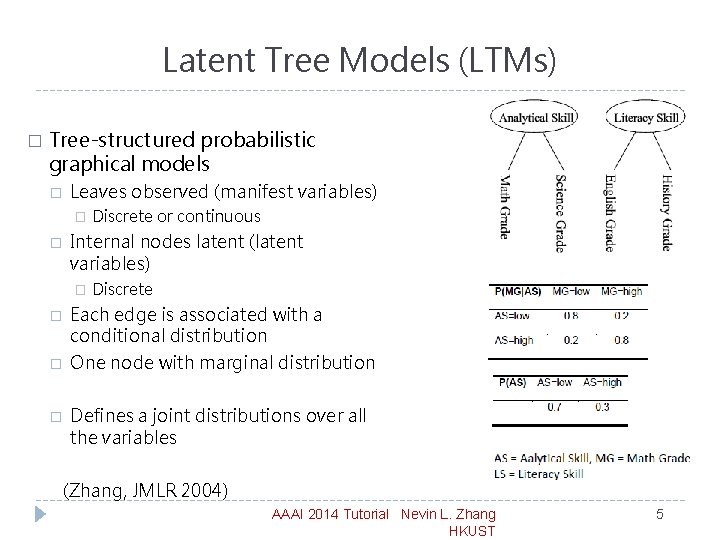

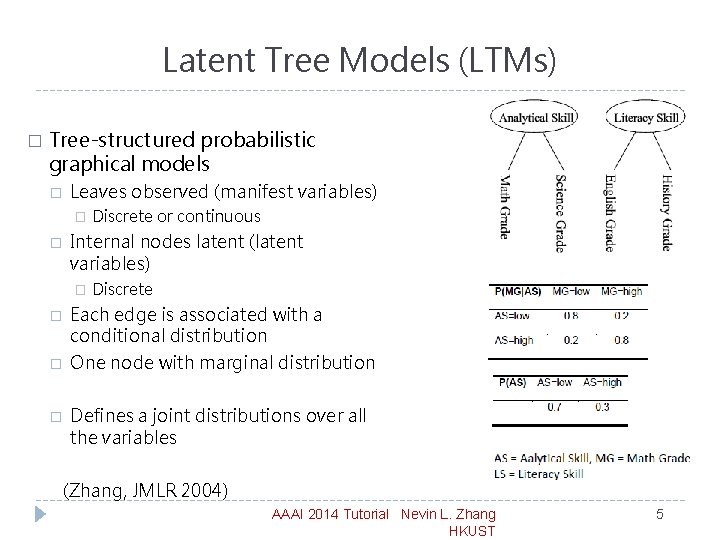

Latent Tree Models (LTMs) � Tree-structured probabilistic graphical models � Leaves observed (manifest variables) � � Internal nodes latent (latent variables) � � Discrete or continuous Discrete Each edge is associated with a conditional distribution One node with marginal distribution Defines a joint distributions over all the variables (Zhang, JMLR 2004) AAAI 2014 Tutorial Nevin L. Zhang HKUST 5

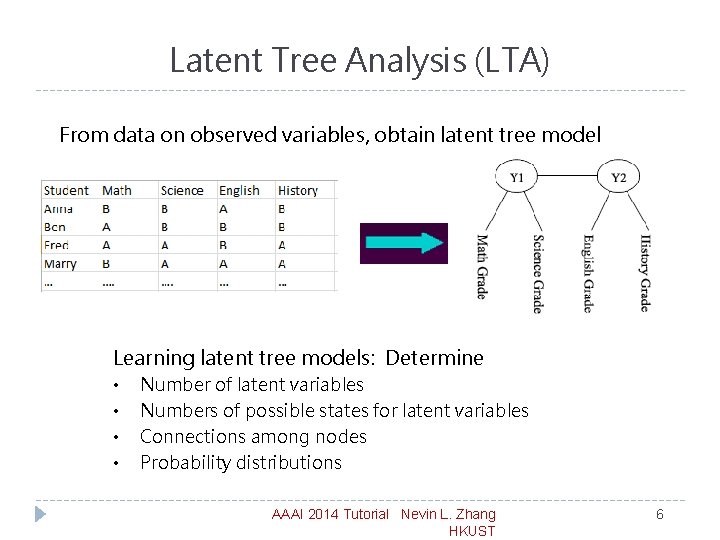

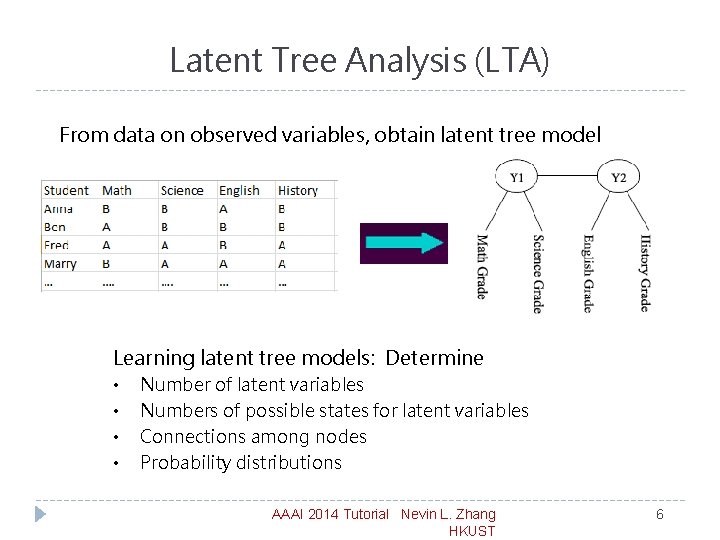

Latent Tree Analysis (LTA) From data on observed variables, obtain latent tree model Learning latent tree models: Determine • • Number of latent variables Numbers of possible states for latent variables Connections among nodes Probability distributions AAAI 2014 Tutorial Nevin L. Zhang HKUST 6

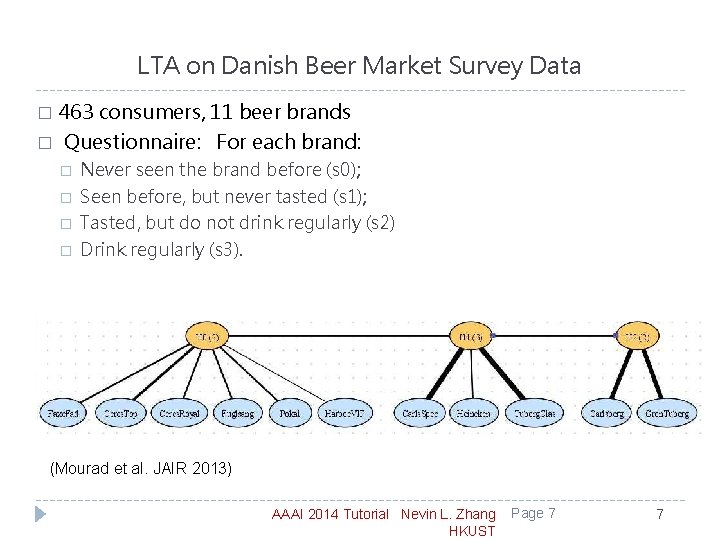

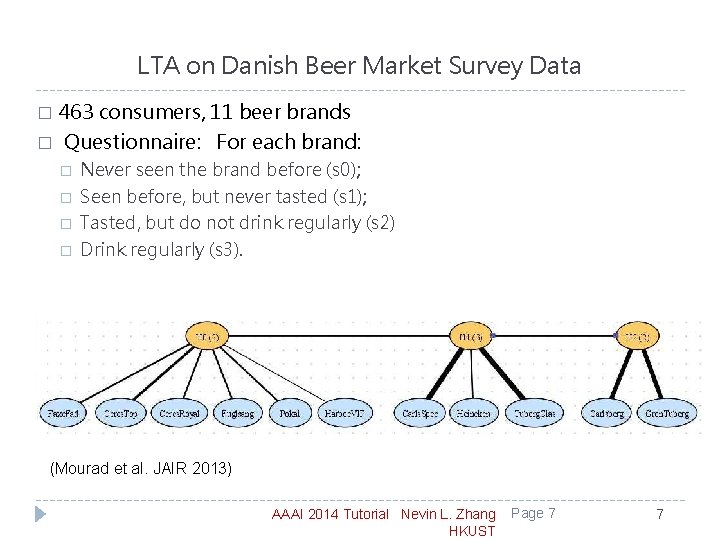

LTA on Danish Beer Market Survey Data 463 consumers, 11 beer brands � Questionnaire: For each brand: � � � Never seen the brand before (s 0); Seen before, but never tasted (s 1); Tasted, but do not drink regularly (s 2) Drink regularly (s 3). (Mourad et al. JAIR 2013) AAAI 2014 Tutorial Nevin L. Zhang HKUST Page 7 7

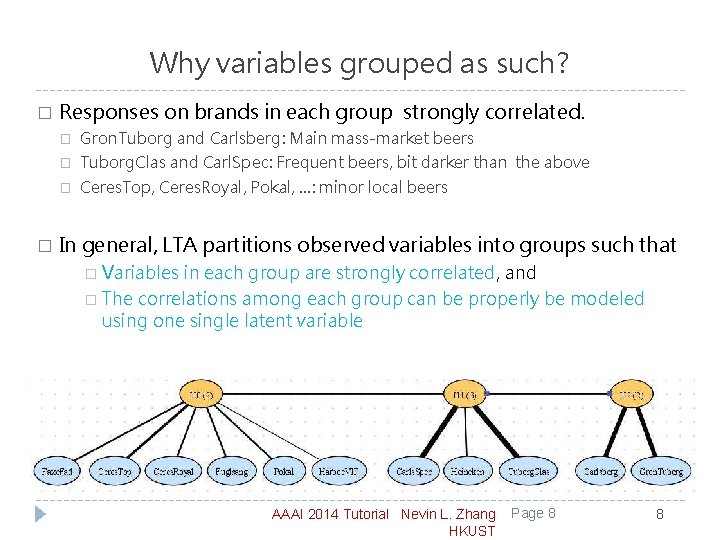

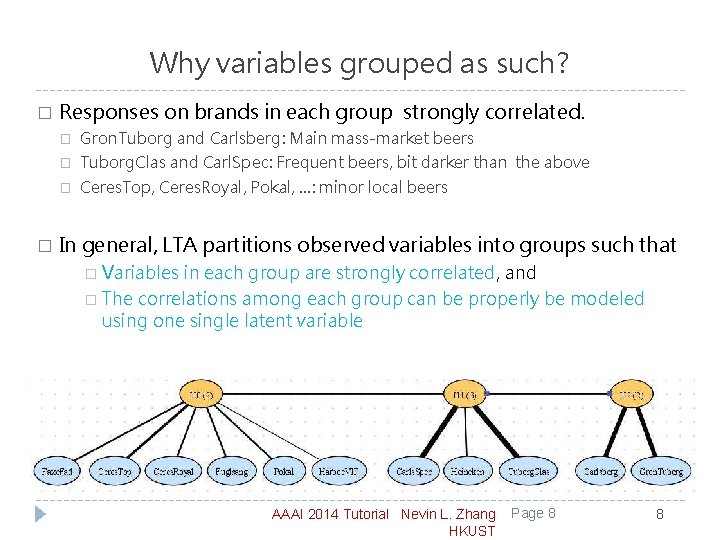

Why variables grouped as such? � � Responses on brands in each group strongly correlated. � Gron. Tuborg and Carlsberg: Main mass-market beers � Tuborg. Clas and Carl. Spec: Frequent beers, bit darker than the above � Ceres. Top, Ceres. Royal, Pokal, …: minor local beers In general, LTA partitions observed variables into groups such that � Variables in each group are strongly correlated, and � The correlations among each group can be properly be modeled using one single latent variable AAAI 2014 Tutorial Nevin L. Zhang HKUST Page 8 8

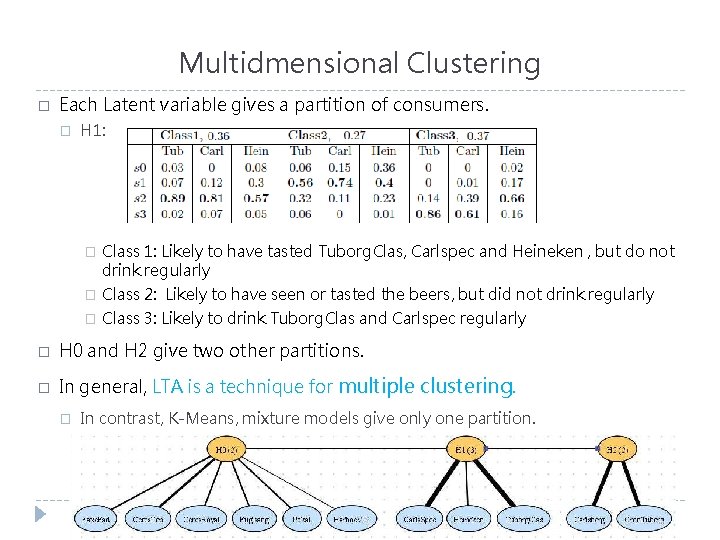

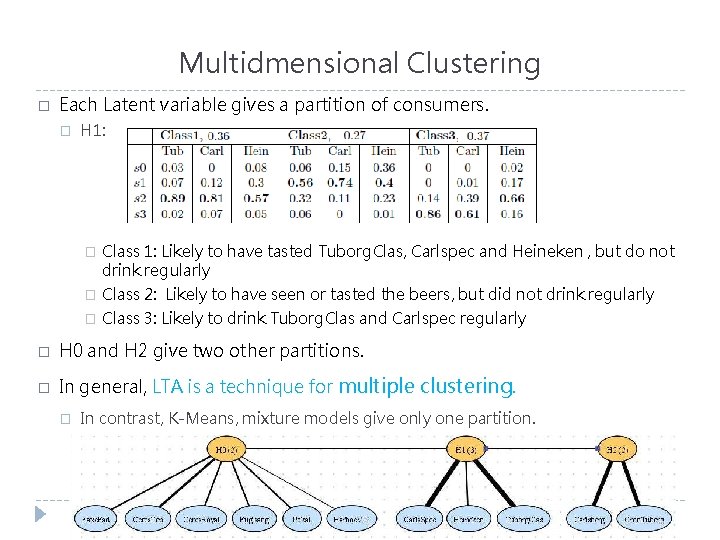

Multidmensional Clustering � Each Latent variable gives a partition of consumers. � H 1: � Class 1: Likely to have tasted Tuborg. Clas, Carlspec and Heineken , but do not drink regularly � Class 2: Likely to have seen or tasted the beers, but did not drink regularly � Class 3: Likely to drink Tuborg. Clas and Carlspec regularly � H 0 and H 2 give two other partitions. � In general, LTA is a technique for multiple clustering. � In contrast, K-Means, mixture models give only one partition. AAAI 2014 Tutorial Nevin L. Zhang HKUST Page 9 9

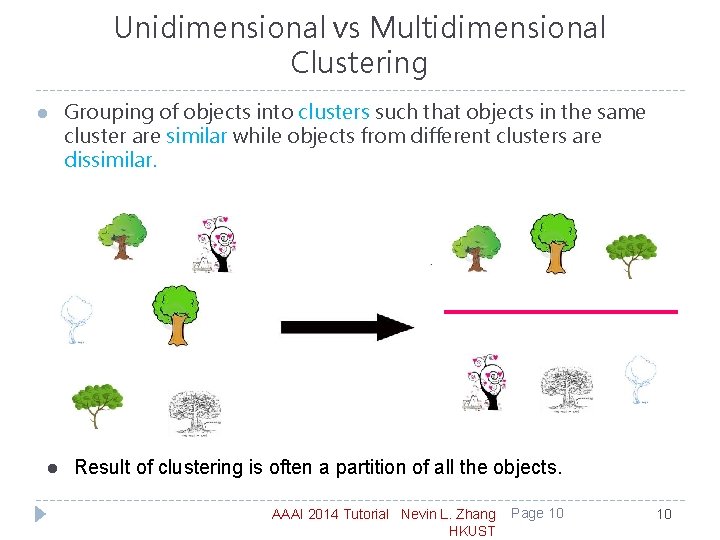

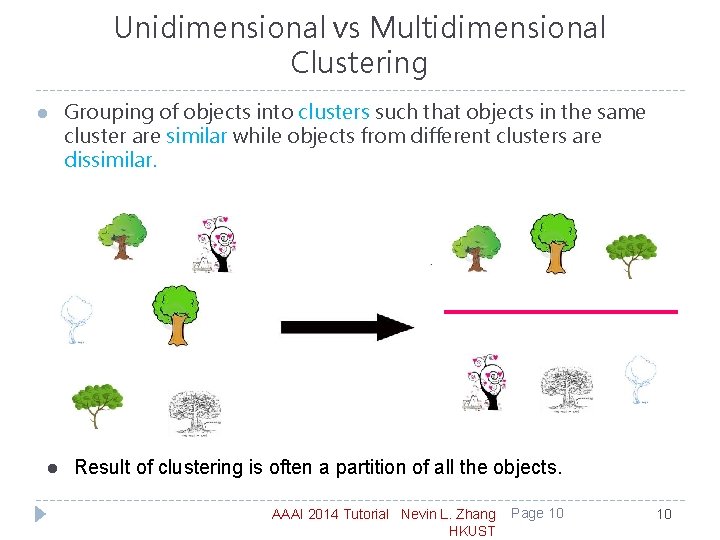

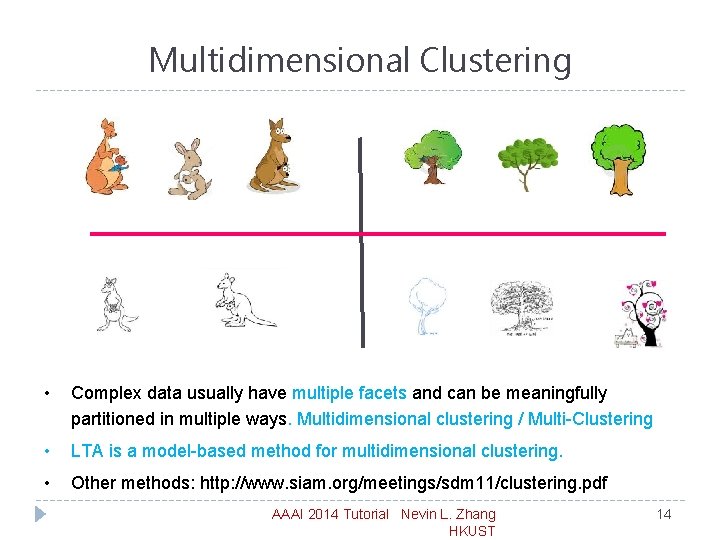

Unidimensional vs Multidimensional Clustering l l Grouping of objects into clusters such that objects in the same cluster are similar while objects from different clusters are dissimilar. Result of clustering is often a partition of all the objects. AAAI 2014 Tutorial Nevin L. Zhang HKUST Page 10 10

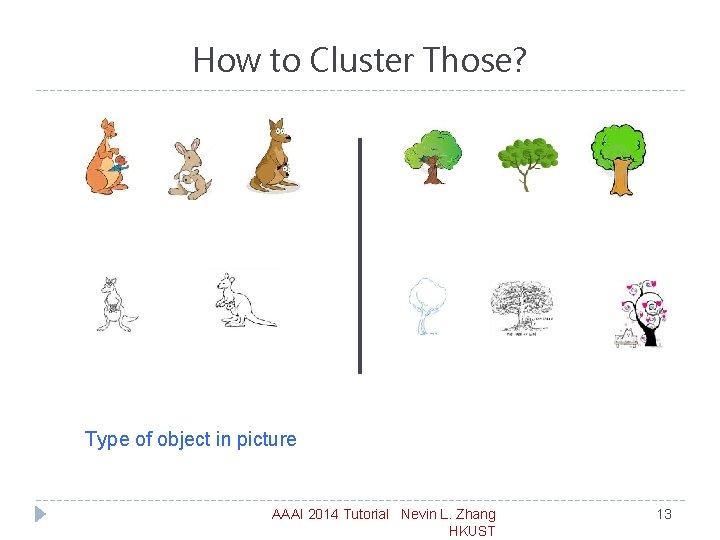

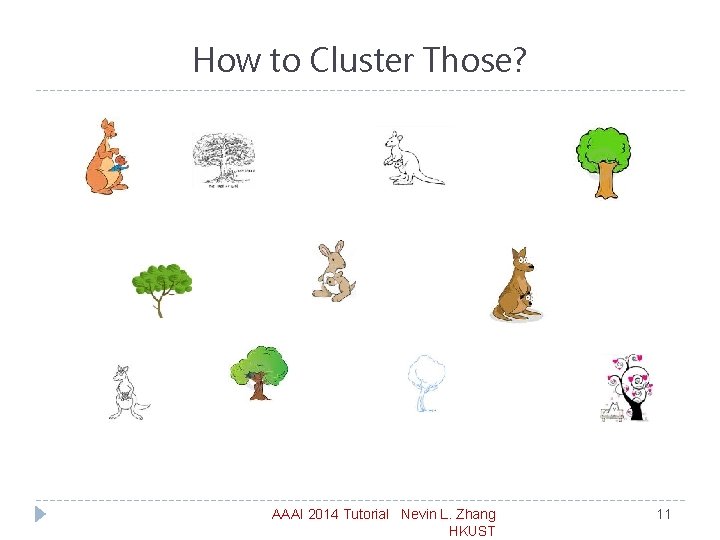

How to Cluster Those? AAAI 2014 Tutorial Nevin L. Zhang HKUST 11

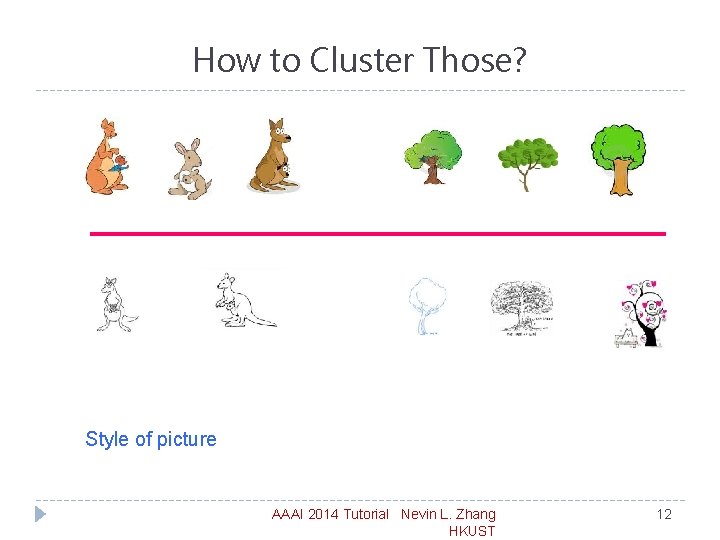

How to Cluster Those? Style of picture AAAI 2014 Tutorial Nevin L. Zhang HKUST 12

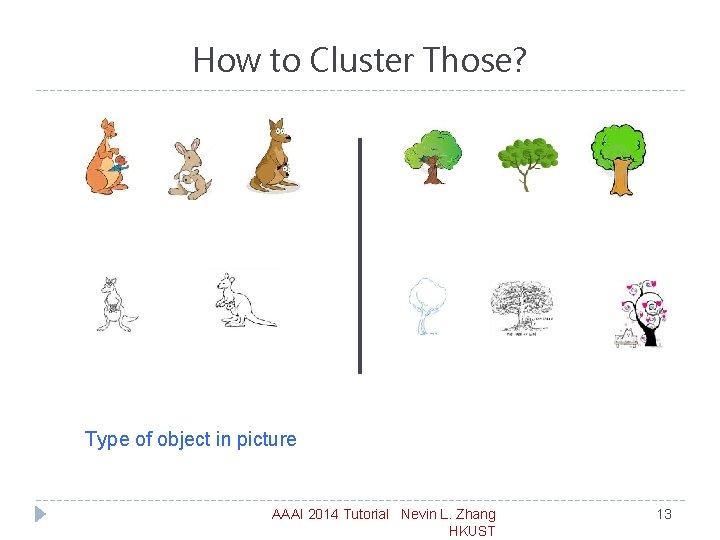

How to Cluster Those? Type of object in picture AAAI 2014 Tutorial Nevin L. Zhang HKUST 13

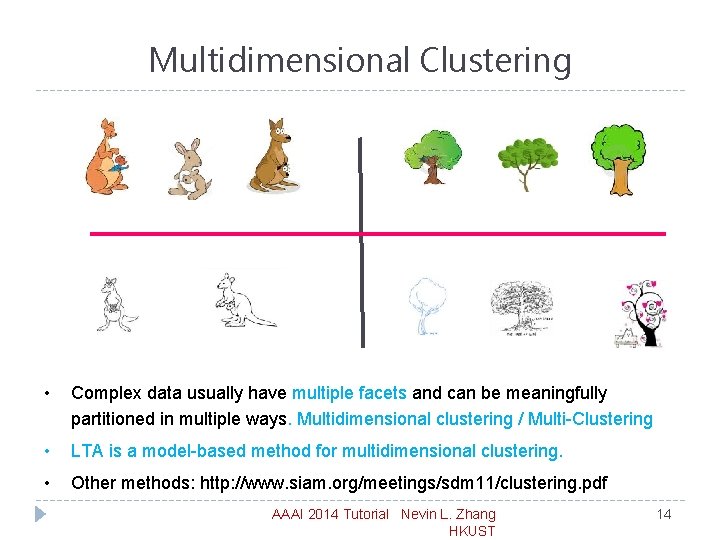

Multidimensional Clustering • Complex data usually have multiple facets and can be meaningfully partitioned in multiple ways. Multidimensional clustering / Multi-Clustering • LTA is a model-based method for multidimensional clustering. • Other methods: http: //www. siam. org/meetings/sdm 11/clustering. pdf AAAI 2014 Tutorial Nevin L. Zhang HKUST 14

Clustering of Variables and Objects � LTA produces a partition of observed variables. � For each cluster of variables, it produces a partition of objects. AAAI 2014 Tutorial Nevin L. Zhang HKUST 15

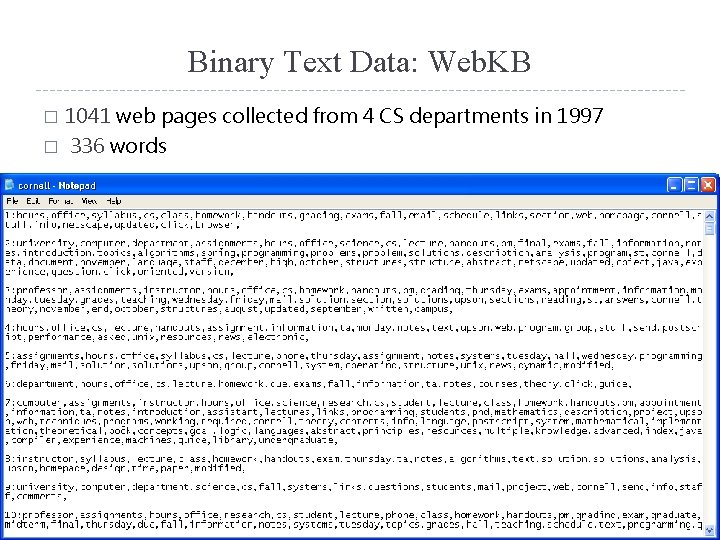

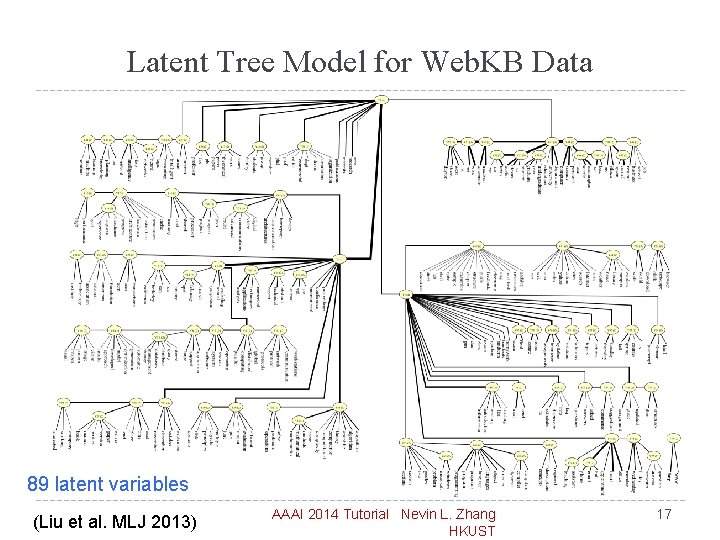

Binary Text Data: Web. KB 1041 web pages collected from 4 CS departments in 1997 � 336 words � AAAI 2014 Tutorial Nevin L. Zhang HKUST 16

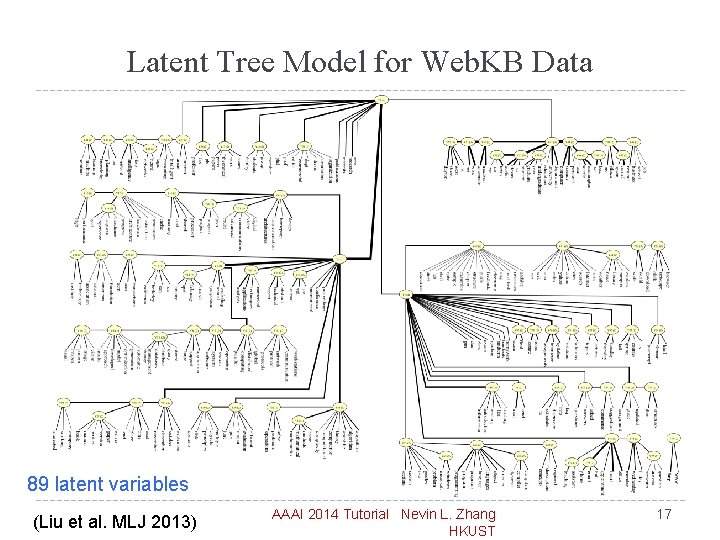

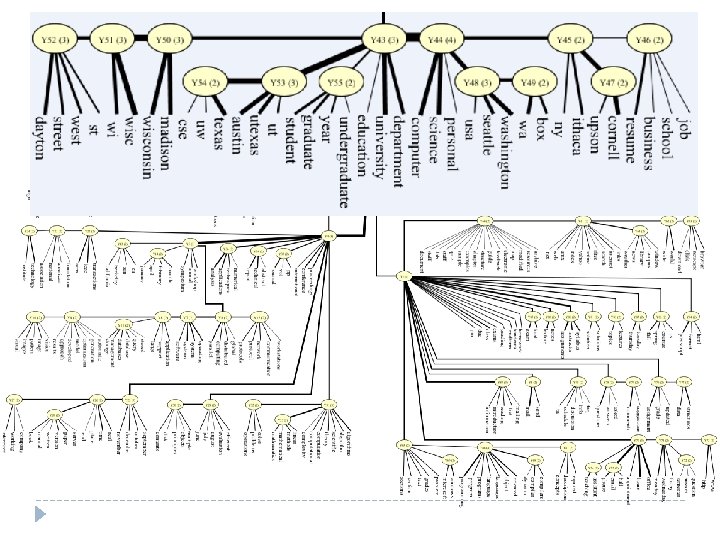

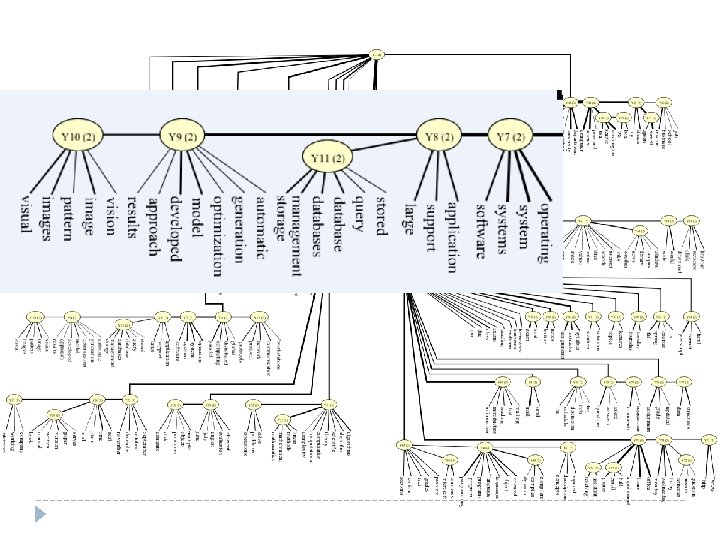

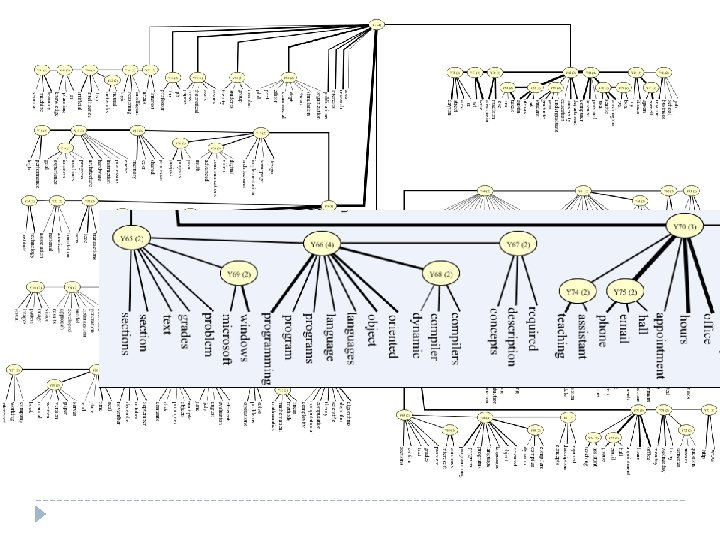

Latent Tree Model for Web. KB Data 89 latent variables (Liu et al. MLJ 2013) AAAI 2014 Tutorial Nevin L. Zhang HKUST 17

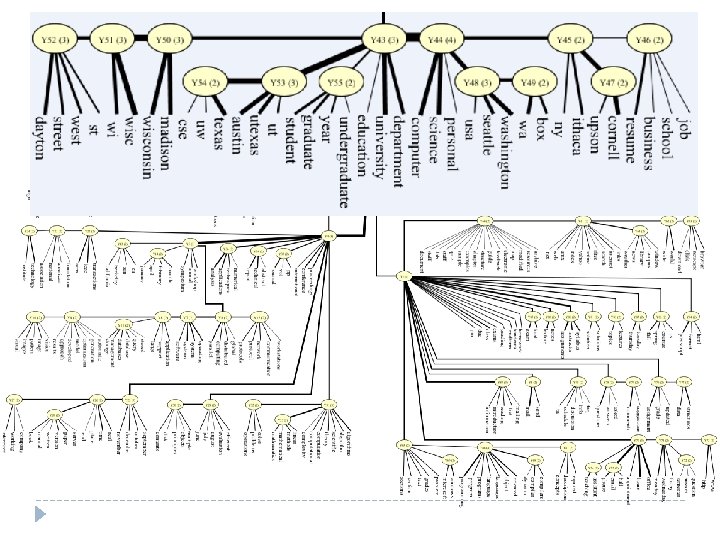

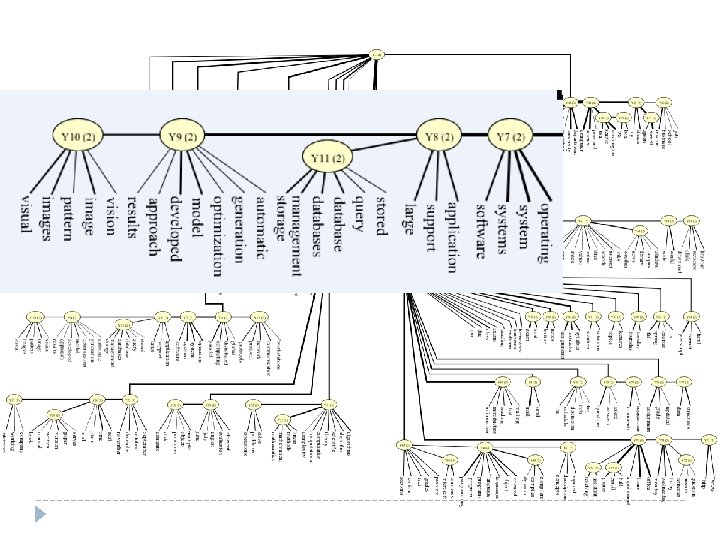

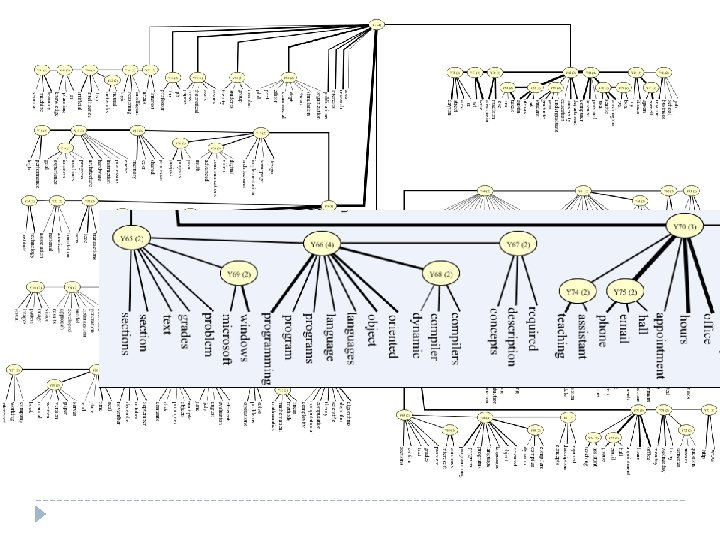

Latent Tree Modes for Web. KB Data

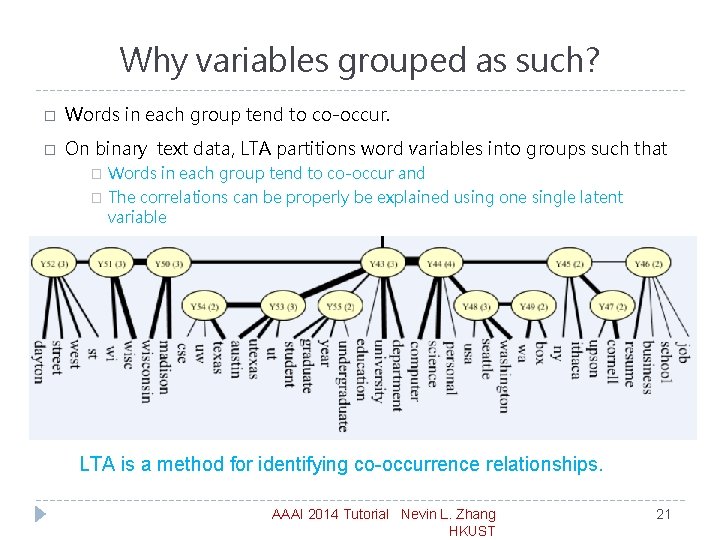

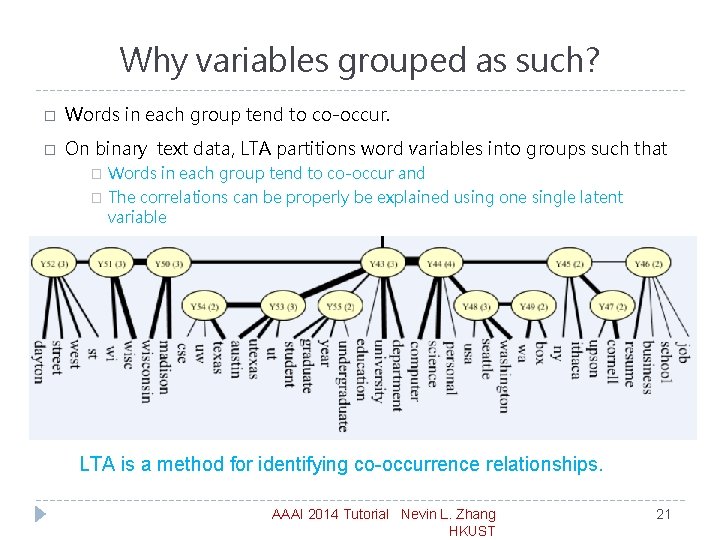

Why variables grouped as such? � Words in each group tend to co-occur. � On binary text data, LTA partitions word variables into groups such that � Words in each group tend to co-occur and � The correlations can be properly be explained using one single latent variable LTA is a method for identifying co-occurrence relationships. AAAI 2014 Tutorial Nevin L. Zhang HKUST 21

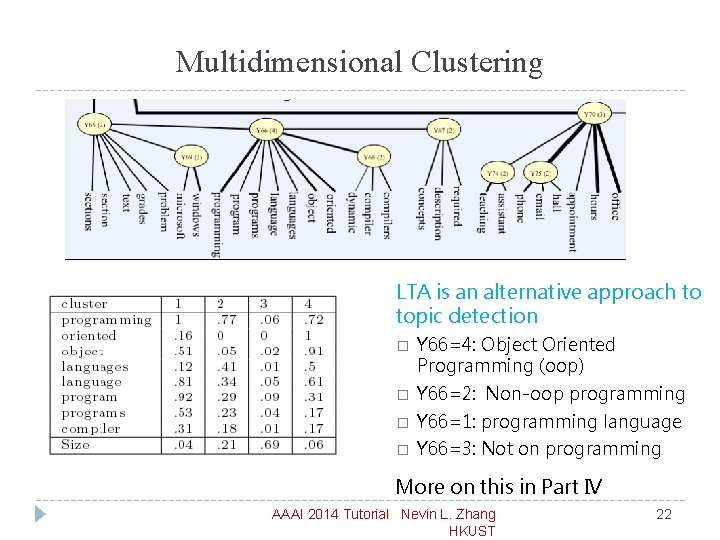

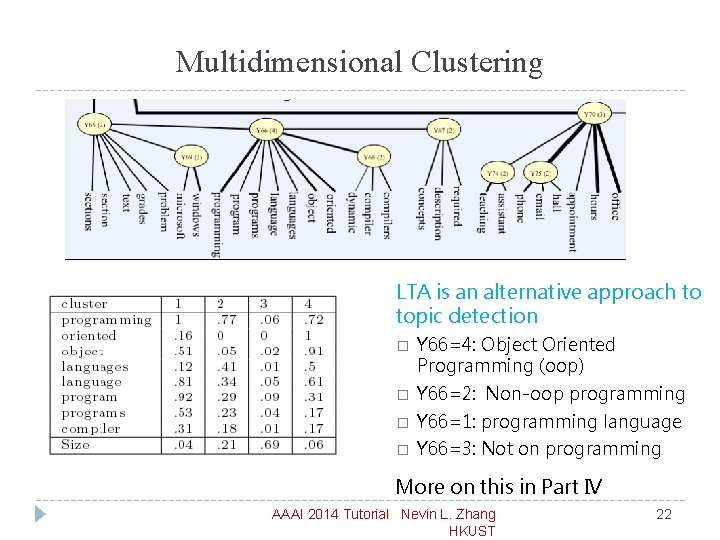

Multidimensional Clustering LTA is an alternative approach to topic detection � Y 66=4: Object Oriented Programming (oop) � Y 66=2: Non-oop programming � Y 66=1: programming language � Y 66=3: Not on programming More on this in Part IV AAAI 2014 Tutorial Nevin L. Zhang HKUST 22

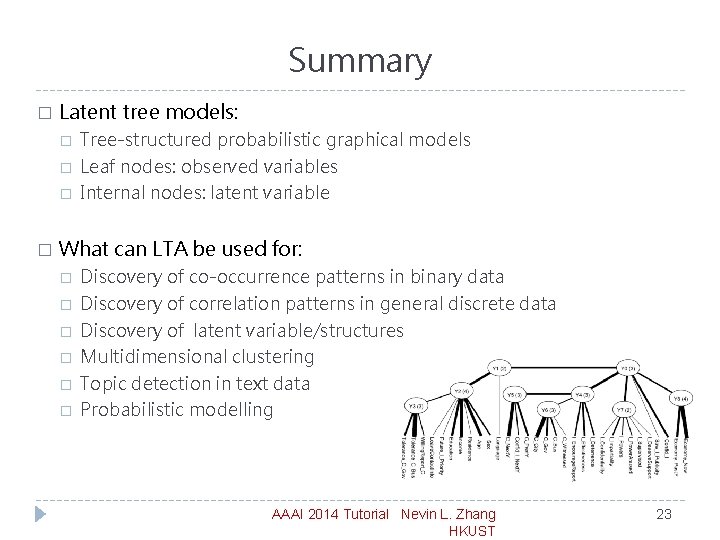

Summary � Latent tree models: � � Tree-structured probabilistic graphical models Leaf nodes: observed variables Internal nodes: latent variable What can LTA be used for: � � � Discovery of co-occurrence patterns in binary data Discovery of correlation patterns in general discrete data Discovery of latent variable/structures Multidimensional clustering Topic detection in text data Probabilistic modelling AAAI 2014 Tutorial Nevin L. Zhang HKUST 23

Key References: � Anandkumar, A. , Chaudhuri, K. , Hsu, D. , Kakade, S. M. , Song, L. , & Zhang, T. (2011). Spectral methods for learning multivariate latent tree structure. In Twenty-Fifth Conference in Neural Information Processing Systems (NIPS-11). � Anandkumar, A. , Ge, R. , Hsu, D. , Kakade, S. M. , and Telgarsky, M. Tensor decompositions for learning latent variable models. In Preprint, 2012 a. � Anandkumar, A. , Hsu, D. , and Kakade, S. M. A method of moments for mixture models and hidden Markov models. In An abridged version appears in the Proc. Of COLT, 2012 b. � Choi, M. J. , Tan, V. Y. , Anandkumar, A. , & Willsky, A. S. (2011). Learning latent tree graphical models. Journal of Machine Learning Research, 12, 1771– 1812. � Friedman, N. , Ninio, M. , Pe’er, I. , & Pupko, T. (2002). A structural EM algorithm for phylogenetic inference. . Journal of Computational Biology, 9(2), 331– 353. � Harmeling, S. , & Williams, C. K. I. (2011). Greedy learning of binary latent trees. IEEE Transactions on Pattern Analysis and Machine Intelligence, 33(6), 1087– 1097. � Hsu, D. , Kakade, S. , & Zhang, T. (2009). A spectral algorithm for learning hidden Markov models. In The 22 nd Annual Conference on Learning Theory (COLT 2009).

Key References: � E. Mossel, S. Roch, and A. Sly. Robust estimation of latent tree graphical models: Inferring hidden states with inexact parameters. Submitted. http: //arxiv. org/abs/1109. 4668, 2011. � Mourad, R. , Sinoquet, C. , & Leray, P. (2011). A hierarchical Bayesian network approach for linkage disequilibrium modeling and data-dimensionality reduction prior to genomewide association studies. BMC Bioinformatics, 12, 16. � Mourad R. , Sinoquet C. , Zhang N. L. , Liu T. F. and Leray P. (2013). A survey on latent tree models and applications. Journal of Artificial Intelligence Research, 47, 157 -203 , 13 May 2013. doi: 10. 1613/jair. 3879. � Parikh, A. P. , Song, L. , & Xing, E. P. (2011). A spectral algorithm for latent tree graphical models. In Proceedings of the 28 th International Conference on Machine Learning (ICML-2011). � Saitou, N. , & Nei, M. (1987). The neighbor-joining method: A new method for reconstructing phylogenetic trees. . Molecular Biology and Evolution, 4(4), 406– 425. � Song, L. , Parikh, A. , & Xing, E. (2011). Kernel embeddings of latent tree graphical models. In Twenty -Fifth Conference in Neural Information Processing Systems (NIPS-11). � Tan, V. Y. F. , Anandkumar, A. , & Willsky, A. (2011). Learning high-dimensional Markov forest distributions: Analysis of error rates. Journal of Machine Learning Research, 12, 1617– 1653. AAAI 2014 Tutorial Nevin L. Zhang HKUST 25

Key References: � T. Chen and N. L. Zhang (2006). Quartet-based learning of shallow latent variables. In Proceedings of the Third European Workshop on Probabilistic Graphical Model, 59 -66 , September 12 -15, 2006. � Chen, T. , Zhang, N. L. , Liu, T. , Poon, K. M. , & Wang, Y. (2012). Model-based multidimensional clustering of categorical data. Artificial Intelligence, 176(1), 2246– 2269. � Liu, T. F. , Zhang, N. L. , Liu, A. H. , & Poon, L. K. M. (2013). Greedy learning of latent tree models for multidimensional clustering. Machine Learning, doi: 10. 1007/s 10994 -013 -5393 -0. � Liu, T. F. , Zhang, N. L. , and Chen, P. X. (2014). Hierarchical latent tree analysis for topic detection. ECML, 2014 � Poon, L. K. M. , Zhang, N. L. , Chen, T. , & Wang, Y. (2010). Variable selection in modelbased clustering: To do or to facilitate. In Proceedings of the 27 th International Con-ference on Machine Learning (ICML-2010). � Wang, Y. , Zhang, N. L. , & Chen, T. (2008). Latent tree models and approximate inference in Bayesian networks. Journal of Articial Intelligence Research, 32, 879– 900. � Wang, X. F. , Guo, J. H. , Hao, L. Z. , Zhang, N. L. , & P. X. Chen (2013). Recovering discrete latent tree models by spectral methods. � Wang, X. F. , Zhang, N. L. (2014). A Study of Recently Discovered Equalities about Latent Tree Models using Inverse Edges. PGM 2014. � Zhang, N. L. (2004). Hierarchical latent class models for cluster analysis. The Journal of Machine Learning Research, 5, 697– 723. � Zhang, N. L. , & Kocka, T. (2004 a). Effective dimensions of hierarchical latent class models. Journal of Articial Intelligence Research, 21, 1– 17. AAAI 2014 Tutorial Nevin L. Zhang HKUST 26

Key References: � Zhang, N. L. , & Kocka, T. (2004 b). Efficient learning of hierarchical latent class models. In Proceedings of the 16 th IEEE International Conference on Tools with Artificial Intelligence (ICTAI), pp. 585– 593. � Zhang, N. L. , Nielsen, T. D. , & Jensen, F. V. (2004). Latent variable discovery in classification models. Artificial Intelligence in Medicine, 30(3), 283– 299. � Zhang, N. L. , Wang, Y. , & Chen, T. (2008). Discovery of latent structures: Experience with the Co. IL Challenge 2000 data set*. Journal of Systems Science and Complexity, 21(2), 172– 183. � Zhang, N. L. , Yuan, S. , Chen, T. , & Wang, Y. (2008). Latent tree models and diagnosis in traditional Chinese medicine. Artificial Intelligence in Medicine, 42(3), 229– 245. � Zhang, N. L. , Yuan, S. , Chen, T. , & Wang, Y. (2008). Statistical Validation of TCM Theories. Journal of Alternative and Complementary Medicine, 14(5): 583 -7. � Zhang, N. L. , Fu, C. , Liu, T. F. , Poon, K. M. , Chen, P. X. , Chen, B. X. , Zhang, Y. L. (2014). The Latent Tree Analysis Approach to Patient Subclassification in Traditional Chinese Medicine. Evidence. Based Complementary and Alternative Medicine. � Xu, Z. X. , Zhang, N. L. , Wang, Y. Q. , Liu, G. P. , Xu, J. , Liu, T. F. , and Liu A. H. (2013). Statistical Validation of Traditional Chinese Medicine Syndrome Postulates in the Context of Patients with Cardiovascular Disease. The Journal of Alternative and Complementary Medicine. 18, 1 -6. � Zhao, Y. Zhang , N. L. , Wang, T. F. , Wang, Q. G. (2014). Discovering Symptom Co-Occurrence Patterns from 604 Cases of Depressive Patient Data using Latent Tree Models. The Journal of Alternative and Complementary Medicine. 20(4): 265 -71.